Submitted: 03 February 2025

Posted: 05 February 2025


Abstract

Keywords:

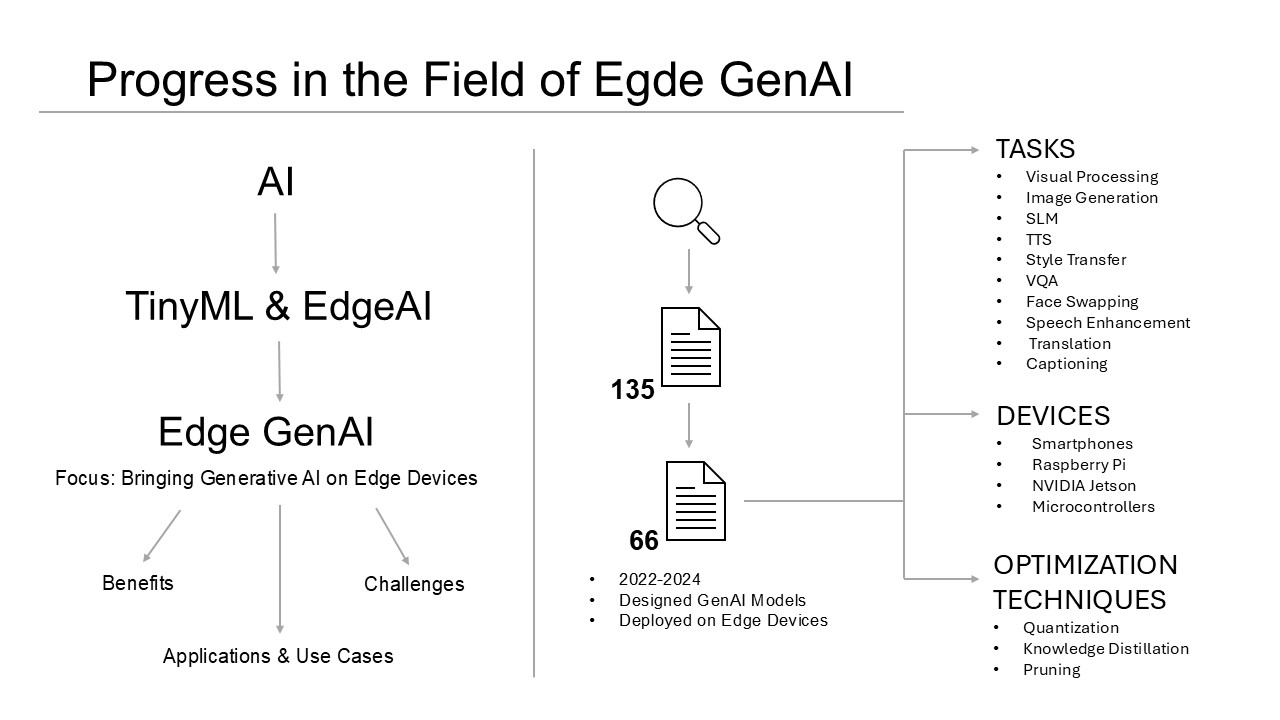

1. Introduction

1.1. Contributions

1.2. Organization

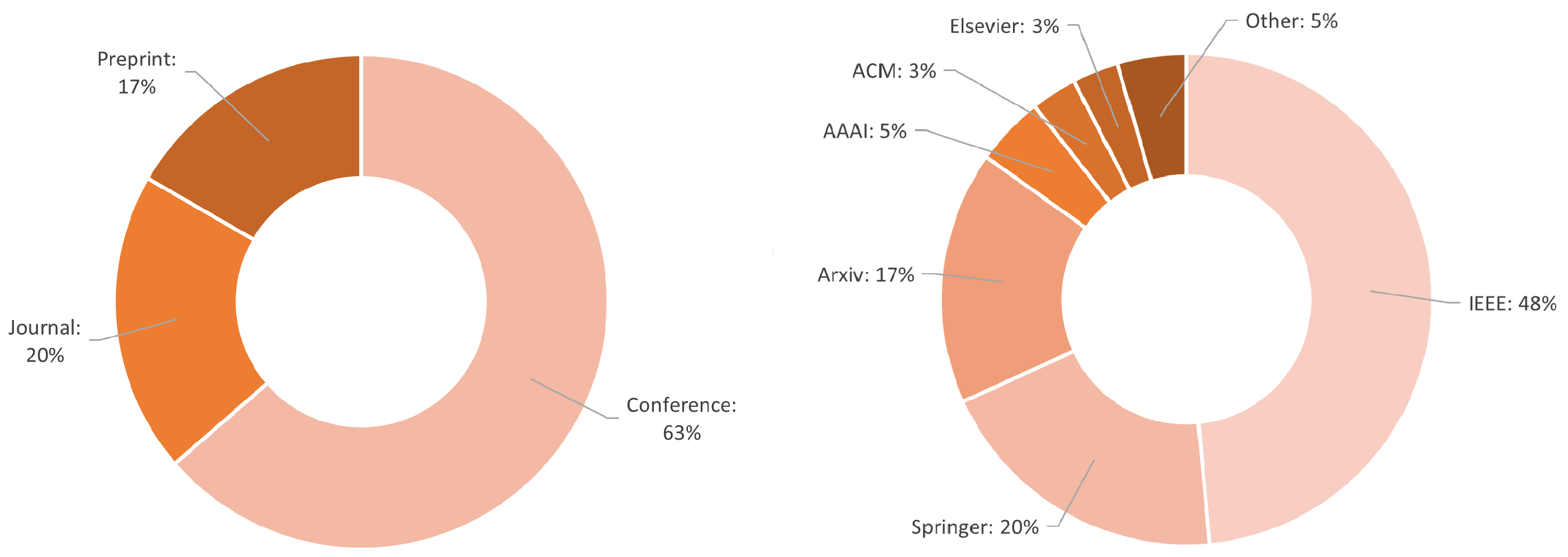

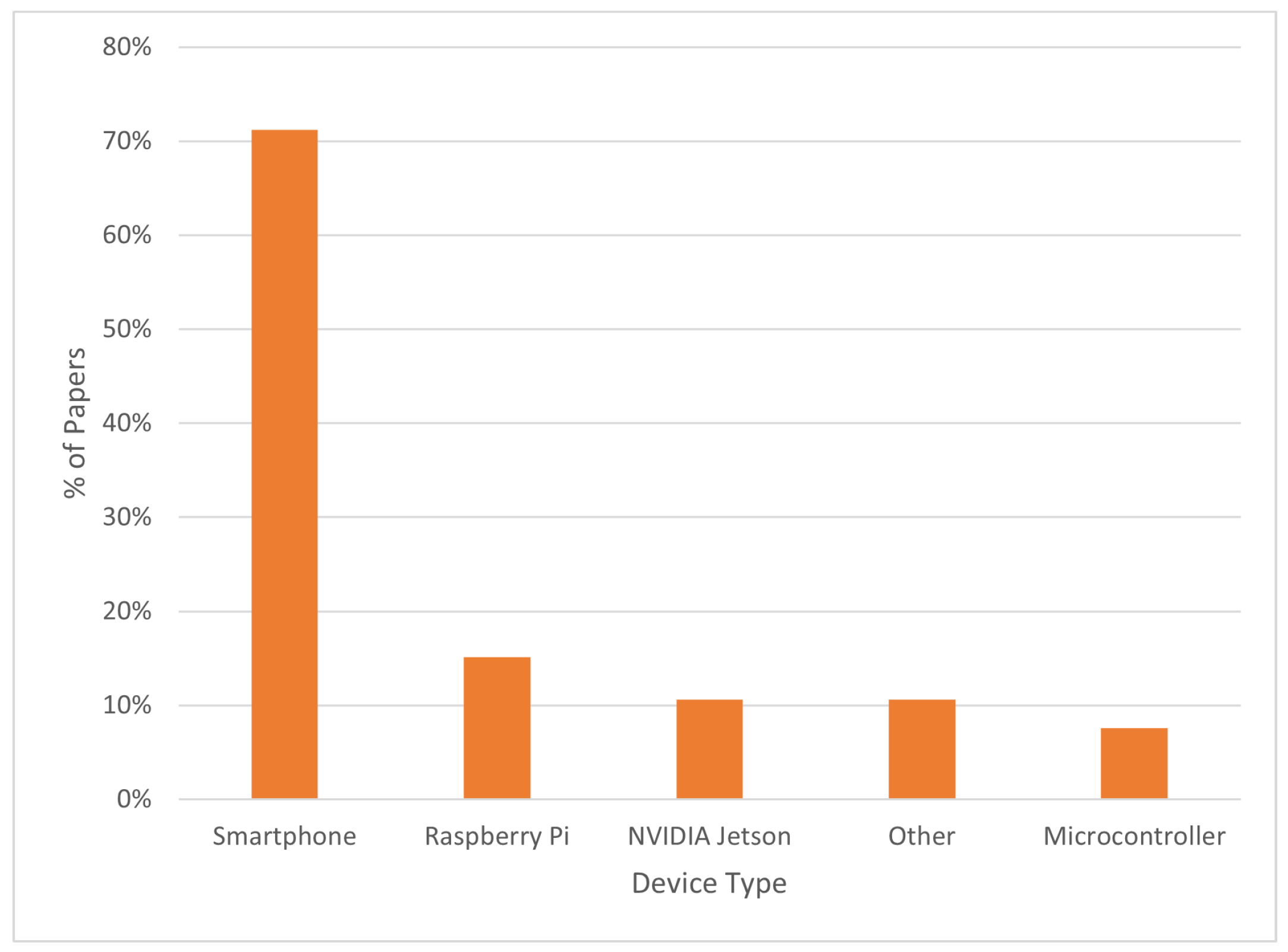

2. Methodology

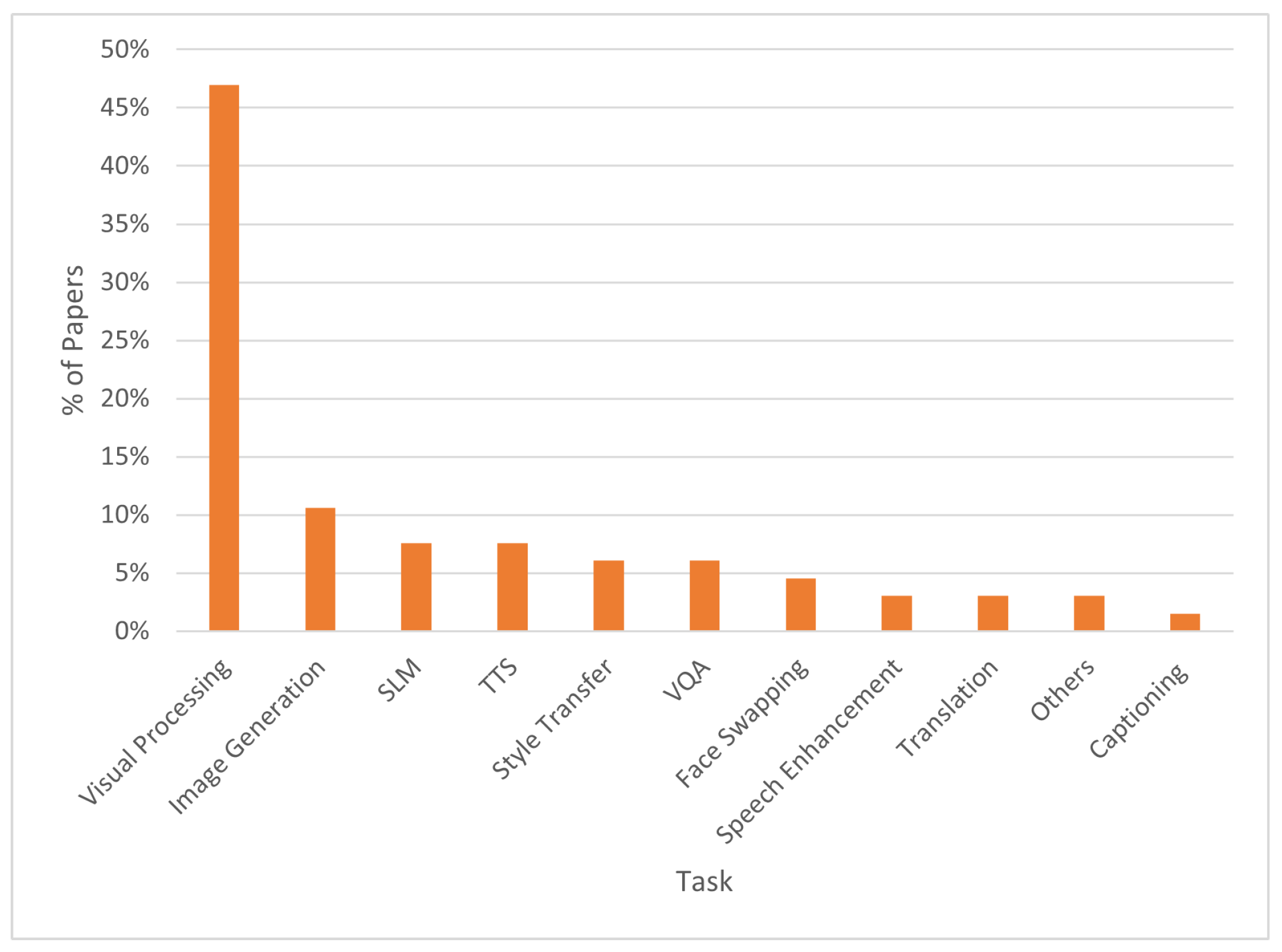

3. Use Cases for Edge GenAI

3.1. Assisting People with Disabilities

3.2. Personal Digital Assistants

3.3. Real-Time Image Enhancement and Super-Resolution

3.4. Video Surveillance and Health Monitoring

3.5. Anonymization

3.6. Autonomicity

4. Edge Generative AI Approaches

4.1. Visual Question Answering

- Attention-based two-stage early exit: processing was stopped early for questions that cannot be answered and for cases where further processing would not improve accuracy.

- Question-aware pruning: only the part of the image most salient to the given question was processed, while the rest of the image was pruned.

- Adapting to grid-based models: region-based models, which are more accurate but computationally expensive, were converted to grid-based models, which are less accurate but more efficient.
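
The first two optimizations above can be illustrated with a minimal sketch. It is not the implementation from the cited work: the early exit here uses a simple confidence threshold on hypothetical per-layer answer logits rather than an attention-based criterion, and the pruning ranks regions by a plain dot product with a hypothetical question vector. The names `prune_regions`, `early_exit_predict`, and all parameters are assumptions made for illustration.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def prune_regions(region_feats, question_vec, keep):
    """Question-aware pruning (illustrative): keep the `keep` regions whose
    features have the largest dot product with the question vector."""
    saliency = [
        sum(r * q for r, q in zip(feat, question_vec)) for feat in region_feats
    ]
    ranked = sorted(range(len(region_feats)), key=lambda i: saliency[i], reverse=True)
    # Return kept indices in their original order so spatial layout is preserved.
    return sorted(ranked[:keep])


def early_exit_predict(layer_logits, threshold):
    """Early exit (illustrative): stop at the first layer whose top answer
    probability reaches `threshold`; otherwise use the last layer."""
    if not layer_logits:
        raise ValueError("layer_logits must contain at least one layer")
    for depth, logits in enumerate(layer_logits, start=1):
        probs = softmax(logits)
        best = max(range(len(probs)), key=lambda i: probs[i])
        if probs[best] >= threshold:
            break
    return best, depth


# Hypothetical toy inputs: four image regions and three exit points.
regions = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5], [-1.0, 0.0]]
question = [1.0, 0.2]
kept = prune_regions(regions, question, keep=2)

logits_per_layer = [[0.1, 0.2, 0.0], [0.0, 3.0, 0.0], [0.0, 9.0, 0.0]]
answer, layers_used = early_exit_predict(logits_per_layer, threshold=0.8)
```

In this toy run the second exit point is already confident, so the third layer is never evaluated. That skipped computation is the saving an early-exit scheme aims for.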

4.2. Image and Video Captioning

4.3. Text-to-Speech

4.4. Speech Enhancement

4.5. Neural Machine Translation

4.6. Neural Style Transfer

4.7. Face Swapping

4.8. Visual Processing Tasks

- Learned Smartphone ISP on Mobile GPUs [65]

- Realistic Bokeh Effect Rendering on Mobile GPUs [66]

- Efficient Single-Image Depth Estimation on Mobile Devices [67]

- Super-Resolution of Compressed Image and Video [68]

- Reversed Image Signal Processing and RAW Reconstruction [69]

- Instagram Filter Removal [70]

4.9. Image Generation

- AI and ML: techniques such as Generative Adversarial Networks (GANs) can create realistic images by learning from a large dataset of existing images.

- Procedural generation: images are created algorithmically from a set of rules or parameters, an approach often used in video games and simulations.
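
As a small illustration of the procedural approach, the sketch below generates an image purely from a rule and two parameters, with no learned model involved. The checkerboard rule, the function names, and the choice of the plain-text PGM format for output are assumptions made for this example.

```python
def checkerboard(width, height, tile):
    """Return a height x width grid of 0/255 grayscale values.

    The rule: a pixel is white when the sum of its tile coordinates is even.
    """
    return [
        [255 if ((x // tile) + (y // tile)) % 2 == 0 else 0 for x in range(width)]
        for y in range(height)
    ]


def to_pgm(pixels):
    """Serialize the grid as a plain-text PGM (P2) image string."""
    height = len(pixels)
    width = len(pixels[0])
    rows = [" ".join(str(value) for value in row) for row in pixels]
    return "P2\n{} {}\n255\n".format(width, height) + "\n".join(rows) + "\n"


image = checkerboard(width=4, height=4, tile=2)
pgm_text = to_pgm(image)
```

Changing `tile` or replacing the rule inside `checkerboard` yields a different image from the same few lines. This is why the approach suits games and simulations that need large amounts of varied content at low storage cost.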

4.10. Small Language Models

5. Common Optimizations

6. Discussion and Future Directions

7. Conclusions

8. Patents

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Fiorenza, G.; Pau, D.P.; Schettini, R. Action Prediction with Edge Generative AI for Mice Pre-clinical Studies. In Proceedings of the 2024 International Conference on Computational Science and Computational Intelligence (CSCI). IEEE, 2024.

- EDGE AI FOUNDATION. https://www.edgeaifoundation.org/about, 2024. Accessed on 4 December 2024.

- About the EDGE AI FOUNDATION. https://www.edgeaifoundation.org/, 2024. Accessed on 4 December 2024.

- Ancilotto, A.; Farella, E. Painting the Starry Night using XiNets. In Proceedings of the 2024 IEEE International Conference on Pervasive Computing and Communications Workshops and other Affiliated Events (PerCom Workshops). IEEE, 2024, pp. 684–689.

- Cao, Q.; Khanna, P.; Lane, N.D.; Balasubramanian, A. Mobivqa: Efficient on-device visual question answering. Proceedings of the ACM on Interactive, Mobile, Wearable and Ubiquitous Technologies 2022, 6, 1–23.

- Tan, H.; Bansal, M. Lxmert: Learning cross-modality encoder representations from transformers. arXiv preprint arXiv:1908.07490 2019.

- Cho, J.; Lu, J.; Schwenk, D.; Hajishirzi, H.; Kembhavi, A. X-lxmert: Paint, caption and answer questions with multi-modal transformers. arXiv preprint arXiv:2009.11278 2020.

- Kim, W.; Son, B.; Kim, I. Vilt: Vision-and-language transformer without convolution or region supervision. In Proceedings of the International conference on machine learning. PMLR, 2021, pp. 5583–5594.

- Yu, Z.; Jin, Z.; Yu, J.; Xu, M.; Wang, H.; Fan, J. Bilaterally Slimmable Transformer for Elastic and Efficient Visual Question Answering. IEEE Transactions on Multimedia 2023, 25, 9543–9556.

- Yu, Z.; Yu, J.; Cui, Y.; Tao, D.; Tian, Q. Deep modular co-attention networks for visual question answering. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2019, pp. 6281–6290.

- Chen, Y.C.; Li, L.; Yu, L.; El Kholy, A.; Ahmed, F.; Gan, Z.; Cheng, Y.; Liu, J. Uniter: Universal image-text representation learning. In Proceedings of the European conference on computer vision. Springer, 2020, pp. 104–120.

- Shen, S.; Li, L.H.; Tan, H.; Bansal, M.; Rohrbach, A.; Chang, K.W.; Yao, Z.; Keutzer, K. How much can clip benefit vision-and-language tasks? arXiv preprint arXiv:2107.06383 2021.

- Rashid, H.A.; Sarkar, A.; Gangopadhyay, A.; Rahnemoonfar, M.; Mohsenin, T. TinyVQA: Compact Multimodal Deep Neural Network for Visual Question Answering on Resource-Constrained Devices. arXiv preprint arXiv:2404.03574 2024.

- Rahnemoonfar, M.; Chowdhury, T.; Sarkar, A.; Varshney, D.; Yari, M.; Murphy, R.R. Floodnet: A high resolution aerial imagery dataset for post flood scene understanding. IEEE Access 2021, 9, 89644–89654.

- Sarkar, A.; Chowdhury, T.; Murphy, R.R.; Gangopadhyay, A.; Rahnemoonfar, M. Sam-vqa: Supervised attention-based visual question answering model for post-disaster damage assessment on remote sensing imagery. IEEE Transactions on Geoscience and Remote Sensing 2023, 61, 1–16.

- Mishra, A.; Agarwala, A.; Tiwari, U.; Rajendiran, V.N.; Miriyala, S.S. Efficient Visual Question Answering on Embedded Devices: Cross-Modality Attention With Evolutionary Quantization. In Proceedings of the 2024 IEEE International Conference on Image Processing (ICIP). IEEE, 2024, pp. 2142–2148.

- Goyal, Y.; Khot, T.; Summers-Stay, D.; Batra, D.; Parikh, D. Making the v in vqa matter: Elevating the role of image understanding in visual question answering. In Proceedings of the IEEE conference on computer vision and pattern recognition, 2017, pp. 6904–6913.

- Safiya, K.; Pandian, R. Computer Vision and Voice Assisted Image Captioning Framework for Visually Impaired Individuals using Deep Learning Approach. In Proceedings of the 2023 4th IEEE Global Conference for Advancement in Technology (GCAT). IEEE, 2023, pp. 1–7.

- Wang, Y.; Lou, S.; Wang, K.; Wang, Y.; Yuan, X.; Liu, H. Automatic Captioning based on Visible and Infrared Images. In Proceedings of the 2024 IEEE International Conference on Robotics and Automation (ICRA). IEEE, 2024, pp. 11312–11318.

- Gao, C.; Dong, Y.; Yuan, X.; Han, Y.; Liu, H. Infrared Image Captioning with Wearable Device. In Proceedings of the 2023 IEEE International Conference on Robotics and Automation (ICRA). IEEE, 2023, pp. 8187–8193.

- Arystanbekov, B.; Kuzdeuov, A.; Nurgaliyev, S.; Varol, H.A. Image Captioning for the Visually Impaired and Blind: A Recipe for Low-Resource Languages. In Proceedings of the 2023 45th Annual International Conference of the IEEE Engineering in Medicine & Biology Society (EMBC). IEEE, 2023, pp. 1–4.

- Uslu, B.; Çaylı, Ö.; Kılıç, V.; Onan, A. Resnet based deep gated recurrent unit for image captioning on smartphone. Avrupa Bilim ve Teknoloji Dergisi 2022, pp. 610–615.

- Kılcı, M.; Çaylı, Ö.; Kılıç, V. Fusion of High-Level Visual Attributes for Image Captioning. Avrupa Bilim ve Teknoloji Dergisi 2023, pp. 161–168.

- Nguyen, H.; Huynh, T.; Tran, N.; Nguyen, T. MyUEVision: an application generating image caption for assisting visually impaired people. Journal of Enabling Technologies 2024, 18, 248–264.

- Aydın, S.; Çaylı, Ö.; Kılıç, V.; Onan, A. Sequence-to-sequence video captioning with residual connected gated recurrent units. Avrupa Bilim ve Teknoloji Dergisi 2022, pp. 380–386.

- Pezzuto Damaceno, R.J.; Cesar Jr, R.M. An End-to-End Deep Learning Approach for Video Captioning Through Mobile Devices. In Proceedings of the Iberoamerican Congress on Pattern Recognition. Springer, 2023, pp. 715–729.

- Huang, L.H.; Lu, C.H. Average Sparse Attention for Dense Video Captioning From Multiperspective Edge-Computing Cameras. IEEE Systems Journal 2024.

- Huang, S.H.; Lu, C.H. Sequence-Aware Learnable Sparse Mask for Frame-Selectable End-to-End Dense Video Captioning for IoT Smart Cameras. IEEE Internet of Things Journal 2023.

- Lu, C.H.; Fan, G.Y. Environment-aware dense video captioning for IoT-enabled edge cameras. IEEE Internet of Things Journal 2022, 9, 4554–4564.

- Yousif, A.J.; Al-Jammas, M.H. A Lightweight Visual Understanding System for Enhanced Assistance to the Visually Impaired Using an Embedded Platform. Diyala Journal of Engineering Sciences 2024, pp. 146–162.

- Wang, N.; Xie, J.; Luo, H.; Cheng, Q.; Wu, J.; Jia, M.; Li, L. Efficient image captioning for edge devices. In Proceedings of the AAAI Conference on Artificial Intelligence, 2023, Vol. 37, pp. 2608–2616.

- Lin, J.; Yin, H.; Ping, W.; Molchanov, P.; Shoeybi, M.; Han, S. Vila: On pre-training for visual language models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2024, pp. 26689–26699.

- Lin, J.; Tang, J.; Tang, H.; Yang, S.; Chen, W.M.; Wang, W.C.; Xiao, G.; Dang, X.; Gan, C.; Han, S. AWQ: Activation-aware Weight Quantization for On-Device LLM Compression and Acceleration. Proceedings of Machine Learning and Systems 2024, 6, 87–100.

- Atienza, R. Efficientspeech: An on-device text to speech model. In Proceedings of the ICASSP 2023-2023 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE, 2023, pp. 1–5.

- Chevi, R.; Prasojo, R.E.; Aji, A.F.; Tjandra, A.; Sakti, S. Nix-TTS: Lightweight and end-to-end text-to-speech via module-wise distillation. In Proceedings of the 2022 IEEE Spoken Language Technology Workshop (SLT). IEEE, 2023, pp. 970–976.

- Kim, J.; Kong, J.; Son, J. Conditional variational autoencoder with adversarial learning for end-to-end text-to-speech. In Proceedings of the International Conference on Machine Learning. PMLR, 2021, pp. 5530–5540.

- Piper. https://github.com/rhasspy/piper, 2022. Accessed on 9 January 2025.

- Kong, J.; Kim, J.; Bae, J. Hifi-gan: Generative adversarial networks for efficient and high fidelity speech synthesis. Advances in neural information processing systems 2020, 33, 17022–17033.

- Ren, Y.; Hu, C.; Tan, X.; Qin, T.; Zhao, S.; Zhao, Z.; Liu, T.Y. Fastspeech 2: Fast and high-quality end-to-end text to speech. arXiv preprint arXiv:2006.04558 2020.

- Nguyen, V.T.; Pham, H.C.; Mac, D.K. How to Push the Fastest Model 50x Faster: Streaming Non-Autoregressive Speech Synthesis on Resouce-Limited Devices. In Proceedings of the ICASSP 2023-2023 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE, 2023, pp. 1–5.

- Yang, G.; Yang, S.; Liu, K.; Fang, P.; Chen, W.; Xie, L. Multi-band melgan: Faster waveform generation for high-quality text-to-speech. In Proceedings of the 2021 IEEE Spoken Language Technology Workshop (SLT). IEEE, 2021, pp. 492–498.

- Ciapponi, S.; Paissan, F.; Ancilotto, A.; Farella, E. TinyVocos: Neural Vocoders on MCUs. In Proceedings of the 2024 IEEE 5th International Symposium on the Internet of Sounds (IS2). IEEE, 2024, pp. 1–10.

- Park, S.; Choo, K.; Lee, J.; Porov, A.V.; Osipov, K.; Sung, J.S. Bunched LPCNet2: Efficient neural vocoders covering devices from cloud to edge. arXiv preprint arXiv:2203.14416 2022.

- Chen, S.; Weng, J.; Hong, S.; He, Y.; Zou, Y.; Wu, K. TransFiLM: An Efficient and Lightweight Audio Enhancement Network for Low-Cost Wearable Sensors. In Proceedings of the 2024 IEEE 21st International Conference on Mobile Ad-Hoc and Smart Systems (MASS). IEEE, 2024, pp. 150–158.

- Šljubura, N.; Šimić, M.; Bilas, V. Deep Learning Based Speech Enhancement on Edge Devices Applied to Assistive Work Equipment. In Proceedings of the 2024 IEEE Sensors Applications Symposium (SAS). IEEE, 2024, pp. 1–6.

- Nossier, S.A.; Wall, J.A.; Moniri, M.; Glackin, C.; Cannings, N. Convolutional Recurrent Smart Speech Enhancement Architecture for Hearing Aids. In Proceedings of the INTERSPEECH, 2022, pp. 5428–5432.

- Vaswani, A. Attention is all you need. Advances in Neural Information Processing Systems 2017.

- Tan, Z.; Yang, Z.; Zhang, M.; Liu, Q.; Sun, M.; Liu, Y. Dynamic multi-branch layers for on-device neural machine translation. IEEE/ACM Transactions on Audio, Speech, and Language Processing 2022, 30, 958–967.

- Li, S.; Zhang, P.; Gan, G.; Lv, X.; Wang, B.; Wei, J.; Jiang, X. Hypoformer: Hybrid decomposition transformer for edge-friendly neural machine translation. In Proceedings of the 2022 Conference on Empirical Methods in Natural Language Processing, 2022, pp. 7056–7068.

- Kim, Y.; Rush, A.M. Sequence-level knowledge distillation. arXiv preprint arXiv:1606.07947 2016.

- Ancilotto, A.; Paissan, F.; Farella, E. Xinet: Efficient neural networks for tinyml. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2023, pp. 16968–16977.

- Huo, J.; Kong, M.; Li, W.; Wu, J.; Lai, Y.K.; Gao, Y. Towards efficient image and video style transfer via distillation and learnable feature transformation. Computer Vision and Image Understanding 2024, 241, 103947.

- Suresh, A.P.; Jain, S.; Noinongyao, P.; Ganguly, A.; Watchareeruetai, U.; Samacoits, A. Fastclipstyler: Optimisation-free text-based image style transfer using style representations. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, 2024, pp. 7316–7325.

- Kwon, G.; Ye, J.C. Clipstyler: Image style transfer with a single text condition. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2022, pp. 18062–18071.

- Reimers, N. Sentence-BERT: Sentence Embeddings using Siamese BERT-Networks. arXiv preprint arXiv:1908.10084 2019.

- Ganugula, P.; Kumar, Y.; Reddy, N.; Chellingi, P.; Thakur, A.; Kasera, N.; Anand, C.S. MOSAIC: Multi-object segmented arbitrary stylization using CLIP. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2023, pp. 892–903.

- Xu, Z.; Hong, Z.; Ding, C.; Zhu, Z.; Han, J.; Liu, J.; Ding, E. Mobilefaceswap: A lightweight framework for video face swapping. In Proceedings of the AAAI Conference on Artificial Intelligence, 2022, Vol. 36, pp. 2973–2981.

- Ancilotto, A.; Paissan, F.; Farella, E. PhiNet-GAN: Bringing real-time face swapping to embedded devices. In Proceedings of the 2023 IEEE International Conference on Pervasive Computing and Communications Workshops and other Affiliated Events (PerCom Workshops). IEEE, 2023, pp. 677–682.

- Ancilotto, A.; Paissan, F.; Farella, E. Ximswap: Many-to-many face swapping for tinyml. ACM Transactions on Embedded Computing Systems 2024, 23, 1–16.

- Berger, G.; Dhingra, M.; Mercier, A.; Savani, Y.; Panchal, S.; Porikli, F. QuickSRNet: Plain Single-Image Super-Resolution Architecture for Faster Inference on Mobile Platforms. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2023, pp. 2187–2196.

- Conde, M.V.; Vasluianu, F.; Vazquez-Corral, J.; Timofte, R. Perceptual image enhancement for smartphone real-time applications. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, 2023, pp. 1848–1858.

- Li, H.; Guan, J.; Rui, L.; Ma, S.; Gu, L.; Zhu, Z. TinyLUT: Tiny Look-Up Table for Efficient Image Restoration at the Edge. In Proceedings of the Thirty-eighth Annual Conference on Neural Information Processing Systems, 2024.

- Ignatov, A.; Timofte, R.; Denna, M.; Younes, A.; Gankhuyag, G.; Huh, J.; Kim, M.K.; Yoon, K.; Moon, H.C.; Lee, S.; et al. Efficient and accurate quantized image super-resolution on mobile NPUs, mobile AI & AIM 2022 challenge: report. In Proceedings of the European conference on computer vision. Springer, 2022, pp. 92–129.

- Ignatov, A.; Timofte, R.; Chiang, C.M.; Kuo, H.K.; Xu, Y.S.; Lee, M.Y.; Lu, A.; Cheng, C.M.; Chen, C.C.; Yong, J.Y.; et al. Power efficient video super-resolution on mobile npus with deep learning, mobile ai & aim 2022 challenge: Report. In Proceedings of the European Conference on Computer Vision. Springer, 2022, pp. 130–152.

- Ignatov, A.; Timofte, R.; Liu, S.; Feng, C.; Bai, F.; Wang, X.; Lei, L.; Yi, Z.; Xiang, Y.; Liu, Z.; et al. Learned smartphone ISP on mobile GPUs with deep learning, mobile AI & AIM 2022 challenge: report. In Proceedings of the European Conference on Computer Vision. Springer, 2022, pp. 44–70.

- Ignatov, A.; Timofte, R.; Zhang, J.; Zhang, F.; Yu, G.; Ma, Z.; Wang, H.; Kwon, M.; Qian, H.; Tong, W.; et al. Realistic bokeh effect rendering on mobile gpus, mobile ai & aim 2022 challenge: report. In Proceedings of the European Conference on Computer Vision. Springer, 2022, pp. 153–173.

- Ignatov, A.; Malivenko, G.; Timofte, R.; Treszczotko, L.; Chang, X.; Ksiazek, P.; Lopuszynski, M.; Pioro, M.; Rudnicki, R.; Smyl, M.; et al. Efficient single-image depth estimation on mobile devices, mobile AI & AIM 2022 challenge: report. In Proceedings of the European Conference on Computer Vision. Springer, 2022, pp. 71–91.

- Yang, R.; Timofte, R.; Li, X.; Zhang, Q.; Zhang, L.; Liu, F.; He, D.; Li, F.; Zheng, H.; Yuan, W.; et al. Aim 2022 challenge on super-resolution of compressed image and video: Dataset, methods and results. In Proceedings of the European Conference on Computer Vision. Springer, 2022, pp. 174–202.

- Conde, M.V.; Timofte, R.; Huang, Y.; Peng, J.; Chen, C.; Li, C.; Pérez-Pellitero, E.; Song, F.; Bai, F.; Liu, S.; et al. Reversed image signal processing and RAW reconstruction. AIM 2022 challenge report. In Proceedings of the European Conference on Computer Vision. Springer, 2022, pp. 3–26.

- Kınlı, F.; Menteş, S.; Özcan, B.; Kıraç, F.; Timofte, R.; Zuo, Y.; Wang, Z.; Zhang, X.; Zhu, Y.; Li, C.; et al. AIM 2022 challenge on Instagram filter removal: methods and results. In Proceedings of the European Conference on Computer Vision. Springer, 2022, pp. 27–43.

- Sargsyan, A.; Navasardyan, S.; Xu, X.; Shi, H. Mi-gan: A simple baseline for image inpainting on mobile devices. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2023, pp. 7335–7345.

- Verma, S.; Sharma, A.; Sheshadri, R.; Raman, S. GraphFill: Deep Image Inpainting using Graphs. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, 2024, pp. 4996–5006.

- Ayazoglu, M.; Bilecen, B.B. Xcat-lightweight quantized single image super-resolution using heterogeneous group convolutions and cross concatenation. In Proceedings of the European Conference on Computer Vision. Springer, 2022, pp. 475–488.

- Gendy, G.; Sabor, N.; Hou, J.; He, G. Real-time channel mixing net for mobile image super-resolution. In Proceedings of the European Conference on Computer Vision. Springer, 2022, pp. 573–590.

- Luo, Z.; Li, Y.; Yu, L.; Wu, Q.; Wen, Z.; Fan, H.; Liu, S. Fast nearest convolution for real-time efficient image super-resolution. In Proceedings of the European conference on computer vision. Springer, 2022, pp. 561–572.

- Angarano, S.; Salvetti, F.; Martini, M.; Chiaberge, M. Generative adversarial super-resolution at the edge with knowledge distillation. Engineering Applications of Artificial Intelligence 2023, 123, 106407.

- Chao, J.; Zhou, Z.; Gao, H.; Gong, J.; Yang, Z.; Zeng, Z.; Dehbi, L. Equivalent transformation and dual stream network construction for mobile image super-resolution. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2023, pp. 14102–14111.

- Deng, W.; Yuan, H.; Deng, L.; Lu, Z. Reparameterized residual feature network for lightweight image super-resolution. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2023, pp. 1712–1721.

- Gankhuyag, G.; Huh, J.; Kim, M.; Yoon, K.; Moon, H.; Lee, S.; Jeong, J.; Kim, S.; Choe, Y. Skip-concatenated image super-resolution network for mobile devices. IEEE Access 2022, 11, 4972–4982.

- Liu, Y.; Fu, X.; Zhou, L.; Li, C. Texture-Enhanced Framework by Differential Filter-Based Re-parameterization for Super-Resolution on PC/Mobile. Neural Processing Letters 2023, 55, 12183–12203.

- Sun, X.; Wang, S.; Yang, J.; Wei, F.; Wang, Y. Two-stage deep single-image super-resolution with multiple blur kernels for Internet of Things. IEEE Internet of Things Journal 2023, 10, 16440–16449.

- Gao, S.; Zheng, C.; Zhang, X.; Liu, S.; Wu, B.; Lu, K.; Zhang, D.; Wang, N. RCBSR: re-parameterization convolution block for super-resolution. In Proceedings of the European Conference on Computer Vision. Springer, 2022, pp. 540–548.

- Lian, W.; Lian, W. Sliding window recurrent network for efficient video super-resolution. In Proceedings of the European Conference on Computer Vision. Springer, 2022, pp. 591–601.

- Xu, T.; Jia, Z.; Zhang, Y.; Bao, L.; Sun, H. Elsr: Extreme low-power super resolution network for mobile devices. arXiv preprint arXiv:2208.14600 2022.

- Yue, S.; Li, C.; Zhuge, Z.; Song, R. Eesrnet: A network for energy efficient super-resolution. In Proceedings of the European Conference on Computer Vision. Springer, 2022, pp. 602–618.

- Gou, W.; Yi, Z.; Xiang, Y.; Li, S.; Liu, Z.; Kong, D.; Xu, K. SYENet: A simple yet effective network for multiple low-level vision tasks with real-time performance on mobile device. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2023, pp. 12182–12195.

- Liao, J.; Peng, C.; Jiang, L.; Ma, Y.; Liang, W.; Li, K.C.; Poniszewska-Maranda, A. MWformer: a novel low computational cost image restoration algorithm. The Journal of Supercomputing 2024, pp. 1–25.

- Li, Y.; Wu, J.; Shi, Z. Lightweight neural network for enhancing imaging performance of under-display camera. IEEE Transactions on Circuits and Systems for Video Technology 2023, 34, 71–84.

- Fu, Z.; Song, M.; Ma, C.; Nasti, J.; Tyagi, V.; Lloyd, G.; Tang, W. An efficient hybrid model for low-light image enhancement in mobile devices. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2022, pp. 3057–3066.

- A Sharif, S.; Myrzabekov, A.; Khudjaev, N.; Tsoy, R.; Kim, S.; Lee, J. Learning optimized low-light image enhancement for edge vision tasks. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2024, pp. 6373–6383.

- Liu, Z.; Jin, M.; Chen, Y.; Liu, H.; Yang, C.; Xiong, H. Lightweight network towards real-time image denoising on mobile devices. In Proceedings of the 2023 IEEE International Conference on Image Processing (ICIP). IEEE, 2023, pp. 2270–2274.

- Flepp, R.; Ignatov, A.; Timofte, R.; Van Gool, L. Real-World Mobile Image Denoising Dataset with Efficient Baselines. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2024, pp. 22368–22377.

- Xiang, L.; Zhou, J.; Liu, J.; Wang, Z.; Huang, H.; Hu, J.; Han, J.; Guo, Y.; Ding, G. ReMoNet: Recurrent multi-output network for efficient video denoising. In Proceedings of the AAAI Conference on Artificial Intelligence, 2022, Vol. 36, pp. 2786–2794.

- Ignatov, A.; Malivenko, G.; Timofte, R.; Tseng, Y.; Xu, Y.S.; Yu, P.H.; Chiang, C.M.; Kuo, H.K.; Chen, M.H.; Cheng, C.M.; et al. Pynet-v2 mobile: Efficient on-device photo processing with neural networks. In Proceedings of the 2022 26th International Conference on Pattern Recognition (ICPR). IEEE, 2022, pp. 677–684.

- Ignatov, A.; Sycheva, A.; Timofte, R.; Tseng, Y.; Xu, Y.S.; Yu, P.H.; Chiang, C.M.; Kuo, H.K.; Chen, M.H.; Cheng, C.M.; et al. MicroISP: processing 32mp photos on mobile devices with deep learning. In Proceedings of the European Conference on Computer Vision. Springer, 2022, pp. 729–746.

- Raimundo, D.W.; Ignatov, A.; Timofte, R. LAN: Lightweight attention-based network for RAW-to-RGB smartphone image processing. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2022, pp. 808–816.

- Zheng, J.; Fan, Z.; Wu, X.; Wu, Y.; Zhang, F. Residual Feature Distillation Channel Spatial Attention Network for ISP on Smartphone. In Proceedings of the European Conference on Computer Vision. Springer, 2022, pp. 635–650.

- Rombach, R.; Blattmann, A.; Lorenz, D.; Esser, P.; Ommer, B. High-resolution image synthesis with latent diffusion models. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2022, pp. 10684–10695.

- Orhon, A.; Siracusa, M.; Wadhwa, A. Stable Diffusion with Core ML on Apple Silicon. https://machinelearning.apple.com/research/stable-diffusion-coreml-apple-silicon, 2022. Accessed on 2 January 2025.

- Asghar, Z.; Hou, J. World’s first on-device demonstration of Stable Diffusion on an Android phone. https://www.qualcomm.com/news/onq/2023/02/worlds-first-on-device-demonstration-of-stable-diffusion-on-android, 2023. Accessed on 2 January 2025.

- Chen, Y.H.; Sarokin, R.; Lee, J.; Tang, J.; Chang, C.L.; Kulik, A.; Grundmann, M. Speed is all you need: On-device acceleration of large diffusion models via gpu-aware optimizations. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2023, pp. 4651–4655.

- Choi, J.; Kim, M.; Ahn, D.; Kim, T.; Kim, Y.; Jo, D.; Jeon, H.; Kim, J.J.; Kim, H. Squeezing large-scale diffusion models for mobile. arXiv preprint arXiv:2307.01193 2023.

- Hu, D.; Chen, J.; Huang, X.; Coskun, H.; Sahni, A.; Gupta, A.; Goyal, A.; Lahiri, D.; Singh, R.; Idelbayev, Y.; et al. SnapGen: Taming High-Resolution Text-to-Image Models for Mobile Devices with Efficient Architectures and Training. arXiv preprint arXiv:2412.09619 2024.

- Castells, T.; Song, H.K.; Piao, T.; Choi, S.; Kim, B.K.; Yim, H.; Lee, C.; Kim, J.G.; Kim, T.H. EdgeFusion: On-Device Text-to-Image Generation. arXiv preprint arXiv:2404.11925 2024.

- Li, Y.; Wang, H.; Jin, Q.; Hu, J.; Chemerys, P.; Fu, Y.; Wang, Y.; Tulyakov, S.; Ren, J. Snapfusion: Text-to-image diffusion model on mobile devices within two seconds. Advances in Neural Information Processing Systems 2024, 36.

- Kim, B.K.; Song, H.K.; Castells, T.; Choi, S. Bk-sdm: A lightweight, fast, and cheap version of stable diffusion. In Proceedings of the European Conference on Computer Vision. Springer, 2025, pp. 381–399.

- Zhao, Y.; Xu, Y.; Xiao, Z.; Jia, H.; Hou, T. Mobilediffusion: Instant text-to-image generation on mobile devices. In Proceedings of the European Conference on Computer Vision. Springer, 2025, pp. 225–242.

- Xu, J.; Li, Z.; Chen, W.; Wang, Q.; Gao, X.; Cai, Q.; Ling, Z. On-device language models: A comprehensive review. arXiv preprint arXiv:2409.00088 2024.

- Zheng, Y.; Chen, Y.; Qian, B.; Shi, X.; Shu, Y.; Chen, J. A Review on Edge Large Language Models: Design, Execution, and Applications. arXiv preprint arXiv:2410.11845 2024.

- Laskaridis, S.; Katevas, K.; Minto, L.; Haddadi, H. Melting point: Mobile evaluation of language transformers. In Proceedings of the 30th Annual International Conference on Mobile Computing and Networking, 2024, pp. 890–907.

- Xiao, J.; Huang, Q.; Chen, X.; Tian, C. Large language model performance benchmarking on mobile platforms: A thorough evaluation. arXiv preprint arXiv:2410.03613 2024.

- Wang, F.; Zhang, Z.; Zhang, X.; Wu, Z.; Mo, T.; Lu, Q.; Wang, W.; Li, R.; Xu, J.; Tang, X.; et al. A comprehensive survey of small language models in the era of large language models: Techniques, enhancements, applications, collaboration with llms, and trustworthiness. arXiv preprint arXiv:2411.03350 2024.

- Liu, Z.; Zhao, C.; Iandola, F.; Lai, C.; Tian, Y.; Fedorov, I.; Xiong, Y.; Chang, E.; Shi, Y.; Krishnamoorthi, R.; et al. Mobilellm: Optimizing sub-billion parameter language models for on-device use cases. arXiv preprint arXiv:2402.14905 2024.

- Abdin, M.; Aneja, J.; Awadalla, H.; Awadallah, A.; Awan, A.A.; Bach, N.; Bahree, A.; Bakhtiari, A.; Bao, J.; Behl, H.; et al. Phi-3 technical report: A highly capable language model locally on your phone. arXiv preprint arXiv:2404.14219 2024.

- Hu, S.; Tu, Y.; Han, X.; He, C.; Cui, G.; Long, X.; Zheng, Z.; Fang, Y.; Huang, Y.; Zhao, W.; et al. Minicpm: Unveiling the potential of small language models with scalable training strategies. arXiv preprint arXiv:2404.06395 2024.

- Thawakar, O.; Vayani, A.; Khan, S.; Cholakal, H.; Anwer, R.M.; Felsberg, M.; Baldwin, T.; Xing, E.P.; Khan, F.S. Mobillama: Towards accurate and lightweight fully transparent gpt. arXiv preprint arXiv:2402.16840 2024.

- Yi, R.; Li, X.; Xie, W.; Lu, Z.; Wang, C.; Zhou, A.; Wang, S.; Zhang, X.; Xu, M. Phonelm: an efficient and capable small language model family through principled pre-training. arXiv preprint arXiv:2411.05046 2024.

| Paper | Approach | Params | Footprint | Device | Latency (ms) |
|---|---|---|---|---|---|
| Cao et al. [5] - MobiVQA | Set of optimizations applied to LXMERT | - | - | NVIDIA Jetson TX2 | 361 |
| | | | | Pixel 3XL | 165 |
| | Set of optimizations applied to ViLT | - | - | NVIDIA Jetson TX2 | 213 |
| Yu et al. [9] | Slimmable framework applied to MCAN: MCAN-BTS (D = 512, L = 6) | 59 M | - | Snapdragon 888 | 167 |
| | | | | Dimensity 1100 | 361 |
| | | | | Snapdragon 660 | 439 |
| | MCAN-BTS (1/4 D, L) | - | - | Snapdragon 888 | 87 |
| | | | | Dimensity 1100 | 127 |
| | | | | Snapdragon 660 | 197 |
| | MCAN-BTS (1/4 D, 1/6 L) | 9 M | - | Snapdragon 888 | 58 |
| | | | | Dimensity 1100 | 93 |
| | | | | Snapdragon 660 | 160 |
| Rashid et al. [13] - TinyVQA | Compact multimodal DNN developed through KD and int8 quantization | - | 339 KiB | GAP8 processor | 56 |
| Mishra et al. [16] | Transformer-based architecture with int8 quantization | - | 58 MB | Samsung Galaxy S23 | 2.8 |
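
Two of the approaches in the table rely on int8 quantization to shrink the model footprint. The sketch below shows one common variant, symmetric per-tensor quantization, on a hypothetical weight list. It is an assumption-laden illustration of the general idea, not the quantization scheme used by any of the cited works, and the function names are invented for this example.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization (illustrative).

    Maps floats to integers in [-127, 127] using one shared scale factor.
    """
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the int8 codes."""
    return [q * scale for q in quantized]


# Hypothetical weights; a real layer would hold thousands of values.
weights = [0.5, -1.27, 0.0, 1.27]
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)

# Storing one byte per weight instead of a 4-byte float32.
float32_bytes = 4 * len(weights)
int8_bytes = 1 * len(weights)
```

Each restored weight differs from the original by at most half the scale factor, while storage drops to roughly a quarter of the float32 size, plus the cost of keeping the scale itself.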

| Paper | Approach | Params | Footprint | Device | RTF |
|---|---|---|---|---|---|
| Chevi et al. [35] - Nix-TTS | Non-autoregressive, end-to-end model developed through KD | 5.23 M | 21.2 MB | Raspberry Pi 3B | 1.97 |
| Atienza [34] - EfficientSpeech | Non-autoregressive, two-stage model | 1.2 M | - | Raspberry Pi 4B | 1.7 |
| Nguyen et al. [40] - FastStreamSpeech | Non-autoregressive, two-stage model | - | - | MediaTek Helio G35 processor | 0.01 - 0.1 |
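
The RTF column reports the real-time factor. A minimal sketch of how such a value can be computed is given below, assuming the convention RTF = synthesis time / duration of the generated audio, so that values below 1 mean faster than real time. Some works report the inverse ratio, so values taken from different papers are not necessarily directly comparable. The function name and the timings are hypothetical.

```python
def real_time_factor(synthesis_seconds, audio_seconds):
    """Return synthesis time divided by the duration of the generated audio.

    Under this convention, a value below 1.0 means the system produces
    speech faster than it takes to play it back.
    """
    if audio_seconds <= 0:
        raise ValueError("audio_seconds must be positive")
    return synthesis_seconds / audio_seconds


# Hypothetical measurement: 0.5 s of compute for a 5 s utterance.
rtf = real_time_factor(synthesis_seconds=0.5, audio_seconds=5.0)
faster_than_real_time = rtf < 1.0
```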

| Paper | Task | Contribution | Devices |

| Sargsyan et al. [71] - Mi-GAN | Image Inpaitning | Combination of adversarial training, model reparametrization, and knowledge distillation for high-quality and efficient inpainting. | iPhone7, iPhoneX, iPad mini (5th gen), iPhone 14-pro-max, Galaxy Tab S7+, Samsung Galaxy S8, vivo Y12 |

| Verma et al. [72] - GraphFill | Image Inpaitning | Coarser-to-finer method that employs a Graph Neural Network (GNN) and a GAN-based Refine Network, demonstrating the effectiveness of GNNs for image inpainting. | Samsung Galaxy S23 |

| Ayazoglu et al. [73] - XCAT | Single-Image Super-Resolution | Mobile device-friendly quantized network incorporating the proposed HXBlock. | Mali-G71 MP2 GPU, Synaptics Dolphin NPU |

| Gendy et al. [74] - CDFM-Mobile | Single-Image Super-Resolution | SISR model incorporating the developed CDFM block for optimized performance and speed. | Snapdragon 970, Synaptics VS680 board |

| Luo et al. [75] - NCNet | Single-Image Super-Resolution | Mobile-friendly fast nearest convolution plain network (NCNet) that achieves the same performance as nearest interpolation residual learning while being faster. | Google Pixel 4 |

| Angarano et al. [76] | Single-Image Super-Resolution | They proposed EdgeSRGAN, a GAN-based solution for SISR, along with EdgeSRGAN-tiny, obtained through KD from EdgeSRGAN. | Google Coral Edge TPU USB Accelerator |

| Berger et al. [60] - QuickSRNet | Single-Image Super-Resolution | VGG-like architecture for SISR that demonstrates the effectiveness of simpler designs in achieving high levels of accuracy and on-device performance. | Snapdragon 8 Gen 1 |

| Chao et al. [77] - ETDS | Single-Image Super-Resolution | Lightweight network named ETDS for real-time SR on mobile devices based on Equivalent Transformation and dual-stream networks, achieving superior speed and quality compared to previous lightweight SR methods. | Dimensity 8100, Snapdragon 888, Snapdragon 8 Gen 1 |

| Deng et al. [78] - RepRFN | Single-Image Super-Resolution | They proposed a lightweight network structure based on reparameterization, named RepRFN, and designed a multi-scale feature fusion structure. RepRFN achieves a balance between performance and efficiency. | Snapdragon 865, Snapdragon 820, Rockchip RK3588 |

| Gankhuyag et al. [79] - SCSRN | Single-Image Super-Resolution | Highly efficient SR network that delivers high accuracy and fast speed by excluding the element-wise addition operation and introducing a lighter skip-concatenated layer. | Galaxy Note20, Galaxy Z Fold4, Synaptics Dolphin smart TV platform |

| Liu et al. [80] - TELNet | Single-Image Super-Resolution | They designed an SR network named TELNet based on the proposed RepDFSR framework to perform SR tasks on mobile devices. | Huawei Mate 40 Pro, Synaptics VS680 board |

| Sun et al. [81] - SSDSR | Single-Image Super-Resolution | Two-stage semantic and spatial deep SR model suitable for the IoT environment and capable of handling a variety of blur kernels. | NVIDIA Jetson Nano |

| Gao et al. [82] - RCBSR | Video Super-Resolution | Optimized ECBSR from three aspects: architecture, NAS, and training strategy. | Dimensity 9000 |

| Lian et al. [83] - SWRN | Video Super-Resolution | They proposed a lightweight VSR network named SWRN that utilizes the proposed sliding-window strategy and can be easily deployed on mobile devices for real-time SR. | Huawei Mate 10 Pro |

| Xu et al. [84] - ELSR | Video Super-Resolution | Network with 3×3 convolution, PReLU activation and pixel shuffle operation, which can run in real-time on mobile devices with very low power consumption. | Dimensity 9000 |

| Yue et al. [85] - SEESRNet | Video Super-Resolution | They designed SEESRNet based on the proposed EESRNet, which can reduce power consumption while maintaining reasonably high performance. | Dimensity 9000 |

| Gou et al. [86] - SYENet | Multiple low-level vision tasks | To handle multiple low-level vision tasks on mobile devices in real-time, they proposed SYENet, which consists of two asymmetrical branches fused with a Quadratic Connections Unit. | Snapdragon 8 Gen 1 |

| Li et al. [62] - TinyLUT | Image Restoration | They proposed TinyLUT, which utilizes the proposed separable mapping strategy and dynamic discretization mechanism. TinyLUT achieved significant restoration accuracy with minimal storage consumption. | Xiaomi 11, Raspberry Pi 4B |

| Liao et al. [87] - MWformer | Image Restoration | Algorithm named MWformer that combines wavelet transform and transformer to reduce computational overhead, achieving high performance in numerous image restoration tasks. | NVIDIA Jetson Xavier NX |

| Conde et al. [61] - LPIENet | Image Enhancement | Lightweight UNet-based architecture characterized by the inverted residual attention (IRA) block, achieving real-time performance on smartphones. | Samsung A50, OnePlus Nord 2 5G, OnePlus 8 Pro, Realme 8 Pro |

| Li et al. [88] | Under-Display Camera (UDC) Image Enhancement | They proposed a network that can restore UDC images in a blind manner and a lightweight variant, where the architectural redundancy in learning multi-scale features is reduced. | Razer Phone 2 |

| Fu et al. [89] - LLNet | Low-Light Image Enhancement (LLIE) | Efficient hybrid model combining a lite CNN and a non-trainable linear transformation estimation model for image enhancement on mobile devices. | SM8450 + Adreno660, SM8250 + Adreno650, SM7325 + Adreno642, SM7250 + Adreno620, SM6375 + Adreno619, SM6115 + Adreno610 |

| Sharif et al. [90] | Low-Light Image Enhancement (LLIE) | LLIE learning framework for edge devices that incorporates a lightweight deep model (fully convolutional encoder-decoder architecture) and a deployment strategy. | NVIDIA Jetson Orin |

| Liu et al. [91] - MFDNet | Image Denoising | They identified the network architectures and operations that can run on NPUs with low latency and built a mobile-friendly denoising network based on these findings. | iPhone 11, iPhone 14 Pro |

| Flepp et al. [92] - SplitterNet | Image Denoising | They introduced MIDD, a large mobile image denoising dataset, and SplitterNet, an efficient baseline model that is optimized both in terms of denoising and inference performance. | Snapdragon 8 Gen 1, 2 and 3, Snapdragon 888, Dimensity 9000, 9200 and 9300, Samsung Exynos 2100 and 2200, Google Tensor G1 and G2 |

| Xiang et al. [93] - ReMoNet | Video Denoising | Recurrent Multi-output Network (ReMoNet) composed of the Recurrent Temporal Fusion (RTF) block and the Multi-output Aggregation (MOA) block, achieving superior performance with significantly less computational cost. | Snapdragon 888 |

| Ignatov et al. [94] - PyNET-V2 | Image Signal Processing | Based on PyNET, they proposed the PyNET-V2 Mobile CNN architecture, which yields both good visual reconstruction results and low latency on mobile devices. | Dimensity Next, 9000, 1000+ and 820, Exynos 990 and 2100, Kirin 9000, Snapdragon 888, Google Tensor |

| Ignatov et al. [95] - MicroISP | Image Signal Processing | DL-based image signal processing (ISP) architecture for mobile devices named MicroISP that provides comparable or better visual results than traditional mobile ISP systems, while outperforming previous DL-based solutions. | Dimensity 9000, 1000+ and 820, Exynos 990 and 2100, Kirin 9000, Snapdragon 888, Google Tensor |

| Raimundo et al. [96] - LAN | Image Signal Processing | Lightweight attention-based network (LAN) that improves performance without hindering inference time on smartphone devices. | Dimensity 1000+ |

| Zheng et al. [97] - RFDCSANet | Image Signal Processing | Lightweight network named Residual Feature Distillation Channel Spatial Attention Network (RFDCSANet) for real-time smartphone ISP. | Snapdragon 870, Snapdragon 8 Gen 1 |

| Chen et al. [97] | Single Image Bokeh Rendering | Depth-guided deep filtering network (DDFN) for efficient bokeh effect synthesis on mobile devices. | Snapdragon 865 |

| Paper | Approach | Params | Footprint | Device | Latency (s) |

|---|---|---|---|---|---|

| Chen et al. [101] | Series of implementation optimizations applied to SD v1.4. | - | 2093 MB | Samsung Galaxy S23 Ultra | 11.5 |

| Choi et al. [102] - Mobile Stable Diffusion | Series of optimization techniques applied to SD v2.1, including pruning, mixed-precision quantization, and KD to reduce the number of denoising steps. | - | - | Samsung Galaxy S23 | 7 |

| Castells et al. [104] - EdgeFusion | Employment of BK-SDM, a refined step distillation process for few-step inference, and optimization techniques, including mixed-precision post-training quantization. | 0.5 B | - | Samsung Exynos NPU | 0.7 |

| Hu et al. [103] - SnapGen | Efficient network architecture and improved training method consisting of multi-stage pre-training followed by KD and adversarial step distillation. | 0.4 B | - | iPhone 16 Pro Max | 1.2 - 2.3 |

| Li et al. [105] - SnapFusion | Efficient network architecture, particularly through improvements to the UNet, and improved step distillation to reduce the number of denoising steps. | 1 B | - | iPhone 14 Pro | 2 |

| | | | | iPhone 13 Pro Max | 2.7 |

| | | | | iPhone 12 Pro Max | 4.4 |

| Kim et al. [106] - BK-SDM | Compression of the SD UNet by removing architectural blocks (block pruning) and feature-level knowledge distillation retraining. | 0.5 B | - | NVIDIA Jetson AGX Orin | 2.8 |

| | | | | iPhone 14 | 3.9 |

| Zhao et al. [107] - MobileDiffusion | Efficient and lightweight diffusion model architecture and a novel approach for developing highly efficient one-step diffusion-GAN models. | 0.4 B | - | iPhone 15 Pro | 0.2 |

| Paper | Approach | Params | Footprint | Device | Throughput (tokens/s) |

|---|---|---|---|---|---|

| Abdin et al. [114] - Phi-3-mini | Transformer decoder architecture trained on a larger and more advanced dataset, 4-bit quantization applied before deployment. | 3.8 B | 1.8 GB | iPhone 14 | 12 |

| Hu et al. [115] - MiniCPM** | Deep and thin network, embedding sharing, and scalable training strategies, int4 quantization applied before deployment. | 2.4 B | 2 GB | iPhone 15 Pro | 18 |

| | | | | iPhone 15 | 15 |

| | | | | OPPO Find N3 | 6.5 |

| | | | | Samsung S23 Ultra | 6.4 |

| | | | | iPhone 12 | 5.8 |

| Liu et al. [113] | MobileLLM: Deep and thin Transformer architecture, embedding sharing and grouped-query attention mechanisms. | 125 M | - | iPhone 13 | 64.1* |

| | MobileLLM-LS: MobileLLM with layer sharing. | 125 M | - | iPhone 13 | 62.5* |

| Thawakar et al. [116] - MobiLlama | Baseline architecture adapted from TinyLlama and Llama-2, followed by a parameter-sharing scheme; 4-bit quantization applied before deployment. | 0.5 B | 770 MB | Snapdragon 685 | 7 |

| Yi et al. [117] - PhoneLM | Resource-efficient Transformer decoder architecture for smartphone hardware, mixed-precision quantization applied before deployment. | 1.5 B | - | Xiaomi 14 | 58 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).