Submitted: 31 January 2025

Posted: 03 February 2025

Abstract

Keywords:

1. Introduction

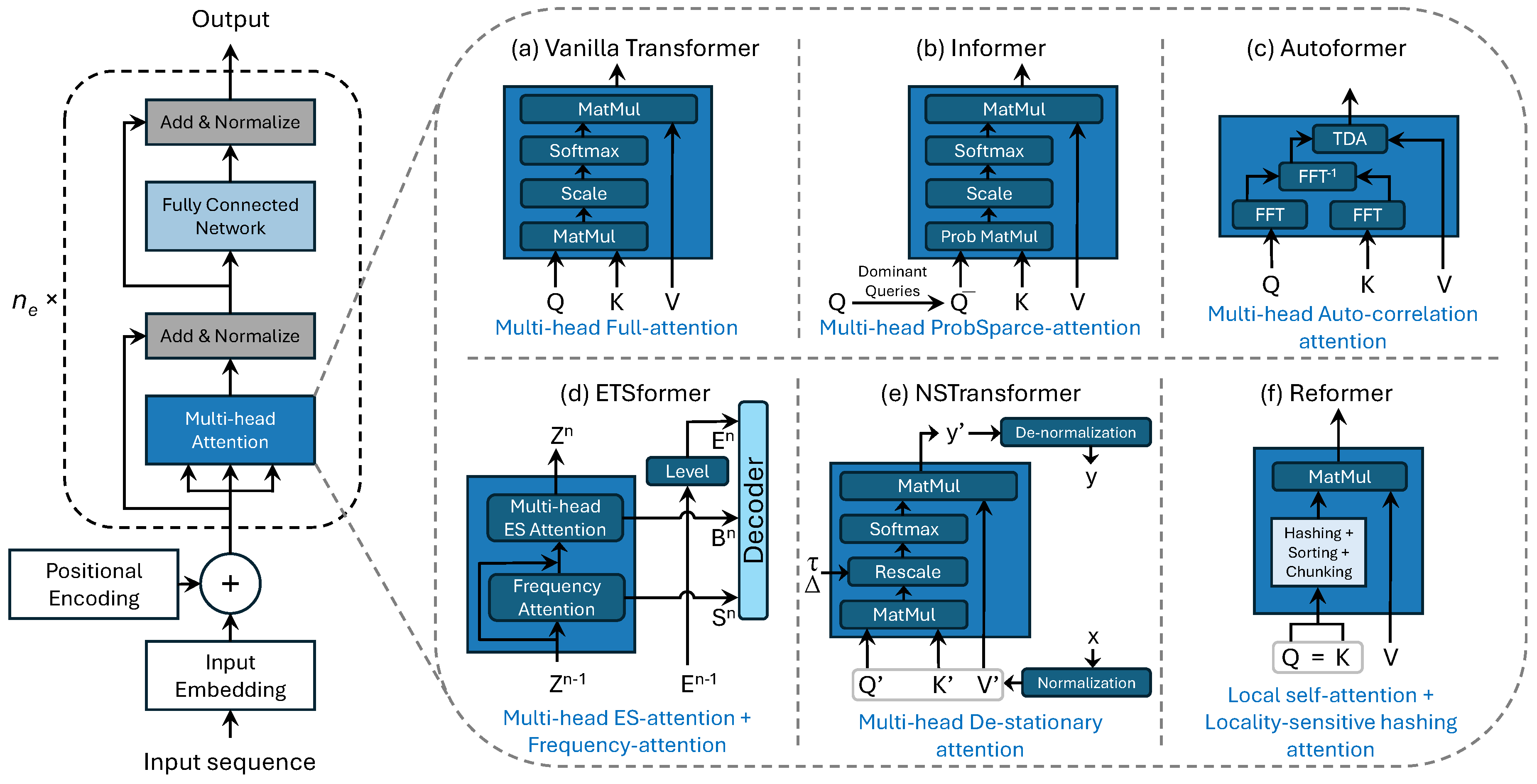

- Development of Transformer-Based Forecasting Models: The study explores various Transformer-based architectures tailored for retail demand forecasting, including Vanilla Transformer, Informer, Autoformer, ETSformer, NSTransformer, and Reformer. These models are evaluated on their ability to capture long-term dependencies, seasonality, and external factors affecting sales patterns.

- Incorporation of Explanatory Variables: The research emphasizes the importance of integrating explanatory variables such as calendar events, promotional activities, pricing, and socio-economic factors in improving forecast accuracy. The models effectively leverage these covariates to address the complexities of retail data.

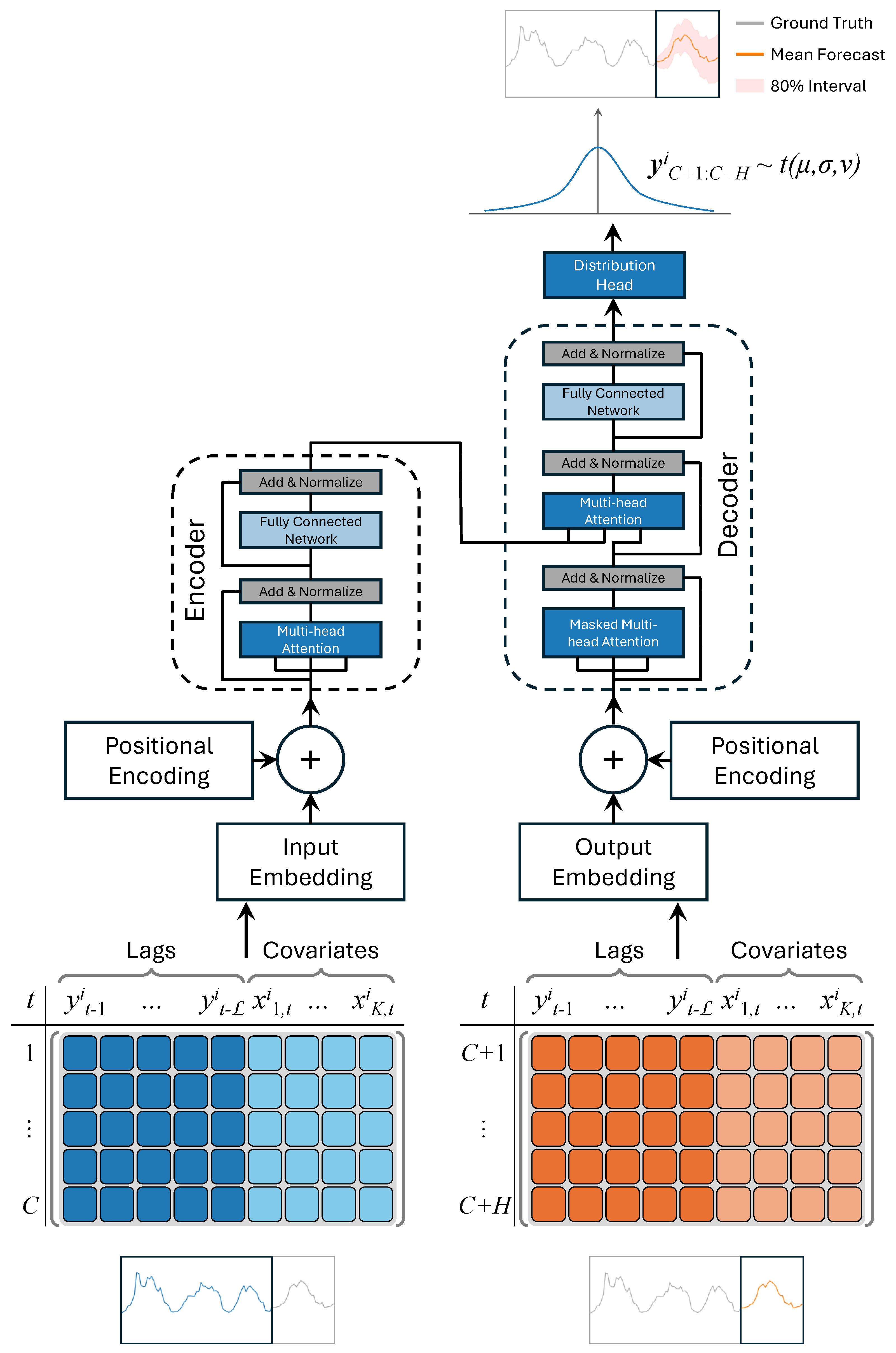

- Probabilistic Forecasting: The models provide probabilistic forecasts, capturing the uncertainty associated with demand predictions. This feature is crucial for risk management and decision-making processes in retail operations, ensuring a more resilient inventory management strategy.

- Empirical Evaluation Using Real-World Data: The paper includes a thorough empirical evaluation using the M5 dataset, a comprehensive retail dataset provided by Walmart. The results demonstrate the robustness and effectiveness of the proposed models in improving forecast accuracy across various retail scenarios.

2. Related Work

2.1. Retail Time Series Forecasting with Deep Learning

2.2. Explanatory Variables in Retail Demand Forecasting

2.3. Probabilistic Forecasting of Time Series Using Deep Learning

3. Probabilistic Forecasting with Transformer-based Models

3.1. Deep Learning Transformers for Time Series Forecasting

3.2. Probabilistic Forecasting of Time Series Data

4. Empirical Evaluation

4.1. Dataset

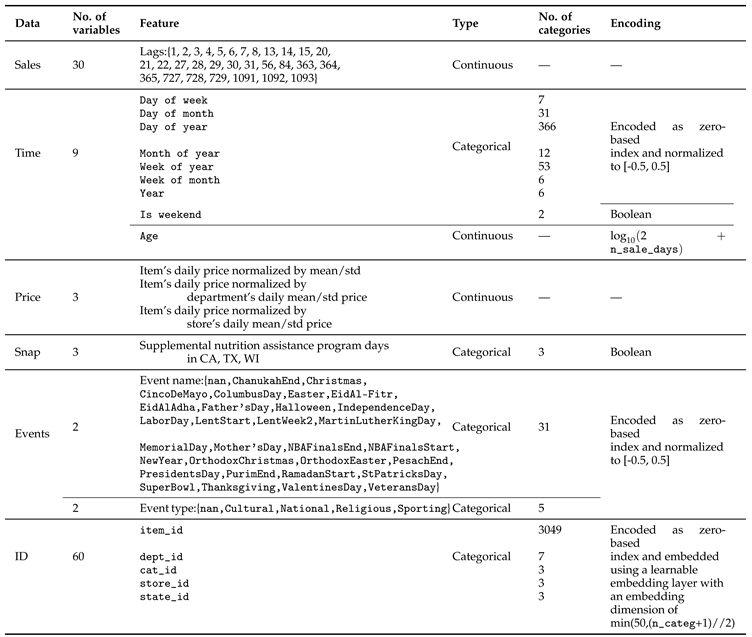

4.2. Explanatory Variables

- Calendar-Related Information: This covers time-related variables such as the date, weekday, week number, month, and year, together with indicators for special days and holidays (e.g., Super Bowl, Valentine’s Day, Orthodox Easter). Special days are grouped into four classes: Sporting, Cultural, National, and Religious. They account for about 8% of the dataset; of these, 11% are Sporting, 23% Cultural, 32% National, and 34% Religious.

- Selling Prices: Prices are provided at a weekly level for each store. Each weekly average price is taken as constant across the seven days of that week. A missing price for a given week indicates that the product was not sold during that period. Selling prices may vary over time, which provides the information needed to assess price elasticity and its impact on sales.

- SNAP Activities: The dataset includes a binary indicator for Supplemental Nutrition Assistance Program (SNAP) activities, marking whether a store allowed purchases using SNAP benefits on a particular date. The indicator is active on about 33% of the days in the dataset and reflects socio-economic factors affecting consumer purchasing behavior.
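
As an illustration of how the three groups of explanatory variables above could be assembled into one covariate row per day, the following is a minimal sketch and not the authors' preprocessing pipeline. The function name `calendar_features`, the `EVENTS` lookup, and the use of ISO weeks as the price key are assumptions made for this example; the actual dataset may index weeks differently.

```python
from datetime import date

# Hypothetical event calendar: date -> (event name, event class).
# The four classes follow the text; the entries are illustrative only.
EVENTS = {
    date(2016, 2, 7): ("SuperBowl", "Sporting"),
    date(2016, 2, 14): ("ValentinesDay", "Cultural"),
}
EVENT_CLASSES = ("Sporting", "Cultural", "National", "Religious")


def calendar_features(day, snap_days, weekly_price):
    """Build one row of covariates for a single item, store, and day."""
    iso_year, iso_week, iso_weekday = day.isocalendar()
    _, event_class = EVENTS.get(day, (None, None))
    row = {
        "weekday": iso_weekday,         # 1 = Monday ... 7 = Sunday
        "week": iso_week,
        "month": day.month,
        "year": day.year,
        "snap": int(day in snap_days),  # binary SNAP indicator
        # The weekly price is constant across the seven days of its week;
        # None marks a week in which the product was not sold.
        "price": weekly_price.get((iso_year, iso_week)),
    }
    # One-hot encode the event class (all zeros on ordinary days).
    for cls in EVENT_CLASSES:
        row["event_" + cls.lower()] = int(event_class == cls)
    return row
```

Calling `calendar_features(date(2016, 2, 7), {date(2016, 2, 7)}, {(2016, 5): 2.5})` yields a row with the Sporting indicator and the SNAP flag set and a price of 2.5; a day with no price entry returns `None` for `price`, which a downstream model would need to mask or impute.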

4.3. Hyperparameter Tuning

4.4. Performance Metrics

4.5. Results and Discussion

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

| Hyperparameter | Range (Transformer, Autoformer, Informer, NSTransformer, Reformer) | Range (ETSformer) |
|---|---|---|
| Context length | | |
| Batch size | | |
| Number of encoder layers | | — |
| Number of decoder layers | | — |

| Parameter | Value |
|---|---|
| Number of trials | 10 |
| Number of epochs | 10 |
| Number of batches per epoch | 50 |
| Number of samples | 20 |
| Validation function | Mean Weighted Quantile Loss (MWQL) |
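
The validation function listed above, the Mean Weighted Quantile Loss, can be sketched as follows. This is a minimal example under an assumed convention (pinball loss summed over time, doubled, and scaled by total absolute demand, then averaged over quantile levels); the exact definition and quantile levels used in the study are not stated in this section, and the function names are hypothetical.

```python
def quantile_loss(actuals, quantile_forecasts, q):
    """Pinball loss summed over all time steps for quantile level q."""
    total = 0.0
    for y, y_hat in zip(actuals, quantile_forecasts):
        diff = y - y_hat
        # Under-forecasts are weighted by q, over-forecasts by (1 - q).
        total += max(q * diff, (q - 1.0) * diff)
    return total


def weighted_quantile_loss(actuals, quantile_forecasts, q):
    """Quantile loss scaled by total absolute demand (assumed convention)."""
    scale = sum(abs(y) for y in actuals)  # assumed to be non-zero
    return 2.0 * quantile_loss(actuals, quantile_forecasts, q) / scale


def mean_weighted_quantile_loss(actuals, forecasts_by_quantile):
    """Average the weighted quantile loss over the supplied quantile levels."""
    losses = [
        weighted_quantile_loss(actuals, forecast, q)
        for q, forecast in forecasts_by_quantile.items()
    ]
    return sum(losses) / len(losses)
```

Here `forecasts_by_quantile` maps each quantile level (for example 0.1, 0.5, 0.9) to a list of forecasts aligned with `actuals`. Lower values indicate better calibrated and sharper predictive distributions.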

| Parameter | Transformer | Autoformer | ETSformer | Informer | NSTransformer | Reformer |
|---|---|---|---|---|---|---|
| Prediction length of decoder | 28 (all models) | | | | | |
| Distribution output | Student’s t (all models) | | | | | |
| Loss function | Negative log likelihood (all models) | | | | | |
| Learning rate | | | | | | |
| Size of target | 1 (all models) | | | | | |
| Scale of the input target | mean | std | std | std | — | std |
| Lags sequence | [1,2,3,4,5,6,7,8,13,14,15,20,21,22,27,28,29,30,31,56,84,363,364,365,727,728,729,1091,1092,1093] (all models) | | | | | |
| Dimensionality of Transformer layers | 32 | — | 64 | 64 | — | 64 |
| Number of attention heads | 2 (all models) | | | | | |
| Feedforward hidden size | 32 | 32 | — | 32 | 32 | — |
| Activation function | gelu | relu | — | relu | gelu | — |
| Dropout for fully connected layers | | | | | | |
| Moving average window | — | 25 | — | — | — | — |
| Autocorrelation factor | — | 1 | — | — | — | — |
| Number of layers | — | — | 2 | — | — | — |
| K largest amplitudes | — | — | 4 | — | — | — |
| Embedding kernel size | — | — | 3 | — | — | — |
| Attention in encoder | — | — | — | ProbAttention | — | — |
| Use distilling in encoder | — | — | — | True | — | — |
| ProbSparse sampling factor | — | — | — | 5 | — | — |
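
The configuration above pairs a Student's t distribution output with a negative log likelihood loss. As a rough illustration of what that loss computes, the sketch below evaluates the negative log likelihood of observations under a location-scale Student's t distribution. It is a plain-Python illustration, not the training code used in the study; the function names and the `(df, loc, scale)` parameter layout are assumptions.

```python
import math


def student_t_nll(y, df, loc, scale):
    """Negative log-likelihood of one observation under a location-scale Student's t."""
    z = (y - loc) / scale
    # Log of the normalizing constant of the density.
    log_norm = (
        math.lgamma((df + 1.0) / 2.0)
        - math.lgamma(df / 2.0)
        - 0.5 * math.log(df * math.pi)
        - math.log(scale)
    )
    log_pdf = log_norm - 0.5 * (df + 1.0) * math.log1p(z * z / df)
    return -log_pdf


def mean_nll(targets, params):
    """Average NLL over a batch; params holds one (df, loc, scale) triple per target."""
    return sum(student_t_nll(y, *p) for y, p in zip(targets, params)) / len(targets)
```

In a network, the three parameters would be produced per time step by the distribution head, with `df` and `scale` constrained to be positive. The heavier tails of the Student's t distribution make the loss less sensitive to occasional demand spikes than a Gaussian likelihood would be.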

| Hyperparameter | Transformer | Autoformer | ETSformer | Informer | NSTransformer | Reformer |
|---|---|---|---|---|---|---|
| Without features | | | | | | |
| Context length | 28 | 28 | | | | |
| Batch size | 128 | 64 | 128 | 256 | 256 | 64 |
| Number of encoder layers | 16 | 16 | — | 16 | 4 | 4 |
| Number of decoder layers | 2 | 8 | — | 16 | 8 | 4 |
| Best MWQL value | | | | | | |
| With features | | | | | | |
| Context length | 28 | 28 | 28 | | | |
| Batch size | 256 | 256 | 128 | 128 | 256 | 32 |
| Number of encoder layers | 8 | 4 | — | 2 | 4 | 16 |
| Number of decoder layers | 4 | 4 | — | 2 | 4 | 2 |
| Best MWQL value | | | | | | |

| | | Point forecast metrics | | Probabilistic forecast metrics | | | | |
|---|---|---|---|---|---|---|---|---|
| Model | | MASE | NRMSE | WQL | WQL | WQL | MWQL | MAE Coverage |
| Transformer | Without features | | | | | | | |
| | With features | | | | | | | |
| Autoformer | Without features | | | | | | | |
| | With features | | | | | | | |
| ETSformer | Without features | | | | | | | |
| | With features | | | | | | | |
| Informer | Without features | | | | | | | |
| | With features | | | | | | | |
| NSTransformer | Without features | | | | | | | |
| | With features | | | | | | | |
| Reformer | Without features | | | | | | | |
| | With features | | | | | | | |
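
For readers unfamiliar with the two point forecast metrics in the table above, the following minimal sketch shows one common way to compute them. The MASE follows the usual scaling by the in-sample error of a naive (optionally seasonal) forecast; the NRMSE normalization by the mean of the actuals is an assumption, since several normalizations are in use and this section does not state which one the study adopts.

```python
import math


def mase(train, actuals, forecasts, season=1):
    """Mean absolute scaled error relative to an in-sample (seasonal) naive forecast."""
    naive_errors = [
        abs(train[t] - train[t - season]) for t in range(season, len(train))
    ]
    scale = sum(naive_errors) / len(naive_errors)  # assumed to be non-zero
    mae = sum(abs(y - f) for y, f in zip(actuals, forecasts)) / len(actuals)
    return mae / scale


def nrmse(actuals, forecasts):
    """RMSE divided by the mean of the actuals (one of several normalizations)."""
    mse = sum((y - f) ** 2 for y, f in zip(actuals, forecasts)) / len(actuals)
    return math.sqrt(mse) / (sum(actuals) / len(actuals))
```

A MASE below 1 means the forecast beats the in-sample naive benchmark on average. Both metrics are scale-free, which allows comparison across items with very different sales volumes.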

| | | MASE | | | | NRMSE | | | |
|---|---|---|---|---|---|---|---|---|---|
| Model | | 1 step | 7 steps | 14 steps | 21 steps | 1 step | 7 steps | 14 steps | 21 steps |
| Transformer | Without features | | | | | | | | |
| | With features | | | | | | | | |
| Autoformer | Without features | | | | | | | | |
| | With features | | | | | | | | |
| ETSformer | Without features | | | | | | | | |
| | With features | | | | | | | | |
| Informer | Without features | | | | | | | | |
| | With features | | | | | | | | |
| NSTransformer | Without features | | | | | | | | |
| | With features | | | | | | | | |
| Reformer | Without features | | | | | | | | |
| | With features | | | | | | | | |

| | | MWQL | | | | MAE Coverage | | | |
|---|---|---|---|---|---|---|---|---|---|
| Model | | 1 step | 7 steps | 14 steps | 21 steps | 1 step | 7 steps | 14 steps | 21 steps |
| Transformer | Without features | | | | | | | | |
| | With features | | | | | | | | |
| Autoformer | Without features | | | | | | | | |
| | With features | | | | | | | | |
| ETSformer | Without features | | | | | | | | |
| | With features | | | | | | | | |
| Informer | Without features | | | | | | | | |
| | With features | | | | | | | | |
| NSTransformer | Without features | | | | | | | | |
| | With features | | | | | | | | |
| Reformer | Without features | | | | | | | | |
| | With features | | | | | | | | |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).