Submitted: 30 January 2025

Posted: 31 January 2025

Abstract

Keywords:

1. Introduction

2. Related Work

- Refactoring large, complex codebases [5].

- Implementing multi-step workflows like creating database models, updating service layers, and generating API endpoints.

- Conducting automated code reviews to ensure adherence to coding standards and best practices.

- Detecting and fixing code smells, such as duplicated code or overly complex methods [5].

- Generating comprehensive unit and integration tests to ensure high code coverage [8].

- Replacing deprecated APIs with modern alternatives.

3. Foundational Building Blocks of the Workflow

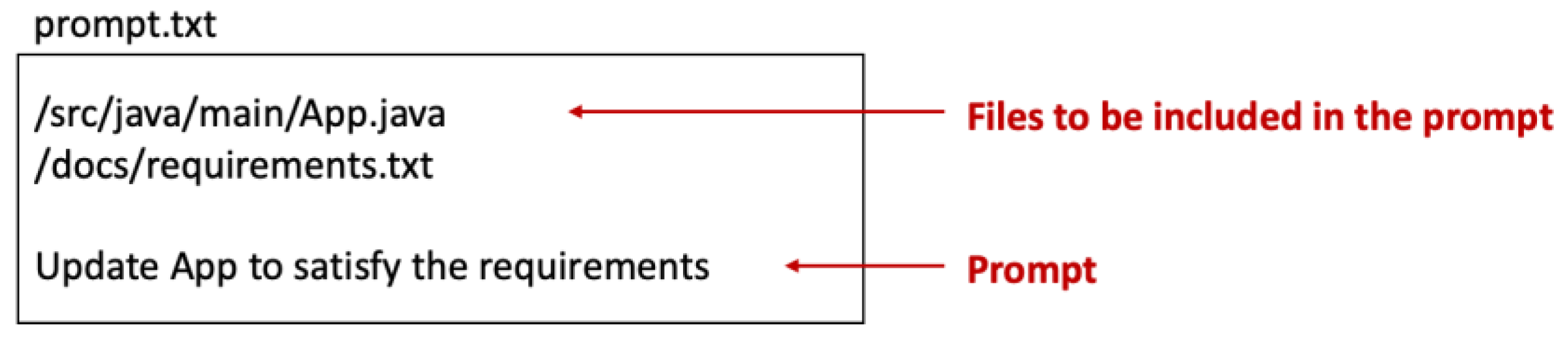

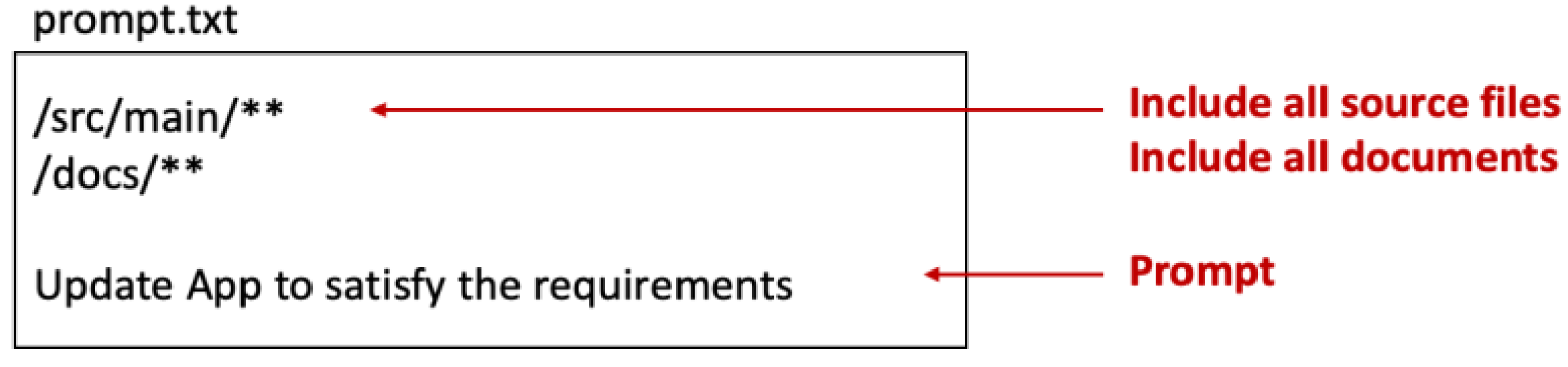

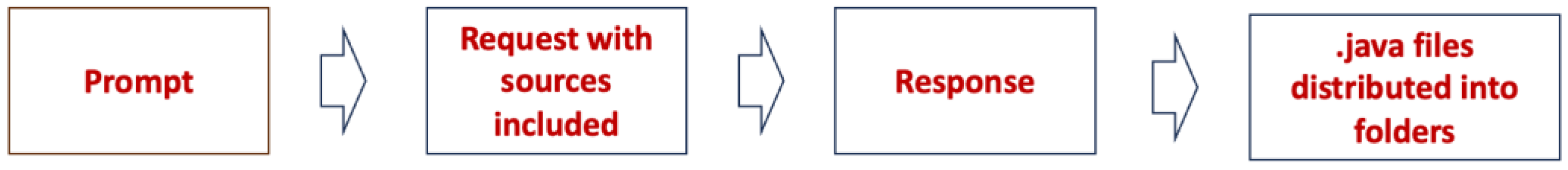

- Including Files into Prompts – Allows developers to include multiple files or folders in a prompt by specifying paths, ensuring the LLM has the necessary context for accurate code generation.

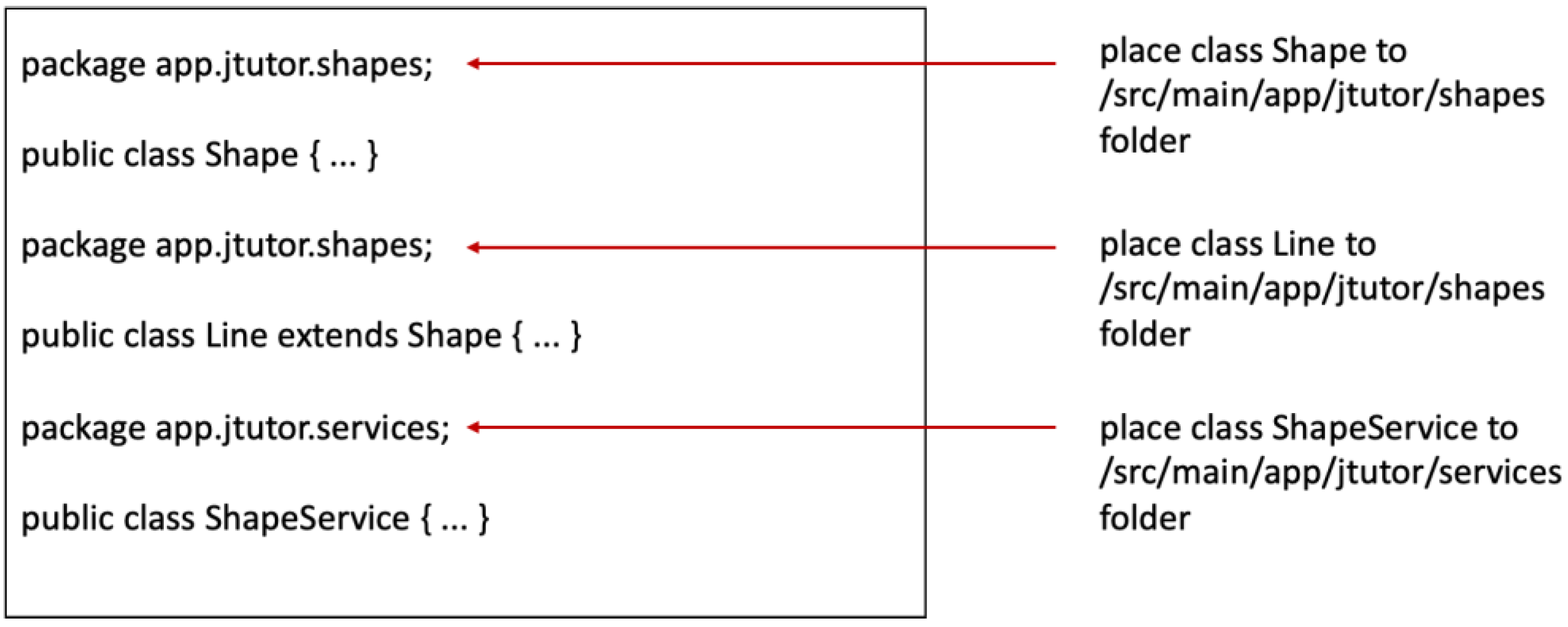

- Automated Response Parsing and File Organization – Once the LLM generates code, the framework parses the response, creates the corresponding files, and places them in the appropriate project directories.

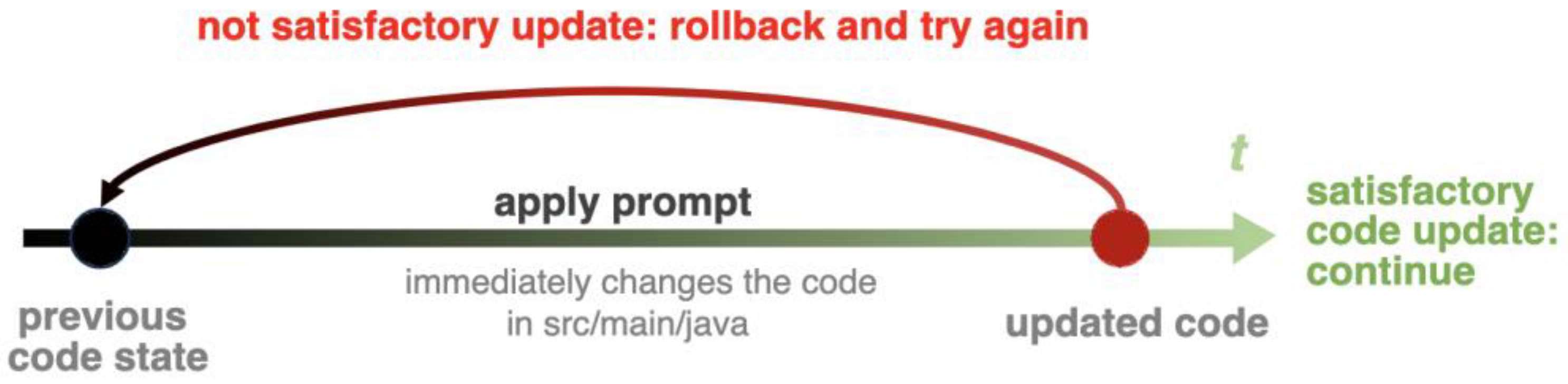

- Automatic Rollbacks – If the generated code doesn’t meet expectations, the framework automatically reverts changes, keeping the project consistent and ready for the next update.

- Automatic Context Discovery – Analyzes the project structure and dependencies to identify the minimal set of relevant files needed for a prompt, ensuring accuracy while avoiding unnecessary context overload.

3.1. Including Files into Prompts
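The file-inclusion mechanism summarized in the overview can be illustrated with a minimal sketch. It is not the framework's actual implementation: the convention that a path reference is a whole line starting with "/" is an assumption made for this example, the class name PromptFileIncluder is hypothetical, and the project is modeled as an in-memory map rather than read from disk.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Sketch: expands path references in a prompt into the contents of the referenced files. */
class PromptFileIncluder {

    /**
     * Replaces every line that names a known file or folder with the matching file contents.
     * ASSUMPTION: a path reference is a whole line starting with "/" (hypothetical convention).
     *
     * @param prompt       prompt text, possibly containing path lines
     * @param projectFiles project files keyed by path (in-memory stand-in for the file system)
     */
    static String expand(String prompt, Map<String, String> projectFiles) {
        List<String> out = new ArrayList<>();
        for (String line : prompt.split("\n", -1)) {
            String ref = line.trim();
            List<String> matches = ref.startsWith("/") ? resolve(ref, projectFiles) : List.of();
            if (matches.isEmpty()) {
                out.add(line); // ordinary prompt text, or a path that matches nothing
                continue;
            }
            for (String path : matches) {
                out.add("File: " + path);
                out.add(projectFiles.get(path));
            }
        }
        return String.join("\n", out);
    }

    /** A reference matches one file exactly, or every file below it when it names a folder. */
    private static List<String> resolve(String ref, Map<String, String> projectFiles) {
        String folderPrefix = ref.endsWith("/") ? ref : ref + "/";
        List<String> matches = new ArrayList<>();
        for (String path : projectFiles.keySet()) {
            if (path.equals(ref) || path.startsWith(folderPrefix)) {
                matches.add(path);
            }
        }
        return matches;
    }

    public static void main(String[] args) {
        Map<String, String> files = new LinkedHashMap<>();
        files.put("/src/main/java/com/example/User.java", "package com.example;\nclass User {}");
        files.put("/src/main/java/com/example/UserService.java", "package com.example;\nclass UserService {}");
        String prompt = "Add an email field to the user model.\n/src/main/java/com/example";
        System.out.println(expand(prompt, files));
    }
}
```

Referencing a folder pulls in every file beneath it, which is convenient but can inflate the prompt; the context discovery step addresses that trade-off.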

3.2. Automated Parsing of the Generated Code
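As an illustration of the parsing step, the sketch below extracts fenced code blocks from an LLM response and derives a target path for each Java type from its package declaration and type name. The class name GeneratedCodeParser, the regular expressions, and the assumption that the model returns code inside Markdown fences are all illustrative choices, not details confirmed by the framework.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Sketch: maps fenced Java code in an LLM response to target file paths. */
class GeneratedCodeParser {

    // A Markdown fence is three backticks, an optional language tag, the code, three backticks.
    // ASSUMPTION: the model wraps each generated file in such a fence.
    private static final Pattern FENCE =
            Pattern.compile("`{3}[a-zA-Z]*\\R(.*?)\\R`{3}", Pattern.DOTALL);
    private static final Pattern PACKAGE =
            Pattern.compile("^\\s*package\\s+([\\w.]+)\\s*;", Pattern.MULTILINE);
    // First type declaration that starts a line, so words inside comments are ignored.
    private static final Pattern TYPE = Pattern.compile(
            "^\\s*(?:(?:public|final|abstract)\\s+)*(?:class|interface|enum|record)\\s+(\\w+)",
            Pattern.MULTILINE);

    /**
     * @param response   raw LLM response
     * @param sourceRoot source folder, e.g. "src/main/java"
     * @return target path -> file content, in the order the blocks appear
     */
    static Map<String, String> parse(String response, String sourceRoot) {
        Map<String, String> files = new LinkedHashMap<>();
        Matcher fence = FENCE.matcher(response);
        while (fence.find()) {
            String code = fence.group(1);
            Matcher type = TYPE.matcher(code);
            if (!type.find()) {
                continue; // no Java type declaration in this block, skip it
            }
            Matcher pkg = PACKAGE.matcher(code);
            String dir = pkg.find() ? pkg.group(1).replace('.', '/') + "/" : "";
            files.put(sourceRoot + "/" + dir + type.group(1) + ".java", code);
        }
        return files;
    }

    public static void main(String[] args) {
        String fence = "`".repeat(3);
        String response = "Here is the class:\n" + fence + "java\n"
                + "package com.example;\n\npublic class App {\n}\n" + fence;
        parse(response, "src/main/java").keySet().forEach(System.out::println);
    }
}
```

A production parser would also need to handle responses without fences and files containing several top-level types; this sketch deliberately ignores both cases.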

3.3. Automatic Rollbacks
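One possible way to realize automatic rollbacks is to record the previous state of every file a generation step touches and restore it on failure. The sketch below assumes this backup-and-restore strategy, which the source does not confirm; the ChangeSet class is hypothetical, and the project is modeled as an in-memory map, whereas a real implementation would work on the file system or a version control system.

```java
import java.util.HashMap;
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.Optional;

/** Sketch: applies generated files to a workspace and can undo all of them. */
class ChangeSet {
    // In-memory stand-in for the project directory: path -> file content.
    private final Map<String, String> workspace;
    // Previous content per touched path; Optional.empty() means the file did not exist.
    private final Map<String, Optional<String>> backup = new LinkedHashMap<>();

    ChangeSet(Map<String, String> workspace) {
        this.workspace = workspace;
    }

    /** Writes a generated file, backing up the original state on the first write only. */
    void write(String path, String content) {
        backup.putIfAbsent(path, Optional.ofNullable(workspace.get(path)));
        workspace.put(path, content);
    }

    /** Restores every touched file to its state before this change set. */
    void rollback() {
        backup.forEach((path, previous) -> {
            if (previous.isPresent()) {
                workspace.put(path, previous.get());
            } else {
                workspace.remove(path); // file was created by the generation step
            }
        });
        backup.clear();
    }

    public static void main(String[] args) {
        Map<String, String> workspace = new HashMap<>();
        workspace.put("src/main/java/com/example/User.java", "class User {}");

        ChangeSet changes = new ChangeSet(workspace);
        changes.write("src/main/java/com/example/User.java", "class User { String email; }");
        changes.write("src/test/java/com/example/UserTest.java", "class UserTest {}");

        changes.rollback(); // e.g. because validation or tests failed
        System.out.println(workspace);
    }
}
```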

3.4. Automatic Context Discovery
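A simple approximation of dependency-based context discovery is to start from the class a prompt concerns and follow import statements transitively, keeping only classes that belong to the project. The sketch below assumes this import-following heuristic; the source does not specify the analysis the framework uses, and the ContextDiscovery class is hypothetical.

```java
import java.util.ArrayDeque;
import java.util.Deque;
import java.util.HashMap;
import java.util.LinkedHashSet;
import java.util.Map;
import java.util.Set;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Sketch: finds the project classes reachable from a seed class through imports. */
class ContextDiscovery {
    private static final Pattern IMPORT =
            Pattern.compile("^\\s*import\\s+([\\w.]+)\\s*;", Pattern.MULTILINE);

    /**
     * @param sources project sources keyed by fully qualified class name
     * @param seed    fully qualified name of the class the prompt is about
     * @return the seed plus every project class it imports, directly or indirectly
     */
    static Set<String> discover(Map<String, String> sources, String seed) {
        Set<String> relevant = new LinkedHashSet<>();
        Deque<String> pending = new ArrayDeque<>();
        pending.add(seed);
        while (!pending.isEmpty()) {
            String current = pending.poll();
            String source = sources.get(current);
            if (source == null || !relevant.add(current)) {
                continue; // external class (e.g. java.util.List) or already visited
            }
            Matcher m = IMPORT.matcher(source);
            while (m.find()) {
                pending.add(m.group(1));
            }
        }
        return relevant;
    }

    public static void main(String[] args) {
        Map<String, String> sources = new HashMap<>();
        sources.put("com.example.web.UserController",
                "package com.example.web;\nimport com.example.service.UserService;\nclass UserController {}");
        sources.put("com.example.service.UserService",
                "package com.example.service;\nimport com.example.model.User;\nclass UserService {}");
        sources.put("com.example.model.User", "package com.example.model;\nclass User {}");
        sources.put("com.example.billing.Invoice", "package com.example.billing;\nclass Invoice {}");
        System.out.println(discover(sources, "com.example.web.UserController"));
    }
}
```

This heuristic misses classes used from the same package (which need no import) and wildcard or static imports, so it should be read as a starting point rather than a complete analysis.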

- Benefits of Automated Context Selection:

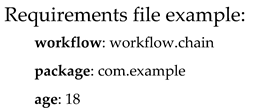

4. Prompt Directives and Prompt Templates

4.1. Prompt Directives

- #model: <model_name>

- #temperature: <value>

- #save-to: <path_to_file>
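To make the directive syntax concrete, the following sketch separates directive lines of the form #name: value from the prompt body. The directive names come from the list above; the ParsedPrompt class, the rule that a directive occupies a whole line, and the example values are assumptions for illustration only.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Sketch: splits a prompt file into directives and the remaining prompt text. */
class ParsedPrompt {
    // ASSUMPTION: a directive is a whole line of the form "#name: value".
    private static final Pattern DIRECTIVE = Pattern.compile("^#([a-z][a-z-]*):\\s*(.*)$");

    final Map<String, String> directives = new LinkedHashMap<>();
    final String body;

    private ParsedPrompt(String text) {
        StringBuilder rest = new StringBuilder();
        for (String line : text.split("\\R")) {
            Matcher m = DIRECTIVE.matcher(line.trim());
            if (m.matches()) {
                directives.put(m.group(1), m.group(2).trim());
            } else {
                rest.append(line).append('\n'); // everything else is sent to the model
            }
        }
        body = rest.toString().trim();
    }

    static ParsedPrompt parse(String text) {
        return new ParsedPrompt(text);
    }

    public static void main(String[] args) {
        ParsedPrompt prompt = parse(String.join("\n",
                "#model: gpt-4o",
                "#temperature: 0.2",
                "#save-to: docs/api.md",
                "Document the REST endpoints of the application."));
        System.out.println(prompt.directives);
        System.out.println(prompt.body);
    }
}
```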

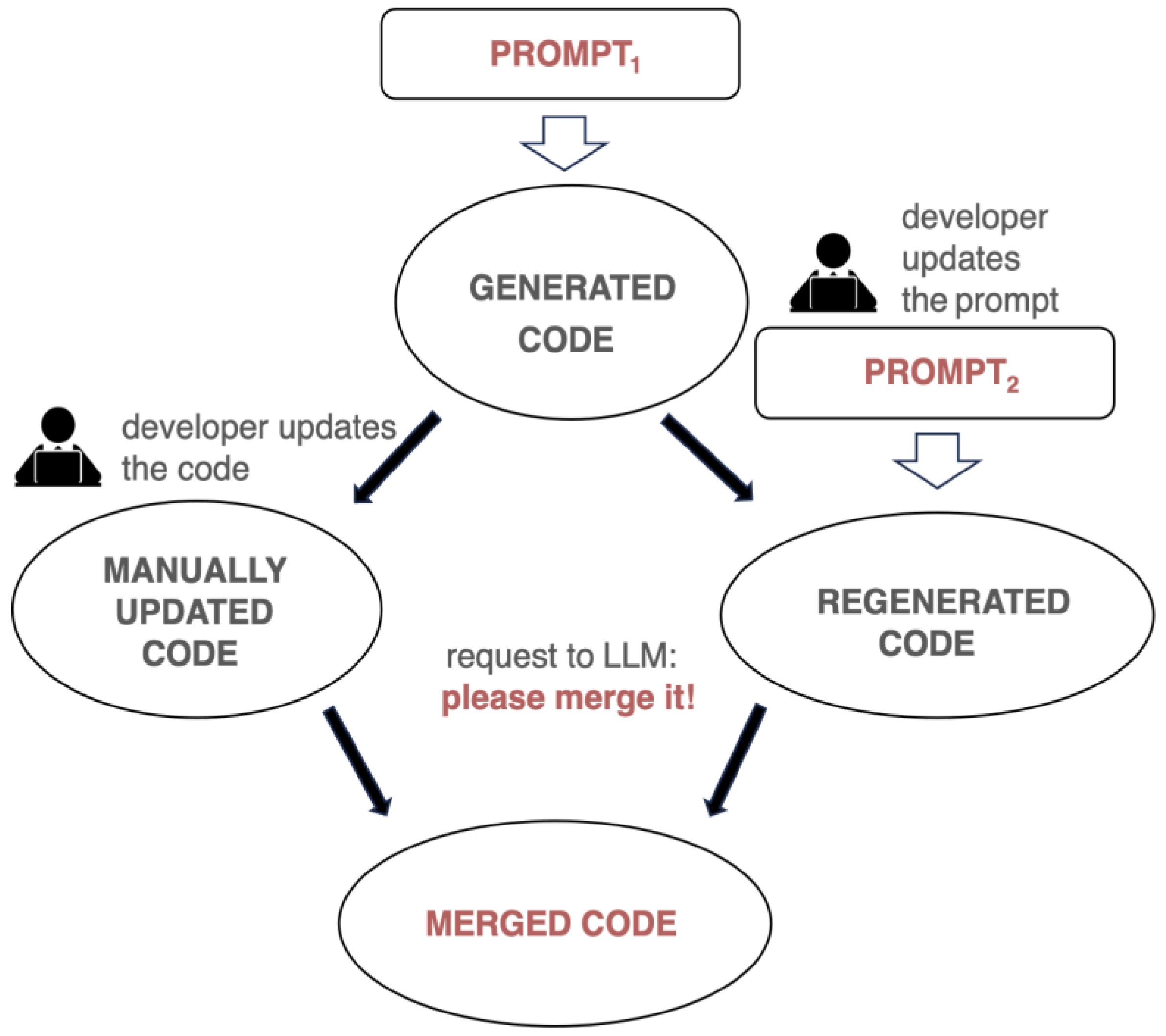

4.2. Merging Generated Code and Manually Updated Code

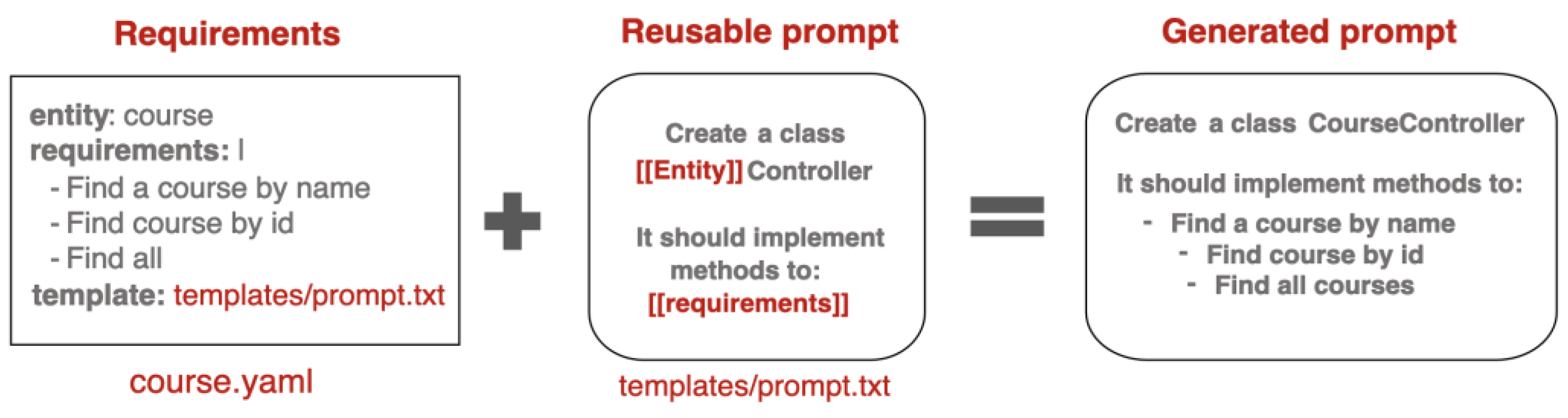

4.3. Reusable Prompt Templates

5. Automating Development with Workflow-Oriented Prompt Pipelines

- Update the domain model

- Modify the service layer

- Create API endpoints

- Write unit tests

5.1. Prompt Pipeline for Workflow Automation

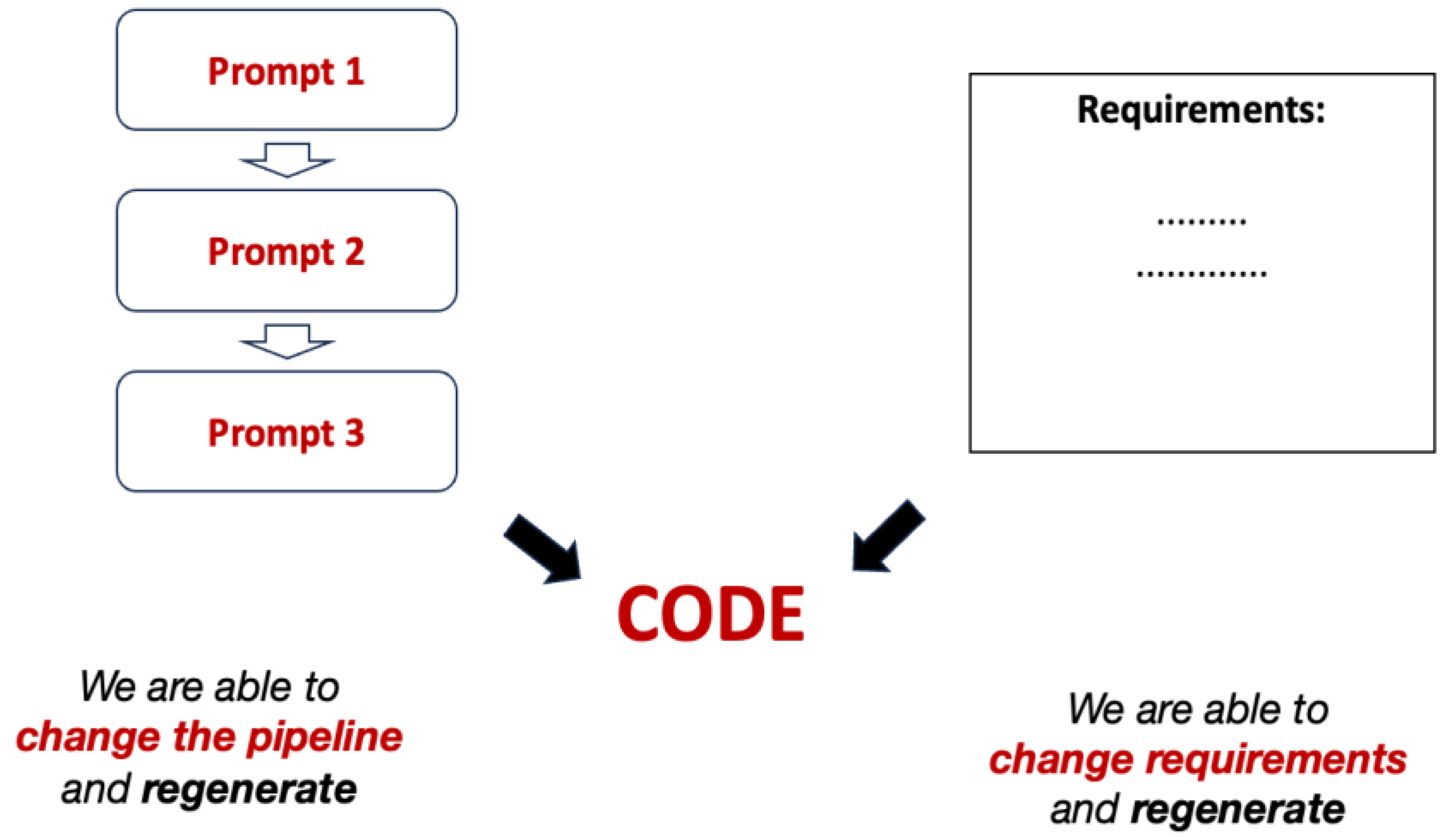

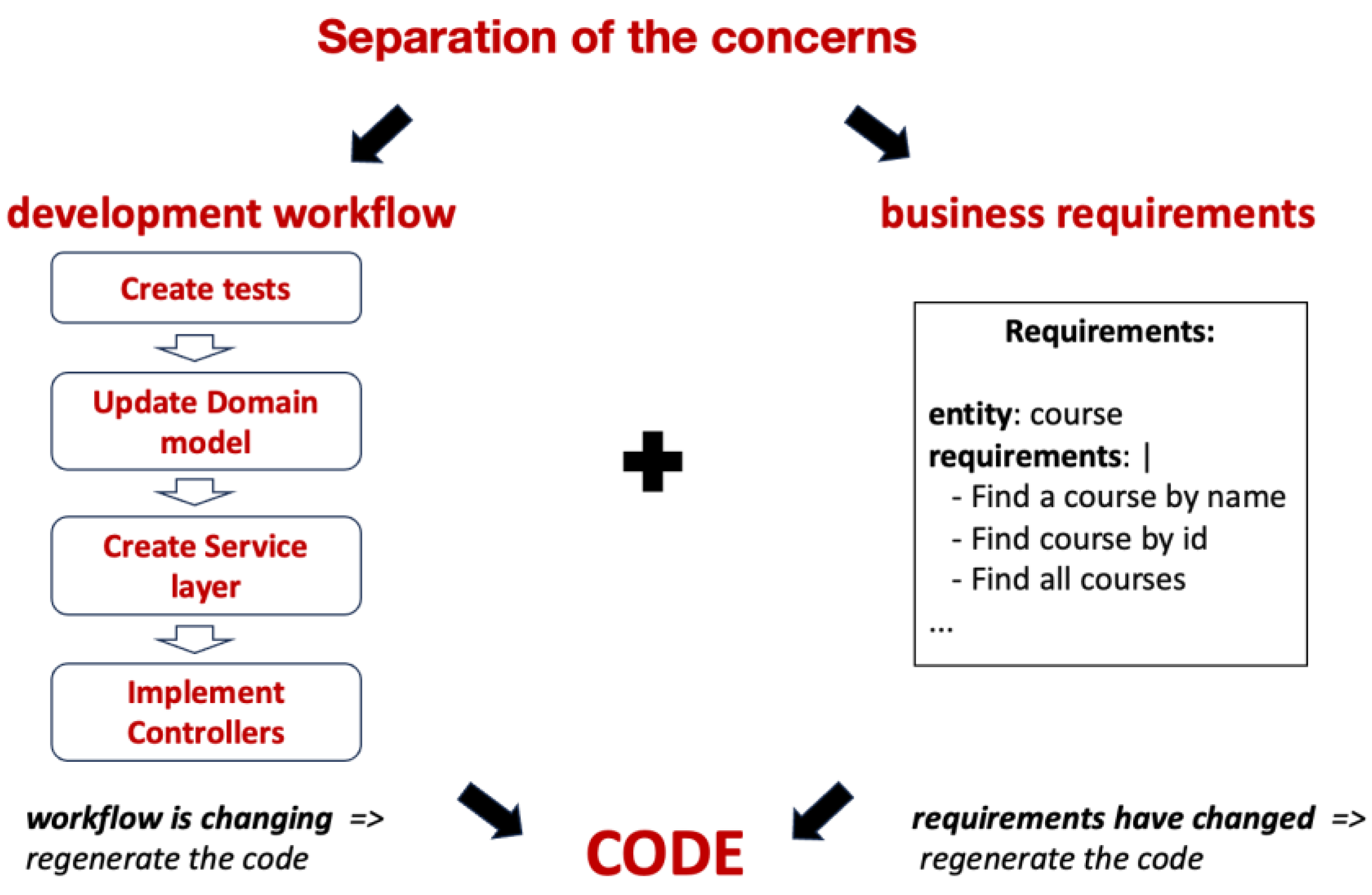

5.2. Separation of Concerns in Workflow Automation

- Changing the workflow: When a workflow is updated (e.g., to generate additional documentation), the same requirements can be reapplied to the updated workflow. Steps that have already been executed (e.g., domain model generation) are not re-executed unless affected by the changes. However, if an early step in the workflow is modified, all subsequent dependent steps will be re-executed to ensure consistency.

- Changing the requirements: When requirements change, the system automatically rolls back changes made by the previous workflow and regenerates the code to align with the updated requirements. This minimizes manual corrections and allows developers to focus on refining the requirements. Ideally, the workflow is established once, requiring only updates to the requirements or the addition of new ones, while the workflow handles the rest automatically.
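The re-execution rule for a changed workflow can be sketched as follows. The example assumes a linear pipeline in which each step depends on all earlier ones, and it detects changes by comparing prompt texts with those of the last successful run; both are simplifying assumptions, and the IncrementalPipeline class is hypothetical.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Sketch: decides which steps of a linear prompt pipeline must run again. */
class IncrementalPipeline {

    /**
     * @param steps    current workflow: step name -> prompt text, in execution order
     * @param previous prompt text of each step at the last successful run
     * @return names of the steps to execute, in order
     */
    static List<String> stepsToRun(Map<String, String> steps, Map<String, String> previous) {
        List<String> toRun = new ArrayList<>();
        boolean invalidated = false;
        for (Map.Entry<String, String> step : steps.entrySet()) {
            // A new or edited step invalidates itself and every step after it.
            if (!step.getValue().equals(previous.get(step.getKey()))) {
                invalidated = true;
            }
            if (invalidated) {
                toRun.add(step.getKey());
            }
        }
        return toRun;
    }

    public static void main(String[] args) {
        Map<String, String> previous = new LinkedHashMap<>();
        previous.put("domain-model", "Update the domain model");
        previous.put("service-layer", "Modify the service layer");

        Map<String, String> current = new LinkedHashMap<>(previous);
        current.put("documentation", "Generate documentation");

        System.out.println(stepsToRun(current, previous));
    }
}
```

Appending a documentation step leaves the earlier steps untouched, whereas editing the first step causes the whole pipeline to run again.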

6. Enhancing the Reliability of LLM-Generated Code

6.1. Self-Healing of the Generated Code

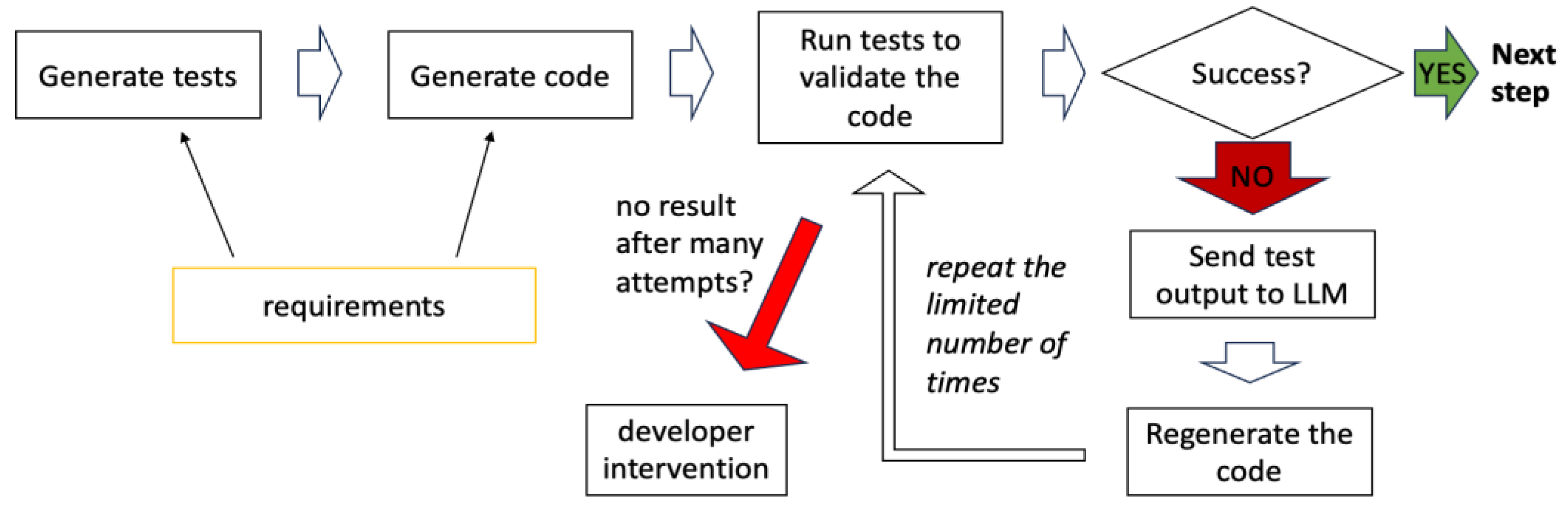

- Code Validation with Automated Tests: Generates and runs tests to verify that the code aligns with the requirements, identifying errors automatically.

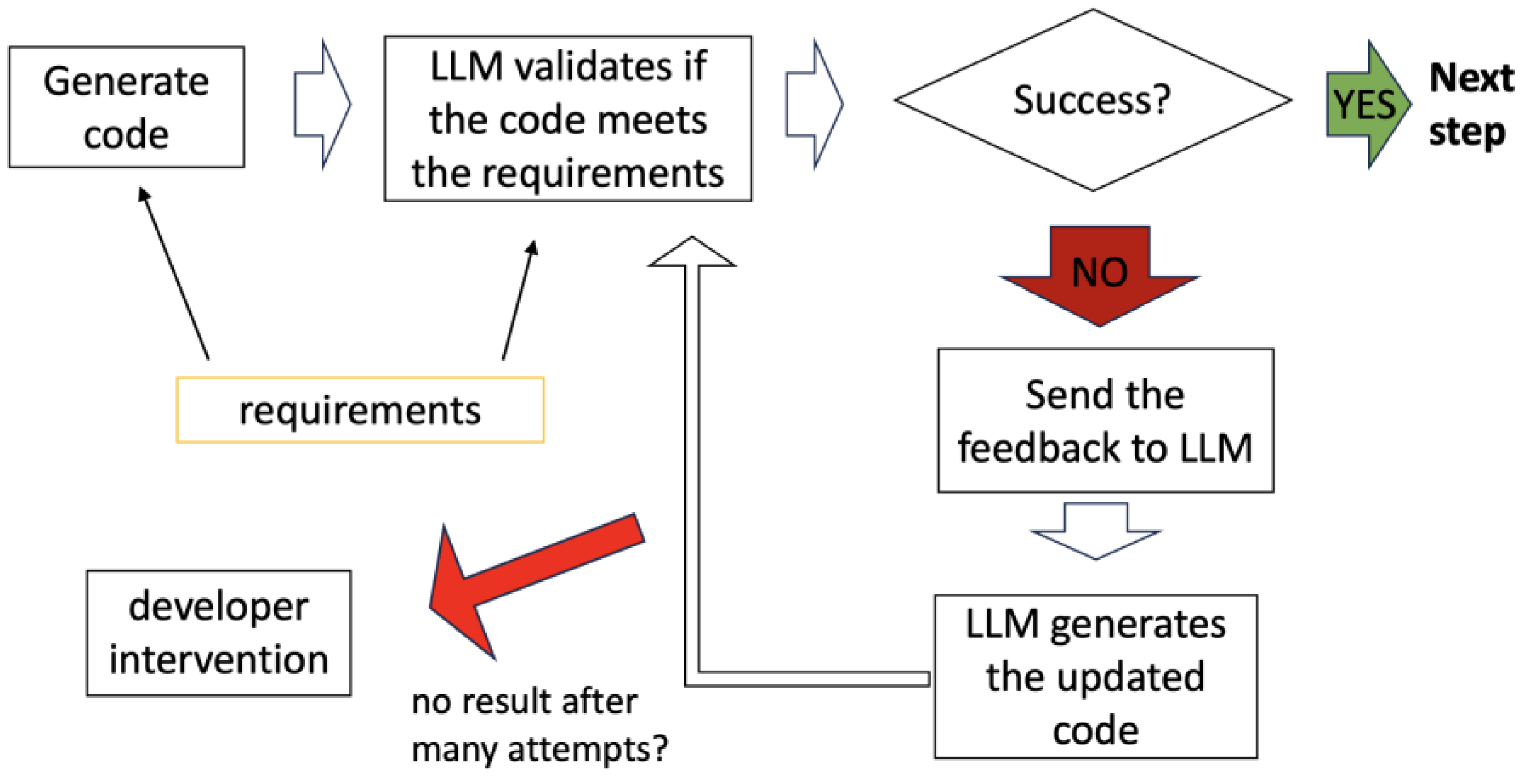

- Code Validation Using LLM Self-Assessment: Uses an LLM to analyze the generated code against predefined requirements.

6.2. Code Validation with Automated Tests

- Refine the requirements to improve clarity for LLM processing.

- Select an alternative model for validation or code regeneration.

- Adjust the prompt pipeline to enhance the quality of the generated output.

6.3. Code Validation Using LLM Self-Assessment

6.4. Combining Tests and Self-Assessment

- Generating Model: GPT-4o generates the code.

7. Prompt Pipeline Language

7.1. Directives in the Prompt Pipeline Language

- #context – Defines a reusable block of context that can be included in multiple prompts.

- #prompt – Specifies a prompt name. Prompts are the building blocks of the pipeline.

- #include – Includes the specified context or the output of a previous prompt in the current prompt. The special keyword prev includes the output of the immediately preceding prompt.

- #feedback – Provides feedback to the LLM if a prompt fails or needs to be retried.

- #feedback-include – Includes the outputs or requests of multiple prompts in the feedback provided to the LLM. This gives the model adequate context to address issues and refine its responses.

- #repeat-if – Repeats the prompt if a specified condition is met.

- #repeat-prompt – Specifies the name of the prompt to execute if the current prompt fails (that is, if the #repeat-if condition is met). If this directive is not specified, the current prompt is repeated by default.

- #attempts – Specifies the maximum number of attempts for a prompt, counting the first attempt and all retries. If every attempt fails (the #repeat-if condition is still met), the workflow stops and requires human intervention.

- #save-to – Saves the output of the prompt to a file for further use.

- #test – Marks a prompt that generates test cases. The generated tests are parsed and placed in the /src/test/java directory.

- #run – Executes a command or script, such as running or testing the generated application.
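The interplay of #attempts, #repeat-if, and #feedback can be sketched as a retry loop. This is an illustration under stated assumptions rather than the framework's implementation: the model call is a hypothetical function from prompt text to output, the repeat condition is a predicate on that output, and the way feedback is appended to the request is invented for the example.

```java
import java.util.function.Function;
import java.util.function.Predicate;

/** Sketch: runs one prompt with a bounded number of attempts and feedback on retries. */
class RetryingPrompt {

    /**
     * @param prompt   original prompt text
     * @param feedback text of the #feedback directive, sent on retries
     * @param attempts value of #attempts (first attempt plus retries)
     * @param llm      hypothetical model call: request text -> output
     * @param repeatIf #repeat-if condition; true means the output is not acceptable
     */
    static String run(String prompt, String feedback, int attempts,
                      Function<String, String> llm, Predicate<String> repeatIf) {
        String request = prompt;
        for (int attempt = 1; attempt <= attempts; attempt++) {
            String output = llm.apply(request);
            if (!repeatIf.test(output)) {
                return output; // condition not met: the step succeeded
            }
            // ASSUMPTION: feedback and the failed output are appended to the original prompt.
            request = prompt + "\n\nFeedback: " + feedback + "\nPrevious output:\n" + output;
        }
        throw new IllegalStateException(
                "All " + attempts + " attempts failed; human intervention required");
    }

    public static void main(String[] args) {
        int[] calls = {0};
        // Fake model that fails on the first call and succeeds on the second.
        Function<String, String> fakeLlm = request -> ++calls[0] < 2 ? "BUILD FAILURE" : "BUILD SUCCESS";
        String result = run("Compile and run the application.",
                "The application should compile and run successfully.",
                3, fakeLlm, output -> output.contains("FAILURE"));
        System.out.println(result + " after " + calls[0] + " calls");
    }
}
```

Supporting #repeat-prompt would mean re-running a different, earlier prompt instead of the current one inside the loop; that jump is omitted here to keep the sketch short.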

7.2. Example Workflow

-

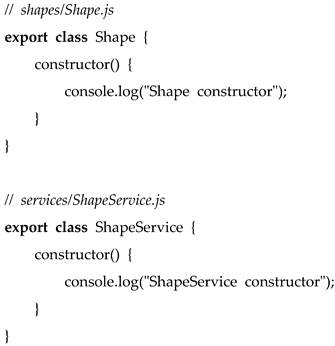

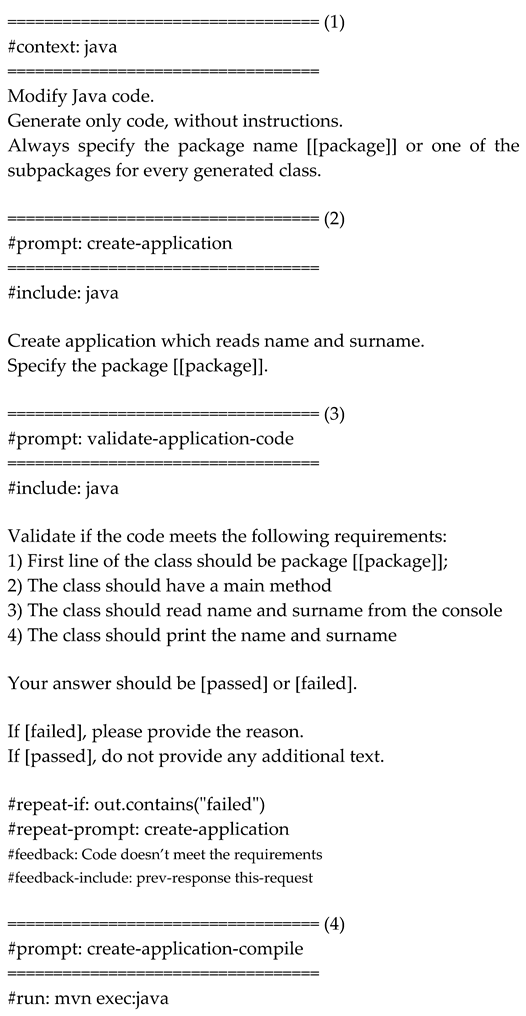

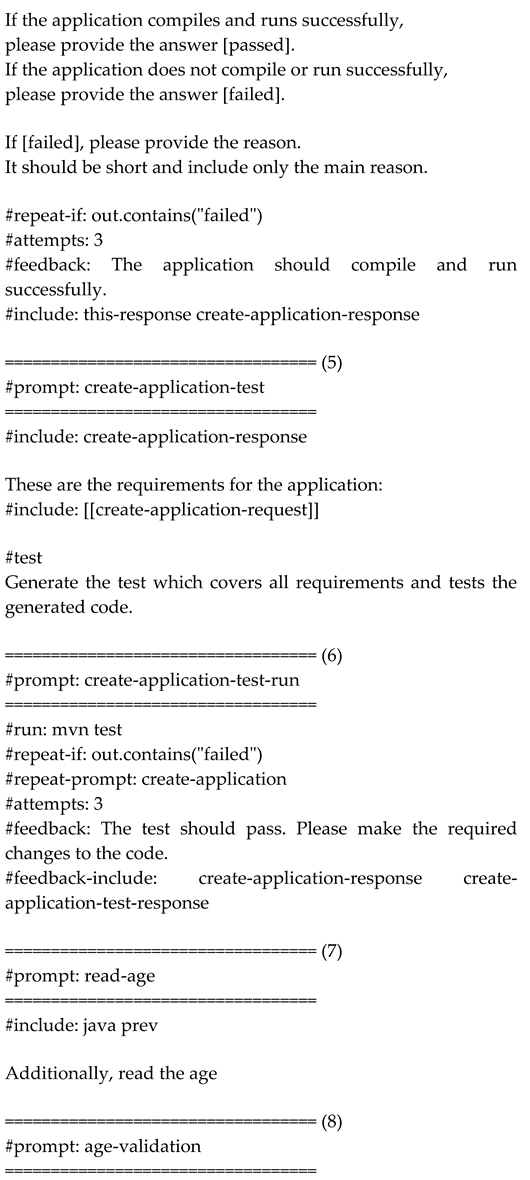

Step (1): Context DefinitionThe #context directive defines the reusable block java, which specifies that Java code should follow certain conventions, such as using package com.example.

Step (2): Application Creation. The create-application prompt generates an application that reads the user’s name and surname.

- Includes: The java context.

- Fallback: If this prompt fails, the create-application prompt is retried.

Step (3): Application Validation. The validate-application-code prompt checks whether the generated code meets the requirements:

1. The class must begin with the package declaration package com.example.
2. It must contain a main method.
3. It must read the name and surname from the console.
4. It must print the name and surname.

- Fallback: If validation fails, the prompt retries the application creation.

Step (4): Application Compilation and Execution. The create-application-compile prompt compiles and runs the application using mvn exec:java.

- Condition: If the application fails to compile or run, feedback is provided, and the prompt is retried up to two times.

- Feedback: "The application should compile and run successfully."

Step (5): Test Generation. The create-application-test prompt generates test cases to validate the application’s functionality.

- Includes: The output of the create-application prompt and the requirements.

Step (6): Test Execution. The create-application-test-run prompt executes the tests using mvn test.

- Attempts: If the tests fail, they are retried up to two times (the overall number of attempts is 3).

- Feedback: "The test should pass. Please make the required changes to the code."

- Fallback to Application Creation: If test execution fails, the directive #repeat-prompt: create-application re-executes the earlier prompt to regenerate the code.

-

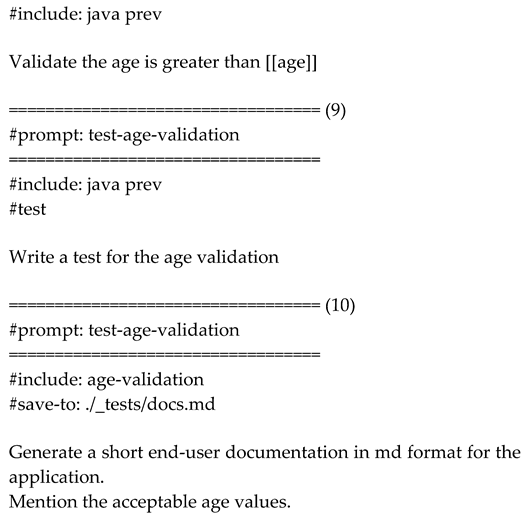

Step (7): Additional RequirementsThe read-age prompt updates the application to also read the user’s age.

- Includes: The output of the previous prompt.

Step (8): Age Validation. The age-validation prompt ensures that the entered age is greater than a value specified in the requirements.

Step (9): Age Validation Test. The test-age-validation prompt generates test cases to validate the age input logic.

Step (10): Documentation Generation. The final prompt generates end-user documentation for the application, specifying acceptable age values.

- Saves to: ./_tests/docs.md.

The placeholders, like package or age, are taken from the requirements.yaml file.
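Placeholder substitution of this kind can be sketched in a few lines. The source does not show the placeholder syntax, so the double-brace form {{name}} used here is an assumption, as is the PromptTemplate class; the values are passed as a map, standing in for the parsed contents of requirements.yaml.

```java
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Sketch: fills placeholders in a prompt template with values from the requirements. */
class PromptTemplate {
    // ASSUMPTION: placeholders are written as {{name}}; the real syntax may differ.
    private static final Pattern PLACEHOLDER = Pattern.compile("\\{\\{(\\w+)\\}\\}");

    /**
     * @param template     prompt text containing placeholders
     * @param requirements placeholder name -> value (stand-in for parsed requirements.yaml)
     */
    static String render(String template, Map<String, String> requirements) {
        Matcher m = PLACEHOLDER.matcher(template);
        StringBuilder out = new StringBuilder();
        while (m.find()) {
            String value = requirements.get(m.group(1));
            if (value == null) {
                throw new IllegalArgumentException("No value for placeholder: " + m.group(1));
            }
            // quoteReplacement keeps '$' and '\' in values from being treated as regex syntax
            m.appendReplacement(out, Matcher.quoteReplacement(value));
        }
        m.appendTail(out);
        return out.toString();
    }

    public static void main(String[] args) {
        Map<String, String> requirements = Map.of("package", "com.example", "age", "18");
        System.out.println(render(
                "Generate Java code in package {{package}}. The age must be greater than {{age}}.",
                requirements));
    }
}
```

Failing fast on a missing value, rather than leaving the placeholder in the prompt, avoids sending an incomplete instruction to the model.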

7.3. Automating Workflow Creation

- The application reads name, surname, and age from the console.

- The generated code is validated.

- The application is compiled and executed.

- Tests are generated and executed.

- If errors occur, the process automatically retries or provides feedback to the LLM for corrections.

8. Practical Applications of Workflow-Oriented AI Code Generation

- Implement New Features: The framework can automate the generation of boilerplate code, including REST API endpoints [39], service classes, and database models, using reusable prompt pipelines. This speeds up repetitive tasks, such as creating CRUD operations [6], standard controllers, and DTO classes [7]. This approach is particularly effective for established practices where processes are well-defined and easily automated.

- Code Review: The framework can automate code reviews to ensure adherence to coding standards, best practices, and potential bug detection [40]. It generates detailed reports with suggestions for improvements, streamlining and enhancing the thoroughness of the review process. Users can customize the rules to be checked, specify ignored rules, and define the report format. Additionally, the framework can automatically apply fixes for detected issues, further reducing manual effort.

- Fixing Bugs: The framework can assist in fixing simple bugs that do not require extensive debugging (with future potential to incorporate debugging capabilities). It analyzes the code, identifies the issue, applies a fix, and runs tests to verify the resolution.

- Generate Tests: The framework can automatically generate unit tests for existing classes, improving test coverage. Test cases are created based on the code structure, method signatures, and expected behavior, providing a robust foundation for test-driven development (TDD).

- Code Refactoring: The framework enhances code quality by refactoring large, complex methods into smaller, more manageable ones. It offers suggestions for optimizing code and improving readability, maintainability, and efficiency.

- Analyze Security Flaws: The framework identifies and resolves common security vulnerabilities, such as SQL injection, by analyzing the code and recommending secure coding practices.

- Replace Deprecated APIs: The framework automates the process of updating code that uses deprecated APIs by identifying outdated methods and classes and applying appropriate replacements. This ensures seamless migration to newer API versions, provided the framework has knowledge of the deprecations and their replacements.

- Fix Code Smells: The framework detects code smells [5], such as duplicated code, long methods, and complex conditionals, and offers refactoring suggestions to enhance code quality and maintainability.

- Generate Documentation: The framework automates the generation of documentation for code segments, such as REST endpoints, classes, and methods. It produces detailed, customizable documentation in the desired format, keeping it aligned with the latest code changes.

9. Framework Reference Implementation: JAIG

10. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

1. GitHub Copilot. Available online: https://github.com/features/copilot.

2. Cursor, the AI Code Editor. Available online: https://www.cursor.com/.

3. Iusztin, P.; Labonne, M. LLM Engineer's Handbook: Master the art of engineering large language models from concept to production; Packt Publishing, 2024.

4. Raschka, S. Build a Large Language Model; Manning: New York, NY, USA, 2024.

5. Fowler, M. Refactoring: Improving the Design of Existing Code (2nd Edition); Addison-Wesley Professional, 2018.

6. Bonteanu, A.-M.; Tudose, C.; Anghel, A.M. Multi-Platform Performance Analysis for CRUD Operations in Relational Databases from Java Programs using Spring Data JPA. In Proceedings of the 13th International Symposium on Advanced Topics in Electrical Engineering (ATEE), Bucharest, Romania, 23–25 March 2023.

7. Tudose, C. Java Persistence with Spring Data and Hibernate; Manning: New York, NY, USA, 2023.

8. Tudose, C. JUnit in Action; Manning: New York, NY, USA, 2020.

9. Martin, E. Mastering SQL Injection: A Comprehensive Guide to Exploiting and Defending Databases; 2023.

10. Caselli, E.; Galluccio, E.; Lombari, G. SQL Injection Strategies: Practical techniques to secure old vulnerabilities against modern attacks; Packt Publishing, 2020.

11. Imai, S. Is GitHub Copilot a Substitute for Human Pair-programming? An Empirical Study, ACM/IEEE 44th International Conference on Software Engineering: Companion Proceedings, 2022, 319–321.

12. Nguyen, N.; Nadi, S. An Empirical Evaluation of GitHub Copilot's Code Suggestions, 2022 Mining Software Repositories Conference, 2022, 1–5.

13. Zhang, B.Q.; Liang, P.; Zhou, X.Y.; Ahmad, A.; Waseem, M. Demystifying Practices, Challenges and Expected Features of Using GitHub Copilot. International Journal of Software Engineering and Knowledge Engineering 2023, 33, 1653–1672.

14. Yetistiren, B.; Ozsoy, I.; Tuzun, E. Assessing the Quality of GitHub Copilot's Code Generation, Proceedings of the 18th International Conference on Predictive Models and Data Analytics in Software Engineering, 2022, 62–71.

15. Suciu, G.; Sachian, M.A.; Bratulescu, R.; Koci, K.; Parangoni, G. Entity Recognition on Border Security, Proceedings of the 19th International Conference on Availability, Reliability and Security, 2024, 1–6.

16. Jiao, L.; Zhao, J.; Wang, C.; Liu, X.; Liu, F.; Li, L.; Shang, R.; Li, Y.; Ma, W.; Yang, S. Nature-Inspired Intelligent Computing: A Comprehensive Survey. Research 2024, 7, 0442.

17. El Haji, K.; Brandt, C.; Zaidman, A. Using GitHub Copilot for Test Generation in Python: An Empirical Study, Proceedings of the 2024 IEEE/ACM International Conference on Automation of Software Test, 2024, 45–55.

18. Tufano, M.; Agarwal, A.; Jang, J.; Moghaddam, R.Z.; Sundaresan, N. AutoDev: Automated AI-Driven Development. arXiv 2024, arXiv:2403.08299.

19. Ridnik, T.; Kredo, D.; Friedman, I. Code Generation with AlphaCodium: From Prompt Engineering to Flow Engineering. arXiv 2024, arXiv:2401.08500.

20. Arnold, K.; Gosling, J.; Holmes, D. The Java Programming Language, 4th ed.; Addison-Wesley Professional: Glenview, IL, USA, 2005.

21. Sierra, K.; Bates, B.; Gee, T. Head First Java: A Brain-Friendly Guide, 3rd ed.; O’Reilly Media: Sebastopol, CA, USA, 2022.

22. Ackermann, P. JavaScript: The Comprehensive Guide to Learning Professional JavaScript Programming; Rheinwerk Computing, 2022.

23. Svekis, L.L.; van Putten, M.; Percival, R. JavaScript from Beginner to Professional: Learn JavaScript quickly by building fun, interactive, and dynamic web apps, games, and pages; Packt Publishing, 2021.

24. Amazon AWS Documentation. Available online: https://docs.aws.amazon.com/wellarchitected/latest/devops-guidance/dl.ads.2-implement-automatic-rollbacks-for-failed-deployments.html.

25. Chatbot App. Available online: https://chatbotapp.ai.

26. Using OpenAI o1 models and GPT-4o models on ChatGPT. Available online: https://help.openai.com/en/articles/9824965-using-openai-o1-models-and-gpt-4o-models-on-chatgpt.

27. Varanasi, B. Introducing Maven: A Build Tool for Today's Java Developers; Apress, 2019.

28. Sommerville, I. Software Engineering, 10th ed.; Pearson, 2015.

29. Anghel, I.I.; Calin, R.S.; Nedelea, M.L.; Stanica, I.C.; Tudose, C.; Boiangiu, C.A. Software development methodologies: A comparative analysis. UPB Sci. Bull. 2022, 83, 45–58.

30. Ling, Z.; Fang, Y.H.; Li, X.L.; Huang, Z.; Lee, M.; Memisevic, R.; Su, H. Deductive Verification of Chain-of-Thought Reasoning. Advances in Neural Information Processing Systems 2023, 36.

31. Li, L.H.; Hessel, J.; Yu, Y.; Ren, X.; Chang, K.W.; Choi, Y. Symbolic Chain-of-Thought Distillation: Small Models Can Also "Think" Step-by-Step, Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics, 2023, 1, 2665–2679.

32. Cormen, T.H.; Leiserson, C.; Rivest, R.; Stein, C. Introduction to Algorithms; MIT Press, 2009.

33. Smith, D.R. Top-Down Synthesis of Divide-and-Conquer Algorithms. Artificial Intelligence 1985, 27, 43–96.

34. Daga, A.; de Cesare, S.; Lycett, M. Separation of Concerns: Techniques, Issues and Implications. Journal of Intelligent Systems 2006, 15, 153–175.

35. Walls, C. Spring in Action; Manning: New York, NY, USA, 2022.

36. Ghosh, D.; Sharman, R.; Rao, H.R.; Upadhyaya, S. Self-healing systems - survey and synthesis. Decision Support Systems 2007, 42, 2164–2185.

37. Claude Sonnet Official Website. Available online: https://claude.ai/.

38. Claude 3.5 Sonnet Announcement. Available online: https://www.anthropic.com/news/claude-3-5-sonnet.

39. Fielding, R.T. Architectural Styles and the Design of Network-based Software Architectures. PhD Thesis, University of California, Irvine, CA, USA, 2000.

40. Martin, R.C. Clean Code: A Handbook of Agile Software Craftsmanship; Pearson, 2008.

41. IntelliJ IDEA Official Website. Available online: https://www.jetbrains.com/idea/.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).