Submitted: 25 January 2025

Posted: 27 January 2025

Abstract

Keywords:

I. Introduction

1.1. Important Elements in an Optimized Charging Control System

1.2. Research Objective

1.3. The Remainder of the Research

II. Literature Review

| Reference No. | Objective | Methodology | Result | Limitations |
|---|---|---|---|---|
| [6] | Predict EV charging station selection behavior | XGBoost with SHAP on data from 500 EVs in Japan | XGBoost achieved the highest accuracy; SHAP explained feature importance | Data restricted to Japan; generalizability uncertain |
| [7] | Forecast EV charging duration | ELM, FFNN, and SVR optimized by GWO, PSO, and GA on data from 500 EVs in Japan | GWO-based models outperformed the others | The optimization method may require validation on other datasets |
| [8] | Forecast session duration and energy consumption | RF, SVM, XGBoost, and DNN with historical, traffic, weather, and event data | Ensemble learning attained 9.9% SMAPE for duration and 11.6% for energy | Findings may not generalize beyond the dataset |
| [9] | Forecast charging duration with ensemble machine learning | RF, XGBoost, CatBoost, and LightGBM with SHAP on data from 500 EVs in Japan | XGBoost demonstrated the highest accuracy; SHAP highlighted the important variables | Results specific to Japan; not broadly applicable |
| [10] | Enable dynamic wireless charging (DWC) | Hybrid DWC system with an Improved-DSDV protocol and magnetic coupling | Reliable DWC with improved throughput and latency | Scalability and deployment challenges not addressed |
| [11] | Predict EV metrics using ML within MPC | Hybrid ML predictions combined with MPC to reduce electricity costs | Reduced peak load by 46.7% and costs by 20.9% | Focused on controlled studies; real-world challenges not discussed |
| [12] | Evaluate EV charging infrastructure reliability | ML classifiers on 10 years of multilingual customer reviews | Government-owned stations had higher failure rates | Limited data sharing and region-specific applicability |
| [13] | Forecast EV charging time with metaheuristic optimization | RF, CatBoost, and XGBoost optimized by Ant Colony Optimization | Attained R² of 20.5% (training) and 12.4% (testing) | Requires improvement in accuracy and cross-validation |
| [14] | Optimize charging to reduce emissions | Heuristic algorithm for optimized scheduling in Ontario | Reduced emissions by 97% compared to the base case | Findings may differ with regional generation profiles and emissions |
| [15] | Optimize EV charging stations with renewables | MOPSO and TOPSIS for design with wind, PV, and storage | Improved optimization and faster computation | Limited to Inner Mongolia; generalizability uncertain |

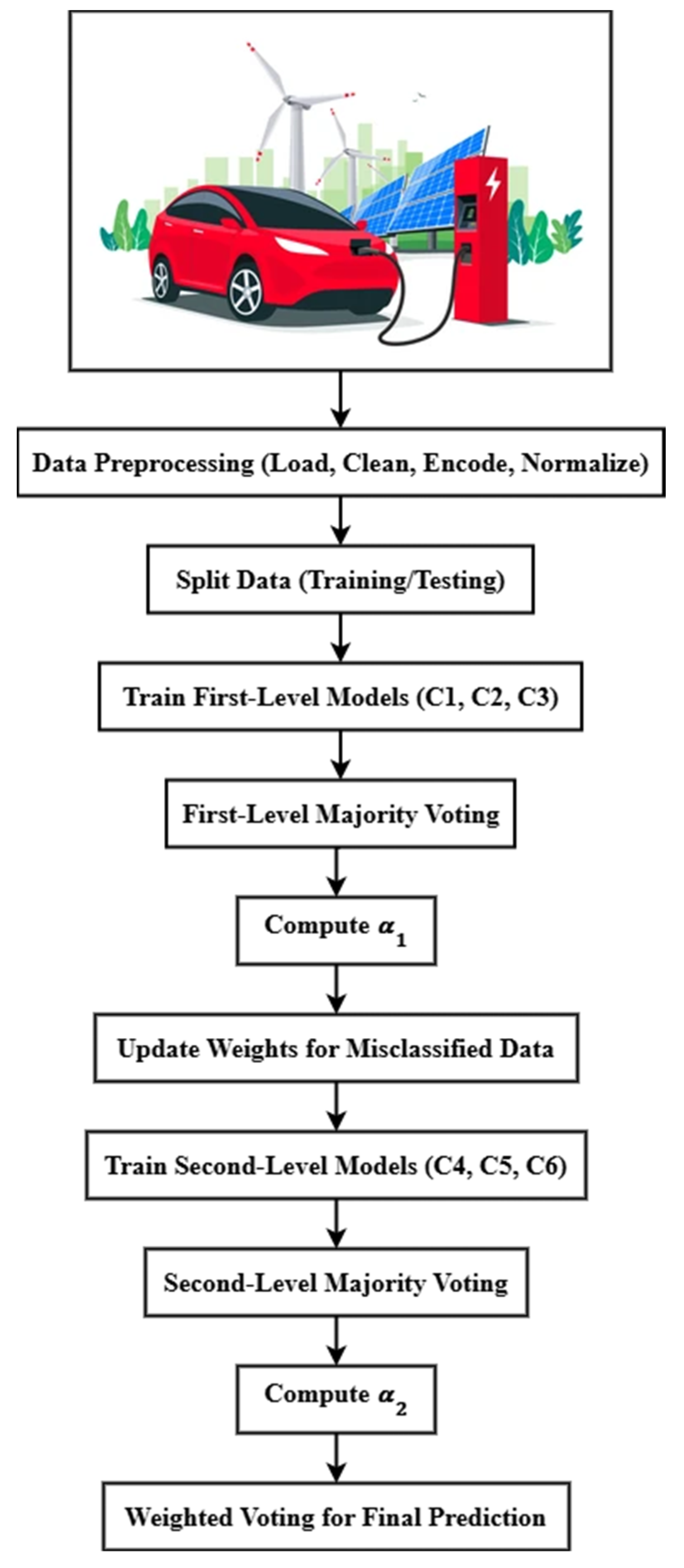

III. Methodology

3.1. Data Collection

3.2. Data Preprocessing Steps

3.2.1. Loading and Cleaning the Dataset

3.2.2. Encoding Categorical Features

3.2.3. Normalizing Numerical Features

3.2.4. Data Splitting

3.3. First-Level Ensemble of Classifiers

3.3.1. Selection of Basic Classifiers

- Decision Tree (C1): Known for its interpretability and its capacity to model complex, non-linear relationships.

- Logistic Regression (C2): Suitable for binary classification with probabilistic outputs.

- K-Nearest Neighbors (KNN, C3): A non-parametric model that generates predictions based on the proximity of data points.

3.3.2. Majority Voting Mechanism
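
As an illustration of the first-level vote, the sketch below takes per-sample label predictions from C1, C2, and C3 and returns the most common label for each sample. The function name `majority_vote` and the toy predictions are hypothetical. The source does not state a tie-breaking rule; with three binary classifiers no tie can occur, so none is handled specially.

```python
from collections import Counter


def majority_vote(*predictions):
    """Return the most frequent label per sample across classifiers.

    Each argument is a sequence of labels from one classifier, all of
    equal length. If counts tie (possible only with an even number of
    voters or more than two classes), the label seen first wins.
    """
    if not predictions:
        raise ValueError("need at least one prediction sequence")
    n = len(predictions[0])
    if any(len(p) != n for p in predictions):
        raise ValueError("all prediction sequences must have equal length")
    return [Counter(votes).most_common(1)[0][0] for votes in zip(*predictions)]


# Hypothetical predictions from C1 (Decision Tree), C2 (Logistic Regression), C3 (KNN)
c1 = [1, 0, 1, 1]
c2 = [1, 1, 0, 1]
c3 = [0, 0, 1, 1]
level1 = majority_vote(c1, c2, c3)
print(level1)  # [1, 0, 1, 1]
```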

3.3.3. Calculating Importance Score α1
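
The formula for the importance score is not given in this outline. A minimal sketch, assuming the standard AdaBoost choice α = 0.5 · ln((1 − ε) / ε), where ε is the weighted error of the level's majority vote, could look as follows. The function name `importance_score` and the toy labels are hypothetical, and the authors' actual definition may differ.

```python
import math


def importance_score(y_true, y_pred, weights=None, eps=1e-10):
    """AdaBoost-style importance: alpha = 0.5 * ln((1 - err) / err).

    ASSUMPTION: the source does not give the formula for alpha; this is
    the standard AdaBoost choice, used here only for illustration.
    """
    n = len(y_true)
    if weights is None:
        weights = [1.0 / n] * n  # uniform sample weights
    total = sum(weights)
    err = sum(w for w, t, p in zip(weights, y_true, y_pred) if t != p) / total
    err = min(max(err, eps), 1.0 - eps)  # clip so the logarithm stays finite
    return 0.5 * math.log((1.0 - err) / err)


y_true = [1, 0, 1, 1, 0]
y_vote = [1, 0, 0, 1, 0]  # hypothetical first-level vote with one error (err = 0.2)
alpha1 = importance_score(y_true, y_vote)
print(round(alpha1, 4))  # 0.6931
```

Under this assumption, a vote that is right more often than wrong gets a positive score, and the score grows as the weighted error shrinks.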

3.4. Weight Adjustment for Misclassified Examples

3.4.1. Adjusting Weights
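
The outline states only that the weights of misclassified samples are increased before the second level. One common way to do this, assumed here for illustration, is the AdaBoost update: multiply the weight of each misclassified sample by exp(α), multiply the others by exp(−α), and renormalize. The name `update_weights` and the toy data are hypothetical.

```python
import math


def update_weights(weights, y_true, y_pred, alpha):
    """Raise weights of misclassified samples, lower the rest, renormalize.

    ASSUMPTION: AdaBoost-style update; the source only says that the
    weights of misclassified samples are increased.
    """
    scaled = [
        w * math.exp(alpha if t != p else -alpha)
        for w, t, p in zip(weights, y_true, y_pred)
    ]
    total = sum(scaled)
    return [w / total for w in scaled]


y_true = [1, 0, 1, 1, 0]
y_vote = [1, 0, 0, 1, 0]  # hypothetical first-level vote; the third sample is wrong
w0 = [0.2] * 5            # uniform starting weights
w1 = update_weights(w0, y_true, y_vote, alpha=math.log(2))
print([round(w, 3) for w in w1])  # [0.125, 0.125, 0.5, 0.125, 0.125]
```

In this toy case the single misclassified sample ends up carrying half of the total weight, so the second-level classifiers are pushed to get it right.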

3.5. Second-Level Ensemble of Classifiers

3.5.1. Selection of Complex Classifiers

- Random Forest (C4): An ensemble technique that combines numerous decision trees to generate more reliable predictions.

- Support Vector Machine (C5): Effective in high-dimensional spaces and in cases where the classes can be separated by a hyperplane.

- Naive Bayes (C6): A probabilistic classifier based on Bayes' theorem, known for its efficiency and simplicity.

3.5.2. Second-Level Majority Voting

3.5.3. Computing Importance Score (α2)

3.6. Final Combined Predictions

3.6.1. Weighted Voting for Final Prediction
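
A minimal sketch of the final weighted vote, assuming each level contributes its majority-vote label with a weight equal to its importance score (α1 for the first level, α2 for the second). The function name `weighted_vote`, the toy predictions, and the score values are hypothetical.

```python
from collections import defaultdict


def weighted_vote(level_preds, alphas):
    """Per sample, pick the label whose supporting levels have the largest total alpha."""
    if len(level_preds) != len(alphas):
        raise ValueError("need one alpha per level")
    final = []
    for votes in zip(*level_preds):
        score = defaultdict(float)
        for label, alpha in zip(votes, alphas):
            score[label] += alpha
        # On an exact tie, the label from the earlier level is kept.
        final.append(max(score, key=score.get))
    return final


level1 = [1, 0, 1, 1]  # hypothetical first-level majority vote
level2 = [1, 1, 0, 1]  # hypothetical second-level majority vote
final = weighted_vote([level1, level2], alphas=[0.69, 1.10])
print(final)  # [1, 1, 0, 1]
```

With only two levels, this rule reduces to following the level with the larger importance score whenever the two levels disagree.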

| Pseudocode 1: Dual-Level Voting Boost (DLVB) |
|---|
| 1. Handle missing data, encode categorical features, and normalize numerical values. |
| 2. Divide the data into training and testing sets. |
| 3. Train the first-level classifiers: Decision Tree (C1), Logistic Regression (C2), KNN (C3). |
| 4. Execute majority voting over the first-level predictions. |
| 5. Compute the importance score α1. |
| 6. Increase the weights of misclassified samples for the next level. |
| 7. Train the second-level classifiers: Random Forest (C4), SVM (C5), Naive Bayes (C6). |
| 8. Execute majority voting over the second-level predictions. |
| 9. Compute the importance score α2. |
| 10. Use weighted voting based on α1 and α2 for the final prediction. |
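
The steps of Pseudocode 1 can be sketched end to end with scikit-learn. This is an illustration under stated assumptions, not the authors' implementation: the data is synthetic (`make_classification` stands in for the EV charging dataset), the importance scores and weight update follow the AdaBoost formulas, and all hyperparameters and helper names (`majority`, `alpha_from`) are hypothetical.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.naive_bayes import GaussianNB
from sklearn.neighbors import KNeighborsClassifier
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC
from sklearn.tree import DecisionTreeClassifier


def majority(models, X):
    """Hard majority vote over binary {0, 1} predictions."""
    votes = np.stack([m.predict(X) for m in models])
    return (votes.mean(axis=0) > 0.5).astype(int)


def alpha_from(y_true, y_pred, w, eps=1e-10):
    """ASSUMED AdaBoost-style importance score from the weighted error."""
    err = np.clip(np.sum(w * (y_true != y_pred)) / np.sum(w), eps, 1 - eps)
    return 0.5 * np.log((1 - err) / err)


# Synthetic stand-in data: no missing values or categorical columns to handle.
X, y = make_classification(n_samples=600, n_features=8, random_state=0)
X_tr, X_te, y_tr, y_te = train_test_split(
    X, y, test_size=0.2, random_state=0, stratify=y
)
scaler = StandardScaler().fit(X_tr)  # fit on training data only
X_tr, X_te = scaler.transform(X_tr), scaler.transform(X_te)

# Level 1: C1-C3 trained with uniform sample weights.
w = np.full(len(y_tr), 1.0 / len(y_tr))
level1 = [
    DecisionTreeClassifier(max_depth=3, random_state=0),
    LogisticRegression(max_iter=1000),
    KNeighborsClassifier(n_neighbors=5),
]
for m in level1:
    m.fit(X_tr, y_tr)
vote1_tr = majority(level1, X_tr)
alpha1 = alpha_from(y_tr, vote1_tr, w)

# Increase the weights of samples the first level misclassified.
w = w * np.exp(np.where(vote1_tr != y_tr, alpha1, -alpha1))
w /= w.sum()

# Level 2: C4-C6 trained on the reweighted samples. Weights are rescaled
# to mean 1 so that SVC's effective regularization is not shrunk.
level2 = [
    RandomForestClassifier(n_estimators=100, random_state=0),
    SVC(),
    GaussianNB(),
]
for m in level2:
    m.fit(X_tr, y_tr, sample_weight=w * len(w))
alpha2 = alpha_from(y_tr, majority(level2, X_tr), w)

# Final prediction: alpha-weighted vote of the two levels (labels mapped to -1/+1).
score = alpha1 * (2 * majority(level1, X_te) - 1) + alpha2 * (
    2 * majority(level2, X_te) - 1
)
y_hat = (score > 0).astype(int)
accuracy = float(np.mean(y_hat == y_te))
print(f"alpha1={alpha1:.3f} alpha2={alpha2:.3f} test accuracy={accuracy:.3f}")
```

The importance scores here are computed on the training set, which tends to favor the second level because a random forest fits its training data closely; computing them on a held-out validation split would be a reasonable variation.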

IV. Performance Analysis

4.1. Experimental Setup

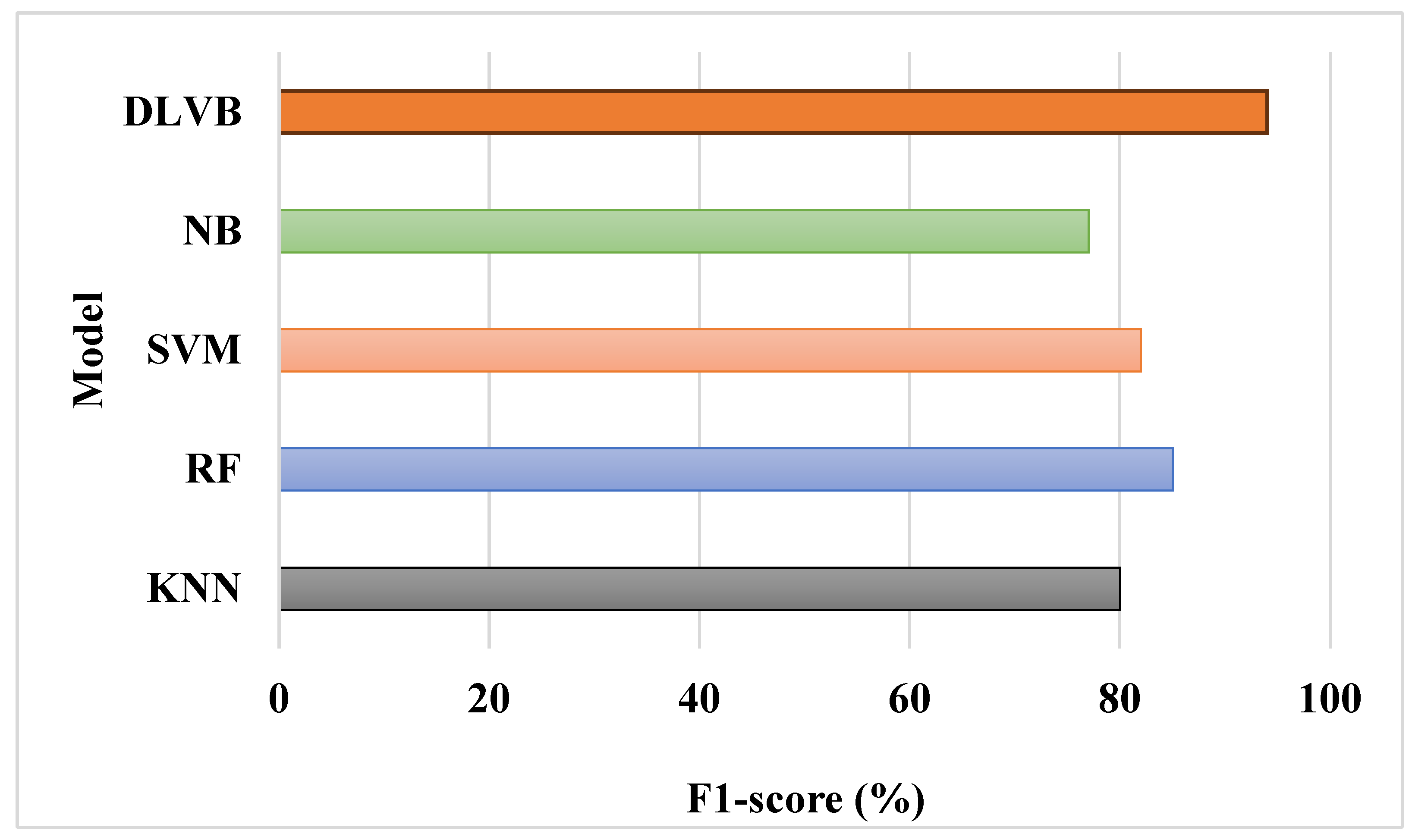

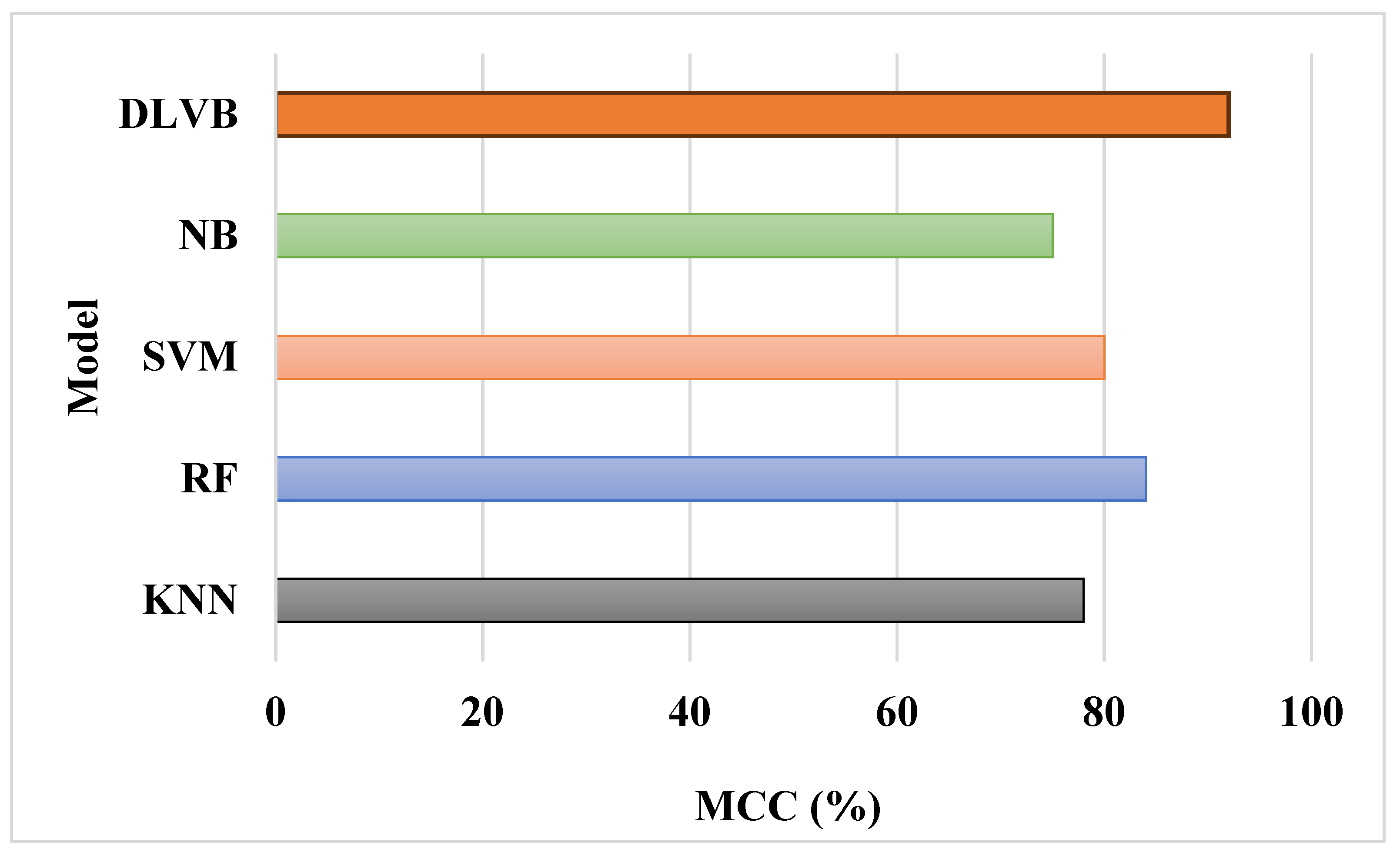

4.2. Comparative Analysis

V. Conclusions

Drawbacks and Future Scope

References

- Sadeghian, O., Oshnoei, A., Mohammadi-Ivatloo, B., Vahidinasab, V. and Anvari-Moghaddam, A., 2022. A comprehensive review on electric vehicles smart charging: Solutions, strategies, technologies, and challenges. Journal of Energy Storage, 54, p.105241.

- Hemavathi, S. and Shinisha, A., 2022. A study on trends and developments in electric vehicle charging technologies. Journal of Energy Storage, 52, p.105013.

- Shibl, M., Ismail, L. and Massoud, A., 2021. Electric vehicle charging management using machine learning considering fast charging and vehicle-to-grid operation. Energies, 14(19), p.6199.

- Shibl, M., Ismail, L. and Massoud, A., 2020. Machine learning-based management of electric vehicles charging: Towards highly-dispersed fast chargers. Energies, 13(20), p.5429.

- Mazhar, T., Asif, R.N., Malik, M.A., Nadeem, M.A., Haq, I., Iqbal, M., Kamran, M. and Ashraf, S., 2023. Electric vehicle charging system in the smart grid using different machine learning methods. Sustainability, 15(3), p.2603.

- Ullah, I., Liu, K., Yamamoto, T., Zahid, M. and Jamal, A., 2023. Modeling of machine learning with SHAP approach for electric vehicle charging station choice behavior prediction. Travel Behaviour and Society, 31, pp.78-92.

- Ullah, I., Liu, K., Yamamoto, T., Shafiullah, M. and Jamal, A., 2023. Grey wolf optimizer-based machine learning algorithm to predict electric vehicle charging duration time. Transportation Letters, 15(8), pp.889-906.

- Shahriar, S., Al-Ali, A.R., Osman, A.H., Dhou, S. and Nijim, M., 2021. Prediction of EV charging behavior using machine learning. IEEE Access, 9, pp.111576-111586.

- Ullah, I., Liu, K., Yamamoto, T., Zahid, M. and Jamal, A., 2022. Prediction of electric vehicle charging duration time using ensemble machine learning algorithm and Shapley additive explanations. International Journal of Energy Research, 46(11), pp.15211-15230.

- Adil, M., Ali, J., Ta, Q.T.H., Attique, M. and Chung, T.S., 2020. A reliable sensor network infrastructure for electric vehicles to enable dynamic wireless charging based on machine learning techniques. IEEE Access, 8, pp.187933-187947.

- McClone, G., Ghosh, A., Khurram, A., Washom, B. and Kleissl, J., 2023. Hybrid Machine Learning Forecasting for Online MPC of Workplace Electric Vehicle Charging. IEEE Transactions on Smart Grid.

- Liu, Y., Francis, A., Hollauer, C., Lawson, M.C., Shaikh, O., Cotsman, A., Bhardwaj, K., Banboukian, A., Li, M., Webb, A. and Asensio, O.I., 2023. Reliability of electric vehicle charging infrastructure: A cross-lingual deep learning approach. Communications in Transportation Research, 3, p.100095.

- Alshammari, A. and Chabaan, R.C., 2023. Metaheuristic optimization-based Ensemble machine learning model for designing Detection Coil with prediction of electric vehicle charging time. Sustainability, 15(8), p.6684.

- Tu, R., Gai, Y.J., Farooq, B., Posen, D. and Hatzopoulou, M., 2020. Electric vehicle charging optimization to minimize marginal greenhouse gas emissions from power generation. Applied Energy, 277, p.115517.

- Sun, B., 2021. A multi-objective optimization model for fast electric vehicle charging stations with wind, PV power, and energy storage. Journal of Cleaner Production, 288, p.125564.

| Component | Specification |
|---|---|
| Processor Model | Intel Core i7-1260P |
| CPU Type | 12-Core Architecture |
| Brand | Aspire 3 |
| Memory (RAM) | 64 GB |
| Clock Speed | 2.1 GHz |
| Operating System | Windows 11 Home |
| L3 Cache Size | 18 MB |
| Software | Python 3.10, Anaconda Spyder |

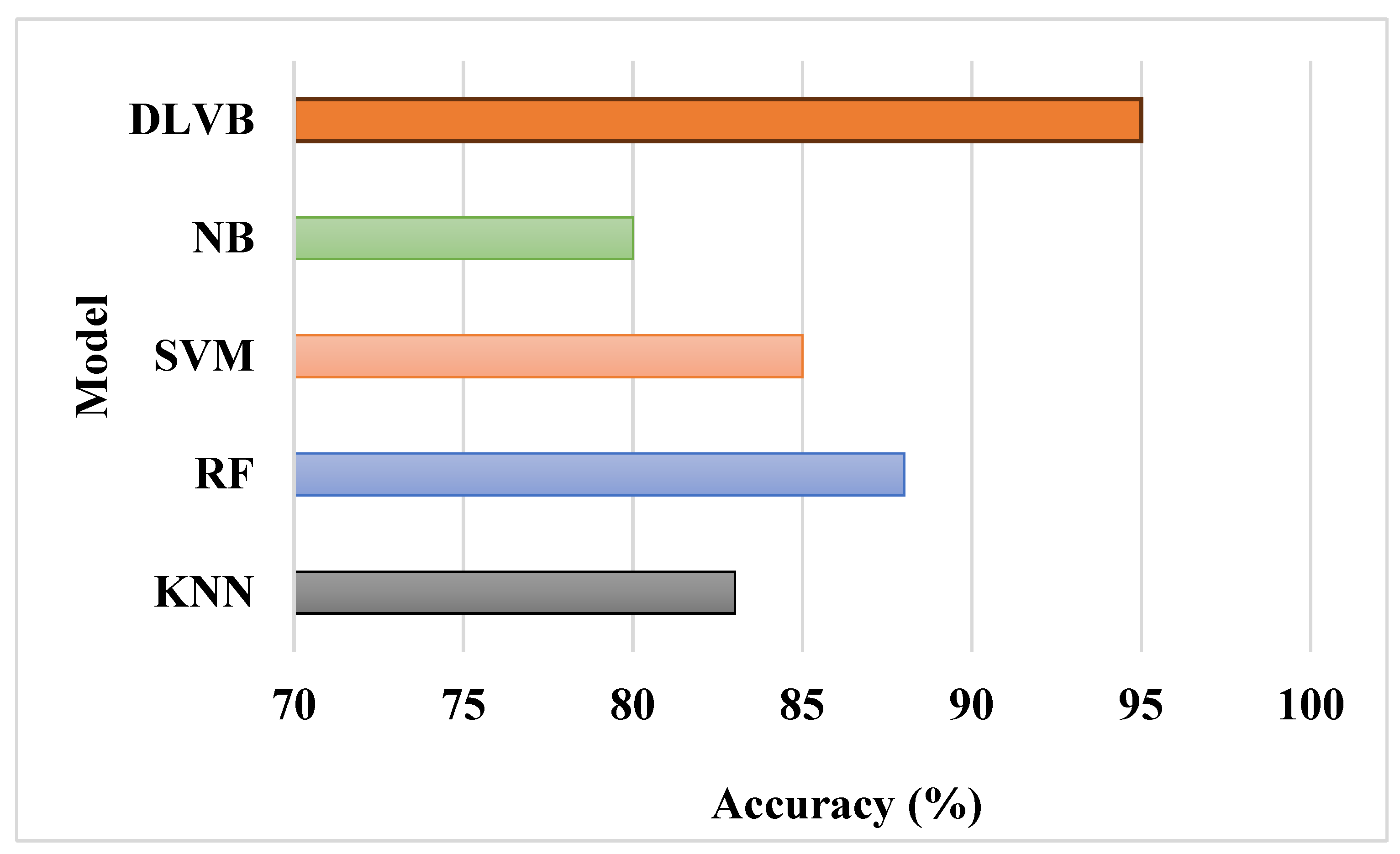

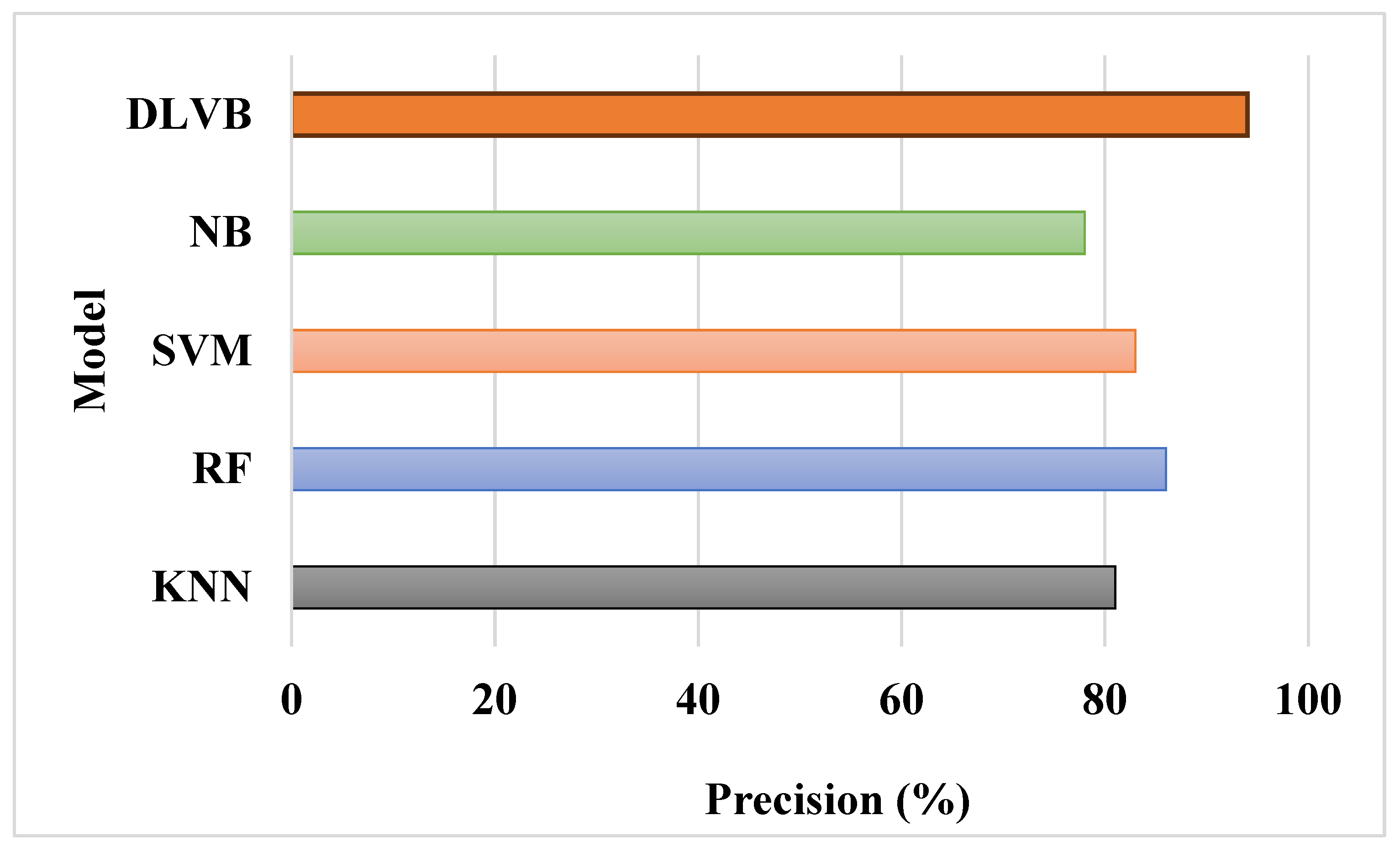

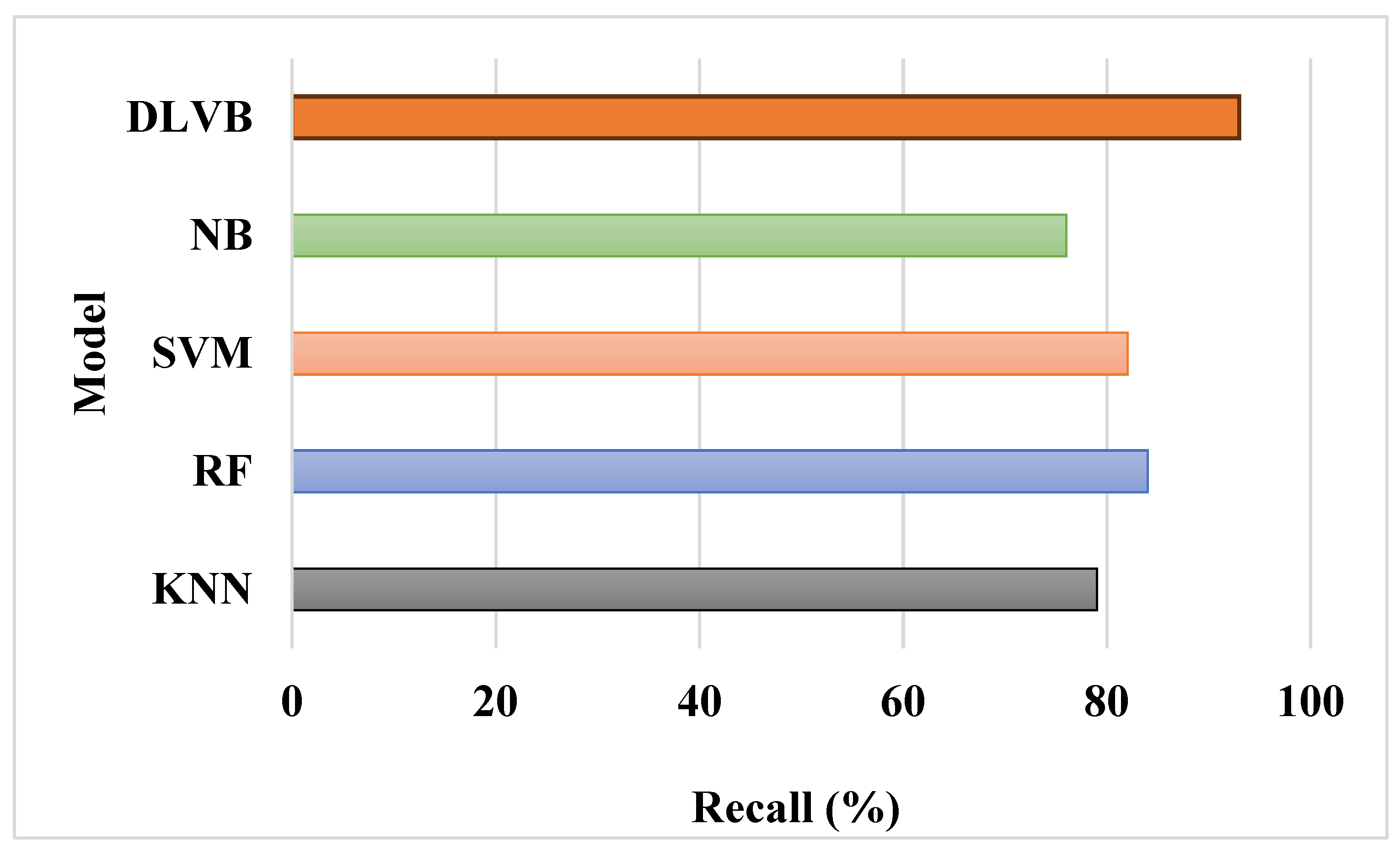

| Model | Accuracy (%) | Precision (%) | Recall (%) | F1-score (%) | MCC (%) |
|---|---|---|---|---|---|
| KNN | 83 | 81 | 79 | 80 | 78 |
| RF | 88 | 86 | 84 | 85 | 84 |
| SVM | 85 | 83 | 82 | 82 | 80 |
| NB | 80 | 78 | 76 | 77 | 75 |
| DLVB | 95 | 94 | 93 | 94 | 92 |
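
For reference, the five metrics in the table above can all be derived from a binary confusion matrix. The sketch below shows the standard definitions on toy labels; the function name `binary_metrics` and the data are hypothetical, and the values are returned as fractions. MCC naturally lies in [-1, 1], so the percentage figures in the table are presumably that value multiplied by 100.

```python
import math


def binary_metrics(y_true, y_pred, positive=1):
    """Accuracy, precision, recall, F1, and MCC from binary labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    tn = sum(t != positive and p != positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)

    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return {
        "accuracy": (tp + tn) / len(pairs),
        "precision": precision,
        "recall": recall,
        "f1": f1,
        "mcc": mcc,
    }


# Toy example: 3 true positives, 3 true negatives, 1 false positive, 1 false negative
y_true = [1, 1, 1, 1, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 0, 0, 0, 1]
metrics = binary_metrics(y_true, y_pred)
print(metrics)
```

In this toy case accuracy, precision, recall, and F1 are all 0.75 while MCC is 0.5, which shows that MCC can sit well below the other scores even on balanced data.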

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).