Submitted:

10 January 2025

Posted:

13 January 2025

Abstract

Keywords:

1. Introduction

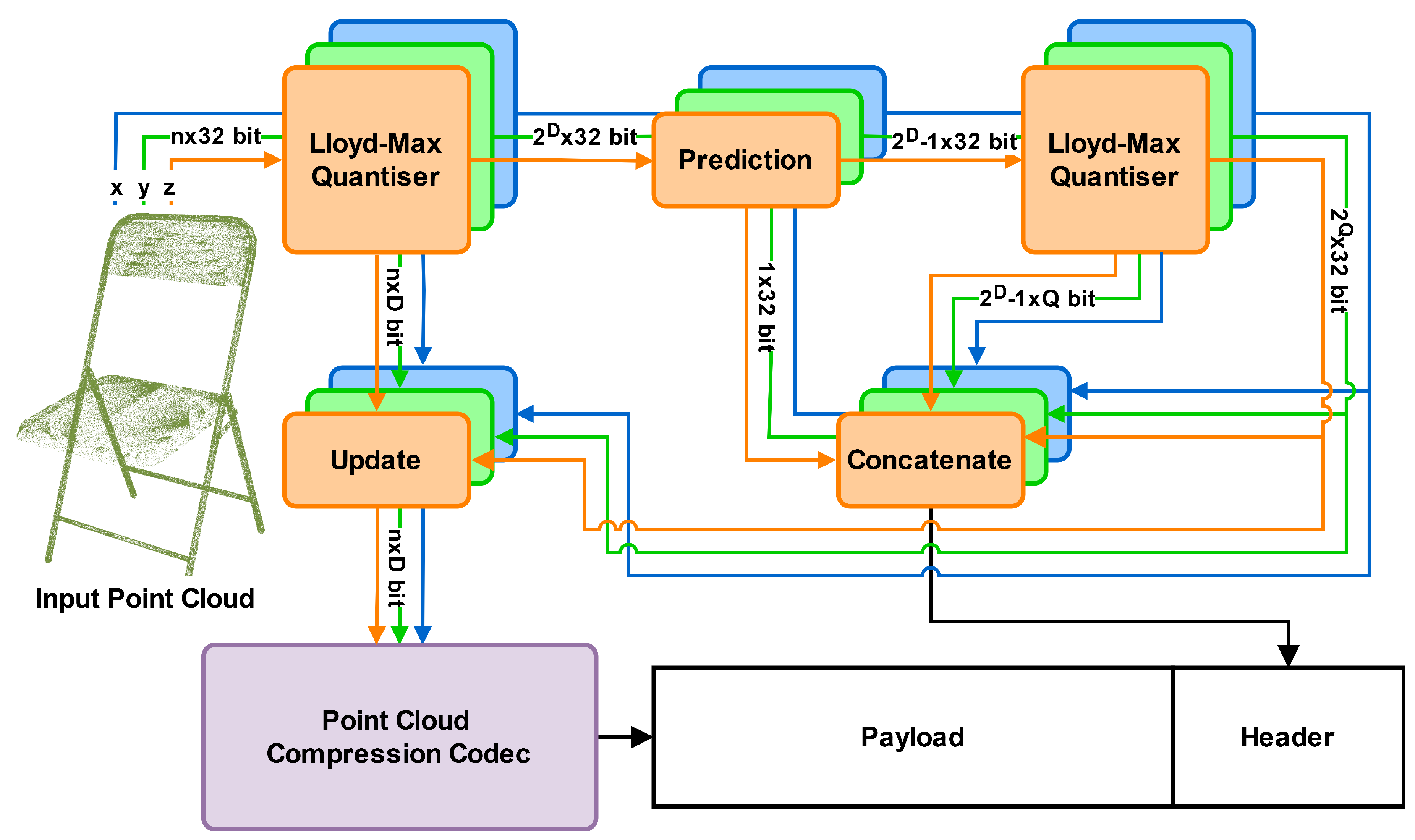

- We devised a novel approach that allows for non-uniform voxelisation in point cloud compression. The resulting method is based on Lloyd-Max quantisation and minimises the Euclidean error.

2. Background Knowledge

- Uniform: The uniform quantiser is popular due to its simplicity, and it has been shown to be optimal for some distributions. Given a series of values in a bounded range, the reconstruction values are spaced evenly across that range, with N denoting the number of quantisation levels. The decision boundaries then lie midway between adjacent reconstruction levels.

- Lloyd-Max: The Lloyd-Max quantiser adapts to the distribution of the input data. When the free parameter of the optimisation problem is set to 0, the problem reduces to minimising the mean squared error, which can be solved through two conditions: each decision boundary lies midway between its two adjacent reconstruction levels, and each reconstruction level is the mean of the data falling in its bin. These conditions can be applied iteratively. We start with a uniform quantiser, then repeatedly update the boundaries and reconstruction levels according to these rules until the average variance in the bins no longer decreases.
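The two quantisers described in this section can be illustrated with a minimal one-dimensional sketch. It is not the implementation used in this work: the function names (`uniform_quantiser`, `quantise`, `lloyd_max`), the use of NumPy, the iteration cap, and the tolerance are all assumptions made for illustration. The stopping rule checks the mean squared error, which stands in here for the average in-bin variance.

```python
import numpy as np

def uniform_quantiser(x_min, x_max, n_levels):
    """Evenly spaced reconstruction levels on [x_min, x_max] (illustrative helper)."""
    step = (x_max - x_min) / n_levels
    levels = x_min + (np.arange(n_levels) + 0.5) * step
    # Decision boundaries lie midway between adjacent reconstruction levels.
    boundaries = 0.5 * (levels[:-1] + levels[1:])
    return boundaries, levels

def quantise(x, boundaries, levels):
    """Map every value to the reconstruction level of the bin it falls in."""
    return levels[np.searchsorted(boundaries, x)]

def lloyd_max(x, n_levels, max_iter=100, tol=1e-9):
    """Iterative Lloyd-Max design for scalar data (sketch; max_iter and tol are assumed)."""
    x = np.asarray(x, dtype=float)
    # Start from a uniform quantiser over the data range.
    boundaries, levels = uniform_quantiser(x.min(), x.max(), n_levels)
    prev_mse = np.inf
    for _ in range(max_iter):
        idx = np.searchsorted(boundaries, x)
        counts = np.bincount(idx, minlength=n_levels)
        sums = np.bincount(idx, weights=x, minlength=n_levels)
        # Centroid condition: each level becomes the mean of its bin.
        # Empty bins keep their previous level.
        filled = counts > 0
        levels = levels.copy()
        levels[filled] = sums[filled] / counts[filled]
        # Midpoint condition: boundaries sit halfway between adjacent levels.
        boundaries = 0.5 * (levels[:-1] + levels[1:])
        mse = np.mean((x - quantise(x, boundaries, levels)) ** 2)
        if prev_mse - mse <= tol:
            break
        prev_mse = mse
    return boundaries, levels
```

On skewed data, the levels returned by `lloyd_max` concentrate where the samples are dense, so the resulting mean squared error is never larger than that of the uniform quantiser it starts from.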

3. Proposed Methodology

4. Results

4.1. Datasets

4.2. Experimental Details

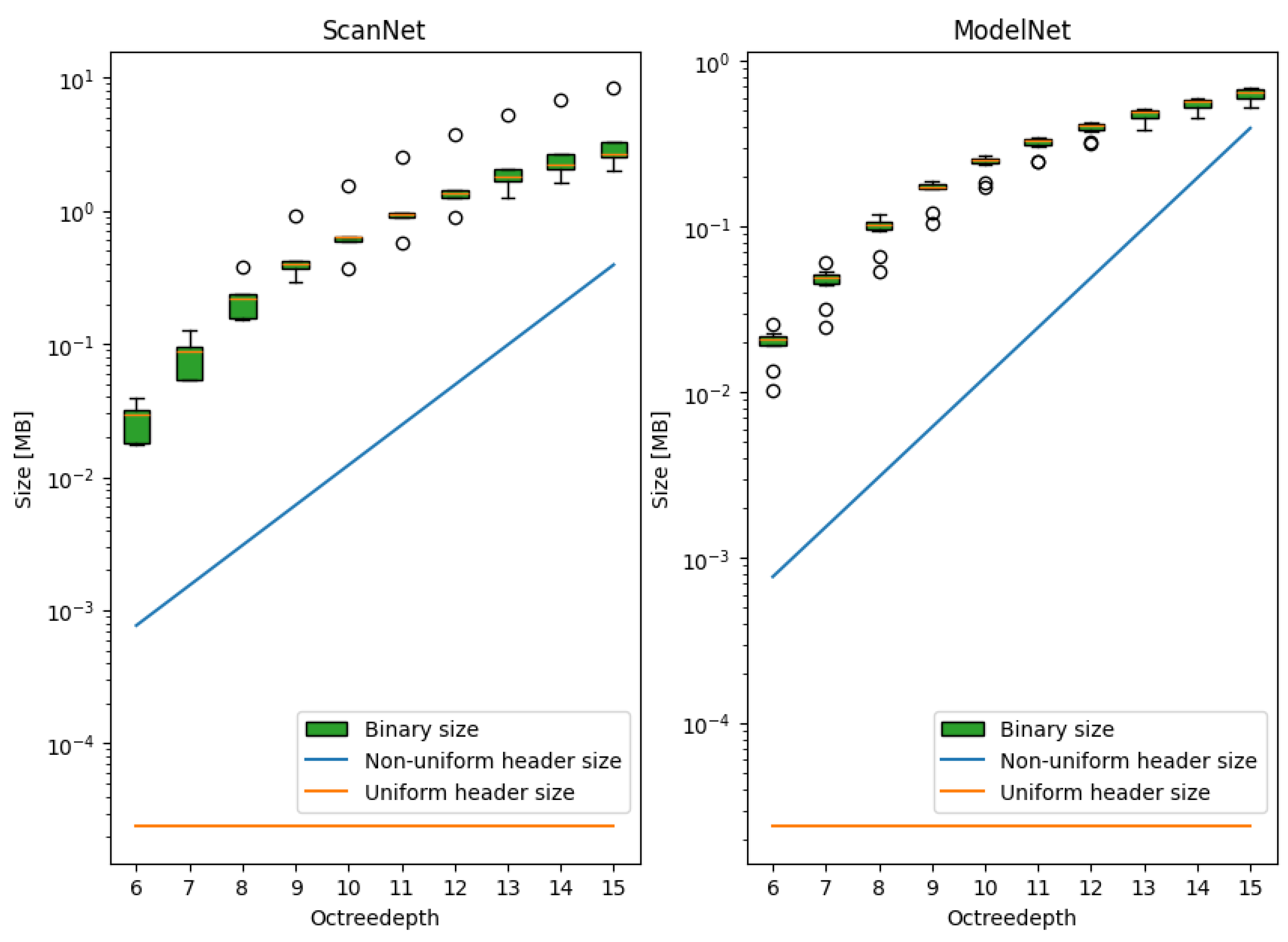

4.3. Q-Bits Tuning

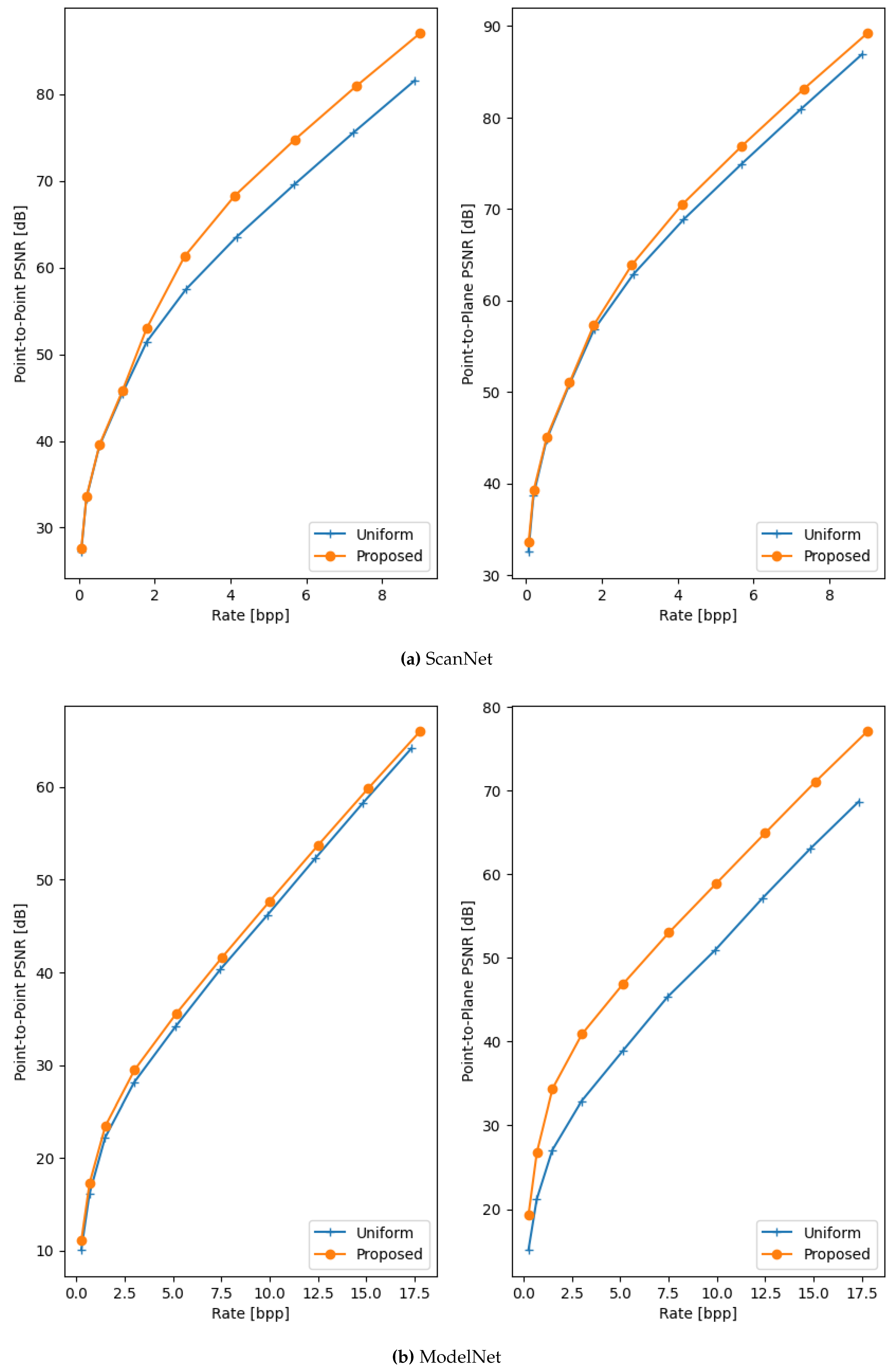

4.4. Comparison to SOTA

4.5. Impact of Density

4.6. Execution Time

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Data Availability Statement

Conflicts of Interest

References

1. Wiegand, T.; Sullivan, G.; Bjontegaard, G.; Luthra, A. Overview of the H.264/AVC video coding standard. IEEE Transactions on Circuits and Systems for Video Technology 2003, 13, 560–576.

2. Sullivan, G.J.; Ohm, J.R.; Han, W.J.; Wiegand, T. Overview of the High Efficiency Video Coding (HEVC) Standard. IEEE Transactions on Circuits and Systems for Video Technology 2012, 22, 1649–1668.

3. Bross, B.; Wang, Y.K.; Ye, Y.; Liu, S.; Chen, J.; Sullivan, G.J.; Ohm, J.R. Overview of the Versatile Video Coding (VVC) Standard and its Applications. IEEE Transactions on Circuits and Systems for Video Technology 2021, 31, 3736–3764.

4. Zhang, W.; Yang, F.; Xu, Y.; Preda, M. Standardization Status of MPEG Geometry-Based Point Cloud Compression (G-PCC) Edition 2. In Proceedings of the 2024 Picture Coding Symposium (PCS); 2024; pp. 1–5.

5. Mammou, K.; Kim, J.; Tourapis, A.M.; Podborski, D.; Flynn, D. Video and Subdivision based Mesh Coding. In Proceedings of the 2022 10th European Workshop on Visual Information Processing (EUVIP); 2022; pp. 1–6.

6. Shao, Y.; Gao, W.; Liu, S.; Li, G. Advanced Patch-Based Affine Motion Estimation for Dynamic Point Cloud Geometry Compression. Sensors 2024, 24, 3142.

7. Tohidi, F.; Paul, M.; Ulhaq, A.; Chakraborty, S. Improved Video-Based Point Cloud Compression via Segmentation. Sensors 2024, 24, 4285.

8. Dumic, E.; Bjelopera, A.; Nüchter, A. Dynamic Point Cloud Compression Based on Projections, Surface Reconstruction and Video Compression. Sensors 2022, 22, 197.

9. Wang, J.; Zhu, H.; Liu, H.; Ma, Z. Lossy Point Cloud Geometry Compression via End-to-End Learning. IEEE Transactions on Circuits and Systems for Video Technology 2021, 31, 4909–4923.

10. Quach, M.; Valenzise, G.; Dufaux, F. Learning Convolutional Transforms for Lossy Point Cloud Geometry Compression. In Proceedings of the 2019 IEEE International Conference on Image Processing (ICIP); 2019; pp. 4320–4324, arXiv:1903.08548.

11. Zhuang, L.; Tian, J.; Zhang, Y.; Fang, Z. Variable Rate Point Cloud Geometry Compression Method. Sensors 2023, 23, 5474.

12. Wang, J.; Ding, D.; Li, Z.; Feng, X.; Cao, C.; Ma, Z. Sparse Tensor-Based Multiscale Representation for Point Cloud Geometry Compression. IEEE Transactions on Pattern Analysis and Machine Intelligence 2023, 45, 9055–9071.

13. Deng, J.; An, Y.; Li, T.H.; Liu, S.; Li, G. ScanPCGC: Learning-Based Lossless Point Cloud Geometry Compression using Sequential Slice Representation. In Proceedings of the ICASSP 2024 - 2024 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP); 2024; pp. 8386–8390.

14. Akhtar, A.; Li, Z.; Van der Auwera, G. Inter-Frame Compression for Dynamic Point Cloud Geometry Coding. IEEE Transactions on Image Processing 2024, 33, 584–594.

15. Que, Z.; Lu, G.; Xu, D. VoxelContext-Net: An Octree Based Framework for Point Cloud Compression. In Proceedings of the IEEE conference on computer vision and pattern recognition, 2021, pp.

16. Fu, C.; Li, G.; Song, R.; Gao, W.; Liu, S. OctAttention: Octree-Based Large-Scale Contexts Model for Point Cloud Compression. Proceedings of the AAAI Conference on Artificial Intelligence 2022, 36, 625–633.

17. Cui, M.; Long, J.; Feng, M.; Li, B.; Kai, H. OctFormer: Efficient Octree-Based Transformer for Point Cloud Compression with Local Enhancement. Proceedings of the AAAI Conference on Artificial Intelligence 2023, 37, 470–478.

18. Song, R.; Fu, C.; Liu, S.; Li, G. Efficient Hierarchical Entropy Model for Learned Point Cloud Compression. In Proceedings of the 2023 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Vancouver, BC, Canada; 2023; pp. 14368–14377.

19. Nguyen, D.T.; Quach, M.; Valenzise, G.; Duhamel, P. Learning-Based Lossless Compression of 3D Point Cloud Geometry. In Proceedings of the ICASSP 2021 - 2021 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP); 2021; pp. 4220–4224.

20. Nguyen, D.T.; Quach, M.; Valenzise, G.; Duhamel, P. Lossless Coding of Point Cloud Geometry Using a Deep Generative Model. IEEE Transactions on Circuits and Systems for Video Technology 2021, 31, 4617–4629.

21. Qi, C.R.; Su, H.; Mo, K.; Guibas, L.J. PointNet: Deep Learning on Point Sets for 3D Classification and Segmentation. In Proceedings of the IEEE conference on computer vision and pattern recognition; 2017; pp. 652–660.

22. You, K.; Gao, P. Patch-Based Deep Autoencoder for Point Cloud Geometry Compression. In Proceedings of the ACM Multimedia Asia; 2021; pp. 1–7.

23. Huang, T.; Liu, Y. 3D Point Cloud Geometry Compression on Deep Learning. In Proceedings of the 27th ACM International Conference on Multimedia; New York, NY, USA, 2019; MM ’19; pp. 890–898.

24. Tu, C.; Takeuchi, E.; Carballo, A.; Takeda, K. Point Cloud Compression for 3D LiDAR Sensor using Recurrent Neural Network with Residual Blocks. In Proceedings of the 2019 International Conference on Robotics and Automation (ICRA); 2019; pp. 3274–3280.

25. Quach, M.; Pang, J.; Tian, D.; Valenzise, G.; Dufaux, F. Survey on Deep Learning-Based Point Cloud Compression. Frontiers in Signal Processing 2022, 2.

26. Dai, A.; Chang, A.X.; Savva, M.; Halber, M.; Funkhouser, T.; Niessner, M. ScanNet: Richly-Annotated 3D Reconstructions of Indoor Scenes. In Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI; 2017; pp. 2432–2443.

27. Wu, Z.; Song, S.; Khosla, A.; Yu, F.; Zhang, L.; Tang, X.; Xiao, J. 3D ShapeNets: A deep representation for volumetric shapes. In Proceedings of the 2015 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Boston, MA, USA, 2015; pp. 1912–1920.

28. Maglo, A.; Lavoué, G.; Dupont, F.; Hudelot, C. 3D Mesh Compression: Survey, Comparisons, and Emerging Trends. ACM Computing Surveys 2015, 47, 44–1.

29. Tian, D.; Ochimizu, H.; Feng, C.; Cohen, R.; Vetro, A. Geometric distortion metrics for point cloud compression. In Proceedings of the 2017 IEEE International Conference on Image Processing (ICIP); 2017; pp. 3460–3464.

30. Geiger, A.; Lenz, P.; Urtasun, R. Are we ready for autonomous driving? The KITTI vision benchmark suite. In Proceedings of the 2012 IEEE Conference on Computer Vision and Pattern Recognition; 2012; pp. 3354–3361.

31. Caesar, H.; Bankiti, V.; Lang, A.H.; Vora, S.; Liong, V.E.; Xu, Q.; Krishnan, A.; Pan, Y.; Baldan, G.; Beijbom, O. nuScenes: A Multimodal Dataset for Autonomous Driving. In Proceedings of the 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA; 2020; pp. 11618–11628.

32. Sun, P.; Kretzschmar, H.; Dotiwalla, X.; Chouard, A.; Patnaik, V.; Tsui, P.; Guo, J.; Zhou, Y.; Chai, Y.; Caine, B.; et al. Scalability in Perception for Autonomous Driving: Waymo Open Dataset. 2020, arXiv:1912.04838.

33. Armeni, I.; Sax, S.; Zamir, A.R.; Savarese, S. Joint 2D-3D-Semantic Data for Indoor Scene Understanding. 2017, arXiv:1702.01105.

| Q-bits | ScanNet D1 BD-rate | ScanNet D2 BD-rate | ModelNet D1 BD-rate | ModelNet D2 BD-rate |
|---|---|---|---|---|
| 2 | -11.11% | -5.51% | -5.66% | -25.05% |
| 3 | -12.04% | -6.35% | -8.20% | -38.20% |
| 4 | -12.21% | -6.51% | -8.40% | -41.49% |
| 6 | -11.91% | -6.15% | -8.03% | -42.49% |
| 8 | -10.76% | -4.90% | -6.82% | -41.71% |
| 12 | 5.92% | 12.61% | 9.90% | -28.94% |

| Data | Class | Uniform Rate | Uniform D1 PSNR | Uniform D2 PSNR | Proposed Rate | Proposed D1 PSNR | Proposed D2 PSNR | BD-rate gain D1 (%) | BD-rate gain D2 (%) |
|---|---|---|---|---|---|---|---|---|---|
| ScanNet | | 3.25 | 54.45 | 59.80 | 3.27 | 57.18 | 60.98 | -12.20 | -6.52 |
| ModelNet | bathtub | 7.60 | 36.18 | 40.73 | 7.63 | 37.39 | 44.85 | -8.40 | -26.96 |
| | bed | 7.41 | 32.56 | 37.81 | 7.53 | 33.83 | 44.54 | -7.41 | -38.93 |
| | chair | 5.65 | 39.79 | 45.12 | 5.72 | 40.98 | 52.20 | -9.52 | -46.88 |
| | desk | 7.51 | 34.20 | 39.13 | 7.60 | 35.90 | 49.61 | -10.15 | -50.86 |
| | dresser | 6.87 | 37.68 | 41.59 | 6.99 | 39.95 | 54.68 | -13.67 | -58.71 |
| | monitor | 5.63 | 45.59 | 51.54 | 5.74 | 46.97 | 64.25 | -8.60 | -63.83 |
| | nightstand | 7.71 | 40.19 | 44.36 | 7.81 | 41.74 | 48.78 | -9.45 | -26.87 |
| | sofa | 7.60 | 30.15 | 37.08 | 7.68 | 30.79 | 40.11 | -3.64 | -20.22 |
| | table | 7.61 | 39.16 | 43.48 | 7.75 | 40.57 | 47.58 | -8.73 | -26.17 |
| | toilet | 7.61 | 40.95 | 45.23 | 7.70 | 41.94 | 48.28 | -6.69 | -22.13 |
| Average | | 5.18 | 46.05 | 51.20 | 5.24 | 48.09 | 55.23 | -10.41 | -22.34 |

| Dataset | Average points per frame |
|---|---|
| ScanNet [26] | 3.3M |
| KITTI [30] | 120K |
| nuScenes [31] | 34K |
| Waymo Open Dataset [32] | 177K |
| S3DIS [33] (Room / Area) | 1M / 45M |

| # Points | Q-bits | Uniform Rate | Uniform D1 PSNR | Uniform D2 PSNR | Proposed Rate | Proposed D1 PSNR | Proposed D2 PSNR | BD-rate gain D1 (%) | BD-rate gain D2 (%) |
|---|---|---|---|---|---|---|---|---|---|
| 50 000 | 2 | 12.60 | 37.18 | 42.00 | 13.46 | 39.03 | 47.62 | -3.31 | -19.55 |
| 100 000 | 3 | 11.18 | 37.18 | 42.00 | 11.83 | 38.97 | 48.85 | -4.76 | -27.32 |
| 250 000 | 4 | 9.49 | 37.19 | 42.04 | 9.85 | 38.74 | 49.39 | -6.16 | -34.21 |
| 500 000 | 4 | 8.30 | 37.18 | 42.02 | 8.50 | 38.61 | 49.37 | -7.40 | -38.05 |
| 1 000 000 | 4 | 7.24 | 37.18 | 42.02 | 7.35 | 38.54 | 49.33 | -8.40 | -41.49 |
| 4 000 000 | 5 | 5.38 | 37.19 | 42.04 | 5.42 | 38.49 | 49.58 | -10.51 | -49.78 |

| Octree depth | ScanNet Uniform | ScanNet Proposed | ScanNet Increase | ModelNet Uniform | ModelNet Proposed | ModelNet Increase |
|---|---|---|---|---|---|---|
| 6 | 5194.60 | 5613.99 | 8.07% | 1804.75 | 2180.99 | 8.85% |
| 7 | 5190.60 | 5508.96 | 6.13% | 1867.25 | 2059.26 | 10.28% |
| 8 | 5143.20 | 5543.84 | 7.79% | 1909.05 | 2090.62 | 9.51% |
| 9 | 5257.00 | 5678.42 | 8.02% | 2111.20 | 2280.34 | 8.01% |
| 10 | 5759.20 | 6108.41 | 6.06% | 2354.25 | 2513.61 | 6.77% |
| 11 | 6593.20 | 6862.53 | 4.09% | 2603.80 | 2729.44 | 4.83% |
| 12 | 7177.60 | 7666.90 | 6.82% | 2768.40 | 2966.85 | 7.17% |
| 13 | 7903.00 | 8410.00 | 6.42% | 2989.00 | 3183.79 | 6.52% |
| 14 | 8644.40 | 9112.04 | 5.41% | 3161.90 | 3413.44 | 7.96% |
| 15 | 9239.60 | 9910.39 | 7.26% | 3454.80 | 3665.64 | 6.10% |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).