Submitted: 07 January 2025

Posted: 09 January 2025

Abstract

Keywords:

1. Introduction

2. What is AI?

2.1. Limitations of AI

2.2. AI Aspirations: Taking Over Additional Decision Space

3. Human-in-the-Loop Decision-Making

4. Quantum Probability Theory for Decision-Making

4.1. Ordering Effects That Affect Decision-Making

4.2. Categorization-Decision

4.3. Interference Effects

5. How Quantum Cognition Can Improve Human-in-the-AI-Loop Decision-Making

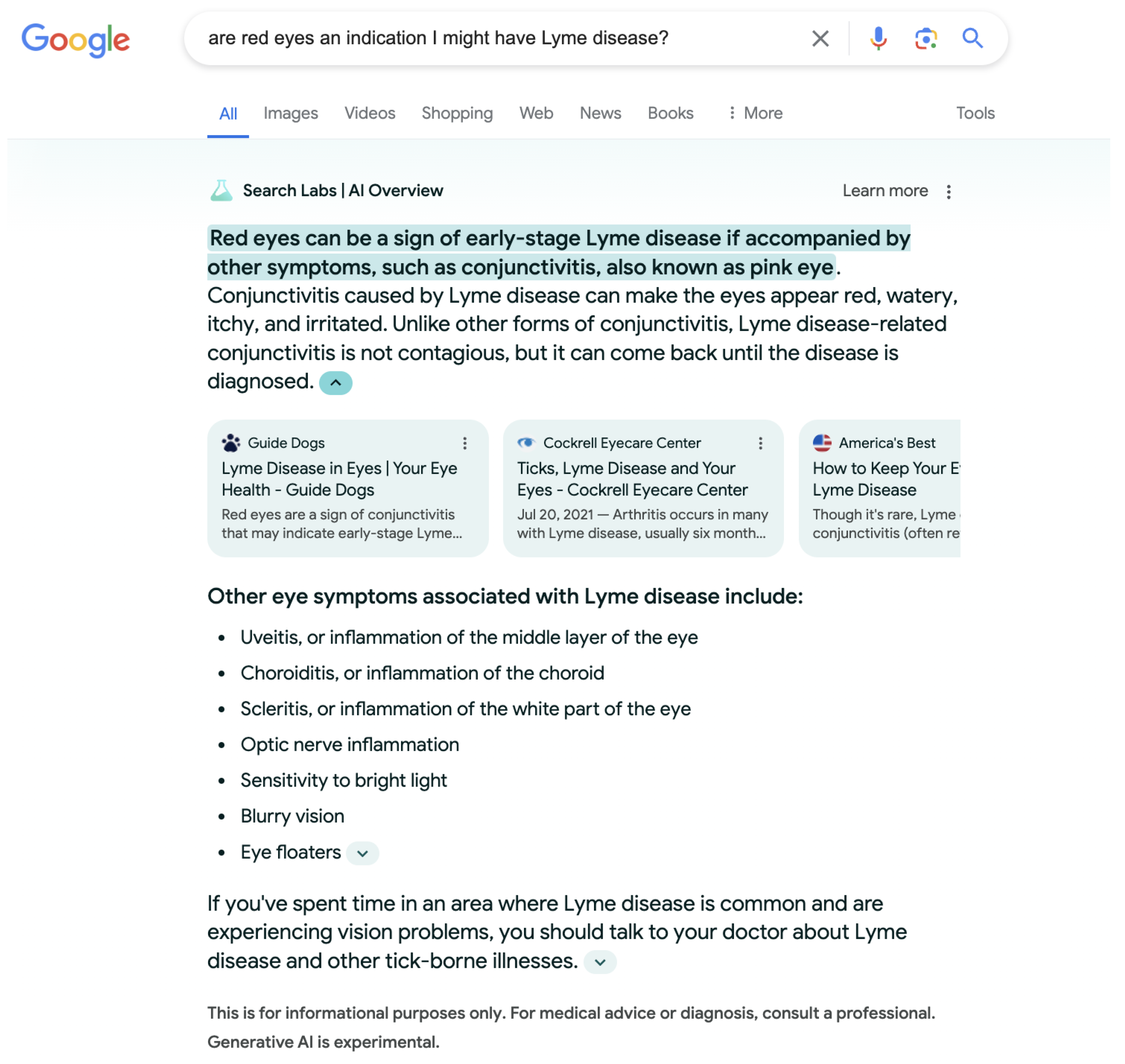

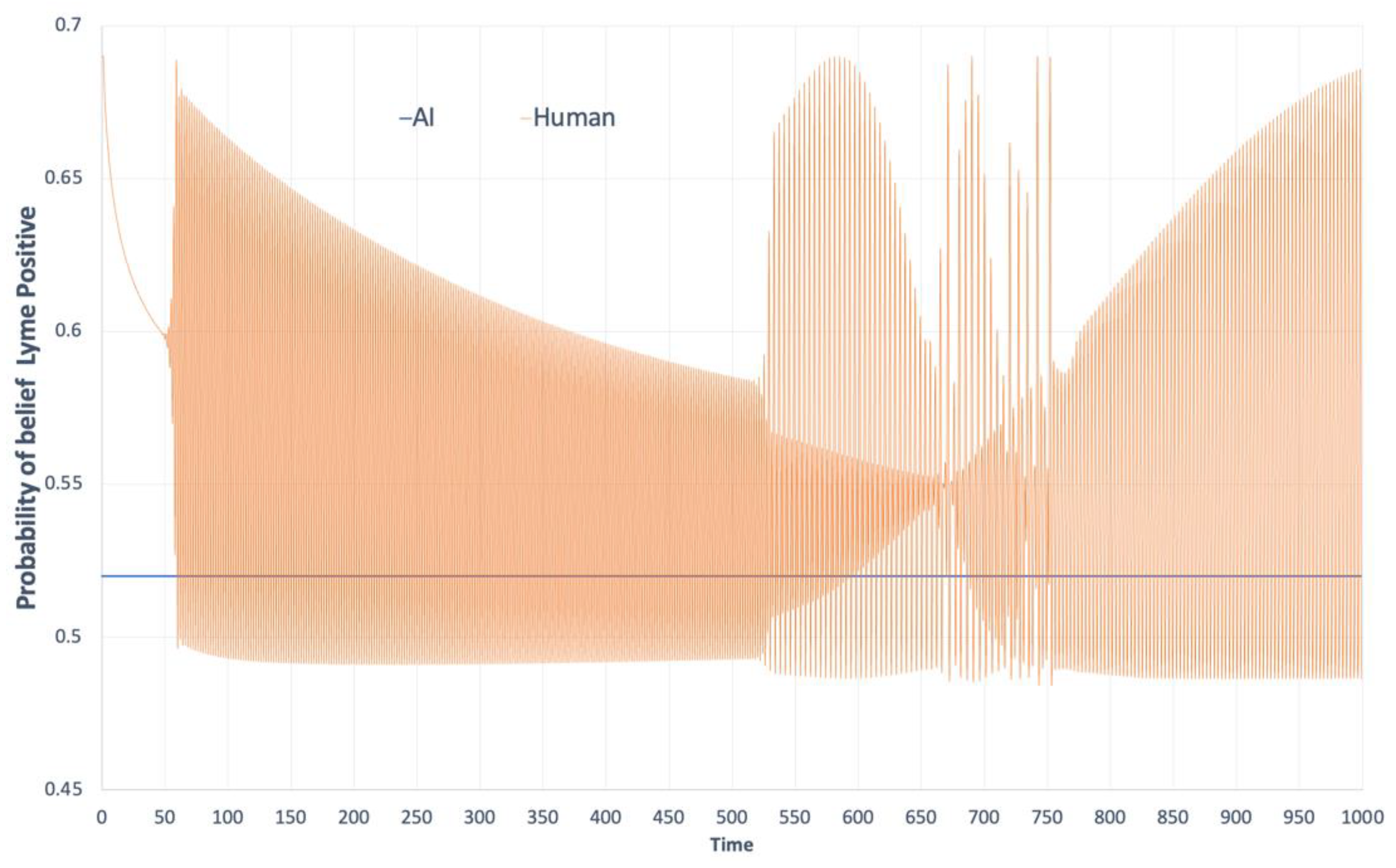

HITL-AI Example

6. Discussion

7. Future Research

8. Summary

Author Contributions

Funding

Institutional Review Board Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| AI | Artificial Intelligence |
| CPT | Classical Probability Theory |
| DSS | Decision Support System |
| HITL | Human-in-the-Loop |
| LLM | Large Language Model |
| QDT | Quantum Decision Theory |
| QPT | Quantum Probability Theory |

| Human Roles | Reason for adding a Human-in-the-Loop |
|---|---|
| 1. Corrective Roles | Improve system performance, including error, situational, and bias correction |
| 2. Reliance Roles | Act as a failure mode or alternatively stop the whole system from working under an emergency |
| 3. Justificatory Roles | Increase the system’s legitimacy by providing reasoning for decisions |
| 4. Dignitary Roles | Protect the dignity of the humans affected by the decision |
| 5. Accountability Roles | Allocate liability or censure |
| 6. Stand-in Roles | Act as proof that something has been done or stand in for other humans and human values |
| 7. Friction Roles | Slow the pace of automated decision-making |
| 8. Warm Body Roles | Preserve human jobs |
| 9. Interface Roles | Link the systems to human users |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).