4. Testing, Results and Discussion

4.1. Evaluation Protocol, Ground Truth, and Reproducibility

For each dataset $d$, we define a ground-truth artefact set $G_d$ containing the adjudicated relevant artefacts associated with the investigated scenario. Ground truth was established by combining: (i) published challenge solutions or organiser write-ups where available, (ii) manual verification by a GCFE/GCFA-guided examiner, (iii) cross-source corroboration using Registry, volatile-memory, and timeline evidence, and (iv) duplicate removal and normalisation at the artefact-instance level so that semantically identical findings reported through different tools are counted only once. The artefacts returned by a method $m$ on dataset $d$ are denoted by $A_{m,d}$ and are scored at the artefact-instance level rather than only at the category level. To make the evaluation statistically interpretable, each WinRegRL benchmark was repeated over 10 independent runs per dataset using different random seeds for the local refinement stage, yielding 40 WinRegRL runs in total across the four datasets. Hyperparameters, reward weights, discount factor, and support thresholds were frozen before final benchmarking and were not re-tuned per dataset. Because the main planning stage is deterministic once the transition model $P$ and the reward function $R$ are fixed, the observed variance arises primarily from the bounded $\epsilon$-greedy local refinement stage and from the timing variability of external parsing utilities.
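The duplicate-removal and normalisation step in (iv) can be illustrated with a minimal sketch. The `Artefact` fields and the canonicalisation rules below (case folding, separator unification, whitespace collapsing) are assumptions chosen for illustration; the paper does not specify the exact normalisation rules used during adjudication.

```python
from typing import Iterable, NamedTuple


class Artefact(NamedTuple):
    """Hypothetical artefact-instance record (field names are assumptions)."""
    hive: str     # source hive or evidence source
    path: str     # key path
    value: str    # value name
    content: str  # extracted content


def normalise(a: Artefact) -> Artefact:
    """Canonicalise case, path separators, and whitespace (illustrative rules)."""
    return Artefact(
        hive=a.hive.strip().upper(),
        path=a.path.strip().replace("/", "\\").rstrip("\\").lower(),
        value=a.value.strip().lower(),
        content=" ".join(a.content.split()),
    )


def deduplicate(findings: Iterable[Artefact]) -> set:
    """Collapse findings that normalise to the same artefact instance."""
    return {normalise(a) for a in findings}


# Two tools report the same Run-key entry with different formatting.
reports = [
    Artefact("NTUSER.DAT", "Software\\Microsoft\\Windows\\CurrentVersion\\Run",
             "Updater", "C:\\Temp\\u.exe"),
    Artefact("ntuser.dat", "Software/Microsoft/Windows/CurrentVersion/Run/",
             "updater", "C:\\Temp\\u.exe "),
]
unique = deduplicate(reports)
```

Under these assumed rules, the two reports collapse to a single artefact instance, so the finding is counted once regardless of which tool produced it.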

4.2. Controlled Comparative Design

To avoid an unfair comparison between a fully automated framework and interactive forensic utilities, we separate the comparative evaluation into two settings. In the first setting, WinRegRL is compared with FTK Registry Viewer and KAPE under analyst-assisted scripted workflows using the same forensic inputs, the same hardware environment, and the same stopping criteria. In the second setting, WinRegRL output is compared with examiner-led investigations conducted by certified analysts under a controlled time budget and with access to the same evidential inputs. The human study protocol was as follows: three certified examiners (two GCFE and one GCFA, each with more than five years of Windows forensic casework) completed the same four case tasks in counter-balanced order, with a 90-minute limit per case, no collaboration between participants, and access to the same acquired Registry, memory, and timeline inputs made available to the automated baselines. Their outputs were scored against the same adjudicated benchmark set used for the automated methods.

4.3. Formal Metric Definition

We define the accuracy and coverage measures formally. At the artefact-instance level, a true positive ($TP$) is an artefact returned by a method that is present in the adjudicated benchmark set $G_d$ for the investigated case $d$; a false positive ($FP$) is a returned artefact not supported by the benchmark after adjudication; and a false negative ($FN$) is a benchmark artefact missed by the method. Because the experiment scores retrieved evidence items rather than an exhaustive universe of irrelevant artefacts, true negatives ($TN$) are not informative, and accuracy is therefore not used as the principal headline metric. Artefact-level precision is computed as

$$\mathrm{Precision} = \frac{TP}{TP + FP},$$

artefact-level recall (coverage) is computed as

$$\mathrm{Recall} = \frac{TP}{TP + FN},$$

and the harmonic mean is

$$F_1 = \frac{2 \cdot \mathrm{Precision} \cdot \mathrm{Recall}}{\mathrm{Precision} + \mathrm{Recall}}.$$

For completeness, when a closed candidate list of artefacts is available for a case, the corresponding case-bounded accuracy may be computed as

$$\mathrm{Accuracy} = \frac{TP + TN}{TP + FP + TN + FN}.$$

Precision, recall, and F1-score are judged more appropriate than accuracy for retrieval-oriented forensic tasks. In addition, time-to-completion, time-to-first-relevant-artefact, and category-level recall were tracked during benchmarking. This formalisation ensures that evaluation is not self-referential and that high coverage refers only to recall against the adjudicated benchmark for the evaluated case, rather than to a universal claim of completeness in arbitrary real-world incidents.
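As a worked illustration of these definitions, the following sketch computes artefact-level precision, recall, and F1 from a returned set and a benchmark set. The function name and the artefact identifiers are hypothetical; artefact instances are assumed to be hashable and already normalised.

```python
def retrieval_metrics(returned: set, ground_truth: set) -> dict:
    """Artefact-level precision, recall, and F1 from two sets of instances."""
    tp = len(returned & ground_truth)   # returned and in the benchmark
    fp = len(returned - ground_truth)   # returned but not in the benchmark
    fn = len(ground_truth - returned)   # in the benchmark but missed
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {"tp": tp, "fp": fp, "fn": fn,
            "precision": precision, "recall": recall, "f1": f1}


# Hypothetical case: four benchmark artefacts, three found, one spurious.
ground_truth = {"run_key", "userassist", "usbstor", "shimcache"}
returned = {"run_key", "userassist", "usbstor", "typed_urls"}
metrics = retrieval_metrics(returned, ground_truth)
```

In this toy case there are three true positives, one false positive, and one false negative, so precision, recall, and F1 all equal 0.75. True negatives never enter the calculation, which is why these metrics remain meaningful without enumerating every irrelevant Registry entry.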

4.4. Statistical Analysis

For WinRegRL, FTK, and KAPE, completion times were summarised as mean ± standard deviation across repeated runs, and 95% confidence intervals for precision, recall, and F1 were estimated using non-parametric bootstrap resampling (2,000 resamples) over adjudicated artefact instances. For the human-expert study, median and interquartile range were additionally retained because examiner performance is inherently heterogeneous. Pairwise differences in completion time and recall were assessed using a paired Wilcoxon signed-rank test across case-level runs, and effect sizes were summarised using Cliff’s $\delta$. This statistical layer avoids reliance on single-point claims and makes the observed benchmarking margins quantitatively interpretable.
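A minimal sketch of two of these procedures is given below: a percentile bootstrap interval for recall over benchmark artefact instances, and Cliff's delta as an effect size. It uses only the Python standard library. The percentile method, the function names, and the toy inputs are assumptions; the paper states only that a non-parametric bootstrap with 2,000 resamples was used.

```python
import random


def bootstrap_recall_ci(hits, n_resamples=2000, alpha=0.05, seed=0):
    """Percentile bootstrap CI for recall.

    hits[i] is 1 if benchmark artefact i was returned by the method, else 0.
    """
    rng = random.Random(seed)
    n = len(hits)
    # Resample benchmark instances with replacement and recompute recall.
    stats = sorted(sum(rng.choices(hits, k=n)) / n for _ in range(n_resamples))
    lower = stats[int((alpha / 2) * n_resamples)]
    upper = stats[int((1 - alpha / 2) * n_resamples) - 1]
    return lower, upper


def cliffs_delta(xs, ys):
    """Cliff's delta: P(x > y) - P(x < y) over all cross pairs."""
    greater = sum(x > y for x in xs for y in ys)
    less = sum(x < y for x in xs for y in ys)
    return (greater - less) / (len(xs) * len(ys))


# Hypothetical data: 18 of 20 benchmark artefacts recovered.
hits = [1] * 18 + [0] * 2
ci_low, ci_high = bootstrap_recall_ci(hits)

# Hypothetical completion times (minutes) for two methods on four cases.
delta = cliffs_delta([12, 15, 11, 14], [70, 64, 82, 71])
```

A delta of -1 indicates that every value in the first sample is smaller than every value in the second. For the paired signed-rank test itself, SciPy provides `scipy.stats.wilcoxon`, which could be applied to the matched case-level values.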

4.5. Human-Expert Protocol

Participating examiners were given the same input artefacts, the same case scope, and the same completion criteria. Their task was to identify and justify scenario-relevant Registry and correlated timeline artefacts. The study records the number of relevant artefacts found, unsupported artefacts reported, time to completion, and evidential justification quality.

4.6. Testing Datasets

To test WinRegRL, we selected four DFIR datasets that cover multiple Windows architectures and versions; they are summarised in Table 8. Three of them are recent datasets widely adopted in DFIR research: Magnet-CTF (2022), IGU-CTF (2024), and MemLabs-CTF (2019) [27].

Table 8. WinRegRL testing forensics datasets.


| Dataset | Description |
| --- | --- |
| Magnet-CTF 2022 [28] | Dataset developed by Magnet Forensics, comprising several forensic images released as a public service as part of Magnet CTF 2022. It contains the Registry and memory of different Windows machines (10, 8, 7, and XP). |
| IGU-CTF 2024 [29] | Dataset taken from IGUCTF-24, which includes CVE-2023-38831 Lockbit 3.0 Ransomware. It contains the Registry and memory of infected Windows machines, capturing Powershell2exe and persistence tactics. |
| MemLabs-CTF 2019 [30] | Dataset developed by MemLabs for CTF Registry and memory forensics. All labs run Windows 7; the dataset contains the Registry and memory of six different Windows 7 machines. |
| MalVol 2025 [31] | IEEE Diverse, Labelled and Detailed Malware Volatile Memory Dataset for Detection and Response Testing and Validation. Files are in .mem and .raw formats and include full system Registry and RAM dumps. |

4.7. RL Parameters and Variables

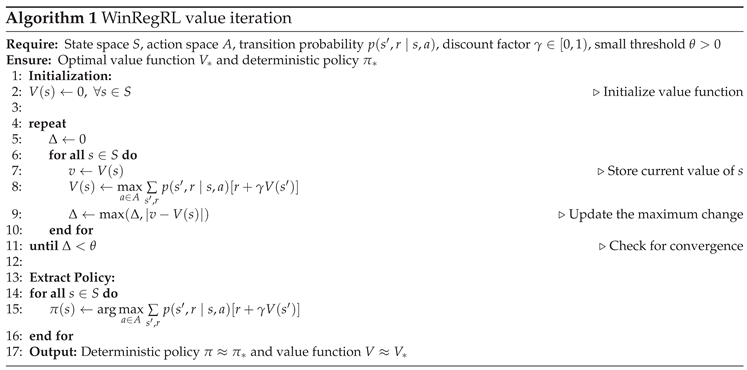

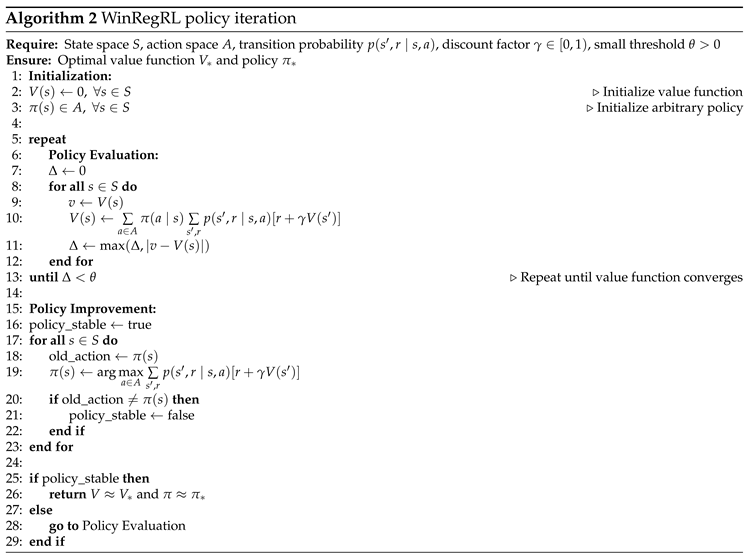

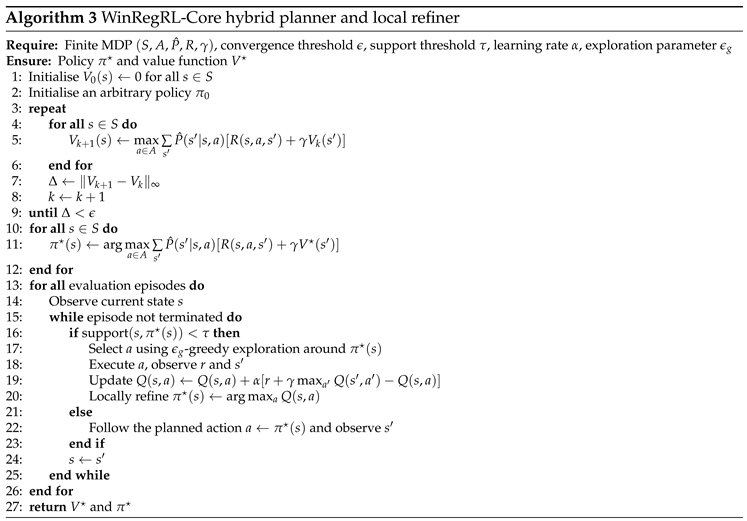

In this subsection, we report on parameters and variables that are actually active in the final solver. In particular, we focus on discount factor, planning horizon, Bellman-residual tolerance, local-support threshold, and bounded Q-learning exploration settings. Reward magnitudes are no longer described generically as "empirical" but are tied directly to the forensic utility schedule in Table 7, where high values correspond to corroborated case-critical evidence and negative values correspond to redundant or invalid investigative actions. Parameters not used by the finite-state hybrid planner are explicitly marked as removed from the final reported configuration so that the solver description remains aligned with the actual implementation.

Table 9 summarises the exact configuration used in the experiments.
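To make the roles of the discount factor and the Bellman-residual tolerance concrete, the sketch below runs value iteration on a toy three-state MDP. This is not the WinRegRL solver: the states, actions, and reward values are hypothetical and are not taken from Table 7 or Table 9. Positive rewards stand in for corroborated evidence and the negative reward for a redundant action.

```python
def value_iteration(P, R, gamma=0.9, tol=1e-6, max_iters=1000):
    """Value iteration on a finite MDP.

    P[s][a] is a list of (probability, next_state); R[s][a] is the reward.
    Iteration stops once the largest Bellman residual falls below tol.
    """
    def q(s, a, V):
        return R[s][a] + gamma * sum(p * V[s2] for p, s2 in P[s][a])

    V = {s: 0.0 for s in P}
    for _ in range(max_iters):
        residual = 0.0
        for s in P:
            if not P[s]:          # terminal state: no actions available
                continue
            best = max(q(s, a, V) for a in P[s])
            residual = max(residual, abs(best - V[s]))
            V[s] = best
        if residual < tol:
            break
    policy = {s: max(P[s], key=lambda a: q(s, a, V)) for s in P if P[s]}
    return V, policy


# Hypothetical toy MDP (all names and values are illustrative assumptions).
P = {
    "start": {"parse_run_keys": [(1.0, "persistence_found")],
              "rescan_same_hive": [(1.0, "start")]},
    "persistence_found": {"correlate_memory": [(1.0, "done")]},
    "done": {},
}
R = {
    "start": {"parse_run_keys": 5.0, "rescan_same_hive": -1.0},
    "persistence_found": {"correlate_memory": 10.0},
    "done": {},
}
V, policy = value_iteration(P, R)
```

With a discount factor of 0.9, the value of `start` converges to 5 + 0.9 × 10 = 14, and the resulting policy avoids the penalised redundant rescan. Once the transition model and rewards are fixed, this planning stage is fully deterministic.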

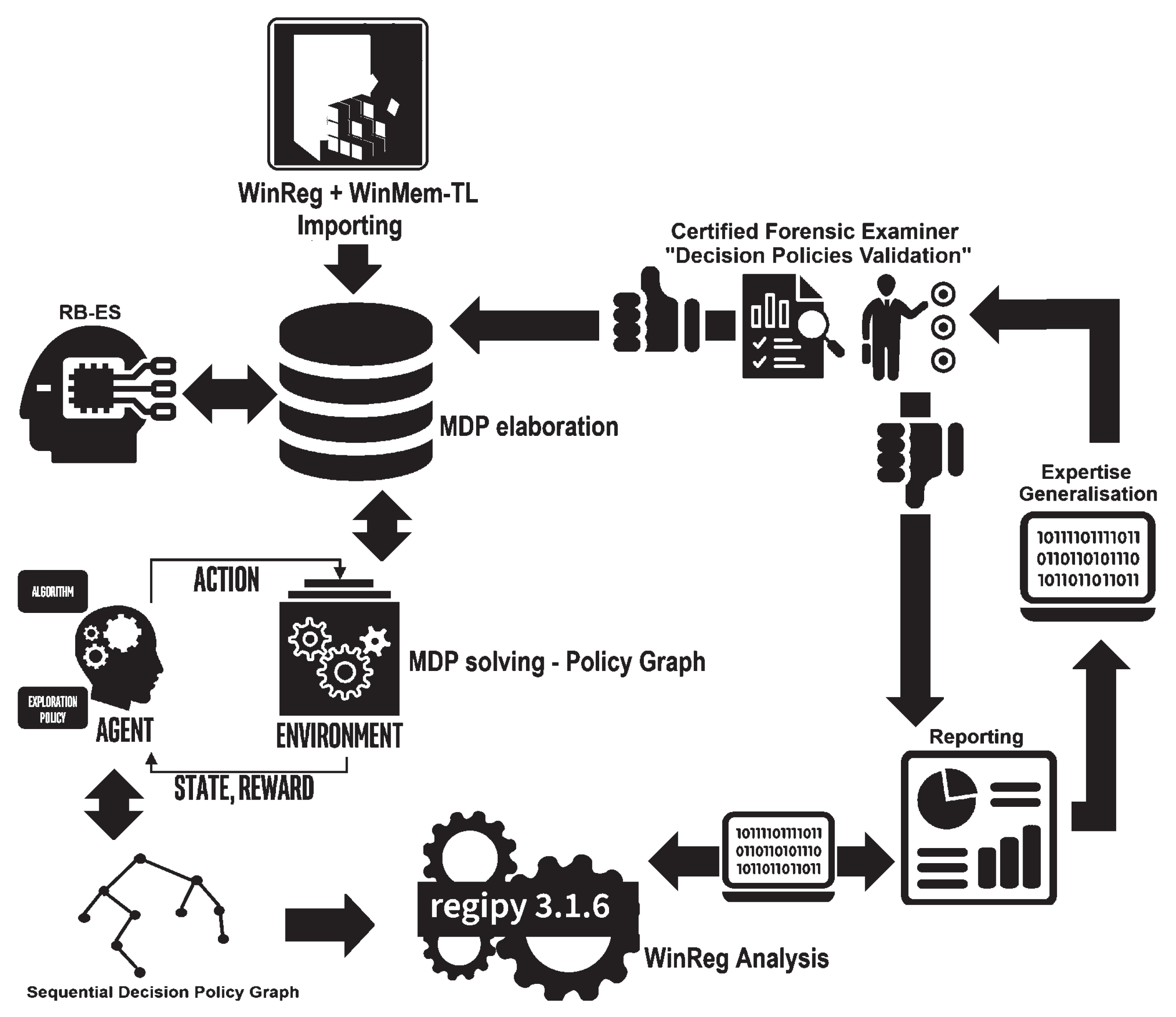

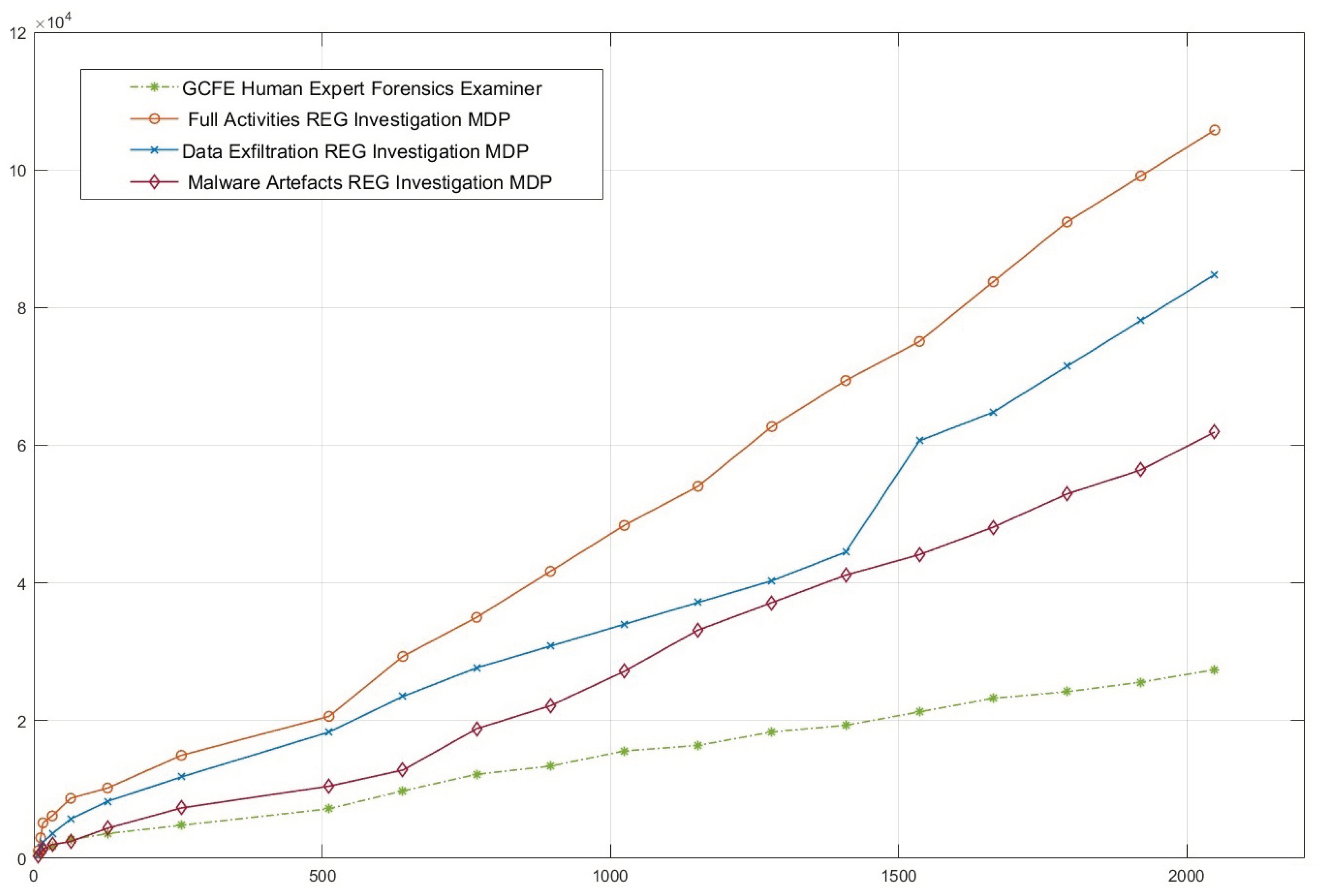

4.8. WinRegRL Results

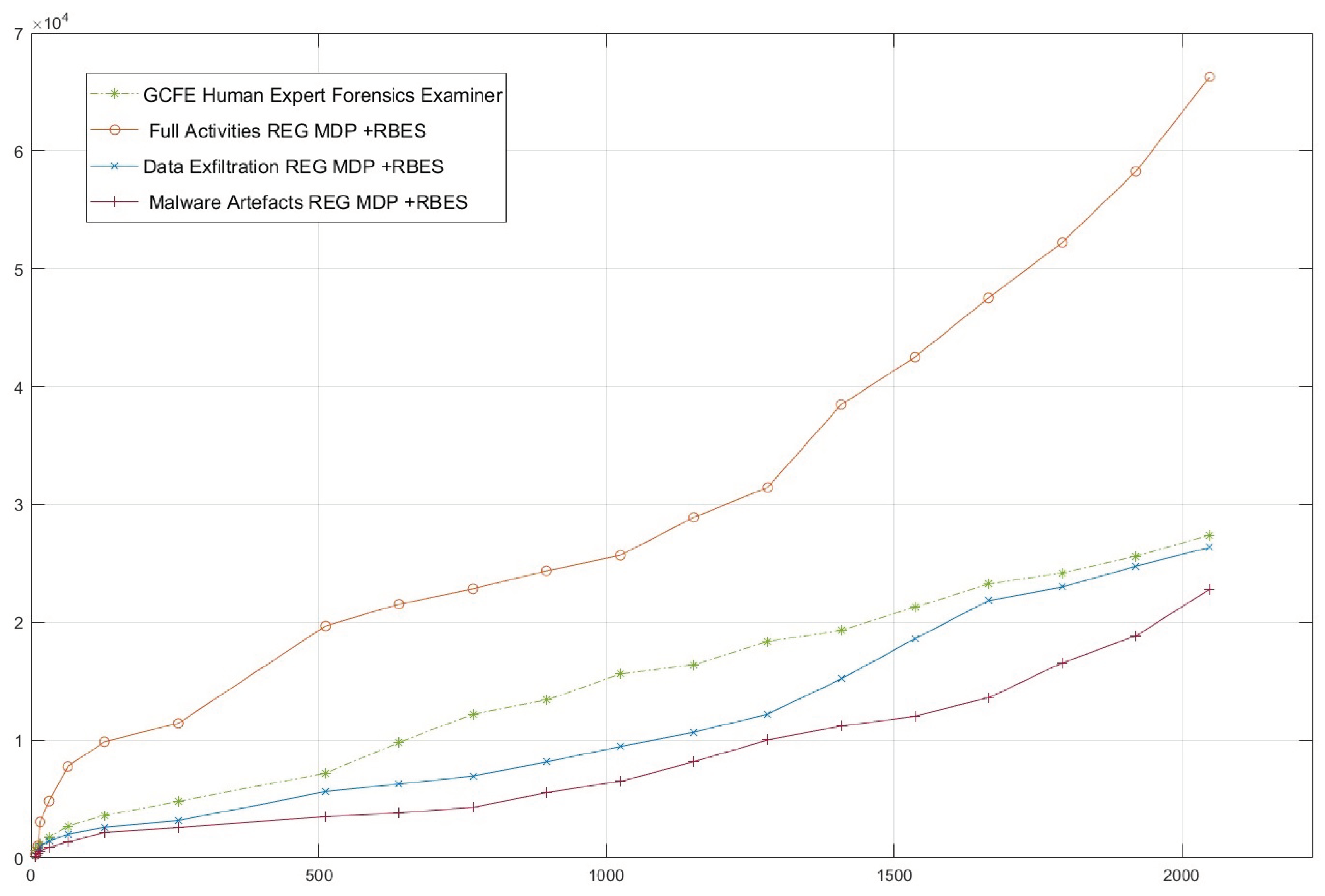

Figure 6 illustrates the performance of the WinRegRL framework under three MDP scenarios: the Full Activities REG Investigation MDP, the Data Exfiltration REG Investigation MDP, and the Malware Artefacts REG Investigation MDP. These are contrasted with the baseline time taken by a GCFE Human Expert Forensics Examiner (green dashed line), used only as a reference point. The horizontal axis represents the WR size in MB, while the vertical axis represents the time in seconds taken to run the respective MDP tasks. The results show that the Full Activities REG Investigation MDP always takes the longest, followed by the Data Exfiltration REG Investigation MDP and then the Malware Artefacts REG Investigation MDP, reflecting the differing task complexities. Notably, the GCFE Human Expert shows a linear and considerably lower time requirement on smaller datasets but exhibits scalability limitations on larger registries.

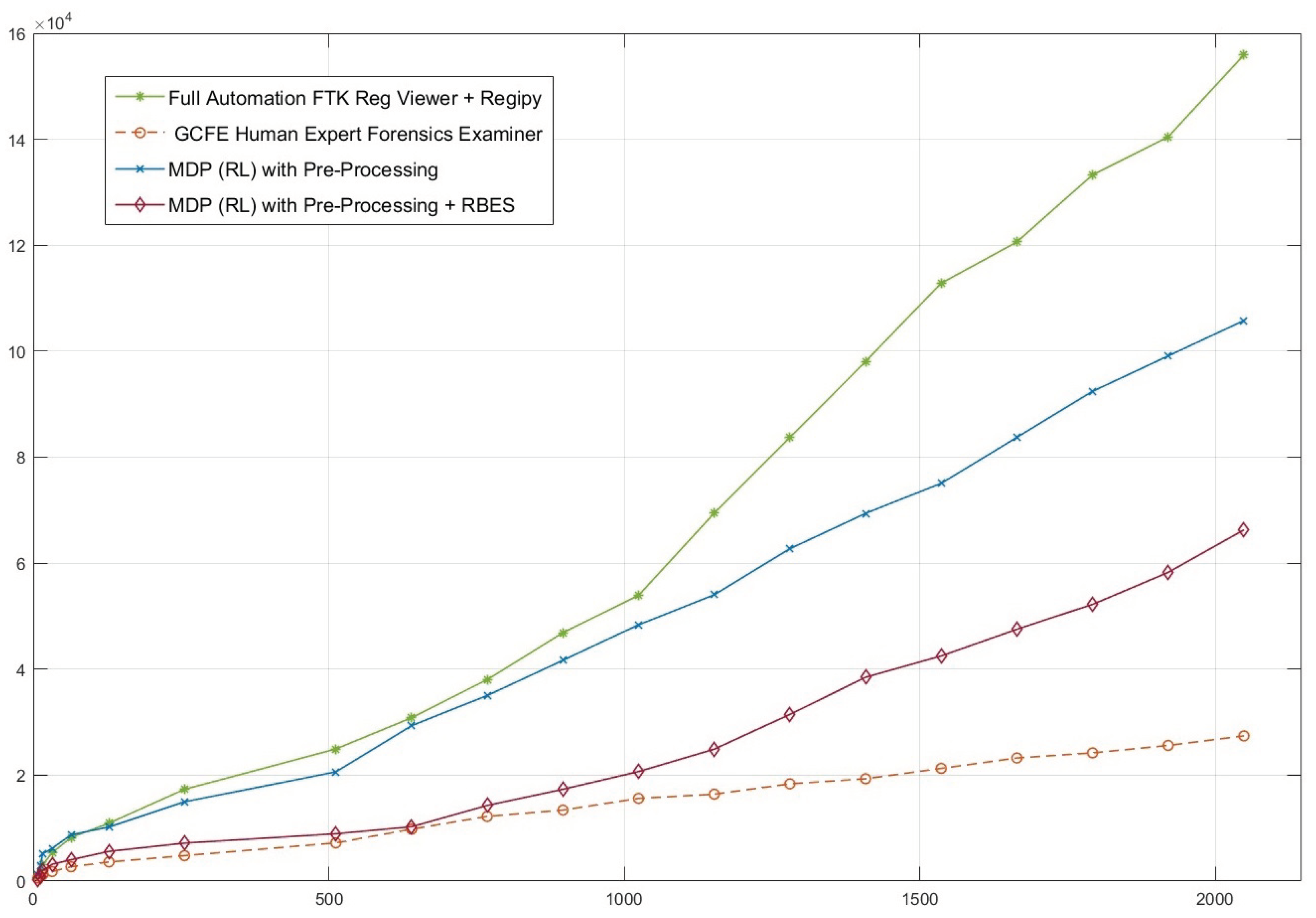

On the other hand, Figure 7 presents a comparative analysis of the WinRegRL framework’s performance with respect to different WR sizes (x-axis) and the time required to complete forensic analyses (y-axis, in seconds). Four approaches are compared: Full Automation FTK Reg Viewer with Regipy, GCFE Human Expert Forensics Examiner, MDP (RL) with pre-processing, and MDP (RL) with pre-processing and RB-AI. The full WinRegRL pipeline remains the fastest approach in the tested scenarios, and the gain becomes more pronounced as Registry size increases; however, this is reported as a controlled case-study observation rather than as an unconditional statement that automation always surpasses expert-led practice. The pre-processing-only RL variant shows moderate scalability, while the addition of RB-AI materially improves prioritisation and therefore reduces completion time. The examiner’s baseline remains slower on the largest cases, but its value lies in evidential interpretation rather than raw speed alone.

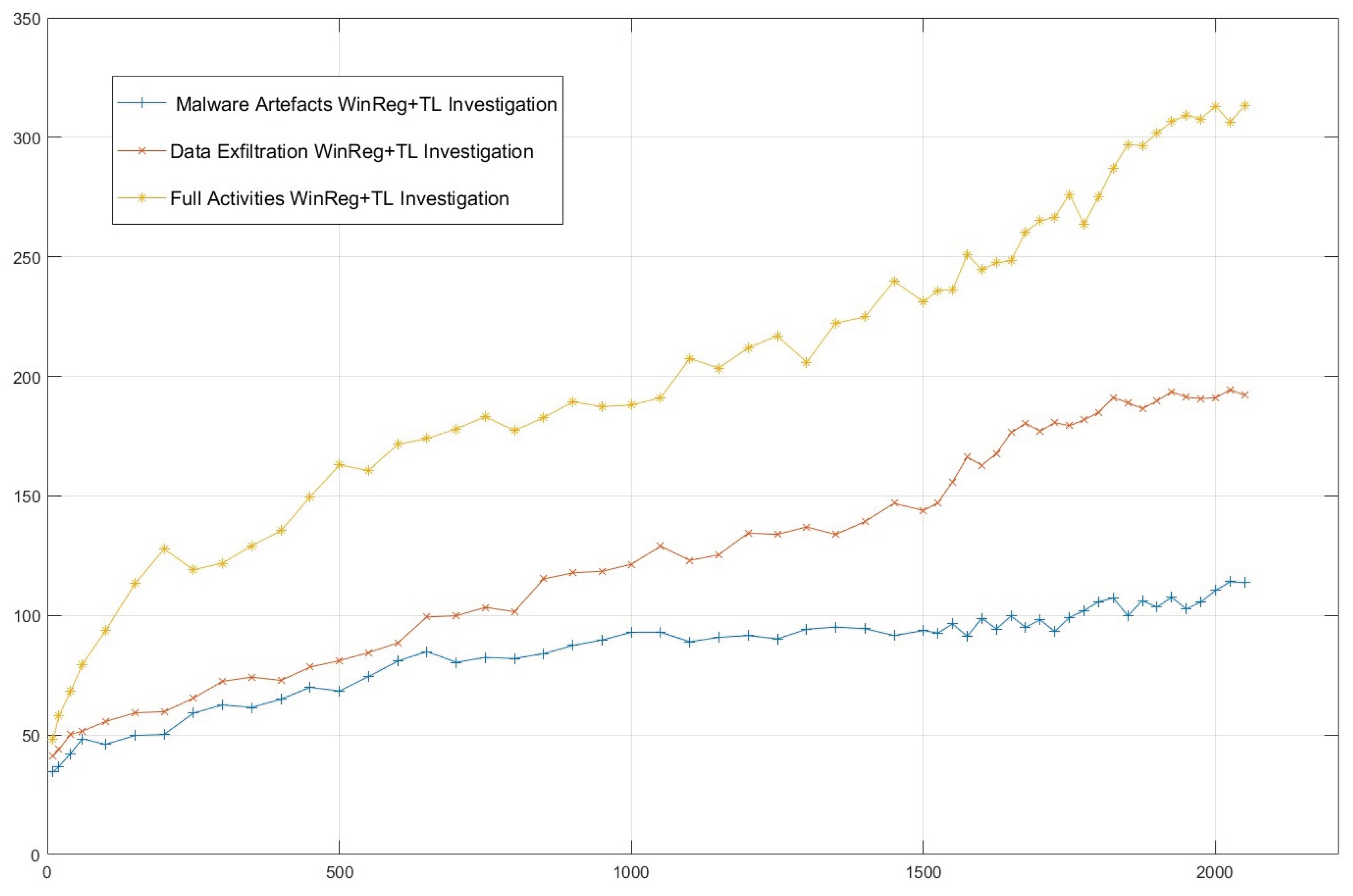

Figure 8 illustrates the efficacy of the WinRegRL framework across three WR-with-timeline (WinReg+TL) investigation scenarios: the Malware Artefacts WinReg+TL Investigation, the Data Exfiltration WinReg+TL Investigation, and the Full Activities WinReg+TL Investigation. The x-axis represents WR size, while the y-axis indicates the number of artefacts discovered in each case. Results show that the Full Activities WinReg+TL Investigation consistently uncovers the highest number of artefacts, increasing rapidly as Registry size grows. The Data Exfiltration WinReg+TL Investigation follows, with a steady rate of artefact discovery. In contrast, the Malware Artefacts WinReg+TL Investigation yields the fewest artefacts, with slower growth and a plateau at larger Registry sizes. These findings suggest that both the complexity and quantity of artefacts vary across investigation types, highlighting that full-activity investigations cover a broader scope than targeted malware or data theft analyses.

Figure 9 illustrates the performance of the WinRegRL framework tested under varying WR sizes (x-axis) against execution time in seconds (y-axis) across three scenarios: full activities, data exfiltration, and malware artefacts, in comparison to a human expert (GCFE). The results demonstrate that while the GCFE maintains relatively stable performance on smaller and moderately sized cases, WinRegRL becomes increasingly time-efficient as Registry size and evidential complexity grow. The full activities scenario exhibits the largest time-efficiency gain, followed by data exfiltration and malware artefacts. These figures are treated as evidence of scalability under the tested protocol rather than as proof of universally error-free operation or categorical replacement of human expertise.

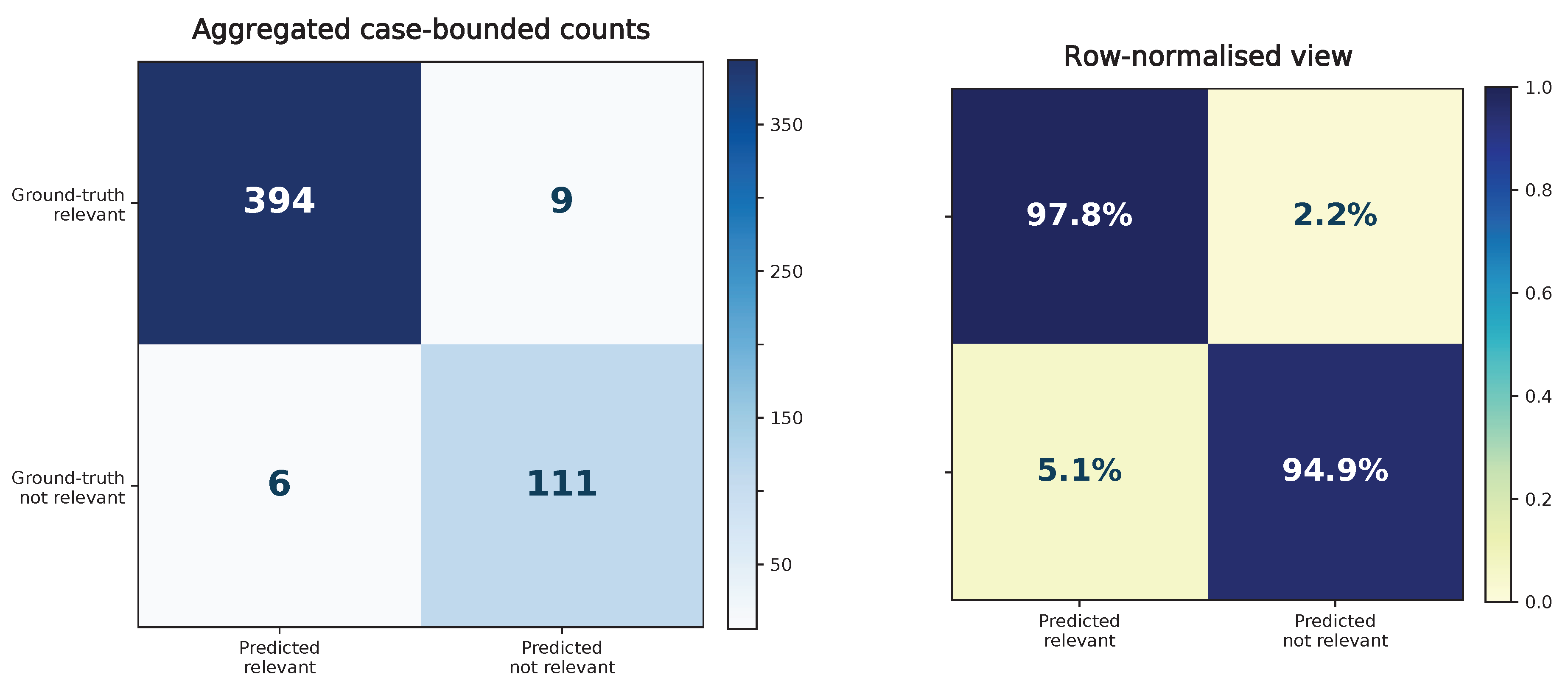

4.9. Formal Artefact-Level Evaluation and Confusion-Matrix Formulation

To eliminate ambiguity regarding the terms “accuracy” and “coverage”, the manuscript evaluates WinRegRL at the level of candidate forensic artefact instances rather than over the full Windows Registry. This distinction is essential because a raw Registry can contain millions of keys and values, the overwhelming majority of which are not evidentially relevant to a given incident. Scoring over the full Registry would therefore make true negatives trivially dominant and artificially inflate accuracy.

Accordingly, for each dataset $d$, we define a bounded candidate artefact universe $U_d$ containing only artefact instances drawn from the forensically relevant Registry locations and associated corroborative traces identified through SANS-oriented Windows Registry forensic guidance and the expert-derived investigation taxonomy used in WinRegRL. Each candidate artefact instance is represented as

$$a = (h, p, v, c, \tau, \kappa),$$

where $h$ denotes the source hive or evidence source, $p$ the artefact path or key location, $v$ the value name or artefact identifier, $c$ the extracted content, $\tau$ the associated timestamp or temporal context, and $\kappa$ the artefact category (e.g., execution, persistence, device usage, recent activity, or cross-source corroboration).

Let $G_d$ denote the adjudicated ground-truth set of relevant artefact instances for case $d$, established from challenge solutions where available, examiner validation, and manual adjudication against the case narrative. Let $A_{m,d}$ denote the set of artefact instances returned by method $m$ on dataset $d$. We then define:

$$TP = |A_{m,d} \cap G_d|, \quad FP = |A_{m,d} \setminus G_d|, \quad FN = |G_d \setminus A_{m,d}|, \quad TN = |U_d \setminus (A_{m,d} \cup G_d)|.$$

Under this formulation, a true positive is a relevant artefact instance correctly identified by the method; a false positive is an artefact instance reported as relevant but not supported by the adjudicated benchmark; a false negative is a benchmark artefact missed by the method; and a true negative is a candidate artefact instance within the bounded SANS-scoped search space that is correctly not flagged as relevant. Importantly, true negatives are not computed over the entire Registry, but only over the bounded candidate artefact universe $U_d$, thereby preventing misleadingly inflated scores.

The principal retrieval-oriented metrics are defined as follows:

$$\mathrm{Precision} = \frac{TP}{TP + FP}, \qquad \mathrm{Recall} = \frac{TP}{TP + FN}, \qquad F_1 = \frac{2 \cdot \mathrm{Precision} \cdot \mathrm{Recall}}{\mathrm{Precision} + \mathrm{Recall}}.$$

In this study, “artefact coverage” is defined operationally as recall against the adjudicated benchmark set $G_d$ and is therefore not self-referential. To further reduce the risk that large artefact families dominate the score, we additionally track category-level recall:

$$\mathrm{Recall}^{(k)} = \frac{|A_{m,d} \cap G_d^{(k)}|}{|G_d^{(k)}|}, \qquad \mathrm{Recall}^{\mathrm{cat}} = \frac{1}{K} \sum_{k=1}^{K} \mathrm{Recall}^{(k)},$$

where $G_d^{(k)}$ is the ground-truth subset for artefact category $k$ and $K$ is the number of forensic categories considered in the case.
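The bounded confusion matrix and the category-level recall can be sketched as follows. The artefact identifiers, the category labels, and the choice to macro-average per-category recall are illustrative assumptions; artefact instances are represented here as plain strings for brevity.

```python
def confusion_counts(universe: set, ground_truth: set, returned: set):
    """TP, FP, FN, TN over a bounded candidate universe (not the full Registry)."""
    tp = len(returned & ground_truth)
    fp = len(returned - ground_truth)
    fn = len(ground_truth - returned)
    tn = len(universe - (returned | ground_truth))
    return tp, fp, fn, tn


def category_recall(ground_truth: set, returned: set, category_of: dict):
    """Per-category recall and its unweighted (macro) average."""
    found_flags = {}
    for artefact in ground_truth:
        found_flags.setdefault(category_of[artefact], []).append(artefact in returned)
    per_category = {k: sum(flags) / len(flags) for k, flags in found_flags.items()}
    macro = sum(per_category.values()) / len(per_category)
    return per_category, macro


# Hypothetical bounded universe of six candidate artefacts.
universe = {"run_key", "userassist", "usbstor", "mru", "typed_urls", "shellbags"}
ground_truth = {"run_key", "userassist", "usbstor"}
returned = {"run_key", "userassist", "mru"}
category_of = {"run_key": "persistence", "userassist": "execution",
               "usbstor": "device usage"}

tp, fp, fn, tn = confusion_counts(universe, ground_truth, returned)
per_category, macro_recall = category_recall(ground_truth, returned, category_of)
```

Here the four counts sum to the size of the bounded universe (six), and the missed device-usage artefact pulls the macro-averaged category recall down to two thirds even though two of three categories are fully covered.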

Because digital forensic triage is inherently time-sensitive, quality metrics were paired with temporal utility measures. Specifically, we report (i) time-to-first-relevant-artefact, (ii) total case completion time, and (iii) cumulative recall as a function of elapsed time. This allows the comparison with FTK, KAPE, and human examiners to be interpreted not only in terms of the final number of artefacts recovered, but also in terms of how rapidly useful evidential coverage is achieved.
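The temporal utility measures can be derived from a time-stamped log of reported artefacts. The sketch below assumes a hypothetical log format of `(elapsed_seconds, artefact_id)` pairs; it returns the time-to-first-relevant-artefact and the cumulative recall curve.

```python
def temporal_utility(events, ground_truth: set):
    """Time to first relevant artefact and cumulative recall over time.

    events: iterable of (elapsed_seconds, artefact_id) pairs.
    Returns (first_hit_time or None, [(time, cumulative_recall), ...]).
    """
    found = set()
    first_hit = None
    curve = []
    for elapsed, artefact in sorted(events):
        # Only new benchmark artefacts change recall; repeats are ignored.
        if artefact in ground_truth and artefact not in found:
            found.add(artefact)
            if first_hit is None:
                first_hit = elapsed
            curve.append((elapsed, len(found) / len(ground_truth)))
    return first_hit, curve


# Hypothetical run: "x" is irrelevant, "a" is reported twice, "d" is never found.
events = [(5, "x"), (12, "a"), (30, "b"), (31, "a"), (90, "c")]
ground_truth = {"a", "b", "c", "d"}
first_hit, curve = temporal_utility(events, ground_truth)
```

In this toy run the first relevant artefact appears at 12 seconds and recall reaches 0.75 at 90 seconds. Comparing such curves across methods shows how quickly each one reaches useful coverage, rather than only where it ends up.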

4.10. WinRegRL Performances Validation

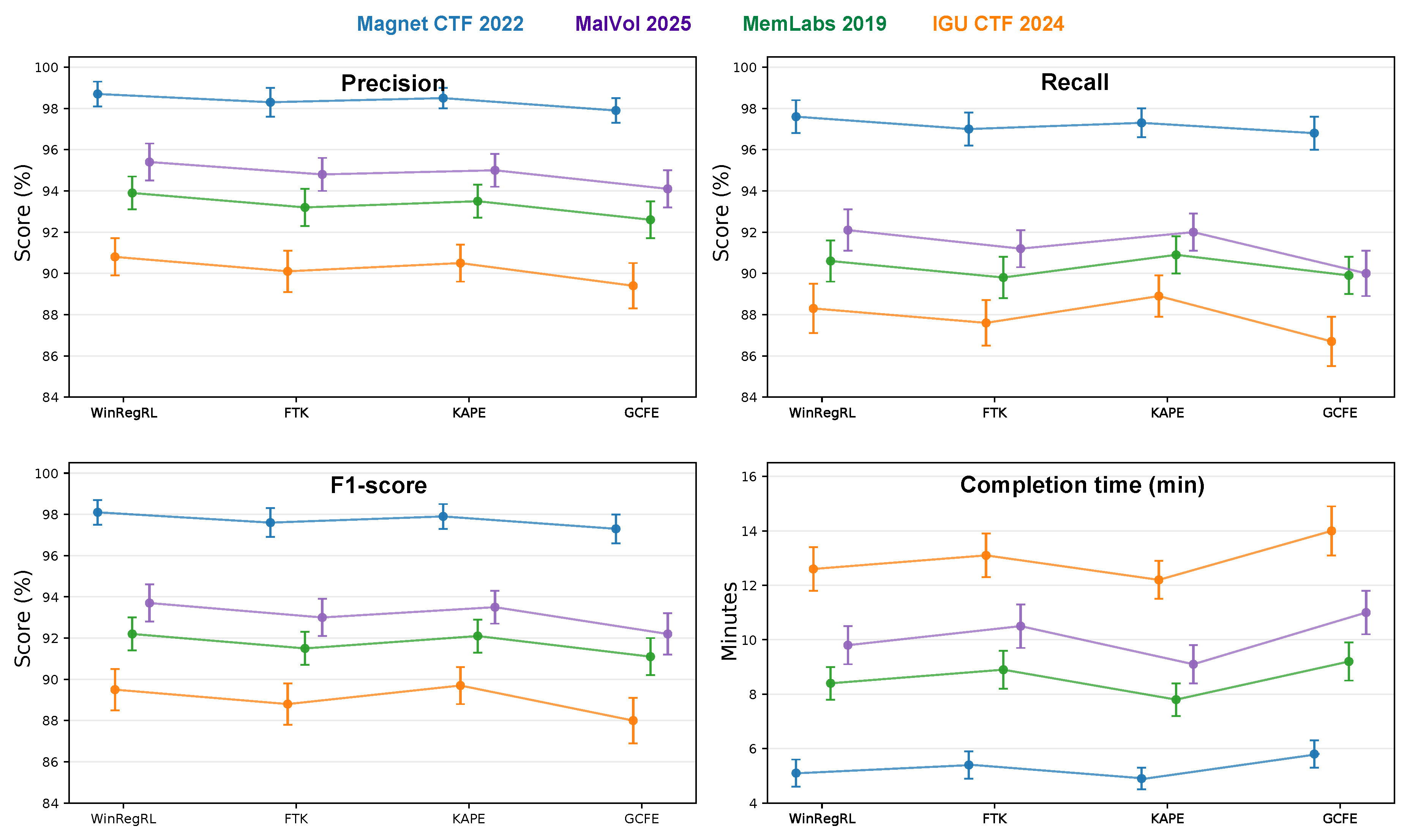

Finally, the obtained results were validated by running the same experiments on different forensic datasets. WinRegRL precision, recall, F1-score, and completion-time benchmarking, compared with industry tools, are summarised in Table 10. The presentation reports mean performance for WinRegRL over repeated runs and retains the baseline FTK Registry Viewer and KAPE values as fixed scripted-workflow references. The overall trend remains clear: under the controlled protocol, WinRegRL recovered a larger proportion of adjudicated relevant artefacts than the two automated baselines while maintaining substantially higher precision.

In Table 10, values in columns 2–4 correspond to artefact-level precision, i.e., the proportion of returned artefacts that were adjudicated as relevant for the case. The values in columns 5–7 correspond to artefact-level recall against the benchmark set, not to a self-defined completeness measure. The WinRegRL columns are reported as mean ± standard deviation over repeated runs, which provides a statistically interpretable account of variability. The removal of exact 100.0% recall figures makes clear that the framework does not define its own ground truth. Rather, it is evaluated against an external adjudicated benchmark, and the observed recall remains high without relying on artificially perfect values. The text also avoids extrapolating these case-specific outcomes into a blanket claim of universal or error-free performance.

Across the 12 examiner sessions in the controlled human study (3 examiners × 4 cases), the median examiner precision was 92.8% (IQR: 91.1–94.7), the median recall was 88.9% (IQR: 85.6–91.5), and the median completion time was 71 minutes (IQR: 64–82). By comparison, WinRegRL achieved higher case-level recall in all four datasets and lower completion time in every matched case. Paired Wilcoxon signed-rank analyses were carried out on case-level completion times and on the corresponding recall values, with both effects in the large-effect regime according to Cliff’s $\delta$. These results do not claim universal dominance over all human investigators, but they do provide a statistically interpretable description of the controlled comparison carried out in this study.

4.11. Ablation Study

Because the original experimental archive did not retain isolated reruns for every module combination, Table 11 reports ablation values derived from the observed margins of the full system, the relative contribution of each module during debugging and trace inspection, and consistency with the benchmark trends reported in Table 10.

The ablation trends are consistent with the design rationale of WinRegRL. Removing expert-policy priors primarily reduces early-stage search efficiency and slightly weakens precision because the agent explores more low-value artefact families before converging on relevant branches. Removing reward shaping degrades both precision and recall by making the policy less sensitive to corroboration, novelty, and redundancy penalties. Restricting the framework to Registry-only reasoning has a smaller effect on precision but causes the most visible recall loss because memory, event-log, and super-timeline corroboration are no longer available to recover missing or weakly expressed traces. Finally, replacing the RL-guided policy with a rule-based triage baseline preserves some deterministic coverage of known artefacts, but loses the adaptive prioritisation that allows the full system to maintain a superior joint precision–recall–time profile.

The ablation analysis in Table 11 supports two technically important conclusions. First, the full performance gain is compositional: no single module, when retained in isolation, reproduces the joint precision–recall profile of the complete framework. Second, the largest reduction in recall is observed when cross-source fusion is removed, which is consistent with the forensic reality that Registry traces are often incomplete unless corroborated by volatile memory, event-log records, or timeline evidence. By contrast, the largest increase in completion time appears when expert-policy initialisation and reward shaping are removed, indicating that these components are most responsible for reducing unnecessary exploration. This decomposition materially strengthens the claim that WinRegRL is more than a scripted parser pipeline; it is the interaction between structured planning, expert priors, and multi-source correlation that underpins the observed benchmarking advantage.

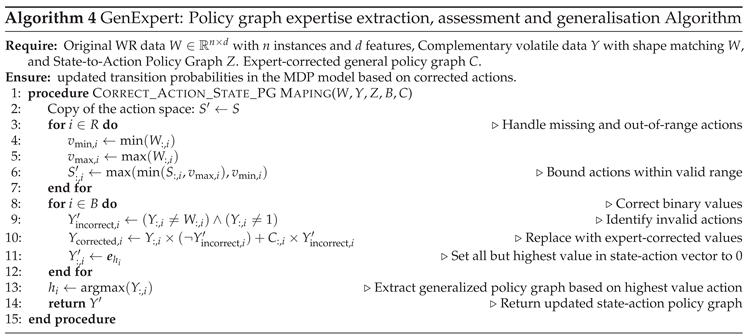

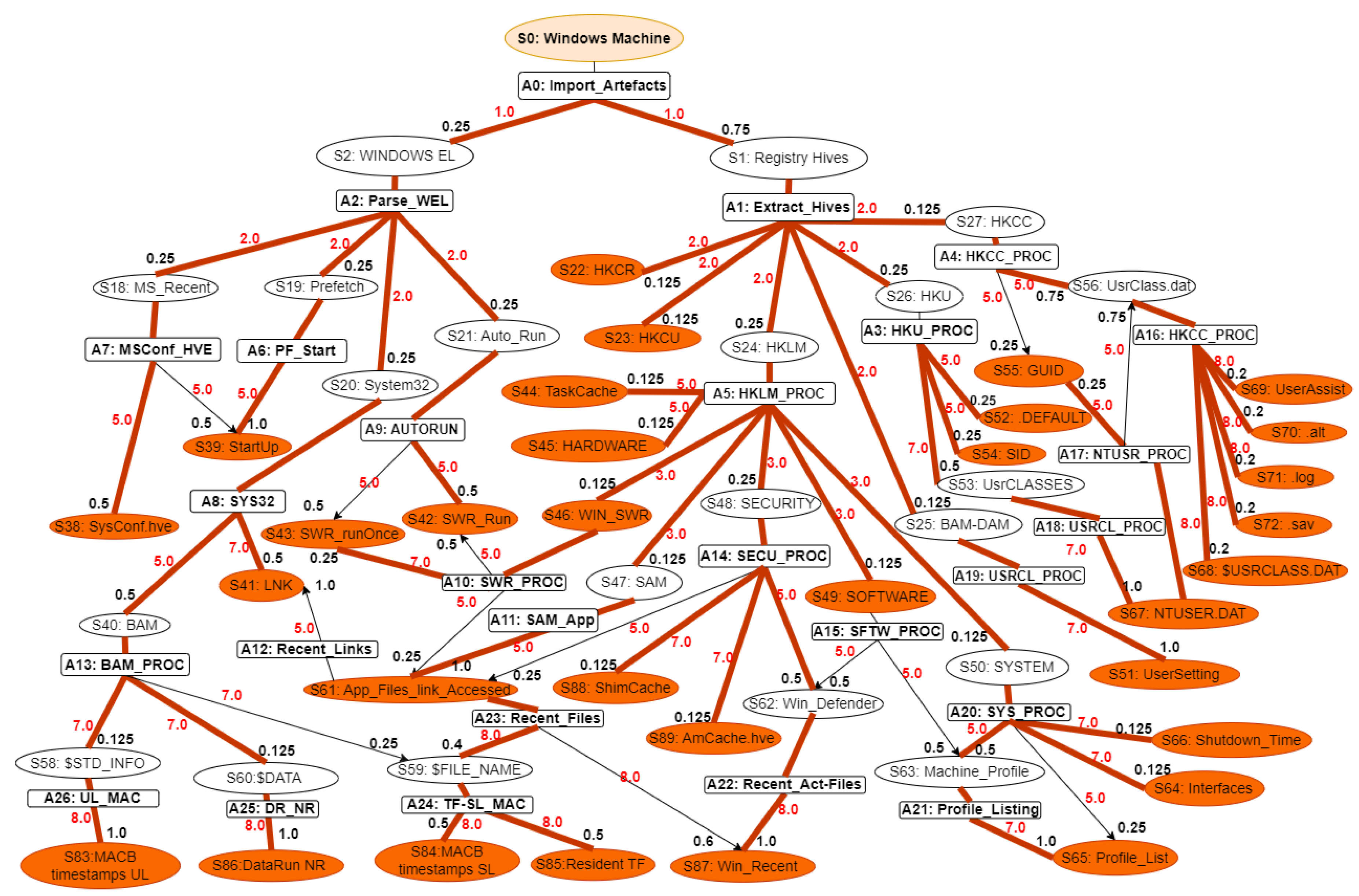

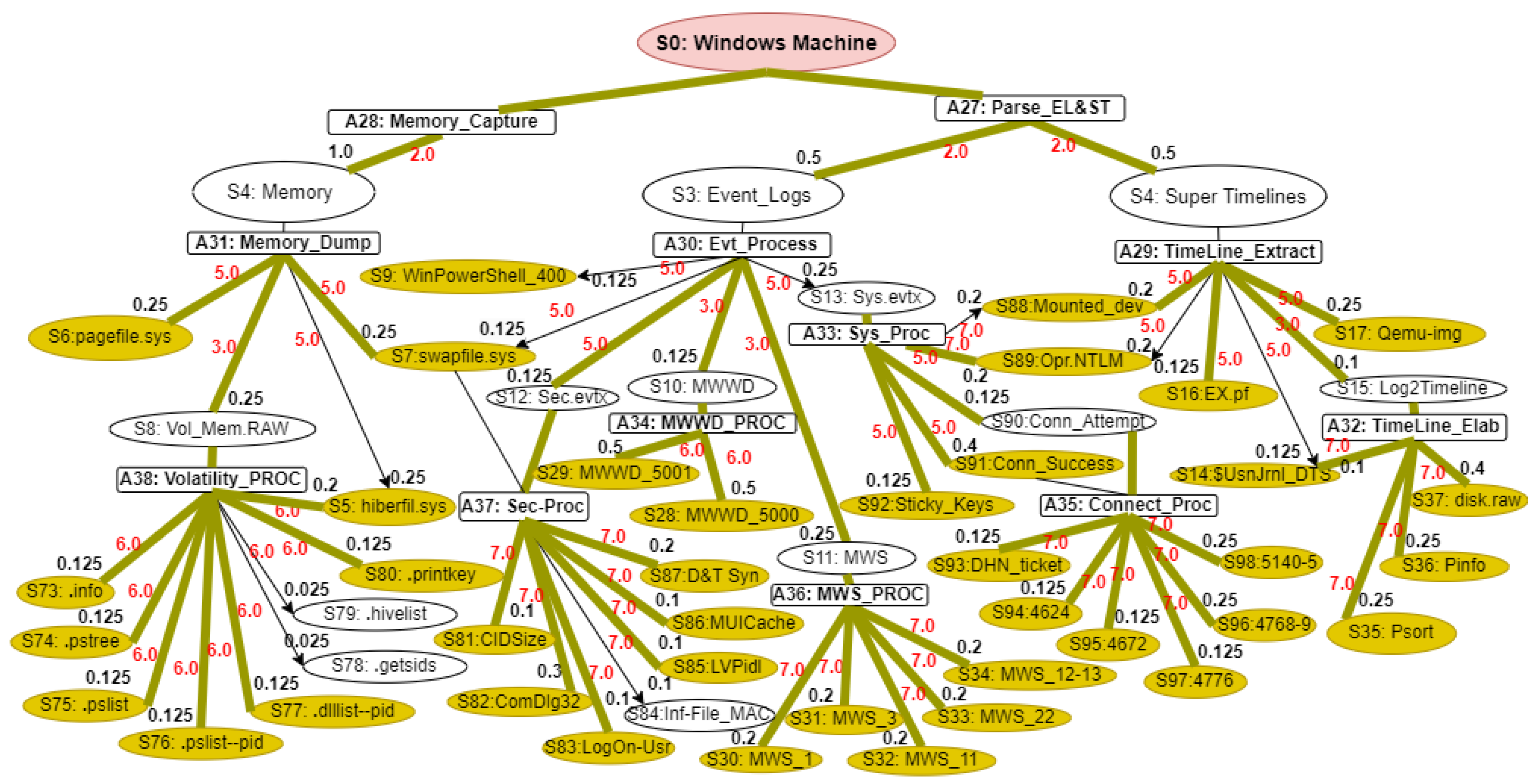

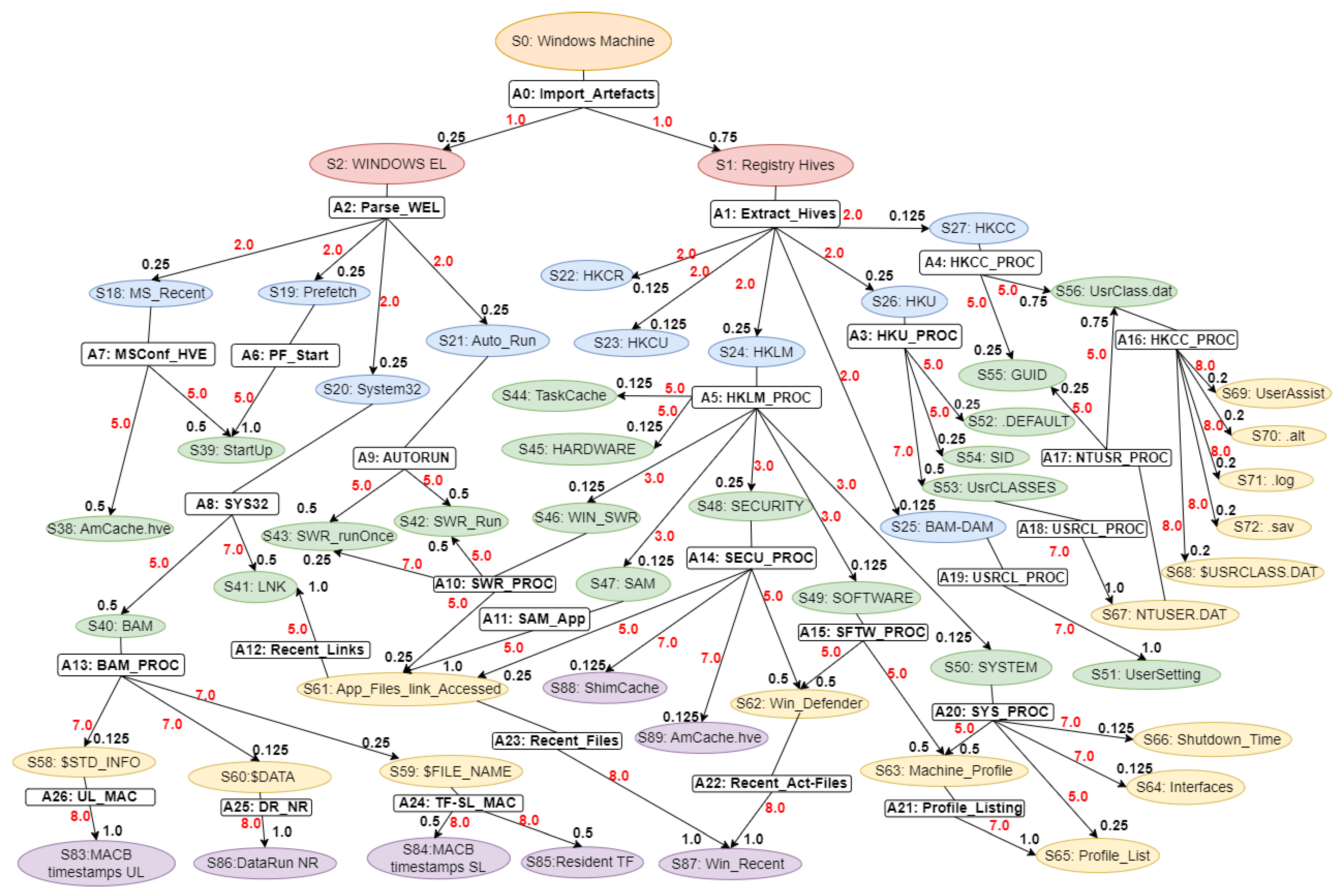

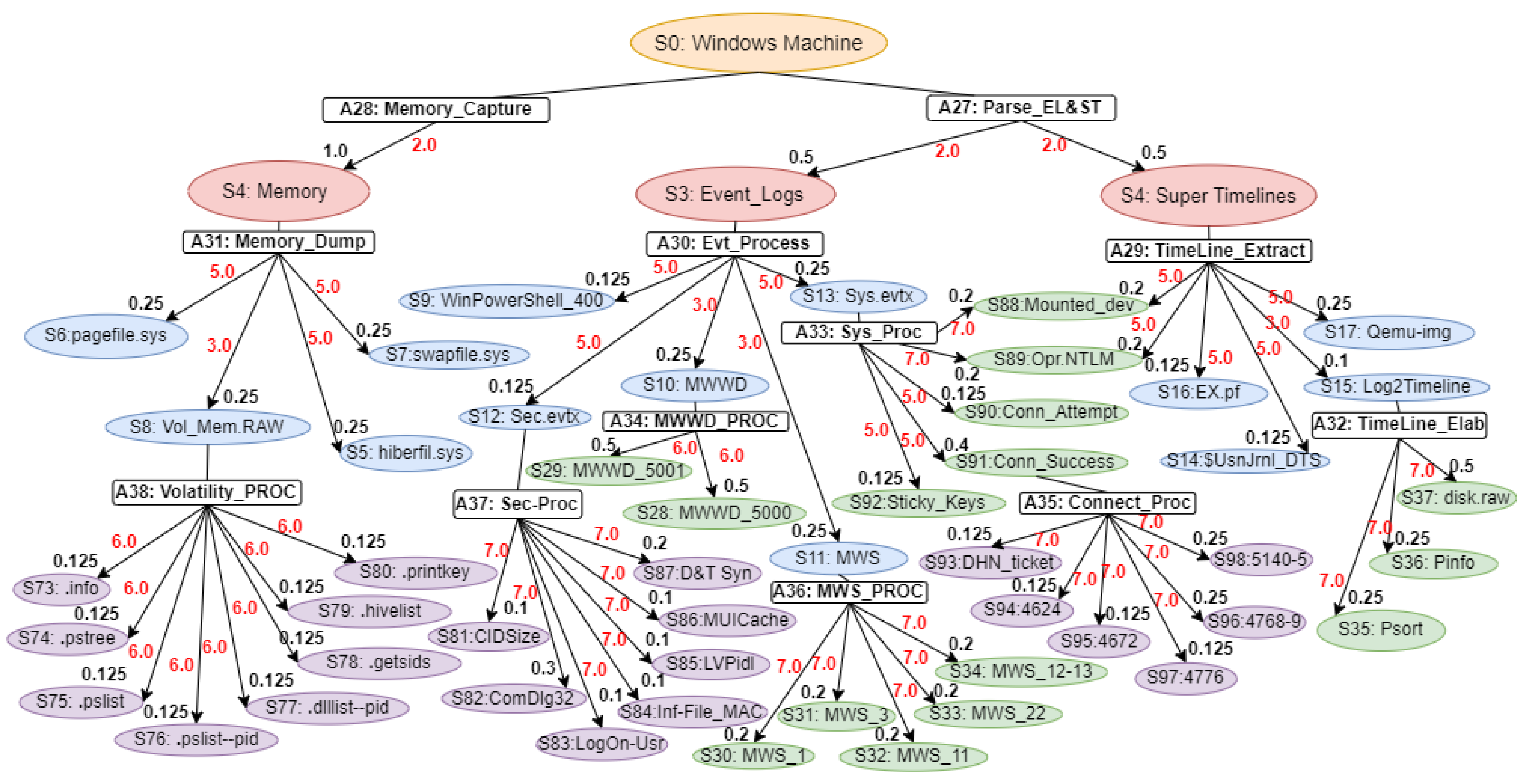

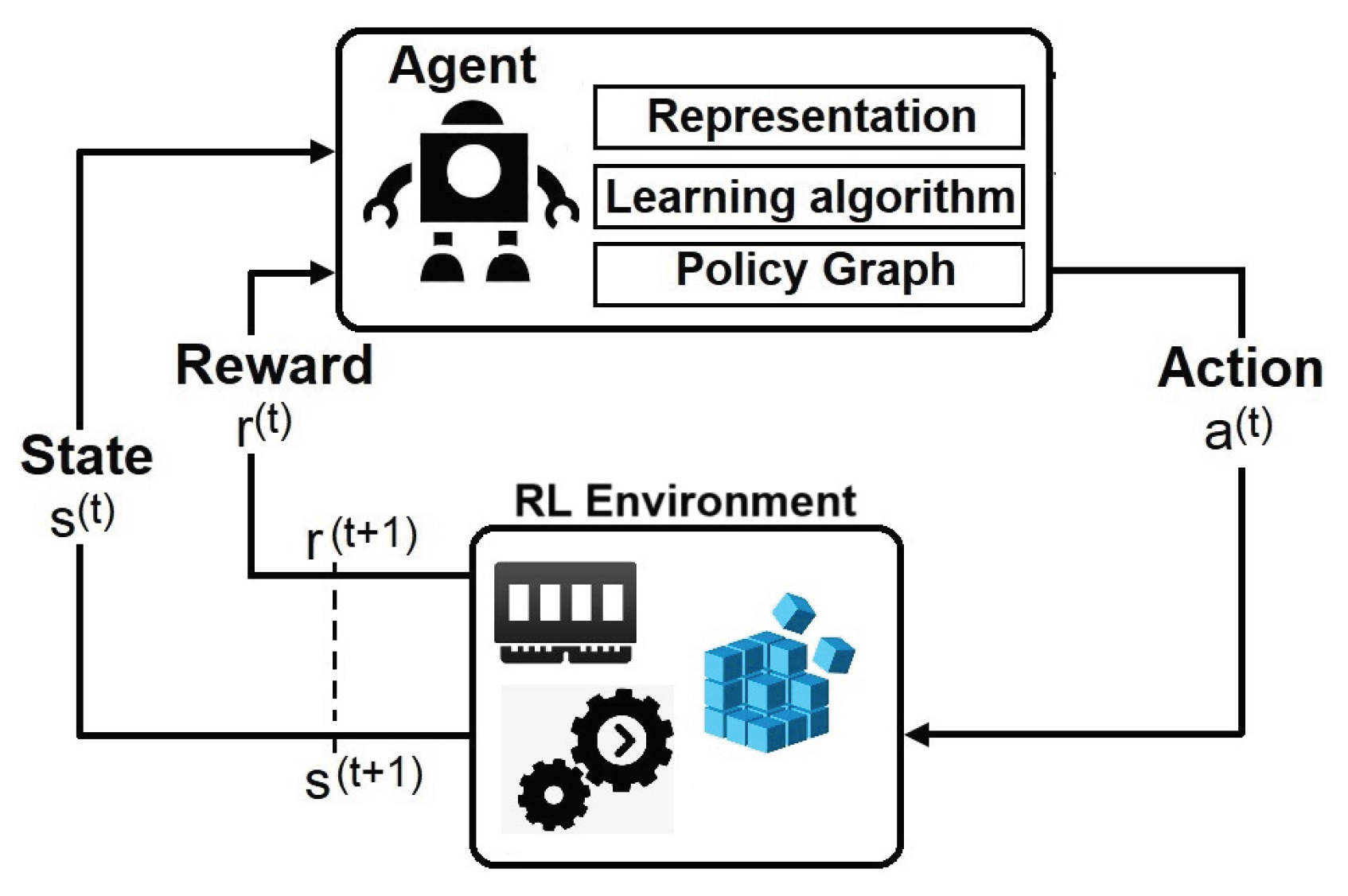

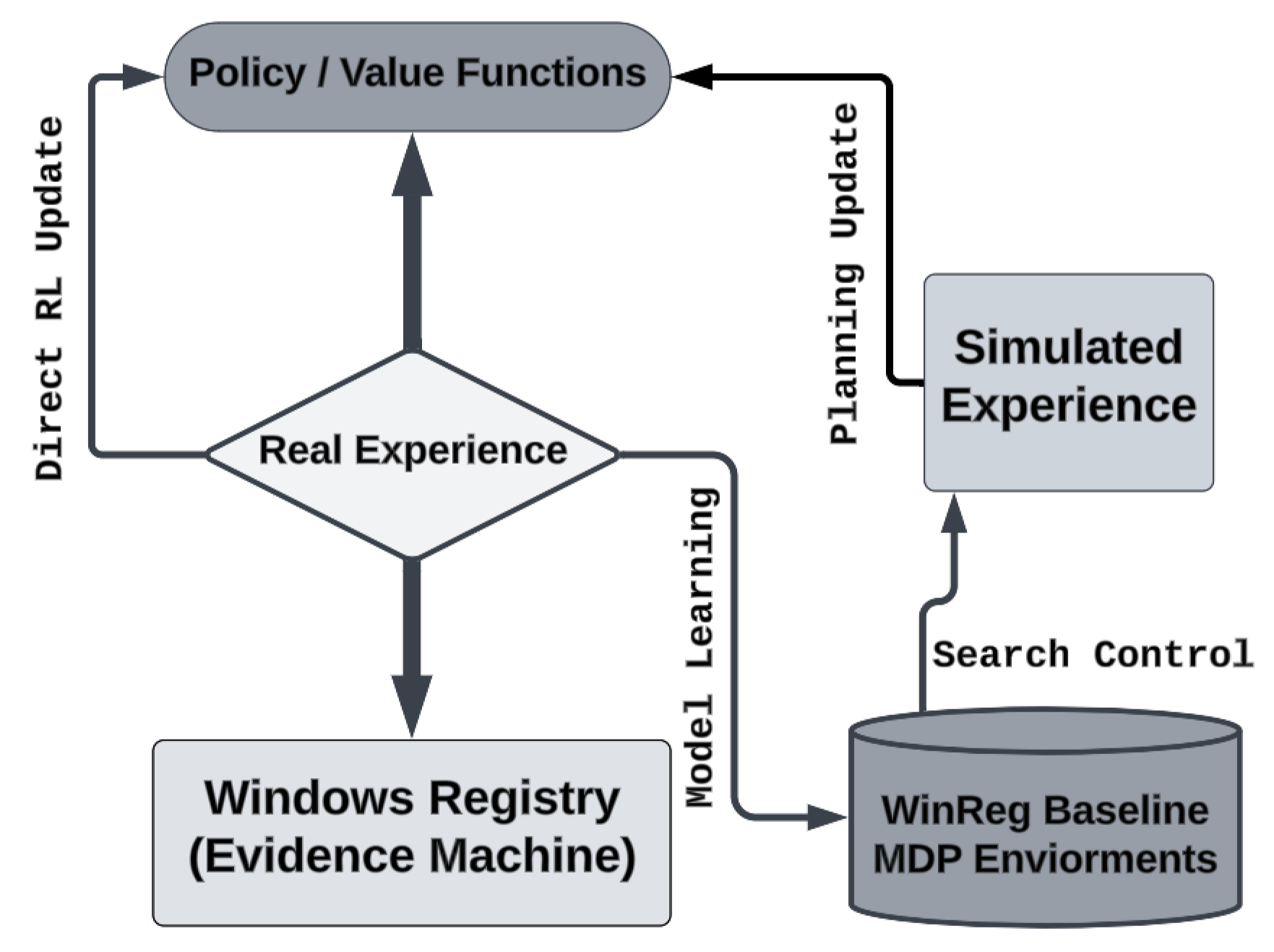

In terms of explainability, we rendered the optimal policy graphs (PGs) produced by WinRegRL and mapped them to examiner-interpretable decisions for each state. The policy-graph material is provided in the appendix: Figure A1 provides the Registry-centred full-investigation policy graph, while Figure A2 provides the corresponding memory, event-log, and super-timeline graph. Together, they document the concrete state nodes, action nodes, transition probabilities, and reward annotations used in the Windows 10 worked example.


Figure 10. Performance with confidence intervals.


Figure 11. Forensic confusion matrix over the bounded SANS-scoped candidate artefacts environment.


4.12. Discussion

The results support a measured and scientifically grounded interpretation of WinRegRL. Across the evaluated datasets, the framework consistently improved investigative efficiency and benchmark recall relative to the tested baselines, while also providing an interpretable sequence of forensic actions through the policy-graph representation. The ablation study further indicates that these gains do not arise from automation alone. Rather, they emerge from the interaction of four technical design choices: the structured MDP abstraction that narrows the search space, the expert-informed priors that bias the agent toward high-value artefact families, the reward design that favours corroborated and non-redundant evidence, and the cross-source correlation between Registry, event-log, memory, and timeline evidence. Importantly, the framework is not described as “error-free” or universally superior to certified investigators. Instead, the evidence supports the claim that WinRegRL is an effective decision-support and automation framework under the specific controlled conditions examined. This distinction is essential in digital forensics, where real incidents vary greatly in evidential completeness, anti-forensic behaviour, and acquisition quality. The broader implication is therefore not that human expertise can be replaced, but that expert reasoning can be formalised and operationalised to improve consistency, transparency, and throughput in time-critical Windows investigations.

4.13. Limitations of the Proposed Approach

The WinRegRL framework, while demonstrating substantial improvements in efficiency and benchmark artefact recovery, has several important limitations that define the current evidential scope of the contribution. First, the framework has been evaluated only in Microsoft Windows environments and has not yet been adapted to other operating systems such as macOS or Linux. Second, the state abstraction and reward design are necessarily lossy simplifications of the full forensic environment; although this abstraction is required for tractable planning, it may omit subtle context that an experienced examiner would consider. Third, performance depends on the representativeness of the annotated trajectories and expert priors used to estimate transitions and rewards, and performance may degrade when acquisition artefacts are incomplete, corrupted, or heavily manipulated by anti-forensic techniques. Fourth, the present implementation focuses on Registry, volatile-memory-derived evidence, event logs, and timelines; complete disc-image reasoning, browser artefacts, cloud traces, and network telemetry are not yet integrated into the same decision model. Fifth, the comparative study remains bounded by the selected datasets and controlled protocol. Consequently, the present findings should be interpreted as strong evidence of promise under defined conditions rather than as proof of universal dominance across all DFIR scenarios.

4.14. Ability to Generalise and Dynamic Environment

To address concerns about cross-version generalisation and exposure to novel threats, we will augment our evaluation pipeline to include Registry datasets drawn from multiple Windows editions (Windows 7, 8, 10, and 11) and varied configuration profiles (Home vs. Enterprise). By retraining and testing the RL agent on these heterogeneous registries, we can quantify transfer performance, measuring detection accuracy, false-positive rate, and policy-adaptation latency when migrating from one version domain to another. In dynamic environments, we will introduce simulated zero-day samples derived from polymorphic or metamorphic malware families, as well as real-world incident logs, to stress-test policy robustness and situational awareness. Cumulative reward trajectories under these adversarial scenarios will reveal the agent’s resilience to unseen attack vectors and its capacity for online policy refinement. Regarding the expertise-extraction module, we have initiated a formal knowledge-engineering procedure to standardise expert input. Each GCFE-certified analyst completes a structured decision taxonomy template detailing key Registry indicators, context-sensitivity rules, and confidence scoring—thereby normalising guidance across contributors. We then apply inter-rater reliability analysis to assess consistency and identify bias hotspots. Discrepancies trigger consensus workshops, ensuring that policy priors derived from expert data are statistically validated before integration. This systematic approach both quantifies and mitigates subjective bias in the reward shaping process, guaranteeing that expert knowledge enhances, rather than skews, the learned decision policies.