Submitted: 05 January 2025

Posted: 06 January 2025

Abstract

Keywords:

1. Introduction

2. Methodology

2.1. Datasets

2.2. Layer Pruning Strategies

2.2.1. Activation-Based Pruning

2.2.2. Mutual Information-Based Pruning

2.2.3. Gradient-Based Pruning

2.2.4. Weight-Based Pruning

2.2.5. Attention-Based Pruning

2.2.6. Strategic Fusion

2.2.7. Random Pruning

2.3. Knowledge Distillation

2.4. Layer Pruning and Model Training

3. Results

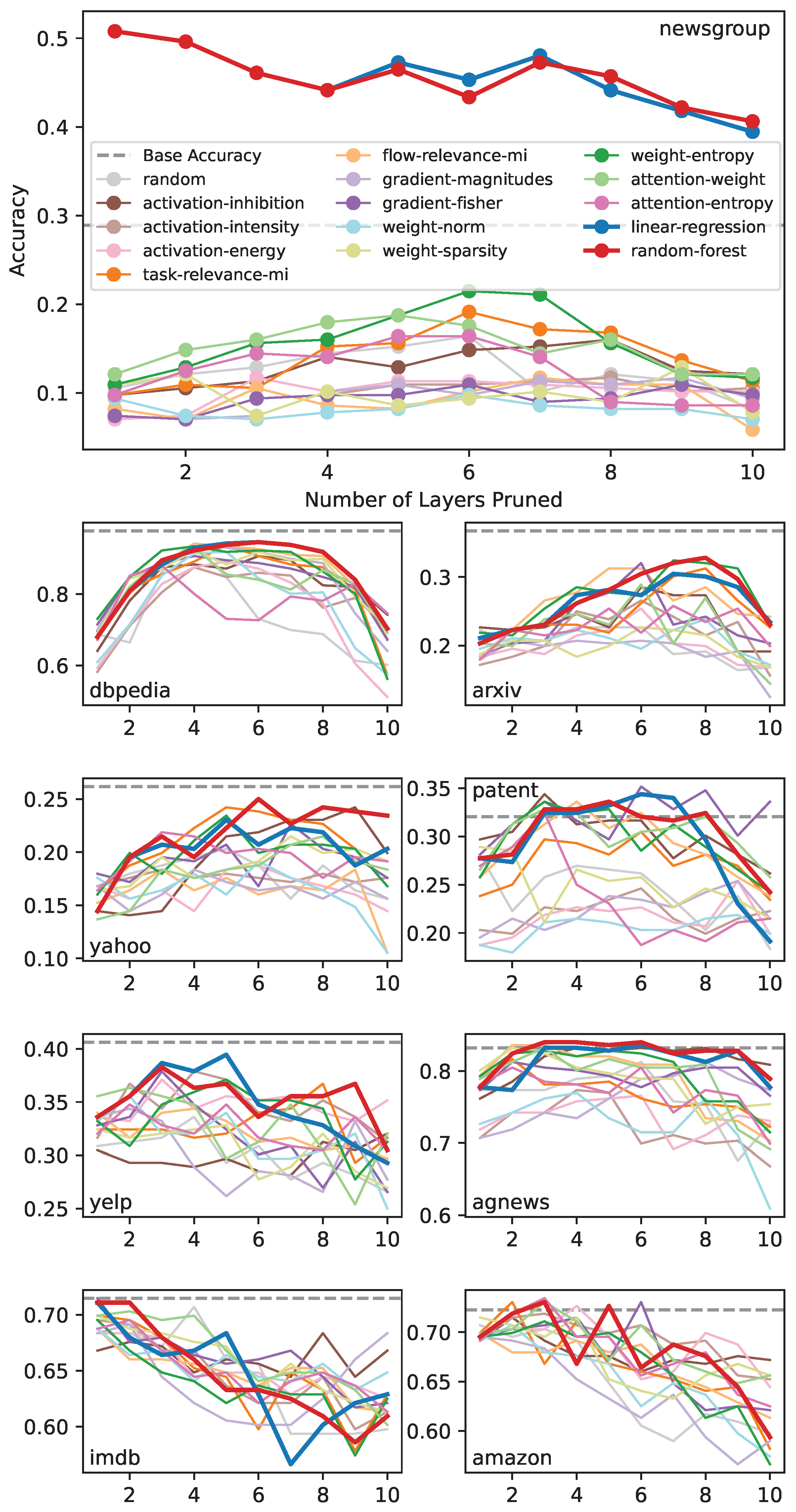

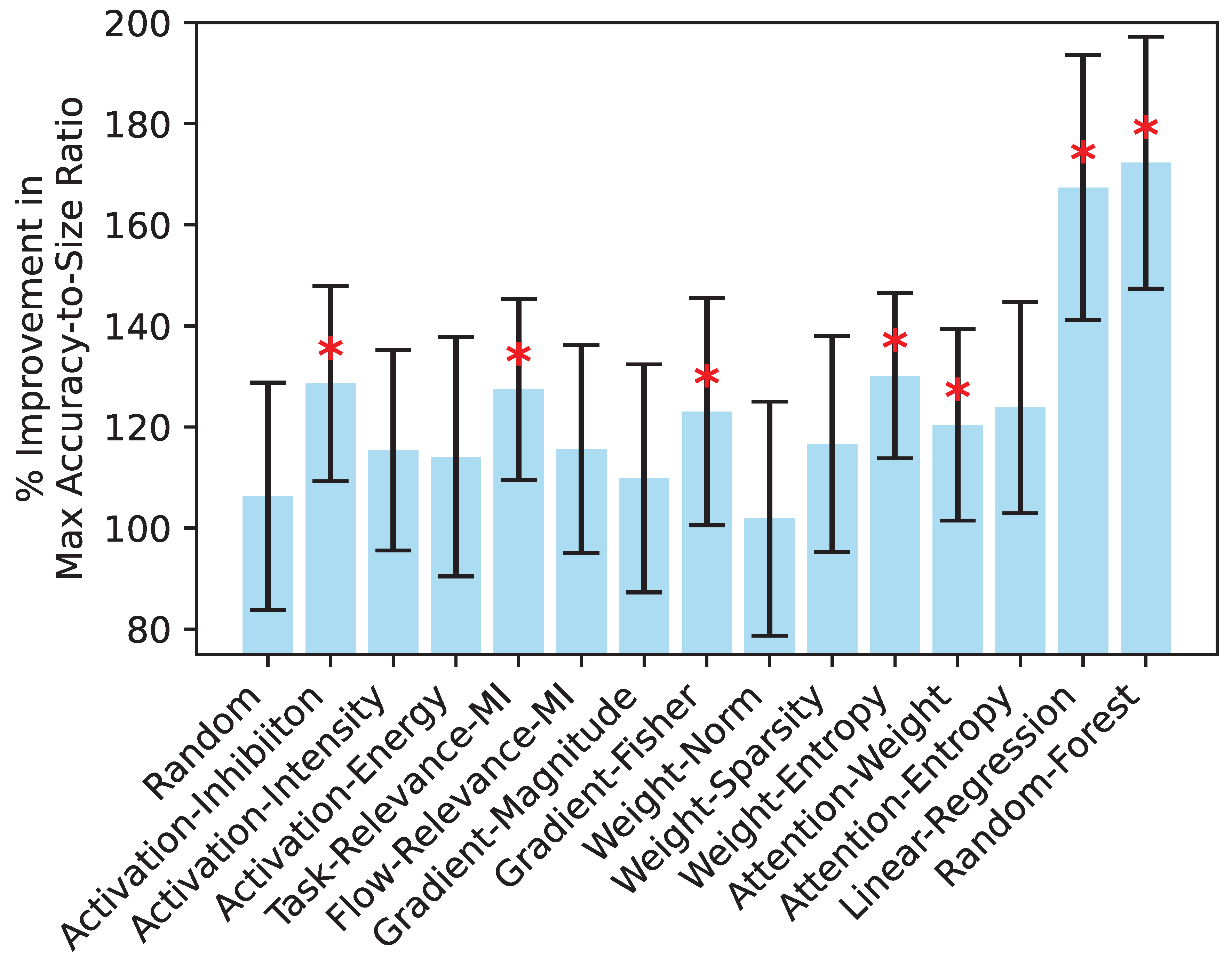

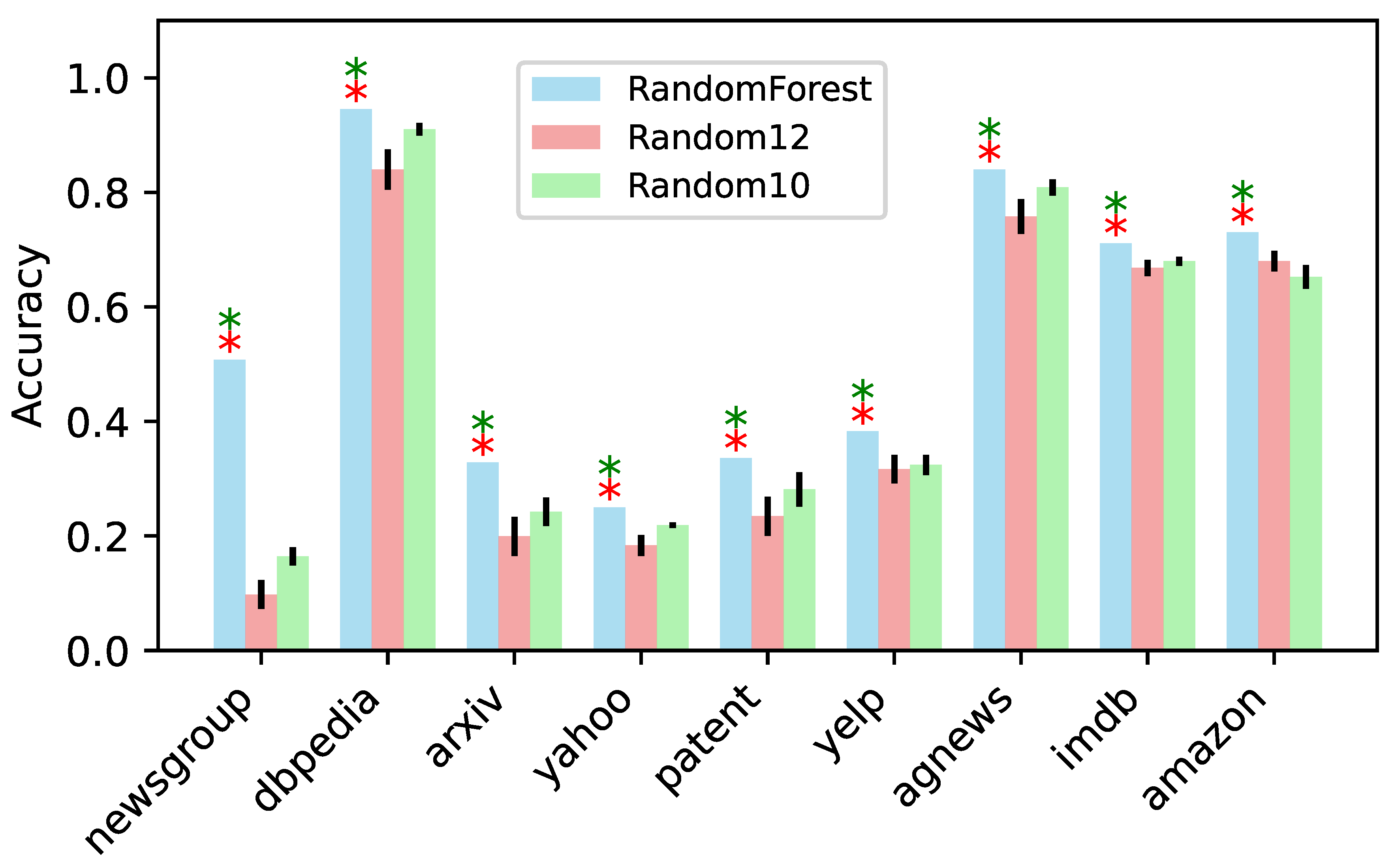

3.1. Strategic Fusion Optimizes Model Compression

| Strategy | newsgroup | dbpedia | arxiv | yahoo | patent | yelp | agnews | imdb | amazon |
|---|---|---|---|---|---|---|---|---|---|
| Baseline (uncompressed) | 0.289 | 0.977 | 0.367 | 0.262 | 0.320 | 0.406 | 0.832 | 0.705 | 0.723 |
| Random | 0.164 | 0.938 | 0.227 | 0.203 | 0.281 | 0.336 | 0.812 | 0.707 | 0.707 |
| Activation-Inhibition | 0.160 | 0.906 | 0.285 | 0.242 | 0.344 | 0.320 | 0.832 | 0.684 | 0.715 |
| Activation-Intensity | 0.117 | 0.875 | 0.266 | 0.184 | 0.246 | 0.379 | 0.773 | 0.695 | 0.719 |
| Activation-Energy | 0.117 | 0.891 | 0.254 | 0.188 | 0.254 | 0.371 | 0.766 | 0.684 | 0.727 |
| Task-Relevance-MI | 0.191 | 0.938 | 0.312 | 0.242 | 0.305 | 0.367 | 0.816 | 0.699 | 0.730 |
| Flow-Relevance-MI | 0.117 | 0.941 | 0.312 | 0.184 | 0.336 | 0.344 | 0.836 | 0.688 | 0.699 |
| Gradient-Magnitudes | 0.117 | 0.930 | 0.207 | 0.184 | 0.254 | 0.344 | 0.812 | 0.691 | 0.695 |
| Gradient-Fisher | 0.109 | 0.906 | 0.320 | 0.227 | 0.352 | 0.379 | 0.812 | 0.715 | 0.730 |
| Weight-Norm | 0.098 | 0.918 | 0.238 | 0.191 | 0.219 | 0.355 | 0.770 | 0.688 | 0.707 |
| Weight-Sparsity | 0.129 | 0.922 | 0.227 | 0.215 | 0.289 | 0.328 | 0.832 | 0.699 | 0.715 |
| Weight-Entropy | 0.215 | 0.934 | 0.324 | 0.234 | 0.336 | 0.371 | 0.828 | 0.695 | 0.711 |
| Attention-Weight | 0.188 | 0.930 | 0.289 | 0.215 | 0.328 | 0.363 | 0.832 | 0.703 | 0.730 |
| Attention-Entropy | 0.164 | 0.879 | 0.258 | 0.219 | 0.324 | 0.348 | 0.805 | 0.695 | 0.734 |
| Linear-Regression | 0.508 | 0.945 | 0.305 | 0.230 | 0.344 | 0.395 | 0.836 | 0.711 | 0.730 |
| Random-Forest | 0.508 | 0.945 | 0.328 | 0.250 | 0.336 | 0.383 | 0.840 | 0.711 | 0.730 |

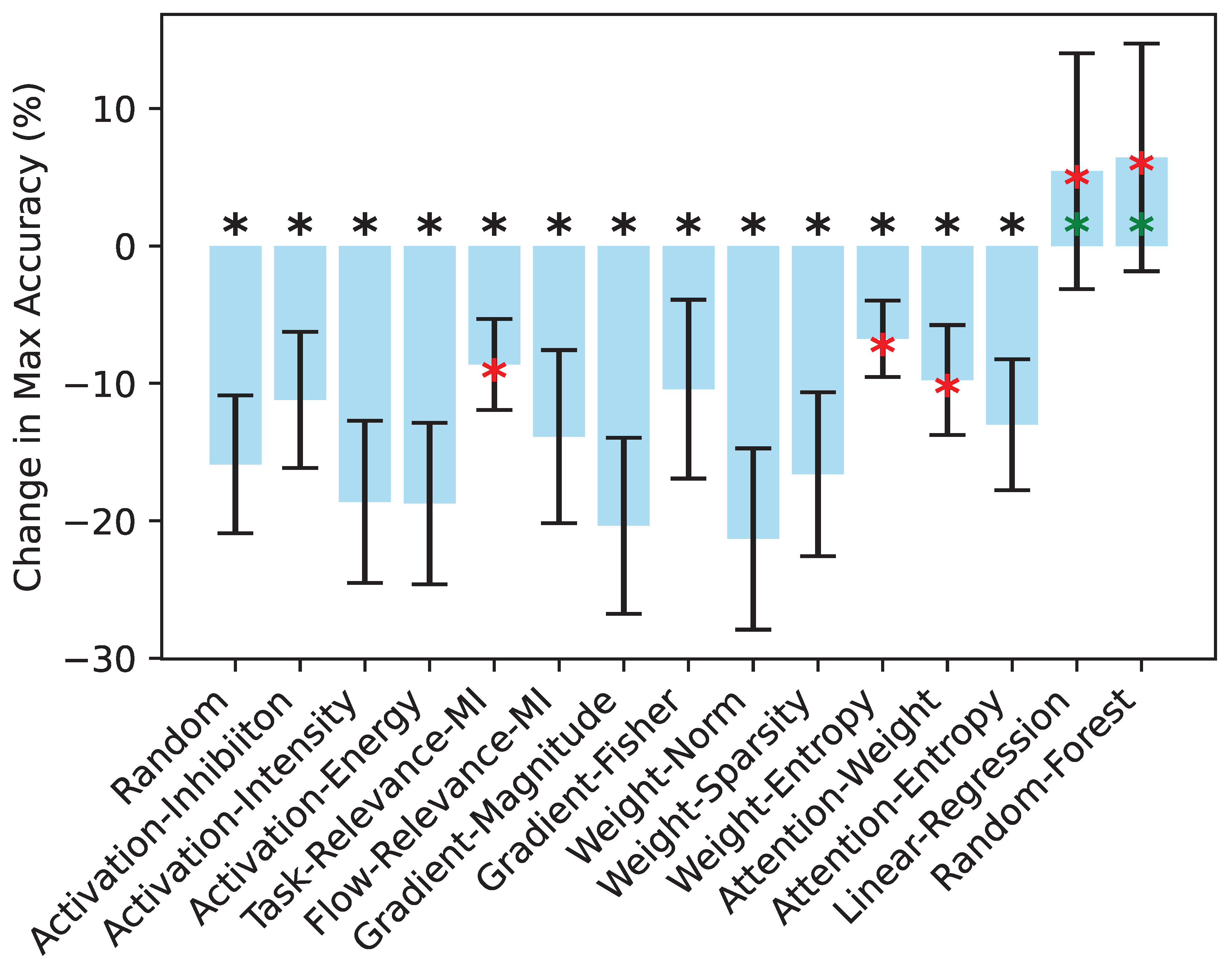

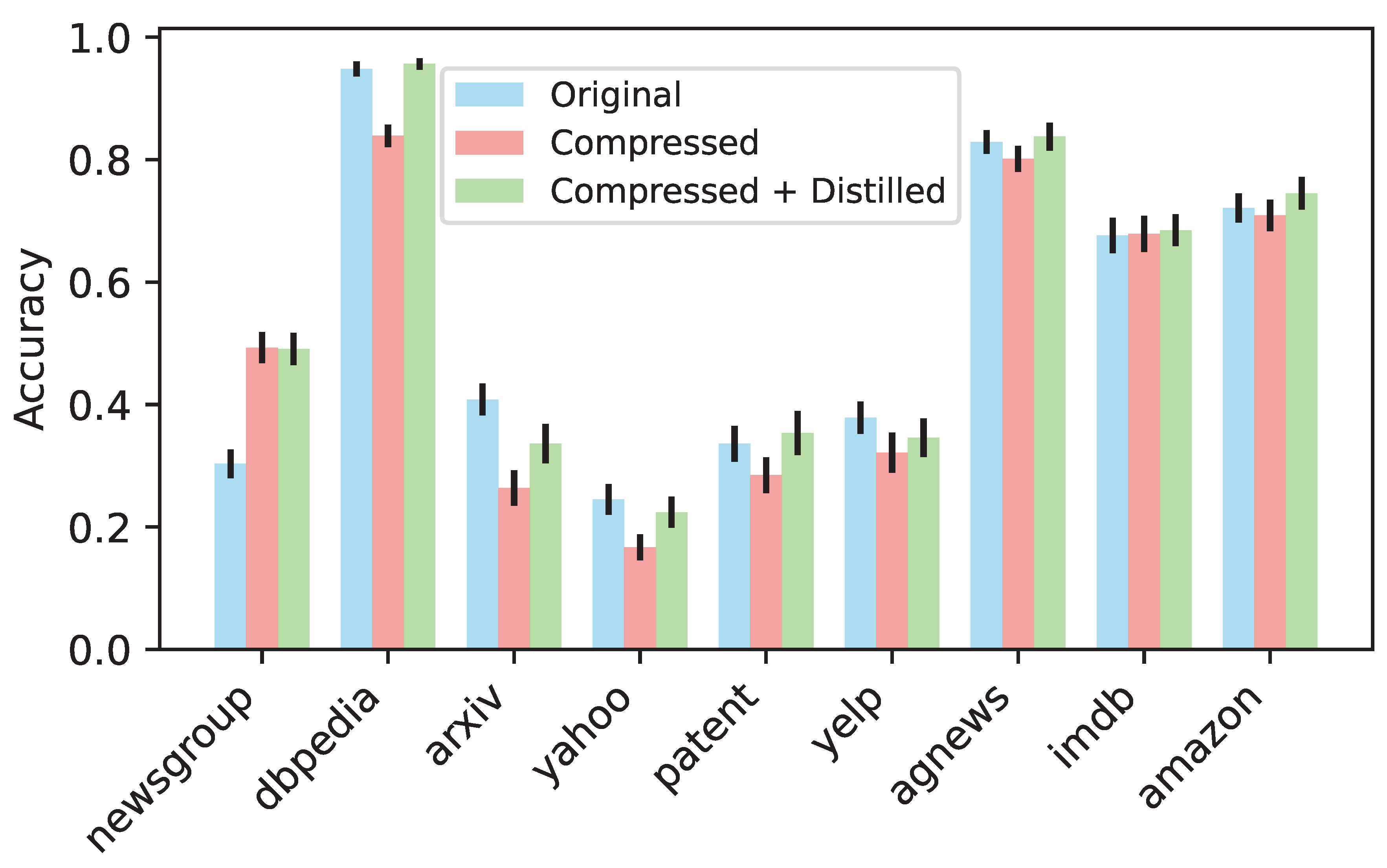

3.2. Knowledge Distillation Mitigates Accuracy Drops

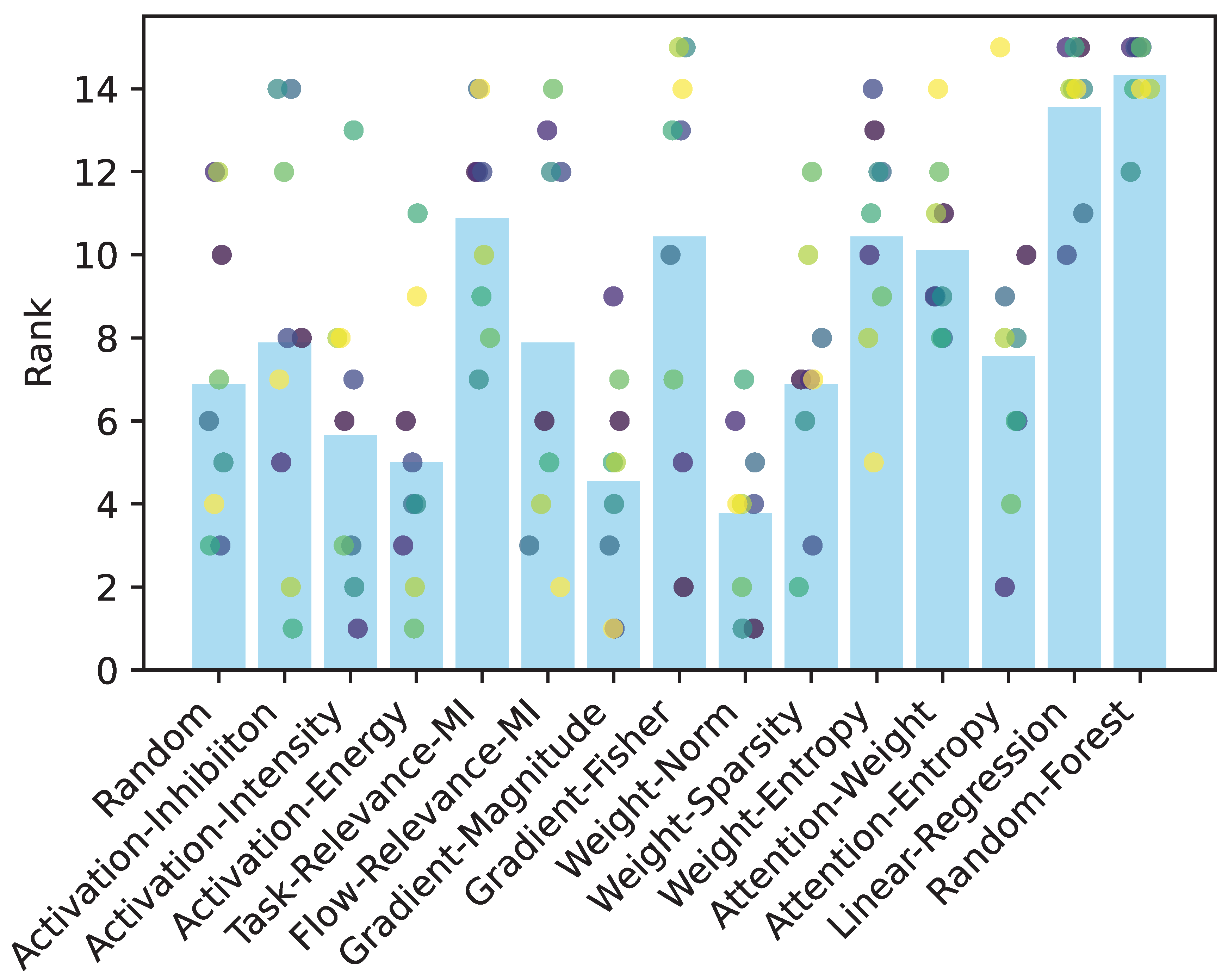

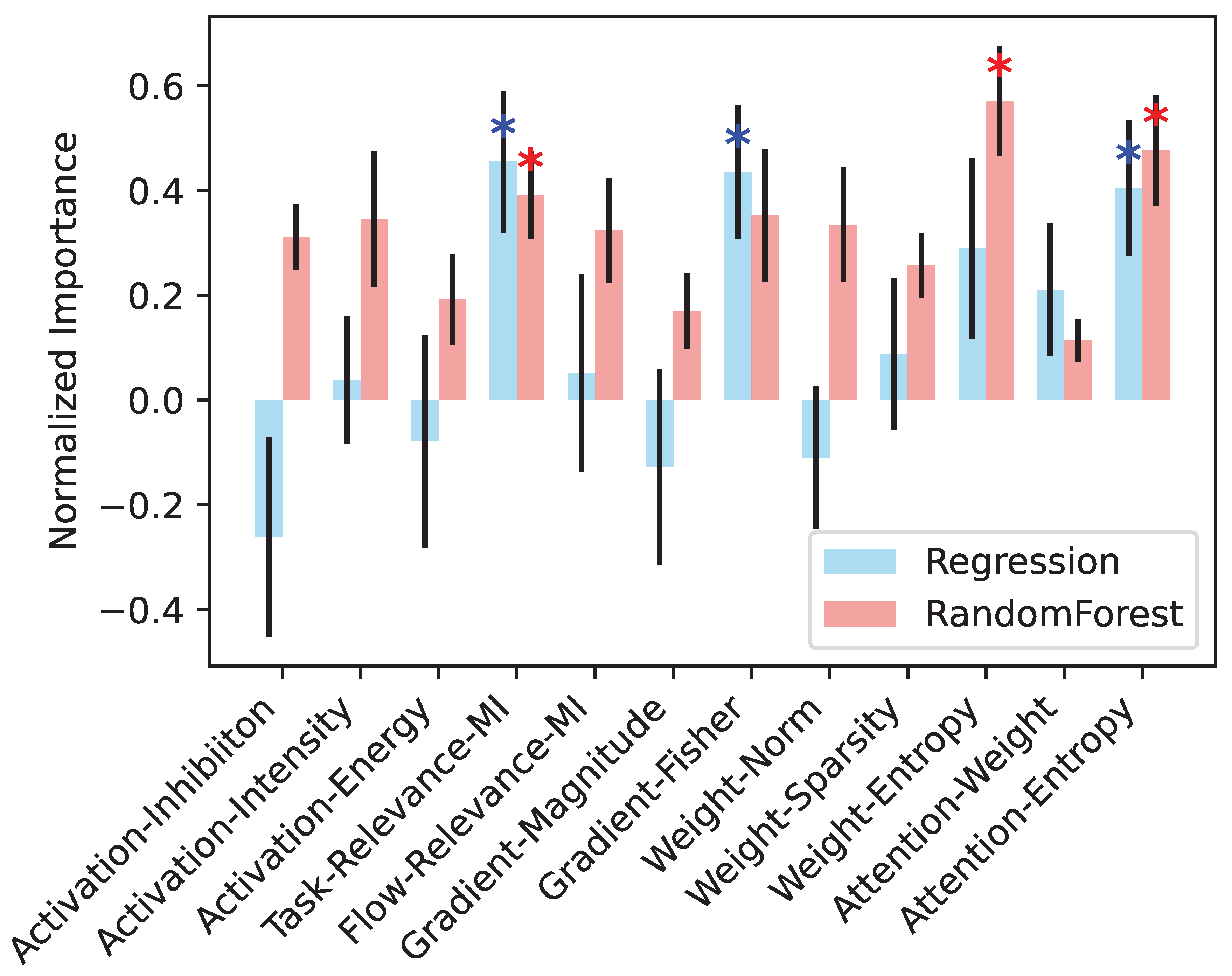

3.3. Why Does Strategic Fusion Outperform Individual Strategies?

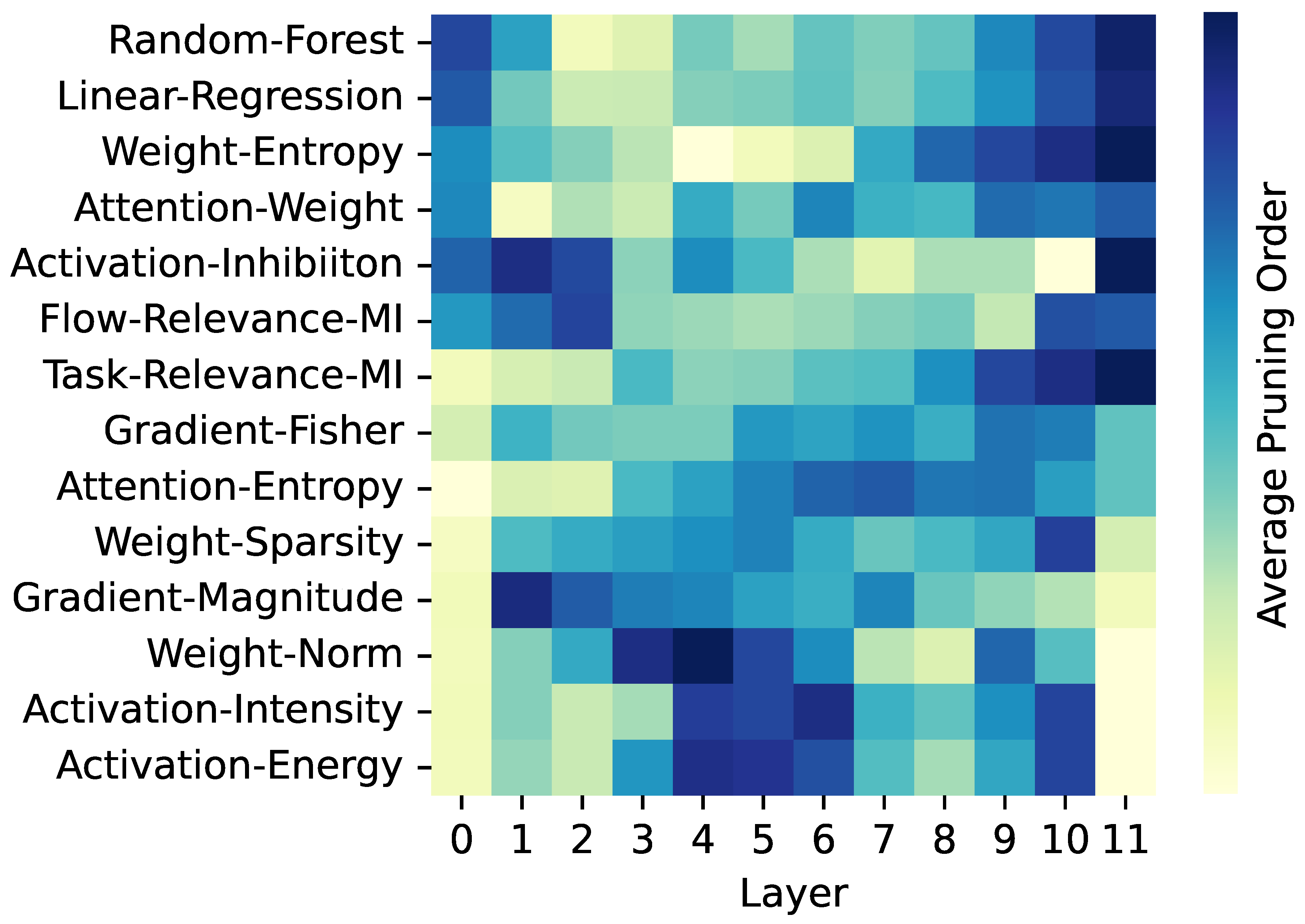

3.4. Informed Sequencing of Layer Pruning is Essential for Optimal Performance

4. Conclusions

Impact Statement


Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).