Submitted: 10 December 2025

Posted: 11 December 2025

Abstract

Keywords:

1. Introduction

2. Related Work

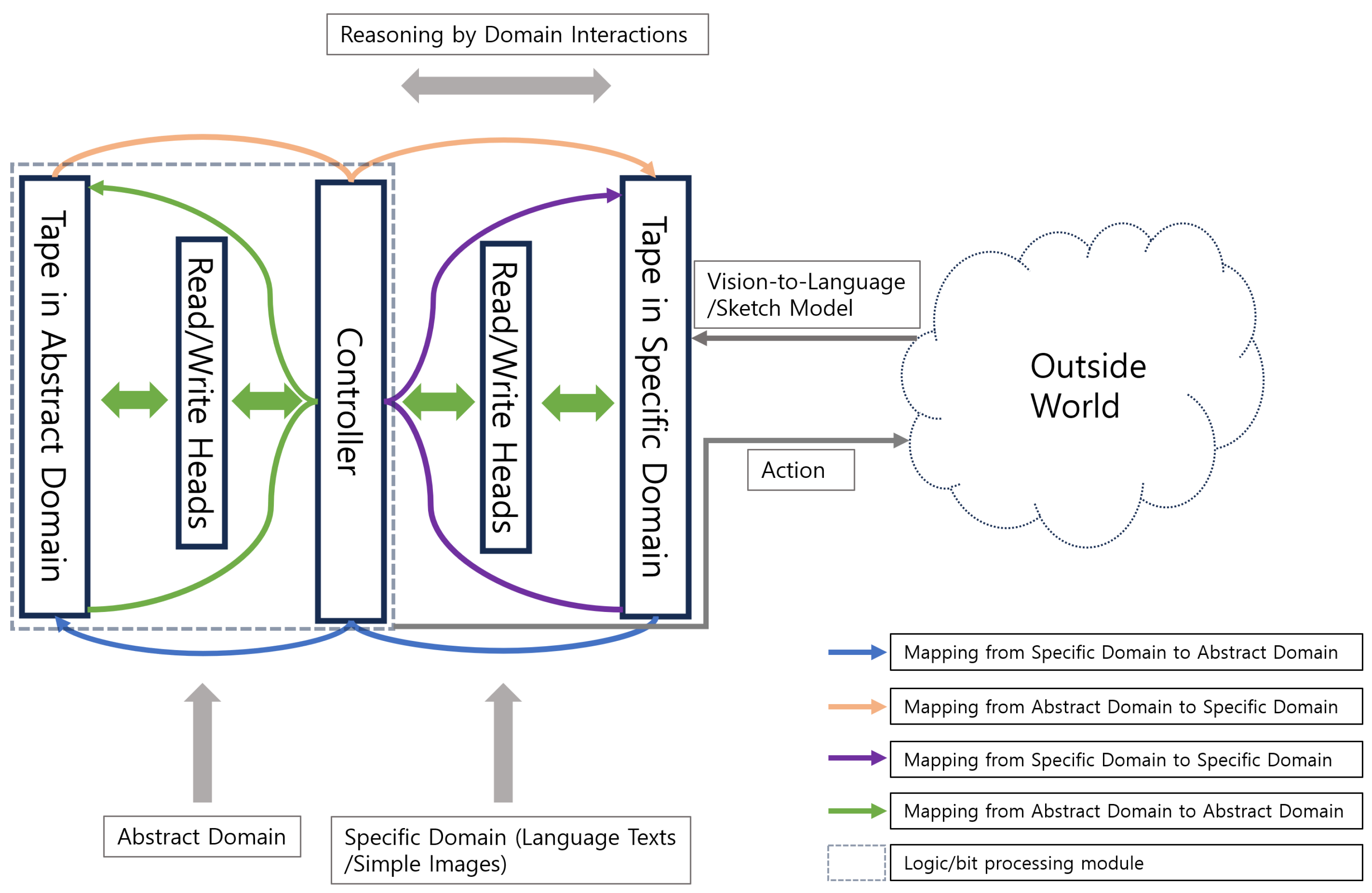

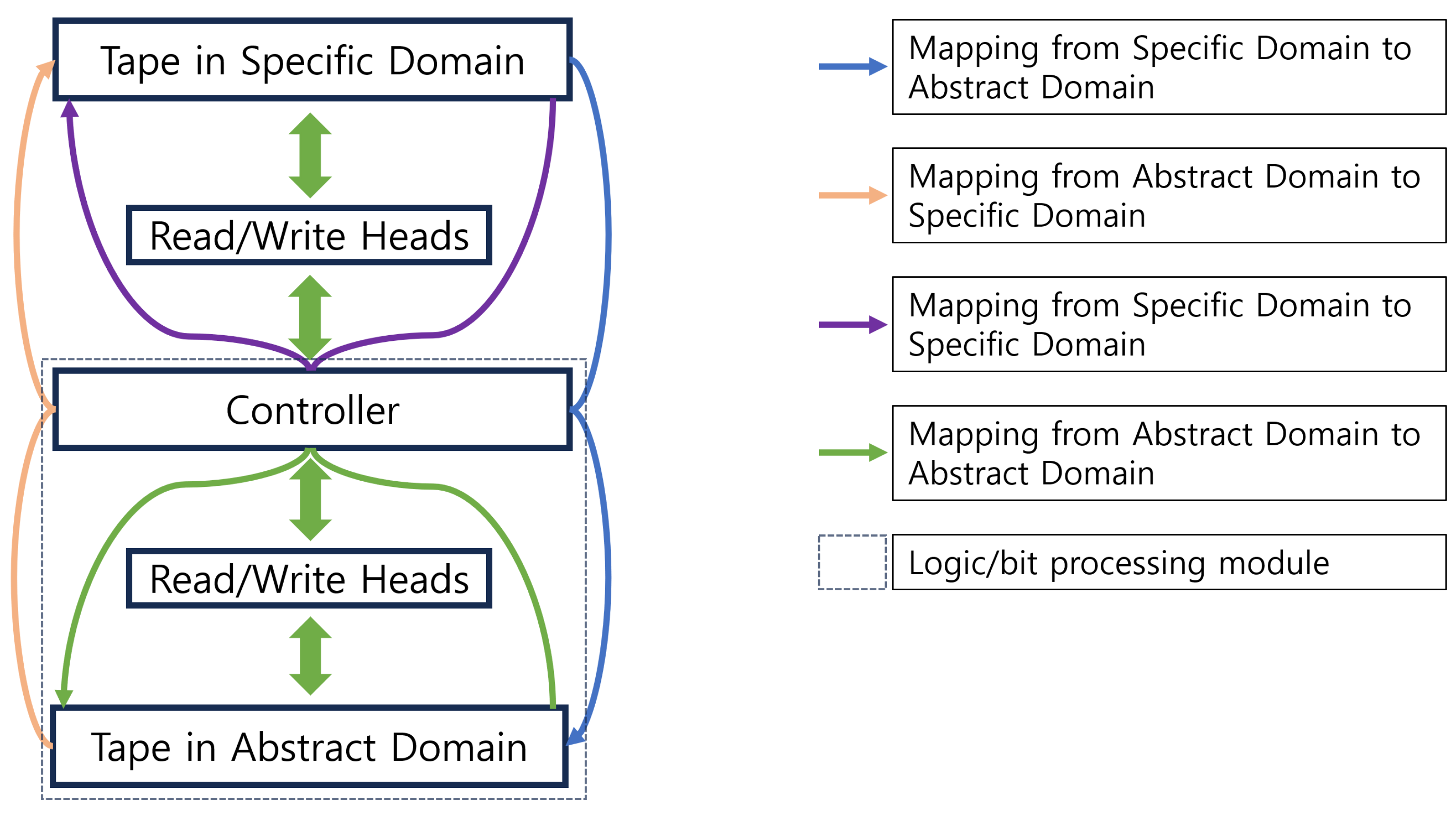

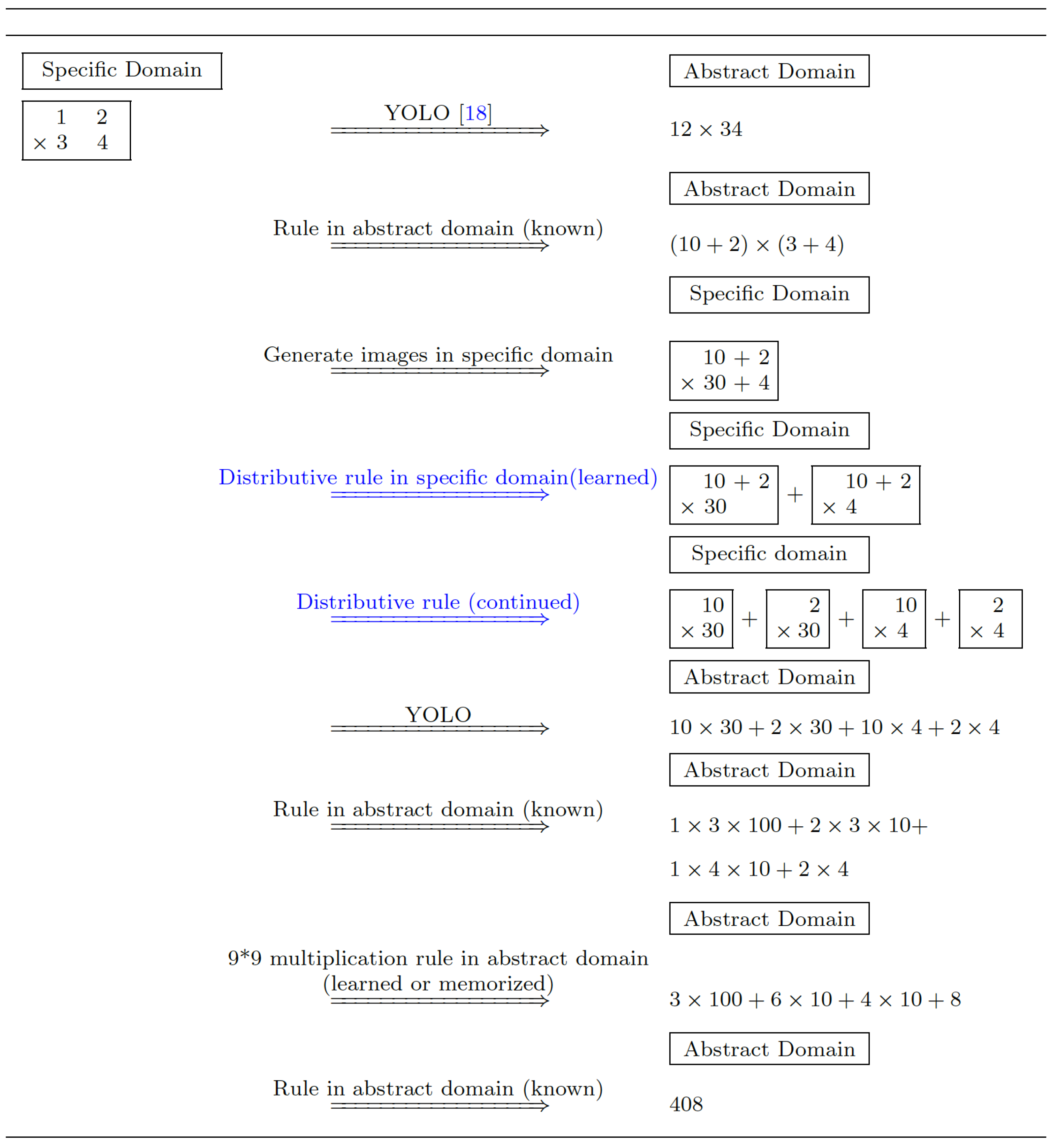

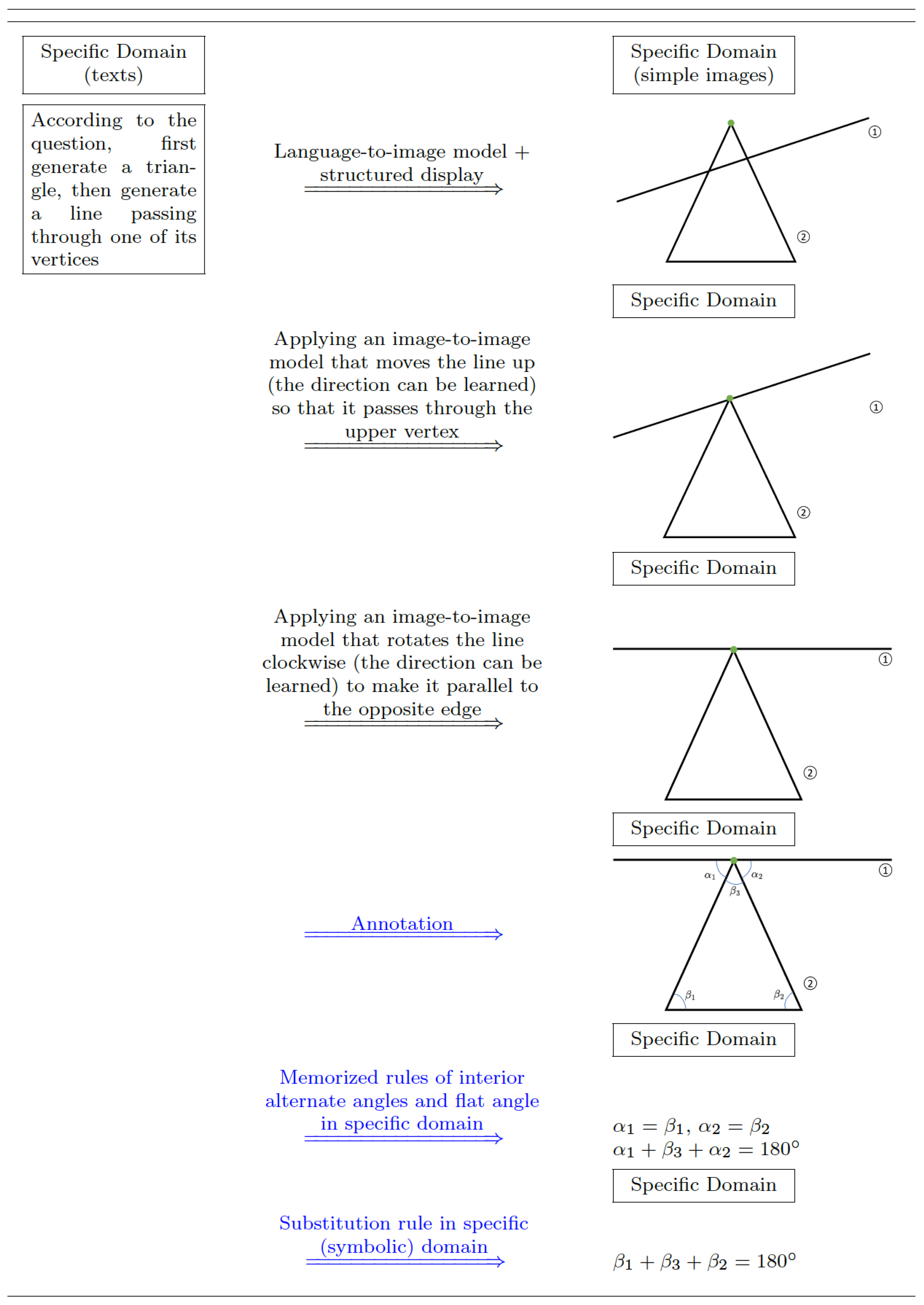

3. The Proposed Architecture

4. Experiment

| 4-digit operands | 5-digit operands | 6-digit operands | 7-digit operands | 8-digit operands |
|---|---|---|---|---|
| 1234*3456 | 12345*34567 | 123456*345678 | 1234567*3456789 | 12345678*34567891 |
| 2345*4567 | 23456*45678 | 234567*456789 | 2345678*4567891 | 23456789*45678912 |
| 3456*5678 | 34567*56789 | 345678*567891 | 3456789*5678912 | 34567891*56789123 |
| 4567*6789 | 45678*67891 | 456789*678912 | 4567891*6789123 | 45678912*67891234 |
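The operands in the expressions above follow a visible pattern: each is a run of consecutive digits cycling through 1 to 9 (0 is skipped), with the second operand starting two digits after the first. The following minimal sketch regenerates the operand pairs and computes their exact products with Python's arbitrary-precision integers. It assumes the expressions serve as multiplication test cases whose exact products are the reference answers; the helper names `cyclic_digits` and `build_cases` are hypothetical and not taken from the paper.

```python
def cyclic_digits(start: int, length: int) -> int:
    """Build an integer from `length` consecutive digits beginning at `start`,
    cycling through 1..9 (the digit 0 never appears)."""
    return int("".join(str((start - 1 + i) % 9 + 1) for i in range(length)))


def build_cases(rows: int = 4, min_len: int = 4, max_len: int = 8):
    """Return rows of (a, b, a * b) tuples, one tuple per operand length.

    Assumption: row r uses start digit r for the first operand and r + 2
    for the second, which matches the expressions listed above.
    """
    cases = []
    for r in range(1, rows + 1):
        row = []
        for n in range(min_len, max_len + 1):
            a = cyclic_digits(r, n)
            b = cyclic_digits(r + 2, n)
            row.append((a, b, a * b))  # exact product, no overflow in Python
        cases.append(row)
    return cases


if __name__ == "__main__":
    for row in build_cases():
        print(" | ".join(f"{a}*{b} = {p}" for a, b, p in row))
```

Generating the cases from the pattern rather than hard-coding them makes it easy to extend the grid to longer operands, if that is of interest.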

5. Conclusion and Discussion

Appendix A. Non-Turing Robotics

| While | The target is not attained and no collision occurs |
|---|---|
| Step 1 | Get images of the real objects taken from the top and from the right side with respect to the robot base. |
| Step 2 | Apply a computer vision model to convert the images into 3D sketches in a simplified simulative 3D space, in which cylinders and spheres sketch the robot, smaller collision spheres sketch the other objects, and a unit arrow points from the end-effector point towards the goal. (Outside World to Specific Domain) |
| Step 3 | Get 3D voxels by viewing the 3D sketches in the robot base’s coordinate system. (Specific Domain to Specific Domain) |
| Step 4 | Apply a sketch-to-direction model 1 to convert the 3D voxels to an escape direction in the 3D sketch space with a scale in range(, , 50) (the 3D space is divided evenly into 36*36 segments with 36*36 representative directions, so that the model is a classification model), or to an additional class “go directly to the target (zero escape direction)”. (Specific Domain to Abstract Domain) |
| Step 5 | Control the robot to move in the real world, at a safe speed, in the direction 2 synthesized from the output (escape direction, scale) pairs and the arrow direction. (Abstract Domain to Outside World) |
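Steps 4 and 5 of the loop above can be illustrated with a minimal sketch. Everything beyond the 36*36 direction classes and the extra "go directly to the target" class is an assumption: the paper does not say how the space is segmented, so the sketch uses 36 azimuth bins by 36 polar bins, and it does not give the synthesis rule, so the sketch adds the scaled escape direction to the unit goal arrow and renormalizes. The names `class_to_direction` and `synthesize` are hypothetical.

```python
import math

N_BINS = 36                  # from the source: 36*36 representative directions
GO_DIRECT = N_BINS * N_BINS  # additional class: "go directly to the target"


def class_to_direction(cls: int):
    """Map a classifier output index to a representative unit direction.

    Assumption: classes index (azimuth bin, polar bin) pairs and each class
    is represented by its bin centre. GO_DIRECT maps to the zero vector.
    """
    if cls == GO_DIRECT:
        return (0.0, 0.0, 0.0)
    az_i, po_i = divmod(cls, N_BINS)
    az = (az_i + 0.5) * 2.0 * math.pi / N_BINS  # azimuth in [0, 2*pi)
    po = (po_i + 0.5) * math.pi / N_BINS        # polar angle in (0, pi)
    return (math.sin(po) * math.cos(az),
            math.sin(po) * math.sin(az),
            math.cos(po))


def synthesize(goal_arrow, escape_dir, scale: float):
    """Combine the unit goal arrow with a scaled escape direction.

    Assumption: a weighted vector sum followed by normalization; the
    robot's safe speed would be applied separately by the controller.
    """
    v = [g + scale * e for g, e in zip(goal_arrow, escape_dir)]
    norm = math.sqrt(sum(c * c for c in v))
    if norm == 0.0:
        return (0.0, 0.0, 0.0)  # goal and escape cancel exactly: no motion
    return tuple(c / norm for c in v)
```

With the zero escape direction, `synthesize` returns the goal arrow unchanged, which matches the "go directly to the target" class in Step 4.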

- 1) In their structure, the virtual world is supposed to be as close to the real world as possible. Their virtual world is therefore more static, and its complexity is closer to that of the real world, than the actively generated, simpler, and more dynamic sketch space in the specific domain of our robotic architecture.

- 2) Reasoning is hence realized efficiently and at low cost through the interactions between and within the abstract domain and the specific domain of the proposed Ren machine-based robotic architecture.

- 3) Their structure, which is based on the Turing machine, has no actual sensor or camera observing the virtual world; it has only inner parameters with which to reconstruct the complex virtual world. In contrast, in our robotic architecture the contents of the workspace (the additional tape) in the specific domain can be efficiently observed and mapped into various domains, which significantly facilitates the reasoning process.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).