Submitted: 27 December 2024

Posted: 30 December 2024


Abstract

Keywords:

1. Introduction

- We have developed an automated framework for generating diverse, high-quality RAG data tailored to the geriatric medicine domain. The framework draws on publicly available disease encyclopedia information from authoritative Chinese medical websites and yields xywyRAGQA, a Chinese medical knowledge RAG QA dataset;

- We have applied the RAG strategy to conduct full-parameter fine-tuning of LLMs for the geriatric medicine domain. By integrating external knowledge sources, our approach significantly enhances the model’s ability to accurately identify and utilize the correct retrieved segments;

- We have designed evaluation metrics to assess the professionalism and accuracy of the model. Experimental results show that, compared with the "general LLM+RAG" and "domain-fine-tuned LLM+RAG" strategies, our proposed method achieves notable improvements on geriatric medical QA tasks while maintaining strong performance on general-domain QA tasks.

2. Related Work

2.1. Domain Adaptation Strategies for LLM

2.2. Enhancing Domain QA with RAG

2.3. Optimizing Fine-Tuning and Data Quality for Domain-Specific Models

3. Methodology

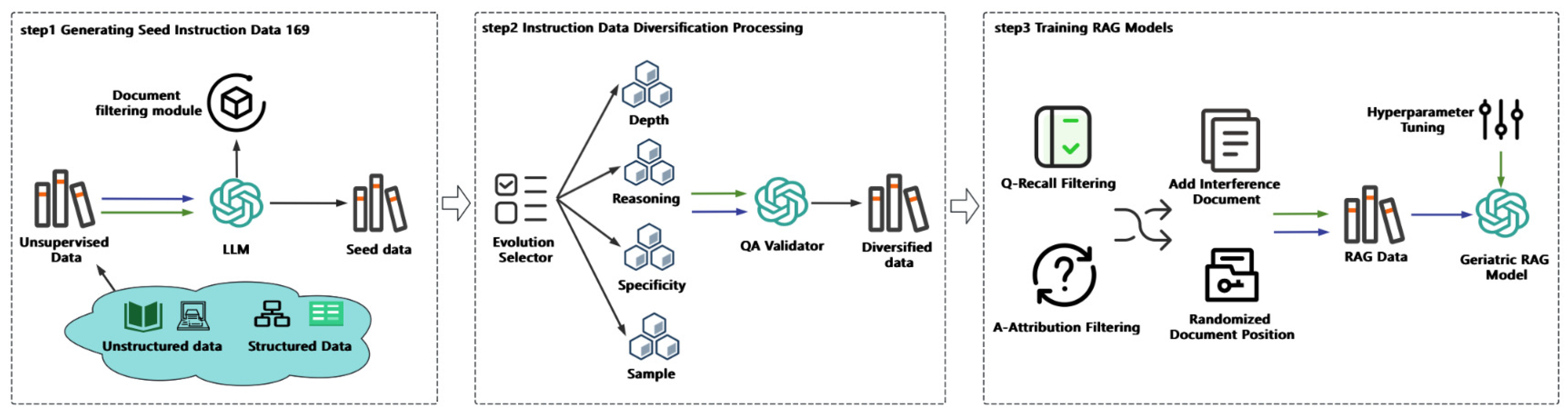

3.1. Generating Seed Instruction Data

3.1.1. Unsupervised Knowledge Acquisition

3.1.2. Geriatric Medical Seed Instruction Data Generation

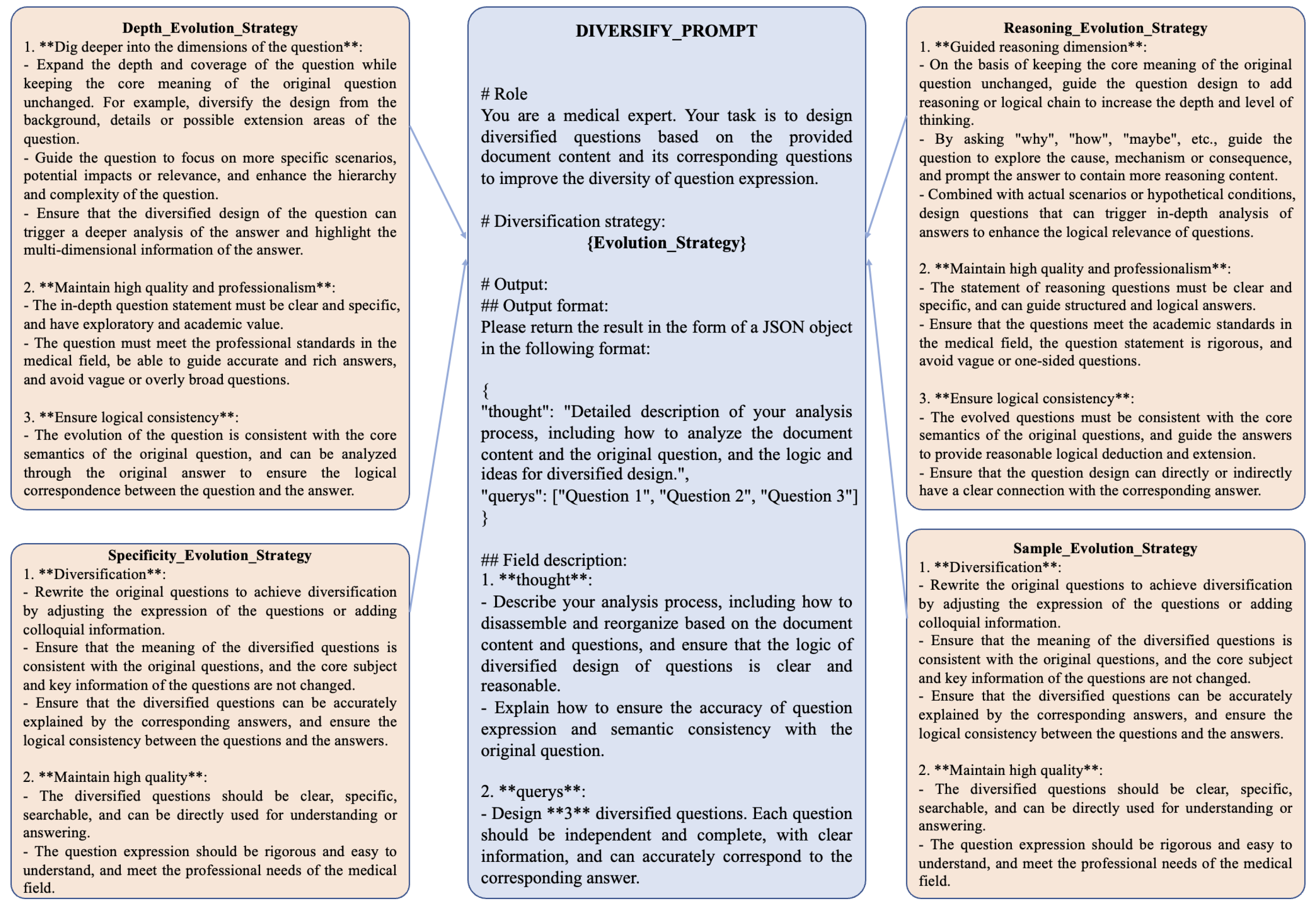

3.2. Instruction Data Diversification Processing

- Depth Evolution Strategy: This strategy increases the depth of questions by introducing more intricate scenarios or requiring multi-step reasoning for responses;

- Reasoning Evolution Strategy: This approach emphasizes logical progression and causal relationships, encouraging the generation of questions that require comprehensive inferential reasoning;

- Specificity Evolution Strategy: This method focuses on creating highly specific questions tailored to individual conditions or unique patient scenarios, moving away from generic templates;

- Sample Evolution Strategy: This strategy diversifies the dataset by introducing variations in patient demographics, symptom descriptions, or contextual settings, simulating a wide range of real-world medical cases.
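The four strategies above can be pictured as instruction templates applied to a seed question before it is sent to a generator model. The following is a minimal sketch under that assumption: the template wording, the names `EVOLUTION_TEMPLATES`, `build_evolution_prompt`, and `sample_strategy`, and the uniform sampling policy are all hypothetical and are not taken from the paper.

```python
import random

# Hypothetical instruction templates, one per evolution strategy listed above.
# The wording is illustrative only; the prompts actually used are not shown here.
EVOLUTION_TEMPLATES = {
    "depth": "Rewrite the question so that answering it involves a more "
             "intricate scenario or multi-step reasoning.",
    "reasoning": "Rewrite the question so that it requires explicit causal "
                 "or logical inference.",
    "specificity": "Rewrite the question so that it targets one specific "
                   "condition or patient scenario.",
    "sample": "Rewrite the question with different patient demographics, "
              "symptom descriptions, or contextual settings.",
}


def build_evolution_prompt(seed_question: str, strategy: str) -> str:
    """Combine a seed question with the instruction for one strategy."""
    if strategy not in EVOLUTION_TEMPLATES:
        raise ValueError(f"unknown strategy: {strategy}")
    instruction = EVOLUTION_TEMPLATES[strategy]
    return (
        f"{instruction}\n\n"
        f"Original question: {seed_question}\n"
        f"Evolved question:"
    )


def sample_strategy(rng: random.Random) -> str:
    """Pick one strategy uniformly at random (an assumed sampling policy)."""
    return rng.choice(sorted(EVOLUTION_TEMPLATES))
```

Keeping the templates in a single mapping makes it easy to add or reweight strategies without touching the prompt-building logic.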

3.3. Instruction Data Quality Filtering

3.3.1. Question Recall Rate

3.3.2. Answer Attributability

- : Total number of sentences in the answer.

- : Subset of sentences in the answer identified as entailing the document content.

- : Subset of sentences in the answer identified as contradicting the document content.

- : Subset of sentences in the answer identified as neutral to the document content.
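To make the sentence-level bookkeeping concrete, the sketch below counts answer sentences by their relation to the document (entailing, contradicting, neutral) and reports each share of the total. It assumes the labels have already been produced by some natural language inference model. The label strings, the function names, and the filtering rule with its `min_entailed` threshold are hypothetical illustrations, not the paper's formula.

```python
from collections import Counter
from typing import Dict, Sequence

# Assumed label vocabulary for the per-sentence NLI judgments.
LABELS = ("entailment", "contradiction", "neutral")


def attribution_profile(sentence_labels: Sequence[str]) -> Dict[str, float]:
    """Return the share of answer sentences carrying each NLI label."""
    unknown = set(sentence_labels) - set(LABELS)
    if unknown:
        raise ValueError(f"unexpected labels: {sorted(unknown)}")
    total = len(sentence_labels)
    if total == 0:
        return {label: 0.0 for label in LABELS}
    counts = Counter(sentence_labels)
    return {label: counts[label] / total for label in LABELS}


def keep_sample(sentence_labels: Sequence[str], min_entailed: float = 0.8) -> bool:
    """Hypothetical filter: no contradictions and enough entailed sentences."""
    profile = attribution_profile(sentence_labels)
    return profile["contradiction"] == 0.0 and profile["entailment"] >= min_entailed
```

For example, an answer with four entailed sentences and one neutral sentence has an entailed share of 0.8 and would pass this illustrative filter, while any answer containing a contradicting sentence would not.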

3.4. Training RAG Models

- Randomized placement of correct-answer documents to prevent the model from developing position-based biases;

- Selection of relevant distractor documents to simulate realistic retrieval scenarios, improve discrimination in challenging contexts, and enhance the model’s domain knowledge.
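The two measures above can be sketched as a single context-building step. This is a minimal illustration and not the authors' implementation: it assumes the candidate distractors arrive already ranked by relevance (for instance by a retriever), and the function name `build_training_context` and its parameters are hypothetical.

```python
import random
from typing import List, Sequence, Tuple


def build_training_context(
    golden_doc: str,
    ranked_distractors: Sequence[str],
    num_distractors: int,
    rng: random.Random,
) -> Tuple[List[str], int]:
    """Return the document list for one training sample and the golden index.

    ranked_distractors is assumed to be sorted from most to least relevant,
    so taking the first num_distractors keeps the hardest negatives.
    """
    if num_distractors > len(ranked_distractors):
        raise ValueError("not enough distractor documents")
    chosen = list(ranked_distractors[:num_distractors])
    # Insert the correct document at a uniformly random slot so the model
    # cannot learn that the answer always sits at a fixed position.
    position = rng.randrange(num_distractors + 1)
    docs = chosen[:position] + [golden_doc] + chosen[position:]
    return docs, position
```

Passing an explicit `random.Random` instance keeps the placement reproducible across data-generation runs.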

3.4.1. Set Relevant Distractor Documents

3.4.2. Randomly Place Correct Documents

3.5. Evaluation Metrics

3.5.1. Domain Metric

- TP: Number of true positives.

- FP: Number of false positives.

- FN: Number of false negatives.

- : Weight assigned to the Answer Correctness, with a default value of 0.75.

- : Weight assigned to Semantic Similarity, with a default value of 0.25.
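The quantities defined above can be combined as in the following sketch. It treats Answer Correctness as an F1 score over the true-positive, false-positive, and false-negative counts, and the overall score as a weighted sum of Answer Correctness and Semantic Similarity with the default weights 0.75 and 0.25. The F1 form and the function names are assumptions for illustration, although the weighted sum is consistent with the reported results (for Qwen2.5-7B-Instruct Base, 0.75 × 0.3875 + 0.25 × 0.5432 ≈ 0.4264).

```python
def f1_from_counts(tp: int, fp: int, fn: int) -> float:
    """Standard F1 score expressed with TP, FP, and FN counts."""
    denominator = tp + 0.5 * (fp + fn)
    return tp / denominator if denominator else 0.0


def overall_score(
    answer_correctness: float,
    semantic_similarity: float,
    w_correctness: float = 0.75,
    w_similarity: float = 0.25,
) -> float:
    """Weighted combination of the two components (default weights from the text)."""
    return w_correctness * answer_correctness + w_similarity * semantic_similarity


if __name__ == "__main__":
    # Reproduces the Overall Score reported for Qwen2.5-7B-Instruct (Base).
    print(round(overall_score(0.3875, 0.5432), 4))
```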

3.5.2. General Metric

- LSHT: A Chinese classification task that involves categorizing news articles into 24 distinct categories;

- DuReader: A task requiring the answering of relevant Chinese questions based on multiple retrieved documents;

- MultiFieldQA_ZH: A question-answering task based on a single document, where the documents span diverse domains;

- VCSum: A summarization task that entails generating concise summaries of Chinese meeting transcripts;

- Passage_Retrieval_ZH: A retrieval task where, given several Chinese passages from the C4 dataset, the model must identify which passage corresponds to a given summary.

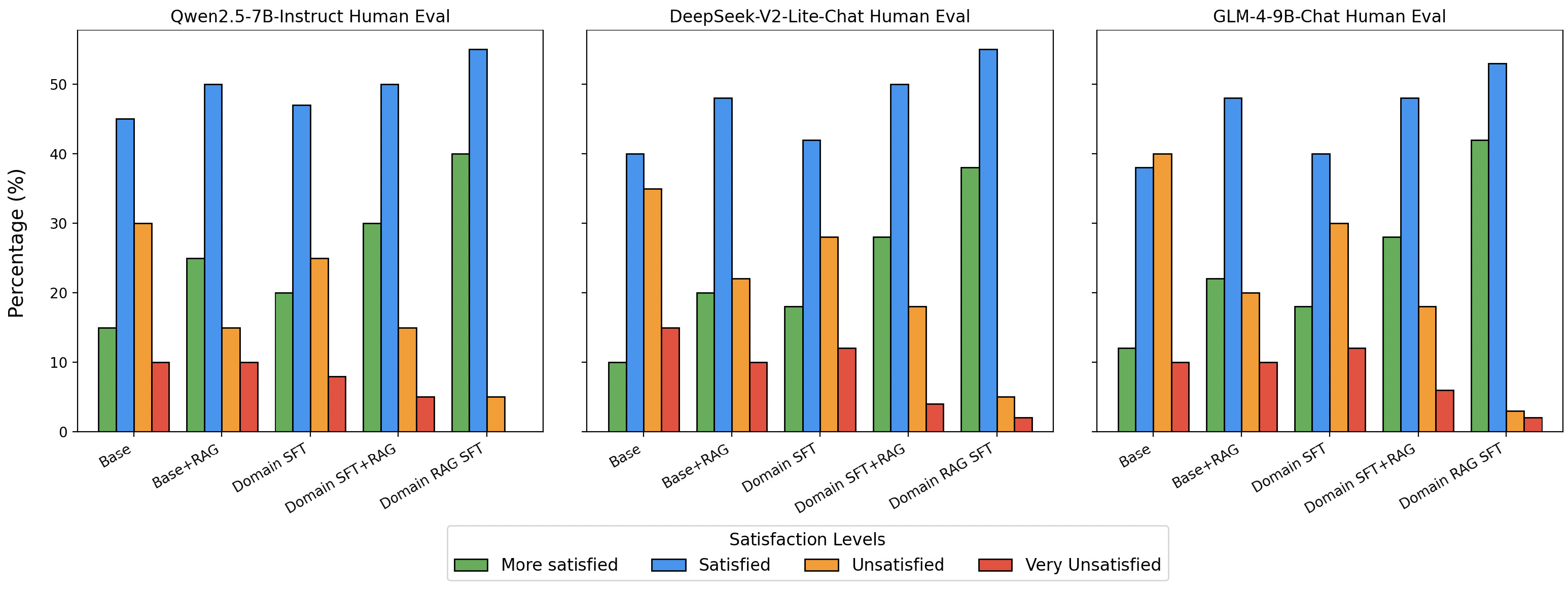

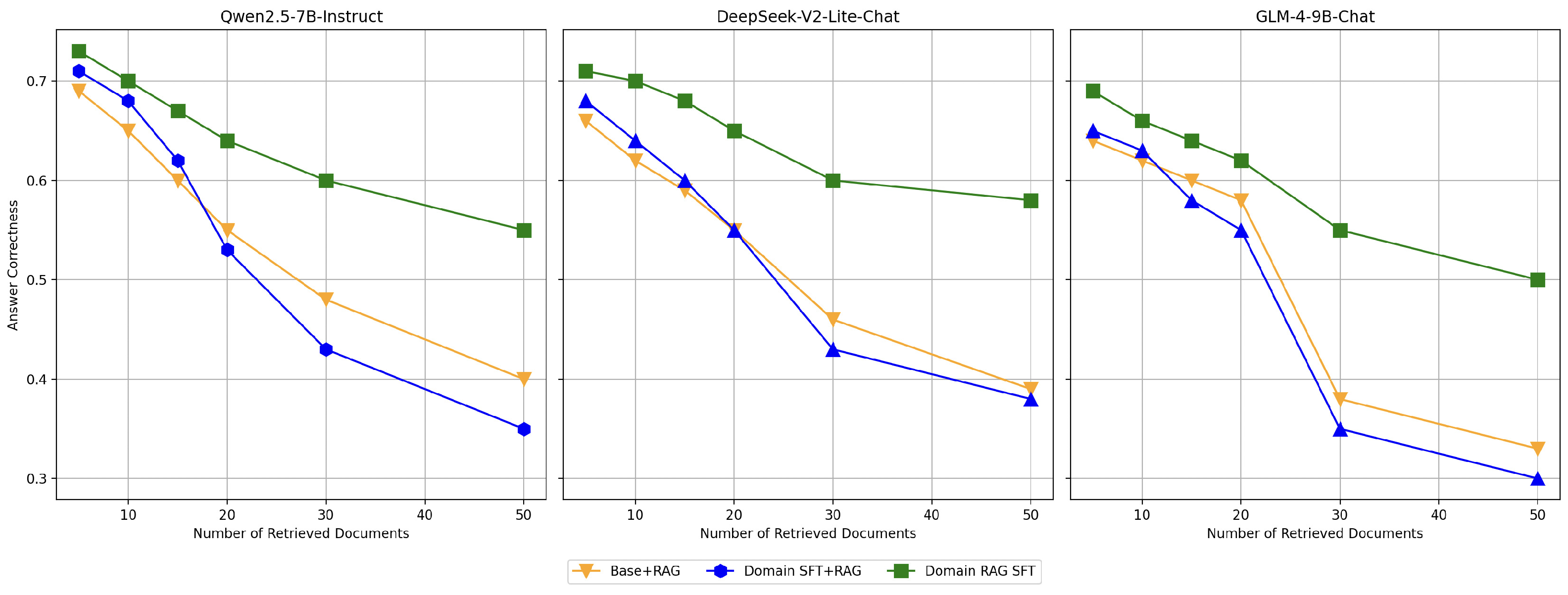

4. Results and Discussion

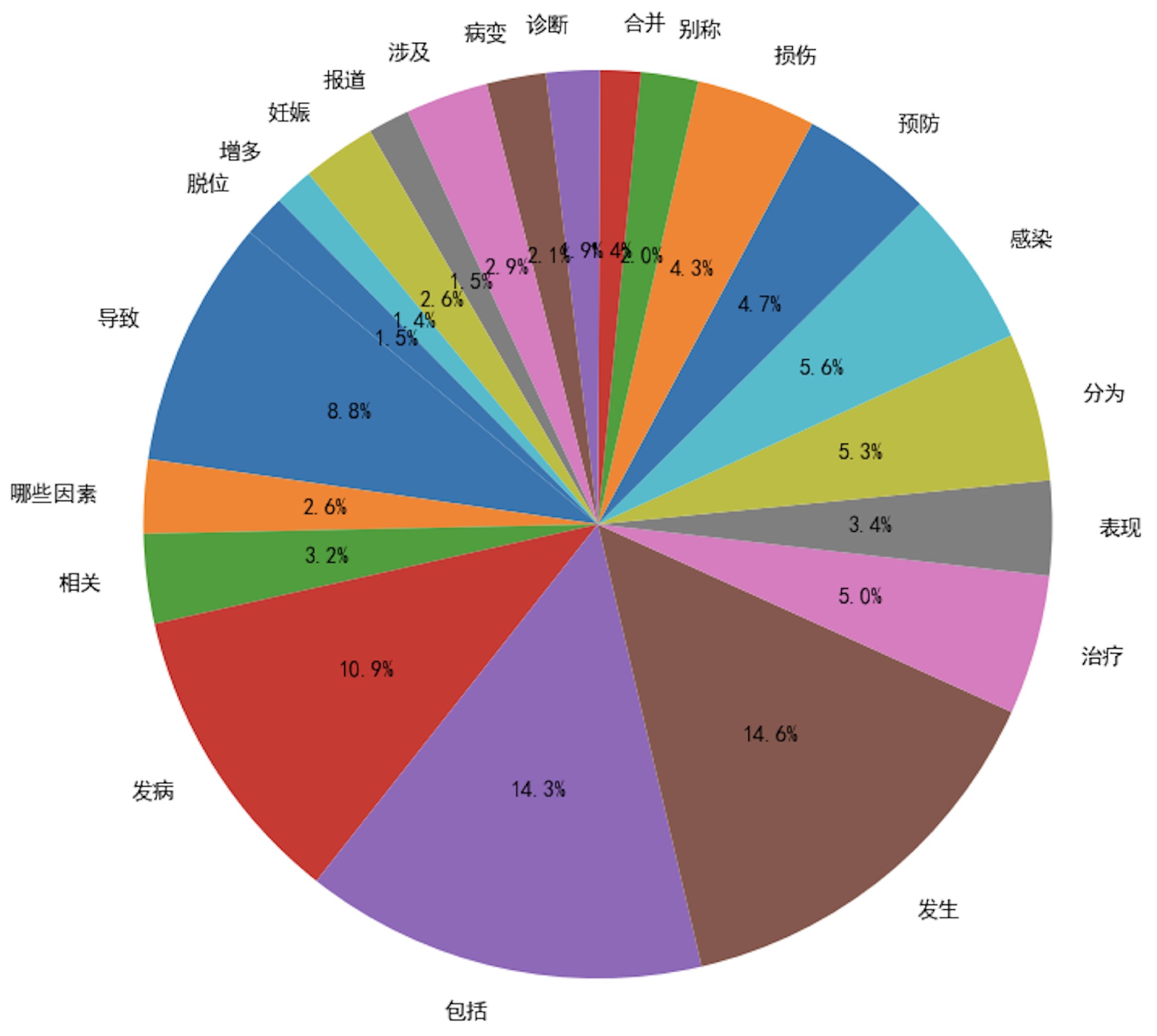

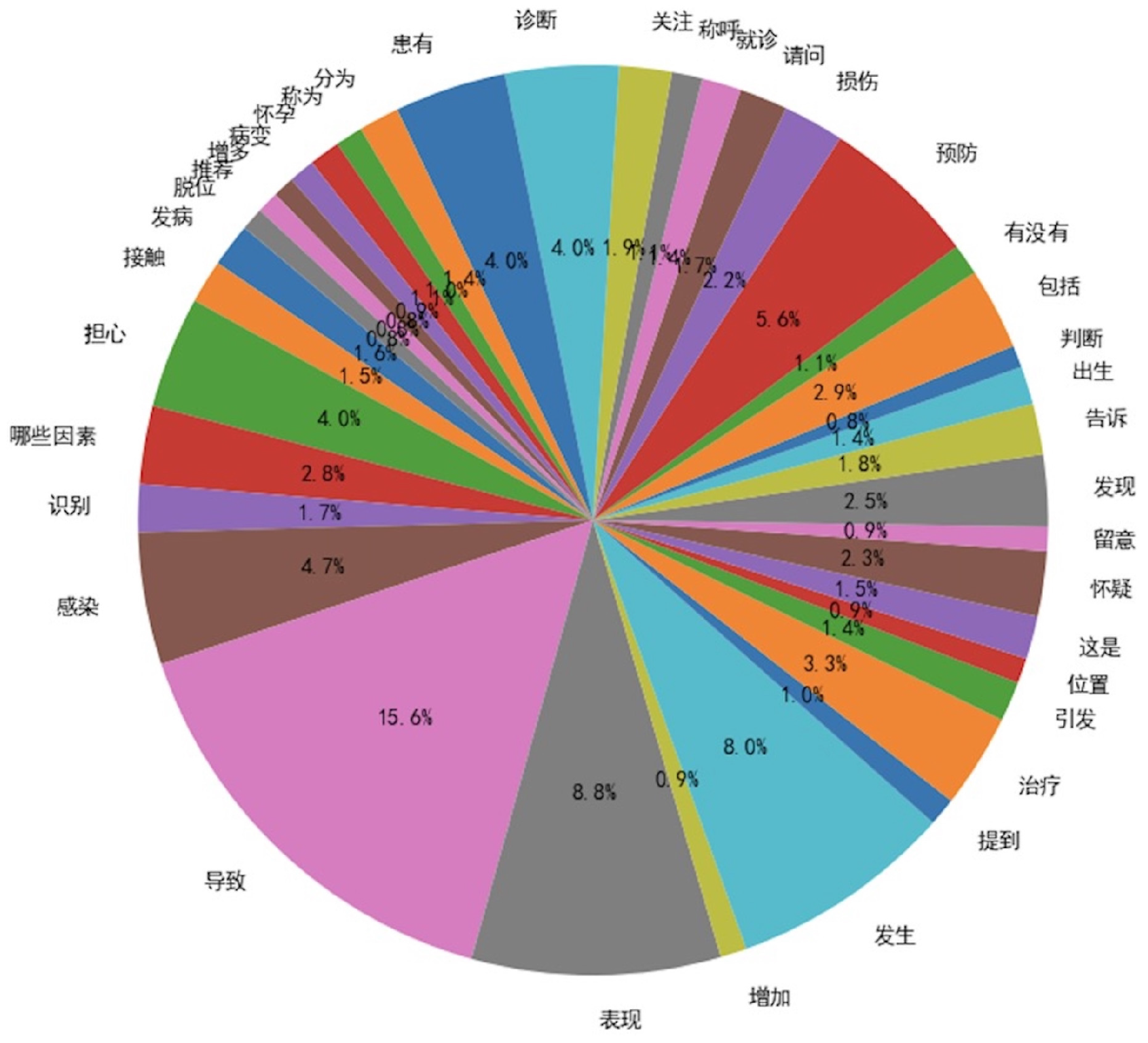

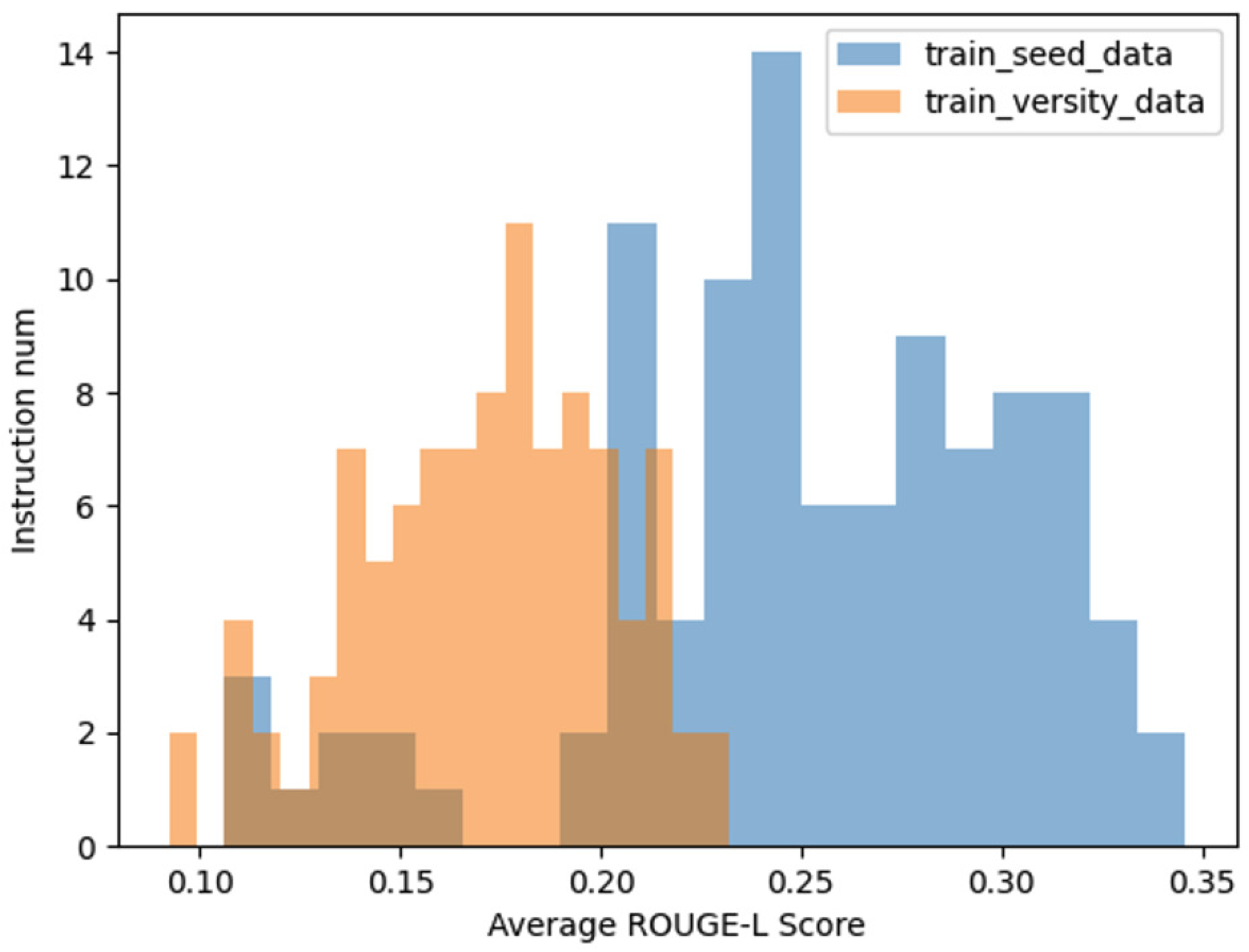

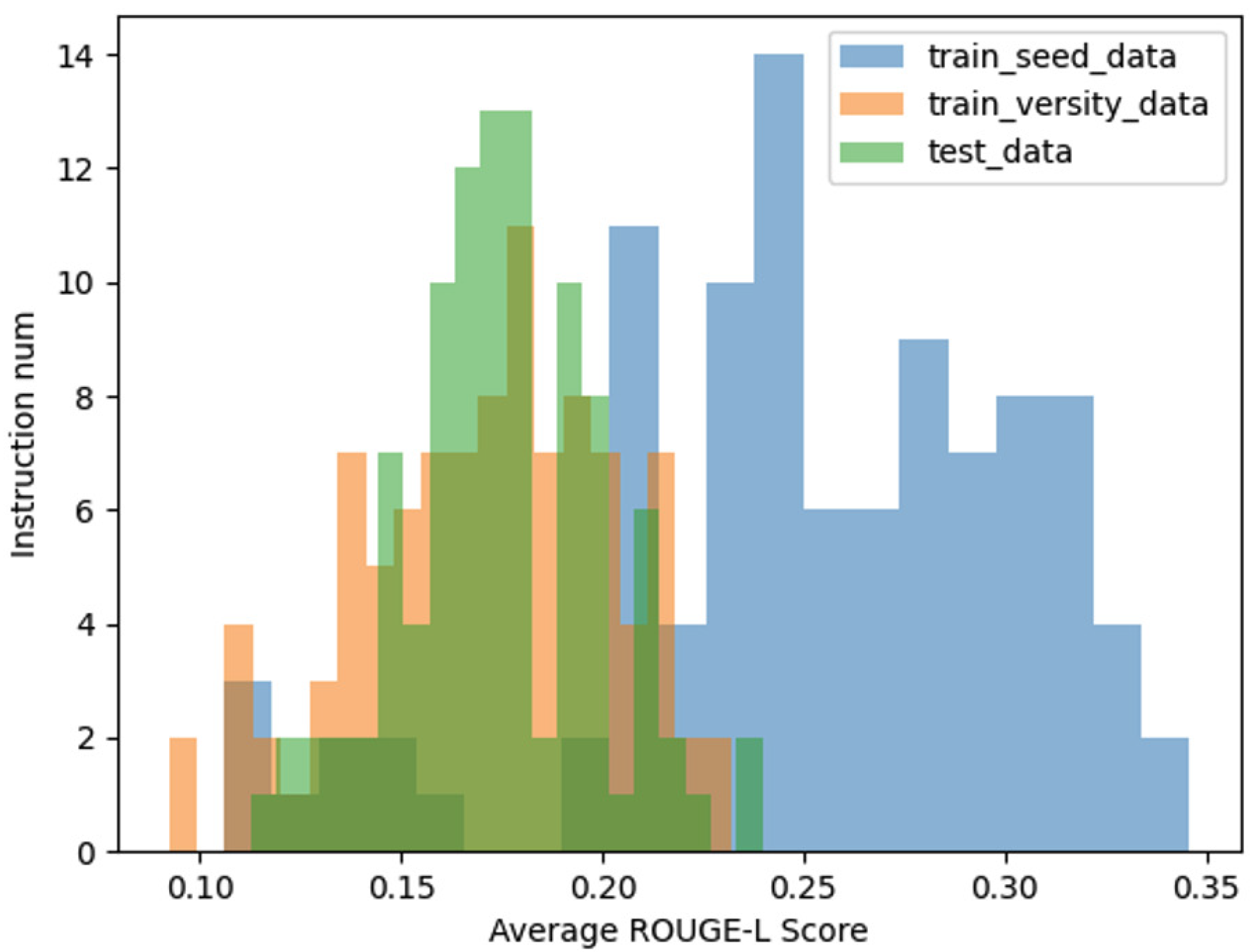

4.1. Data Diversity

- Verb Usage Frequency: A higher number of verbs exceeding a predefined frequency threshold indicates greater diversity;

- ROUGE-L: A lower average ROUGE-L score within the same dataset signifies higher diversity.
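Both diversity indicators can be computed with standard-library code, as sketched below. The sketch makes several assumptions that are not from the paper: ROUGE-L is taken as the F1 form based on the longest common subsequence, instructions are split into characters (a simple choice for Chinese text), the average is taken over all instruction pairs, and the verbs are assumed to have been extracted beforehand by a part-of-speech tagger. All function names are hypothetical.

```python
from collections import Counter
from itertools import combinations
from typing import List, Sequence


def lcs_length(a: Sequence[str], b: Sequence[str]) -> int:
    """Length of the longest common subsequence, using a rolling DP row."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, start=1):
            curr.append(prev[j - 1] + 1 if x == y else max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def rouge_l_f1(candidate: Sequence[str], reference: Sequence[str]) -> float:
    """ROUGE-L as the harmonic mean of LCS-based precision and recall."""
    if not candidate or not reference:
        return 0.0
    lcs = lcs_length(candidate, reference)
    if lcs == 0:
        return 0.0
    precision = lcs / len(candidate)
    recall = lcs / len(reference)
    return 2 * precision * recall / (precision + recall)


def mean_pairwise_rouge_l(instructions: List[str]) -> float:
    """Average ROUGE-L over all instruction pairs; lower means more diverse."""
    pairs = list(combinations(instructions, 2))
    if not pairs:
        return 0.0
    return sum(rouge_l_f1(list(a), list(b)) for a, b in pairs) / len(pairs)


def verbs_above_threshold(verbs: Sequence[str], threshold: int) -> int:
    """Count distinct verbs whose frequency exceeds the threshold."""
    counts = Counter(verbs)
    return sum(1 for count in counts.values() if count > threshold)
```

The pairwise average is quadratic in the number of instructions, so for a large dataset it would typically be estimated on a random subsample.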

4.2. Model Training

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| QA | Question Answering |
| LLM | Large Language Model |
| RAG | Retrieval-Augmented Generation |
| SFT | Supervised Fine-Tuning |


| Model | Method | Overall Score | Answer Correctness | Answer Similarity |
|---|---|---|---|---|
| Qwen2.5-7B-Instruct | Base | 0.4264 | 0.3875 | 0.5432 |
| | Base+RAG | 0.6935 | 0.6676 | 0.7712 |
| | Domain SFT | 0.4702 | 0.4213 | 0.6171 |
| | Domain SFT+RAG | 0.7126 | 0.6864 | 0.7913 |
| | Domain RAG SFT | 0.7296 | 0.7011 | 0.8132 |
| DeepSeek-V2-Lite-Chat | Base | 0.4000 | 0.3601 | 0.5198 |
| | Base+RAG | 0.6638 | 0.6356 | 0.7485 |
| | Domain SFT | 0.4396 | 0.3852 | 0.6027 |
| | Domain SFT+RAG | 0.6805 | 0.6477 | 0.7792 |
| | Domain RAG SFT | 0.6997 | 0.6622 | 0.8123 |
| GLM-4-9B-Chat | Base | 0.3831 | 0.3453 | 0.4965 |
| | Base+RAG | 0.6408 | 0.6105 | 0.7315 |
| | Domain SFT | 0.4232 | 0.3702 | 0.5823 |
| | Domain SFT+RAG | 0.6576 | 0.6253 | 0.7546 |
| | Domain RAG SFT | 0.6975 | 0.6626 | 0.8023 |

| Model | Method | lsht | dureader | multifieldqa_zh | vcsum | passage_retrieval_zh |
|---|---|---|---|---|---|---|
| Qwen2.5-7B-Instruct | Base | 29.5 | 38.2 | 65.2 | 17.5 | 92.5 |
| | Domain SFT | 28.0 | 35.3 | 52.2 | 14.8 | 78.0 |
| | Domain RAG SFT | 29.0 | 39.3 | 67.4 | 15.1 | 94.5 |
| DeepSeek-V2-Lite-Chat | Base | 26.0 | 36.5 | 63.6 | 18.6 | 86.0 |
| | Domain SFT | 23.0 | 34.0 | 51.8 | 14.0 | 78.5 |
| | Domain RAG SFT | 24.5 | 38.7 | 66.0 | 19.3 | 83.0 |
| GLM-4-9B-Chat | Base | 42.0 | 46.2 | 64.3 | 19.8 | 94.0 |
| | Domain SFT | 32.0 | 33.5 | 50.5 | 15.5 | 85.5 |
| | Domain RAG SFT | 36.5 | 48.8 | 66.7 | 17.2 | 92.0 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).