2. Decomposition of Entropy in Stationary Markov Chains – Predictable and Unpredictable Information

The outcome of each coin flip, governed by the transition matrix

$$T(p) = \begin{pmatrix} p & 1-p \\ 1-p & p \end{pmatrix},$$

encapsulates a single bit of information. The probability of state repetition, $0 \le p \le 1$, determines how this information is divided into predictable and unpredictable components [2]. When $p = 1$, the sequence becomes perfectly predictable, locking outcomes into stationary patterns like $HHH\ldots$ (all heads) or $TTT\ldots$ (all tails). Similarly, when $p = 0$, the sequence alternates deterministically: $HTHT\ldots$. In contrast, $p = 1/2$ corresponds to complete randomness, making future outcomes wholly unpredictable.

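For long sequences ($n \gg 1$) generated by an *$N$-state, irreducible, and recurrent Markov chain* $\{X_t\}$ with a stationary transition matrix $T_{ks} = \Pr(X_{t+1} = s \mid X_t = k)$, the relative frequency $\pi_k(n)$ of visits to state $k$ converges to the stationary distribution $\pi = (\pi_1, \ldots, \pi_N)$, such that $\sum_{k} \pi_k T_{ks} = \pi_s$ for all $s$. The probability of each specific sequence consistent with $\pi$ becomes extremely small for large $n$, decreasing as $\prod_{k} \pi_k^{n\pi_k}$. Consequently, the weight of each distinct sequence consistent with the stationary distribution $\pi$ decreases exponentially with $n$, viz.,

$$\prod_{k=1}^{N} \pi_k^{n\pi_k} = \exp(-n\lambda), \qquad \lambda = -\sum_{k=1}^{N} \pi_k \ln \pi_k, \tag{1}$$

where $\lambda$ is the *decay rate* at which the weight of the sequences constrained by the stationary distribution $\pi$ decreases with sequence length $n$. Up to a factor of $1/\ln 2$, the decay rate $\lambda$ corresponds to the Boltzmann-Gibbs-Shannon entropy quantifying the uncertainty of a Markov chain’s state in equilibrium:

$$H = -\sum_{k=1}^{N} \pi_k \log_2 \pi_k = \sum_{k=1}^{N} \pi_k \log_2 \pi_k^{-1}. \tag{2}$$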

The inverse frequency $\pi_k^{-1}$ in (2) is the expected *recurrence time* of returns to state $k$. Thus, $\log_2 \pi_k^{-1}$ can be termed the *utility function of recurrence time*, quantifying the reduction in diversity of state sequences caused by the repetition of state $k$ in the most likely sequence patterns corresponding to the stationary distribution $\pi$.
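As a rough numerical illustration of the recurrence-time reading of the entropy, the sketch below estimates the stationary distribution of a small chain by damped power iteration and evaluates the entropy in bits as the weighted mean of the logarithm of the expected recurrence times, taken here as the inverse stationary probabilities. The example matrix, the function names, and the damping step are assumptions made for illustration and do not come from the source.

```python
import math

def stationary_distribution(T, tol=1e-13, max_iter=100_000):
    """Approximate pi with pi = pi T for a row-stochastic matrix T (list of rows)."""
    n = len(T)
    pi = [1.0 / n] * n
    for _ in range(max_iter):
        step = [sum(pi[k] * T[k][s] for k in range(n)) for s in range(n)]
        # Average with the previous iterate so that periodic chains also converge.
        nxt = [0.5 * (a + b) for a, b in zip(pi, step)]
        if max(abs(a - b) for a, b in zip(pi, nxt)) < tol:
            return nxt
        pi = nxt
    return pi

def recurrence_times(pi):
    """Expected number of steps between returns to each state (1 / pi_k)."""
    return [1.0 / x for x in pi]

def entropy_bits(pi):
    """Shannon entropy in bits: the pi-weighted mean of log2(recurrence time)."""
    return sum(x * math.log2(1.0 / x) for x in pi if x > 0.0)

# Hypothetical two-state chain, used only for illustration.
T_example = [[0.9, 0.1],
             [0.3, 0.7]]
pi_example = stationary_distribution(T_example)
tau_example = recurrence_times(pi_example)
H_example = entropy_bits(pi_example)
print(pi_example, tau_example, H_example)
```

For this matrix the stationary distribution is close to (0.75, 0.25), so the rarer state has the longer recurrence time and contributes the larger logarithmic term to the entropy.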

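The entropy (2) serves as a foundation for decomposing the system’s total uncertainty into predictable and unpredictable components [2, 4, 5]. To analyze the information dynamics, we add and subtract the conditional entropies $H(X_{t+1} \mid X_t)$ and $H(X_{t+1} \mid X_{t-1})$ to $H$, grouping the resulting terms into distinct informational quantities:

$$\begin{aligned}
H &\equiv H(X_t) \\
  &= \underbrace{\left[H(X_t) - H(X_{t+1} \mid X_t)\right]}_{E} + \underbrace{\left[H(X_{t+1} \mid X_{t-1}) - H(X_{t+1} \mid X_t)\right]}_{I(X_t;\, X_{t+1} \mid X_{t-1})} \\
  &\quad + \underbrace{\left[2H(X_{t+1} \mid X_t) - H(X_{t+1} \mid X_{t-1})\right]}_{U}.
\end{aligned} \tag{3}$$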

The excess entropy $E = H(X_t) - H(X_{t+1} \mid X_t)$ quantifies the influence of past states on the present and future states, capturing the system’s structural correlations and predictive potential. The conditional mutual information $I(X_t; X_{t+1} \mid X_{t-1})$ represents the information shared between the current state $X_t$ and the future state $X_{t+1}$, independent of past history. It vanishes for both fully deterministic and completely random systems, reflecting the absence of predictive utility in these extremes. The sum of the excess entropy and the conditional mutual information shown in the second line of (3) represents the *predictable information* in the system, $P = E + I(X_t; X_{t+1} \mid X_{t-1})$ [2, 4]. This component quantifies the portion of the total uncertainty in the future state $X_{t+1}$ that can be resolved using information about the current state $X_t$ and the past states within the system’s dynamics. In contrast, the *unpredictable component* $U = H(X_{t+1} \mid X_t) - I(X_t; X_{t+1} \mid X_{t-1})$, given in the third line of (3), quantifies the intrinsic randomness in the system that remains unresolved even with full knowledge of its history.

The entropy decomposition in (3) is closed, meaning it completely partitions the total entropy $H$ into predictable and unpredictable components. For a fully deterministic system (e.g., $p = 0$ or $p = 1$), the unpredictable component vanishes, $U = 0$, making the entire entropy predictable, $P = H$. Conversely, for a completely random system ($p = 1/2$), the predictable components disappear. In this case, $U = H$, as observations of the prior sequence provide no information for predicting future states ($E = 0$). Similarly, attempts to predict the next state by repeating or alternating the current state fail, as $I(X_t; X_{t+1} \mid X_{t-1}) = 0$.
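The limiting cases described above can be checked numerically. The following is a minimal sketch for the symmetric coin, assuming a repetition probability written here as `p`, a uniform stationary distribution, an excess entropy equal to the entropy minus the entropy rate, and an unpredictable component equal to the entropy rate minus the conditional mutual information. The function names and dictionary keys are hypothetical.

```python
import math

def h2(x):
    """Binary entropy in bits, with the convention 0 * log2(0) = 0."""
    if x <= 0.0 or x >= 1.0:
        return 0.0
    return -x * math.log2(x) - (1.0 - x) * math.log2(1.0 - x)

def decompose_symmetric_coin(p):
    """Entropy decomposition for T = [[p, 1-p], [1-p, p]] with pi = (1/2, 1/2).

    For p = 1 the stationary distribution is not unique; the uniform one
    is assumed here as a convention.
    """
    H = 1.0                                # entropy of the uniform stationary state
    h = h2(p)                              # entropy rate H(X_{t+1} | X_t)
    h_two = h2(p * p + (1.0 - p) ** 2)     # H(X_{t+1} | X_{t-1}), from the squared matrix
    E = H - h                              # excess entropy
    G = h_two - h                          # conditional mutual information
    P = E + G                              # predictable information
    U = h - G                              # unpredictable information
    return {"H": H, "h": h, "E": E, "G": G, "P": P, "U": U}

for p in (0.0, 0.5, 0.9, 1.0):
    d = decompose_symmetric_coin(p)
    print(p, {name: round(value, 4) for name, value in d.items()})
```

In this sketch the predictable and unpredictable parts always add up to the total entropy, the fair coin is entirely unpredictable, and both deterministic coins are entirely predictable.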

In a finite-state, irreducible Markov chain, the entropy rate quantifies the uncertainty associated with transitions from one state to the next. It is formally given by:

$$h = H(X_{t+1} \mid X_t) = -\sum_{k} \pi_k \sum_{s} T_{ks} \log_2 T_{ks} = -\sum_{k} \pi_k \log_2 \prod_{s} T_{ks}^{T_{ks}}, \tag{4}$$

where the second formulation highlights the connection to the *geometric mean* of transition probabilities. The term $\prod_s T_{ks}^{T_{ks}}$ can be interpreted as the geometric mean time-averaged transition probability rate (per step) from the state $k$ over an infinitely long observation period:

$$\prod_{s} T_{ks}^{T_{ks}} = \lim_{n \to \infty} \left( \prod_{s} T_{ks}^{\,n\,T_{ks}(n)} \right)^{1/n}, \tag{5}$$

where $T_{ks}(n)$ is the observed frequency of transitions from $k$ to $s$ over the most likely sequence of length $n$. The frequency converges to the transition probability $T_{ks}$ as $n \to \infty$. The inverse of the geometric mean transition probability rate, $\prod_s T_{ks}^{-T_{ks}}$, represents the average *residence time* in state $k$. The excess entropy $E$ takes a form similar to the entropy (2):

$$E = H - h = -\sum_{k} \pi_k \log_2 \frac{\pi_k}{\prod_{s} T_{ks}^{T_{ks}}}, \tag{6}$$

where the ratio of average recurrence and residence times, $\pi_k \big/ \prod_s T_{ks}^{T_{ks}}$, measures the *transience* of state $k$ in a sequence consistent with the stationary distribution $\pi$. Low transience implies that typical sequences are more predictable. Conversely, in the case of maximally random sequences where $\pi_k = \prod_s T_{ks}^{T_{ks}}$, states are visited regularly, and the excess entropy (6) does not enhance predictability. The formula for excess entropy $E$ remains valid only for non-deterministic processes where all states can be visited and exited with non-zero probability. If the system remains indefinitely in a single state, the excess entropy becomes undefined because the system’s behavior carries no uncertainty.
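The geometric-mean reading of the entropy rate can be sketched in a few lines. The code below assumes that the per-state rate is the product of each transition probability raised to its own power, that the residence time is the inverse of that rate, that the recurrence time is the inverse stationary probability, and that the excess entropy is the entropy minus the entropy rate. The example matrix and all function names are hypothetical.

```python
import math

def geometric_mean_rate(row):
    """prod_s T_ks ** T_ks for one row of T, using the convention 0 ** 0 = 1."""
    return math.prod(t ** t for t in row if t > 0.0)

def entropy_rate(T, pi):
    """h = -sum_k pi_k * log2(geometric mean rate of row k), in bits per step."""
    return -sum(w * math.log2(geometric_mean_rate(row)) for w, row in zip(pi, T))

def excess_entropy(T, pi):
    """E = -sum_k pi_k * log2(residence time / recurrence time)."""
    total = 0.0
    for w, row in zip(pi, T):
        residence = 1.0 / geometric_mean_rate(row)   # average residence time in state k
        recurrence = 1.0 / w                         # expected recurrence time of state k
        total -= w * math.log2(residence / recurrence)
    return total

# Hypothetical two-state chain; its stationary distribution is (0.75, 0.25).
T_example = [[0.9, 0.1],
             [0.3, 0.7]]
pi_example = [0.75, 0.25]

h_example = entropy_rate(T_example, pi_example)
E_example = excess_entropy(T_example, pi_example)
H_example = -sum(w * math.log2(w) for w in pi_example)
print(h_example, E_example, H_example - h_example)
```

The last two printed values should agree up to rounding, which is a quick consistency check that the ratio of times reproduces the difference between the entropy and the entropy rate.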

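Similarly, the conditional entropy,

$$H(X_{t+1} \mid X_{t-1}) = -\sum_{k} \pi_k \sum_{s} (T^2)_{ks} \log_2 (T^2)_{ks} = -\sum_{k} \pi_k \log_2 \prod_{s} (T^2)_{ks}^{(T^2)_{ks}}, \tag{7}$$

quantifies the uncertainty associated with the statistics of *trigrams* involving two transitions. The transition probability rate,

$$\prod_{s} (T^2)_{ks}^{(T^2)_{ks}} = \lim_{n \to \infty} \left( \prod_{s} (T^2)_{ks}^{\,n\,T^{(2)}_{ks}(n)} \right)^{1/n}, \tag{8}$$

represents the asymptotic frequency of trigrams starting with state $k$. The relative frequency $T^{(2)}_{ks}(n)$ converges to the corresponding elements of the squared transition matrix, $(T^2)_{ks}$, over long, most likely sequences conforming to the stationary distribution $\pi$. The conditional mutual information,

$$I(X_t; X_{t+1} \mid X_{t-1}) = H(X_{t+1} \mid X_{t-1}) - H(X_{t+1} \mid X_t) = \sum_{k} \pi_k \log_2 \frac{\prod_{s} T_{ks}^{T_{ks}}}{\prod_{s} (T^2)_{ks}^{(T^2)_{ks}}}, \tag{9}$$

measures the amount of information shared between the current state $X_t$ and the future state $X_{t+1}$, independently of the historical context provided by $X_{t-1}$. In the context of coin tossing, the mutual information (9) emerges from uncertainty in choosing between alternating the present coin side ($X_{t+1} \neq X_t$) or repeating the current side ($X_{t+1} = X_t$) when predicting the coin’s future state. The mutual information vanishes for the fair coin ($p = 1/2$), where transitions are completely random and independent. It also vanishes for a fully deterministic coin ($p = 0$ or $p = 1$), where past states provide no additional information for predicting future behavior.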
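The vanishing of the conditional mutual information at both extremes can be verified with a short sketch. It assumes that the two-step conditional entropy is computed from the squared transition matrix and that the conditional mutual information is the two-step conditional entropy minus the entropy rate. The symmetric coin with repetition probability `p` and all function names are illustrative assumptions.

```python
import math

def matmul(A, B):
    """Plain matrix product of two list-of-rows matrices."""
    inner, cols = len(B), len(B[0])
    return [[sum(row[k] * B[k][j] for k in range(inner)) for j in range(cols)] for row in A]

def row_entropy(row):
    """Shannon entropy (bits) of one row of transition probabilities."""
    return -sum(t * math.log2(t) for t in row if t > 0.0)

def conditional_entropy(M, pi):
    """sum_k pi_k * H(row k of M); M = T gives the entropy rate, M = T*T the two-step version."""
    return sum(w * row_entropy(row) for w, row in zip(pi, M))

def conditional_mutual_information(T, pi):
    """Two-step conditional entropy minus one-step conditional entropy."""
    return conditional_entropy(matmul(T, T), pi) - conditional_entropy(T, pi)

def symmetric_coin(p):
    """Transition matrix of a coin that repeats its current side with probability p."""
    return [[p, 1.0 - p], [1.0 - p, p]]

pi_uniform = [0.5, 0.5]
cmi = {p: conditional_mutual_information(symmetric_coin(p), pi_uniform)
       for p in (0.0, 0.25, 0.5, 0.75, 1.0)}
print(cmi)
```

The value is zero for the fair coin and for both deterministic coins, and positive in between, where knowing the current side still helps to choose between repeating and alternating.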

When the past and present states of the chain are known, the predictable information about the future state can be expressed as:

$$P = E + I(X_t; X_{t+1} \mid X_{t-1}) = \sum_{k} \pi_k \log_2 \left[ \pi_k^{-1}\, \frac{\left( \prod_{s} T_{ks}^{T_{ks}} \right)^{2}}{\prod_{s} (T^2)_{ks}^{(T^2)_{ks}}} \right]. \tag{10}$$

The conditional probability $\left( \prod_s T_{ks}^{T_{ks}} \right)^{2} \big/ \prod_s (T^2)_{ks}^{(T^2)_{ks}}$ represents the likelihood that, in a two-step transition starting from $k$, both steps remain in $k$. The inverse conditional probability, $\prod_s (T^2)_{ks}^{(T^2)_{ks}} \big/ \left( \prod_s T_{ks}^{T_{ks}} \right)^{2}$, can be interpreted as the average state *repetition time*, that is, the expected time between instances where state $k$ appears consecutively twice. Predictable information, therefore, reflects the balance between recurrences (returns to states) and state repetitions (remaining in the same state). Systems with frequent recurrences and repetitions exhibit high predictability, indicating more organized and regular behavior. In contrast, systems with infrequent recurrences and frequent state changes exhibit low predictability, suggesting a more random and chaotic behavior. According to (3), unpredictable information is then defined as:

$$U = H - P = \sum_{k} \pi_k \log_2 \frac{\prod_{s} (T^2)_{ks}^{(T^2)_{ks}}}{\left( \prod_{s} T_{ks}^{T_{ks}} \right)^{2}}. \tag{11}$$

The logarithm of the state repetition time, which can also be interpreted as the *utility of state repetition*, emphasizes the exponential growth of unpredictability when repetition times increase. Conversely, lower values of the repetition time indicate reduced uncertainty and predictable behavior.
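The balance between recurrence and repetition can be explored numerically. The sketch below rests on an assumption that should be checked against the original formulas: the per-state repetition time is taken as the geometric-mean rate of the squared transition matrix divided by the square of the geometric-mean rate of the matrix itself, which is the form that makes the unpredictable part equal to twice the entropy rate minus the two-step conditional entropy. The example chain and all names are hypothetical.

```python
import math

def gm_rate(row):
    """prod_s x_s ** x_s over one row of probabilities, with 0 ** 0 = 1."""
    return math.prod(t ** t for t in row if t > 0.0)

def square(T):
    """Squared transition matrix T*T for a list-of-rows matrix."""
    n = len(T)
    return [[sum(T[i][k] * T[k][j] for k in range(n)) for j in range(n)] for i in range(n)]

def repetition_times(T):
    """Assumed repetition time per state: gm_rate(row of T*T) / gm_rate(row of T) ** 2."""
    return [gm_rate(r2) / gm_rate(r1) ** 2 for r1, r2 in zip(T, square(T))]

def predictable_unpredictable(T, pi):
    """Return (P, U) in bits from recurrence times 1/pi_k and repetition times."""
    reps = repetition_times(T)
    U = sum(w * math.log2(rep) for w, rep in zip(pi, reps))
    P = sum(w * math.log2((1.0 / w) / rep) for w, rep in zip(pi, reps))
    return P, U

# Hypothetical chain with stationary distribution (0.75, 0.25).
T_example = [[0.9, 0.1],
             [0.3, 0.7]]
pi_example = [0.75, 0.25]
P_example, U_example = predictable_unpredictable(T_example, pi_example)
H_example = -sum(w * math.log2(w) for w in pi_example)
print(P_example, U_example, H_example)
```

Under these assumptions the two parts add up to the total entropy, and a fair coin gives a repetition time of two steps for each side, hence one bit of unpredictable information.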

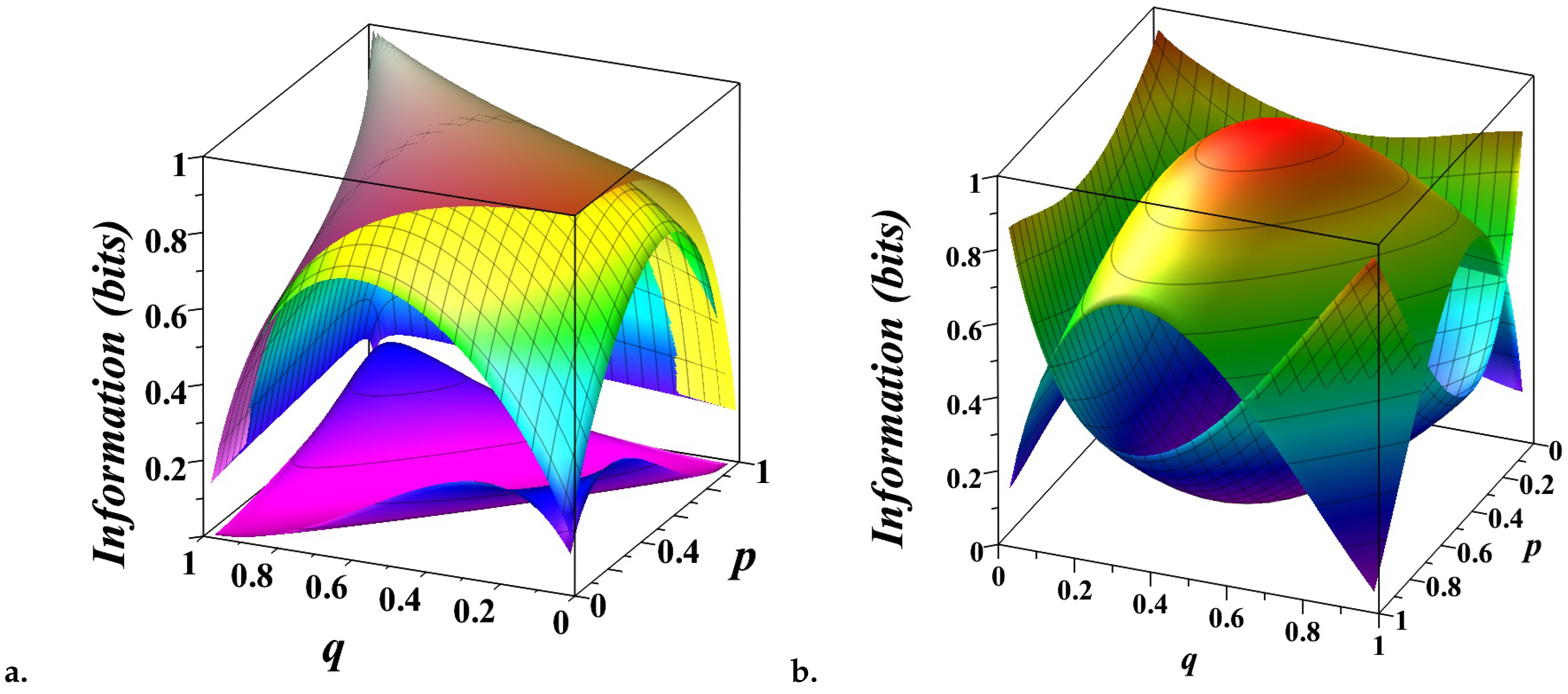

Figure 1 provides a detailed visualization of the entropy decomposition (3) for a biased coin modeled as a Markov chain with transition matrix

$$T(p, q) = \begin{pmatrix} p & 1-p \\ 1-q & q \end{pmatrix},$$

where $p$ and $q$ are the probabilities of repeating the current state.

In Figure 1a, the three surfaces illustrate different information quantities as functions of $p$ and $q$. The top surface represents the total entropy $H$, capturing the overall uncertainty in the system’s state. For a symmetric chain ($p = q$), the uncertainty reaches its maximum of 1 bit. The entropy decreases when $p \neq q$, as one state becomes more probable than another, reducing overall uncertainty. The middle surface shows the entropy rate $H(X_{t+1} \mid X_t)$, measuring the uncertainty in predicting the next state given the past states. When $p = q = 1/2$, this surface coincides with the top one, indicating that the system behaves like a fair coin with no memory of past states. The bottom surface illustrates the conditional mutual information $I(X_t; X_{t+1} \mid X_{t-1})$, which measures the predictive power of the current state for the next state, independent of past history. When $p = q = 1/2$, the bottom surface highlights the tension between two simple prediction strategies, repeating or alternating the current state, reflecting the inherent randomness of a fair coin.

The gaps between these surfaces reveal how the total uncertainty is partitioned. The gap between the top and middle surfaces corresponds to the excess entropy $E$, capturing the structured, predictable correlations in the system. Together with the space below the bottom surface, these gaps represent the amount of predictable information $P$. The gap between the middle and bottom surfaces represents the unpredictable component of the system, which diminishes as $p$ and $q$ approach deterministic values (0 or 1), reflecting minimal randomness.

In Figure 1b, the decomposition of entropy is presented more intuitively. The top, convex surface shows the unpredictable component, peaking at 1 bit for a fair coin ($p = q = 1/2$) and dropping to zero for deterministic scenarios ($p, q \in \{0, 1\}$). The bottom, concave surface represents the predictable component, which grows as the system becomes more deterministic. This figure highlights the delicate balance between randomness and structure, emphasizing how the predictability of the system depends on the interplay between state repetition probabilities.
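The surfaces described for the figure can be tabulated on a grid without any plotting library. The sketch below assumes a two-state chain whose diagonal entries are the repetition probabilities `p` and `q`, uses the closed-form stationary distribution of such a chain, and takes the unpredictable part as the entropy rate minus the conditional mutual information. The grid resolution, function name, and dictionary keys are hypothetical choices.

```python
import math

def h2(x):
    """Binary entropy in bits, with the convention 0 * log2(0) = 0."""
    if x <= 0.0 or x >= 1.0:
        return 0.0
    return -x * math.log2(x) - (1.0 - x) * math.log2(1.0 - x)

def coin_surfaces(p, q):
    """Information quantities for T = [[p, 1-p], [1-q, q]]; requires p + q < 2."""
    if p + q >= 2.0:
        raise ValueError("p = q = 1 has no unique stationary distribution")
    pi0 = (1.0 - q) / (2.0 - p - q)          # stationary probability of the first state
    pi1 = 1.0 - pi0
    H = h2(pi0)                              # total entropy (top surface)
    h = pi0 * h2(p) + pi1 * h2(q)            # entropy rate (middle surface)
    stay0 = p * p + (1.0 - p) * (1.0 - q)    # two-step return probability of state 0
    stay1 = q * q + (1.0 - q) * (1.0 - p)    # two-step return probability of state 1
    h_two = pi0 * h2(stay0) + pi1 * h2(stay1)
    G = h_two - h                            # conditional mutual information (bottom surface)
    return {"H": H, "h": h, "G": G, "P": H - h + G, "U": h - G}

grid = [i / 10 for i in range(1, 10)]
surfaces = {(p, q): coin_surfaces(p, q) for p in grid for q in grid}
peak = max(surfaces, key=lambda pq: surfaces[pq]["U"])
print(peak, surfaces[peak])
```

On this grid the unpredictable part peaks at the fair coin, and the entropy rate coincides with the total entropy wherever the two rows of the matrix are identical, which includes the fair coin as a special case.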