Submitted: 19 December 2024

Posted: 20 December 2024

Abstract

Keywords:

1. Introduction

2. Materials and Methods

2.1. Dataset

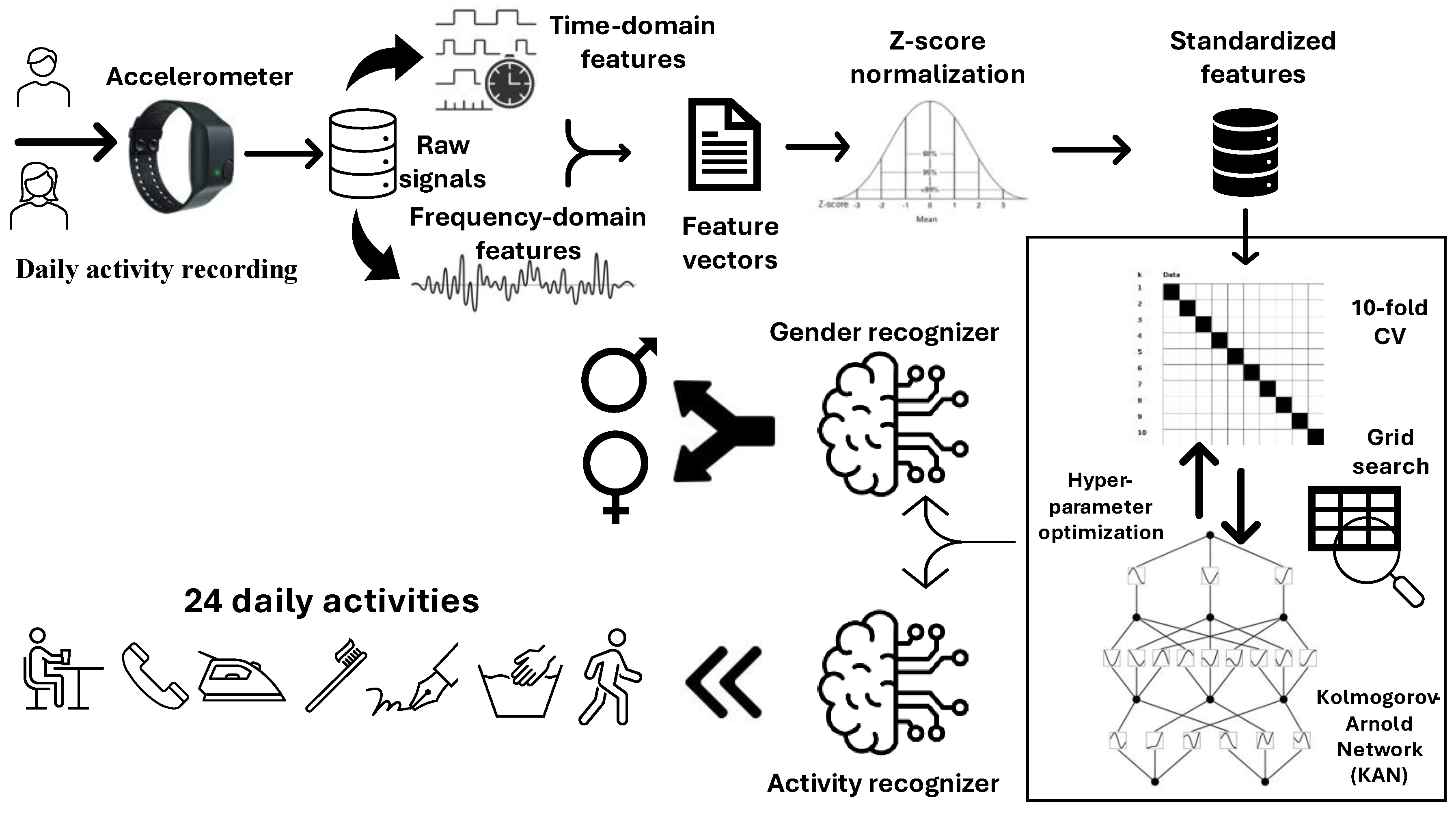

2.2. General Framework

2.3. Feature Extraction

2.4. Normalization

2.5. Kolmogorov-Arnold Network (KAN)
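The hyper-parameter tables tune a grid size G and a spline order k for the KAN. In the KAN formulation of Liu et al., each edge carries a learnable univariate function φ(x) = w_b·silu(x) + w_s·Σ_i c_i B_i(x), where the B_i are B-spline basis functions of degree k on a grid of G intervals, so each edge has G + k spline coefficients. The minimal sketch below evaluates that basis with the Cox–de Boor recursion in plain Python. The grid range [−1, 1], the function names, and passing w_b and w_s as fixed arguments are assumptions for illustration, not the authors' implementation (in common KAN implementations the "scale base" and "scale spline" values only set the initial magnitude of these learnable weights).

```python
import math

def extended_grid(grid_size, spline_order, lo=-1.0, hi=1.0):
    """Uniform knots on [lo, hi] with `grid_size` intervals, padded by
    `spline_order` extra knots on each side so every point in [lo, hi) has full support."""
    h = (hi - lo) / grid_size
    return [lo + (j - spline_order) * h for j in range(grid_size + 2 * spline_order + 1)]

def bspline_basis(x, knots, k):
    """Values of all degree-k B-spline basis functions at x (Cox-de Boor recursion).

    Returns len(knots) - k - 1 values; with extended_grid that is grid_size + spline_order.
    The values sum to one only for x in [lo, hi).
    """
    # Degree 0: indicator function of each half-open knot interval
    basis = [1.0 if knots[i] <= x < knots[i + 1] else 0.0 for i in range(len(knots) - 1)]
    for p in range(1, k + 1):
        # Each pass raises the degree by one and shortens the list by one
        basis = [
            (x - knots[i]) / (knots[i + p] - knots[i]) * basis[i]
            + (knots[i + p + 1] - x) / (knots[i + p + 1] - knots[i + 1]) * basis[i + 1]
            for i in range(len(basis) - 1)
        ]
    return basis

def silu(x):
    """SiLU (swish) activation, x * sigmoid(x)."""
    return x / (1.0 + math.exp(-x))

def kan_edge(x, coeffs, knots, k, w_base=1.0, w_spline=1.0):
    """One KAN edge function: w_base * silu(x) + w_spline * sum_i c_i * B_i(x).

    In a trained KAN, w_base, w_spline and coeffs are learned; here they are plain inputs.
    """
    spline = sum(c * b for c, b in zip(coeffs, bspline_basis(x, knots, k)))
    return w_base * silu(x) + w_spline * spline

knots = extended_grid(grid_size=7, spline_order=3)
basis = bspline_basis(0.3, knots, k=3)
print(len(basis))            # 10 coefficients per edge (G + k)
print(round(sum(basis), 9))  # 1.0
```

With the optimized HAR setting (G = 7, k = 7) the same code yields 14 basis functions per edge, so raising the spline order adds coefficients to every edge of the network.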

2.6. Experimental Setup

3. Results

3.1. Evaluation Metrics

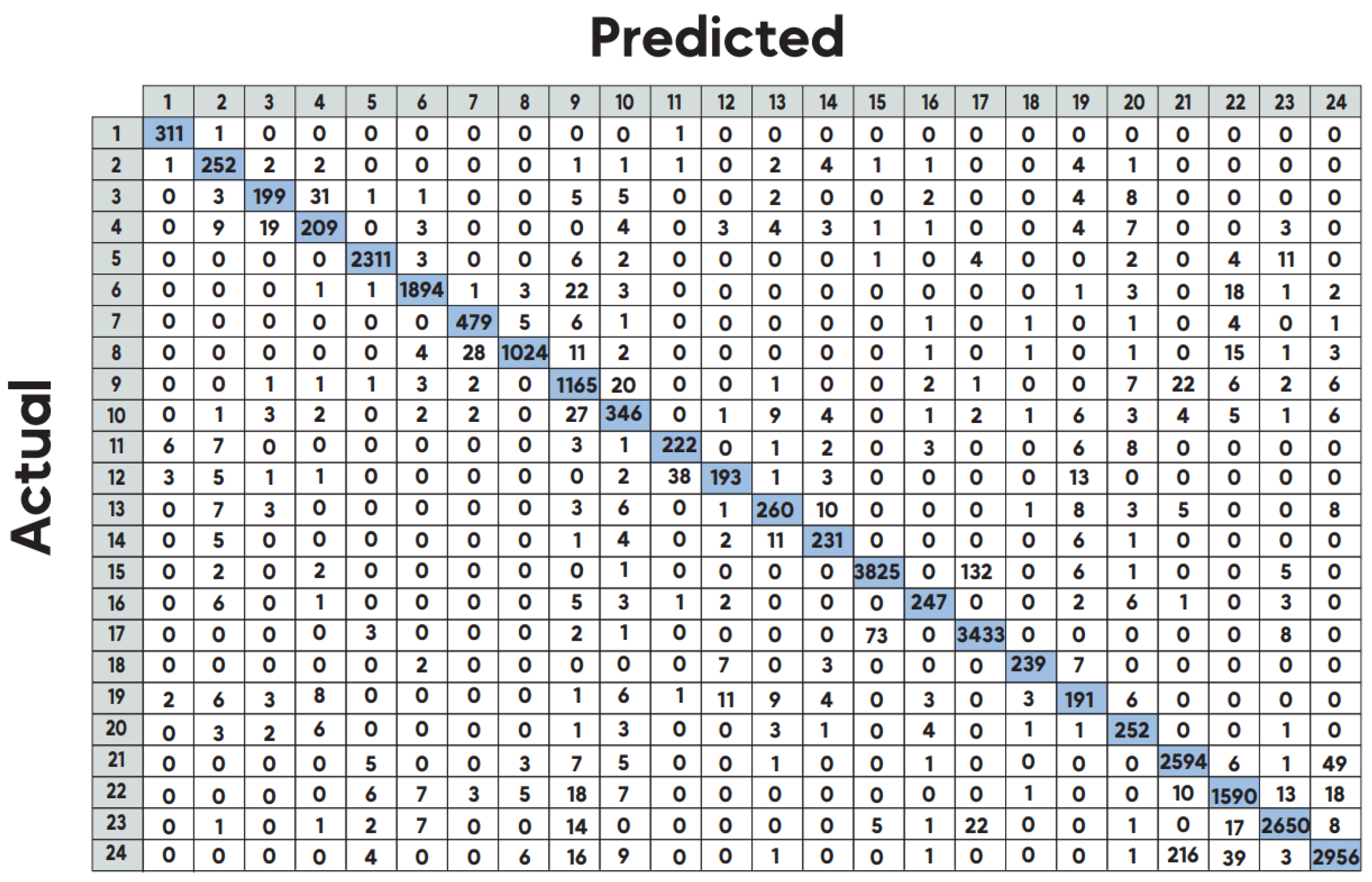

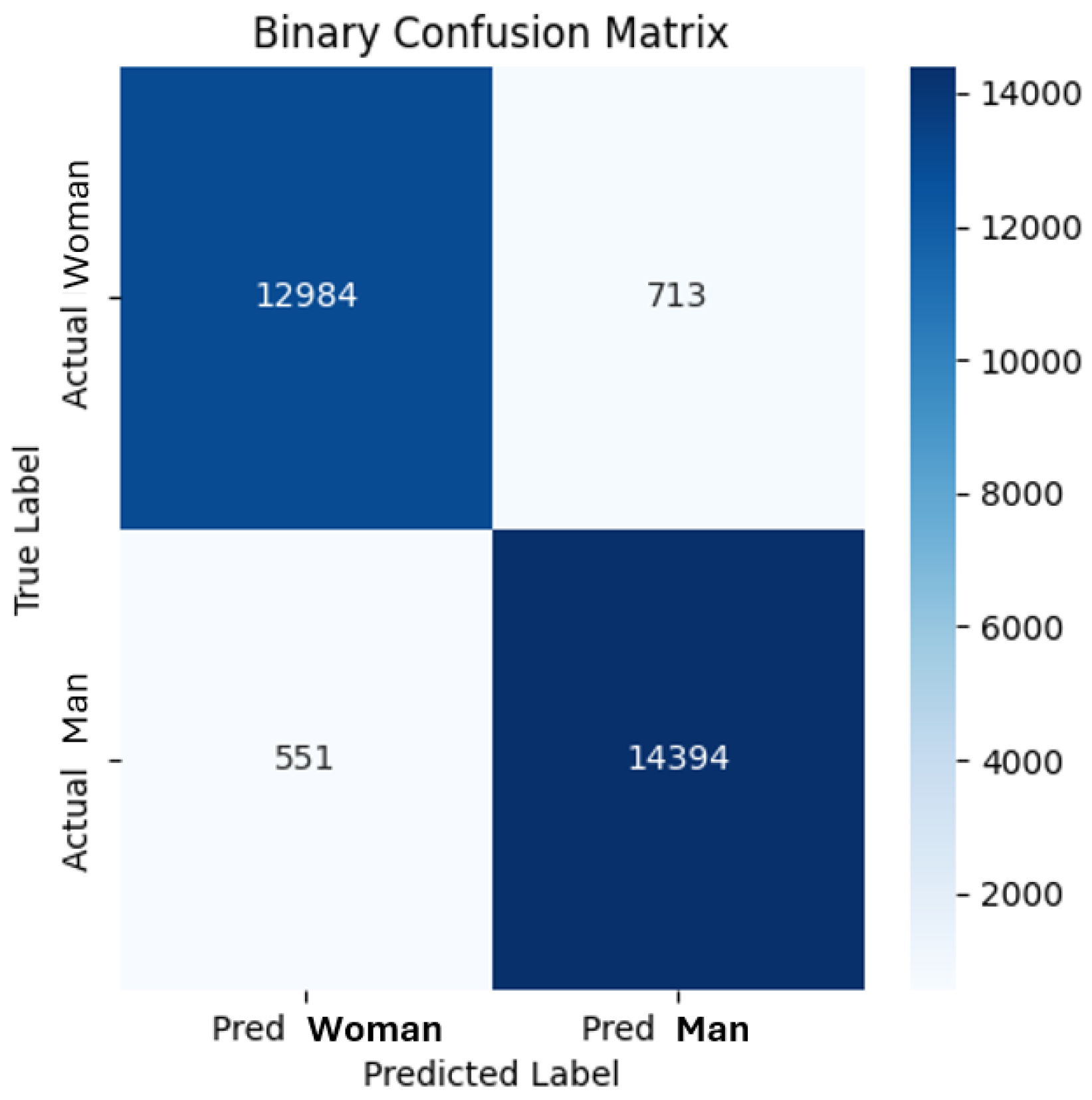

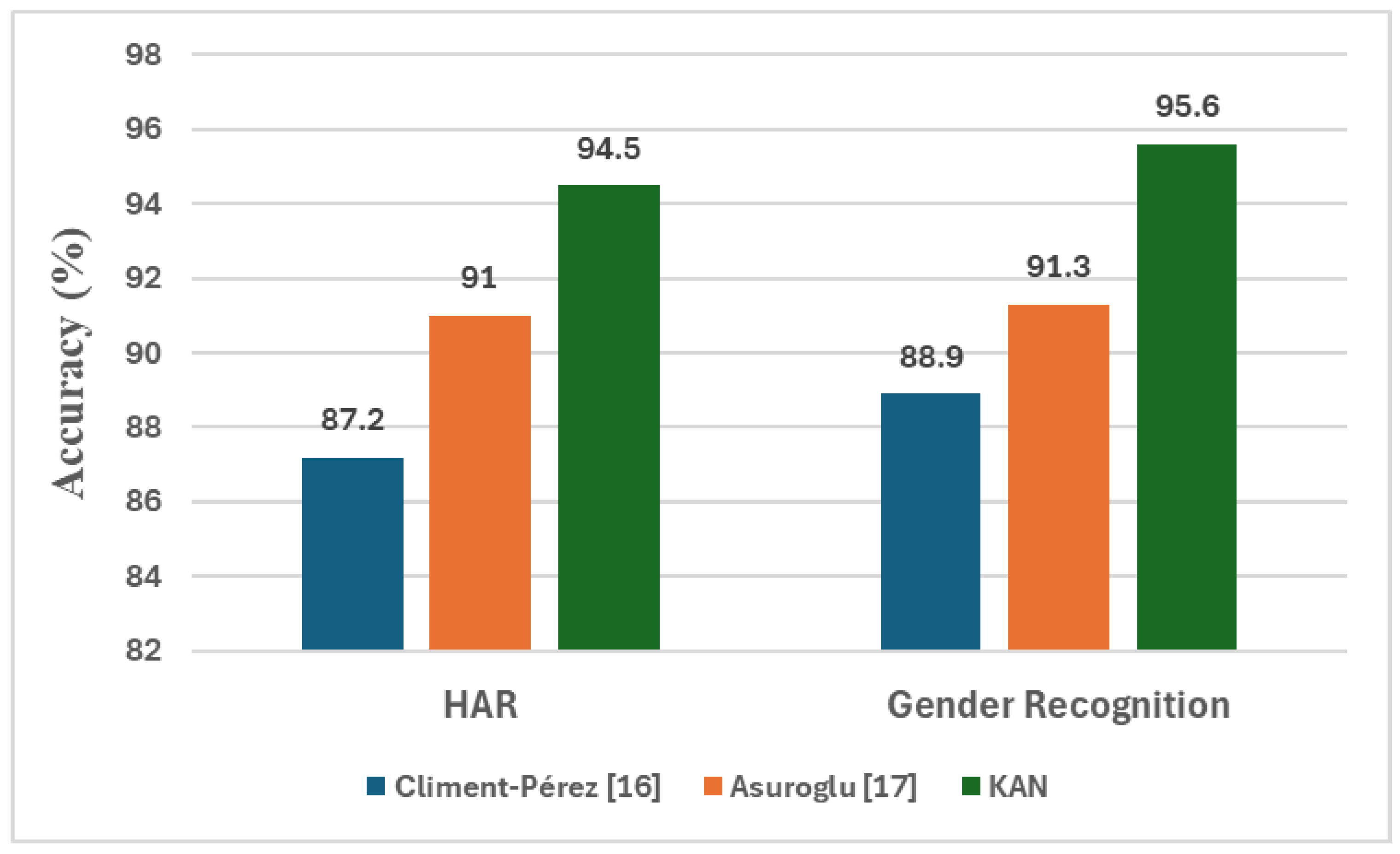

3.2. Empirical Results

4. Discussion and Conclusion

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

1. Kaseris, M.; Kostavelis, I.; Malassiotis, S. A Comprehensive Survey on Deep Learning Methods in Human Activity Recognition. Mach. Learn. Knowl. Extr. 2024, 6, 842–876.

2. Khan, D.; Alshahrani, A.; Almjally, A.; al Mudawi, N.; Algarni, A.; Alnowaiser, K.; et al. Advanced IoT-Based Human Activity Recognition and Localization Using Deep Polynomial Neural Network. IEEE Access 2024, 12, 94337–94353.

3. de la Cal, E.A.; Fáñez, M.; Villar, M.; Villar, J.R.; González, V.M. A Low-Power HAR Method for Fall and High-Intensity ADLs Identification Using Wrist-Worn Accelerometer Devices. Logic J. IGPL 2023, 31, 375–389.

4. Hiremath, S.K.; Plötz, T. The Lifespan of Human Activity Recognition Systems for Smart Homes. Sensors 2023, 23, 7729.

5. Laudato, G.; Rosa, G.; Scalabrino, S.; Simeone, J.; Picariello, F.; Tudosa, I.; De Vito, L.; et al. MIPHAS: Military Performances and Health Analysis System. In Proceedings of the HEALTHINF; 2020; pp. 198–207.

6. Mahbub, U.; Ahad, M.A.R. Advances in Human Action, Activity and Gesture Recognition. Pattern Recognit. Lett. 2022, 155, 186–190.

7. Bektaş, K.; Strecker, J.; Mayer, S.; Garcia, K. Gaze-Enabled Activity Recognition for Augmented Reality Feedback. Comput. Graph. 2024, 119, 103909.

8. Kaur, H.; Rani, V.; Kumar, M. Human Activity Recognition: A Comprehensive Review. Expert Syst. 2024, 41(11), e13680.

9. Oleh, U.; Obermaisser, R.; Ahammed, A.S. A Review of Recent Techniques for Human Activity Recognition: Multimodality, Reinforcement Learning, and Language Models. Algorithms 2024, 17, 434.

10. Banos, O.; Galvez, J.M.; Damas, M.; Pomares, H.; Rojas, I. Window Size Impact in Human Activity Recognition. Sensors 2014, 14, 6474–6499.

11. Anguita, D.; Ghio, A.; Oneto, L.; Parra, X.; Reyes-Ortiz, J.L. A Public Domain Dataset for Human Activity Recognition Using Smartphones. In Proceedings of the 21st European Symposium on Artificial Neural Networks, Computational Intelligence and Machine Learning; 2013; pp. 437–442.

12. Gupta, P.; Dallas, T. Feature Selection and Activity Recognition System Using a Single Triaxial Accelerometer. IEEE Trans. Biomed. Eng. 2014, 61, 1780–1786.

13. Reyes-Ortiz, J.L.; Oneto, L.; Samà, A.; Parra, X.; Anguita, D. Transition-Aware Human Activity Recognition Using Smartphones. Neurocomputing 2016, 171, 754–767.

14. Catal, C.; Tufekci, S.; Pirmit, E.; Kocabag, G. On the Use of Ensemble of Classifiers for Accelerometer-Based Activity Recognition. Appl. Soft Comput. 2015, 37, 1018–1022.

15. Demrozi, F.; Bacchin, R.; Tamburin, S.; Cristani, M.; Pravadelli, G. Toward a Wearable System for Predicting Freezing of Gait in People Affected by Parkinson's Disease. IEEE J. Biomed. Health Inform. 2020, 24, 2444–2451.

16. Climent-Pérez, P.; Florez-Revuelta, F. Privacy-Preserving Human Action Recognition with a Many-Objective Evolutionary Algorithm. Sensors 2022, 22, 764.

17. Aşuroğlu, T. Complex Human Activity Recognition Using a Local Weighted Approach. IEEE Access 2022, 10, 101207–101219.

18. Garcia-Gonzalez, D.; Rivero, D.; Fernandez-Blanco, E.; Luaces, M.R. New Machine Learning Approaches for Real-Life Human Activity Recognition Using Smartphone Sensor-Based Data. Knowl.-Based Syst. 2023, 262, 110260.

19. Ordóñez, F.J.; Roggen, D. Deep Convolutional and LSTM Recurrent Neural Networks for Multimodal Wearable Activity Recognition. Sensors 2016, 16, 115.

20. Yang, J.B.; Nguyen, M.N.; San, P.P.; Li, X.L.; Krishnaswamy, S. Deep Convolutional Neural Networks on Multichannel Time Series for Human Activity Recognition. In Proceedings of the 24th International Joint Conference on Artificial Intelligence (IJCAI'15); 2015; pp. 3995–4001.

21. Yao, S.; Hu, S.; Zhao, Y.; Zhang, A.; Abdelzaher, T. DeepSense: A Unified Deep Learning Framework for Time-Series Mobile Sensing Data Processing. In Proceedings of the 26th International Conference on World Wide Web; 2017; pp. 351–360.

22. Ignatov, A. Real-Time Human Activity Recognition from Accelerometer Data Using Convolutional Neural Networks. Appl. Soft Comput. 2018, 62, 915–922.

23. Guan, Y.; Plötz, T. Ensembles of Deep LSTM Learners for Activity Recognition Using Wearables. Proc. ACM Interact. Mob. Wearable Ubiquitous Technol. 2017, 1, 11.

24. Murad, A.; Pyun, J.-Y. Deep Recurrent Neural Networks for Human Activity Recognition. Sensors 2017, 17, 2556.

25. Hu, Y.; Zhang, X.-Q.; Xu, L.; He, F.X.; Tian, Z.; She, W.; Liu, W. Harmonic Loss Function for Sensor-Based Human Activity Recognition Based on LSTM Recurrent Neural Networks. IEEE Access 2020, 8, 135617–135627.

26. Climent-Pérez, P.; Muñoz-Antón, Á.M.; Poli, A.; Spinsante, S.; Florez-Revuelta, F. Dataset of Acceleration Signals Recorded While Performing Activities of Daily Living. Data Brief 2022, 41, 107896.

27. Müller, P.N.; Müller, A.J.; Achenbach, P.; Göbel, S. IMU-Based Fitness Activity Recognition Using CNNs for Time Series Classification. Sensors 2024, 24, 742.

28. Yi, M.-K.; Lee, W.-K.; Hwang, S.O. A Human Activity Recognition Method Based on Lightweight Feature Extraction Combined with Pruned and Quantized CNN for Wearable Device. IEEE Trans. Consum. Electron. 2023, 69(3), 657–670.

29. Liu, Z.; Wang, Y.; Vaidya, S.; Ruehle, F.; Halverson, J.; Soljačić, M.; Hou, T.Y.; Tegmark, M. KAN: Kolmogorov-Arnold Networks. arXiv 2024, arXiv:2404.19756.

30. Abd Elaziz, M.; Ahmed Fares, I.; Aseeri, A.O. CKAN: Convolutional Kolmogorov–Arnold Networks Model for Intrusion Detection in IoT Environment. IEEE Access 2024, 12, 134837–134851.

31. Hollósi, J.; Ballagi, Á.; Kovács, G.; Fischer, S.; Nagy, V. Detection of Bus Driver Mobile Phone Usage Using Kolmogorov-Arnold Networks. Computers 2024, 13, 218.

32. Jaramillo, I.E.; Jeong, J.G.; Lopez, P.R.; Lee, C.-H.; Kang, D.-Y.; Ha, T.-J.; Oh, J.-H.; Jung, H.; Lee, J.H.; Lee, W.H.; et al. Real-Time Human Activity Recognition with IMU and Encoder Sensors in Wearable Exoskeleton Robot via Deep Learning Networks. Sensors 2022, 22, 9690.

33. Le Guennec, A.; Malinowski, S.; Tavenard, R. Data Augmentation for Time Series Classification Using Convolutional Neural Networks. In Proceedings of the ECML/PKDD Workshop on Advanced Analytics and Learning on Temporal Data, Riva Del Garda, Italy, 1 September 2016.

34. Banjarey, K.; Prakash Sahu, S.; Kumar Dewangan, D. A Survey on Human Activity Recognition Using Sensors and Deep Learning Methods. In Proceedings of the 2021 5th International Conference on Computing Methodologies and Communication (ICCMC), Erode, India, 8–10 April 2021; pp. 1610–1617.

35. Sansano, E.; Montoliu, R.; Belmonte Fernández, Ó. A Study of Deep Neural Networks for Human Activity Recognition. Comput. Intell. 2020, 36, 1113–1139.

36. Zhang, S.; Li, Y.; Zhang, S.; Shahabi, F.; Xia, S.; Deng, Y.; Alshurafa, N. Deep Learning in Human Activity Recognition with Wearable Sensors: A Review on Advances. Sensors 2022, 22, 1476.

37. Bozkurt, F. A Comparative Study on Classifying Human Activities Using Classical Machine and Deep Learning Methods. Arab. J. Sci. Eng. 2022, 47, 1507–1521.

38. Jaramillo, I.E.; Chola, C.; Jeong, J.-G.; Oh, J.-H.; Jung, H.; Lee, J.-H.; Lee, W.H.; Kim, T.-S. Human Activity Prediction Based on Forecasted IMU Activity Signals by Sequence-to-Sequence Deep Neural Networks. Sensors 2023, 23, 6491.

39. Jeon, H.; Lee, D. A New Data Augmentation Method for Time Series Wearable Sensor Data Using a Learning Mode Switching-Based DCGAN. IEEE Robot. Autom. Lett. 2021, 6(4), 8671–8677.

| Class Label | Activity | Number of Samples |
|---|---|---|
| 1 | Blowing nose | 313 |
| 2 | Brushing hair | 273 |
| 3 | Brushing teeth | 261 |
| 4 | Drinking water | 270 |
| 5 | Dusting | 2344 |
| 6 | Eating meal | 1950 |
| 7 | Taking off glasses | 499 |
| 8 | Putting on glasses | 1088 |
| 9 | Ironing | 1240 |
| 10 | Taking off jacket | 424 |
| 11 | Putting on jacket | 259 |
| 12 | Typing on keyboard | 260 |
| 13 | Opening bottle | 315 |
| 14 | Opening a box | 261 |
| 15 | Making a phone call | 3967 |
| 16 | Saluting | 277 |
| 17 | Taking off a shoe | 3520 |
| 18 | Putting on a shoe | 258 |
| 19 | Sitting down | 254 |
| 20 | Sneezing/coughing | 278 |
| 21 | Standing up | 2672 |
| 22 | Washing dishes | 1678 |
| 23 | Washing hands | 2729 |
| 24 | Writing | 3252 |
| | Total | 28642 |

| Hyper-parameter | Range |
|---|---|
| Hidden layers (multi-class HAR) | [62, 32, 64, 128, 24], [62, 64, 128, 256, 24], [62, 128, 256, 512, 24], [62, 64, 32, 16, 24] |
| Hidden layers (binary-class gender recognition) | [62, 32, 64, 128, 1], [62, 64, 128, 256, 1], [62, 128, 256, 512, 1], [62, 64, 32, 16, 1] |
| Grid size | [3, 5, 7, 9] |
| Learning rate | [0.0001, 0.001, 0.01, 0.0005, 0.005, 0.05, 0.0002, 0.002] |
| Spline order | [2, 3, 4, 5, 6, 7, 8, 9] |
| Scale base | [0.5, 1.0, 1.5, 2.0] |
| Scale spline | [1.0, 2.0] |
| Batch size | [16, 32, 64, 128] |
| Optimizer | [Adam, SGD, RMSprop] |

Optimized hyper-parameters

| Hyper-parameter | HAR | Gender Recognition |
|---|---|---|
| Hidden layers | [62, 64, 32, 16, 24] | [62, 128, 64, 32, 1] |
| Grid size | 7 | 7 |
| Learning rate | 0.0001 | 0.0005 |
| Spline order | 7 | 3 |
| Scale base | 1.0 | 1.0 |
| Scale spline | 1.0 | 1.0 |
| Batch size | 32 | 32 |
| Optimizer | Adam | Adam |
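The candidate values in the hyper-parameter table define a discrete search space. The section does not say whether that space was searched exhaustively or sampled (for example by random search), so the sketch below only enumerates the full Cartesian product for the HAR task, to show how large it is and to check that the reported HAR optimum is one of its points. `SEARCH_SPACE`, `iter_configs` and the key names are hypothetical, chosen for illustration.

```python
from itertools import product

# Candidate values transcribed from the hyper-parameter table (HAR task)
SEARCH_SPACE = {
    "hidden_layers": [
        [62, 32, 64, 128, 24],
        [62, 64, 128, 256, 24],
        [62, 128, 256, 512, 24],
        [62, 64, 32, 16, 24],
    ],
    "grid_size": [3, 5, 7, 9],
    "learning_rate": [0.0001, 0.001, 0.01, 0.0005, 0.005, 0.05, 0.0002, 0.002],
    "spline_order": [2, 3, 4, 5, 6, 7, 8, 9],
    "scale_base": [0.5, 1.0, 1.5, 2.0],
    "scale_spline": [1.0, 2.0],
    "batch_size": [16, 32, 64, 128],
    "optimizer": ["Adam", "SGD", "RMSprop"],
}

def iter_configs(space):
    """Yield every combination of the candidate values as a dict."""
    keys = list(space)
    for values in product(*(space[k] for k in keys)):
        yield dict(zip(keys, values))

n_configs = sum(1 for _ in iter_configs(SEARCH_SPACE))
print(n_configs)  # 98304

# Optimized HAR values from the second table: every one is a candidate in the grid
best_har = {
    "hidden_layers": [62, 64, 32, 16, 24], "grid_size": 7, "learning_rate": 0.0001,
    "spline_order": 7, "scale_base": 1.0, "scale_spline": 1.0,
    "batch_size": 32, "optimizer": "Adam",
}
print(all(best_har[k] in SEARCH_SPACE[k] for k in SEARCH_SPACE))  # True
```

At 4 × 4 × 8 × 8 × 4 × 2 × 4 × 3 = 98,304 combinations, training every configuration would be expensive, which is why random or Bayesian search is often used instead of an exhaustive grid.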

| Model | Acc (%) | Prec (%) | Sn (%) | Sp (%) | MCC | F1 score | AUC |
|---|---|---|---|---|---|---|---|
| kNN [17] | 88.9 | 88.7 | 88.9 | 99.3 | 0.88 | 0.89 | NR |
| NB [17] | 47.6 | 52 | 47.6 | 96 | 0.44 | 0.5 | NR |
| DT [17] | 71.7 | 71.7 | 71.7 | 98.1 | 0.7 | 0.72 | NR |
| MLP [17] | 74.2 | 74.1 | 74.2 | 98.1 | 0.72 | 0.74 | NR |
| SVM [17] | 69.2 | 68.9 | 69.2 | 97.4 | 0.67 | 0.69 | NR |
| LMT [17] | 73.3 | 73.2 | 73.3 | 97.9 | 0.71 | 0.73 | NR |
| RF [16] | 87.2 | NR | NR | NR | NR | NR | NR |
| LWRF [17] | 91 | 90.9 | 91 | 99.5 | 0.91 | 0.91 | NR |
| Proposed method | 94.5 | 94.6 | 94.5 | 99.7 | 0.94 | 0.95 | 0.97 |

| Model | Acc (%) | Prec (%) | Sn (%) | Sp (%) | MCC | F1 score | AUC |
|---|---|---|---|---|---|---|---|
| kNN [17] | 89.9 | 89.9 | 89.9 | 89.9 | 0.79 | 0.79 | NR |
| NB [17] | 54.8 | 54.6 | 54.8 | 53.3 | 0.09 | 0.55 | NR |
| DT [17] | 73.9 | 73.9 | 73.9 | 73.8 | 0.48 | 0.74 | NR |
| MLP [17] | 65.7 | 65.8 | 65.7 | 65.7 | 0.31 | 0.66 | NR |
| SVM [17] | 59.8 | 59.7 | 59.8 | 59.5 | 0.19 | 0.6 | NR |
| LMT [17] | 75.7 | 75.7 | 75.7 | 75.6 | 0.51 | 0.76 | NR |
| RF [16] | 88.9 | NR | NR | NR | NR | NR | NR |
| LWRF [17] | 91.3 | 91.3 | 91.4 | 91.2 | 0.83 | 0.91 | NR |
| Proposed method | 95.6 | 95.3 | 96.3 | 94.8 | 0.91 | 0.96 | 0.99 |
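The results tables report accuracy (Acc), precision (Prec), sensitivity (Sn), specificity (Sp), MCC, F1 score and AUC. In the first results table and in the first architecture-comparison table, every Sn value equals the corresponding Acc. That is exactly what support-weighted averaging of per-class recall produces, because Σ_c (n_c/N)(TP_c/n_c) = Σ_c TP_c/N, so the pattern is consistent with (though it does not prove) weighted averaging. The minimal sketch below assumes support-weighted Prec, Sn, Sp and F1 and uses Gorodkin's multi-class MCC, the generalization implemented by scikit-learn's `matthews_corrcoef`. AUC is left out because it needs predicted scores rather than labels. The function names are hypothetical.

```python
from math import sqrt

def confusion_matrix(y_true, y_pred, n_classes):
    """cm[t][p] counts samples of true class t predicted as class p."""
    cm = [[0] * n_classes for _ in range(n_classes)]
    for t, p in zip(y_true, y_pred):
        cm[t][p] += 1
    return cm

def weighted_report(y_true, y_pred, n_classes):
    """Accuracy plus support-weighted precision, sensitivity, specificity, F1, and multi-class MCC."""
    cm = confusion_matrix(y_true, y_pred, n_classes)
    n = len(y_true)
    true_counts = [sum(cm[c]) for c in range(n_classes)]
    pred_counts = [sum(cm[r][c] for r in range(n_classes)) for c in range(n_classes)]
    correct = sum(cm[c][c] for c in range(n_classes))
    report = {"accuracy": correct / n, "precision": 0.0, "sensitivity": 0.0,
              "specificity": 0.0, "f1": 0.0}
    for c in range(n_classes):
        # One-vs-rest counts for class c
        tp = cm[c][c]
        fn = true_counts[c] - tp
        fp = pred_counts[c] - tp
        tn = n - tp - fn - fp
        prec = tp / (tp + fp) if tp + fp else 0.0
        sens = tp / (tp + fn) if tp + fn else 0.0
        spec = tn / (tn + fp) if tn + fp else 0.0
        f1 = 2 * prec * sens / (prec + sens) if prec + sens else 0.0
        w = true_counts[c] / n  # weight each class by its support
        report["precision"] += w * prec
        report["sensitivity"] += w * sens
        report["specificity"] += w * spec
        report["f1"] += w * f1
    # Gorodkin's multi-class Matthews correlation coefficient
    num = correct * n - sum(t * p for t, p in zip(true_counts, pred_counts))
    den = sqrt((n * n - sum(p * p for p in pred_counts)) * (n * n - sum(t * t for t in true_counts)))
    report["mcc"] = num / den if den else 0.0
    return report

m = weighted_report([0, 0, 1, 1, 2, 2], [0, 0, 1, 2, 2, 2], n_classes=3)
print(round(m["accuracy"], 4), round(m["sensitivity"], 4))  # 0.8333 0.8333
```

In the toy example, one of six samples is misclassified, and the weighted sensitivity matches the accuracy, as the identity above predicts.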

| Daily Activity | Our Study | Aşuroğlu [17] | Climent-Pérez [16] |
|---|---|---|---|
| Putting on a shoe | 93 | 73 | 77 |
| Taking off a shoe | 98 | 97 | 55 |
| Opening a bottle | 83 | 63 | 47 |
| Opening a box | 89 | 69 | 41 |
| Putting on glasses | 94 | 90 | 60 |
| Taking off glasses | 96 | 88 | 50 |
| Standing up | 97 | 95 | 68 |
| Sitting down | 75 | 35 | 58 |
| Making a phone call | 96 | 98 | 52 |
| Sneezing/coughing | 91 | 57 | 33 |
| Blowing nose | 99 | 90 | 56 |

| Activity vs. All | Acc (%) | Prec (%) | Sn (%) | Sp (%) | MCC | F1 score | AUC |
|---|---|---|---|---|---|---|---|
| Blowing nose | 99.8 | 91.3 | 90.4 | 99.9 | 0.908 | 0.909 | 0.999 |
| | 99.9 | 99.7 | 99.4 | 99.9 | 0.995 | 0.995 | 0.999 |
| Brushing hair | 99.4 | 93.5 | 36.6 | 99.9 | 0.583 | 0.526 | 0.988 |
| | 99.8 | 85.4 | 96.7 | 99.8 | 0.908 | 0.907 | 0.999 |
| Brushing teeth | 99.2 | 60.2 | 21.5 | 99.9 | 0.356 | 0.316 | 0.989 |
| | 99.8 | 91.3 | 80.5 | 99.9 | 0.856 | 0.855 | 0.999 |
| Drinking water | 99.1 | 55.8 | 10.7 | 99.9 | 0.242 | 0.18 | 0.987 |
| | 99.8 | 91.9 | 87.8 | 99.9 | 0.897 | 0.898 | 0.999 |
| Dusting | 98.8 | 94.2 | 90.7 | 99.5 | 0.918 | 0.925 | 0.996 |
| | 99.7 | 97.9 | 99.0 | 99.8 | 0.983 | 0.984 | 0.999 |
| Eating meal | 98.0 | 93.2 | 75.6 | 99.6 | 0.83 | 0.835 | 0.989 |
| | 99.3 | 94.4 | 95.7 | 99.6 | 0.947 | 0.951 | 0.997 |
| Taking off glasses | 99.2 | 74.7 | 82.2 | 99.5 | 0.779 | 0.782 | 0.996 |
| | 99.6 | 87.0 | 92.6 | 99.8 | 0.896 | 0.897 | 0.999 |
| Putting on glasses | 98.2 | 84.7 | 65.0 | 99.5 | 0.733 | 0.735 | 0.991 |
| | 99.4 | 88.9 | 95.0 | 99.5 | 0.915 | 0.918 | 0.999 |
| Ironing | 97.0 | 86.1 | 36.5 | 99.7 | 0.55 | 0.513 | 0.981 |
| | 99.0 | 85.0 | 93.4 | 99.3 | 0.886 | 0.89 | 0.996 |
| Taking off jacket | 98.6 | 81.0 | 8.01 | 99.9 | 0.252 | 0.146 | 0.969 |
| | 99.3 | 70.3 | 93.2 | 99.4 | 0.806 | 0.801 | 0.998 |
| Putting on jacket | 99.4 | 84.2 | 43.2 | 99.9 | 0.601 | 0.571 | 0.992 |
| | 99.9 | 88.8 | 98.1 | 99.9 | 0.933 | 0.932 | 0.999 |
| Typing on keyboard | 99.3 | 79.4 | 31.2 | 99.9 | 0.495 | 0.448 | 0.991 |
| | 99.9 | 97.4 | 88.1 | 99.9 | 0.926 | 0.925 | 0.999 |
| Opening bottle | 99.1 | 63.0 | 54.6 | 99.6 | 0.582 | 0.585 | 0.991 |
| | 99.9 | 94.5 | 93.0 | 99.9 | 0.937 | 0.938 | 0.999 |
| Opening a box | 99.4 | 64.7 | 64.0 | 99.7 | 0.640 | 0.644 | 0.994 |
| | 99.8 | 95.0 | 88.1 | 99.9 | 0.914 | 0.915 | 0.999 |
| Making a phone call | 97.4 | 87.6 | 94.6 | 97.8 | 0.895 | 0.91 | 0.995 |
| | 98.7 | 92.3 | 98.3 | 98.7 | 0.945 | 0.952 | 0.999 |
| Saluting | 99.4 | 78.8 | 48.4 | 99.9 | 0.615 | 0.6 | 0.991 |
| | 99.9 | 91.2 | 97.1 | 99.9 | 0.94 | 0.941 | 0.999 |
| Taking off a shoe | 97.2 | 90.5 | 86.7 | 98.7 | 0.87 | 0.885 | 0.992 |
| | 99.1 | 98.1 | 94.1 | 99.7 | 0.955 | 0.961 | 0.999 |
| Putting on a shoe | 99.6 | 84.9 | 71.7 | 99.9 | 0.778 | 0.777 | 0.997 |
| | 99.9 | 100.0 | 87.6 | 100.0 | 0.935 | 0.934 | 0.999 |
| Sitting down | 99.2 | 67.3 | 13.8 | 99.9 | 0.302 | 0.229 | 0.982 |
| | 99.7 | 96.8 | 71.3 | 99.9 | 0.829 | 0.821 | 0.999 |
| Sneezing/coughing | 99.3 | 79.9 | 41.4 | 99.9 | 0.572 | 0.545 | 0.991 |
| | 99.8 | 89.9 | 93.2 | 99.9 | 0.914 | 0.915 | 0.999 |
| Standing up | 96.2 | 89.0 | 67.9 | 99.1 | 0.758 | 0.77 | 0.988 |
| | 98.6 | 94.9 | 89.8 | 99.5 | 0.915 | 0.923 | 0.997 |
| Washing dishes | 97.2 | 86.1 | 61.3 | 99.4 | 0.713 | 0.716 | 0.98 |
| | 98.3 | 94.4 | 75.0 | 99.7 | 0.833 | 0.835 | 0.992 |
| Washing hands | 97.4 | 90.7 | 81.4 | 99.1 | 0.845 | 0.858 | 0.991 |
| | 99.4 | 95.4 | 98.9 | 99.5 | 0.968 | 0.971 | 0.999 |
| Writing | 94.7 | 76.7 | 76.6 | 97.0 | 0.736 | 0.766 | 0.976 |
| | 97.6 | 93.7 | 84.3 | 99.3 | 0.876 | 0.888 | 0.994 |
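In the activity-vs-all results, accuracy stays high even where sensitivity is very low, because each positive class is a small fraction of the samples. Using the counts from the dataset table, a trivial classifier that always answers "not taking off jacket" would already score 1 − 424/28642 ≈ 98.52%, close to the 98.6% in the first of the two rows listed for that activity, while that row's Sn of 8.01% pulls F1 down to 0.146. This is why MCC and F1 are more informative than accuracy here. The sketch below assumes the evaluated split keeps the class proportions of the full dataset (for example a stratified split), which the section does not state; the helper names are hypothetical.

```python
# Per-class sample counts transcribed from the dataset table
CLASS_COUNTS = {
    "Blowing nose": 313, "Brushing hair": 273, "Brushing teeth": 261,
    "Drinking water": 270, "Dusting": 2344, "Eating meal": 1950,
    "Taking off glasses": 499, "Putting on glasses": 1088, "Ironing": 1240,
    "Taking off jacket": 424, "Putting on jacket": 259, "Typing on keyboard": 260,
    "Opening bottle": 315, "Opening a box": 261, "Making a phone call": 3967,
    "Saluting": 277, "Taking off a shoe": 3520, "Putting on a shoe": 258,
    "Sitting down": 254, "Sneezing/coughing": 278, "Standing up": 2672,
    "Washing dishes": 1678, "Washing hands": 2729, "Writing": 3252,
}
TOTAL = sum(CLASS_COUNTS.values())  # 28642, matching the table's total

def all_negative_accuracy(activity, counts=CLASS_COUNTS):
    """One-vs-all accuracy of a trivial model that never predicts `activity`.

    Assumes the evaluated split has the same class proportions as the full dataset.
    """
    return 1.0 - counts[activity] / sum(counts.values())

def f1_from(precision, sensitivity):
    """F1 score as the harmonic mean of precision and sensitivity (fractions in [0, 1])."""
    return 2 * precision * sensitivity / (precision + sensitivity)

print(f"{all_negative_accuracy('Taking off jacket'):.2%}")  # 98.52%
print(round(f1_from(0.810, 0.0801), 3))                      # 0.146
```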

| Task | Acc (%) | Prec (%) | Sn (%) | Sp (%) | MCC | F1 score | AUC |
|---|---|---|---|---|---|---|---|
| HAR | 78.1 | 77.8 | 78.1 | 100.0 | 0.761 | 0.777 | 0.816 |
| Gender recognition | 77.1 | 74.4 | 68.1 | 74.4 | 0.425 | 0.711 | 0.789 |

| Architecture | Acc (%) | Prec (%) | Sn (%) | Sp (%) | MCC | F1 score | AUC |
|---|---|---|---|---|---|---|---|
| BiLSTM | 80.8 | 80.7 | 80.8 | 98.7 | 0.790 | 0.808 | 0.989 |
| CNN | 94.2 | 94.5 | 94.2 | 99.7 | 0.940 | 0.940 | 0.940 |
| GRU | 79.9 | 79.8 | 79.9 | 98.7 | 0.781 | 0.798 | 0.987 |
| LSTM | 84.3 | 83.4 | 84.3 | 95.2 | 0.829 | 0.836 | 0.976 |
| RNN | 87.3 | 87.5 | 87.3 | 95.0 | 0.861 | 0.872 | 0.996 |
| Proposed method | 94.5 | 94.6 | 94.5 | 99.7 | 0.940 | 0.950 | 0.97 |

| Architecture | Acc (%) | Prec (%) | Sn (%) | Sp (%) | MCC | F1 score | AUC |
|---|---|---|---|---|---|---|---|
| BiLSTM | 68.8 | 69.9 | 70.7 | 66.8 | 0.375 | 0.703 | 0.688 |
| CNN | 82.8 | 83.7 | 83.1 | 82.4 | 0.655 | 0.834 | 0.827 |
| GRU | 73.8 | 75.9 | 73.1 | 74.7 | 0.477 | 0.744 | 0.847 |
| LSTM | 76.9 | 76.1 | 75.4 | 78.3 | 0.537 | 0.757 | 0.861 |
| RNN | 83.8 | 83.1 | 83.8 | 84.5 | 0.676 | 0.831 | 0.925 |
| Proposed method | 95.6 | 95.3 | 96.3 | 94.8 | 0.91 | 0.96 | 0.99 |

| KAN vs. Architecture | Chi-square statistic | p-value |
|---|---|---|
| BiLSTM | 3420.594 | < 0.001 |
| CNN | 865.919 | < 0.001 |
| GRU | 3699.303 | < 0.001 |
| LSTM | 3066.98 | < 0.001 |
| RNN | 1822.349 | < 0.001 |

| KAN vs. Architecture | Chi-square statistic | p-value |
|---|---|---|
| BiLSTM | 7025.721 | < 0.001 |
| CNN | 2453.494 | < 0.001 |
| GRU | 5266.163 | < 0.001 |
| LSTM | 4381.682 | < 0.001 |
| RNN | 2288.968 | < 0.001 |
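The chi-square tables compare KAN's predictions with each baseline architecture, but the section does not say which chi-square test produced them. One common choice for two classifiers evaluated on the same test samples is McNemar's test, which uses only the samples on which exactly one of the two models is correct. The sketch below assumes that test (with Edwards' continuity correction as an option); `mcnemar` and `chi2_sf_1df` are hypothetical helper names. It also shows why these p-values are effectively zero: if the test has one degree of freedom, as McNemar's does, even the smallest statistic in the tables (865.919) gives a tail probability many orders of magnitude below 0.001.

```python
from math import erfc, sqrt

def chi2_sf_1df(x):
    """Upper-tail probability P(X > x) for a chi-square variable with 1 degree of freedom.

    With one degree of freedom X = Z**2 for a standard normal Z, so
    P(X > x) = P(|Z| > sqrt(x)) = erfc(sqrt(x / 2)).
    """
    return erfc(sqrt(x / 2))

def mcnemar(correct_a, correct_b, continuity=True):
    """McNemar's test on paired per-sample correctness flags of two classifiers.

    correct_a[i] / correct_b[i] are truthy when model A / model B classified
    test sample i correctly. Returns (chi2 statistic, p-value).
    """
    only_a = sum(1 for a, b in zip(correct_a, correct_b) if a and not b)
    only_b = sum(1 for a, b in zip(correct_a, correct_b) if b and not a)
    discordant = only_a + only_b
    if discordant == 0:
        return 0.0, 1.0  # the models never disagree, so there is no evidence of a difference
    diff = abs(only_a - only_b)
    if continuity:
        diff = max(diff - 1, 0)  # Edwards' continuity correction
    chi2 = diff ** 2 / discordant
    return chi2, chi2_sf_1df(chi2)

# Toy example: A is right on 3 of 4 samples, B on 1; they disagree on 2 samples.
chi2, p = mcnemar([1, 1, 1, 0], [1, 0, 0, 0])
print(chi2)                          # 0.5
print(chi2_sf_1df(865.919) < 0.001)  # True
```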

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).