Submitted: 19 December 2024

Posted: 20 December 2024


Abstract

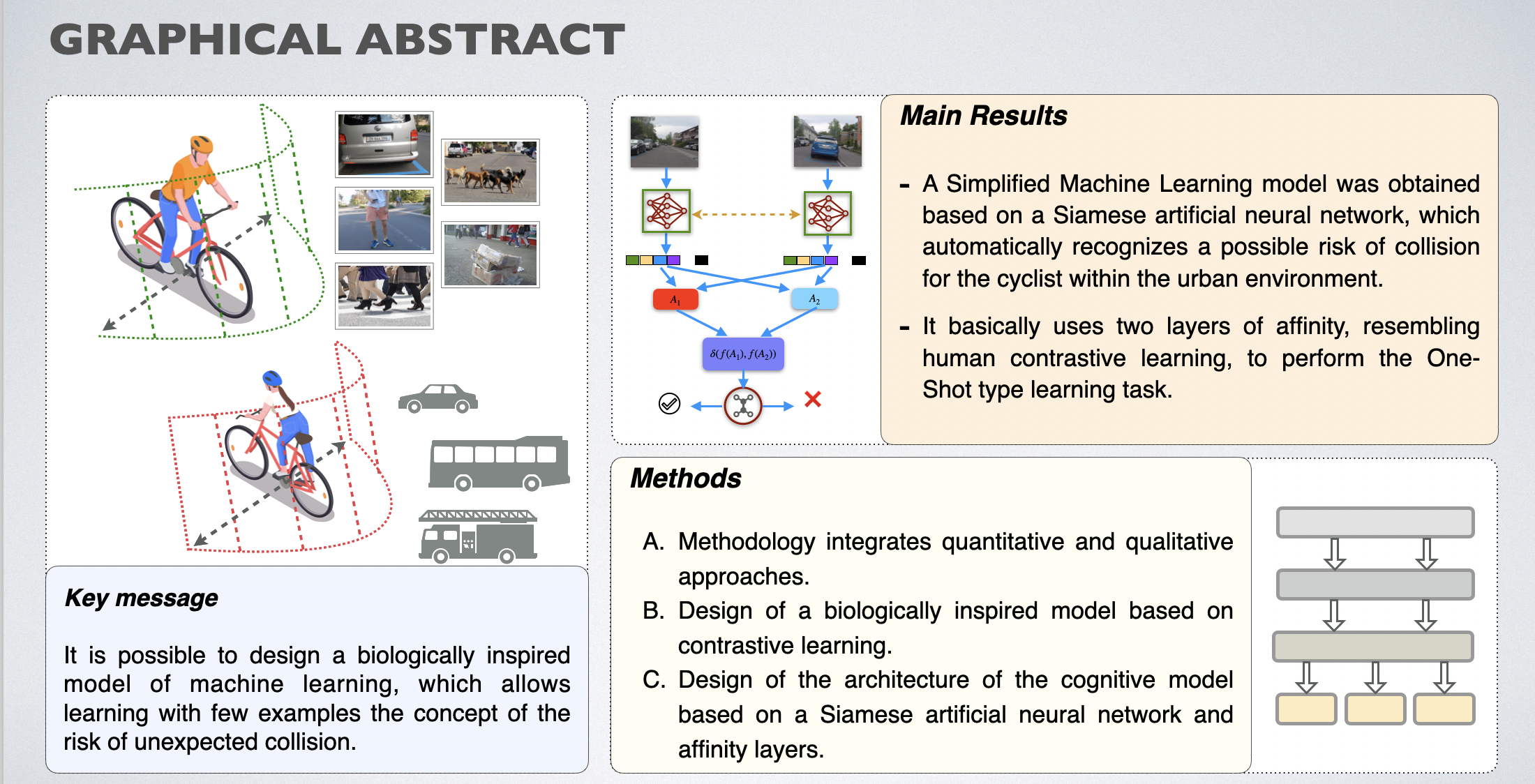

Urban cycling is a sustainable mode of transportation in large cities: it is eco-friendly, accessible, easy to use, more economical than other means of transport, and beneficial for physical health and mental well-being. Achieving sustainable mobility and the evolution towards smart cities demands a comprehensive analysis of all the essential aspects that enable their inclusion. Road safety is particularly important and must be prioritized to ensure safe transportation and reduce the incidence of road accidents. To help reduce the number of accidents involving urban cyclists, this work proposes an intelligent computational assistant that uses Simplified Machine Learning (SML) to detect potential risks of unexpected collisions. Our methodology comprised stating the research problem, designing and developing the proposed solution, collecting and analyzing data, and obtaining preliminary results. These results show experimentally that the proposed model outperforms most state-of-the-art models that use a metric learning layer on small image sets.

Keywords:

1. Introduction

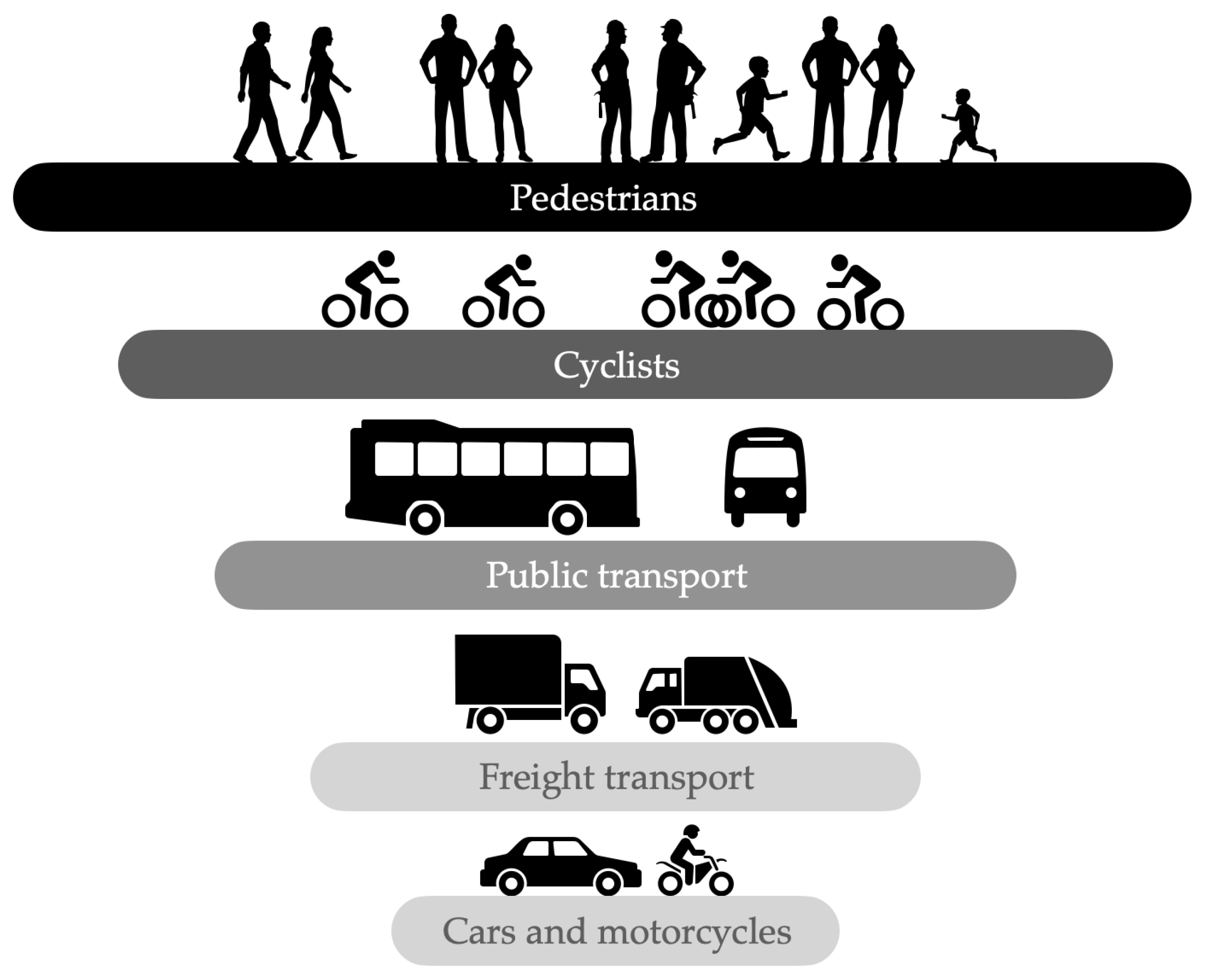

1.1. Urban Mobility

1.2. Road Safety for Cyclists

1.3. Intelligent Urban Cycling

- Section 2 outlines the intuition behind the problem and the main research method adopted to identify the dominant characteristics and advantages of Simplified Machine Learning for unexpected collision risk identification tasks, which are crucial to the training and testing procedures that maximize the effectiveness of model classification.

- Section 3 explains the methodology in general terms and describes the proposed solution, including the architecture of the proposed cognitive model and its main parts.

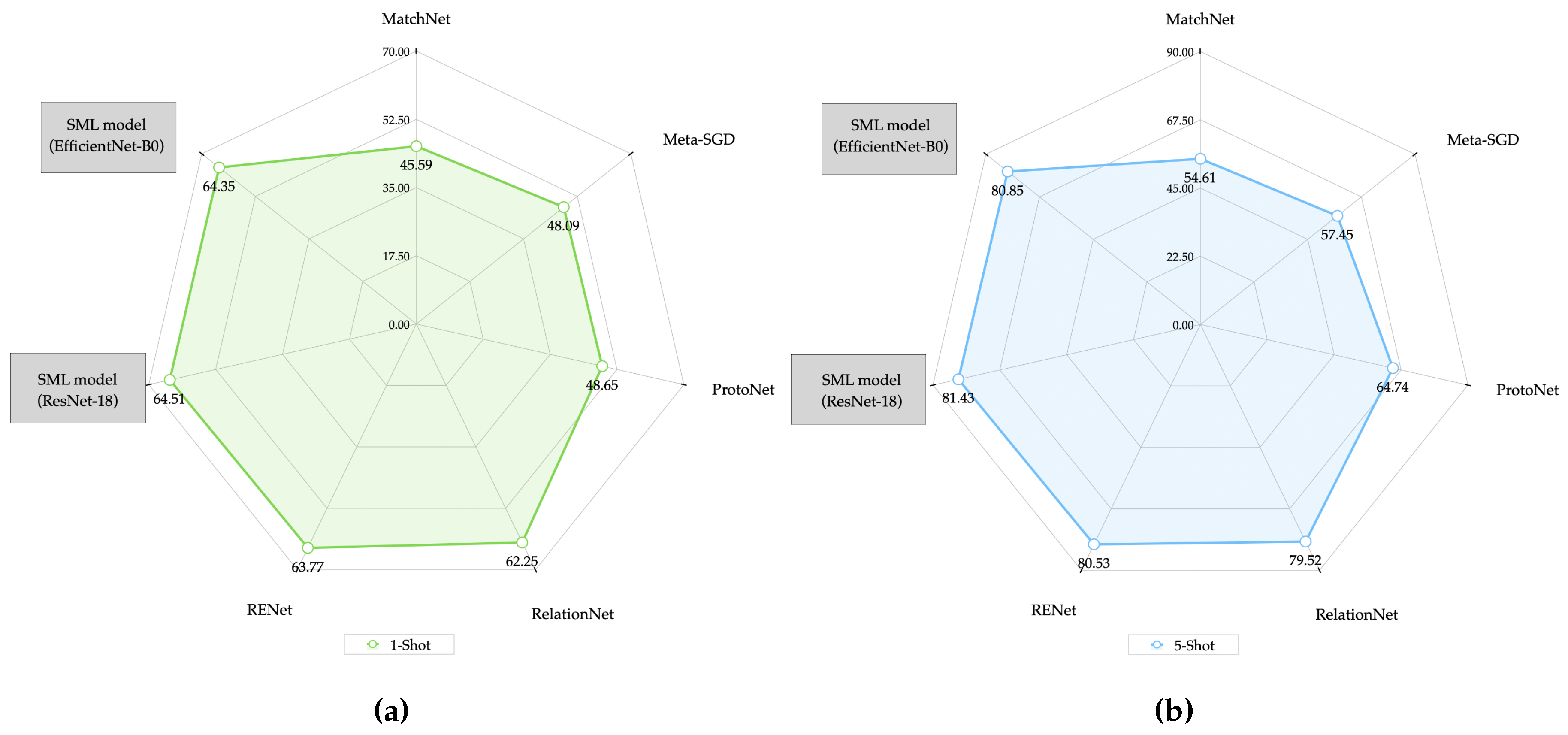

- Section 4 describes the experimental stage used to observe the model's performance on different datasets, and provides comparative tables and graphs that illustrate its performance with different feature extractors and in each of the sample ranges used (One-Shot and Five-Shot).

- Section 5 discusses notable results related to the evaluation of the proposed model, its generalization capacity, and its comparison against other state-of-the-art methods, as well as other particular aspects of its operation and performance.

- Section 6 presents the main research conclusions.

- A reduction in the time, effort, and costs associated with the number of training examples or samples required by conventional Deep Learning (DL) and Machine Learning (ML) models.

- A novel support option for cyclists, based on cutting-edge technology, that allows them to travel more safely during trips within urban areas.

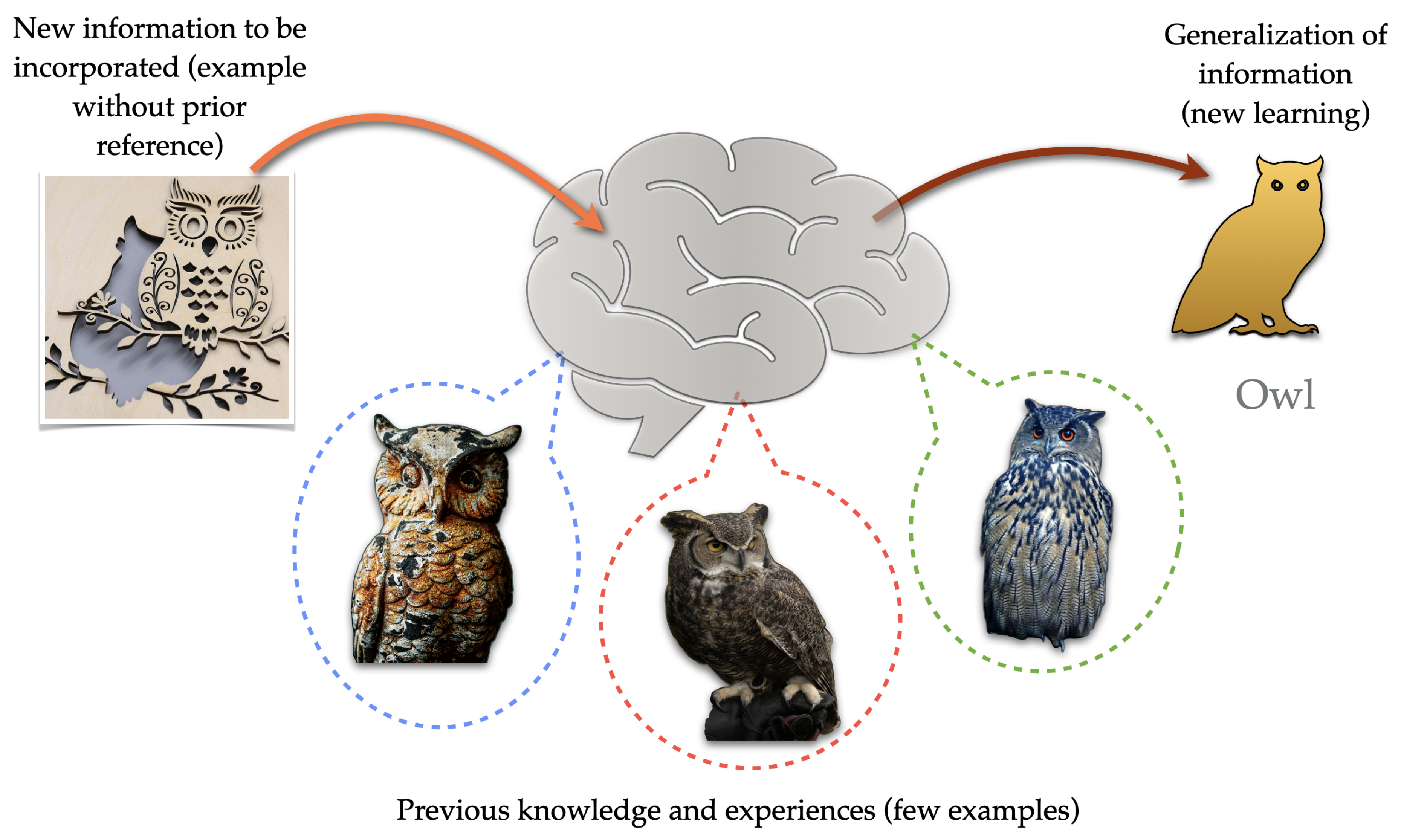

- A contribution to the area of machine learning: a model that uses fewer examples or samples for training and, in doing so, more closely resembles the natural learning of human beings.

2. Simplified Machine Learning

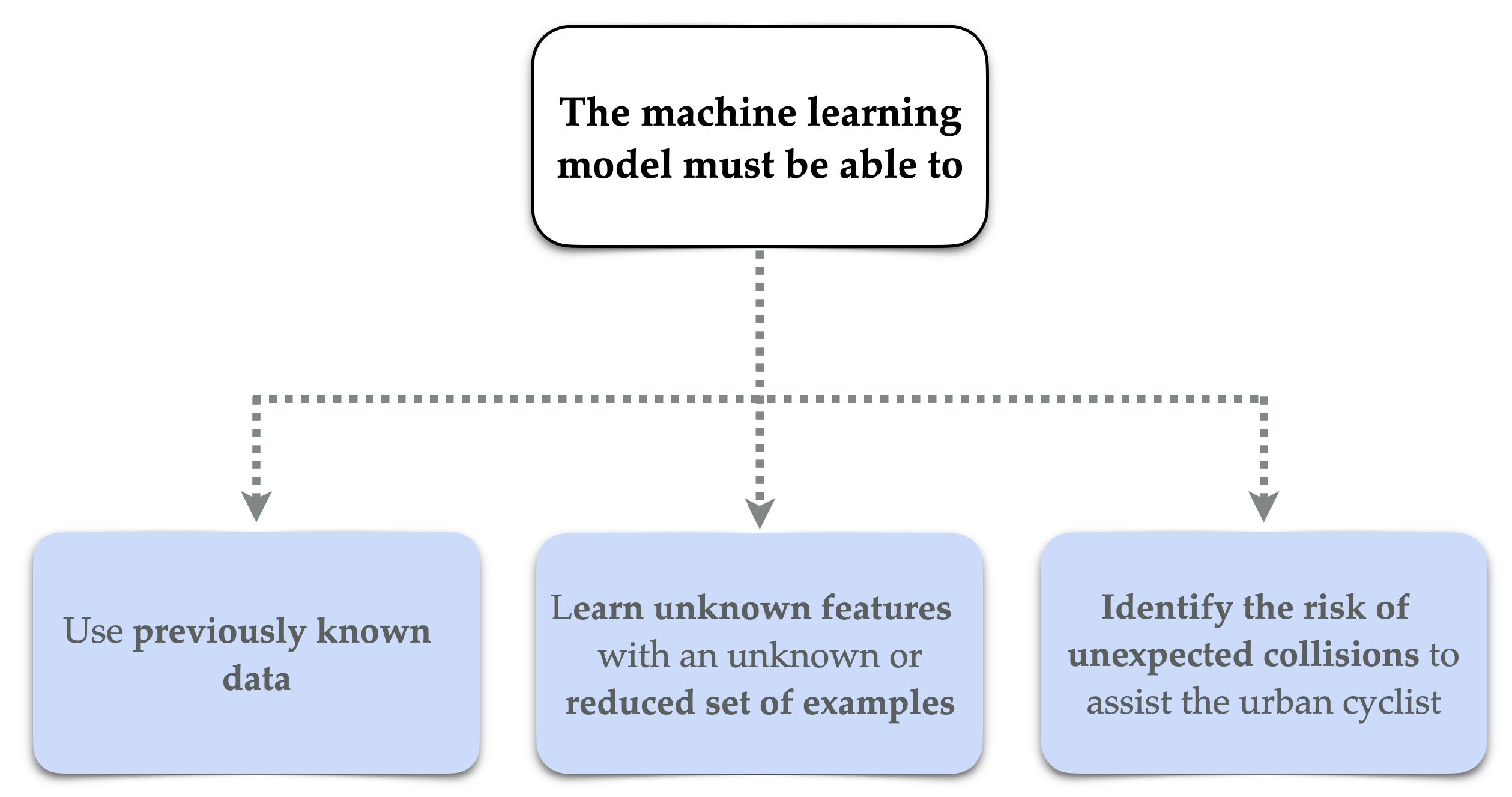

2.1. The Challenge of Machine Learning

2.2. Related Work

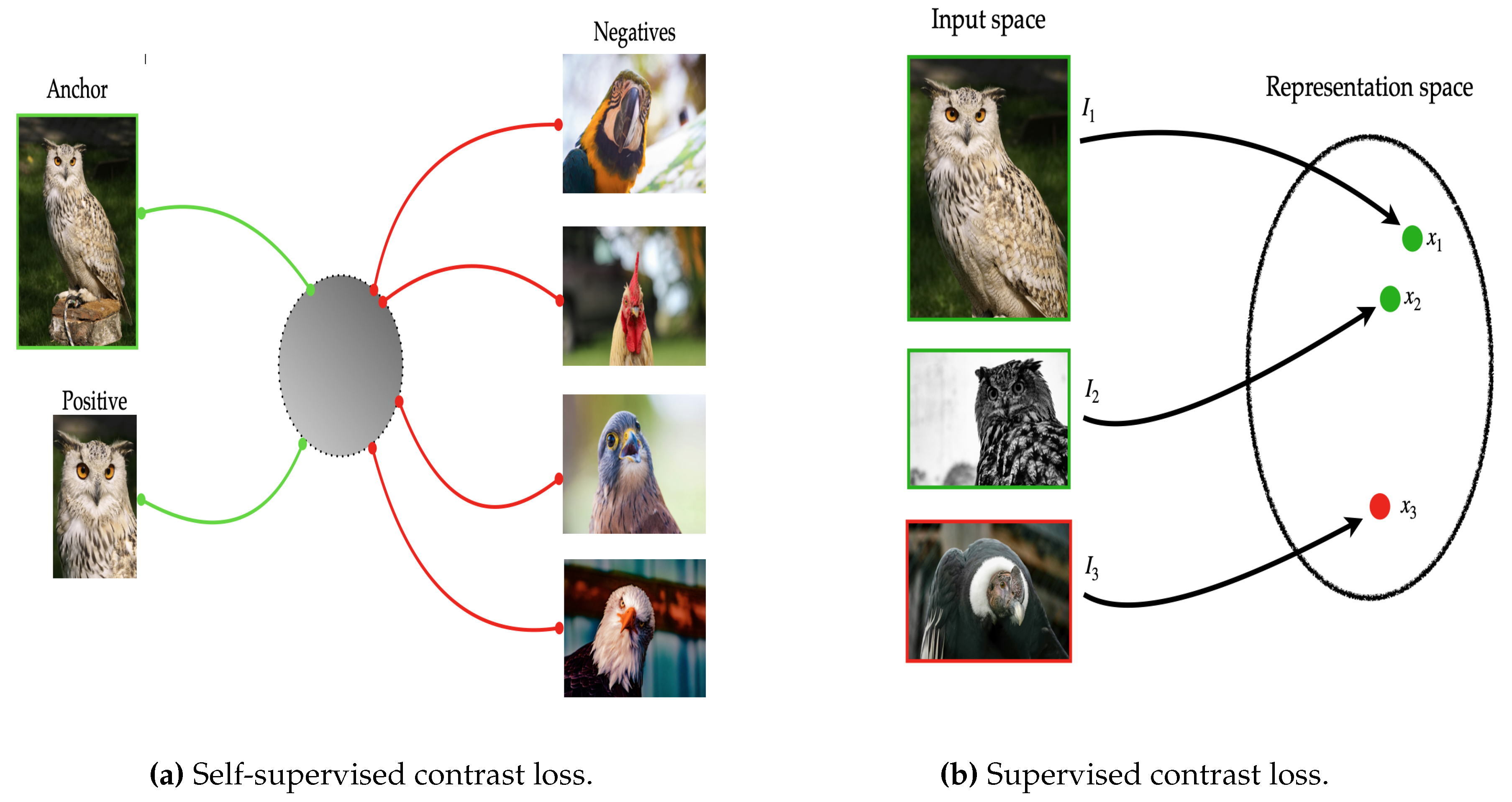

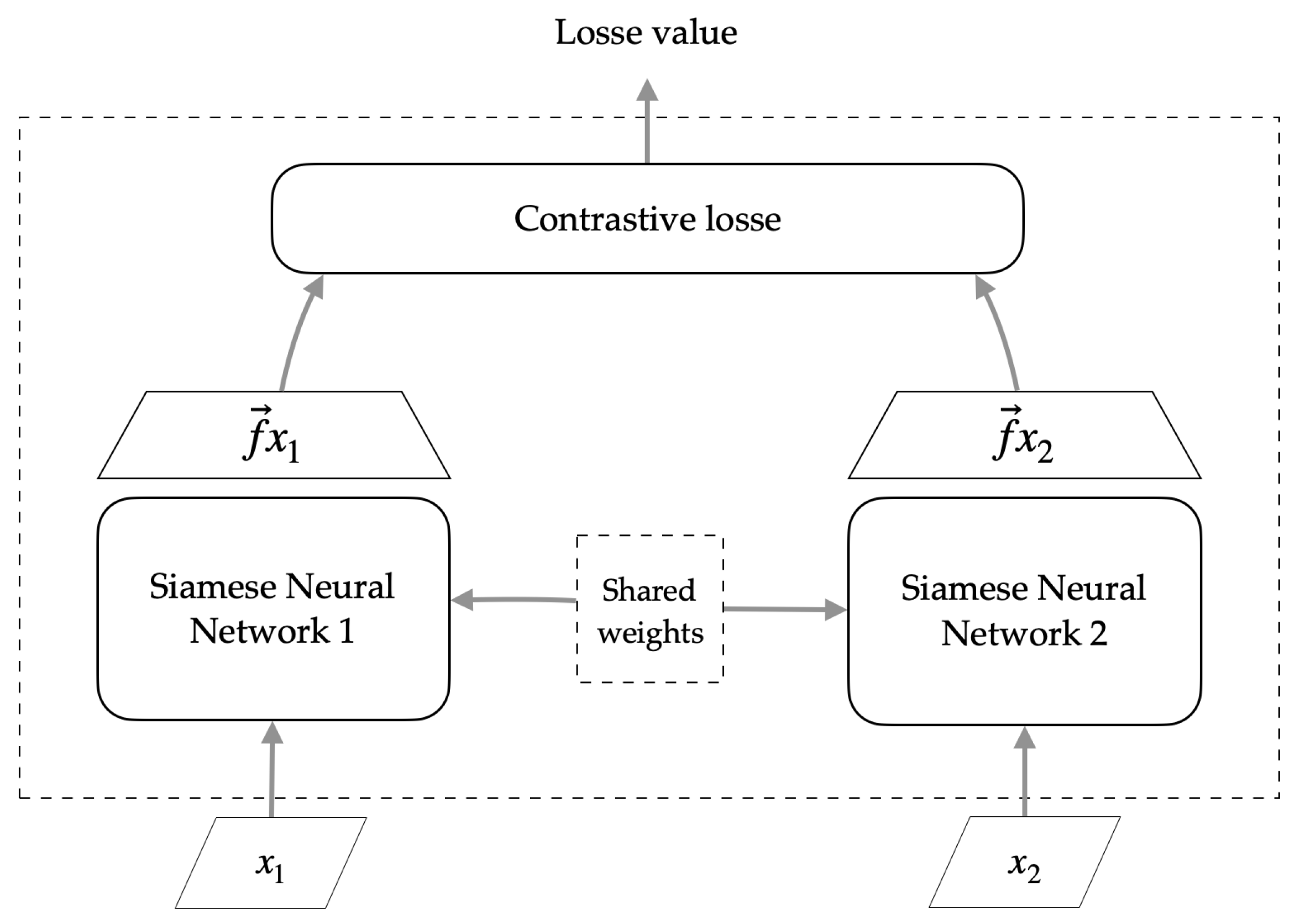

2.3. Contrastive Learning

2.4. Approach Overview and Contributions

3. Materials and Methods

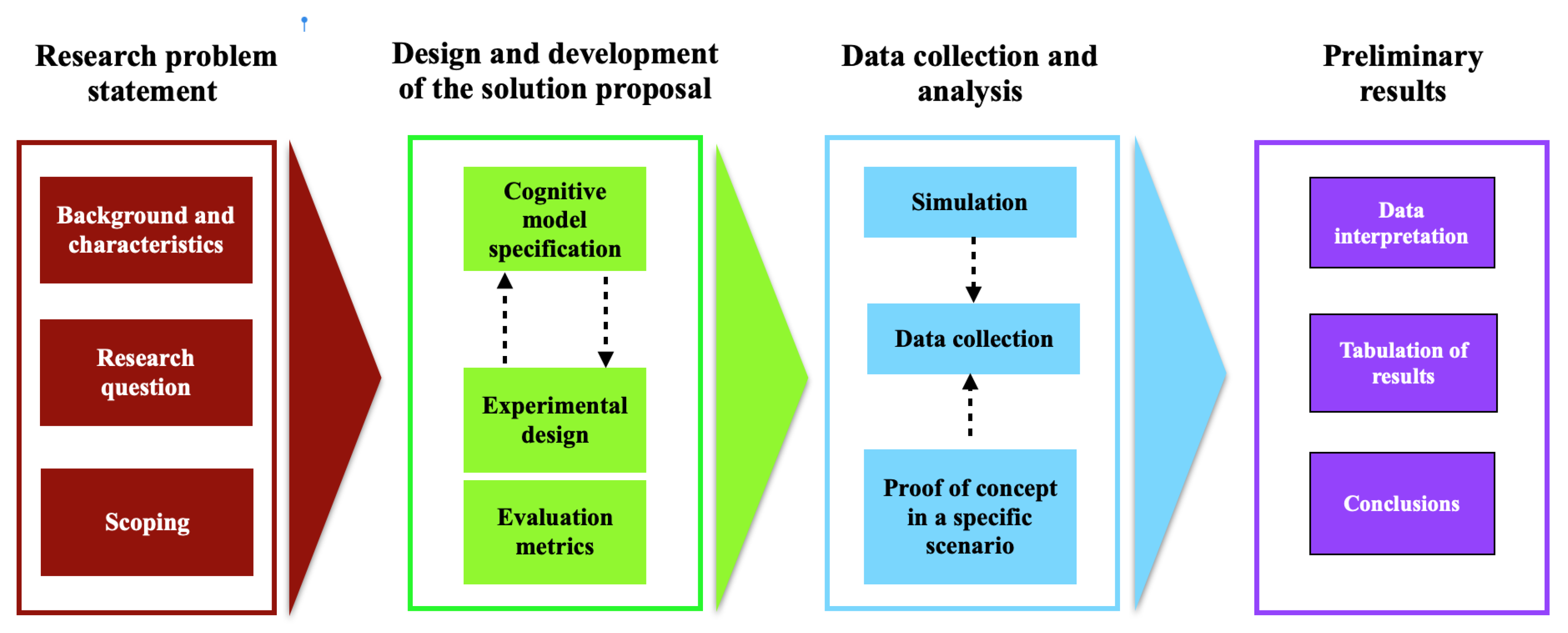

3.1. Methodology

- Research problem statement;

- Design and development of the solution proposal;

- Data collection and analysis;

- Preliminary Results.

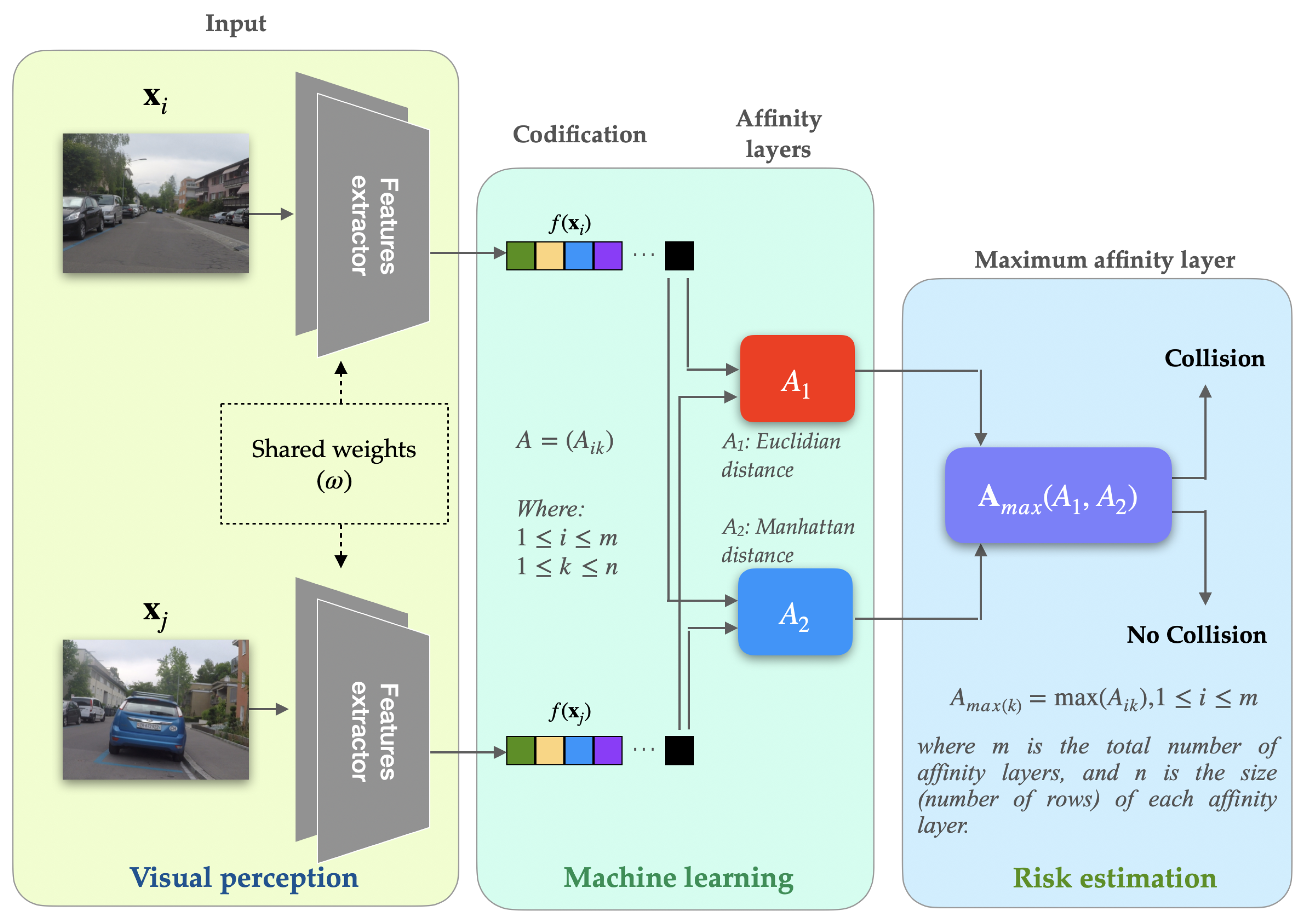

3.2. Cognitive Model Architecture

Algorithm 1: Training of generic Siamese neural network.

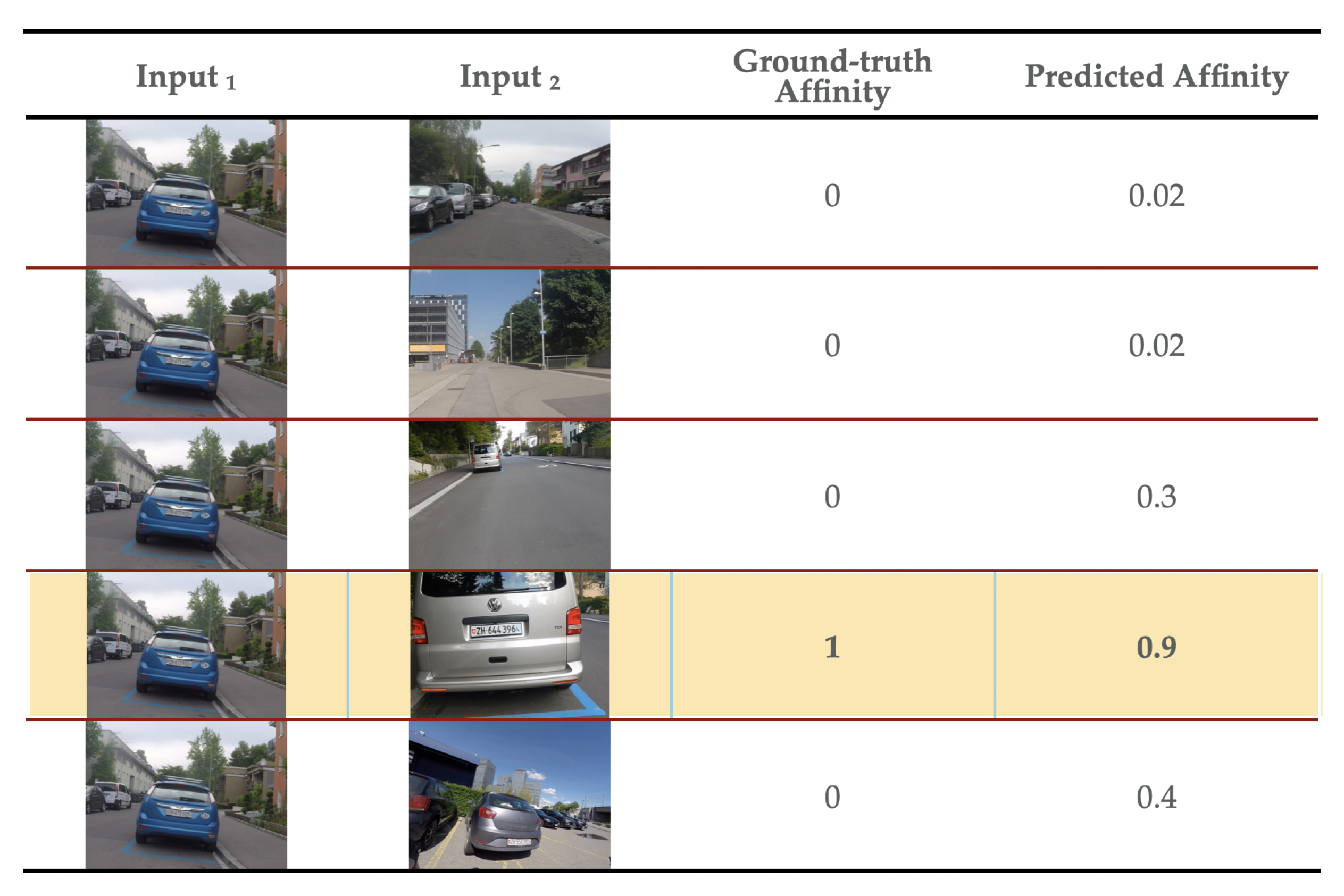
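Because the body of Algorithm 1 is not reproduced here, the following is only a minimal sketch of how a generic Siamese network can be trained, not the authors' procedure. It assumes a linear embedding shared by both branches, a contrastive loss in the spirit of Hadsell et al. [25] (up to a constant factor), full-batch gradient descent, and a toy 2-D dataset standing in for image features. All names (`embed`, `pair_grad`, `train`) and hyperparameters are hypothetical.

```python
import itertools
import math

def embed(W, x):
    """Shared embedding f(x) = W x; both branches use the same W."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def contrastive_loss(d, same, margin):
    """Pull similar pairs together; push dissimilar pairs beyond the margin."""
    return d * d if same else max(0.0, margin - d) ** 2

def pair_grad(W, x1, x2, same, margin):
    """Loss and its gradient with respect to W for a single pair."""
    delta = [a - b for a, b in zip(x1, x2)]
    e = embed(W, delta)  # equals f(x1) - f(x2) because f is linear
    d = math.sqrt(sum(v * v for v in e))
    if same:
        scale = 2.0
    elif 0.0 < d < margin:
        scale = -2.0 * (margin - d) / d
    else:
        scale = 0.0  # pair already beyond the margin (or degenerate d = 0)
    grad = [[scale * ei * dj for dj in delta] for ei in e]
    return contrastive_loss(d, same, margin), grad

def train(samples, margin=2.0, lr=0.05, epochs=200):
    """Full-batch gradient descent over all pairs; returns W and loss history."""
    dim = len(samples[0][0])
    W = [[float(i == j) for j in range(dim)] for i in range(dim)]  # identity init
    pairs = list(itertools.combinations(samples, 2))
    history = []
    for _ in range(epochs):
        total = 0.0
        acc = [[0.0] * dim for _ in range(dim)]
        for (x1, y1), (x2, y2) in pairs:
            loss, grad = pair_grad(W, x1, x2, y1 == y2, margin)
            total += loss
            for i in range(dim):
                for j in range(dim):
                    acc[i][j] += grad[i][j]
        W = [[W[i][j] - lr * acc[i][j] / len(pairs) for j in range(dim)]
             for i in range(dim)]
        history.append(total / len(pairs))  # loss measured before the update
    return W, history

# Toy data: two classes of 2-D points (hypothetical stand-in for features).
samples = [((0.0, 0.0), 0), ((0.1, 0.0), 0), ((0.0, 0.1), 0),
           ((1.0, 1.0), 1), ((1.1, 1.0), 1), ((1.0, 1.1), 1)]
W, history = train(samples)
print(f"mean loss: {history[0]:.4f} -> {history[-1]:.4f}")
```

The key property of the Siamese setup is that a single set of weights `W` embeds both inputs of a pair, so every update moves both branches together. In practice the linear map would be replaced by a convolutional feature extractor.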

3.3. Affinity Layer Overview

3.4. Combined Affinity Layer Overview

3.5. Dataset Overview

- The MiniImageNet dataset, as stated in Vinyals et al. [22], contains 100 classes chosen randomly from the original ImageNet dataset, each composed of 600 images. Following [30,34,35,36], the dataset was divided into 64, 16, and 20 classes for training, validation, and testing, respectively. It was chosen for its complexity and its repeated use as a benchmark for One-Shot learning tasks.

- The CIFAR-100 dataset, as stated in [31], contains 100 classes with 600 images each. As suggested in [22,34,35,37], the dataset was divided into 64, 16, and 20 classes for training, validation, and testing, respectively. This division is in line with other research that evaluated One-Shot learning models on this dataset.

- The CUB-200–2011 dataset defined in [32], as previously mentioned, is a fine-grained dataset of images spanning 200 classes. Similar to the split proposed in [34], it was divided into 100, 50, and 50 classes for training, validation, and testing, respectively, which is also in line with the splits established in [14,21,22,35,37,38,39].
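The class-level splits described above can be sketched as follows. This is an illustrative example, not the authors' data pipeline: the helper names `split_classes` and `sample_episode`, the random seed, the placeholder image names, and the episode settings (5 classes per episode, 15 query images per class) are all assumptions. Only the 64/16/20 class split and the 600 images per class come from the text.

```python
import random

def split_classes(class_ids, n_train, n_val, n_test, seed=0):
    """Partition class ids into disjoint training/validation/test class sets."""
    ids = list(class_ids)
    if n_train + n_val + n_test != len(ids):
        raise ValueError("split sizes must sum to the number of classes")
    random.Random(seed).shuffle(ids)
    return ids[:n_train], ids[n_train:n_train + n_val], ids[n_train + n_val:]

def sample_episode(images_by_class, classes, n_way, k_shot, n_query, rng):
    """Draw one N-way K-shot episode: a labelled support set and a query set."""
    episode_classes = rng.sample(classes, n_way)
    support, query = [], []
    for label, cls in enumerate(episode_classes):
        picks = rng.sample(images_by_class[cls], k_shot + n_query)
        support += [(img, label) for img in picks[:k_shot]]
        query += [(img, label) for img in picks[k_shot:]]
    return support, query

# Placeholder dataset: 100 classes with 600 image names each.
images_by_class = {c: [f"class{c}_img{i}" for i in range(600)] for c in range(100)}

train_c, val_c, test_c = split_classes(range(100), 64, 16, 20)

# One-Shot episode drawn only from the held-out test classes.
rng = random.Random(0)
support, query = sample_episode(images_by_class, test_c,
                                n_way=5, k_shot=1, n_query=15, rng=rng)
print(len(train_c), len(val_c), len(test_c), len(support), len(query))
```

Splitting by class rather than by image means the test classes are never seen during training, which is what makes the One-Shot and Five-Shot evaluation a test of generalization to new categories.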

4. Results

4.1. Experimental Setup

4.2. Comparison of the Model Against Reference Data

4.3. Performance and Generalization in the State-of-the-Art

5. Discussion

6. Conclusions

Funding

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| CNN | Convolutional Neural Network |
| CNNs | Convolutional Neural Networks |
| DL | Deep Learning |
| LSTM | Long Short-Term Memory |
| ML | Machine Learning |
| SML | Simplified Machine Learning |

References

- López Gómez, L. La bicicleta como medio de transporte en la movilidad sustentable. Technical report, Dirección General de Análisis Legislativo, Senado de la República, México, 2018.

- ITDP. Manual Ciclociudades I. La Movilidad en Bicicleta como Política Pública. In Manual Ciclociudades; Instituto de Políticas para el Transporte y el Desarrollo: México D.F., 2011; Vol. I, p. 62.

- Vision Zero Network. What is Vision Zero? 2022. Available online: https://visionzeronetwork.org/about/what-is-vision-zero/.

- WHO. Global status report on road safety 2018. Technical report, World Health Organization, Geneva, 2018.

- INEGI. Estadísticas a propósito del Día de Muertos, DATOS NACIONALES. Technical report, Instituto Nacional de Estadística y Geografía, México, 2019.

- Hilmkil, A.; Ivarsson, O.; Johansson, M.; Kuylenstierna, D.; van Erp, T. Towards Machine Learning on data from Professional Cyclists. 2018; arXiv:cs.LG/1808.00198.

- Ngiam, J.; Khosla, A.; Kim, M.; Nam, J.; Lee, H.; Ng, A.Y. Multimodal Deep Learning. In Proceedings of the 28th International Conference on Machine Learning, ICML 2011, Bellevue, Washington, USA, June 28 - July 2, 2011; Getoor, L.; Scheffer, T., Eds. Omnipress, 2011; pp. 689–696.

- Srivastava, N.; Salakhutdinov, R. Multimodal Learning with Deep Boltzmann Machines. J. Mach. Learn. Res. 2014, 15, 2949–2980.

- Zhao, H.; Wijnands, J.S.; Nice, K.A.; Thompson, J.; Aschwanden, G.D.P.A.; Stevenson, M.; Guo, J. Unsupervised Deep Learning to Explore Streetscape Factors Associated with Urban Cyclist Safety. In Proceedings of the Smart Transportation Systems 2019; Qu, X.; Zhen, L.; Howlett, R.J.; Jain, L.C., Eds., Singapore, 2019; pp. 155–164.

- Galán, R.; Calle, M.; García, J.M. Análisis de variables que influencian la accidentalidad ciclista: desarrollo de modelos y diseño de una herramienta de ayuda. In Proceedings of the XIII Congreso de Ingeniería de Organización Barcelona-Terrassa, September 2nd-4th 2009. Asociación para el Desarrollo de la Ingeniería de Organización - ADINGOR, 2009; pp. 696–703.

- Caterini, A.L.; Chang, D.E. Deep Neural Networks in a Mathematical Framework, 1st ed.; Springer Publishing Company, Incorporated, 2018.

- Cuomo, S.; Di Cola, V.S.; Giampaolo, F.; Rozza, G.; Raissi, M.; Piccialli, F. Scientific Machine Learning Through Physics–Informed Neural Networks: Where we are and What’s Next. Journal of Scientific Computing 2022, 92, 88.

- Khosla, P.; Teterwak, P.; Wang, C.; Sarna, A.; Tian, Y.; Isola, P.; Maschinot, A.; Liu, C.; Krishnan, D. Supervised Contrastive Learning. 2021; arXiv:cs.LG/2004.11362.

- Chen, T.; Kornblith, S.; Norouzi, M.; Hinton, G. A Simple Framework for Contrastive Learning of Visual Representations. In Proceedings of the 37th International Conference on Machine Learning; Daumé III, H.; Singh, A., Eds. PMLR, 13–18 Jul 2020, Vol. 119, Proceedings of Machine Learning Research, pp. 1597–1607.

- van den Oord, A.; Li, Y.; Vinyals, O. Representation Learning with Contrastive Predictive Coding. 2019; arXiv:cs.LG/1807.03748.

- Tian, Y.; Krishnan, D.; Isola, P. Contrastive Multiview Coding. 2020; arXiv:cs.CV/1906.05849.

- Hjelm, R.D.; Fedorov, A.; Lavoie-Marchildon, S.; Grewal, K.; Bachman, P.; Trischler, A.; Bengio, Y. Learning deep representations by mutual information estimation and maximization. In Proceedings of the International Conference on Learning Representations, 2019.

- He, K.; Fan, H.; Wu, Y.; Xie, S.; Girshick, R. Momentum Contrast for Unsupervised Visual Representation Learning. In Proceedings of the 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2020; pp. 9726–9735.

- Hernández-Herrera, A.; Espino, E.R.; Álvarez Vargas, R.; Ponce, V.H.P. Una Exploración Sobre el Aprendizaje Automático Simplificado: Generalización a partir de Algunos Ejemplos. Komputer Sapiens 2021, 3, 36–41.

- Lee, S.W.; O’Doherty, J.P.; Shimojo, S. Neural Computations Mediating One-Shot Learning in the Human Brain. PLOS Biology 2015, 13, 1–36.

- Snell, J.; Swersky, K.; Zemel, R. Prototypical Networks for Few-shot Learning. In Proceedings of the Advances in Neural Information Processing Systems; Guyon, I.; Luxburg, U.V.; Bengio, S.; Wallach, H.; Fergus, R.; Vishwanathan, S.; Garnett, R., Eds. Curran Associates, Inc., 2017; Vol. 30.

- Vinyals, O.; Blundell, C.; Lillicrap, T.; Kavukcuoglu, K.; Wierstra, D. Matching Networks for One Shot Learning. In Proceedings of the Advances in Neural Information Processing Systems; Lee, D.; Sugiyama, M.; Luxburg, U.; Guyon, I.; Garnett, R., Eds. Curran Associates, Inc., 2016; Vol. 29.

- Hernández Sampieri, R.; Mendoza Torres, C.P. Metodología de la Investigación: Las rutas cuantitativa, cualitativa y mixta; McGraw-Hill Interamericana, 2018.

- Xing, E.; Jordan, M.; Russell, S.J.; Ng, A. Distance Metric Learning with Application to Clustering with Side-Information. In Proceedings of the Advances in Neural Information Processing Systems; Becker, S.; Thrun, S.; Obermayer, K., Eds. MIT Press, 2002; Vol. 15.

- Hadsell, R.; Chopra, S.; LeCun, Y. Dimensionality Reduction by Learning an Invariant Mapping. In Proceedings of the 2006 IEEE Computer Society Conference on Computer Vision and Pattern Recognition (CVPR’06), 2006; Vol. 2, pp. 1735–1742.

- Bromley, J.; Bentz, J.W.; Bottou, L.; Guyon, I.M.; LeCun, Y.; Moore, C.; Säckinger, E.; Shah, R. Signature Verification Using A "Siamese" Time Delay Neural Network. Int. J. Pattern Recognit. Artif. Intell. 1993, 7, 669–688.

- Koch, G.R. Siamese Neural Networks for One-Shot Image Recognition. In Proceedings of the 32nd International Conference on Machine Learning; 2015.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2016; pp. 770–778.

- Tan, M.; Le, Q. EfficientNet: Rethinking model scaling for convolutional neural networks. In Proceedings of the International Conference on Machine Learning. PMLR, 2019; pp. 6105–6114.

- Li, X.; Yu, L.; Fu, C.W.; Fang, M.; Heng, P.A. Revisiting metric learning for few-shot image classification. Neurocomputing 2020, 406, 49–58.

- Krizhevsky, A.; Hinton, G. Learning multiple layers of features from tiny images. Technical Report 0, University of Toronto, Toronto, Ontario, 2009.

- Wah, C.; Branson, S.; Welinder, P.; Perona, P.; Belongie, S. The Caltech-UCSD Birds-200-2011 Dataset; 2011.

- Loquercio, A.; Maqueda, A.I.; del Blanco, C.R.; Scaramuzza, D. DroNet: Learning to Fly by Driving. IEEE Robotics and Automation Letters 2018, 3, 1088–1095.

- Chen, Z.; Fu, Y.; Zhang, Y.; Jiang, Y.G.; Xue, X.; Sigal, L. Multi-Level Semantic Feature Augmentation for One-Shot Learning. IEEE Transactions on Image Processing 2019, 28, 4594–4605.

- Sung, F.; Yang, Y.; Zhang, L.; Xiang, T.; Torr, P.H.; Hospedales, T.M. Learning to Compare: Relation Network for Few-Shot Learning. In Proceedings of the 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition; 2018; pp. 1199–1208.

- Hilliard, N.; Phillips, L.; Howland, S.; Yankov, A.; Corley, C.D.; Hodas, N.O. Few-Shot Learning with Metric-Agnostic Conditional Embeddings. 2018; arXiv:cs.LG/1802.04376.

- Zhou, F.; Wu, B.; Li, Z. Deep Meta-Learning: Learning to Learn in the Concept Space. ArXiv 2018, abs/1802.03596.

- Mangla, P.; Kumari, N.; Sinha, A.; Singh, M.; Krishnamurthy, B.; Balasubramanian, V.N. Charting the Right Manifold: Manifold Mixup for Few-shot Learning. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision (WACV), March 2020.

- Kang, D.; Kwon, H.; Min, J.; Cho, M. Relational Embedding for Few-Shot Classification. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), October 2021; pp. 8822–8833.

- Li, Z.; Zhou, F.; Chen, F.; Li, H. Meta-SGD: Learning to Learn Quickly for Few-Shot Learning. 2017; arXiv:cs.LG/1707.09835.

- Finn, C.; Abbeel, P.; Levine, S. Model-Agnostic Meta-Learning for Fast Adaptation of Deep Networks. In Proceedings of the 34th International Conference on Machine Learning; Precup, D.; Teh, Y.W., Eds. PMLR, 06–11 Aug 2017, Vol. 70, Proceedings of Machine Learning Research, pp. 1126–1135.

| Available | Not Available |
|---|---|
| Position | Types of possible moving obstacles |
| Orientation | Number of moving obstacles |
| Velocity | Position of moving obstacles |
| Acceleration | Known data set according to the problem for analysis and testing |
| Image / Video | |

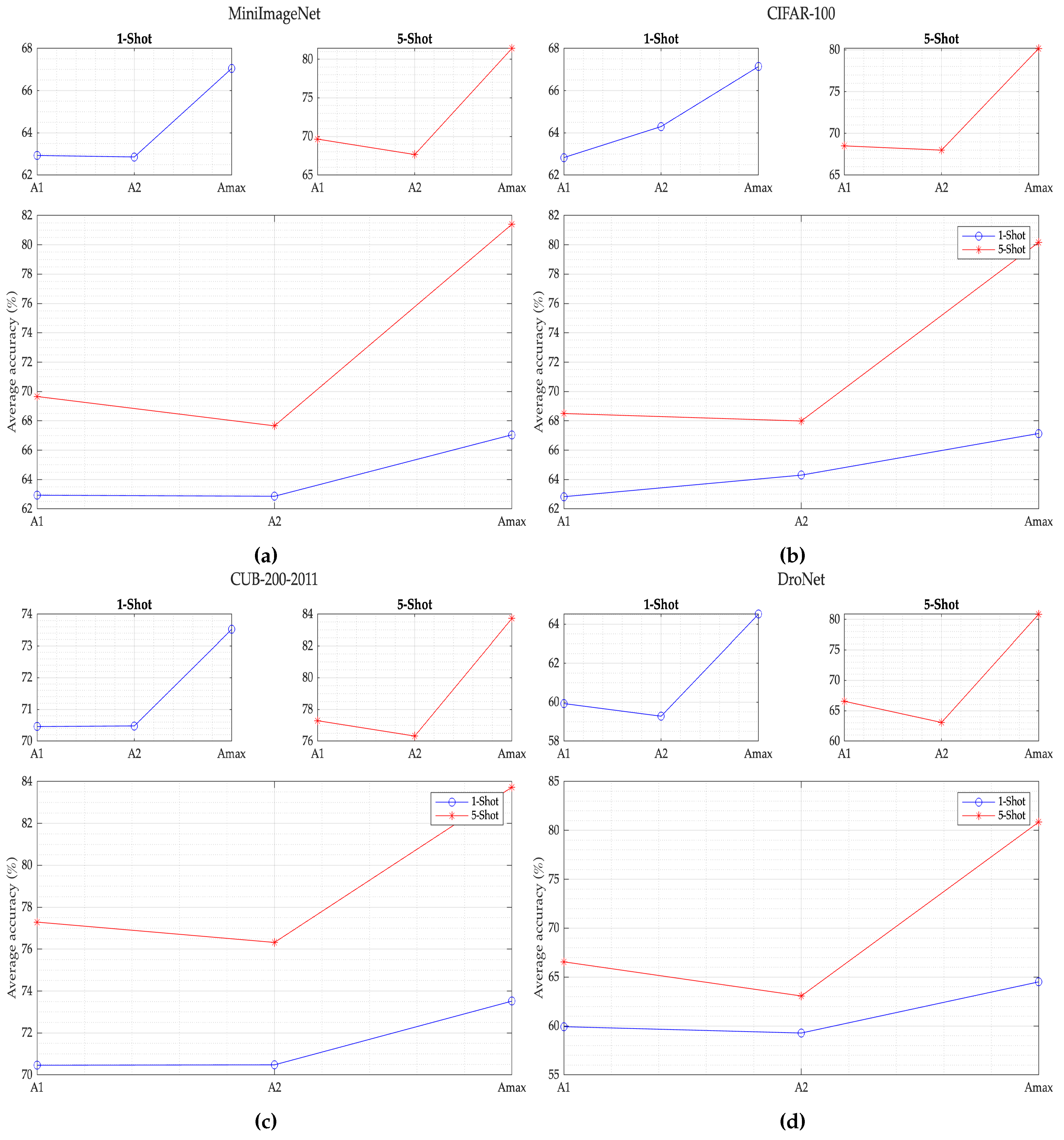

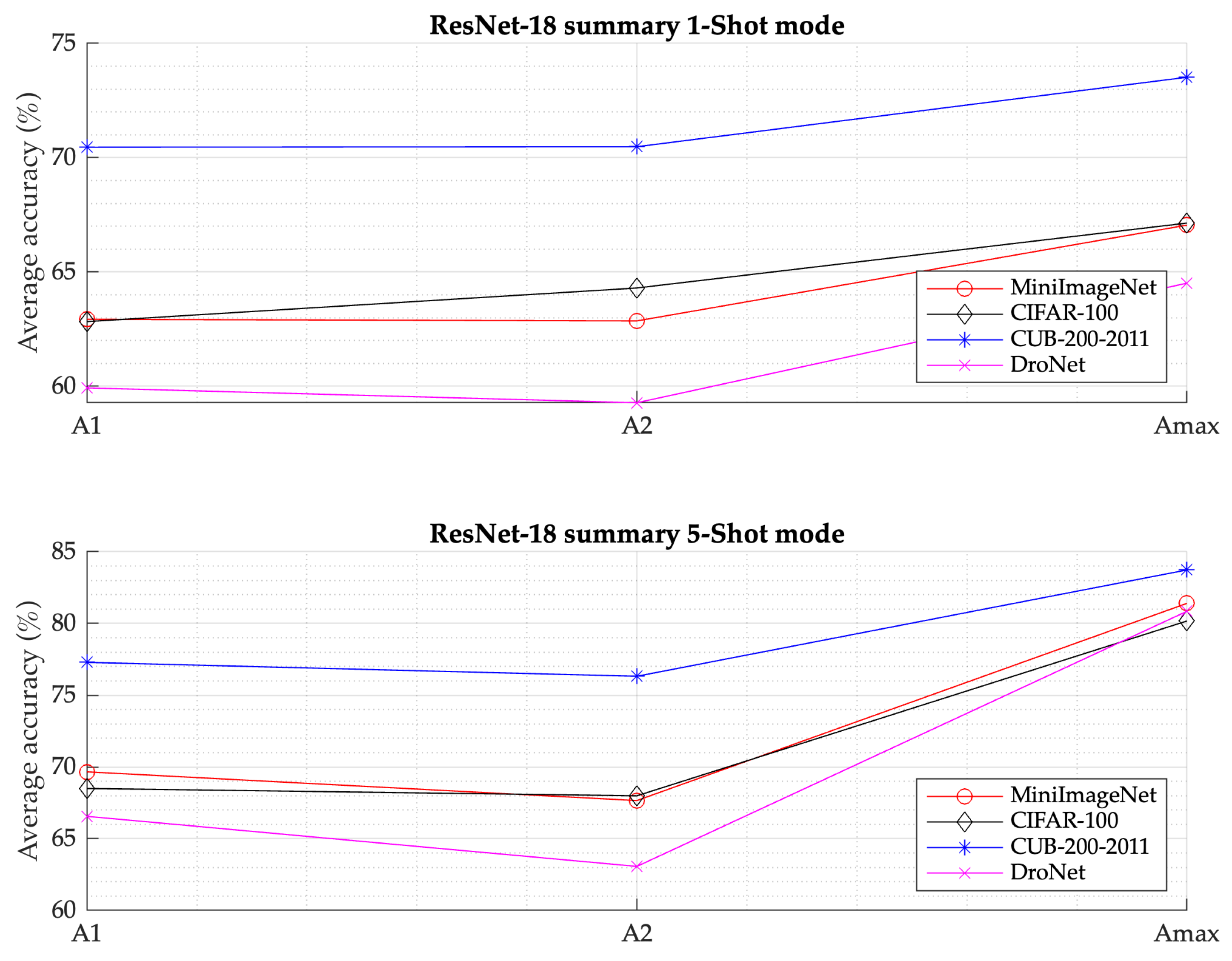

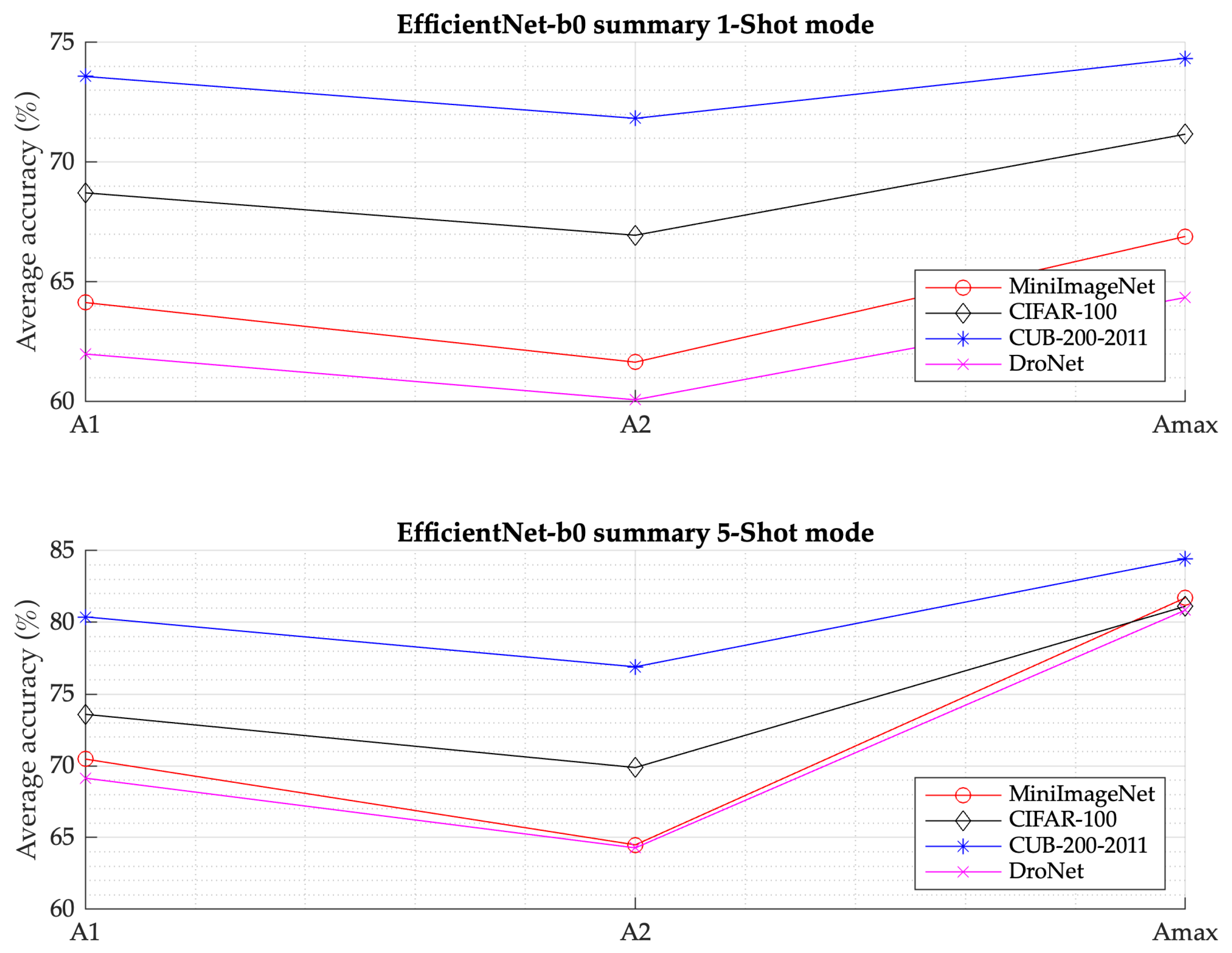

| Feature extractor | Dataset | A1 (1-Shot) | A1 (5-Shot) | A2 (1-Shot) | A2 (5-Shot) | SML model (1-Shot) | SML model (5-Shot) |
|---|---|---|---|---|---|---|---|
| ResNet-18 | MiniImageNet | 62.93 | 69.66 | 62.86 | 67.66 | 67.05 | 81.40 |
| ResNet-18 | CIFAR-100 | 62.83 | 68.50 | 64.30 | 67.99 | 67.14 | 80.17 |
| ResNet-18 | CUB-200–2011 | 70.46 | 77.29 | 70.48 | 76.32 | 73.52 | 83.73 |
| ResNet-18 | DroNet | 59.93 | 66.56 | 59.28 | 63.07 | 64.51 | 80.85 |
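As a reading aid, the gain of the SML model over the A1 column can be computed directly from the ResNet-18 rows above. The sketch below is illustrative only: the dictionary layout and the helper name `gains_over_baseline` are assumptions, and the numbers are copied from the table as (1-Shot, 5-Shot) pairs.

```python
# Values copied from the ResNet-18 rows of the table above,
# stored as (1-Shot, 5-Shot) pairs. The layout is an illustrative assumption.
RESNET18 = {
    "MiniImageNet": {"A1": (62.93, 69.66), "SML": (67.05, 81.40)},
    "CIFAR-100": {"A1": (62.83, 68.50), "SML": (67.14, 80.17)},
    "CUB-200-2011": {"A1": (70.46, 77.29), "SML": (73.52, 83.73)},
    "DroNet": {"A1": (59.93, 66.56), "SML": (64.51, 80.85)},
}

def gains_over_baseline(table, baseline="A1", model="SML"):
    """Return {dataset: (1-Shot gain, 5-Shot gain)} in the table's units."""
    return {
        name: tuple(round(m - b, 2) for m, b in zip(row[model], row[baseline]))
        for name, row in table.items()
    }

gains = gains_over_baseline(RESNET18)
for name, (g1, g5) in gains.items():
    print(f"{name}: 1-Shot +{g1:.2f}, 5-Shot +{g5:.2f}")
```

In every ResNet-18 row the 5-Shot gain over A1 is larger than the 1-Shot gain.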

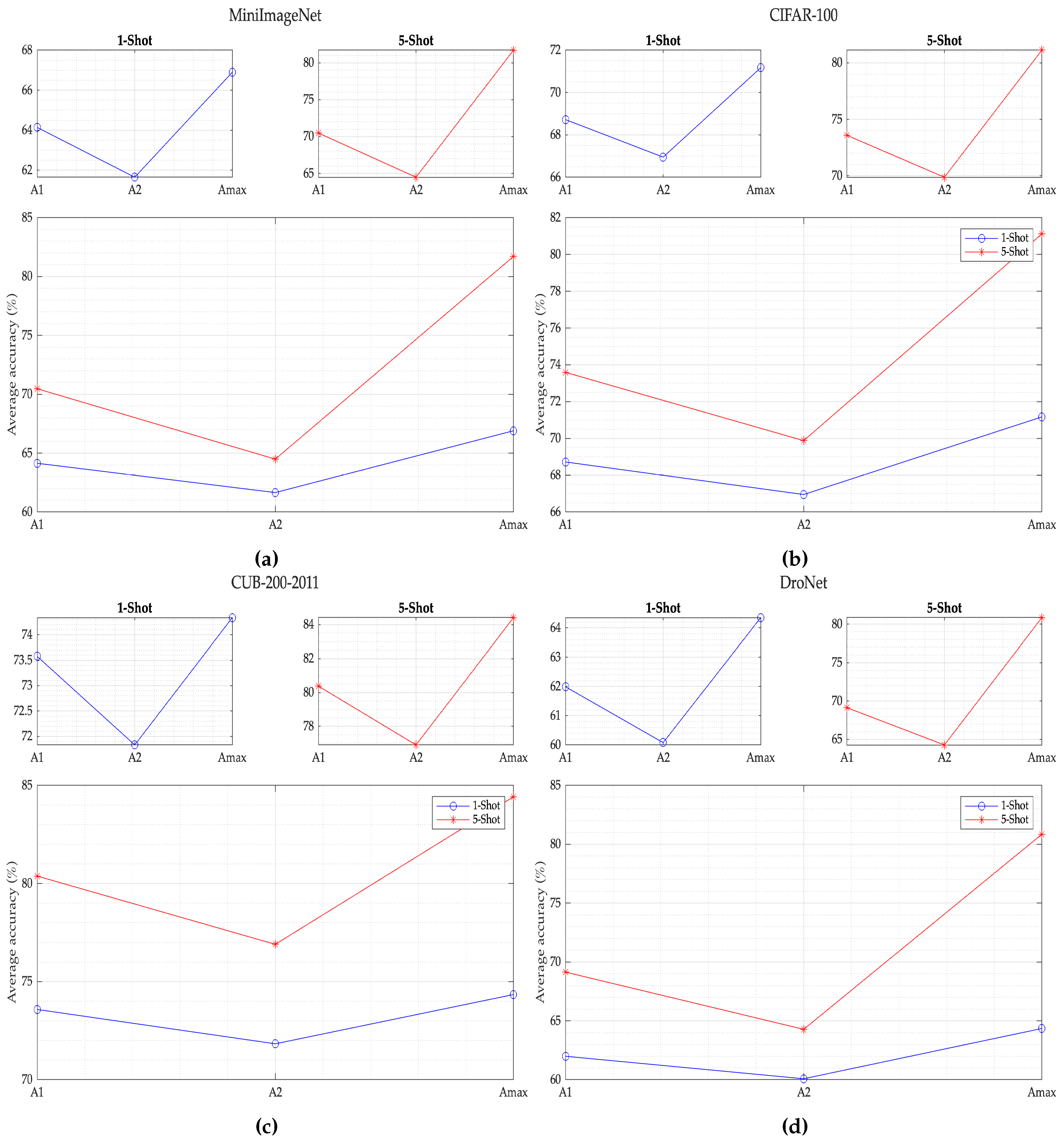

| Feature extractor | Dataset | A1 (1-Shot) | A1 (5-Shot) | A2 (1-Shot) | A2 (5-Shot) | SML model (1-Shot) | SML model (5-Shot) |
|---|---|---|---|---|---|---|---|
| EfficientNet-B0 | MiniImageNet | 64.14 | 70.47 | 61.65 | 64.49 | 66.90 | 81.71 |
| EfficientNet-B0 | CIFAR-100 | 68.72 | 73.59 | 66.95 | 68.99 | 71.17 | 79.12 |
| EfficientNet-B0 | CUB-200–2011 | 73.58 | 80.38 | 71.83 | 76.90 | 74.34 | 84.42 |
| EfficientNet-B0 | DroNet | 61.99 | 69.14 | 60.08 | 64.28 | 64.35 | 80.85 |

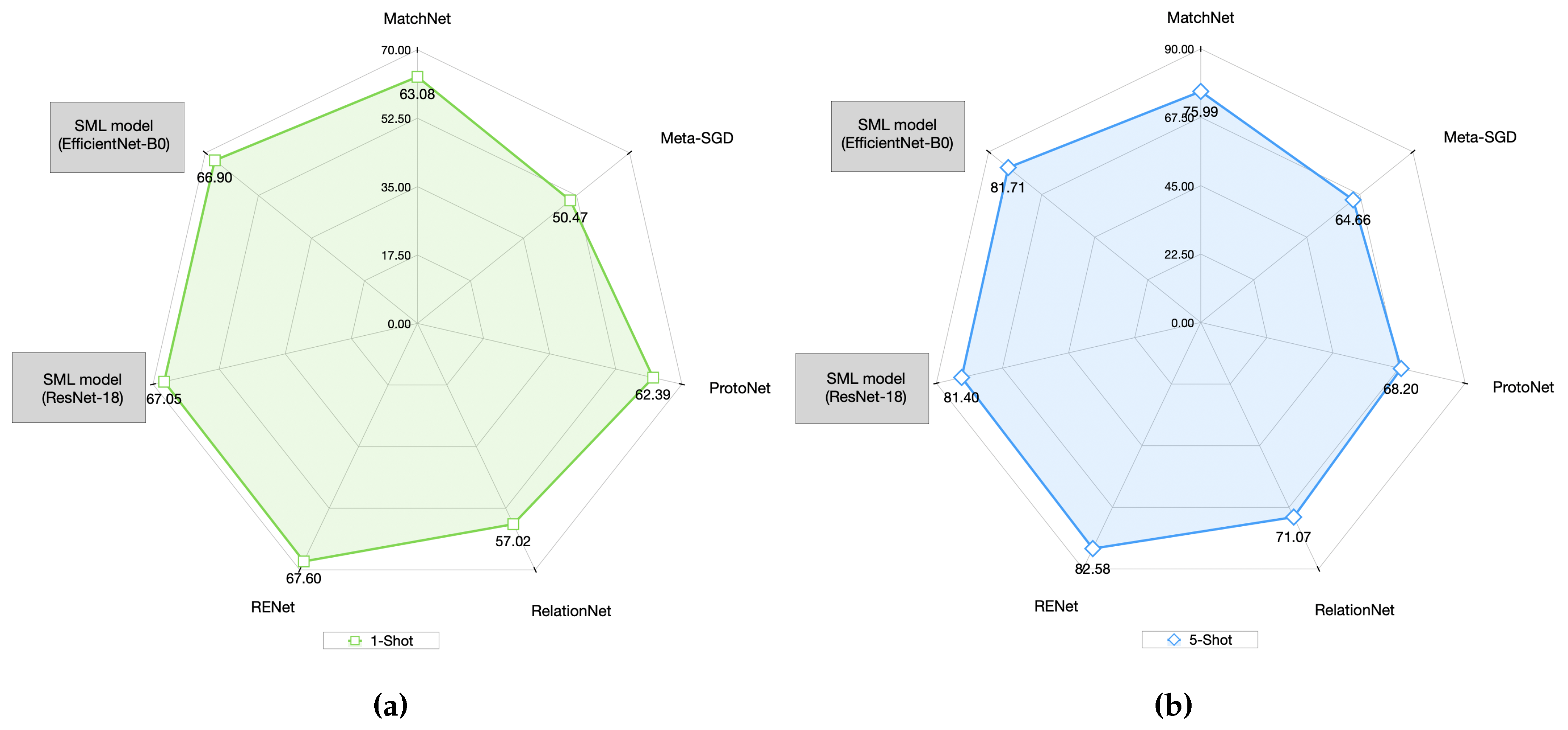

| Model | Feature extractor | MiniImageNet (1-Shot) | MiniImageNet (5-Shot) |
|---|---|---|---|
| MatchNet [22] | ResNet-12 | 63.08 | 75.99 |
| Meta-SGD [40] | ResNet-50 | 50.47 | 64.66 |
| ProtoNet [21] | ResNet-12 | 62.39 | 68.20 |
| RelationNet [35] | ResNet-34 | 57.02 | 71.07 |
| RENet [39] | ResNet-12 | 67.60 | 82.58 |
| SML (Our model) | ResNet-18 | 67.05 | 81.40 |
| SML (Our model) | EfficientNet-B0 | 66.90 | 81.71 |

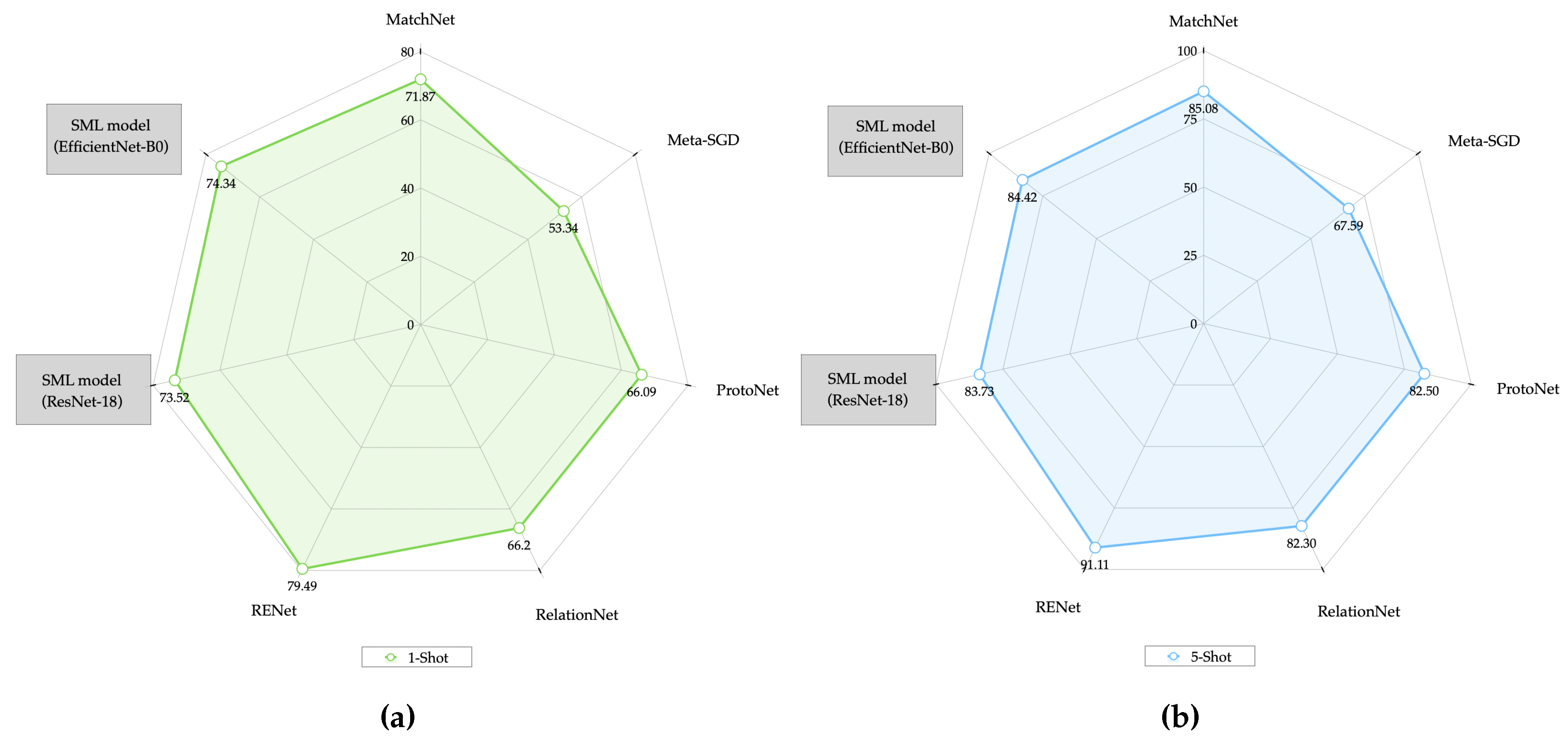

| Model | Feature extractor | CUB-200–2011 (1-Shot) | CUB-200–2011 (5-Shot) |
|---|---|---|---|
| MatchNet [22] | ResNet-12 | 71.87 | 85.08 |
| Meta-SGD [40] | ResNet-50 | 53.34 | 67.59 |
| ProtoNet [21] | ResNet-12 | 66.09 | 82.50 |
| RelationNet [35] | ResNet-34 | 66.20 | 82.30 |
| RENet [39] | ResNet-12 | 79.49 | 91.11 |
| SML (Our model) | ResNet-18 | 73.52 | 83.73 |
| SML (Our model) | EfficientNet-B0 | 74.34 | 84.42 |

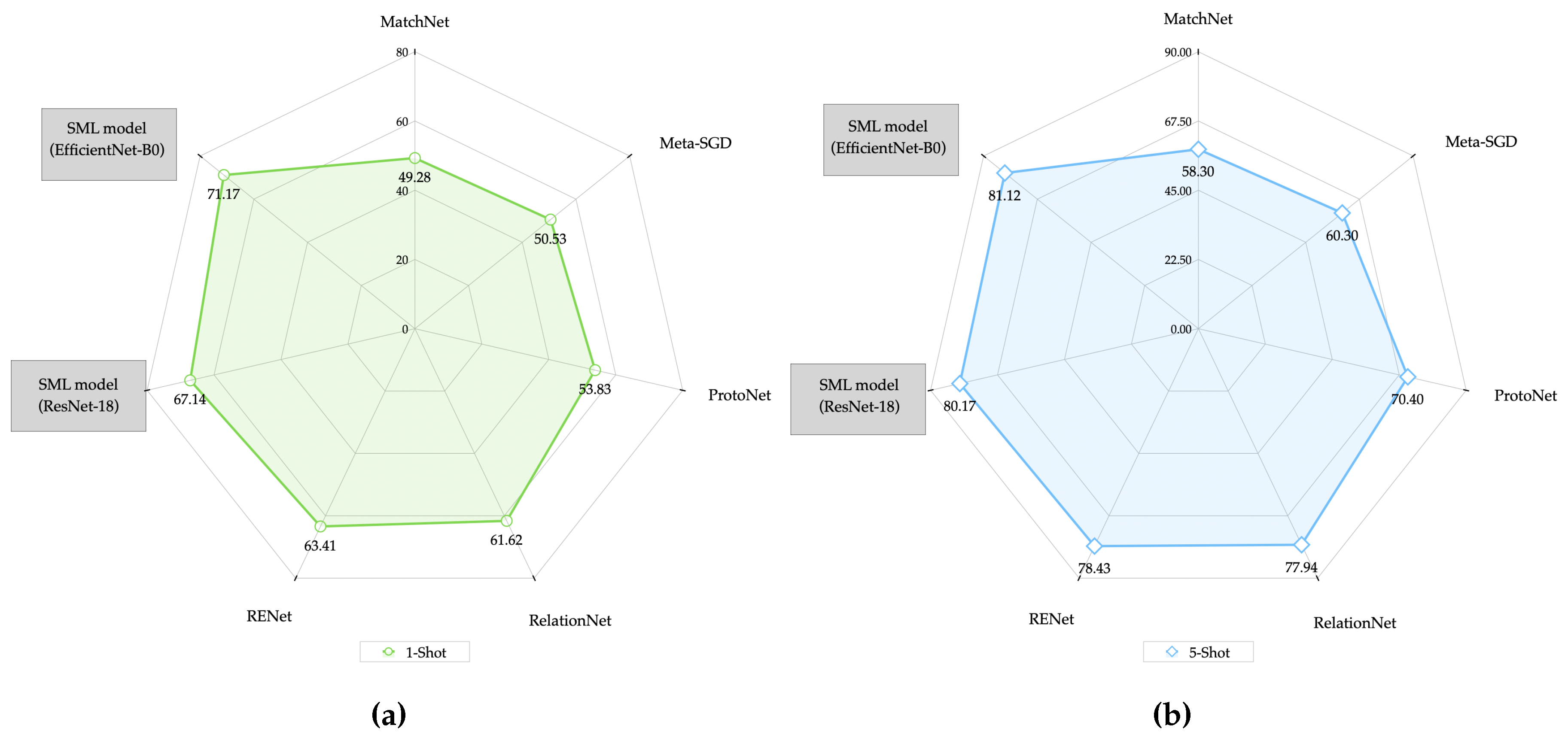

| Model | Feature extractor | CIFAR-100 (1-Shot) | CIFAR-100 (5-Shot) |
|---|---|---|---|
| MAML [41] | ResNet-12 | 49.28 | 58.30 |
| MatchNet [22] | ResNet-12 | 50.53 | 60.30 |
| Meta-SGD [40] | ResNet-50 | 53.83 | 70.40 |
| DEML+Meta-SGD [37] | ResNet-50 | 61.62 | 77.94 |
| Dual TriNet [34] | ResNet-18 | 63.41 | 78.43 |
| SML (Our model) | ResNet-18 | 67.14 | 80.17 |
| SML (Our model) | EfficientNet-B0 | 71.17 | 81.12 |

| Model | Feature extractor | DroNet (1-Shot) | DroNet (5-Shot) |
|---|---|---|---|
| MAML [41] | ResNet-12 | 45.59 | 54.61 |
| MatchNet [22] | ResNet-12 | 48.09 | 57.45 |
| Meta-SGD [40] | ResNet-50 | 48.65 | 64.74 |
| DEML+Meta-SGD [37] | ResNet-50 | 62.25 | 79.52 |
| Dual TriNet [34] | ResNet-18 | 63.77 | 80.53 |
| SML (Our model) | ResNet-18 | 64.51 | 81.43 |
| SML (Our model) | EfficientNet-B0 | 64.35 | 80.85 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).