Submitted: 16 December 2024

Posted: 16 December 2024

Abstract

Keywords:

1. Introduction

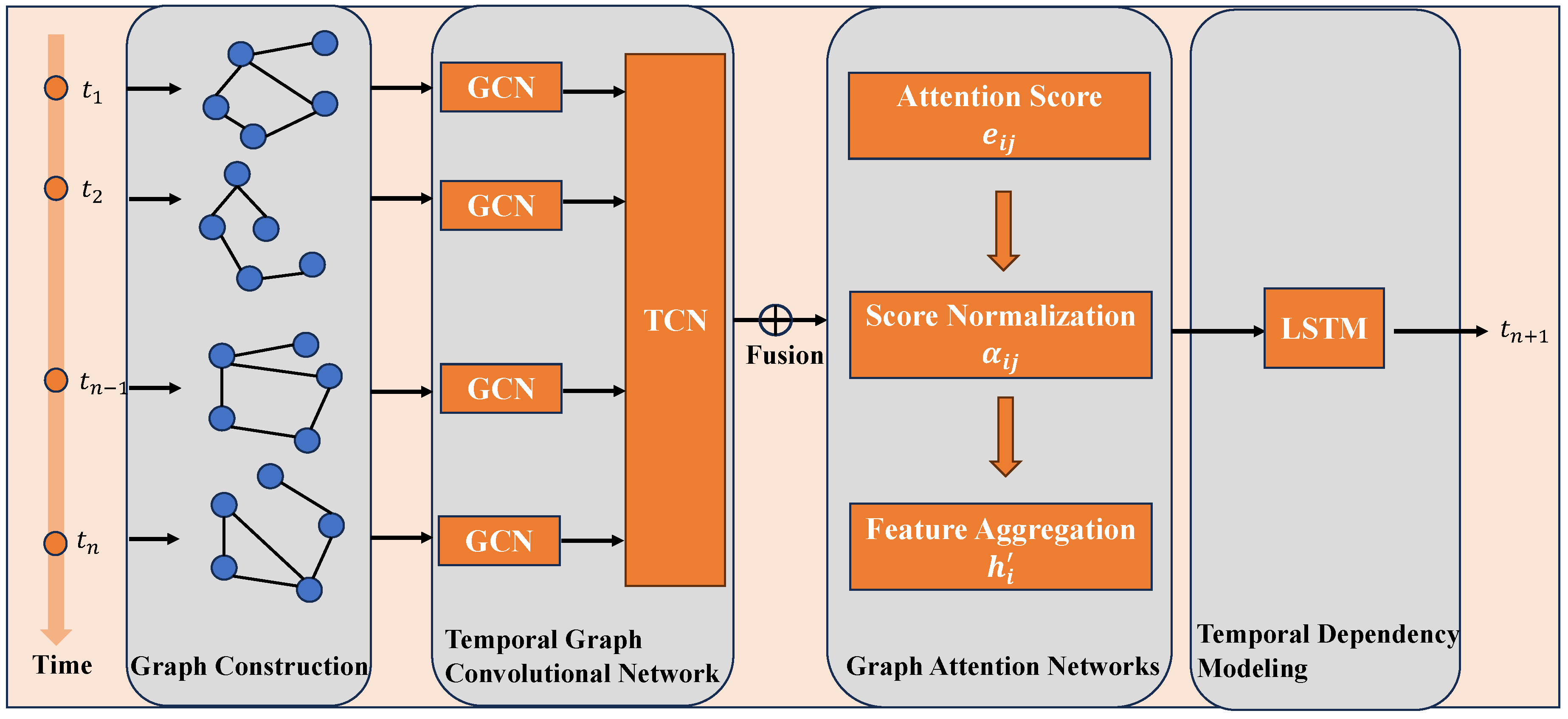

- We propose a hybrid framework that combines GNN with temporal modeling to simultaneously capture spatial and temporal dependencies in multi-dimensional time-series data.

- We introduce a graph attention mechanism that dynamically prioritizes feature relationships, enhancing the interpretability and adaptability of the model.

- We validate the proposed method on real-world cooling system data, demonstrating its superior performance compared to traditional machine learning and deep learning models.

2. Related Work

2.1. Cooling Capacity Prediction

2.2. Graph Neural Networks for Structured Data

2.3. Temporal Graph Modeling

2.4. Summary and Research Gap

- Limited Temporal-Spatial Integration: Traditional methods fail to simultaneously capture temporal dependencies and spatial relationships among variables.

- Lack of Dynamic Relationship Modeling: Most graph-based approaches assume a fixed graph structure, overlooking the dynamic nature of variable interactions in real-world cooling systems.

- Insufficient Interpretability: Many existing methods lack mechanisms to explain the importance of relationships or variables in the prediction process.

3. Proposed Framework

- Graph Construction: Encodes relationships among features into a graph structure, allowing spatial dependencies to be explicitly modeled.

- Temporal Graph Convolutional Network (TGCN): Integrates graph convolution and temporal convolution to jointly capture spatial and temporal dependencies.

- Graph Attention Mechanism (GAT): Dynamically adjusts the importance of relationships between variables, enhancing model interpretability and predictive performance.

- Temporal Dependency Modeling: Utilizes recurrent layers (e.g., LSTM or GRU) to capture long-term dependencies across time steps.

3.1. Graph Construction

- Nodes ($V$): Each node represents a variable in the dataset.

- Edges ($E$): Each edge represents a relationship between two variables. For example, the correlation between voltage and current might be strong, while the correlation between ambient temperature and coolant flow rate might be weaker.
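
One way to realize such a graph is to threshold pairwise correlations between variables. The following is a minimal sketch under that assumption; the function name `correlation_adjacency`, the `threshold` value of 0.3, and the synthetic signals are hypothetical and are not taken from the paper.

```python
import numpy as np

def correlation_adjacency(data: np.ndarray, threshold: float = 0.3) -> np.ndarray:
    """Build a weighted adjacency matrix from absolute Pearson correlations.

    data: array of shape (num_timesteps, num_variables).
    threshold: hypothetical cut-off; weaker relationships get no edge.
    """
    corr = np.corrcoef(data, rowvar=False)  # (num_variables, num_variables)
    adj = np.abs(corr)                      # edge weight = correlation strength
    adj[adj < threshold] = 0.0              # drop weak relationships
    np.fill_diagonal(adj, 1.0)              # keep a self-loop on every node
    return adj

# Synthetic stand-ins for three variables (illustrative values only).
rng = np.random.default_rng(0)
voltage = rng.normal(500.0, 10.0, size=200)
current = 0.6 * voltage + rng.normal(0.0, 2.0, size=200)  # tied to voltage
ambient = rng.normal(27.0, 3.0, size=200)                 # generated independently
adjacency = correlation_adjacency(np.column_stack([voltage, current, ambient]))
print(adjacency.round(2))
```

A fixed threshold is the simplest choice; it yields a static graph, which the attention mechanism in Section 3.3 can then reweight.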

3.2. Temporal Graph Convolutional Network (TGCN)

- Graph Convolution: Captures spatial dependencies by propagating information across the graph. Each graph convolutional layer updates the representation of each node by aggregating features from its neighbors: $H^{(l+1)} = \sigma\left(A H^{(l)} W^{(l)}\right)$, where $H^{(l)}$ is the node embedding matrix at layer $l$, $A$ is the adjacency matrix, $W^{(l)}$ is a learnable weight matrix, and $\sigma$ is an activation function such as ReLU [13]. This operation ensures that each node’s representation reflects information from its neighbors, enabling the model to capture local spatial patterns.

- Temporal Convolution: Extracts short-term temporal features by applying 1D convolution along the time dimension: $Z_t^{(l)} = \mathrm{Conv1D}\left(H_t^{(l)}\right)$, where $Z_t^{(l)}$ represents temporal features at time step $t$ for layer $l$ and $H_t^{(l)}$ denotes the node embeddings at that time step. The convolution kernel size is chosen to balance between capturing immediate temporal dependencies and maintaining computational efficiency.
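
The two operations above can be sketched in NumPy as follows. This is an illustrative sketch rather than the authors' implementation: it assumes the symmetric normalization with self-loops from Kipf and Welling, and a single temporal kernel shared across nodes and channels. The function names, shapes, and the averaging kernel are hypothetical.

```python
import numpy as np

def normalize_adjacency(adj: np.ndarray) -> np.ndarray:
    """Symmetric normalization D^{-1/2} (A + I) D^{-1/2} (assumed, not from the paper)."""
    a_tilde = adj + np.eye(adj.shape[0])
    d_inv_sqrt = 1.0 / np.sqrt(a_tilde.sum(axis=1))
    return a_tilde * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]

def graph_conv(h: np.ndarray, adj_norm: np.ndarray, weight: np.ndarray) -> np.ndarray:
    """One graph convolution layer: ReLU(A_norm @ H @ W). h has shape (nodes, in_dim)."""
    return np.maximum(adj_norm @ h @ weight, 0.0)

def temporal_conv(seq: np.ndarray, kernel: np.ndarray) -> np.ndarray:
    """'Valid' 1D convolution along time with one kernel shared by all nodes and channels.

    seq: (T, nodes, dim); kernel: (k,); returns (T - k + 1, nodes, dim),
    where out[t] = sum_i kernel[i] * seq[t + i].
    """
    k = kernel.shape[0]
    steps = seq.shape[0] - k + 1
    windows = np.stack([seq[i:i + steps] for i in range(k)], axis=0)
    return np.tensordot(kernel, windows, axes=(0, 0))

rng = np.random.default_rng(0)
T, N, F, D = 24, 4, 1, 8  # time steps, nodes, input features, hidden size
adj = np.array([[0, 1, 1, 0],
                [1, 0, 1, 1],
                [1, 1, 0, 1],
                [0, 1, 1, 0]], dtype=float)
adj_norm = normalize_adjacency(adj)
weight = rng.normal(size=(F, D))
x = rng.normal(size=(T, N, F))

# Spatial step at every time step, then the temporal step over the result.
spatial = np.stack([graph_conv(x[t], adj_norm, weight) for t in range(T)])
temporal = temporal_conv(spatial, kernel=np.ones(3) / 3.0)
print(spatial.shape, temporal.shape)
```

In a trained model the temporal kernel would be learned per channel; the fixed averaging kernel here only makes the data flow easy to follow.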

3.3. Graph Attention Mechanism (GAT)

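- Attention Score Calculation: For each pair of connected nodes, the attention score is computed as: $e_{ij} = \mathrm{LeakyReLU}\left(a^{\top}\left[W h_i \,\|\, W h_j\right]\right)$, where $h_i$ and $h_j$ are node embeddings, $W$ is a learnable weight matrix, and $a$ is the attention vector.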

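- Score Normalization: The attention scores are normalized using softmax: $\alpha_{ij} = \frac{\exp(e_{ij})}{\sum_{k \in \mathcal{N}(i)} \exp(e_{ik})}$, where $e_{ij}$ is the attention score between nodes $i$ and $j$ and $\mathcal{N}(i)$ is the set of neighbors of node $i$. This ensures that the attention weights for each node sum to 1, improving stability.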

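- Feature Aggregation: The updated embedding for each node is computed as a weighted sum of its neighbors’ features: $h_i' = \sigma\left(\sum_{j \in \mathcal{N}(i)} \alpha_{ij} W h_j\right)$, where $\alpha_{ij}$ is the normalized attention weight between nodes $i$ and $j$, $W$ is the learnable weight matrix, and $\sigma$ is an activation function.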
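
The three steps above follow the standard single-head graph attention layer, which can be sketched as below. This is a minimal illustration, not the authors' code: the LeakyReLU slope of 0.2, the omission of a final nonlinearity, and all names and shapes are assumptions. The sketch also assumes every node has at least one neighbor (here guaranteed by self-loops).

```python
import numpy as np

def gat_layer(h, adj, weight, attn_vec, slope=0.2):
    """Single-head graph attention.

    h: (N, F) node embeddings; adj: (N, N), nonzero where an edge exists;
    weight: (F, D); attn_vec: (2 * D,). Returns (new embeddings, attention weights).
    """
    z = h @ weight                                 # projected embeddings, (N, D)
    d = z.shape[1]
    # a^T [z_i || z_j] splits into a term for node i plus a term for node j.
    scores = (z @ attn_vec[:d])[:, None] + (z @ attn_vec[d:])[None, :]
    scores = np.where(scores > 0, scores, slope * scores)   # LeakyReLU
    scores = np.where(adj > 0, scores, -np.inf)             # mask non-edges
    scores = scores - scores.max(axis=1, keepdims=True)     # numerical stability
    exp = np.exp(scores)
    alpha = exp / exp.sum(axis=1, keepdims=True)            # softmax over neighbors
    return alpha @ z, alpha                                 # weighted sum of neighbors

rng = np.random.default_rng(0)
adj = np.array([[1, 1, 0],
                [1, 1, 1],
                [0, 1, 1]], dtype=float)  # includes self-loops
h = rng.normal(size=(3, 4))
weight = rng.normal(size=(4, 2))
attn_vec = rng.normal(size=4)
h_new, alpha = gat_layer(h, adj, weight, attn_vec)
print(alpha.round(3))
```

Because each row of `alpha` is a probability distribution over a node's neighbors, it can be read directly as a measure of which variable relationships the model relies on.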

3.4. Temporal Dependency Modeling

3.5. Optimization

Algorithm 1 Graph Neural Networks with Temporal Dynamics

Input: Time-series data $X$, adjacency matrix $A$, window size $T$.

4. Experiments

4.1. Dataset and Experimental Setup

4.1.1. Dataset Description

- Voltage (V): Describes the input voltage of the system, ranging from 450 V to 550 V.

- Current (A): Represents the system load current, with values between 250 A and 350 A.

- Coolant Flow Rate (L/s): Measures the flow rate of the coolant, ranging from 2.0 L/s to 3.0 L/s.

- Ambient Temperature (°C): Reflects the external environmental influence on cooling efficiency, with values between 20 °C and 35 °C.

- Cooling Capacity (kW): The target variable, indicating the cooling power per unit time, ranging from 100 kW to 150 kW.

- Timestamp Alignment: Missing data were imputed using interpolation to ensure a complete time-series.

- Noise Removal: High-frequency noise was filtered using wavelet transformation.

- Normalization: All input variables were normalized to a common range to eliminate dimensional effects.

- Sliding Window Construction: A sliding window of size 24 (corresponding to 4 hours) was used to transform the time-series into input samples. Each sample contains 24 historical time steps as input and the next time step as the output cooling capacity.

- High-Load Scenario: Increased current values to 400 A to simulate extreme operating conditions.

- Low-Temperature Environment: Reduced ambient temperature to 10 °C to examine the cooling system under cold environments.

- Non-Periodic Disturbances: Introduced random disturbances at specific time intervals to test model stability.
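
The normalization and sliding-window steps listed above can be sketched as follows. This is an illustrative sketch only: it assumes min-max scaling to $[0, 1]$, and the function names and the random stand-in data are hypothetical. The window size of 24 with a one-step-ahead target follows the description in the text.

```python
import numpy as np

def min_max_scale(x: np.ndarray) -> np.ndarray:
    """Scale each column to [0, 1] (assumed range; columns must not be constant)."""
    lo, hi = x.min(axis=0), x.max(axis=0)
    return (x - lo) / (hi - lo)

def make_windows(features: np.ndarray, target: np.ndarray, window: int = 24):
    """Turn a series into supervised samples.

    features: (T, F); target: (T,).
    Sample i holds steps i .. i + window - 1 and predicts target[i + window].
    Returns X of shape (T - window, window, F) and y of shape (T - window,).
    """
    n = features.shape[0] - window
    x = np.stack([features[i:i + window] for i in range(n)])
    y = target[window:]
    return x, y

rng = np.random.default_rng(0)
raw = rng.normal(size=(100, 4))          # stand-in for the four input variables
cooling = rng.normal(size=100)           # stand-in for cooling capacity
scaled = min_max_scale(raw)
X, y = make_windows(scaled, cooling, window=24)
print(X.shape, y.shape)
```

In practice the scaling bounds would usually be computed on the training split only and reused for validation and test data, so that no information leaks from later samples.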

4.1.2. Experimental Setup

- Support Vector Machine (SVM): A traditional machine learning model for static prediction tasks [17].

- Gradient Boosting Machine (GBM): A boosting-based ensemble learning model [18].

- Long Short-Term Memory (LSTM): A deep learning model designed for sequential data [15].

- Graph Convolutional Network (GCN): A graph-based model for spatial dependency modeling [19].

- Temporal Graph Convolutional Network (TGCN): A hybrid model combining GCN and RNN for spatial-temporal modeling [20].

4.2. Evaluation Metrics

- Mean Squared Error (MSE): Measures the average squared difference between predicted and actual values.

- Root Mean Squared Error (RMSE): The square root of MSE, which expresses the typical prediction error in the same units as the target variable.

- Coefficient of Determination ($R^2$): Indicates the proportion of variance explained by the model [21].
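
The three metrics above follow their standard definitions and can be computed as in this short sketch. The function name `regression_metrics` and the toy values are illustrative and are not results from the paper.

```python
import numpy as np

def regression_metrics(y_true, y_pred) -> dict:
    """Return MSE, RMSE, and the coefficient of determination."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    residual = y_true - y_pred
    mse = float(np.mean(residual ** 2))
    rmse = float(np.sqrt(mse))
    ss_res = float(np.sum(residual ** 2))                    # unexplained variation
    ss_tot = float(np.sum((y_true - y_true.mean()) ** 2))    # total variation
    return {"mse": mse, "rmse": rmse, "r2": 1.0 - ss_res / ss_tot}

metrics = regression_metrics([1.0, 2.0, 3.0, 4.0], [1.5, 2.0, 2.5, 4.0])
print(metrics)  # mse = 0.125, rmse ≈ 0.3536, r2 = 0.9
```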

4.3. Results and Analysis

4.3.1. Overall Performance

4.3.2. In-Depth Analysis of Prediction Performance

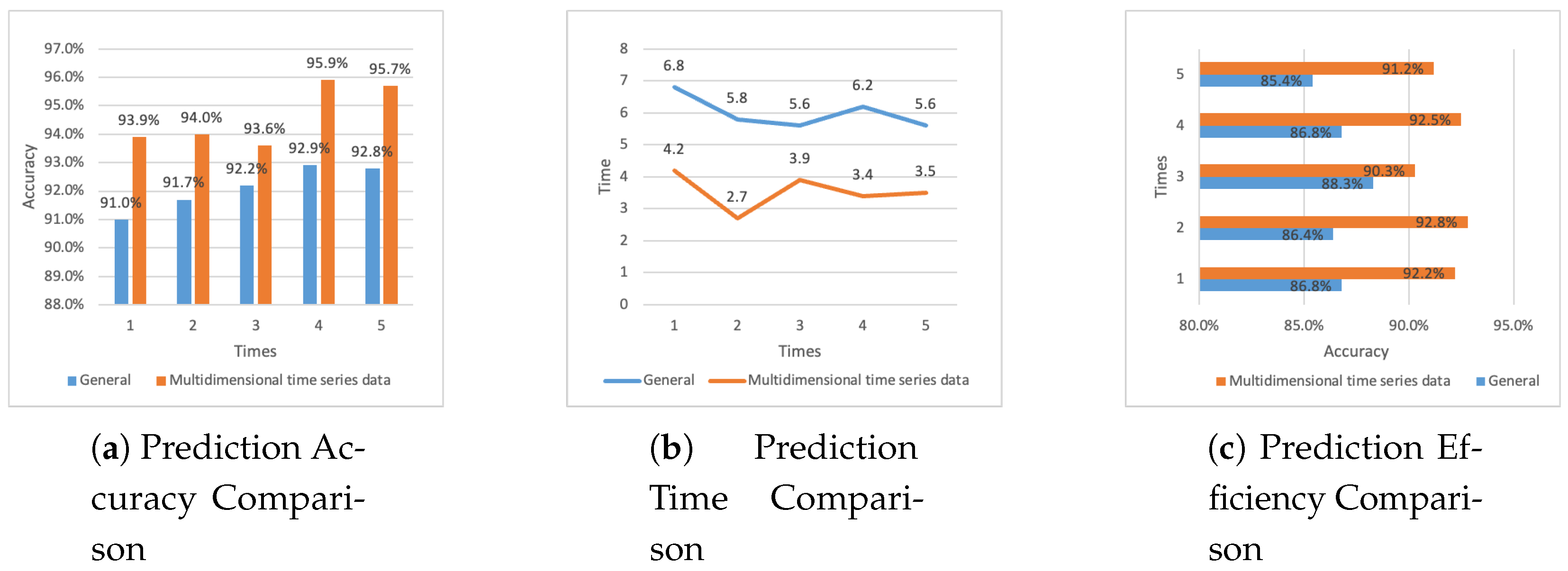

- The accuracy of the general method ranges from 91.0% to 92.9%, with an average accuracy of 92.12%.

- The accuracy of the proposed method ranges from 93.6% to 95.9%, with an average accuracy of 94.62%.

- The general method requires 5.6 to 6.8 seconds for prediction, with an average time of 6 seconds.

- The proposed method reduces the prediction time to a range of 2.7 to 4.2 seconds, with an average time of 3.54 seconds.

- The prediction efficiency of the general method ranges from 85.4% to 88.3%, with an average efficiency of 86.74%.

- The prediction efficiency of the proposed method ranges from 90.3% to 92.8%, with an average efficiency of 91.80%.
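
The averages reported above imply the following differences between the two methods. The snippet only restates the arithmetic on the reported figures; the variable names are illustrative.

```python
general = {"accuracy_pct": 92.12, "time_s": 6.0, "efficiency_pct": 86.74}
proposed = {"accuracy_pct": 94.62, "time_s": 3.54, "efficiency_pct": 91.80}

# Accuracy and efficiency are compared in percentage points.
accuracy_gain = round(proposed["accuracy_pct"] - general["accuracy_pct"], 2)
efficiency_gain = round(proposed["efficiency_pct"] - general["efficiency_pct"], 2)

# Prediction time is compared as a relative reduction.
time_reduction_pct = round(
    100.0 * (general["time_s"] - proposed["time_s"]) / general["time_s"], 1
)

print(accuracy_gain)       # 2.5 percentage points
print(efficiency_gain)     # 5.06 percentage points
print(time_reduction_pct)  # 41.0 percent shorter average prediction time
```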

5. Discussion

5.1. Advantages of the Proposed Method

5.2. Limitations and Future Work

- Dependence on Graph Structure: The performance of the model relies on the accuracy of the initial graph structure, which is currently constructed based on predefined rules (e.g., correlation coefficients). In scenarios where relationships among variables are complex or non-linear, the predefined graph may not fully capture their dependencies.

- Scalability: While the model demonstrates excellent performance on medium-sized datasets, its computational complexity increases with the number of variables and the length of time-series data. This may pose challenges when scaling to very large datasets or real-time applications.

- Data Availability: The proposed method requires a sufficient amount of high-quality labeled data for training. In industrial settings, such data may not always be available due to sensor limitations or data privacy concerns.

6. Conclusion

Acknowledgments

References

1. Zhang, W.; Yang, D.; Wang, H. Data-driven methods for predictive maintenance of industrial equipment: A survey. IEEE Systems Journal 2019, 13, 2213–2227.

2. Perera, A.; Wickramasinghe, P.; Nik, V.M.; Scartezzini, J.L. Machine learning methods to assist energy system optimization. Applied Energy 2019, 243, 191–205.

3. Cortes, C. Support-Vector Networks. Machine Learning 1995.

4. Friedman, J.H. Greedy function approximation: A gradient boosting machine. Annals of Statistics 2001, pp. 1189–1232.

5. Graves, A. Long short-term memory. Supervised Sequence Labelling with Recurrent Neural Networks 2012, pp. 37–45.

6. LeCun, Y.; Bengio, Y.; Hinton, G. Deep learning. Nature 2015, 521, 436–444.

7. Kipf, T.N.; Welling, M. Semi-supervised classification with graph convolutional networks. arXiv preprint arXiv:1609.02907 2016.

8. Yu, B.; Yin, H.; Zhu, Z. Spatio-temporal graph convolutional networks: A deep learning framework for traffic forecasting. arXiv preprint arXiv:1709.04875 2017.

9. Velickovic, P.; Cucurull, G.; Casanova, A.; Romero, A.; Lio, P.; Bengio, Y. Graph attention networks. arXiv preprint arXiv:1710.10903 2017.

10. Zhao, L.; Song, Y.; Zhang, C.; Liu, Y.; Wang, H.; Deng, M. T-GCN: A temporal graph convolutional network for traffic prediction. IEEE Transactions on Intelligent Transportation Systems 2020, 21, 3848–3858.

11. Hamilton, W.L.; Ying, R.; Leskovec, J. Inductive representation learning on large graphs. Advances in Neural Information Processing Systems 2017, 30, 1025–1035.

12. Kipf, T.N.; Welling, M. Semi-supervised classification with graph convolutional networks. arXiv preprint arXiv:1609.02907 2017.

13. He, K.; Zhang, X.; Ren, S.; Sun, J. Delving deep into rectifiers: Surpassing human-level performance on ImageNet classification. arXiv preprint arXiv:1502.01852 2015.

14. Velickovic, P.; Cucurull, G.; Casanova, A.; Romero, A.; Lio, P.; Bengio, Y. Graph attention networks. arXiv preprint arXiv:1710.10903 2018.

15. Hochreiter, S.; Schmidhuber, J. Long short-term memory. Neural Computation 1997, 9, 1735–1780.

16. Kingma, D.P.; Ba, J. Adam: A method for stochastic optimization. arXiv preprint arXiv:1412.6980 2014.

17. Vapnik, V.N. The nature of statistical learning theory. Springer 1995.

18. Friedman, J.H. Greedy function approximation: A gradient boosting machine. Annals of Statistics 2001, 29, 1189–1232.

19. Li, Y.; Tarlow, D.; Brockschmidt, M.; Zemel, R. Gated graph sequence neural networks. arXiv preprint arXiv:1511.05493 2015.

20. Yu, B.; Yin, H.; Zhu, Z. Spatio-temporal graph convolutional networks: A deep learning framework for traffic forecasting. arXiv preprint arXiv:1709.04875 2017.

21. Jean-Baptiste, B.; Johnson, E. Sliding window techniques for time-series data prediction. Journal of Time Series Analysis 2019, 42, 564–580.

| Model | MSE | RMSE | $R^2$ |
|---|---|---|---|
| SVM | 0.0085 | 0.0922 | 0.81 |
| GBM | 0.0062 | 0.0787 | 0.85 |
| LSTM | 0.0057 | 0.0755 | 0.87 |
| GCN | 0.0071 | 0.0843 | 0.83 |
| TGCN | 0.0049 | 0.0700 | 0.89 |
| Proposed Method | 0.0035 | 0.0592 | 0.92 |
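
As a quick consistency check on the table above, the snippet below confirms that each reported RMSE matches the square root of the reported MSE, and computes the relative MSE reduction of the proposed method against each baseline. It only restates arithmetic on the tabulated values; the variable names are illustrative.

```python
import math

# (MSE, RMSE) pairs copied from the results table.
results = {
    "SVM": (0.0085, 0.0922),
    "GBM": (0.0062, 0.0787),
    "LSTM": (0.0057, 0.0755),
    "GCN": (0.0071, 0.0843),
    "TGCN": (0.0049, 0.0700),
    "Proposed Method": (0.0035, 0.0592),
}

# RMSE should equal sqrt(MSE) up to the rounding used in the table.
rmse_consistent = all(
    abs(math.sqrt(mse) - rmse) < 1e-4 for mse, rmse in results.values()
)

# Relative MSE reduction of the proposed method versus each baseline, in percent.
proposed_mse = results["Proposed Method"][0]
mse_reduction_pct = {
    name: round(100.0 * (mse - proposed_mse) / mse, 1)
    for name, (mse, _) in results.items()
    if name != "Proposed Method"
}

print(rmse_consistent)
print(mse_reduction_pct)
```

Against the strongest baseline in the table (TGCN), the reduction in MSE is about 28.6%.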

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).