Submitted: 10 December 2024

Posted: 11 December 2024


Abstract

Keywords:

1. Introduction

2. Literature Review

3. Development of EnSmart

3.1. Conceptualization and Design

- Real-time generation of reading comprehension content.

- Automated grading of student responses.

- Provision of detailed, human-like feedback.

- Full integration with the Canvas LMS.

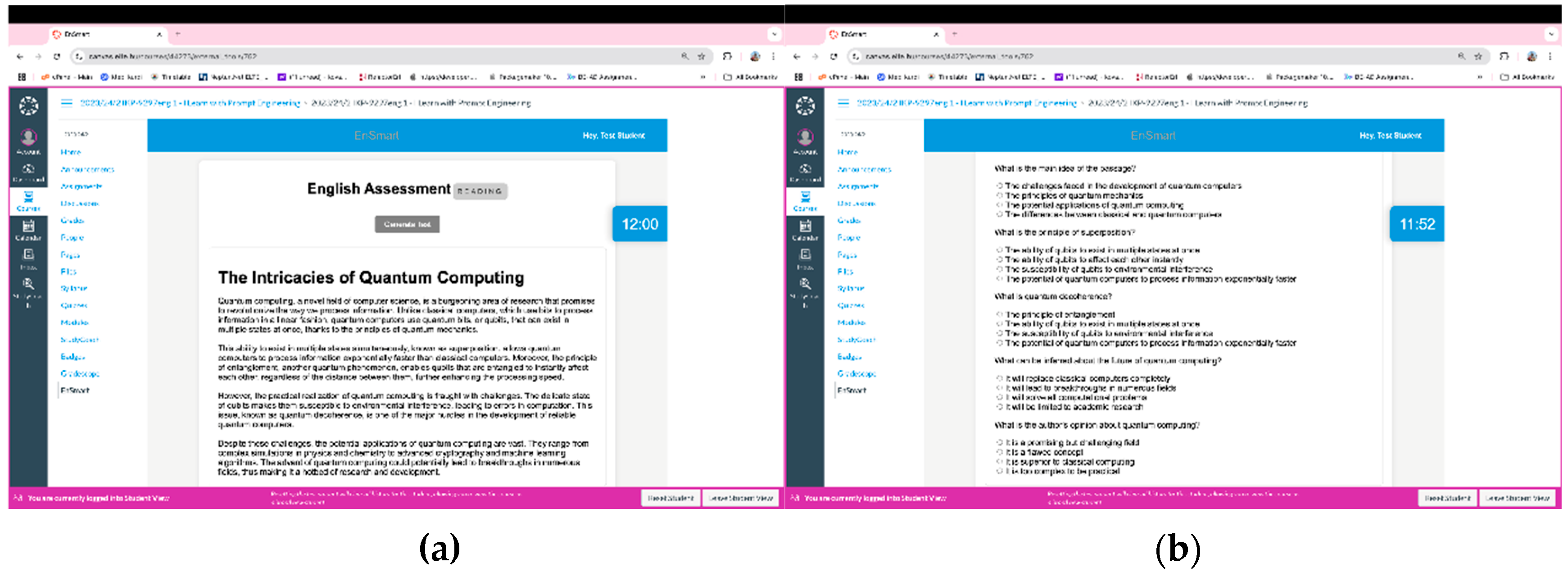

3.2. Integration with Generative AI – Test Content Generation

- Passage Generation: The AI is tasked with creating a 380–400-word passage that is both informative and engaging. The passage must cover a randomly chosen topic from an area of computer science, so that topics vary from test to test. Its purpose is to evaluate students' academic English reading comprehension effectively while exploring a unique aspect or application of computer science.

- Question Design: The prompt instructs the AI to craft questions that assess various reading comprehension skills, including identifying main ideas, understanding details, making inferences, and recognizing the writer's opinions.

- Structuring the Passage: The AI is prompted to provide a title for the passage and organize it into paragraphs, ensuring clear and logical presentation of information.

- Multiple-Choice Questions: Five multiple-choice questions are designed to assess students' in-depth comprehension of the academic English reading concepts demonstrated in the passage. The questions follow a format similar to IELTS reading test questions, so that they challenge students' understanding effectively.

- Output Format: The generated passage and questions must be returned as a JavaScript object. This structured output includes the attributes passage.name, passage.paragraphs[], and questions[], where each entry has a text, an options[] list, and an answer (for example, questions[0].text, questions[0].options[], and questions[0].answer), so that other components of the application can access and use the generated data easily.
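The prompt requirements and output structure listed above can be sketched as follows. This is a minimal illustration, not the EnSmart implementation: the prompt wording, the function names, the use of JSON as the exchange format, and the convention that answer holds the text of one option are all assumptions made for the example.

```python
import json


def build_generation_prompt(min_words=380, max_words=400, n_questions=5):
    """Assemble an illustrative prompt; the wording is hypothetical, not the paper's."""
    return (
        f"Write an informative, engaging passage of {min_words}-{max_words} words "
        "on a randomly chosen topic from an area of computer science. "
        "Give the passage a title and organize it into paragraphs. "
        f"Then write {n_questions} multiple-choice questions in the style of IELTS "
        "reading questions, covering main ideas, details, inferences, and the "
        "writer's opinions. Return only JSON with the keys passage.name, "
        "passage.paragraphs[], and questions[] (each with text, options[], answer)."
    )


def _require(condition, message):
    """Raise ValueError with a readable message when a structural check fails."""
    if not condition:
        raise ValueError(message)


def validate_test_content(raw_reply, n_questions=5):
    """Parse the model reply and check that it has the structure described above."""
    data = json.loads(raw_reply)
    _require(isinstance(data, dict), "reply must be an object")
    passage = data.get("passage")
    _require(isinstance(passage, dict), "passage is missing")
    name = passage.get("name")
    _require(isinstance(name, str) and name.strip() != "", "passage.name is missing")
    paragraphs = passage.get("paragraphs")
    _require(isinstance(paragraphs, list) and len(paragraphs) > 0,
             "passage.paragraphs[] is missing or empty")
    questions = data.get("questions")
    _require(isinstance(questions, list) and len(questions) == n_questions,
             f"expected {n_questions} questions")
    for q in questions:
        _require(isinstance(q, dict) and isinstance(q.get("text"), str),
                 "question text is missing")
        options = q.get("options")
        _require(isinstance(options, list) and len(options) >= 2,
                 "question options[] is missing")
        # Assumption: the answer is stored as the text of one of the options.
        _require(q.get("answer") in options, "answer is not one of the options")
    return data


def passage_word_count(data):
    """Count whitespace-separated words across all paragraphs."""
    return sum(len(p.split()) for p in data["passage"]["paragraphs"])
```

A caller would pass the model's reply to validate_test_content and then compare passage_word_count against the 380–400 word target before showing the test to students; a reply that fails either check could simply be regenerated.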

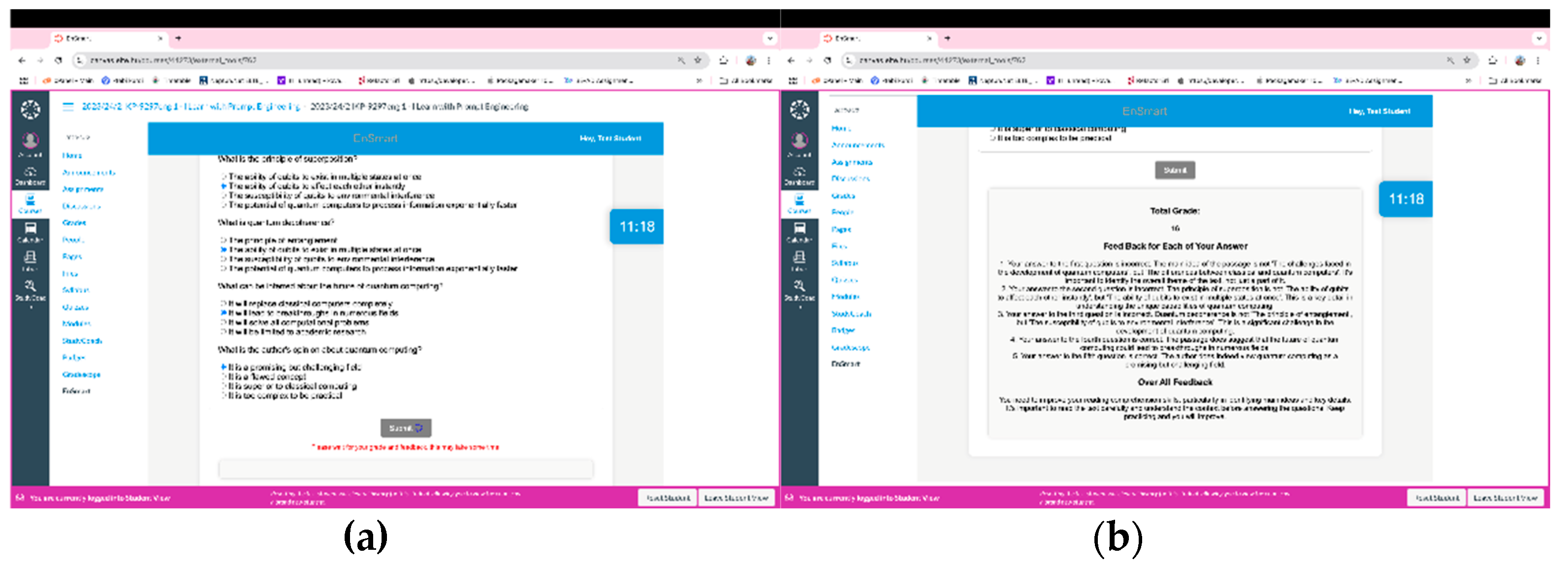

3.3. Integration with Generative AI – Automated Grading and Feedback System

- Accurate Grading: The system computes grades by comparing student responses to correct answers, adopting a binary representation where 1 denotes correctness and 0 signifies incorrectness. This methodical approach ensures precise evaluation of academic English reading comprehension, aligning with the overarching goal of the exercise.

- Scoring Criteria: Each question is worth 8 points, with full credit awarded for a correct response. This standardized scoring mechanism enhances transparency and consistency in the grading process, adhering closely to academic standards.

- Detailed Feedback: An integral component of the system is the generation of precise and detailed feedback for each student's response. Focused on academic English reading comprehension, the feedback provides nuanced assessments, emphasizing clarity and depth. By mimicking human-like insights, the feedback aids students in identifying and rectifying errors effectively, fostering a conducive learning environment.

- Structured Output: The system presents its assessment outcomes as a JavaScript object, as specified in the prompt. This structured format includes attributes such as assessment.feedback, assessment.totalGrade, and assessment.overallFeedback. It makes the generated output easier to capture and enables seamless integration with other components of the system.
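The scoring rule above can be illustrated with a short deterministic sketch. In EnSmart the grading and feedback are produced by the generative model; the code below only reproduces the arithmetic (1 for a correct answer, 0 for an incorrect one, 8 points per question, so 40 points for five questions). The function name and the shape of the feedback entries are assumptions, and the feedback text is left empty because it would come from the model.

```python
POINTS_PER_QUESTION = 8  # stated in the scoring criteria


def grade_responses(correct_answers, student_answers):
    """Return an assessment dict with binary marks and the total grade."""
    if len(correct_answers) != len(student_answers):
        raise ValueError("each question needs exactly one student answer")
    # Binary representation: 1 denotes a correct response, 0 an incorrect one.
    marks = [1 if given == expected else 0
             for given, expected in zip(student_answers, correct_answers)]
    feedback = [
        # Hypothetical entry shape; "comment" would hold model-generated text.
        {"question": index + 1, "correct": mark, "comment": ""}
        for index, mark in enumerate(marks)
    ]
    return {
        "assessment": {
            "feedback": feedback,
            "totalGrade": sum(marks) * POINTS_PER_QUESTION,
            "overallFeedback": "",
        }
    }


result = grade_responses(["B", "A", "D", "C", "A"], ["B", "C", "D", "C", "B"])
print(result["assessment"]["totalGrade"])  # 3 correct answers x 8 points = 24
```

Computing the total locally in this way also gives a simple cross-check on the totalGrade value returned by the model.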

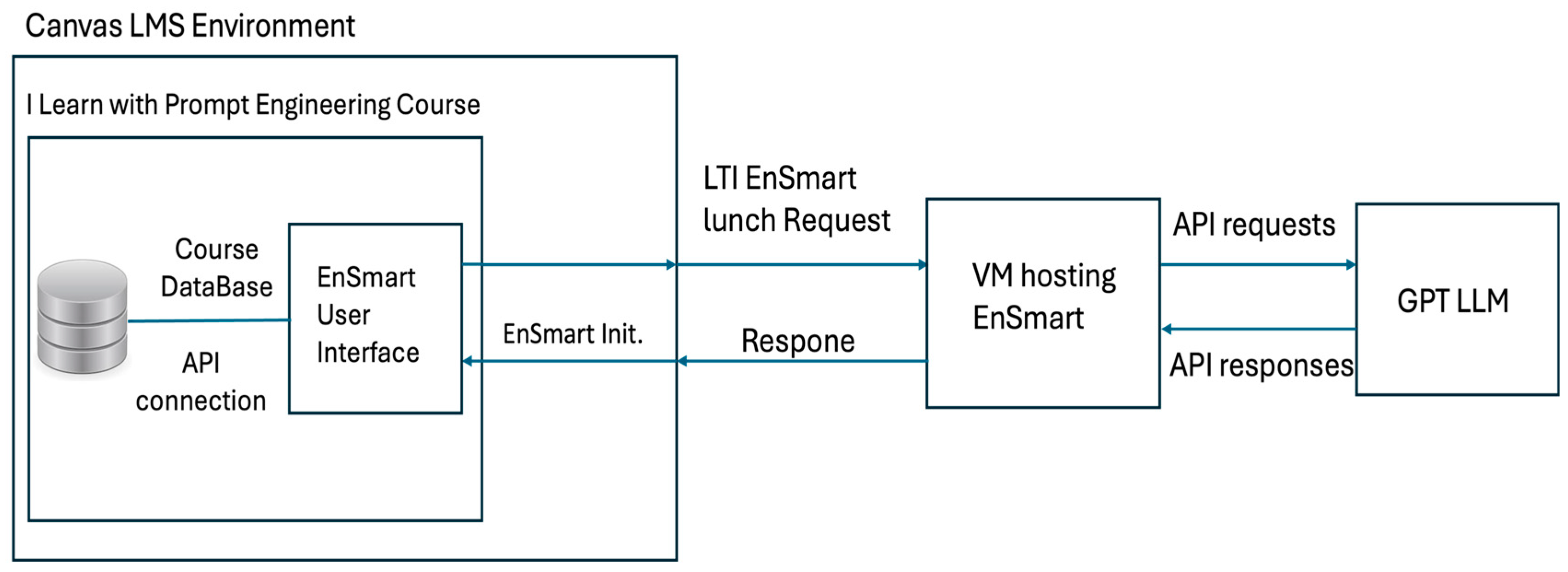

3.4. Integration with Canvas LMS

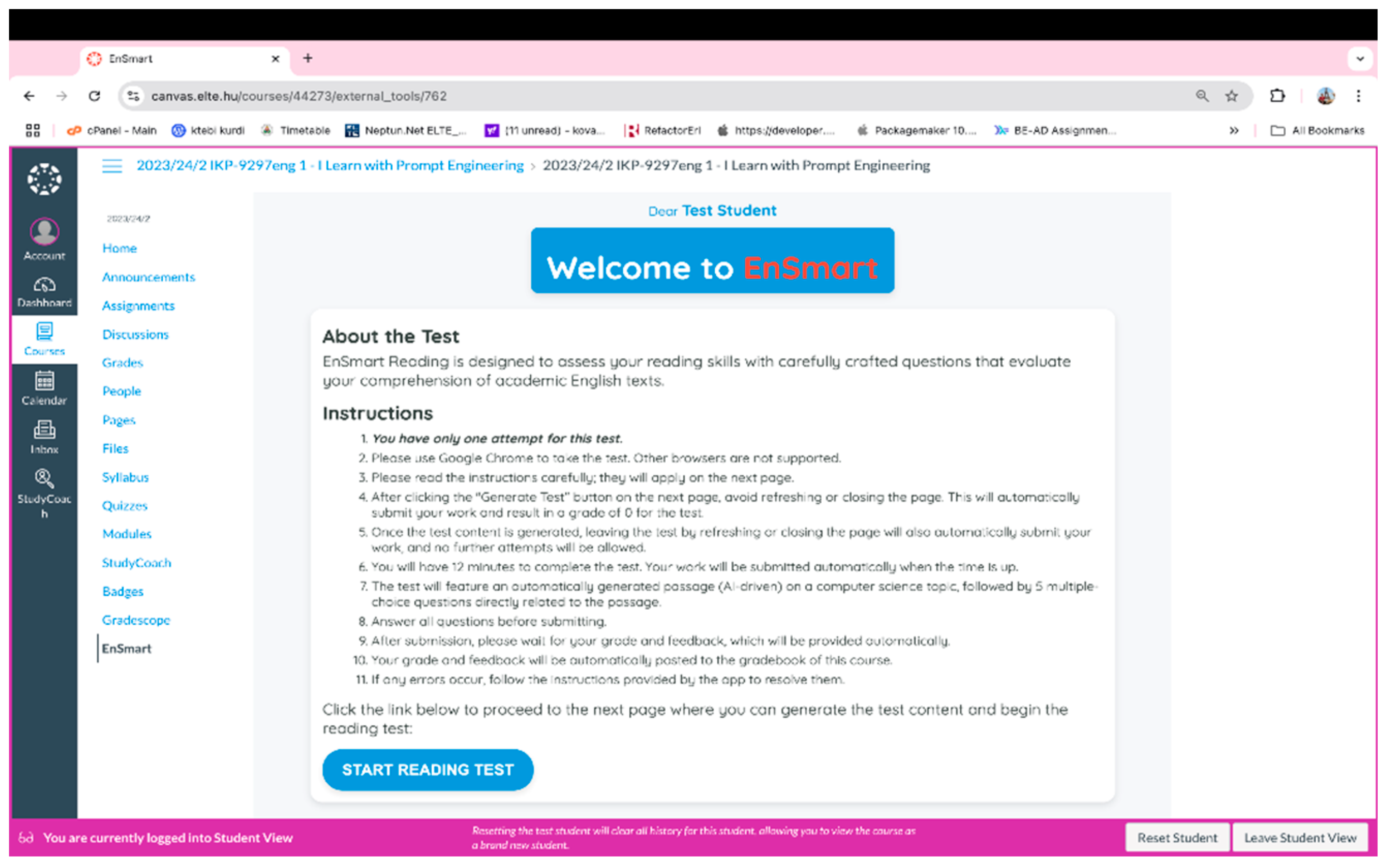

3.5. Usability Testing, Refinement, and Deployment

- Pilot Testing: Initial testing with a sample student to gather feedback on usability and interface design.

- Iterative Refinements: Adjustments based on test results to improve functionality and user experience.

4. Methodology

4.1. Course Design and Pedagogical Framework

- Promotes a student-centered approach to learning, emphasizing active engagement.

- Provides students with real-world contexts and scenarios, enabling them to apply knowledge and skills in practice.

- Encourages the development of research skills and complex thinking, allowing students to propose innovative solutions to current societal issues.

- Master prompt engineering, enabling students to interact skillfully with LLMs using advanced prompt techniques.

- Master purposeful Socratic conversations and the skill of building prompt constructs for self-learning in any discipline or for seeking guidance in any life situation. At the same time, caution students about LLM "hallucinations" and prepare them for fact-checking and critical thinking.

- Improve students' scientific English reading and writing skills, which are highly important for their advancement in Informatics studies and for the workforce.

- The learning methodology involved peer reviews and discussions in which students helped each other and shared good practices.

- Basic prompt patterns: question refinement, cognitive verifier, persona, audience and flipped interaction patterns.

- Extended prompting techniques: prompt expansion, chain of thought, guiding prompts, optimizing, and having GPT confront itself; the course also discussed problem formulation and the parallel between prompt engineering and programming.

- Prompt structures for language learning – assessment was done using the developed EnSmart app.

- Prompt structures for general learning and self-development of various work-life skills – assessment was done by developing a mega-prompt for a formulated relevant problem.
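The basic prompt patterns named in the list above can be illustrated as reusable templates. The sketch below is not taken from the course materials: the template wording, the dictionary name, and the helper function are assumptions, written only to show how each pattern changes the shape of a prompt.

```python
# Illustrative wording for each basic pattern; the exact phrasing is hypothetical.
PROMPT_PATTERNS = {
    "question_refinement": (
        "Whenever I ask a question about {topic}, suggest a better version "
        "of the question and ask me whether I want to use it instead."
    ),
    "cognitive_verifier": (
        "When I ask a question about {topic}, first generate additional "
        "questions that would help you answer it more accurately, then "
        "combine my answers into a final answer."
    ),
    "persona": "Act as {persona}. Respond about {topic} as {persona} would.",
    "audience": "Explain {topic} to me. Assume that I am {audience}.",
    "flipped_interaction": (
        "Ask me questions, one at a time, about {topic} until you have "
        "enough information to {goal}."
    ),
}


def render_prompt(pattern, **fields):
    """Fill the named template; raises KeyError for an unknown pattern or field."""
    return PROMPT_PATTERNS[pattern].format(**fields)


print(render_prompt("audience", topic="recursion",
                    audience="a first-year Informatics student"))
```

Treating patterns as parameterized templates also reflects the parallel between prompt engineering and programming mentioned above: the pattern plays the role of a function and the topic, persona, or goal the role of its arguments.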

4.2. Research Design

4.3. Participants

4.4. Data Collection Instruments

4.5. Survey Instrument

-

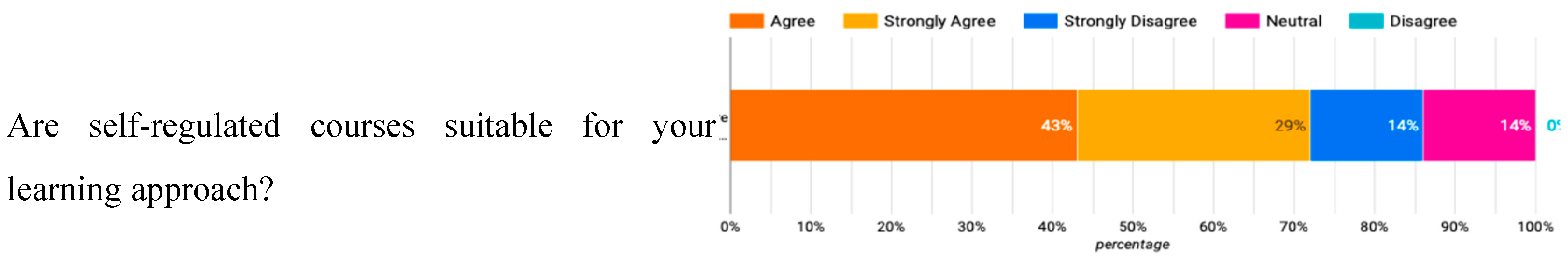

Opinions on Autonomous Learning and improving self-direct learningThis section included questions to gauge students' attitudes towards autonomous courses and their suitability for different learning approaches. Likert-scale items assessed the degree of agreement with statements about the preference for instructor-led versus self-regulated courses. Additionally, it evaluated students' perceptions of the appropriateness and effectiveness of course content (prompt engineering) on improving their self-direct learning with GAI tools.

-

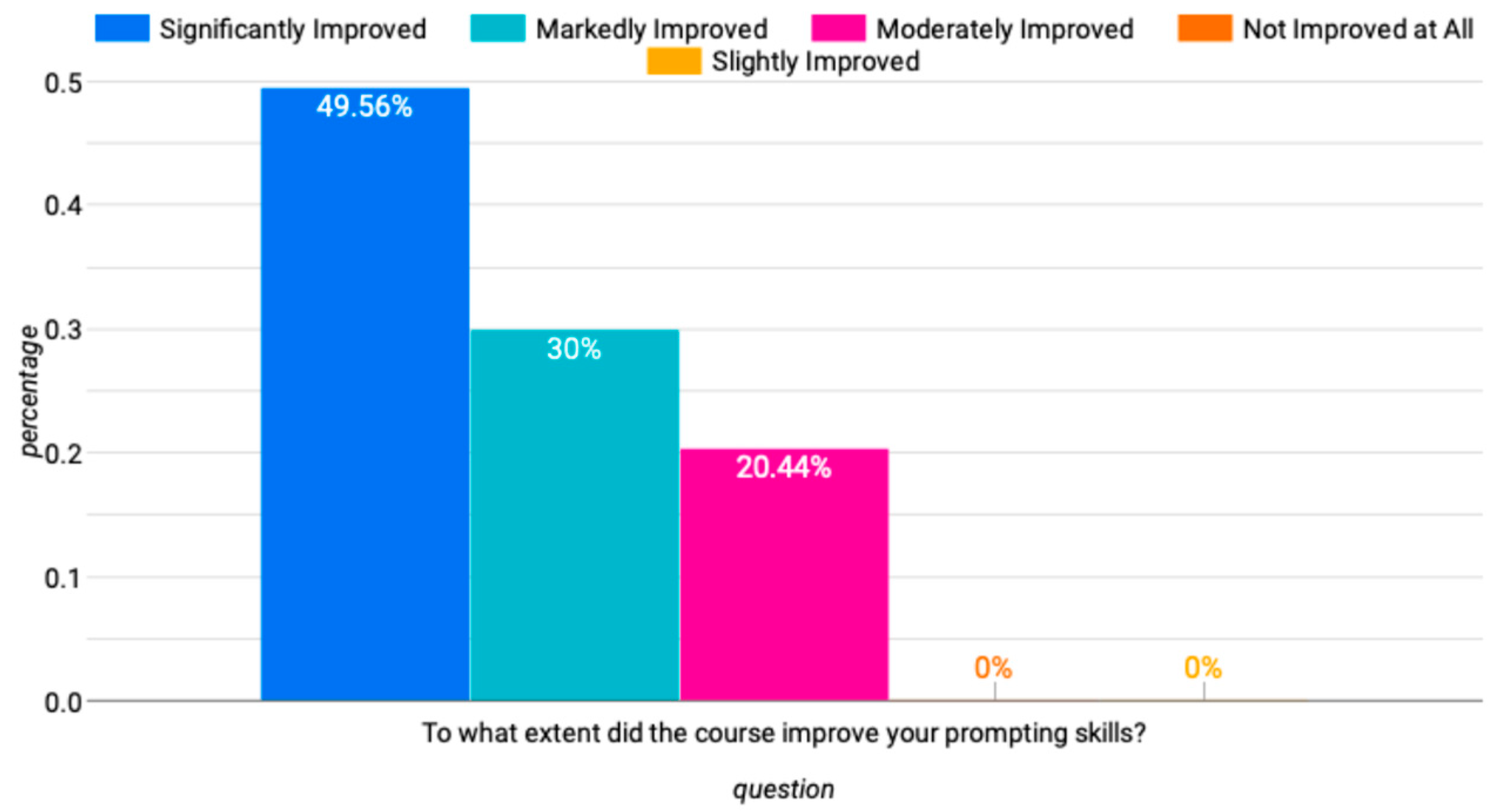

Enhancement of Prompting Skills and Reflections on Academic Language ProficiencyThis section assessed the extent to which the course improved students' prompting skills. It explored how the application of various prompt patterns enhanced students' ability to engage with generative AI tools and how these skills contributed to improvements in their academic language proficiency.

-

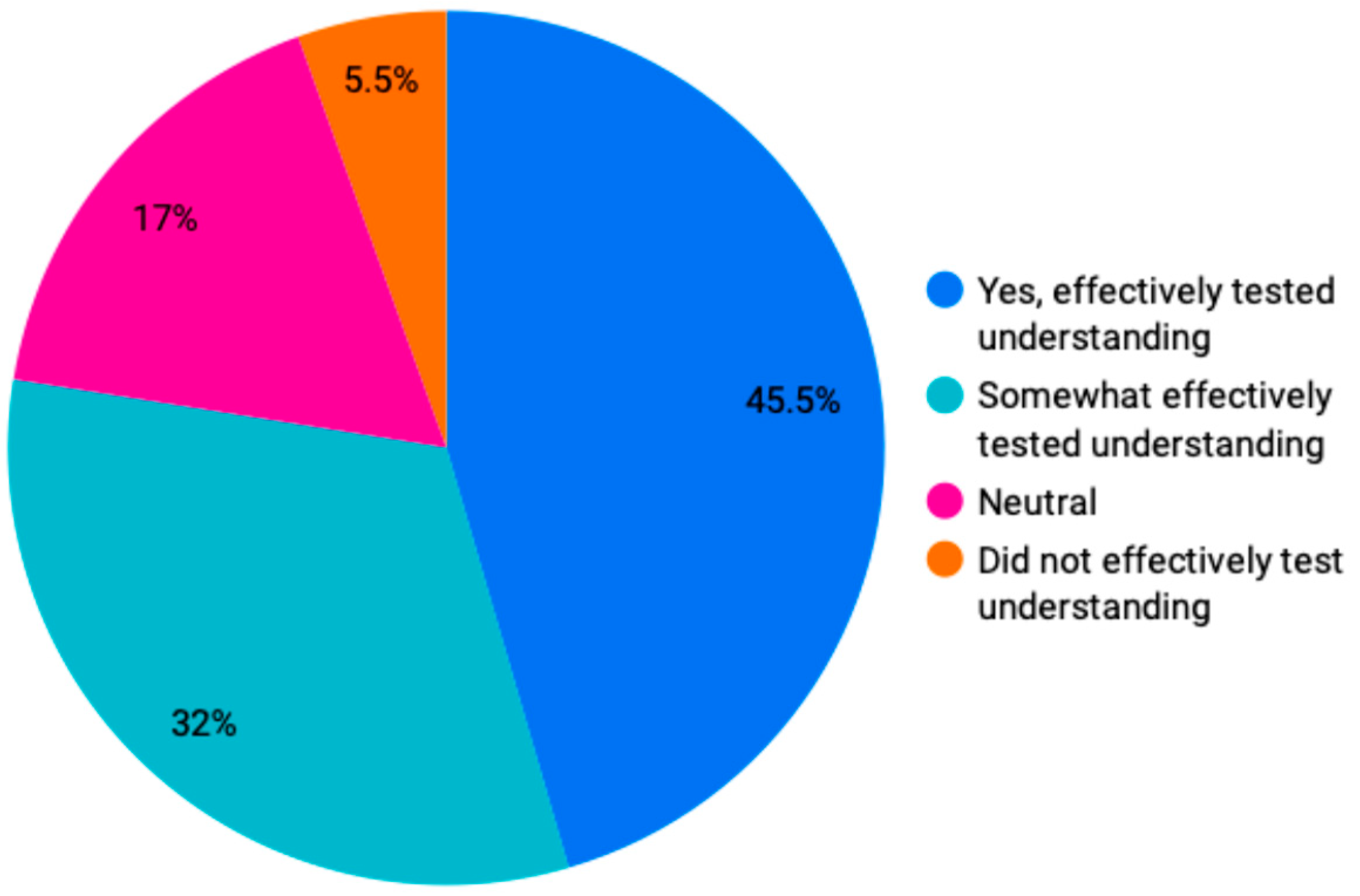

Effectiveness of AI-Driven Content and ToolsThis section included questions on the quality, complexity, and effectiveness of AI-generated passages and questions provided by the Ensmart tool. It aimed to evaluate how well these AI-driven elements supported academic English reading comprehension and the accuracy of auto-grading and feedback.

-

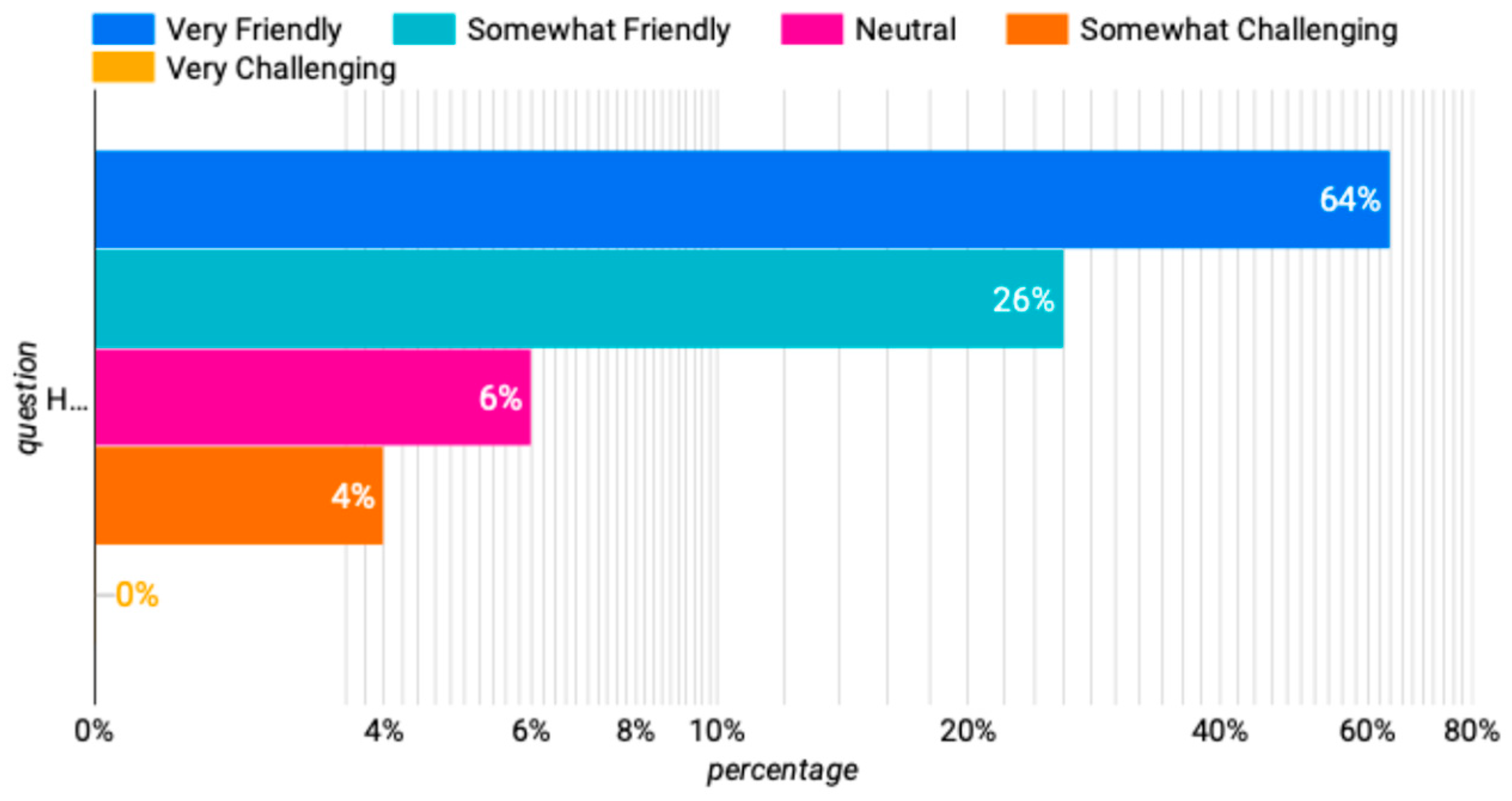

Interaction and Support MechanismsThis section explored the potential benefits of live consultations and peer discussions in enhancing knowledge transfer. It included questions about the reasons for utilizing or not utilizing discussion areas in the LMS, as well as the perceived impact of these interactions on learning outcomes.

- Overall Satisfaction and Recommendations: The final section included a Net Promoter Score (NPS) question to determine the likelihood of students recommending the course to their peers. It also featured open-ended questions to gather additional feedback on the EnSmart tool and the overall reliance on AI-driven content, providing insights into areas for improvement and students' suggestions for future enhancements.
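For reference, the NPS is conventionally computed from 0–10 ratings as the percentage of promoters (ratings of 9 or 10) minus the percentage of detractors (ratings of 0 to 6). The sketch below assumes that the survey question used this standard 0–10 scale; the function name is hypothetical and the ratings are invented for illustration, not data from this study.

```python
def net_promoter_score(ratings):
    """Return the NPS (from -100 to 100) for a list of 0-10 ratings."""
    if not ratings:
        raise ValueError("at least one rating is required")
    if any(r < 0 or r > 10 for r in ratings):
        raise ValueError("ratings must lie between 0 and 10")
    promoters = sum(1 for r in ratings if r >= 9)   # ratings of 9 or 10
    detractors = sum(1 for r in ratings if r <= 6)  # ratings of 0 to 6
    # Passives (7 or 8) count towards the total but towards neither group.
    return 100.0 * (promoters - detractors) / len(ratings)


# Invented ratings: 4 promoters, 3 passives, 3 detractors out of 10 responses.
example_ratings = [10, 9, 8, 7, 6, 3, 10, 9, 5, 8]
print(net_promoter_score(example_ratings))  # (4 - 3) / 10 * 100 = 10.0
```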

5. Data Analysis and Results

5.1. Opinions on Autonomous Learning and Improving Self-Directed Learning

5.2. Improvement in Prompting Skills and Reflection on English Proficiency Improvement

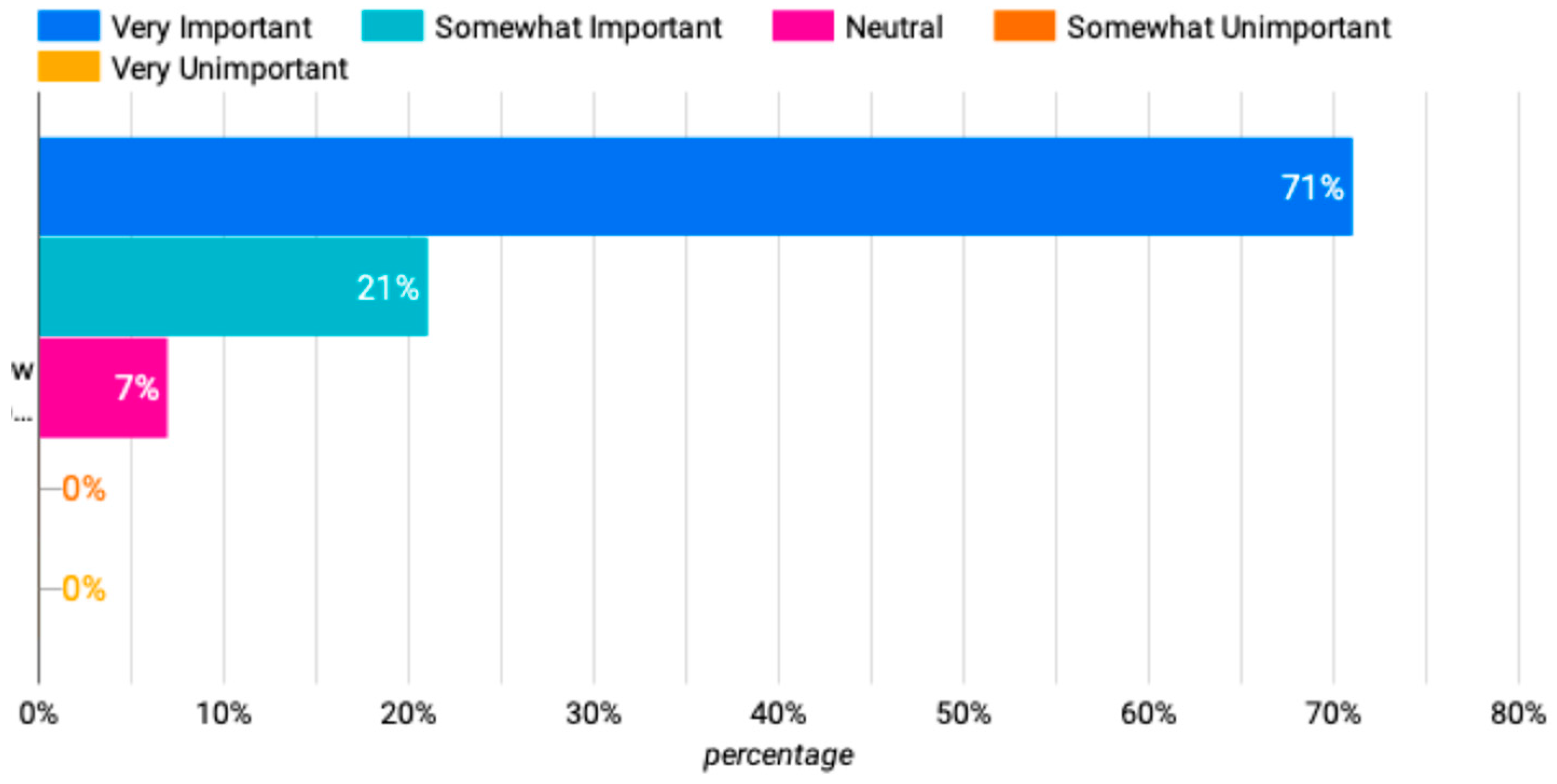

5.3. Effectiveness of AI-Driven Content and EnSmart

5.4. Interaction and Support Mechanisms

5.5. Overall Satisfaction and Recommendations

6. Conclusions, Limitations, and Future Works

6.1. Conclusions

6.2. Limitations

6.3. Future Works

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).