Submitted: 27 November 2024

Posted: 28 November 2024


Abstract

Keywords:

1. Introduction

- To our knowledge, this is the first survey that covers deep learning approaches for 3D instance and semantic segmentation across a variety of 3D data representations, including RGB-D, projected images, voxels, point clouds, and meshes.

- Several families of 3D instance and semantic segmentation algorithms are evaluated in terms of their relative advantages and drawbacks.

- Unlike previous surveys, we concentrate on deep learning-based algorithms for 3D instance and semantic segmentation, as well as their common application domains.

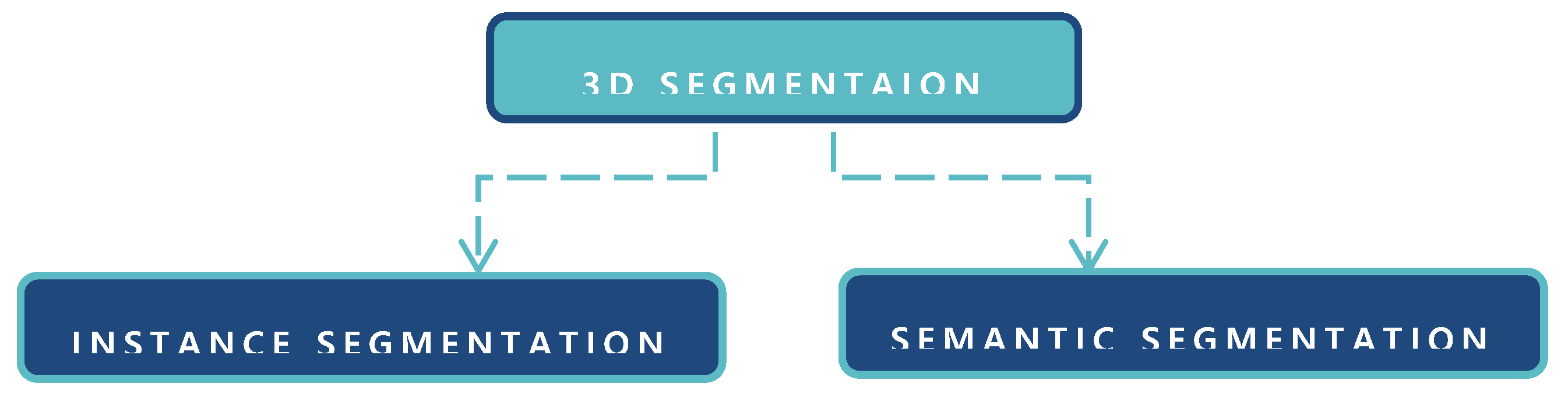

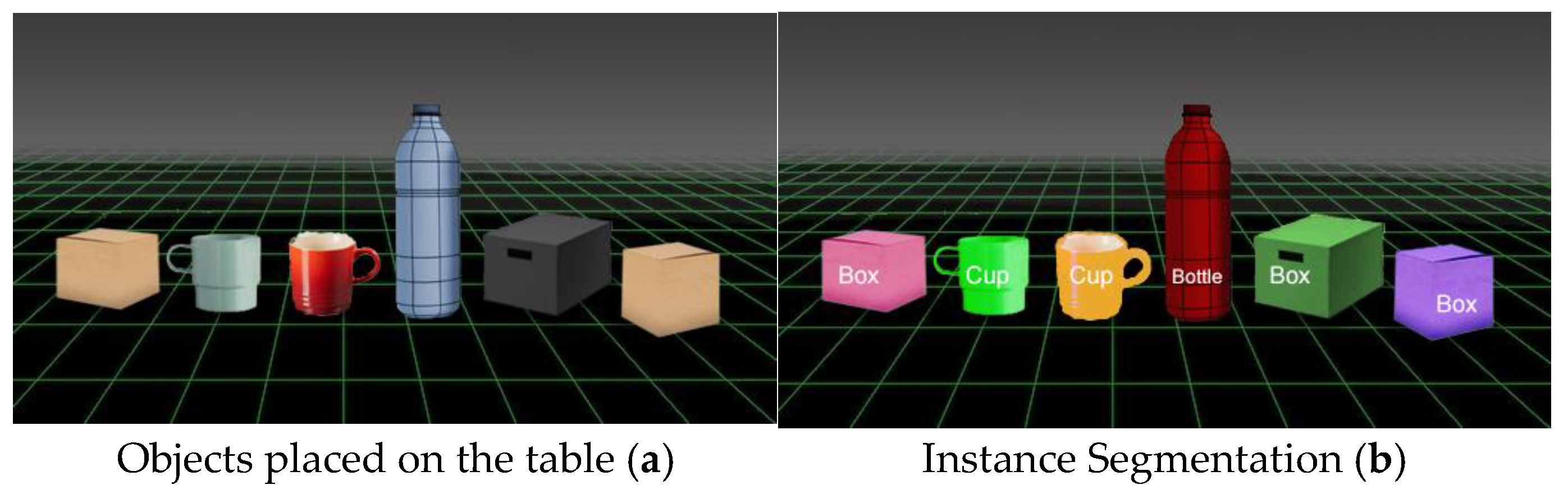

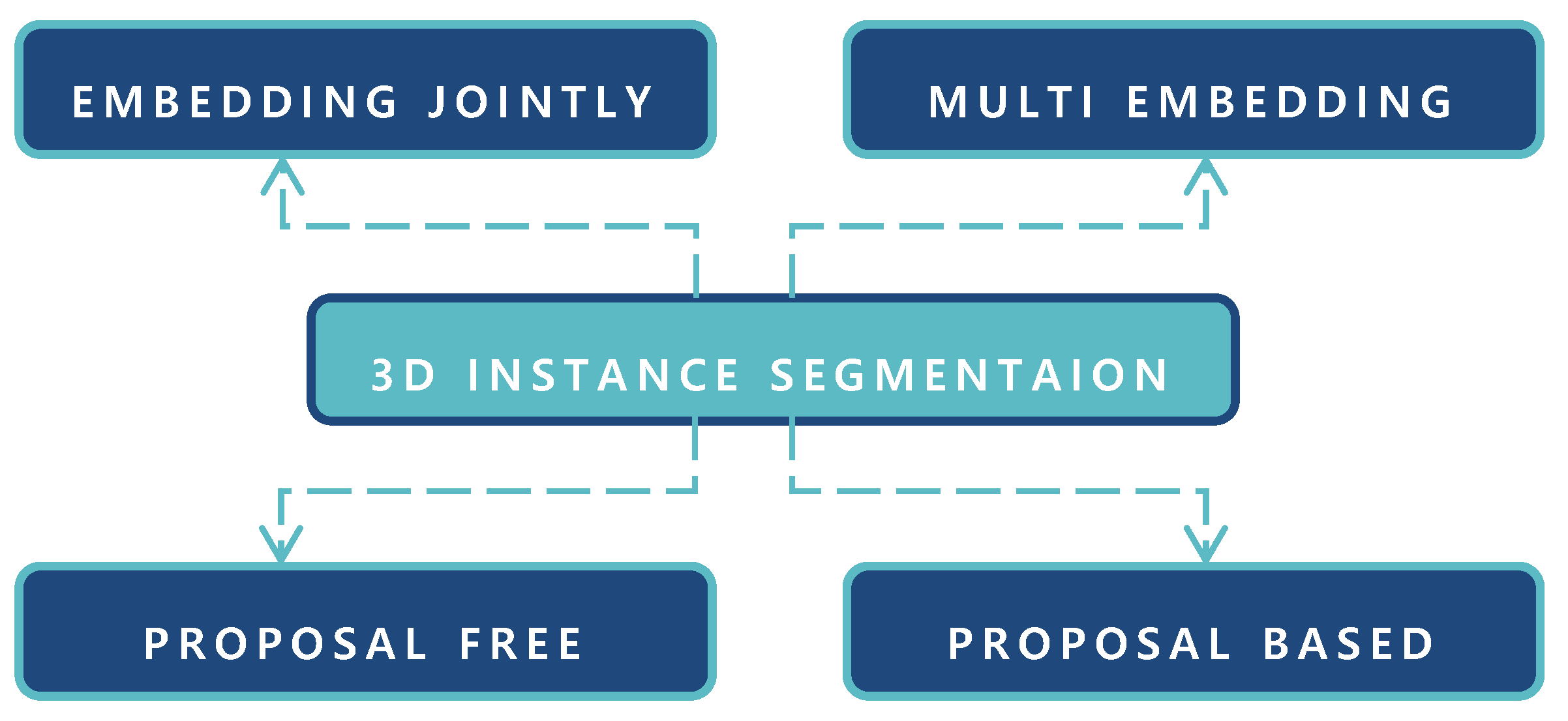

2. 3D Instance Segmentation

2.1. Proposal Based Segmentation

2.1.1. Detection Based Segmentation

2.1.2. Detection Free Segmentation

2.1.3. Proposal Free Segmentation

2.2. Multi Embedding Learning

2.3. 3D Embedding Propagation Method

2.4. Multi Task Jointly Learning

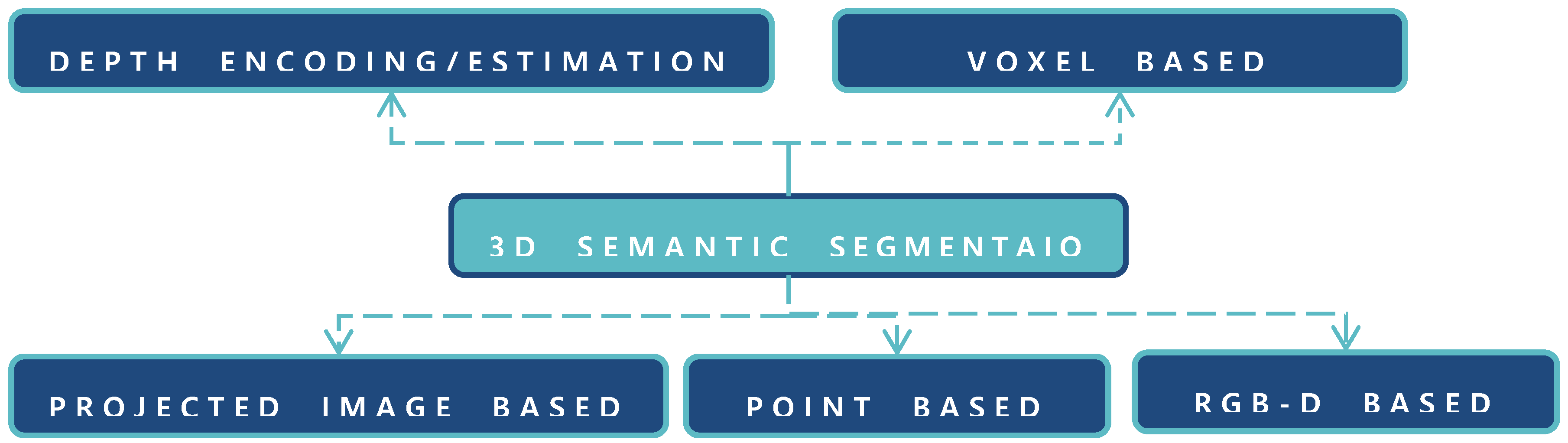

3. 3D Semantic Segmentation

3.1. RGB-D Based Segmentation

3.1.1. Depth Estimation and Encoding

3.1.2. Multi-Scale Networks

3.1.3. Neural Network Based Segmentation

3.1.4. Data/Feature/Score Fusion

3.2. Projected Images Based Segmentation

3.2.1. Multiview Image Based Segmentation

3.2.2. Spherical Image Based Segmentation

3.3. Point Based Semantic Segmentation

4. Benchmark Datasets for 3D Segmentation

| Dataset | Classes | Sensors | Scenes - Segmentation | Points | Feature Representation |
|---|---|---|---|---|---|
| ShapeNet | 55 | - | Outdoor, Indoor Instance seg. | 51,300 | XYZ, Propagating human label to shapes |
| ScanNet | 21 | RGB-D | Indoor - Instance seg. | 242 | XYZ, RGB, label |
| S3DIS | 13 | Structured, Light | Indoor - Instance seg. | 215 | XYZ, RGB, Normalized coordinates |
| PSB | 19 | Amazon’s Mechanical Turk | Indoor - Instance seg. | 380 | XYZ, segmentation, class |
| COSEG | 11 | - | Indoor - Instance seg. | 1,090 | Supervised, semi-supervised |
| KITTI | 28 | MLS | Outdoor - Semantic seg. | 1,799 | XYZ, reflectance, label, class |
| Semantic3D.net | 8 | TLS | Outdoor - Semantic seg. | 4,009 | XYZ, intensity, RGB |
| Paris-Lille-3D | 50 | MLS | Outdoor - Semantic seg. | 143 | XYZ, GPS Time, Label, Class |
| NYUv1 & 2 | 726 | Microsoft Kinect v1 | Indoor - Semantic seg. | 2,347 | 2D LabelMe-style annotation, classes |
| SUN RGB-D | 47 | RealSense, Xtion, MKv1/2 | Indoor - Semantic seg. | 10,355 | 2D/3D polygons + 3D bounding box |
| Semantic3D | 8 | Terrestrial Laser scanner | Outdoor - Semantic seg. | 1,660 | XYZ, three baseline methods |
| PL3D | 50 | Velodyne HDL-32E LiDAR | Outdoor - Semantic seg. | 143.1M | Human labeling, Annotation, Class |
| Matterport3D | 90 | Matterport camera | Indoor - Semantic seg. | 194.4K | Hierarchical labeling, Annotation, Class |
| HoME & House3D | 84 | Planner5D platform | Indoor - Semantic seg. | 45,622 | SSCNet+3 ways, test description |
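The datasets above are annotated either for semantic segmentation (one class label per point) or for instance segmentation (an additional identifier separating objects of the same class). The following is a minimal Python sketch of that difference on a toy point cloud with XYZ, RGB, and label fields. The `LabeledPoint` type, its field names, and the `group_by` helper are hypothetical illustrations and do not correspond to the file format of any listed dataset.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class LabeledPoint:
    """One point of a hypothetical annotated scan (illustrative fields only)."""
    xyz: tuple            # (x, y, z) coordinates
    rgb: tuple            # (r, g, b) colour, 0-255
    semantic_label: int   # class id shared by all objects of one category
    instance_id: int      # id that separates individual objects


def group_by(points, key):
    """Map each distinct key value to the indices of the points carrying it."""
    groups = defaultdict(list)
    for index, point in enumerate(points):
        groups[key(point)].append(index)
    return dict(groups)


# Toy scan: two objects of class 0 and one object of class 1.
scan = [
    LabeledPoint((0.0, 0.0, 0.0), (200, 10, 10), 0, 0),
    LabeledPoint((0.1, 0.0, 0.0), (198, 12, 11), 0, 0),
    LabeledPoint((2.0, 0.0, 0.0), (10, 200, 10), 0, 1),
    LabeledPoint((5.0, 1.0, 0.7), (90, 60, 30), 1, 2),
]

# Semantic segmentation target: points grouped by class only.
semantic_groups = group_by(scan, lambda p: p.semantic_label)

# Instance segmentation target: points grouped per individual object.
instance_groups = group_by(scan, lambda p: p.instance_id)

print(semantic_groups)
print(instance_groups)
```

In this toy scan the two class-0 objects fall into a single semantic group but into two separate instance groups, which is the distinction the "Scenes - Segmentation" column refers to.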

5. Discussion and Challenges

6. Conclusions

Funding

Conflicts of Interest


Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).