Submitted: 29 October 2024

Posted: 30 October 2024


Abstract

Keywords:

1. Introduction

1.1. Background and Motivation

1.2. Dynamic Stride Adjustment for Fractional Strides

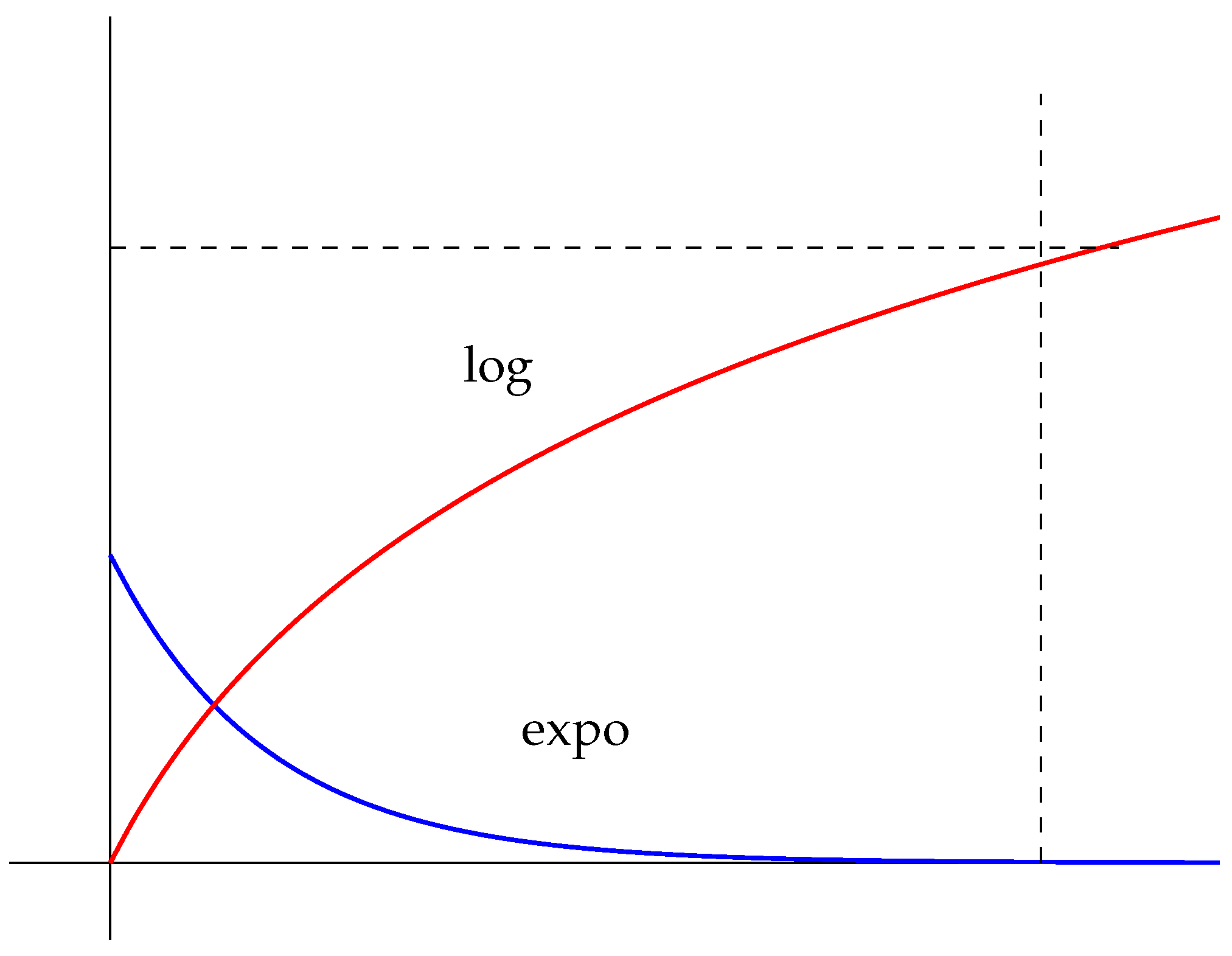

- Exponential decay, which reduces the stride rapidly at first, capturing fine details earlier in the network’s operation.

- Logarithmic decay, which offers a slower, more gradual decrease, allowing for more controlled refinement of the network’s feature extraction capabilities over time.

1.3. Binary Neural Networks and Applications

1.4. Contribution and Paper Outline

- A detailed formulation of exponential and logarithmic stride decay functions to simulate fractional strides in binary ANNs.

- An attention mechanism integrated into the network to emphasize important spatial regions during feature extraction, enhancing the quality of the output feature maps.

- A comparative analysis of the two decay methods, exploring their trade-offs in terms of resolution growth and computational overhead.

- Application insights into areas such as computer vision tasks and autonomous spacecraft systems, highlighting the flexibility and adaptability of dynamic stride adjustment for energy-efficient ANN design.

2. Literature Review

3. Mathematical Foundation of Dynamic Stride Adjustment

3.1 Stride in Convolutional Neural Networks (CNNs)

3.2 Dynamic Stride Adjustment

3.2.1 Exponential Decay Function

- is the stride at step n.

- is a decay constant controlling how quickly the stride decreases.

- ensures that the stride does not fall below a certain threshold.
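As a hedged illustration of the three quantities listed above, the following Python sketch assumes a clamped form, s(n) = max(s_min, s0 · e^(−λn)), in which the stride starts at s0, shrinks at a rate set by the decay constant λ and never falls below the threshold s_min. The function name, parameter names and default values are hypothetical choices for illustration, not taken from the paper.

```python
import math


def exponential_stride(n: int, s0: float = 4.0, decay: float = 0.3,
                       s_min: float = 1.0) -> float:
    """Stride at step n under an assumed clamped exponential decay.

    s(n) = max(s_min, s0 * exp(-decay * n)); s0, decay and s_min are
    hypothetical parameter names, not the paper's notation.
    """
    if n < 0:
        raise ValueError("step n must be non-negative")
    return max(s_min, s0 * math.exp(-decay * n))


if __name__ == "__main__":
    # Print the stride at the same step indices used in the result tables.
    for step in (1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 15, 20, 25, 30):
        print(step, round(exponential_stride(step), 3))
```

With these defaults the clamp becomes active once 4 · e^(−0.3n) < 1, that is for n > ln(4)/0.3 ≈ 4.6, so the stride sits at s_min from step 5 onward. This reproduces the rapid early reduction attributed to exponential decay.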

3.2.2 Logarithmic Decay Function

- controls the rate of decrease.

- is a scaling factor.

- ensures the stride does not drop below a threshold.
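As a hedged illustration of the parameters listed above, a minimal Python sketch of the logarithmic schedule assumes s(n) = max(s_min, s0 − α · ln(1 + βn)), where β controls the rate of decrease, α is the scaling factor and s_min is the threshold. The functional form, names and defaults are assumptions made here for illustration.

```python
import math


def logarithmic_stride(n: int, s0: float = 4.0, alpha: float = 1.0,
                       beta: float = 0.5, s_min: float = 1.0) -> float:
    """Stride at step n under an assumed clamped logarithmic decay.

    s(n) = max(s_min, s0 - alpha * ln(1 + beta * n)); beta sets the rate of
    decrease, alpha is the scaling factor and s_min the threshold.
    All names and defaults are hypothetical.
    """
    if n < 0:
        raise ValueError("step n must be non-negative")
    # log1p(x) computes ln(1 + x) accurately for small x.
    return max(s_min, s0 - alpha * math.log1p(beta * n))


if __name__ == "__main__":
    # Print the stride at the same step indices used in the result tables.
    for step in (1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 15, 20, 25, 30):
        print(step, round(logarithmic_stride(step), 3))
```

With these defaults the stride is still about 1.23 at step 30 (4 − ln 16) and reaches the floor only near n ≈ 2(e³ − 1) ≈ 38, whereas a clamped exponential 4 · e^(−0.3n) with the same floor reaches it by step 5. This illustrates the slower, more gradual decrease.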

3.3 Indefinite Integral Interpretation

3.4 Simulating Fractional Strides

3.5 Attention Mechanism

4. Logarithmic Stride Function Test

4.1. Setup and Parameters

4.2. Results

4.3. Analysis

5. Exponential Stride Function Test

5.1. Setup and Parameters

5.2. Results

5.3. Analysis

6. Discussion

6.1. Logarithmic vs. Exponential Stride Behavior

- Logarithmic Decay: In the logarithmic stride function, the output size grows gradually, with more significant changes occurring in the early steps and slower growth as the stride approaches its minimum value. This behavior is advantageous for tasks that require a more conservative, fine-grained focus over time. The logarithmic function allows the network to slowly zoom in on details, which may be beneficial in scenarios where gradual feature extraction is crucial, such as in progressive learning or multi-stage image processing tasks.

- Exponential Decay: The exponential stride function, in contrast, results in rapid growth in output size, particularly in the early steps. This function quickly increases the resolution, allowing the model to focus on finer details much faster. While this is useful for tasks requiring rapid feature refinement, the output size stabilizes once the stride reaches its minimum threshold. The exponential function is well suited to applications where a fast and aggressive resolution increase is needed, but it also shows diminishing returns in detail extraction because it quickly reaches the maximum possible output size.
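The link between a shrinking stride and a growing output size can be made concrete with the standard convolution output-size formula, ⌊(W + 2P − K)/S⌋ + 1. The Python sketch below assumes a real-valued stride S is simply substituted into that formula; how the paper actually realizes fractional strides (Section 3.4) may differ, and the input width, kernel size, padding and both stride schedules are arbitrary example values.

```python
import math


def conv_output_size(width: int, kernel: int, stride: float, padding: int = 0) -> int:
    """Spatial output size floor((W + 2P - K) / S) + 1, allowing a real-valued stride S."""
    if stride <= 0:
        raise ValueError("stride must be positive")
    if width + 2 * padding < kernel:
        raise ValueError("kernel is larger than the padded input")
    return math.floor((width + 2 * padding - kernel) / stride) + 1


if __name__ == "__main__":
    # Assumed clamped exponential schedule: max(1, 4 * exp(-0.3 n)).
    exp_strides = [max(1.0, 4.0 * math.exp(-0.3 * n)) for n in range(8)]
    # Assumed clamped logarithmic schedule: max(1, 4 - ln(1 + 0.5 n)).
    log_strides = [max(1.0, 4.0 - math.log1p(0.5 * n)) for n in range(8)]
    for n, (s_exp, s_log) in enumerate(zip(exp_strides, log_strides)):
        print(n,
              conv_output_size(64, 3, s_exp, padding=1),
              conv_output_size(64, 3, s_log, padding=1))
```

Once a schedule is clamped at a stride of 1, the output size stops changing (64 for this 64-wide input with a 3-wide kernel and padding 1), which corresponds to the plateau described for the exponential schedule.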

6.2. Implications for Artificial Neural Network Design

- Hybrid Approaches: Future studies could explore hybrid stride functions, which combine both logarithmic and exponential behaviors to allow for both rapid and gradual resolution increases in different stages of the model’s operation. For example, an initial exponential stride decay could enable fast detail extraction, followed by a logarithmic decay for finer adjustments.

- Computational Efficiency: One of the challenges highlighted by the experiments is the computational cost associated with increased output sizes, particularly in the exponential model where the output grows rapidly. Addressing this issue may involve introducing adaptive mechanisms that automatically adjust the stride based on the task complexity or computational resource availability. Such mechanisms could ensure that the model focuses on important features without overburdening computational resources.
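The hybrid schedule proposed above can be sketched in Python as a piecewise function: exponential decay up to a hypothetical switch step, then logarithmic decay starting from the stride reached at that step. The switch point, the functional forms and all defaults are assumptions for illustration and are not taken from the paper.

```python
import math


def hybrid_stride(n: int, s0: float = 4.0, decay: float = 0.3, switch: int = 2,
                  alpha: float = 0.5, beta: float = 0.5, s_min: float = 1.0) -> float:
    """Exponential decay up to step `switch`, then logarithmic decay from that point.

    The logarithmic phase starts from the stride reached at `switch`, so the
    schedule is continuous there. All parameter names and defaults are hypothetical.
    """
    if n < 0 or switch < 0:
        raise ValueError("n and switch must be non-negative")
    if n <= switch:
        # Phase 1: fast exponential reduction of the stride.
        return max(s_min, s0 * math.exp(-decay * n))
    # Phase 2: gradual logarithmic refinement, continuing from the switch-point stride.
    s_switch = max(s_min, s0 * math.exp(-decay * switch))
    return max(s_min, s_switch - alpha * math.log1p(beta * (n - switch)))


if __name__ == "__main__":
    print([round(hybrid_stride(k), 3) for k in range(0, 31, 5)])
```

Starting the logarithmic phase from the stride at the switch step keeps the schedule continuous and non-increasing, so the output size never jumps abruptly at the transition.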

6.3. Enhancing Attention Mechanisms

- Improved Feature Prioritization: With dynamic strides, attention mechanisms can prioritize features at varying resolutions, making the network more adaptable in environments where both coarse and fine details are important. For example, in object detection, the attention mechanism can first focus on general object boundaries and then progressively refine its focus to detect small details such as texture or edges.

- Multi-Resolution Attention: Dynamic stride functions can lead to the development of multi-resolution attention mechanisms where attention dynamically adjusts based on the current level of detail. This opens up new possibilities for tasks requiring contextual awareness at multiple scales, such as anomaly detection, image segmentation, and even language processing in hierarchical data structures.
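A minimal NumPy sketch of multi-resolution spatial attention follows. It computes one attention map on the full feature grid and another on a strided (coarser) view, upsamples the coarse map and fuses the two. Scoring locations by channel-mean activation, softmax normalization, nearest-neighbour upsampling and multiplicative fusion are all choices made here for illustration; this is not the attention mechanism of Section 3.5.

```python
import numpy as np


def spatial_attention(features: np.ndarray) -> np.ndarray:
    """Softmax attention map over the H x W grid of a (C, H, W) feature tensor.

    Each location is scored by its channel-mean activation, a simple
    parameter-free choice made for illustration.
    """
    scores = features.mean(axis=0)
    scores = scores - scores.max()  # subtract the max for numerical stability
    weights = np.exp(scores)
    return weights / weights.sum()


def multi_resolution_attention(features: np.ndarray, coarse_stride: int = 2) -> np.ndarray:
    """Fuse a fine attention map with one computed on a strided (coarser) view."""
    if coarse_stride < 1:
        raise ValueError("coarse_stride must be >= 1")
    fine = spatial_attention(features)
    coarse = spatial_attention(features[:, ::coarse_stride, ::coarse_stride])
    # Nearest-neighbour upsampling of the coarse map back onto the fine grid.
    up = np.repeat(np.repeat(coarse, coarse_stride, axis=0), coarse_stride, axis=1)
    up = up[: fine.shape[0], : fine.shape[1]]
    combined = fine * up
    return combined / combined.sum()


def apply_attention(features: np.ndarray, weights: np.ndarray) -> np.ndarray:
    """Reweight every channel by the (H, W) attention map via broadcasting."""
    return features * weights[None, :, :]


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    feats = rng.standard_normal((8, 16, 16))
    attn = multi_resolution_attention(feats, coarse_stride=4)
    print(attn.shape, apply_attention(feats, attn).shape)
```

Multiplying the two maps emphasizes locations that score highly at both resolutions, which is one simple way to combine coarse context with fine detail.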

6.4. Artificial Cognitive Systems and Future Research

- Adaptive Cognitive Models: The findings suggest that neural networks in cognitive systems could be designed to self-regulate their focus [18] by dynamically adjusting strides in response to changing environmental stimuli. This could yield more robust and responsive systems that handle complex tasks without requiring human intervention to fine-tune stride parameters.

- Dynamic Task-Specific Attention: Another area of future research involves combining dynamic strides with task-specific attention mechanisms in multi-task settings, where the network determines which stride function (logarithmic or exponential) to apply based on the nature of the task. A common multi-task learning design shares a large number of parameters among tasks while keeping certain private parameters for individual tasks [19]. For instance, exponential strides might be preferable for tasks requiring quick pattern recognition, whereas logarithmic strides could allow slower, more deliberate feature extraction for detailed, iterative tasks.

6.5. Challenges and Opportunities

6.6. Resolution

7. Conclusion

7.1. Future Studies in Artificial Neural Network Design

7.2. Application of Real Data in Computer Vision Tasks

- Object Detection and Recognition: Dynamic stride functions could be used to enhance object detection models by allowing the network to quickly focus on regions of interest and then progressively refine its focus on fine details, such as textures or edges. This could improve the model’s ability to detect small objects in cluttered scenes or identify specific features in high-resolution images.

- Image Segmentation: By adjusting the stride to focus on different spatial scales, models could better identify and segment objects from the background, improving the accuracy of medical imaging, autonomous driving systems, or satellite imagery analysis.

- Action Recognition and Tracking: In video analysis, dynamic strides could help networks focus on movement patterns, tracking objects across frames more effectively by dynamically adjusting resolution based on the motion’s scale and speed.
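The "focus quickly, then refine" behaviour described for object detection can be sketched in Python as a two-stage window search: a coarse stride locates a candidate region, and a stride of 1 then rescans only its neighbourhood. Mean intensity stands in for a detector's region-of-interest score, and the window size and strides are hypothetical; this is an illustration of coarse-to-fine search, not the paper's method.

```python
import numpy as np


def best_window(image, size, stride, region=None):
    """Top-left (row, col) of the size x size window with the highest mean,
    scanning with an integer stride inside region = (r0, r1, c0, c1)."""
    h, w = image.shape
    r0, r1, c0, c1 = region if region is not None else (0, h, 0, w)
    best_score, best_pos = -np.inf, None
    for r in range(r0, r1 - size + 1, stride):
        for c in range(c0, c1 - size + 1, stride):
            score = image[r:r + size, c:c + size].mean()
            if score > best_score:
                best_score, best_pos = score, (r, c)
    if best_pos is None:
        raise ValueError("region is smaller than the window")
    return best_pos


def coarse_to_fine(image, size=4, coarse_stride=4):
    """Locate a region with a coarse stride, then rescan its neighbourhood with stride 1."""
    h, w = image.shape
    r, c = best_window(image, size, coarse_stride)
    region = (max(0, r - coarse_stride), min(h, r + coarse_stride + size),
              max(0, c - coarse_stride), min(w, c + coarse_stride + size))
    return best_window(image, size, 1, region)


if __name__ == "__main__":
    img = np.zeros((32, 32))
    img[13:17, 7:11] = 1.0  # a small bright "object"
    print(best_window(img, 4, 4), coarse_to_fine(img))
```

The refinement only searches within one coarse step of the coarse winner, so a target that no coarse window overlaps can be missed; that is the usual trade-off of cheap coarse scanning.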

7.3. Applications in Robotics for Environmental Awareness and Object Identification

- Environmental Awareness: Dynamic stride functions can help robots better understand and interact with their environment by adjusting their focus on different regions in the field of view. For instance, a robot could first identify general obstacles or landmarks in the environment and then focus more closely on the details of objects it needs to interact with, such as tools, components, or manipulatable items.

- Object Identification: The use of dynamic strides could improve object recognition in dynamic and cluttered environments. As the robot navigates through an unknown space, it could rapidly detect and classify objects from afar using a coarse focus and then progressively refine its understanding of these objects as it approaches them.

- Movement Detection and Tracking: In scenarios where detecting and tracking motion is critical, such as in surveillance, human-robot interaction, or autonomous vehicles, dynamic strides could allow the robot to adjust its focus on objects that are moving at varying speeds, enhancing its ability to track objects, avoid obstacles, or engage with moving targets.

7.4. Synopsis

8. Patents

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

1. Meng, Y.; Kuppannagari, S.; Kannan, R.; Prasanna, V. How to Avoid Zero-Spacing in Fractionally-Strided Convolution? A Hardware-Algorithm Co-Design Methodology. In Proceedings of the IEEE 28th International Conference on High Performance Computing, Data, and Analytics, 2021.

2. Han, Y.; Huang, G.; Song, S.; Yang, L.; Wang, H.; Wang, Y. Dynamic Neural Networks: A Survey. arXiv 2021, arXiv:2102.04906.

3. Riad, R.; Teboul, O.; Grangier, D.; Zeghidour, N. Learning Strides in Convolutional Neural Networks. arXiv 2022, arXiv:2202.01653.

4. Minglei, T.; Lyuyuan, F.; Hao, N.; Zhao, Y. Smart Camera Aware Crowd Counting via Multiple Task Fractional Stride Deep Learning. Sensors 2019, 19, 1346.

5. Prince, S. Understanding Deep Learning; The MIT Press: Cambridge, MA, USA.

6. Qiao, S.; Lin, Z.; Zhang, J.; Yuille, A. Neural Rejuvenation: Improving Deep Network Training by Enhancing Computational Resource Utilization. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2019.

7. Zhao, X.; Wang, L.; Zhang, Y.; Han, X.; Deveci, M. A review of convolutional neural network in computer. Artif. Intell. Rev. 2024, 57, 99.

8. Fukaya, K.; Oh, Y.-G.; Ohta, H.; Ono, K. Exponential Decay Estimates and Smoothness of the Moduli Space of Pseudoholomorphic Curves. arXiv 2024, arXiv:1603.07026.

9. Gomez, J. On the Stieltjes Approximation Error to Logarithmic Integral. arXiv 2024, arXiv:2406.12152.

10. Sayed, R.; Azmi, H.; Shawkey, H.; Khalil, A.; Refky, M. A Systematic Literature Review on Binary Neural Networks. IEEE Access 2023, 11, 27546–27578.

11. Lin, Z.; Wang, Y.; Zhang, J.; Chu, X.; Ling, H. NAS-BNN: Neural Architecture Search for Binary Neural Networks. arXiv 2024, arXiv:2408.15484.

12. Guo, N.; Bethge, J.; Yang, H.; Zhong, K.; Ning, X.; Meinel, C.; Wang, Y. BoolNet: Minimizing the Energy Consumption of Binary Neural Networks. arXiv 2021, arXiv:2106.06991.

13. Kuster, F.; Orth, U. The Long-Term Stability of Self-Esteem: Its Time-Dependent Decay and Nonzero Asymptote. University of Basel, 2013.

14. Yang, G.; Lei, J.; Fang, Z.; Li, Y.; Zhang, J.; Xie, W. HyBNN: Quantifying and Optimizing Hardware Efficiency of Binary Neural Networks. ACM Trans. Reconfigurable Technol. Syst. 2024, 17, 1–24.

15. Nunemacher, J. Asymptotes, Cubic Curves, and the Projective Plane. Mathematics Magazine 1999.

16. Osborne, A.; Dorville, J.; Romano, P. Upsampling Monte Carlo neutron transport simulation tallies using a convolutional neural network. Energy and AI 2023.

17. Guo, M.; Xu, T.; Liu, Z.; Liu, J.; Jiang, P.; Mu, T.; Zhang, S.; Martin, R.; Cheng, M.; Hu, S. Attention mechanisms in computer vision: A survey. Comput. Vis. Media 2022, 8, 331–368.

18. Xu, J.; Pan, Y.; Pan, X.; Hoi, S.; Yi, Z.; Xu, Z. RegNet: Self-Regulated Network for Image Classification. IEEE Trans. Neural Netw. Learn. Syst. 2023, 34, 9562–9567.

19. Lopes, I.; Vu, T.; de Charette, R. Cross-task Attention Mechanism for Dense Multi-task Learning. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision (WACV), 2023.

| Step | Output Size |
|---|---|
| 1 | |
| 2 | |
| 3 | |
| 4 | |
| 5 | |
| 6 | |
| 7 | |
| 8 | |
| 9 | |
| 10 | |
| 15 | |
| 20 | |
| 25 | |
| 30 | |

| Step | Output Size |
|---|---|
| 1 | |
| 2 | |
| 3 | |
| 4 | |
| 5 | |
| 6 | |
| 7 | |
| 8 | |
| 9 | |
| 10 | |
| 15 | |
| 20 | |
| 25 | |
| 30 | |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).