Submitted:

23 October 2024

Posted:

24 October 2024

Abstract

Keywords:

1. Introduction

- We conduct a comparative analysis of six decision models (Decision Tree, Random Forest, XGBoost, AdaBoost, GBDT, and HGBDT) using a comprehensive SQL injection dataset.

- We evaluate the performance of these models on key metrics such as accuracy, precision, recall, and F1 score, identifying the most effective machine learning techniques for SQL injection detection.

- We apply SHAP and LIME to explain the decision-making processes of each model, improving transparency and trustworthiness in SQL injection detection.

- We provide insights into the strengths and limitations of ensemble learning and boosting models for practical deployment in real-world SQL injection detection systems.

2. Related Work

2.1. Machine Learning Techniques for SQLi Detection

2.2. Challenges with Explainable AI for SQL Injection Detection

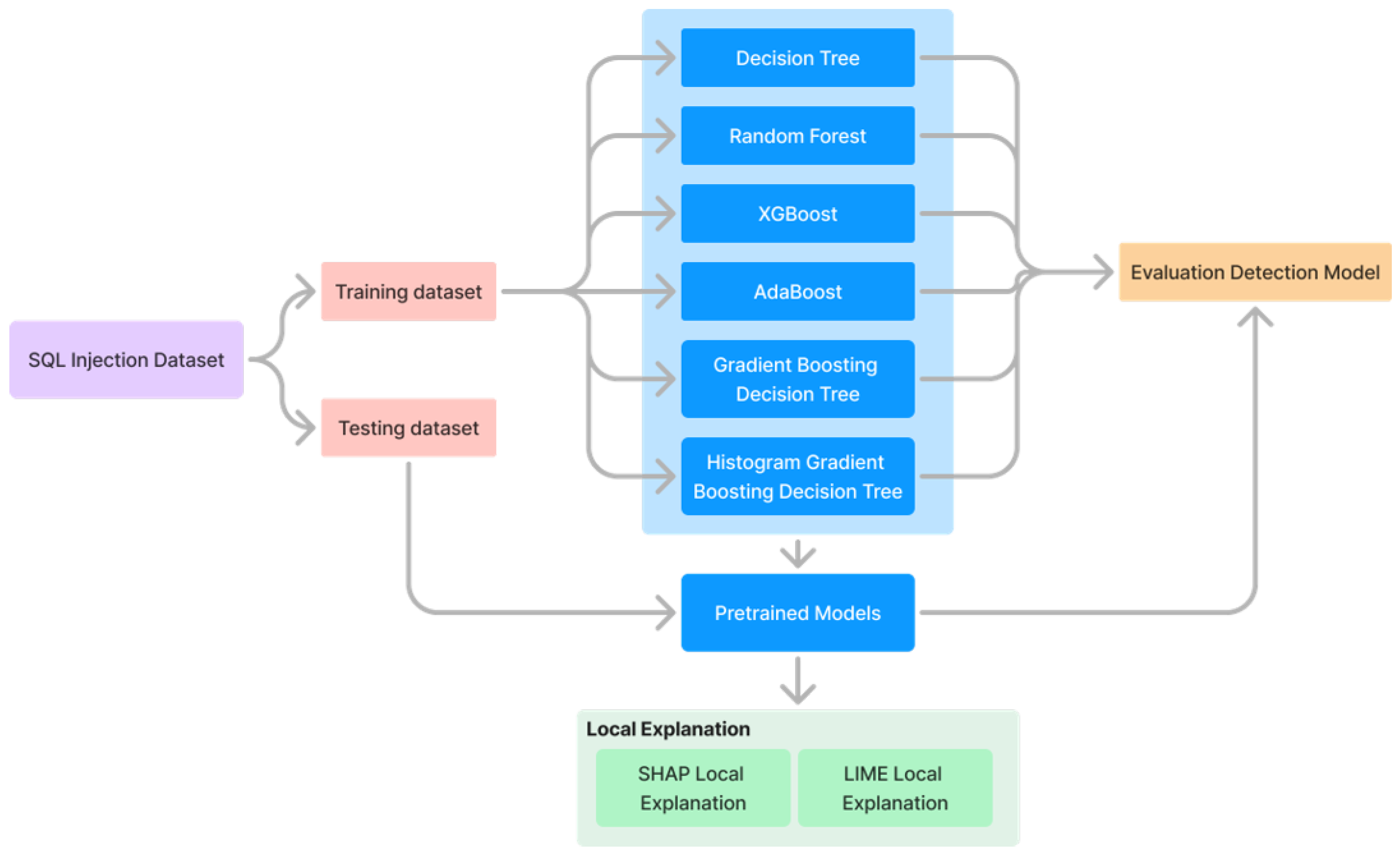

3. Methodology

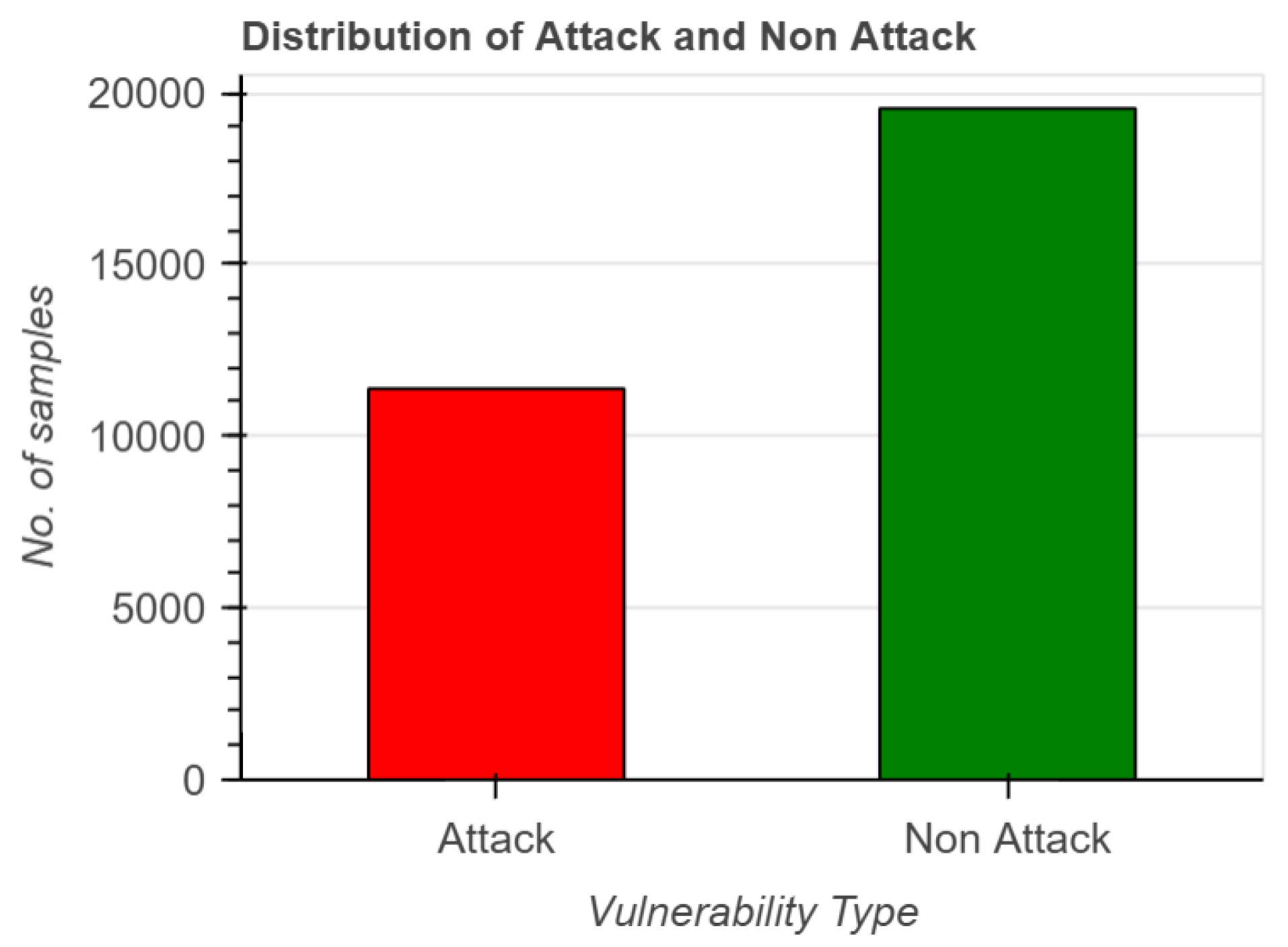

3.1. Data Description

3.2. SQL Vulnerability Detection Based on Ensemble and Bagging Models

- Decision Tree. A decision tree is a hierarchical structure in which each internal node tests a feature, each branch represents a decision rule based on that feature, and each leaf assigns a class. The tree recursively partitions the feature space into regions that minimize a splitting criterion (e.g., Gini impurity or entropy).

- Random Forest. Random Forest is an ensemble learning method that constructs multiple decision trees during training and outputs the mode of the classes (classification) or the average prediction (regression) of the individual trees. Let $T$ denote the number of trees in the forest and $h_t(x)$ the prediction of tree $t$ for input $x$. For classification, the random forest combines the predictions of the trees by majority voting: $\hat{y}(x) = \operatorname{mode}\{h_1(x), \dots, h_T(x)\}$.

- XGBoost (Extreme Gradient Boosting). XGBoost is an optimized gradient-boosting library known for its speed and performance. It builds trees sequentially, with each new tree correcting errors made by the previous ones. XGBoost minimizes the loss function iteratively by adding weak learners to the model: $\hat{y}(x) = \sum_{t=1}^{T} h_t(x)$, where $T$ is the number of boosting rounds and $h_t$ is the tree added in round $t$.

- AdaBoost. AdaBoost is another ensemble learning method that combines multiple weak classifiers to create a strong classifier. It increases the weights of incorrectly classified instances so that subsequent classifiers focus more on difficult cases. At each iteration $t$, AdaBoost updates the weights of the training instances and computes the model weight $\alpha_t$ from the classification error. The final classifier is the sign of a weighted sum: $H(x) = \operatorname{sign}\left(\sum_{t=1}^{T} \alpha_t h_t(x)\right)$, where $h_t$ is the weak classifier trained at iteration $t$.

- GBDT. GBDT (Gradient Boosting Decision Tree) builds trees sequentially, where each tree attempts to correct errors made by the previous ones. Unlike AdaBoost, which adjusts instance weights, GBDT fits each new tree to the residual errors of the current model predictions. GBDT minimizes the loss function by adding new trees that approximate the negative gradient of the loss function for the ensemble model: $F_T(x) = \sum_{t=1}^{T} h_t(x)$, where $h_t$ is the $t$-th decision tree.

- HGBDT. HGBDT (Histogram-based Gradient Boosting Decision Tree) is an optimized version of GBDT that uses histograms to discretize continuous features, reducing memory usage and speeding up training. Like GBDT, HGBDT constructs an ensemble model by iteratively adding decision trees that minimize the loss function: $F_T(x) = \sum_{t=1}^{T} h_t(x)$, where each tree $h_t$ is trained on histogram-based feature representations.
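The combination rules described in the list above can be illustrated with a minimal sketch. It is not the implementation used in this study: the function names, the toy decision stumps, and the example weights are all hypothetical, and each "tree" is reduced to a plain function that returns a label in {-1, +1}. The sketch only shows how majority voting (Random Forest), a weighted vote (AdaBoost), and an additive sum (gradient boosting variants) differ in how they aggregate the same per-tree outputs.

```python
from collections import Counter
from typing import Callable, Sequence

# A "tree" is modeled as any function from a feature vector to a number.
Tree = Callable[[Sequence[float]], float]


def majority_vote(trees: Sequence[Tree], x: Sequence[float]) -> float:
    """Random Forest style: return the most common label among the trees."""
    votes = Counter(tree(x) for tree in trees)
    return votes.most_common(1)[0][0]


def weighted_sign_vote(
    trees: Sequence[Tree], alphas: Sequence[float], x: Sequence[float]
) -> int:
    """AdaBoost style: sign of the alpha-weighted sum of {-1, +1} votes."""
    total = sum(alpha * tree(x) for alpha, tree in zip(alphas, trees))
    return 1 if total >= 0 else -1


def additive_score(
    trees: Sequence[Tree], x: Sequence[float], learning_rate: float = 1.0
) -> float:
    """Gradient boosting style: sum of the (scaled) per-tree outputs."""
    return sum(learning_rate * tree(x) for tree in trees)


# Hypothetical decision stumps over a toy two-feature input.
stumps = [
    lambda x: 1 if x[0] > 0.5 else -1,
    lambda x: 1 if x[1] > 0.2 else -1,
    lambda x: 1 if x[0] + x[1] > 1.5 else -1,
]
example = [0.9, 0.1]            # the stumps vote +1, -1, -1
example_alphas = [2.0, 0.5, 0.5]  # assumed model weights, for illustration only

vote_label = majority_vote(stumps, example)
boost_label = weighted_sign_vote(stumps, example_alphas, example)
raw_score = additive_score(stumps, example)
print(vote_label, boost_label, raw_score)
```

With these toy inputs the unweighted vote and the weighted vote disagree, because the first stump carries a larger weight than the other two combined. This is the practical difference between bagging-style voting and boosting-style weighting.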

3.3. Local Explanation for SQL Injection Model Decision Based on SHAP and LIME

3.3.1. SHAP: SHapley Additive exPlanations

3.3.2. LIME: Local Interpretable Model-Agnostic Explanations

4. Experiment

4.1. Experiment Setting

4.2. Evaluation Metrics

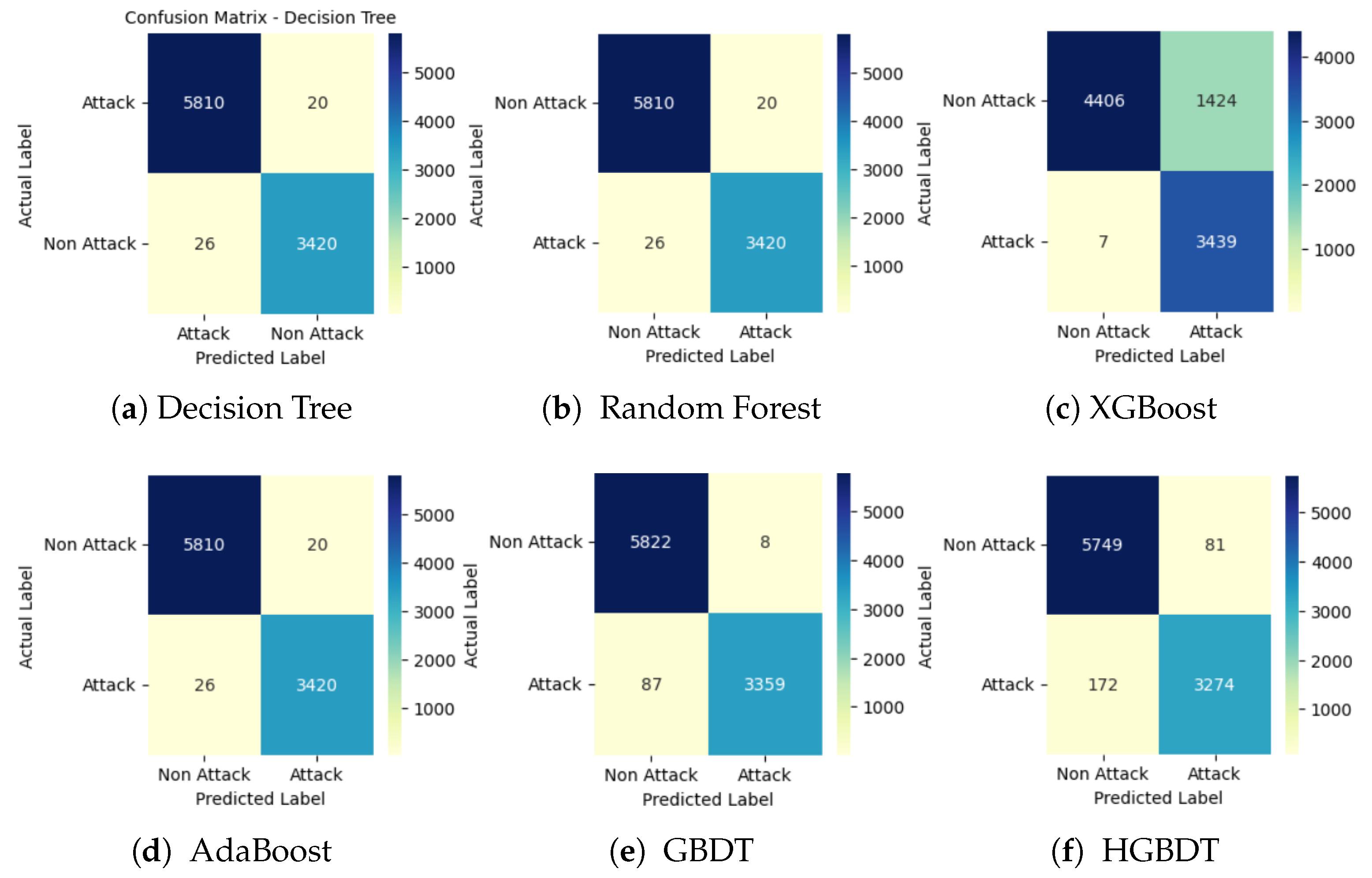

4.3. Confusion Matrix Results

4.4. ROC Curve Results

4.5. Other Performance Evaluation Metric Results

4.6. Comparison with Existing Methods

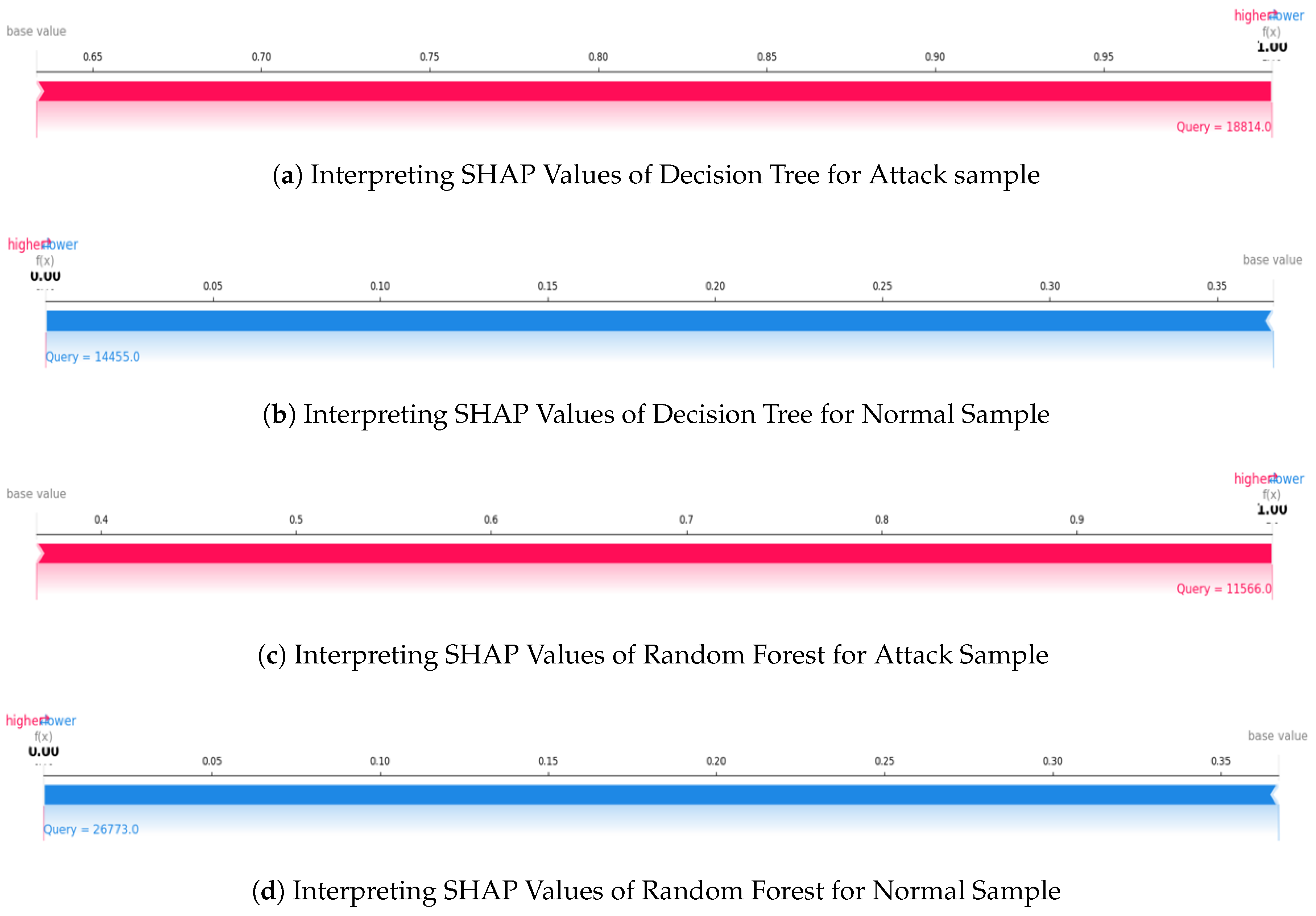

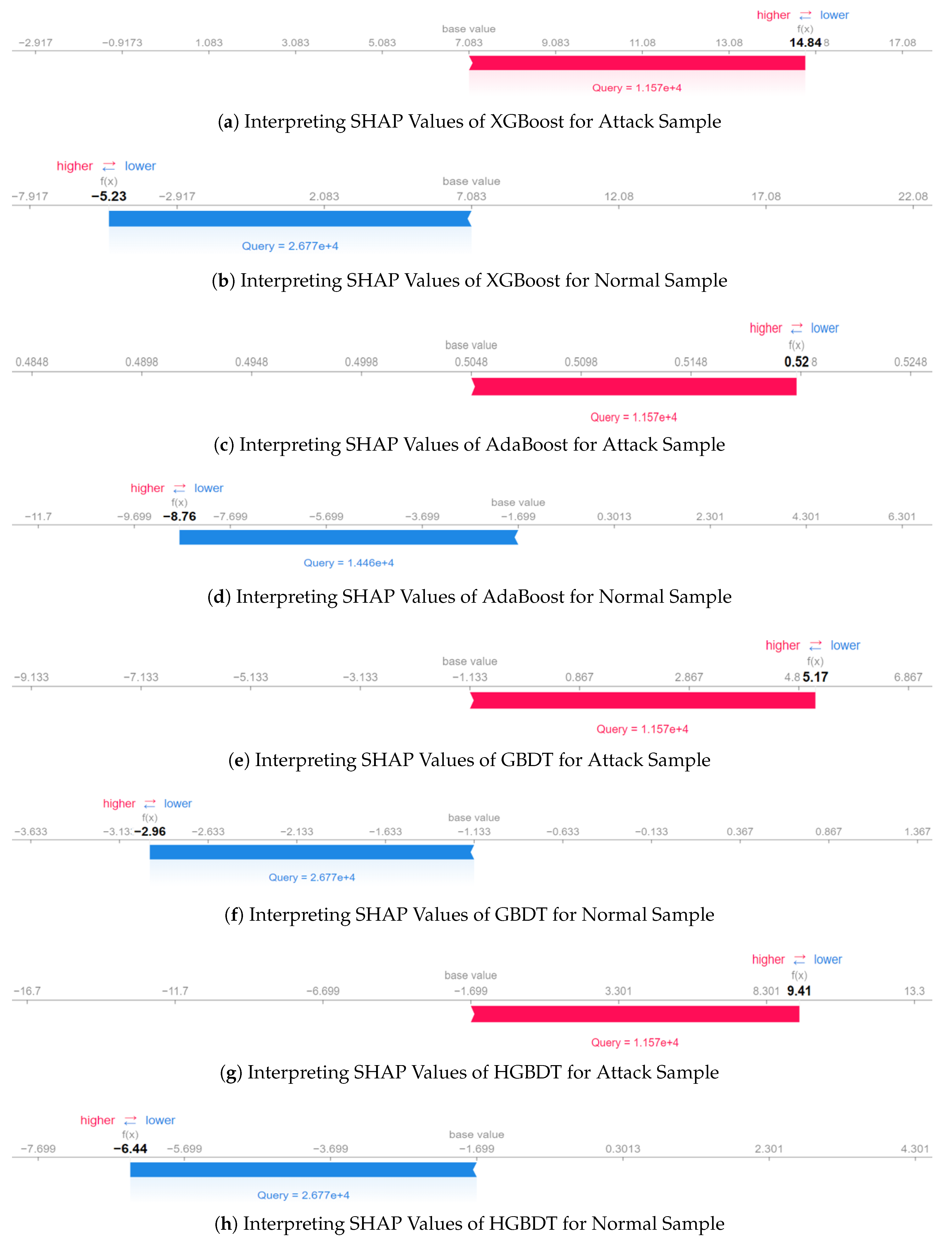

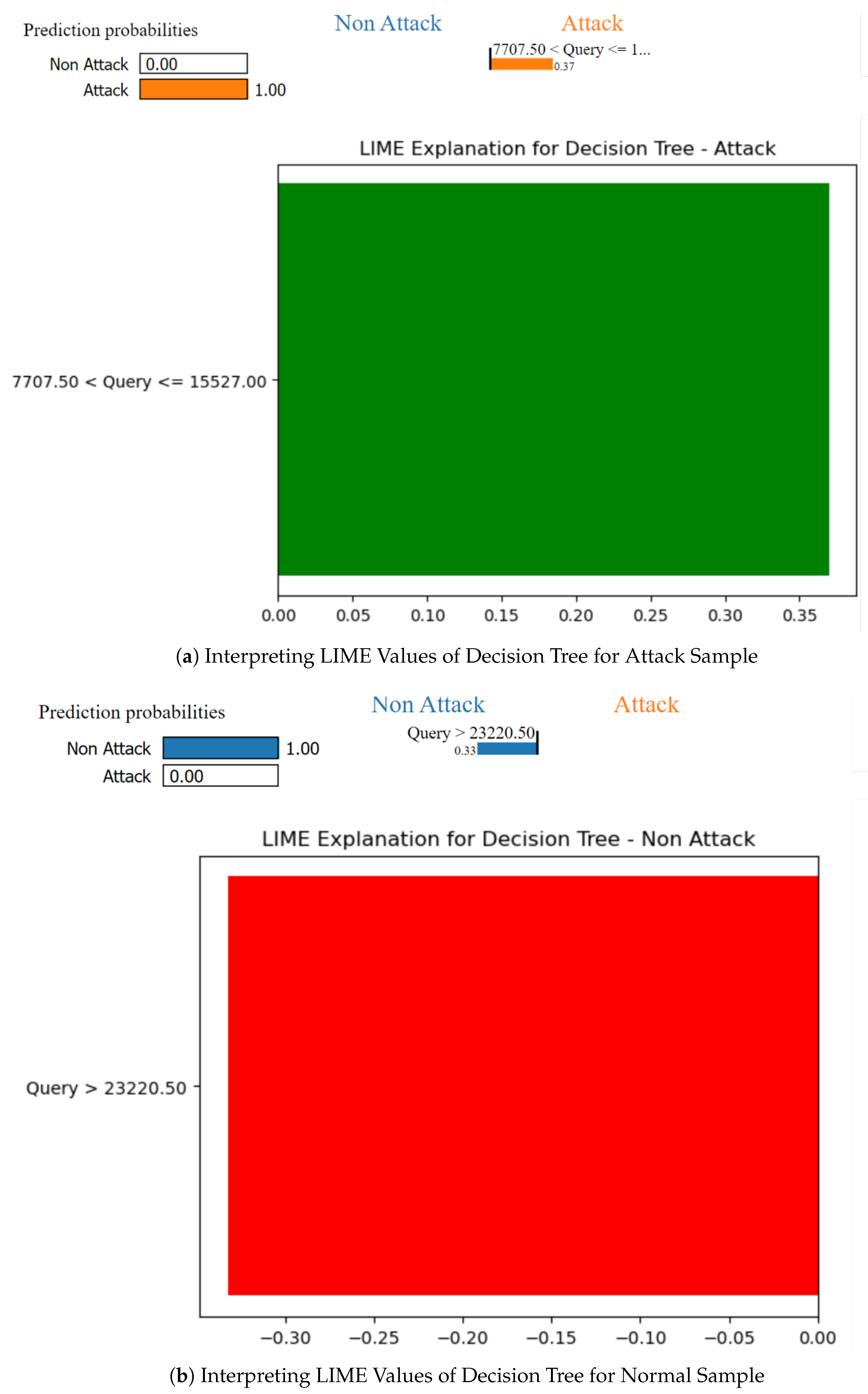

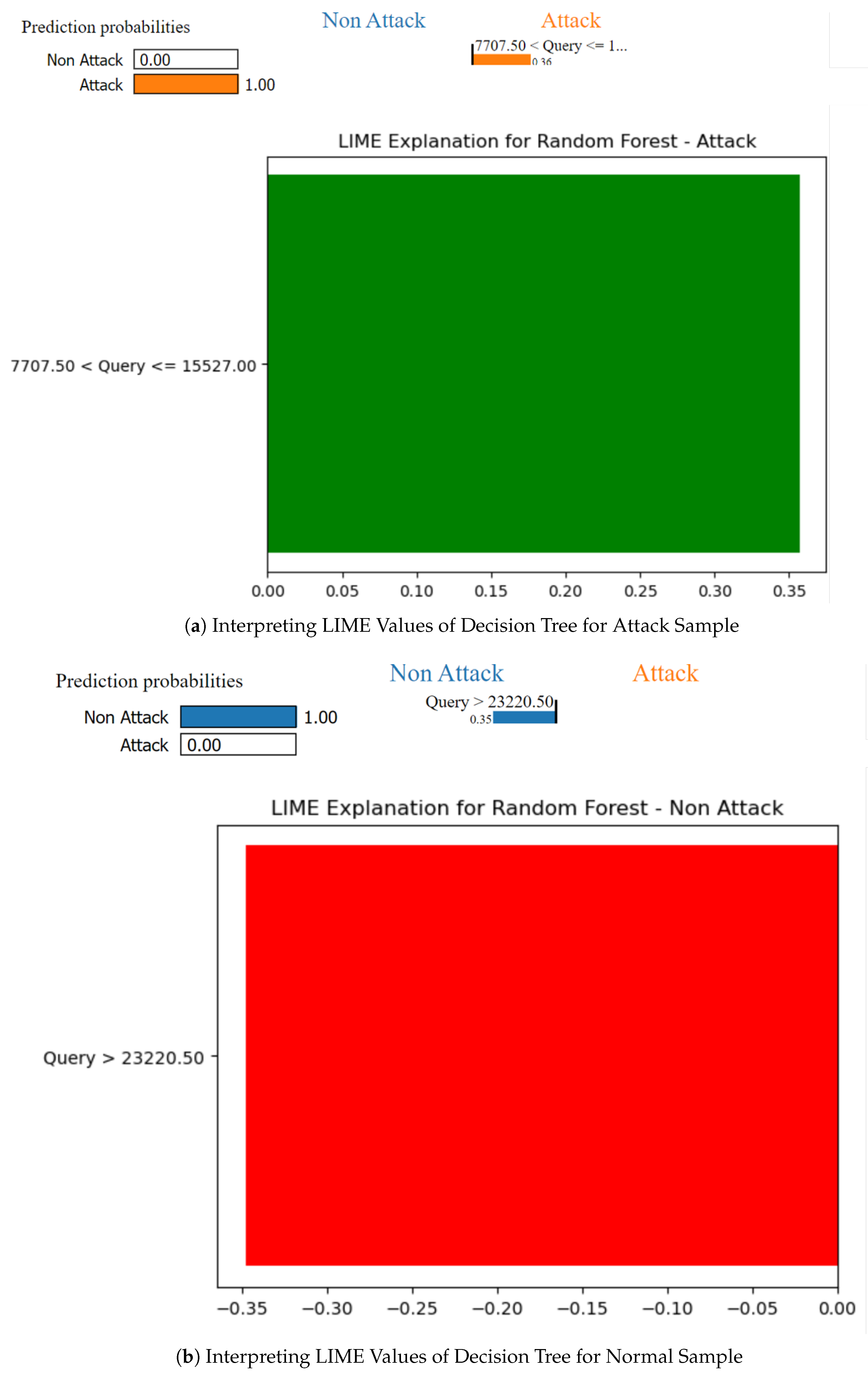

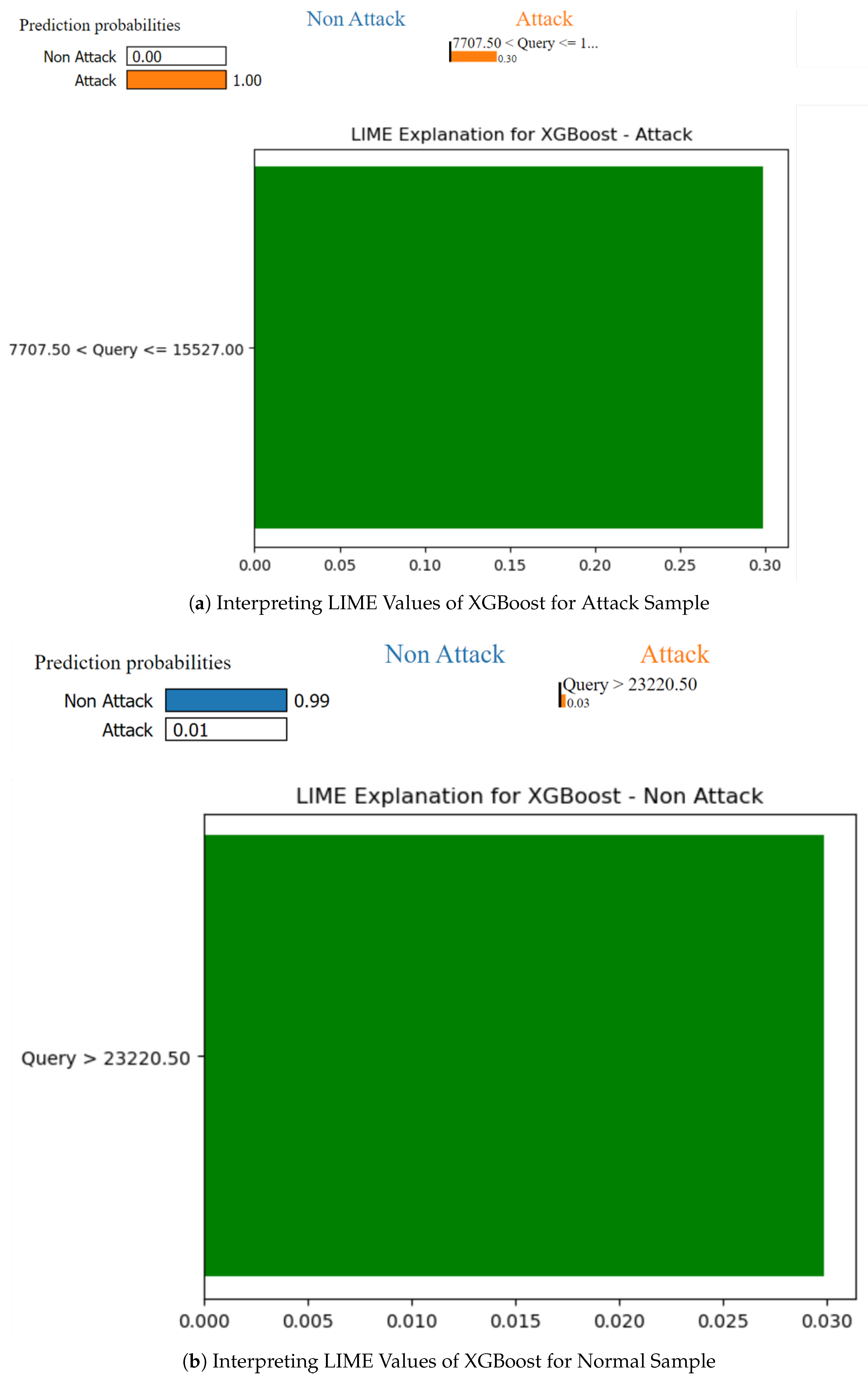

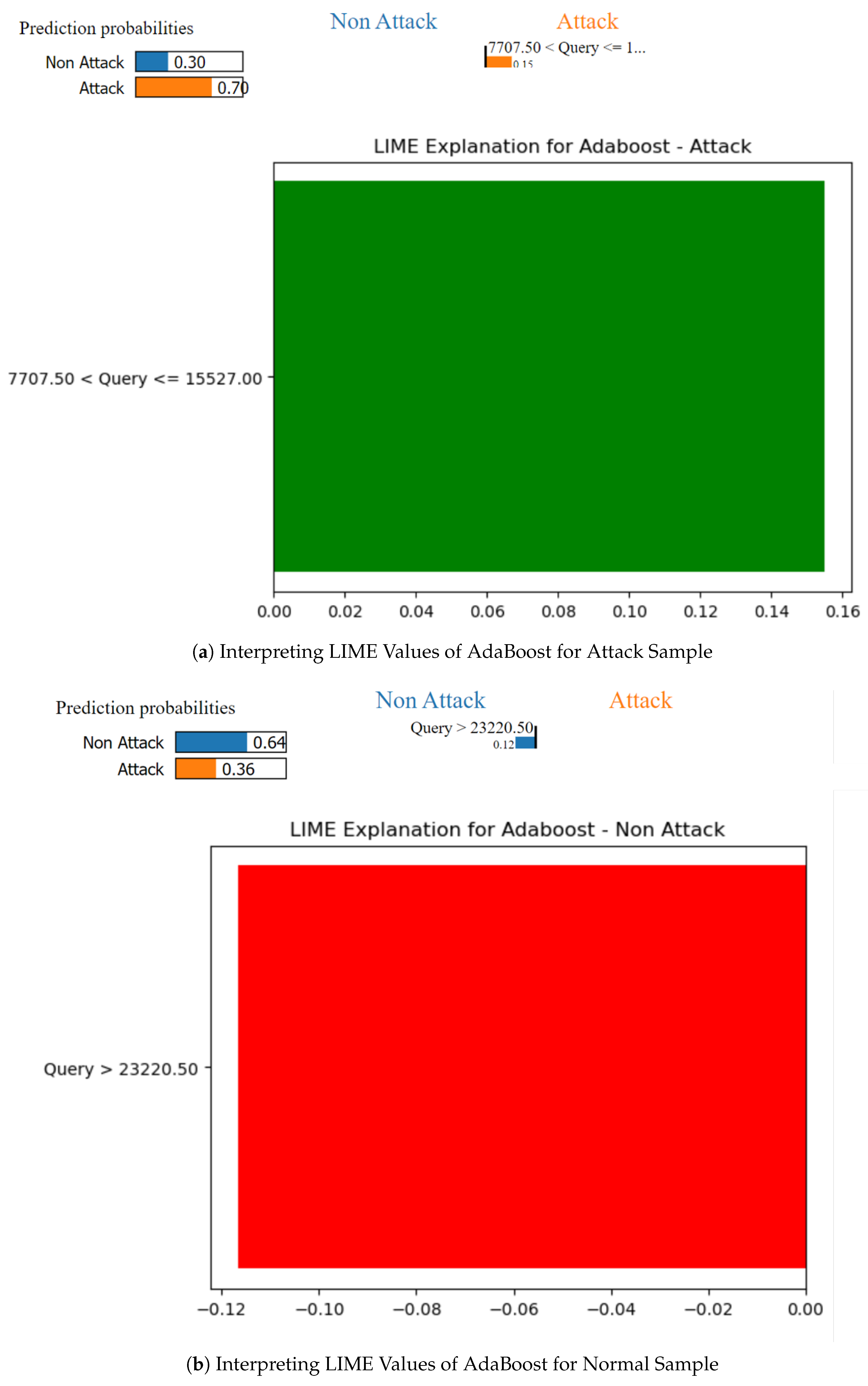

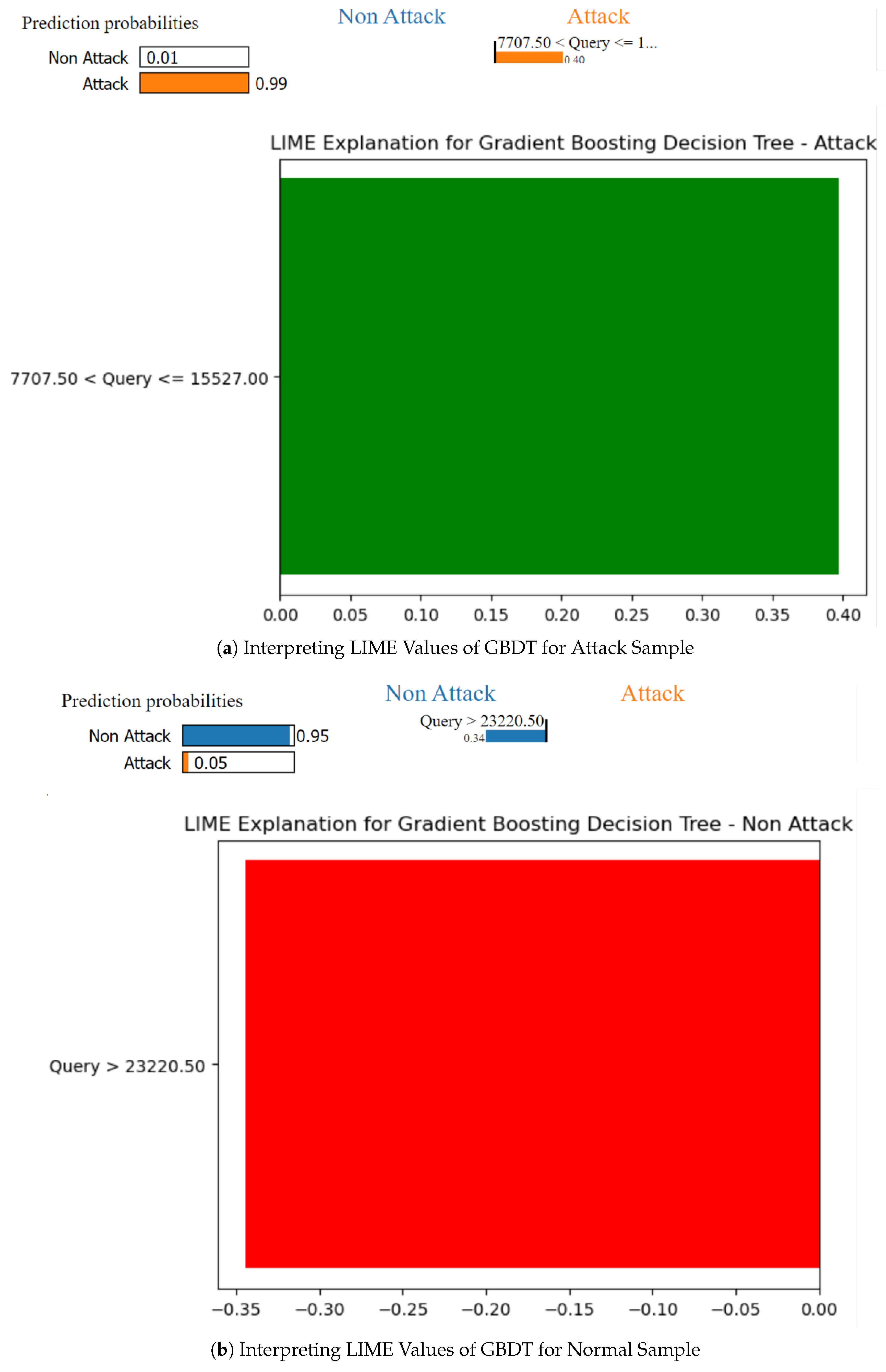

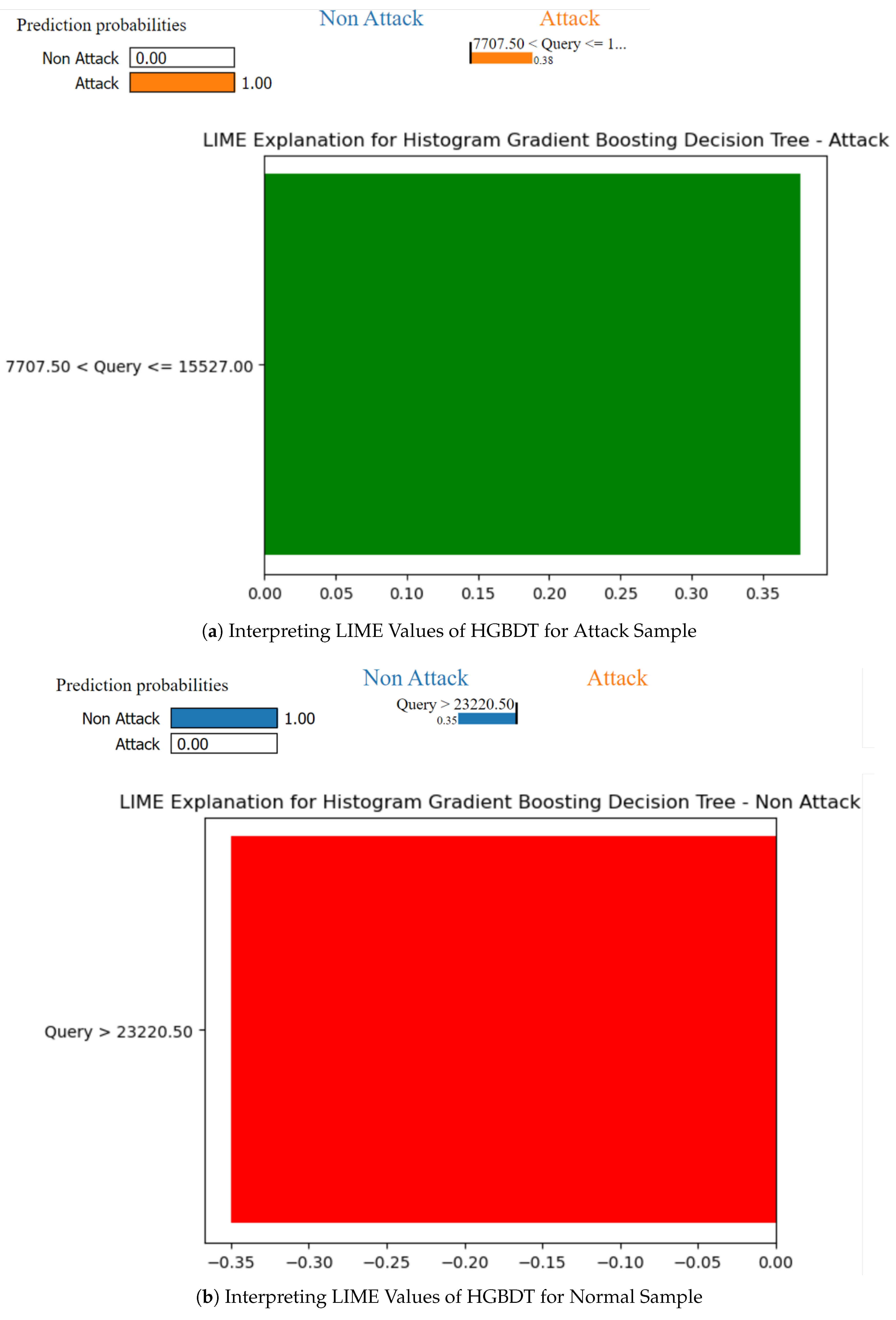

4.7. Local Explanation Results for Model Decision

5. Discussion

5.1. Insights from Local Explanation Results

5.2. Limitations and Future Work

6. Conclusion

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

| Model Name | Hyperparameter | Value |
|---|---|---|
| XGBClassifier | enable_categorical | False |
| | n_estimators | 100 |
| RandomForestClassifier | n_estimators | 100 |
| | criterion | gini |
| | max_depth | None |
| | min_samples_split | 2 |
| | min_samples_leaf | 1 |
| | max_features | auto |
| DecisionTreeClassifier | criterion | gini |
| | splitter | best |
| | max_depth | None |
| | min_samples_split | 2 |
| | random_state | 42 |
| GradientBoostingClassifier (GBDT) | loss | deviance |
| | learning_rate | 0.1 |
| | n_estimators | 100 |
| | subsample | 1.0 |
| | criterion | friedman_mse |
| | min_samples_split | 2 |
| | min_samples_leaf | 1 |
| | max_depth | 3 |
| | max_features | None |
| | random_state | 42 |
| HistGradientBoostingClassifier (HGBDT) | loss | auto |
| | learning_rate | 0.1 |
| | max_iterations | 100 |
| | max_leaf_nodes | 31 |
| | max_depth | None |
| | min_samples_leaf | 20 |
| | l2_regularization | 0.0 |
| | max_bins | 255 |
| | early_stopping | auto |
| | scoring | loss |
| | random_state | 42 |
| AdaBoostClassifier | n_estimators | 50 |
| | learning_rate | 1.0 |
| | algorithm | SAMME.R |
| | base_estimator | DecisionTreeClassifier |
| | random_state | None |
| | estimator_params | () |

| Model | Precision | Recall | Accuracy | F1 | ROC AUC |
|---|---|---|---|---|---|
| Decision Tree | 0.9942 | 0.9925 | 0.9950 | 0.9933 | 0.99 |
| Random Forest | 0.9942 | 0.9925 | 0.9950 | 0.9933 | 0.99 |
| XGBoost | 0.7072 | 0.9980 | 0.8457 | 0.8278 | 0.88 |
| AdaBoost | 0.9942 | 0.9925 | 0.9950 | 0.9933 | 0.99 |
| GBDT | 0.9976 | 0.9748 | 0.9898 | 0.9861 | 0.99 |
| HGBDT | 0.9759 | 0.9501 | 0.9727 | 0.9628 | 0.97 |
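As a quick consistency check on the table above, the F1 score can be recomputed as the harmonic mean of the reported precision and recall. The sketch below is illustrative only: the helper name `f1_from_precision_recall` and the dictionary layout are assumptions, and the numbers are copied from the table rather than regenerated from the dataset.

```python
def f1_from_precision_recall(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (0.0 if both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# (precision, recall, reported F1), copied from the results table.
reported = {
    "Decision Tree": (0.9942, 0.9925, 0.9933),
    "Random Forest": (0.9942, 0.9925, 0.9933),
    "XGBoost": (0.7072, 0.9980, 0.8278),
    "AdaBoost": (0.9942, 0.9925, 0.9933),
    "GBDT": (0.9976, 0.9748, 0.9861),
    "HGBDT": (0.9759, 0.9501, 0.9628),
}

# Recompute F1 for every model and measure the gap to the reported value.
recomputed = {
    name: f1_from_precision_recall(p, r) for name, (p, r, _) in reported.items()
}
max_gap = max(abs(recomputed[name] - reported[name][2]) for name in reported)

for name, value in recomputed.items():
    print(f"{name}: recomputed F1 = {value:.4f}, reported = {reported[name][2]:.4f}")
print(f"largest absolute gap: {max_gap:.6f}")
```

The recomputed values agree with the reported F1 column to four decimal places. This suggests the low XGBoost F1 follows directly from its low precision rather than from a transcription error in the table.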

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).