Submitted: 16 October 2024

Posted: 16 October 2024


Abstract

Keywords:

1. Introduction

- Contribution 1: This paper proposes a hybrid methodology that integrates multiple components: preprocessing of map information, high-level decision-making (DM) performed by the deep reinforcement learning (DRL) module, and low-level control signals managed by a classic controller. Our approach handles not only individual complex urban scenarios but also concatenated ones. This contribution extends our work previously published at the 2023 IEEE Intelligent Vehicles Symposium (IV) [9].

- Contribution 2: In this work, a novel low-level controller is developed. It combines a Linear-Quadratic Regulator (LQR) controller for trajectory tracking with a Model Predictive Control (MPC) controller for manoeuvre execution. Integrating these two controllers online yields a hybrid low-level control module that executes high-level actions comfortably and safely.

- Contribution 3: This study also presents a reinforcement learning (RL) framework developed within the Car Learning to Act (CARLA) simulator [10] to evaluate complete vehicle navigation, including vehicle dynamics. A distinctive feature of this framework is its set of evaluation metrics. These go beyond the success rate to include the smoothness and comfort of the agent’s trajectory.

2. Related Works

2.1. Transformer-Based Reinforcement Learning

2.2. Attention-Based Deep Reinforcement Learning

2.3. Combining Machine Learning and Rule-Based Algorithms

2.4. Tactical Behaviour Planning

2.5. Discussion

- State Dimensionality and Preprocessing: The Transformer- and Attention-based architectures handle high-dimensional states and rely on sophisticated preprocessing to manage complex input data. Conversely, the Decision-Control and Tactical Behaviour approaches, as well as ours, work with low-dimensional states and simpler preprocessing (map-based in our case). This choice aims for computational efficiency and reduced complexity in data handling.

- Action and Control Signal: The Transformer-based and Attention-based approaches output low-level control commands, which often result in sharp vehicle control. In contrast, our methodology, along with Decision-Control and Tactical Behaviour, outputs high-level actions. These yield smoother control signals and promote more natural and comfortable driving behaviour.

- Scenario Handling: The ability to handle multiple, and even concatenated, scenarios reflects the versatility of DRL models in AD. Like the Transformer-based, Attention-based and Tactical Behaviour models, our approach supports a broad spectrum of driving situations, which is crucial for developing adaptable and dynamic AD systems. Together with Tactical Behaviour, it is also the only approach that handles concatenated scenarios.

- Computational Cost and Scalability: Our proposal and Tactical Behaviour have the lowest computational cost of the compared architectures and are therefore the most efficient. Moreover, our model, like the Transformer-based and Attention-based ones, is scalable, i.e., easily transferable from one environment to another.

- Real Implementation: Among the reviewed architectures, only our approach and the Attention-based model have been validated in real-world settings, showcasing their reliability and applicability beyond simulated environments.

3. Background

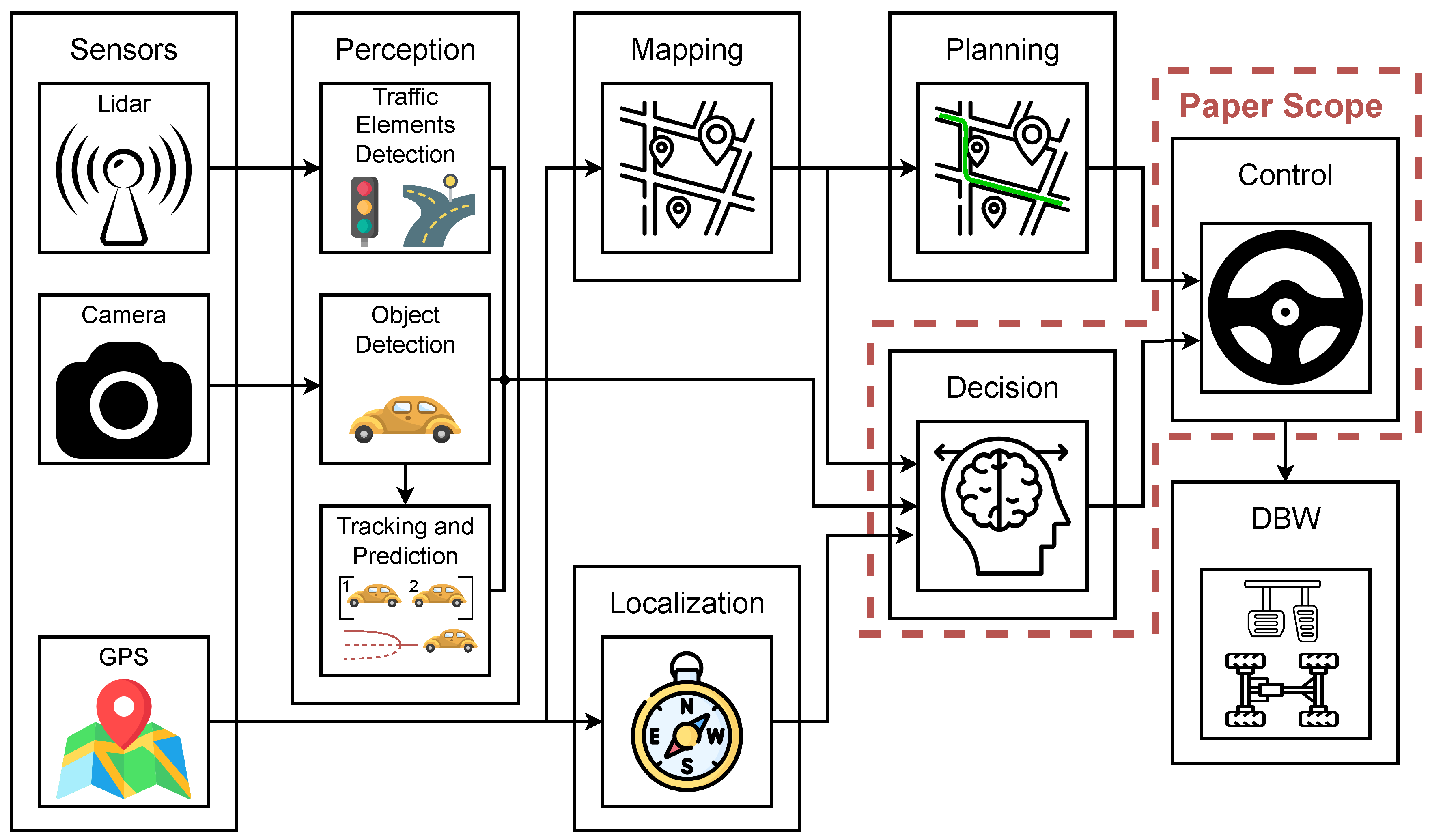

3.1. Autonomous Driving

- Rule-Based Systems: Use a predefined set of rules to guide the vehicle’s decisions.

- Finite State Machines: Model the DM process as a series of states and transitions.

- Behaviour Trees: This approach structures the DM process in a tree-like hierarchy, allowing for modular and scalable systems.

- Machine Learning and Deep Learning: These techniques, where DL is a subset of ML, enable vehicles to learn from data and make decisions based on features extracted from data.

- Reinforcement Learning: A subset of ML focused on training an agent, through trial-and-error interaction, to act effectively in its environment.

3.2. Partially Observable Markov Decision Processes

- $S$ is a finite set of states, representing the possible configurations of the environment.

- $A$ is a finite set of actions available to the decision-maker or agent.

- $T(s' \mid s, a)$ is the state transition probability function, giving the probability of transitioning to state $s'$ from state $s$ after taking action $a$.

- $R(s, a)$ is the reward function, associating a numerical reward (or cost) with taking action $a$ in state $s$.

- $\Omega$ is a finite set of observations that the agent can perceive.

- $O(o \mid s', a)$ is the observation function, defining the probability of observing $o$ after taking action $a$ and ending up in state $s'$.
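In a POMDP the agent receives only observations, not the true state, so it can in principle maintain a belief (a probability distribution over states). The minimal sketch below illustrates how the transition model $T(s' \mid s, a)$ and the observation model $O(o \mid s', a)$ combine in the standard Bayes-filter update $b'(s') \propto O(o \mid s', a) \sum_{s} T(s' \mid s, a)\, b(s)$. The two-state "free/occupied lane" example, its probabilities and the dictionary layout are hypothetical. This section does not state that the proposed agents track an explicit belief.

```python
from typing import Dict, Tuple

# T[(s, a, s_next)] = P(s_next | s, a);  O[(s_next, a, o)] = P(o | s_next, a)
TransitionModel = Dict[Tuple[str, str, str], float]
ObservationModel = Dict[Tuple[str, str, str], float]


def belief_update(belief: Dict[str, float], action: str, obs: str,
                  T: TransitionModel, O: ObservationModel) -> Dict[str, float]:
    """Bayes-filter update of a discrete belief after taking `action` and observing `obs`."""
    states = list(belief)
    unnormalised = {}
    for s_next in states:
        # Prediction: probability of landing in s_next under the transition model.
        predicted = sum(T.get((s, action, s_next), 0.0) * belief[s] for s in states)
        # Correction: weight by the likelihood of the received observation.
        unnormalised[s_next] = O.get((s_next, action, obs), 0.0) * predicted
    total = sum(unnormalised.values())
    if total == 0.0:
        raise ValueError("observation has zero probability under the current belief")
    return {s: p / total for s, p in unnormalised.items()}


# Hypothetical toy example: is the target lane free, observed through a noisy sensor?
EXAMPLE_T: TransitionModel = {
    ("free", "wait", "free"): 0.9, ("free", "wait", "occupied"): 0.1,
    ("occupied", "wait", "occupied"): 0.9, ("occupied", "wait", "free"): 0.1,
}
EXAMPLE_O: ObservationModel = {
    ("free", "wait", "see_free"): 0.8, ("free", "wait", "see_occupied"): 0.2,
    ("occupied", "wait", "see_free"): 0.2, ("occupied", "wait", "see_occupied"): 0.8,
}

if __name__ == "__main__":
    b = belief_update({"free": 0.5, "occupied": 0.5}, "wait", "see_free", EXAMPLE_T, EXAMPLE_O)
    print(b)  # belief shifts towards "free"
```

Starting from a uniform belief, the prediction step leaves the belief at 0.5/0.5. The 4:1 sensor likelihood ratio then moves it to roughly 0.8 for "free" after a single "see_free" observation.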

3.3. Deep Reinforcement Learning

4. Methodology

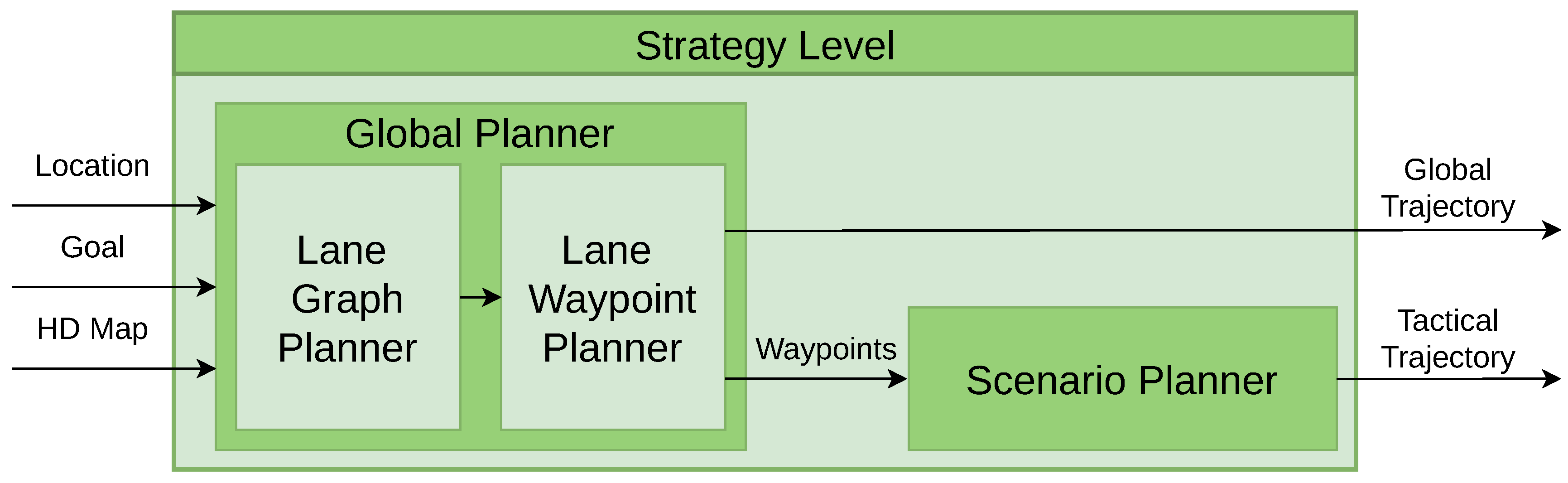

4.1. Strategy Level

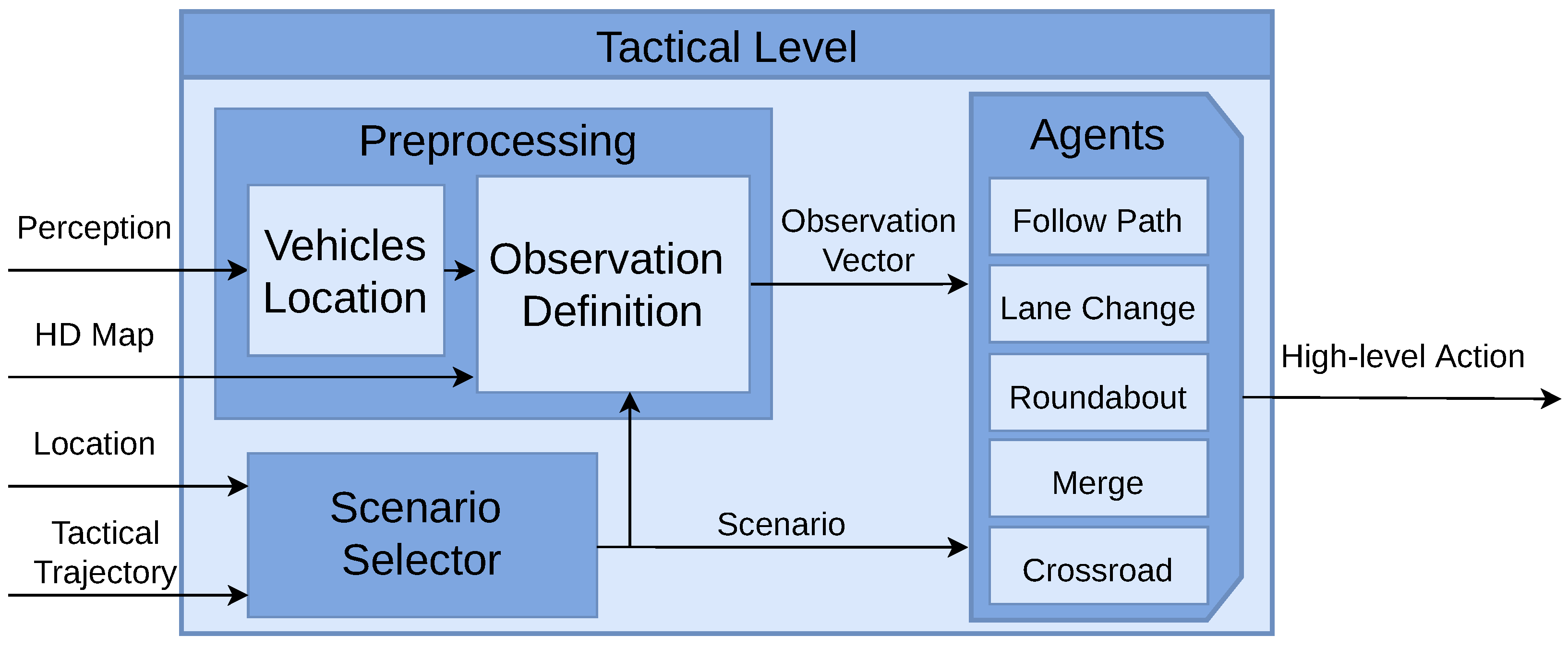

4.2. Tactical Level

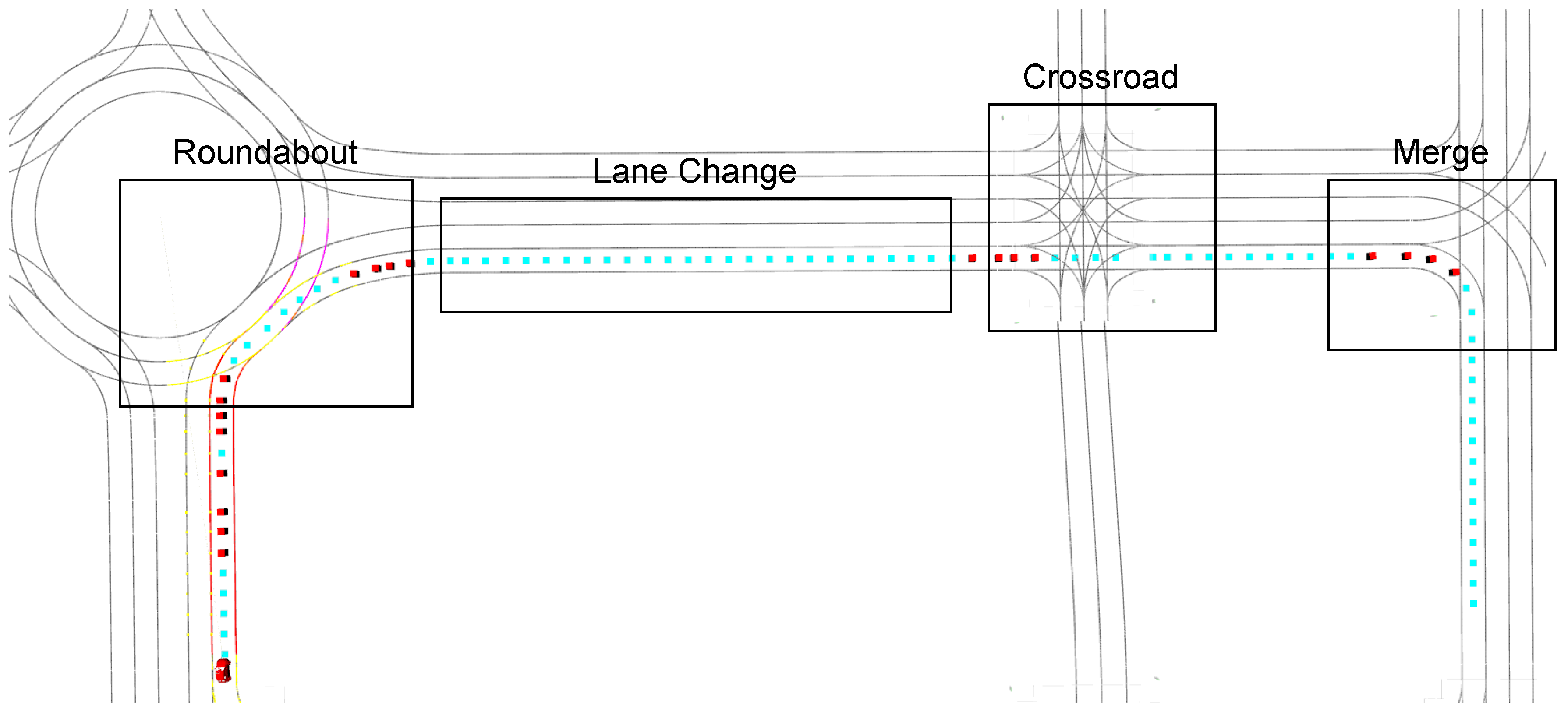

- Scenario Selector: This module selects the agent to be executed. The tactical trajectory stores the locations where each use case starts and ends. The "Follow Path" agent is active by default. When the vehicle reaches the start location of a use case, the selector activates the corresponding agent. Once the end of that use case is reached, the selector switches back to the "Follow Path" agent.

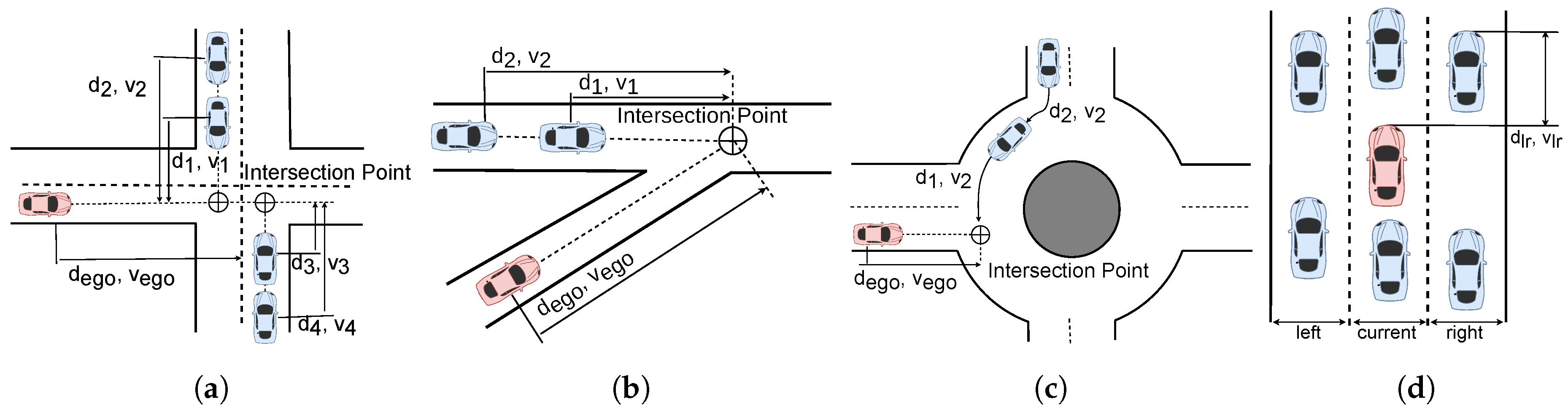
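The switching logic of the Scenario Selector can be sketched as a small state machine. This is a minimal sketch under stated assumptions. Use cases are stored as hypothetical `UseCase` records holding start and end points taken from the tactical trajectory. "Reaching" a location is approximated by a distance threshold (`reach_radius`, 3 m here). All names are illustrative rather than taken from the authors' code.

```python
import math
from dataclasses import dataclass
from typing import List, Optional, Tuple

Point = Tuple[float, float]


@dataclass
class UseCase:
    agent: str    # hypothetical label, e.g. "Roundabout"
    start: Point  # location where the use case begins on the tactical trajectory
    end: Point    # location where the use case ends


class ScenarioSelector:
    DEFAULT_AGENT = "Follow Path"

    def __init__(self, use_cases: List[UseCase], reach_radius: float = 3.0):
        self.use_cases = use_cases
        self.reach_radius = reach_radius      # assumed threshold for "location reached" [m]
        self.active: Optional[UseCase] = None  # None means the default agent is running

    def _reached(self, position: Point, target: Point) -> bool:
        return math.dist(position, target) <= self.reach_radius

    def update(self, position: Point) -> str:
        """Return the name of the agent that should run at the current ego position."""
        if self.active is not None:
            # Inside a use case: hand control back once its end location is reached.
            if self._reached(position, self.active.end):
                self.active = None
        else:
            # Following the path: activate the first use case whose start is reached.
            for uc in self.use_cases:
                if self._reached(position, uc.start):
                    self.active = uc
                    break
        return self.active.agent if self.active else self.DEFAULT_AGENT
```

Calling `update` once per simulation step with the ego position returns "Follow Path" until a start point is reached. It then returns that use case's agent until the matching end point is reached.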

- Preprocessing: The tactical level also preprocesses perception data, transforming the global locations and velocities of surrounding vehicles into an observation vector. First, the global location of each vehicle is mapped to a specific waypoint on the HD map. Each waypoint is then associated with a particular lane and road. This information, together with the current scenario determined by the Scenario Selector, is used to build the observation vector. These vectors are scenario-specific: their content varies with the driving situation.

- Agents: The proposed architecture can execute five distinct behaviours (use cases). By default, the "Follow Path" behaviour is selected. In this behaviour, the operative level follows the global trajectory while keeping a safe distance from the leading vehicle, and no active decisions are made. When the Scenario Selector chooses a specific scenario, the corresponding agent (Lane Change, Roundabout, Merge or Crossroad) is activated. That agent then takes actions (drive, stop, turn left, turn right) based on its observation vector.
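A simplified version of the preprocessing step is sketched below. It rests on several assumptions:

- The HD map is available as a flat list of hypothetical `Waypoint` records carrying `road_id`, `lane_id` and the arc length `s`.
- Each vehicle is matched to its nearest waypoint by brute force.
- One illustrative observation layout is used: ego speed plus the longitudinal gap and relative speed of vehicles on the lanes of interest, padded to a fixed size. Gaps in `s` are only meaningful for lanes of the same road.

The actual scenario-specific vectors are not specified in this section. In CARLA, the map lookup would typically use `carla.Map.get_waypoint` instead of a linear search.

```python
import math
from dataclasses import dataclass
from typing import List, Sequence, Set, Tuple


@dataclass(frozen=True)
class Waypoint:
    x: float
    y: float
    road_id: int
    lane_id: int
    s: float  # distance along the road [m]


@dataclass
class Vehicle:
    x: float
    y: float
    speed: float  # [m/s]


def nearest_waypoint(waypoints: Sequence[Waypoint], x: float, y: float) -> Waypoint:
    """Brute-force projection of a global position onto the HD-map waypoints."""
    return min(waypoints, key=lambda w: math.hypot(w.x - x, w.y - y))


def build_observation(ego: Vehicle, others: List[Vehicle], waypoints: Sequence[Waypoint],
                      lanes_of_interest: Set[Tuple[int, int]],  # (road_id, lane_id) pairs
                      max_vehicles: int = 3) -> List[float]:
    """Hypothetical layout: [ego speed] + [delta_s, delta_v] per relevant vehicle, zero-padded."""
    ego_wp = nearest_waypoint(waypoints, ego.x, ego.y)
    features: List[Tuple[float, float]] = []
    for v in others:
        wp = nearest_waypoint(waypoints, v.x, v.y)
        # Keep only vehicles on the lanes that matter for the active scenario.
        if (wp.road_id, wp.lane_id) in lanes_of_interest:
            features.append((wp.s - ego_wp.s, v.speed - ego.speed))
    # Keep the closest vehicles (by longitudinal gap) and pad to a fixed length.
    features.sort(key=lambda f: abs(f[0]))
    features = features[:max_vehicles]
    features += [(0.0, 0.0)] * (max_vehicles - len(features))
    obs = [ego.speed]
    for ds, dv in features:
        obs.extend([ds, dv])
    return obs
```

Changing `lanes_of_interest` per scenario is one simple way to obtain the scenario-specific vectors described above. For example, a lane change would include the adjacent lane, and a merge would include the merging road.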

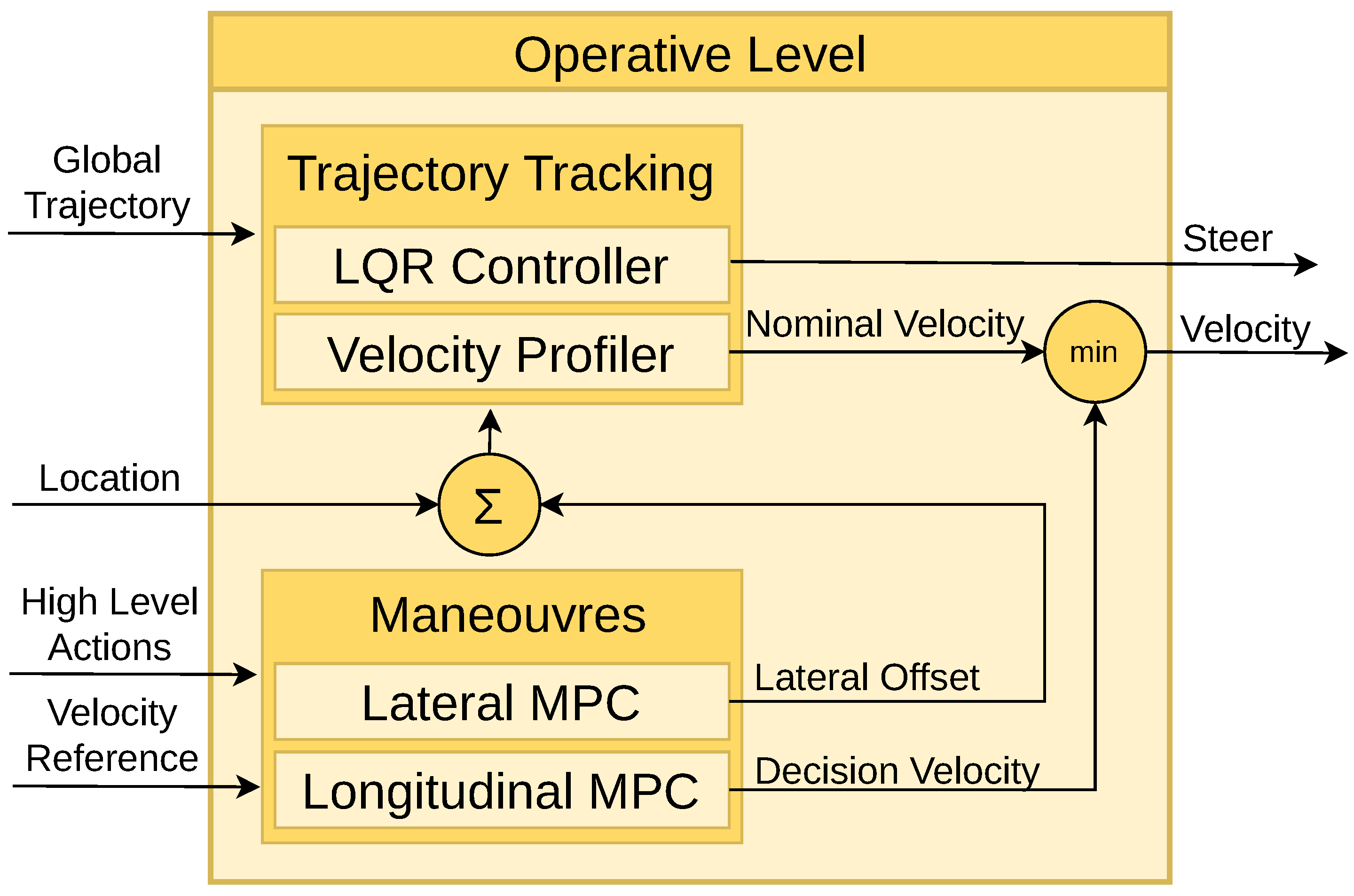

4.3. Operative Level

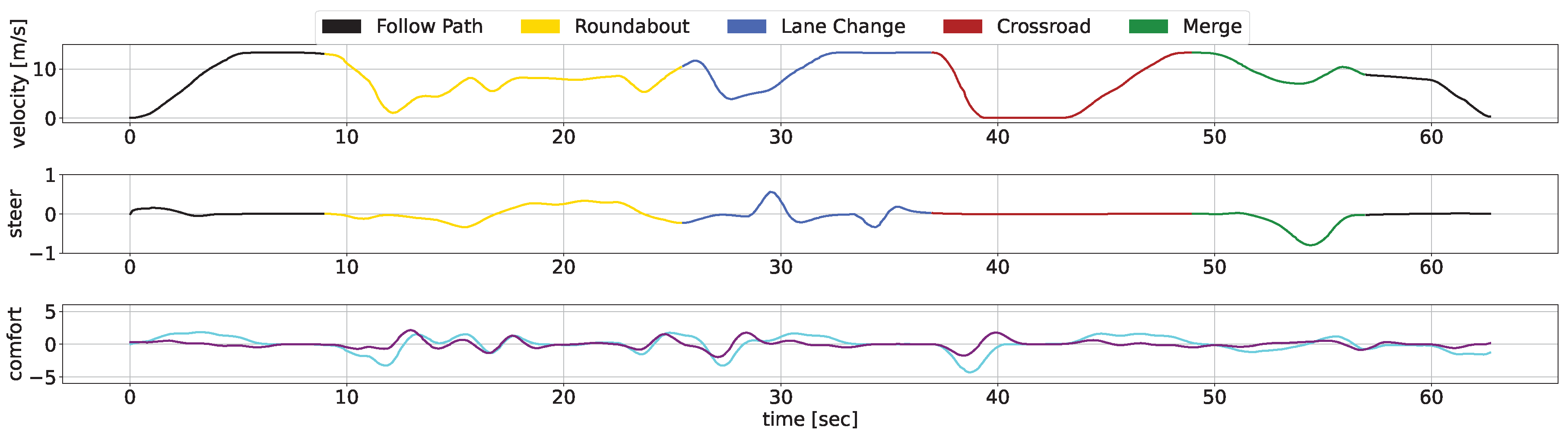

- Trajectory Tracking: Using the provided waypoints, a smooth trajectory is computed with the LQR controller. At each simulation time step, the lateral and orientation errors are calculated, and a steering command is generated to minimize them. In addition, a velocity command is derived from the curvature radius of the trajectory. These steering and velocity commands constitute the nominal commands, which enable the vehicle to follow the predefined path accurately.

- Manoeuvres: At the operative level, manoeuvres modify the nominal commands according to the tactical level’s requirements, covering three main tasks. First, when a preceding vehicle is detected, the MPC controller adapts the ego vehicle’s velocity to that of the vehicle ahead while keeping a safe distance. Second, if an agent demands a stop action, the decision velocity decreases smoothly, and the resulting command is the minimum of this constrained velocity and the nominal velocity. Finally, when a lane change is requested (left or right), the MPC controller generates a lateral offset to shift the vehicle’s position, and the LQR controller produces a smooth steering signal to carry out the lane change.
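The nominal-command computation can be illustrated with a discrete-time LQR on a linearised kinematic lateral-error model. This is a sketch under explicit assumptions, not the authors' controller:

- The state is the lateral error $e_y$ and heading error $e_\psi$.
- The steering angle $\delta$ enters through a small-angle kinematic bicycle model with wheelbase `wheelbase`, and curvature feedforward is ignored.
- The gain is obtained by iterating the discrete Riccati equation.
- The curvature-based speed uses a hypothetical lateral-acceleration bound, $v = \min(v_{max}, \sqrt{a_{lat,max} R})$.
- The stop manoeuvre is modelled as a constant-deceleration ramp, combined with the nominal speed through the minimum rule described above.

All numeric values are illustrative.

```python
import math
from typing import List, Tuple

Matrix = List[List[float]]


def matmul(X: Matrix, Y: Matrix) -> Matrix:
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y))) for j in range(len(Y[0]))]
            for i in range(len(X))]


def transpose(X: Matrix) -> Matrix:
    return [list(row) for row in zip(*X)]


def lateral_error_model(v: float, dt: float, wheelbase: float) -> Tuple[Matrix, Matrix]:
    """Discrete kinematic model, state x = [e_y, e_psi], input u = steering angle (small-angle)."""
    A = [[1.0, v * dt], [0.0, 1.0]]
    B = [[0.0], [v * dt / wheelbase]]
    return A, B


def _gain(P: Matrix, A: Matrix, B: Matrix, r: float) -> Matrix:
    """K = (r + B^T P B)^-1 B^T P A for a single input (1 x n row)."""
    Bt = transpose(B)
    s = r + matmul(Bt, matmul(P, B))[0][0]
    return [[g / s for g in matmul(Bt, matmul(P, A))[0]]]


def dlqr_gain(A: Matrix, B: Matrix, Q: Matrix, r: float, iters: int = 1000) -> List[float]:
    """Iterate the discrete Riccati equation; the returned K gives the control law u = -K x."""
    P = [row[:] for row in Q]
    At = transpose(A)
    n = len(Q)
    for _ in range(iters):
        K = _gain(P, A, B, r)
        AtPA = matmul(At, matmul(P, A))
        AtPBK = matmul(matmul(At, matmul(P, B)), K)
        # P <- Q + A^T P A - A^T P B K
        P = [[Q[i][j] + AtPA[i][j] - AtPBK[i][j] for j in range(n)] for i in range(n)]
    return _gain(P, A, B, r)[0]


def steering_command(K: List[float], e_y: float, e_psi: float, max_steer: float = 0.5) -> float:
    """LQR feedback u = -K x, saturated to an assumed steering limit [rad]."""
    delta = -(K[0] * e_y + K[1] * e_psi)
    return max(-max_steer, min(max_steer, delta))


def curvature_speed(radius: float, v_max: float = 13.9, a_lat_max: float = 2.0) -> float:
    """Nominal speed limited so that v^2 / R stays below a lateral-acceleration bound."""
    if math.isinf(radius):
        return v_max
    return min(v_max, math.sqrt(a_lat_max * abs(radius)))


def stop_speed(distance_to_stop: float, decel: float = 2.0) -> float:
    """Speed profile that reaches zero at the stop point under constant deceleration."""
    return math.sqrt(2.0 * decel * max(distance_to_stop, 0.0))


def speed_command(nominal: float, stop_requested: bool, distance_to_stop: float) -> float:
    # Stop action: command the minimum of the constrained and the nominal velocity.
    return min(nominal, stop_speed(distance_to_stop)) if stop_requested else nominal
```

The gain depends on speed through `A` and `B`, so in practice it would be recomputed (or scheduled) as the vehicle speeds up or slows down. The lane-change offset produced by the MPC could be injected by shifting the reference from which $e_y$ is measured.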

5. Experiments

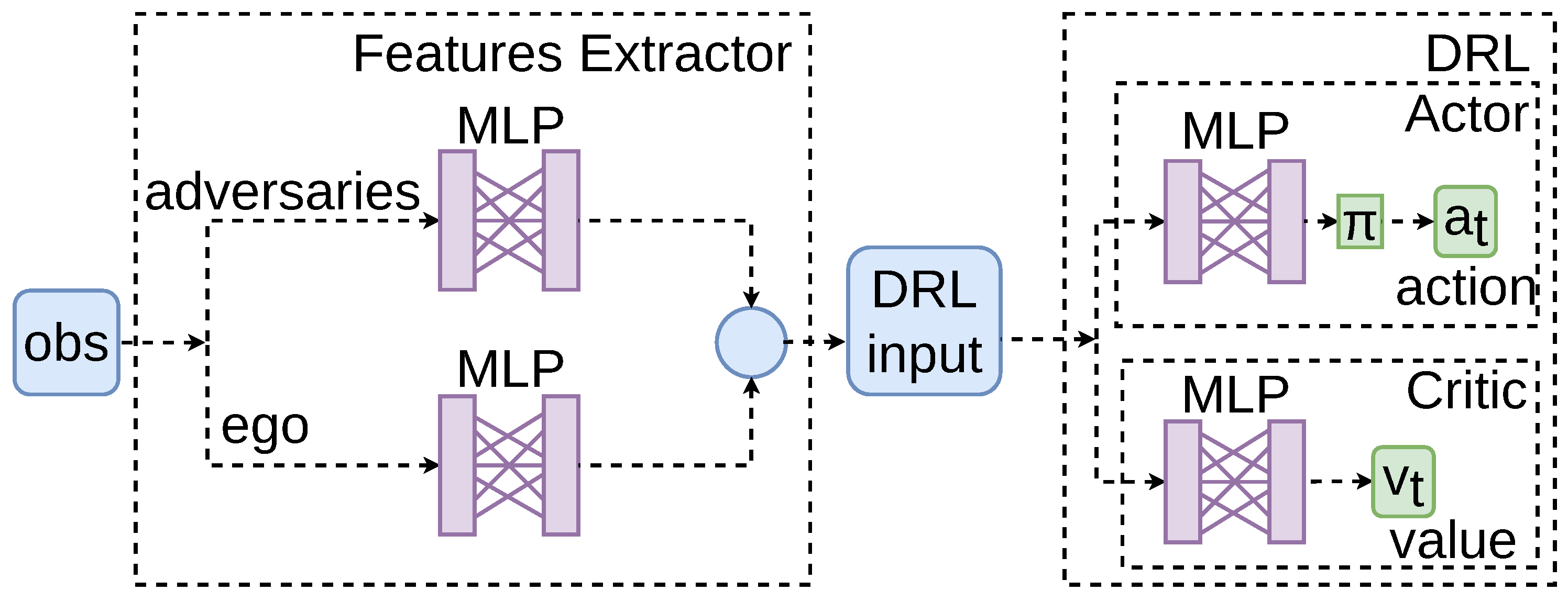

- Features Extractor Module. In line with our previous research, which showed that a feature extraction module improves training convergence [37], this work incorporates such a module, consisting of a dense Multi-Layer Perceptron (MLP) that processes the observations from the environment. Information about the adversarial vehicles and the ego vehicle is processed separately by the feature extractor. The outputs are then concatenated into a single vector, which serves as the input to the DRL algorithms.

- Deep Reinforcement Learning Algorithms. An actor-critic framework is adopted. Within this framework, one MLP acts as the actor, selecting the actions to take, while a separate MLP acts as the critic, estimating the value of those actions.
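The data flow through these two components can be sketched in plain Python. This is an illustrative forward pass only, and it makes assumptions the text does not state:

- One single-layer ReLU extractor processes the ego features, and a second extractor is shared across all adversarial vehicles.
- Weights are randomly initialised and layer sizes are arbitrary.
- The actor is a softmax head over the four agent actions listed in Section 4.2.
- The critic is a scalar value head.

Training the networks is not shown.

```python
import math
import random
from typing import List, Sequence, Tuple

Layer = Tuple[List[List[float]], List[float]]
ACTIONS = ["drive", "stop", "turn_left", "turn_right"]


def dense(in_dim: int, out_dim: int, rng: random.Random) -> Layer:
    """Randomly initialised weight matrix and zero bias for a fully connected layer."""
    scale = 1.0 / math.sqrt(in_dim)
    W = [[rng.uniform(-scale, scale) for _ in range(in_dim)] for _ in range(out_dim)]
    return W, [0.0] * out_dim


def forward(layer: Layer, x: Sequence[float], relu: bool = True) -> List[float]:
    W, b = layer
    y = [sum(w * xj for w, xj in zip(row, x)) + bi for row, bi in zip(W, b)]
    return [max(0.0, v) for v in y] if relu else y


def softmax(z: Sequence[float]) -> List[float]:
    m = max(z)  # subtract the max for numerical stability
    e = [math.exp(v - m) for v in z]
    total = sum(e)
    return [v / total for v in e]


class ActorCritic:
    def __init__(self, ego_dim: int, adv_dim: int, n_adv: int, hidden: int = 16, seed: int = 0):
        rng = random.Random(seed)
        self.n_adv = n_adv
        self.ego_fe = dense(ego_dim, hidden, rng)  # feature extractor for the ego vehicle
        self.adv_fe = dense(adv_dim, hidden, rng)  # feature extractor shared by adversaries
        feat_dim = hidden * (1 + n_adv)
        self.actor = (dense(feat_dim, hidden, rng), dense(hidden, len(ACTIONS), rng))
        self.critic = (dense(feat_dim, hidden, rng), dense(hidden, 1, rng))

    def features(self, ego: Sequence[float], adversaries: Sequence[Sequence[float]]) -> List[float]:
        if len(adversaries) != self.n_adv:
            raise ValueError("expected a fixed number of adversarial vehicles")
        # Process ego and adversarial information separately, then concatenate.
        out = forward(self.ego_fe, ego)
        for adv in adversaries:
            out += forward(self.adv_fe, adv)
        return out

    def __call__(self, ego, adversaries) -> Tuple[List[float], float]:
        f = self.features(ego, adversaries)
        probs = softmax(forward(self.actor[1], forward(self.actor[0], f), relu=False))
        value = forward(self.critic[1], forward(self.critic[0], f), relu=False)[0]
        return probs, value
```

The actor's output is a probability for each discrete action. The critic's output is the state-value estimate that an actor-critic algorithm would use to weight the actor's updates.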

5.1. POMDP Formulation

5.1.1. State

5.1.2. Observation

5.1.3. Action

5.1.4. Reward

- Reward based on the velocity: ;

- Reward for reaching the end of the road: ;

- Penalty for collisions: ;

5.2. Evaluation Metrics

- Success Rate (%): The percentage of episodes that the agent completes successfully during simulation, providing a direct measure of safety.

- Average of 95th Percentile of Jerk (per episode, in m/s³): Jerk is the rate of change of acceleration. This metric reflects the smoothness of driving, which relates to passenger comfort.

- Average of Maximum Jerk (per episode, in m/s³): This metric measures the highest jerk experienced in each episode.

- Average of 95th Percentile of Acceleration (per episode, in m/s²): This metric provides insight into how aggressively the vehicle accelerates, impacting both comfort and efficiency.

- Average Time of Episode Completion (in seconds): It measures the duration taken to complete an episode, indicating the efficiency of the agent.

- Average Speed (in m/s): This metric assesses the agent’s ability to maintain a consistent and efficient speed throughout the episode.
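These per-episode metrics can be computed from logged speed samples. The sketch below is one possible implementation under assumptions the text leaves open:

- Samples arrive at a fixed period `dt`.
- Longitudinal acceleration and jerk are obtained by finite differences of speed.
- Absolute values are taken before computing the 95th percentile and the maximum.
- The percentile uses linear interpolation between ranks, as NumPy's default `linear` method does.
- Episode-level values are averaged over all episodes, successful or not.

The `Episode` record and function names are hypothetical.

```python
import math
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List, Sequence


def percentile(values: Sequence[float], q: float) -> float:
    """q-th percentile (0-100) with linear interpolation between closest ranks."""
    data = sorted(values)
    pos = (len(data) - 1) * q / 100.0
    lo, hi = math.floor(pos), math.ceil(pos)
    return data[lo] + (data[hi] - data[lo]) * (pos - lo)


def diff(x: Sequence[float], dt: float) -> List[float]:
    """Forward finite difference of a uniformly sampled signal."""
    return [(b - a) / dt for a, b in zip(x, x[1:])]


@dataclass
class Episode:
    speeds: List[float]  # speed samples [m/s], one every dt seconds
    dt: float            # sampling period [s]
    success: bool


def episode_metrics(ep: Episode) -> Dict[str, float]:
    if len(ep.speeds) < 3:
        raise ValueError("need at least three samples to estimate jerk")
    acc = diff(ep.speeds, ep.dt)  # [m/s^2]
    jerk = diff(acc, ep.dt)       # [m/s^3]
    abs_jerk = [abs(j) for j in jerk]
    return {
        "jerk_p95": percentile(abs_jerk, 95),
        "jerk_max": max(abs_jerk),
        "acc_p95": percentile([abs(a) for a in acc], 95),
        "time": (len(ep.speeds) - 1) * ep.dt,  # episode duration [s]
        "speed": mean(ep.speeds),              # average speed [m/s]
    }


def summarise(episodes: List[Episode]) -> Dict[str, float]:
    """Average every per-episode metric over episodes and add the success rate [%]."""
    per_episode = [episode_metrics(ep) for ep in episodes]
    summary = {k: mean(m[k] for m in per_episode) for k in per_episode[0]}
    summary["success_rate"] = 100.0 * sum(ep.success for ep in episodes) / len(episodes)
    return summary
```

Finite-difference jerk amplifies sensor noise. A real pipeline would likely filter the speed or acceleration signal before differentiating.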

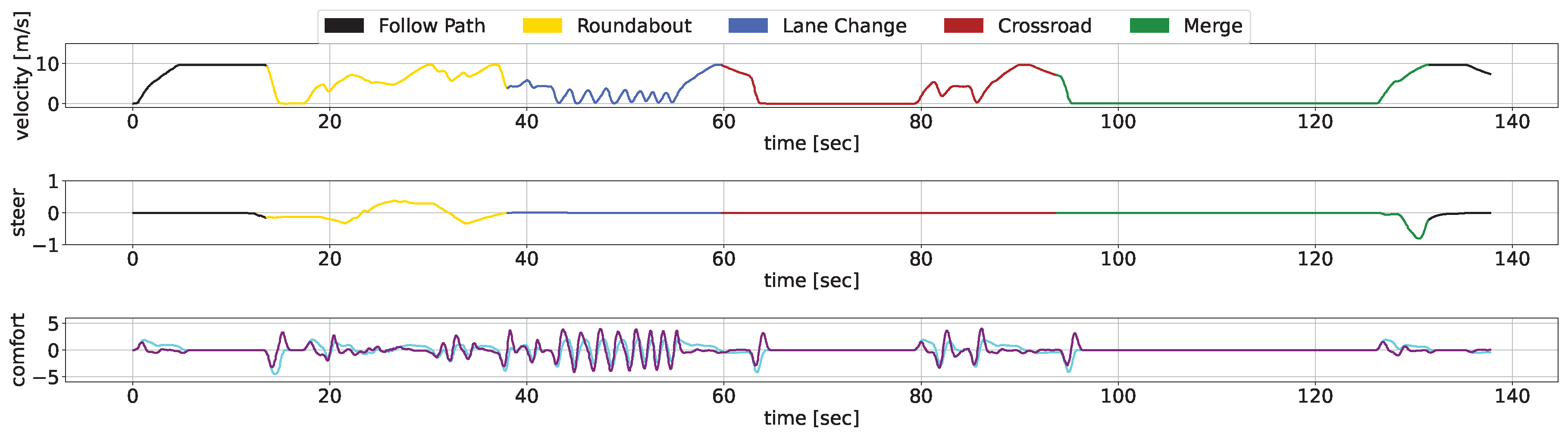

5.3. Concatenated Use Cases Scenario

6. Conclusions and Future Works

Funding

References

1. Rosenzweig, J.; Bartl, M. A review and analysis of literature on autonomous driving. E-Journal Making-of Innovation 2015, pp. 1–57.

2. Deichmann, J. Autonomous Driving’s Future: Convenient and Connected; McKinsey, 2023.

3. Wansley, M. The End of Accidents. UC Davis L. Rev. 2021, 55, 269.

4. Hubmann, C.; Becker, M.; Althoff, D.; Lenz, D.; Stiller, C. Decision making for autonomous driving considering interaction and uncertain prediction of surrounding vehicles. 2017 IEEE Intelligent Vehicles Symposium (IV). IEEE, 2017, pp. 1671–1678.

5. Wang, X.; Wang, S.; Liang, X.; Zhao, D.; Huang, J.; Xu, X.; Dai, B.; Miao, Q. Deep reinforcement learning: A survey. IEEE Transactions on Neural Networks and Learning Systems 2022.

6. Moerland, T.M.; Broekens, J.; Jonker, C.M. Model-based Reinforcement Learning: A Survey. CoRR 2020, abs/2006.16712.

7. Dinneweth, J.; Boubezoul, A.; Mandiau, R.; Espié, S. Multi-agent reinforcement learning for autonomous vehicles: A survey. Autonomous Intelligent Systems 2022, 2, 27.

8. Sun, Q.; Zhang, L.; Yu, H.; Zhang, W.; Mei, Y.; Xiong, H. Hierarchical reinforcement learning for dynamic autonomous vehicle navigation at intelligent intersections. Proceedings of the 29th ACM SIGKDD Conference on Knowledge Discovery and Data Mining, 2023, pp. 4852–4861.

9. Gutiérrez-Moreno, R.; Barea, R.; López-Guillén, E.; Arango, F.; Abdeselam, N.; Bergasa, L.M. Hybrid Decision Making for Autonomous Driving in Complex Urban Scenarios. 2023 IEEE Intelligent Vehicles Symposium (IV), 2023.

10. Dosovitskiy, A.; Ros, G.; Codevilla, F.; Lopez, A.; Koltun, V. CARLA: An Open Urban Driving Simulator. Proceedings of the 1st Annual Conference on Robot Learning; Levine, S.; Vanhoucke, V.; Goldberg, K., Eds. PMLR, 2017, Vol. 78, Proceedings of Machine Learning Research, pp. 1–16.

11. Tram, T.; Batkovic, I.; Ali, M.; Sjöberg, J. Learning When to Drive in Intersections by Combining Reinforcement Learning and Model Predictive Control. 2019 IEEE Intelligent Transportation Systems Conference (ITSC), 2019, pp. 3263–3268.

12. Agarwal, P.; Rahman, A.A.; St-Charles, P.L.; Prince, S.J.; Kahou, S.E. Transformers in reinforcement learning: a survey. arXiv preprint arXiv:2307.05979 2023.

13. Huang, Z.; Liu, H.; Wu, J.; Huang, W.; Lv, C. Learning interaction-aware motion prediction model for decision-making in autonomous driving. 2023 IEEE 26th International Conference on Intelligent Transportation Systems (ITSC). IEEE, 2023, pp. 4820–4826.

14. Fu, J.; Shen, Y.; Jian, Z.; Chen, S.; Xin, J.; Zheng, N. InteractionNet: Joint Planning and Prediction for Autonomous Driving with Transformers. 2023 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS). IEEE, 2023, pp. 9332–9339.

15. Liu, H.; Huang, Z.; Mo, X.; Lv, C. Augmenting Reinforcement Learning with Transformer-based Scene Representation Learning for Decision-making of Autonomous Driving, 2023, [arXiv:cs.LG/2208.12263].

16. Chen, Y.; Dong, C.; Palanisamy, P.; Mudalige, P.; Muelling, K.; Dolan, J.M. Attention-based hierarchical deep reinforcement learning for lane change behaviors in autonomous driving. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops, 2019.

17. Cao, D.; Zhao, J.; Hu, W.; Ding, F.; Huang, Q.; Chen, Z. Attention enabled multi-agent DRL for decentralized volt-VAR control of active distribution system using PV inverters and SVCs. IEEE Transactions on Sustainable Energy 2021, 12, 1582–1592.

18. Seong, H.; Jung, C.; Lee, S.; Shim, D.H. Learning to Drive at Unsignalized Intersections using Attention-based Deep Reinforcement Learning. 2021 IEEE International Intelligent Transportation Systems Conference (ITSC), 2021, pp. 559–566.

19. Avilés, H.; Negrete, M.; Reyes, A.; Machucho, R.; Rivera, K.; de-la Garza, G.; Petrilli, A. Autonomous Behavior Selection For Self-driving Cars Using Probabilistic Logic Factored Markov Decision Processes. Applied Artificial Intelligence 2024, 38, 2304942.

20. Lu, J.; Alcan, G.; Kyrki, V. Integrating Expert Guidance for Efficient Learning of Safe Overtaking in Autonomous Driving Using Deep Reinforcement Learning. arXiv preprint arXiv:2308.09456 2023.

21. Aksjonov, A.; Kyrki, V. A Safety-Critical Decision Making and Control Framework Combining Machine Learning and Rule-based Algorithms, 2022, [arXiv:cs.AI/2201.12819].

22. S Kassem, N.; F Saad, S.; I Elshaaer, Y. Behavior Planning for Autonomous Driving: Methodologies, Applications, and Future Orientation 2023.

23. Gong, X.; Wang, B.; Liang, S. Collision-Free Cooperative Motion Planning and Decision-Making for Connected and Automated Vehicles at Unsignalized Intersections. IEEE Transactions on Systems, Man, and Cybernetics: Systems 2024.

24. Sefati, M.; Chandiramani, J.; Kreiskoether, K.; Kampker, A.; Baldi, S. Towards tactical behaviour planning under uncertainties for automated vehicles in urban scenarios. 2017 IEEE 20th International Conference on Intelligent Transportation Systems (ITSC), 2017, pp. 1–7.

25. O’Kelly, M.; Sinha, A.; Namkoong, H.; Duchi, J.C.; Tedrake, R. Scalable End-to-End Autonomous Vehicle Testing via Rare-event Simulation. CoRR 2018, abs/1811.00145.

26. Ma, Y.; Wang, Z.; Yang, H.; Yang, L. Artificial intelligence applications in the development of autonomous vehicles: a survey. IEEE/CAA Journal of Automatica Sinica 2020, 7, 315–329.

27. Kessler, T.; Bernhard, J.; Buechel, M.; Esterle, K.; Hart, P.; Malovetz, D.; Truong Le, M.; Diehl, F.; Brunner, T.; Knoll, A. Bridging the Gap between Open Source Software and Vehicle Hardware for Autonomous Driving. 2019 IEEE Intelligent Vehicles Symposium (IV), 2019, pp. 1612–1619.

28. Yurtsever, E.; Lambert, J.; Carballo, A.; Takeda, K. A Survey of Autonomous Driving: Common Practices and Emerging Technologies. IEEE Access 2020, 8, 58443–58469.

29. Gómez-Huélamo, C.; Diaz-Diaz, A.; Araluce, J.; Ortiz, M.E.; Gutiérrez, R.; Arango, F.; Llamazares, Á.; Bergasa, L.M. How to build and validate a safe and reliable Autonomous Driving stack? A ROS based software modular architecture baseline. 2022 IEEE Intelligent Vehicles Symposium (IV). IEEE, 2022, pp. 1282–1289.

30. Liu, Q.; Li, X.; Yuan, S.; Li, Z. Decision-Making Technology for Autonomous Vehicles Learning-Based Methods, Applications and Future Outlook, 2021, [arXiv:cs.RO/2107.01110].

31. Shani, G.; Pineau, J.; Kaplow, R. A survey of point-based POMDP solvers. Autonomous Agents and Multi-Agent Systems 2013, 27, 1–51.

32. Schulman, J.; Levine, S.; Abbeel, P.; Jordan, M.; Moritz, P. Trust Region Policy Optimization. Proceedings of the 32nd International Conference on Machine Learning; Bach, F.; Blei, D., Eds.; PMLR: Lille, France, 2015; Vol. 37, Proceedings of Machine Learning Research, pp. 1889–1897.

33. Diaz-Diaz, A.; Ocaña, M.; Llamazares, A.; Gómez-Huélamo, C.; Revenga, P.; Bergasa, L.M. HD maps: Exploiting OpenDRIVE potential for Path Planning and Map Monitoring. 2022 IEEE Intelligent Vehicles Symposium (IV), 2022.

34. Gutiérrez, R.; López-Guillén, E.; Bergasa, L.M.; Barea, R.; Pérez, Ó.; Gómez-Huélamo, C.; Arango, F.; del Egido, J.; López-Fernández, J. A Waypoint Tracking Controller for Autonomous Road Vehicles Using ROS Framework. Sensors 2020, 20.

35. Abdeselam, N.; Gutiérrez-Moreno, R.; López-Guillén, E.; Barea, R.; Montiel-Marín, S.; Bergasa, L.M. Hybrid MPC and Spline-based Controller for Lane Change Maneuvers in Autonomous Vehicles. 2023 IEEE International Conference on Intelligent Transportation Systems (ITSC), 2023, pp. 1–6.

36. Raffin, A.; Hill, A.; Gleave, A.; Kanervisto, A.; Ernestus, M.; Dormann, N. Stable-Baselines3: Reliable Reinforcement Learning Implementations. Journal of Machine Learning Research 2021, 22, 1–8.

37. Gutiérrez-Moreno, R.; Barea, R.; López-Guillén, E.; Araluce, J.; Bergasa, L.M. Reinforcement Learning-Based Autonomous Driving at Intersections in CARLA Simulator. Sensors 2022, 22.

| Architecture | Transformer-based | Attention + LSTM | Decision-Control | Tactical Behaviour | Ours |
|---|---|---|---|---|---|
| Ref | [15] | [18] | [21] | [24] | - |
| State Dimensionality | High | High | Low | Low | Low |
| Preprocessing | Transformer | Attention | - | - | Map |
| Action | Low-level | Low-level | High-level | High-level | High-level |
| Control Signal | Sharp | Sharp | Smooth | Smooth | Smooth |
| Multiple Scenario | √ | √ | × | √ | √ |
| Concatenated Scenario | × | × | × | √ | √ |
| Computational Cost | High | High | High | Low | Low |
| Scalability | √ | √ | × | × | √ |
| Real Implementation | × | √ | × | × | √ |

| Metric | Agent | Concatenated Scenario |
|---|---|---|
| Success Rate [%] ↑ | Ours | 95.76 |
| | Autopilot | 100 |
| 95th Percentile of Jerk (per episode) [m/s³] ↓ | Ours | 4.37 |
| | Autopilot | 8.63 |
| Maximum Jerk (per episode) [m/s³] ↓ | Ours | 6.20 |
| | Autopilot | 11.94 |
| 95th Percentile of Acceleration (per episode) [m/s²] ↓ | Ours | 3.33 |
| | Autopilot | 2.98 |
| Time [s] ↓ | Ours | 76.85 |
| | Autopilot | 140.23 |
| Speed [m/s] ↑ | Ours | 7.60 |
| | Autopilot | 2.75 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).