Submitted: 24 September 2024

Posted: 25 September 2024


Abstract

Keywords:

1. Introduction

1.1. Research Background and Significance

1.2. Research Objectives and Issues

- Limited adaptability: Many traditional models perform well under specific market conditions or for particular stocks, but their predictive accuracy drops significantly when the market environment changes or when they are applied to other stocks.

- Insufficient capture of nonlinear relationships: Changes in stock prices often exhibit complex nonlinear relationships that linear models struggle to fully capture.

- Limitations in feature extraction: Existing methods may not fully exploit the potentially valuable information available during data processing and feature engineering, so the features fed into the prediction models are incomplete or uninformative.

1.3. Research Methods

- Data: A large dataset of historical trading data covering multiple periods and industries was collected, including detailed information such as opening prices, closing prices, highest and lowest prices, and trading volumes.

- Techniques: A combination of convolutional neural networks (CNNs) and long short-term memory (LSTM) networks was utilized, taking advantage of the strengths of CNNs in feature extraction and the capability of LSTMs to handle long-term dependencies in time series data.

- Algorithms: Adaptive optimization algorithms, such as the Adam optimizer, were adopted to adjust the parameters of the model and minimize prediction errors.
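The Adam update mentioned above can be sketched in a few lines of NumPy. This is a generic illustration of the standard algorithm on a toy one-parameter least-squares fit, not the authors' training code: the function name `adam_minimize`, the learning rate of 0.1 and the step count are arbitrary choices for the toy problem, while β1 = 0.9, β2 = 0.999 and ε = 1e-8 are the commonly used defaults.

```python
import numpy as np


def adam_minimize(grad_fn, theta0, lr=0.1, beta1=0.9, beta2=0.999, eps=1e-8, steps=500):
    """Minimize a differentiable loss with the standard Adam update rule.

    grad_fn: callable returning the gradient of the loss at theta.
    """
    theta = np.asarray(theta0, dtype=float).copy()
    m = np.zeros_like(theta)  # running mean of the gradient (first moment)
    v = np.zeros_like(theta)  # running mean of the squared gradient (second moment)
    for t in range(1, steps + 1):
        g = grad_fn(theta)
        m = beta1 * m + (1 - beta1) * g
        v = beta2 * v + (1 - beta2) * g * g
        # Bias correction: m and v start at zero, so early estimates are rescaled.
        m_hat = m / (1 - beta1 ** t)
        v_hat = v / (1 - beta2 ** t)
        # Per-parameter step size adapted by the second-moment estimate.
        theta -= lr * m_hat / (np.sqrt(v_hat) + eps)
    return theta


# Toy problem: fit y = w * x by minimizing the mean squared error.
x = np.array([1.0, 2.0, 3.0, 4.0])
y = 2.5 * x


def mse_grad(w):
    # d/dw mean((w*x - y)^2) = mean(2 * (w*x - y) * x)
    return np.array([np.mean(2 * (w[0] * x - y) * x)])


w_fit = adam_minimize(mse_grad, [0.0])
print(w_fit)
```

The fitted weight approaches the true slope of 2.5; in the actual model the same update would be applied to every network parameter, with gradients obtained by backpropagation.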

2. Related Work

2.1. Traditional Stock Price Prediction Methods

2.2. Application of Machine Learning in Stock Forecasting

2.3. Advances in Deep Learning for Financial Applications

3. Data Preparation and Preprocessing

3.1. Data Description

- Opening Price (Open): The trading price at the start of the day, reflecting market participants’ initial assessment of the stock’s value.

- Closing Price (Close): Representing the final trading price at the end of the day, it is considered one of the most important price indicators and significantly influences investors’ decision-making.

- Highest Price (High): Recording the highest price reached during the day’s trading session, it indicates the market’s upward potential and resistance levels.

- Lowest Price (Low): Displaying the lowest price traded during the day, it reflects the market’s downside support and risk level.

- Trading Volume (Volume): The number of shares traded during the day, reflecting the market’s trading activity and the level of investor participation.
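Given these definitions, each trading day can be stored as one record, and the definitions themselves imply simple consistency rules (Open and Close must lie between Low and High, and volume cannot be negative) that are useful when screening raw data. The sketch below is illustrative only: the class name `DailyBar`, the `is_consistent` check and the sample values are hypothetical and are not taken from the study's dataset or cleaning procedure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DailyBar:
    """One trading day of the OHLCV fields (hypothetical record type)."""
    open: float
    high: float
    low: float
    close: float
    volume: float

    def is_consistent(self) -> bool:
        # High is the session maximum and Low the session minimum, so both
        # Open and Close must lie inside [Low, High]; volume cannot be negative.
        return (
            self.low <= min(self.open, self.close)
            and max(self.open, self.close) <= self.high
            and self.volume >= 0
        )


bars = [
    DailyBar(open=10.0, high=10.8, low=9.7, close=10.5, volume=12_000),
    DailyBar(open=10.5, high=10.4, low=10.1, close=10.2, volume=8_500),  # High below Open
]
flagged = [i for i, bar in enumerate(bars) if not bar.is_consistent()]
print(flagged)
```

Rows flagged this way would then be corrected or treated as missing before feature engineering.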

3.2. Data Cleaning and Handling Missing Values

3.3. Feature Engineering

4. Model Architecture and Methodology

4.1. Model Selection and Principles

4.2. Model Structure Design

- Convolutional Layers: Two convolutional layers extract local features from the input stock price data. The first uses 32 kernels of size 3 and the second uses 64 kernels of size 3; both use a stride of 1 and a ReLU activation function.

- Pooling Layers: Each convolutional layer is followed by a max-pooling layer with a window size of 2 and a stride of 2. Pooling halves the length of the feature maps, reducing the computational load while retaining the most salient features.

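- LSTM Layers: Two bidirectional LSTM layers follow, each with 100 units. A bidirectional LSTM processes each input sequence in both the forward and backward directions, so the representation of every time step draws on both earlier and later observations within the input window, which enhances the model’s understanding of the time series.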

- Fully Connected Layer: After the LSTM layers, a fully connected layer with 128 neurons and a ReLU activation function is connected, further integrating and transforming the features extracted by the LSTM layers.

- Output Layer: Finally, an output layer with 1 neuron and a linear activation function is used to predict stock prices.
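The layer list above can be assembled into a compact PyTorch sketch. PyTorch is chosen here for illustration only; the text does not name a framework. Several details are not specified and are assumed: five input features (the OHLCV fields of Section 3.1), a padding of 1 on each convolution so that only pooling shortens the sequence, "100 units" read as 100 hidden units per direction, and the prediction taken from the final time step of the last LSTM layer. The class name `CNNBiLSTM` and the 30-day window are hypothetical.

```python
import torch
import torch.nn as nn


class CNNBiLSTM(nn.Module):
    """CNN + bidirectional LSTM regressor following the layer list above.

    Assumed (not stated in the text): 5 input features, padding=1 on each
    convolution, 100 hidden units per LSTM direction, last-time-step readout.
    """

    def __init__(self, n_features: int = 5):
        super().__init__()
        self.cnn = nn.Sequential(
            nn.Conv1d(n_features, 32, kernel_size=3, stride=1, padding=1),
            nn.ReLU(),
            nn.MaxPool1d(kernel_size=2, stride=2),
            nn.Conv1d(32, 64, kernel_size=3, stride=1, padding=1),
            nn.ReLU(),
            nn.MaxPool1d(kernel_size=2, stride=2),
        )
        # Two stacked bidirectional LSTM layers, 100 hidden units per direction.
        self.lstm = nn.LSTM(input_size=64, hidden_size=100, num_layers=2,
                            batch_first=True, bidirectional=True)
        self.head = nn.Sequential(
            nn.Linear(2 * 100, 128),  # forward + backward hidden states
            nn.ReLU(),
            nn.Linear(128, 1),        # linear output: one predicted price
        )

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        # x: (batch, window_length, n_features)
        z = self.cnn(x.transpose(1, 2))  # Conv1d expects (batch, channels, length)
        z = z.transpose(1, 2)            # (batch, window_length // 4, 64)
        out, _ = self.lstm(z)            # (batch, window_length // 4, 200)
        return self.head(out[:, -1, :])  # (batch, 1)


model = CNNBiLSTM()
batch = torch.randn(8, 30, 5)  # 8 hypothetical windows of 30 days x 5 features
pred = model(batch)
print(pred.shape)
```

With a 30-day window, the two pooling layers shorten the sequence to 7 steps (30 → 15 → 7) before it reaches the LSTMs. Reading `out[:, -1, :]` is a common convention, but at the last step the backward direction has seen only one observation; concatenating the final hidden states of both directions from the LSTM's `h_n` output is an equally plausible alternative.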

4.3. Training Strategy and Optimization Algorithm

5. Experiments and Results Analysis

5.1. Experimental Setup

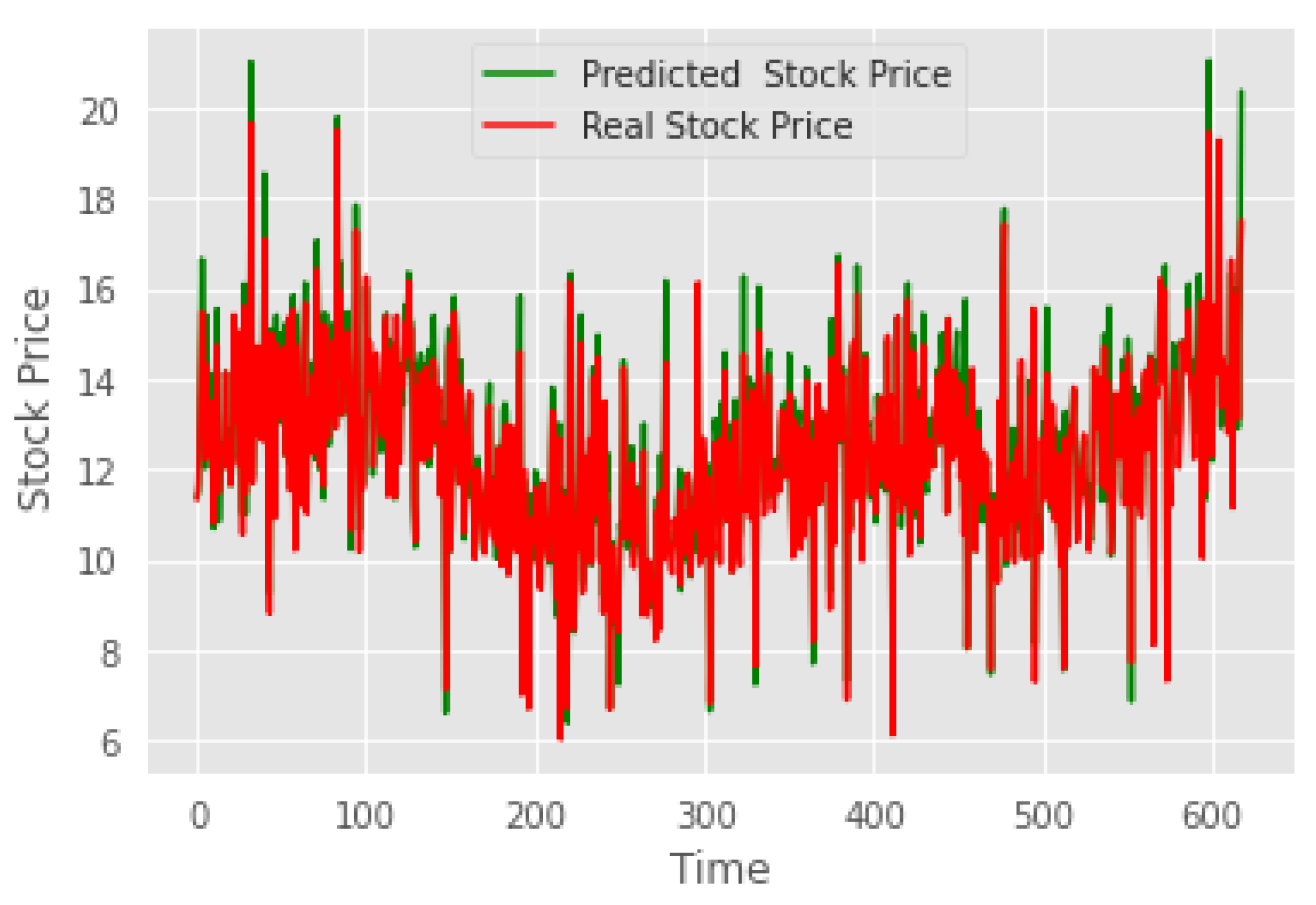

5.2. Results Evaluation and Comparison

6. Conclusion


| Model | Explained Variance | R² Score | Max Error |
|---|---|---|---|
| Linear Regression | 0.712 | 0.705 | 0.521 |
| Decision Tree | 0.785 | 0.776 | 0.483 |
| Random Forest | 0.851 | 0.842 | 0.426 |
| CNN Only | 0.887 | 0.879 | 0.388 |
| LSTM Only | 0.895 | 0.888 | 0.372 |
| CNN and LSTM Combined Model | 0.933 | 0.933 | 0.337 |
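The three metrics in the table can be computed as follows, assuming the variance column reports the explained variance score, as its values (never below the corresponding R² score) suggest. The function name `regression_metrics` and the sample values are hypothetical; the formulas are the standard definitions, which scikit-learn also implements as `explained_variance_score`, `r2_score` and `max_error`.

```python
def regression_metrics(y_true, y_pred):
    """Explained variance, R^2 and maximum absolute error for a forecast.

    Assumes y_true is not constant (otherwise both ratios divide by zero).
    """
    n = len(y_true)
    residuals = [t - p for t, p in zip(y_true, y_pred)]
    mean_true = sum(y_true) / n
    mean_res = sum(residuals) / n
    # Variances (population form; the 1/n factors cancel in the ratio).
    var_true = sum((t - mean_true) ** 2 for t in y_true) / n
    var_res = sum((r - mean_res) ** 2 for r in residuals) / n
    ss_res = sum(r ** 2 for r in residuals)                # residual sum of squares
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)     # total sum of squares
    return {
        "explained_variance": 1 - var_res / var_true,
        "r2": 1 - ss_res / ss_tot,
        "max_error": max(abs(r) for r in residuals),
    }


# Hypothetical prices and forecasts with a constant upward bias of 0.5.
metrics = regression_metrics([1.0, 2.0, 3.0, 4.0], [1.5, 2.5, 3.5, 4.5])
print(metrics)
```

Here the residuals have zero variance, so the explained variance is 1.0, while R² drops to 0.8 because it also penalizes the systematic offset. This is why the two columns can differ, and why equal values (as for the combined model) indicate predictions with little systematic bias.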

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).