Submitted: 22 September 2024

Posted: 24 September 2024


Abstract

Keywords:

1. Introduction

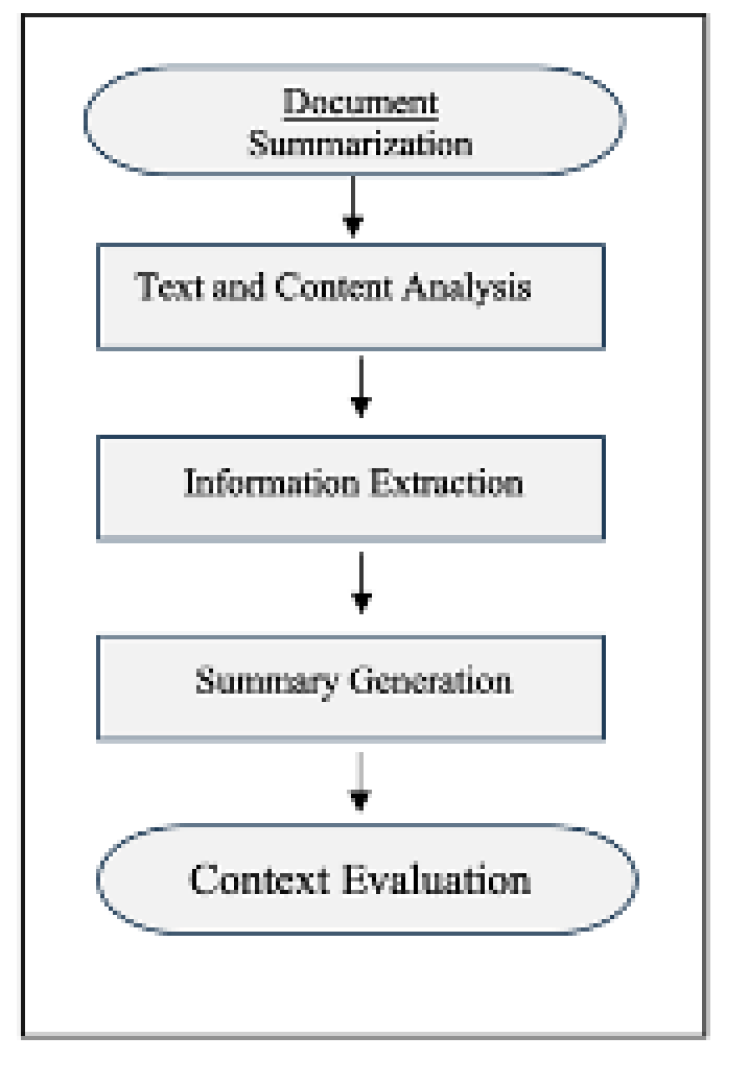

2. Traditional Document Summarization Techniques and Challenges

2.1. Traditional Methods of Document Summarization

2.2. Challenges in Multi-Document Summarization

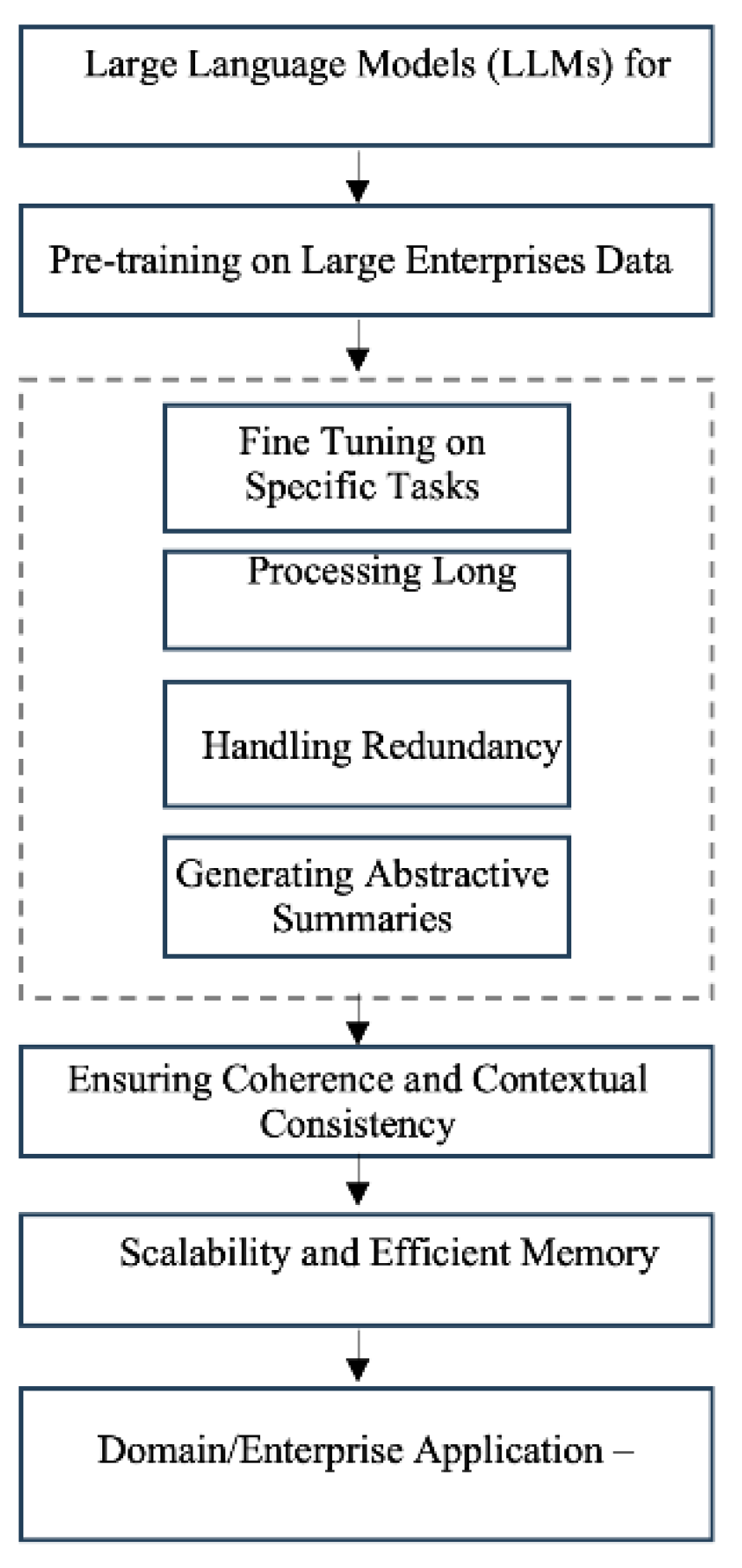

2.3. Large Language Models for Document Summarization

2.4. Domain-Specific Applications

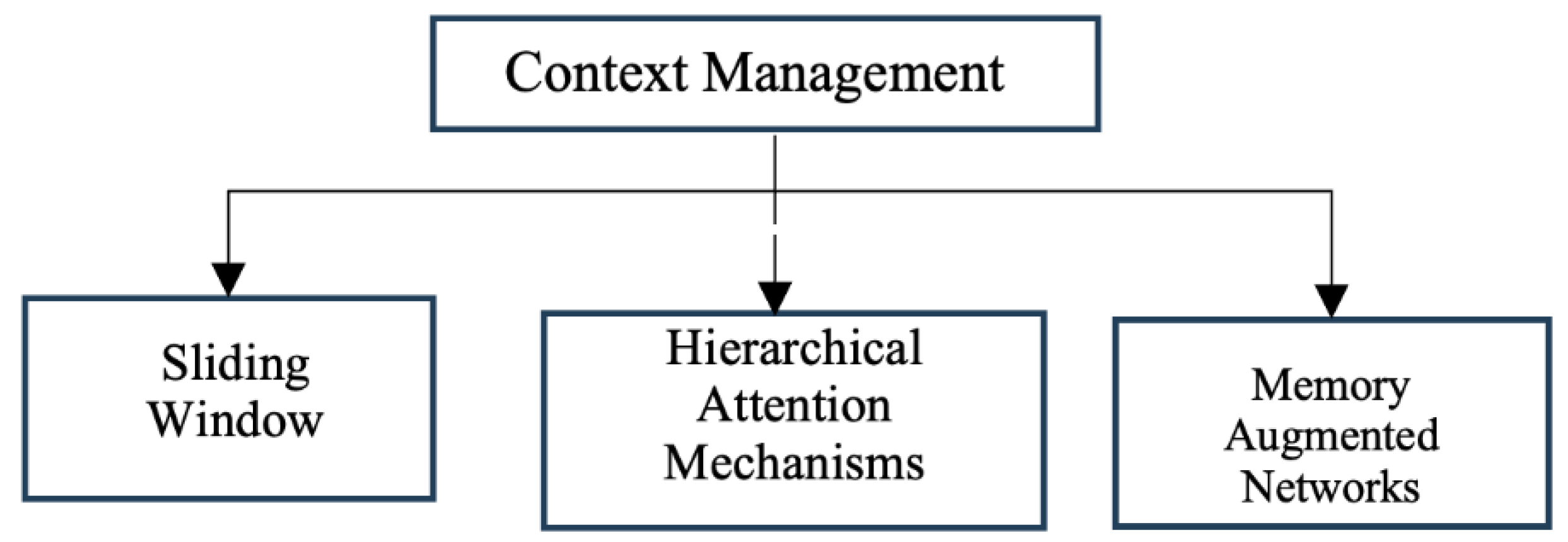

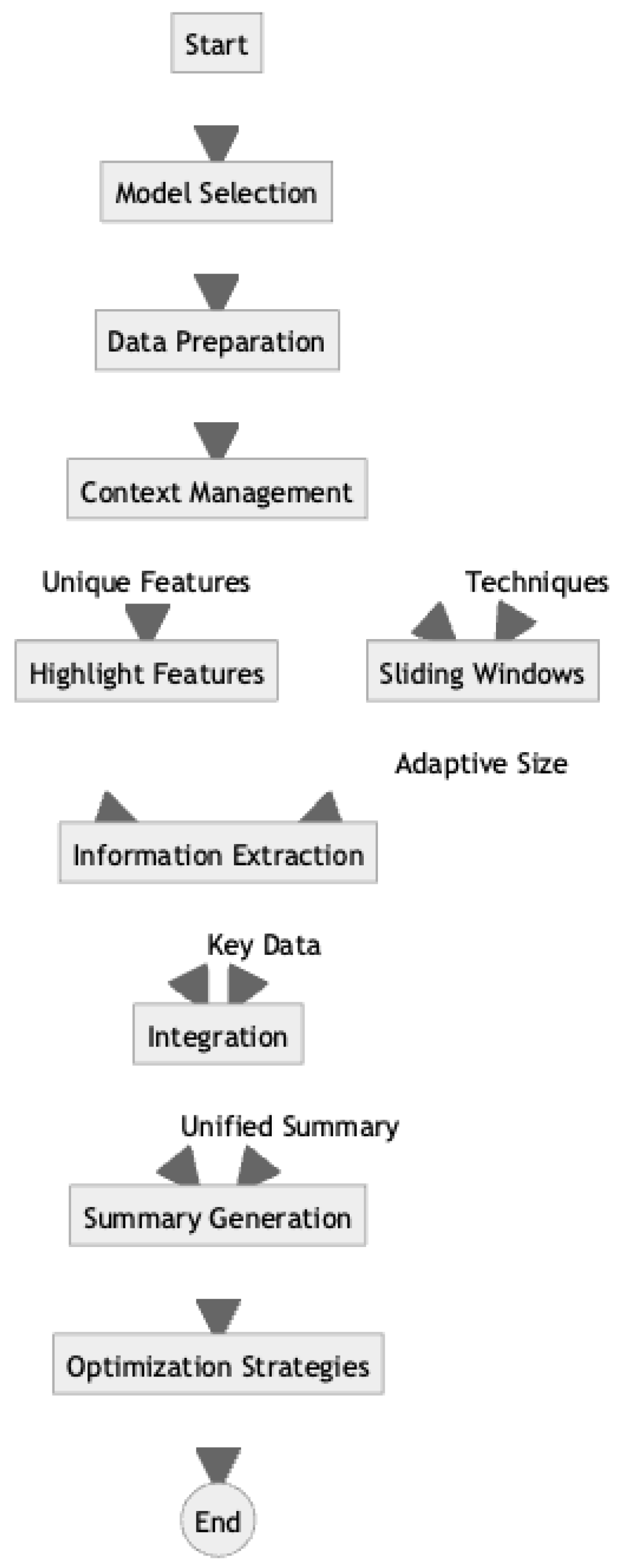

3. Methodology

4. Applications and Case Studies

4.1. Legal Domain

4.2. Medical Field

4.3. News Industry

4.4. Enterprise Applications

5. Challenges and Considerations

5.1. Technical Considerations

5.2. Ethical Considerations

5.3. Ensuring Factual Accuracy and Reliability of Summaries

5.4. Emerging Challenges

6. Conclusion and Future Direction


Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).