Submitted: 28 August 2024

Posted: 28 August 2024

Abstract

Keywords:

1. Introduction

2. Related Work

2.1. Real-Time Object Detector

2.2. Deep Learning-Based Multimodal Image Fusion

2.3. Attention Mechanism

3. Methods

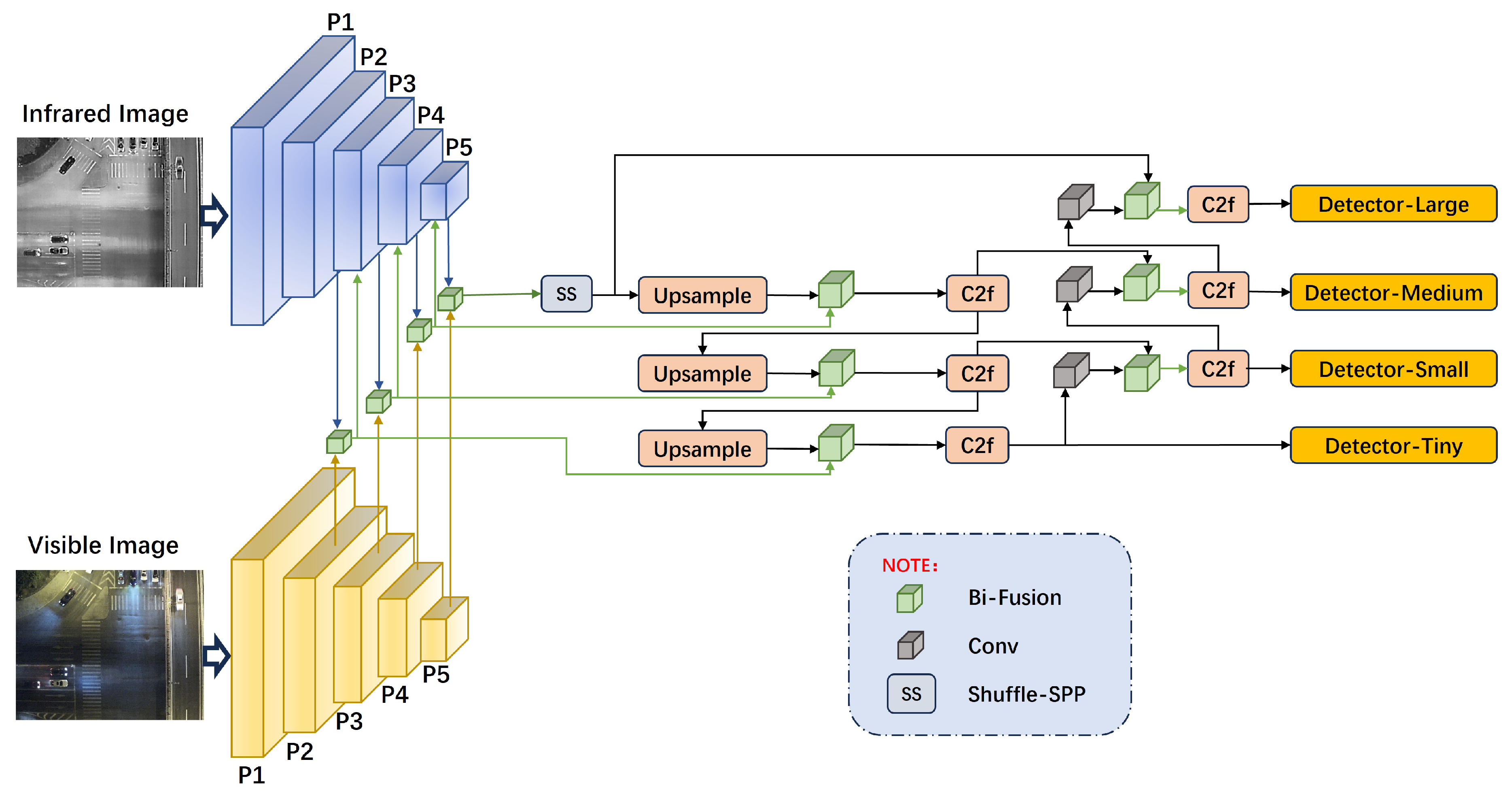

3.1. Overall Network Architecture

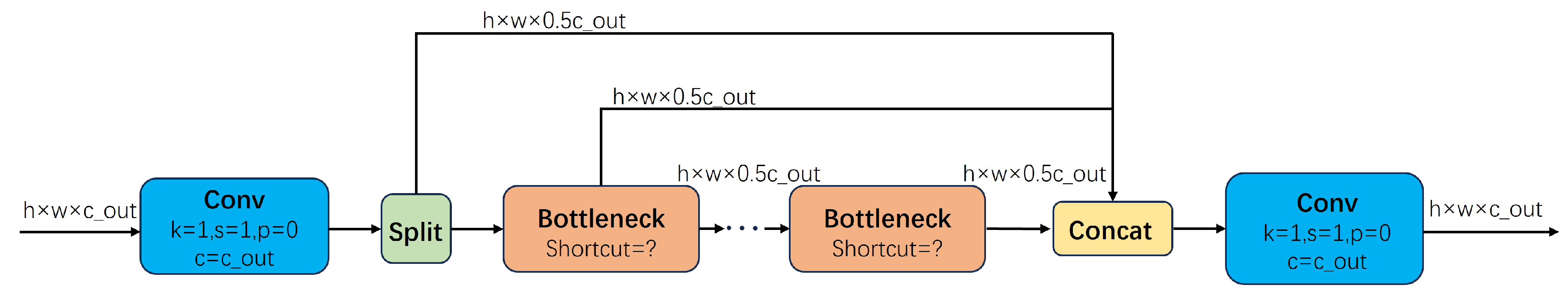

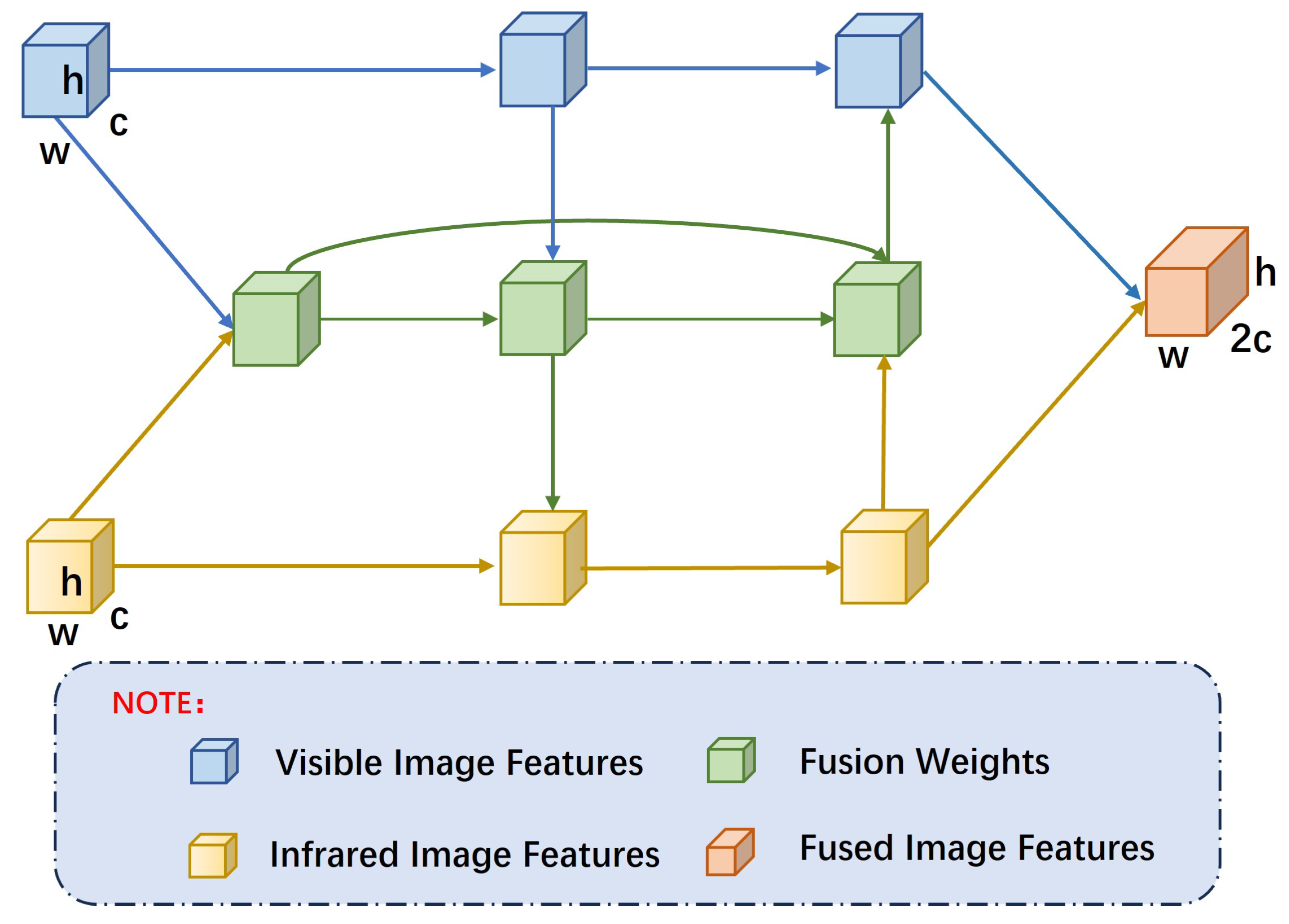

3.2. Feature Fusion Structure

3.2.1. Bidirectional Pyramid Feature Fusion Structure

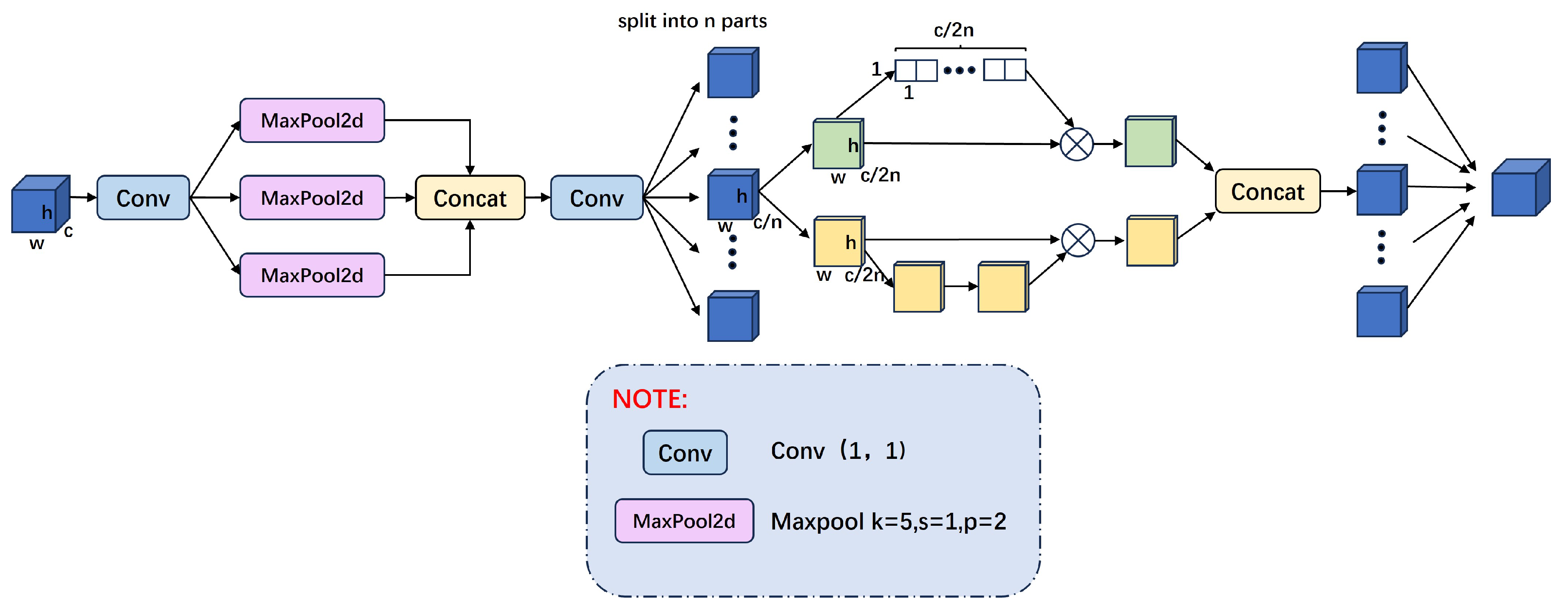

3.2.2. Shuffle Attention Spatial Pyramid Pooling Structure

3.3. Loss Function

4. Results

4.1. Dataset Introduction

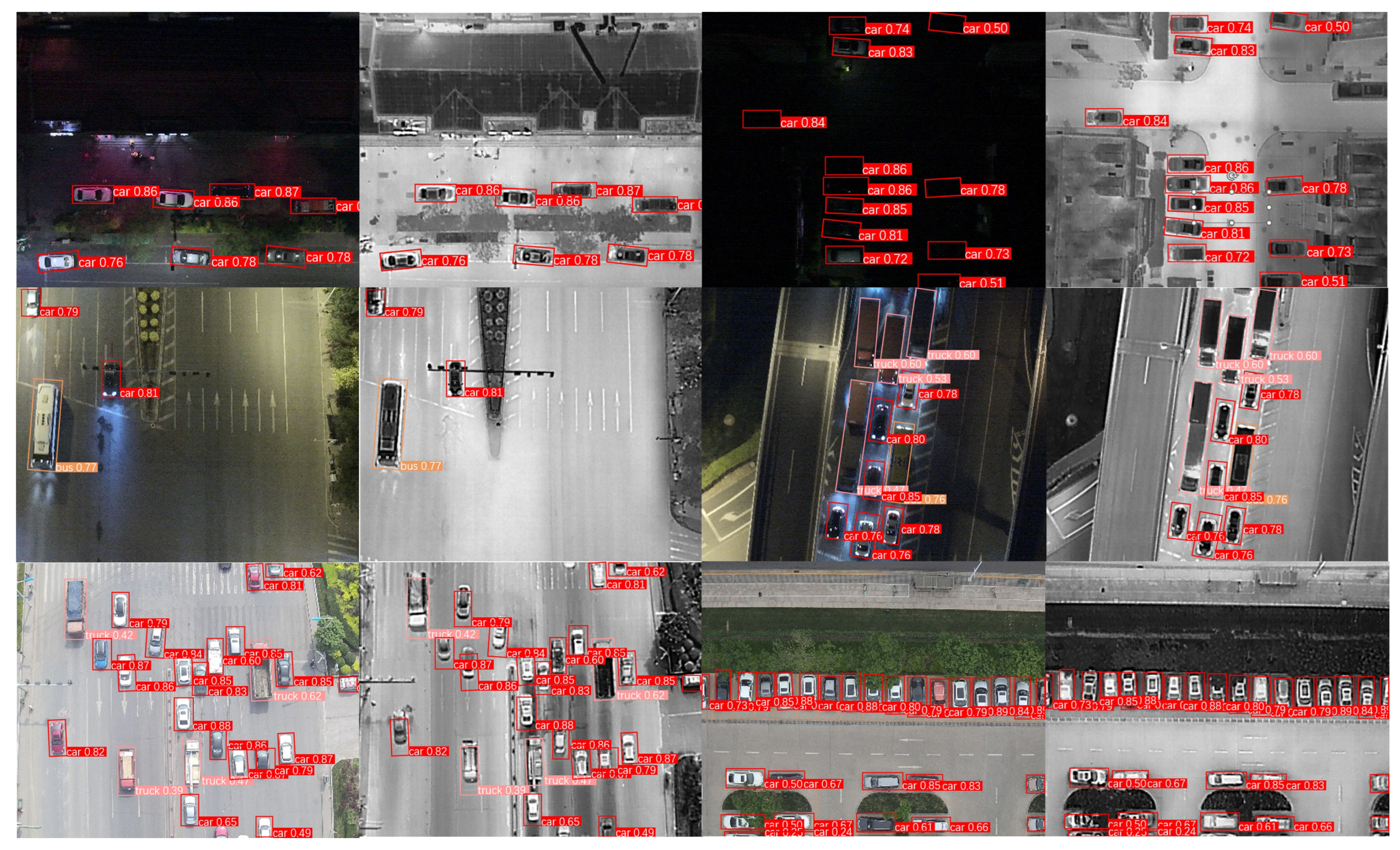

4.1.1. DroneVehicle Dataset

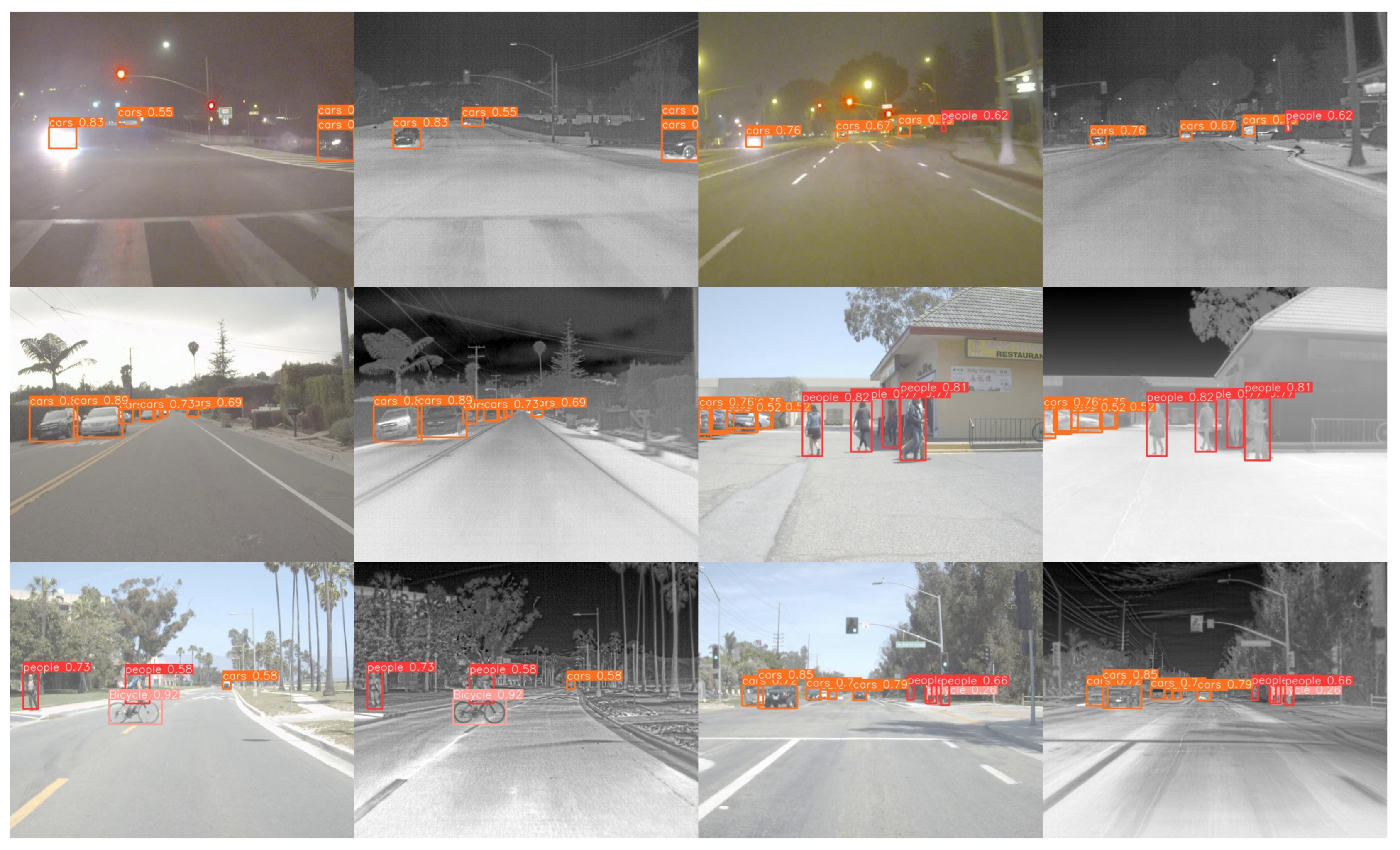

4.1.2. FLIR Dataset

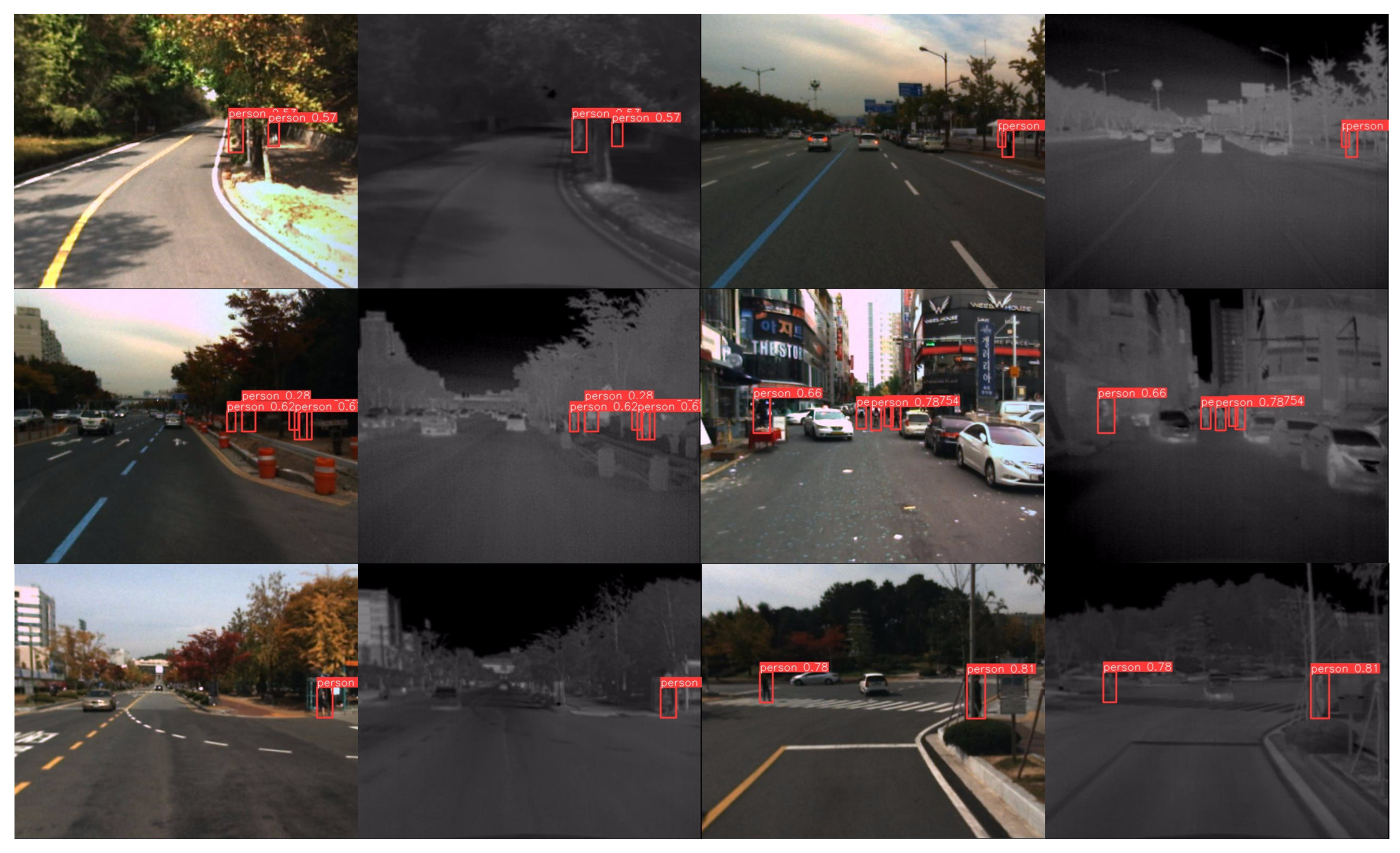

4.1.3. KAIST Dataset

4.2. Implementation Details

4.3. Evaluation Metrics

4.4. Analysis of Results

4.4.1. Experiments on the DroneVehicle Dataset

4.4.2. Experiments on the FLIR Dataset

4.4.3. Experiments Based on the KAIST Dataset

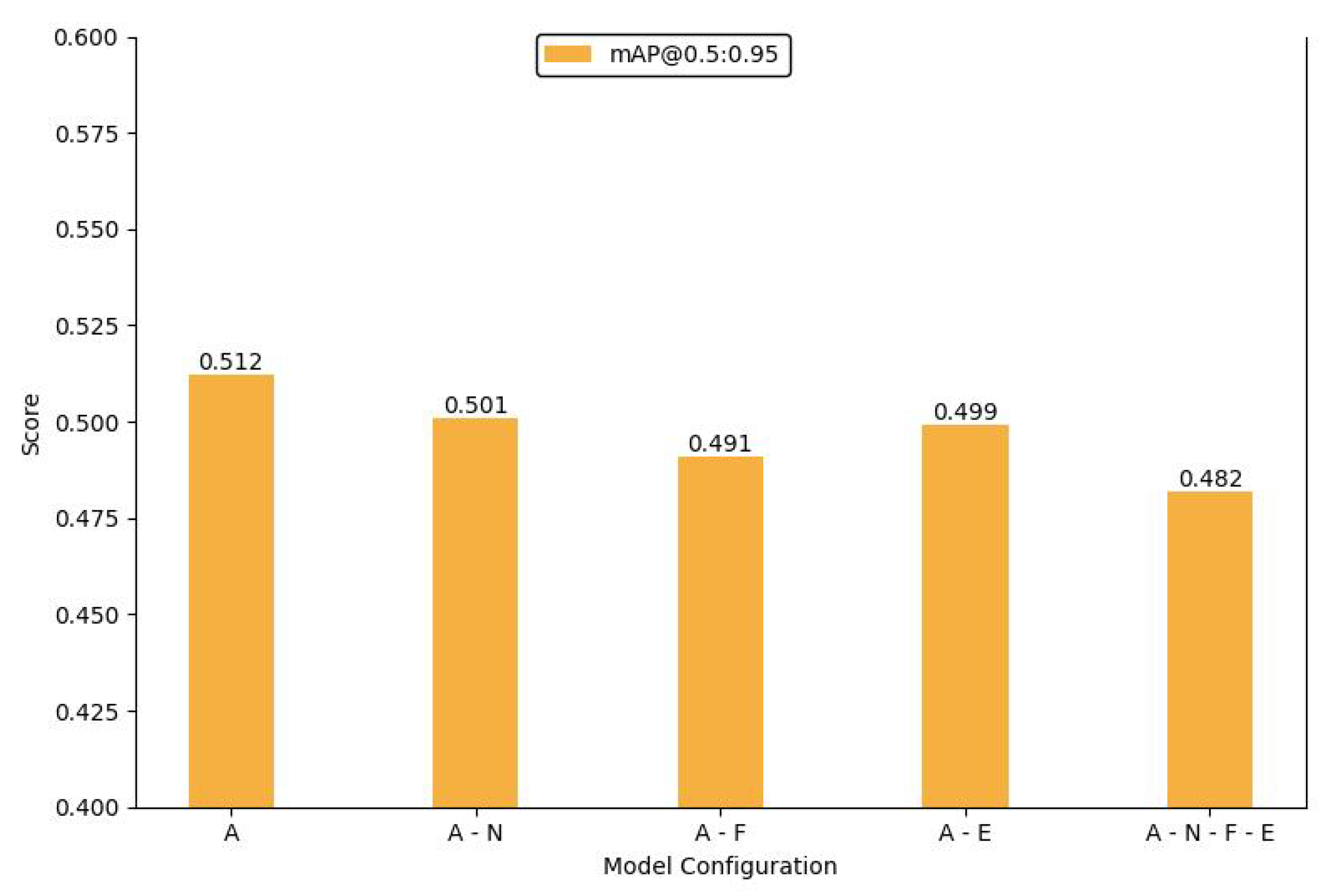

4.5. Parameter Analysis

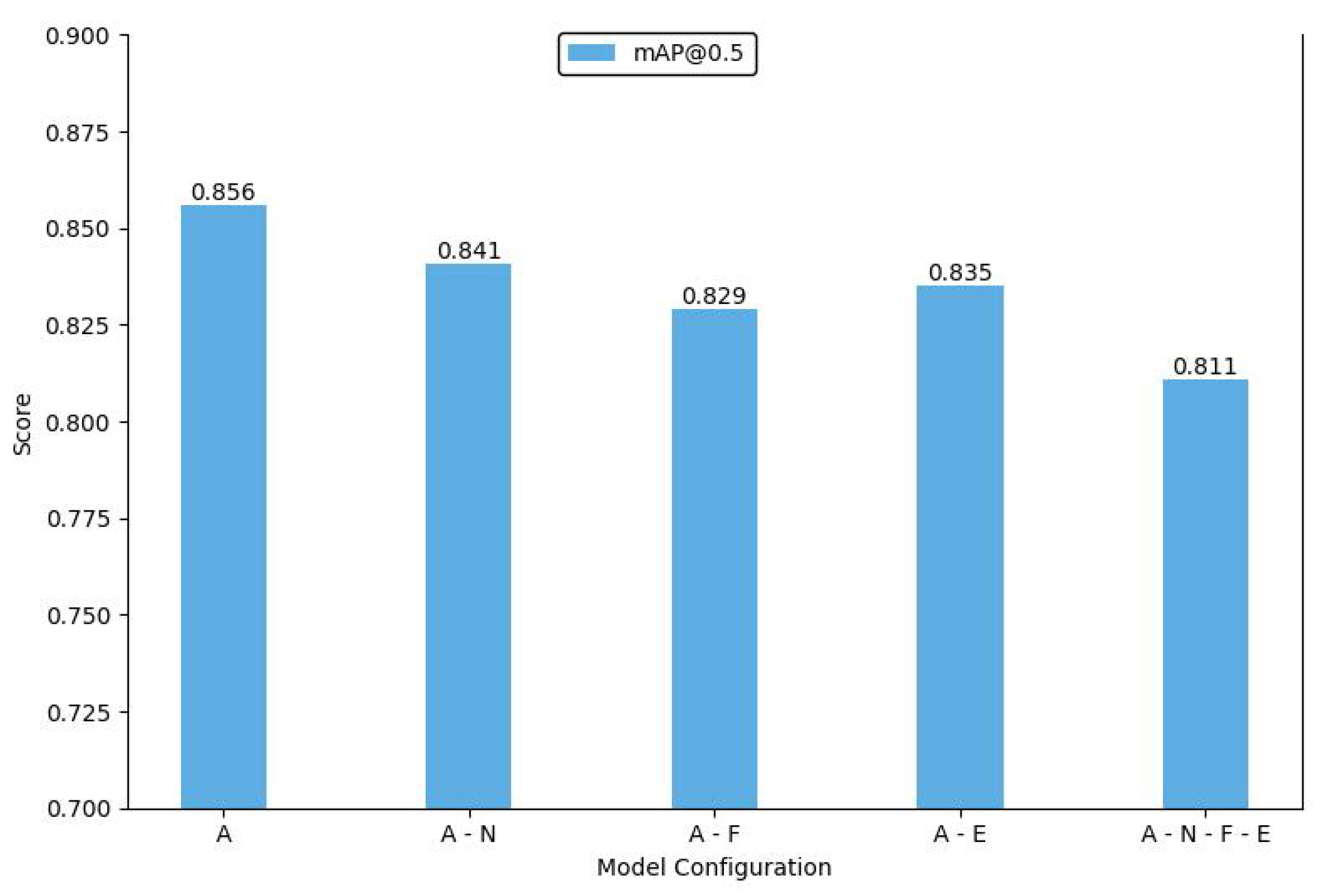

4.6. Ablation Study

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

1. Szegedy, C.; Ioffe, S.; Vanhoucke, V.; Alemi, A. Inception-v4, Inception-ResNet and the impact of residual connections on learning. In Proceedings of the AAAI Conference on Artificial Intelligence; 2017; Vol. 31.
2. Ding, J.; Xue, N.; Long, Y.; Xia, G.S.; Lu, Q. Learning RoI transformer for oriented object detection in aerial images. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; 2019; pp. 2849–2858.
3. Huang, X.; Liu, M.Y.; Belongie, S.; Kautz, J. Multimodal unsupervised image-to-image translation. In Proceedings of the European Conference on Computer Vision (ECCV); 2018; pp. 172–189.
4. Holst, G.C. Infrared Imaging Systems: Design, Analysis, Modeling, and Testing 1990, 1309.
5. Ma, K.; Yeganeh, H.; Zeng, K.; Wang, Z. High dynamic range image compression by optimizing tone mapped image quality index. IEEE Transactions on Image Processing 2015, 24, 3086–3097.
6. French, G.; Finlayson, G.; Mackiewicz, M. Multi-spectral pedestrian detection via image fusion and deep neural networks. Journal of Imaging Science and Technology 2018, 176–181.
7. Bao, C.; Cao, J.; Hao, Q.; Cheng, Y.; Ning, Y.; Zhao, T. Dual-YOLO architecture from infrared and visible images for object detection. Sensors 2023, 23, 2934.
8. Guan, D.; Cao, Y.; Yang, J.; Cao, Y.; Yang, M.Y. Fusion of multispectral data through illumination-aware deep neural networks for pedestrian detection. Information Fusion 2019, 50, 148–157.
9. Kim, J.U.; Park, S.; Ro, Y.M. Uncertainty-guided cross-modal learning for robust multispectral pedestrian detection. IEEE Transactions on Circuits and Systems for Video Technology 2021, 32, 1510–1523.
10. Ma, J.; Yu, W.; Liang, P.; Li, C.; Jiang, J. FusionGAN: A generative adversarial network for infrared and visible image fusion. Information Fusion 2019, 48, 11–26.
11. Zhao, Z.; Xu, S.; Zhang, J.; Liang, C.; Zhang, C.; Liu, J. Efficient and model-based infrared and visible image fusion via algorithm unrolling. IEEE Transactions on Circuits and Systems for Video Technology 2021, 32, 1186–1196.
12. Ultralytics. YOLOv8. 2023. Available online: https://github.com/ultralytics/ultralytics.
13. Hwang, S.; Park, J.; Kim, N.; Choi, Y.; So Kweon, I. Multispectral pedestrian detection: Benchmark dataset and baseline. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition; 2015; pp. 1037–1045.
14. Sun, Y.; Cao, B.; Zhu, P.; Hu, Q. Drone-based RGB-infrared cross-modality vehicle detection via uncertainty-aware learning. IEEE Transactions on Circuits and Systems for Video Technology 2022, 32, 6700–6713.
15. FLIR ADAS Dataset. 2022. Available online: https://www.flir.com/oem/adas/adas-dataset-form (accessed on 19 January 2022).
16. Liu, W.; Anguelov, D.; Erhan, D.; Szegedy, C.; Reed, S.; Fu, C.Y.; Berg, A.C. SSD: Single shot multibox detector. In Proceedings of the Computer Vision–ECCV 2016: 14th European Conference, Amsterdam, The Netherlands, 11–14 October 2016; Proceedings, Part I 14. Springer, 2016; pp. 21–37.
17. Girshick, R. Fast R-CNN. In Proceedings of the IEEE International Conference on Computer Vision; 2015; pp. 1440–1448.
18. Redmon, J.; Divvala, S.; Girshick, R.; Farhadi, A. You Only Look Once: Unified, Real-Time Object Detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR); 2016.
19. Redmon, J.; Farhadi, A. YOLO9000: Better, Faster, Stronger. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR); 2017; pp. 6517–6525.
20. Redmon, J.; Farhadi, A. YOLOv3: An Incremental Improvement. arXiv preprint, 2018; arXiv:1804.02767.
21. Bochkovskiy, A.; Wang, C.Y.; Liao, H.Y.M. YOLOv4: Optimal Speed and Accuracy of Object Detection. arXiv preprint, 2020; arXiv:2004.10934.
22. Jocher, G. YOLOv5 by Ultralytics. 2020. Available online: https://github.com/ultralytics/yolov5.
23. Li, C.Y.; Wang, N.; Mu, Y.Q.; Wang, J.; Liao, H.Y.M. YOLOv6: A Single-Stage Object Detection Framework for Industrial Applications. arXiv preprint, 2022; arXiv:2209.02976.
24. Wang, C.Y.; Bochkovskiy, A.; Liao, H.Y.M. YOLOv7: Trainable bag-of-freebies sets new state-of-the-art for real-time object detectors. arXiv preprint, 2022; arXiv:2207.02696.
25. Wang, C.Y.; Yeh, I.H.; Liao, H.Y.M. YOLOv9: Learning what you want to learn using programmable gradient information. arXiv preprint, 2024; arXiv:2402.13616.
26. Wang, A.; Chen, H.; Liu, L.; Chen, K.; Lin, Z.; Han, J.; Ding, G. YOLOv10: Real-time end-to-end object detection. arXiv preprint, 2024; arXiv:2405.14458.
27. Farahnakian, F.; Heikkonen, J. Deep learning based multi-modal fusion architectures for maritime vessel detection. Remote Sensing 2020, 12, 2509.
28. Li, R.; Peng, Y.; Yang, Q. Fusion enhancement: UAV target detection based on multi-modal GAN. In Proceedings of the 2023 IEEE 7th Information Technology and Mechatronics Engineering Conference (ITOEC); 2023; Vol. 7, pp. 1953–1957.
29. Liang, P.; Jiang, J.; Liu, X.; Ma, J. Fusion from decomposition: A self-supervised decomposition approach for image fusion. In Proceedings of the European Conference on Computer Vision; Springer, 2022; pp. 719–735.
30. Bao, Y.; Song, K.; Wang, J.; Huang, L.; Dong, H.; Yan, Y. Visible and thermal images fusion architecture for few-shot semantic segmentation. Journal of Visual Communication and Image Representation 2021, 80, 103306.
31. Dai, X.; Yuan, X.; Wei, X. TIRNet: Object detection in thermal infrared images for autonomous driving. Applied Intelligence 2021, 51, 1244–1261.
32. Wang, Q.; Chi, Y.; Shen, T.; Song, J.; Zhang, Z.; Zhu, Y. Improving RGB-infrared object detection by reducing cross-modality redundancy. Remote Sensing 2022, 14, 2020.
33. Luo, F.; Li, Y.; Zeng, G.; Peng, P.; Wang, G.; Li, Y. Thermal infrared image colorization for nighttime driving scenes with top-down guided attention. IEEE Transactions on Intelligent Transportation Systems 2022, 23, 15808–15823.
34. Hu, J.; Shen, L.; Sun, G. Squeeze-and-excitation networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition; 2018; pp. 7132–7141.
35. Wang, Q.; Wu, B.; Zhu, P.; Li, P.; Zuo, W.; Hu, Q. ECA-Net: Efficient channel attention for deep convolutional neural networks. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; 2020; pp. 11534–11542.
36. Wang, X.; Girshick, R.; Gupta, A.; He, K. Non-local neural networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition; 2018; pp. 7794–7803.
37. Woo, S.; Park, J.; Lee, J.Y.; Kweon, I.S. CBAM: Convolutional block attention module. In Proceedings of the European Conference on Computer Vision (ECCV); 2018; pp. 3–19.
38. Cao, Y.; Xu, J.; Lin, S.; Wei, F.; Hu, H. GCNet: Non-local networks meet squeeze-excitation networks and beyond. In Proceedings of the IEEE/CVF International Conference on Computer Vision Workshops; 2019; pp. 0–0.
39. Li, X.; Hu, X.; Yang, J. Spatial group-wise enhance: Improving semantic feature learning in convolutional networks. arXiv preprint, 2019; arXiv:1905.09646.
40. Fu, J.; Liu, J.; Tian, H.; Li, Y.; Bao, Y.; Fang, Z.; Lu, H. Dual attention network for scene segmentation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; 2019; pp. 3146–3154.
41. Zhang, Q.L.; Yang, Y.B. SA-Net: Shuffle attention for deep convolutional neural networks. In Proceedings of the ICASSP 2021-2021 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP); 2021; pp. 2235–2239.
42. Wu, Y.; He, K. Group normalization. In Proceedings of the European Conference on Computer Vision (ECCV); 2018; pp. 3–19.
43. Lin, T.Y.; Goyal, P.; Girshick, R.; He, K.; Dollár, P. Focal loss for dense object detection. In Proceedings of the IEEE International Conference on Computer Vision; 2017; pp. 2980–2988.
44. Dai, J.; Qi, H.; Xiong, Y.; Li, Y.; Zhang, G.; Hu, H.; Wei, Y. Deformable convolutional networks. In Proceedings of the IEEE International Conference on Computer Vision; 2017; pp. 764–773.
45. He, K.; Gkioxari, G.; Dollár, P.; Girshick, R. Mask R-CNN. In Proceedings of the IEEE International Conference on Computer Vision; 2017; pp. 2961–2969.
46. Cai, Z.; Vasconcelos, N. Cascade R-CNN: Delving into high quality object detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition; 2018; pp. 6154–6162.
47. Tian, Z.; Shen, C.; Chen, H.; He, T. FCOS: Fully convolutional one-stage object detection. arXiv preprint, 2019; arXiv:1904.01355.
48. Zhang, S.; Wen, L.; Bian, X.; Lei, Z.; Li, S.Z. Single-shot refinement neural network for object detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition; 2018; pp. 4203–4212.
49. Li, S.; Li, Y.; Li, Y.; Li, M.; Xu, X. YOLO-FIRI: Improved YOLOv5 for infrared image object detection. IEEE Access 2021, 9, 141861–141875.
50. Jiang, X.; Cai, W.; Yang, Z.; Xu, P.; Jiang, B. IARet: A lightweight multiscale infrared aerocraft recognition algorithm. Arabian Journal for Science and Engineering 2022, 47, 2289–2303.
51. Li, Q.; Zhang, C.; Hu, Q.; Fu, H.; Zhu, P. Confidence-aware fusion using Dempster-Shafer theory for multispectral pedestrian detection. IEEE Transactions on Multimedia 2022, 25, 3420–3431.
52. Chen, Q.; Wang, Y.; Yang, T.; Zhang, X.; Cheng, J.; Sun, J. You only look one-level feature. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; 2021; pp. 13039–13048.
53. Zheng, Z.; Wu, Y.; Han, X.; Shi, J. ForkGAN: Seeing into the rainy night. In Proceedings of the Computer Vision–ECCV 2020: 16th European Conference, Glasgow, UK, 23–28 August 2020; Proceedings, Part III 16. Springer, 23 August 2020; pp. 155–170.
54. Anoosheh, A.; Sattler, T.; Timofte, R.; Pollefeys, M.; Van Gool, L. Night-to-day image translation for retrieval-based localization. In Proceedings of the 2019 International Conference on Robotics and Automation (ICRA); 2019; pp. 5958–5964.
55. Liu, M.Y.; Breuel, T.; Kautz, J. Unsupervised image-to-image translation networks. Advances in Neural Information Processing Systems 2017, 30.

| Property | DroneVehicle Dataset | FLIR Dataset | KAIST Dataset |
|---|---|---|---|
| Scenario | Drone | ADAS | Pedestrian |
| Modality | Infrared + Visible | Infrared + Visible | Infrared + Visible |
| Images | 56878 | 14000 | 95328 |
| Categories | 5 | 4 | 3 |
| Labels | 190.6K | 14.5K | 103.1K |
| Resolution | 840 × 712 | 640 × 512 | 640 × 512 |

| Category | Parameter |
|---|---|
| CPU | Intel i7-12700H |
| GPU | RTX 4090 |
| System | Windows 11 |
| Python | 3.8.19 |
| PyTorch | 1.12.1 |
| Training Epochs | 300 |
| Learning Rate | 0.01 |
| Weight Decay | 0.0005 |
| Momentum | 0.937 |

| Hyper Parameter | DroneVehicle Dataset | FLIR Dataset | KAIST Dataset |
|---|---|---|---|
| Visible Image Size | 640 × 640 | 640 × 640 | 640 × 640 |
| Infrared Image Size | 640 × 640 | 640 × 640 | 640 × 640 |
| Visible Images | 28439 | 8437 | 8995 |
| Infrared Images | 28439 | 8437 | 8995 |
| Training set | 17990 | 7381 | 7595 |
| Validation set | 1469 | 1056 | 1400 |
| Testing set | 8980 | 1056 (test-val) | 1400 (test-val) |
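
The split sizes in the table above can be cross-checked against the number of image pairs. The following is a minimal sketch, not part of the original work; it assumes that "test-val" means the validation images are reused for testing on FLIR and KAIST, so those images are counted only once. The names `SPLITS`, `split_total`, and `train_fraction` are illustrative.

```python
# Counts copied from the split table above (image pairs per dataset).
SPLITS = {
    "DroneVehicle": {"pairs": 28439, "train": 17990, "val": 1469, "test": 8980},
    # Assumption: "test-val" means the validation images double as the test
    # set, so no separate test images are counted for FLIR and KAIST.
    "FLIR": {"pairs": 8437, "train": 7381, "val": 1056, "test": 0},
    "KAIST": {"pairs": 8995, "train": 7595, "val": 1400, "test": 0},
}


def split_total(entry):
    """Sum the disjoint splits of one dataset."""
    return entry["train"] + entry["val"] + entry["test"]


def train_fraction(entry):
    """Share of image pairs used for training."""
    return entry["train"] / entry["pairs"]


for name, entry in SPLITS.items():
    consistent = split_total(entry) == entry["pairs"]
    print(f"{name}: total={split_total(entry)} consistent={consistent} "
          f"train share={train_fraction(entry):.1%}")
```

Under that reading, the three splits of each dataset add up exactly to the listed number of visible (and infrared) images.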

| Method | Modality | Car | Freight Car | Truck | Bus | Van | mAP |
|---|---|---|---|---|---|---|---|
| RetinaNet(OBB)[43] | Visible | 67.5 | 13.7 | 28.2 | 62.1 | 19.3 | 38.2 |
| Faster R-CNN(OBB)[17] | Visible | 67.9 | 26.3 | 38.6 | 67.0 | 23.2 | 44.6 |
| Faster R-CNN(Dpool)[44] | Visible | 68.2 | 26.4 | 38.7 | 69.1 | 26.4 | 45.8 |
| Mask R-CNN[45] | Visible | 68.5 | 26.8 | 39.8 | 66.8 | 25.4 | 45.5 |
| Cascade Mask R-CNN[46] | Visible | 68.0 | 27.3 | 44.7 | 69.3 | 29.8 | 47.8 |
| RoITransformer[2] | Visible | 68.1 | 29.1 | 44.2 | 70.6 | 27.6 | 47.9 |
| YOLOv8(OBB)[12] | Visible | 97.5 | 42.8 | 62.0 | 94.3 | 46.6 | 68.6 |
| RetinaNet(OBB)[43] | Infrared | 79.9 | 28.1 | 32.8 | 67.3 | 16.4 | 44.9 |
| Faster R-CNN(OBB)[17] | Infrared | 88.6 | 35.2 | 42.5 | 77.9 | 28.5 | 54.6 |
| Faster R-CNN(Dpool)[44] | Infrared | 88.9 | 36.8 | 47.9 | 78.3 | 32.8 | 56.9 |
| Mask R-CNN[45] | Infrared | 88.8 | 36.6 | 48.9 | 78.4 | 32.2 | 57.0 |
| Cascade Mask R-CNN[46] | Infrared | 81.0 | 39.0 | 47.2 | 79.3 | 33.0 | 55.9 |
| RoITransformer[2] | Infrared | 88.9 | 41.5 | 51.5 | 79.5 | 34.4 | 59.2 |
| YOLOv8(OBB)[12] | Infrared | 97.1 | 38.5 | 65.2 | 94.5 | 45.2 | 68.1 |
| UA-CMDet[14] | Visible+Infrared | 87.5 | 46.8 | 60.7 | 87.1 | 38.0 | 64.0 |
| Dual-YOLO[7] | Visible+Infrared | 98.1 | 52.9 | 65.7 | 95.8 | 46.6 | 71.8 |
| IV-YOLO(Ours) | Visible+Infrared | 97.2 | 63.1 | 65.4 | 94.3 | 53.0 | 74.6 |
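
The mAP column of the table above is the unweighted mean of the five per-class AP values. As a hedged illustration (the helper `mean_ap`, the dictionary `ROWS`, and the 0.06 rounding tolerance are assumptions introduced here, not part of the original evaluation code), the three visible+infrared rows can be recomputed as follows:

```python
from statistics import fmean

# Per-class AP (Car, Freight Car, Truck, Bus, Van) and the reported mAP,
# copied from the three visible+infrared rows of the table above.
ROWS = {
    "UA-CMDet": ([87.5, 46.8, 60.7, 87.1, 38.0], 64.0),
    "Dual-YOLO": ([98.1, 52.9, 65.7, 95.8, 46.6], 71.8),
    "Ours": ([97.2, 63.1, 65.4, 94.3, 53.0], 74.6),
}


def mean_ap(per_class_ap):
    """mAP as the unweighted mean of the per-class AP values."""
    return fmean(per_class_ap)


for method, (aps, reported) in ROWS.items():
    computed = mean_ap(aps)
    # Reported values carry one decimal, so allow for rounding error.
    matches = abs(computed - reported) < 0.06
    print(f"{method}: computed={computed:.2f} reported={reported} match={matches}")
```

All three rows agree with the reported mAP to within rounding.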

| Method | Person | Bicycle | Car | mAP |
|---|---|---|---|---|
| Faster R-CNN[17] | 39.6 | 54.7 | 67.6 | 53.9 |
| SSD[16] | 40.9 | 43.6 | 61.6 | 48.7 |
| RetinaNet[43] | 52.3 | 61.3 | 71.5 | 61.7 |
| FCOS[47] | 69.7 | 67.4 | 79.7 | 72.3 |
| MMTOD-UNIT[14] | 49.4 | 64.4 | 70.7 | 61.5 |
| MMTOD-CG[14] | 50.3 | 63.3 | 70.6 | 61.4 |
| RefineDet[48] | 77.2 | 57.2 | 84.5 | 72.9 |
| ThermalDet[14] | 78.2 | 60.0 | 85.5 | 74.6 |
| YOLO-FIRI[49] | 85.2 | 70.7 | 84.3 | 80.1 |
| YOLOv3-tiny[20] | 67.1 | 50.3 | 81.2 | 66.2 |
| IARet[50] | 77.2 | 48.7 | 85.8 | 70.7 |
| CMPD[51] | 69.6 | 59.8 | 78.1 | 69.3 |
| PearlGAN[33] | 54.0 | 23.0 | 75.5 | 50.8 |
| Cascade R-CNN[46] | 77.3 | 84.3 | 79.8 | 80.5 |
| YOLOv5s[22] | 68.3 | 67.1 | 80.0 | 71.8 |
| YOLOF[52] | 67.8 | 68.1 | 79.4 | 71.8 |
| Dual-YOLO[7] | 88.6 | 66.7 | 93 | 84.5 |
| IV-YOLO(Ours) | 86.6 | 77.8 | 92.4 | 85.6 |

| Method | Precision | Recall | mAP |
|---|---|---|---|
| ForkGAN[53] | 33.9 | 4.6 | 4.9 |
| ToDayGAN[54] | 11.4 | 14.9 | 5.0 |
| UNIT[55] | 40.9 | 43.6 | 11.0 |
| PearlGAN[33] | 21.0 | 39.8 | 25.8 |
| Dual-YOLO[7] | 75.1 | 66.7 | 73.2 |
| IV-YOLO(Ours) | 77.2 | 84.5 | 75.4 |

| Method | Dataset | Params | Runtime (FPS) |
|---|---|---|---|
| Faster R-CNN(OBB)[17] | DroneVehicle | 58.3M | 5.3 |
| Faster R-CNN(Dpool)[44] | DroneVehicle | 59.9M | 4.3 |
| Mask R-CNN[45] | DroneVehicle | 242.0M | 13.5 |
| RetinaNet[43] | DroneVehicle | 145.0M | 15.0 |
| Cascade Mask R-CNN[46] | DroneVehicle | 368.0M | 9.8 |
| RoITransformer[2] | DroneVehicle | 273.0M | 7.1 |
| YOLOv7[24] | DroneVehicle | 72.1M | 161.0 |
| YOLOv8n[12] | DroneVehicle | 5.92M | 188.6 |
| SSD[16] | FLIR | 131.0M | 43.7 |
| FCOS[47] | FLIR | 123.0M | 22.9 |
| RefineDet[48] | FLIR | 128.0M | 24.1 |
| YOLO-FIRI[49] | FLIR | 7.1M | 83.3 |
| YOLOv3-tiny[20] | FLIR | 17.0M | 66.2 |
| Cascade R-CNN[46] | FLIR | 165.0M | 16.1 |
| YOLOv5s[22] | FLIR | 14.0M | 41.0 |
| YOLOF[52] | FLIR | 44.0M | 32.0 |
| Dual-YOLO[7] | FLIR | 175.1M | 62.0 |
| IV-YOLO(Ours) | DroneVehicle/FLIR | 4.31M | 208.2 |
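
The runtime column above is reported in frames per second, which can be easier to compare as per-frame latency. The sketch below is illustrative only: it assumes the FPS figures are single-image throughput, and the names `MODELS`, `latency_ms`, and `param_ratio` are introduced here for the example.

```python
# (parameters in millions, frames per second) copied from the table above.
MODELS = {
    "Dual-YOLO": (175.1, 62.0),
    "YOLOv8n": (5.92, 188.6),
    "Ours": (4.31, 208.2),
}


def latency_ms(fps):
    """Average time per frame in milliseconds for a given throughput."""
    return 1000.0 / fps


def param_ratio(baseline, model):
    """How many times more parameters the baseline has than the model."""
    return MODELS[baseline][0] / MODELS[model][0]


for name, (params_m, fps) in MODELS.items():
    print(f"{name}: {params_m}M params, {latency_ms(fps):.2f} ms/frame")

print(f"Dual-YOLO / Ours parameter ratio: {param_ratio('Dual-YOLO', 'Ours'):.1f}x")
```

Read this way, 208.2 FPS corresponds to a little under 5 ms per frame, and Dual-YOLO carries roughly 40 times as many parameters as the proposed model.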

| Shuffle-SPP | Bi-concat | Person | Bicycle | Car | mAP@0.5 | mAP@0.5:0.95 |
|---|---|---|---|---|---|---|
| × | 🗸 | 83.7 | 76.4 | 92.1 | 84.1 | 50.1 |
| 🗸 | × | 83.2 | 73.8 | 91.6 | 82.9 | 49.1 |
| 🗸 | 🗸 | 86.6 | 77.8 | 92.4 | 85.6 | 51.2 |
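
One way to read the ablation table above is as the accuracy lost when a single module is removed from the full model. The following sketch computes those differences from the tabulated values; the names `ABLATION` and `drop_vs_full` are hypothetical and used only for this illustration.

```python
# mAP values copied from the ablation table above.
ABLATION = {
    "without Shuffle-SPP": {"mAP@0.5": 84.1, "mAP@0.5:0.95": 50.1},
    "without Bi-concat": {"mAP@0.5": 82.9, "mAP@0.5:0.95": 49.1},
    "full model": {"mAP@0.5": 85.6, "mAP@0.5:0.95": 51.2},
}


def drop_vs_full(variant):
    """Points of mAP lost by a variant relative to the full model."""
    full = ABLATION["full model"]
    return {
        metric: round(full[metric] - value, 1)
        for metric, value in ABLATION[variant].items()
    }


for variant in ("without Shuffle-SPP", "without Bi-concat"):
    print(variant, drop_vs_full(variant))
```

On these numbers, removing Bi-concat costs more (2.7 points of mAP@0.5) than removing Shuffle-SPP (1.5 points), and the same ordering holds for mAP@0.5:0.95.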

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).