Submitted: 01 February 2025

Posted: 06 February 2025


Abstract

Keywords:

Introduction

Literature Work

Proposed Model

Data Preparation

Transformer Encoder Layer

FlameViT Model Architecture

Hyperparameter Tuning

| Hyperparameter | Values Tested |
|---|---|
| Patch Size (P) | {8, 16, 32} |
| Projection Dimension (d) | {32, 64, 128} |
| Number of Attention Heads (h) | {4, 8} |
| ) | {128, 256} |
| Number of Layers (L) | {1, 2, 4} |
| Dropout Rate (p) | {0.0, 0.1, 0.2} |
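The grid above spans 3 × 3 × 2 × 2 × 3 × 3 = 324 combinations. The following Python sketch enumerates them with the standard library, assuming an exhaustive grid search (the table lists the values tried but not the search strategy). The dictionary keys, including `mlp_dim` for the row whose label is garbled, are hypothetical names chosen for illustration.

```python
from itertools import product

# Search space copied from the hyperparameter table. All key names are
# hypothetical; "mlp_dim" stands in for the row with the garbled label.
SEARCH_SPACE = {
    "patch_size": [8, 16, 32],
    "projection_dim": [32, 64, 128],
    "num_heads": [4, 8],
    "mlp_dim": [128, 256],
    "num_layers": [1, 2, 4],
    "dropout": [0.0, 0.1, 0.2],
}


def grid(space):
    """Yield every combination in the search space as a dict."""
    keys = list(space)
    for values in product(*(space[k] for k in keys)):
        config = dict(zip(keys, values))
        # Multi-head attention splits d evenly across h heads, so skip
        # any combination where d is not divisible by h.
        if config["projection_dim"] % config["num_heads"] == 0:
            yield config


configs = list(grid(SEARCH_SPACE))
print(len(configs))  # 324: every tested d is divisible by both 4 and 8
```

Each configuration would then be trained and scored on held-out data. When 324 full training runs are too costly, random or Bayesian search over the same space is a common cheaper alternative.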

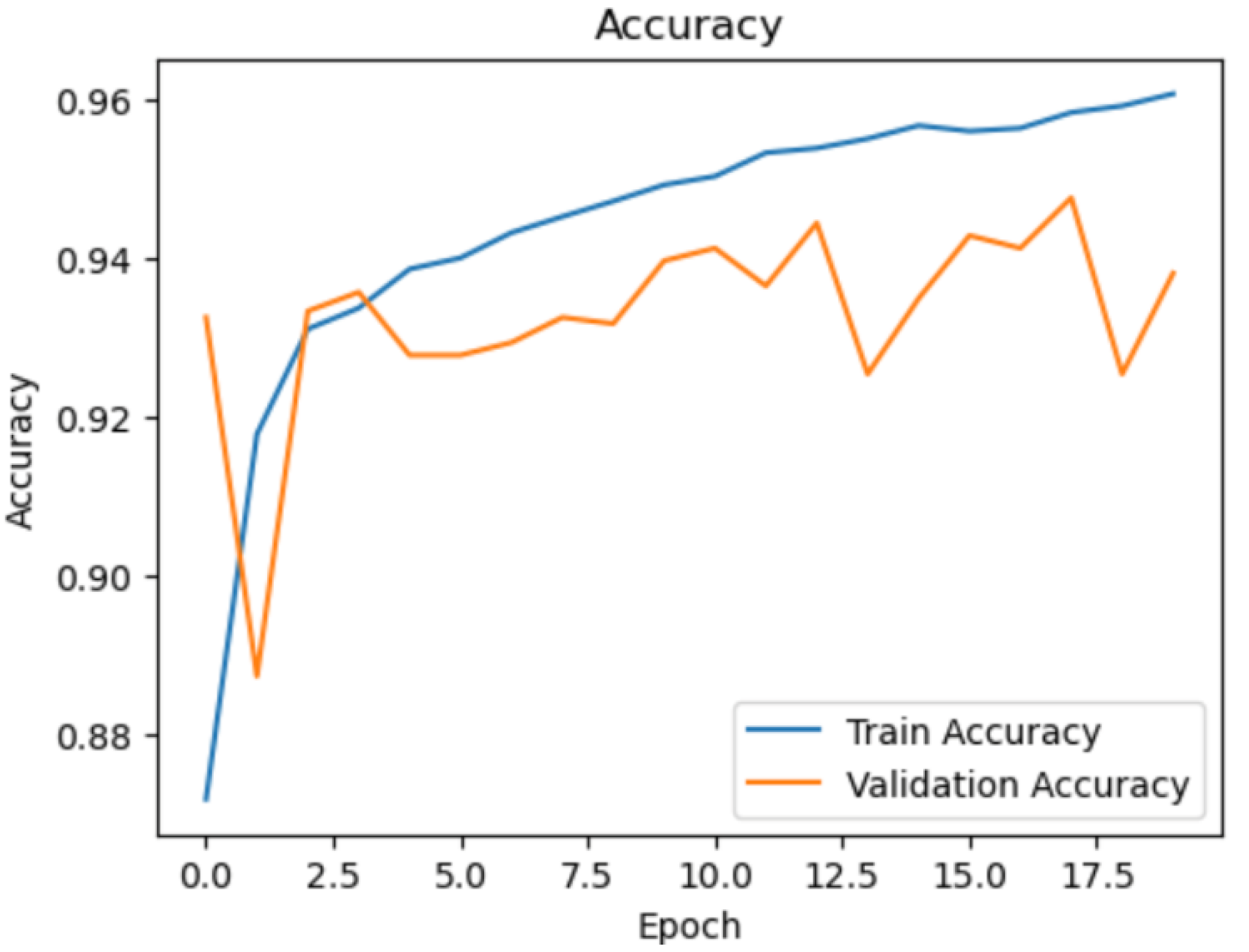

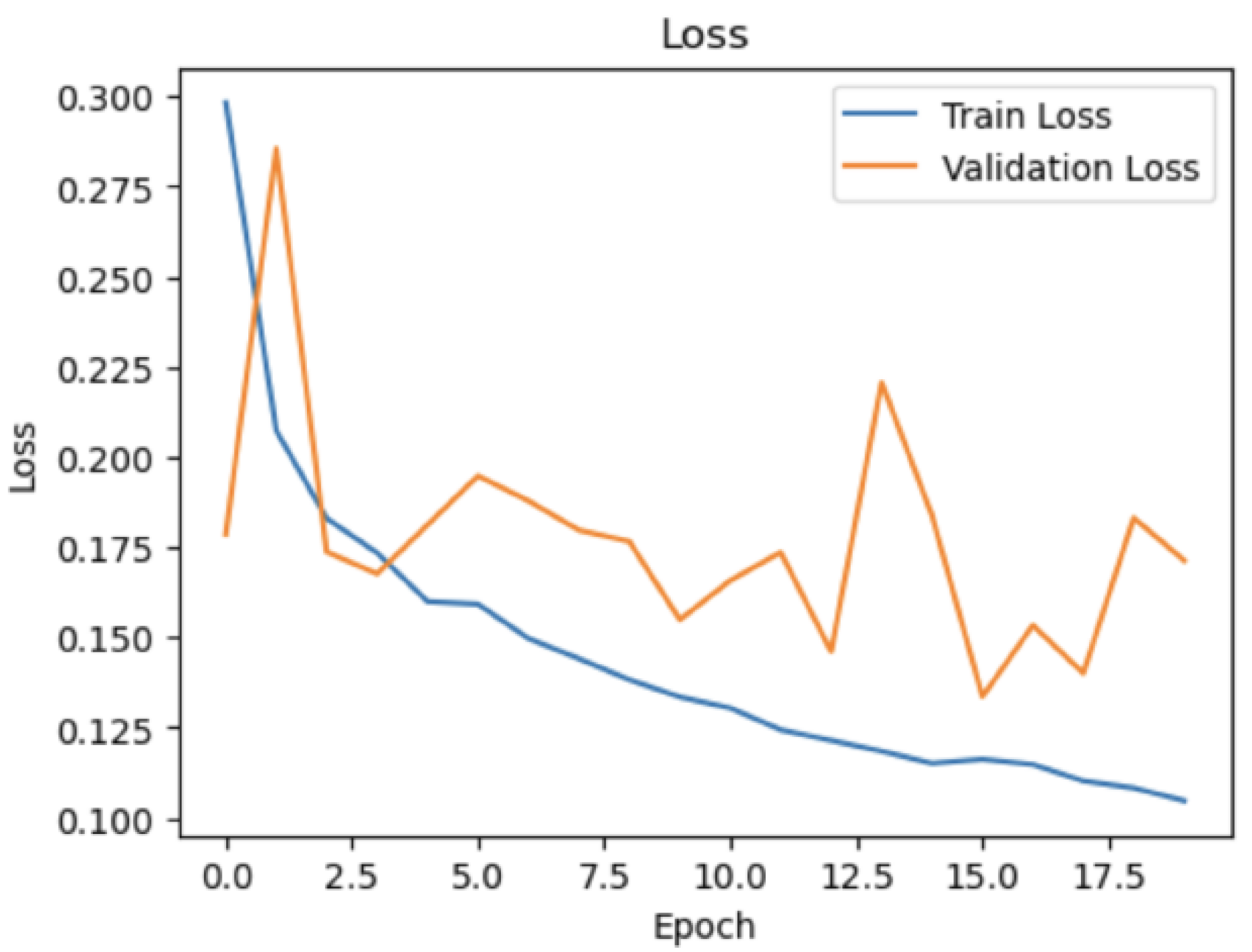

Training Procedure

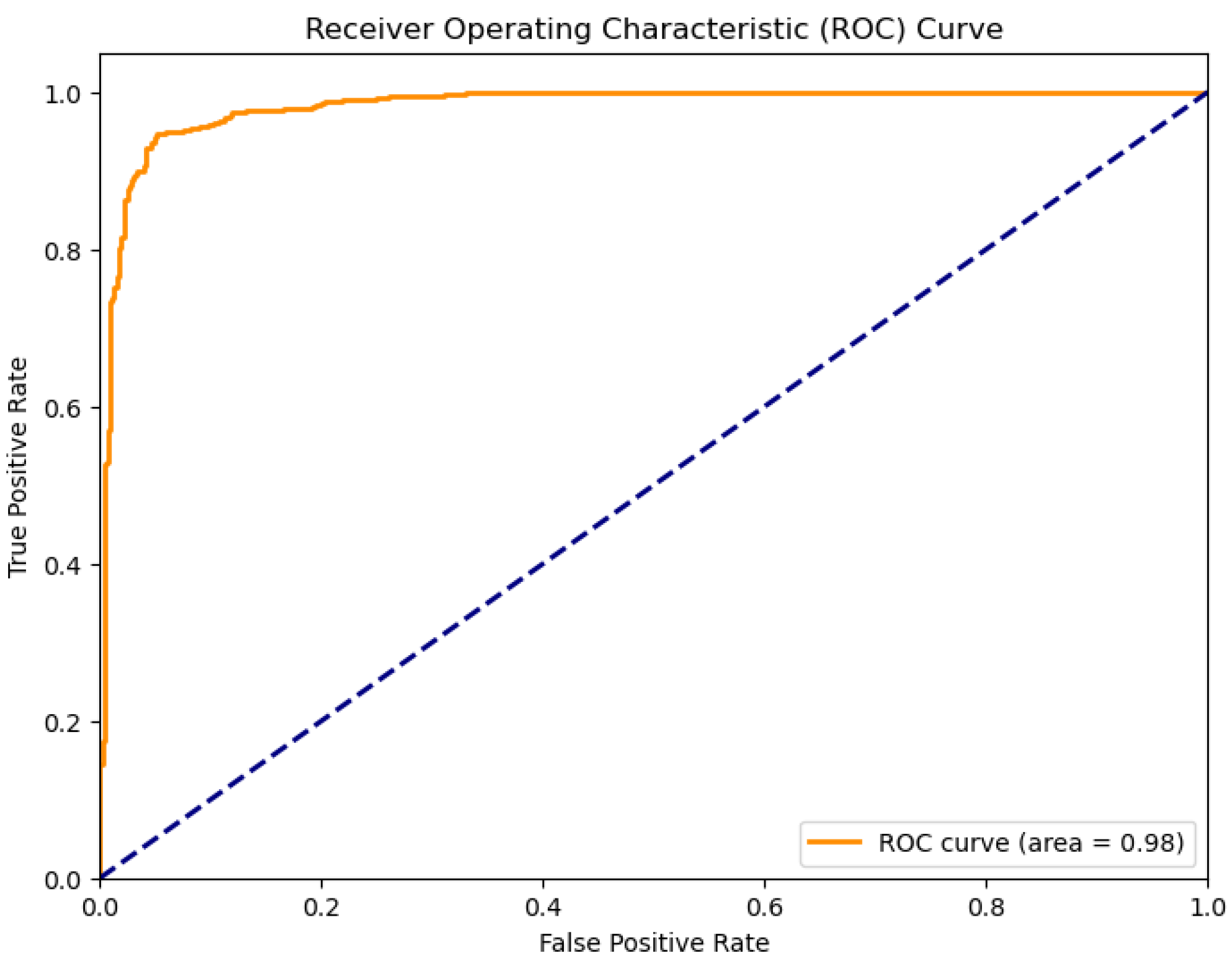

Model Evaluation

Experiments and Results

Experimental Setup

- Rescaling: Pixel values are multiplied by 1/255, mapping 8-bit intensities from [0, 255] into [0, 1].

- Rotation: Images are randomly rotated by up to 20 degrees.

- Width and Height Shifts: Images are randomly shifted horizontally and vertically by up to 20% of the total width and height, respectively.

- Shear: Images are randomly sheared by up to 20 degrees.

- Zoom: Images are randomly zoomed in by up to 20%.

- Horizontal Flip: Images are randomly flipped horizontally.
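The augmentation settings above can be sketched in plain Python. This is an illustrative sketch, not the authors' pipeline. It assumes images are nested lists of 8-bit RGB values, that rotation, shift and shear are drawn uniformly in both directions, and that flips happen with probability 0.5. The function names are hypothetical. Actually warping pixels by the sampled rotation, shift, shear and zoom requires interpolation from an image library and is left out; only the parameters are sampled here.

```python
import random

# Limits from the augmentation list; symmetric +/- ranges are an assumption.
MAX_ROTATION_DEG = 20.0  # random rotation up to 20 degrees
MAX_SHIFT_FRAC = 0.2     # shift up to 20% of width / height
MAX_SHEAR_DEG = 20.0     # shear up to 20 degrees
MAX_ZOOM_FRAC = 0.2      # zoom in by up to 20%


def rescale(image):
    """Scale 8-bit pixel values by 1/255 so they lie in [0, 1]."""
    return [[[value / 255.0 for value in pixel] for pixel in row] for row in image]


def hflip(image):
    """Mirror an H x W x C nested-list image left to right."""
    return [list(reversed(row)) for row in image]


def sample_params(width, height, rng):
    """Draw one random set of augmentation parameters within the limits."""
    return {
        "rotation_deg": rng.uniform(-MAX_ROTATION_DEG, MAX_ROTATION_DEG),
        "shift_x_px": rng.uniform(-MAX_SHIFT_FRAC, MAX_SHIFT_FRAC) * width,
        "shift_y_px": rng.uniform(-MAX_SHIFT_FRAC, MAX_SHIFT_FRAC) * height,
        "shear_deg": rng.uniform(-MAX_SHEAR_DEG, MAX_SHEAR_DEG),
        # Zoom in only, as described: a scale factor between 1.0 and 1.2.
        "zoom": rng.uniform(1.0, 1.0 + MAX_ZOOM_FRAC),
        "flip": rng.random() < 0.5,
    }


def augment(image, rng):
    """Randomly flip, then rescale; return the image and the sampled parameters."""
    height, width = len(image), len(image[0])
    params = sample_params(width, height, rng)
    out = hflip(image) if params["flip"] else image
    return rescale(out), params


rng = random.Random(42)
image = [[[0, 128, 255], [255, 0, 0]]]  # a 1 x 2 RGB toy image
augmented, params = augment(image, rng)
```

Augmentation is applied only to training images; validation and test images would normally receive the rescaling step alone.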

Results

Discussion

Conclusion & Future Work


| Hyperparameter | Selected Value |
|---|---|
| Patch Size (P) | 16 |
| Projection Dimension (d) | 64 |
| Number of Attention Heads (h) | 8 |
| ) | 128 |
| Number of Layers (L) | 4 |
| Dropout Rate (p) | 0.1 |
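The selected configuration fixes the encoder's size. Assuming FlameViT follows the standard ViT encoder layout (h heads splitting d evenly, two LayerNorms per layer, a two-layer MLP whose hidden width is the 128 in the garbled row, and RGB input), the trainable parameters can be counted by hand. The function names are hypothetical. An implementation that gives each head a key size of d rather than d/h would have more attention parameters, and the classification head is not counted here.

```python
def encoder_layer_params(d, mlp_dim):
    """Trainable parameters in one standard ViT encoder layer (assumed layout)."""
    # Self-attention: query, key, value and output projections, each a
    # d x d weight matrix plus a length-d bias. Splitting into h heads
    # reshapes these matrices but does not change the count.
    attention = 4 * (d * d + d)
    # MLP: d -> mlp_dim -> d, each dense layer with a bias.
    mlp = (d * mlp_dim + mlp_dim) + (mlp_dim * d + d)
    # Two LayerNorms, each with a length-d scale and a length-d shift.
    norms = 2 * (2 * d)
    return attention + mlp + norms


def patch_embedding_params(patch, channels, d):
    """Linear projection of a flattened P x P x C patch to d dimensions."""
    return patch * patch * channels * d + d


d, h, mlp_dim, layers, patch = 64, 8, 128, 4, 16
print(d // h)                                     # 8 dimensions per head
print(encoder_layer_params(d, mlp_dim))           # 33472
print(layers * encoder_layer_params(d, mlp_dim))  # 133888
print(patch_embedding_params(patch, 3, d))        # 49216
```

Under these assumptions the four encoder layers hold about 134k parameters, and the patch projection (which grows with P²) adds about 49k more.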

| Class | Precision | Recall | F1-Score | Support |
|---|---|---|---|---|
| No wildfire | 0.94 | 0.92 | 0.93 | 564 |
| Wildfire | 0.94 | 0.95 | 0.94 | 696 |
| Accuracy | | | 0.94 | 1260 |
| Macro avg | 0.94 | 0.94 | 0.94 | 1260 |
| Weighted avg | 0.94 | 0.94 | 0.94 | 1260 |
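Each F1-score in the report is the harmonic mean of that class's precision and recall. The macro average treats both classes equally, and the weighted average weights each class by its support. The check below recomputes these from the rounded values in the table, so agreement is only expected to two decimals. It is a consistency check, not the authors' evaluation code.

```python
def f1(precision, recall):
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)


# Reported (rounded) per-class precision, recall and support.
report = {
    "No wildfire": (0.94, 0.92, 564),
    "Wildfire": (0.94, 0.95, 696),
}

total = sum(support for _, _, support in report.values())
f1_scores = {name: f1(p, r) for name, (p, r, _) in report.items()}
macro_f1 = sum(f1_scores.values()) / len(f1_scores)
weighted_f1 = sum(f1_scores[n] * s for n, (_, _, s) in report.items()) / total

print(total)                                           # 1260
print({n: round(v, 2) for n, v in f1_scores.items()})  # {'No wildfire': 0.93, 'Wildfire': 0.94}
print(round(macro_f1, 2), round(weighted_f1, 2))       # 0.94 0.94
```

Because the wildfire class is slightly larger (696 of 1260 images), the weighted average leans toward its marginally higher F1-score.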

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).