Submitted: 16 August 2024

Posted: 19 August 2024


Abstract

Keywords:

1. Introduction

2. Literature Review

2.1. Transformers in Machine Learning

2.2. Wildfire Prediction and Detection Methods

3. Methods

3.1. Model Architecture

3.2. Data Preparation

3.3. Patch Extraction and Embedding

3.4. Transformer Encoder Layer

3.4.1. Self-Attention Mechanism

3.4.2. Multi-Head Attention

3.4.3. Feed-Forward Network

3.5. FlameViT Model Architecture

3.6. Hyperparameter Tuning

Patch Size (P):

Projection Dimension (d):

Number of Attention Heads (h):

MLP Dimension ():

Number of Layers (L):

Dropout Rate (p):

| Hyperparameter | Values Tested |
|---|---|
| Patch Size (P) | {8, 16, 32} |
| Projection Dimension (d) | {32, 64, 128} |
| Number of Attention Heads (h) | {4, 8} |
| MLP Dimension () | {128, 256} |
| Number of Layers (L) | {1, 2, 4} |
| Dropout Rate (p) | {0.0, 0.1, 0.2} |
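The search space in the hyperparameter table can be enumerated as a full grid. The following is a minimal sketch, assuming an exhaustive grid search (the text does not state whether every combination was trained or only a sample); the dictionary keys and the `is_valid` check are hypothetical names, not identifiers from the FlameViT code.

```python
from itertools import product

# Search space transcribed from the hyperparameter table; key names are illustrative.
SEARCH_SPACE = {
    "patch_size": [8, 16, 32],
    "projection_dim": [32, 64, 128],
    "num_heads": [4, 8],
    "mlp_dim": [128, 256],
    "num_layers": [1, 2, 4],
    "dropout": [0.0, 0.1, 0.2],
}


def grid(space):
    """Yield every combination of the search space as a dict."""
    keys = list(space)
    for values in product(*(space[k] for k in keys)):
        yield dict(zip(keys, values))


def is_valid(cfg):
    """Assumption: d is split evenly across the h heads, so h must divide d."""
    return cfg["projection_dim"] % cfg["num_heads"] == 0


configs = [c for c in grid(SEARCH_SPACE) if is_valid(c)]
print(len(configs))  # 324
```

Because 32, 64 and 128 are all divisible by both 4 and 8, every one of the 3 × 3 × 2 × 2 × 3 × 3 = 324 combinations is feasible under the even head split.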

3.7. Training Procedure

3.8. Model Evaluation

4. Experiments and Results

4.1. Dataset Description

4.2. Data Augmentation and Preparation

- Rescaling: Pixel values are rescaled by a factor of to normalize the input images.

- Rotation: Images are randomly rotated by up to 20 degrees.

- Width and Height Shifts: Images are randomly shifted horizontally and vertically by up to 20% of the total width and height, respectively.

- Shear: Images are randomly sheared by up to 20 degrees.

- Zoom: Images are randomly zoomed in by up to 20%.

- Horizontal Flip: Images are randomly flipped horizontally.
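The augmentation settings listed above amount to drawing a few random transform parameters per image. The sketch below samples them in plain Python under stated assumptions: rotations, shifts and shears are taken as symmetric about zero, "zoomed in by up to 20%" is read as a magnification factor in [1.0, 1.2], and flips happen with probability 0.5. The rescaling factor is not given in the text, so it is omitted. `AugmentParams`, `sample_augmentation` and `shift_in_pixels` are hypothetical helpers, not part of the authors' pipeline.

```python
import random
from dataclasses import dataclass


@dataclass
class AugmentParams:
    """Per-image random transform parameters (hypothetical container)."""
    rotation_deg: float  # rotation angle in degrees
    shift_x: float       # horizontal shift as a fraction of image width
    shift_y: float       # vertical shift as a fraction of image height
    shear_deg: float     # shear angle in degrees
    zoom: float          # magnification factor (1.0 = no zoom)
    hflip: bool          # whether to mirror the image horizontally


def sample_augmentation(rng: random.Random) -> AugmentParams:
    """Draw one set of parameters within the ranges listed above."""
    return AugmentParams(
        rotation_deg=rng.uniform(-20.0, 20.0),  # assumption: symmetric range
        shift_x=rng.uniform(-0.2, 0.2),
        shift_y=rng.uniform(-0.2, 0.2),
        shear_deg=rng.uniform(-20.0, 20.0),
        zoom=rng.uniform(1.0, 1.2),  # "zoomed in by up to 20%"
        hflip=rng.random() < 0.5,    # assumption: flip with probability 0.5
    )


def shift_in_pixels(p: AugmentParams, width: int, height: int) -> tuple[int, int]:
    """Convert fractional shifts into whole-pixel offsets."""
    return round(p.shift_x * width), round(p.shift_y * height)


rng = random.Random(42)  # fixed seed so the draw is reproducible
params = sample_augmentation(rng)
print(params)
print(shift_in_pixels(params, width=100, height=50))
```

A new set of parameters is drawn for every image in every epoch, so the model rarely sees exactly the same pixels twice.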

4.3. Hyperparameter Tuning


4.4. Training Procedure

4.5. Model Evaluation

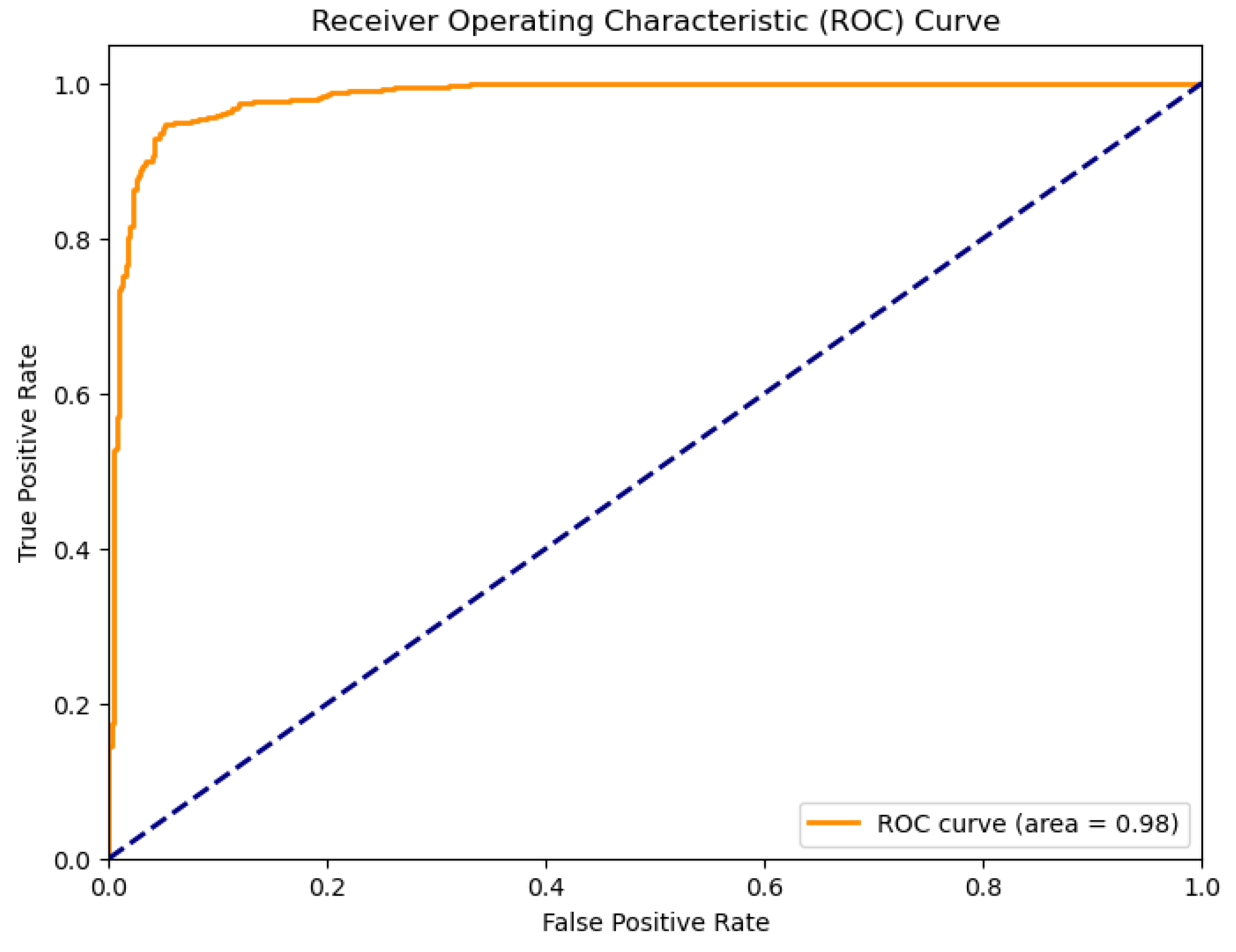

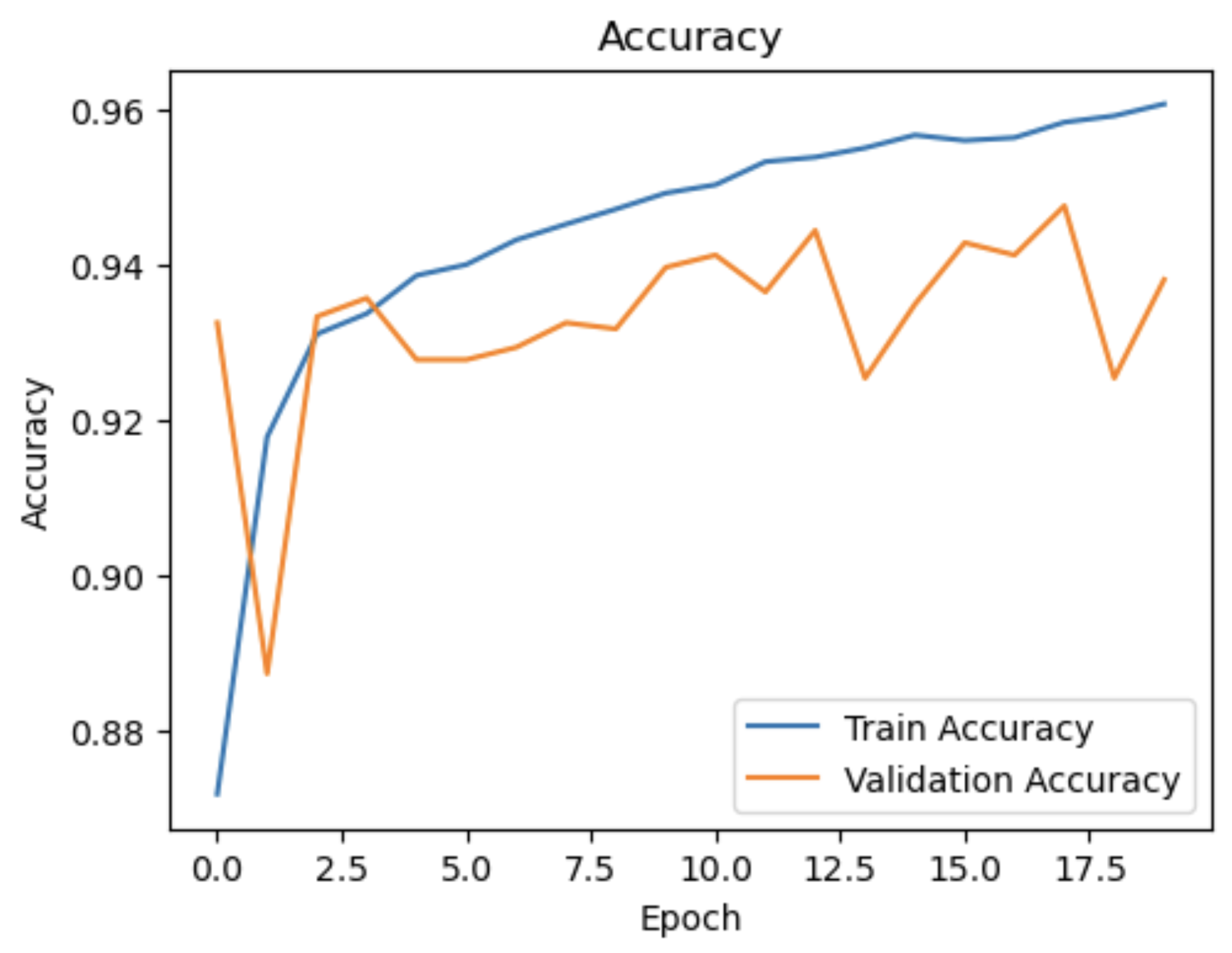

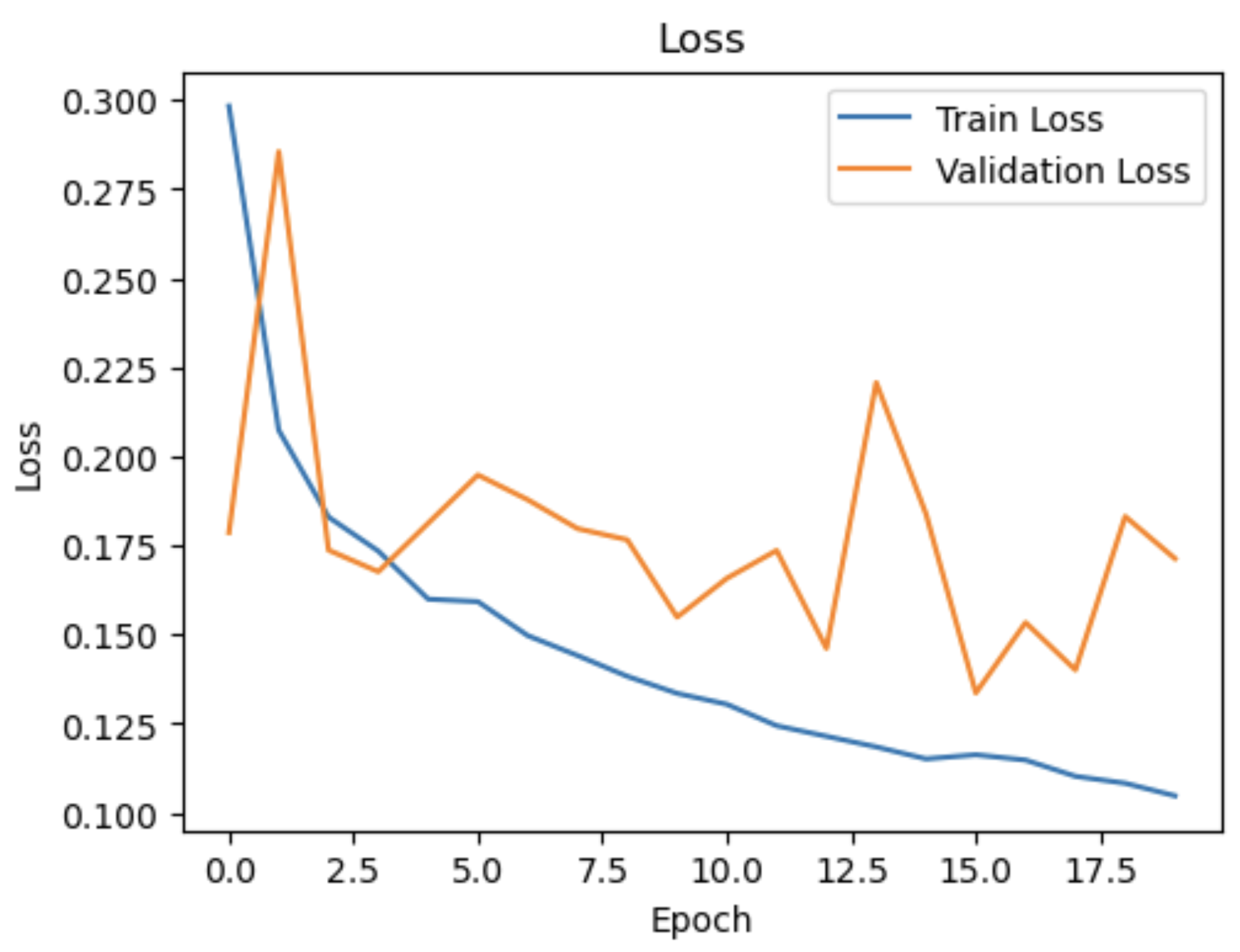

4.6. Results

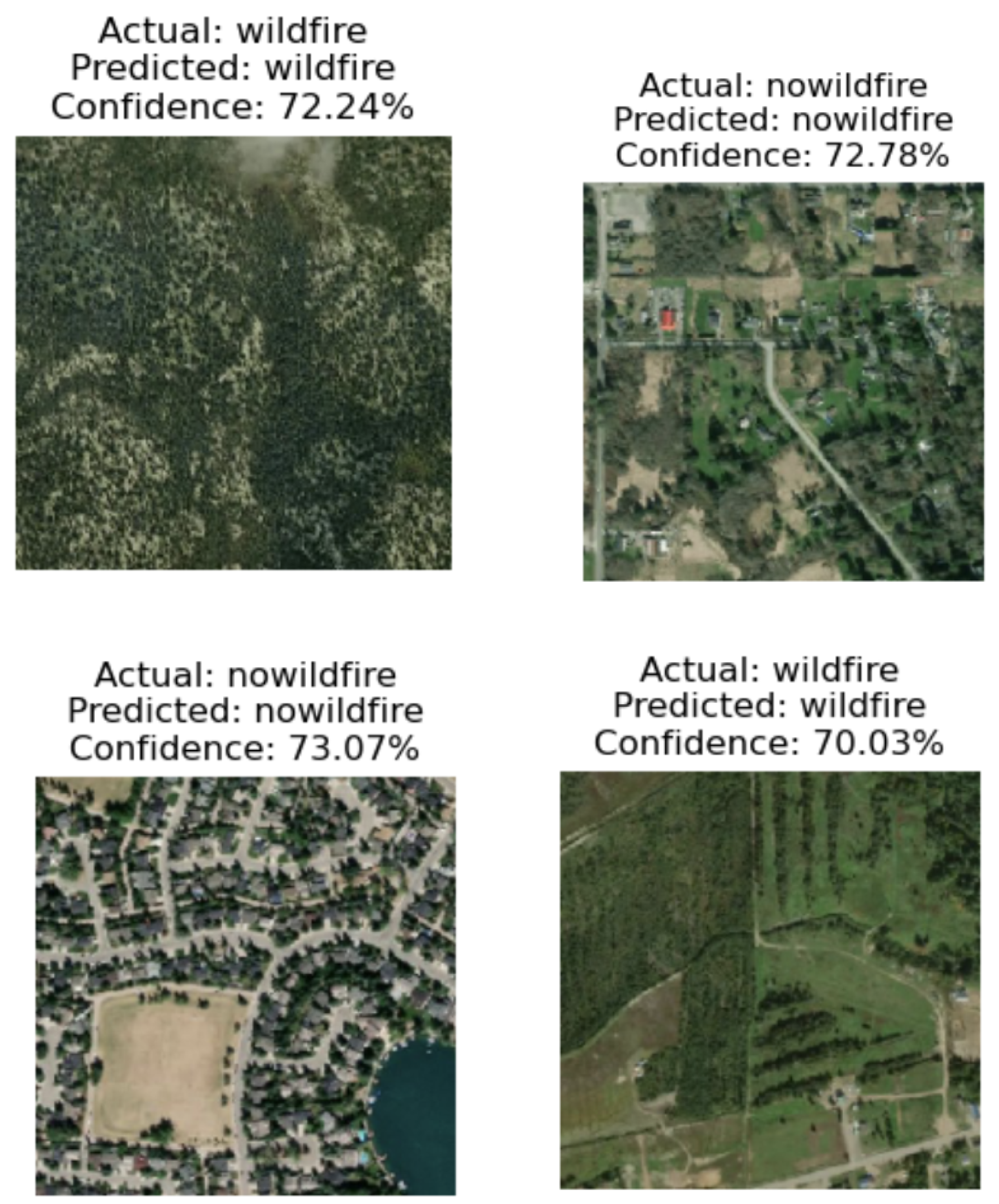

4.7. Model Predictions

4.8. Discussion

5. Conclusion


| Hyperparameter | Optimal Value |
|---|---|
| Patch Size (P) | 16 |
| Projection Dimension (d) | 64 |
| Number of Attention Heads (h) | 8 |
| MLP Dimension () | 128 |
| Number of Layers (L) | 4 |
| Dropout Rate (p) | 0.1 |
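As a rough sanity check on model size, the optimal configuration can be turned into parameter counts. This is a sketch under stated assumptions, not the authors' accounting: it assumes RGB input (C = 3), a linear patch projection with bias, the standard split of d across the h heads (so the Q, K, V and output projections are each d × d with bias), an MLP of two dense layers with biases, and two LayerNorms per encoder layer. Position embeddings, any class token and the classification head are excluded because the input resolution and head design are not given here. The function names are hypothetical.

```python
def patch_embedding_params(patch_size: int, channels: int, d: int) -> int:
    """Linear projection of a flattened P*P*C patch to d dimensions, with bias."""
    return patch_size * patch_size * channels * d + d


def encoder_layer_params(d: int, mlp_dim: int) -> int:
    """One encoder layer: multi-head attention, two-layer MLP, two LayerNorms."""
    attention = 4 * (d * d + d)                          # Q, K, V, output projections
    mlp = (d * mlp_dim + mlp_dim) + (mlp_dim * d + d)   # expand, then project back
    layer_norms = 2 * (2 * d)                            # scale and shift per LayerNorm
    return attention + mlp + layer_norms


P, d, h, mlp_dim, L = 16, 64, 8, 128, 4  # optimal values from the table
C = 3  # assumption: RGB input images

print(d // h)                                # per-head dimension: 8
print(patch_embedding_params(P, C, d))       # 49216
print(encoder_layer_params(d, mlp_dim))      # 33472
print(L * encoder_layer_params(d, mlp_dim))  # 133888
```

Under these assumptions the four encoder layers hold about 134k parameters, which is small by vision-transformer standards.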

| Class | Precision | Recall | F1-score | Support |
|---|---|---|---|---|
| nowildfire | 0.94 | 0.92 | 0.93 | 564 |
| wildfire | 0.94 | 0.95 | 0.94 | 696 |
| accuracy | | | 0.94 | 1260 |
| macro avg | 0.94 | 0.94 | 0.94 | 1260 |
| weighted avg | 0.94 | 0.94 | 0.94 | 1260 |
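The summary rows of the classification report follow directly from the per-class rows. The sketch below recomputes them from the reported precision, recall and support; because the table values are already rounded to two decimals, the recomputed figures are approximate. The variable names are illustrative.

```python
# Per-class (precision, recall, support) transcribed from the report above.
report = {
    "nowildfire": (0.94, 0.92, 564),
    "wildfire": (0.94, 0.95, 696),
}


def f1(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)


f1_scores = {name: f1(p, r) for name, (p, r, _) in report.items()}
total = sum(support for _, _, support in report.values())

# Macro average: unweighted mean over classes.
macro_f1 = sum(f1_scores.values()) / len(f1_scores)
# Weighted average: mean weighted by each class's support.
weighted_f1 = sum(f1_scores[n] * report[n][2] for n in report) / total
# Recall x support recovers each class's correct predictions, so their sum
# divided by the total gives accuracy (approximate, since inputs are rounded).
approx_accuracy = sum(r * s for _, r, s in report.values()) / total

for name, score in f1_scores.items():
    print(f"{name}: F1 = {score:.2f}")
print(f"support = {total}")
print(f"macro F1 = {macro_f1:.2f}, weighted F1 = {weighted_f1:.2f}")
print(f"accuracy ~ {approx_accuracy:.2f}")
```

Every recomputed value rounds to the figure in the table: per-class F1 of 0.93 and 0.94, macro and weighted F1 of 0.94, a support of 1260, and an accuracy of about 0.94 (roughly 1180 of 1260 test images classified correctly).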

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).