Submitted: 08 August 2024

Posted: 09 August 2024


Abstract

Keywords:

1. Introduction

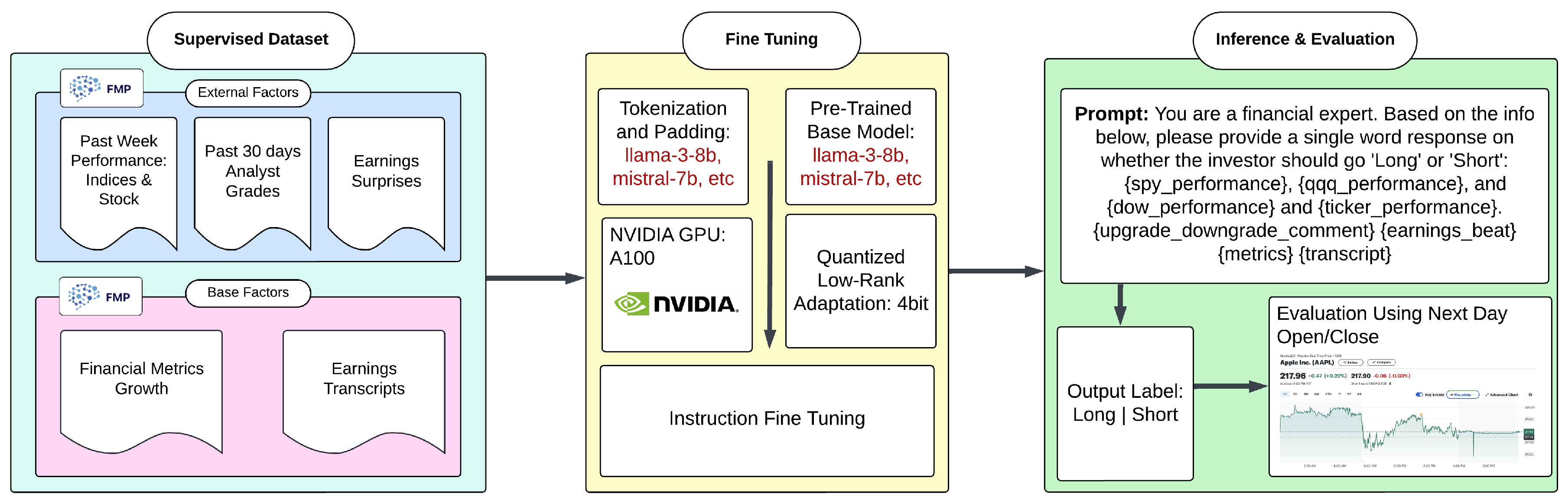

2. Data Collection and Preprocessing

2.1. Dataset Curation

- Market Performance Metrics: For each company, the performance data for the past week from major market indices (SPY, QQQ, and DOW), along with the company’s own stock performance, was collected and aligned with the quarterly earnings date.

- Analyst Grades: A comprehensive list of stock upgrades and downgrades was compiled from various analysts for the months preceding each earnings date, providing insights into market sentiment and expert opinions.

- Earnings Surprises: Instances where companies either beat or missed their estimated Earnings Per Share (EPS) were identified by comparing actual EPS results with estimates.

- Financial Metrics Growth: Financial metrics for the quarter of the earnings report were compared with those of the same quarter of the previous year. This covers key data points typically presented in earnings reports, including:

  - Income statement metrics

  - Balance sheet figures

  - Cash flow statement data

- Earnings Transcripts: Full earnings call transcripts were collected for each company to capture qualitative data and management insights.

- Output Label: For the study’s outcome metric, we calculated the next day’s stock performance after the earnings announcement. If the opening price is lower than the closing price, the sample is labeled “Long”; otherwise, it is labeled “Short”. This label serves as the dependent variable in our predictive models.
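The labeling rule above can be sketched in a few lines. This is a minimal illustration, not the authors' code: the function name `label_next_day` is hypothetical, and it assumes both prices come from the trading day after the announcement.

```python
def label_next_day(open_price: float, close_price: float) -> str:
    """Return 'Long' if the session closes above its open, else 'Short'.

    Assumption: both prices belong to the trading day that follows
    the earnings announcement.
    """
    return "Long" if open_price < close_price else "Short"


print(label_next_day(101.2, 103.5))
print(label_next_day(101.2, 99.8))
```

Under the rule as stated, a day that closes exactly at its opening price falls into the “Short” class.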

2.2. Textualization and Tokenization

2.2.1. Market Performance Metrics

- Original data: “SPY -1.5%”

- Textualized form: “In the past week, SPY went down by 1.5%”
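One way to produce this sentence from the raw string is sketched below. The function name `textualize_index_move` and the assumption that the raw value always has the form `TICKER ±X%` are illustrative and not taken from the paper.

```python
def textualize_index_move(raw: str) -> str:
    """Turn a raw string such as 'SPY -1.5%' into a sentence.

    Assumption: the input is a ticker and a signed percentage
    separated by whitespace.
    """
    ticker, change = raw.split()
    pct = float(change.rstrip("%"))
    # Treating an unchanged value as "up" is an arbitrary choice for this sketch.
    direction = "down" if pct < 0 else "up"
    return f"In the past week, {ticker} went {direction} by {abs(pct):g}%"


print(textualize_index_move("SPY -1.5%"))
```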

2.2.2. Analyst Grades

- Original data: Multiple analyst grades over 30 days

- Aggregated data: Most frequent grade (e.g., “Buy”)

- Textualized form: “In the past 30 days, most grading companies suggest buying this stock”
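The aggregation step (taking the most frequent grade) could look roughly like the sketch below. The grade vocabulary and the `GRADE_TO_VERB` mapping are assumptions made for illustration; the paper only shows the “Buy” case.

```python
from collections import Counter

# Hypothetical mapping from grade labels to the verb used in the sentence.
GRADE_TO_VERB = {"Buy": "buying", "Hold": "holding", "Sell": "selling"}


def textualize_grades(grades: list[str]) -> str:
    """Summarize 30 days of analyst grades by their most frequent value."""
    if not grades:
        raise ValueError("no analyst grades in the window")
    # On ties, Counter.most_common keeps the grade that appeared first.
    top_grade, _ = Counter(grades).most_common(1)[0]
    verb = GRADE_TO_VERB[top_grade]
    return f"In the past 30 days, most grading companies suggest {verb} this stock"


print(textualize_grades(["Buy", "Hold", "Buy", "Sell", "Buy"]))
```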

2.2.3. Earnings Surprises

- Original data: “Actual EPS: $2.10, Estimated EPS: $1.95”

- Textualized form: “The company’s reported earnings per share (EPS) were 7.69% higher than the analysts’ consensus estimates”
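The 7.69% figure is the relative difference between actual and estimated EPS: (2.10 − 1.95) / 1.95 ≈ 0.0769. A minimal sketch of that calculation follows; the function name is hypothetical, and dividing by the absolute value of the estimate (to keep the sign meaningful for negative estimates) is an assumption the source does not spell out.

```python
def textualize_eps_surprise(actual: float, estimate: float) -> str:
    """Express the EPS surprise as a percentage of the consensus estimate."""
    if estimate == 0:
        raise ValueError("surprise is undefined for a zero estimate")
    # Assumption: normalize by |estimate| so the sign reflects beat vs. miss.
    surprise_pct = (actual - estimate) / abs(estimate) * 100
    direction = "higher" if surprise_pct >= 0 else "lower"
    return (
        "The company's reported earnings per share (EPS) were "
        f"{abs(surprise_pct):.2f}% {direction} than the analysts' consensus estimates"
    )


print(textualize_eps_surprise(2.10, 1.95))
```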

2.2.4. Financial Metrics Growth

- Original data: "growthNetIncome": 0.046

- Textualized form: “Compared to the same quarter last year, Net Income grew by 4.6%”
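A sketch of how such a key-value pair might be converted is given below. It assumes that every growth field is named `growth` followed by a CamelCase metric name and that the value is a fraction. The wording for negative growth (“shrank”) is also an assumption, since the paper only shows a positive example.

```python
import re


def textualize_growth(key: str, value: float) -> str:
    """Convert e.g. ('growthNetIncome', 0.046) into a sentence."""
    # Strip the assumed 'growth' prefix, then split CamelCase into words.
    name = key.removeprefix("growth")
    label = re.sub(r"(?<=[a-z])(?=[A-Z])", " ", name)
    verb = "grew" if value >= 0 else "shrank"
    return (
        f"Compared to the same quarter last year, {label} {verb} "
        f"by {abs(value) * 100:.1f}%"
    )


print(textualize_growth("growthNetIncome", 0.046))
```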

3. Method

3.1. Framework

| Model | Base Accuracy | Base Weighted F1 | Base MCC | Full Accuracy | Full Weighted F1 | Full MCC |
|---|---|---|---|---|---|---|
| ChatGPT 4.0 | 0.363 | 0.482 | 0.023 | 0.494 | 0.512 | 0.031 |
| gemma-7b-4bit | 0.541 | 0.468 | 0.135 | 0.542 | 0.442 | 0.178 |
| Phi-3-medium-4k-instruct | 0.559 | 0.469 | 0.224 | 0.560 | 0.471 | 0.227 |
| Phi-3-mini-4k-instruct | 0.548 | 0.478 | 0.154 | 0.557 | 0.494 | 0.175 |
| mistral-7b-4bit | 0.556 | 0.556 | 0.112 | 0.550 | 0.497 | 0.122 |
| mistral-7b-instruct-4bit | 0.549 | 0.472 | 0.168 | 0.534 | 0.532 | 0.070 |
| llama-3-8b-4bit | 0.534 | 0.533 | 0.069 | 0.541 | 0.535 | 0.087 |
| mistral-7b-4bit | 0.542 | 0.497 | 0.114 | 0.544 | 0.536 | 0.089 |
| llama-3-8b-Instruct-4bit | 0.550 | 0.533 | 0.104 | 0.573 | 0.565 | 0.154 |
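For readers unfamiliar with the three metrics in the table, the following is a minimal pure-Python sketch of accuracy, support-weighted F1, and the Matthews correlation coefficient (MCC) for the binary Long/Short task. It is an illustration under standard definitions, not the evaluation code used in the study, and the toy labels are made up.

```python
import math
from collections import Counter


def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def weighted_f1(y_true, y_pred):
    """Per-class F1 averaged with weights equal to each class's support."""
    total = 0.0
    for cls, support in Counter(y_true).items():
        tp = sum(t == cls and p == cls for t, p in zip(y_true, y_pred))
        fp = sum(t != cls and p == cls for t, p in zip(y_true, y_pred))
        fn = support - tp
        total += support * (2 * tp / (2 * tp + fp + fn))
    return total / len(y_true)


def mcc(y_true, y_pred, positive="Long"):
    """Matthews correlation coefficient for a binary task."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    tn = sum(t != positive and p != positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    # By convention, return 0 when any marginal count is zero.
    return (tp * tn - fp * fn) / denom if denom else 0.0


# Toy labels for illustration only.
y_true = ["Long", "Long", "Short", "Short"]
y_pred = ["Long", "Short", "Short", "Short"]
print(accuracy(y_true, y_pred), weighted_f1(y_true, y_pred), mcc(y_true, y_pred))
```

MCC ranges from −1 to 1, with 0 corresponding to chance-level agreement. This makes it a useful complement to accuracy when the Long and Short classes are imbalanced.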

3.2. Instruction Fine Tuning

3.3. QLoRA

4. Evaluation

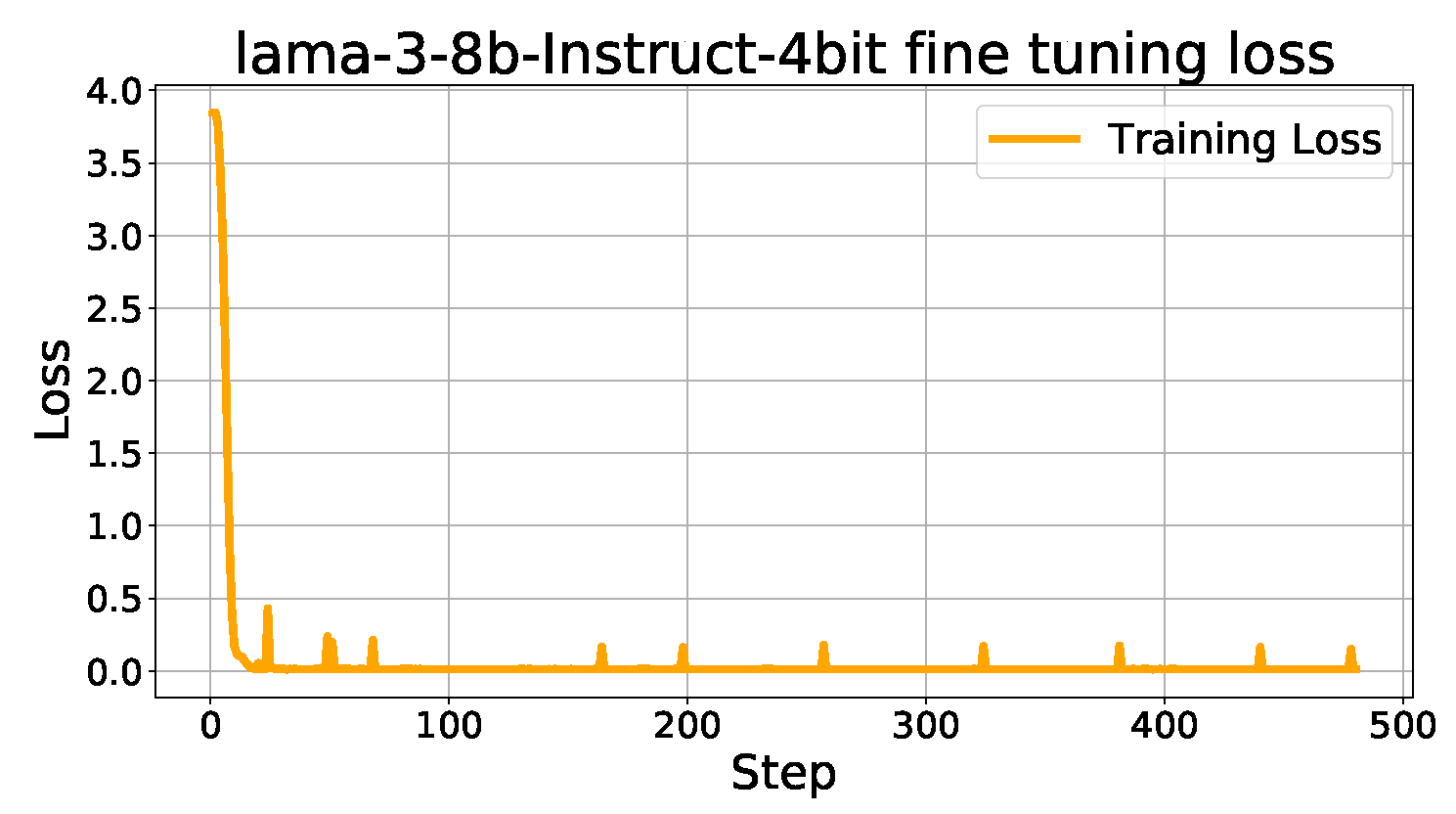

4.1. Model Training

4.2. Baseline Models

4.2.1. ChatGPT

4.3. Performance Analysis

4.3.1. Evaluation Metrics

4.3.2. Comparative Analysis of Model Performance

5. Conclusions and Future Work


Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).