Submitted: 07 August 2024

Posted: 08 August 2024


Abstract

Keywords:

1. Introduction

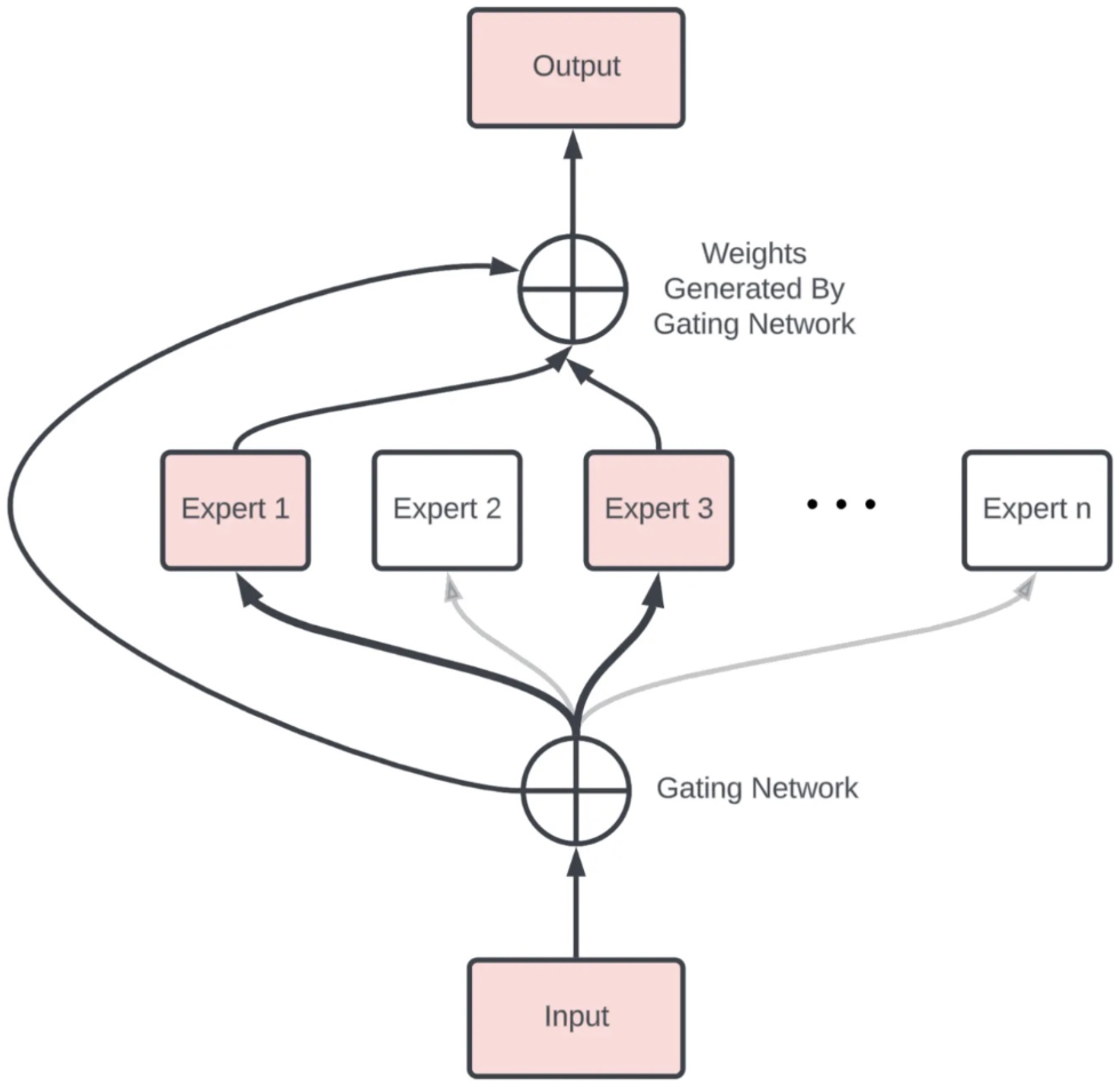

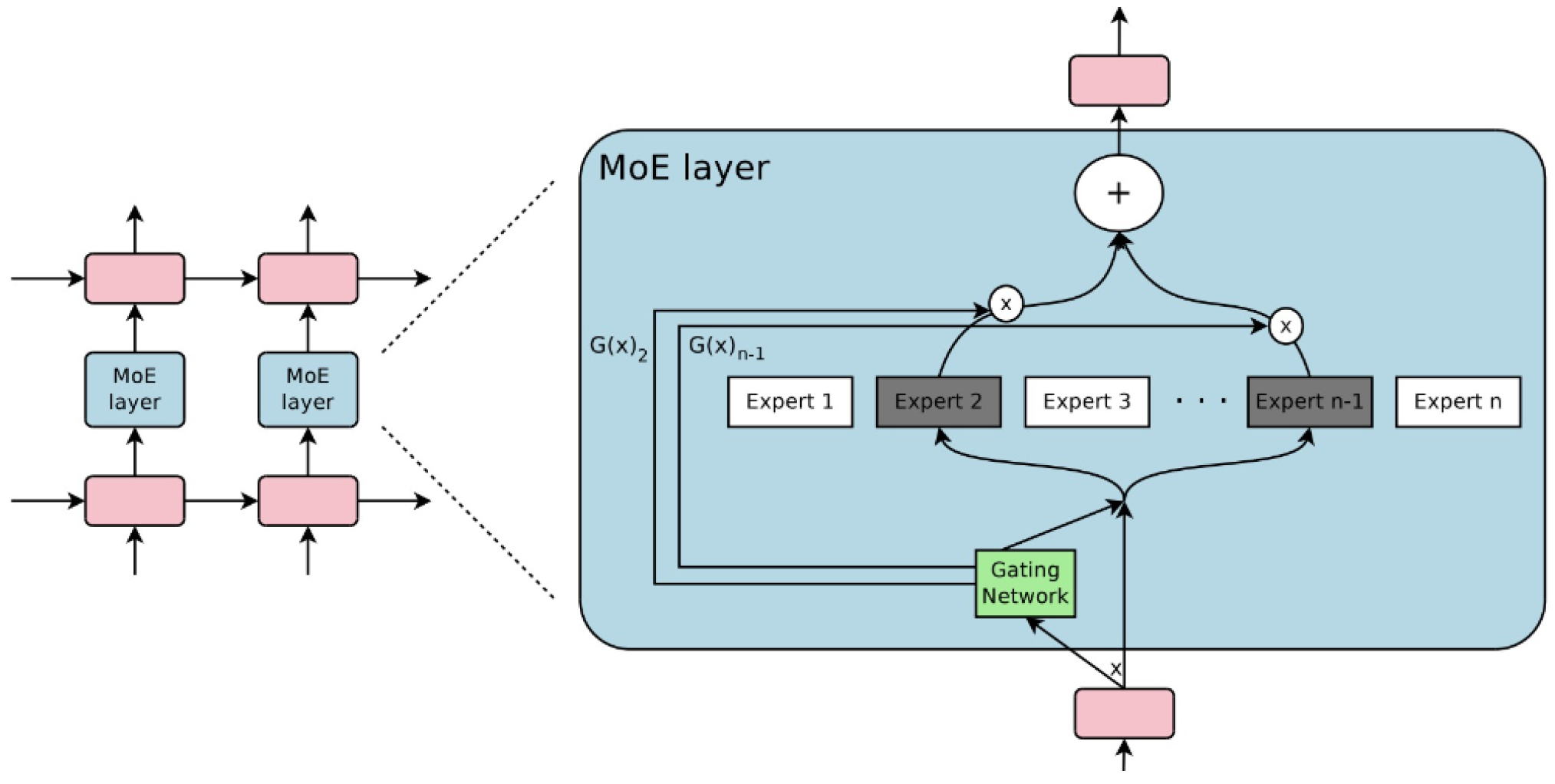

- Division of dataset into local subsets: First, the predictive modeling problem is divided into subtasks. This division often requires domain knowledge or uses an unsupervised clustering algorithm. Note that this clustering is not based on similarities between the feature vectors; instead, it groups samples according to how their features relate to the labels, so that each subset shares a similar input-output relationship.

- Expert models: These are the specialized neural network layers, or experts, that are trained to excel at specific subtasks. An expert is trained for each subset of the data. In principle, an expert can be any model, from Support Vector Machines (SVMs) to neural networks. Each expert receives the same input pattern, processes it according to its specialization, and makes a prediction.

- Gating network (Router): The gating network, also called the router, is responsible for selecting which experts to use for each input. It estimates the compatibility between the input and each expert and outputs a softmax distribution over the experts. This distribution is used as the weights to combine the outputs of the expert layers. Because these weights show how much each expert contributes, the gating network also helps interpret the model's predictions and decides which expert to trust for a given input.

- Pooling method: Finally, an aggregation mechanism is needed to make a prediction based on the output from the gating network and the experts. The gating network and expert layers are jointly trained to minimize the overall loss function of the MoE model. The gating network learns to route each input to the most relevant expert layer(s), while the expert layers specialize in their assigned sub-tasks.
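The four components above can be sketched as a single forward pass. This is a minimal pure-Python illustration, assuming a linear gate (the hypothetical `gate_weights` matrix) and experts given as plain functions; it shows dense softmax gating, where every expert is evaluated and the outputs are pooled with the gate weights. The names `softmax`, `linear`, and `moe_forward` are illustrative, not taken from any library.

```python
import math
from typing import Callable, List, Sequence, Tuple

Vector = List[float]
Expert = Callable[[Sequence[float]], Vector]


def softmax(logits: Sequence[float]) -> Vector:
    # Subtract the max logit for numerical stability before exponentiating.
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def linear(weights: Sequence[Sequence[float]], x: Sequence[float]) -> Vector:
    # One output per row of `weights`: y[j] = sum_k weights[j][k] * x[k].
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def moe_forward(x: Sequence[float], experts: Sequence[Expert],
                gate_weights: Sequence[Sequence[float]]) -> Tuple[Vector, Vector]:
    # Gating network: one logit per expert, turned into a distribution.
    gate = softmax(linear(gate_weights, x))
    # Every expert receives the same input pattern.
    outputs = [expert(x) for expert in experts]
    # Pooling: weighted sum of the expert outputs using the gate weights.
    y = [sum(g * out[d] for g, out in zip(gate, outputs))
         for d in range(len(outputs[0]))]
    return y, gate


# Two toy experts and a gate that prefers expert 1 for this input.
experts = [lambda v: [2 * a for a in v], lambda v: [a + 1 for a in v]]
y, gate = moe_forward([1.0, 2.0], experts, [[1.0, 0.0], [0.0, 1.0]])
print(gate, y)
```

In a trained model the gate weights and the experts are learned jointly; here they are fixed by hand so the pooling step is easy to follow.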

1.1. Gate Functionality

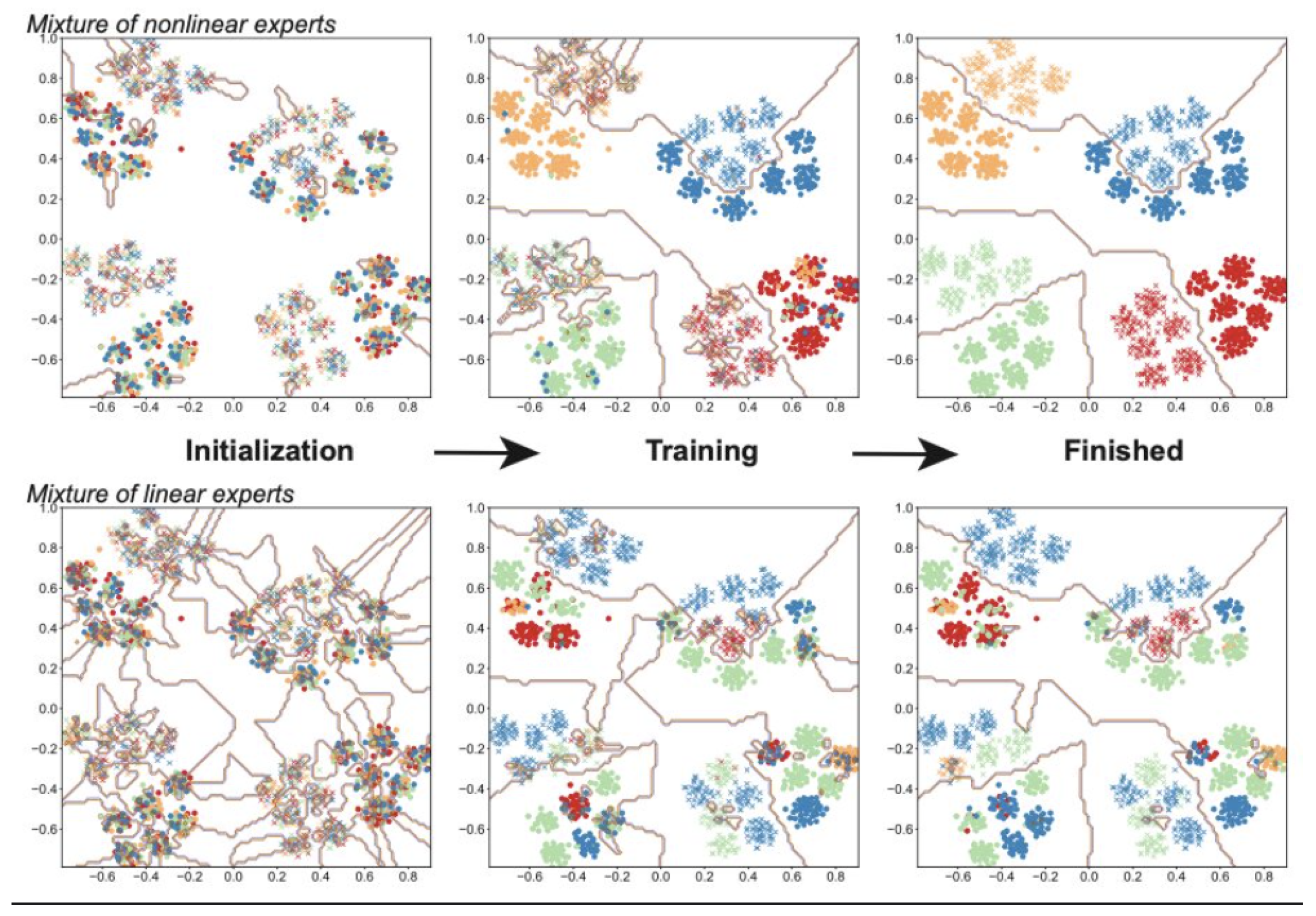

- Clustering the Data: In the context of an MoE model, clustering the data means that the gate learns to identify and group together similar data points. This is not clustering in the traditional unsupervised sense, where an algorithm discovers clusters without any external labels. Instead, the gate learns during training which features or patterns in the data indicate that data points are comparable to one another and should be handled accordingly. This is an important stage, since it determines how the model structures and interprets the data.

- Mapping Experts to Clusters: Once the gate has identified clusters within the data, the next step is to assign, or map, each cluster to the most appropriate expert in the MoE model. Each expert is specialized to handle different types of data or different aspects of the problem, and the gate's function is to direct each data point (or each group of similar data points) to the expert best suited to process it. The mapping is dynamic: it follows the strengths and specialties of the experts as they evolve during training.
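Viewed this way, the gate's clusters are implicit: a cluster is the set of inputs for which a given expert receives the largest gate weight. The sketch below, which assumes the gating probabilities have already been computed (the values are made up for illustration), makes that hard assignment explicit. A real soft gate may spread weight across several experts, so this argmax view is a simplification; `assign_to_experts` is a hypothetical helper.

```python
from collections import defaultdict
from typing import Dict, List, Sequence


def assign_to_experts(gate_probs: Sequence[Sequence[float]]) -> Dict[int, List[int]]:
    """Group input indices by the expert with the highest gate probability."""
    groups: Dict[int, List[int]] = defaultdict(list)
    for idx, probs in enumerate(gate_probs):
        best_expert = max(range(len(probs)), key=lambda e: probs[e])
        groups[best_expert].append(idx)
    return dict(groups)


# Illustrative gate outputs for four inputs and two experts.
gate_probs = [[0.9, 0.1], [0.2, 0.8], [0.6, 0.4], [0.3, 0.7]]
clusters = assign_to_experts(gate_probs)
print(clusters)  # {0: [0, 2], 1: [1, 3]}
```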

1.2. Sparsely Gated

- Modern computing devices like GPUs and TPUs perform better in arithmetic operations than in network branching.

- Large batch sizes are critical for performance, but conditional computation reduces the batch size seen by each conditionally active part of the network.

- Network bandwidth can limit computational efficiency, notably affecting embedding layers.

- Depending on the scheme, extra loss terms may be needed to achieve the required level of sparsity, and these terms can affect both model quality and load balance.

- Model capacity is most critical for very large data sets, a setting that the existing conditional computation literature does not adequately address.
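A sparsely gated layer is a form of conditional computation: only a few experts are evaluated for each input. The sketch below shows top-k gating in the spirit of the sparsely-gated MoE layer of Shazeer et al., which keeps the k largest gate logits, renormalizes them with a softmax, and sets every other weight to zero, so only k experts need to be run. Their layer also adds tunable Gaussian noise to the logits before the top-k selection; that noise is omitted here. The function name and example logits are illustrative.

```python
import math
from typing import List, Sequence


def top_k_gating(logits: Sequence[float], k: int) -> List[float]:
    """Softmax over the k largest logits; all other experts get weight 0."""
    if not 1 <= k <= len(logits):
        raise ValueError("k must be between 1 and the number of experts")
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    m = max(logits[i] for i in top)  # max logit, for numerical stability
    exps = {i: math.exp(logits[i] - m) for i in top}
    total = sum(exps.values())
    return [exps[i] / total if i in exps else 0.0 for i in range(len(logits))]


gates = top_k_gating([2.0, 1.0, 0.5, -1.0], k=2)
active = [i for i, g in enumerate(gates) if g > 0.0]  # experts to evaluate
print(gates, active)
```

Only the experts in `active` would be executed; the zero-weight experts contribute nothing to the pooled output, which is where the computational savings come from.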

2. Related Works


2.1. Expert Choice Routing

- Expert Capacity: the capacity of an expert, i.e., the maximum number of tokens it can handle.

- Capacity Factor: a scalar that adjusts the effective capacity of each expert.

- Gating Network: the gating probability or decision for a token to be routed to a given expert. It can be binary (0 or 1) or a continuous value between 0 and 1.
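In expert choice routing, the roles are reversed: instead of each token picking its top experts, each expert picks the tokens it will process, up to its capacity. The sketch below assumes the formulation of Zhou et al. (2022), where each expert's capacity is k = n · c / e for n tokens, capacity factor c, and e experts, and where the gating values are token-to-expert softmax scores. The function name and example logits are hypothetical. Because selection is done per expert, a token may be chosen by several experts or by none.

```python
import math
from typing import List, Sequence


def softmax(row: Sequence[float]) -> List[float]:
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [v / total for v in exps]


def expert_choice(logits: Sequence[Sequence[float]], capacity_factor: float) -> List[List[int]]:
    """Return, for each expert, the indices of the tokens it selects.

    logits: n_tokens x n_experts router scores.
    """
    n, e = len(logits), len(logits[0])
    k = max(1, int(n * capacity_factor / e))   # expert capacity k = n * c / e
    scores = [softmax(row) for row in logits]  # token-to-expert affinities
    selected = []
    for j in range(e):
        # Expert j ranks all tokens by affinity and keeps its top k.
        ranked = sorted(range(n), key=lambda t: scores[t][j], reverse=True)
        selected.append(sorted(ranked[:k]))
    return selected


logits = [[2.0, 0.0], [1.0, 0.0], [0.0, 1.0], [0.0, 3.0]]
print(expert_choice(logits, capacity_factor=1.0))
print(expert_choice(logits, capacity_factor=0.5))
```

With a capacity factor of 0.5, each expert keeps only one token, so tokens 1 and 2 are not selected by any expert; this is the same failure mode discussed under token dropping in Section 2.5.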

2.2. Load Balancing

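- Loss Function Component: The loss function in MoEs typically includes a term that encourages load balancing by penalizing the model when the load is unevenly distributed across the experts. The loss function can be expressed as:

  $$\mathcal{L} = \mathcal{L}_{\text{task}} + \lambda \, \mathcal{L}_{\text{balance}}$$

  - $\mathcal{L}_{\text{task}}$: the primary task-specific loss (e.g., cross-entropy loss for classification).
  - $\mathcal{L}_{\text{balance}}$: a penalty term that encourages experts to be used evenly.
  - $\lambda$: a hyperparameter that controls the importance of the load-balancing term.

- One common approach is to use the entropy of the expert selection probabilities to encourage a uniform distribution:

  $$\mathcal{L}_{\text{balance}} = -H(p) = \sum_{i=1}^{N} p_i \log p_i$$

  where $p_i$ is the probability of selecting the $i$-th expert (typically averaged over a batch of inputs) and $N$ is the total number of experts. Minimizing this term maximizes the entropy $H(p)$, which attains its maximum value $\log N$ when every expert is selected with probability $1/N$.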
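The combined objective can be sketched as follows, assuming the gate probabilities for a batch are available as a list of rows (one per input) and that the per-expert selection probability is their batch average. The parameter `lam` is the hyperparameter weighting the load-balancing term; its default value is an arbitrary placeholder, and both function names are hypothetical. Averaging over the batch before taking the entropy matters: it rewards balanced usage across inputs without forcing each individual input's gate to be uniform.

```python
import math
from typing import Sequence


def entropy_balance_penalty(gate_probs: Sequence[Sequence[float]]) -> float:
    """Negative entropy of the batch-averaged expert-selection distribution.

    Its minimum, -log(N), is reached when every expert is used 1/N of the time.
    """
    n_inputs, n_experts = len(gate_probs), len(gate_probs[0])
    p = [sum(row[i] for row in gate_probs) / n_inputs for i in range(n_experts)]
    # Terms with p_i = 0 contribute 0 (the limit of p log p as p -> 0).
    return sum(pi * math.log(pi) for pi in p if pi > 0.0)


def moe_loss(task_loss: float, gate_probs: Sequence[Sequence[float]],
             lam: float = 0.01) -> float:
    """Task loss plus the weighted load-balancing penalty."""
    return task_loss + lam * entropy_balance_penalty(gate_probs)


balanced = [[1.0, 0.0], [0.0, 1.0]]   # each input confident, experts shared evenly
collapsed = [[1.0, 0.0], [1.0, 0.0]]  # every input sent to expert 0
print(moe_loss(2.0, balanced), moe_loss(2.0, collapsed))
```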

Potential Solutions for Load Balancing

Several strategies can be employed to address load balancing in MoEs:

- Regularization Terms in Loss Function
  - Include terms in the loss function that penalize uneven expert utilization.
  - Use entropy-based regularization to encourage a uniform distribution of expert usage.
- Gating Networks
  - Use gating mechanisms designed to produce more balanced expert selection.
  - Implement gating networks that take the historical usage of experts into account to avoid over-reliance on specific experts.
- Expert Capacity Constraints
  - Set a maximum capacity for each expert, limiting the number of inputs it can handle.
  - Dynamically adjust the capacity based on the current load to distribute the work more evenly.
- Routing Strategies
  - Employ routing strategies that distribute inputs more evenly among experts.
  - Use probabilistic or learned routing to decide which experts should handle which inputs.
- MegaBlocks Approach
  - Proposed in "MegaBlocks: Efficient Sparse Training with Mixture-of-Experts", MegaBlocks uses block-wise parallelism and structured (block) sparsity to handle the load across experts.
  - It expresses the MoE computation as block-sparse operations over fixed-size blocks and uses efficient kernels to distribute the computational load evenly across these blocks, even when the router assigns tokens to experts unevenly.
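As one concrete example of the regularization terms listed above that penalize uneven expert utilization, the sketch below implements the auxiliary load-balancing loss popularized by the Switch Transformer (Fedus et al.). That paper is not among this survey's references; it is shown here only as a representative technique. The loss is α · N · Σᵢ fᵢ · Pᵢ, where fᵢ is the fraction of tokens whose top-1 expert is i, Pᵢ is the mean router probability assigned to expert i, and N is the number of experts. It equals α when routing is perfectly uniform and increases when both the token assignments and the router probabilities concentrate on a few experts. The function name and inputs are illustrative.

```python
from typing import Sequence


def switch_style_aux_loss(gate_probs: Sequence[Sequence[float]],
                          alpha: float = 0.01) -> float:
    """alpha * N * sum_i f_i * P_i over one batch of router probabilities.

    f_i: fraction of tokens whose top-1 expert is i.
    P_i: mean router probability assigned to expert i.
    """
    n_tokens, n_experts = len(gate_probs), len(gate_probs[0])
    counts = [0] * n_experts
    for row in gate_probs:
        counts[max(range(n_experts), key=lambda e: row[e])] += 1
    f = [c / n_tokens for c in counts]
    p = [sum(row[i] for row in gate_probs) / n_tokens for i in range(n_experts)]
    return alpha * n_experts * sum(fi * pi for fi, pi in zip(f, p))


uniform = [[0.6, 0.4], [0.4, 0.6]]
skewed = [[0.9, 0.1], [0.8, 0.2]]
print(switch_style_aux_loss(uniform, alpha=1.0), switch_style_aux_loss(skewed, alpha=1.0))
```

The hard count fᵢ is not differentiable; in the original formulation, gradients reach the router through the Pᵢ factor.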

2.3. MegaBlocks Handling of Load Balancing

- Block-wise Parallelism
  - The MoE computation is partitioned into smaller, fixed-size blocks.
  - The blocks can be processed in parallel, which helps distribute the computational load more evenly.
- Sparse Activation
  - MegaBlocks expresses the expert computation with structured (block) sparsity, so only the parts of the model selected for each input are computed.
  - This reduces the computational cost and ensures that no single expert or block is overwhelmed with too much load.
- Efficient Load Distribution Algorithms
  - Specialized block-sparse GPU kernels handle whatever token-to-expert distribution the router produces at each step.
  - As a result, an expert that receives many tokens does not become a bottleneck: its extra tokens are simply additional blocks of work rather than overflow that must be dropped.
- Dynamic Capacity Adjustment
  - MegaBlocks effectively adjusts the capacity of each expert to the current load: each expert processes exactly the tokens routed to it instead of a fixed number of slots.
  - This avoids both dropping tokens when an expert is oversubscribed and wasting computation on padding when it is undersubscribed.
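The bookkeeping behind dynamic per-expert capacity can be illustrated without any GPU kernels: tokens are permuted so that each expert's tokens are contiguous, and per-expert offsets record where each variable-sized group starts. This is a minimal sketch of the idea only, not MegaBlocks' actual implementation, which performs the expert computation with block-sparse kernels; `group_tokens_dropless` is a hypothetical name.

```python
from typing import List, Sequence, Tuple


def group_tokens_dropless(assignments: Sequence[int],
                          n_experts: int) -> Tuple[List[int], List[int]]:
    """Permute tokens so each expert's tokens are contiguous.

    Returns (order, offsets): expert e processes the tokens
    order[offsets[e]:offsets[e + 1]]. No token is dropped and no group is
    padded to a fixed capacity.
    """
    counts = [0] * n_experts
    for expert in assignments:
        counts[expert] += 1
    offsets = [0]
    for c in counts:
        offsets.append(offsets[-1] + c)
    # sorted() is stable, so tokens keep their original order within a group.
    order = sorted(range(len(assignments)), key=lambda t: assignments[t])
    return order, offsets


order, offsets = group_tokens_dropless([1, 0, 1, 1, 2], n_experts=3)
for e in range(3):
    print(e, order[offsets[e]:offsets[e + 1]])
```

For comparison, a fixed capacity of two tokens per expert would drop one of expert 1's three tokens and pad the groups of experts 0 and 2 with one empty slot each.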

2.4. Expert Specialization

2.5. Token Dropping

Causes of Token Dropping

- Imbalanced Expert Activation: When the gating network routes a disproportionate share of tokens to a few experts, those experts can exceed their capacity, and the overflow tokens are not processed by any expert, effectively getting “dropped”.
- Capacity Constraints: If the model enforces strict limits on how many tokens each expert can handle, tokens that arrive after an expert's limit is reached are left out.
- Inefficient Gating Mechanism: A gating mechanism that does not adequately distribute tokens across experts can cause some tokens to be neglected.
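The capacity-constraint cause can be reproduced in a few lines. The sketch assumes top-1 routing and a capacity rule of the form ceil(capacity_factor × tokens / experts), similar to the one used by the Switch Transformer, with tokens processed in order so that the dropped tokens are the ones that arrive after their expert is full. The function name and routing decisions are illustrative.

```python
import math
from typing import List, Sequence, Tuple


def route_with_capacity(assignments: Sequence[int], n_experts: int,
                        capacity_factor: float) -> Tuple[List[List[int]], List[int]]:
    """Top-1 routing with a hard per-expert capacity.

    assignments[t] is the expert chosen for token t. Returns
    (tokens_per_expert, dropped_tokens).
    """
    capacity = math.ceil(capacity_factor * len(assignments) / n_experts)
    buckets: List[List[int]] = [[] for _ in range(n_experts)]
    dropped: List[int] = []
    for token, expert in enumerate(assignments):
        if len(buckets[expert]) < capacity:
            buckets[expert].append(token)
        else:
            dropped.append(token)  # expert already full: token is dropped
    return buckets, dropped


# Imbalanced routing: four of six tokens prefer expert 0.
buckets, dropped = route_with_capacity([0, 0, 0, 0, 1, 2], n_experts=3, capacity_factor=1.0)
print(buckets, dropped)
```

Raising the capacity factor to 2.0 gives each expert four slots and removes the drops in this example, at the cost of more padded (unused) slots for experts 1 and 2.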

Mitigation Strategies for Token Dropping

- Enhanced Gating Mechanism: Improve the gating network to ensure a more balanced distribution of tokens across experts. Use soft gating mechanisms that allow more flexible expert selection, reducing the chances of token dropping.
- Regularization Techniques: Incorporate regularization terms in the loss function that penalize the model for uneven expert activation or for dropping tokens. Use load-balancing regularization to encourage a more uniform token distribution.
- Dynamic Capacity Allocation: Allow the capacity of each expert to be adjusted dynamically based on the current load. Implement adaptive capacity constraints that prevent experts from becoming overloaded while ensuring that all tokens are processed.
- Token Routing Strategies: Develop token routing algorithms that ensure every token is assigned to at least one expert. Use probabilistic routing to distribute tokens more evenly among experts.
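One simple way to pursue the goal that every token is assigned to at least one expert under capacity limits is a fallback rule: when a token's preferred expert is full, the token goes to its next-best expert that still has room. The sketch below is a hypothetical illustration of that idea, not a method from a specific paper. With a capacity factor of at least 1.0, the total capacity covers every token, so no token is left unassigned.

```python
import math
from typing import List, Optional, Sequence


def route_with_fallback(gate_probs: Sequence[Sequence[float]],
                        capacity_factor: float = 1.0) -> List[Optional[int]]:
    """Assign each token to its highest-probability expert that still has room.

    Returns the chosen expert per token (None only if every expert is full).
    """
    n_tokens, n_experts = len(gate_probs), len(gate_probs[0])
    capacity = math.ceil(capacity_factor * n_tokens / n_experts)
    load = [0] * n_experts
    choice: List[Optional[int]] = []
    for probs in gate_probs:
        # Try experts from most to least preferred for this token.
        ranked = sorted(range(n_experts), key=lambda e: probs[e], reverse=True)
        picked = next((e for e in ranked if load[e] < capacity), None)
        if picked is not None:
            load[picked] += 1
        choice.append(picked)
    return choice


# Every token prefers expert 0, but it only has room for two of them.
gate_probs = [[0.9, 0.1], [0.8, 0.2], [0.7, 0.3], [0.6, 0.4]]
choice = route_with_fallback(gate_probs, capacity_factor=1.0)
print(choice)
```

The trade-off is that fallback tokens are processed by a less-preferred expert, which keeps them from being dropped but may reduce prediction quality for those tokens.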

Specific Approaches to Mitigate Token Dropping

- Auxiliary Loss Functions: Introduce auxiliary loss functions that specifically penalize token dropping; for instance, a penalty can be added for tokens that are not assigned to any expert.
- Token Coverage Mechanisms: Implement token coverage mechanisms that ensure every token is attended to by at least one expert. This can involve requiring the sum of the gating probabilities of a token's selected experts to exceed a certain threshold.
- MegaBlocks Approach: MegaBlocks can help mitigate token dropping through block-wise parallelism and structured sparsity. Each block covers a subset of the model's computation, and because expert batches are not capped at a fixed capacity, every token is processed by the experts it is routed to.
- Diversity-Promoting Techniques: Use techniques that promote diversity in expert selection, such as entropy maximization in the gating decisions, so that a more diverse set of experts is activated for different tokens.
- Regular Monitoring and Adjustment: Continuously monitor the distribution of tokens across experts and adjust the gating network or capacity constraints accordingly. Use feedback mechanisms to dynamically adjust the gating probabilities and keep expert activation balanced.
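Token coverage checks and regular monitoring can both be driven by simple per-batch statistics. The hypothetical helper below reports, for top-k routing, the fraction of tokens sent to each expert and the tokens whose selected experts receive less total gate probability than a threshold; the threshold and example probabilities are arbitrary placeholders. Such statistics could then feed the adjustments described above, for example increasing the weight of a balancing term when one expert's load approaches 1.0.

```python
from typing import Dict, List, Sequence


def routing_report(gate_probs: Sequence[Sequence[float]], k: int = 1,
                   coverage_threshold: float = 0.5) -> Dict[str, object]:
    """Summarize routing health for one batch.

    load: fraction of tokens whose top-k set includes each expert.
    low_coverage: tokens whose top-k gate mass is below the threshold.
    """
    n_tokens, n_experts = len(gate_probs), len(gate_probs[0])
    hits = [0] * n_experts
    low_coverage: List[int] = []
    for t, probs in enumerate(gate_probs):
        top = sorted(range(n_experts), key=lambda e: probs[e], reverse=True)[:k]
        for e in top:
            hits[e] += 1
        if sum(probs[e] for e in top) < coverage_threshold:
            low_coverage.append(t)
    load = [h / n_tokens for h in hits]
    return {"load": load, "max_load": max(load), "low_coverage": low_coverage}


r = routing_report([[0.7, 0.2, 0.1], [0.4, 0.35, 0.25], [0.6, 0.3, 0.1]],
                   k=1, coverage_threshold=0.5)
print(r)
```

Here all three tokens go to expert 0 (a collapse worth flagging), and token 1 is only weakly covered because its top expert receives less than half of its gate mass; raising k would increase coverage for such tokens.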

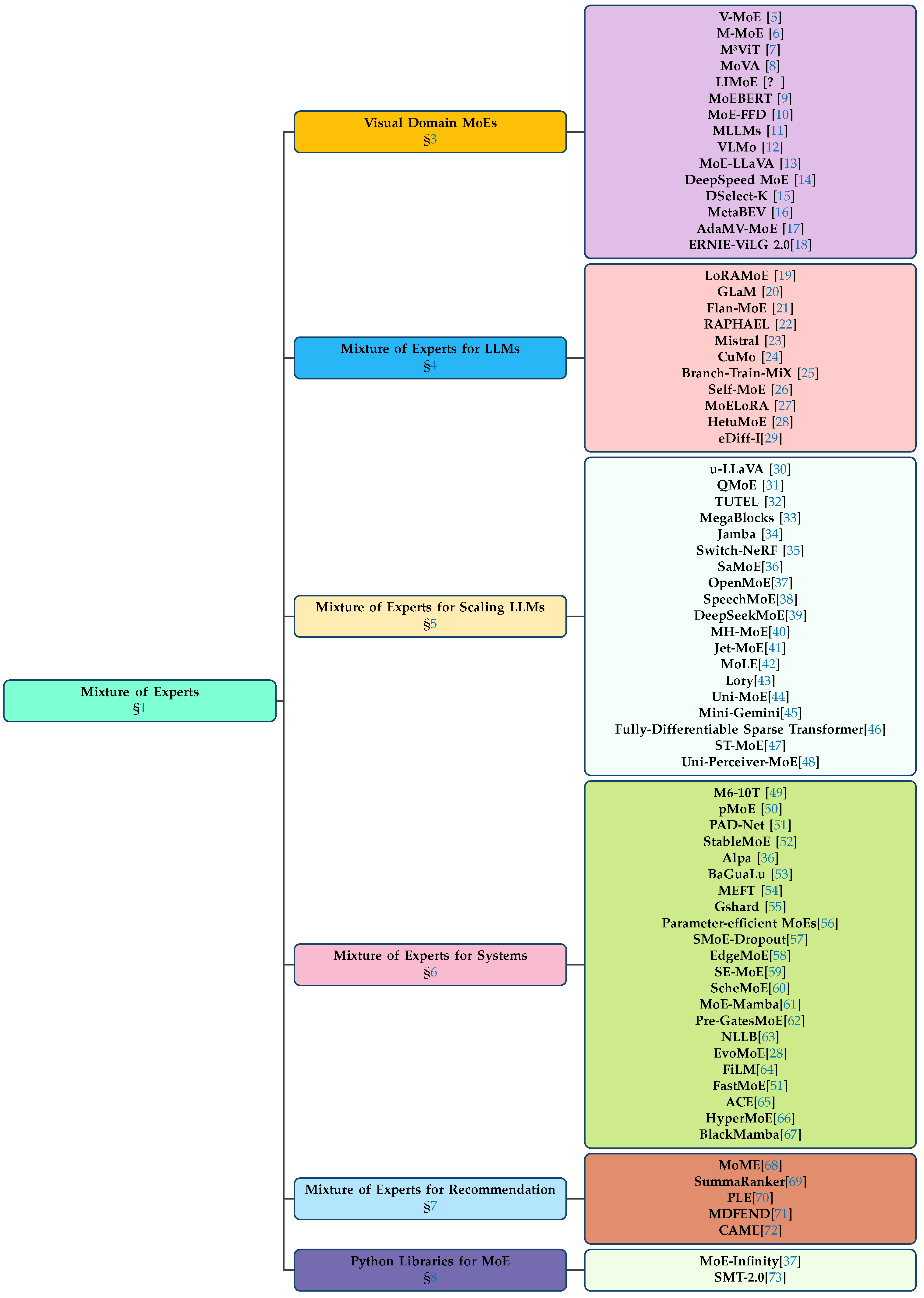

3. Visual Domain-Specific Implementations of Mixture of Experts

4. Harnessing Expert Networks for Advanced Language Understanding

5. LLM Expansion via Specialized Expert Networks

6. MoE: Enhancing System Performance and Efficiency

7. Integrating Mixture of Experts into Recommendation Algorithms

8. Python Libraries for MoE

9. Conclusions

References

- Jacobs, R.A.; Jordan, M.I.; Nowlan, S.; Hinton, G.E. Adaptive Mixtures of Local Experts. Neural Computation 1991, 3, 79–87.

- Aung, M.N.; Phyo, Y.; Do, C.M.; Ogata, K. A Divide and Conquer Approach to Eventual Model Checking. Mathematics 2021, 9.

- Shazeer, N.; Mirhoseini, A.; Maziarz, K.; Davis, A.; Le, Q.; Hinton, G.; Dean, J. Outrageously Large Neural Networks: The Sparsely-Gated Mixture-of-Experts Layer, 2017, [arXiv:cs.LG/1701.06538].

- Eigen, D.; Ranzato, M.; Sutskever, I. Learning Factored Representations in a Deep Mixture of Experts, 2014, [arXiv:cs.LG/1312.4314].

- Riquelme, C.; Puigcerver, J.; Mustafa, B.; Neumann, M.; Jenatton, R.; Pinto, A.S.; Keysers, D.; Houlsby, N. Scaling Vision with Sparse Mixture of Experts, 2021, [arXiv:cs.CV/2106.05974].

- Ma, J.; Zhao, Z.; Yi, X.; Chen, J.; Hong, L.; Chi, E.H. Modeling task relationships in multi-task learning with multi-gate mixture-of-experts. Proceedings of the 24th ACM SIGKDD international conference on knowledge discovery & data mining, 2018, pp. 1930–1939.

- Liang, H.; Fan, Z.; Sarkar, R.; Jiang, Z.; Chen, T.; Zou, K.; Cheng, Y.; Hao, C.; Wang, Z. M3ViT: Mixture-of-Experts Vision Transformer for Efficient Multi-task Learning with Model-Accelerator Co-design. arXiv 2022, arXiv:abs/2210.14793.

- Zong, Z.; Ma, B.; Shen, D.; Song, G.; Shao, H.; Jiang, D.; Li, H.; Liu, Y. MoVA: Adapting Mixture of Vision Experts to Multimodal Context, 2024, [arXiv:cs.CV/2404.13046].

- Zuo, S.; Zhang, Q.; Liang, C.; He, P.; Zhao, T.; Chen, W. MoEBERT: from BERT to Mixture-of-Experts via Importance-Guided Adaptation. North American Chapter of the Association for Computational Linguistics, 2022.

- Kong, C.; Luo, A.; Bao, P.; Yu, Y.; Li, H.; Zheng, Z.; Wang, S.; Kot, A.C. MoE-FFD: Mixture of Experts for Generalized and Parameter-Efficient Face Forgery Detection, 2024, [2404.08452].

- Wang, A.J.; Li, L.; Lin, Y.; Li, M.; Wang, L.; Shou, M.Z. Leveraging Visual Tokens for Extended Text Contexts in Multi-Modal Learning, 2024, [2406.02547].

- Bao, H.; Wang, W.; Dong, L.; Liu, Q.; Mohammed, O.K.; Aggarwal, K.; Som, S.; Wei, F. VLMo: Unified Vision-Language Pre-Training with Mixture-of-Modality-Experts, 2022, [arXiv:cs.CV/2111.02358].

- Lin, B.; Tang, Z.; Ye, Y.; Cui, J.; Zhu, B.; Jin, P.; Huang, J.; Zhang, J.; Ning, M.; Yuan, L. MoE-LLaVA: Mixture of Experts for Large Vision-Language Models, 2024, [arXiv:cs.CV/2401.15947].

- Rajbhandari, S.; Li, C.; Yao, Z.; Zhang, M.; Aminabadi, R.Y.; Awan, A.A.; Rasley, J.; He, Y. DeepSpeed-MoE: Advancing Mixture-of-Experts Inference and Training to Power Next-Generation AI Scale. Proceedings of the 39th International Conference on Machine Learning, 2022, pp. 18332–18346.

- Hazimeh, H.; Zhao, Z.; Chowdhery, A.; Sathiamoorthy, M.; Chen, Y.; Mazumder, R.; Hong, L.; Chi, E. DSelect-k: Differentiable Selection in the Mixture of Experts with Applications to Multi-Task Learning. Advances in Neural Information Processing Systems; Ranzato, M.; Beygelzimer, A.; Dauphin, Y.; Liang, P.; Vaughan, J.W., Eds. Curran Associates, Inc., 2021, Vol. 34, pp. 29335–29347.

- Ge, C.; Chen, J.; Xie, E.; Wang, Z.; Hong, L.; Lu, H.; Li, Z.; Luo, P. MetaBEV: Solving Sensor Failures for 3D Detection and Map Segmentation. Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), 2023, pp. 8721–8731.

- Chen, T.; Chen, X.; Du, X.; Rashwan, A.; Yang, F.; Chen, H.; Wang, Z.; Li, Y. AdaMV-MoE: Adaptive Multi-Task Vision Mixture-of-Experts. 2023 IEEE/CVF International Conference on Computer Vision (ICCV) 2023, pp. 17300–17311.

- Feng, Z.; Zhang, Z.; Yu, X.; Fang, Y.; Li, L.; Chen, X.; Lu, Y.; Liu, J.; Yin, W.; Feng, S.; Sun, Y.; Chen, L.; Tian, H.; Wu, H.; Wang, H. ERNIE-ViLG 2.0: Improving Text-to-Image Diffusion Model With Knowledge-Enhanced Mixture-of-Denoising-Experts. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2023, pp. 10135–10145.

- Dou, S.; Zhou, E.; Liu, Y.; Gao, S.; Zhao, J.; Shen, W.; Zhou, Y.; Xi, Z.; Wang, X.; Fan, X.; Pu, S.; Zhu, J.; Zheng, R.; Gui, T.; Zhang, Q.; Huang, X. LoRAMoE: Alleviate World Knowledge Forgetting in Large Language Models via MoE-Style Plugin, 2024, [arXiv:cs.CL/2312.09979].

- Du, N.; Huang, Y.; Dai, A.M.; Tong, S.; Lepikhin, D.; Xu, Y.; Krikun, M.; Zhou, Y.; Yu, A.W.; Firat, O.; Zoph, B.; Fedus, L.; Bosma, M.; Zhou, Z.; Wang, T.; Wang, Y.E.; Webster, K.; Pellat, M.; Robinson, K.; Meier-Hellstern, K.; Duke, T.; Dixon, L.; Zhang, K.; Le, Q.V.; Wu, Y.; Chen, Z.; Cui, C. GLaM: Efficient Scaling of Language Models with Mixture-of-Experts, 2022, [arXiv:cs.CL/2112.06905].

- Shen, S.; Hou, L.; Zhou, Y.; Du, N.; Longpre, S.; Wei, J.; Chung, H.W.; Zoph, B.; Fedus, W.; Chen, X.; Vu, T.; Wu, Y.; Chen, W.; Webson, A.; Li, Y.; Zhao, V.; Yu, H.; Keutzer, K.; Darrell, T.; Zhou, D. Mixture-of-Experts Meets Instruction Tuning: A Winning Combination for Large Language Models, 2023, [arXiv:cs.CL/2305.14705].

- Xue, Z.; Song, G.; Guo, Q.; Liu, B.; Zong, Z.; Liu, Y.; Luo, P. RAPHAEL: Text-to-Image Generation via Large Mixture of Diffusion Paths, 2024, [arXiv:cs.CV/2305.18295].

- Jiang, A.Q.; Sablayrolles, A.; Roux, A.; Mensch, A.; Savary, B.; Bamford, C.; Chaplot, D.S.; de las Casas, D.; Hanna, E.B.; Bressand, F.; Lengyel, G.; Bour, G.; Lample, G.; Lavaud, L.R.; Saulnier, L.; Lachaux, M.A.; Stock, P.; Subramanian, S.; Yang, S.; Antoniak, S.; Scao, T.L.; Gervet, T.; Lavril, T.; Wang, T.; Lacroix, T.; Sayed, W.E. Mixtral of Experts, 2024, [arXiv:cs.LG/2401.04088].

- Li, J.; Wang, X.; Zhu, S.; Kuo, C.W.; Xu, L.; Chen, F.; Jain, J.; Shi, H.; Wen, L. CuMo: Scaling Multimodal LLM with Co-Upcycled Mixture-of-Experts, 2024, [arXiv:cs.CV/2405.05949].

- Sukhbaatar, S.; Golovneva, O.; Sharma, V.; Xu, H.; Lin, X.V.; Rozière, B.; Kahn, J.; Li, D.; tau Yih, W.; Weston, J.; Li, X. Branch-Train-MiX: Mixing Expert LLMs into a Mixture-of-Experts LLM, 2024, [2403.07816].

- Kang, J.; Karlinsky, L.; Luo, H.; Wang, Z.; Hansen, J.; Glass, J.; Cox, D.; Panda, R.; Feris, R.; Ritter, A. Self-MoE: Towards Compositional Large Language Models with Self-Specialized Experts, 2024, [2406.12034].

- Liu, Q.; Wu, X.; Zhao, X.; Zhu, Y.; Xu, D.; Tian, F.; Zheng, Y. When MOE Meets LLMs: Parameter Efficient Fine-tuning for Multi-task Medical Applications, 2024, [arXiv:cs.CL/2310.18339].

- Nie, X.; Zhao, P.; Miao, X.; Zhao, T.; Cui, B. HetuMoE: An Efficient Trillion-scale Mixture-of-Expert Distributed Training System. 2022; arXiv:cs.DC/2203.14685.

- Balaji, Y.; Nah, S.; Huang, X.; Vahdat, A.; Song, J.; Zhang, Q.; Kreis, K.; Aittala, M.; Aila, T.; Laine, S.; Catanzaro, B.; Karras, T.; Liu, M.Y. eDiff-I: Text-to-Image Diffusion Models with an Ensemble of Expert Denoisers. 2023; arXiv:cs.CV/2211.01324.

- Xu, J.; Xu, L.; Yang, Y.; Li, X.; Wang, F.; Xie, Y.; Huang, Y.J.; Li, Y. u-LLaVA: Unifying Multi-Modal Tasks via Large Language Model. 2024; arXiv:cs.CV/2311.05348.

- Frantar, E.; Alistarh, D. QMoE: Practical Sub-1-Bit Compression of Trillion-Parameter Models. 2023; arXiv:cs.LG/2310.16795.

- Hwang, C.; Cui, W.; Xiong, Y.; Yang, Z.; Liu, Z.; Hu, H.; Wang, Z.; Salas, R.; Jose, J.; Ram, P.; Chau, J.; Cheng, P.; Yang, F.; Yang, M.; Xiong, Y. Tutel: Adaptive Mixture-of-Experts at Scale. arXiv, 2022; arXiv:abs/2206.03382.

- Gale, T.; Narayanan, D.; Young, C.; Zaharia, M. MegaBlocks: Efficient Sparse Training with Mixture-of-Experts. 2022; arXiv:cs.LG/2211.15841.

- Lieber, O.; Lenz, B.; Bata, H.; Cohen, G.; Osin, J.; Dalmedigos, I.; Safahi, E.; Meirom, S.; Belinkov, Y.; Shalev-Shwartz, S.; Abend, O.; Alon, R.; Asida, T.; Bergman, A.; Glozman, R.; Gokhman, M.; Manevich, A.; Ratner, N.; Rozen, N.; Shwartz, E.; Zusman, M.; Shoham, Y. Jamba: A Hybrid Transformer-Mamba Language Model. 2024; arXiv:cs.CL/2403.19887.

- Mi, Z.; Xu, D. Switch-NeRF: Learning Scene Decomposition with Mixture of Experts for Large-scale Neural Radiance Fields. International Conference on Learning Representations, 2023.

- Zheng, L.; Li, Z.; Zhang, H.; Zhuang, Y.; Chen, Z.; Huang, Y.; Wang, Y.; Xu, Y.; Zhuo, D.; Xing, E.P.; Gonzalez, J.E.; Stoica, I. Alpa: Automating Inter- and Intra-Operator Parallelism for Distributed Deep Learning. 2022; arXiv:cs.LG/2201.12023.

- Xue, F.; Zheng, Z.; Fu, Y.; Ni, J.; Zheng, Z.; Zhou, W.; You, Y. OpenMoE: An Early Effort on Open Mixture-of-Experts Language Models. 2024; arXiv:cs.CL/2402.01739.

- You, Z.; Feng, S.; Su, D.; Yu, D. SpeechMoE: Scaling to Large Acoustic Models with Dynamic Routing Mixture of Experts. 2021; arXiv:cs.SD/2105.03036.

- Dai, D.; Deng, C.; Zhao, C.; Xu, R.X.; Gao, H.; Chen, D.; Li, J.; Zeng, W.; Yu, X.; Wu, Y.; Xie, Z.; Li, Y.K.; Huang, P.; Luo, F.; Ruan, C.; Sui, Z.; Liang, W. DeepSeekMoE: Towards Ultimate Expert Specialization in Mixture-of-Experts Language Models. 2024; arXiv:cs.CL/2401.06066.

- Wu, X.; Huang, S.; Wang, W.; Wei, F. Multi-Head Mixture-of-Experts. 2024; arXiv:cs.CL/2404.15045.

- Shen, Y.; Guo, Z.; Cai, T.; Qin, Z. JetMoE: Reaching Llama2 Performance with 0.1M Dollars. 2024; arXiv:cs.CL/2404.07413.

- Wu, X.; Huang, S.; Wei, F. Mixture of LoRA Experts. 2024; arXiv:cs.CL/2404.13628.

- Zhong, Z.; Xia, M.; Chen, D.; Lewis, M. Lory: Fully Differentiable Mixture-of-Experts for Autoregressive Language Model Pre-training. 2024; arXiv:cs.CL/2405.03133.

- Li, Y.; Jiang, S.; Hu, B.; Wang, L.; Zhong, W.; Luo, W.; Ma, L.; Zhang, M. Uni-MoE: Scaling Unified Multimodal LLMs with Mixture of Experts. 2024; arXiv:cs.AI/2405.11273.

- Li, Y.; Zhang, Y.; Wang, C.; Zhong, Z.; Chen, Y.; Chu, R.; Liu, S.; Jia, J. Mini-Gemini: Mining the Potential of Multi-modality Vision Language Models, 2024, [2403.18814].

- Puigcerver, J.; Riquelme, C.; Mustafa, B.; Houlsby, N. From Sparse to Soft Mixtures of Experts. 2023; arXiv:cs.LG/2308.00951.

- Zoph, B.; Bello, I.; Kumar, S.; Du, N.; Huang, Y.; Dean, J.; Shazeer, N.; Fedus, W. ST-MoE: Designing Stable and Transferable Sparse Expert Models. 2022; arXiv:cs.CL/2202.08906.

- Zhu, J.; Zhu, X.; Wang, W.; Wang, X.; Li, H.; Wang, X.; Dai, J. Uni-Perceiver-MoE: Learning Sparse Generalist Models with Conditional MoEs. Advances in Neural Information Processing Systems; Koyejo, S.; Mohamed, S.; Agarwal, A.; Belgrave, D.; Cho, K.; Oh, A., Eds. Curran Associates, Inc., 2022, Vol. 35, pp. 2664–2678.

- Lin, J.; Yang, A.; Bai, J.; Zhou, C.; Jiang, L.; Jia, X.; Wang, A.; Zhang, J.; Li, Y.; Lin, W.; Zhou, J.; Yang, H. M6-10T: A Sharing-Delinking Paradigm for Efficient Multi-Trillion Parameter Pretraining. 2021; arXiv:cs.LG/2110.03888.

- Chowdhury, M.N.R.; Zhang, S.; Wang, M.; Liu, S.; Chen, P.Y. Patch-level Routing in Mixture-of-Experts is Provably Sample-efficient for Convolutional Neural Networks. 2023; arXiv:cs.LG/2306.04073.

- He, S.; Ding, L.; Dong, D.; Liu, B.; Yu, F.; Tao, D. PAD-Net: An Efficient Framework for Dynamic Networks. 2023; arXiv:cs.LG/2211.05528.

- Dai, D.; Dong, L.; Ma, S.; Zheng, B.; Sui, Z.; Chang, B.; Wei, F. StableMoE: Stable Routing Strategy for Mixture of Experts. 2022; arXiv:cs.LG/2204.08396.

- Ma, Z.; He. BaGuaLu: targeting brain scale pretrained models with over 37 million cores; PPoPP ’22; Association for Computing Machinery: New York, NY, USA, 2022; pp. 192–204.

- Hao, J.; Sun, W.; Xin, X.; Meng, Q.; Chen, Z.; Ren, P.; Ren, Z. MEFT: Memory-Efficient Fine-Tuning through Sparse Adapter, 2024, [2406.04984].

- Lepikhin, D.; Lee, H.; Xu, Y.; Chen, D.; Firat, O.; Huang, Y.; Krikun, M.; Shazeer, N.; Chen, Z. GShard: Scaling Giant Models with Conditional Computation and Automatic Sharding. International Conference on Learning Representations, 2021.

- Zadouri, T.; Üstün, A.; Ahmadian, A.; Ermiş, B.; Locatelli, A.; Hooker, S. Pushing Mixture of Experts to the Limit: Extremely Parameter Efficient MoE for Instruction Tuning. 2023; arXiv:cs.CL/2309.05444.

- Chen, T.; Zhang, Z.A.; Jaiswal, A.; Liu, S.; Wang, Z. Sparse MoE as the New Dropout: Scaling Dense and Self-Slimmable Transformers. arXiv 2023, arXiv:abs/2303.01610.

- Yi, R.; Guo, L.; Wei, S.; Zhou, A.; Wang, S.; Xu, M. EdgeMoE: Fast On-Device Inference of MoE-based Large Language Models. ArXiv 2023, abs/2308.14352.

- Shen, L.; Wu, Z.; Gong, W.; Hao, H.; Bai, Y.; Wu, H.; Wu, X.; Xiong, H.; Yu, D.; Ma, Y. SE-MoE: A Scalable and Efficient Mixture-of-Experts Distributed Training and Inference System. ArXiv 2022, abs/2205.10034.

- Shi, S.; Pan, X.; Wang, Q.; Liu, C.; Ren, X.; Hu, Z.; Yang, Y.; Li, B.; Chu, X. ScheMoE: An Extensible Mixture-of-Experts Distributed Training System with Tasks Scheduling. Proceedings of the Nineteenth European Conference on Computer Systems 2024.

- Pióro, M.; Ciebiera, K.; Król, K.; Ludziejewski, J.; Jaszczur, S. MoE-Mamba: Efficient Selective State Space Models with Mixture of Experts. ArXiv 2024, abs/2401.04081.

- Hwang, R.; Wei, J.; Cao, S.; Hwang, C.; Tang, X.; Cao, T.; Yang, M.; Rhu, M. Pre-gated MoE: An Algorithm-System Co-Design for Fast and Scalable Mixture-of-Expert Inference. ArXiv 2023, abs/2308.12066.

- NLLB Team; Costa-jussà, M.R.; Cross, J.; Çelebi, O.; Elbayad, M. No Language Left Behind: Scaling Human-Centered Machine Translation. 2022; arXiv:cs.CL/2207.04672.

- Zhou, T.; Ma, Z.; Wang, X.; Wen, Q.; Sun, L.; Yao, T.; Yin, W.; Jin, R. FiLM: Frequency improved Legendre Memory Model for Long-term Time Series Forecasting. Advances in Neural Information Processing Systems; Koyejo, S.; Mohamed, S.; Agarwal, A.; Belgrave, D.; Cho, K.; Oh, A., Eds. Curran Associates, Inc., 2022, Vol. 35, pp. 12677–12690.

- Cai, J.; Wang, Y.; Hwang, J.N. ACE: Ally Complementary Experts for Solving Long-Tailed Recognition in One-Shot. Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), 2021, pp. 112–121.

- Zhao, H.; Qiu, Z.; Wu, H.; Wang, Z.; He, Z.; Fu, J. HyperMoE: Towards Better Mixture of Experts via Transferring Among Experts. 2024; arXiv:cs.LG/2402.12656.

- Anthony, Q.; Tokpanov, Y.; Glorioso, P.; Millidge, B. BlackMamba: Mixture of Experts for State-Space Models. 2024; arXiv:cs.CL/2402.01771.

- Xu, J.; Sun, L.; Zhao, D. MoME: Mixture-of-Masked-Experts for Efficient Multi-Task Recommendation. Proceedings of the 47th International ACM SIGIR Conference on Research and Development in Information Retrieval; SIGIR ’24; Association for Computing Machinery: New York, NY, USA, 2024; pp. 2527–2531.

- Ravaut, M.; Joty, S.; Chen, N.F. SummaReranker: A Multi-Task Mixture-of-Experts Re-ranking Framework for Abstractive Summarization. 2023; arXiv:cs.CL/2203.06569.

- Tang, H.; Liu, J.; Zhao, M.; Gong, X. Progressive Layered Extraction (PLE): A Novel Multi-Task Learning (MTL) Model for Personalized Recommendations. Proceedings of the 14th ACM Conference on Recommender Systems; RecSys ’20; Association for Computing Machinery: New York, NY, USA, 2020; pp. 269–278.

- Nan, Q.; Cao, J.; Zhu, Y.; Wang, Y.; Li, J. MDFEND: Multi-domain Fake News Detection. In Proceedings of the 30th ACM International Conference on Information & Knowledge Management; CIKM ’21; Association for Computing Machinery: New York, NY, USA, 2021; pp. 3343–3347.

- Guo, J.; Cai, Y.; Bi, K.; Fan, Y.; Chen, W.; Zhang, R.; Cheng, X. CAME: Competitively Learning a Mixture-of-Experts Model for First-stage Retrieval. 2024.

- Saves, P.; Lafage, R.; Bartoli, N.; Diouane, Y.; Bussemaker, J.; Lefebvre, T.; Hwang, J.T.; Morlier, J.; Martins, J.R. SMT 2.0: A Surrogate Modeling Toolbox with a focus on hierarchical and mixed variables Gaussian processes. Advances in Engineering Software.

- Chen, Z.; Deng, Y.; Wu, Y.; Gu, Q.; Li, Y. Towards Understanding Mixture of Experts in Deep Learning. 2022; arXiv:cs.LG/2208.02813.

- Mustafa, B.; Riquelme, C.; Puigcerver, J.; Jenatton, R.; Houlsby, N. Multimodal Contrastive Learning with LIMoE: the Language-Image Mixture of Experts. ArXiv 2022, abs/2206.02770.

- Hu, E.J.; Shen, Y.; Wallis, P.; Allen-Zhu, Z.; Li, Y.; Wang, S.; Wang, L.; Chen, W. LoRA: Low-Rank Adaptation of Large Language Models. 2021; arXiv:cs.CL/2106.09685.

- Touvron, H.; Martin, L.; Stone, K.; Albert, P.; Almahairi, A.; Babaei, Y.; Bashlykov, N.; Batra, S.; Bhargava, P.; Bhosale, S.; Bikel, D.; Blecher, L.; Ferrer, C.C.; Chen, M.; Cucurull, G.; Esiobu, D.; Fernandes, J.; Fu, J.; Fu, W.; Fuller, B.; Gao, C.; Goswami, V.; Goyal, N.; Hartshorn, A. Llama 2: Open Foundation and Fine-Tuned Chat Models. 2023; arXiv:cs.CL/2307.09288.

- Gao, Z.F.; Liu, P.; Zhao, W.X.; Lu, Z.Y.; Wen, J.R. Parameter-Efficient Mixture-of-Experts Architecture for Pre-trained Language Models. 2022; arXiv:cs.CL/2203.01104.

- He, J.; Qiu, J.; Zeng, A.; Yang, Z.; Zhai, J.; Tang, J. FastMoE: A Fast Mixture-of-Expert Training System. 2021; arXiv:cs.LG/2103.13262.

- Xue, L. MoE-Infinity: Activation-Aware Expert Offloading for Efficient MoE Serving, 2024.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).