Submitted: 07 August 2024

Posted: 11 August 2024

Abstract

Keywords:

I. Introduction

II. Theoretical Overview

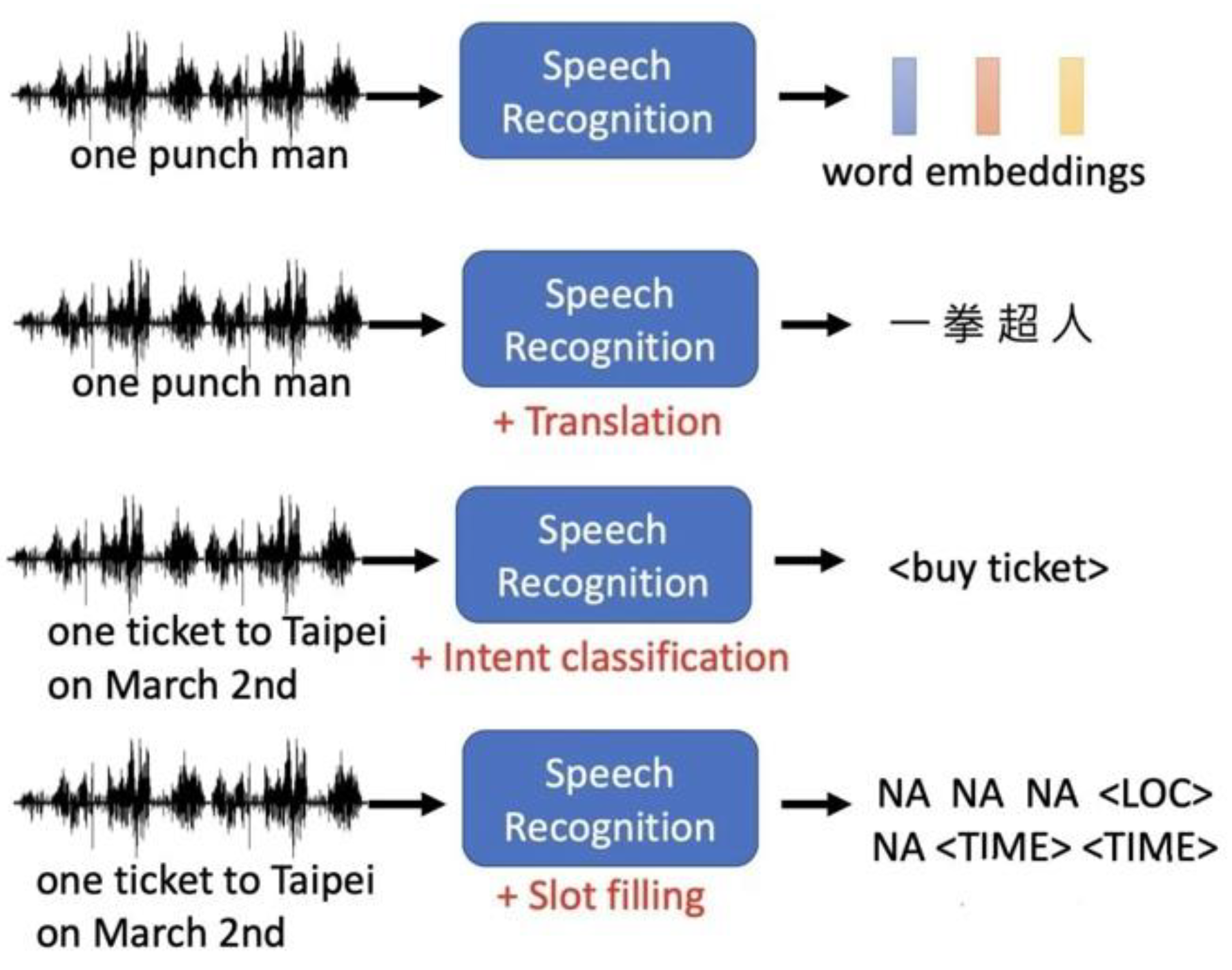

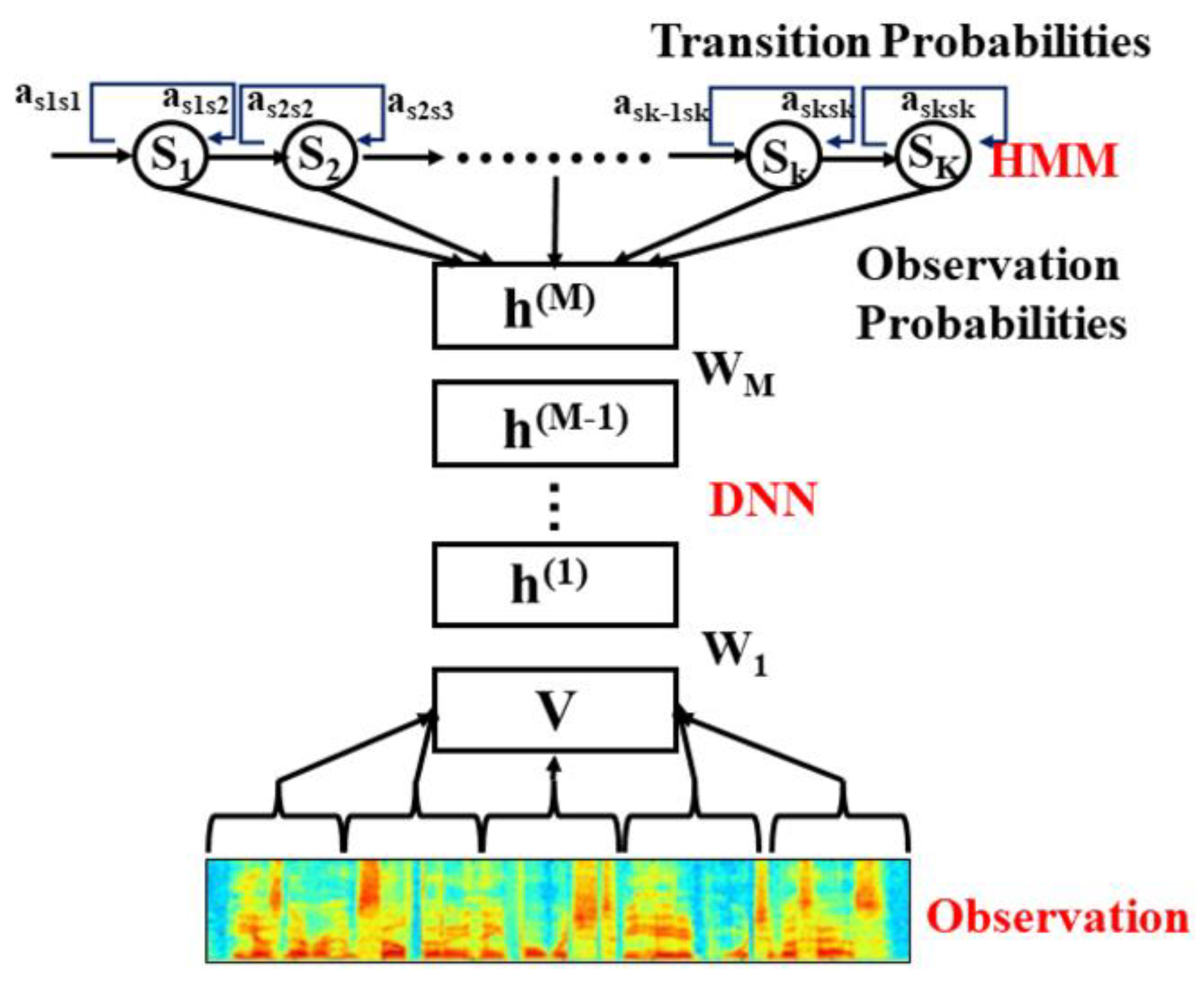

A. Basic Speech Recognition Technology

B. Deep Learning Technologies and Large Language Models in Applications

C. Application of Deep Learning Technology in Speech Recognition

D. The Role of Large Language Models in Speech Recognition

III. Integration Method of Deep Learning and Large Language Models

A. Design Principles and Architecture of Integrated Model

B. Process of Integration Implementation

IV. Result Analysis

A. Experimental Setup

- TIMIT dataset: Contains 6,300 sentences covering different dialects of American English, recorded by 630 speakers (10 sentences per speaker). Each sample comes with detailed phoneme-level annotation, which is used for training acoustic models and testing their accuracy.

- LibriSpeech dataset: A larger dataset containing about 1,000 hours of English speech, recorded by 2,428 speakers from different backgrounds. It is divided into clean and noisy recording conditions and is used to evaluate model performance under different listening conditions.

- Common Voice dataset: A multilingual dataset provided by Mozilla, containing more than 2,000 hours of recordings that cover multiple languages and accents. It is used to test the multilingual adaptability of the model.

B. Performance Evaluation and Analysis

C. Discussion

V. Conclusion

| Dataset | Model type | WER (%) | RTF |
| --- | --- | --- | --- |
| TIMIT | Baseline model | 18.5 | 0.09 |
| TIMIT | Integrated model | 15.2 | 0.07 |
| LibriSpeech | Baseline model | 10.3 | 0.12 |
| LibriSpeech | Integrated model | 8.4 | 0.10 |
| Common Voice | Baseline model | 22.0 | 0.15 |
| Common Voice | Integrated model | 17.8 | 0.11 |
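The two metrics in the table can be made concrete with a short Python sketch. It assumes the conventional definitions: word error rate (WER) as the word-level edit distance divided by the number of reference words, and real-time factor (RTF) as processing time divided by audio duration. The source does not state how the authors computed either metric, so the function names (`word_error_rate`, `real_time_factor`, `relative_reduction`, `summarize`) and the `RESULTS` layout are illustrative assumptions, not the authors' evaluation code. Only the numbers in `RESULTS` come from the table.

```python
from typing import Dict, Tuple


def word_error_rate(reference: str, hypothesis: str) -> float:
    """Conventional WER: (substitutions + deletions + insertions) / reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] is the edit distance between the processed reference prefix and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # deletion of a reference word
                curr[j - 1] + 1,     # insertion of a hypothesis word
                prev[j - 1] + cost,  # substitution (or match when cost is 0)
            )
        prev = curr
    return prev[-1] / len(ref)


def real_time_factor(processing_seconds: float, audio_seconds: float) -> float:
    """Conventional RTF: values below 1.0 mean faster than real time."""
    if audio_seconds <= 0:
        raise ValueError("audio_seconds must be positive")
    return processing_seconds / audio_seconds


def relative_reduction(baseline: float, integrated: float) -> float:
    """Fraction by which the integrated model lowers a baseline metric."""
    return (baseline - integrated) / baseline


# (WER in %, RTF) per model, copied from the results table.
RESULTS: Dict[str, Dict[str, Tuple[float, float]]] = {
    "TIMIT": {"baseline": (18.5, 0.09), "integrated": (15.2, 0.07)},
    "LibriSpeech": {"baseline": (10.3, 0.12), "integrated": (8.4, 0.10)},
    "Common Voice": {"baseline": (22.0, 0.15), "integrated": (17.8, 0.11)},
}


def summarize(results: Dict[str, Dict[str, Tuple[float, float]]]) -> Dict[str, Tuple[float, float]]:
    """Return (relative WER reduction, relative RTF reduction) per dataset."""
    summary = {}
    for name, rows in results.items():
        base_wer, base_rtf = rows["baseline"]
        int_wer, int_rtf = rows["integrated"]
        summary[name] = (
            relative_reduction(base_wer, int_wer),
            relative_reduction(base_rtf, int_rtf),
        )
    return summary


if __name__ == "__main__":
    for name, (wer_gain, rtf_gain) in summarize(RESULTS).items():
        print(f"{name}: WER reduced by {wer_gain:.1%}, RTF reduced by {rtf_gain:.1%}")
```

Under these definitions, the relative WER reduction of the integrated model over the baseline works out to roughly 17.8% on TIMIT, 18.4% on LibriSpeech, and 19.1% on Common Voice, with RTF lower on all three datasets.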

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).