Submitted: 03 July 2024

Posted: 04 July 2024


Abstract

Keywords:

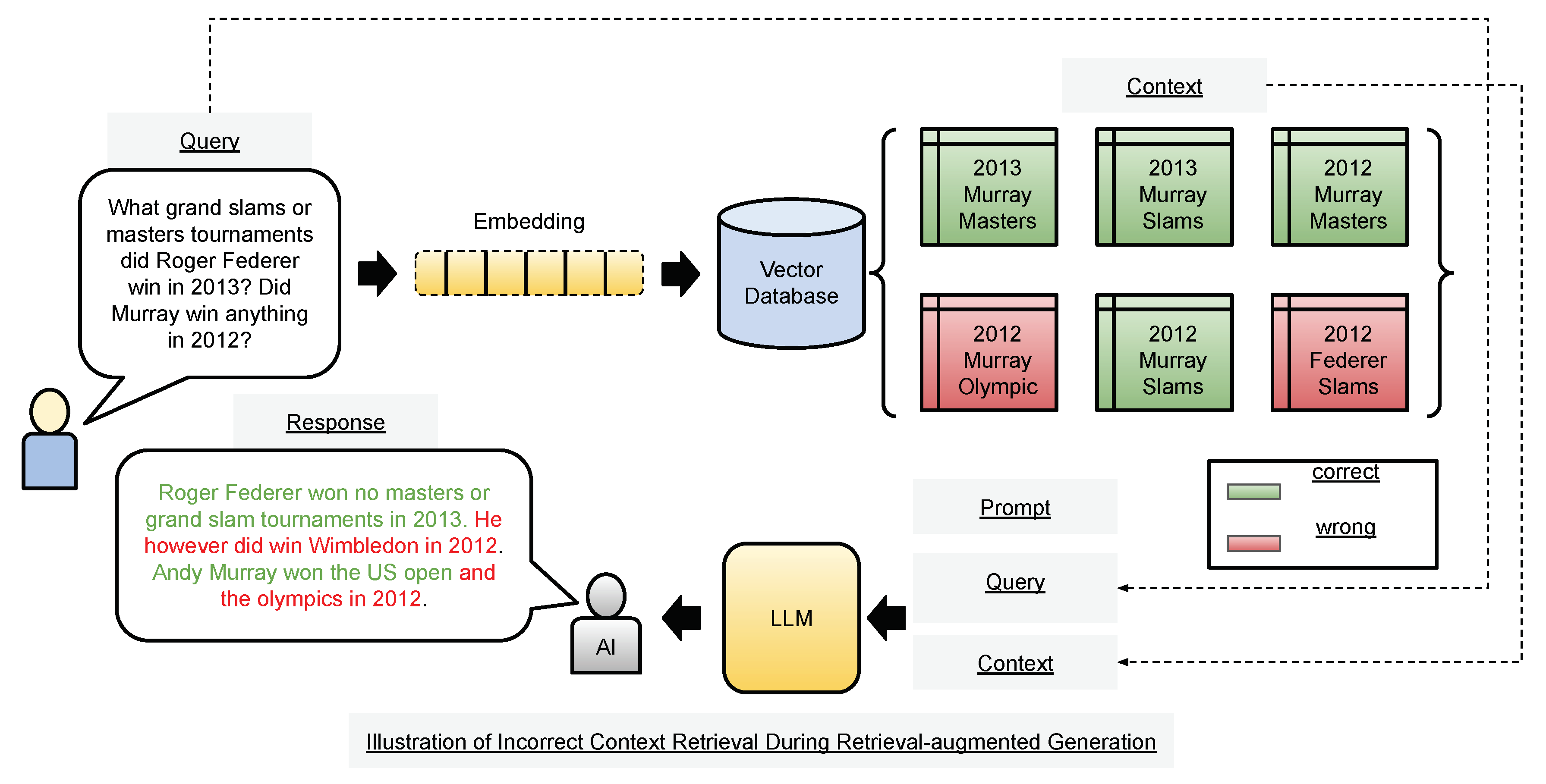

1. Introduction

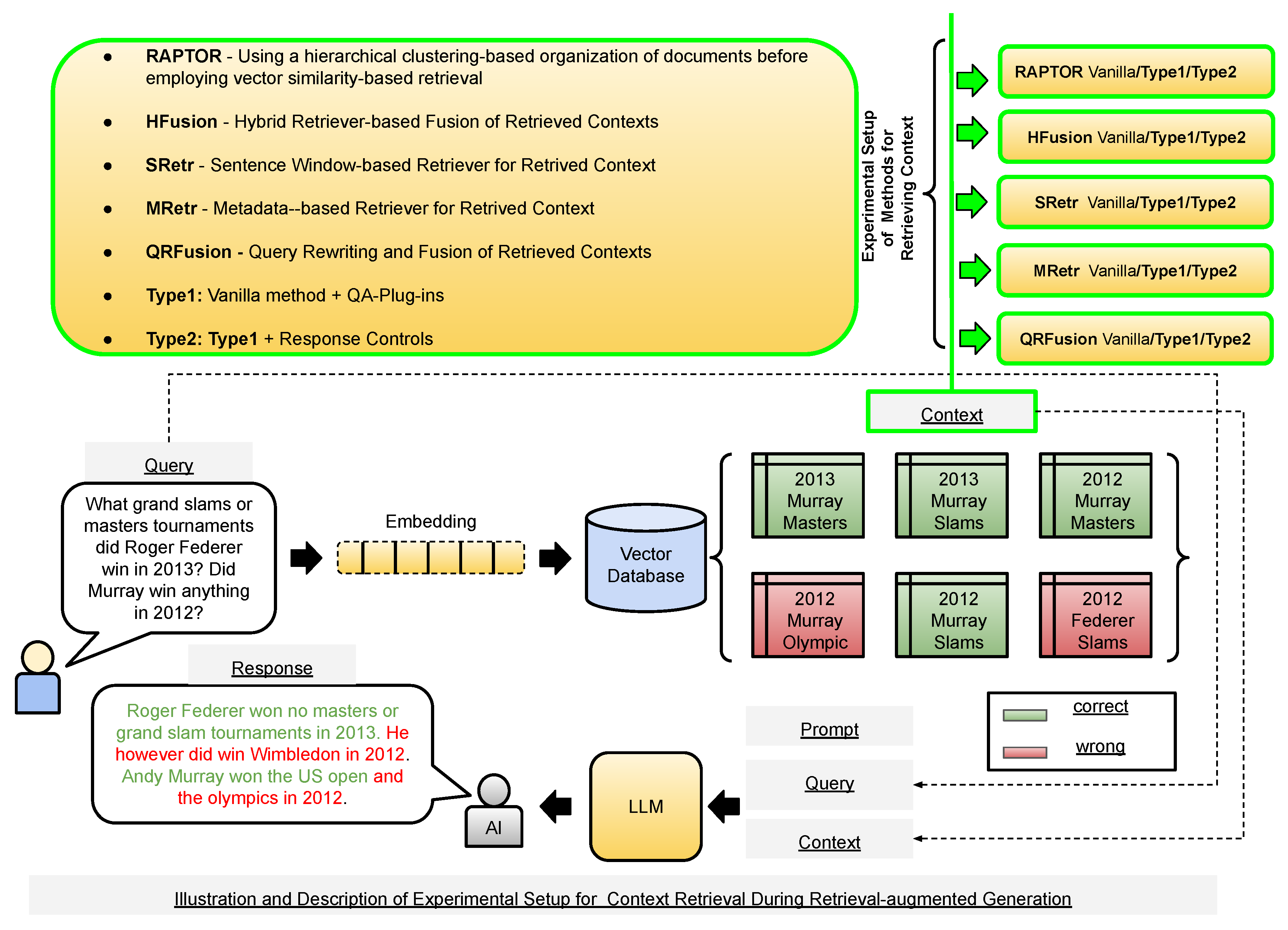

2. Current RAG Methods

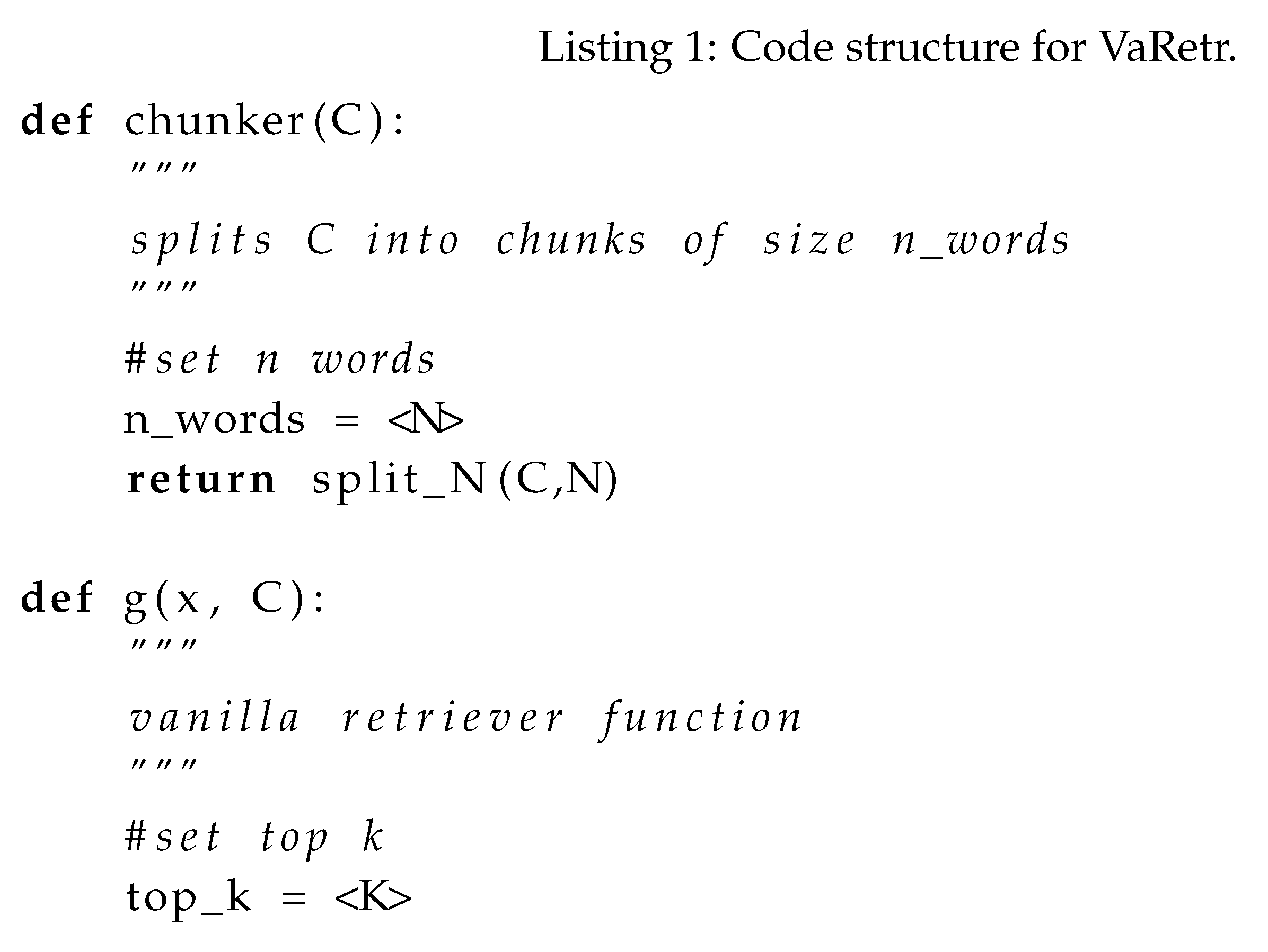

2.1. Vanilla Retrieval (VaRetr)

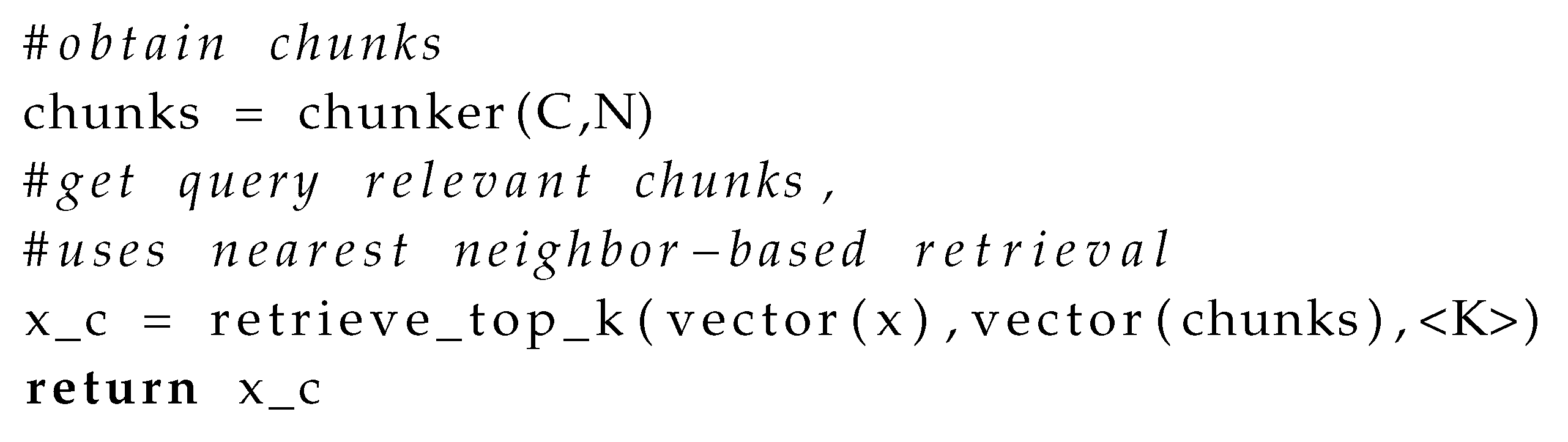

2.2. Query Rewriting and Fusion (QRFusion)

QRFusion: Benefits and Shortcomings

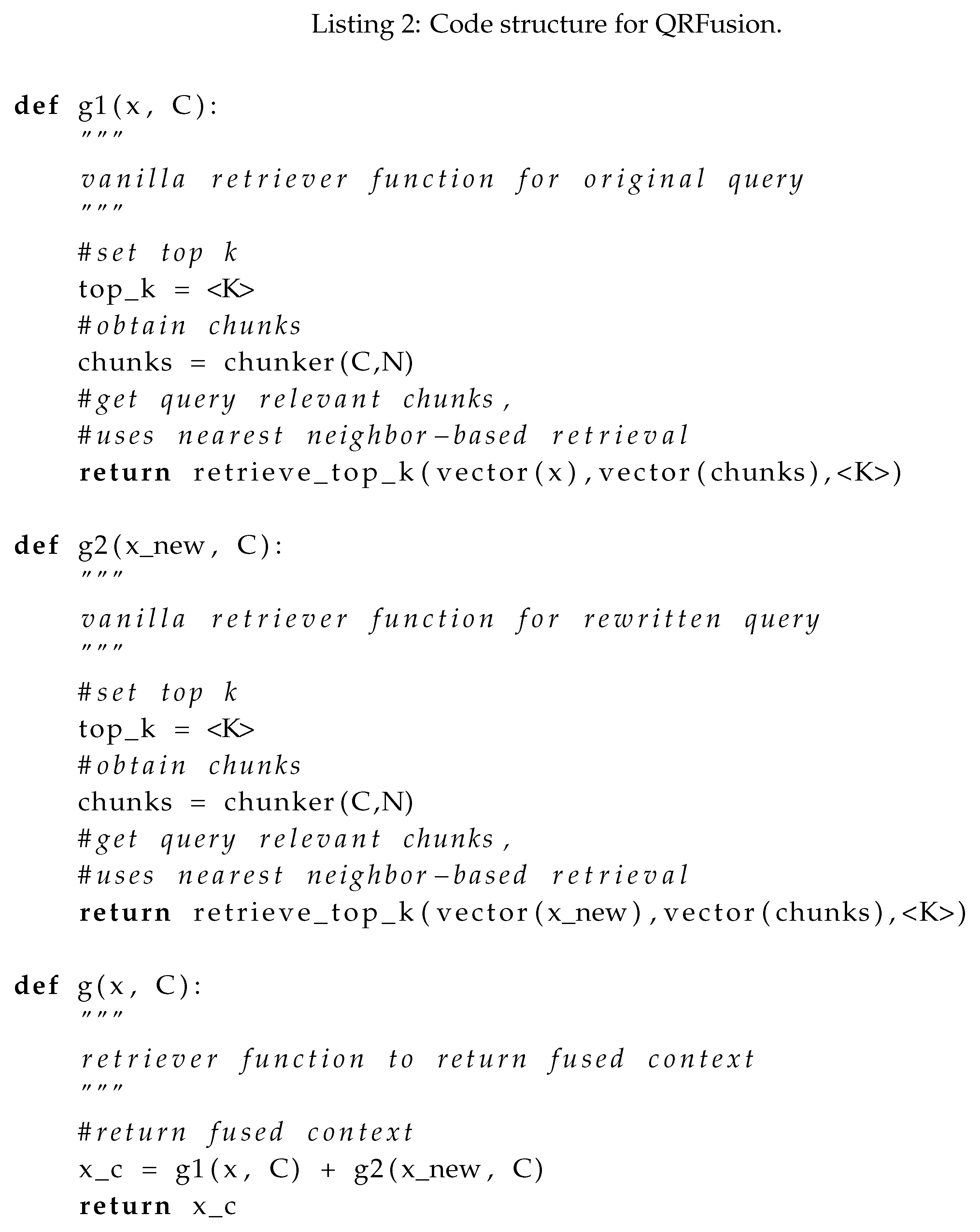

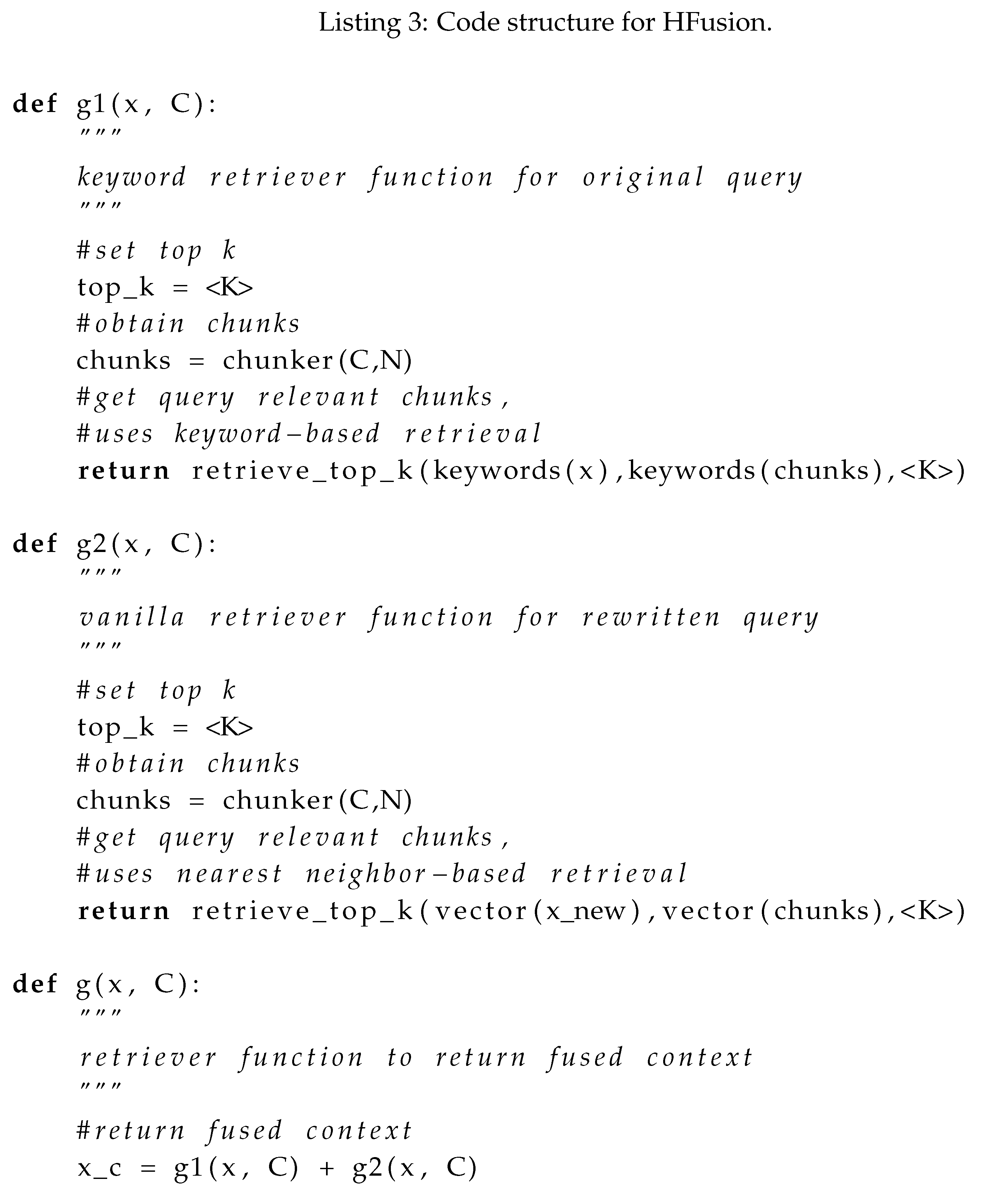

2.3. Hybrid Fusion (HFusion)

HFusion: Benefits and Shortcomings

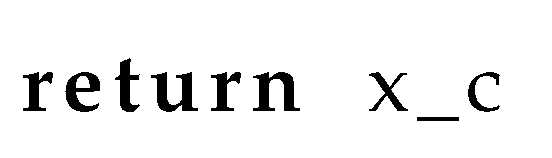

2.4. Sentence Window Retriever (SWRetr)

SWRetr: Benefits and Shortcomings

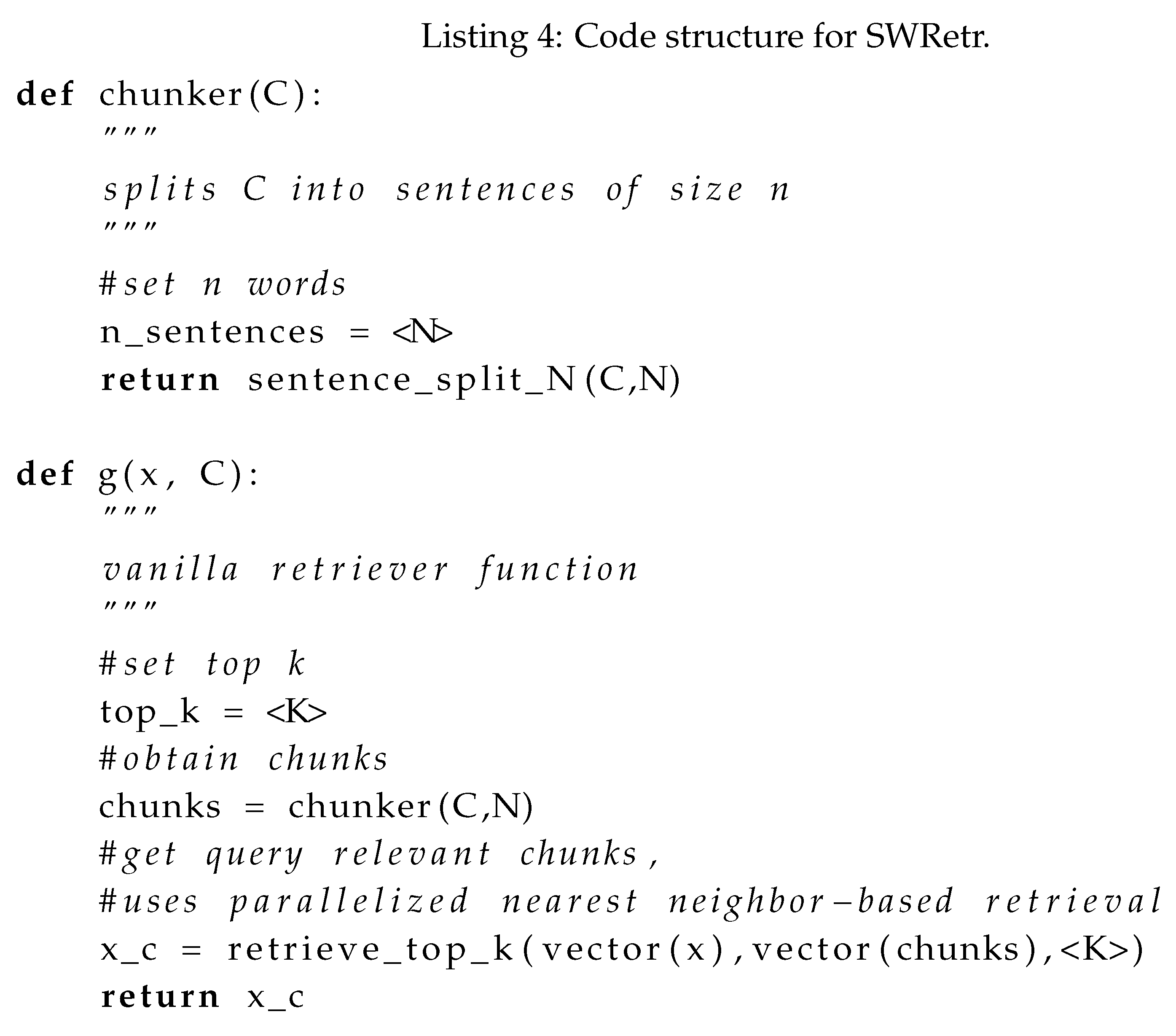

2.5. Metadata Retrieval (MRetr)

MRetr: Benefits and Shortcomings

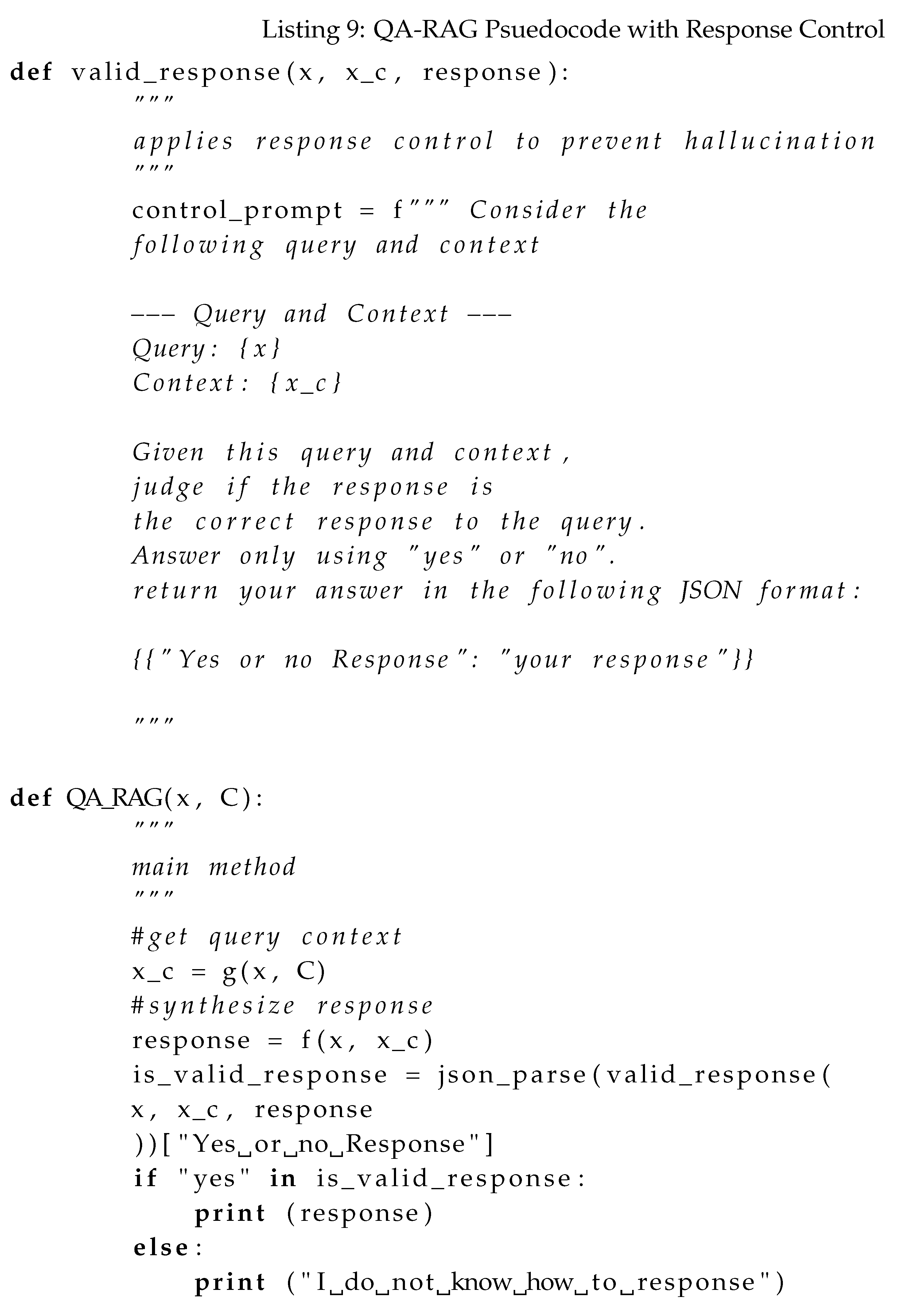

2.6. Response Controls

Motivation for QA-RAG

3. Materials and Methods

3.1. Dataset Details and Response Performance Evaluation

3.2. Proposed Method: QA-RAG

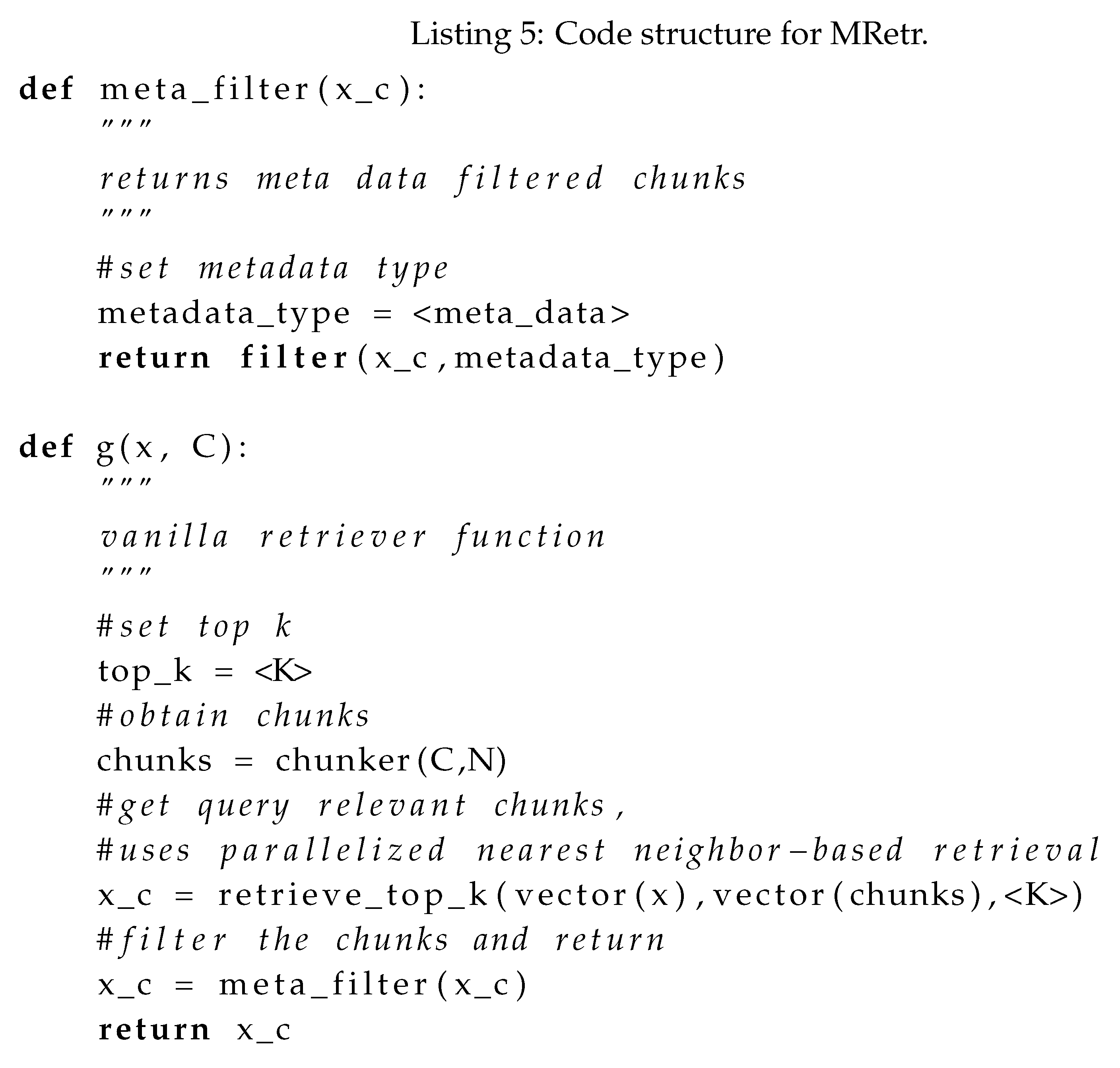

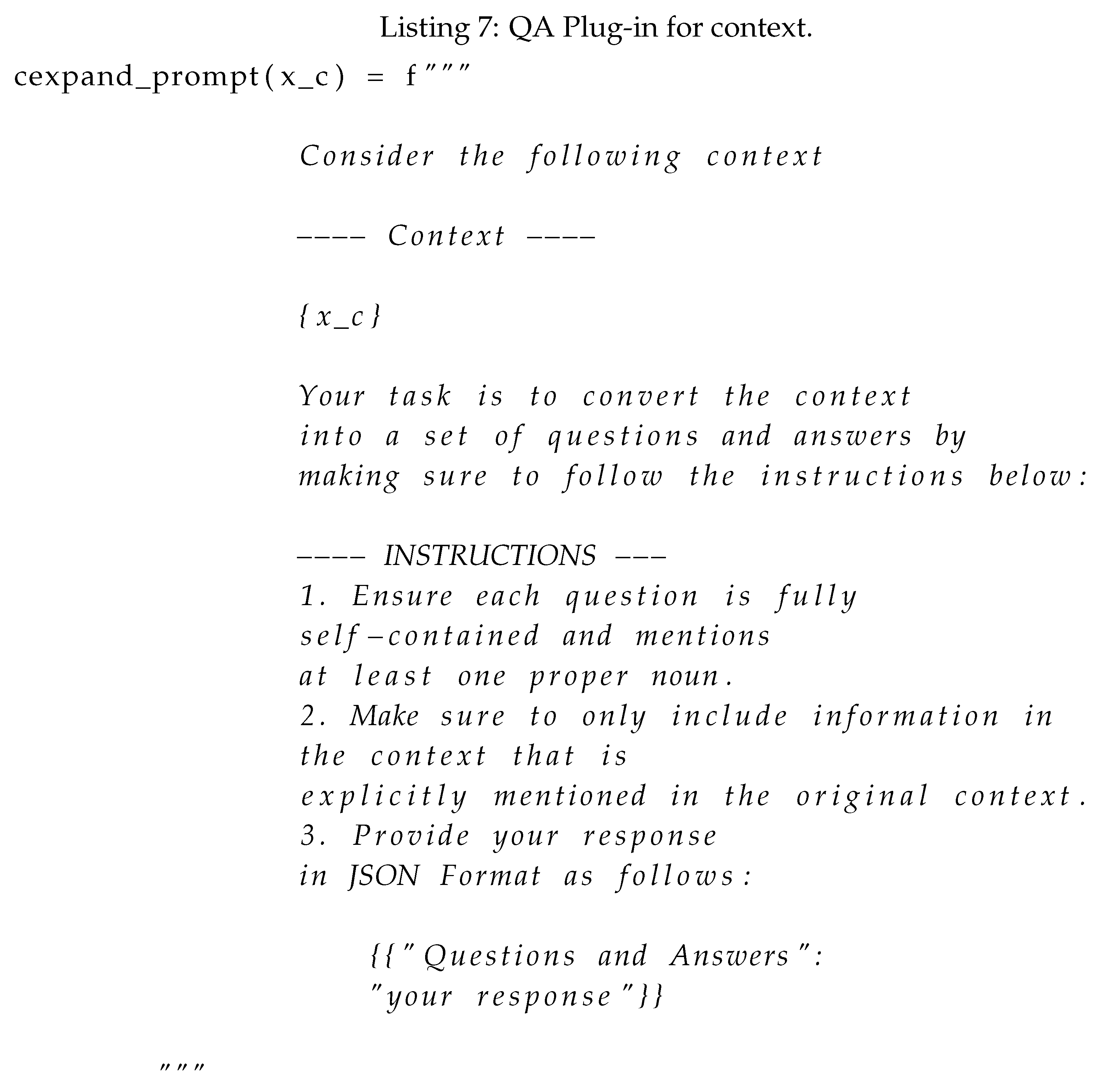

3.2.1. The QA-Plug-In

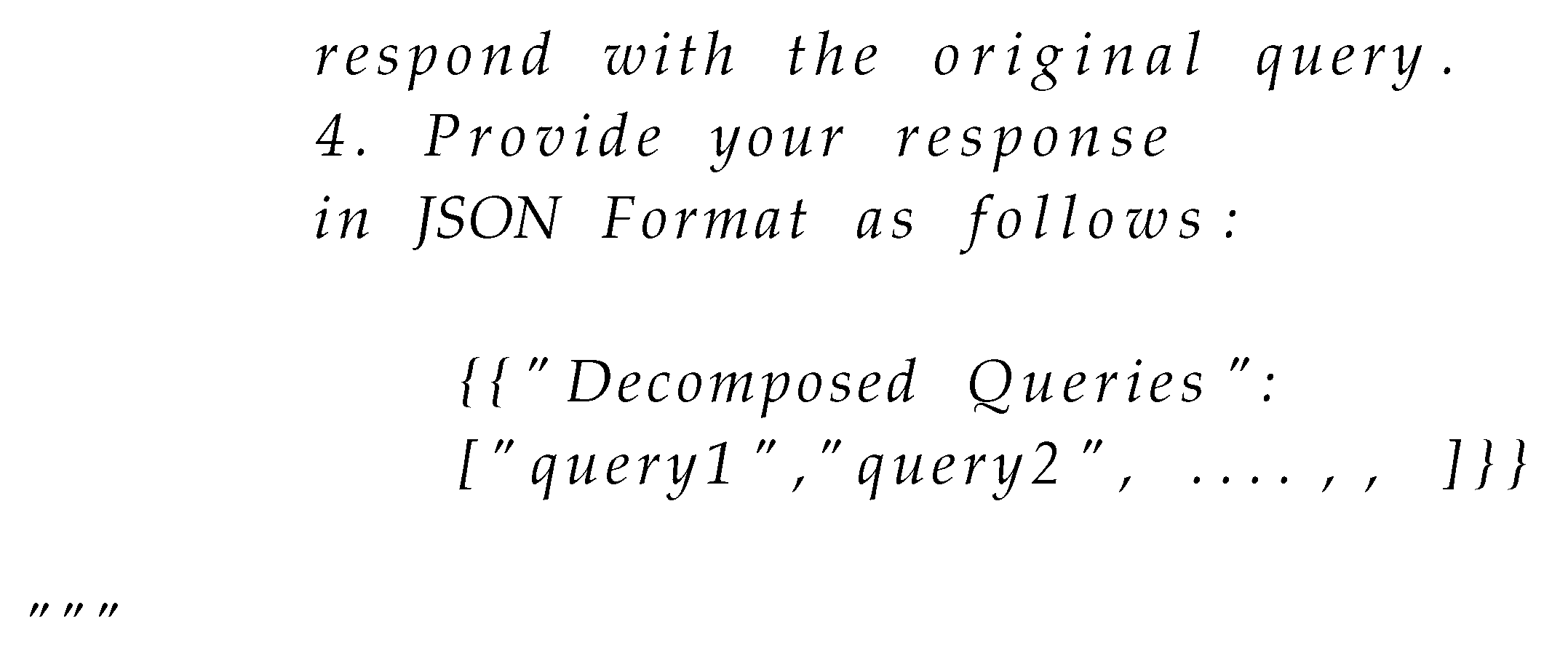

Query Plugin

Context Plugin

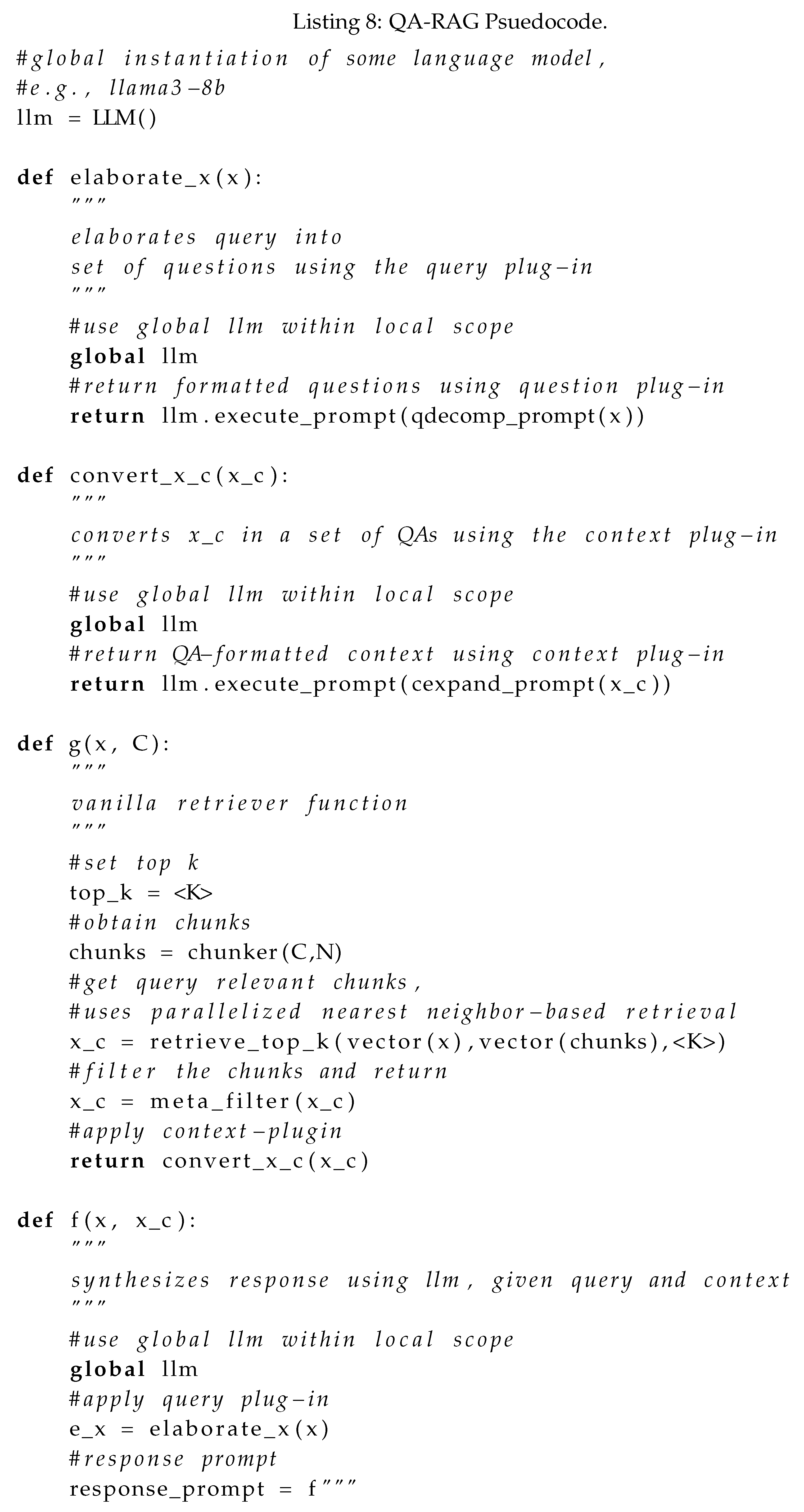

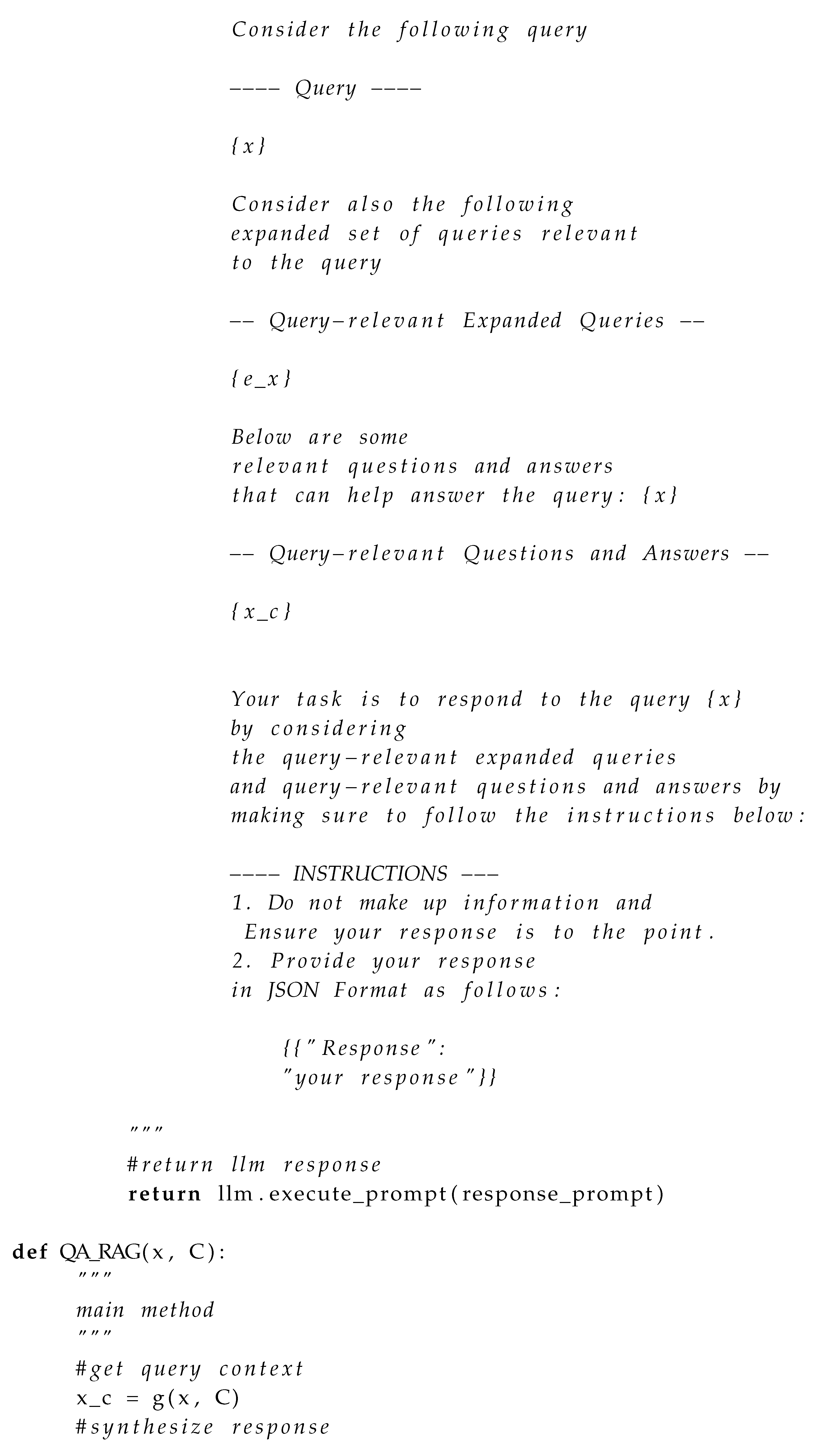

3.3. QA-RAG Pseudocode

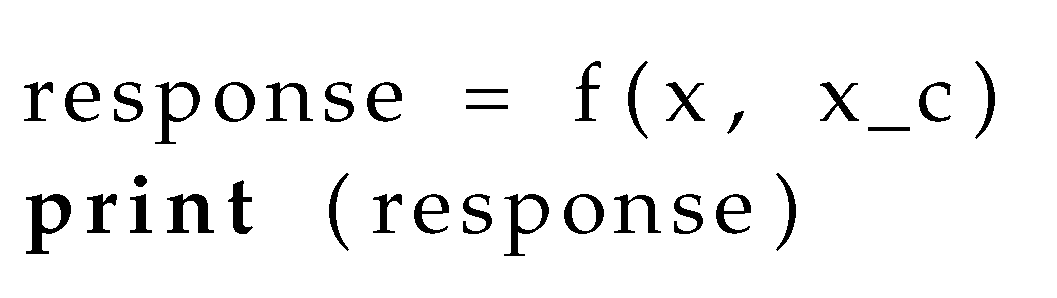

QA-RAG Pseudocode Without Response Controls

QA-RAG Pseudocode With Response Controls

Implementation Note.

3.4. Experimental Setup

3.5. Qualitative Analysis of QA-Plug-ins

4. Results

4.1. Results on NarrativeQA Dataset

4.2. Results on Random Wikipedia Dataset

4.3. Results on QASPER Dataset

5. Discussion

6. Conclusion

Author Contributions

Funding

Data Availability Statement

References

- Touvron, H.; Martin, L.; Stone, K.; Albert, P.; Almahairi, A.; Babaei, Y.; Bashlykov, N.; Batra, S.; Bhargava, P.; Bhosale, S.; others. Llama 2: Open foundation and fine-tuned chat models. arXiv preprint arXiv:2307.09288 2023.

- Gokaslan, A.; Cohen, V. OpenWebText Corpus. https://shorturl.at/9K9wq, 2019.

- Gao, L.; Biderman, S.; Black, S.; Golding, L.; Hoppe, T.; Foster, C.; Phang, J.; He, H.; Thite, A.; Nabeshima, N.; others. The Pile: An 800GB dataset of diverse text for language modeling. arXiv preprint arXiv:2101.00027 2020.

- Roberts, M.; Thakur, H.; Herlihy, C.; White, C.; Dooley, S. To the cutoff... and beyond? A longitudinal perspective on LLM data contamination. The Twelfth International Conference on Learning Representations, 2023.

- Asai, A.; Min, S.; Zhong, Z.; Chen, D. Retrieval-based language models and applications. Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 6: Tutorial Abstracts), 2023, pp. 41–46.

- Borgeaud, S.; Mensch, A.; Hoffmann, J.; Cai, T.; Rutherford, E.; Millican, K.; Van Den Driessche, G.B.; Lespiau, J.B.; Damoc, B.; Clark, A.; others. Improving language models by retrieving from trillions of tokens. International Conference on Machine Learning. PMLR, 2022, pp. 2206–2240.

- Gao, T.; Yen, H.; Yu, J.; Chen, D. Enabling Large Language Models to Generate Text with Citations. arXiv preprint arXiv:2305.14627 2023.

- Zirnstein, B. Extended context for InstructGPT with LlamaIndex, 2023.

- Topsakal, O.; Akinci, T.C. Creating large language model applications utilizing LangChain: A primer on developing LLM apps fast. International Conference on Applied Engineering and Natural Sciences, 2023, Vol. 1, pp. 1050–1056.

- Zhou, K.; Ethayarajh, K.; Card, D.; Jurafsky, D. Problems with Cosine as a Measure of Embedding Similarity for High Frequency Words. Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics (Volume 2: Short Papers), 2022, pp. 401–423.

- Gao, Y.; Xiong, Y.; Gao, X.; Jia, K.; Pan, J.; Bi, Y.; Dai, Y.; Sun, J.; Wang, H. Retrieval-augmented generation for large language models: A survey. arXiv preprint arXiv:2312.10997 2023.

- Reimers, N.; Gurevych, I. Sentence-BERT: Sentence Embeddings using Siamese BERT-Networks. Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing and the 9th International Joint Conference on Natural Language Processing (EMNLP-IJCNLP), 2019, pp. 3982–3992.

- Chan, C.M.; Xu, C.; Yuan, R.; Luo, H.; Xue, W.; Guo, Y.; Fu, J. RQ-RAG: Learning to refine queries for retrieval augmented generation. arXiv preprint arXiv:2404.00610 2024.

- Song, M.; Feng, Y.; Jing, L. Utilizing BERT intermediate layers for unsupervised keyphrase extraction. Proceedings of the 5th International Conference on Natural Language and Speech Processing (ICNLSP 2022), 2022, pp. 277–281.

- Kadhim, A.I. Term weighting for feature extraction on Twitter: A comparison between BM25 and TF-IDF. 2019 International Conference on Advanced Science and Engineering (ICOASE). IEEE, 2019, pp. 124–128.

- Metzler, D.P.; Haas, S.W.; Cosic, C.L.; Wheeler, L.H. Constituent object parsing for information retrieval and similar text processing problems. Journal of the American Society for Information Science 1989, 40, 398–423.

- Nguyen, D.H.; Le, N.K.; Nguyen, M.L. Exploring Retriever-Reader Approaches in Question-Answering on Scientific Documents. Asian Conference on Intelligent Information and Database Systems. Springer, 2022, pp. 383–395.

- Yu, W. Retrieval-augmented generation across heterogeneous knowledge. Proceedings of the 2022 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies: Student Research Workshop, 2022, pp. 52–58.

- Zheng, L.; Chiang, W.L.; Sheng, Y.; Zhuang, S.; Wu, Z.; Zhuang, Y.; Lin, Z.; Li, Z.; Li, D.; Xing, E.; others. Judging LLM-as-a-judge with MT-Bench and Chatbot Arena. Advances in Neural Information Processing Systems 2024, 36.

- Ahmed, K.; Chang, K.W.; Van den Broeck, G. A pseudo-semantic loss for autoregressive models with logical constraints. Advances in Neural Information Processing Systems 2024, 36.

- Zhang, H.; Kung, P.N.; Yoshida, M.; Broeck, G.V.d.; Peng, N. Adaptable Logical Control for Large Language Models. arXiv preprint arXiv:2406.13892 2024.

- Kočiský, T.; Schwarz, J.; Blunsom, P.; Dyer, C.; Hermann, K.M.; Melis, G.; Grefenstette, E. The NarrativeQA reading comprehension challenge. Transactions of the Association for Computational Linguistics 2018, 6, 317–328.

- Dasigi, P.; Lo, K.; Beltagy, I.; Cohan, A.; Smith, N.A.; Gardner, M. A Dataset of Information-Seeking Questions and Answers Anchored in Research Papers. Proceedings of the 2021 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, 2021, pp. 4599–4610.

- Papineni, K.; Roukos, S.; Ward, T.; Zhu, W.J. BLEU: A method for automatic evaluation of machine translation. Proceedings of the 40th Annual Meeting of the Association for Computational Linguistics, 2002, pp. 311–318.

- Lin, C.Y. ROUGE: A package for automatic evaluation of summaries. Text Summarization Branches Out, 2004, pp. 74–81.

- Banerjee, S.; Lavie, A. METEOR: An automatic metric for MT evaluation with improved correlation with human judgments. Proceedings of the ACL Workshop on Intrinsic and Extrinsic Evaluation Measures for Machine Translation and/or Summarization, 2005, pp. 65–72.

- Sarthi, P.; Abdullah, S.; Tuli, A.; Khanna, S.; Goldie, A.; Manning, C.D. RAPTOR: Recursive Abstractive Processing for Tree-Organized Retrieval. The Twelfth International Conference on Learning Representations, 2023.

- Sheth, A.; Gaur, M.; Roy, K.; Faldu, K. Knowledge-intensive language understanding for explainable AI. IEEE Internet Computing 2021, 25, 19–24.

- Sheth, A.; Gaur, M.; Roy, K.; Venkataraman, R.; Khandelwal, V. Process Knowledge-Infused AI: Toward User-Level Explainability, Interpretability, and Safety. IEEE Internet Computing 2022, 26, 76–84.

- Sheth, A.; Roy, K.; Gaur, M. Neurosymbolic Artificial Intelligence (Why, What, and How). IEEE Intelligent Systems 2023, 38, 56–62.

- Sheth, A.; Roy, K. Neurosymbolic Value-Inspired Artificial Intelligence (Why, What, and How). IEEE Intelligent Systems 2024, 39, 5–11.

| Evaluation Metric | llama3-8b | llama3-70b | mixtral-8x7b | gemma-7b |
|---|---|---|---|---|
| hits@1 | 87% | 89% | 85% | 78% |
| hits@<5 | 98% | 99% | 98% | 95% |
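The table above reports hits@1 and hits@<5 for four LLMs, but this section does not define the metrics. Assuming the standard definition (the share of queries whose correct item appears among the top-k ranked candidates), a minimal Python sketch follows. The function name `hits_at_k` and the toy rankings are hypothetical, and because the section does not say whether the "<5" cutoff means the top five or the top four, k is left as a parameter.

```python
from typing import Sequence


def hits_at_k(rankings: Sequence[Sequence[str]], gold: Sequence[str], k: int) -> float:
    """Fraction of queries whose gold item appears in the first k ranked candidates.

    Hypothetical helper illustrating the standard hits@k definition;
    not taken from the paper's code.
    """
    if len(rankings) != len(gold):
        raise ValueError("rankings and gold must have the same length")
    if not gold:
        return 0.0
    # Count a hit when the gold item is within the top-k slice of its ranking.
    hits = sum(1 for ranking, answer in zip(rankings, gold) if answer in ranking[:k])
    return hits / len(gold)


# Hypothetical toy data: the gold item "a" sits at rank 1, 2 and 3 respectively.
rankings = [["a", "b", "c"], ["b", "a", "c"], ["c", "b", "a"]]
gold = ["a", "a", "a"]

print(hits_at_k(rankings, gold, 1))  # 1/3: only the first query hits at k=1
print(hits_at_k(rankings, gold, 3))  # 1.0: every gold item is within the top 3
```

Reporting hits@k as a percentage, as the table does, is simply this fraction multiplied by 100.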

| Method | B1V/Type1/Type2 | B4V/Type1/Type2 | RLV/Type1/Type2 | MV/Type1/Type2 |
|---|---|---|---|---|
| RAPTOR | 0.68/0.92/1.33 | 0.31/0.59/1.12 | 0.76/0.86/0.9 | 0.64/0.89/0.92 |
| HFusion | 0.40/0.59/1.15 | 0.09/0.31/0.91 | 0.26/0.41/0.96 | 0.26/0.29/0.94 |
| SRetr | 0.07/0.17/0.51 | 0.05/0.2/0.32 | 0.4/0.43/0.46 | 0.29/0.3/0.45 |
| MRetr | 0.20/0.29/0.55 | 0.04/0.25/0.39 | 0.37/0.40/0.54 | 0.20/0.30/0.54 |
| QRFusion | 0.11/1.0/1.33 | 0.39/0.43/1.11 | 0.33/0.97/0.98 | 0.18/0.93/0.99 |

| Method | B1V/Type1/Type2 | B4V/Type1/Type2 | RLV/Type1/Type2 | MV/Type1/Type2 |
|---|---|---|---|---|
| RAPTOR | 0.86/0.89/1.0 | 0.70/0.71/0.92 | 0.72/0.87/0.9 | 0.78/0.88/0.98 |
| HFusion | 0.60/0.88/1.15 | 0.82/0.91/0.92 | 0.63/0.70/0.96 | 0.77/0.82/0.92 |
| SRetr | 0.12/0.47/0.51 | 0.09/0.32/0.35 | 0.31/0.33/0.43 | 0.27/0.30/0.34 |
| MRetr | 0.35/0.44/0.58 | 0.29/0.47/0.55 | 0.21/0.32/0.34 | 0.27/0.29/0.44 |
| QRFusion | 0.71/0.72/1.3 | 0.73/0.89/1.12 | 0.77/0.79/0.9 | 0.78/0.83/0.98 |
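The RLV/Type1/Type2 columns in the two tables above appear to report ROUGE-L scores (Lin, 2004), though the abbreviation is not expanded in this section, and neither the implementation nor the tokenization the authors used is stated. As a hedged illustration only, here is a minimal sketch of the LCS-based ROUGE-L F-measure, assuming lowercased whitespace tokenization and β = 1. The names `lcs_length` and `rouge_l` are hypothetical and do not come from the ROUGE package or the paper.

```python
from typing import List


def lcs_length(a: List[str], b: List[str]) -> int:
    """Length of the longest common subsequence of two token lists (dynamic programming)."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, start=1):
            if x == y:
                curr.append(prev[j - 1] + 1)  # extend the diagonal match
            else:
                curr.append(max(prev[j], curr[j - 1]))  # best of skipping either token
        prev = curr
    return prev[-1]


def rouge_l(candidate: str, reference: str, beta: float = 1.0) -> float:
    """ROUGE-L F-measure: ((1 + beta^2) * P * R) / (R + beta^2 * P), with P and R from the LCS.

    Assumes lowercased whitespace tokenization; real toolkits may tokenize differently.
    """
    cand = candidate.lower().split()
    ref = reference.lower().split()
    if not cand or not ref:
        return 0.0
    lcs = lcs_length(cand, ref)
    if lcs == 0:
        return 0.0
    precision = lcs / len(cand)
    recall = lcs / len(ref)
    return ((1 + beta**2) * precision * recall) / (recall + beta**2 * precision)


# Hypothetical example: the LCS is "the cat on the mat" (5 of 6 tokens on each side).
print(rouge_l("the cat sat on the mat", "the cat is on the mat"))
```

Because both strings have six tokens and share a five-token subsequence, precision and recall are both 5/6, so the F-measure is also 5/6. Published scores may differ from this sketch if stemming, stopword handling, or a different β was used.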

| Method | AccV/Type1/Type2 | PRV/Type1/Type2 | RecallV/Type1/Type2 | F1V/Type1/Type2 |
|---|---|---|---|---|
| RAPTOR | 0.54/0.81/0.94 | 0.8/0.81/0.89 | 0.63/0.89/0.96 | 0.83/0.91/0.92 |
| HFusion | 0.43/0.46/0.93 | 0.31/0.38/0.96 | 0.36/0.41/0.98 | 0.35/0.47/0.92 |
| SRetr | 0.27/0.4/0.60 | 0.3/0.75/1.0 | 0.27/0.4/0.46 | 0.4/0.42/0.57 |
| MRetr | 0.32/0.46/0.5 | 0.33/0.66/0.8 | 0.30/0.47/0.52 | 0.24/0.30/0.56 |
| QRFusion | 0.2/0.8/0.95 | 0.96/0.98/1.0 | 0.2/0.90/0.96 | 0.33/0.78/0.99 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).