Submitted: 27 June 2024

Posted: 28 June 2024


Abstract

Keywords:

1. Introduction

- This paper proposes a lightweight fusion framework for infrared and visible light images based on low-light enhancement, which can rapidly improve the visual perception of low-light scenes and produce fusion images with high contrast and clear textures;

- A novel enhancement loss is introduced in this paper to guide the network model in learning the intensity distribution and edge gradient of the CLAHE enhancement results, enabling the model to generate fusion images with low-light enhancement effects in an end-to-end manner;

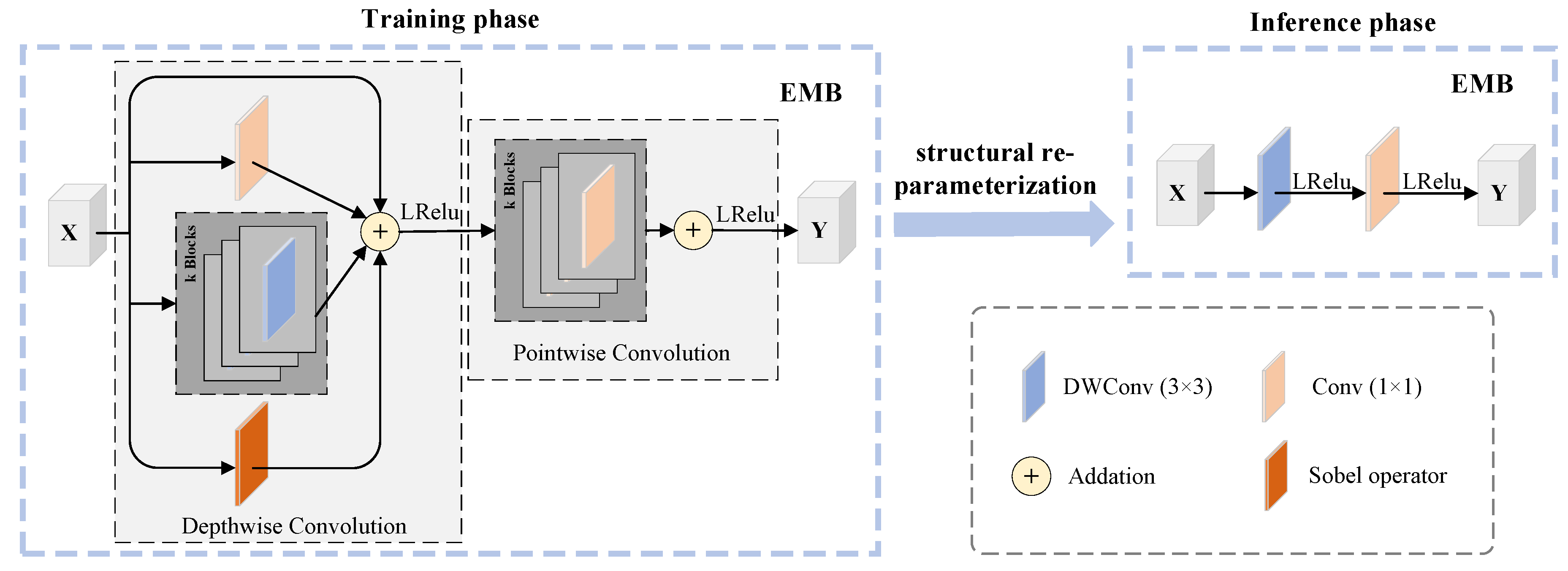

- The paper designs the Edge-MobileOne Block as the encoder and decoder of the network model, significantly enhancing the fusion performance without increasing the computational load during the inference phase;

- The paper also designs a cross-modal attention fusion module that effectively integrates information from different modality images.
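The enhancement-loss contribution above pulls the fused image towards the intensity distribution and edge gradients of a CLAHE-enhanced image. Its exact formulation is not given in this section, so the following is only a hedged sketch: it assumes an L1 intensity term plus an L1 term on Sobel gradient magnitudes (|G_x| + |G_y|), with hypothetical weights `alpha` and `beta`, and it assumes `clahe_ref` is a precomputed CLAHE result (for example, of the visible image). A real training loop would write this with a deep-learning framework's tensors so it can be differentiated; plain lists are used here only to keep the example self-contained.

```python
# Hypothetical sketch of an enhancement loss: L1 intensity + L1 Sobel-gradient terms.
# The loss form, weights and names are assumptions, not the paper's definition.

SOBEL_X = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]


def sobel_magnitude(img):
    """|G_x| + |G_y| on interior pixels (a cheap gradient-magnitude proxy)."""
    h, w = len(img), len(img[0])
    out = []
    for i in range(1, h - 1):
        row = []
        for j in range(1, w - 1):
            gx = sum(SOBEL_X[u][v] * img[i + u - 1][j + v - 1]
                     for u in range(3) for v in range(3))
            gy = sum(SOBEL_Y[u][v] * img[i + u - 1][j + v - 1]
                     for u in range(3) for v in range(3))
            row.append(abs(gx) + abs(gy))
        out.append(row)
    return out


def mean_abs_diff(a, b):
    """Mean absolute difference between two equally sized 2D lists (L1 / N)."""
    diffs = [abs(x - y) for ra, rb in zip(a, b) for x, y in zip(ra, rb)]
    return sum(diffs) / len(diffs)


def enhancement_loss(fused, clahe_ref, alpha=1.0, beta=1.0):
    """Hypothetical L_en = alpha * intensity term + beta * gradient term."""
    l_int = mean_abs_diff(fused, clahe_ref)
    l_grad = mean_abs_diff(sobel_magnitude(fused), sobel_magnitude(clahe_ref))
    return alpha * l_int + beta * l_grad


# Usage: a 5x5 ramp stands in for a CLAHE-enhanced reference.
ref = [[float(10 * i + j) for j in range(5)] for i in range(5)]
brighter = [[v + 0.1 for v in row] for row in ref]
print(enhancement_loss(ref, ref))       # identical images -> 0.0
print(enhancement_loss(brighter, ref))  # constant offset -> ~0.1, intensity term only
```

Because each Sobel kernel sums to zero, a constant brightness offset leaves the gradient term unchanged and shows up only in the intensity term; pairing the two terms lets one constrain brightness and the other constrain texture.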

2. Related Work

2.1. Deep Learning-Based Fusion Methods

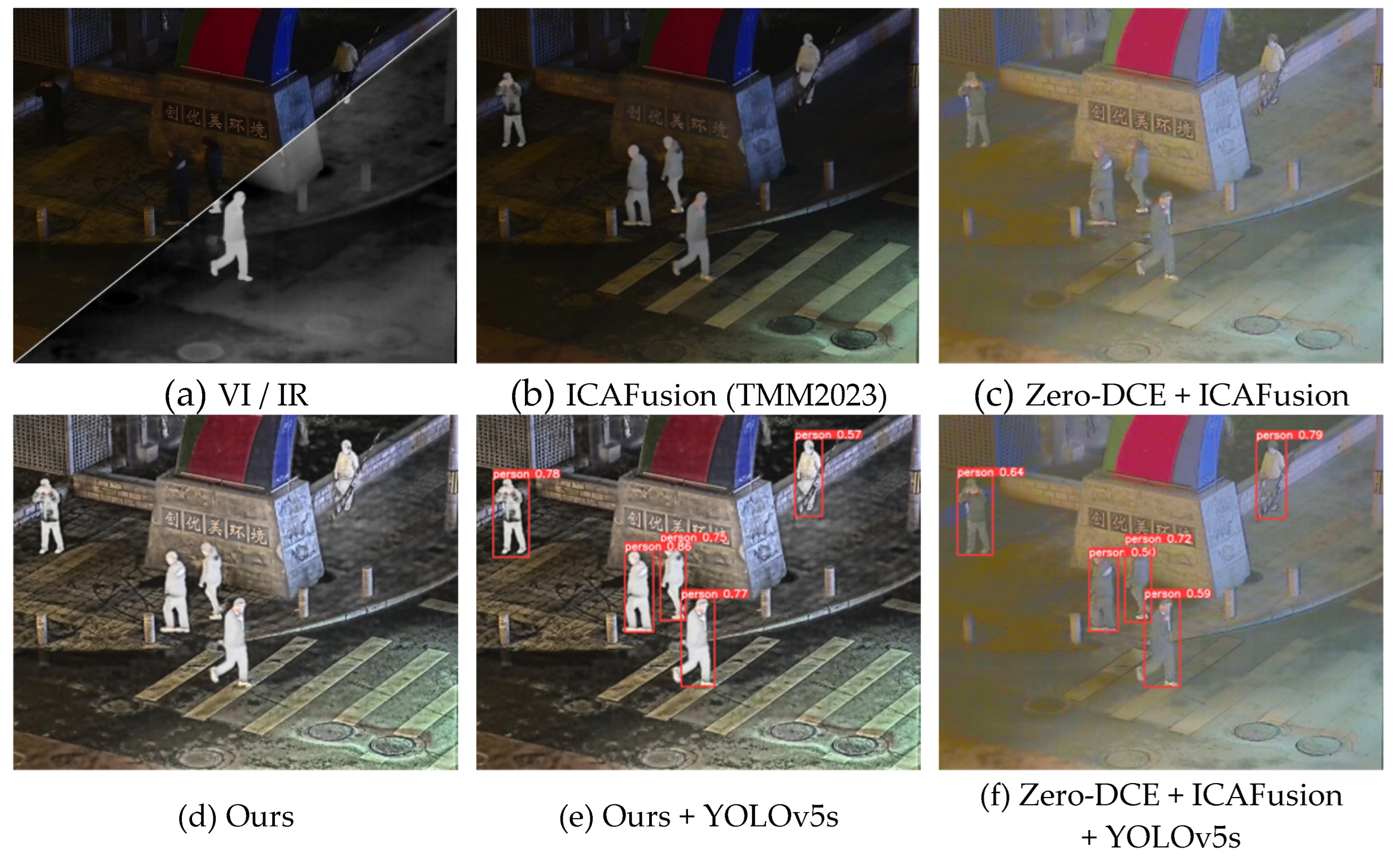

2.2. Fusion Methods for Low-Light Scenarios

3. Methods

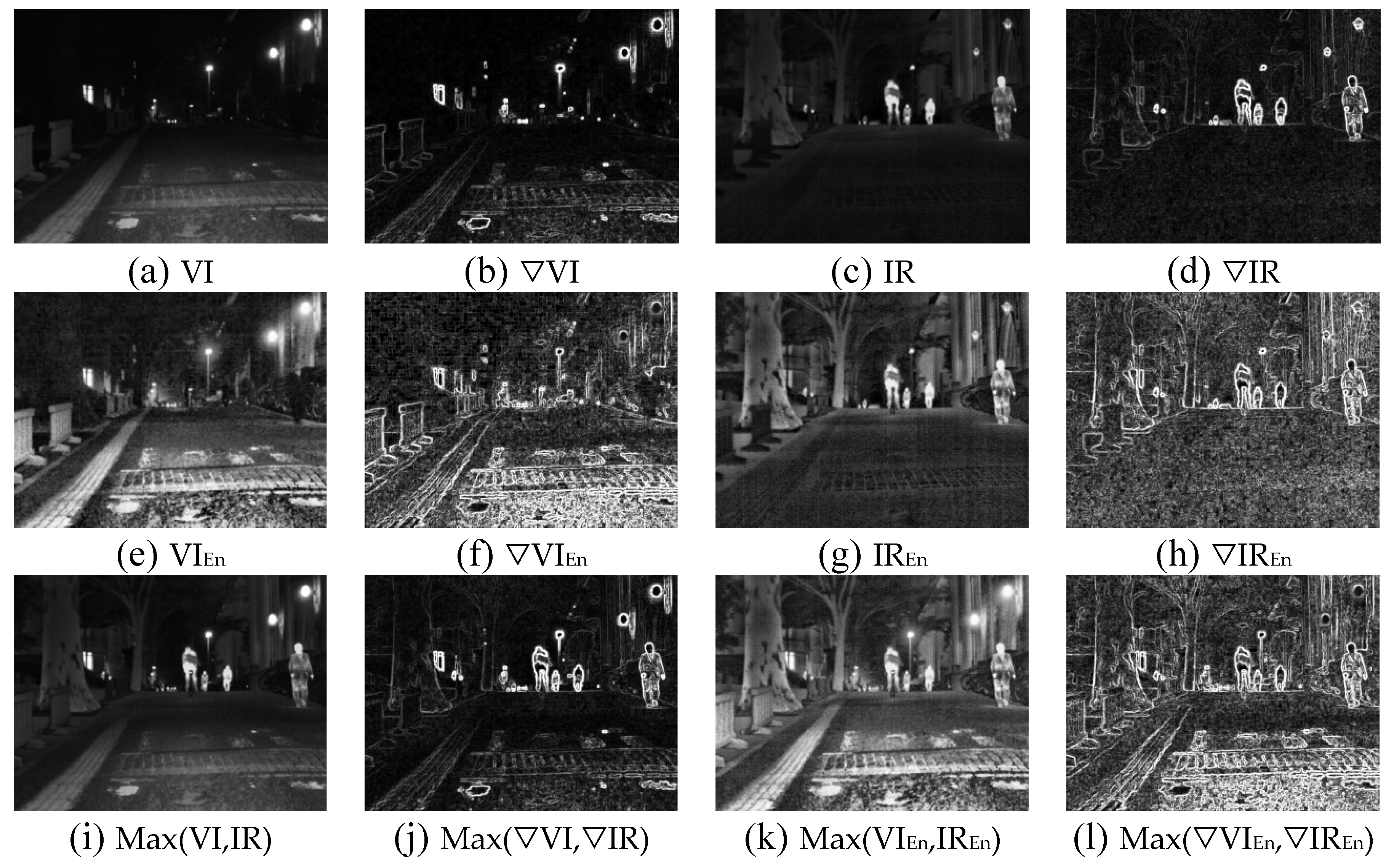

3.1. Problem Formulation

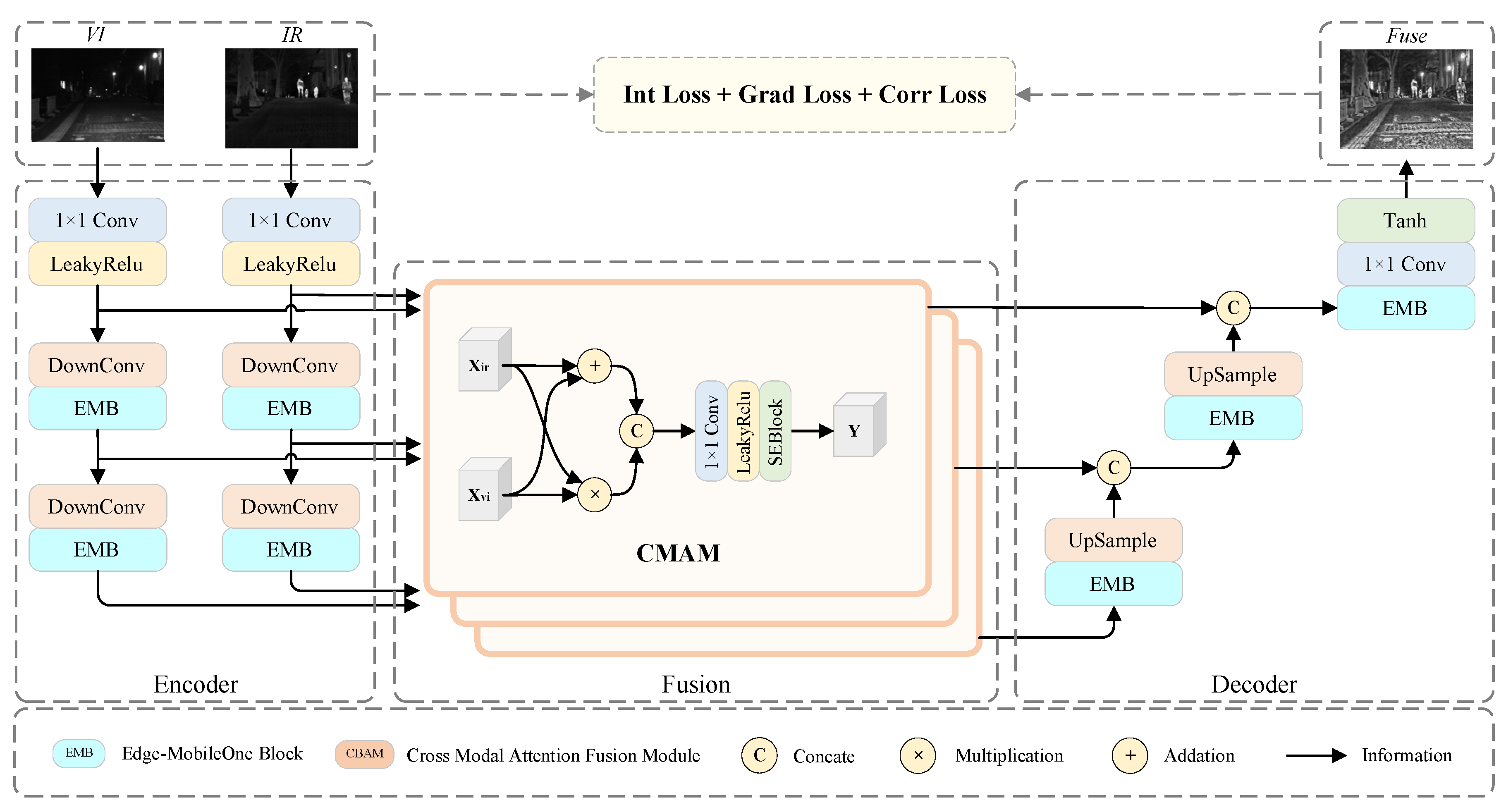

3.2. Network Architecture

3.2.1. Overall Network

3.2.2. Edge-MobileOne Block

3.2.3. Cross-Modal Attention Fusion Module

3.2.4. Color Space Model

3.3. Loss Function

4. Experiments and Analysis

4.1. Experimental Setup

4.1.1. Dataset

4.1.2. Experimental Details

4.1.3. Comparative Algorithms

4.1.4. Evaluation Metrics

4.2. Comparative Experiments

4.3. Generalization Comparative Experiments

4.3.1. TNO Dataset

4.3.2. MSRS Dataset

4.4. Efficiency Comparative Experiment

4.5. Ablation Study

4.5.1. Analysis of Enhancement Loss (Len)

4.5.2. Analysis of the Cross-Modal Attention Fusion Module (CMAM)

4.5.3. Analysis of Edge-MobileOne Block (EMB)

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflict of Interest

References

- Zhang H, Xu H, Tian X, et al. Image fusion meets deep learning: A survey and perspective[J]. Information Fusion, 2021, 76: 323-336.

- Zhang X. Benchmarking and comparing multi-exposure image fusion algorithms[J]. Information Fusion, 2021, 74: 111-131.

- Guo C, Li C, Guo J, et al. Zero-reference deep curve estimation for low-light image enhancement[C]//Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. 2020: 1780-1789.

- Wang D, He D. Channel pruned YOLO V5s-based deep learning approach for rapid and accurate apple fruitlet detection before fruit thinning[J]. Biosystems Engineering, 2021, 210: 271-281.

- Guan D, Cao Y, Yang J, et al. Fusion of multispectral data through illumination-aware deep neural networks for pedestrian detection[J]. Information Fusion, 2019, 50: 148-157.

- Jain D K, Zhao X, González-Almagro G, et al. Multimodal pedestrian detection using metaheuristics with deep convolutional neural network in crowded scenes[J]. Information Fusion, 2023, 95: 401-414.

- Zhang Q, Zhao S, Luo Y, et al. ABMDRNet: Adaptive-weighted bi-directional modality difference reduction network for RGB-T semantic segmentation[C]//Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. 2021: 2633-2642.

- Chen J, Li X, Luo L, et al. Infrared and visible image fusion based on target-enhanced multiscale transform decomposition[J]. Information Sciences, 2020, 508: 64-78.

- Fu Z, Wang X, Xu J, et al. Infrared and visible images fusion based on RPCA and NSCT[J]. Infrared Physics & Technology, 2016, 77: 114-123.

- Li H, Wu X J, Kittler J. MDLatLRR: A novel decomposition method for infrared and visible image fusion[J]. IEEE Transactions on Image Processing, 2020, 29: 4733-4746.

- Ma J, Zhou Z, Wang B, et al. Infrared and visible image fusion based on visual saliency map and weighted least square optimization[J]. Infrared Physics & Technology, 2017, 82: 8-17.

- Li H, Wu X J. DenseFuse: A fusion approach to infrared and visible images[J]. IEEE Transactions on Image Processing, 2019, 28(5): 2614-2623.

- Huang G, Liu Z, Van Der Maaten L, et al. Densely connected convolutional networks[C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. 2017: 4700-4708.

- Li H, Wu X J, Durrani T. NestFuse: An infrared and visible image fusion architecture based on nest connection and spatial/channel attention models[J]. IEEE Transactions on Instrumentation and Measurement, 2020, 69(12): 9645-9656.

- Li H, Wu X J, Kittler J. RFN-Nest: An end-to-end residual fusion network for infrared and visible images[J]. Information Fusion, 2021, 73: 72-86.

- Xu H, Zhang H, Ma J. Classification saliency-based rule for visible and infrared image fusion[J]. IEEE Transactions on Computational Imaging, 2021, 7: 824-836.

- Cheng C, Xu T, Wu X J. MUFusion: A general unsupervised image fusion network based on memory unit[J]. Information Fusion, 2023, 92: 80-92.

- Ma J, Tang L, Xu M, et al. STDFusionNet: An infrared and visible image fusion network based on salient target detection[J]. IEEE Transactions on Instrumentation and Measurement, 2021, 70: 1-13.

- Long Y, Jia H, Zhong Y, et al. RXDNFuse: A aggregated residual dense network for infrared and visible image fusion[J]. Information Fusion, 2021, 69: 128-141.

- He K, Zhang X, Ren S, et al. Deep residual learning for image recognition[C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. 2016: 770-778.

- Li H, Cen Y, Liu Y, et al. Different input resolutions and arbitrary output resolution: A meta learning-based deep framework for infrared and visible image fusion[J]. IEEE Transactions on Image Processing, 2021, 30: 4070-4083.

- Tang L, Yuan J, Ma J. Image fusion in the loop of high-level vision tasks: A semantic-aware real-time infrared and visible image fusion network[J]. Information Fusion, 2022, 82: 28-42.

- Tang L, Zhang H, Xu H, et al. Rethinking the necessity of image fusion in high-level vision tasks: A practical infrared and visible image fusion network based on progressive semantic injection and scene fidelity[J]. Information Fusion, 2023: 101870.

- Ma J, Yu W, Liang P, et al. FusionGAN: A generative adversarial network for infrared and visible image fusion[J]. Information Fusion, 2019, 48: 11-26.

- Ma J, Xu H, Jiang J, et al. DDcGAN: A dual-discriminator conditional generative adversarial network for multi-resolution image fusion[J]. IEEE Transactions on Image Processing, 2020, 29: 4980-4995.

- Li J, Huo H, Li C, et al. AttentionFGAN: Infrared and visible image fusion using attention-based generative adversarial networks[J]. IEEE Transactions on Multimedia, 2020, 23: 1383-1396.

- Ma J, Zhang H, Shao Z, et al. GANMcC: A generative adversarial network with multiclassification constraints for infrared and visible image fusion[J]. IEEE Transactions on Instrumentation and Measurement, 2021, 70: 1-14.

- Liu J, Fan X, Huang Z, et al. Target-aware dual adversarial learning and a multi-scenario multi-modality benchmark to fuse infrared and visible for object detection[C]//Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. 2022: 5802-5811.

- Tang L, Yuan J, Zhang H, et al. PIAFusion: A progressive infrared and visible image fusion network based on illumination aware[J]. Information Fusion, 2022, 83: 79-92.

- Liu B, Wei J, Su S, et al. Research on Task-Driven Dual-Light Image Fusion and Enhancement Method under Low Illumination[C]//2022 7th International Conference on Image, Vision and Computing (ICIVC). IEEE, 2022: 523-530.

- Tang L, Xiang X, Zhang H, et al. DIVFusion: Darkness-free infrared and visible image fusion[J]. Information Fusion, 2023, 91: 477-493.

- Chang R, Zhao S, Rao Y, et al. LVIF-Net: Learning synchronous visible and infrared image fusion and enhancement under low-light conditions[J]. Infrared Physics & Technology, 2024: 105270.

- Reza A M. Realization of the contrast limited adaptive histogram equalization (CLAHE) for real-time image enhancement[J]. Journal of VLSI Signal Processing Systems for Signal, Image and Video Technology, 2004, 38: 35-44.

- Vasu P K A, Gabriel J, Zhu J, et al. MobileOne: An improved one millisecond mobile backbone[C]//Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. 2023: 7907-7917.

- Chen Z, Fan H, Ma M, et al. FECFusion: Infrared and visible image fusion network based on fast edge convolution[J]. Mathematical Biosciences and Engineering: MBE, 2023, 20(9): 16060-16082.

- Hu J, Shen L, Sun G. Squeeze-and-excitation networks[C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. 2018: 7132-7141.

- Jia X, Zhu C, Li M, et al. LLVIP: A visible-infrared paired dataset for low-light vision[C]//Proceedings of the IEEE/CVF International Conference on Computer Vision. 2021: 3496-3504.

- Toet A. The TNO multiband image data collection[J]. Data in Brief, 2017, 15: 249-251.

- Xu H, Gong M, Tian X, et al. CUFD: An encoder–decoder network for visible and infrared image fusion based on common and unique feature decomposition[J]. Computer Vision and Image Understanding, 2022, 218: 103407.

- Zhang Y, Liu Y, Sun P, et al. IFCNN: A general image fusion framework based on convolutional neural network[J]. Information Fusion, 2020, 54: 99-118.

- Zhang H, Xu H, Xiao Y, et al. Rethinking the image fusion: A fast unified image fusion network based on proportional maintenance of gradient and intensity[C]//Proceedings of the AAAI Conference on Artificial Intelligence. 2020, 34(07): 12797-12804.

- Zhang H, Ma J. SDNet: A versatile squeeze-and-decomposition network for real-time image fusion[J]. International Journal of Computer Vision, 2021, 129: 2761-2785.

- Xue W, Wang A, Zhao L. FLFuse-Net: A fast and lightweight infrared and visible image fusion network via feature flow and edge compensation for salient information[J]. Infrared Physics & Technology, 2022, 127: 104383.

- Xu H, Ma J, Jiang J, et al. U2Fusion: A unified unsupervised image fusion network[J]. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2022, 44(1): 502-518.

- Wang D, Liu J, Fan X, et al. Unsupervised misaligned infrared and visible image fusion via cross-modality image generation and registration[J]. arXiv preprint arXiv:2205.11876, 2022. https://www.arxiv.org/abs/2205.11876v1.

- Wang Z, Shao W, Chen Y, et al. Infrared and visible image fusion via interactive compensatory attention adversarial learning[J]. IEEE Transactions on Multimedia, 2022.

- Howard A G, Zhu M, Chen B, et al. MobileNets: Efficient convolutional neural networks for mobile vision applications[J]. arXiv preprint arXiv:1704.04861, 2017. https://arxiv.org/abs/1704.04861.

- Zhu Z, Wei H, Hu G, et al. A novel fast single image dehazing algorithm based on artificial multiexposure image fusion[J]. IEEE Transactions on Instrumentation and Measurement, 2020, 70: 1-23.

- Liu Y, Qi Z, Cheng J, et al. Rethinking the Effectiveness of Objective Evaluation Metrics in Multi-focus Image Fusion: A Statistic-based Approach[J]. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2024: 1-14.

| Dataset | Algorithm | SD | VIF | AG | EN | SF |
|---|---|---|---|---|---|---|
| LLVIP | DenseFuse | 9.2490 | 0.8317 | 2.7245 | 6.8727 | 0.0363 |
| | FusionGAN | 8.3299 | 0.5322 | 1.9442 | 6.3078 | 0.0271 |
| | IFCNN | 8.6038 | 0.8094 | 4.1833 | 6.7336 | 0.0565 |
| | PMGI | 9.7091 | 0.7697 | 2.6571 | 6.9990 | 0.0332 |
| | GANMcC | 9.0244 | 0.7155 | 2.1196 | 6.6894 | 0.0267 |
| | SDNet | 8.9238 | 0.6537 | 3.4359 | 6.6793 | 0.0474 |
| | STDFusionNet | 6.2734 | 0.5222 | 2.9843 | 5.2143 | 0.0459 |
| | CUFD | 8.8130 | 0.7139 | 2.0277 | 6.6813 | 0.0284 |
| | FLFuse | 8.8942 | 0.6337 | 1.2916 | 6.4600 | 0.0162 |
| | U2Fusion | 7.7951 | 0.5631 | 2.2132 | 5.9464 | 0.0287 |
| | UMF-CMGR | 8.0539 | 0.5796 | 2.5040 | 6.4619 | 0.0389 |
| | ICAFusion | 7.8053 | 0.7300 | 2.4907 | 6.1222 | 0.0367 |
| | Ours | 10.1525 | 1.1682 | 10.7486 | 7.6459 | 0.1122 |
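Four of the five metrics in these tables (EN, SD, AG and SF) depend only on the fused image; VIF additionally compares the fused image with the source images through a natural-scene-statistics model and is omitted here. The metric definitions are not reproduced in this section, so the sketch below uses common textbook forms: EN as the Shannon entropy of the 256-bin grey-level histogram, SD as the population standard deviation, AG as the mean of sqrt((Δx² + Δy²)/2) over forward differences, and SF as sqrt(RF² + CF²) with row and column frequencies normalised by M·N. Normalisation conventions (intensity range, M·N versus M·(N−1), and so on) differ between toolboxes, so this pure-Python sketch is not expected to reproduce the exact values in the tables.

```python
import math


def entropy(img, levels=256):
    """EN: Shannon entropy (bits) of the grey-level histogram.
    Assumes integer intensities in [0, levels)."""
    counts = [0] * levels
    for row in img:
        for v in row:
            counts[v] += 1
    total = sum(counts)
    return -sum(c / total * math.log2(c / total) for c in counts if c)


def std_dev(img):
    """SD: population standard deviation of all pixel intensities."""
    vals = [v for row in img for v in row]
    mu = sum(vals) / len(vals)
    return math.sqrt(sum((v - mu) ** 2 for v in vals) / len(vals))


def average_gradient(img):
    """AG: mean of sqrt((dx^2 + dy^2) / 2) over the (M-1) x (N-1) forward differences."""
    m, n = len(img), len(img[0])
    total = 0.0
    for i in range(m - 1):
        for j in range(n - 1):
            dx = img[i + 1][j] - img[i][j]
            dy = img[i][j + 1] - img[i][j]
            total += math.sqrt((dx * dx + dy * dy) / 2)
    return total / ((m - 1) * (n - 1))


def spatial_frequency(img):
    """SF = sqrt(RF^2 + CF^2); RF/CF use first differences along rows/columns, / (M*N)."""
    m, n = len(img), len(img[0])
    rf2 = sum((img[i][j] - img[i][j - 1]) ** 2
              for i in range(m) for j in range(1, n)) / (m * n)
    cf2 = sum((img[i][j] - img[i - 1][j]) ** 2
              for i in range(1, m) for j in range(n)) / (m * n)
    return math.sqrt(rf2 + cf2)


# Usage on a 2x2 checkerboard of 8-bit values.
checker = [[0, 255], [255, 0]]
print(entropy(checker), std_dev(checker),
      average_gradient(checker), spatial_frequency(checker))  # 1.0 127.5 255.0 255.0
```

Higher values of all four indicate more information, contrast or detail, which is why they are read as "larger is better" in comparisons like the ones above.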

| Dataset | Algorithm | SD | VIF | AG | EN | SF |
|---|---|---|---|---|---|---|
| TNO | DenseFuse | 9.2424 | 0.8175 | 3.5600 | 6.8193 | 0.0352 |
| | FusionGAN | 8.6736 | 0.6541 | 2.4211 | 6.5580 | 0.0246 |
| | IFCNN | 9.0581 | 0.7864 | 5.1154 | 6.8533 | 0.0508 |
| | PMGI | 9.6029 | 0.8689 | 3.6004 | 7.0181 | 0.0344 |
| | GANMcC | 9.0532 | 0.7123 | 2.5441 | 6.7359 | 0.0242 |
| | SDNet | 9.0698 | 0.7592 | 4.6117 | 6.6948 | 0.0457 |
| | STDFusionNet | 9.0451 | 0.9746 | 4.3846 | 6.9031 | 0.0455 |
| | CUFD | 9.5380 | 0.9911 | 4.0435 | 7.0599 | 0.0391 |
| | FLFuse | 9.2611 | 0.8084 | 3.3691 | 6.3924 | 0.0339 |
| | U2Fusion | 8.8553 | 0.6787 | 3.4891 | 6.4230 | 0.0327 |
| | UMF-CMGR | 8.7085 | 0.7121 | 2.9727 | 6.5325 | 0.0321 |
| | ICAFusion | 9.5750 | 1.0757 | 4.6253 | 7.1372 | 0.0470 |
| | Ours | 10.3190 | 0.7849 | 13.3298 | 7.3418 | 0.1218 |

| Dataset | Algorithm | SD | VIF | AG | EN | SF |
|---|---|---|---|---|---|---|
| MSRS | DenseFuse | 7.5090 | 0.7317 | 2.2024 | 6.0225 | 0.0255 |
| | FusionGAN | 5.7942 | 0.4671 | 1.4470 | 5.4631 | 0.0172 |
| | IFCNN | 6.6247 | 0.6904 | 3.6574 | 5.8457 | 0.0450 |
| | PMGI | 7.5838 | 0.6348 | 2.7487 | 6.1807 | 0.0301 |
| | GANMcC | 8.0840 | 0.6283 | 1.9036 | 6.0204 | 0.0212 |
| | SDNet | 5.6207 | 0.4149 | 2.5085 | 5.1713 | 0.0317 |
| | STDFusionNet | 6.5162 | 0.5298 | 2.4355 | 5.3721 | 0.0366 |
| | CUFD | 7.2588 | 0.6038 | 2.4734 | 5.8668 | 0.0322 |
| | FLFuse | 6.6117 | 0.4791 | 1.7241 | 5.5299 | 0.0189 |
| | U2Fusion | 5.7280 | 0.3902 | 1.8871 | 4.7535 | 0.0243 |
| | UMF-CMGR | 5.9766 | 0.3836 | 2.1143 | 5.5499 | 0.0272 |
| | ICAFusion | 7.8528 | 0.5961 | 1.9544 | 5.7254 | 0.0261 |
| | Ours | 9.3456 | 0.9212 | 8.9116 | 7.3362 | 0.0789 |

| Algorithm | Running Time (ms) | FLOPs (G) |
|---|---|---|
| DenseFuse | 267.26 ± 472.11 | 115.3 |
| FusionGAN | 341.21 ± 546.12 | 1213.9 |
| IFCNN | 20.42 ± 2.13 | 170.9 |
| PMGI | 218.26 ± 306.99 | 55.3 |
| GANMcC | 726.51 ± 972.39 | 2444.6 |
| SDNet | 111.22 ± 338.89 | 122.7 |
| STDFusionNet | 284.86 ± 517.61 | 369.5 |
| CUFD | No data¹ | 137.7 |
| FLFuse | 1.77 ± 14.18 | 18.6 |
| U2Fusion | 511.95 ± 774.04 | 863.4 |
| UMF-CMGR | 260.55 ± 440.31 | 824.3 |
| ICAFusion | 1456.16 ± 395.66 | 1226.2 |
| Ours*² | 6.43 ± 3.89 | 16.5 |
| Ours | 3.27 ± 3.54 | 7.9 |

| Re-parameterization | Runtime (ms) | Forward Memory (MB) | Parameters | Weight Size (MB) | Deviation |
|---|---|---|---|---|---|
| × | 6.43 | 14770.00 | 82,081 | 0.31 | / |
| √ | 3.27 | 5620.18 | 47,089 | 0.18 | 2.8×10⁻³ |
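The re-parameterization table reports that collapsing the training-time structure roughly halves runtime and cuts parameters from 82,081 to 47,089; its 6.43 ms and 3.27 ms runtimes also appear to match the "Ours*" and "Ours" rows of the efficiency table. The internals of the Edge-MobileOne Block are not described in this section, so the following is only a minimal sketch of generic MobileOne/RepVGG-style structural re-parameterization. It assumes a single channel and three branches (3×3 conv, 1×1 conv, identity), each followed by inference-mode batch normalization; every function name (`conv2d_same`, `fold_bn`, `reparameterize` and the rest) is hypothetical. It checks numerically that the branches collapse into one 3×3 convolution plus a bias.

```python
import math
import random


def conv2d_same(img, kernel, bias=0.0):
    """Single-channel 2D cross-correlation with zero padding ('same' output size).
    Assumes an odd, square kernel (1x1, 3x3, ...)."""
    h, w, k = len(img), len(img[0]), len(kernel)
    r = k // 2
    out = [[0.0] * w for _ in range(h)]
    for i in range(h):
        for j in range(w):
            acc = bias
            for u in range(k):
                for v in range(k):
                    ii, jj = i + u - r, j + v - r
                    if 0 <= ii < h and 0 <= jj < w:
                        acc += kernel[u][v] * img[ii][jj]
            out[i][j] = acc
    return out


def batchnorm(z, gamma, beta, mean, var, eps=1e-5):
    """Inference-mode batch norm on one channel, using running statistics."""
    scale = gamma / math.sqrt(var + eps)
    return [[scale * (val - mean) + beta for val in row] for row in z]


def fold_bn(kernel, gamma, beta, mean, var, eps=1e-5):
    """Fold BN into the preceding bias-free conv; returns (kernel', bias')."""
    scale = gamma / math.sqrt(var + eps)
    return [[scale * k for k in row] for row in kernel], beta - scale * mean


def pad_to_3x3(kernel_1x1):
    """Place a 1x1 kernel at the centre of an otherwise-zero 3x3 kernel."""
    k = [[0.0] * 3 for _ in range(3)]
    k[1][1] = kernel_1x1[0][0]
    return k


def multi_branch_forward(x, k3, bn3, k1, bn1, bn_id):
    """Training-time topology: BN(conv3x3(x)) + BN(conv1x1(x)) + BN(x)."""
    branches = [
        batchnorm(conv2d_same(x, k3), *bn3),
        batchnorm(conv2d_same(x, k1), *bn1),
        batchnorm(x, *bn_id),
    ]
    h, w = len(x), len(x[0])
    return [[sum(b[i][j] for b in branches) for j in range(w)] for i in range(h)]


def reparameterize(k3, bn3, k1, bn1, bn_id):
    """Collapse the three branches into a single 3x3 kernel and bias."""
    k3f, b3 = fold_bn(k3, *bn3)
    k1f, b1 = fold_bn(pad_to_3x3(k1), *bn1)
    kidf, bid = fold_bn(pad_to_3x3([[1.0]]), *bn_id)  # identity = 1x1 kernel of 1
    merged = [[k3f[u][v] + k1f[u][v] + kidf[u][v] for v in range(3)] for u in range(3)]
    return merged, b3 + b1 + bid


# Usage: random input and kernels; BN params are (gamma, beta, running_mean, running_var).
random.seed(0)
x = [[random.uniform(-1, 1) for _ in range(6)] for _ in range(5)]
k3 = [[random.uniform(-1, 1) for _ in range(3)] for _ in range(3)]
k1 = [[0.7]]
bn3, bn1, bn_id = (1.2, 0.1, 0.05, 0.9), (0.8, -0.2, 0.0, 1.1), (1.0, 0.3, -0.1, 0.5)
y_train = multi_branch_forward(x, k3, bn3, k1, bn1, bn_id)
k_merged, b_merged = reparameterize(k3, bn3, k1, bn1, bn_id)
y_infer = conv2d_same(x, k_merged, b_merged)
err = max(abs(a - b) for ra, rb in zip(y_train, y_infer) for a, b in zip(ra, rb))
print(f"max |difference| = {err:.2e}")  # floating-point round-off only
```

In this double-precision toy the two outputs agree up to round-off; the 2.8×10⁻³ deviation in the table is a measured figure from the authors' setup and is not modelled here. The design point is that every branch is linear (conv followed by an affine BN), so their sum is itself a single conv, which is what lets a multi-branch block train richly yet run as a plain convolution.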

| No. | EMB | CMAM | Len | SD | VIF | AG | EN | SF |
|---|---|---|---|---|---|---|---|---|
| 1 | √ | √ | √ | 10.1213 | 1.1624 | 11.3551 | 7.6509 | 0.1183 |
| 2 | √ | √ | × | 9.8140 | 0.9353 | 7.6129 | 7.4839 | 0.0771 |
| 3 | √ | × | × | 9.5538 | 1.0152 | 4.0657 | 7.3336 | 0.0543 |
| 4 | × | × | × | 9.0162 | 0.8351 | 6.3087 | 7.0437 | 0.0780 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).