Submitted: 25 June 2024

Posted: 25 June 2024

Abstract

Keywords:

1. Introduction

- A mathematical model for split learning (SL) training latency and convergence is established, which jointly considers unknown non-i.i.d. data distributions, the device participation sequence, inaccurate channel state information (CSI), and deviations in occupied computing resources. These factors are crucial for SL training performance but are often overlooked in existing SL studies.

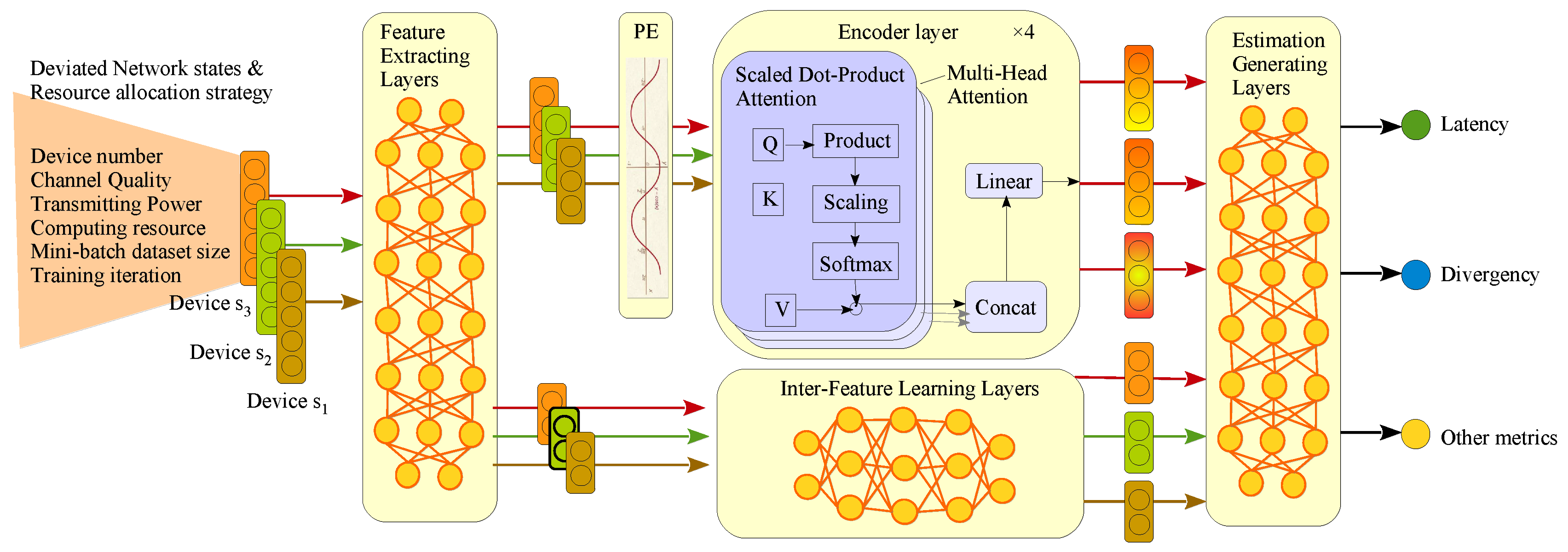

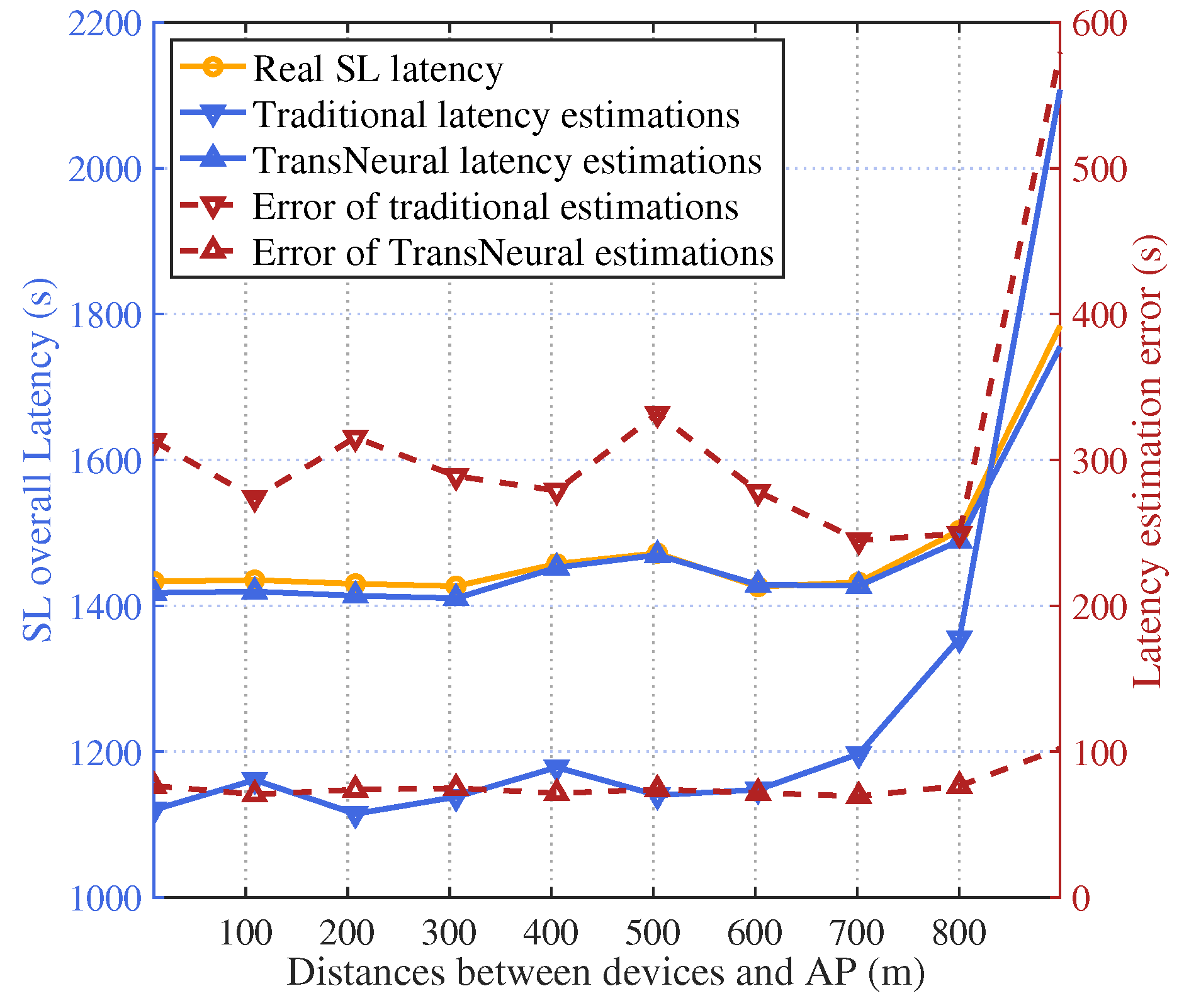

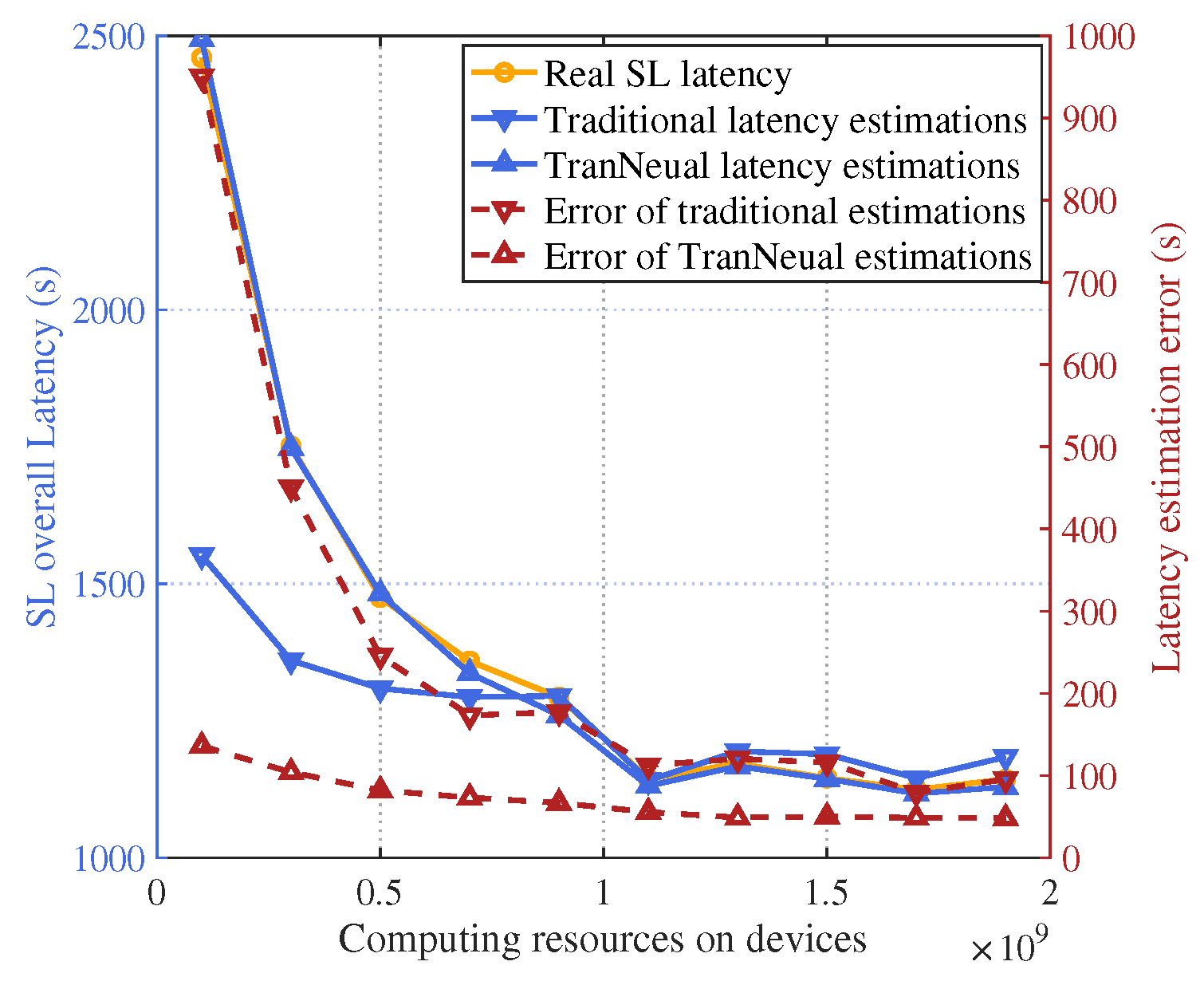

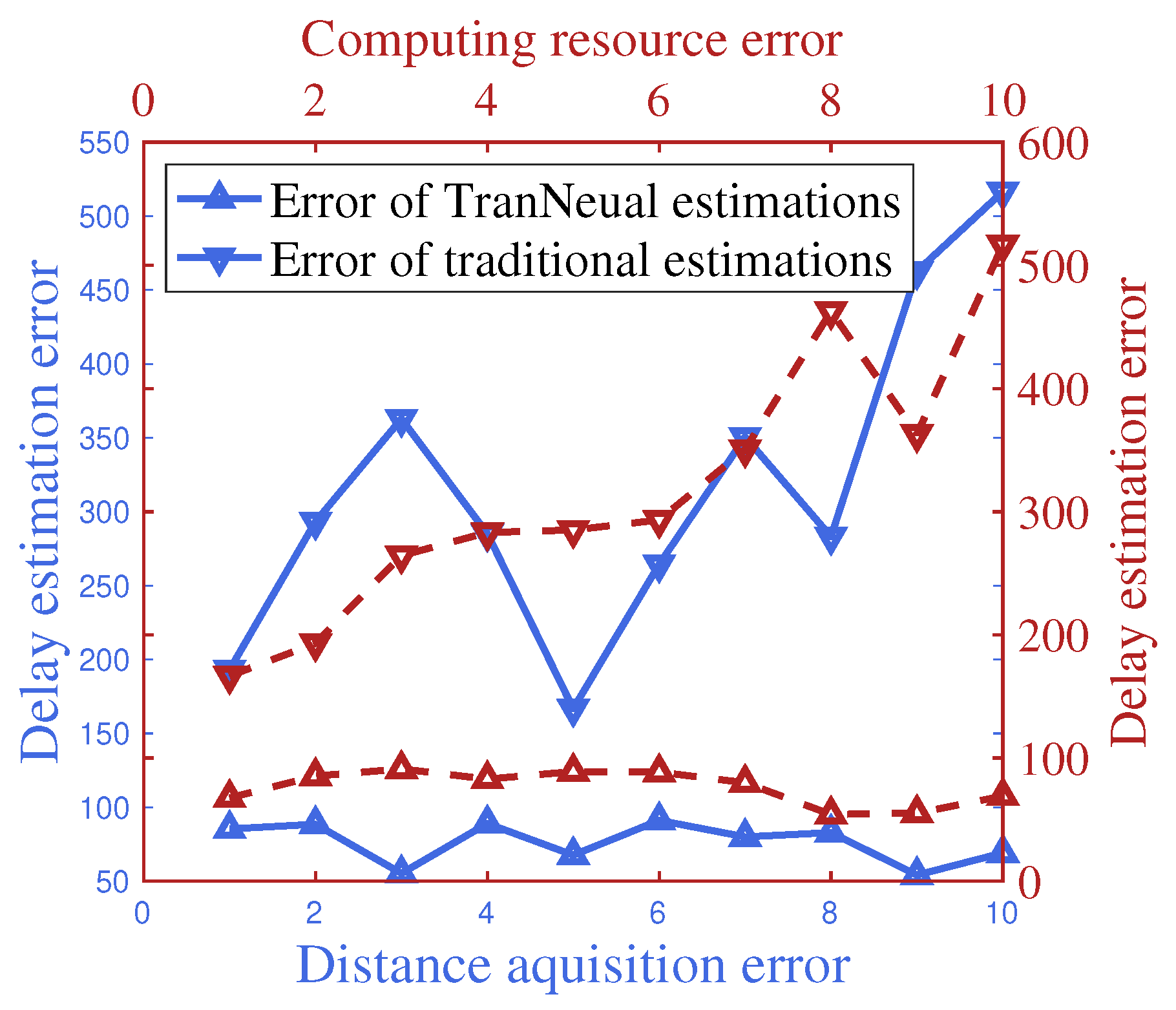

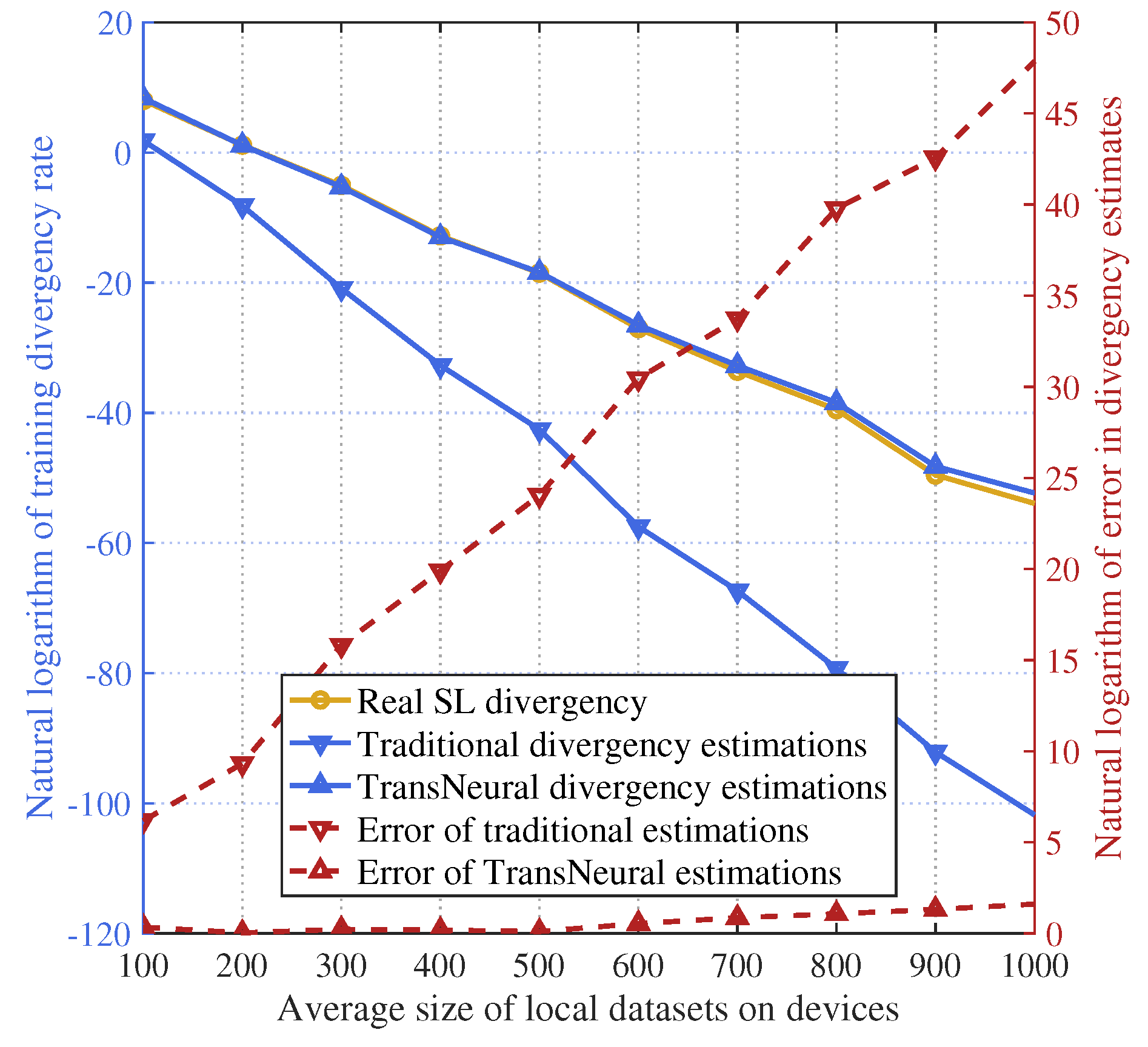

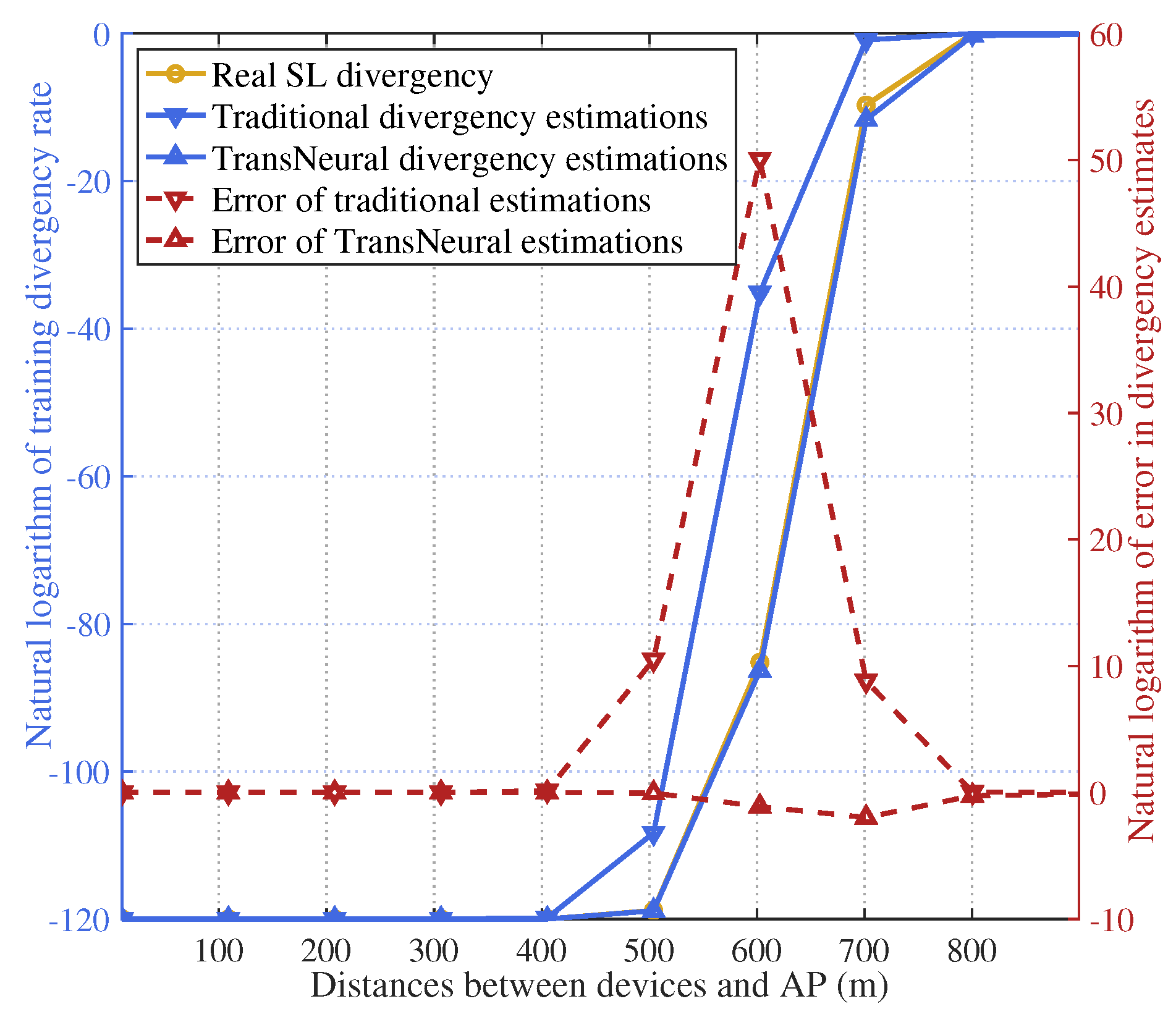

- To close the gap in SL-targeted digital twin network (DTN) pre-validation environments, we propose the TransNeural algorithm, which estimates SL training latency and convergence under given resource allocation strategies. The algorithm combines a transformer with a neural network to (i) model data similarities between devices, (ii) establish the complex relationships between SL performance and network factors such as data distributions, wireless and computing resources, dataset sizes, and training iterations, and (iii) learn the reporting deviation characteristics of different devices.

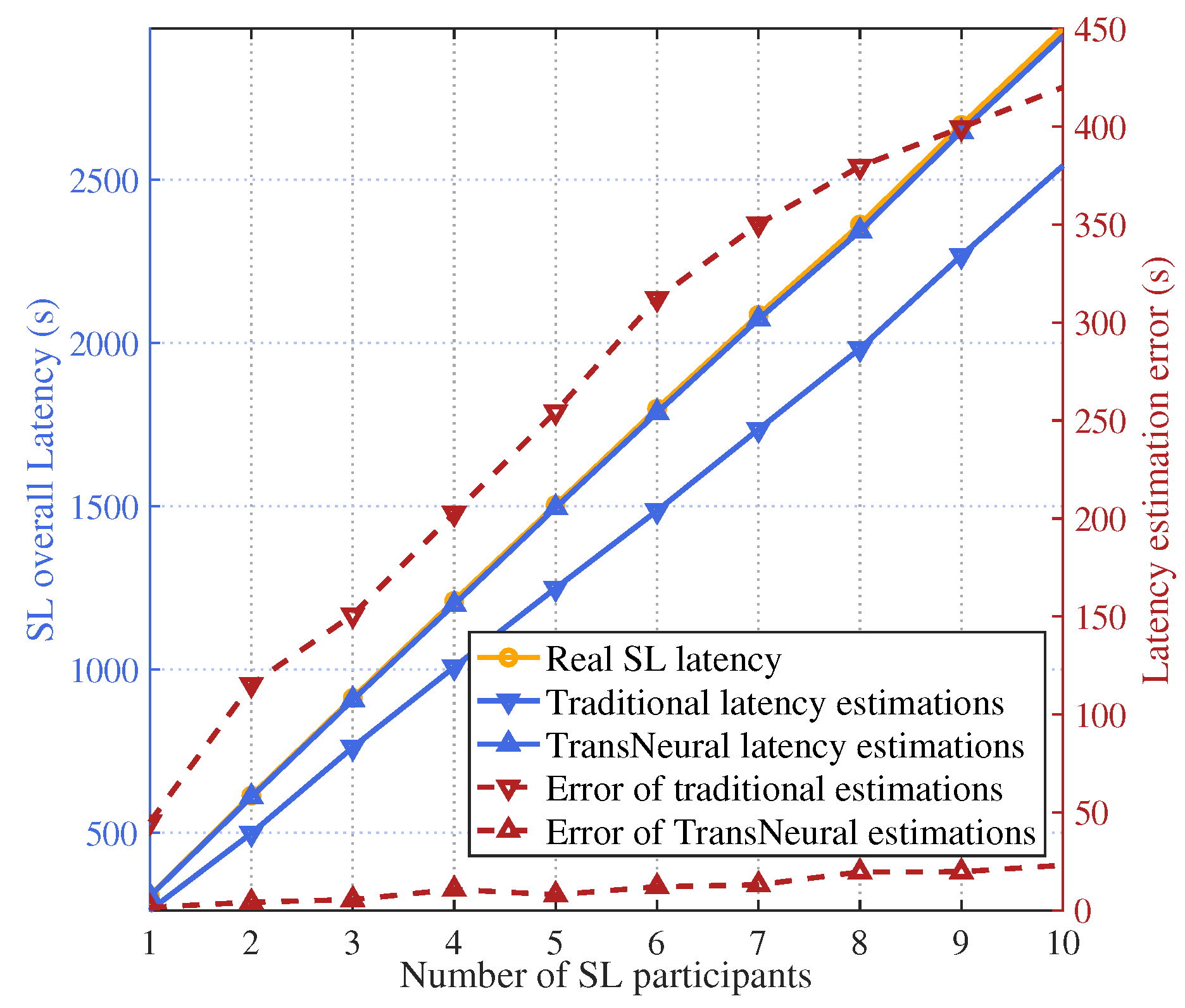

- Simulations show that the proposed TransNeural algorithm improves latency estimation accuracy compared to traditional equation-based algorithms and also enhances convergence estimation accuracy.
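
To make the idea of combining a transformer with a neural network more concrete, the following is a minimal sketch, not the authors' implementation. It applies single-head self-attention across per-device feature vectors (so that each device's representation can reflect its similarity to other devices) and then feeds a pooled representation into a small feed-forward head with two outputs. The feature layout, layer sizes, random untrained weights, and all function names are assumptions made purely for illustration; the outputs are therefore not meaningful estimates.

```python
import math
import random


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def matvec(W, x):
    """Multiply matrix W (list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def self_attention(X, Wq, Wk, Wv):
    """Single-head scaled dot-product self-attention over device vectors X."""
    Q = [matvec(Wq, x) for x in X]
    K = [matvec(Wk, x) for x in X]
    V = [matvec(Wv, x) for x in X]
    d = len(Q[0])
    outputs, weights = [], []
    for q in Q:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in K]
        w = softmax(scores)  # how strongly this device attends to each device
        weights.append(w)
        outputs.append([sum(wj * v[i] for wj, v in zip(w, V)) for i in range(d)])
    return outputs, weights


def mlp_head(h, W1, b1, W2, b2):
    """Two-layer feed-forward head with ReLU; returns two scalar outputs."""
    hidden = [max(0.0, z + b) for z, b in zip(matvec(W1, h), b1)]
    return [z + b for z, b in zip(matvec(W2, hidden), b2)]


def rand_matrix(rows, cols, rng):
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]


def estimate(X, p):
    """Attention over devices, mean pooling, then the feed-forward head."""
    H, attn = self_attention(X, p["Wq"], p["Wk"], p["Wv"])
    pooled = [sum(col) / len(H) for col in zip(*H)]
    latency, log_convergence = mlp_head(pooled, p["W1"], p["b1"], p["W2"], p["b2"])
    return latency, log_convergence, attn


rng = random.Random(0)
F, D, HID = 4, 8, 16  # assumed feature, attention, and hidden sizes
params = {
    "Wq": rand_matrix(D, F, rng), "Wk": rand_matrix(D, F, rng),
    "Wv": rand_matrix(D, F, rng),
    "W1": rand_matrix(HID, D, rng), "b1": [0.0] * HID,
    "W2": rand_matrix(2, HID, rng), "b2": [0.0, 0.0],
}

# Hypothetical normalized features per device:
# [reported bandwidth share, reported CPU share, dataset size, label-skew proxy]
devices = [
    [0.8, 0.5, 0.3, 0.2],
    [0.4, 0.9, 0.6, 0.7],
    [0.6, 0.2, 0.9, 0.1],
]

latency, log_convergence, attn = estimate(devices, params)
print("untrained latency output:", latency)
print("untrained log-convergence output:", log_convergence)
```

In a trained model of this kind, the weights would be fitted against measured SL latency and convergence, which is also where device-specific reporting deviations could be absorbed; the sketch only shows the data flow.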

2. System Description

2.1. System Model

2.2. Communication Model

2.3. Split Learning Process

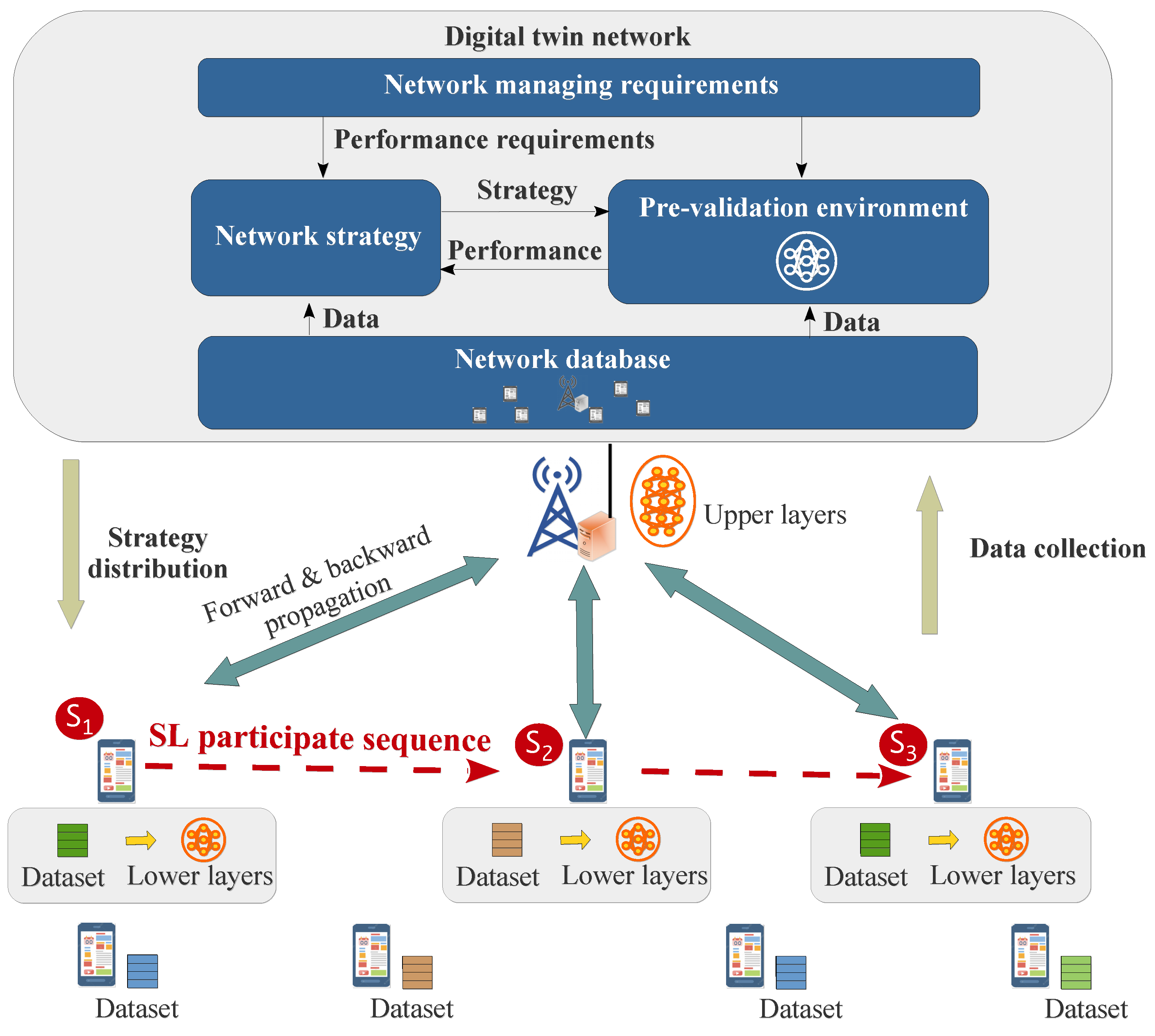

2.4. Digital Twin Network

3. Transformer-Based Pre-Validation Model for DTN

3.1. Problem Analysis for SL Convergence Estimation

3.2. Problem Analysis for SL Latency Estimation

3.3. Proposed Transformer-Based Pre-Validation Model

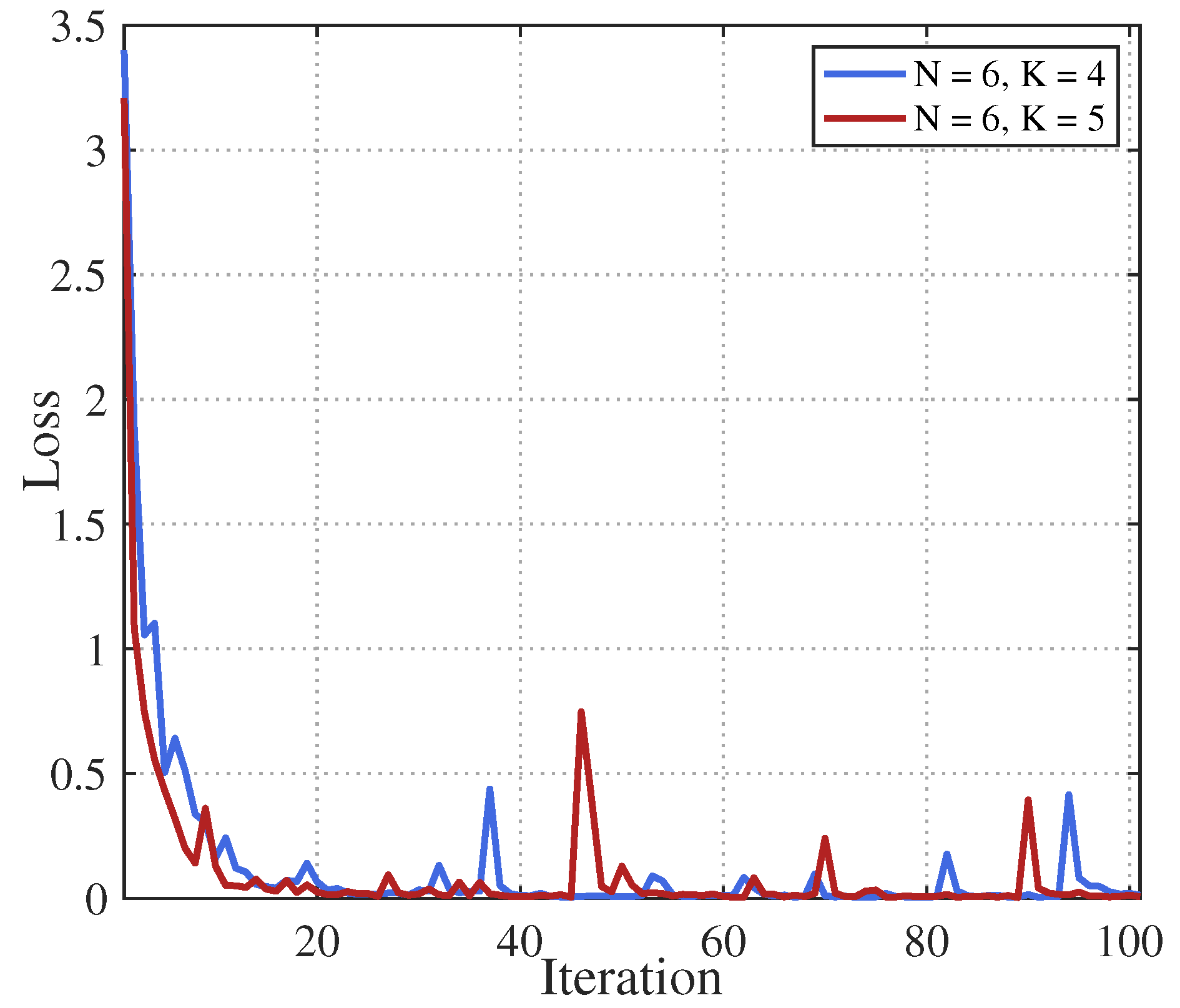

4. Simulation

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

| Parameter | Value | Parameter | Value |
|---|---|---|---|
| G cycles/s | 100 G cycles/s | | |
| B | 10 MHz | K | 64 |
| W | 88 W | | |
| 1 Mbits | 1 Mbits | | |
| 100 M cycles | 100 M cycles | | |
| 1 M cycles | 1 M cycles | | |
| 1 Gbits | | | |
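
As a rough illustration of what an equation-based latency estimate looks like, the sketch below adds a Shannon-rate transmission delay to a cycles-over-frequency computing delay. It is a generic textbook formulation, not the paper's model. The pairing of table values with roles (10 MHz as the bandwidth, 1 Mbits as the transmitted payload, 100 M cycles as the workload, 100 G cycles/s as the CPU frequency) and the 10 dB SNR are assumptions, since the parameter symbols are not recoverable from the table.

```python
import math


def transmission_latency(bits, bandwidth_hz, snr_linear):
    """Delay to send `bits` at the Shannon rate B * log2(1 + SNR)."""
    rate_bps = bandwidth_hz * math.log2(1.0 + snr_linear)
    return bits / rate_bps


def computing_latency(cycles, cpu_hz):
    """Delay to execute `cycles` CPU cycles at `cpu_hz` cycles per second."""
    return cycles / cpu_hz


# Assumed role assignment for the table values (hypothetical pairing).
BANDWIDTH_HZ = 10e6      # B = 10 MHz
PAYLOAD_BITS = 1e6       # 1 Mbits
WORKLOAD_CYCLES = 100e6  # 100 M cycles
CPU_HZ = 100e9           # 100 G cycles/s
SNR_LINEAR = 10 ** (10 / 10)  # assumed 10 dB SNR, not taken from the source

t_tx = transmission_latency(PAYLOAD_BITS, BANDWIDTH_HZ, SNR_LINEAR)
t_cp = computing_latency(WORKLOAD_CYCLES, CPU_HZ)
print(f"transmission: {t_tx:.4f} s, computing: {t_cp:.4f} s, total: {t_tx + t_cp:.4f} s")
```

An estimate of this form relies on the reported channel and computing values being accurate, so inaccurate CSI or deviations in occupied computing resources would carry directly into its error.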

| Latency estimation (s) | Deviant ratio | |
|---|---|---|
| Actual SL latency | / | |
| TransNeural algorithm | | |
| Equation-based algorithm | | |

| Natural logarithm of convergence estimation | Deviant ratio | |
|---|---|---|
| Actual SL divergence | / | |
| TransNeural algorithm | | |
| Equation-based algorithm | | |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).