Submitted: 08 April 2024

Posted: 09 April 2024


Abstract

Keywords:

1. Introduction

2. Related Works

2.1. Acne Detection

2.2. Semi-Supervised Learning

3. Method

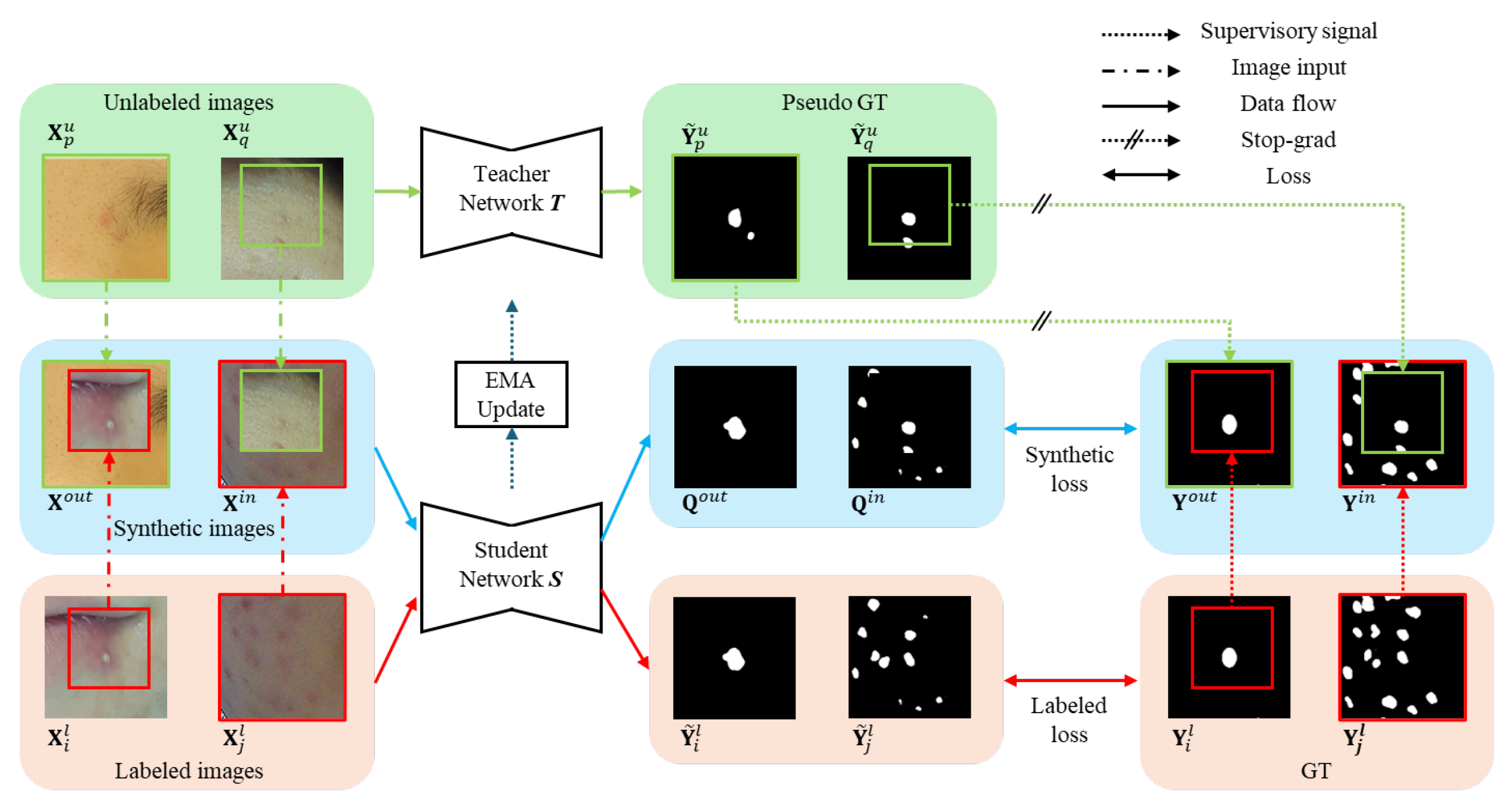

3.1. Overall Structure

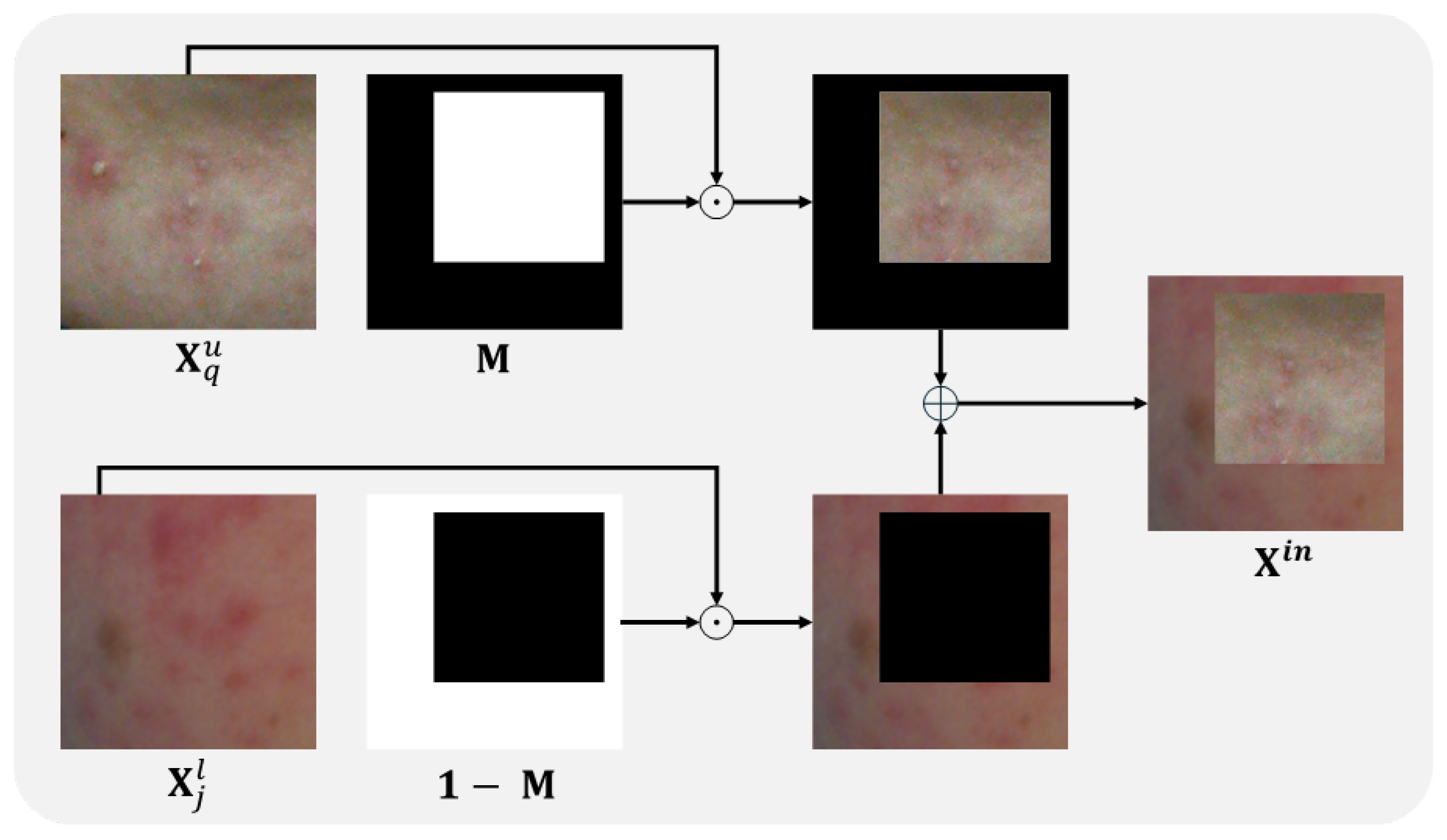

3.2. Bidirectional Copy-Paste for Synthetic Images

3.3. Pseudo Synthetic GT for Supervisory Signals

3.4. Loss Computation

4. Experimental Results

4.1. Experimental Setup

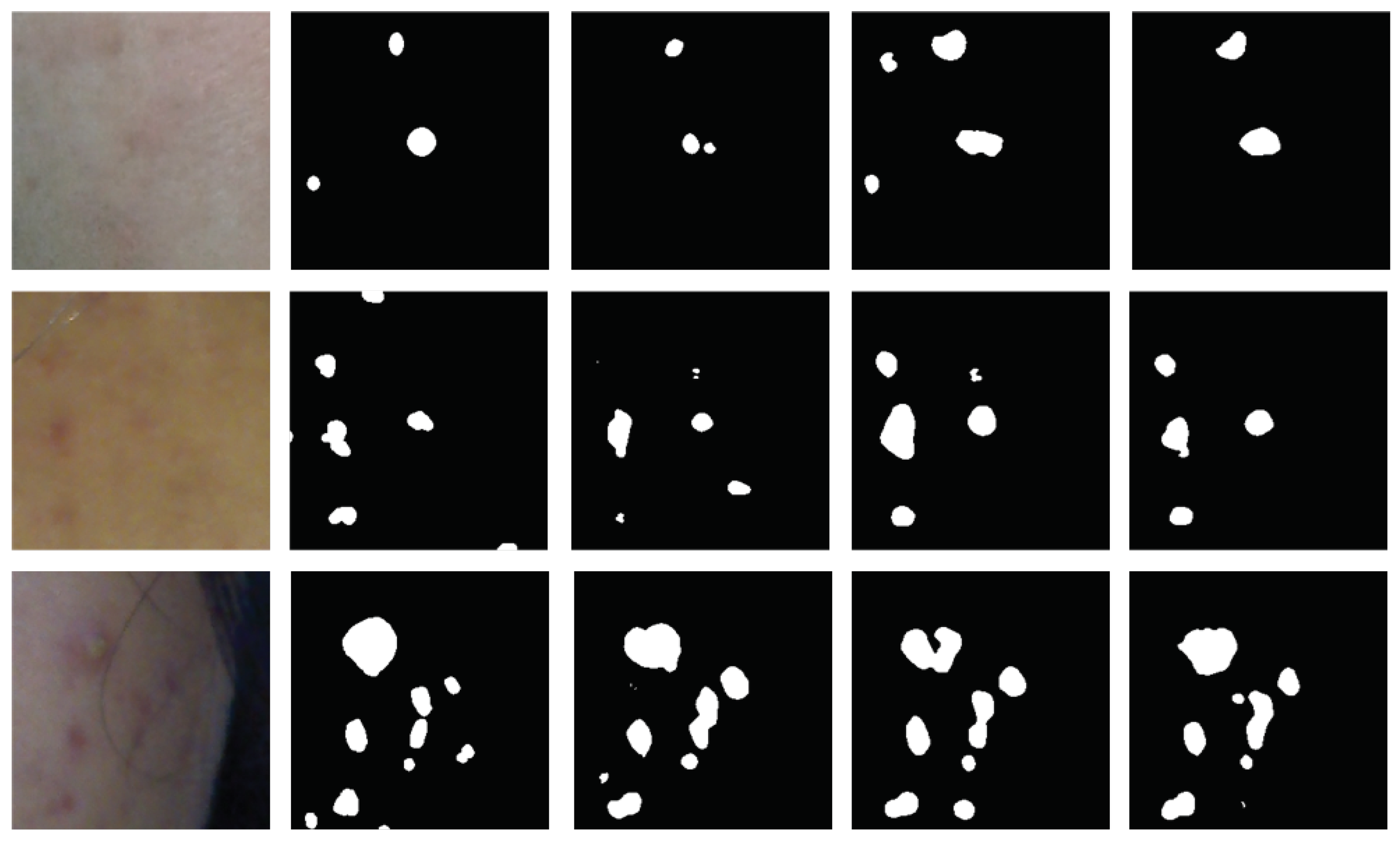

4.2. Comparison of Results

4.2.1. Comparison between Synthetic Images and Labeled Images for Pre-Trained Weight

4.2.2. Semi-Supervised Learning Comparison

5. Ablation Study

6. Conclusion

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Mekonnen, B., Hsieh, T., Tsai, D., Liaw, S., Yang, F. & Huang, S. Generation of Augmented Capillary Network Optical Coherence Tomography Image Data of Human Skin for Deep Learning and Capillary Segmentation. Diagnostics. 11 (2021), https://www.mdpi.com/2075-4418/11/4/685.

- Bekmirzaev, S., Oh, S. & Yo, S. RethNet: Object-by-Object Learning for Detecting Facial Skin Problems. Proceedings Of The IEEE/CVF International Conference On Computer Vision Workshops. pp. 0-0 (2019).

- Yoon, H., Kim, S., Lee, J. & Yoo, S. Deep-Learning-Based Morphological Feature Segmentation for Facial Skin Image Analysis. Diagnostics. 13 (2023), https://www.mdpi.com/2075-4418/13/11/1894.

- Lee, J., Yoon, H., Kim, S., Lee, C., Lee, J. & Yoo, S. Deep learning-based skin care product recommendation: A focus on cosmetic ingredient analysis and facial skin conditions. Journal Of Cosmetic Dermatology. (2024).

- Yadav, N., Alfayeed, S., Khamparia, A., Pandey, B., Thanh, D. & Pande, S. HSV model-based segmentation driven facial acne detection using deep learning. Expert Systems. 39, e12760 (2022).

- Rashataprucksa, K., Chuangchaichatchavarn, C., Triukose, S., Nitinawarat, S., Pongprutthipan, M. & Piromsopa, K. Acne Detection with Deep Neural Networks. Proceedings Of The 2020 2nd International Conference On Image Processing And Machine Vision. pp. 53-56 (2020).

- Huynh, Q., Nguyen, P., Le, H., Ngo, L., Trinh, N., Tran, M., Nguyen, H., Vu, N., Nguyen, A., Suda, K., Tsuji, K., Ishii, T., Ngo, T. & Ngo, H. Automatic Acne Object Detection and Acne Severity Grading Using Smartphone Images and Artificial Intelligence. Diagnostics. 12 (2022), https://www.mdpi.com/2075-4418/12/8/1879.

- Ren, S., He, K., Girshick, R. & Sun, J. Faster R-CNN: Towards Real-Time Object Detection with Region Proposal Networks. IEEE Transactions On Pattern Analysis And Machine Intelligence. 39, 1137-1149 (2017).

- Dai, J., Li, Y., He, K. & Sun, J. R-FCN: Object Detection via Region-based Fully Convolutional Networks. Advances In Neural Information Processing Systems. 29 (2016).

- Min, K., Lee, G. & Lee, S. ACNet: Mask-Aware Attention with Dynamic Context Enhancement for Robust Acne Detection. 2021 IEEE International Conference On Systems, Man, And Cybernetics (SMC). pp. 2724-2729 (2021).

- Junayed, M., Islam, M. & Anjum, N. A Transformer-Based Versatile Network for Acne Vulgaris Segmentation. 2022 Innovations In Intelligent Systems And Applications Conference (ASYU). pp. 1-6 (2022).

- Kim, S., Lee, C., Jung, G., Yoon, H., Lee, J. & Yoo, S. Facial Acne Segmentation based on Deep Learning with Center Point Loss. 2023 IEEE 36th International Symposium On Computer-Based Medical Systems (CBMS). pp. 678-683 (2023).

- Laine, S. & Aila, T. Temporal ensembling for semi-supervised learning. ArXiv Preprint ArXiv:1610.02242. (2016).

- Yun, S., Han, D., Oh, S., Chun, S., Choe, J. & Yoo, Y. CutMix: Regularization Strategy to Train Strong Classifiers With Localizable Features. Proceedings Of The IEEE/CVF International Conference On Computer Vision (ICCV). (2019,10).

- Bai, Y., Chen, D., Li, Q., Shen, W. & Wang, Y. Bidirectional Copy-Paste for Semi-Supervised Medical Image Segmentation. Proceedings Of The IEEE/CVF Conference On Computer Vision And Pattern Recognition (CVPR). pp. 11514-11524 (2023,6).

- Kim, S., Yoon, H., Lee, J. & Yoo, S. Semi-automatic Labeling and Training Strategy for Deep Learning-based Facial Wrinkle Detection. 2022 IEEE 35th International Symposium On Computer-Based Medical Systems (CBMS). pp. 383-388 (2022).

- Kim, S., Yoon, H., Lee, J. & Yoo, S. Facial wrinkle segmentation using weighted deep supervision and semi-automatic labeling. Artificial Intelligence In Medicine. 145 pp. 102679 (2023), https://www.sciencedirect.com/science/article/pii/S0933365723001938.

- Kang, S., Lozada, V., Bettoli, V., Tan, J., Rueda, M., Layton, A., Petit, L. & Dréno, B. New Atrophic Acne Scar Classification: Reliability of Assessments Based on Size, Shape, and Number. Journal Of Drugs In Dermatology: JDD. 15, 693-702 (2016,6), http://europepmc.org/abstract/MED/27272075.

- Sohn, K., Berthelot, D., Carlini, N., Zhang, Z., Zhang, H., Raffel, C., Cubuk, E., Kurakin, A. & Li, C. FixMatch: Simplifying Semi-Supervised Learning with Consistency and Confidence. Advances In Neural Information Processing Systems. 33 pp. 596-608 (2020).

- Chen, Y., Tan, X., Zhao, B., Chen, Z., Song, R., Liang, J. & Lu, X. Boosting Semi-Supervised Learning by Exploiting All Unlabeled Data. Proceedings Of The IEEE/CVF Conference On Computer Vision And Pattern Recognition (CVPR). pp. 7548-7557 (2023,6).

- Wu, Y., Wu, Z., Wu, Q., Ge, Z. & Cai, J. Exploring smoothness and class-separation for semi-supervised medical image segmentation. International Conference On Medical Image Computing And Computer-assisted Intervention. pp. 34-43 (2022).

- Yang, L., Qi, L., Feng, L., Zhang, W. & Shi, Y. Revisiting Weak-to-Strong Consistency in Semi-Supervised Semantic Segmentation. Proceedings Of The IEEE/CVF Conference On Computer Vision And Pattern Recognition (CVPR). pp. 7236-7246 (2023,6).

- Lumini. (2020). Lumini KIOSK V2 Home Page. [Online]. Available: https://www.lulu-lab.com/bbs/write.php?bo_table=product_en&sca=LUMINI+KIOSK. [Accessed: July 9, 2020].

| Method | Labeled Ratio | Unlabeled Ratio | Dice Score | Jaccard Index |
|---|---|---|---|---|
| Synthetic images | 3% | 0% | 0.4423 | 0.3108 |
| Labeled images | 3% | 0% | 0.4570 | 0.3203 |
| Synthetic images | 7% | 0% | 0.4784 | 0.3425 |
| Labeled images | 7% | 0% | 0.4951 | 0.3517 |

| Method | Labeled Ratio | Unlabeled Ratio | Dice Score | Jaccard Index |
|---|---|---|---|---|
| Pre-trained | 3% | 97% | 0.4570 | 0.3203 |
| SS-Net [21] | 3% | 97% | 0.4732 | 0.3333 |
| BCP [15] | 3% | 97% | 0.5054 | 0.3617 |
| Ours | 3% | 97% | 0.5251 | 0.3777 |
| Pre-trained | 7% | 93% | 0.4951 | 0.3517 |
| SS-Net [21] | 7% | 93% | 0.5162 | 0.3750 |
| BCP [15] | 7% | 93% | 0.5357 | 0.3912 |
| Ours | 7% | 93% | 0.5603 | 0.4117 |

| Method | Labeled Ratio | Unlabeled Ratio | Dice Score | Jaccard Index |
|---|---|---|---|---|
| 0.1 | 3% | 97% | 0.5177 | 0.3693 |
| 0.5 | 3% | 97% | 0.5251 | 0.3777 |
| 1.0 | 3% | 97% | 0.5205 | 0.3753 |
| 0.1 | 7% | 93% | 0.5522 | 0.4060 |
| 0.5 | 7% | 93% | 0.5603 | 0.4122 |
| 1.0 | 7% | 93% | 0.5588 | 0.4117 |

| Method | Labeled Ratio | Unlabeled Ratio | Dice Score | Jaccard Index |
|---|---|---|---|---|
| 16 (BCP [15]) | 3% | 97% | 0.5054 | 0.3617 |
| 16 (ours) | 3% | 97% | 0.5251 | 0.3777 |
| 32 (ours) | 3% | 97% | 0.5394 | 0.3912 |
| 64 (ours) | 3% | 97% | 0.5458 | 0.3965 |
| 16 (BCP [15]) | 7% | 93% | 0.5357 | 0.3912 |
| 16 (ours) | 7% | 93% | 0.5603 | 0.4117 |
| 32 (ours) | 7% | 93% | 0.5709 | 0.4233 |
| 64 (ours) | 7% | 93% | 0.5781 | 0.4271 |
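The tables above report two overlap metrics, the Dice score and the Jaccard index. As a reading aid, the following is a minimal sketch of how both are commonly computed for a single pair of binary segmentation masks. It assumes NumPy, and the function name `dice_and_jaccard` and the smoothing term `eps` are illustrative choices. It is not the evaluation code used for the reported numbers, and it does not address how scores are aggregated over a test set.

```python
import numpy as np


def dice_and_jaccard(pred, target, eps=1e-7):
    """Return (dice, jaccard) for two binary masks of the same shape.

    `eps` is an assumed smoothing term that avoids division by zero
    when both masks are empty.
    """
    pred = np.asarray(pred, dtype=bool)
    target = np.asarray(target, dtype=bool)

    # Pixels marked as foreground in both masks.
    intersection = np.logical_and(pred, target).sum()
    pred_area = pred.sum()
    target_area = target.sum()
    # Pixels marked as foreground in at least one mask.
    union = pred_area + target_area - intersection

    dice = (2.0 * intersection + eps) / (pred_area + target_area + eps)
    jaccard = (intersection + eps) / (union + eps)
    return float(dice), float(jaccard)


if __name__ == "__main__":
    # Toy 2x3 masks: 2 overlapping pixels, 3 foreground pixels in each mask.
    pred = [[1, 1, 0], [0, 1, 0]]
    target = [[1, 0, 0], [0, 1, 1]]
    dice, jaccard = dice_and_jaccard(pred, target)
    print(f"Dice = {dice:.4f}, Jaccard = {jaccard:.4f}")
```

For the toy masks the overlap is 2 pixels, each mask has 3 foreground pixels, and the union is 4, so the Dice score is about 0.667 and the Jaccard index is 0.5.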

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).