Submitted: 21 March 2024

Posted: 22 March 2024


Abstract

Keywords:

1. Introduction

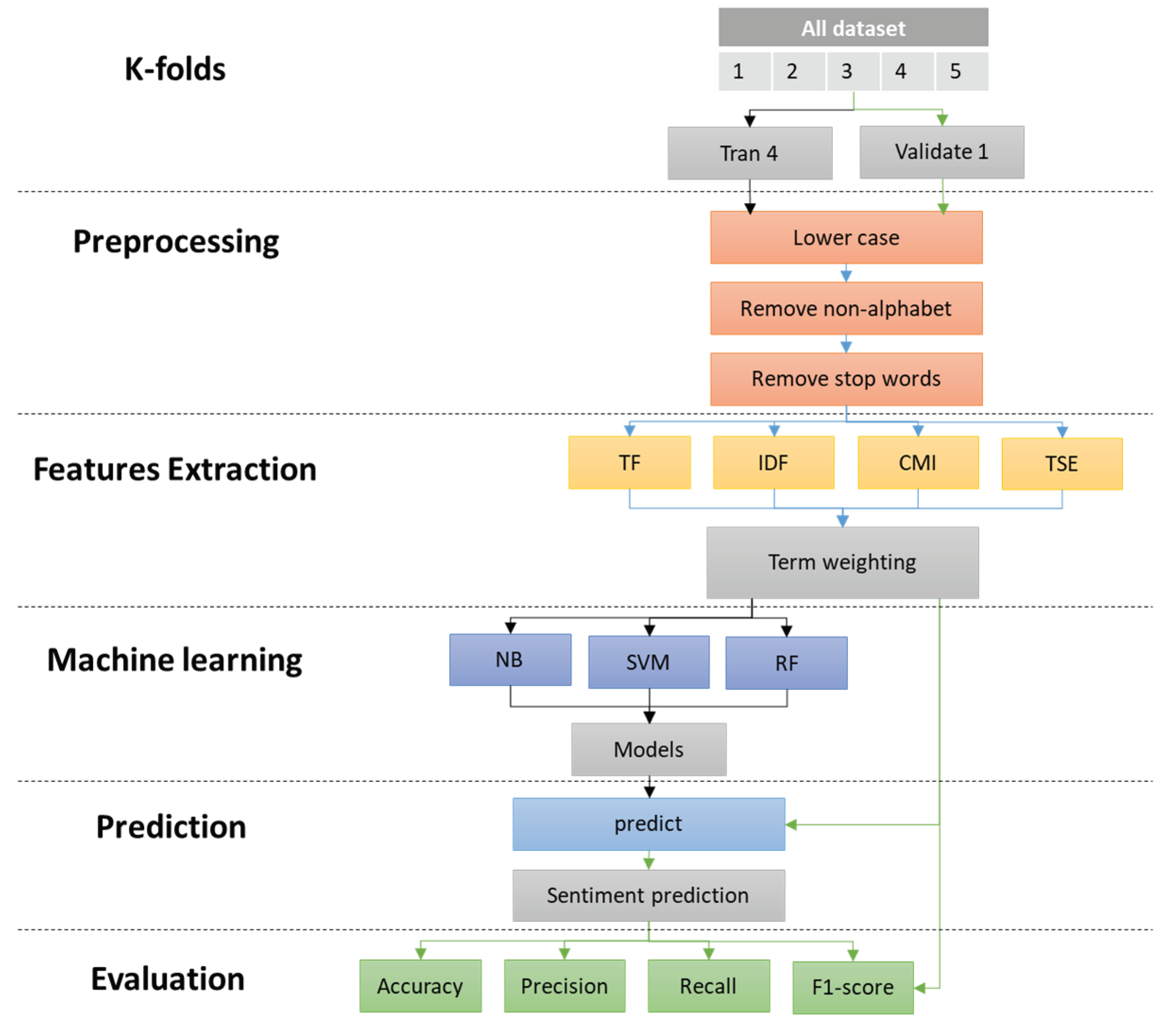

2. Materials and Methods

- Improved accuracy: Words with high entropy may be less informative for specific sentiment classes, so down-weighting them could lead to more accurate results.
- Domain adaptation: Words with low entropy may be specific to a certain domain (e.g., "scam" on review sites), and incorporating their entropy could improve sentiment analysis in that domain.
- Identifying nuanced sentiment: Words with high entropy may help capture subtle sentiment shifts within texts, enabling more fine-grained sentiment analysis.

- TF focuses on frequency: High-TF words appear often, but they may not be sentiment-specific.
- IDF focuses on uniqueness: High-IDF words are rare, but they may not be relevant to overall sentiment.
- Term sentiment entropy focuses on sentiment distribution: It captures how a word's sentiment varies across different contexts.
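The contrast above can be made concrete with a small sketch. It assumes, for illustration only, that term sentiment entropy is the Shannon entropy (in bits) of a term's occurrence counts across sentiment classes; the exact TSE definition used in this paper is not reproduced here, and the function name and the per-class count dictionary are hypothetical. Under this assumption, a term confined to one class scores 0, while a term spread evenly over k classes scores log2(k).

```python
import math


def term_sentiment_entropy(class_counts):
    """Shannon entropy (bits) of a term's occurrence distribution over sentiment classes.

    Illustrative assumption, not the paper's exact TSE formula.
    class_counts: hypothetical mapping from class label to how often the term
    occurs in documents of that class.
    """
    total = sum(class_counts.values())
    if total == 0:
        return 0.0  # term never observed: treat as carrying no distribution
    entropy = 0.0
    for count in class_counts.values():
        if count > 0:  # 0 * log(0) is taken as 0
            p = count / total
            entropy -= p * math.log2(p)
    return entropy


# A term used only in negative reviews: low entropy (sentiment-specific).
print(term_sentiment_entropy({"pos": 0, "neu": 0, "neg": 12}))  # 0.0
# A term spread evenly over three classes: maximal entropy, log2(3).
print(round(term_sentiment_entropy({"pos": 4, "neu": 4, "neg": 4}), 3))  # 1.585
```

A plain class-distribution entropy like this is bounded by log2 of the number of classes, so it is not intended to reproduce the TSE values reported in the tables.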

| Term | Count in d1 | Count in d2 | Count in d3 | Count in d4 | TF in d1 | TF in d2 | TF in d3 | TF in d4 |
|---|---|---|---|---|---|---|---|---|
| t1 | 5 | 2 | 3 | 4 | 0.56 | 0.25 | 0.43 | 0.50 |
| t2 | 3 | 5 | 3 | 4 | 0.33 | 0.63 | 0.43 | 0.50 |
| t3 | 0 | 1 | 0 | 0 | 0.00 | 0.13 | 0.00 | 0.00 |
| t4 | 1 | 0 | 0 | 0 | 0.11 | 0.00 | 0.00 | 0.00 |
| t5 | 0 | 0 | 1 | 0 | 0.00 | 0.00 | 0.14 | 0.00 |
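The TF columns in the table are consistent with normalising each raw count by the total number of terms in the same document (for example, t1 in d1: 5 / 9 ≈ 0.56). The minimal Python sketch below recomputes them from the counts; the dictionary layout and the function name are assumptions made for illustration.

```python
# Raw term counts from the table: rows are terms t1..t5, columns are documents d1..d4.
counts = {
    "t1": [5, 2, 3, 4],
    "t2": [3, 5, 3, 4],
    "t3": [0, 1, 0, 0],
    "t4": [1, 0, 0, 0],
    "t5": [0, 0, 1, 0],
}


def term_frequencies(counts):
    """Divide each term count by the total number of terms in that document."""
    n_docs = len(next(iter(counts.values())))
    doc_totals = [sum(row[j] for row in counts.values()) for j in range(n_docs)]
    return {
        term: [row[j] / doc_totals[j] if doc_totals[j] else 0.0 for j in range(n_docs)]
        for term, row in counts.items()
    }


tf = term_frequencies(counts)
for term, values in tf.items():
    print(term, [round(v, 2) for v in values])
```

Python's `round` uses round-half-to-even, so exact halves such as 5/8 = 0.625 print as 0.62 rather than the 0.63 shown in the table; the underlying ratios are the same.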

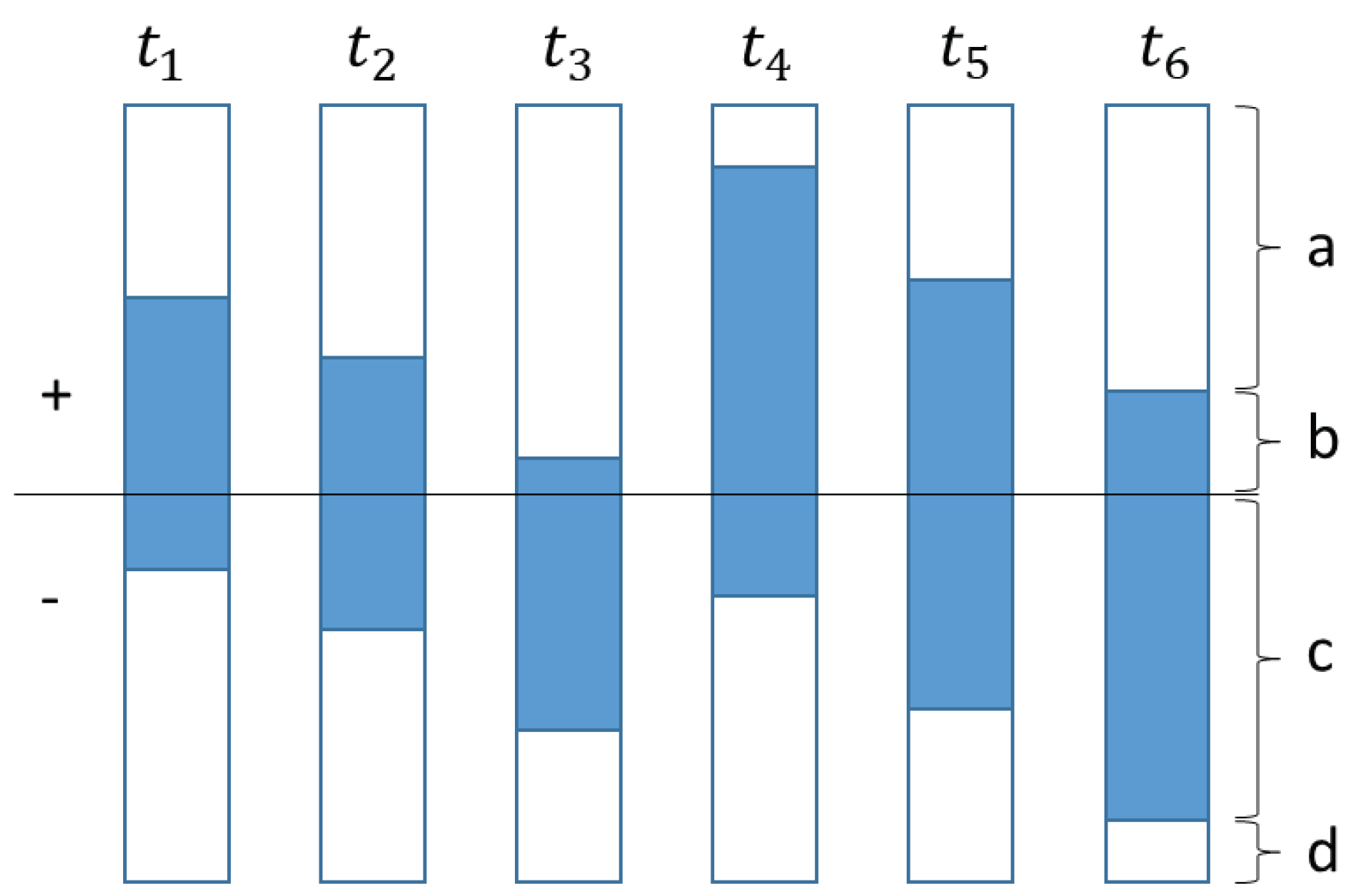

| Term | No. of documents (a) | No. of documents (b) | No. of documents (c) | No. of documents (d) | IDF | CMI | TSE |
|---|---|---|---|---|---|---|---|
| t1 | 3 | 3 | 1 | 5 | 0.33 | 0.12 | 4.09 |
| t2 | 4 | 2 | 2 | 4 | 0.33 | 0.08 | 3.32 |
| t3 | 5 | 1 | 3 | 3 | 0.33 | 0.04 | 4.09 |
| t4 | 1 | 5 | 1 | 5 | 0.50 | 0.13 | 5.11 |
| t5 | 3 | 3 | 3 | 3 | 0.50 | 0.08 | 3.32 |
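The combined schemes evaluated in the results (e.g., TF_IDF_CMI(pos)_TSE, written TF.IDF.CMI(pos) in the text) appear to join several per-term weights. The sketch below assumes, without confirmation from the method description, that a combined weight is the plain product of a term's TF in one document and its global IDF, CMI and TSE factors. It copies the global factors from the table above and, purely to illustrate the arithmetic, pairs them with the TF of t1 in d1 from the TF table, even though the two toy tables may describe different example collections. The function name `combined_weight` is hypothetical.

```python
# Per-term global factors copied from the table above (IDF, CMI, TSE).
global_weights = {
    "t1": {"IDF": 0.33, "CMI": 0.12, "TSE": 4.09},
    "t2": {"IDF": 0.33, "CMI": 0.08, "TSE": 3.32},
    "t3": {"IDF": 0.33, "CMI": 0.04, "TSE": 4.09},
    "t4": {"IDF": 0.50, "CMI": 0.13, "TSE": 5.11},
    "t5": {"IDF": 0.50, "CMI": 0.08, "TSE": 3.32},
}


def combined_weight(tf_value, term, factors):
    """Multiply a term's TF in one document by the selected global factors.

    Assumption: combined schemes such as TF_IDF_CMI(pos)_TSE are plain products.
    """
    weight = tf_value
    for name in factors:
        weight *= global_weights[term][name]
    return weight


# TF of t1 in d1 from the TF table (5 of the 9 terms in d1).
tf_t1_d1 = 5 / 9
for scheme in (["IDF"], ["IDF", "CMI"], ["IDF", "TSE"], ["IDF", "CMI", "TSE"]):
    print("TF_" + "_".join(scheme), round(combined_weight(tf_t1_d1, "t1", scheme), 4))
```

A multiplicative combination means any factor near zero (such as a small CMI) suppresses the term regardless of its other weights; other combination rules, such as weighted sums, would behave differently.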

- Amazon Cell Phones Reviews: (https://www.kaggle.com/datasets/grikomsn/amazon-cell-phones-reviews) This dataset features product reviews alongside their corresponding star ratings, providing a rich source of sentiment information within a specific domain.

- Coronavirus Tweets NLP - Text Classification: (https://www.kaggle.com/datasets/datatattle/covid-19-nlp-text-classification) This dataset comprises Twitter messages labeled with their sentiment regarding the COVID-19 pandemic. It presents an opportunity to analyze public opinion and emotional responses surrounding a critical real-world event.

- Twitter US Airline Sentiment: (https://www.kaggle.com/datasets/crowdflower/twitter-airline-sentiment) This dataset gathers tweets expressing travelers’ sentiments towards six major US airlines. Labeled with positive, neutral, or negative sentiment, it allows for comparative analysis and identification of factors influencing traveler satisfaction.

- IMDB Dataset of 50K Movie Reviews: (https://www.kaggle.com/datasets/lakshmi25npathi/imdb-dataset-of-50k-movie-reviews) This dataset consists of 50,000 movie reviews classified as positive or negative. It provides a well-established benchmark for sentiment analysis tasks and helps assess the proposed method’s performance on general sentiment classification.

3. Results

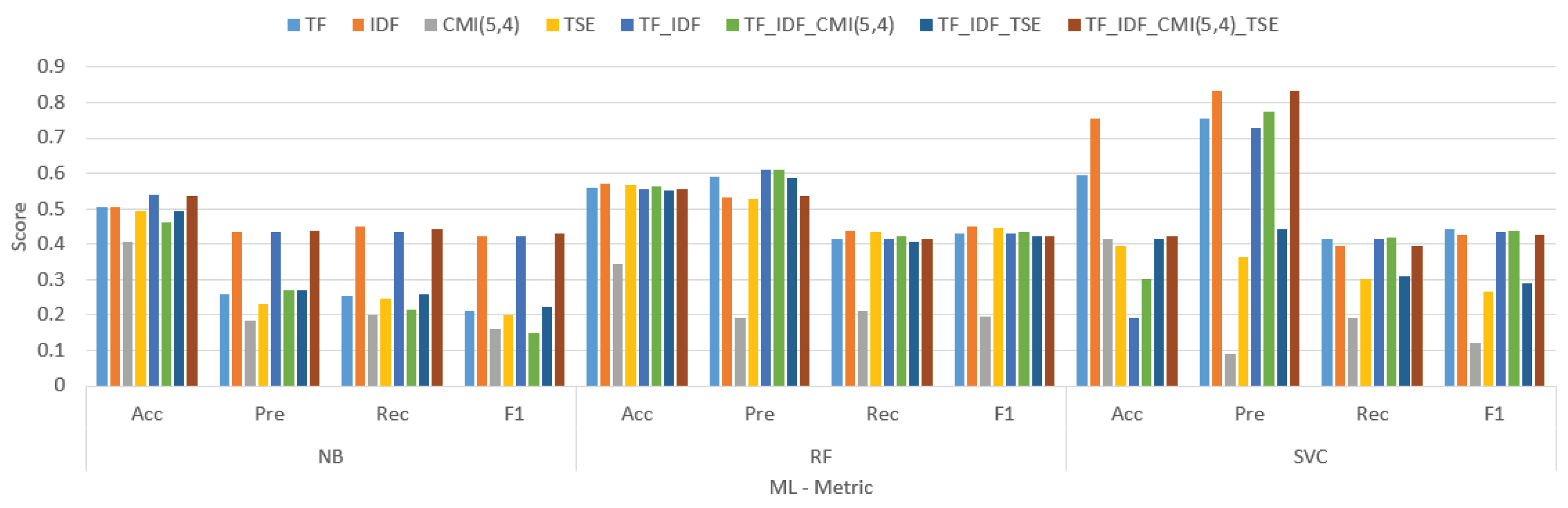

| Term weighting | NB Acc | NB Pre | NB Rec | NB F1 | RF Acc | RF Pre | RF Rec | RF F1 | SVC Acc | SVC Pre | SVC Rec | SVC F1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| TF | 0.505 | 0.259 | 0.256 | 0.211 | 0.559 | 0.592 | 0.414 | 0.429 | 0.594 | 0.756 | 0.416 | 0.441 |
| IDF | 0.504 | 0.435 | 0.448 | 0.421 | 0.571 | 0.532 | 0.438 | 0.45 | 0.756 | 0.835 | 0.394 | 0.426 |
| CMI(pos) | 0.408 | 0.185 | 0.201 | 0.162 | 0.343 | 0.19 | 0.211 | 0.194 | 0.416 | 0.092 | 0.192 | 0.12 |
| TSE | 0.491 | 0.23 | 0.246 | 0.198 | 0.569 | 0.529 | 0.436 | 0.447 | 0.394 | 0.363 | 0.303 | 0.266 |
| TF_IDF | 0.54 | 0.434 | 0.435 | 0.422 | 0.556 | 0.611 | 0.414 | 0.43 | 0.192 | 0.726 | 0.414 | 0.433 |
| TF_IDF_CMI(pos) | 0.461 | 0.271 | 0.215 | 0.15 | 0.564 | 0.609 | 0.421 | 0.435 | 0.303 | 0.776 | 0.42 | 0.437 |
| TF_IDF_TSE | 0.492 | 0.271 | 0.26 | 0.223 | 0.553 | 0.588 | 0.407 | 0.422 | 0.414 | 0.442 | 0.308 | 0.289 |
| TF_IDF_CMI(pos)_TSE | 0.534 | 0.439 | 0.442 | 0.43 | 0.554 | 0.535 | 0.413 | 0.422 | 0.422 | 0.835 | 0.395 | 0.427 |
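The results tables report accuracy (Acc), precision (Pre), recall (Rec) and F1 for each classifier. The tables do not state how precision, recall and F1 are averaged over sentiment classes; the minimal sketch below assumes macro averaging (an unweighted mean of per-class scores) and computes the four metrics from lists of true and predicted labels. The function name and the toy labels are hypothetical.

```python
from collections import Counter


def classification_metrics(y_true, y_pred):
    """Accuracy plus macro-averaged precision, recall and F1 (averaging is an assumption)."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("y_true and y_pred must be non-empty and of equal length")
    labels = sorted(set(y_true) | set(y_pred))
    tp = Counter(t for t, p in zip(y_true, y_pred) if t == p)  # correct predictions per class
    pred_count = Counter(y_pred)
    true_count = Counter(y_true)
    precisions, recalls, f1s = [], [], []
    for label in labels:
        # A class never predicted (or never present) scores 0 instead of dividing by zero.
        p = tp[label] / pred_count[label] if pred_count[label] else 0.0
        r = tp[label] / true_count[label] if true_count[label] else 0.0
        f = 2 * p * r / (p + r) if p + r else 0.0
        precisions.append(p)
        recalls.append(r)
        f1s.append(f)
    n = len(labels)
    return {
        "Acc": sum(tp.values()) / len(y_true),
        "Pre": sum(precisions) / n,
        "Rec": sum(recalls) / n,
        "F1": sum(f1s) / n,
    }


y_true = ["pos", "pos", "neg", "neg", "neu", "neu"]
y_pred = ["pos", "neg", "neg", "neg", "pos", "neu"]
print(classification_metrics(y_true, y_pred))
```

Because every class counts equally under macro averaging, the macro scores can move away from accuracy when classes are imbalanced or one class is frequently missed.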

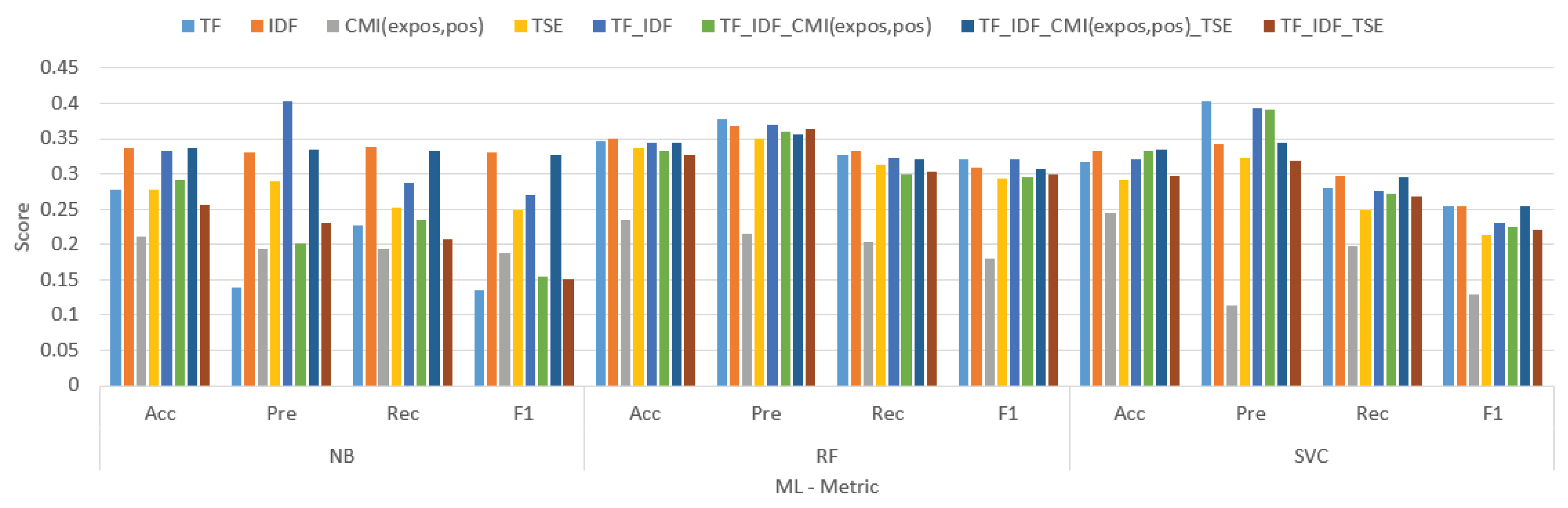

| Term weighting | NB Acc | NB Pre | NB Rec | NB F1 | RF Acc | RF Pre | RF Rec | RF F1 | SVC Acc | SVC Pre | SVC Rec | SVC F1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| TF | 0.277 | 0.139 | 0.227 | 0.135 | 0.346 | 0.377 | 0.327 | 0.32 | 0.317 | 0.403 | 0.28 | 0.255 |
| IDF | 0.336 | 0.33 | 0.339 | 0.33 | 0.35 | 0.367 | 0.332 | 0.309 | 0.333 | 0.343 | 0.297 | 0.255 |
| CMI(pos) | 0.211 | 0.194 | 0.194 | 0.188 | 0.234 | 0.216 | 0.203 | 0.18 | 0.244 | 0.113 | 0.197 | 0.129 |
| TSE | 0.277 | 0.289 | 0.252 | 0.249 | 0.337 | 0.35 | 0.313 | 0.293 | 0.291 | 0.323 | 0.249 | 0.213 |
| TF_IDF | 0.333 | 0.403 | 0.288 | 0.269 | 0.345 | 0.369 | 0.322 | 0.32 | 0.321 | 0.394 | 0.276 | 0.23 |
| TF_IDF_CMI(pos) | 0.292 | 0.201 | 0.235 | 0.155 | 0.332 | 0.36 | 0.3 | 0.295 | 0.333 | 0.392 | 0.272 | 0.224 |
| TF_IDF_TSE | 0.256 | 0.231 | 0.208 | 0.15 | 0.327 | 0.364 | 0.304 | 0.3 | 0.297 | 0.318 | 0.268 | 0.222 |
| TF_IDF_CMI(pos)_TSE | 0.337 | 0.334 | 0.332 | 0.326 | 0.344 | 0.356 | 0.32 | 0.308 | 0.334 | 0.344 | 0.296 | 0.255 |

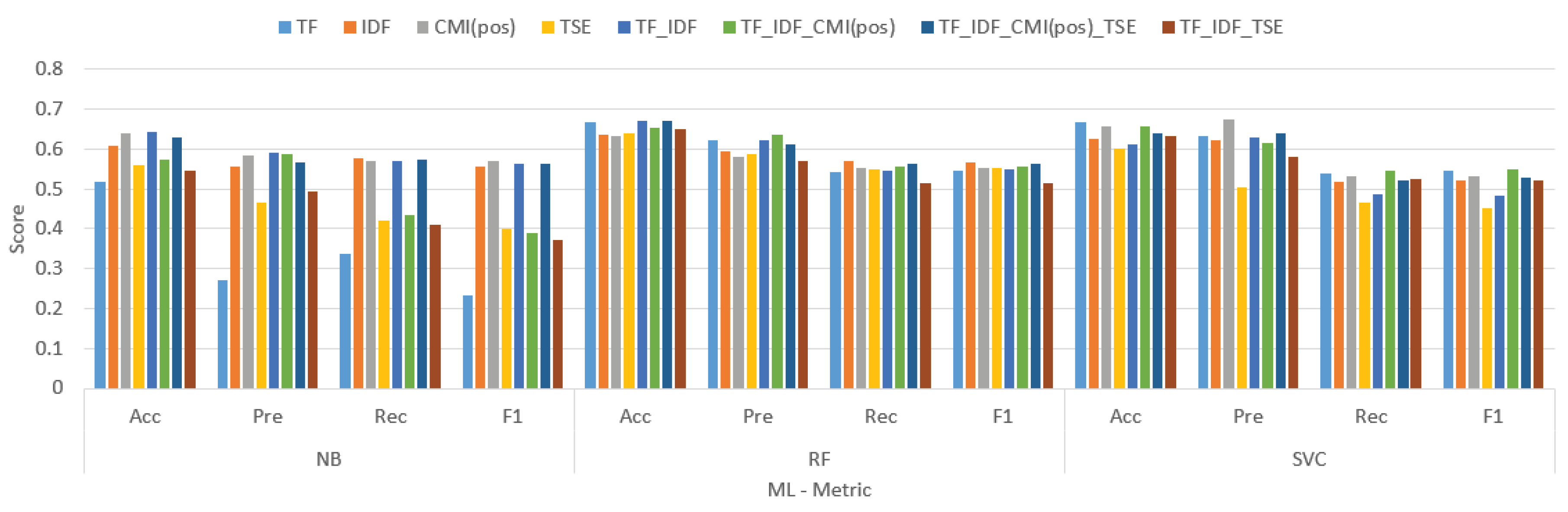

| Term weighting | NB Acc | NB Pre | NB Rec | NB F1 | RF Acc | RF Pre | RF Rec | RF F1 | SVC Acc | SVC Pre | SVC Rec | SVC F1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| TF | 0.517 | 0.272 | 0.337 | 0.232 | 0.668 | 0.622 | 0.542 | 0.545 | 0.668 | 0.633 | 0.539 | 0.545 |
| IDF | 0.608 | 0.557 | 0.576 | 0.557 | 0.635 | 0.593 | 0.569 | 0.568 | 0.627 | 0.622 | 0.518 | 0.521 |
| CMI(pos) | 0.64 | 0.585 | 0.569 | 0.569 | 0.632 | 0.581 | 0.553 | 0.553 | 0.658 | 0.675 | 0.531 | 0.532 |
| TSE | 0.561 | 0.467 | 0.42 | 0.4 | 0.638 | 0.586 | 0.55 | 0.552 | 0.603 | 0.504 | 0.465 | 0.453 |
| TF_IDF | 0.644 | 0.591 | 0.57 | 0.564 | 0.67 | 0.623 | 0.545 | 0.549 | 0.612 | 0.629 | 0.488 | 0.485 |
| TF_IDF_CMI(pos) | 0.575 | 0.588 | 0.435 | 0.39 | 0.653 | 0.636 | 0.558 | 0.557 | 0.657 | 0.615 | 0.546 | 0.551 |
| TF_IDF_TSE | 0.545 | 0.494 | 0.409 | 0.371 | 0.649 | 0.57 | 0.516 | 0.513 | 0.634 | 0.582 | 0.525 | 0.523 |
| TF_IDF_CMI(pos)_TSE | 0.629 | 0.568 | 0.572 | 0.563 | 0.672 | 0.612 | 0.562 | 0.562 | 0.638 | 0.641 | 0.521 | 0.528 |

- Naive Bayes (NB): TF.IDF.CMI(pos) achieved the highest F1 score (0.588), followed by TF.IDF (0.570) and TF (0.435).
- Support Vector Machines (SVM): TF.IDF.CMI(pos) again achieved the highest F1 score (0.623), followed by TF.IDF (0.612) and TF (0.586).
- Random Forest (RF): TF.IDF.CMI(pos) achieved the highest F1 score (0.641), followed by TF.IDF (0.629) and TF (0.570).
- SVM generally outperformed NB and RF in accuracy, precision, recall, and F1 score across the term-weighting methods. This suggests that SVM is a more robust model for this text classification task.

| Tokenize weight | NB | RF | SVC | |||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Acc | Pre | Rec | F1 | Acc | Pre | Rec | F1 | Acc | Pre | Rec | F1 | |

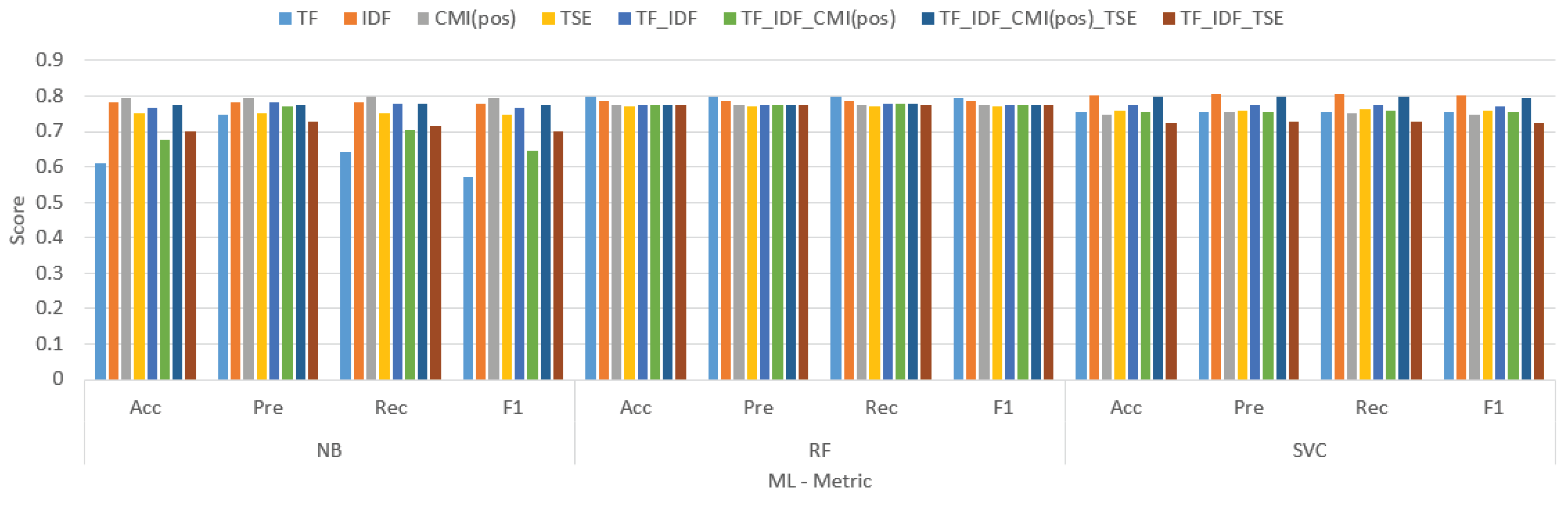

| TF | 0.609 | 0.746 | 0.643 | 0.573 | 0.796 | 0.796 | 0.797 | 0.795 | 0.754 | 0.753 | 0.755 | 0.753 |

| IDF | 0.781 | 0.781 | 0.783 | 0.78 | 0.788 | 0.788 | 0.788 | 0.787 | 0.803 | 0.804 | 0.806 | 0.802 |

| CMI(pos) | 0.794 | 0.793 | 0.796 | 0.793 | 0.775 | 0.774 | 0.776 | 0.774 | 0.749 | 0.753 | 0.752 | 0.748 |

| TSE | 0.75 | 0.75 | 0.752 | 0.749 | 0.771 | 0.769 | 0.77 | 0.769 | 0.76 | 0.76 | 0.763 | 0.759 |

| TF_IDF | 0.767 | 0.784 | 0.778 | 0.767 | 0.775 | 0.774 | 0.777 | 0.774 | 0.773 | 0.774 | 0.776 | 0.772 |

| TF_IDF_CMI(pos) | 0.677 | 0.771 | 0.703 | 0.645 | 0.774 | 0.775 | 0.777 | 0.773 | 0.755 | 0.755 | 0.757 | 0.754 |

| TF_IDF_TSE | 0.701 | 0.727 | 0.716 | 0.699 | 0.774 | 0.774 | 0.775 | 0.773 | 0.725 | 0.727 | 0.728 | 0.724 |

| TF_IDF_CMI(pos)_TSE | 0.776 | 0.776 | 0.778 | 0.775 | 0.775 | 0.774 | 0.777 | 0.774 | 0.796 | 0.797 | 0.799 | 0.795 |

- Naive Bayes (NB): TF.IDF.CMI(pos) achieved the highest F1 score (0.796), followed by TF.IDF (0.788) and TF (0.755).
- Support Vector Machines (SVM): TF.IDF.CMI(pos) again achieved the highest F1 score (0.806), followed by TF.IDF (0.803) and TF (0.776).
- Random Forest (RF): TF.IDF.CMI(pos) again achieved the highest F1 score (0.799), followed by TF.IDF (0.776) and TF (0.728).

4. Discussion

5. Conclusions


Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).