Submitted:

14 March 2024

Posted:

14 March 2024

Abstract

Keywords:

1. Introduction

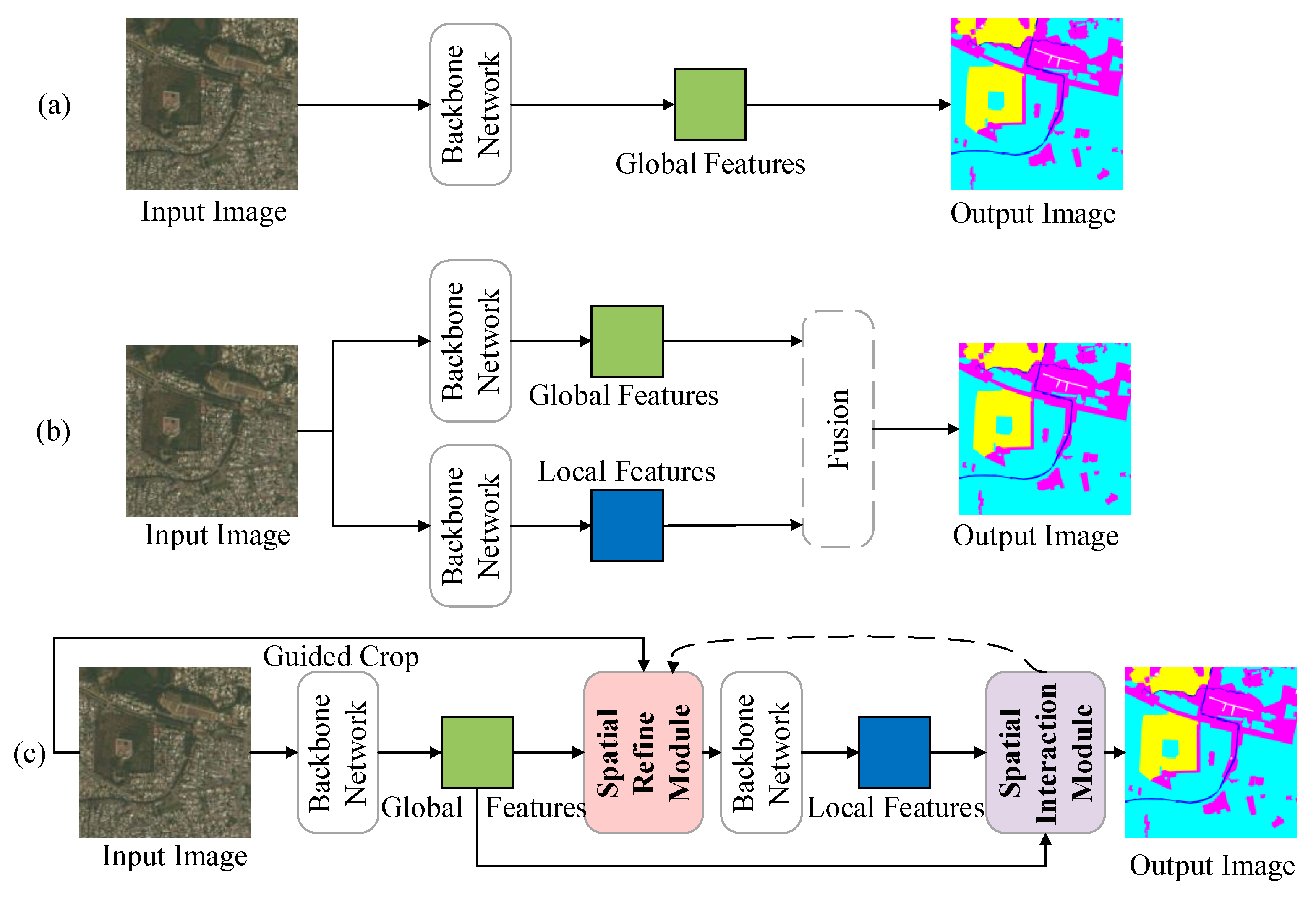

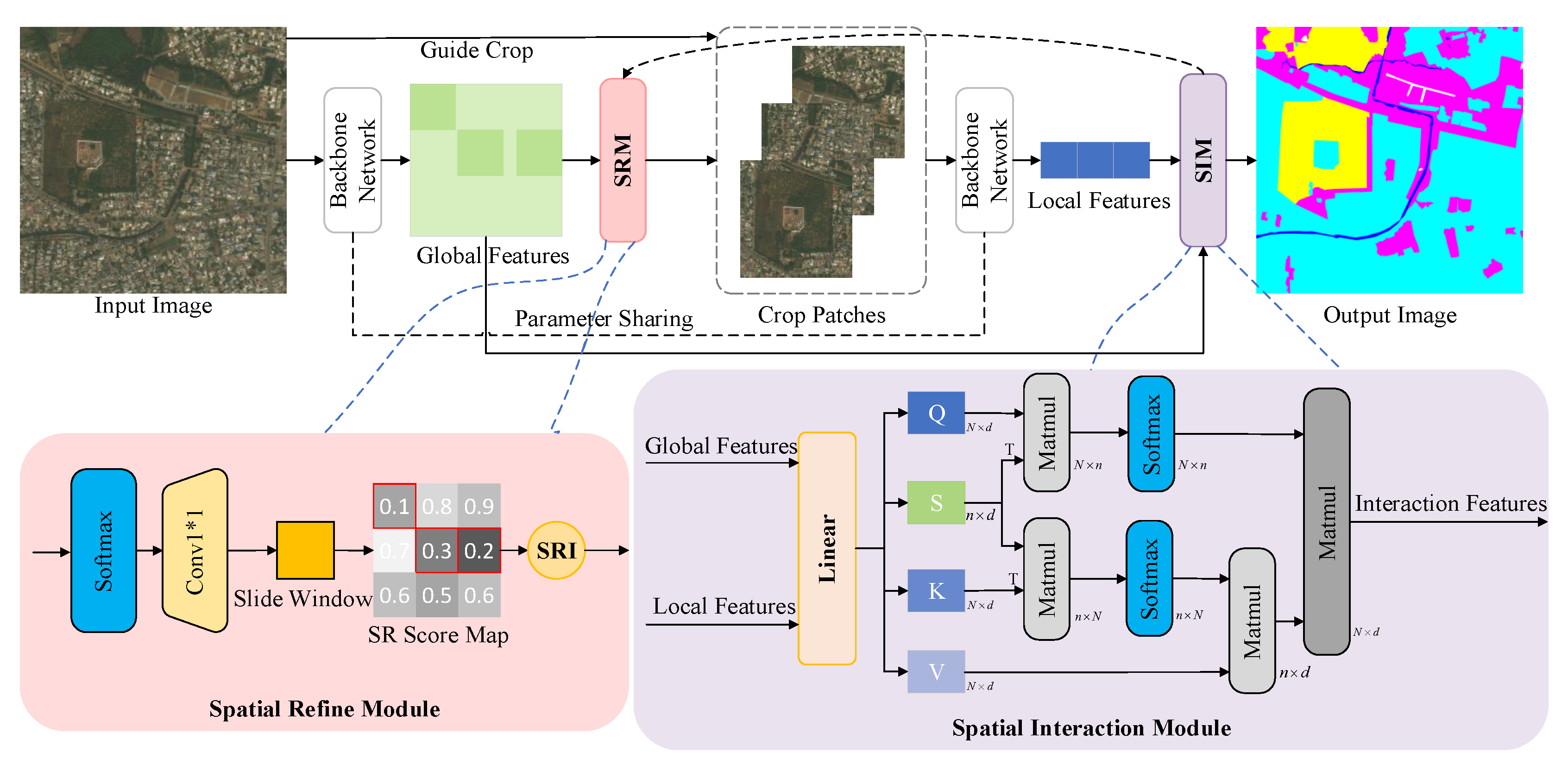

- We propose a novel spatially adaptive interaction network (SAINet) for filtering and dynamic interaction among different spatial features in the remote sensing semantic segmentation task.

- We present a new spatial refine module that uses local context information to filter spatial positions and extract salient regions.

- We devise a spatial interaction module whose adaptive modulation mechanism dynamically selects and allocates spatial position weights to focus on target areas.

- We demonstrate the effectiveness of our approach by achieving state-of-the-art semantic segmentation performance on three publicly available high-resolution remote sensing image datasets.
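To make the idea of weighting spatial positions by local context more concrete, here is a minimal, generic sketch of spatial gating on a single-channel feature map. It is not the authors' SAINet modules, whose details are not given in this section; the function names `local_mean` and `spatial_gate`, the 3×3 window, and the sigmoid gate are all assumptions chosen for illustration.

```python
import math

def local_mean(fmap, k=3):
    """Average each position over a k x k neighbourhood (clipped at borders)."""
    h, w = len(fmap), len(fmap[0])
    r = k // 2
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            vals = [fmap[j][i]
                    for j in range(max(0, y - r), min(h, y + r + 1))
                    for i in range(max(0, x - r), min(w, x + r + 1))]
            out[y][x] = sum(vals) / len(vals)
    return out

def spatial_gate(fmap, k=3):
    """Hypothetical gate: sigmoid of local context gives a weight per position."""
    ctx = local_mean(fmap, k)
    weights = [[1.0 / (1.0 + math.exp(-c)) for c in row] for row in ctx]
    gated = [[f * wt for f, wt in zip(frow, wrow)]
             for frow, wrow in zip(fmap, weights)]
    return weights, gated

# Toy 3x3 feature map with one strong response in the centre.
weights, gated = spatial_gate([[0, 0, 0], [0, 9, 0], [0, 0, 0]])
print(round(weights[1][1], 3), round(gated[1][1], 3))
```

In a real network the gate would typically be learned (for example with convolutions) rather than computed from a fixed average; the sketch only shows how per-position weights rescale a feature map.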

2. Related Works

2.1. Semantic Segmentation

2.2. Dual-Branch Architecture

3. Method

3.1. Overview

3.2. Spatial Refine Module

3.3. Spatial Interaction Module

3.4. Loss

4. Experiment

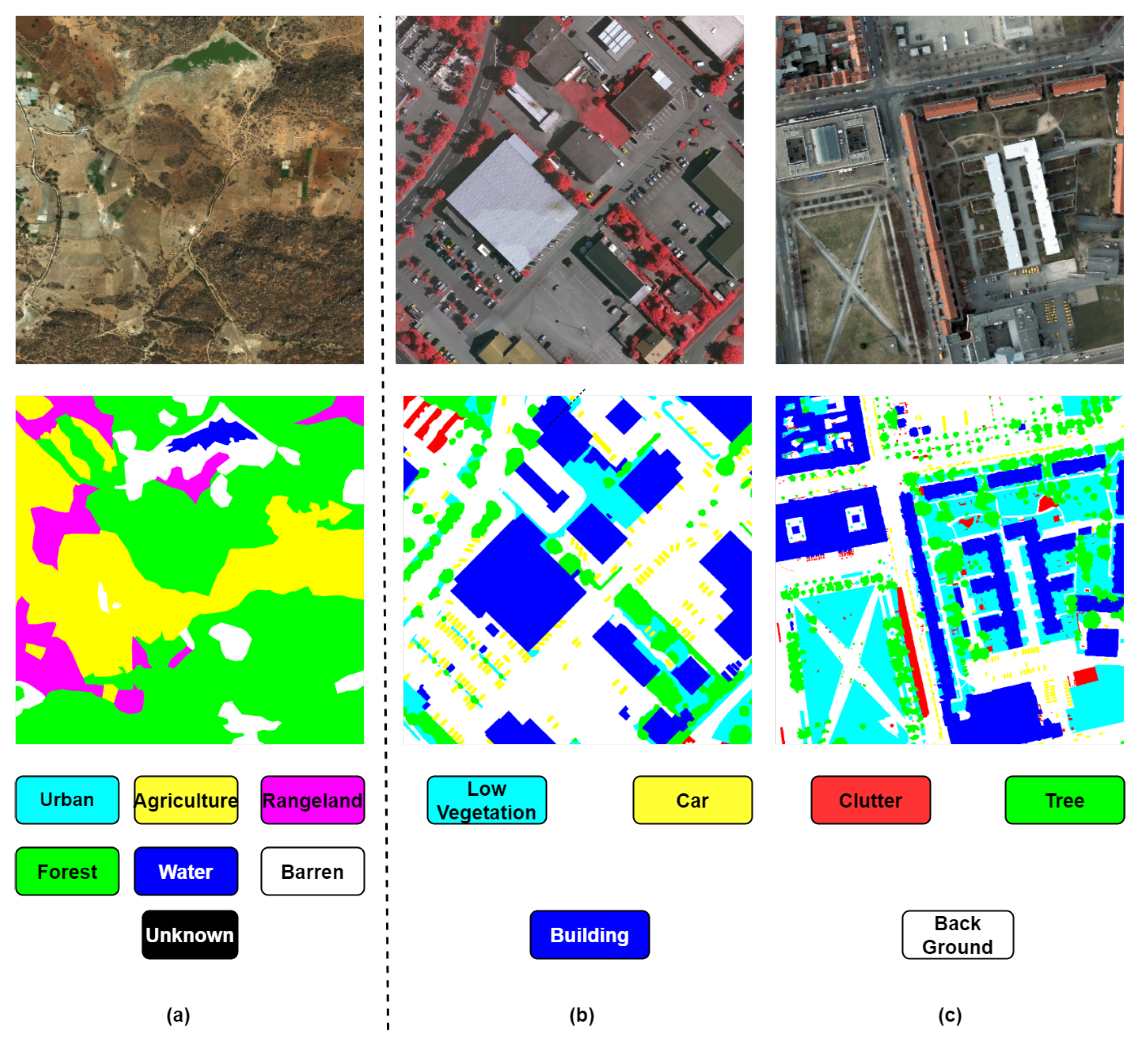

4.1. Datasets

| Dataset | Max Size | Number of Classes | % of 4K UHR Images | # Images |
|---|---|---|---|---|
| DeepGlobe [63] | 6M pixels (2448×2448) | 7 | 100% | 803 |
| Vaihingen * | 28M pixels (2494×2064) | 6 | 100% | 33 |
| Potsdam * | 103M pixels (6000×6000) | 6 | 100% | 38 |

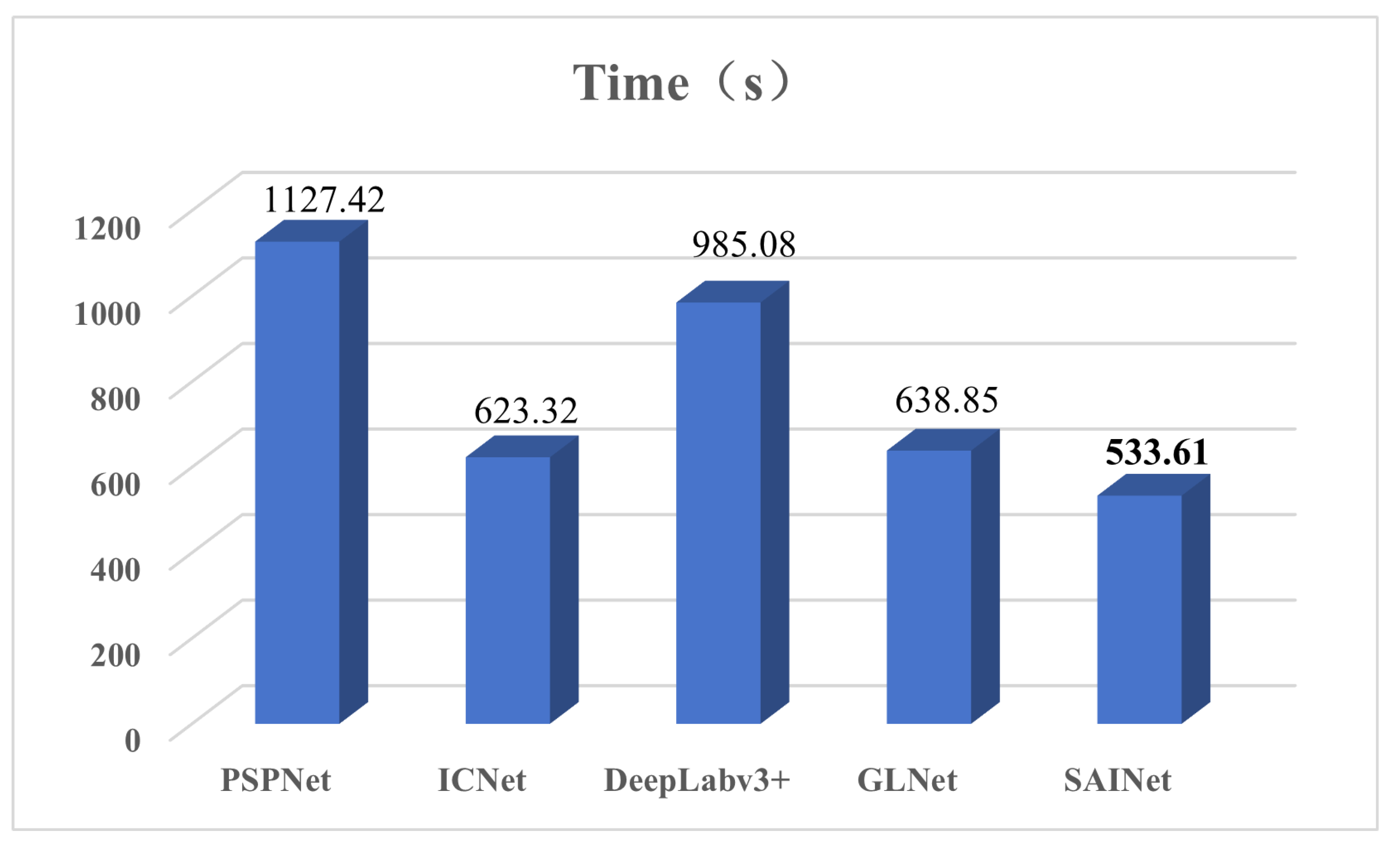

4.2. Experimental Settings

4.3. Evaluation Metrics
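The result tables report mIoU, mean F1, and overall accuracy (OA). As a minimal sketch, assuming the conventional definitions of these metrics, all three can be derived from a class confusion matrix. The function name `segmentation_metrics` and the toy matrix are illustrative assumptions, not taken from the paper.

```python
def segmentation_metrics(conf):
    """Compute OA, mIoU and mean F1 from a square confusion matrix.

    conf[i][j] is the number of pixels whose true class is i and whose
    predicted class is j (an assumed convention for this sketch).
    """
    n = len(conf)
    total = sum(sum(row) for row in conf)
    ious, f1s, correct = [], [], 0
    for c in range(n):
        tp = conf[c][c]
        fn = sum(conf[c]) - tp                       # true c, predicted other
        fp = sum(conf[r][c] for r in range(n)) - tp  # predicted c, true other
        correct += tp
        union = tp + fp + fn
        ious.append(tp / union if union else 0.0)
        denom = 2 * tp + fp + fn
        f1s.append(2 * tp / denom if denom else 0.0)
    return {
        "OA": correct / total if total else 0.0,
        "mIoU": sum(ious) / n,
        "mean_F1": sum(f1s) / n,
        "IoU": ious,
        "F1": f1s,
    }

# Toy two-class example.
print(segmentation_metrics([[3, 1], [1, 5]]))
```

Accumulating one confusion matrix over the whole test set and computing the metrics once is a common choice because it avoids averaging per-image scores of very different sizes.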

4.4. Results

4.4.1. Results on DeepGlobe

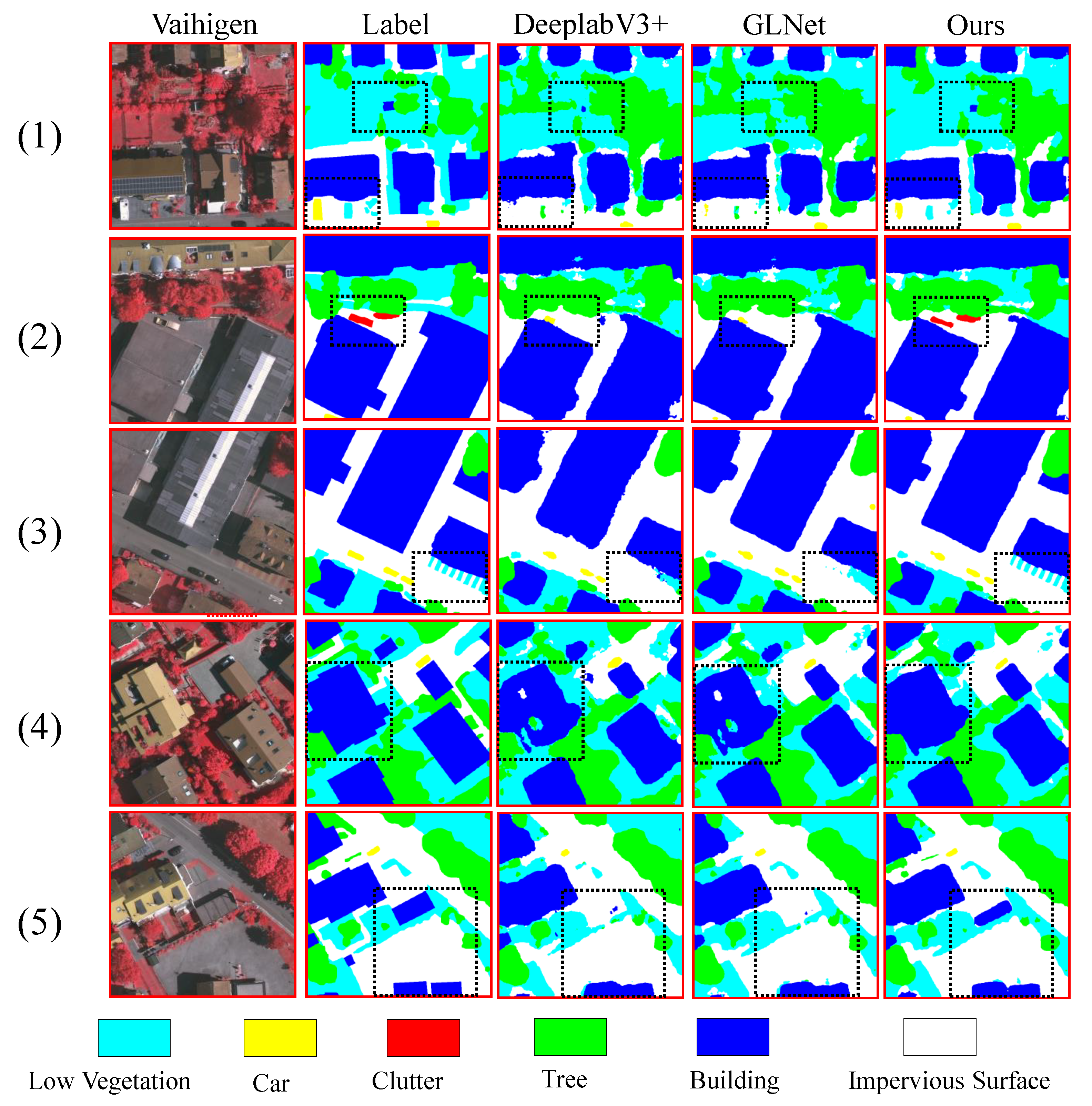

4.4.2. Results on Vaihingen

| Method | Barren | Rangeland | Forest | Water | Urban | Agriculture | mIoU (%) |
|---|---|---|---|---|---|---|---|
| ICNet [64] | 35.2 | 31.3 | 24.2 | 42.1 | 24.3 | 69.2 | 37.7 |
| FCN [12] | 36.1 | 36.4 | 35.5 | 38.9 | 26.2 | 72.3 | 40.9 |
| U-Net [13] | 34.1 | 33.5 | 28.2 | 45.1 | 26.1 | 71.7 | 39.8 |
| PSPNet [24] | 39.3 | 36.9 | 53.6 | 64.2 | 48.2 | 74.7 | 52.8 |
| SegNet [65] | 43.1 | 38.2 | 54.8 | 67.8 | 53.1 | 75.2 | 55.4 |
| DeepLabv3+ [9] | 44.1 | 42.2 | 56.3 | 68.4 | 54.1 | 77.3 | 57.1 |
| UHRSNet [16] | 81.2 | 61.3 | 72.3 | 70.1 | 78.9 | 68.4 | 72.0 |
| MBNet [17] | 64.1 | 87.3 | 41.5 | 80.6 | 83.1 | 78.9 | 72.6 |
| Baseline [15] | 63.6 | 38.6 | 79.8 | 82.6 | 78.1 | 86.8 | 71.6 |
| SAINet | 86.5 | 46.1 | 83.1 | 84.4 | 79.2 | 87.3 | 77.8 |
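As a quick worked check, the mIoU column in the table above appears to be the unweighted mean of the six per-class IoU values. The sketch below recomputes it for two rows copied from the table; the helper name `mean_iou` is an assumption introduced here.

```python
# Per-class IoU (%) copied from the table above, in column order:
# Barren, Rangeland, Forest, Water, Urban, Agriculture.
rows = {
    "Baseline": [63.6, 38.6, 79.8, 82.6, 78.1, 86.8],
    "SAINet": [86.5, 46.1, 83.1, 84.4, 79.2, 87.3],
}

def mean_iou(per_class):
    """Unweighted mean of per-class IoU, rounded to one decimal place."""
    return round(sum(per_class) / len(per_class), 1)

for name, values in rows.items():
    print(name, mean_iou(values))
```

Both recomputed values agree with the reported mIoU column (71.6 and 77.8).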

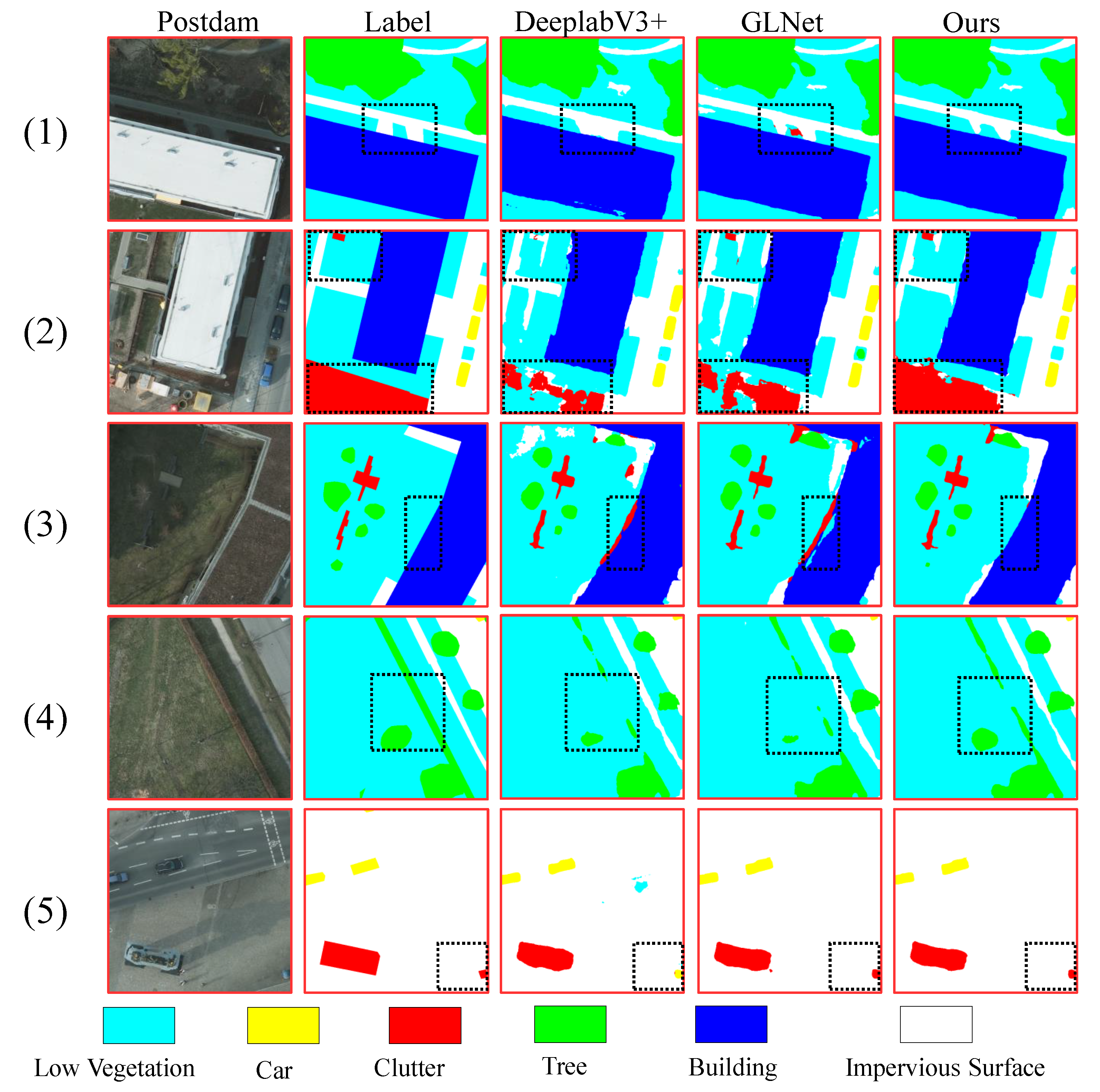

4.4.3. Results on Potsdam

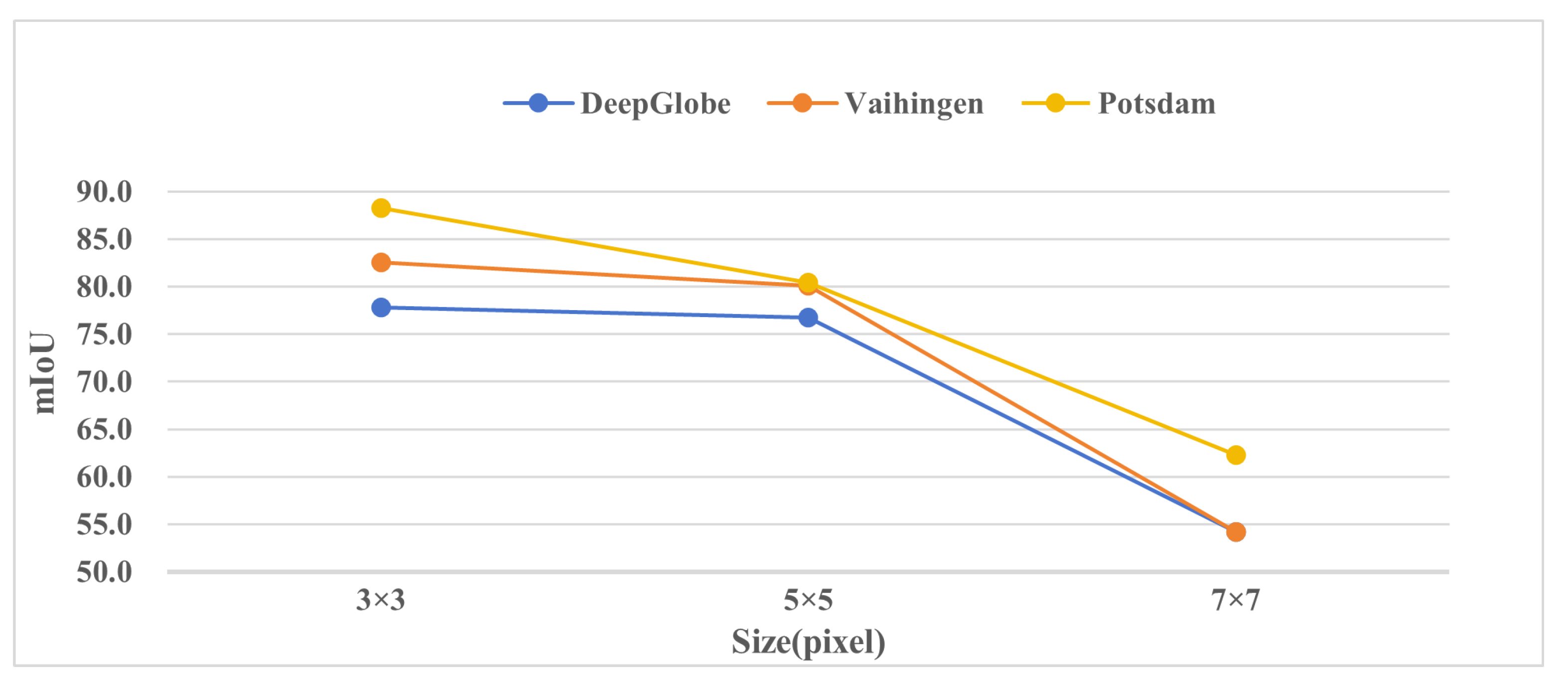

4.5. Ablation Studies and Analysis

4.6. Visual Results and Analysis

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

- Kussul, N.; Lavreniuk, M.; Skakun, S.; Shelestov, A. Deep learning classification of land cover and crop types using remote sensing data. IEEE Geoscience and Remote Sensing Letters 2017, 14, 778–782. [CrossRef]

- Milioto, A.; Lottes, P.; Stachniss, C. Real-time semantic segmentation of crop and weed for precision agriculture robots leveraging background knowledge in CNNs. 2018 IEEE international conference on robotics and automation (ICRA). IEEE, 2018, pp. 2229–2235.

- Malambo, L.; Popescu, S.; Ku, N.W.; Rooney, W.; Zhou, T.; Moore, S. A deep learning semantic segmentation-based approach for field-level sorghum panicle counting. Remote Sensing 2019, 11, 2939. [CrossRef]

- Martinez, J.A.C.; Oliveira, H.; dos Santos, J.A.; Feitosa, R.Q. Open set semantic segmentation for multitemporal crop recognition. IEEE Geoscience and Remote Sensing Letters 2021, 19, 1–5. [CrossRef]

- Tarasiou, M.; Güler, R.A.; Zafeiriou, S. Context-self contrastive pretraining for crop type semantic segmentation. IEEE Transactions on Geoscience and Remote Sensing 2022, 60, 1–17. [CrossRef]

- Paisitkriangkrai, S.; Sherrah, J.; Janney, P.; Hengel, V.D.; others. Effective semantic pixel labelling with convolutional networks and conditional random fields. Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition Workshops, 2015, pp. 36–43.

- Hu, J.; Huang, Z.; Shen, F.; He, D.; Xian, Q. A Bag of Tricks for Fine-Grained roof Extraction. IGARSS 2023-2023 IEEE International Geoscience and Remote Sensing Symposium. IEEE, 2023.

- Fu, G.; Liu, C.; Zhou, R.; Sun, T.; Zhang, Q. Classification for high resolution remote sensing imagery using a fully convolutional network. Remote Sensing 2017, 9, 498. [CrossRef]

- Chen, L.C.; Zhu, Y.; Papandreou, G.; Schroff, F.; Adam, H. Encoder-decoder with atrous separable convolution for semantic image segmentation. Proceedings of the European conference on computer vision (ECCV), 2018, pp. 801–818.

- Xu, Y.; Wu, L.; Xie, Z.; Chen, Z. Building extraction in very high resolution remote sensing imagery using deep learning and guided filters. Remote Sensing 2018, 10, 144. [CrossRef]

- Diakogiannis, F.I.; Waldner, F.; Caccetta, P.; Wu, C. ResUNet-a: A deep learning framework for semantic segmentation of remotely sensed data. ISPRS Journal of Photogrammetry and Remote Sensing 2020, 162, 94–114.

- Long, J.; Shelhamer, E.; Darrell, T. Fully convolutional networks for semantic segmentation. Proceedings of the IEEE conference on computer vision and pattern recognition, 2015, pp. 3431–3440.

- Ronneberger, O.; Fischer, P.; Brox, T. U-net: Convolutional networks for biomedical image segmentation. Medical Image Computing and Computer-Assisted Intervention–MICCAI 2015: 18th International Conference, Munich, Germany, October 5-9, 2015, Proceedings, Part III 18. Springer, 2015, pp. 234–241.

- Chen, L.C.; Papandreou, G.; Schroff, F.; Adam, H. Rethinking atrous convolution for semantic image segmentation. arXiv preprint arXiv:1706.05587 2017.

- Chen, W.; Jiang, Z.; Wang, Z.; Cui, K.; Qian, X. Collaborative global-local networks for memory-efficient segmentation of ultra-high resolution images. Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2019, pp. 8924–8933.

- Shan, L.; Li, M.; Li, X.; Bai, Y.; Lv, K.; Luo, B.; Chen, S.B.; Wang, W. Uhrsnet: A semantic segmentation network specifically for ultra-high-resolution images. 2020 25th International Conference on Pattern Recognition (ICPR). IEEE, 2021, pp. 1460–1466.

- Shan, L.; Wang, W. MBNet: A Multi-Resolution Branch Network for Semantic Segmentation Of Ultra-High Resolution Images. ICASSP 2022-2022 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE, 2022, pp. 2589–2593.

- Du, X.; He, S.; Yang, H.; Wang, C. Multi-Field Context Fusion Network for Semantic Segmentation of High-Spatial-Resolution Remote Sensing Images. Remote Sensing 2022, 14, 5830. [CrossRef]

- Chen, L.C.; Papandreou, G.; Kokkinos, I.; Murphy, K.; Yuille, A.L. Semantic image segmentation with deep convolutional nets and fully connected crfs. arXiv preprint arXiv:1412.7062 2014.

- Badrinarayanan, V.; Kendall, A.; Cipolla, R. SegNet: A deep convolutional encoder-decoder architecture for image segmentation. arXiv preprint arXiv:1511.00561 2015, 5. [CrossRef]

- Yu, F.; Koltun, V. Multi-scale context aggregation by dilated convolutions. arXiv preprint arXiv:1511.07122 2015.

- Chen, L.C.; Papandreou, G.; Kokkinos, I.; Murphy, K.; Yuille, A.L. Deeplab: Semantic image segmentation with deep convolutional nets, atrous convolution, and fully connected crfs. IEEE transactions on pattern analysis and machine intelligence 2017, 40, 834–848.

- Shen, F.; Zhu, J.; Zhu, X.; Huang, J.; Zeng, H.; Lei, Z.; Cai, C. An Efficient Multiresolution Network for Vehicle Reidentification. IEEE Internet of Things Journal 2021, 9, 9049–9059. [CrossRef]

- Zhao, H.; Shi, J.; Qi, X.; Wang, X.; Jia, J. Pyramid scene parsing network. Proceedings of the IEEE conference on computer vision and pattern recognition, 2017, pp. 2881–2890.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep Residual Learning for Image Recognition. Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2016.

- Fu, J.; Liu, J.; Tian, H.; Li, Y.; Bao, Y.; Fang, Z.; Lu, H. Dual attention network for scene segmentation. Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2019, pp. 3146–3154.

- Shen, F.; Wei, M.; Ren, J. HSGNet: Object Re-identification with Hierarchical Similarity Graph Network. arXiv preprint arXiv:2211.05486 2022.

- Liu, C.; Chen, L.C.; Schroff, F.; Adam, H.; Hua, W.; Yuille, A.L.; Fei-Fei, L. Auto-deeplab: Hierarchical neural architecture search for semantic image segmentation. Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2019, pp. 82–92.

- Shen, F.; Peng, X.; Wang, L.; Zhang, X.; Shu, M.; Wang, Y. HSGM: A Hierarchical Similarity Graph Module for Object Re-identification. 2022 IEEE International Conference on Multimedia and Expo (ICME). IEEE, 2022, pp. 1–6.

- Li, M.; Wei, M.; He, X.; Shen, F. Enhancing Part Features via Contrastive Attention Module for Vehicle Re-identification. 2022 IEEE International Conference on Image Processing (ICIP). IEEE, 2022, pp. 1816–1820.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention is all you need. Advances in neural information processing systems 2017, 30.

- Xu, R.; Shen, F.; Wu, H.; Zhu, J.; Zeng, H. Dual modal meta metric learning for attribute-image person re-identification. 2021 IEEE International Conference on Networking, Sensing and Control (ICNSC). IEEE, 2021, Vol. 1, pp. 1–6.

- Shen, F.; Xie, Y.; Zhu, J.; Zhu, X.; Zeng, H. Git: Graph interactive transformer for vehicle re-identification. IEEE Transactions on Image Processing 2023. [CrossRef]

- Xie, E.; Wang, W.; Yu, Z.; Anandkumar, A.; Alvarez, J.M.; Luo, P. SegFormer: Simple and efficient design for semantic segmentation with transformers. Advances in Neural Information Processing Systems 2021, 34, 12077–12090.

- Fu, X.; Shen, F.; Du, X.; Li, Z. Bag of Tricks for “Vision Meet Alage” Object Detection Challenge. 2022 6th International Conference on Universal Village (UV). IEEE, 2022, pp. 1–4.

- Dosovitskiy, A.; Beyer, L.; Kolesnikov, A.; Weissenborn, D.; Zhai, X.; Unterthiner, T.; Dehghani, M.; Minderer, M.; Heigold, G.; Gelly, S.; others. An image is worth 16x16 words: Transformers for image recognition at scale. arXiv preprint arXiv:2010.11929 2020.

- Wang, W.; Xie, E.; Li, X.; Fan, D.P.; Song, K.; Liang, D.; Lu, T.; Luo, P.; Shao, L. Pyramid vision transformer: A versatile backbone for dense prediction without convolutions. Proceedings of the IEEE/CVF international conference on computer vision, 2021, pp. 568–578. [CrossRef]

- Weng, W.; Ling, W.; Lin, F.; Ren, J.; Shen, F. A Novel Cross Frequency-domain Interaction Learning for Aerial Oriented Object Detection. Chinese Conference on Pattern Recognition and Computer Vision (PRCV). Springer, 2023.

- Qiao, C.; Shen, F.; Wang, X.; Wang, R.; Cao, F.; Zhao, S.; Li, C. A Novel Multi-Frequency Coordinated Module for SAR Ship Detection. 2022 IEEE 34th International Conference on Tools with Artificial Intelligence (ICTAI). IEEE, 2022, pp. 804–811.

- Liu, Z.; Lin, Y.; Cao, Y.; Hu, H.; Wei, Y.; Zhang, Z.; Lin, S.; Guo, B. Swin transformer: Hierarchical vision transformer using shifted windows. Proceedings of the IEEE/CVF international conference on computer vision, 2021, pp. 10012–10022.

- Wang, Z.; Cun, X.; Bao, J.; Zhou, W.; Liu, J.; Li, H. Uformer: A general u-shaped transformer for image restoration. Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2022, pp. 17683–17693.

- Yuan, L.; Chen, Y.; Wang, T.; Yu, W.; Shi, Y.; Jiang, Z.H.; Tay, F.E.; Feng, J.; Yan, S. Tokens-to-token vit: Training vision transformers from scratch on imagenet. Proceedings of the IEEE/CVF international conference on computer vision, 2021, pp. 558–567.

- Shen, F.; Du, X.; Zhang, L.; Tang, J. Triplet Contrastive Learning for Unsupervised Vehicle Re-identification. arXiv preprint arXiv:2301.09498 2023.

- Xiao, T.; Singh, M.; Mintun, E.; Darrell, T.; Dollár, P.; Girshick, R. Early convolutions help transformers see better. Advances in neural information processing systems 2021, 34, 30392–30400.

- Chen, Y.; Dai, X.; Chen, D.; Liu, M.; Dong, X.; Yuan, L.; Liu, Z. Mobile-former: Bridging mobilenet and transformer. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2022, pp. 5270–5279.

- Li, Y.; Yao, T.; Pan, Y.; Mei, T. Contextual transformer networks for visual recognition. IEEE Transactions on Pattern Analysis and Machine Intelligence 2022, 45, 1489–1500.

- Xia, Z.; Pan, X.; Song, S.; Li, L.E.; Huang, G. Vision transformer with deformable attention. Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2022, pp. 4794–4803.

- Liu, Z.; Mao, H.; Wu, C.Y.; Feichtenhofer, C.; Darrell, T.; Xie, S. A convnet for the 2020s. Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2022, pp. 11976–11986.

- Jin, C.; Tanno, R.; Xu, M.; Mertzanidou, T.; Alexander, D.C. Foveation for segmentation of ultra-high resolution images. arXiv preprint arXiv:2007.15124 2020.

- Shen, F.; Shu, X.; Du, X.; Tang, J. Pedestrian-specific Bipartite-aware Similarity Learning for Text-based Person Retrieval. Proceedings of the 31st ACM International Conference on Multimedia, 2023.

- Shan, L.; Li, X.; Wang, W. Decouple the high-frequency and low-frequency information of images for semantic segmentation. ICASSP 2021-2021 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE, 2021, pp. 1805–1809.

- Huynh, C.; Tran, A.T.; Luu, K.; Hoai, M. Progressive semantic segmentation. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2021, pp. 16755–16764.

- Hou, J.; Guo, Z.; Wu, Y.; Diao, W.; Xu, T. BSNet: Dynamic hybrid gradient convolution based boundary-sensitive network for remote sensing image segmentation. IEEE Transactions on Geoscience and Remote Sensing 2022, 60, 1–22. [CrossRef]

- Guo, S.; Liu, L.; Gan, Z.; Wang, Y.; Zhang, W.; Wang, C.; Jiang, G.; Zhang, W.; Yi, R.; Ma, L.; others. Isdnet: Integrating shallow and deep networks for efficient ultra-high resolution segmentation. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2022, pp. 4361–4370.

- Liu, J.; Shen, F.; Wei, M.; Zhang, Y.; Zeng, H.; Zhu, J.; Cai, C. A Large-Scale Benchmark for Vehicle Logo Recognition. 2019 IEEE 4th International Conference on Image, Vision and Computing (ICIVC). IEEE, 2019, pp. 479–483.

- Kamnitsas, K.; Ledig, C.; Newcombe, V.F.; Simpson, J.P.; Kane, A.D.; Menon, D.K.; Rueckert, D.; Glocker, B. Efficient multi-scale 3D CNN with fully connected CRF for accurate brain lesion segmentation. Medical image analysis 2017, 36, 61–78.

- Shen, F.; Lin, L.; Wei, M.; Liu, J.; Zhu, J.; Zeng, H.; Cai, C.; Zheng, L. A large benchmark for fabric image retrieval. 2019 IEEE 4th International Conference on Image, Vision and Computing (ICIVC). IEEE, 2019, pp. 247–251.

- Li, Y.; Wu, J.; Wu, Q. Classification of breast cancer histology images using multi-size and discriminative patches based on deep learning. IEEE Access 2019, 7, 21400–21408.

- Xie, Y.; Shen, F.; Zhu, J.; Zeng, H. Viewpoint robust knowledge distillation for accelerating vehicle re-identification. EURASIP Journal on Advances in Signal Processing 2021, 2021, 1–13. [CrossRef]

- Shen, F.; Zhu, J.; Zhu, X.; Xie, Y.; Huang, J. Exploring spatial significance via hybrid pyramidal graph network for vehicle re-identification. IEEE Transactions on Intelligent Transportation Systems 2021, 23, 8793–8804. [CrossRef]

- Katharopoulos, A.; Vyas, A.; Pappas, N.; Fleuret, F. Transformers are rnns: Fast autoregressive transformers with linear attention. International conference on machine learning. PMLR, 2020, pp. 5156–5165.

- Lin, T.Y.; Goyal, P.; Girshick, R.; He, K.; Dollár, P. Focal loss for dense object detection. Proceedings of the IEEE international conference on computer vision, 2017, pp. 2980–2988.

- Demir, I.; Koperski, K.; Lindenbaum, D.; Pang, G.; Huang, J.; Basu, S.; Hughes, F.; Tuia, D.; Raskar, R. Deepglobe 2018: A challenge to parse the earth through satellite images. Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition Workshops, 2018, pp. 172–181.

- Zhao, H.; Qi, X.; Shen, X.; Shi, J.; Jia, J. Icnet for real-time semantic segmentation on high-resolution images. Proceedings of the European conference on computer vision (ECCV), 2018, pp. 405–420.

- Badrinarayanan, V.; Kendall, A.; Cipolla, R. Segnet: A deep convolutional encoder-decoder architecture for image segmentation. IEEE transactions on pattern analysis and machine intelligence 2017, 39, 2481–2495. [CrossRef]

- Sun, H.; Pan, C.; He, L.; Xu, Z. A Full-Scale Feature Extraction Network for Semantic Segmentation of Remote Sensing Images. 2022 4th International Conference on Intelligent Control, Measurement and Signal Processing (ICMSP). IEEE, 2022, pp. 725–728.

- Chen, C.; Qian, Y.; Liu, H.; Yang, G. CLANET: a cross-linear attention network for semantic segmentation of urban scenes remote sensing images. International Journal of Remote Sensing 2023, 44, 7321–7337. [CrossRef]

- Wu, H.; Huang, P.; Zhang, M.; Tang, W. CTFNet: CNN-Transformer Fusion Network for Remote Sensing Image Semantic Segmentation. IEEE Geoscience and Remote Sensing Letters 2023. [CrossRef]

- Zhang, Y.; Cheng, J.; Bai, H.; Wang, Q.; Liang, X. Multilevel feature fusion and attention network for high-resolution remote sensing image semantic labeling. IEEE Geoscience and Remote Sensing Letters 2022, 19, 1–5. [CrossRef]

- Sun, X.; Qian, Y.; Cao, R.; Tuerxun, P.; Hu, Z. BGFNet: Semantic Segmentation Network Based on Boundary Guidance. IEEE Geoscience and Remote Sensing Letters 2023. [CrossRef]

- Hu, J.; Shen, L.; Sun, G. Squeeze-and-excitation networks. Proceedings of the IEEE conference on computer vision and pattern recognition, 2018, pp. 7132–7141.

- Jaderberg, M.; Simonyan, K.; Zisserman, A.; others. Spatial transformer networks. Advances in neural information processing systems 2015, 28.

- Woo, S.; Park, J.; Lee, J.Y.; Kweon, I.S. Cbam: Convolutional block attention module. Proceedings of the European conference on computer vision (ECCV), 2018, pp. 3–19.

| Method | Impervious Surface | Building | Low Vegetation | Tree | Car | mean F1 | OA | mIoU |
|---|---|---|---|---|---|---|---|---|
| DeepLabv3+ [9] | 91.4 | 94.7 | 79.6 | 87.6 | 85.8 | 87.8 | 89.9 | 79.0 |
| PSPNet [24] | 90.6 | 94.3 | 79.0 | 87.0 | 70.7 | 84.3 | 89.1 | 74.1 |
| FSFNet [66] | 91.9 | 94.8 | 82.5 | 89.0 | 85.4 | 88.7 | 89.8 | - |
| CLANet [67] | 91.5 | 94.6 | 82.9 | 89.5 | 87.3 | 89.2 | 89.8 | 80.7 |
| CTFNet [68] | 90.7 | 94.4 | 81.7 | 87.3 | 82.7 | 87.4 | 88.6 | 77.8 |
| MFANet [69] | 92.7 | 95.8 | 84.2 | 89.3 | 84.7 | 89.3 | 90.6 | 81.6 |
| BGFNet [70] | 92.9 | 95.5 | 84.4 | 89.6 | 86.7 | 89.8 | 90.6 | 81.7 |
| Baseline [15] | 88.3 | 93.0 | 79.7 | 87.1 | 83.3 | 89.0 | 86.3 | 78.7 |
| SAINet | 92.5 | 96.5 | 85.9 | 89.3 | 89.4 | 90.7 | 91.9 | 82.5 |

| Method | Impervious Surface | Building | Low Vegetation | Tree | Car | mean F1 | OA | mIoU |
|---|---|---|---|---|---|---|---|---|
| DeepLabv3+ [9] | 91.3 | 94.8 | 84.2 | 86.6 | 93.8 | 90.1 | 89.2 | 73.8 |
| PSPNet [24] | 90.7 | 94.8 | 84.1 | 85.9 | 90.5 | 89.2 | 88.7 | 71.7 |
| FSFNet [66] | 92.2 | 95.0 | 85.2 | 87.2 | 95.5 | 91.0 | 89.3 | - |
| CLANet [67] | 92.1 | 96.3 | 86.8 | 88.4 | 95.4 | 91.8 | 90.3 | 85.1 |
| CTFNet [68] | 91.5 | 96.3 | 86.0 | 87.2 | 92.5 | 90.7 | 89.4 | 83.2 |
| MFANet [69] | 93.1 | 96.6 | 87.0 | 88.0 | 94.9 | 91.9 | 90.9 | 88.3 |
| BGFNet [70] | 95.3 | 96.3 | 88.8 | 88.6 | 95.6 | 92.9 | 92.2 | 86.9 |
| Baseline [15] | 90.3 | 94.5 | 79.4 | 87.2 | 84.4 | 89.5 | 87.6 | 79.3 |
| SAINet | 95.4 | 96.7 | 89.5 | 88.6 | 96.2 | 93.3 | 92.5 | 88.3 |

| Dataset | Model | Spatial Refine Module | Spatial Interaction Module | mIoU |
|---|---|---|---|---|
| DeepGlobe | Baseline | × | × | 71.6 |
| DeepGlobe | Ours | ✓ | × | 65.7 |
| DeepGlobe | Ours | × | ✓ | 72.4 |
| DeepGlobe | Ours | ✓ | ✓ | 77.8 |
| Vaihingen | Baseline | × | × | 78.7 |
| Vaihingen | Ours | ✓ | × | 75.6 |
| Vaihingen | Ours | × | ✓ | 78.9 |
| Vaihingen | Ours | ✓ | ✓ | 82.5 |
| Potsdam | Baseline | × | × | 79.3 |
| Potsdam | Ours | ✓ | × | 70.3 |
| Potsdam | Ours | × | ✓ | 79.6 |
| Potsdam | Ours | ✓ | ✓ | 88.3 |
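To read the ablation table more easily, the following sketch computes each configuration's mIoU change relative to the baseline on the same dataset. The numbers are copied from the table; the dictionary keys (`refine_only`, `interaction_only`, `full`) and the helper `gains_over_baseline` are labels introduced here for illustration.

```python
# mIoU values copied from the ablation table above.
ablation = {
    "DeepGlobe": {"baseline": 71.6, "refine_only": 65.7,
                  "interaction_only": 72.4, "full": 77.8},
    "Vaihingen": {"baseline": 78.7, "refine_only": 75.6,
                  "interaction_only": 78.9, "full": 82.5},
    "Potsdam": {"baseline": 79.3, "refine_only": 70.3,
                "interaction_only": 79.6, "full": 88.3},
}

def gains_over_baseline(row):
    """mIoU difference (percentage points) of each variant vs. the baseline."""
    base = row["baseline"]
    return {name: round(value - base, 1)
            for name, value in row.items() if name != "baseline"}

for dataset, row in ablation.items():
    print(dataset, gains_over_baseline(row))
```

On these figures, the refine module alone scores below the baseline on all three datasets, the interaction module alone gives a small gain, and the combination gives the largest gain (6.2, 3.8, and 9.0 points respectively).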

| Method / Dataset | DeepGlobe | Vaihingen | Potsdam |
|---|---|---|---|
| CAM [71] | 71.9 | 78.6 | 79.0 |
| SAM [72] | 71.5 | 78.3 | 78.9 |
| CBAM [73] | 72.3 | 79.1 | 79.7 |
| Ours | 77.8 | 82.5 | 88.3 |

| Method / Patch Size | 500×500 | 400×400 | 256×256 | 128×128 |
|---|---|---|---|---|
| GLNet | 71.6 | 70.6 | 66.4 | 64.6 |
| Ours | 77.8 | 77.1 | 75.7 | 74.3 |
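For a sense of scale, the sketch below counts how many patches of each size in the table above are needed to cover one 2448×2448 image (the DeepGlobe size listed in the dataset table). The tiling scheme is an assumption: non-overlapping tiles, with the last tile in each direction shifted back to stay inside the image. The paper's actual cropping strategy is not stated in this section, and `tile_boxes` is a hypothetical helper.

```python
def tile_boxes(height, width, patch):
    """Return (top, left, bottom, right) boxes covering the whole image."""
    def starts(length):
        if length <= patch:
            return [0]
        s = list(range(0, length - patch + 1, patch))
        if s[-1] + patch < length:
            s.append(length - patch)  # shift the final tile back in bounds
        return s

    return [(y, x, min(y + patch, height), min(x + patch, width))
            for y in starts(height) for x in starts(width)]

for patch in (500, 400, 256, 128):
    print(patch, len(tile_boxes(2448, 2448, patch)))
```

Under this assumed scheme, smaller patches multiply the number of crops per image while each crop sees less surrounding context, which is one plausible reason for the lower mIoU at small patch sizes.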

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).