Submitted: 03 February 2025

Posted: 04 February 2025

Abstract

Keywords:

I. Introduction

II. Purpose of the Study

III. Customer Churn in the Telecom Industry

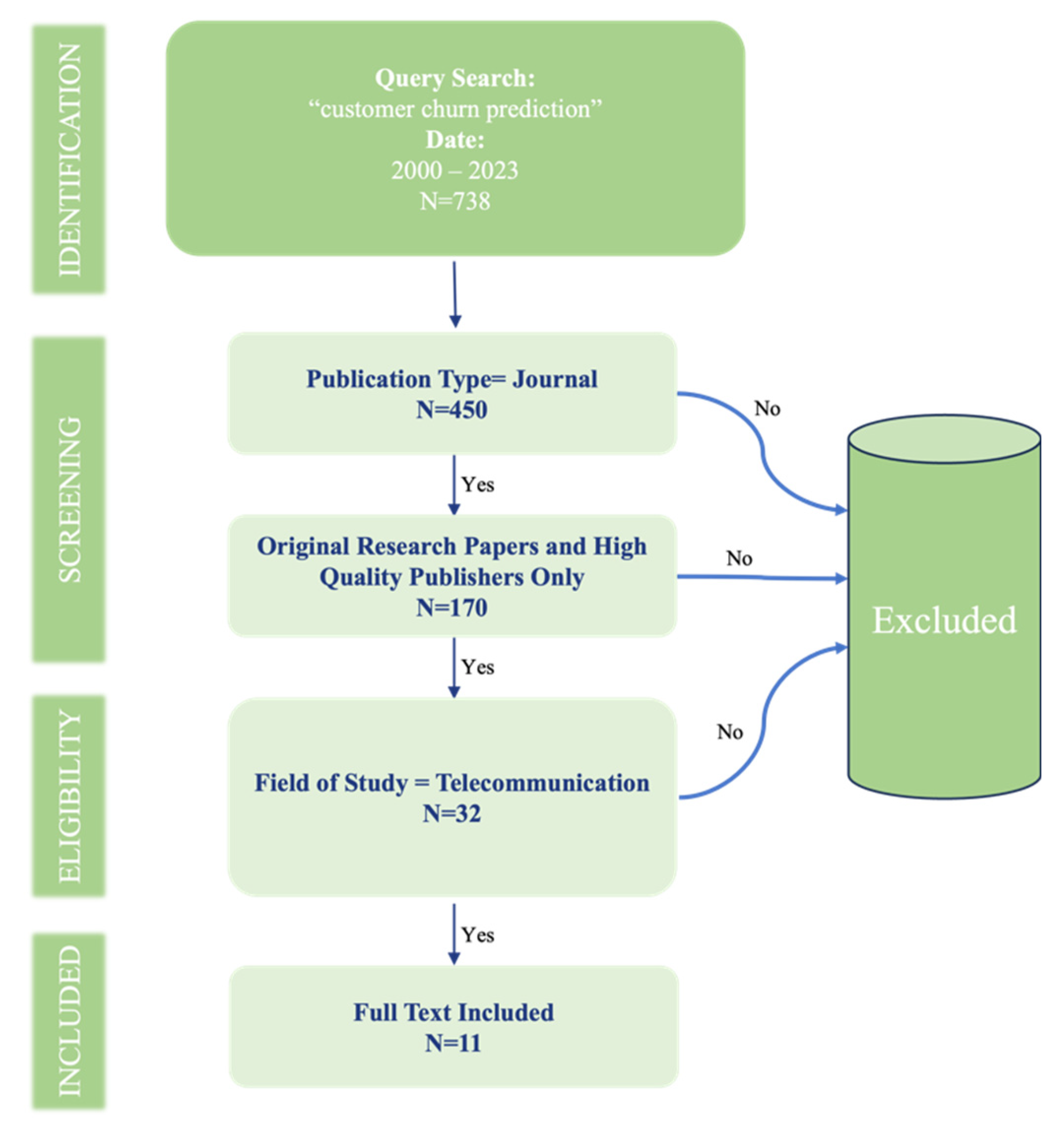

IV. Search Process

- Articles must contain keywords from the search string. The search string was “customer churn prediction.”

- Selection was limited to articles published in notable journals or conferences. Formats such as newsletters, lecture notes, books, doctoral dissertations, and unpublished works were excluded.

- The search was confined to articles published between 2000 and 2023.

- Only articles pertinent to the telecommunications sector were considered.
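As an illustration, the four criteria above can be expressed as a simple screening filter. The following is a minimal sketch, not the procedure the authors actually ran: the `Article` record, its field names, and the choice to match every word of the search string against a free-text field are assumptions made for the example, and the "notable venue" judgment is reduced to a venue-type check.

```python
from dataclasses import dataclass

# Hypothetical record for one search hit; the field names are assumptions.
@dataclass
class Article:
    text: str        # title plus abstract
    year: int        # publication year
    venue_type: str  # e.g. "journal", "conference", "book"
    sector: str      # application domain, e.g. "telecommunications"

KEYWORDS = ("customer", "churn", "prediction")   # words of the search string
ALLOWED_VENUE_TYPES = {"journal", "conference"}  # excludes books, theses, etc.

def meets_criteria(article: Article) -> bool:
    """Return True only if the article passes all four inclusion criteria."""
    text = article.text.lower()
    has_keywords = all(word in text for word in KEYWORDS)
    allowed_venue = article.venue_type in ALLOWED_VENUE_TYPES
    in_timeframe = 2000 <= article.year <= 2023
    is_telecom = article.sector == "telecommunications"
    return has_keywords and allowed_venue and in_timeframe and is_telecom

# Made-up candidates, used only to exercise the filter.
candidates = [
    Article("Customer churn prediction for mobile operators", 2015, "journal", "telecommunications"),
    Article("Customer churn prediction in retail banking", 2018, "journal", "banking"),
    Article("Customer churn prediction: lecture notes", 2010, "lecture notes", "telecommunications"),
]
selected = [a for a in candidates if meets_criteria(a)]
print(len(selected))  # 1
```

Only the first candidate survives: the second fails the sector criterion and the third fails the venue criterion.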

V. Distribution of Articles

- Distribution of articles by Techniques

- Distribution of articles by Journals

- Distribution of articles by Publication Year

A. Distribution of articles by Year of Publication

B. Distribution of Articles by Journals

C. Distribution of Articles by Techniques

| Ref. | DT | ANN | SVM | RF | GA | AdaBoost | XGBoost | LightGBM | CatBoost | Regression | Naïve Bayes |

| [8] | ✓ | ✓ | |||||||||

| [9] | ✓ | ✓ | ✓ | ✓ | |||||||

| [10] | ✓ | ✓ | |||||||||

| [11] | ✓ | ||||||||||

| [12] | ✓ | ✓ | ✓ | ||||||||

| [13] | ✓ | ||||||||||

| [14] | ✓ | ✓ | ✓ | ||||||||

| [15] | ✓ | ||||||||||

| [16] | ✓ | ✓ | ✓ | ✓ | ✓ | ||||||

| [17] | ✓ | ✓ | ✓ | ✓ | |||||||

| [18] | ✓ | ✓ | ✓ |

- XGBoost - 2 times ([16])

- LightGBM - 2 times ([16])

- CatBoost - 2 times ([16])

- Regression Analysis - 1 time ([9])

- Naïve Bayes - 1 time ([14])

1. Random Forest:

2. Logistic Regression:

3. Decision Tree:

4. Neural Networks:

VI. Challenges

- Incomplete or Missing Datasets: A significant challenge is the lack of complete datasets in the telecom industry. Conversely, the datasets that some telecom companies do provide are so large that they become cumbersome to manage, a problem often compounded by noisy data.

- Imbalanced Data: Another major hurdle is class imbalance, where regular customers far outnumber churners. Churners often account for only about 10% to 20% of the records, which undermines the reliability of the predictive model.
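To see why this imbalance threatens reliability, consider a worked example with assumed numbers: 1,000 customers of whom 15% churn (a value inside the 10% to 20% range above) and a trivial model that predicts "no churn" for everyone. The sketch below is illustrative only; the data are synthetic and the helper names are not taken from the reviewed studies.

```python
def accuracy(y_true, y_pred):
    """Fraction of all customers classified correctly."""
    correct = sum(1 for t, p in zip(y_true, y_pred) if t == p)
    return correct / len(y_true)

def recall(y_true, y_pred, positive=1):
    """Fraction of actual churners that the model flags as churners."""
    actual = sum(1 for t in y_true if t == positive)
    hits = sum(1 for t, p in zip(y_true, y_pred) if t == positive and p == positive)
    return hits / actual if actual else 0.0

# Synthetic labels (assumption): 1 = churner, 0 = regular customer.
y_true = [1] * 150 + [0] * 850
# Trivial baseline that never predicts churn.
y_pred = [0] * 1000

baseline_accuracy = accuracy(y_true, y_pred)
baseline_recall = recall(y_true, y_pred)
print(baseline_accuracy)  # 0.85
print(baseline_recall)    # 0.0
```

The baseline reaches 85% accuracy while identifying no churners at all, which is why accuracy alone is a weak yardstick on such data and class-sensitive measures such as recall are worth reporting alongside it.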

VII. Limitations

- Scope of Articles Reviewed: The review is confined to 11 articles published between 2000 and 2023. Increasing the number of research papers could enrich the study’s findings.

- Keyword Constraints in the Search String: The research employed a search string incorporating key terms such as “customer churn prediction” and “telecommunication.” However, this approach may have inadvertently excluded relevant articles that address churn prediction in telecom but do not feature these specific keywords.

- Limitation to Specific Online Publishers: The study was limited to sourcing articles from only six online publishers. Expanding the search to include additional academic journals could provide a more comprehensive understanding and possibly unveil additional insights.

VIII. Findings

- Popularity of Decision Tree Technique: The Decision Tree emerges as the most prevalent technique used for churn prediction, indicating its widespread acceptance and utility in the field.

- Data Quality Challenges: Researchers face major challenges in terms of data quality, particularly due to the unavailability of complete datasets or the presence of overly large datasets with noisy information.

- High Dimensionality and Data Imbalance: The high dimensionality and imbalanced nature of data are identified as significant obstacles, complicating the development of precise and reliable prediction models.

- Limitations of Classification Techniques: While classification techniques are effective for analyzing qualitative and continuous data, they fall short of the desired prediction accuracy, especially on high-dimensional, non-linear, or time-series datasets.
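The data-imbalance finding above is commonly addressed by resampling, and several of the reviewed studies list sampling techniques such as SMOTE and under-sampling among their methods. The sketch below shows plain random oversampling, a deliberately simpler stand-in for SMOTE that duplicates existing minority rows instead of synthesizing new ones. The function name, its parameters, and the toy data are assumptions made for illustration.

```python
import random

def random_oversample(rows, labels, minority_label=1, seed=0):
    """Duplicate randomly chosen minority rows until both classes are equal in size."""
    rng = random.Random(seed)  # fixed seed keeps the example reproducible
    minority = [i for i, y in enumerate(labels) if y == minority_label]
    majority = [i for i, y in enumerate(labels) if y != minority_label]
    # Nothing to do if there is no minority class or the data are already balanced.
    if not minority or len(minority) >= len(majority):
        return list(rows), list(labels)
    extra = [rng.choice(minority) for _ in range(len(majority) - len(minority))]
    keep = list(range(len(labels))) + extra
    return [rows[i] for i in keep], [labels[i] for i in keep]

# Toy data (assumption): 15 churners (label 1) and 85 regular customers (label 0).
rows = [[i] for i in range(100)]
labels = [1] * 15 + [0] * 85

balanced_rows, balanced_labels = random_oversample(rows, labels)
print(balanced_labels.count(1), balanced_labels.count(0))  # 85 85
```

Resampling of this kind should be applied to the training split only, so that duplicated churners never leak into the data used for evaluation.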

IX. Conclusions

References

1. Ahn, J.-H.; Han, S.-P.; Lee, Y.-S. Customer churn analysis: Churn determinants and mediation effects of partial defection in the Korean mobile telecommunications service industry. Telecommunications Policy 2006, 30, 552–568.

2. Shu, X.; Ye, Y. Knowledge Discovery: Methods from data mining and machine learning. Social Science Research 2023, 110, 102817.

3. “FF23 Mobile Network Coverage.” International Telecommunication Union, 10 Oct. 2023, www.itu.int/itu-d/reports/statistics/2023/10/10/ff23-mobile-network-coverage/.

4. Idris, A.; Rizwan, M.; Khan, A. Churn prediction in telecom using Random Forest and PSO based data balancing in combination with various feature selection strategies. Journal of Computers and Electrical Engineering 2012, 38, 1808–1819.

5. Chen, Z.-Y.; Fan, Z.-P.; Sun, M. A hierarchical multiple kernel support vector machine for customer churn prediction using longitudinal behavioral data. European Journal of Operational Research 2012, 223, 461–472.

6. Gordini, N.; Veglio, V. Customers churn prediction and marketing retention strategies. An application of support vector machines based on the AUC parameter-selection technique in B2B e-commerce industry. Industrial Marketing Management 2017, 62, 100–107.

7. Lemmens, A.; Gupta, S. Managing churn to maximize profits. Marketing Science 2020, 39, 956–973.

8. Mozer, M.C.; et al. Predicting subscriber dissatisfaction and improving retention in the wireless telecommunications industry. IEEE Transactions on Neural Networks 2000, 11, 690–696.

9. Hadden, J.; et al. Computer assisted customer churn management: State-of-the-art and future trends. Computers & Operations Research 2007, 34, 2902–2917.

10. Coussement, K.; Van den Poel, D. Churn prediction in subscription services: An application of support vector machines while comparing two parameter-selection techniques. Expert Systems with Applications 2008, 34, 313–327.

11. Burez, J.; Van den Poel, D. Handling class imbalance in customer churn prediction. Expert Systems with Applications 2009, 36, 4626–4636.

12. Pendharkar, P.C. Genetic algorithm based neural network approaches for predicting churn in cellular wireless network services. Expert Systems with Applications 2009, 36, 6714–6720.

13. Idris, A.; et al. Genetic programming and adaboosting based churn prediction for telecom. IEEE International Conference on Systems, Man, and Cybernetics (SMC), 2012, pp. 1328–1332.

14. Vafeiadis, T.; et al. A comparison of machine learning techniques for customer churn prediction. Simulation Modelling Practice and Theory 2015, 55, 1–9.

15. Idris, A.; Khan, A. Churn prediction system for telecom using filter–wrapper and ensemble classification. The Computer Journal 2017, 60, 410–430.

16. Imani, M.; Arabnia, H.R. Hyperparameter Optimization and Combined Data Sampling Techniques in Machine Learning for Customer Churn Prediction: A Comparative Analysis. Technologies 2023, 11, 167.

17. Wu, S.; et al. Integrated churn prediction and customer segmentation framework for telco business. IEEE Access 2021, 9, 62118–62136.

18. Beeharry, Y.; Fokone, R.T. Hybrid approach using machine learning algorithms for customers’ churn prediction in the telecommunications industry. Concurrency and Computation: Practice and Experience 2022, 34, e6627.

19. Jolly, K. Machine Learning with Scikit-Learn Quick Start Guide: Classification, Regression, and Clustering Techniques in Python. Packt Publishing Ltd., 2018.

20. Usman-Hamza, F.E.; et al. Intelligent decision forest models for customer churn prediction. Applied Sciences 2022, 12, 8270.

21. Ullah, I.; et al. A churn prediction model using random forest: analysis of machine learning techniques for churn prediction and factor identification in telecom sector. IEEE Access 2019, 7, 60134–60149.

22. Fujo, W.S.; et al. Customer churn prediction in telecommunication industry using deep learning. Information Sciences Letters 2022, 11, 24.

23. Chu, B.H.; et al. Towards a hybrid data mining model for customer retention. Knowledge-Based Systems 2007, 20, 703–718.

24. Berry, M.J.A.; Linoff, G.S. Data Mining Techniques Second Edition – for Marketing, Sales, and Customer Relationship Management. 2004.

25. Liu, R.; et al. An intelligent hybrid scheme for customer churn prediction integrating clustering and classification algorithms. Applied Sciences 2022, 12, 9355.

26. Fathian, M.; et al. Offering a hybrid approach of data mining to predict the customer churn based on bagging and boosting methods. Kybernetes 2016.

27. Tsai, C.-F.; Lu, Y.-H. Customer churn prediction by hybrid neural networks. Expert Systems with Applications 2009, 36, 12547–12553.

28. Song, H.S.; et al. A personalized defection detection and prevention procedure based on the self-organizing map and association rule mining: Applied to online game site. Artificial Intelligence Review 2004, 21, 161–184.

29. Bhambri, V. Data Mining as a Tool to Predict Churn Behaviour of Customers. GE-International Journal of Management Research (IJMR), April 2013, pp. 59–69.

30. Idris, A.; Rizwan, M.; Khan, A. Churn prediction in telecom using Random Forest and PSO based data balancing in combination with various feature selection strategies. Journal of Computers and Electrical Engineering 2012, 38, 1808–1819.

31. Imani, M.; et al. The Impact of SMOTE and ADASYN on Random Forest and Advanced Gradient Boosting Techniques in Telecom Customer Churn Prediction. 2024 10th International Conference on Web Research (ICWR). IEEE, 2024.

32. Joudaki, M.; et al. Presenting a New Approach for Predicting and Preventing Active/Deliberate Customer Churn in Telecommunication Industry. Proceedings of the International Conference on Security and Management (SAM). The Steering Committee of The World Congress in Computer Science, Computer Engineering and Applied Computing (WorldComp), 2011.

33. Joudaki, M.; Imani, M.; Arabnia, H.R. A New Efficient Hybrid Technique for Human Action Recognition Using 2D Conv-RBM and LSTM with Optimized Frame Selection. Technologies 2025, 13, 53.

34. Mazhari, N.; et al. An overview of the classification and its algorithm. 3rd Data Mining Conference (IDMC’09): Tehran. 2009.

| Ref. | Publication Year |
|---|---|
| [8] | 2000 |
| [9] | 2007 |
| [10] | 2008 |
| [11] | 2009 |
| [12] | 2009 |
| [13] | 2012 |
| [14] | 2015 |
| [15] | 2017 |
| [16] | 2023 |
| [17] | 2021 |
| [18] | 2022 |

| Ref. | Journal/Publication |
|---|---|
| [8] | IEEE Transactions on Neural Networks |
| [9] | Computers & Operations Research |
| [10] | Expert Systems with Applications |
| [11] | Expert Systems with Applications |
| [12] | Expert Systems with Applications |
| [13] | IEEE International Conference on Systems, Man, and Cybernetics (SMC) |
| [14] | Simulation Modelling Practice and Theory |
| [15] | The Computer Journal |
| [16] | Technologies (MDPI) |
| [17] | IEEE Access |
| [18] | Concurrency and Computation: Practice and Experience |

| Ref. | Techniques Used |
|---|---|
| [8] | Decision Trees (DT), Artificial Neural Networks (ANN), Logistic Regression (LR), Ensemble Learning Models with Boosting Techniques |
| [9] | Decision Trees (DT), Regression Analysis, Artificial Neural Networks (ANN), Feature Selection Techniques, Genetic Algorithms |
| [10] | Support Vector Machine (SVM), Cross-Validation, Grid-Search, Logistic Regression (LR), Random Forest (RF) |
| [11] | Weighted Random Forest (RF), Gradient Boosting Models, Under-sampling Techniques |
| [12] | Artificial Neural Networks (ANN) with Genetic Algorithm (GA) |
| [13] | Ensemble Models with Decision Trees (DT), Boosting Methods, Genetic Programming, AdaBoost |
| [14] | Decision Trees (DT), Artificial Neural Networks (ANN), Naïve Bayes, Support Vector Machine (SVM), Logistic Regression (LR), Ensemble Learning with Boosting Techniques |
| [15] | Filter–Wrapper Feature Selection, Ensemble Classification, Particle Swarm Optimization (PSO), Genetic Algorithm (GA) |
| [16] | Artificial Neural Networks (ANN), Decision Trees (DT), Support Vector Machine (SVM), Random Forest (RF), Logistic Regression (LR), XGBoost, LightGBM, CatBoost, Sampling Techniques, Hyperparameter Optimization |
| [17] | Artificial Neural Networks (ANN), Logistic Regression (LR), Decision Trees (DT), Random Forest (RF), Naïve Bayes, AdaBoost, Synthetic Minority Oversampling Technique (SMOTE) |
| [18] | Two-Layer Soft Voting Model, Conventional Machine Learning Algorithms, Ensemble Classifiers |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).