Submitted: 02 March 2024

Posted: 05 March 2024


Abstract

Keywords:

Introduction

Purpose of the Study

I. Related Work

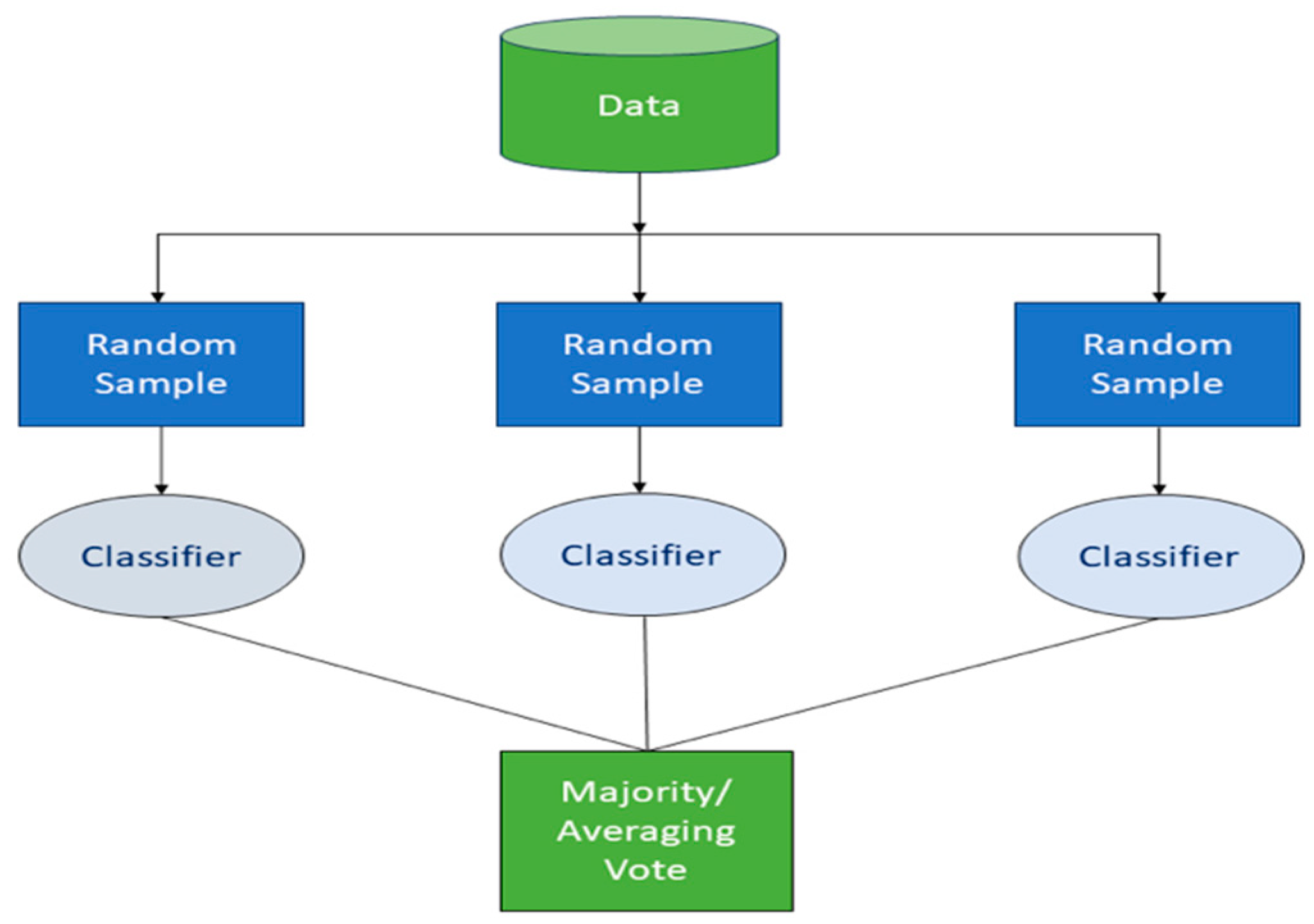

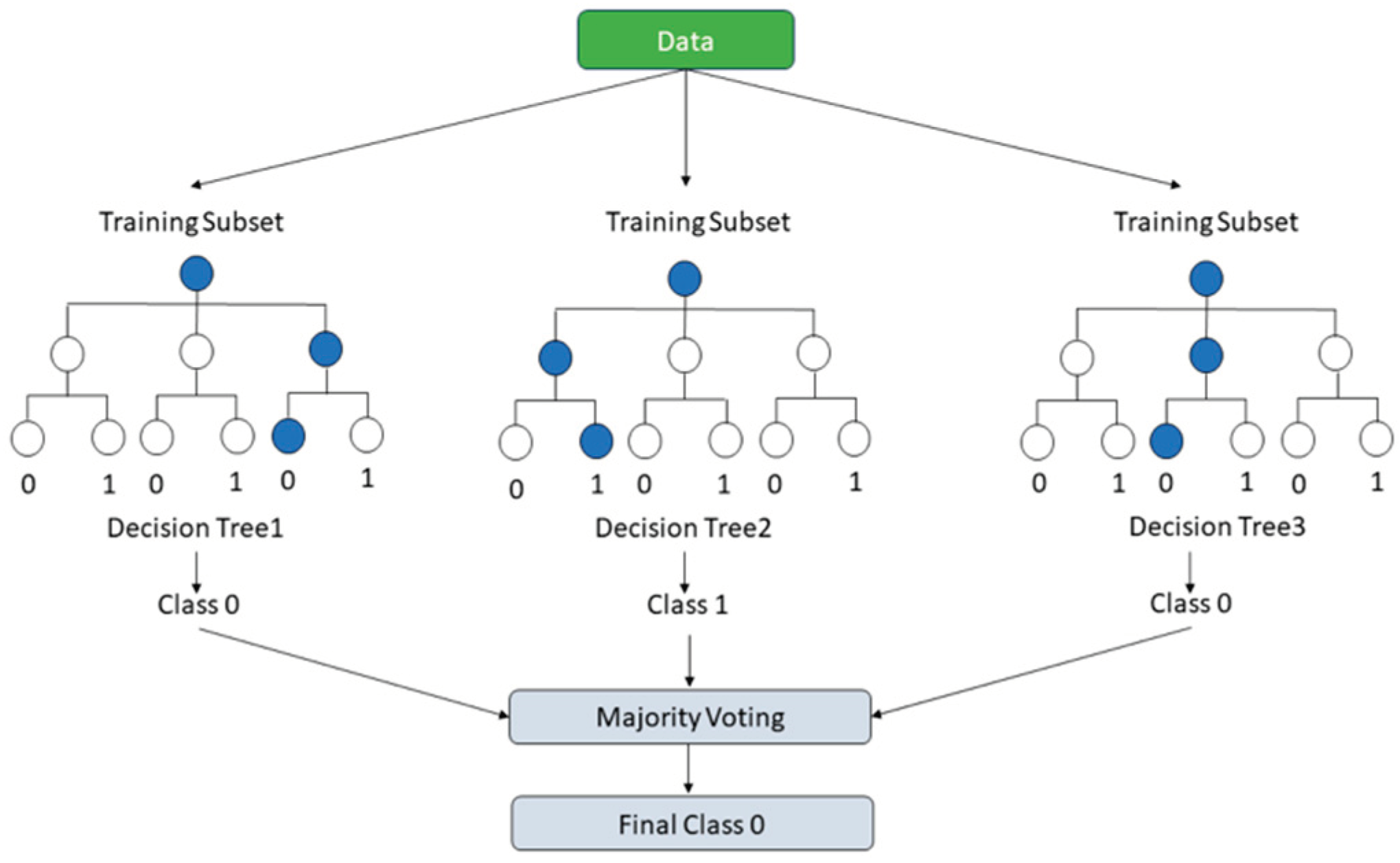

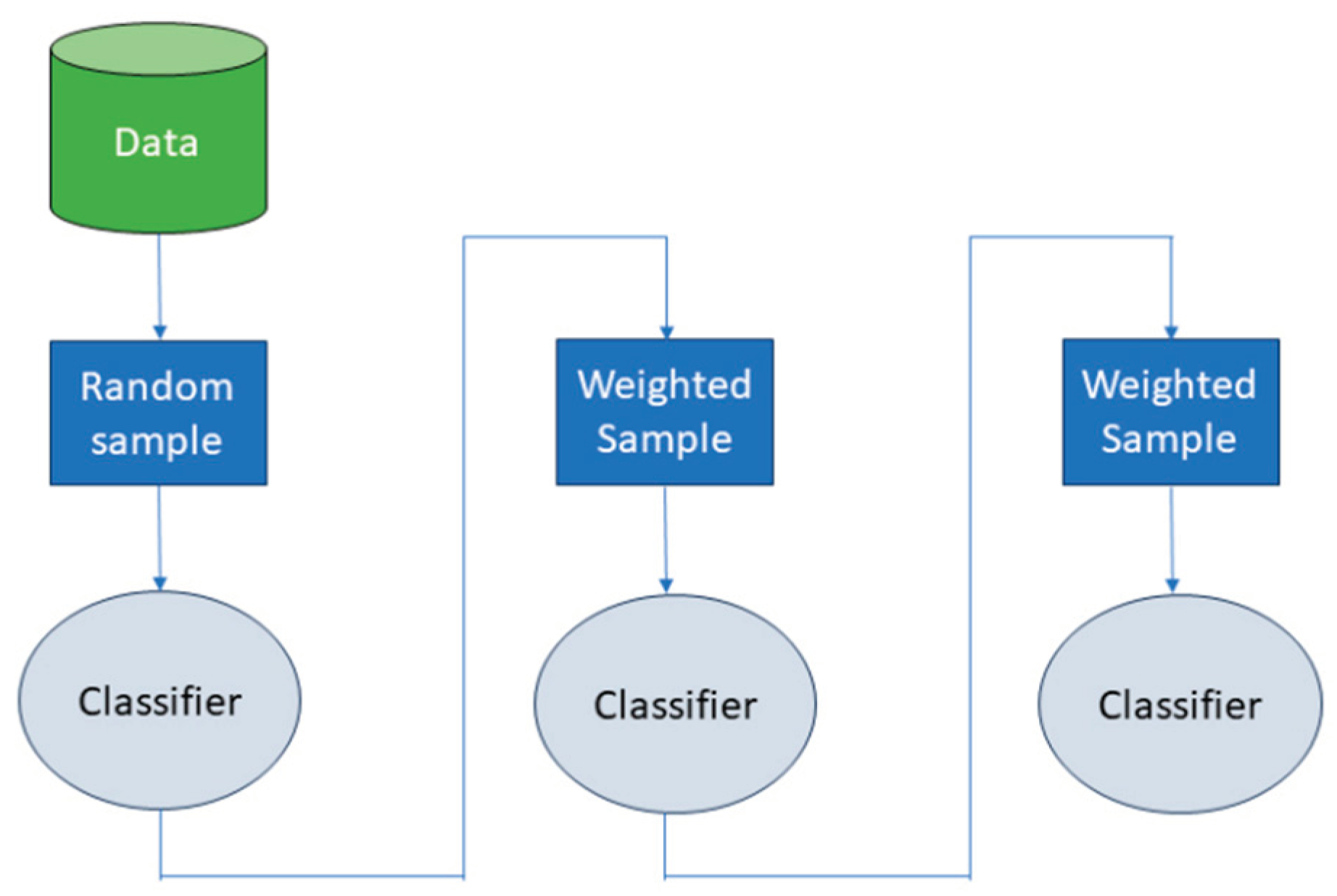

- Ensemble Learning: This paradigm combines the outputs of several models into a single, stronger classifier. It draws on the collective strength of many weaker models to build a more robust and accurate predictor. Within this framework, bagging and boosting are the two principal strategies [1,2,8].
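To make the distinction between the two strategies concrete, the following is a minimal sketch using only the Python standard library. It is not the study's implementation: the weak learner is a one-feature decision stump, the boosting variant shown is classic AdaBoost (swapped in for illustration, not the gradient-boosting libraries evaluated later), and the toy dataset and all function names are assumptions. Bagging fits each stump on a bootstrap resample and takes an unweighted vote; boosting fits stumps sequentially, reweighting the examples the previous stumps misclassified.

```python
import math
import random


def fit_stump(X, y, w):
    """Weighted decision stump: returns (error, feature, threshold, sign)."""
    best = None
    for j in range(len(X[0])):
        for t in sorted({row[j] for row in X}):
            for s in (1, -1):
                # The stump predicts s when the feature exceeds t, otherwise -s.
                err = sum(wi for row, yi, wi in zip(X, y, w)
                          if (s if row[j] > t else -s) != yi)
                if best is None or err < best[0]:
                    best = (err, j, t, s)
    return best


def stump_predict(stump, row):
    _, j, t, s = stump
    return s if row[j] > t else -s


def vote(members, row):
    """Weighted vote of (weight, stump) pairs; returns +1 or -1."""
    total = sum(a * stump_predict(st, row) for a, st in members)
    return 1 if total >= 0 else -1


def bagging(X, y, n_models, rng):
    """Bagging: each stump sees a bootstrap resample; all votes count equally."""
    n = len(X)
    members = []
    for _ in range(n_models):
        idx = [rng.randrange(n) for _ in range(n)]
        stump = fit_stump([X[i] for i in idx], [y[i] for i in idx], [1.0 / n] * n)
        members.append((1.0, stump))
    return members


def adaboost(X, y, n_rounds):
    """Boosting (AdaBoost): later stumps focus on previously misclassified rows."""
    n = len(X)
    w = [1.0 / n] * n
    members = []
    for _ in range(n_rounds):
        stump = fit_stump(X, y, w)
        err = min(max(stump[0], 1e-10), 1 - 1e-10)  # guard against log(0)
        alpha = 0.5 * math.log((1 - err) / err)
        members.append((alpha, stump))
        # Increase the weight of misclassified rows, decrease the rest, renormalise.
        w = [wi * math.exp(-alpha * yi * stump_predict(stump, row))
             for row, yi, wi in zip(X, y, w)]
        total = sum(w)
        w = [wi / total for wi in w]
    return members


def accuracy(members, X, y):
    return sum(vote(members, row) == yi for row, yi in zip(X, y)) / len(y)


# Hypothetical toy data: the positive class is an interval, which no single
# stump can separate.
X = [[float(v)] for v in range(10)]
y = [1 if 3 <= v <= 6 else -1 for v in range(10)]

single = [(1.0, fit_stump(X, y, [0.1] * 10))]
bagged = bagging(X, y, n_models=25, rng=random.Random(0))
boosted = adaboost(X, y, n_rounds=3)
```

On this toy problem a single stump misclassifies three of the ten points, while three boosting rounds are enough to fit the training data exactly. The example only illustrates the mechanics; it says nothing about generalization or about the models compared in this study.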

II. Method

A. Training and Validation Process

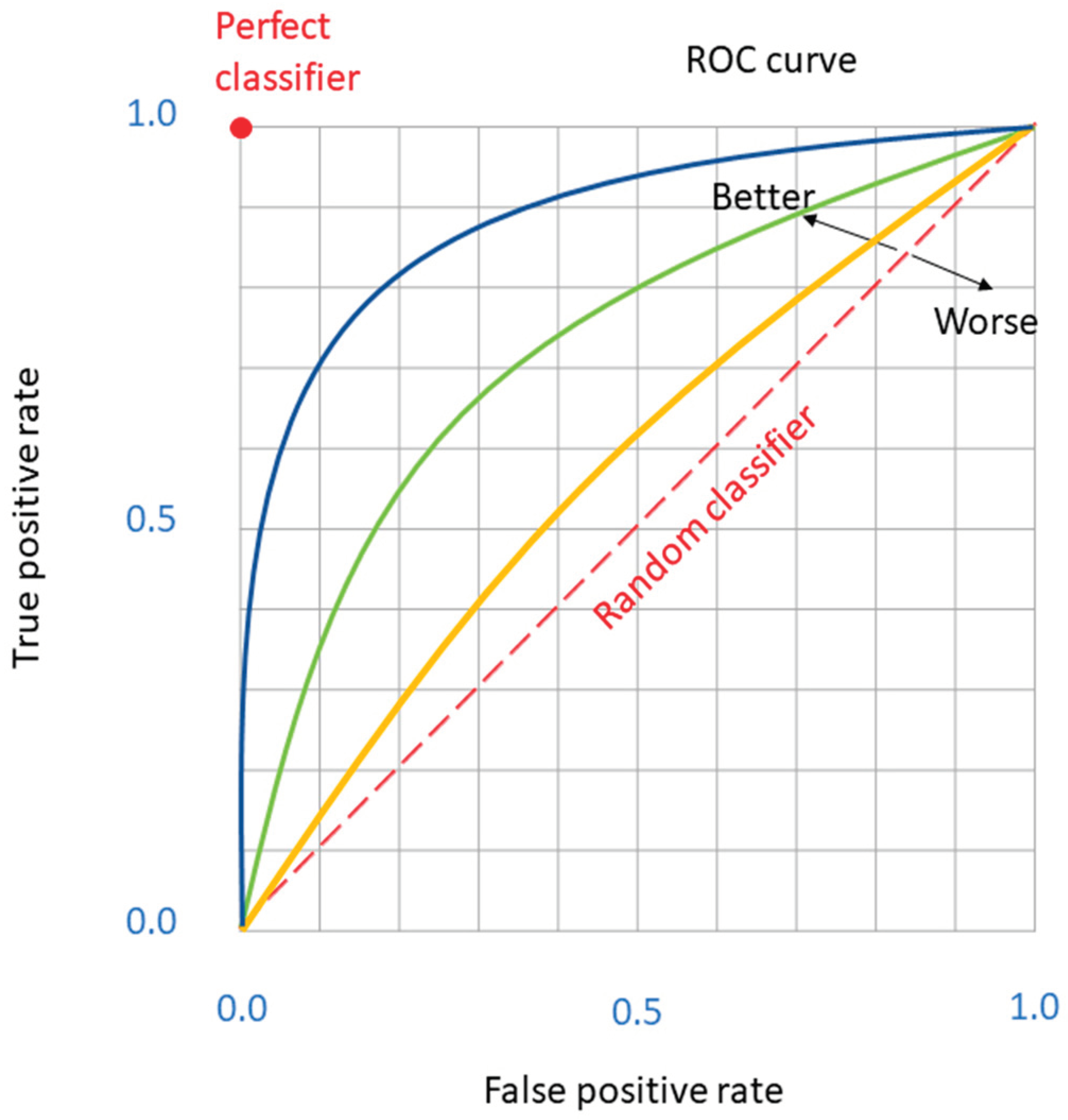

B. Evaluation Metrics

- Precision (also known as Positive Predictive Value) quantifies the accuracy of positive predictions. It is the ratio of correctly predicted positive observations to the total predicted positives: $\text{Precision} = \frac{TP}{TP + FP}$.

- Recall (or Sensitivity) measures the model’s ability to correctly identify all positive cases. It is the fraction of true positives detected over all actual positives: $\text{Recall} = \frac{TP}{TP + FN}$.

- Accuracy assesses the overall correctness of the model. It is the proportion of true results (both true positives and true negatives) among the total number of cases examined: $\text{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN}$.

- F1-score balances Precision and Recall by taking their harmonic mean: $F_1 = \frac{2 \cdot \text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}}$. It combines the two into a single metric, which is useful when precision and recall are equally important.

| | | Predicted Class | |
|---|---|---|---|
| | | Churners | Non-churners |
| Actual Class | Churners | TP | FN |
| | Non-churners | FP | TN |
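As a worked illustration, the four metrics can be computed directly from the confusion-matrix counts above. The sketch below is a minimal standard-library example; the function name and the counts are hypothetical and are not results from this study.

```python
def classification_metrics(tp, fp, fn, tn):
    """Precision, recall, accuracy and F1 from confusion-matrix counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    # Harmonic mean of precision and recall; defined as 0 when both are 0.
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {"precision": precision, "recall": recall,
            "accuracy": accuracy, "f1": f1}


# Hypothetical counts: 60 actual churners, 140 actual non-churners.
metrics = classification_metrics(tp=40, fp=10, fn=20, tn=130)
```

With these counts, precision is 40/50 = 0.80, recall is 40/60 ≈ 0.67, accuracy is 170/200 = 0.85, and F1 is 80/110 ≈ 0.73. Accuracy looks strong here even though one third of the churners are missed, which is why F1 is the more informative figure on imbalanced data.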

C. The Challenge of Imbalanced Data

D. Sampling Techniques

- Synthetic Minority Over-Sampling Technique (SMOTE)

- Adaptive Synthetic Sampling Approach (ADASYN)
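The core idea behind both techniques can be sketched in a few lines of standard-library Python. This is a simplified illustration under stated assumptions, not the reference algorithms or a library API: all function names and the toy points are hypothetical, and the total number of synthetic samples `n_new` is passed in rather than derived from the class imbalance. SMOTE creates a synthetic point by interpolating between a minority sample and one of its nearest minority neighbours; ADASYN uses the same interpolation but allocates more synthetic points to minority samples whose neighbourhoods are dominated by the majority class.

```python
import math
import random


def interpolate(base, neighbour, rng):
    """Random point on the segment between base and neighbour."""
    gap = rng.random()
    return tuple(b + gap * (n - b) for b, n in zip(base, neighbour))


def minority_neighbours(i, minority, k):
    """The k minority points closest to minority[i], excluding itself."""
    pool = minority[:i] + minority[i + 1:]
    return sorted(pool, key=lambda q: math.dist(minority[i], q))[:k]


def smote(minority, n_new, k=3, rng=None):
    """SMOTE-style oversampling: every minority point is equally likely to seed."""
    rng = rng or random.Random(0)
    synthetic = []
    for _ in range(n_new):
        i = rng.randrange(len(minority))
        neighbour = rng.choice(minority_neighbours(i, minority, k))
        synthetic.append(interpolate(minority[i], neighbour, rng))
    return synthetic


def adasyn_allocation(minority, majority, n_new, k=3):
    """Synthetic samples per minority point, proportional to the share of
    majority points among its k nearest neighbours (rounded, so the total
    may differ slightly from n_new)."""
    ratios = []
    for i, p in enumerate(minority):
        labelled = [(q, False) for j, q in enumerate(minority) if j != i]
        labelled += [(q, True) for q in majority]
        nearest = sorted(labelled, key=lambda e: math.dist(p, e[0]))[:k]
        ratios.append(sum(is_major for _, is_major in nearest) / k)
    total = sum(ratios)
    if total == 0:  # no minority point has majority neighbours
        return [0] * len(minority)
    return [round(n_new * r / total) for r in ratios]


def adasyn(minority, majority, n_new, k=3, rng=None):
    """ADASYN-style oversampling: harder minority points seed more samples."""
    rng = rng or random.Random(0)
    synthetic = []
    for i, count in enumerate(adasyn_allocation(minority, majority, n_new, k)):
        for _ in range(count):
            neighbour = rng.choice(minority_neighbours(i, minority, k))
            synthetic.append(interpolate(minority[i], neighbour, rng))
    return synthetic


# Hypothetical 2-D points: three minority samples form a clean cluster,
# while (5, 5) sits next to the majority class.
minority = [(0.0, 0.0), (0.0, 1.0), (1.0, 0.0), (5.0, 5.0)]
majority = [(5.0, 6.0), (6.0, 5.0), (6.0, 6.0), (9.0, 9.0)]

smote_points = smote(minority, n_new=6)
allocation = adasyn_allocation(minority, majority, n_new=6)
adasyn_points = adasyn(minority, majority, n_new=6)
```

In this toy setup the ADASYN allocation assigns all six synthetic samples to the borderline point (5, 5), whereas SMOTE spreads its seeds uniformly over the four minority points. In practice a maintained implementation, such as the one in the imbalanced-learn package, would normally be preferred over a hand-written version.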

E. Hyperparameters Tuning

III. Results

A. Simulation Framework

B. Outcomes of Simulations

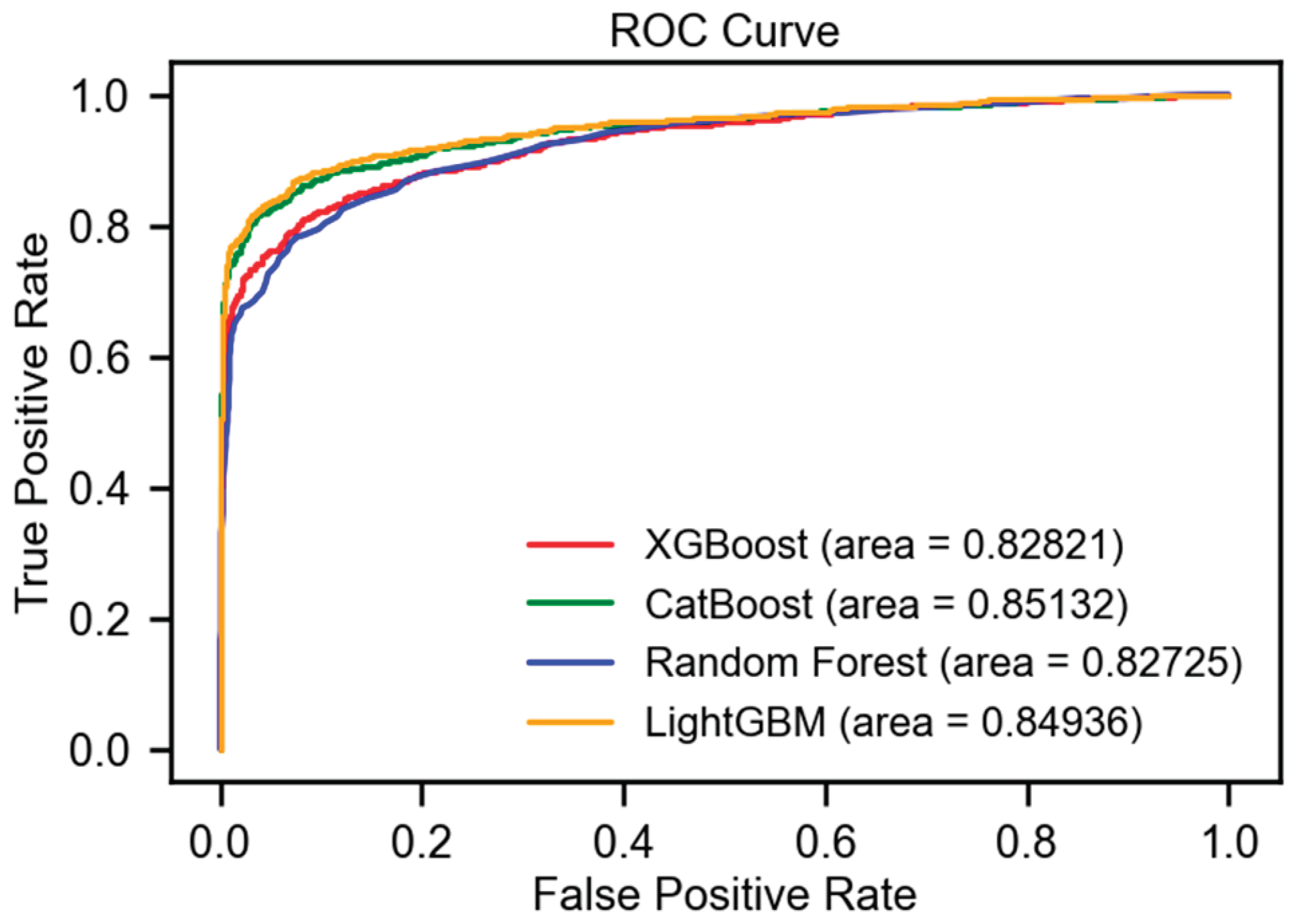

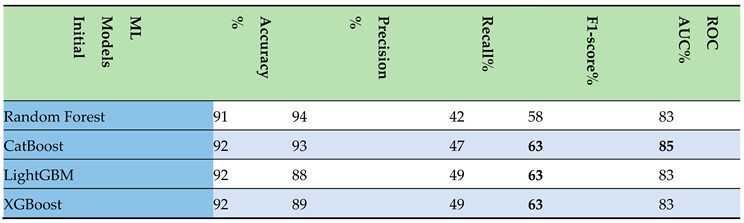

- Initial Step: After data preprocessing and feature delineation, the results were compiled in Table 2. The boosting algorithms performed best, particularly on the F1-score and ROC AUC metrics. Key findings are shown in bold. Among the models evaluated, CatBoost was the top performer, with an F1-score of 63% and an ROC AUC of 85%.

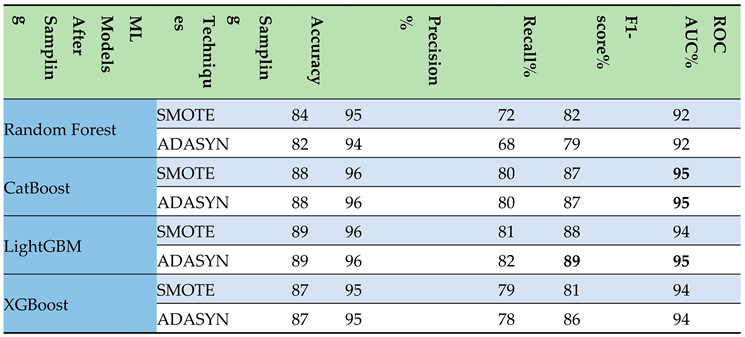

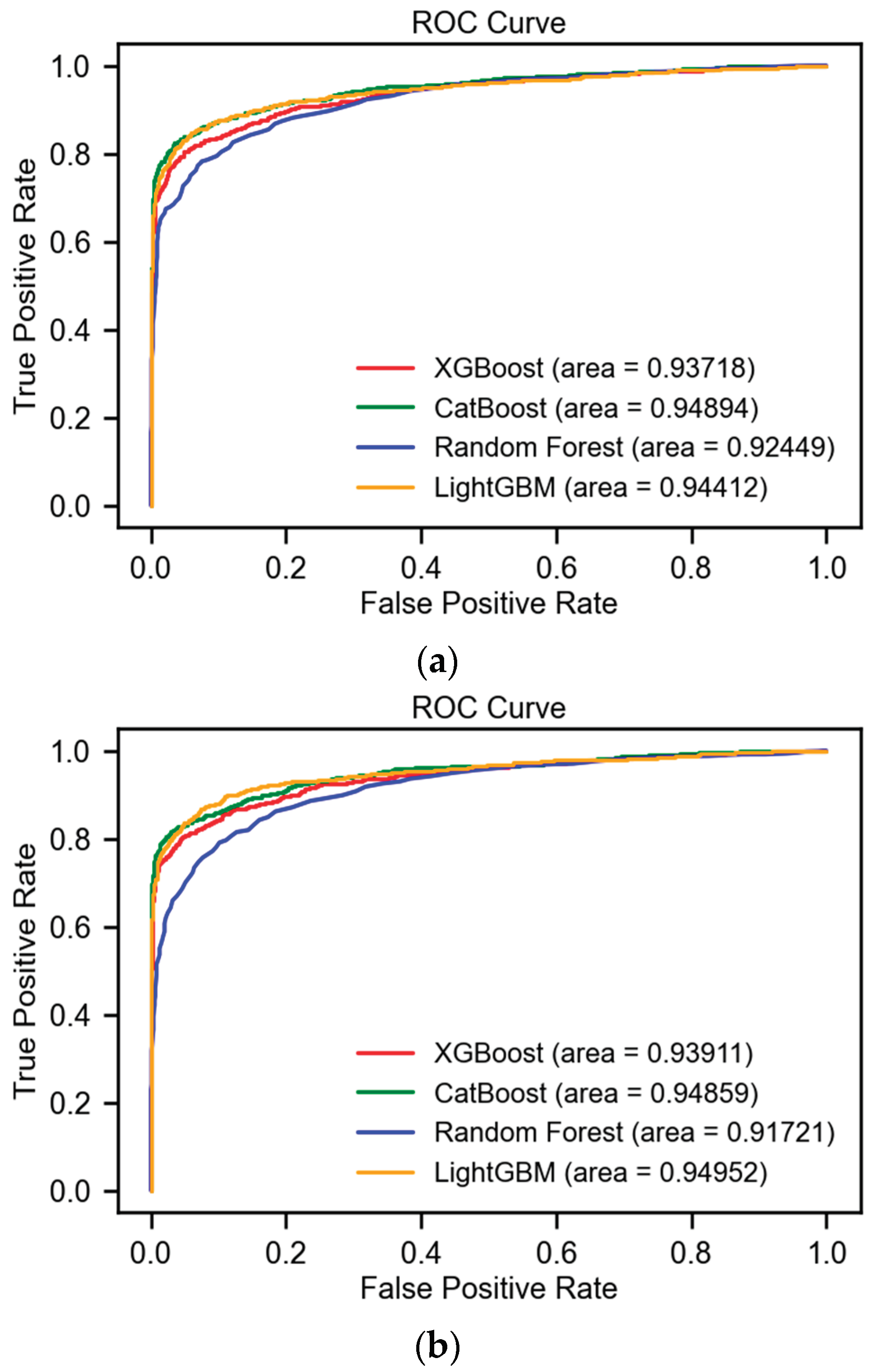

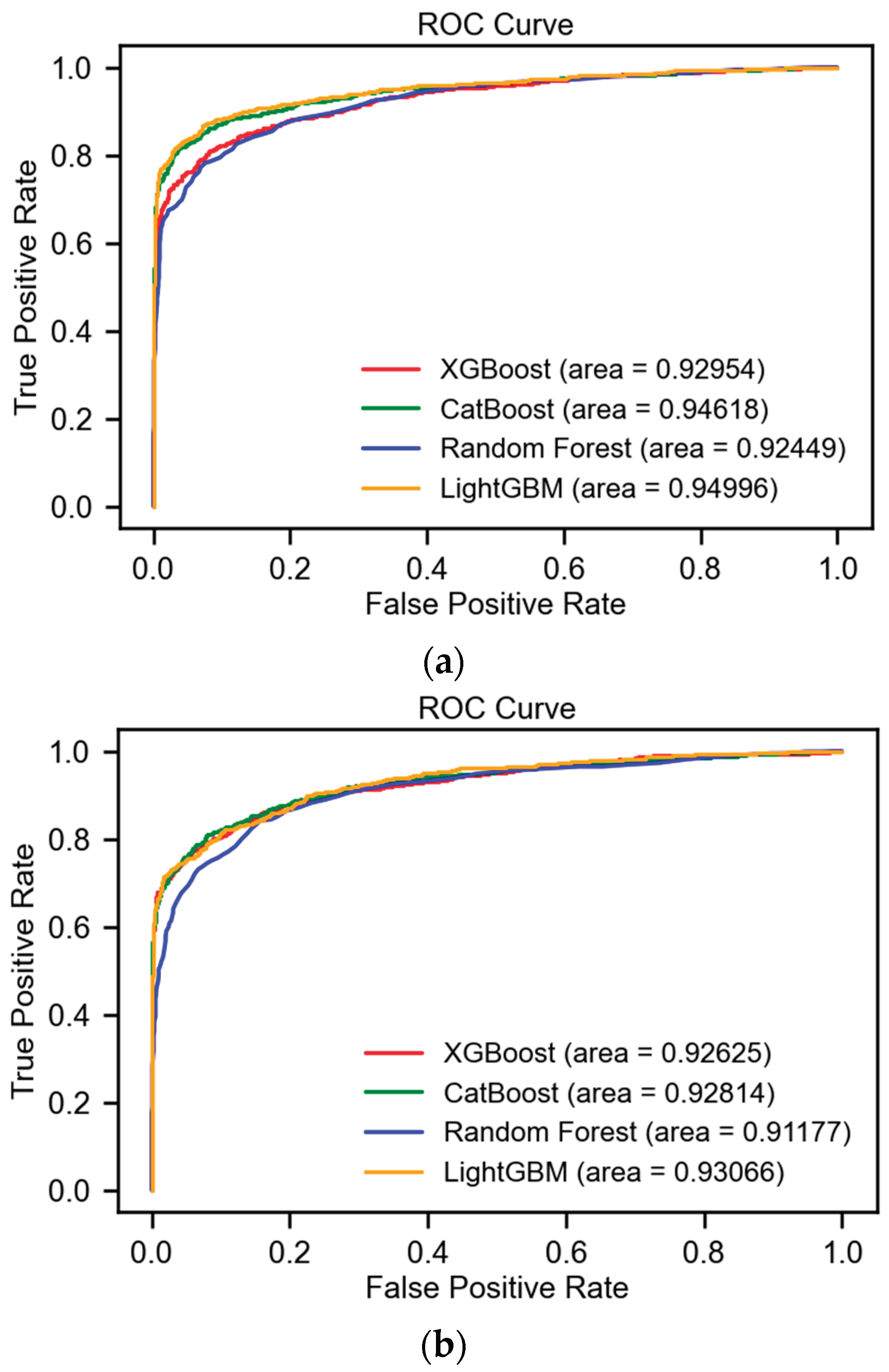

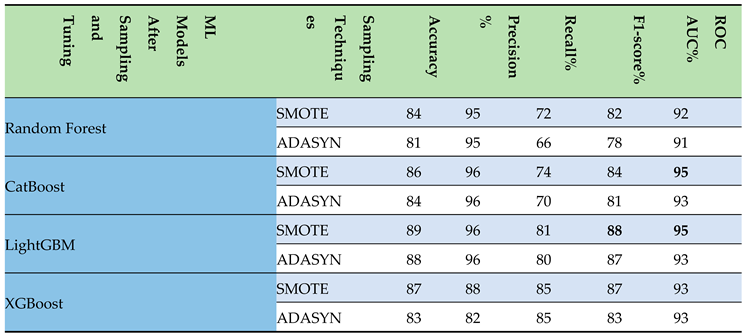

- Sampling Step: After applying the SMOTE and ADASYN sampling techniques, the results were compiled in Table 3. CatBoost and LightGBM showed improved performance, particularly on the F1-score and ROC AUC metrics. Key findings are shown in bold. LightGBM stood out, reaching an F1-score of 89% and an ROC AUC of 95% after ADASYN was applied.


- Tuning Step: After hyperparameter tuning of the models on upsampled data, the results were compiled in Table 4. CatBoost and LightGBM performed best, especially on the F1-score and ROC AUC metrics. Key findings are shown in bold. Notably, LightGBM achieved an F1-score of 88% and an ROC AUC of 95%, essentially unchanged from its performance before hyperparameter tuning.
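The ROC AUC reported alongside the F1-score in the steps above has a simple probabilistic reading: it is the probability that a randomly chosen churner receives a higher predicted score than a randomly chosen non-churner, with ties counted as one half. The following minimal sketch computes it by direct pairwise comparison; the function name, labels, and scores are hypothetical and unrelated to the study's data.

```python
def roc_auc(y_true, scores):
    """ROC AUC as the fraction of (positive, negative) pairs ranked correctly."""
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("ROC AUC needs at least one positive and one negative")
    # A correctly ordered pair counts 1, a tie counts 0.5, a reversed pair 0.
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical labels (1 = churner) and predicted churn scores.
labels = [1, 1, 0, 0, 1, 0]
scores = [0.9, 0.8, 0.7, 0.3, 0.6, 0.2]
auc = roc_auc(labels, scores)
```

Here eight of the nine churner/non-churner pairs are ordered correctly, so the AUC is 8/9 ≈ 0.89. The pairwise loop is quadratic in the sample size; it is meant to show the definition, not to replace a library routine on large datasets.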

IV. Conclusions and Future Work

- Advanced Sampling Techniques: Beyond SMOTE and ADASYN, future investigations could evaluate the efficacy of more recent upsampling methods such as Borderline-SMOTE, SVMSMOTE, and K-Means SMOTE. These techniques offer nuanced approaches to balancing datasets by focusing on the samples near the decision boundary, leveraging support vector machines, or employing clustering methods to generate synthetic samples, respectively.

- Integration of Novel Hyperparameter Optimization Algorithms: While this study utilized random grid search, subsequent research could apply more advanced optimization techniques. Bayesian optimization, Genetic Algorithms, and Particle Swarm Optimization (PSO) are notable for their potential to search the hyperparameter space efficiently and find better model configurations.

- Exploration of Emerging Machine Learning Models: Artificial intelligence is advancing rapidly, and new models continue to appear. Research can expand to include the evaluation of Deep Learning architectures (e.g., Convolutional Neural Networks for tabular data and Recurrent Neural Networks for sequence prediction), Graph Neural Networks (GNNs) for relational data, and Transformer models adapted for time series forecasting. These models could offer superior performance in capturing complex patterns and relationships in customer data.
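As a baseline against which the optimization algorithms listed above could be compared, random search over a hyperparameter grid can be sketched as follows. This is an illustrative outline only: the search space, the parameter names, and `toy_objective` are assumptions, and the objective is a stand-in for whatever validation score (for example, a cross-validated F1-score) a real experiment would compute.

```python
import random

# Hypothetical grid; the names mirror common boosting hyperparameters.
SEARCH_SPACE = {
    "learning_rate": [0.01, 0.03, 0.1, 0.3],
    "max_depth": [3, 4, 6, 8],
    "n_estimators": [100, 200, 400],
}


def random_search(objective, space, n_trials, seed=0):
    """Sample n_trials random grid points and keep the best-scoring one."""
    rng = random.Random(seed)
    best_params, best_score = None, float("-inf")
    for _ in range(n_trials):
        params = {name: rng.choice(values) for name, values in space.items()}
        score = objective(params)
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score


def toy_objective(params):
    """Placeholder for a validation score; by construction it peaks at
    learning_rate=0.1 and max_depth=6 and ignores n_estimators."""
    return (1.0
            - abs(params["learning_rate"] - 0.1)
            - 0.05 * abs(params["max_depth"] - 6))


best_params, best_score = random_search(toy_objective, SEARCH_SPACE, n_trials=20)
```

Random search evaluates each trial independently, so it cannot use earlier results to guide later trials. Bayesian optimization and the population-based methods mentioned above differ precisely in that they do.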

References

- [1] K. Alok and J. Mayank, Ensemble Learning for AI Developers. Berkeley, CA, USA: Apress, 2020.

- [2] M. Van Wezel and R. Potharst, “Improved customer choice predictions using ensemble methods,” Eur. J. Oper. Res., vol. 181, pp. 436-452, 2007.

- [3] I. Ullah, B. Raza, A.K. Malik, M. Imran, S.U. Islam, and S.W. Kim, “A Churn Prediction Model Using Random Forest: Analysis of Machine Learning Techniques for Churn Prediction and Factor Identification in Telecom Sector,” IEEE Access, vol. 7, pp. 60134-60149, 2019.

- [4] P. Lalwani, M.K. Mishra, J.S. Chadha, and P. Sethi, “Customer churn prediction system: A machine learning approach,” Computing, vol. 104, pp. 271-294, 2021.

- [5] N. Mazhari, M. Imani, M. Joudaki, and A. Ghelichpour, “An overview of classification and its algorithms,” in Proceedings of the 3rd Data Mining Conference (IDMC’09), Tehran, Iran, Dec. 15-16, 2009.

- [6] M. Ahmed, H. Afzal, I. Siddiqi, M.F. Amjad, and K. Khurshid, “Exploring nested ensemble learners using overproduction and choose approach for churn prediction in telecom industry,” Neural Comput. Appl., vol. 32, pp. 3237-3251, 2018.

- [7] M. Joudaki, M. Imani, M. Esmaeili, M. Mahmoodi, and N. Mazhari, “Presenting a New Approach for Predicting and Preventing Active/Deliberate Customer Churn in Telecommunication Industry,” in Proceedings of the International Conference on Security and Management (SAM), 2011.

- [8] I.H. Witten, E. Frank, and M.A. Hall, Data Mining: Practical Machine Learning Tools and Techniques. San Francisco, CA, USA: Elsevier Science & Technology, 2016.

- [9] T.K. Ho, “Random decision forests,” in Proceedings of the 3rd International Conference on Document Analysis and Recognition, Montreal, QC, Canada, vol. 1, Aug. 14-16, 1995.

- [10] L. Breiman, “Random forests,” Mach. Learn., vol. 45, pp. 5-32, 2001.

- [11] J. Karlberg and M. Axen, Binary Classification for Predicting Customer Churn. Umeå, Sweden: Umeå University, 2020.

- [12] D. Windridge and R. Nagarajan, “Quantum Bootstrap Aggregation,” in Proceedings of the International Symposium on Quantum Interaction, San Francisco, CA, USA, Jul. 20-22, 2016.

- [13] J.C. Wang and T. Hastie, “Boosted Varying-Coefficient Regression Models for Product Demand Prediction,” J. Comput. Graph. Stat., vol. 23, pp. 361-382, 2014.

- [14] E. Al Daoud, “Intrusion Detection Using a New Particle Swarm Method and Support Vector Machines,” World Acad. Sci. Eng. Technol., vol. 77, pp. 59-62, 2013.

- [15] E. Al Daoud and H. Turabieh, “New empirical nonparametric kernels for support vector machine classification,” Appl. Soft Comput., vol. 13, pp. 1759-1765, 2013.

- [16] E. Al Daoud, “An Efficient Algorithm for Finding a Fuzzy Rough Set Reduct Using an Improved Harmony Search,” Int. J. Mod. Educ. Comput. Sci. (IJMECS), vol. 7, pp. 16-23, 2015.

- [17] Y. Zhang and A. Haghani, “A gradient boosting method to improve travel time prediction,” Transp. Res. Part C Emerg. Technol., vol. 58, pp. 308-324, 2015.

- [18] A.V. Dorogush, V. Ershov, and A. Gulin, “CatBoost: Gradient boosting with categorical features support,” in Proceedings of the Thirty-first Conference on Neural Information Processing Systems, Long Beach, CA, USA, Dec. 4-9, 2017.

- [19] G. Ke, Q. Meng, T. Finley, T. Wang, W. Chen, W. Ma, Q. Ye, and T.Y. Liu, “LightGBM: A highly efficient gradient boosting decision tree,” in Advances in Neural Information Processing Systems, vol. 30, 2017.

- [20] A. Klein, S. Falkner, S. Bartels, P. Hennig, and F. Hutter, “Fast Bayesian optimization of machine learning hyperparameters on large datasets,” in Proceedings of the Machine Learning Research PMLR, Sydney, NSW, Australia, Aug. 6-11, 2017.

- [21] M. Imani and H.R. Arabnia, “Hyperparameter Optimization and Combined Data Sampling Techniques in Machine Learning for Customer Churn Prediction: A Comparative Analysis,” Technologies, vol. 11, no. 6, 167, 2023.

- [22] M. Kubat and S. Matwin, “Addressing the curse of imbalanced training sets: one-sided selection,” in Proceedings of the International Conference on Machine Learning (ICML), vol. 97, no. 1, 1997.

- [23] N.V. Chawla, K.W. Bowyer, L.O. Hall, and W.P. Kegelmeyer, “SMOTE: synthetic minority over-sampling technique,” Journal of Artificial Intelligence Research, vol. 16, pp. 321-357, 2002.

- [24] H. He, Y. Bai, E.A. Garcia, and S. Li, “ADASYN: Adaptive synthetic sampling approach for imbalanced learning,” in 2008 IEEE International Joint Conference on Neural Networks (IEEE World Congress on Computational Intelligence), pp. 1322-1328, Jun. 2008.

- [25] T. Akiba, S. Sano, T. Yanase, T. Ohta, and M. Koyama, “Optuna: A next-generation hyperparameter optimization framework,” in Proceedings of the 25th ACM SIGKDD International Conference on Knowledge Discovery & Data Mining, pp. 2623-2631, Jul. 2019.

- [26] J. Bergstra, D. Yamins, and D. Cox, “Making a science of model search: Hyperparameter optimization in hundreds of dimensions for vision architectures,” in International Conference on Machine Learning, PMLR, 2013.

- [27] J. Bergstra, R. Bardenet, Y. Bengio, and B. Kégl, “Algorithms for hyper-parameter optimization,” in Advances in Neural Information Processing Systems, vol. 24, 2011.

- [28] N. Hansen and A. Ostermeier, “Completely derandomized self-adaptation in evolution strategies,” Evolutionary Computation, vol. 9, no. 2, pp. 159-195, 2001.

- [29] R. Christy, “Customer Churn Prediction 2020, Version 1,” 2020. [Online]. Available: https://www.kaggle.com/code/rinichristy/customer-churn-prediction-2020 (accessed on 20 January 2022).

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).