Submitted: 28 February 2024

Posted: 29 February 2024


Abstract

Keywords:

1. Introduction


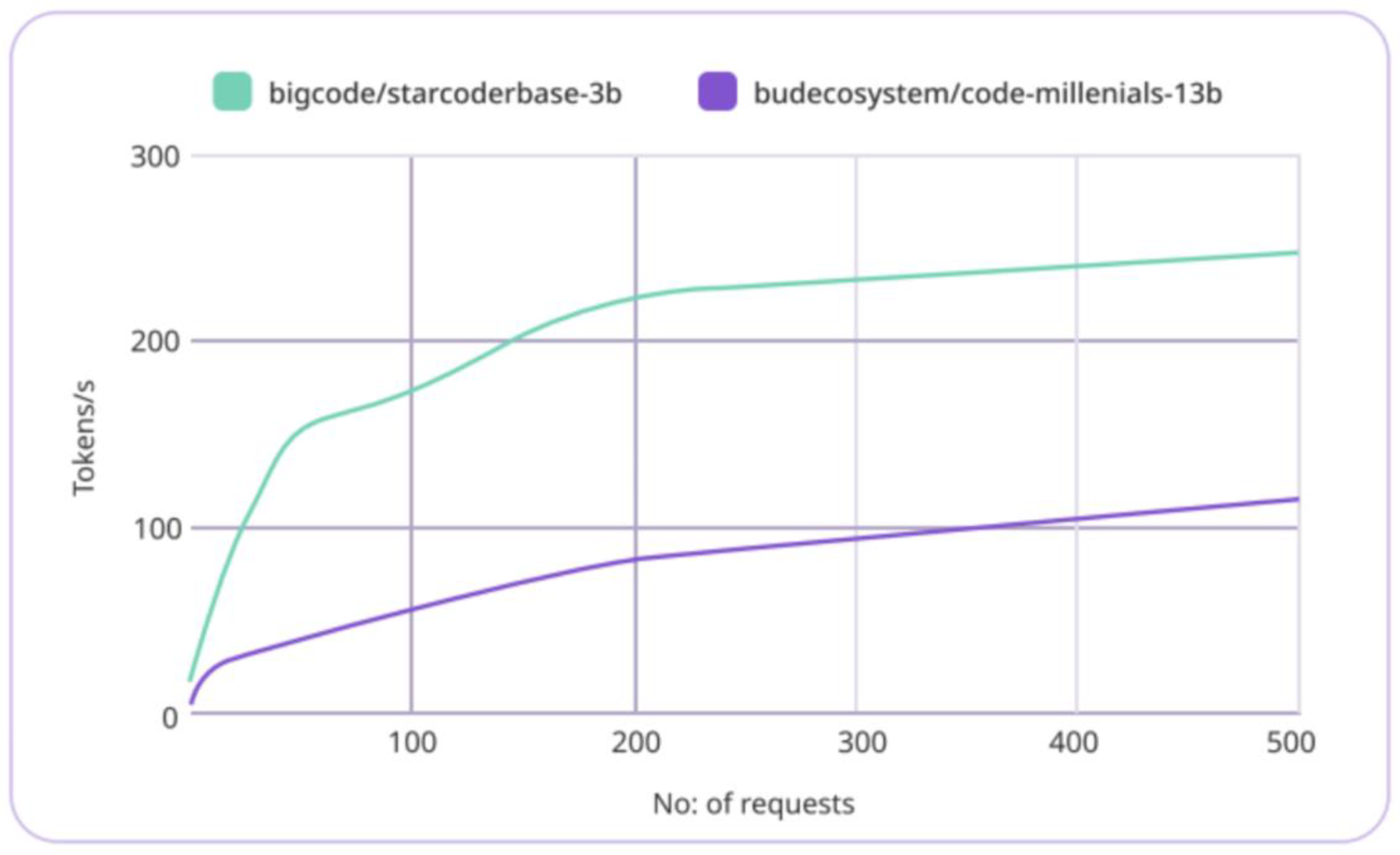

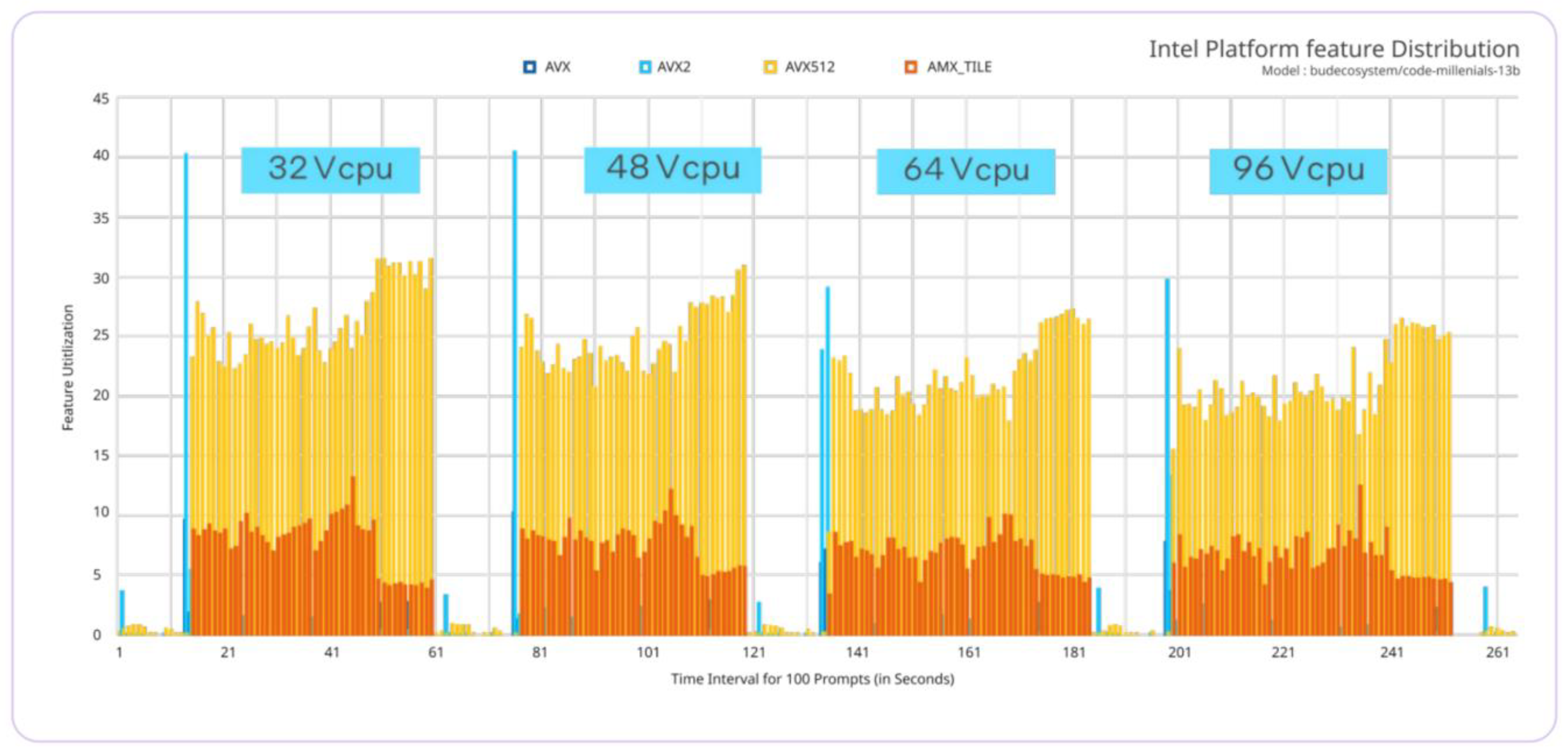

3. Inference Acceleration

- Tiles: Eight two-dimensional registers, each 1 kilobyte in size, that hold large blocks of matrix data.

- Tile Matrix Multiplication (TMUL): TMUL is an accelerator engine attached to the tiles that performs matrix-multiply computations for AI.
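The tile and TMUL descriptions above can be made concrete with a little arithmetic. The sketch below is illustrative only and is not code from this work: it assumes the commonly documented maximum tile shape of 16 rows by 64 bytes (which gives the 1 kilobyte per tile stated above), and the function `tmul_int8` is a hypothetical pure-Python stand-in for a single int8 tile multiply-accumulate. Real hardware expects the second operand in a packed layout, which is ignored here for clarity.

```python
# Assumed tile geometry: 16 rows x 64 bytes per row = 1 KB per tile.
TILE_ROWS = 16
TILE_ROW_BYTES = 64
NUM_TILES = 8

TILE_BYTES = TILE_ROWS * TILE_ROW_BYTES    # bytes in one tile
TOTAL_TILE_BYTES = NUM_TILES * TILE_BYTES  # bytes across all eight tiles


def tmul_int8(a, b, c):
    """Hypothetical model of one tile multiply-accumulate: C += A x B.

    a: m x k int8 values (one tile: at most 16 rows of 64 bytes)
    b: k x n int8 values (logical layout; hardware packing is ignored)
    c: m x n int32 accumulators (at most 16 rows of 16 four-byte values)
    """
    m, k, n = len(a), len(b), len(b[0])
    assert m <= TILE_ROWS and k <= TILE_ROW_BYTES  # A fits in one tile
    assert k * n <= TILE_BYTES                     # B fits in one tile
    assert n * 4 <= TILE_ROW_BYTES                 # a row of int32 C fits
    for i in range(m):
        for j in range(n):
            c[i][j] += sum(a[i][p] * b[p][j] for p in range(k))
    return c


if __name__ == "__main__":
    a = [[1] * 64 for _ in range(16)]  # 16 x 64 int8 operand
    b = [[2] * 16 for _ in range(64)]  # 64 x 16 int8 operand
    c = [[0] * 16 for _ in range(16)]  # 16 x 16 int32 accumulator
    tmul_int8(a, b, c)
    print(TILE_BYTES, TOTAL_TILE_BYTES, c[0][0])  # 1024 8192 128
```

Under these assumptions, one such operation consumes two full 1 KB int8 tiles and updates a third 1 KB tile of 32-bit accumulators, which is why larger matrix products are typically blocked into tile-sized pieces.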

4. Experimental Setup

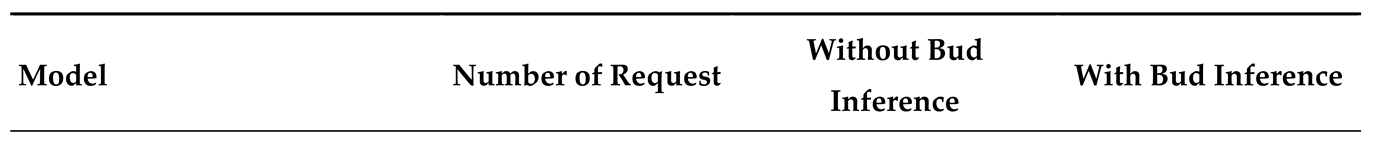

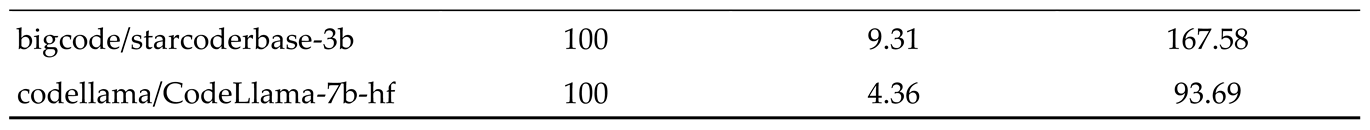

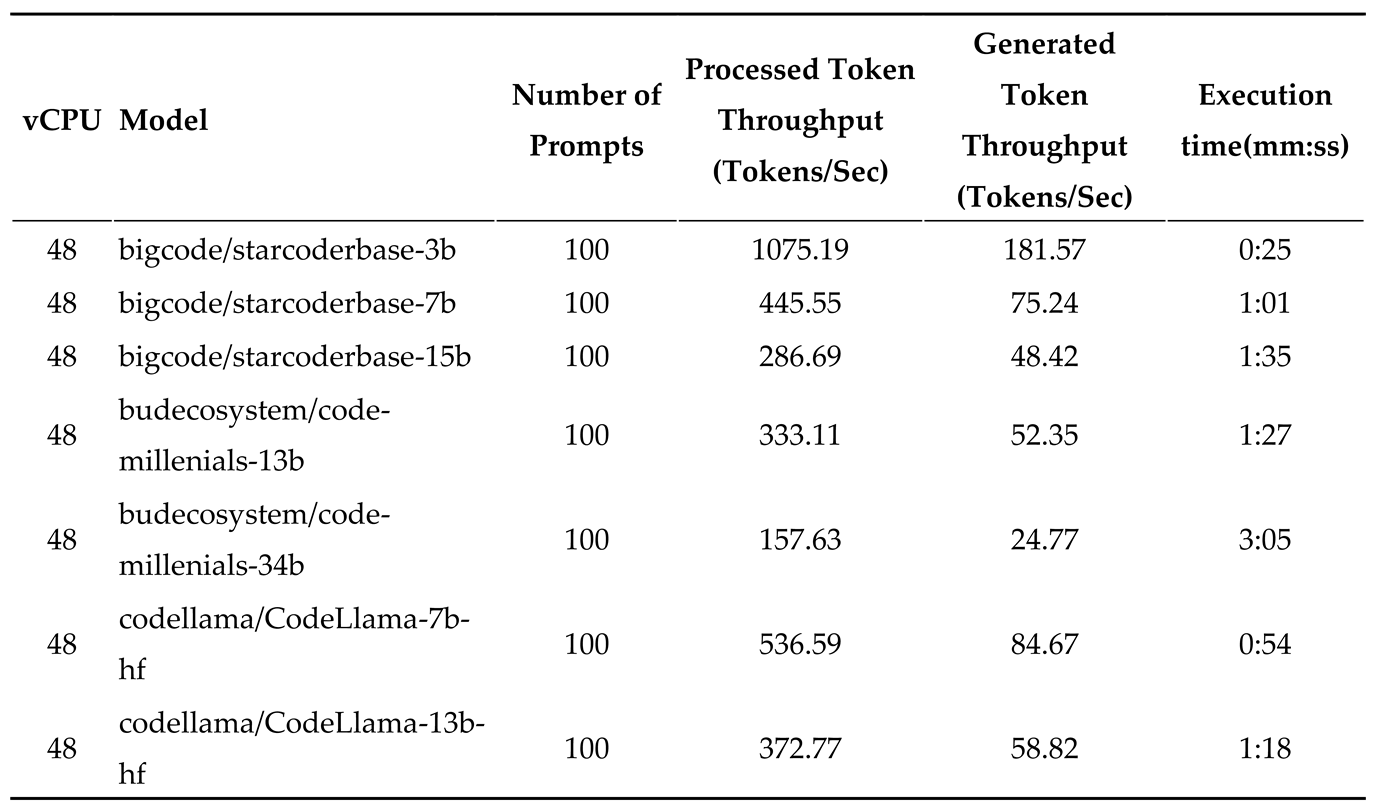

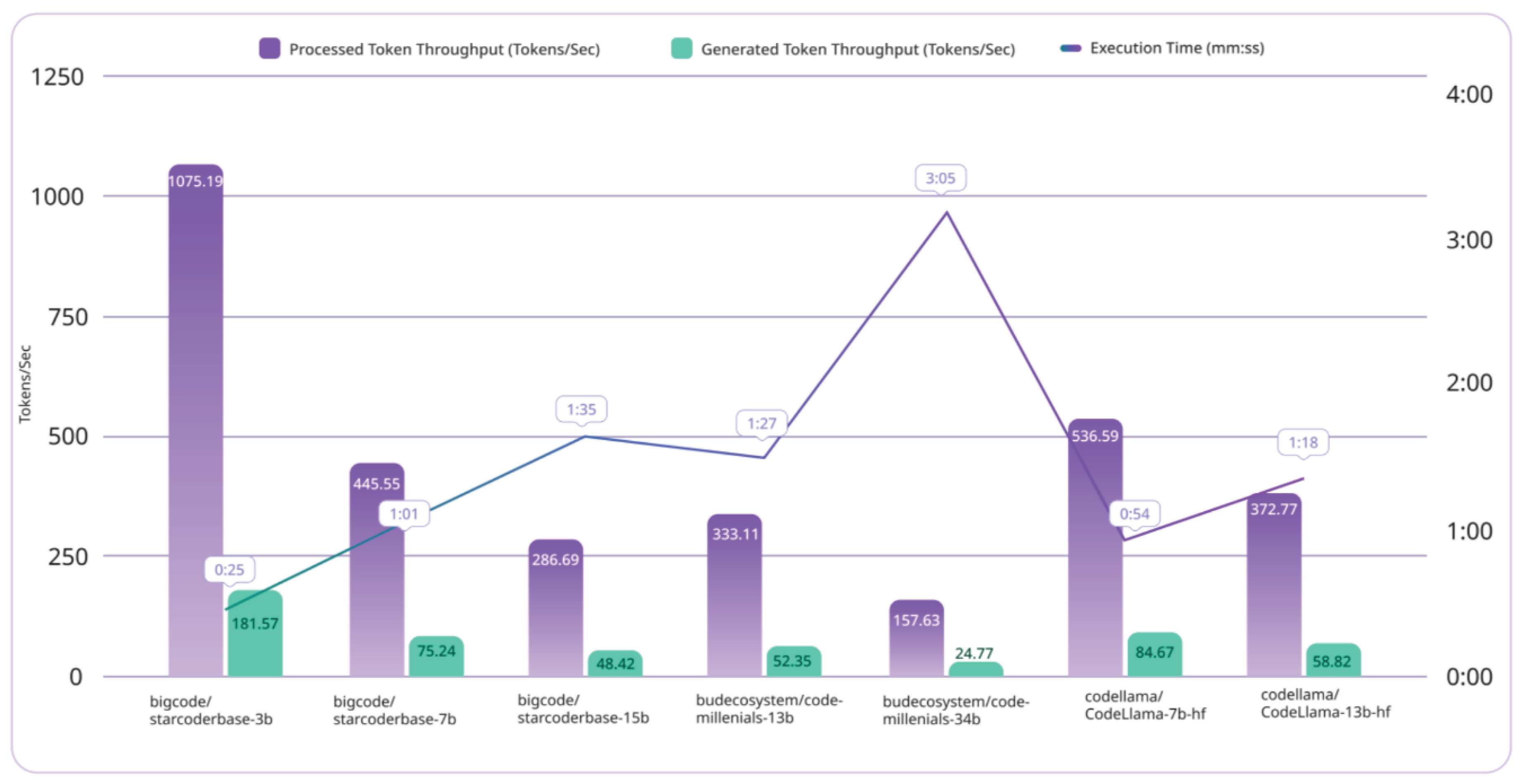

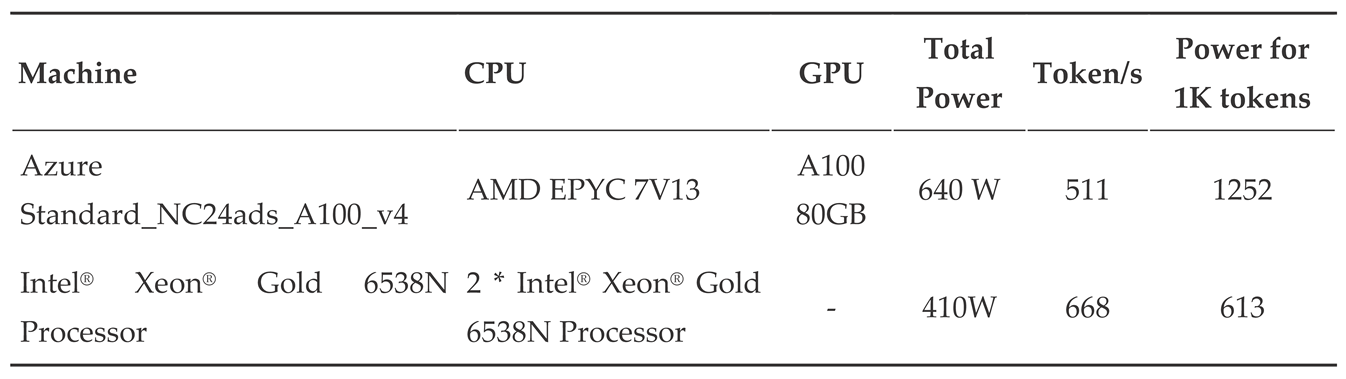

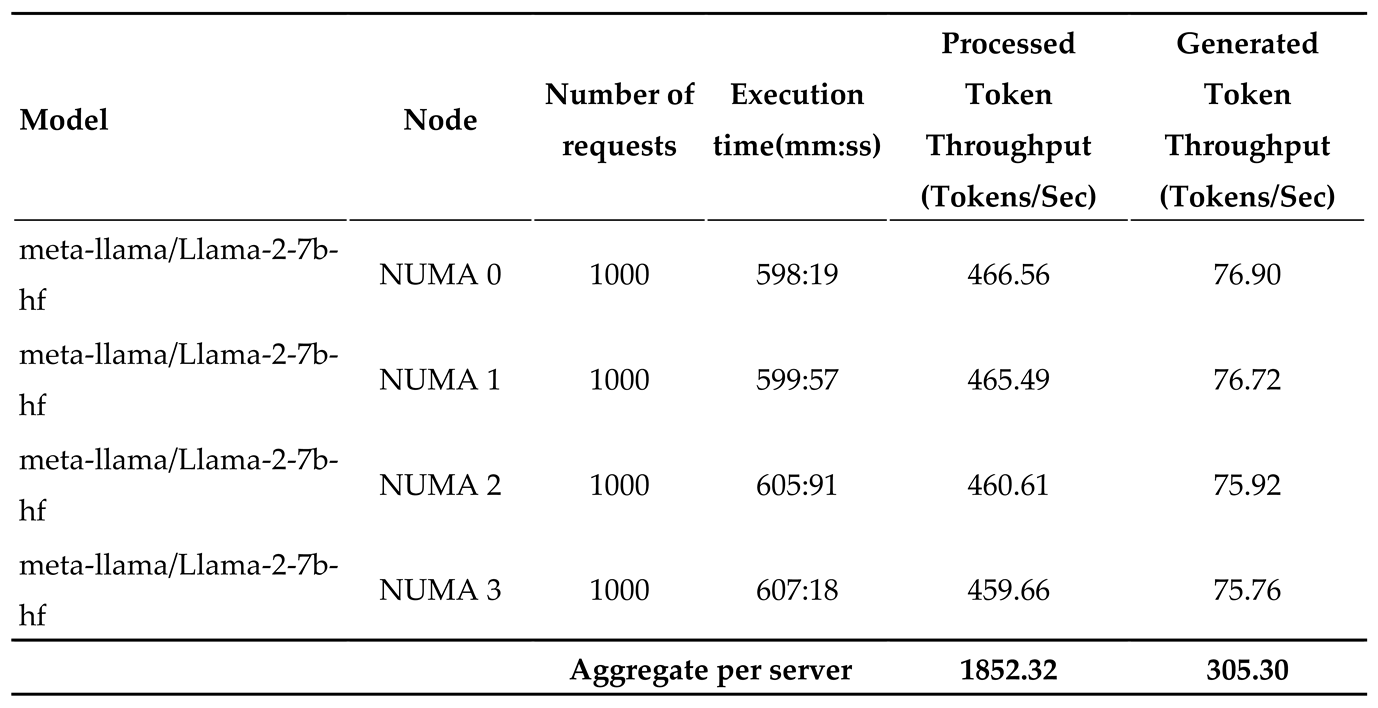

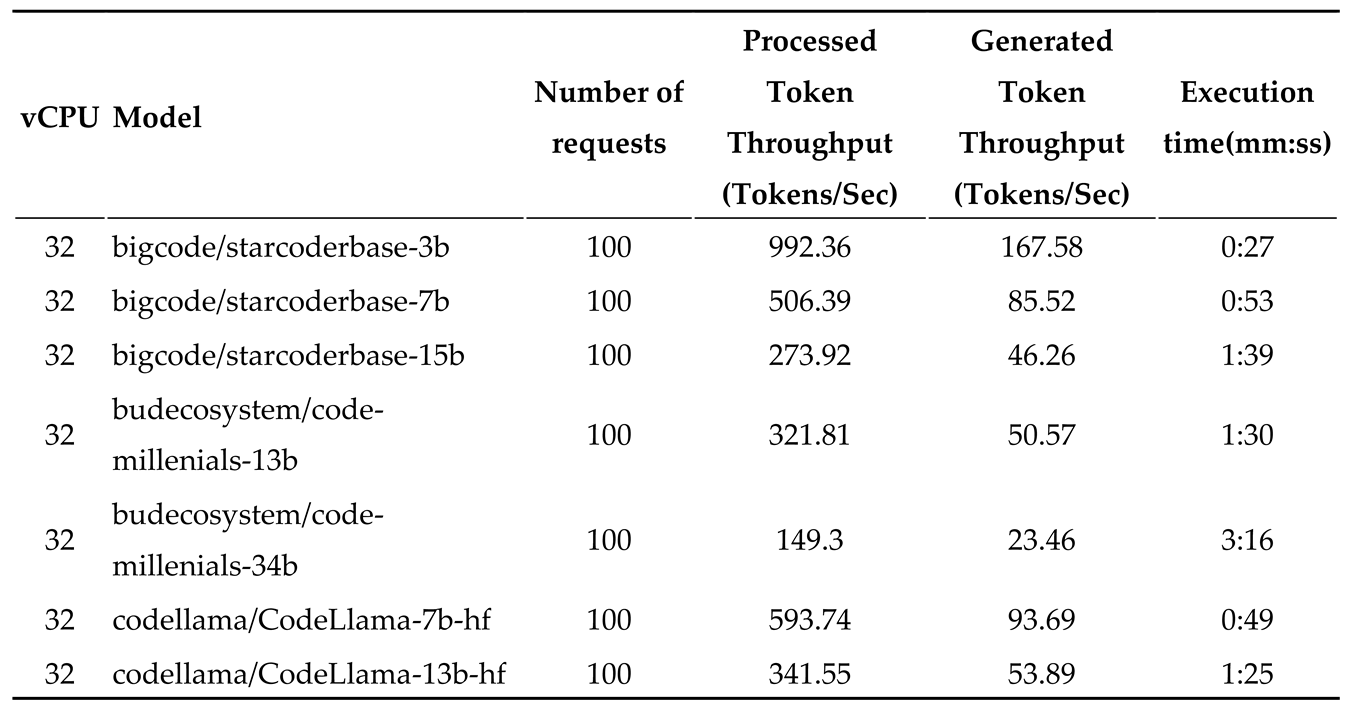

5. Results and Analysis


6. Conclusion

References

- L. Chen, M. Zaharia, and J. Zou, “FrugalGPT: How to Use Large Language Models While Reducing Cost and Improving Performance,” May 2023, Accessed: Feb. 29, 2024. [Online]. Available: https://arxiv.org/abs/2305.05176v1.

- N. Shazeer, “Fast Transformer Decoding: One Write-Head is All You Need,” Nov. 2019, Accessed: Feb. 29, 2024. [Online]. Available: https://arxiv.org/abs/1911.02150v1.

- M. Hahn, “Theoretical Limitations of Self-Attention in Neural Sequence Models,” Trans Assoc Comput Linguist, vol. 8, pp. 156–171, Jun. 2019.

- H. Shen, H. Chang, B. Dong, Y. Luo, and H. Meng, “Efficient LLM Inference on CPUs,” Nov. 2023, Accessed: Feb. 29, 2024. [Online]. Available: https://arxiv.org/abs/2311.00502v2.

- “GPT-4.” Accessed: Feb. 29, 2024. [Online]. Available: https://openai.com/research/gpt-4.

- B. Lin et al., “Infinite-LLM: Efficient LLM Service for Long Context with DistAttention and Distributed KVCache,” Jan. 2024, Accessed: Feb. 29, 2024. [Online]. Available: https://arxiv.org/abs/2401.02669v1.

- W. Kwon et al., “Efficient Memory Management for Large Language Model Serving with PagedAttention,” SOSP 2023 - Proceedings of the 29th ACM Symposium on Operating Systems Principles, vol. 1, pp. 611–626, Oct. 2023.

- C. Lameter, “NUMA (Non-Uniform Memory Access): An Overview,” Queue, vol. 11, no. 7, pp. 40–51, Jul. 2013.

- R. Li et al., “StarCoder: may the source be with you!,” May 2023, Accessed: Feb. 29, 2024. [Online]. Available: https://arxiv.org/abs/2305.06161v2.

- B. Rozière et al., “Code Llama: Open Foundation Models for Code,” Aug. 2023, Accessed: Feb. 29, 2024. [Online]. Available: https://arxiv.org/abs/2308.12950v3.


Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).