Submitted: 19 February 2024

Posted: 20 February 2024

Abstract

Keywords:

1. Introduction

2. Antecedents

3. Methodology to train deep learning models

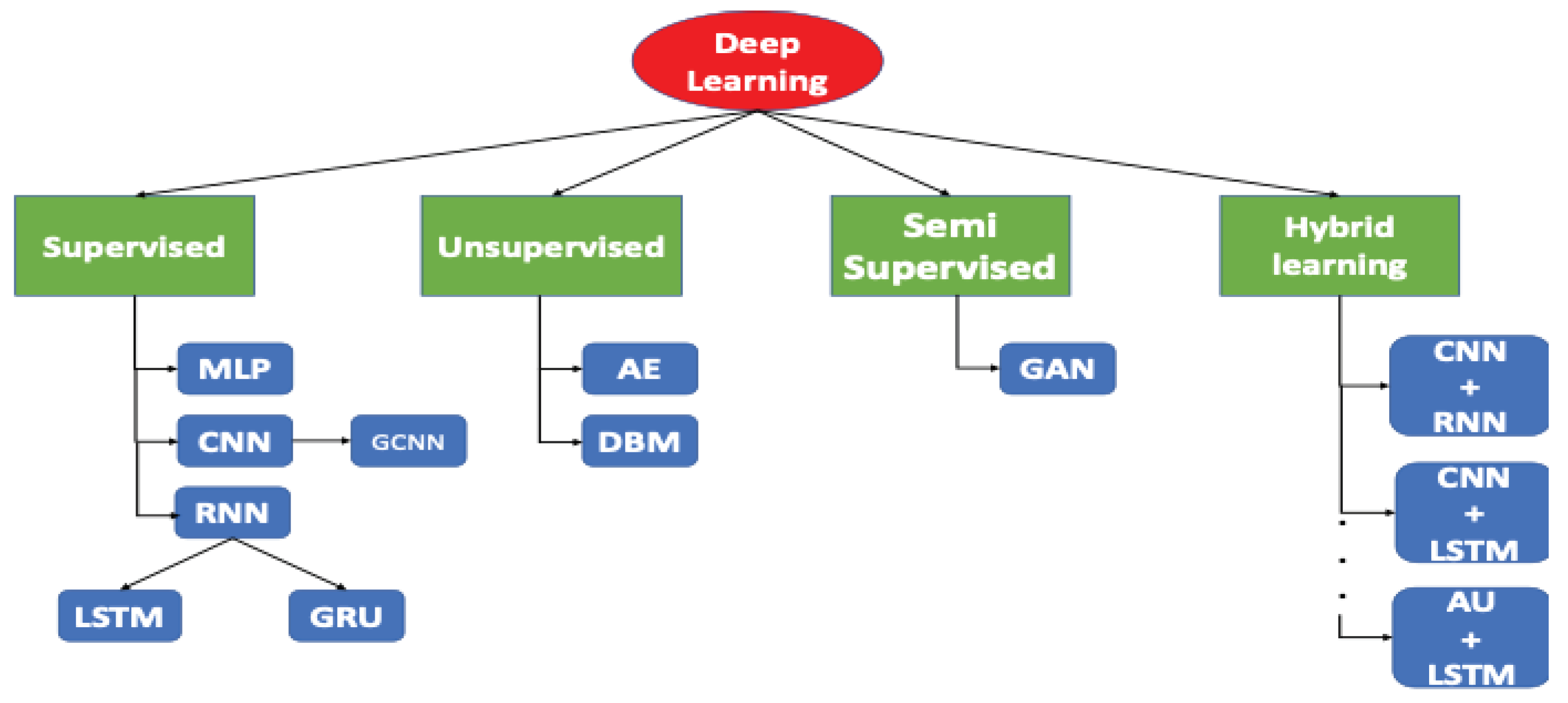

3.1. Deciding what Deep Learning model to use

3.2. Splitting the dataset into train, validation, and test

3.3. Feature scaling
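
As an illustration of the Min-Max scaling chosen for the image use case in Section 4, the following is a minimal sketch that rescales values to the [0, 1] range. The function name `min_max_scale`, its `lo`/`hi` parameters, and the sample pixel values are assumptions made for this example, not part of the original methodology.

```python
def min_max_scale(values, lo=None, hi=None):
    """Rescale a flat list of numbers to the [0, 1] range.

    lo and hi default to the observed minimum and maximum; pass them
    explicitly to reuse bounds computed on the training split.
    """
    lo = min(values) if lo is None else lo
    hi = max(values) if hi is None else hi
    if hi == lo:
        # A constant input carries no contrast; map everything to 0.0.
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


# Hypothetical 8-bit pixel intensities from one image row.
pixels = [0, 64, 128, 255]
scaled = min_max_scale(pixels, lo=0, hi=255)
```

When the bounds are estimated from data rather than fixed by the pixel format, they would normally be computed on the training split only and then reused for validation and test data, so that no information leaks across splits.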

3.4. Choosing the metrics depends on the problem to solve

3.5. Using k-fold validation during the training stage
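
The use cases in Section 4 all report k-fold validation with k=5 and give results as mean ± deviation. The sketch below shows one way such folds and summaries could be produced with the Python standard library. The helper names `k_fold_indices` and `summarize`, the fixed seed, the use of the sample standard deviation, and the per-fold accuracies are assumptions for illustration only.

```python
import random
import statistics


def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_idx, val_idx) pairs for k shuffled, near-equal folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    # Deal the shuffled indices into k folds, round-robin.
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        val_idx = folds[i]
        train_idx = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train_idx, val_idx


def summarize(scores):
    """Return the mean and sample standard deviation of per-fold scores."""
    return statistics.mean(scores), statistics.stdev(scores)


splits = list(k_fold_indices(n_samples=10, k=5))

# Hypothetical per-fold validation accuracies, not results from the paper.
mean_acc, std_acc = summarize([0.80, 0.90, 0.85, 0.95, 0.75])
```

Each sample is used for validation exactly once, and the spread across folds gives a rough indication of how sensitive the model is to the particular split.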

3.6. Avoiding underfitting and overfitting
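
The use cases in Section 4 judge bias by comparing training performance with a reference such as human performance, and variance by comparing training and validation performance. A minimal sketch of that reading follows. The function `diagnose` and the exact definitions of the two gaps are assumptions, since the source does not state a formula. The numbers are taken from the breast thermography use case, with accuracy converted to error.

```python
def diagnose(train_error, val_error, reference_error):
    """Return (avoidable_bias, variance_gap) in percentage points.

    avoidable_bias: how much worse the model is on training data than the
                    reference (negative means better than the reference).
    variance_gap:   how much worse the model is on validation data than
                    on training data.
    """
    avoidable_bias = train_error - reference_error
    variance_gap = val_error - train_error
    return avoidable_bias, variance_gap


# Errors in percent, derived from the accuracies reported in Section 4.
train_err = 100 - 87.67   # training accuracy 87.67%
val_err = 100 - 86.67     # validation accuracy 86.67%
human_err = 100 - 83.0    # CAD-assisted human accuracy near 83%

bias, variance = diagnose(train_err, val_err, human_err)
```

Here the bias gap is about -4.67 points (the model is better than the reference) and the variance gap is about 1 point, which is consistent with the assessment that both are acceptable.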

3.7. Adding new metrics for an in-depth interpretation of the model

3.8. Making a comparison with a baseline

4. Use cases

| Step | Decision | Explanation |
| --- | --- | --- |
| 3.1. | Classification problem with colour images, solved with CNNs | The dataset consists of thermographic images of healthy people and of people with breast cancer. |
| 3.2. | Randomized 80-20 split | There are enough images, and images differ considerably between patients. |
| 3.3. | Scaling: Min-Max | Typical choice when working with images. |
| 3.4. | Metric: accuracy | In a diagnosis problem, we need to measure the number of hits. |
| 3.5. | K-fold validation with k=5 | Usual choice. |
| 3.6. | Bias-variance trade-off. Train: 87.67% ± 6.65, Val: 86.67% ± 12.47, Test: 89.23% ± 5.85 | Bias is acceptable, as human accuracy assisted by a CAD system is near 83% (Keyserlingk et al. 2000). Variance is also acceptable. |
| 3.7. | Other metrics. Specificity: 88%, Sensitivity: 90.53%, Precision: 88.91% | These metrics take similar values, which indicates that the model is very stable. |
| 3.8. | Baselines. VGG16: 68%, VGG19: 51.33%, Inception: 84% | The proposed model performs better than the baselines. |
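
The specificity, sensitivity, and precision reported in step 3.7 all derive from the confusion matrix of a binary classifier. The sketch below shows the standard definitions. The function name `classification_metrics` and the counts are hypothetical and are not the confusion matrix behind the reported figures.

```python
def classification_metrics(tp, fp, tn, fn):
    """Compute common binary-classification metrics from confusion counts."""
    return {
        "accuracy": (tp + tn) / (tp + fp + tn + fn),
        "sensitivity": tp / (tp + fn),   # recall on the positive (sick) class
        "specificity": tn / (tn + fp),   # recall on the negative (healthy) class
        "precision": tp / (tp + fp),     # share of positive predictions that are right
    }


# Hypothetical counts for illustration only.
m = classification_metrics(tp=45, fp=5, tn=40, fn=10)
```

Reporting these alongside accuracy shows whether the errors are concentrated in one class, which accuracy alone can hide when classes are imbalanced.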

| Step | Decision | Explanation |
| --- | --- | --- |
| 3.1. | Classifying chunks of an EEG using a CNN | The recording is split into time-stamped chunks that are modelled as graphs and represented as images. The CNN model discriminates between the stages of a seizure. |
| 3.2. | 80-20 split between patients | Because a person's brain state changes little from one moment to the next, the split is made at the level of the patients' EEGs rather than individual chunks. |
| 3.3. | Scaling: does not apply | As the images are in greyscale, their values are already in the same range. |
| 3.4. | Metric: accuracy | For a classification problem, accuracy should be used. |
| 3.5. | K-fold validation with k=5 | Usual choice. |
| 3.6. | Bias-variance trade-off. Model 1: 93.6%, 88.2%, 87.2%. Model 2: 91.9%, 86.8%, 81.3% | Bias cannot be measured, as there are no official studies of how well physicians distinguish the stages of a seizure. Variance is acceptable. |
| 3.7. | Other metrics. Specificity: 94%, Sensitivity: 94.1%, Precision: 93.2% | These metrics take similar values, which indicates that the model is very stable. |
| 3.8. | Baselines: none. | There is no baseline because the use case is very specific. |
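
Step 3.2 above splits by patient rather than by chunk, so that near-identical consecutive chunks from one recording cannot end up on both sides of the split. A minimal sketch of such a grouped split follows. The function `split_by_patient`, the patient identifiers, and the chunk counts are hypothetical.

```python
import random


def split_by_patient(patient_ids, test_fraction=0.2, seed=0):
    """Return (train_idx, test_idx) such that no patient appears in both.

    patient_ids[i] is the patient that chunk i was recorded from.
    """
    patients = sorted(set(patient_ids))
    random.Random(seed).shuffle(patients)
    n_test = max(1, round(len(patients) * test_fraction))
    test_patients = set(patients[:n_test])
    train_idx = [i for i, p in enumerate(patient_ids) if p not in test_patients]
    test_idx = [i for i, p in enumerate(patient_ids) if p in test_patients]
    return train_idx, test_idx


# Hypothetical data: 5 patients with 4 EEG chunks each.
chunk_patients = [p for p in ["p1", "p2", "p3", "p4", "p5"] for _ in range(4)]
train_idx, test_idx = split_by_patient(chunk_patients)
```

With a purely random split over chunks, test performance would likely be overestimated, because the model would be evaluated on chunks that closely resemble ones it saw during training.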

| Step | Decision | Explanation |
| --- | --- | --- |
| 3.1. | Small brain tumor detection in MR images using a U-Net | The dataset consists of pairs of brain MR images and the masks that isolate the tumor in each image. |
| 3.2. | Randomized 80-20 split | There are enough MR images, and they differ considerably from one another. |
| 3.3. | Scaling: not needed | The images are in greyscale. |
| 3.4. | Loss metric: MSE | In object detection, we need to know how closely the predicted mask resembles the ground-truth mask. |
| 3.5. | K-fold validation with k=5 | Usual choice. |
| 3.6. | Bias-variance trade-off. Train: 10% ± 0.5%, Validation: 11.1% ± 0.5%, Test: 14% | Human error ranges from 28% to 44% (the model performs better), and variance is not high. |
| 3.7. | Other metrics: Dice score. Train: 96.3% ± 0.8%, Validation: 92% ± 1%, Test: 91.6% | The Dice score takes both false positives (FP) and false negatives (FN) into account. |
| 3.8. | Baseline. Dice score: 45% | The proposed model performs better than the baseline. |
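
The MSE loss of step 3.4 and the Dice score of step 3.7 can both be computed directly from a predicted mask and its ground truth. The sketch below uses flat binary masks for brevity. The function names and the toy masks are assumptions for illustration, and real masks would be two-dimensional arrays.

```python
def dice_score(pred, truth):
    """Dice = 2*TP / (2*TP + FP + FN) for flat binary masks (0 or 1)."""
    tp = sum(1 for p, t in zip(pred, truth) if p == 1 and t == 1)
    fp = sum(1 for p, t in zip(pred, truth) if p == 1 and t == 0)
    fn = sum(1 for p, t in zip(pred, truth) if p == 0 and t == 1)
    denom = 2 * tp + fp + fn
    # Two empty masks agree perfectly by convention.
    return 1.0 if denom == 0 else 2 * tp / denom


def mse(pred, truth):
    """Mean squared error between two equally sized flat masks."""
    return sum((p - t) ** 2 for p, t in zip(pred, truth)) / len(truth)


# Toy masks: 2 true positives, 1 false positive, 1 false negative.
truth = [0, 1, 1, 1, 0, 0]
pred = [0, 1, 1, 0, 1, 0]
dice = dice_score(pred, truth)
error = mse(pred, truth)
```

Because true negatives do not enter the Dice formula, the score is not inflated by the large background region that surrounds a small tumor, which is one reason it complements a pixel-wise loss such as MSE.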

5. Conclusions

References

- Almalki, Amani, and Longin Jan Latecki. 2024. “Self-Supervised Learning With Masked Autoencoders for Teeth Segmentation From Intra-Oral 3D Scans.” In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, 7820–30.

- Ballard, Dana H. 1987. “Modular Learning in Neural Networks.” In AAAI, 279–84.

- Belkin, Mikhail, Daniel Hsu, Siyuan Ma, and Soumik Mandal. 2019. “Reconciling Modern Machine-Learning Practice and the Classical Bias–Variance Trade-Off.” Proceedings of the National Academy of Sciences 116(32): 15849–54.

- Boroujeni, Sayed Pedram Haeri, and Abolfazl Razi. 2024. “IC-GAN: An Improved Conditional Generative Adversarial Network for RGB-to-IR Image Translation with Applications to Forest Fire Monitoring.” Expert Systems with Applications 238: 121962.

- Chegraoui, Hamza et al. 2021. “Object Detection Improves Tumour Segmentation in MR Images of Rare Brain Tumours.” Cancers 13(23): 6113.

- Ding, Dan et al. 2024. “A Tunable Diode Laser Absorption Spectroscopy (TDLAS) Signal Denoising Method Based on LSTM-DAE.” Optics Communications: 130327.

- Dunford, Rosie, Quanrong Su, and Ekraj Tamang. 2014. “The Pareto Principle.”

- Ehtisham, Rana et al. 2024. “Computing the Characteristics of Defects in Wooden Structures Using Image Processing and CNN.” Automation in Construction 158: 105211.

- Elman, Jeffrey L. 1990. “Finding Structure in Time.” Cognitive science 14(2): 179–211.

- Goodfellow, Ian et al. 2014. “Generative Adversarial Nets.” Advances in neural information processing systems 27.

- Hochreiter, Sepp, and Jürgen Schmidhuber. 1997. “Long Short-Term Memory.” Neural computation 9(8): 1735–80.

- Hu, Wenxing, Mengshan Li, Haiyang Xiao, and Lixin Guan. 2024. “Essential Genes Identification Model Based on Sequence Feature Map and Graph Convolutional Neural Network.” BMC genomics 25(1): 47.

- Jadon, Aryan, Avinash Patil, and Shruti Jadon. 2022. “A Comprehensive Survey of Regression Based Loss Functions for Time Series Forecasting.” arXiv preprint arXiv:2211.02989.

- Keyserlingk, J. R., P. D. Ahlgren, E. Yu, N. Belliveau, and M. Yassa. 2000. “Functional Infrared Imaging of the Breast.” IEEE Engineering in Medicine and Biology Magazine 19(3): 30–41.

- Khenkar, S. G., S. K. Jarraya, A. Allinjawi, S. Alkhuraiji, N. Abuzinadah, and F. A. Kateb. 2023. “Deep Analysis of Student Body Activities to Detect Engagement State in E-Learning Sessions.” Applied Sciences 13(4): 2591.

- Kipf, Thomas N, and Max Welling. 2016. “Semi-Supervised Classification with Graph Convolutional Networks.” arXiv preprint arXiv:1609.02907.

- Krizhevsky, Alex, Ilya Sutskever, and Geoffrey E Hinton. 2012. “Imagenet Classification with Deep Convolutional Neural Networks.” Advances in neural information processing systems 25: 1097–1105.

- Kuhn, Max, and Kjell Johnson. 2013. “Over-Fitting and Model Tuning.” Applied predictive modeling: 61–92.

- LeCun, Yann, Yoshua Bengio, and Geoffrey Hinton. 2015. “Deep Learning.” nature 521(7553): 436–44.

- Li, Huaguang, and Alireza Baghban. 2024. “Insights into the Prediction of the Liquid Density of Refrigerant Systems by Artificial Intelligent Approaches.” Scientific Reports 14(1): 2343.

- Lu, Minrong, and Xuerong Xu. 2024. “TRNN: An Efficient Time-Series Recurrent Neural Network for Stock Price Prediction.” Information Sciences 657: 119951.

- Maray, Nader et al. 2023. “Transfer Learning on Small Datasets for Improved Fall Detection.” Sensors 23(3): 1105.

- Protić, Danijela et al. 2023. “Numerical Feature Selection and Hyperbolic Tangent Feature Scaling in Machine Learning-Based Detection of Anomalies in the Computer Network Behavior.” Electronics 12(19): 4158.

- Rahman, Md Atiqur, and Yang Wang. 2016. “Optimizing Intersection-over-Union in Deep Neural Networks for Image Segmentation.” In International Symposium on Visual Computing, 234–44.

- Rahman, Zia Ur et al. 2022. “Automated Detection of Rehabilitation Exercise by Stroke Patients Using 3-Layer CNN-LSTM Model.” Journal of Healthcare Engineering 2022.

- Russell, Stuart J, and Peter Norvig. 2016. “Artificial Intelligence: A Modern Approach. Malaysia.”

- Salakhutdinov, Ruslan, and Geoffrey Hinton. 2009. “Deep Boltzmann Machines.” In Proceedings of the Twelth International Conference on Artificial Intelligence and Statistics, Proceedings of Machine Learning Research, eds. David van Dyk and Max Welling. Hilton Clearwater Beach Resort, Clearwater Beach, Florida USA: PMLR, 448–55. https://proceedings.mlr.press/v5/salakhutdinov09a.html.

- Sammut, Claude, and Geoffrey I Webb. 2011. Encyclopedia of Machine Learning. Springer Science & Business Media.

- Sathya, Ramadass, Annamma Abraham, and others. 2013. “Comparison of Supervised and Unsupervised Learning Algorithms for Pattern Classification.” International Journal of Advanced Research in Artificial Intelligence 2(2): 34–38.

- Smolensky, Paul. 1985. “Chapter 6: Information Processing in Dynamical Systems: Foundations of Harmony Theory.” Parallel distributed processing: explorations in the microstructure of cognition 1.

- Srivastava, Nitish et al. 2014. “Dropout: A Simple Way to Prevent Neural Networks from Overfitting.” The journal of machine learning research 15(1): 1929–58.

- Vaswani, Ashish et al. 2017. “Attention Is All You Need.” Advances in neural information processing systems 30.

- Zhou, Fangzheng et al. 2024. “Unified CNN-LSTM for Keyhole Status Prediction in PAW Based on Spatial-Temporal Features.” Expert Systems with Applications 237: 121425.

| Model type | Nature of data | Use case |
| --- | --- | --- |
| MLP | Tabular data | Prediction of a continuous value |
| CNNs | Image data with spatial relationships | Image Classification, Object Detection, Segmentation |
| GCNNs | Data represented as graphs or networks | Graph-structured Data, Social Network Analysis |
| RNNs | Temporal sequences, sequential data | Sequential Data Modeling, Time Series Prediction |
| Autoencoders | Unlabeled images | Data Compression, Denoising, Anomaly Detection |
| Transformers | Sequential data, particularly text data | Language Translation, Text Generation |
| GANs | Often images, but can be applied to other domains | Image Generation, Style Transfer, Data Augmentation |
| DRL | Sequential decision-making tasks with rewards | Game Playing, Robotics, Autonomous Systems |
| Hybrid models | Mixed data types or tasks | Multiple types of data/modalities or tasks |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).