Submitted: 09 February 2024

Posted: 12 February 2024

Abstract

Keywords:

1. Introduction

2. Related works

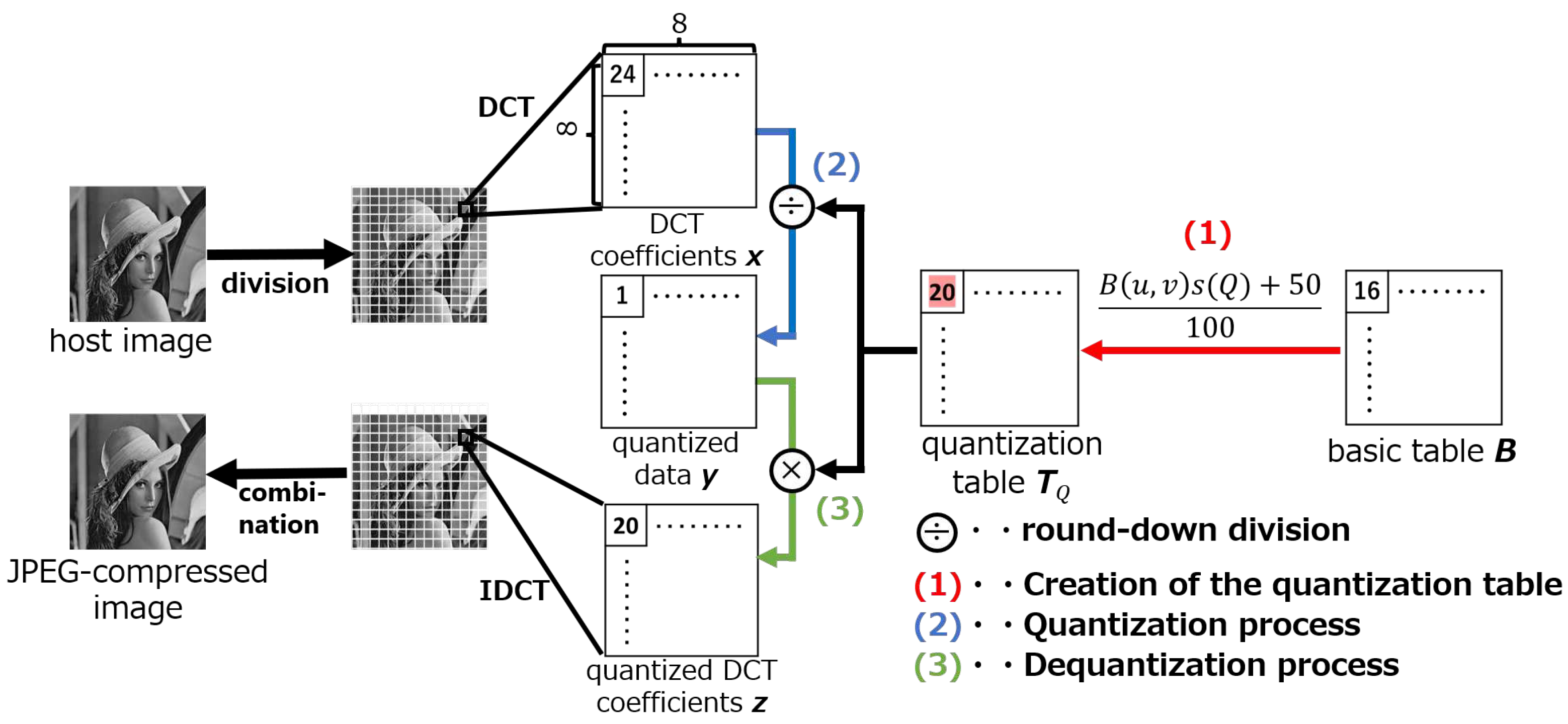

2.1. Quantization of JPEG compression

2.1.1. Creation of the quantization table

2.1.2. Quantization process

2.1.3. Dequantization process

2.2. Implementation of quantization in related works

3. Proposed method

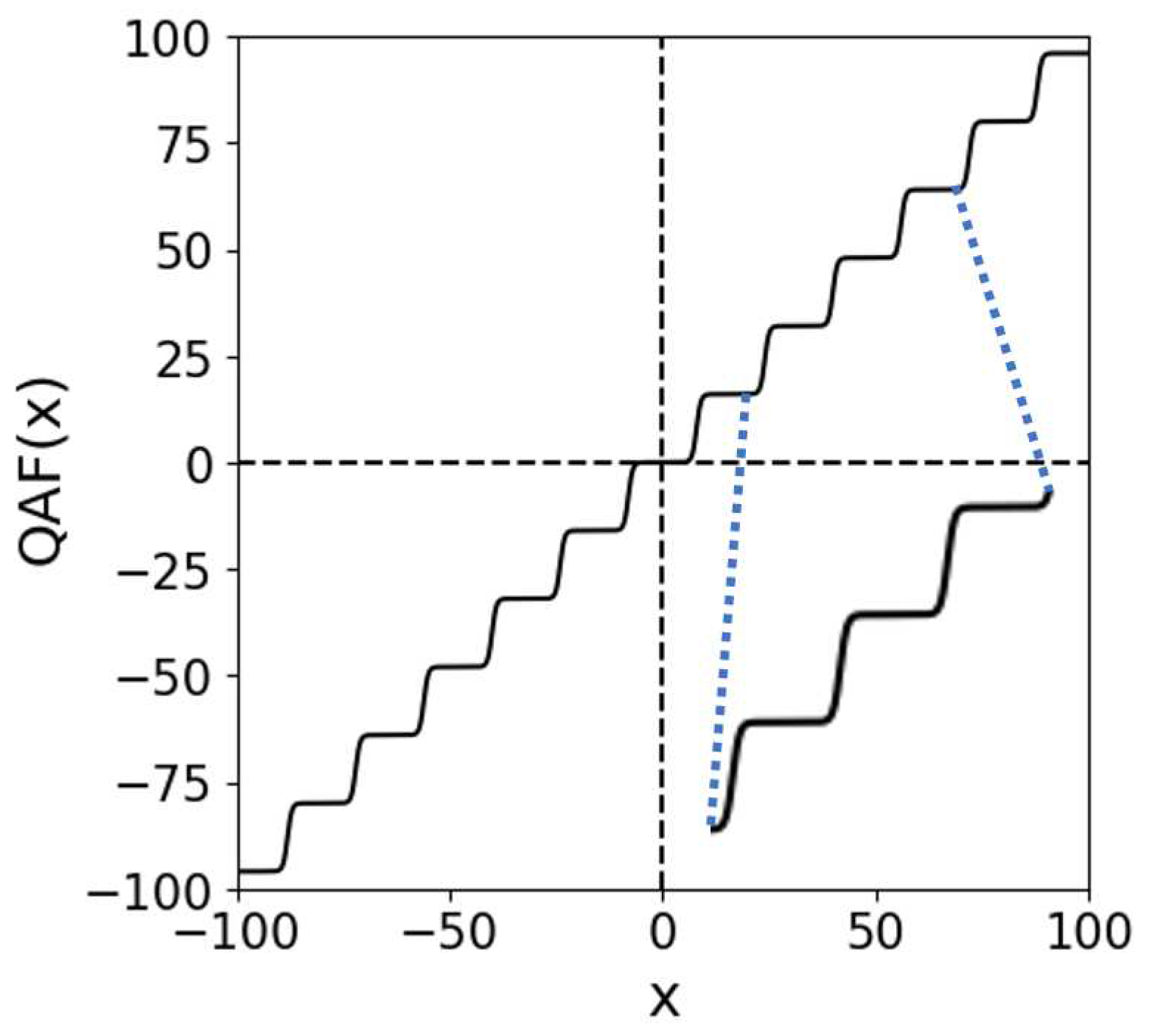

3.1. Quantized activation function

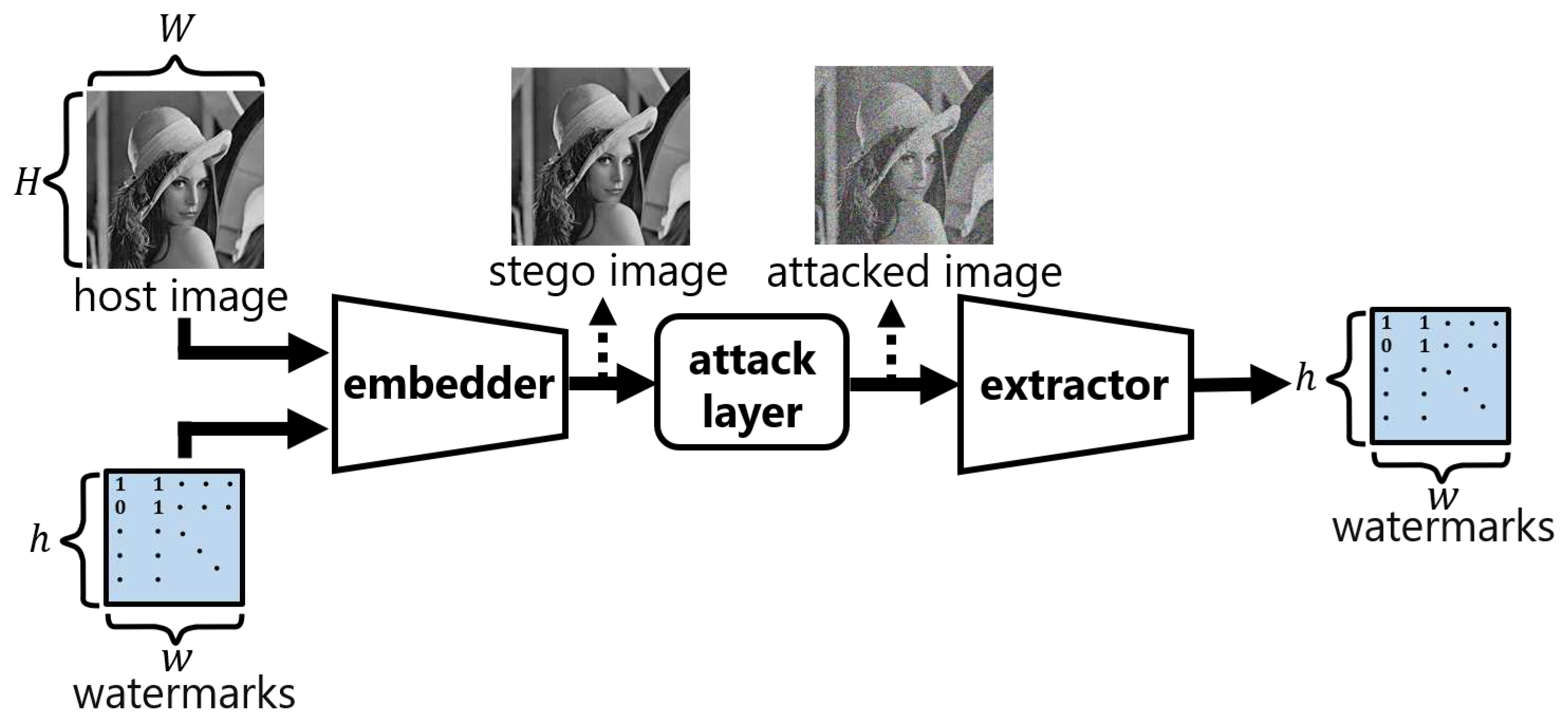

3.2. ReDMark

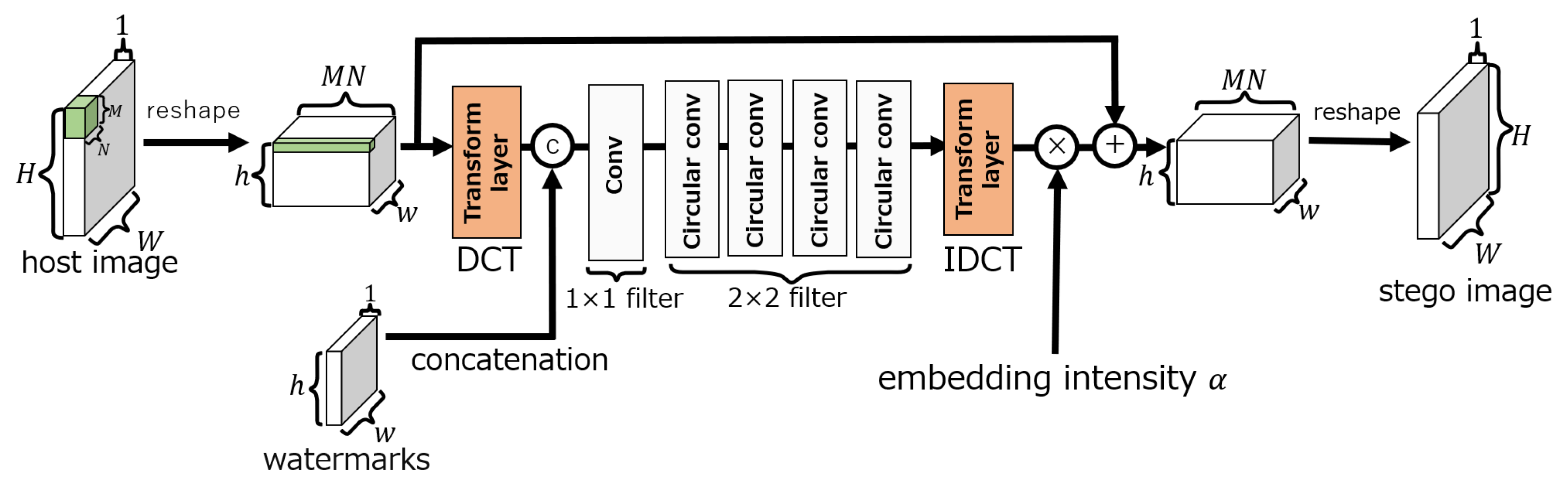

3.3. Embedding network

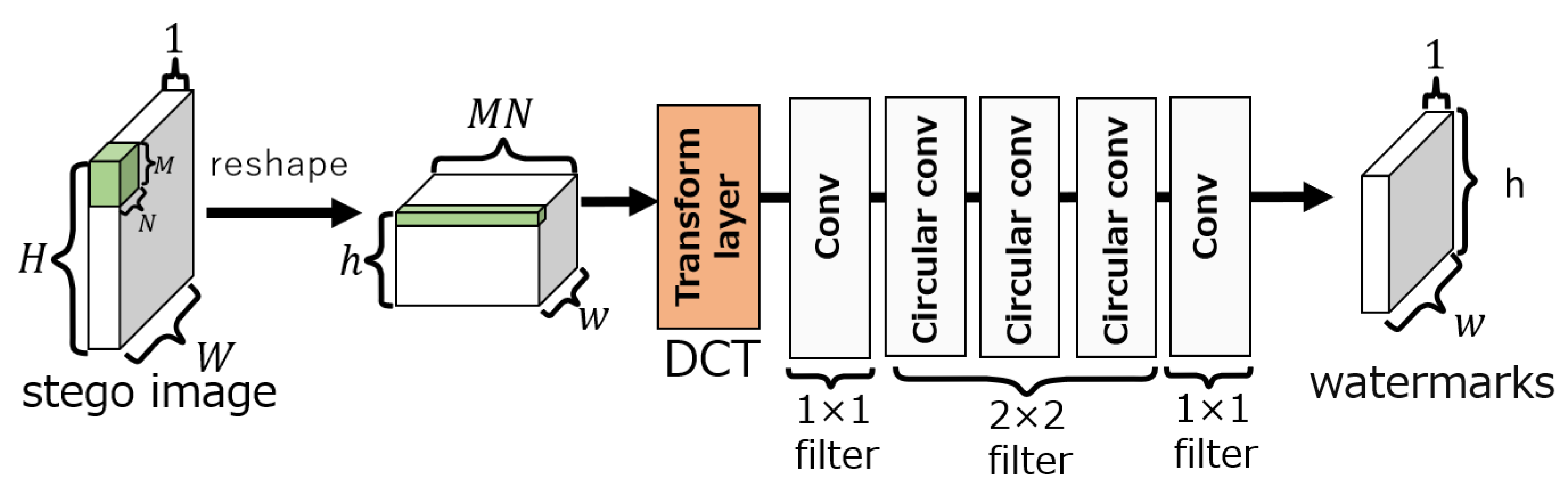

3.4. Extraction network

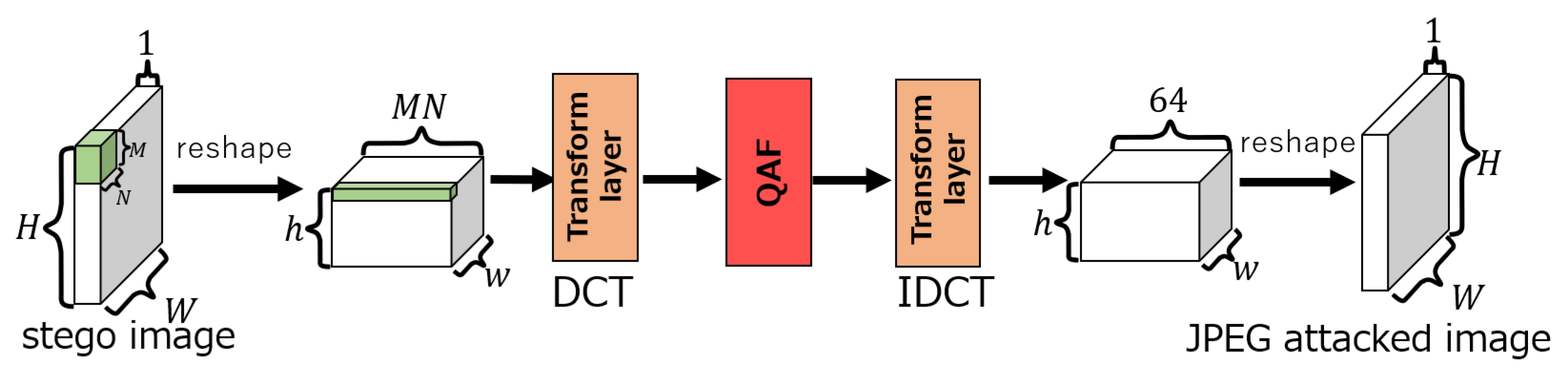

3.5. Attack layer

3.6. Training method

4. Computer Simulation

4.1. Evaluation of the QAF

4.2. Evaluation of the proposed attack layer

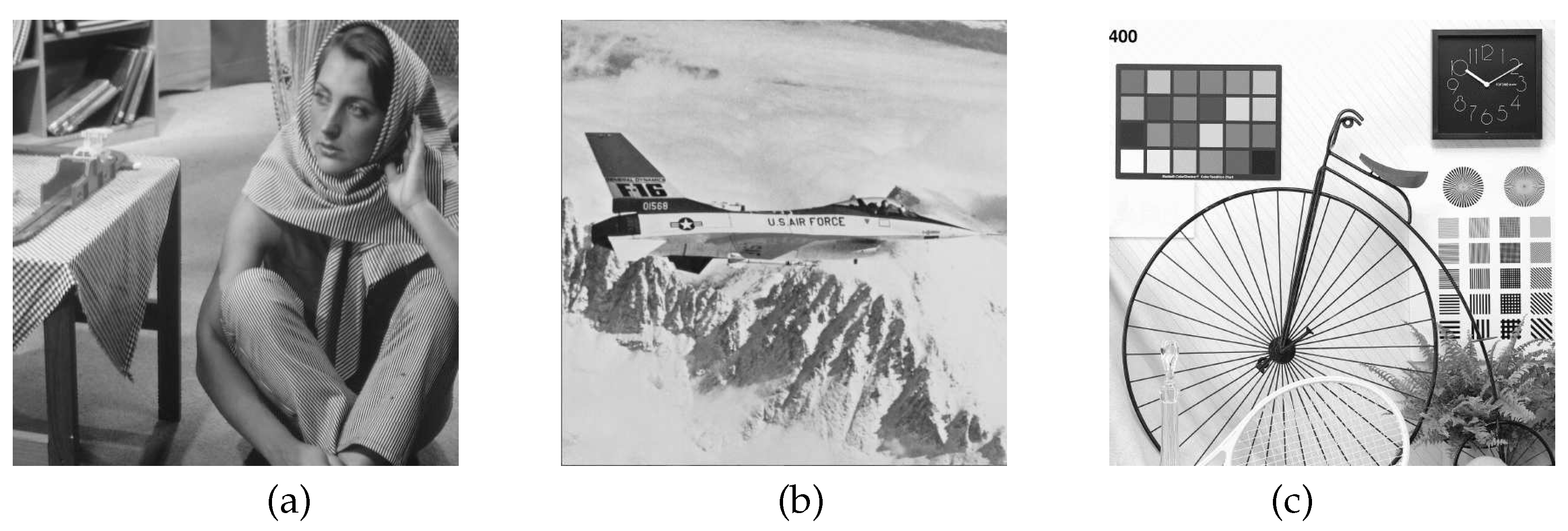

4.2.1. Experimental conditions

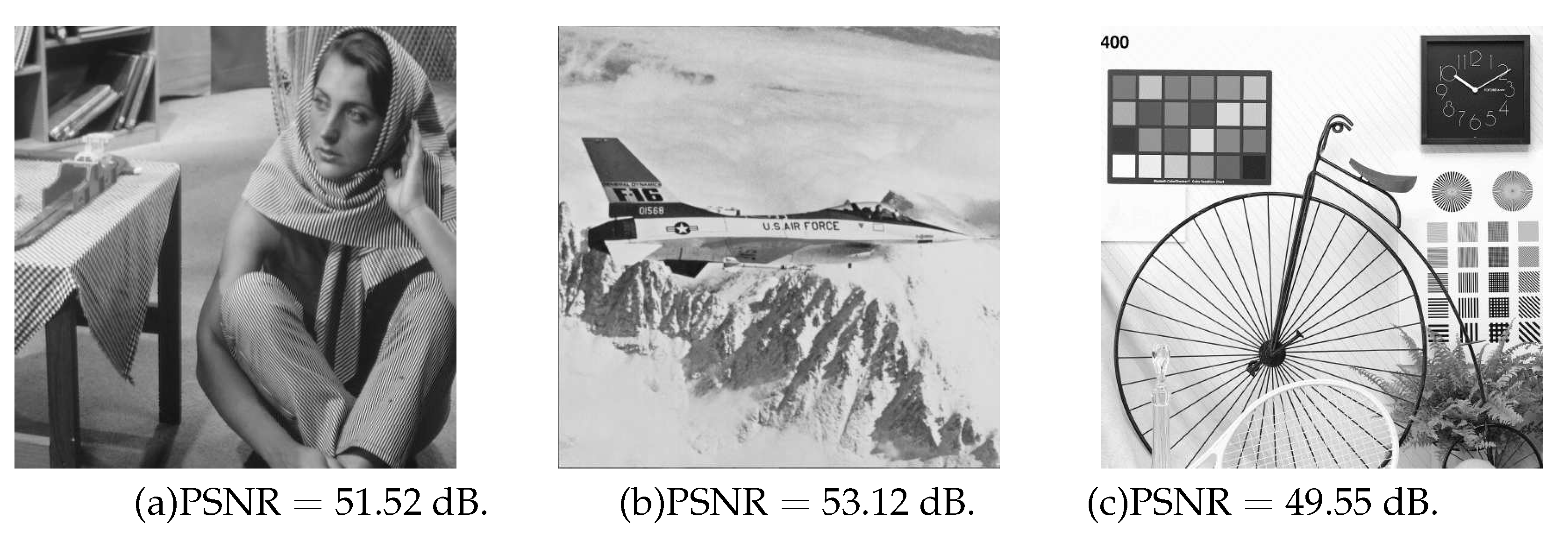

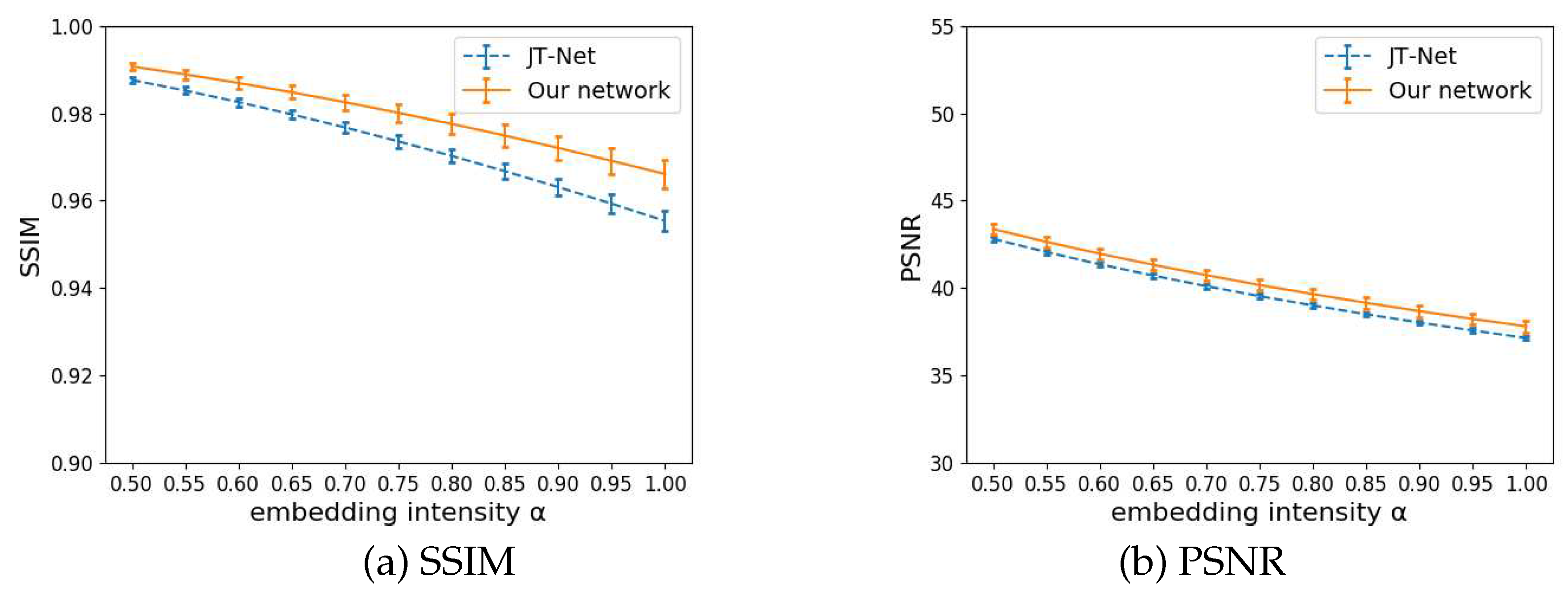

4.2.2. Evaluation of the image quality

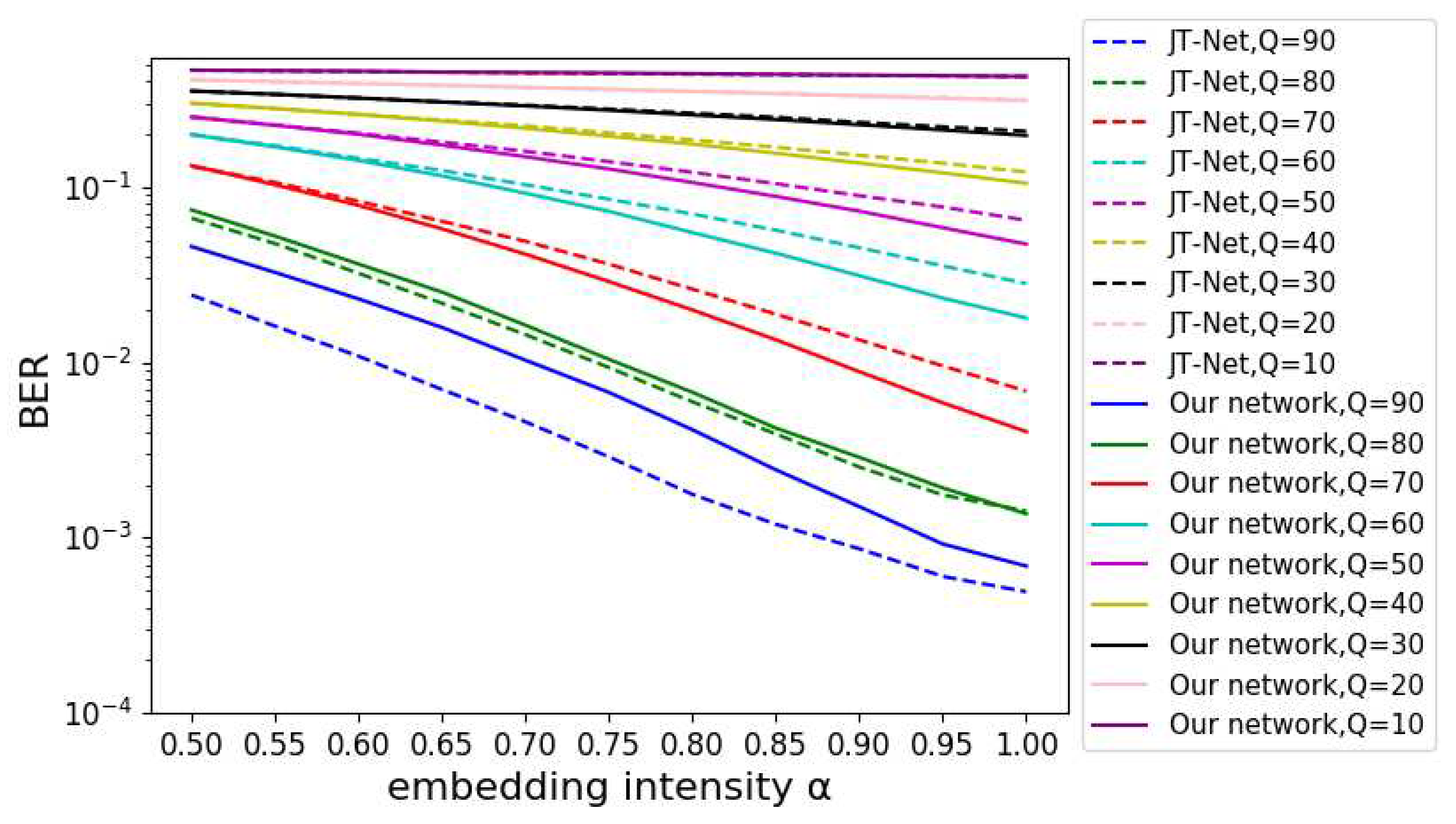

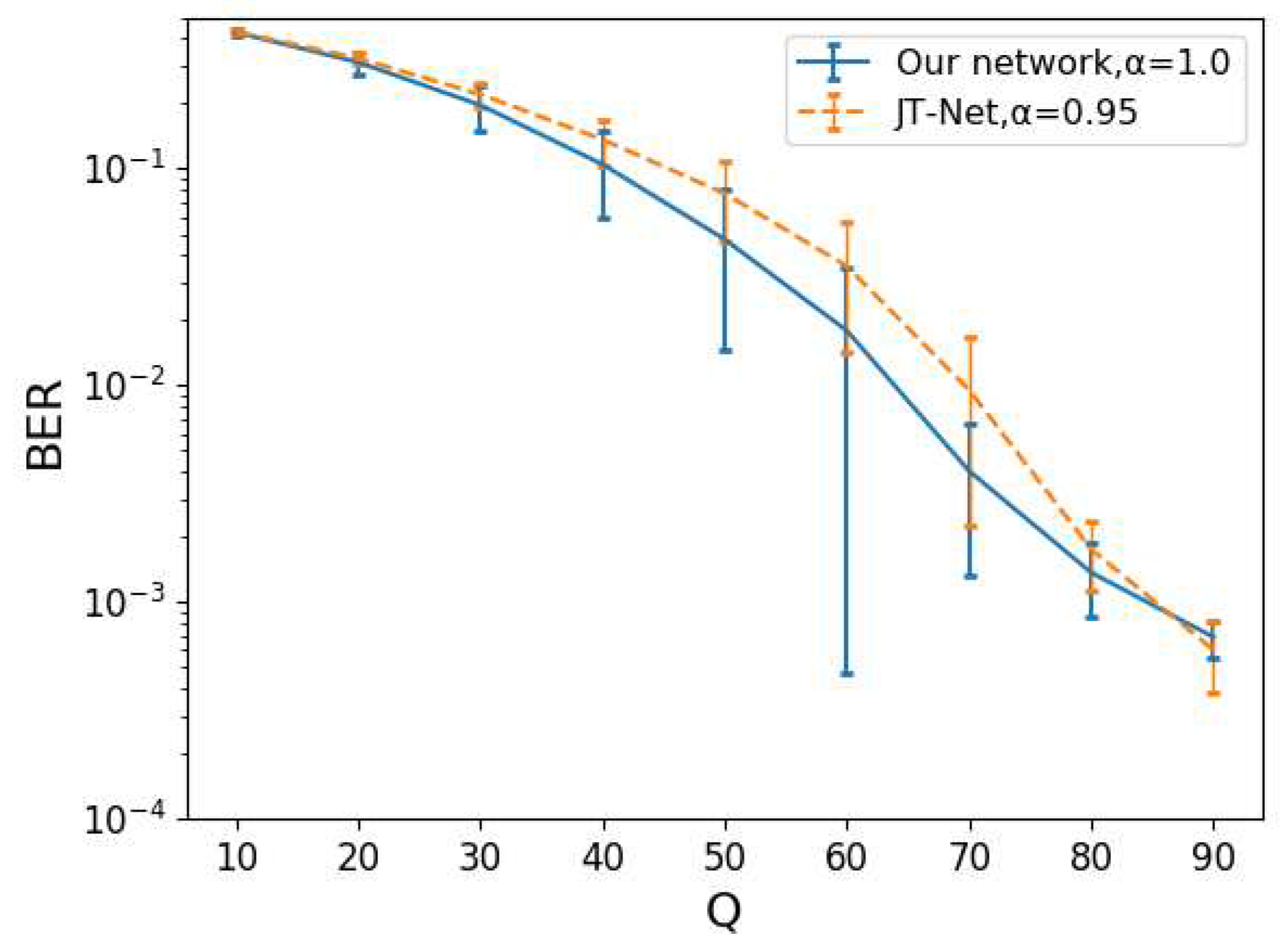

4.2.3. Evaluation of the BER

5. Conclusion

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- J. Zhu, R. Kaplan, J. Johnson, L. Fei-Fei, “HiDDeN: Hiding Data With Deep Networks,” European Conference on Computer Vision, 2018.

- I. Hamamoto, M. Kawamura, “Image watermarking technique using embedder and extractor neural networks,” IEICE Transactions on Information and Systems, vol. E102-D, no. 1, pp. 19–30, 2019.

- I. Hamamoto, M. Kawamura, “Neural Watermarking Method Including an Attack Simulator against Rotation and Compression Attacks,” IEICE Transactions on Information and Systems, vol. E103-D, no. 1, pp. 33–41, 2020.

- M. Ahmadi, A. Norouzi, S. M. Reza Soroushmehr, N. Karimi, K. Najarian, S. Samavi, A. Emami, “ReDMark: Framework for residual diffusion watermarking based on deep networks,” Expert Systems with Applications, vol. 146, 2020.

- Independent JPEG Group, http://www.ijg.org/, (Accessed 2023-05-21).

- G. E. Hinton, R. R. Salakhutdinov, “Reducing the Dimensionality of Data with Neural Networks,” Science, vol. 313, pp. 504–507, 2006.

- D. E. Rumelhart, G. E. Hinton, R. J. Williams, “Learning representations by back-propagating errors,” Nature, vol. 323, no. 6088, pp. 533–536, 1986.

- D. P. Kingma, J. L. Ba, “Adam: A method for stochastic optimization,” Proceedings of 3rd international conference on learning representations, 2015.

- V. Nair, G. E. Hinton, “Rectified linear units improve restricted boltzmann machines,” Proceedings of the 27th international conference on machine learning, pp. 807–814, 2010.

- K. He, X. Zhang, S. Ren, J. Sun, “Delving deep into rectifiers: Surpassing human-level performance on ImageNet classification,” Proceedings of the IEEE international conference on computer vision, pp. 1026–1034, 2015.

- Computer Vision Group at the University of Granada, Dataset of standard 512 × 512 grayscale test images, http://decsai.ugr.es/cvg/CG/base.htm, (Accessed 2023-06-09).

- A. Krizhevsky, V. Nair, G. Hinton, The CIFAR-10 dataset, https://www.cs.toronto.edu/~kriz/cifar.html, (Accessed 2023-06-09).

- D. Clevert, T. Unterthiner, S. Hochreiter, “Fast and accurate deep network learning by exponential linear units (ELUs),” arXiv preprint arXiv:1511.07289, 2015.

- Y. Shingo, M. Kawamura, “Neural Network Based Watermarking Trained with Quantized Activation Function,” Asia Pacific Signal and Information Processing Association Annual Summit and Conference 2022, pp. 1608–1613, 2022.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).