Submitted: 29 January 2024

Posted: 29 January 2024


Abstract

Keywords:

1. Introduction

2. Related Work

2.1. Onsite Team Collaboration Data

2.2. Multi-Modal Speaker Diarization

2.3. Individual Well-Being Data Analysis

3. Study Design

3.1. Teamwork Setting

Study Context

Teams

3.2. Data Collection

Recording

Surveys

3.3. Data Preparation

3.3.1. Data Preprocessing

3.3.2. Speaker Diarization

Face Detection

Face Tracking

Face Cropping

Active Speaker Detection

Scores-to-Speech Segment Transformation

Face-Track Clustering

RTTM File

3.3.3. Audio Feature Calculation

3.3.4. Qualitative Assessment of the Software

Audiovisual Speaker Diarization Evaluation

Audio Feature Calculation Evaluation

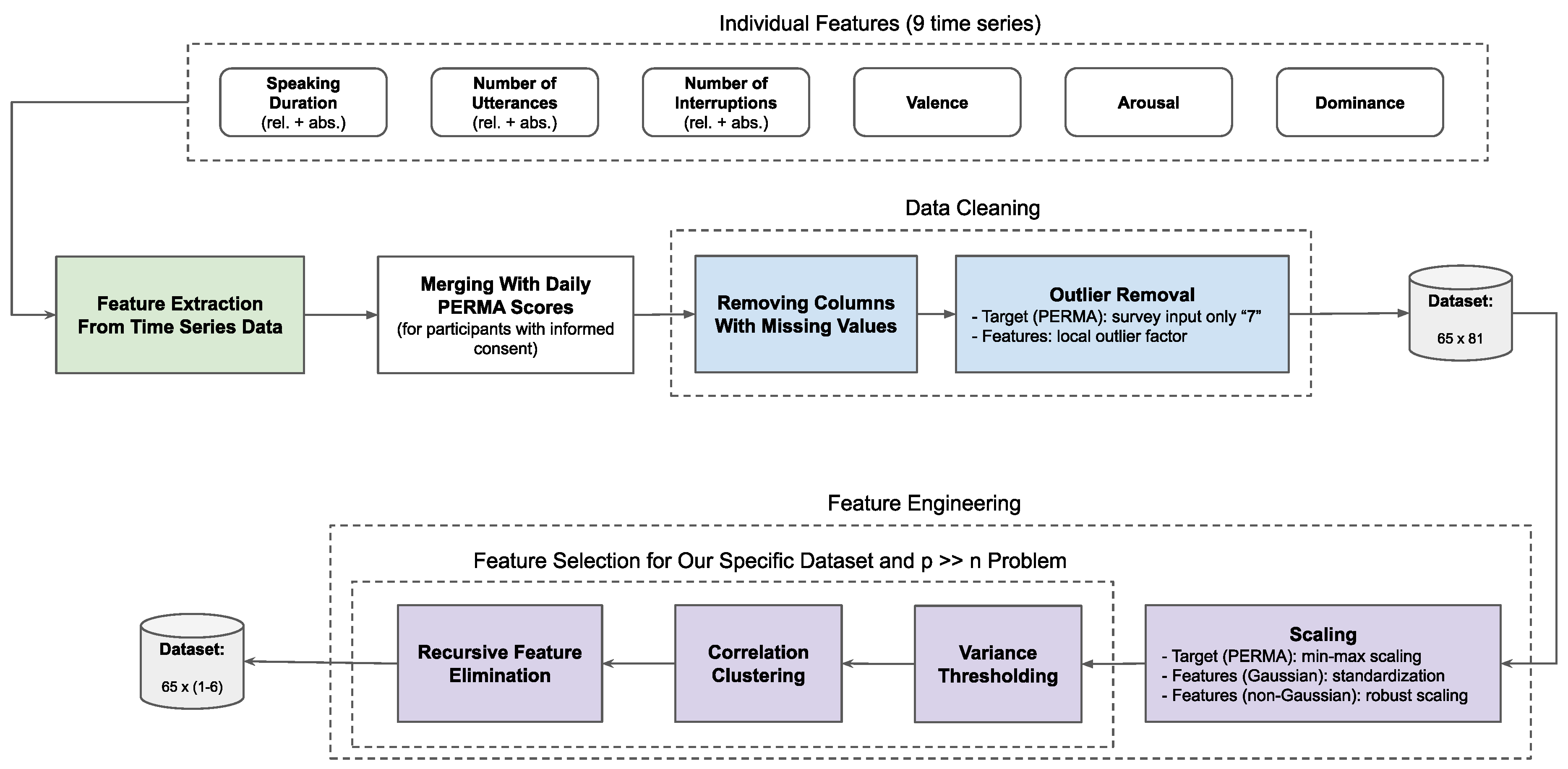

3.3.5. Feature Extraction, Data Cleaning, and Feature Engineering

Feature Extraction from Time Series Data

Outlier Removal

Scaling

Feature Selection

3.4. Data Analysis

3.4.1. Correlation of Features With PERMA Pillars

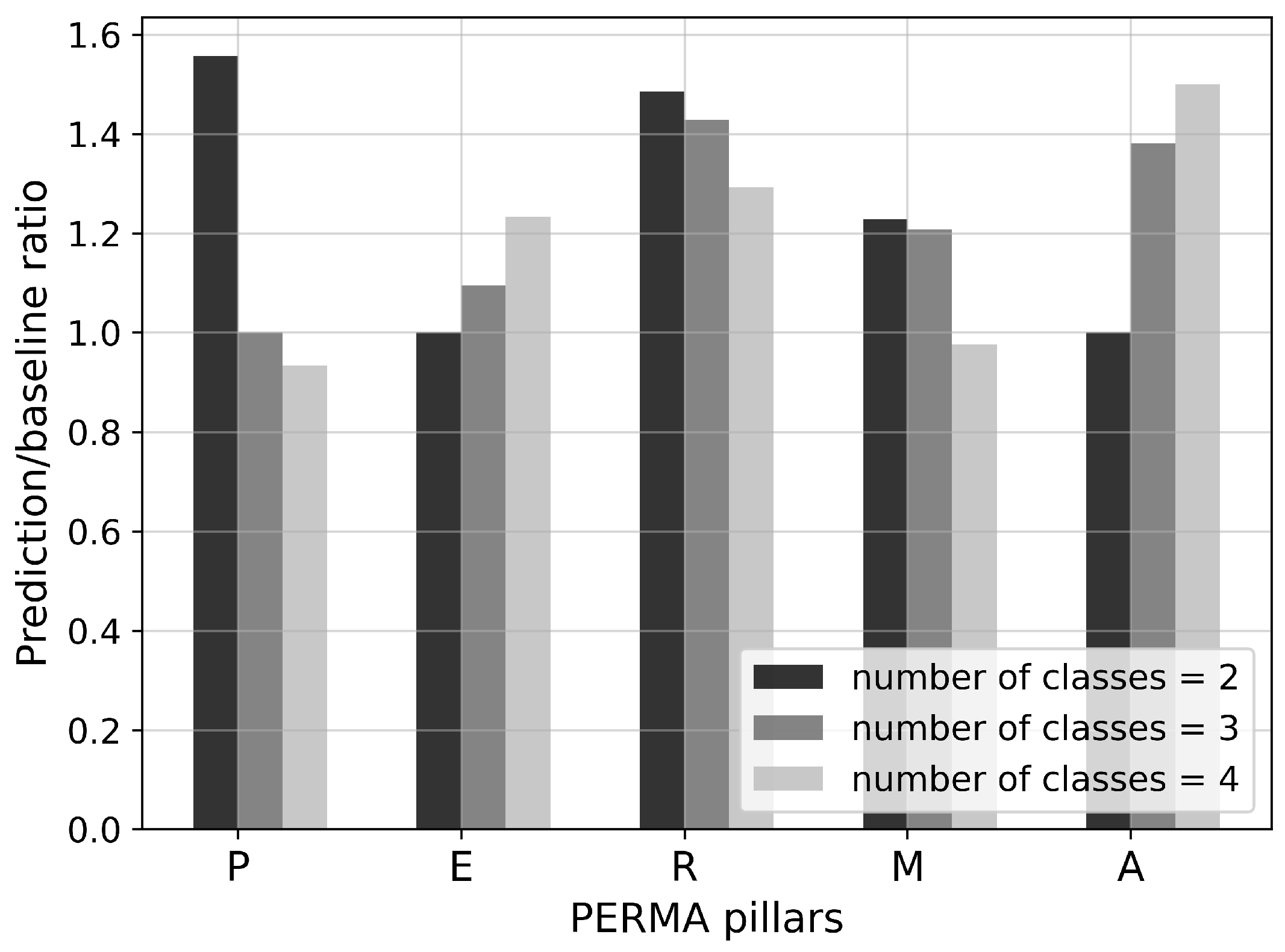

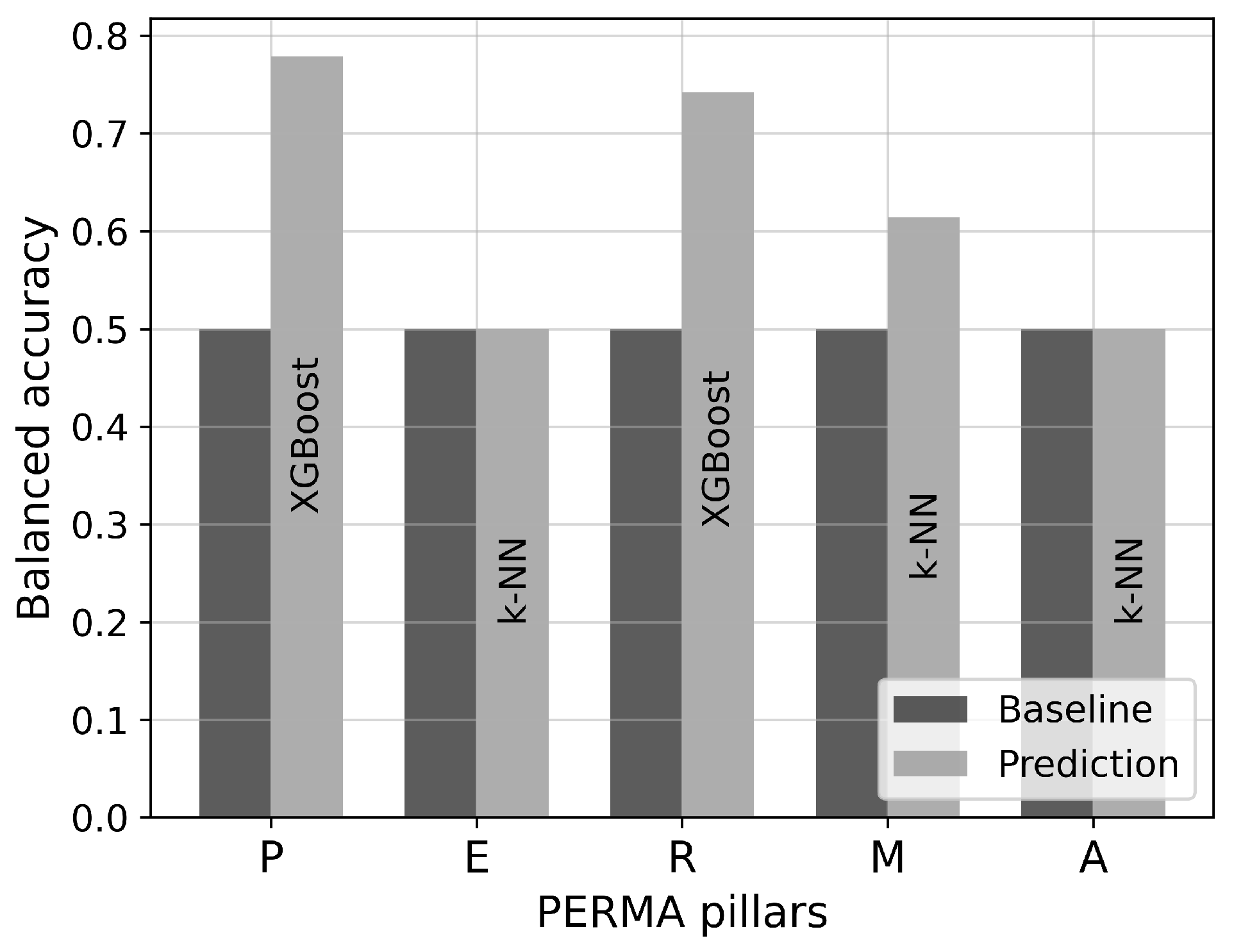

3.4.2. Evaluation of Classification Models

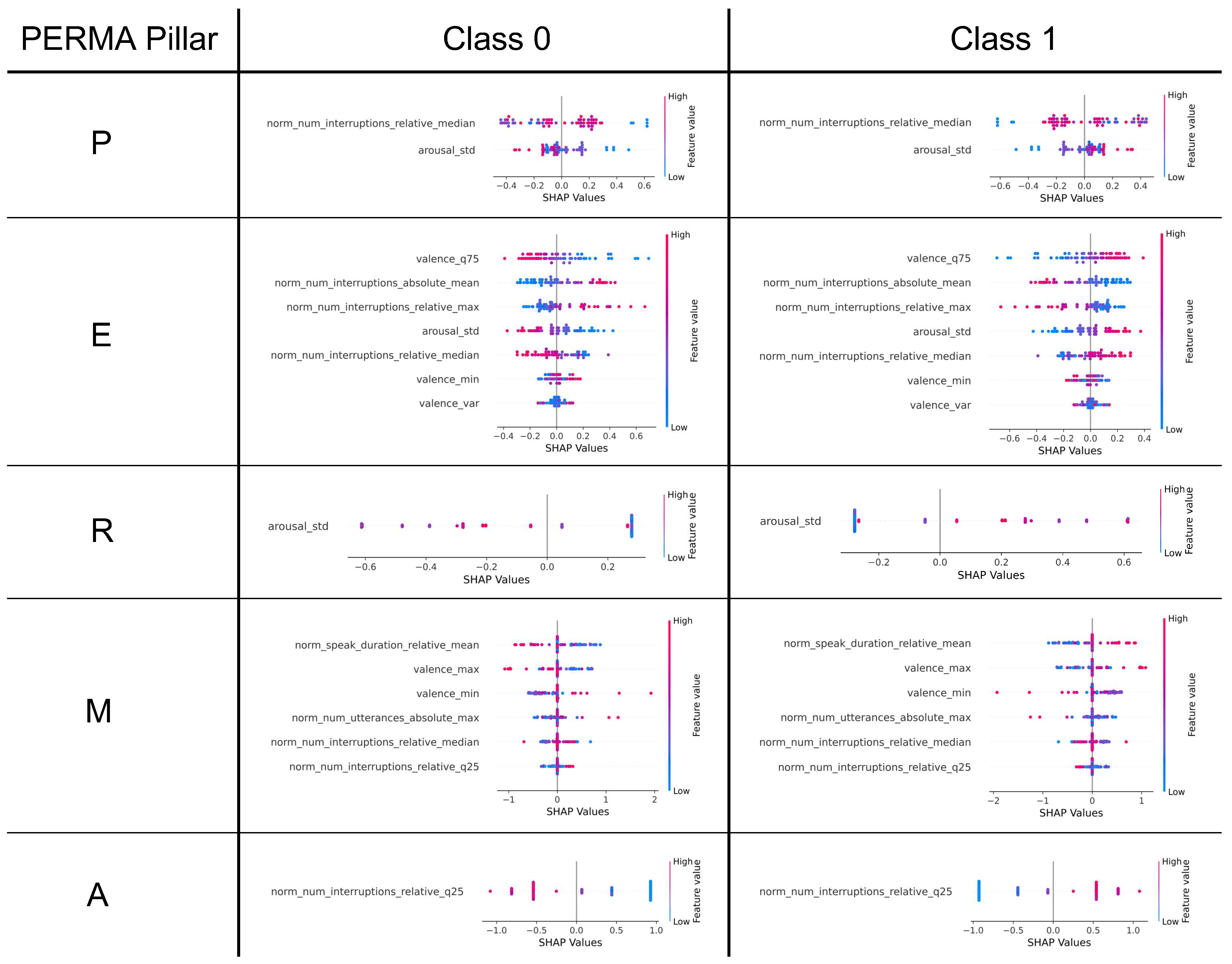

3.4.3. Feature Importance of Classification Models

4. Results

4.1. Selected Features for Classification Models

4.2. Evaluation of Classification Models

4.3. SHAP Values of Classification Models

5. Findings

5.1. Answers to Research Questions

- RQ1. What are the challenges of individual well-being prediction in team collaboration based on multi-modal speech data? How can they be addressed?

- RQ2. Based on our own data, what are suitable algorithms and target labels for predicting well-being in teamwork contexts based on multi-modal speech data?

- RQ3. Based on our own data, which speech features serve as predictors of individual well-being in team collaboration?

5.2. Limitations

6. Future Work

7. Conclusion

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| AIA | Anonymous Institute |
| AS | Anonymous Study |
| ASD | active speaker detection |
| CatBoost | categorical boosting |
| DBSCAN | density-based spatial clustering of applications with noise |
| fps | frames per second |
| IOU | intersection over union |
| IPO | input-process-output |
| k-NN | k-nearest neighbor |
| LOF | local outlier factor |
| LOOCV | leave-one-out cross-validation |
| PERMA | positive emotion, engagement, relationships, meaning, and accomplishment |
| RFE | recursive feature elimination |
| RTTM | rich transcription time marked |
| S3FD | single shot scale-invariant face detector |
| SHAP | Shapley additive explanations |
| VER | voice emotion recognition |
| XGBoost | extreme gradient boosting |

Appendix A

| 1 | A URL will be inserted at this position after acceptance due to double-blind peer review. |



| Classifier | Hyperparameters |
|---|---|
| k-NN | n_neighbors: [3, 5, 7, 9, 11, 13, 15], weights: ['uniform', 'distance'], metric: ['euclidean', 'manhattan'] |
| Random forests | n_estimators: [100, 200], max_depth: [3, 5, 7] |
| XGBoost | learning_rate: [0.01, 0.1], max_depth: [3, 5, 7] |
| CatBoost | learning_rate: [0.01, 0.1], depth: [3, 5, 7] |
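To make the size of these search spaces concrete, the following minimal sketch enumerates every combination of the listed values for each classifier. It assumes the values are searched exhaustively (the table does not state the search strategy); the names `PARAM_GRIDS` and `expand_grid` are illustrative and not taken from the study's code. Dictionaries of this shape are also what scikit-learn's `GridSearchCV` accepts as `param_grid`.

```python
from itertools import product

# Search spaces transcribed from the hyperparameter table.
# PARAM_GRIDS and expand_grid are hypothetical names used for illustration.
PARAM_GRIDS = {
    "k-NN": {
        "n_neighbors": [3, 5, 7, 9, 11, 13, 15],
        "weights": ["uniform", "distance"],
        "metric": ["euclidean", "manhattan"],
    },
    "Random forests": {"n_estimators": [100, 200], "max_depth": [3, 5, 7]},
    "XGBoost": {"learning_rate": [0.01, 0.1], "max_depth": [3, 5, 7]},
    "CatBoost": {"learning_rate": [0.01, 0.1], "depth": [3, 5, 7]},
}


def expand_grid(grid):
    """Return every hyperparameter combination of a grid as a list of dicts."""
    names = list(grid)
    value_lists = [grid[name] for name in names]
    return [dict(zip(names, values)) for values in product(*value_lists)]


# One list of candidate configurations per classifier.
candidates = {name: expand_grid(grid) for name, grid in PARAM_GRIDS.items()}

for name, configs in candidates.items():
    print(f"{name}: {len(configs)} candidate configurations")
```

Under this assumption, k-NN has by far the largest grid (7 × 2 × 2 combinations), while each tree-based classifier has 2 × 3 combinations.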

| Pillar | # features | Selected feature(s) |
|---|---|---|
| P | 2 | norm_num_interruptions_relative_median, arousal_std |
| E | 7 | valence_min, valence_q75, norm_num_interruptions_relative_median, arousal_std, valence_var, norm_num_interruptions_absolute_mean, norm_num_interruptions_relative_max |
| R | 1 | arousal_std |
| M | 6 | valence_min, valence_max, norm_num_interruptions_relative_median, norm_speak_duration_relative_mean, norm_num_interruptions_relative_q25, norm_num_utterances_absolute_max |
| A | 1 | norm_num_interruptions_relative_q25 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).