Submitted: 25 January 2024

Posted: 26 January 2024


Abstract

Keywords:

1. Introduction

2. Basic Concepts of BERT

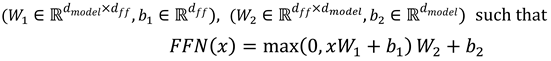

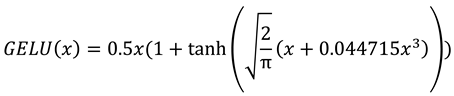

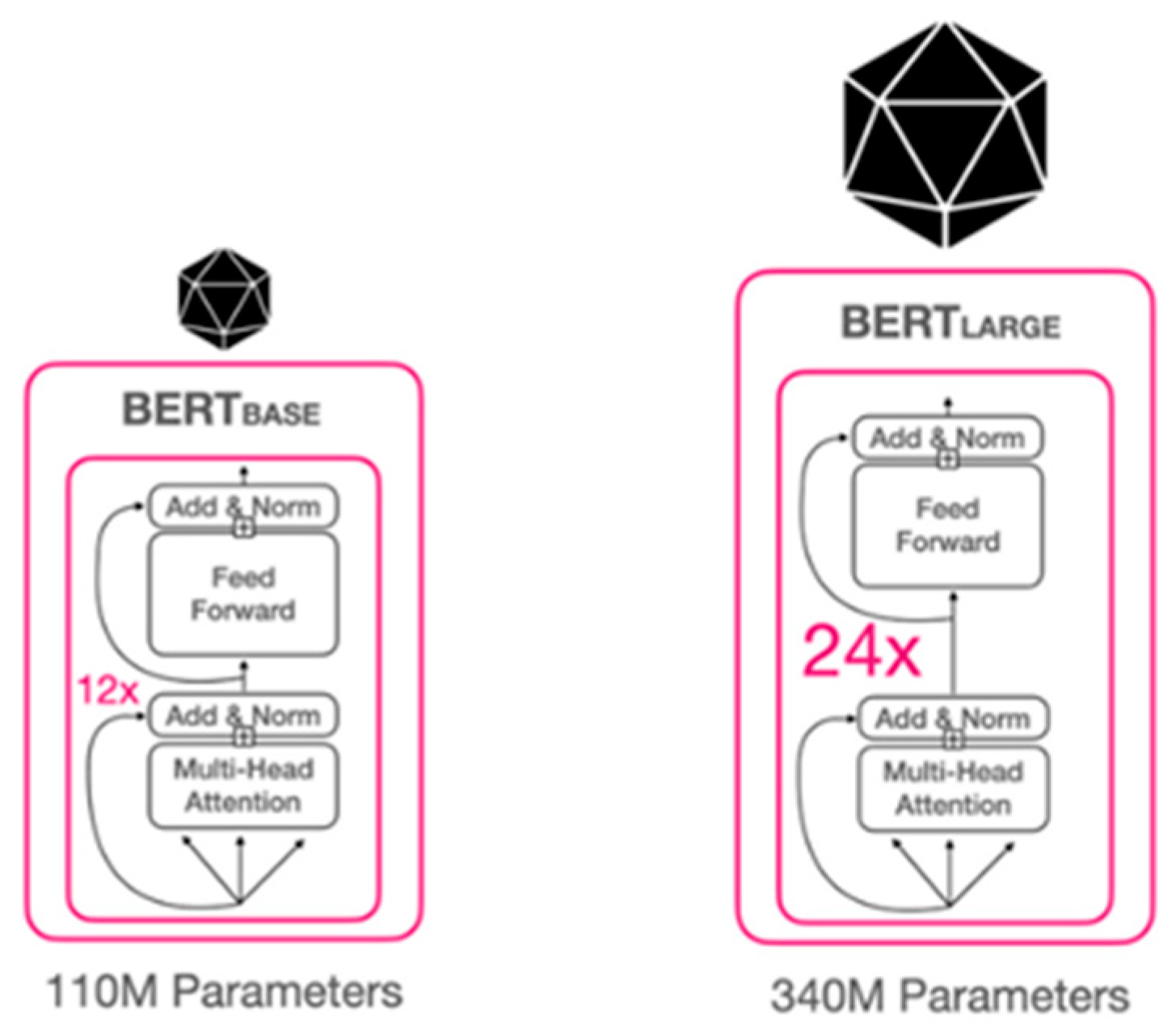

2.1. BERT Model Architecture

- BERT-base: L = 12, d_model = 768, h = 12, d_ff = 3072 (110M total parameters).

- BERT-large: L = 24, d_model = 1024, h = 16, d_ff = 4096 (340M total parameters).
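The parameter totals quoted for the two configurations can be roughly reproduced from the listed dimensions. The following is a minimal sketch rather than code from the BERT authors: `bert_param_count` is a hypothetical helper, and the vocabulary size (30,522 WordPiece tokens), the maximum sequence length (512), and the two segment types are assumptions taken from the released English uncased checkpoints, not from this section. The number of attention heads h does not appear in the function because splitting d_model across heads does not change the number of weights.

```python
def bert_param_count(num_layers, d_model, d_ff,
                     vocab_size=30522, max_positions=512, type_vocab=2):
    """Count trainable parameters of a BERT encoder plus its pooler layer.

    vocab_size, max_positions and type_vocab are assumed defaults
    (English uncased checkpoints); they are not given in the text.
    """
    layer_norm = 2 * d_model  # one scale and one bias vector
    # Token, position and segment embedding tables, followed by LayerNorm.
    embeddings = (vocab_size + max_positions + type_vocab) * d_model + layer_norm
    # Query, key, value and output projections, each with a bias.
    attention = 4 * (d_model * d_model + d_model)
    # Two dense layers: d_model -> d_ff -> d_model, each with a bias.
    feed_forward = (d_model * d_ff + d_ff) + (d_ff * d_model + d_model)
    # Each sub-layer is followed by its own LayerNorm.
    per_layer = attention + layer_norm + feed_forward + layer_norm
    # Dense layer applied to the [CLS] output.
    pooler = d_model * d_model + d_model
    return embeddings + num_layers * per_layer + pooler


base = bert_param_count(num_layers=12, d_model=768, d_ff=3072)
large = bert_param_count(num_layers=24, d_model=1024, d_ff=4096)
print(f"BERT-base:  {base / 1e6:.1f}M parameters")
print(f"BERT-large: {large / 1e6:.1f}M parameters")
```

Under these assumptions the count comes to about 109.5M for BERT-base and 335.1M for BERT-large, which is consistent with the rounded figures of 110M and 340M.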

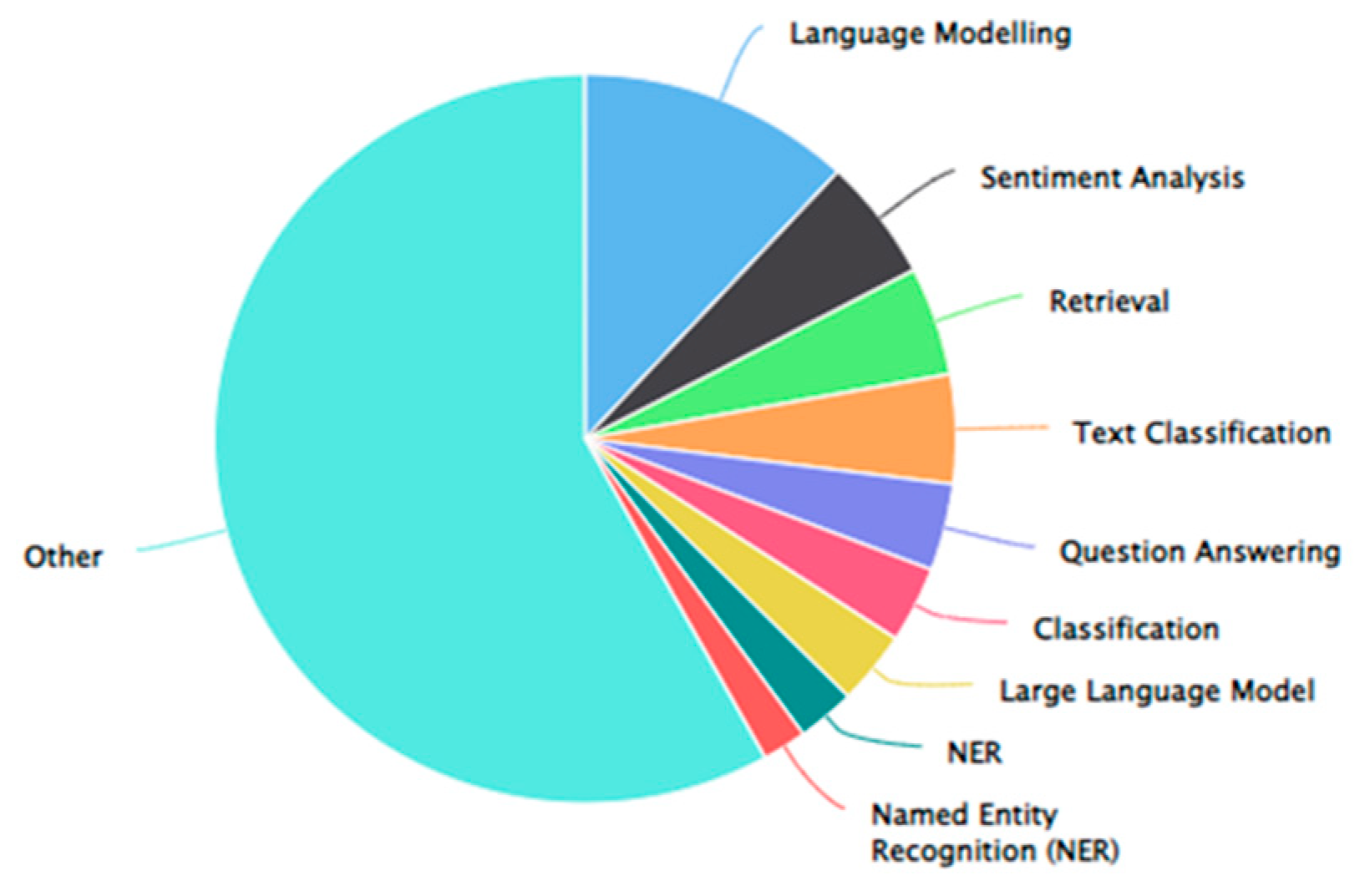

3. BERT Application Implementation

3.1. Aspect-Based Sentiment Analysis

3.2. Text Classification

3.3. Next Sentence Prediction (NSP)

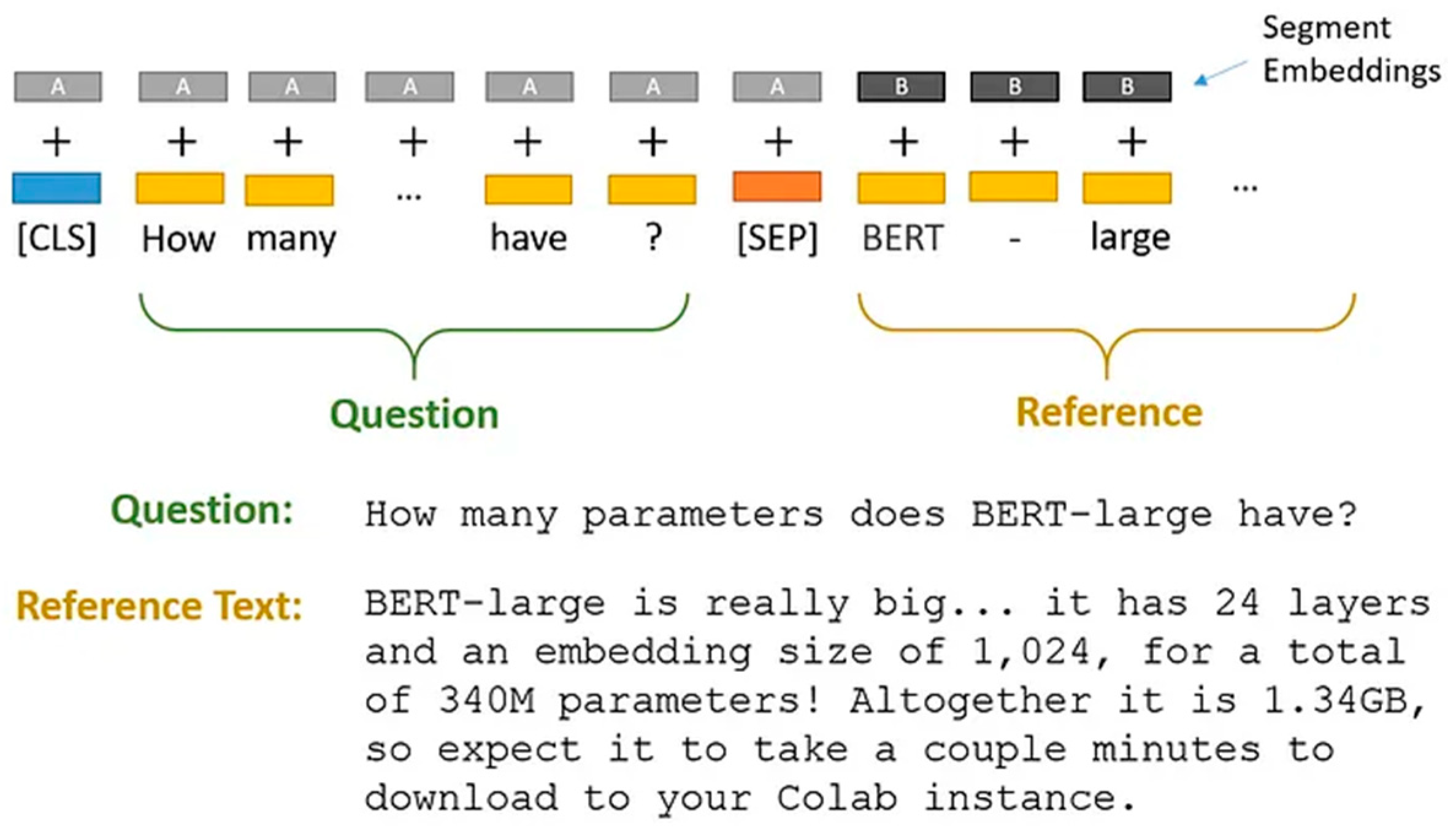

- A [CLS] token is inserted at the beginning of the input, and a [SEP] token is inserted at the end of each sentence.

- Each token receives a sentence (segment) embedding indicating whether it belongs to Sentence A or Sentence B. Sentence embeddings are conceptually similar to token embeddings, but with a vocabulary of two.

- Each token also receives a positional embedding that encodes its place in the sequence. The concept and implementation of positional embeddings are presented in the Transformer paper [25].

To determine whether the second sentence actually follows the first, the following steps are performed:

- The Transformer model processes the full input sequence.

- The output for the [CLS] token is transformed into a 2×1 vector by a simple classification layer (learned weights and biases).

- The probability of IsNextSequence is computed from this vector using softmax.
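The input packing and the classification steps described above can be sketched in plain Python. This is an illustrative sketch under stated assumptions, not BERT's actual implementation: `build_nsp_input`, `softmax`, and `nsp_head` are hypothetical helper names, the sentences are pre-tokenized by hand, the hidden size of 8 is a toy value, and the [CLS] vector and the weights are random placeholders standing in for the Transformer output and the learned parameters. Treating index 0 of the result as the IsNextSequence class is also an assumption of this sketch.

```python
import math
import random


def build_nsp_input(tokens_a, tokens_b):
    """Pack two tokenized sentences into one BERT-style input sequence."""
    tokens = ["[CLS]"] + tokens_a + ["[SEP]"] + tokens_b + ["[SEP]"]
    # Segment 0 covers [CLS], Sentence A and its [SEP]; segment 1 covers the rest.
    segment_ids = [0] * (len(tokens_a) + 2) + [1] * (len(tokens_b) + 1)
    # One position index per token, used to look up positional embeddings.
    position_ids = list(range(len(tokens)))
    return tokens, segment_ids, position_ids


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def nsp_head(cls_vector, weights, bias):
    """Map the [CLS] output vector to two class probabilities."""
    logits = [sum(w * x for w, x in zip(row, cls_vector)) + b
              for row, b in zip(weights, bias)]
    return softmax(logits)


tokens, segment_ids, position_ids = build_nsp_input(
    ["the", "man", "went", "to", "the", "store"],
    ["he", "bought", "milk"],
)

# Placeholder values: a real model would obtain cls_vector from the Transformer
# encoder and would have learned weights and bias during pre-training.
rng = random.Random(0)
hidden_size = 8  # toy value; BERT-base uses 768
cls_vector = [rng.uniform(-1, 1) for _ in range(hidden_size)]
weights = [[rng.uniform(-1, 1) for _ in range(hidden_size)] for _ in range(2)]
bias = [0.0, 0.0]

probs = nsp_head(cls_vector, weights, bias)  # [P(class 0), P(class 1)]
print(tokens)
print(segment_ids)
print(probs)
```

Because the weights here are random, the printed probabilities carry no meaning; the sketch only shows how the sequence is assembled and how the two-way softmax is applied to the [CLS] output.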

3.4. Question Answering

3.5. Named Entity Recognition (NER)

3.6. Machine Translation

3.7. Word Masking

Conclusion

Author Declaration

References

- [1] J. Pennington, R. Socher, and C. Manning, “GloVe: Global Vectors for Word Representation,” in Proceedings of the 2014 Conference on Empirical Methods in Natural Language Processing (EMNLP), Doha, Qatar: Association for Computational Linguistics, 2014, pp. 1532–1543.

- [2] T. Mikolov, I. Sutskever, K. Chen, G. Corrado, and J. Dean, “Distributed Representations of Words and Phrases and their Compositionality,” 2013.

- [3] X. Ma, Z. Wang, P. Ng, R. Nallapati, and B. Xiang, “Universal Text Representation from BERT: An Empirical Study,” 2019.

- [4] J. Gu, H. Hassan, J. Devlin, and V. O. K. Li, “Universal Neural Machine Translation for Extremely Low Resource Languages,” 2018.

- [5] Z. Dai, Z. Yang, Y. Yang, J. Carbonell, Q. V. Le, and R. Salakhutdinov, “Transformer-XL: Attentive Language Models Beyond a Fixed-Length Context,” 2019.

- [6] A. Graves, “Sequence Transduction with Recurrent Neural Networks,” 2012.

- [7] C. Lo, “MEANT 2.0: Accurate semantic MT evaluation for any output language,” in Proceedings of the Second Conference on Machine Translation, Copenhagen, Denmark: Association for Computational Linguistics, 2017, pp. 589–597.

- [8] P. Liu, X. Qiu, and X. Huang, “Recurrent Neural Network for Text Classification with Multi-Task Learning,” 2016.

- [9] J. Schapke, “Evolution of word representations in NLP,” Medium. Accessed: Jan. 09, 2024. [Online]. Available: https://towardsdatascience.com/evolution-of-word-representations-in-nlp-d4483fe23e93.

- [10] M. V. Koroteev, “BERT: A Review of Applications in Natural Language Processing and Understanding,” 2021.

- [11] J. Howard and S. Ruder, “Universal Language Model Fine-tuning for Text Classification,” 2018.

- [12] M. E. Peters et al., “Deep contextualized word representations,” 2018.

- [13] V. Chakkarwar, S. Tamane, and A. Thombre, “A Review on BERT and Its Implementation in Various NLP Tasks,” in Proceedings of the International Conference on Applications of Machine Intelligence and Data Analytics (ICAMIDA 2022), S. Tamane, S. Ghosh, and S. Deshmukh, Eds., in Advances in Computer Science Research, vol. 105. Dordrecht: Atlantis Press International BV, 2023, pp. 112–121.

- [14] A. Tam, “A Brief Introduction to BERT,” MachineLearningMastery.com. Accessed: Jan. 24, 2024. [Online]. Available: https://machinelearningmastery.com/a-brief-introduction-to-bert/.

- [15] N. S. Chauhan, “Google BERT: Understanding the Architecture,” The AI dream. Accessed: Jan. 24, 2024. [Online]. Available: https://www.theaidream.com/post/google-bert-understanding-the-architecture.

- [16] A. Vaswani et al., “Attention Is All You Need,” 2017.

- [17] “Improving language understanding with unsupervised learning.” Accessed: Jan. 05, 2024. [Online]. Available: https://openai.com/research/language-unsupervised.

- [18] D. Hendrycks and K. Gimpel, “Gaussian Error Linear Units (GELUs),” 2016.

- [19] M. Joshi, D. Chen, Y. Liu, D. S. Weld, L. Zettlemoyer, and O. Levy, “SpanBERT: Improving Pre-training by Representing and Predicting Spans,” 2019.

- [20] “BERT NLP Model Explained for Complete Beginners,” ProjectPro. Accessed: Jan. 24, 2024. [Online]. Available: https://www.projectpro.io/article/bert-nlp-model-explained/558.

- [21] “Understanding BERT.” Accessed: Jan. 24, 2024. [Online]. Available: https://www.rws.com/blog/understanding-bert/.

- [22] S. Yu, J. Su, and D. Luo, “Improving BERT-Based Text Classification With Auxiliary Sentence and Domain Knowledge,” IEEE Access, vol. 7, pp. 176600–176612, 2019.

- [23] S. Bag, “Text Summarization using BERT, GPT2, XLNet,” Analytics Vidhya. Accessed: Jan. 09, 2024. [Online]. Available: https://medium.com/analytics-vidhya/text-summarization-using-bert-gpt2-xlnet-5ee80608e961.

- [24] “Text Classification with BERT - Shiksha Online.” Accessed: Jan. 09, 2024. [Online]. Available: https://www.shiksha.com/online-courses/articles/text-classification-with-bert/.

- [25] R. Horev, “BERT Explained: State of the art language model for NLP,” Medium. Accessed: Jan. 09, 2024. [Online]. Available: https://towardsdatascience.com/bert-explained-state-of-the-art-language-model-for-nlp-f8b21a9b6270.

- [26] N. N, “Question Answering System with BERT,” Analytics Vidhya. Accessed: Jan. 09, 2024. [Online]. Available: https://medium.com/analytics-vidhya/question-answering-system-with-bert-ebe1130f8def.

- [27] X. Zhang, J. Zhao, and Y. LeCun, “Character-level Convolutional Networks for Text Classification,” 2015.

- [28] “Text Classification model,” NVIDIA NeMo. Accessed: Jan. 24, 2024. [Online]. Available: https://nvidia.github.io/NeMo/nlp/text_classification.html.

- [29] “Text classification task guide | MediaPipe,” Google for Developers. Accessed: Jan. 24, 2024. [Online]. Available: https://developers.google.com/mediapipe/solutions/text/text_classifier.

- [30] J. Briggs, “BERT For Next Sentence Prediction,” Medium. Accessed: Jan. 24, 2024. [Online]. Available: https://towardsdatascience.com/bert-for-next-sentence-prediction-466b67f8226f.

- [31] “Weights & Biases,” W&B. Accessed: Jan. 24, 2024. [Online]. Available: https://wandb.ai/mostafaibrahim17/ml-articles/reports/The-Answer-Key-Unlocking-the-Potential-of-Question-Answering-With-NLP--VmlldzozNTcxMDE3.

- [32] “What is Named Entity Recognition (NER)? Methods, Use Cases, and Challenges.” Accessed: Jan. 24, 2024. [Online]. Available: https://www.datacamp.com/blog/what-is-named-entity-recognition-ner.

- [33] “A Comprehensive Guide to Named Entity Recognition (NER).” Accessed: Jan. 24, 2024. [Online]. Available: https://www.turing.com/kb/a-comprehensive-guide-to-named-entity-recognition.

- [34] A. M. Kocaman, “Mastering Named Entity Recognition with BERT: A Comprehensive Guide,” Medium. Accessed: Jan. 09, 2024. [Online]. Available: https://medium.com/@ahmetmnirkocaman/mastering-named-entity-recognition-with-bert-a-comprehensive-guide-b49f620e50b0.

- [35] “Named Entity Recognition: Challenges and Solutions | ProcessMaker.” Accessed: Jan. 24, 2024. [Online]. Available: https://www.processmaker.com/blog/named-entity-recognition-challenges-and-solutions/.

- [36] W. Zhu et al., “Multilingual Machine Translation with Large Language Models: Empirical Results and Analysis,” 2023.

- [37] “Transforming machine translation: a deep learning system reaches news translation quality comparable to human professionals | Nature Communications.” Accessed: Jan. 24, 2024. [Online]. Available: https://www.nature.com/articles/s41467-020-18073-9.

- [38] D. Bahdanau, K. Cho, and Y. Bengio, “Neural Machine Translation by Jointly Learning to Align and Translate,” 2014.

- [39] J. Devlin, M.-W. Chang, K. Lee, and K. Toutanova, “BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding,” 2018.

- [40] G. Lample and A. Conneau, “Cross-lingual Language Model Pretraining,” 2019.

- [41] C. Xing, D. Wang, C. Liu, and Y. Lin, “Normalized Word Embedding and Orthogonal Transform for Bilingual Word Translation,” in Proceedings of the 2015 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Denver, Colorado: Association for Computational Linguistics, 2015, pp. 1006–1011.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).