Submitted:

16 January 2024

Posted:

17 January 2024

Abstract

Keywords:

1. Introduction

- We propose a Multi-head LSTM for bankruptcy prediction on time series data.

- We investigated the optimal time window of financial variables to predict bankruptcy with a comparison among the main state-of-the-art approaches in Machine Learning and Deep Learning. Experiments have been performed on public companies traded in the American stock market with data available between 2000 and 2018.

- We anonymized our dataset and made it publicly available to the scientific community, both to support further investigation and to provide a benchmark for future studies on this topic.

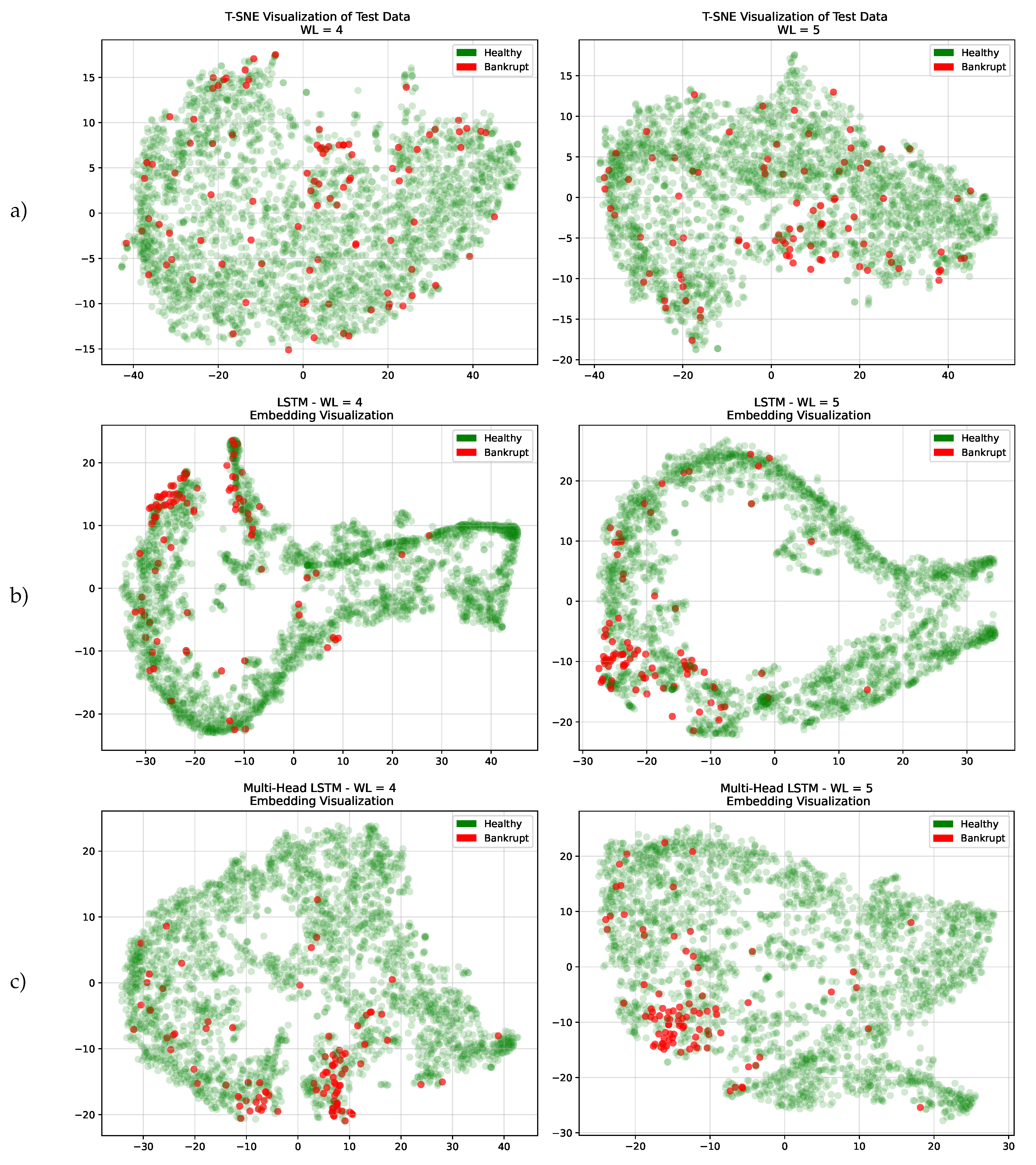

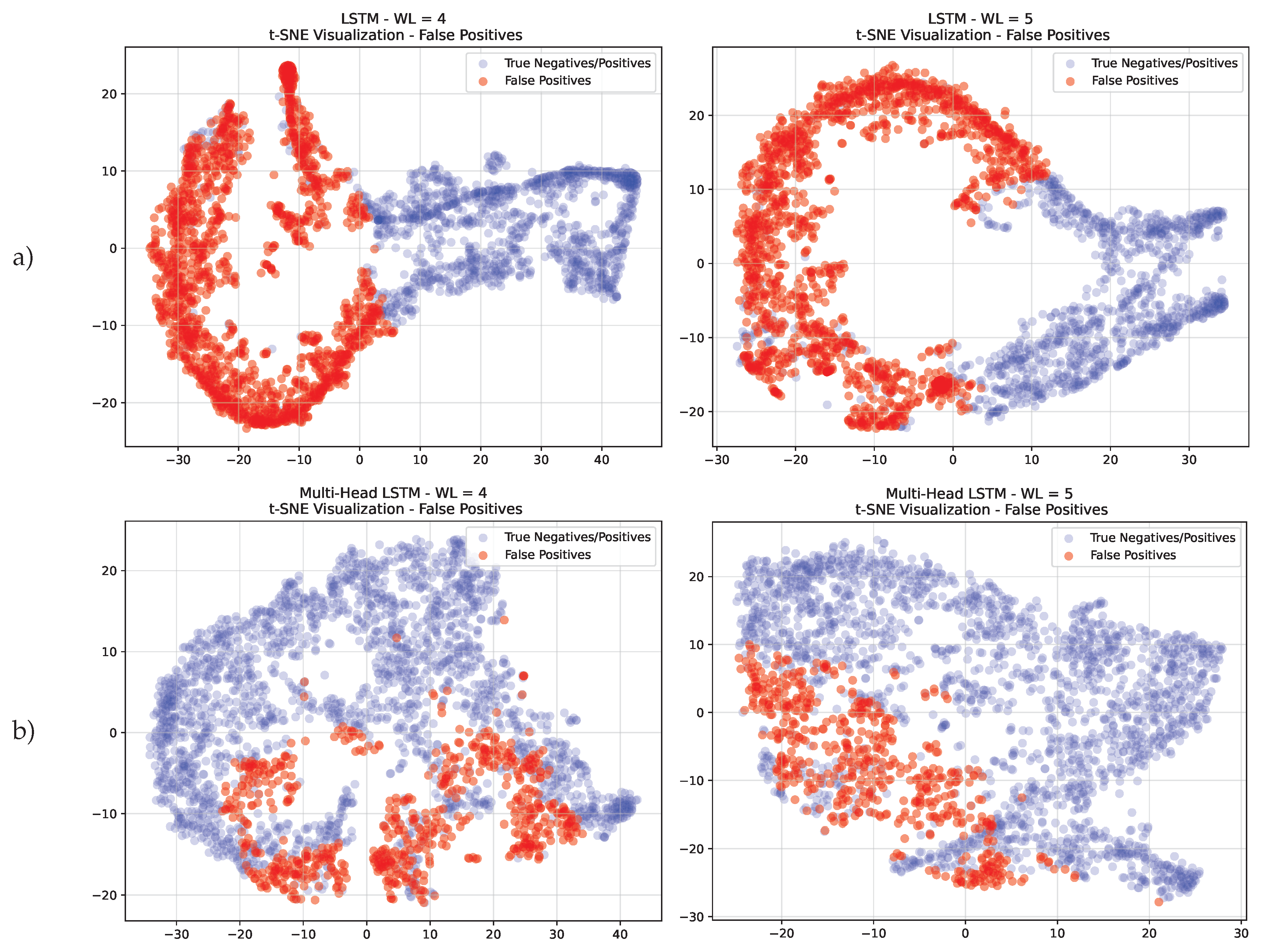

- We analyzed our models on the test set, using t-SNE [13] to show their ability to capture patterns. We also performed an in-depth analysis of false positives.

2. Related Works

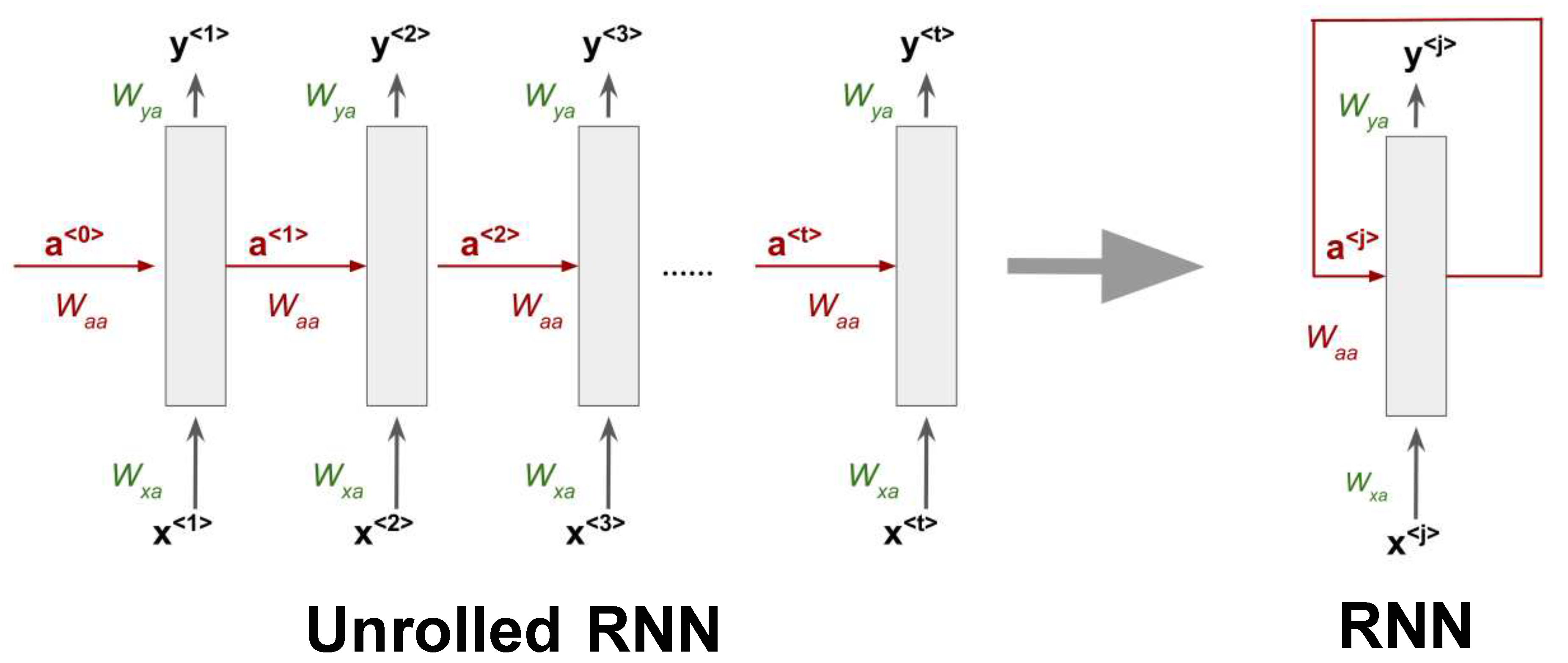

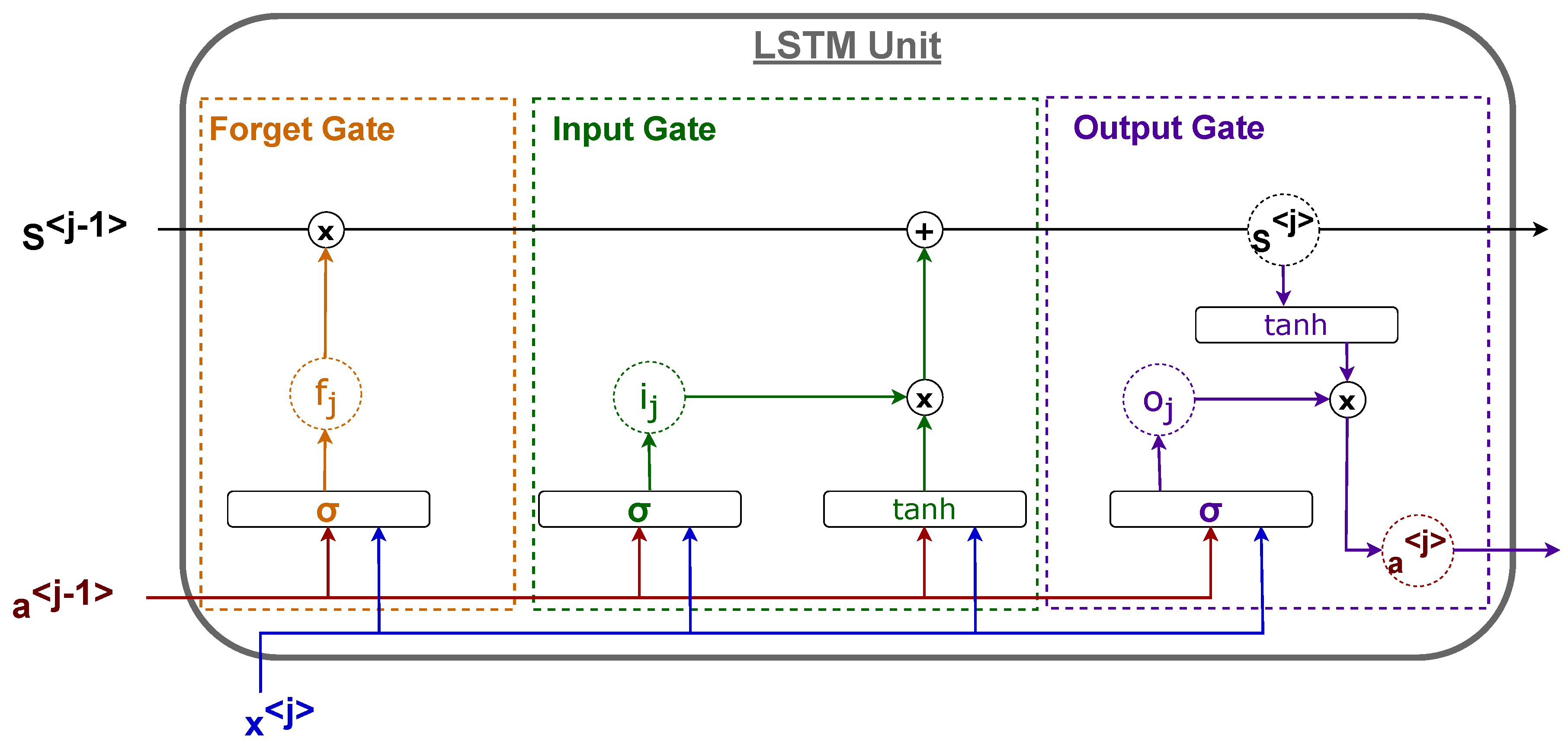

3. Recurrent Neural Networks

- Forget Gate: It determines how much information from the previous unit state should be retained.

- Input Gate: It defines the amount of information from the new input that should be used to update the unit’s internal state.

- Output Gate: It defines the output of the unit as a function of its current unit state.
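
The role of the three gates can be made concrete with a toy example. The sketch below assumes the standard LSTM update equations and, for readability, a unit with scalar input and scalar state; the names `lstm_step` and `demo_params` and all parameter values are hypothetical and are not taken from our implementation.

```python
import math


def sigmoid(x: float) -> float:
    """Logistic function: squashes any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def lstm_step(x: float, h_prev: float, c_prev: float, p: dict):
    """One time step of a toy LSTM unit with scalar input and scalar state.

    `p` is a hypothetical parameter dict holding, for each of the four
    transforms g in {"f", "i", "o", "c"}, an input weight w_g, a recurrent
    weight u_g and a bias b_g.
    """
    def pre(g: str) -> float:
        return p["w_" + g] * x + p["u_" + g] * h_prev + p["b_" + g]

    f = sigmoid(pre("f"))            # forget gate: share of c_prev that is kept
    i = sigmoid(pre("i"))            # input gate: share of the candidate that is written
    o = sigmoid(pre("o"))            # output gate: share of the state that is exposed
    candidate = math.tanh(pre("c"))  # new information proposed by the current input

    c = f * c_prev + i * candidate   # updated unit state
    h = o * math.tanh(c)             # unit output
    return h, c


def demo_params(b_f: float = 0.0, b_i: float = 0.0, b_o: float = 0.0) -> dict:
    """Illustrative parameters: every weight is 0.5, only the gate biases vary."""
    p = {}
    for g in "fioc":
        p["w_" + g] = 0.5
        p["u_" + g] = 0.5
        p["b_" + g] = 0.0
    p["b_f"], p["b_i"], p["b_o"] = b_f, b_i, b_o
    return p


# A strongly positive forget bias combined with a strongly negative input bias
# makes the unit carry its previous state forward almost unchanged.
h, c = lstm_step(x=1.0, h_prev=0.2, c_prev=0.7, p=demo_params(b_f=50.0, b_i=-50.0))
print(round(c, 6))
```

With the forget gate saturated near 1 and the input gate near 0, the new unit state stays at the previous value; flipping the sign of the forget bias would instead erase it.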

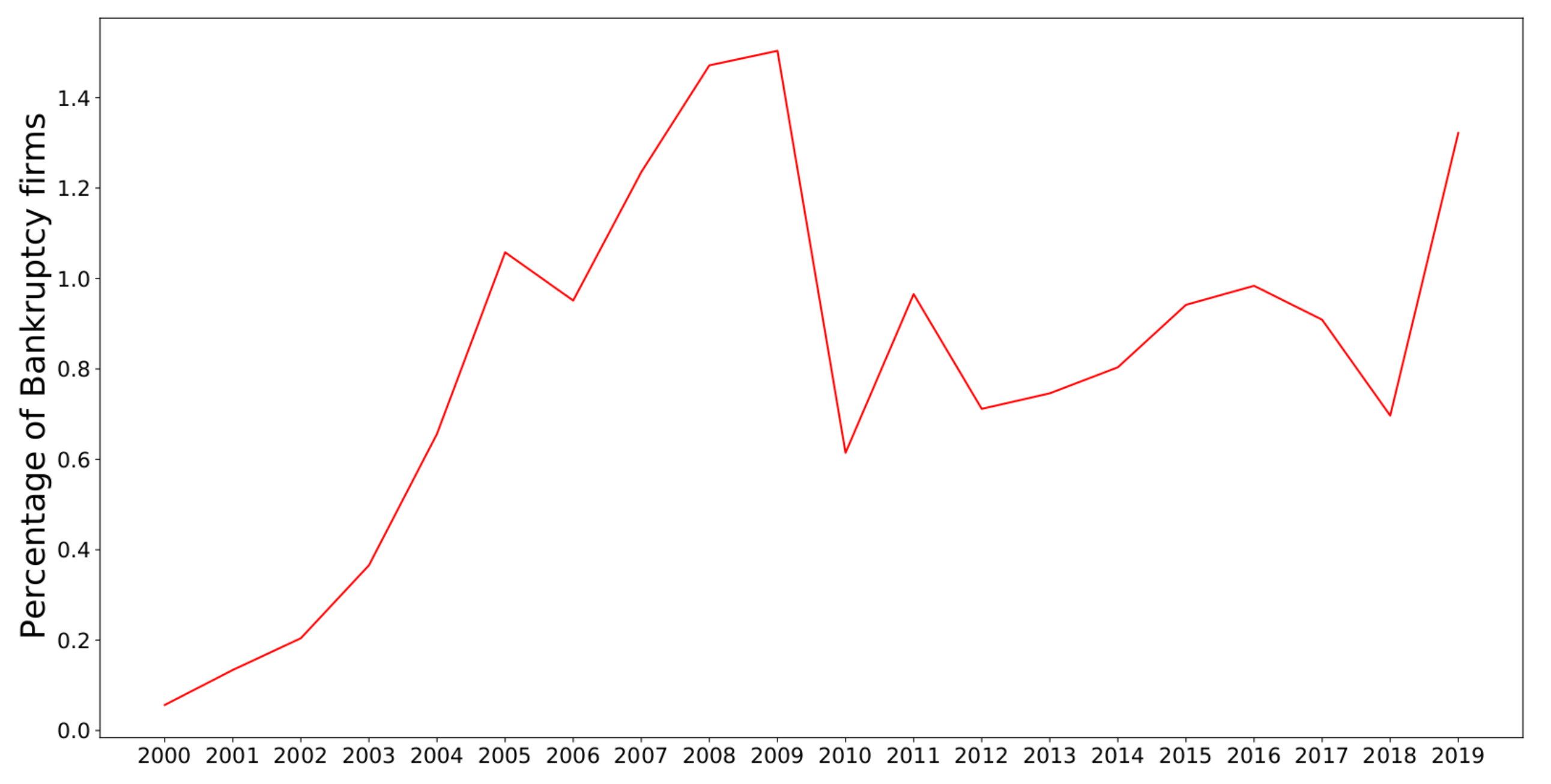

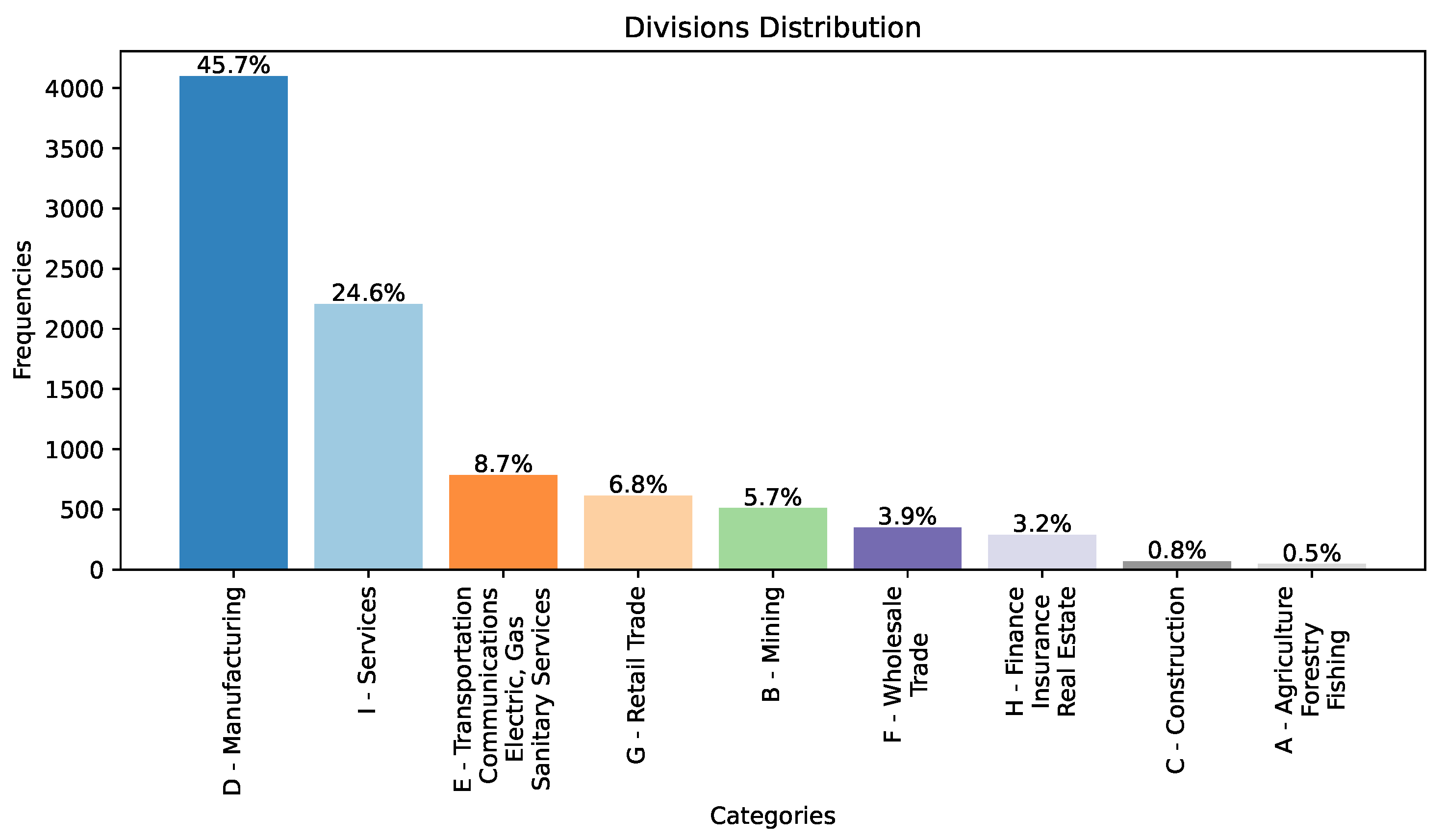

4. Dataset

- For such firms, we collected 18 financial variables, often used for bankruptcy prediction, for each year. In bankruptcy prediction it is common to consider accounting information and up-to-date market information that may reflect the company’s liability and profitability. We selected the variables listed in Table 1 as the minimum common set found in the literature [3,10,39] and to have a dataset for twenty years without missing observations.

- For all the experiments presented in Section 5, we only considered firms with at least 5 years of activity since we aim to first identify the time window that optimizes the bankruptcy prediction accuracy.

- If the firm’s management files Chapter 11 of the Bankruptcy Code to "reorganize" its business: management continues to run the day-to-day business operations, but all significant business decisions must be approved by a bankruptcy court.

- If the firm’s management files Chapter 7 of the Bankruptcy Code: the company stops all operations and goes completely out of business.

| Variable name | Variable name |
|---|---|
| Current assets | Total assets |
| Cost of goods sold | Total long-term debt |
| Depreciation & amortization | EBIT |
| EBITDA | Gross profit |
| Inventory | Total current liabilities |
| Net income | Retained earnings |
| Total receivables | Total revenue |
| Market value | Total liabilities |
| Net sales | Total operating expenses |

5. Hardware specifications

- CPU: Intel i9-10900 @ 2.80 GHz

- GPU: Nvidia RTX 3090 (24 GB)

- RAM: 32 GB DDR4, 2667 MHz

- Motherboard: Z490-A PRO (MS-7C75)

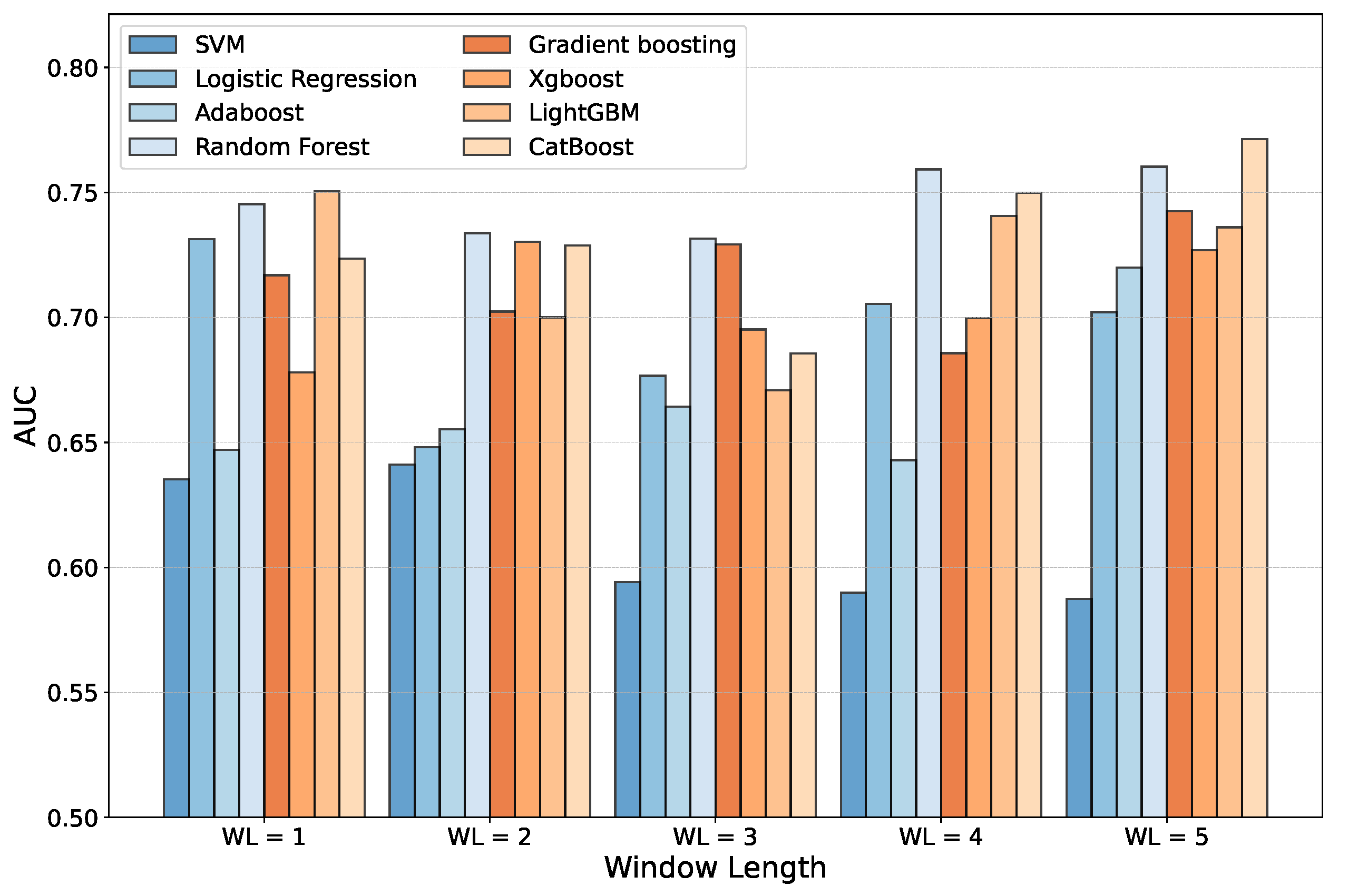

6. Temporal window selection

- Some firms could only be considered for certain time windows since they have only recently been made public.

- Some firms could be excluded depending on the time window, although they existed in the past, because of an acquisition or a merger.

- By extending the training and testing window, the number of companies available for training and testing will inevitably decrease. Moreover, one should consider that a time window above a certain number of years introduces a statistical bias that limits the analysis to only structured companies that have been on the market for several years. At the same time, it leads to ignoring the relatively new companies, which usually have smaller market capitalization and thus are riskier and with a higher probability of default, especially in an overall adverse economic environment.
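
The effect of the window length on the number of usable firms can be illustrated with a small sketch. It assumes a hypothetical panel stored as `{firm: {year: feature_vector}}` and a window made of consecutive years ending at `end_year`; the function name `build_windows` and the toy firms are illustrative only and do not reproduce our preprocessing code.

```python
from typing import Dict, List

Panel = Dict[str, Dict[int, List[float]]]  # firm -> year -> yearly feature vector


def build_windows(panel: Panel, window_length: int, end_year: int) -> Dict[str, List[List[float]]]:
    """Return one window per firm, keeping only firms observed in every year.

    The window covers `window_length` consecutive years ending at `end_year`.
    Firms with any missing year (listed too recently, or delisted after an
    acquisition or merger) are dropped.
    """
    years = range(end_year - window_length + 1, end_year + 1)
    windows = {}
    for firm, by_year in panel.items():
        if all(year in by_year for year in years):
            windows[firm] = [by_year[year] for year in years]
    return windows


# Toy panel with a single feature per year (values are made up).
panel: Panel = {
    "OLD": {y: [float(y)] for y in range(2010, 2016)},  # listed for the whole period
    "IPO": {y: [float(y)] for y in range(2014, 2016)},  # only recently made public
    "ACQ": {y: [float(y)] for y in range(2010, 2014)},  # acquired after 2013
}

for wl in (2, 3, 5):
    kept = sorted(build_windows(panel, window_length=wl, end_year=2015))
    print(f"WL={wl}: {kept}")
```

Longer windows keep only the firm with a complete history, which mirrors the shrinking sample and the bias towards long-lived companies described above.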

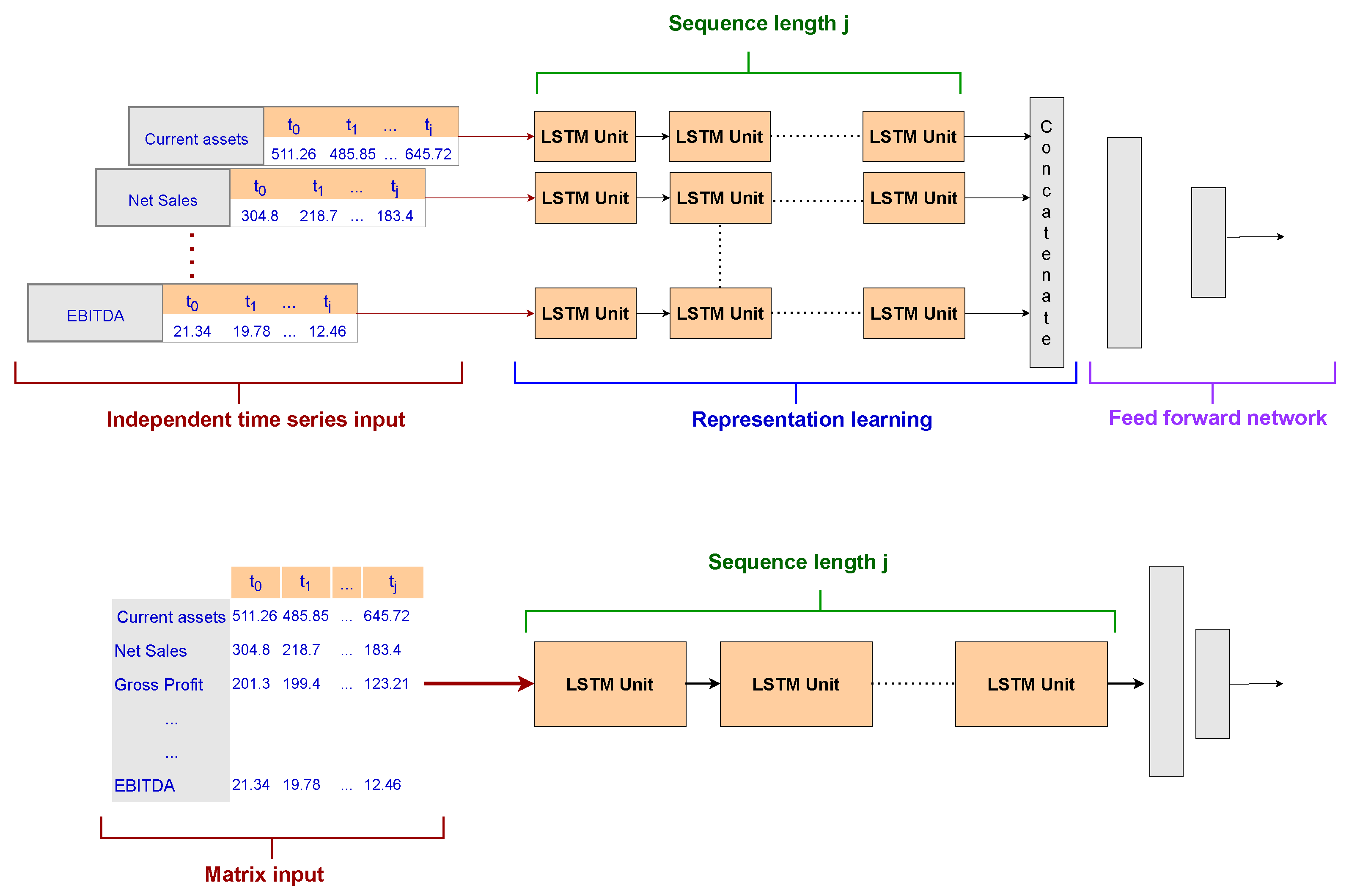

7. LSTM architectures for Bankruptcy prediction

- A single-input LSTM: This is the most common approach with RNNs. The input is a matrix with 18 rows (number of accounting variables) and a number of columns equal to the time window selected for the experiment. Moreover, the LSTM is composed of a sequence of units as long as the time window. Finally, a dense layer with a Softmax function is used as an output layer for the final prediction.

- A Multi-head LSTM: This is one of the main contributions of our research with respect to the current state of the art. To cope with the smaller training set that results from the temporal window selection, and with the class imbalance, we developed several smaller LSTMs, named LSTM heads, one for each accounting variable analyzed by the model. Each head consists of a short sequence of units whose length equals the input sequence length, and it contributes to a latent representation of the company learned from the accounting variables. The outputs of the Multi-head layer are then concatenated and passed to a two-layer feed-forward network with a Softmax function in the output layer. This architecture aims to test whether an attention method based on a latent representation of the company that focuses on each time series independently can outperform a classical RNN setting.
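
The data flow of the two architectures can be sketched without any deep learning framework. The example below is only an illustration under several assumptions that are not stated in the text: each head is a scalar-state LSTM that emits a single latent value, the hidden feed-forward layer has 8 units with a tanh activation, and all weights are random. The helper names (`lstm_head`, `dense`, `multi_head_forward`, `random_model`) are hypothetical.

```python
import math
import random

N_VARS = 18  # accounting variables per firm-year, as described above


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def lstm_head(series, w):
    """Run a scalar-state LSTM over one variable's series; return the last output."""
    h, c = 0.0, 0.0
    for x in series:
        f = sigmoid(w["wf"] * x + w["uf"] * h + w["bf"])
        i = sigmoid(w["wi"] * x + w["ui"] * h + w["bi"])
        o = sigmoid(w["wo"] * x + w["uo"] * h + w["bo"])
        g = math.tanh(w["wc"] * x + w["uc"] * h + w["bc"])
        c = f * c + i * g
        h = o * math.tanh(c)
    return h


def dense(vec, weights, biases, activation):
    """Fully connected layer; `weights` holds one row per output unit."""
    return [activation(sum(w * v for w, v in zip(row, vec)) + b)
            for row, b in zip(weights, biases)]


def softmax(logits):
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def multi_head_forward(firm, heads, ff):
    """`firm` has N_VARS rows, each a list of WL yearly values."""
    # One head per accounting variable; the per-head outputs are concatenated.
    latent = [lstm_head(series, w) for series, w in zip(firm, heads)]
    hidden = dense(latent, ff["W1"], ff["b1"], math.tanh)
    logits = dense(hidden, ff["W2"], ff["b2"], lambda z: z)
    return softmax(logits)  # class probabilities for the two classes


def random_model(rng, hidden_units=8):
    keys = ["wf", "uf", "bf", "wi", "ui", "bi", "wo", "uo", "bo", "wc", "uc", "bc"]
    heads = [{k: rng.uniform(-1, 1) for k in keys} for _ in range(N_VARS)]
    ff = {
        "W1": [[rng.uniform(-1, 1) for _ in range(N_VARS)] for _ in range(hidden_units)],
        "b1": [0.0] * hidden_units,
        "W2": [[rng.uniform(-1, 1) for _ in range(hidden_units)] for _ in range(2)],
        "b2": [0.0, 0.0],
    }
    return heads, ff


rng = random.Random(0)
heads, ff = random_model(rng)
firm = [[rng.uniform(-1, 1) for _ in range(5)] for _ in range(N_VARS)]  # WL = 5

probs = multi_head_forward(firm, heads, ff)

# The single-input LSTM would instead read the same matrix as WL time steps,
# each carrying all 18 variables at once.
time_steps = [list(column) for column in zip(*firm)]
print(len(time_steps), len(time_steps[0]), probs)
```

The only difference in input handling is the axis along which the 18 x WL matrix is split: by row for the Multi-head LSTM, by column for the single-input LSTM.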

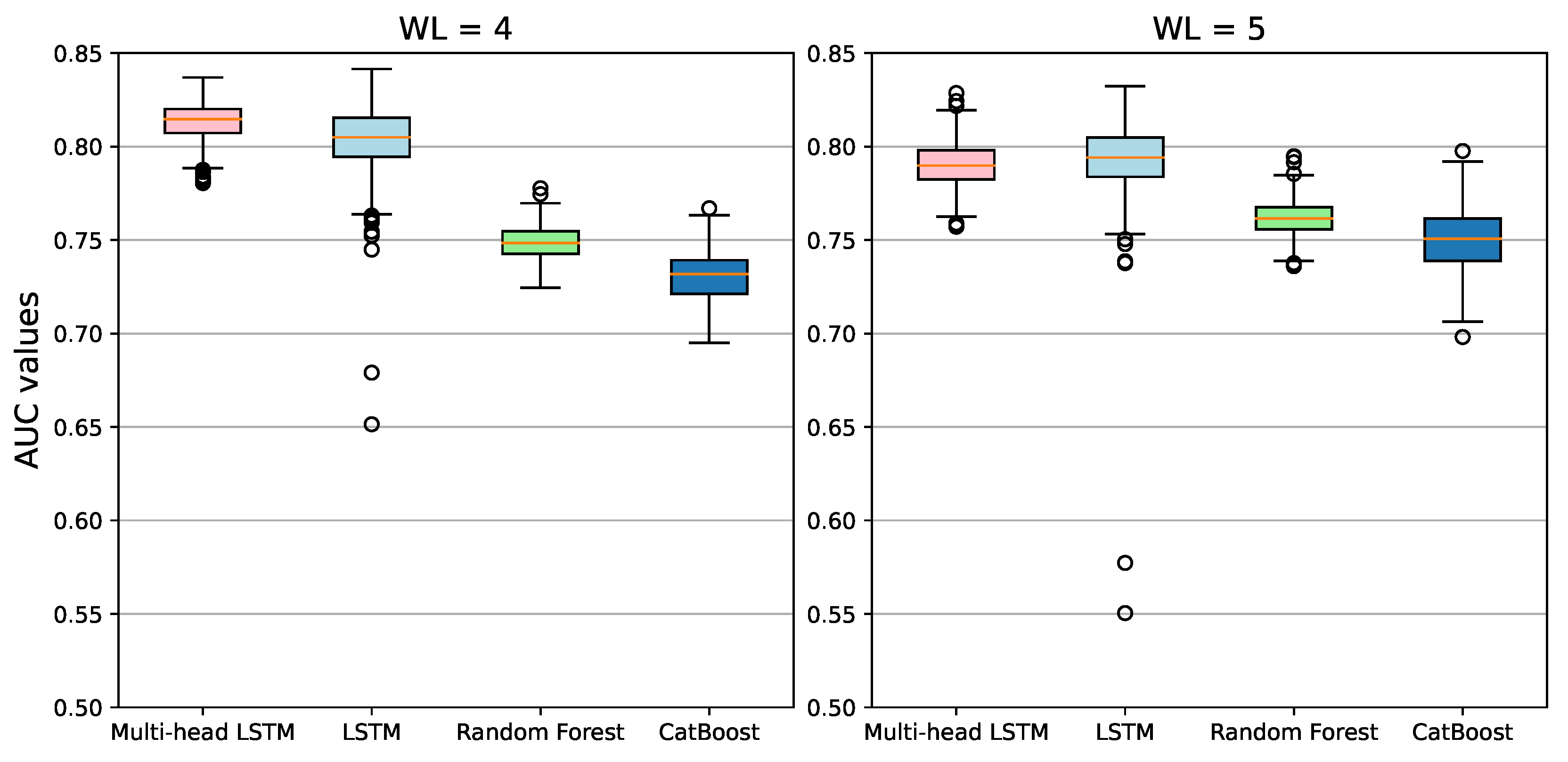

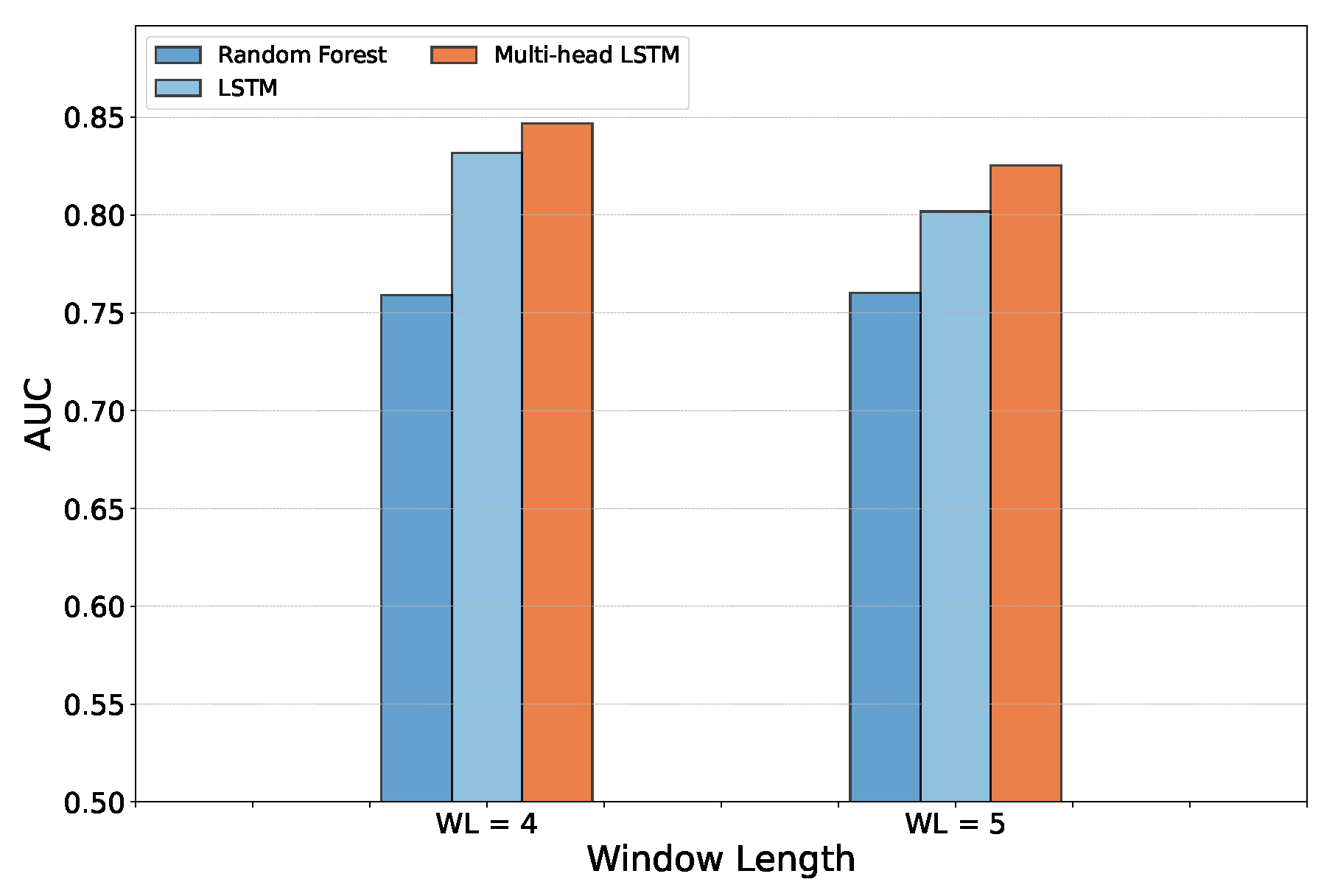

8. Results

8.1. Metrics

- True Positive (TP): The number of actually defaulted companies that have been correctly predicted as bankrupt.

- False Negative (FN): The number of actually defaulted companies that have been wrongly predicted as healthy.

- True Negative (TN): The number of actually healthy companies that have been correctly predicted as healthy.

- False Positive (FP): The number of actually healthy companies that have been wrongly predicted as bankrupt by the model.

- The Area Under the Curve (AUC) measures the ability of a classifier to distinguish between classes and is used as a summary of the Receiver Operating Characteristic (ROC) Curve. The ROC curve is created by plotting the true positive rate (TPR) against the false positive rate (FPR) at various threshold settings.

- The macro score is computed as the arithmetic mean of the score of all the classes.

- The micro score is used to assess the quality of multi-label binary problems. It measures the score of the aggregated contributions of all classes giving the same importance to each sample.
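
As a worked illustration of the definitions above, the following sketch computes per-class precision, recall and F1, their macro and micro averages, and a rank-based AUC. The confusion-matrix counts and scores are made up for the example, the function names are hypothetical, and the AUC is computed through its pairwise-ranking interpretation (the probability that a bankrupt firm receives a higher score than a healthy one), which equals the area under the ROC curve.

```python
def f1_from_counts(tp: int, fp: int, fn: int) -> float:
    """F1 written directly in terms of counts: 2TP / (2TP + FP + FN)."""
    return 2 * tp / (2 * tp + fp + fn)


def confusion_metrics(tp: int, fn: int, tn: int, fp: int) -> dict:
    """Metrics for a binary problem where 'bankrupt' is the positive class."""
    f1_bankrupt = f1_from_counts(tp, fp, fn)
    # For the 'healthy' class the roles of the counts are mirrored.
    f1_healthy = f1_from_counts(tn, fn, fp)
    return {
        "recall_bankrupt": tp / (tp + fn),
        "precision_bankrupt": tp / (tp + fp),
        "recall_healthy": tn / (tn + fp),
        "precision_healthy": tn / (tn + fn),
        # Macro: arithmetic mean of the per-class scores.
        "macro_f1": (f1_bankrupt + f1_healthy) / 2,
        # Micro: pool the counts of both classes, then compute one score.
        "micro_f1": f1_from_counts(tp + tn, fp + fn, fn + fp),
    }


def auc(labels, scores) -> float:
    """Share of (bankrupt, healthy) pairs ranked correctly; ties count one half."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Illustrative counts: 10 bankrupt and 90 healthy firms.
metrics = confusion_metrics(tp=8, fn=2, tn=80, fp=10)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")

# Illustrative scores: label 1 = bankrupt, 0 = healthy.
print(f"AUC: {auc([1, 1, 0, 0, 0], [0.9, 0.4, 0.5, 0.3, 0.1]):.3f}")
```

On an imbalanced sample such as this one, the micro score is dominated by the healthy class, whereas the macro score weights both classes equally and is therefore lower.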

8.2. LSTMs training and validation

- Epochs = 1000

- Learning rate =

- Batch size = 32 (Default Keras value)

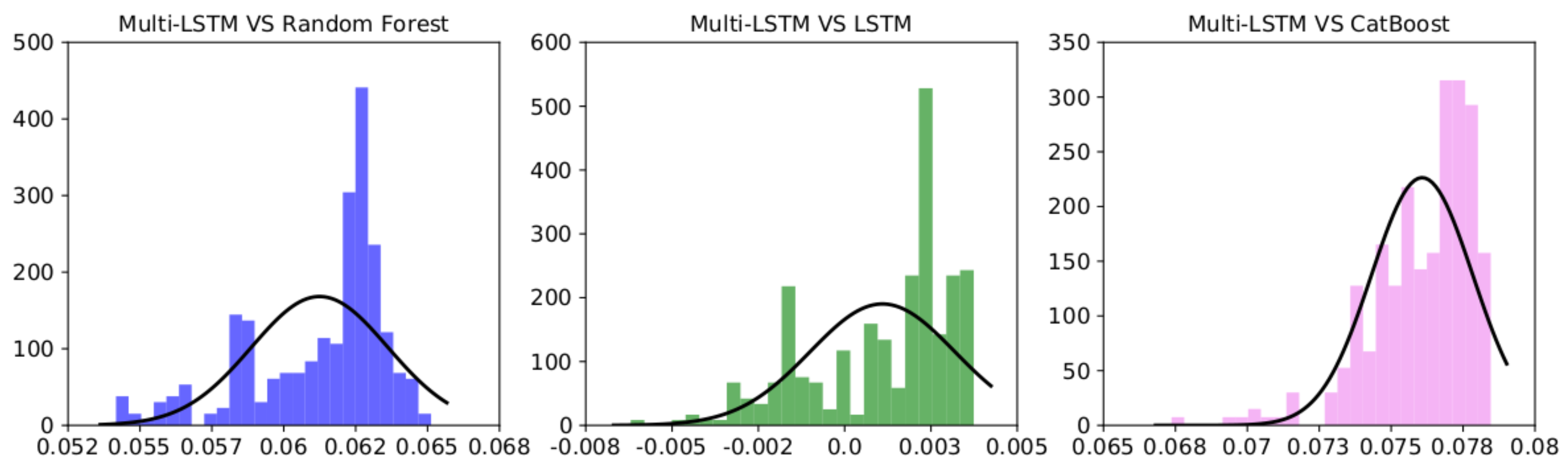

8.3. Statistical analysis

9. Final analysis on the test set

- Lowest difference between training and validation loss to ensure that the highest AUC is not achieved as a consequence of overfitting.

- Lowest validation loss

- Highest AUC on the validation set
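
One possible way to combine these criteria is sketched below. How the three criteria are prioritized is an assumption made for the example: runs are first filtered by the gap between training and validation loss (within a hypothetical tolerance `gap_tol` of the smallest gap), and the survivors are ranked by validation loss and then by validation AUC. The `Run` records and their values are made up.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Run:
    name: str
    train_loss: float
    val_loss: float
    val_auc: float

    @property
    def gap(self) -> float:
        """Absolute difference between training and validation loss."""
        return abs(self.train_loss - self.val_loss)


def select_run(runs: List[Run], gap_tol: float = 0.02) -> Run:
    """Pick a run using the three criteria in an assumed order of priority."""
    smallest_gap = min(run.gap for run in runs)
    # 1) Discard runs whose loss gap suggests overfitting.
    candidates = [run for run in runs if run.gap <= smallest_gap + gap_tol]
    # 2) Prefer the lowest validation loss, 3) then the highest validation AUC.
    return min(candidates, key=lambda run: (run.val_loss, -run.val_auc))


runs = [
    Run("A", train_loss=0.30, val_loss=0.32, val_auc=0.80),
    Run("B", train_loss=0.10, val_loss=0.40, val_auc=0.85),  # best AUC, large gap
    Run("C", train_loss=0.29, val_loss=0.30, val_auc=0.82),
]
print(select_run(runs).name)
```

Run B has the highest validation AUC but is filtered out because its large loss gap points to overfitting, which is the situation the first criterion is meant to catch.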

9.1. Further Analysis on the Test Set

9.2. False Positive Analysis

- Across all divisions and for both window lengths, the Multi-head model produces fewer false positives than the single LSTM. This indicates that the Multi-head LSTM is better at distinguishing alive firms from bankrupt ones.

- Examining how false positives vary with window length reveals an interesting pattern. For the Multi-head LSTM, the percentage of false positives tends to decrease as the window length grows. This can be explained by the Multi-head LSTM’s capacity to identify structures and patterns that can be missed when the window is set to 4. By contrast, the single LSTM’s performance has more variance, with roughly as many increases as decreases in false positives across divisions, so no definitive conclusion can be drawn about how its false positives change across time windows.

| Division | Test set (WL = 4) | Test set (WL = 5) | LSTM false positives (WL = 4) | LSTM false positives (WL = 5) | Multi-head LSTM false positives (WL = 4) | Multi-head LSTM false positives (WL = 5) |
|---|---|---|---|---|---|---|
| A | 17 | 15 | 12 (70%) | 9 (60%) | 4 (23%) | 3 (20%) |
| B | 152 | 142 | 84 (55%) | 79 (56%) | 34 (22%) | 29 (20%) |
| C | 30 | 28 | 13 (43%) | 14 (50%) | 5 (17%) | 6 (21%) |
| D | 1476 | 1382 | 824 (55%) | 790 (57%) | 326 (22%) | 284 (21%) |
| E | 254 | 248 | 107 (42%) | 139 (56%) | 77 (30%) | 44 (18%) |
| F | 106 | 99 | 73 (69%) | 71 (72%) | 34 (32%) | 28 (28%) |
| G | 213 | 202 | 134 (62%) | 131 (65%) | 104 (49%) | 95 (47%) |
| H | 66 | 63 | 34 (52%) | 32 (51%) | 12 (18%) | 5 (8%) |
| I | 606 | 556 | 310 (51%) | 308 (55%) | 70 (11%) | 65 (12%) |

10. Conclusions

References

- Danilov, C.; Konstantin, A. Corporate Bankruptcy: Assessment, Analysis and Prediction of Financial Distress, Insolvency, and Failure (May 9, 2014). 2014.

- Ding, A.A.; Tian, S.; Yu, Y.; Guo, H. A class of discrete transformation survival models with application to default probability prediction. Journal of the American Statistical Association 2012, 107, 990–1003.

- Altman, E.I. Financial ratios, discriminant analysis and the prediction of corporate bankruptcy. The Journal of Finance 1968, 23, 589–609.

- Wang, G.; Ma, J.; Huang, L.; Xu, K. Two credit scoring models based on dual strategy ensemble trees. Knowledge-Based Systems 2012, 26, 61–68.

- Wang, G.; Ma, J.; Yang, S. An improved boosting based on feature selection for corporate bankruptcy prediction. Expert Systems with Applications 2014, 41, 2353–2361.

- Zhou, L.; Lai, K.K.; Yen, J. Bankruptcy prediction using SVM models with a new approach to combine features selection and parameter optimisation. International Journal of Systems Science 2014, 45, 241–253.

- Geng, R.; Bose, I.; Chen, X. Prediction of financial distress: An empirical study of listed Chinese companies using data mining. European Journal of Operational Research 2015, 241, 236–247.

- Alfaro, E.; García, N.; Gámez, M.; Elizondo, D. Bankruptcy forecasting: An empirical comparison of AdaBoost and neural networks. Decision Support Systems 2008, 45, 110–122.

- Bose, I.; Pal, R. Predicting the survival or failure of click-and-mortar corporations: A knowledge discovery approach. European Journal of Operational Research 2006, 174, 959–982.

- Tian, S.; Yu, Y.; Guo, H. Variable selection and corporate bankruptcy forecasts. Journal of Banking & Finance 2015, 52, 89–100.

- Wanke, P.; Barros, C.P.; Faria, J.R. Financial distress drivers in Brazilian banks: A dynamic slacks approach. European Journal of Operational Research 2015, 240, 258–268.

- du Jardin, P. A two-stage classification technique for bankruptcy prediction. European Journal of Operational Research 2016, 254, 236–252.

- Van der Maaten, L.; Hinton, G. Visualizing data using t-SNE. Journal of Machine Learning Research 2008, 9.

- Taffler, R.J.; Tisshaw, H. Going, going, gone–four factors which predict. Accountancy 1977, 88, 50–54.

- Kralicek, P. Fundamentals of finance: balance sheets, profit and loss accounts, cash flow, calculation bases, financial planning, early warning systems; Ueberreuter, 1991.

- Beaver, W.H. Financial ratios as predictors of failure. Journal of Accounting Research 1966, 71–111.

- Ohlson, J.A. Financial ratios and the probabilistic prediction of bankruptcy. Journal of Accounting Research 1980, 109–131.

- Altman, E.I.; Hotchkiss, E.; Wang, W. Corporate financial distress, restructuring, and bankruptcy: analyze leveraged finance, distressed debt, and bankruptcy; John Wiley & Sons, 2019.

- Schönfeld, J.; Kuděj, M.; Smrčka, L. Financial health of enterprises introducing safeguard procedure based on bankruptcy models. Journal of Business Economics and Management 2018, 19, 692–705.

- Moscatelli, M.; Parlapiano, F.; Narizzano, S.; Viggiano, G. Corporate default forecasting with machine learning. Expert Systems with Applications 2020, 161, 113567.

- Danenas, P.; Garsva, G. Selection of support vector machines based classifiers for credit risk domain. Expert Systems with Applications 2015, 42, 3194–3204.

- Tsai, C.F.; Hsu, Y.F.; Yen, D.C. A comparative study of classifier ensembles for bankruptcy prediction. Applied Soft Computing 2014, 24, 977–984.

- Barboza, F.; Kimura, H.; Altman, E. Machine learning models and bankruptcy prediction. Expert Systems with Applications 2017, 83, 405–417.

- Nanni, L.; Lumini, A. An experimental comparison of ensemble of classifiers for bankruptcy prediction and credit scoring. Expert Systems with Applications 2009, 36, 3028–3033.

- Kim, M.J.; Kang, D.K. Ensemble with neural networks for bankruptcy prediction. Expert Systems with Applications 2010, 37, 3373–3379.

- Wang, G.; Hao, J.; Ma, J.; Jiang, H. A comparative assessment of ensemble learning for credit scoring. Expert Systems with Applications 2011, 38, 223–230.

- Lombardo, G.; Pellegrino, M.; Adosoglou, G.; Cagnoni, S.; Pardalos, P.M.; Poggi, A. Machine Learning for Bankruptcy Prediction in the American Stock Market: Dataset and Benchmarks. Future Internet 2022, 14, 244.

- Mossman, C.E.; Bell, G.G.; Swartz, L.M.; Turtle, H. An empirical comparison of bankruptcy models. Financial Review 1998, 33, 35–54.

- Duan, J.C.; Sun, J.; Wang, T. Multiperiod corporate default prediction—A forward intensity approach. Journal of Econometrics 2012, 170, 191–209.

- Kim, H.; Cho, H.; Ryu, D. Corporate default predictions using machine learning: Literature review. Sustainability 2020, 12, 6325.

- Vochozka, M.; Vrbka, J.; Suler, P. Bankruptcy or success? The effective prediction of a company’s financial development using LSTM. Sustainability 2020, 12, 7529.

- Kim, H.; Cho, H.; Ryu, D. Corporate bankruptcy prediction using machine learning methodologies with a focus on sequential data. Computational Economics 2022, 59, 1231–1249.

- Gruslys, A.; Munos, R.; Danihelka, I.; Lanctot, M.; Graves, A. Memory-efficient backpropagation through time. Advances in Neural Information Processing Systems 2016, 29.

- Graves, A. Supervised sequence labelling. In Supervised sequence labelling with recurrent neural networks; Springer, 2012; pp. 5–13.

- Hochreiter, S.; Schmidhuber, J. Long short-term memory. Neural Computation 1997, 9, 1735–1780.

- Cho, K.; van Merriënboer, B.; Bahdanau, D.; Bengio, Y. On the Properties of Neural Machine Translation: Encoder–Decoder Approaches. Syntax, Semantics and Structure in Statistical Translation 2014, p. 103.

- Adosoglou, G.; Lombardo, G.; Pardalos, P.M. Neural network embeddings on corporate annual filings for portfolio selection. Expert Systems with Applications 2021, 164, 114053.

- Adosoglou, G.; Park, S.; Lombardo, G.; Cagnoni, S.; Pardalos, P.M. Lazy Network: A Word Embedding-Based Temporal Financial Network to Avoid Economic Shocks in Asset Pricing Models. Complexity 2022, 2022.

- Campbell, J.Y.; Hilscher, J.; Szilagyi, J. In search of distress risk. The Journal of Finance 2008, 63, 2899–2939.

- Standard Industrial Classification (SIC) Manual Division Structure. https://www.osha.gov/data/sic-manual.

- Ke, G.; Meng, Q.; Finley, T.; Wang, T.; Chen, W.; Ma, W.; Ye, Q.; Liu, T.Y. LightGBM: A highly efficient gradient boosting decision tree. Advances in Neural Information Processing Systems 2017, 30.

- Dorogush, A.V.; Ershov, V.; Gulin, A. CatBoost: gradient boosting with categorical features support. arXiv preprint arXiv:1810.11363 2018.

- Yang, S.; Yu, X.; Zhou, Y. LSTM and GRU neural network performance comparison study: Taking Yelp review dataset as an example. 2020 International Workshop on Electronic Communication and Artificial Intelligence (IWECAI). IEEE, 2020, pp. 98–101.

- Demšar, J. Statistical comparisons of classifiers over multiple data sets. The Journal of Machine Learning Research 2006, 7, 1–30.

- Benavoli, A.; Corani, G.; Demšar, J.; Zaffalon, M. Time for a change: a tutorial for comparing multiple classifiers through Bayesian analysis. The Journal of Machine Learning Research 2017, 18, 2653–2688.

- Rey, D.; Neuhäuser, M. Wilcoxon-signed-rank test. In International encyclopedia of statistical science; Springer, 2011; pp. 1658–1659.

| Year | Total firms | Bankrupt firms | Year | Total firms | Bankrupt firms |
|---|---|---|---|---|---|
| 2000 | 5308 | 3 | 2010 | 3743 | 23 |
| 2001 | 5226 | 7 | 2011 | 3625 | 35 |
| 2002 | 4897 | 10 | 2012 | 3513 | 25 |
| 2003 | 4651 | 17 | 2013 | 3485 | 26 |
| 2004 | 4417 | 29 | 2014 | 3484 | 28 |
| 2005 | 4348 | 46 | 2015 | 3504 | 33 |
| 2006 | 4205 | 40 | 2016 | 3354 | 33 |
| 2007 | 4128 | 51 | 2017 | 3191 | 29 |
| 2008 | 4009 | 59 | 2018 | 3014 | 21 |
| 2009 | 3857 | 58 | 2019 | 2723 | 36 |

| ML models (average AUC) | WL=1 | WL=2 | WL=3 | WL=4 | WL=5 |
|---|---|---|---|---|---|
| Support Vector Machine | 0.635 | 0.641 | 0.594 | 0.589 | 0.587 |
| Logistic Regression | 0.731 | 0.648 | 0.676 | 0.705 | 0.702 |
| AdaBoost | 0.647 | 0.655 | 0.664 | 0.642 | 0.719 |
| Random Forest | 0.745 | 0.733 | 0.731 | 0.759 | 0.760 |
| Gradient Boosting | 0.716 | 0.702 | 0.729 | 0.685 | 0.742 |
| XGBoost | 0.678 | 0.730 | 0.695 | 0.699 | 0.726 |
| CatBoost | 0.724 | 0.729 | 0.686 | 0.749 | 0.771 |
| LightGBM | 0.751 | 0.699 | 0.671 | 0.741 | 0.736 |

| ML models (average training time [s]) | WL=1 | WL=2 | WL=3 | WL=4 | WL=5 |
|---|---|---|---|---|---|
| Support Vector Machine | 0.032 | 0.036 | 0.036 | 0.034 | 0.033 |
| Logistic Regression | 0.018 | 0.022 | 0.023 | 0.024 | 0.026 |
| AdaBoost | 0.826 | 1.246 | 1.560 | 1.769 | 1.994 |
| Random Forest | 0.799 | 0.973 | 1.033 | 1.034 | 1.059 |
| Gradient Boosting | 1.022 | 1.833 | 2.545 | 3.097 | 3.555 |
| XGBoost | 0.421 | 0.422 | 0.493 | 0.478 | 0.483 |
| CatBoost | 6.670 | 7.059 | 7.088 | 7.383 | 7.398 |
| LightGBM | 0.187 | 0.195 | 0.191 | 0.184 | 0.179 |

| Model | Avg AUC (WL = 4) | Max AUC (WL = 4) | Avg training time [s] (WL = 4) | Avg AUC (WL = 5) | Max AUC (WL = 5) | Avg training time [s] (WL = 5) |
|---|---|---|---|---|---|---|
| Multi-head LSTM | 0.813356 | 0.837 | 103.86 | 0.79 | 0.828 | 82.60 |
| LSTM | 0.8026 | 0.8415 | 51.80 | 0.7928 | 0.864 | 42.86 |
| Random Forest | 0.75 | 0.777 | 1.035 | 0.762 | 0.794 | 1.062 |
| CatBoost | 0.731 | 0.767 | 7.388 | 0.750 | 0.797 | 7.432 |

| Metric | LSTM (WL = 4) | Multi-head LSTM (WL = 4) | LSTM (WL = 5) | Multi-head LSTM (WL = 5) |
|---|---|---|---|---|
| TP | 88 | 75 | 89 | 71 |
| TN | 1233 | 2158 | 1071 | 2085 |
| FN | 8 | 21 | 2 | 20 |
| FP | 1591 | 666 | 1573 | 559 |
| AUC score | 0.832 | 0.847 | 0.802 | 0.825 |
| BAC | 0.677 | 0.773 | 0.692 | 0.784 |
| Micro-F1 | 0.772 | 0.797 | 0.777 | 0.793 |
| Macro-F1 | 0.53 | 0.55 | 0.528 | 0.542 |
| Type I error | 21.74 | 18.45 | 22.65 | 20.5 |
| Type II error | 23.95 | 28.125 | 21.97 | 21.97 |
| Recall (bankruptcy) | 0.76 | 0.71 | 0.78 | 0.78 |
| Precision (bankruptcy) | 0.106 | 0.117 | 0.106 | 0.115 |
| Recall (healthy) | 0.782 | 0.815 | 0.773 | 0.795 |
| Precision (healthy) | 0.989 | 0.988 | 0.99 | 0.99 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).