Submitted: 15 January 2024

Posted: 16 January 2024


Abstract

Keywords:

1. Introduction

- We consider the anytime property an important concern for C-DCOP algorithms. Since communication may be halted arbitrarily, an algorithm without the anytime property risks being terminated at an unsatisfactory assignment. An anytime algorithm, by contrast, guarantees a lower bound on performance in such environments when given acceptable starting conditions.

- The MGM algorithm is an iterative algorithm that performs a distributed local search and guarantees, through local interactions alone, that the solution quality changes monotonically from one iteration to the next. In other words, the MGM algorithm is an anytime algorithm.

- Unlike anytime algorithms that rely on a BFS pseudo-tree, the MGM algorithm solves problems without any restriction on the graph structure. Specifically, MGM operates on the basic constraint graph and uses only local interactions, which helps maintain the privacy of agents.

2. Background

2.1. Distributed Constraint Optimization Problems

- $A = \{a_1, \dots, a_n\}$ is a set of agents; an agent can control one or more variables.

- $X = \{x_1, \dots, x_m\}$ is a set of discrete variables; each variable is controlled by one of the agents.

- $D = \{D_1, \dots, D_m\}$ is a set of discrete domains; each variable $x_i$ takes a value from the set $D_i$.

- $F = \{f_1, \dots, f_k\}$ is a set of utility functions; each utility function $f_i$ is defined over a set of variables: $f_i : \prod_{x_j \in \mathbf{x}^i} D_j \to \mathbb{R}$, where $\mathbf{x}^i \subseteq X$ is the scope of $f_i$.

- $\alpha : X \to A$ is a mapping function that associates each variable with one agent. In this paper, we assume that each agent controls exactly one variable.
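To make the definition concrete, the following minimal sketch evaluates the global objective of a complete assignment as the sum of all utility functions over their scopes. The three-variable problem, the domain values, and the quadratic costs are hypothetical and chosen only for illustration; minimization is assumed.

```python
from itertools import product

# Hypothetical toy DCOP: three variables, one per agent, with discrete domains.
domains = {"x1": [0, 1, 2], "x2": [0, 1, 2], "x3": [0, 1, 2]}

# Each utility function is stored together with its scope.
# The quadratic costs are illustrative assumptions, not taken from the paper.
functions = [
    (("x1", "x2"), lambda a, b: (a - b) ** 2),
    (("x2", "x3"), lambda b, c: (b + c - 2) ** 2),
]


def total_cost(assignment):
    """Sum every utility function evaluated on the values of its scope."""
    return sum(f(*(assignment[v] for v in scope)) for scope, f in functions)


def brute_force_minimum():
    """Enumerate all complete assignments (only feasible for tiny problems)."""
    names = list(domains)
    best = None
    for values in product(*(domains[n] for n in names)):
        assignment = dict(zip(names, values))
        cost = total_cost(assignment)
        if best is None or cost < best[0]:
            best = (cost, assignment)
    return best


best_cost, best_assignment = brute_force_minimum()
```

Exhaustive enumeration is used here only to show what the objective is; it grows exponentially with the number of variables, which is why distributed algorithms are needed in practice.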

2.2. Continuous Distributed Constraint Optimization Problems

- $X = \{x_1, \dots, x_m\}$ is the set of continuous variables.

- $D = \{D_1, \dots, D_m\}$ is the set of continuous domains; each continuous variable $x_i$ takes any value from the domain $D_i = [LB_i, UB_i]$, where $LB_i$ and $UB_i$ represent the lower and upper bounds of the domain, respectively.
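As a small illustration of a bounded continuous domain, the sketch below models a domain as a closed interval and projects an out-of-range value back onto it. The class name, the bounds of -50 and 50, and the quadratic cost with its coefficients are assumptions made for this example and are not taken from the paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContinuousDomain:
    """Closed interval [lower, upper] from which a continuous variable takes its value."""
    lower: float
    upper: float

    def contains(self, value: float) -> bool:
        return self.lower <= value <= self.upper

    def clip(self, value: float) -> float:
        """Project a proposed value back into the domain."""
        return min(max(value, self.lower), self.upper)


def quadratic_cost(x: float, y: float, a: float = 1.0, b: float = -2.0, c: float = 1.0) -> float:
    """Hypothetical binary cost a*x^2 + b*x*y + c*y^2 (coefficients are assumptions)."""
    return a * x * x + b * x * y + c * y * y


domain = ContinuousDomain(-50.0, 50.0)
proposed = 73.5                  # a value outside the domain
value = domain.clip(proposed)    # projected onto the upper bound
cost = quadratic_cost(value, 48.0)
```

Because a continuous variable has infinitely many possible values, an agent cannot enumerate its domain as in the discrete case; it can only sample or search within the bounds.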

2.3. Maximum Gain Message

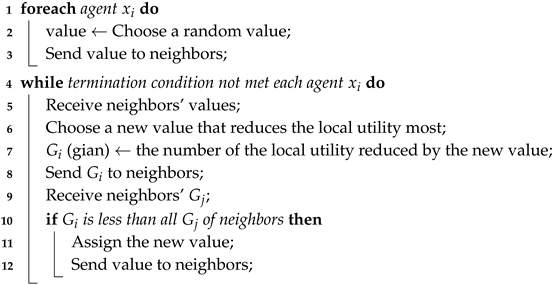

Algorithm 1: Maximum Gain Message Algorithm (MGM)
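The following is a minimal, centrally simulated sketch of synchronous MGM rounds for a discrete minimization problem. It is not the paper's pseudocode: the chain-shaped constraint graph, the cost functions, the starting assignment, and the tie-breaking by agent name are all assumptions made for illustration. In each round every agent computes its best local gain, and an agent changes its value only if its gain is positive and larger than the gain of each of its neighbors, so two neighbors never move at the same time.

```python
def local_cost(agent, value, assignment, constraints):
    """Cost of the constraints involving `agent` if it took `value`."""
    total = 0
    for (u, v), f in constraints.items():
        if u == agent:
            total += f(value, assignment[v])
        elif v == agent:
            total += f(assignment[u], value)
    return total


def total_cost(assignment, constraints):
    return sum(f(assignment[u], assignment[v]) for (u, v), f in constraints.items())


def mgm_round(assignment, domains, constraints, neighbors):
    """One synchronous MGM round; returns the new assignment."""
    proposals = {}
    for agent in assignment:
        current = local_cost(agent, assignment[agent], assignment, constraints)
        best_value = min(
            domains[agent],
            key=lambda val: local_cost(agent, val, assignment, constraints),
        )
        gain = current - local_cost(agent, best_value, assignment, constraints)
        proposals[agent] = (gain, best_value)

    new_assignment = dict(assignment)
    for agent, (gain, value) in proposals.items():
        # Move only with a positive gain that beats every neighbor's gain;
        # ties are broken by agent name (an assumption of this sketch).
        wins = all((gain, agent) > (proposals[n][0], n) for n in neighbors[agent])
        if gain > 0 and wins:
            new_assignment[agent] = value
    return new_assignment


# Hypothetical chain a1 - a2 - a3 with squared-difference costs.
constraints = {
    ("a1", "a2"): lambda x, y: (x - y) ** 2,
    ("a2", "a3"): lambda x, y: (x - y) ** 2,
}
domains = {a: [0, 1, 2] for a in ("a1", "a2", "a3")}
neighbors = {"a1": ["a2"], "a2": ["a1", "a3"], "a3": ["a2"]}

assignment = {"a1": 0, "a2": 2, "a3": 0}
history = [total_cost(assignment, constraints)]
for _ in range(5):
    assignment = mgm_round(assignment, domains, constraints, neighbors)
    history.append(total_cost(assignment, constraints))
```

In this run the recorded total cost never increases between rounds, which is the monotonicity that makes MGM usable as an anytime algorithm.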

3. Our Algorithms

3.1. Continuous Maximum Gain Message

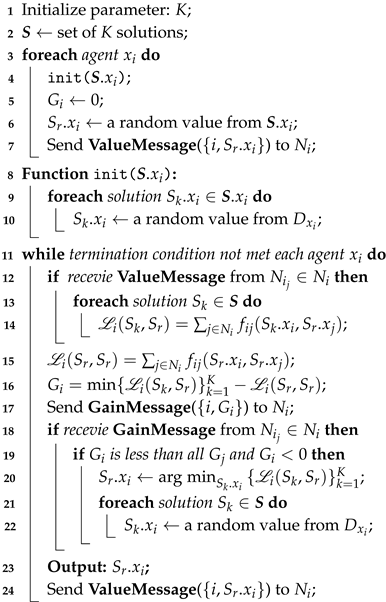

Algorithm 2: Continuous MGM (C-MGM)

3.2. Parallel C-MGM

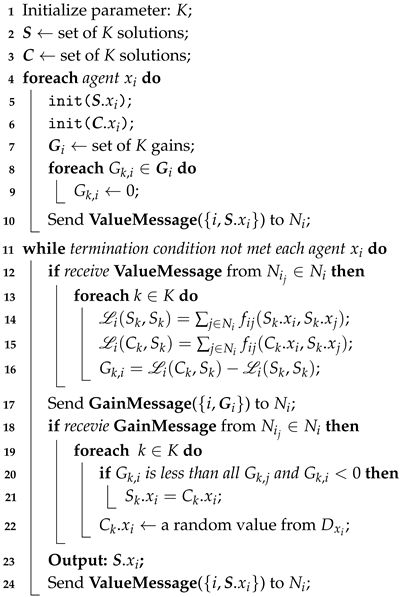

Algorithm 3: Parallel C-MGM (C-PMGM)

3.3. Parallel Differential Search C-MGM

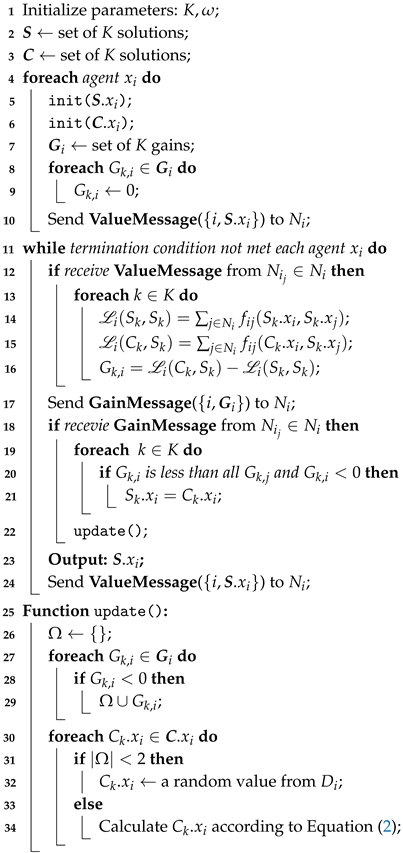

Algorithm 4: Parallel Differential Search C-MGM (C-PDSM)

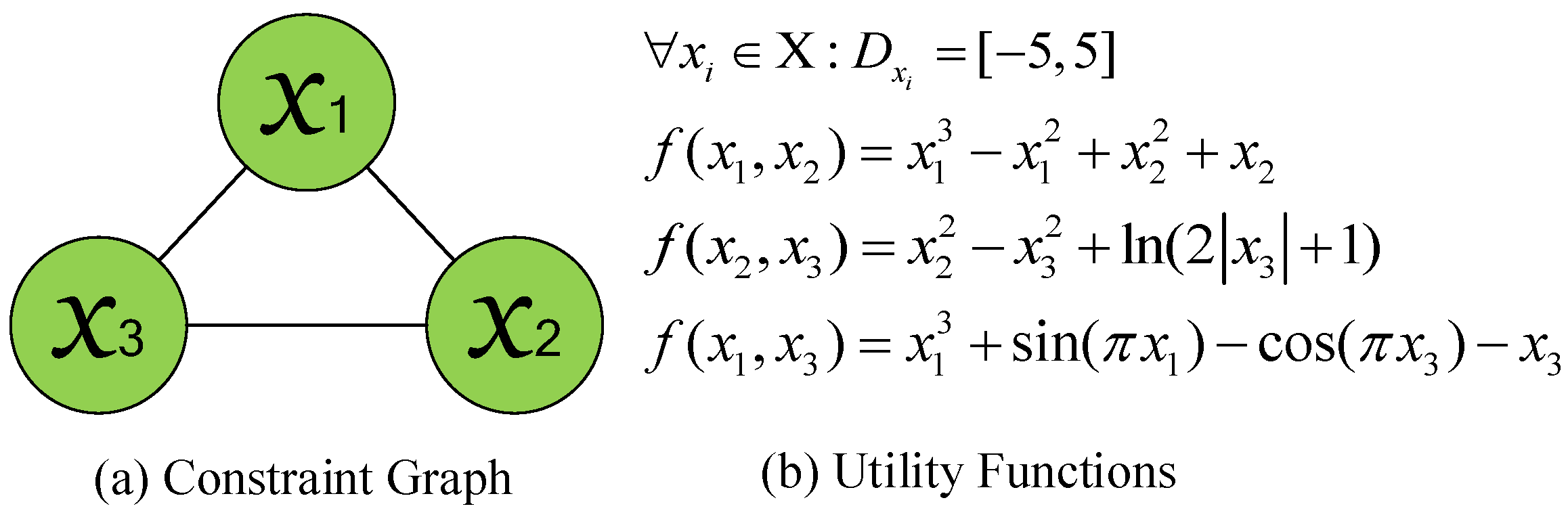

3.4. An Example of Algorithms

- For C-MGM, the values randomly selected by each agent are , , and .

- For C-PMGM and C-PDSM, we assume that both competing solution sets are , and that the scaling factor in C-PDSM is 1.4.

- For C-MGM: ;

- For C-PMGM and C-PDSM: ; ;

- For C-MGM: ; (assumed random values);

- For C-PMGM: , ; (assumed random values);

- For C-PDSM: , ; since both gain values (-189 and -43) are less than 0, .;
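The last step of the example can be read as the usual MGM acceptance rule applied to a set of competing candidates: the agent keeps the candidate with the largest gain only if that gain is positive, and otherwise stays at its current value. The sketch below assumes this rule. The gains -189 and -43 come from the example, while the candidate values, the current value, and the function name are hypothetical, and the way C-PMGM and C-PDSM generate their candidates is not reproduced here.

```python
def select_value(current_value, candidate_gains):
    """Return (value, gain): the best candidate if its gain is positive,
    otherwise the current value with a gain of zero."""
    best_value, best_gain = max(candidate_gains.items(), key=lambda item: item[1])
    if best_gain > 0:
        return best_value, best_gain
    return current_value, 0.0


# Hypothetical candidate values mapped to the two gains from the example.
candidate_gains = {12.0: -189.0, -7.5: -43.0}
chosen_value, reported_gain = select_value(3.0, candidate_gains)
```

Under this assumption an agent whose candidates are all worse than its current value reports a gain of zero and does not move, so it cannot worsen the global solution.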

4. Theoretical Analysis

5. Experimental Results

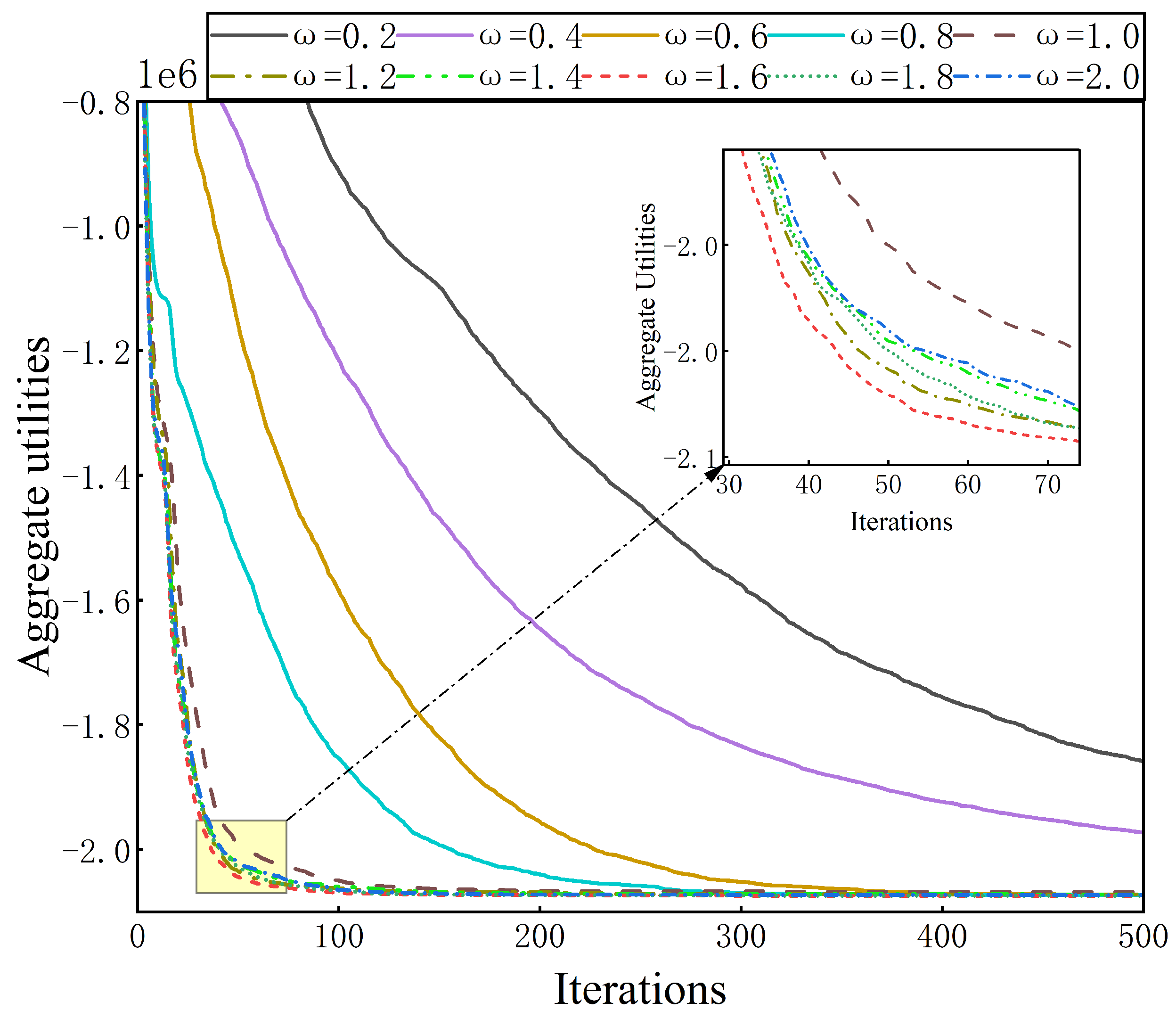

5.1. Parameter Configuration

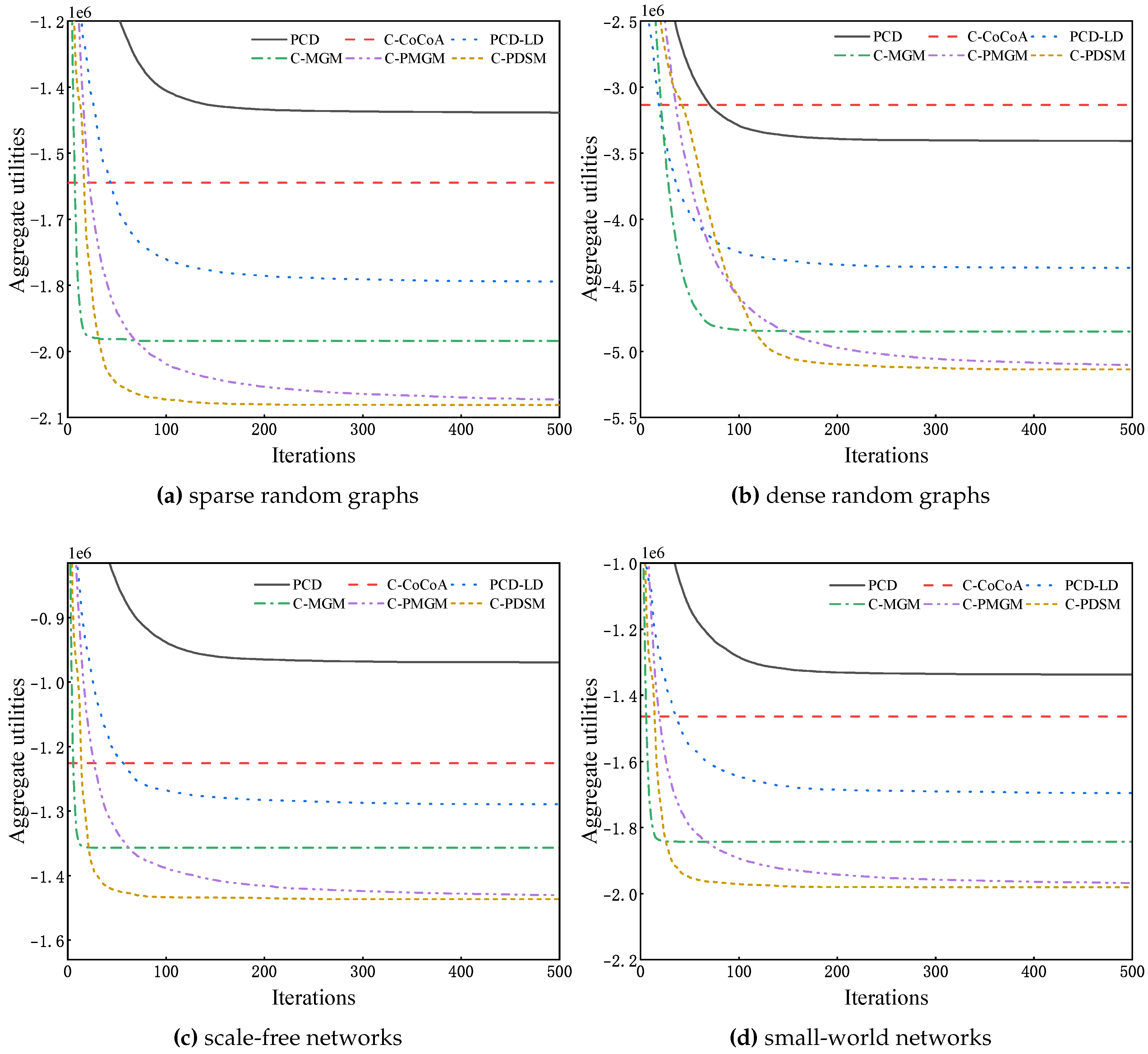

5.2. Comparison of Solution Quality

5.3. Comparison of Algorithms Using Runtime

6. Conclusion and Future Work

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest


Note 1: We consider minimization problems in this paper.

| Number | Type | PCD | C-CoCoA | PCD-LD | C-MGM | C-PMGM | C-PDSM |
|---|---|---|---|---|---|---|---|
| | D1 | -1042798 | -1101989 | -1304624 | -1366430 | -1465110 | -1473604 |
| | D2 | -2585639 | -2393154 | -3241010 | -3529010 | -3725781 | -3749835 |
| | D3 | -883870 | -979241 | -1074588 | -1127033 | -1213038 | -1220117 |
| | D4 | -1191145 | -1273078 | -1465341 | -1547525 | -1650405 | -1660851 |
| | D1 | -1259013 | -1387131 | -1576550 | -1657470 | -1798312 | -1810145 |
| | D2 | -3102676 | -2821139 | -3769051 | -4128793 | -4364131 | -4393997 |
| | D3 | -990772 | -1120876 | -1221272 | -1285462 | -1379148 | -1386262 |
| | D4 | -1231794 | -1332859 | -1527462 | -1634378 | -1748604 | -1761438 |
| | D1 | -1407602 | -1567222 | -1791288 | -1926485 | -2059722 | -2071917 |
| | D2 | -3407684 | -3135087 | -4367475 | -4849739 | -5103759 | -5137080 |
| | D3 | -1000479 | -1203417 | -1286422 | -1373971 | -1469692 | -1477799 |
| | D4 | -1337766 | -1463976 | -1695295 | -1842810 | -1967855 | -1980617 |

| Number | Type | PCD (time) | C-CoCoA (time) | PCD-LD (time) | C-MGM (time) | C-PMGM (time) | C-PDSM (time) |
|---|---|---|---|---|---|---|---|
| | D1 | -555861 (960) | -1111394 (136) | -896276 (952) | -1355370 (978) | -1004208 (956) | 1150880 (948) |
| | D2 | -1197702 (931) | -2332978 (407) | -2157473 (986) | -2200719 (954) | -1280861 (953) | -1706260 (985) |
| | D3 | -467112 (949) | -1024342 (141) | -774797 (959) | -1127708 (952) | -880713 (927) | -1065422 (941) |
| | D4 | -608475 (956) | -1254330 (157) | -916351 (946) | -1552279 (976) | -1157349 (940) | -1327593 (944) |
| | D1 | -619407 (968) | -1380270 (157) | -1073774 (961) | -1658509 (998) | -1234988 (983) | -1370201 (995) |
| | D2 | -1237887 (907) | -2829167 (486) | -2339043 (883) | -2339654 (988) | -1345707 (944) | -1728215 (938) |
| | D3 | -513043 (987) | -1103239 (126) | -828157 (949) | -1274467 (997) | -988866 (949) | -1171402 (985) |
| | D4 | -610568 (980) | -1340294 (157) | -993353 (966) | -1647306 (972) | -1200160 (965) | -1299064 (950) |
| | D1 | -684657 (988) | -1533802 (188) | -1138453 (923) | -1888946 (992) | -1314583 (942) | -1462948 (979) |
| | D2 | -1197062 (935) | -3176851 (578) | -2445525 (929) | -2501213 (982) | -1346609 (891) | -1764955 (969) |
| | D3 | -531285 (971) | -1204229 (141) | -868545 (987) | -1366420 (981) | -1009219 (950) | -1298292 (973) |
| | D4 | -637169 (956) | -1504482 (159) | -1135444 (932) | -1830023 (954) | -1361162 (989) | -1497704 (942) |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).