Submitted: 24 December 2023

Posted: 27 December 2023

Abstract

Keywords:

1. Introduction

2. Problem formulation

3. General architecture of class-incremental learning setups

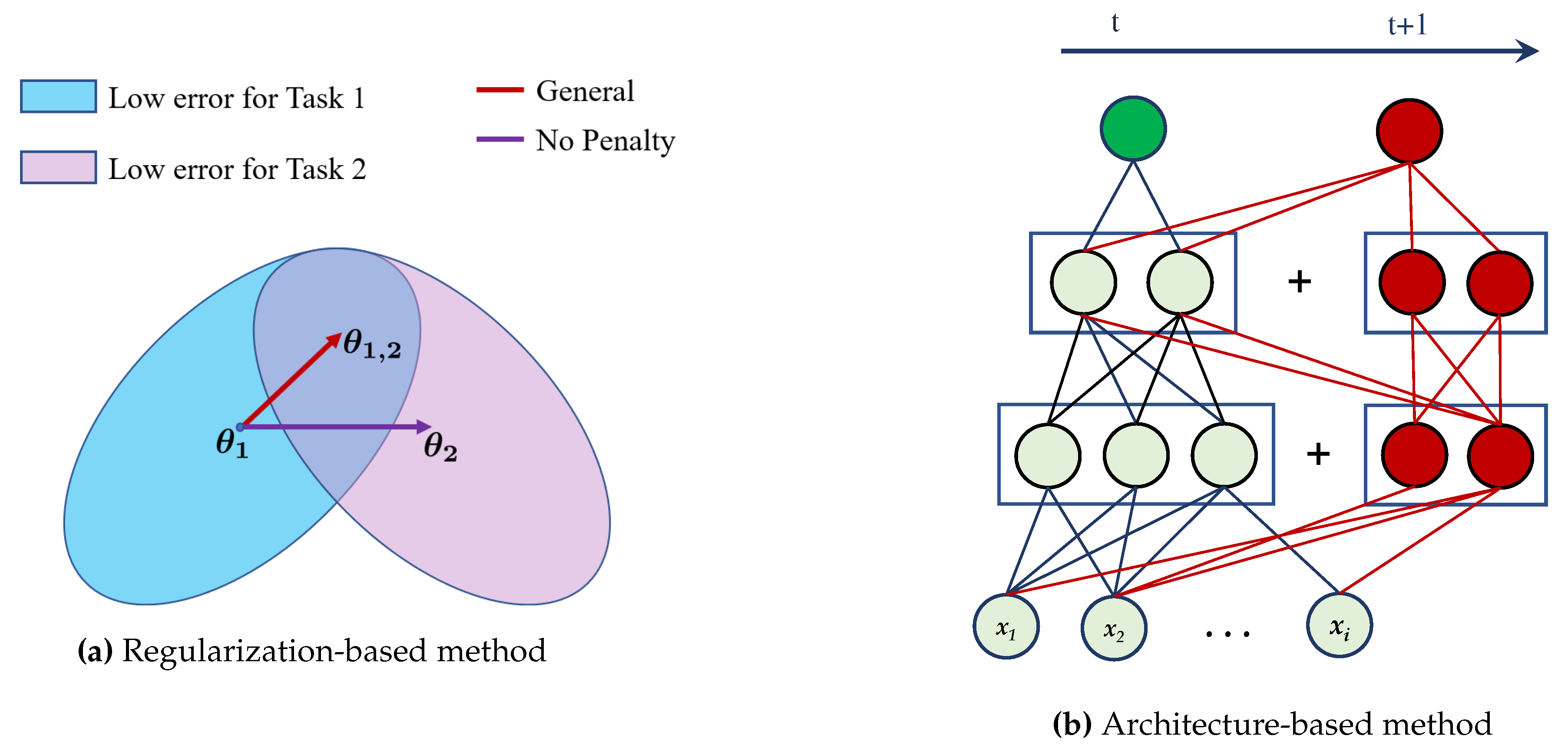

3.1. Regularization-based CIL

3.2. Architecture-based CIL

3.3. Rehearsal-based CIL

4. Literature review

4.1. Approaches in rehearsal-based CIL

4.2. Approaches in regularization-based CIL

4.3. Approaches in architecture-based CIL

5. Our works and methodologies

5.1. Experimental work

5.2. Datasets and Metrics used

6. Future directions

7. Conclusion

References

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. Proceedings of the IEEE conference on computer vision and pattern recognition, 2016, pp. 770–778.

- Feng, F.; Chan, R.H.; Shi, X.; Zhang, Y.; She, Q. Challenges in task incremental learning for assistive robotics. IEEE Access 2019, 8, 3434–3441.

- Mozaffari, A.; Vajedi, M.; Azad, N.L. A robust safety-oriented autonomous cruise control scheme for electric vehicles based on model predictive control and online sequential extreme learning machine with a hyper-level fault tolerance-based supervisor. Neurocomputing 2015, 151, 845–856.

- Zhang, J.; Li, Y.; Xiao, W.; Zhang, Z. Non-iterative and fast deep learning: Multilayer extreme learning machines. Journal of the Franklin Institute 2020, 357, 8925–8955.

- Power, J.D.; Schlaggar, B.L. Neural plasticity across the lifespan. Wiley Interdisciplinary Reviews: Developmental Biology 2017, 6, e216.

- Zenke, F.; Gerstner, W.; Ganguli, S. The temporal paradox of Hebbian learning and homeostatic plasticity. Current opinion in neurobiology 2017, 43, 166–176.

- Morris, R.G. D.O. Hebb: The Organization of Behavior, Wiley: New York; 1949. Brain research bulletin 1999, 50, 437.

- Abbott, L.F.; Nelson, S.B. Synaptic plasticity: taming the beast. Nature neuroscience 2000, 3, 1178–1183.

- Song, S.; Miller, K.D.; Abbott, L.F. Competitive Hebbian learning through spike-timing-dependent synaptic plasticity. Nature neuroscience 2000, 3, 919–926.

- McClelland, J.L.; McNaughton, B.L.; O’Reilly, R.C. Why there are complementary learning systems in the hippocampus and neocortex: insights from the successes and failures of connectionist models of learning and memory. Psychological review 1995, 102, 419.

- Goodfellow, I.J.; Mirza, M.; Xiao, D.; Courville, A.; Bengio, Y. An empirical investigation of catastrophic forgetting in gradient-based neural networks. arXiv preprint arXiv:1312.6211 2013.

- Nokhwal, S.; Pahune, S.; Chaudhary, A. EmbAu: A Novel Technique to Embed Audio Data using Shuffled Frog Leaping Algorithm. Proceedings of the 2023 7th International Conference on Intelligent Systems, Metaheuristics & Swarm Intelligence, 2023, pp. 79–86.

- Tanwer, A.; Reel, P.S.; Reel, S.; Nokhwal, S.; Nokhwal, S.; Hussain, M.; Bist, A.S. System and method for camera based cloth fitting and recommendation, 2020. US Patent App. 16/448,094.

- Kirkpatrick, J.; Pascanu, R.; Rabinowitz, N.; Veness, J.; Desjardins, G.; Rusu, A.A.; Milan, K.; Quan, J.; Ramalho, T.; Grabska-Barwinska, A.; et al. Overcoming catastrophic forgetting in neural networks. Proceedings of the National Academy of Sciences 2017, 114, 3521–3526.

- Li, Z.; Hoiem, D. Learning without forgetting. IEEE transactions on pattern analysis and machine intelligence 2017, 40, 2935–2947.

- Rebuffi, S.A.; Kolesnikov, A.; Sperl, G.; Lampert, C.H. iCaRL: Incremental classifier and representation learning. Proceedings of the IEEE conference on Computer Vision and Pattern Recognition, 2017, pp. 2001–2010.

- Losing, V.; Hammer, B.; Wersing, H. Incremental on-line learning: A review and comparison of state of the art algorithms. Neurocomputing 2018, 275, 1261–1274.

- Chefrour, A. Incremental supervised learning: algorithms and applications in pattern recognition. Evolutionary Intelligence 2019, 12, 97–112.

- Chaudhry, A.; Rohrbach, M.; Elhoseiny, M.; Ajanthan, T.; Dokania, P.K.; Torr, P.H.; Ranzato, M. On tiny episodic memories in continual learning. arXiv preprint arXiv:1902.10486 2019.

- Riemer, M.; Cases, I.; Ajemian, R.; Liu, M.; Rish, I.; Tu, Y.; Tesauro, G. Learning to learn without forgetting by maximizing transfer and minimizing interference. arXiv preprint arXiv:1810.11910 2018.

- Vitter, J.S. Random sampling with a reservoir. ACM Transactions on Mathematical Software (TOMS) 1985, 11, 37–57.

- Lopez-Paz, D.; Ranzato, M. Gradient episodic memory for continual learning. Advances in neural information processing systems 2017, 30.

- Mirzadeh, S.I.; Farajtabar, M.; Pascanu, R.; Ghasemzadeh, H. Understanding the role of training regimes in continual learning. Advances in Neural Information Processing Systems 2020, 33, 7308–7320.

- Mirzadeh, S.I.; Farajtabar, M.; Gorur, D.; Pascanu, R.; Ghasemzadeh, H. Linear mode connectivity in multitask and continual learning. arXiv preprint arXiv:2010.04495 2020.

- Lin, G.; Chu, H.; Lai, H. Towards better plasticity-stability trade-off in incremental learning: A simple linear connector. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2022, pp. 89–98.

- Wang, S.; Li, X.; Sun, J.; Xu, Z. Training networks in null space of feature covariance for continual learning. Proceedings of the IEEE/CVF conference on Computer Vision and Pattern Recognition, 2021, pp. 184–193.

- Mallya, A.; Davis, D.; Lazebnik, S. Piggyback: Adapting a single network to multiple tasks by learning to mask weights. Proceedings of the European conference on computer vision (ECCV), 2018, pp. 67–82.

- Kang, H.; Mina, R.J.L.; Madjid, S.R.H.; Yoon, J.; Hasegawa-Johnson, M.; Hwang, S.J.; Yoo, C.D. Forget-free continual learning with winning subnetworks. International Conference on Machine Learning. PMLR, 2022, pp. 10734–10750.

- Jin, H.; Kim, E. Helpful or Harmful: Inter-task Association in Continual Learning. European Conference on Computer Vision. Springer, 2022, pp. 519–535.

- Mallya, A.; Lazebnik, S. PackNet: Adding multiple tasks to a single network by iterative pruning. Proceedings of the IEEE conference on Computer Vision and Pattern Recognition, 2018, pp. 7765–7773.

- Ahn, H.; Cha, S.; Lee, D.; Moon, T. Uncertainty-based continual learning with adaptive regularization. Advances in neural information processing systems 2019, 32.

- Golkar, S.; Kagan, M.; Cho, K. Continual learning via neural pruning. arXiv preprint arXiv:1903.04476 2019.

- Jung, S.; Ahn, H.; Cha, S.; Moon, T. Continual learning with node-importance based adaptive group sparse regularization. Advances in neural information processing systems 2020, 33, 3647–3658.

- Gurbuz, M.B.; Dovrolis, C. NISPA: Neuro-inspired stability-plasticity adaptation for continual learning in sparse networks. arXiv preprint arXiv:2206.09117 2022.

- Yoon, J.; Yang, E.; Lee, J.; Hwang, S.J. Lifelong learning with dynamically expandable networks. arXiv preprint arXiv:1708.01547 2017.

- Hung, C.Y.; Tu, C.H.; Wu, C.E.; Chen, C.H.; Chan, Y.M.; Chen, C.S. Compacting, picking and growing for unforgetting continual learning. Advances in Neural Information Processing Systems 2019, 32.

- Ostapenko, O.; Puscas, M.; Klein, T.; Jahnichen, P.; Nabi, M. Learning to remember: A synaptic plasticity driven framework for continual learning. Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2019, pp. 11321–11329.

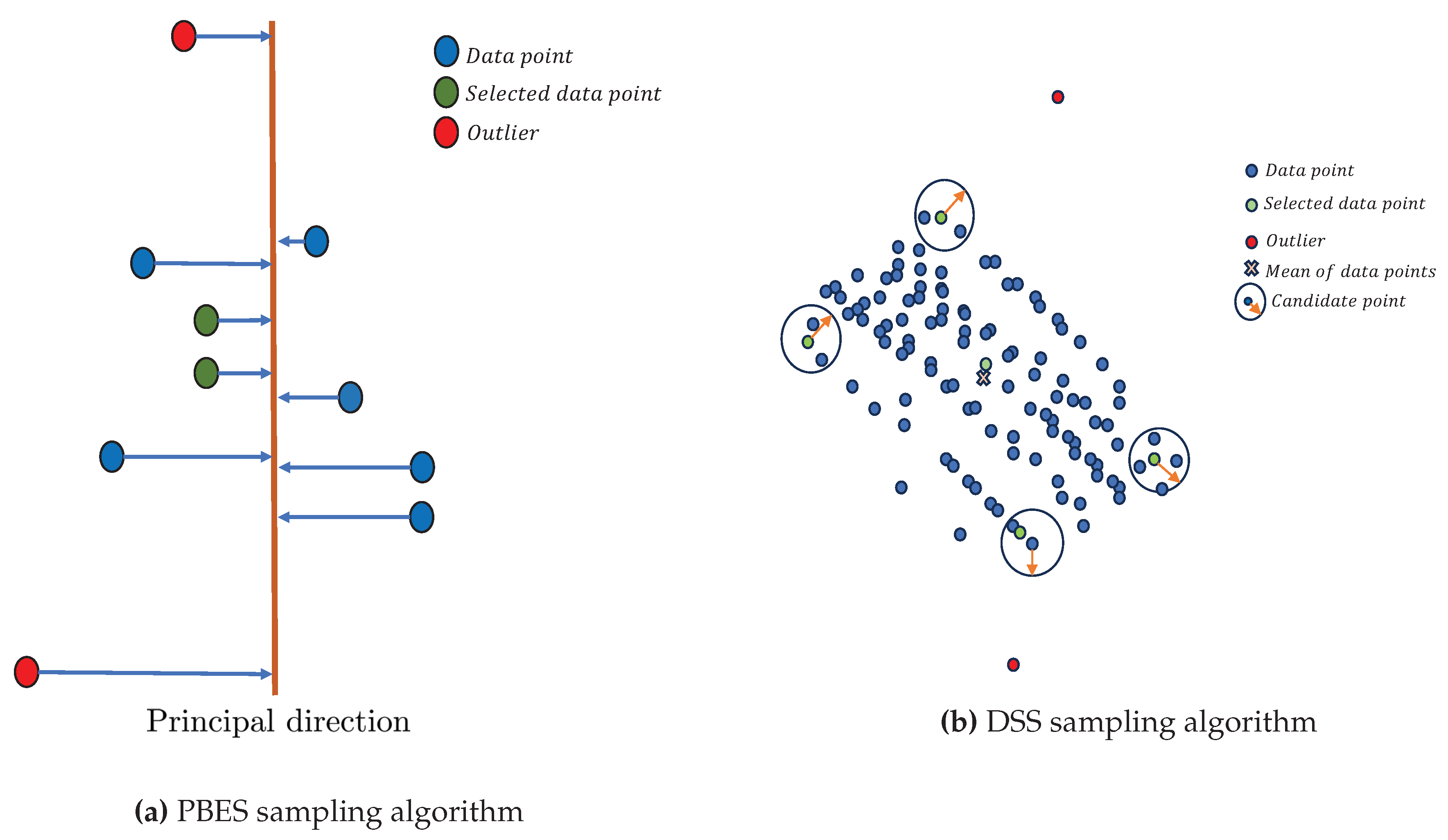

- Nokhwal, S.; Kumar, N. PBES: PCA Based Exemplar Sampling Algorithm for Continual Learning. 2023 2nd International Conference on Informatics (ICI). IEEE, 2023.

- Gong, C.; Wang, D.; Li, M.; Chandra, V.; Liu, Q. KeepAugment: A simple information-preserving data augmentation approach. Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2021, pp. 1055–1064.

- Nokhwal, S.; Kumar, N. DSS: A Diverse Sample Selection Method to Preserve Knowledge in Class-Incremental Learning. 2023 10th International Conference on Soft Computing & Machine Intelligence (ISCMI). IEEE, 2023.

- Nokhwal, S.; Kumar, N. RTRA: Rapid Training of Regularization-based Approaches in Continual Learning. 2023 10th International Conference on Soft Computing & Machine Intelligence (ISCMI). IEEE, 2023.

- Prabhu, A.; Torr, P.H.; Dokania, P.K. GDumb: A simple approach that questions our progress in continual learning. Computer Vision–ECCV 2020: 16th European Conference, Glasgow, UK, August 23–28, 2020, Proceedings, Part II 16. Springer, 2020, pp. 524–540.

- Bang, J.; Kim, H.; Yoo, Y.; Ha, J.W.; Choi, J. Rainbow memory: Continual learning with a memory of diverse samples. Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2021, pp. 8218–8227.

- Wu, Y.; Chen, Y.; Wang, L.; Ye, Y.; Liu, Z.; Guo, Y.; Fu, Y. Large scale incremental learning. Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2019, pp. 374–382.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).