Submitted: 20 December 2023

Posted: 21 December 2023


Abstract

Keywords:

1. Introduction

2. Related Work

3. Methodology

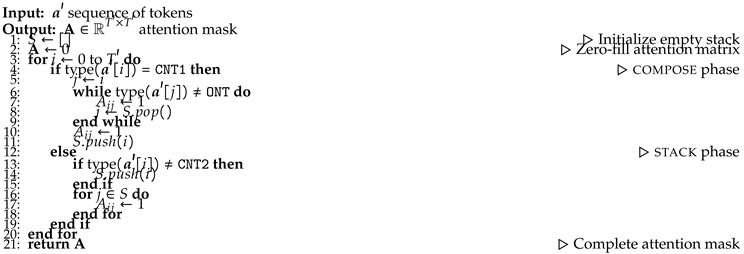

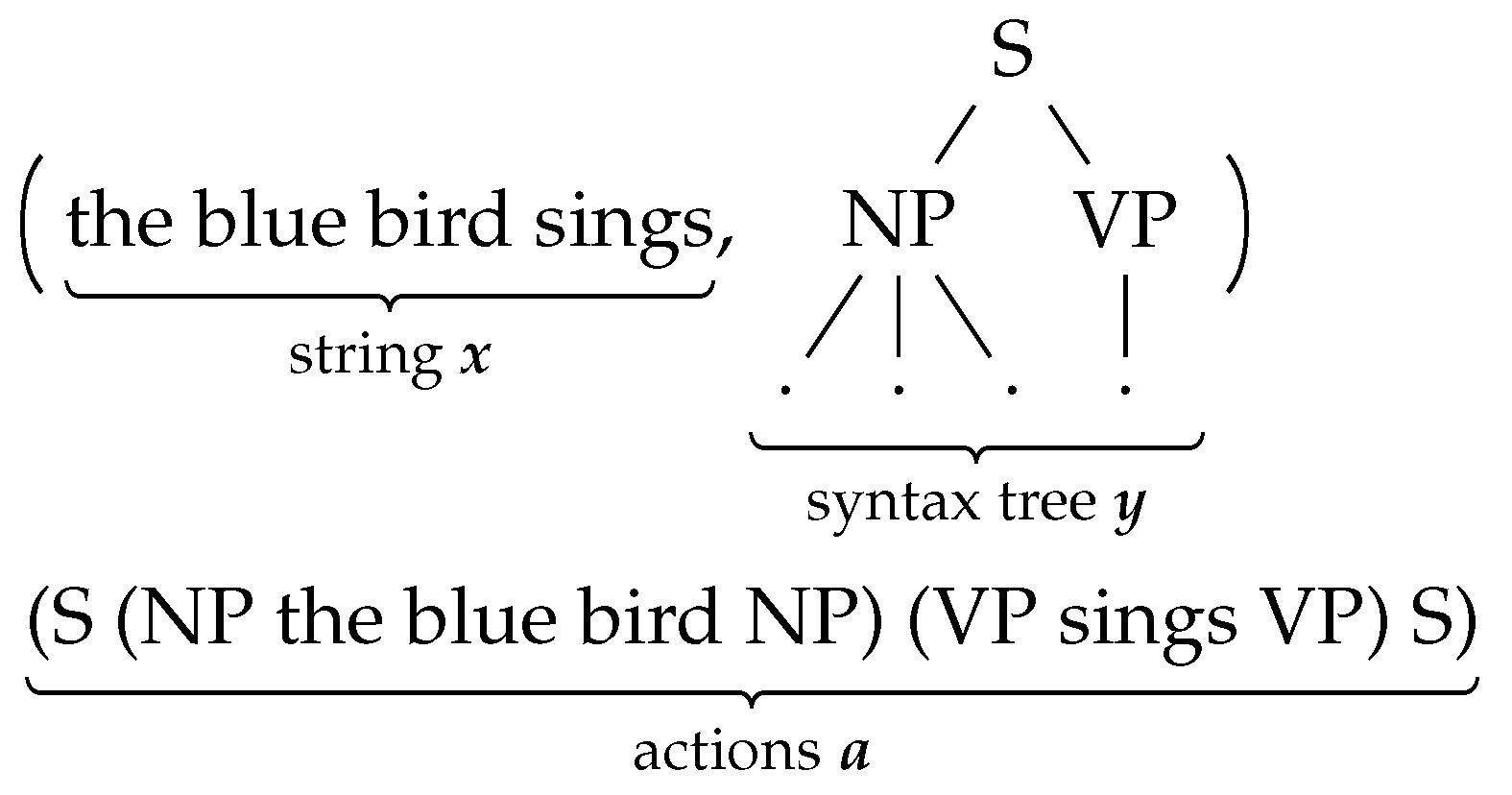

3.1. Recursive syntactic composition via attention

3.1.0.1. Relative positional encoding.

Algorithm 1: Stack/compose attention in SET models

3.2. Segmentation and Recurrence in SET

- represents the sequence of hidden states, passed as input to the next layer,

- is the memory state,

- denotes the attention mask linking the current segment with its memory,

- is the matrix of relative positions.

- Self-Attention in SET.

- Memory Update in SET.
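The four quantities listed above (hidden states, memory state, attention mask, relative positions) and the two steps (self-attention, memory update) can be illustrated with a minimal NumPy sketch of Transformer-XL-style segment recurrence. This is not the SET implementation: it uses a single head, a plain causal mask rather than stack/compose masks, and a scalar bias per relative distance in place of Transformer-XL's full relative positional encoding. All function and parameter names (`causal_inputs`, `segment_attention`, `update_memory`, `bias_table`, `max_mem`) are hypothetical.

```python
import numpy as np


def softmax(x):
    """Row-wise softmax with max-subtraction for numerical stability."""
    e = np.exp(x - x.max(axis=-1, keepdims=True))
    return e / e.sum(axis=-1, keepdims=True)


def causal_inputs(seg_len, mem_len):
    """Hypothetical helper: causal mask and relative-position matrix."""
    q_pos = np.arange(mem_len, mem_len + seg_len)[:, None]
    k_pos = np.arange(mem_len + seg_len)[None, :]
    rel_pos = q_pos - k_pos      # distance from each query back to each key
    mask = rel_pos >= 0          # True where the key is not in the future
    return mask, rel_pos


def segment_attention(h, memory, mask, rel_pos, bias_table, w_q, w_k, w_v):
    """Attend from the current segment over [memory; current segment]."""
    context = np.concatenate([memory, h], axis=0)
    q, k, v = h @ w_q, context @ w_k, context @ w_v
    scores = q @ k.T / np.sqrt(q.shape[-1])
    # Assumption: one scalar bias per (clipped) relative distance.
    scores = scores + bias_table[np.clip(rel_pos, 0, len(bias_table) - 1)]
    scores = np.where(mask, scores, -1e9)   # block disallowed positions
    return softmax(scores) @ v


def update_memory(memory, h, max_mem):
    """Keep only the most recent max_mem states (max_mem must be >= 1)."""
    return np.concatenate([memory, h], axis=0)[-max_mem:]


# Usage: process three segments of two tokens each, carrying memory along.
rng = np.random.default_rng(0)
d = 4
w_q, w_k, w_v = (rng.normal(size=(d, d)) for _ in range(3))
bias_table = rng.normal(size=8)
memory = np.zeros((0, d))
for segment in rng.normal(size=(3, 2, d)):
    mask, rel_pos = causal_inputs(len(segment), len(memory))
    out = segment_attention(segment, memory, mask, rel_pos,
                            bias_table, w_q, w_k, w_v)
    memory = update_memory(memory, segment, max_mem=3)
print(out.shape, memory.shape)  # (2, 4) (3, 4)
```

The memory caches the states that were fed into the layer, so the next segment can attend to them without recomputation. In a trained model those cached states would typically be excluded from gradient computation.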

3.3. Properties of SET

- Recursive Composition with SET.

- Context-Modulated Composition in SET.

4. Experiments

- Dataset.

- Experimental Details.

4.1. Language Modeling Perplexity

Experimental Setup

Discussion

4.2. Syntactic Generalization

Experimental Setup

Discussion

5. Conclusions

5.1. Future Work

Footnote 1: This property, a key advantage of Transformer-XL, is the reason we chose Transformer-XL as the foundational architecture for SET.

References

- Devlin, J.; Chang, M.W.; Lee, K.; Toutanova, K. BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding. Proc. of NAACL, 2019.

- Radford, A.; Wu, J.; Child, R.; Luan, D.; Amodei, D.; Sutskever, I. Language Models are Unsupervised Multitask Learners, 2019.

- Wu, S.; Fei, H.; Qu, L.; Ji, W.; Chua, T.S. NExT-GPT: Any-to-Any Multimodal LLM. CoRR 2023, abs/2309.05519.

- Brown, T.; Mann, B.; Ryder, N.; Subbiah, M.; Kaplan, J.; Dhariwal, P.; Neelakantan, A.; Shyam, P.; Sastry, G.; Askell, A.; Agarwal, S.; Herbert-Voss, A.; Krueger, G.; Henighan, T.; Child, R.; Ramesh, A.; Ziegler, D.; Wu, J.; Winter, C.; Hesse, C.; Chen, M.; Sigler, E.; Litwin, M.; Gray, S.; Chess, B.; Clark, J.; Berner, C.; McCandlish, S.; Radford, A.; Sutskever, I.; Amodei, D. Language Models are Few-Shot Learners. Advances in Neural Information Processing Systems; Larochelle, H.; Ranzato, M.; Hadsell, R.; Balcan, M.F.; Lin, H., Eds. Curran Associates, Inc., 2020, Vol. 33, pp. 1877–1901.

- Fei, H.; Ren, Y.; Ji, D. Retrofitting Structure-aware Transformer Language Model for End Tasks. Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing, 2020, pp. 2151–2161.

- Peters, M.E.; Neumann, M.; Iyyer, M.; Gardner, M.; Clark, C.; Lee, K.; Zettlemoyer, L. Deep Contextualized Word Representations. Proc. of NAACL, 2018.

- Chomsky, N. Syntactic Structures; Mouton: The Hague/Paris, 1957.

- Li, J.; Xu, K.; Li, F.; Fei, H.; Ren, Y.; Ji, D. MRN: A Locally and Globally Mention-Based Reasoning Network for Document-Level Relation Extraction. Findings of the Association for Computational Linguistics: ACL-IJCNLP 2021, 2021, pp. 1359–1370.

- Fei, H.; Wu, S.; Ren, Y.; Zhang, M. Matching Structure for Dual Learning. Proceedings of the International Conference on Machine Learning, ICML, 2022, pp. 6373–6391.

- Dyer, C.; Kuncoro, A.; Ballesteros, M.; Smith, N.A. Recurrent Neural Network Grammars. Proceedings of the 2016 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies; Association for Computational Linguistics: San Diego, California, 2016; pp. 199–209.

- Qian, P.; Naseem, T.; Levy, R.; Fernandez Astudillo, R. Structural Guidance for Transformer Language Models. Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing (Volume 1: Long Papers); Association for Computational Linguistics: Online, 2021; pp. 3735–3745.

- Kuncoro, A.; Ballesteros, M.; Kong, L.; Dyer, C.; Neubig, G.; Smith, N.A. What Do Recurrent Neural Network Grammars Learn About Syntax? Proceedings of the 15th Conference of the European Chapter of the Association for Computational Linguistics: Volume 1, Long Papers; Association for Computational Linguistics: Valencia, Spain, 2017; pp. 1249–1258.

- Fei, H.; Ren, Y.; Ji, D. Boundaries and edges rethinking: An end-to-end neural model for overlapping entity relation extraction. Information Processing & Management 2020, 57, 102311.

- Li, J.; Fei, H.; Liu, J.; Wu, S.; Zhang, M.; Teng, C.; Ji, D.; Li, F. Unified Named Entity Recognition as Word-Word Relation Classification. Proceedings of the AAAI Conference on Artificial Intelligence, 2022, pp. 10965–10973.

- Noji, H.; Oseki, Y. Effective Batching for Recurrent Neural Network Grammars. Findings of the ACL-IJCNLP, 2021.

- Yogatama, D.; Miao, Y.; Melis, G.; Ling, W.; Kuncoro, A.; Dyer, C.; Blunsom, P. Memory Architectures in Recurrent Neural Network Language Models. Proc. of ICLR, 2018.

- Chung, J.; Ahn, S.; Bengio, Y. Hierarchical Multiscale Recurrent Neural Networks. Proc. of ICLR, 2017.

- Shen, Y.; Tan, S.; Sordoni, A.; Courville, A. Ordered Neurons: Integrating Tree Structures into Recurrent Neural Networks. International Conference on Learning Representations, 2019.

- Wu, S.; Fei, H.; Li, F.; Zhang, M.; Liu, Y.; Teng, C.; Ji, D. Mastering the Explicit Opinion-Role Interaction: Syntax-Aided Neural Transition System for Unified Opinion Role Labeling. Proceedings of the Thirty-Sixth AAAI Conference on Artificial Intelligence, 2022, pp. 11513–11521.

- Shi, W.; Li, F.; Li, J.; Fei, H.; Ji, D. Effective Token Graph Modeling using a Novel Labeling Strategy for Structured Sentiment Analysis. Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), 2022, pp. 4232–4241.

- Fei, H.; Zhang, Y.; Ren, Y.; Ji, D. Latent Emotion Memory for Multi-Label Emotion Classification. Proceedings of the AAAI Conference on Artificial Intelligence, 2020, pp. 7692–7699.

- Wang, F.; Li, F.; Fei, H.; Li, J.; Wu, S.; Su, F.; Shi, W.; Ji, D.; Cai, B. Entity-centered Cross-document Relation Extraction. Proceedings of the 2022 Conference on Empirical Methods in Natural Language Processing, 2022, pp. 9871–9881.

- Manning, C.D.; Clark, K.; Hewitt, J.; Khandelwal, U.; Levy, O. Emergent linguistic structure in artificial neural networks trained by self-supervision. Proceedings of the National Academy of Sciences 2020, 117.

- Ettinger, A. What BERT Is Not: Lessons from a New Suite of Psycholinguistic Diagnostics for Language Models. Transactions of the Association for Computational Linguistics 2020.

- Wang, W.; Bi, B.; Yan, M.; Wu, C.; Bao, Z.; Peng, L.; Si, L. StructBERT: Incorporating Language Structures into Pre-training for Deep Language Understanding. Proc. of ICLR, 2020.

- Fei, H.; Wu, S.; Ren, Y.; Li, F.; Ji, D. Better Combine Them Together! Integrating Syntactic Constituency and Dependency Representations for Semantic Role Labeling. Findings of the Association for Computational Linguistics: ACL/IJCNLP 2021, 2021, pp. 549–559.

- Sundararaman, D.; Subramanian, V.; Wang, G.; Si, S.; Shen, D.; Wang, D.; Carin, L. Syntax-Infused Transformer and BERT models for Machine Translation and Natural Language Understanding. arXiv preprint arXiv:1911.06156v1, 2019.

- Kuncoro, A.; Kong, L.; Fried, D.; Yogatama, D.; Rimell, L.; Dyer, C.; Blunsom, P. Syntactic Structure Distillation Pretraining for Bidirectional Encoders. Transactions of the Association for Computational Linguistics 2020, 8, 776–794. https://direct.mit.edu/tacl/article-pdf/doi/10.1162/tacl_a_00345/1923888/tacl_a_00345.pdf

- Sachan, D.; Zhang, Y.; Qi, P.; Hamilton, W.L. Do Syntax Trees Help Pre-trained Transformers Extract Information? Proc. of EACL, 2021.

- Bai, J.; Wang, Y.; Chen, Y.; Yang, Y.; Bai, J.; Yu, J.; Tong, Y. Syntax-BERT: Improving Pre-trained Transformers with Syntax Trees. Proceedings of the 16th Conference of the European Chapter of the Association for Computational Linguistics: Main Volume; Association for Computational Linguistics: Online, 2021; pp. 3011–3020.

- Warstadt, A.; Parrish, A.; Liu, H.; Mohananey, A.; Peng, W.; Wang, S.F.; Bowman, S.R. BLiMP: The Benchmark of Linguistic Minimal Pairs for English. TACL 2020.

- Pruksachatkun, Y.; Phang, J.; Liu, H.; Htut, P.M.; Zhang, X.; Pang, R.Y.; Vania, C.; Kann, K.; Bowman, S.R. Intermediate-Task Transfer Learning with Pretrained Language Models: When and Why Does It Work? Proc. of ACL, 2020.

- Jurafsky, D.; Wooters, C.; Segal, J.; Stolcke, A.; Fosler, E.; Tajchman, G.N.; Morgan, N. Using a stochastic context-free grammar as a language model for speech recognition. Proc. of ICASSP, 1995.

- Chelba, C.; Jelinek, F. Structured Language Modeling. Computer Speech & Language 2000, 14, 283–332.

- Roark, B. Probabilistic Top-Down Parsing and Language Modeling. Computational Linguistics 2001, 27, 249–276.

- Henderson, J. Discriminative Training of a Neural Network Statistical Parser. Proceedings of the 42nd Annual Meeting of the Association for Computational Linguistics (ACL-04), 2004; pp. 95–102.

- Mirowski, P.; Vlachos, A. Dependency Recurrent Neural Language Models for Sentence Completion. Proc. of ACL-IJCNLP, 2015.

- Choe, D.K.; Charniak, E. Parsing as Language Modeling. Proceedings of the 2016 Conference on Empirical Methods in Natural Language Processing; Association for Computational Linguistics: Austin, Texas, 2016; pp. 2331–2336.

- Kim, Y.; Rush, A.; Yu, L.; Kuncoro, A.; Dyer, C.; Melis, G. Unsupervised Recurrent Neural Network Grammars. Proc. of NAACL, 2019.

- Fei, H.; Wu, S.; Li, J.; Li, B.; Li, F.; Qin, L.; Zhang, M.; Zhang, M.; Chua, T.S. LasUIE: Unifying Information Extraction with Latent Adaptive Structure-aware Generative Language Model. Proceedings of the Advances in Neural Information Processing Systems, NeurIPS 2022, 2022, pp. 15460–15475.

- Fei, H.; Ren, Y.; Zhang, Y.; Ji, D.; Liang, X. Enriching contextualized language model from knowledge graph for biomedical information extraction. Briefings in Bioinformatics 2021, 22.

- Strubell, E.; Verga, P.; Andor, D.; Weiss, D.; McCallum, A. Linguistically-Informed Self-Attention for Semantic Role Labeling. Proceedings of the 2018 Conference on Empirical Methods in Natural Language Processing; Association for Computational Linguistics: Brussels, Belgium, 2018; pp. 5027–5038.

- Wang, Y.; Lee, H.Y.; Chen, Y.N. Tree Transformer: Integrating Tree Structures into Self-Attention. Proc. of EMNLP-IJCNLP, 2019.

- Peng, H.; Schwartz, R.; Smith, N.A. PaLM: A Hybrid Parser and Language Model. Proc. of EMNLP-IJCNLP, 2019.

- Fei, H.; Liu, Q.; Zhang, M.; Zhang, M.; Chua, T.S. Scene Graph as Pivoting: Inference-time Image-free Unsupervised Multimodal Machine Translation with Visual Scene Hallucination. Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), 2023, pp. 5980–5994.

- Zhang, Z.; Wu, Y.; Zhou, J.; Duan, S.; Zhao, H.; Wang, R. SG-Net: Syntax-Guided Machine Reading Comprehension. The Thirty-Fourth AAAI Conference on Artificial Intelligence, AAAI 2020, The Thirty-Second Innovative Applications of Artificial Intelligence Conference, IAAI 2020, The Tenth AAAI Symposium on Educational Advances in Artificial Intelligence, EAAI 2020, New York, NY, USA, February 7-12, 2020. AAAI Press, 2020, pp. 9636–9643.

- Nguyen, X.; Joty, S.R.; Hoi, S.C.H.; Socher, R. Tree-Structured Attention with Hierarchical Accumulation. 8th International Conference on Learning Representations, ICLR 2020, Addis Ababa, Ethiopia, April 26-30, 2020. OpenReview.net, 2020.

- Astudillo, R.F.; Ballesteros, M.; Naseem, T.; Blodgett, A.; Florian, R. Transition-based Parsing with Stack-Transformers. Findings of EMNLP, 2020.

- Fei, H.; Zhang, M.; Ji, D. Cross-Lingual Semantic Role Labeling with High-Quality Translated Training Corpus. Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, 2020, pp. 7014–7026.

- Wu, S.; Fei, H.; Ren, Y.; Ji, D.; Li, J. Learn from Syntax: Improving Pair-wise Aspect and Opinion Terms Extraction with Rich Syntactic Knowledge. Proceedings of the Thirtieth International Joint Conference on Artificial Intelligence, 2021, pp. 3957–3963.

- Wilcox, E.; Qian, P.; Futrell, R.; Ballesteros, M.; Levy, R. Structural Supervision Improves Learning of Non-Local Grammatical Dependencies. Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long and Short Papers); Association for Computational Linguistics: Minneapolis, Minnesota, 2019; pp. 3302–3312.

- Futrell, R.; Wilcox, E.; Morita, T.; Qian, P.; Ballesteros, M.; Levy, R. Neural language models as psycholinguistic subjects: Representations of syntactic state. Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long and Short Papers); Association for Computational Linguistics: Minneapolis, Minnesota, 2019; pp. 32–42.

- Vinyals, O.; Kaiser, L.; Koo, T.; Petrov, S.; Sutskever, I.; Hinton, G. Grammar as a Foreign Language. Advances in Neural Information Processing Systems; Cortes, C.; Lawrence, N.; Lee, D.; Sugiyama, M.; Garnett, R., Eds. Curran Associates, Inc., 2015, Vol. 28.

- Dai, Z.; Yang, Z.; Yang, Y.; Carbonell, J.; Le, Q.; Salakhutdinov, R. Transformer-XL: Attentive Language Models beyond a Fixed-Length Context. Proc. of ACL, 2019.

- Hahn, M. Theoretical Limitations of Self-Attention in Neural Sequence Models. Transactions of the Association for Computational Linguistics 2020, 8, 156–171. https://direct.mit.edu/tacl/article-pdf/doi/10.1162/tacl_a_00306/1923102/tacl_a_00306.pdf

- Bowman, S.R.; Gauthier, J.; Rastogi, A.; Gupta, R.; Manning, C.D.; Potts, C. A Fast Unified Model for Parsing and Sentence Understanding. Proceedings of the 54th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers); Association for Computational Linguistics: Berlin, Germany, 2016; pp. 1466–1477.

- Marcus, M.P.; Santorini, B.; Marcinkiewicz, M.A. Building a Large Annotated Corpus of English: The Penn Treebank. Computational Linguistics 1993, 19, 313–330.

- Charniak, E.; Blaheta, D.; Ge, N.; Hall, K.; Hale, J.; Johnson, M. BLLIP 1987–89 WSJ Corpus Release 1, LDC2000T43. Linguistic Data Consortium 2000, 36.

- Hu, J.; Gauthier, J.; Qian, P.; Wilcox, E.; Levy, R. A Systematic Assessment of Syntactic Generalization in Neural Language Models. Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics; Association for Computational Linguistics: Online, 2020; pp. 1725–1744.

- Petrov, S.; Klein, D. Improved Inference for Unlexicalized Parsing. Human Language Technologies 2007: The Conference of the North American Chapter of the Association for Computational Linguistics; Proceedings of the Main Conference; Association for Computational Linguistics: Rochester, New York, 2007; pp. 404–411.

- Kudo, T.; Richardson, J. SentencePiece: A simple and language independent subword tokenizer and detokenizer for Neural Text Processing. Proceedings of the 2018 Conference on Empirical Methods in Natural Language Processing: System Demonstrations; Association for Computational Linguistics: Brussels, Belgium, 2018; pp. 66–71.

- Rae, J.W.; Borgeaud, S.; Cai, T.; Millican, K.; Hoffmann, J.; Song, H.F.; Aslanides, J.; Henderson, S.; Ring, R.; Young, S.; Rutherford, E.; Hennigan, T.; Menick, J.; Cassirer, A.; Powell, R.; van den Driessche, G.; Hendricks, L.A.; Rauh, M.; Huang, P.; Glaese, A.; Welbl, J.; Dathathri, S.; Huang, S.; Uesato, J.; Mellor, J.; Higgins, I.; Creswell, A.; McAleese, N.; Wu, A.; Elsen, E.; Jayakumar, S.M.; Buchatskaya, E.; Budden, D.; Sutherland, E.; Simonyan, K.; Paganini, M.; Sifre, L.; Martens, L.; Li, X.L.; Kuncoro, A.; Nematzadeh, A.; Gribovskaya, E.; Donato, D.; Lazaridou, A.; Mensch, A.; Lespiau, J.; Tsimpoukelli, M.; Grigorev, N.; Fritz, D.; Sottiaux, T.; Pajarskas, M.; Pohlen, T.; Gong, Z.; Toyama, D.; de Masson d’Autume, C.; Li, Y.; Terzi, T.; Mikulik, V.; Babuschkin, I.; Clark, A.; de Las Casas, D.; Guy, A.; Jones, C.; Bradbury, J.; Johnson, M.; Hechtman, B.A.; Weidinger, L.; Gabriel, I.; Isaac, W.S.; Lockhart, E.; Osindero, S.; Rimell, L.; Dyer, C.; Vinyals, O.; Ayoub, K.; Stanway, J.; Bennett, L.; Hassabis, D.; Kavukcuoglu, K.; Irving, G. Scaling Language Models: Methods, Analysis & Insights from Training Gopher. CoRR 2021, abs/2112.11446v2.

- Hoffmann, J.; Borgeaud, S.; Mensch, A.; Buchatskaya, E.; Cai, T.; Rutherford, E.; Casas, D.d.L.; Hendricks, L.A.; Welbl, J.; Clark, A.; Hennigan, T.; Noland, E.; Millican, K.; Driessche, G.v.d.; Damoc, B.; Guy, A.; Osindero, S.; Simonyan, K.; Elsen, E.; Rae, J.W.; Vinyals, O.; Sifre, L. Training Compute-Optimal Large Language Models. CoRR 2022, abs/2203.15556v1.

| Model | Perplexity (↓): PTB | Perplexity (↓): BLLIP sent. | Perplexity (↓): BLLIP doc. | SG (↑): BLLIP sent. | (↑): PTB |
|---|---|---|---|---|---|
| SET | 61.8 ± 0.2 | 30.3 ± 0.5 | 26.3 ± 0.1 | 82.5 ± 1.6 | 93.7 ± 0.1 |
| TXL (trees) | 61.2 ± 0.3 | 29.8 ± 0.4 | 22.1 ± 0.1 | 80.2 ± 1.6 | 93.6 ± 0.1 |
| TXL (terminals) | 62.6 ± 0.2 | 31.2 ± 0.4 | 23.1 ± 0.1 | 69.5 ± 2.1 | n/a |
| RNNG [10] | 105.2 | n/a | n/a | n/a | 93.3 |
| PLM-Mask [11] | n/a | 49.1 | n/a | 74.8 | n/a |
| Batched RNNG [15] | n/a | 62.9 | n/a | 81.4 | n/a |
| GPT-2 [2] | n/a | n/a | n/a | 78.4 | n/a |
| Gopher [62] | n/a | n/a | n/a | 79.5 | n/a |
| Chinchilla [63] | n/a | n/a | n/a | 79.7 | n/a |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).