Submitted: 22 November 2023

Posted: 23 November 2023


Abstract

Keywords:

1. Introduction

2. Related Works

2.1. Visual Quality of Street Space

2.2. Multimodal Large Language Models

3. Data and Method

3.1. Data

3.1.1. Data Collection

3.1.2. Estimation Settings

3.2. Overview of Our Pipeline

3.3. Knowledge Distillation From GPT-4

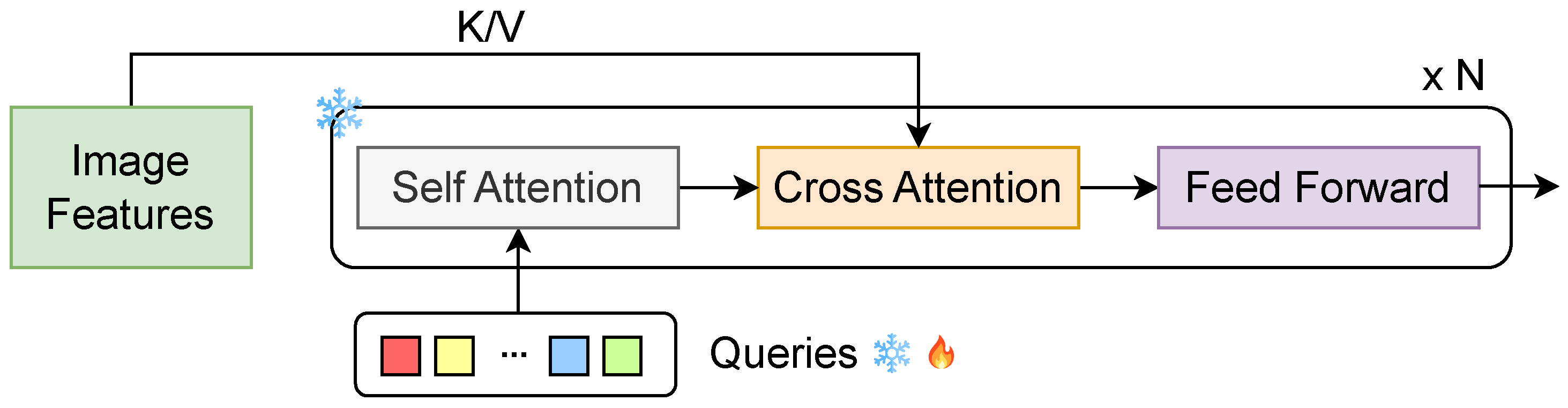

3.4. SQ-GPT

4. Results

4.1. Experimental Settings

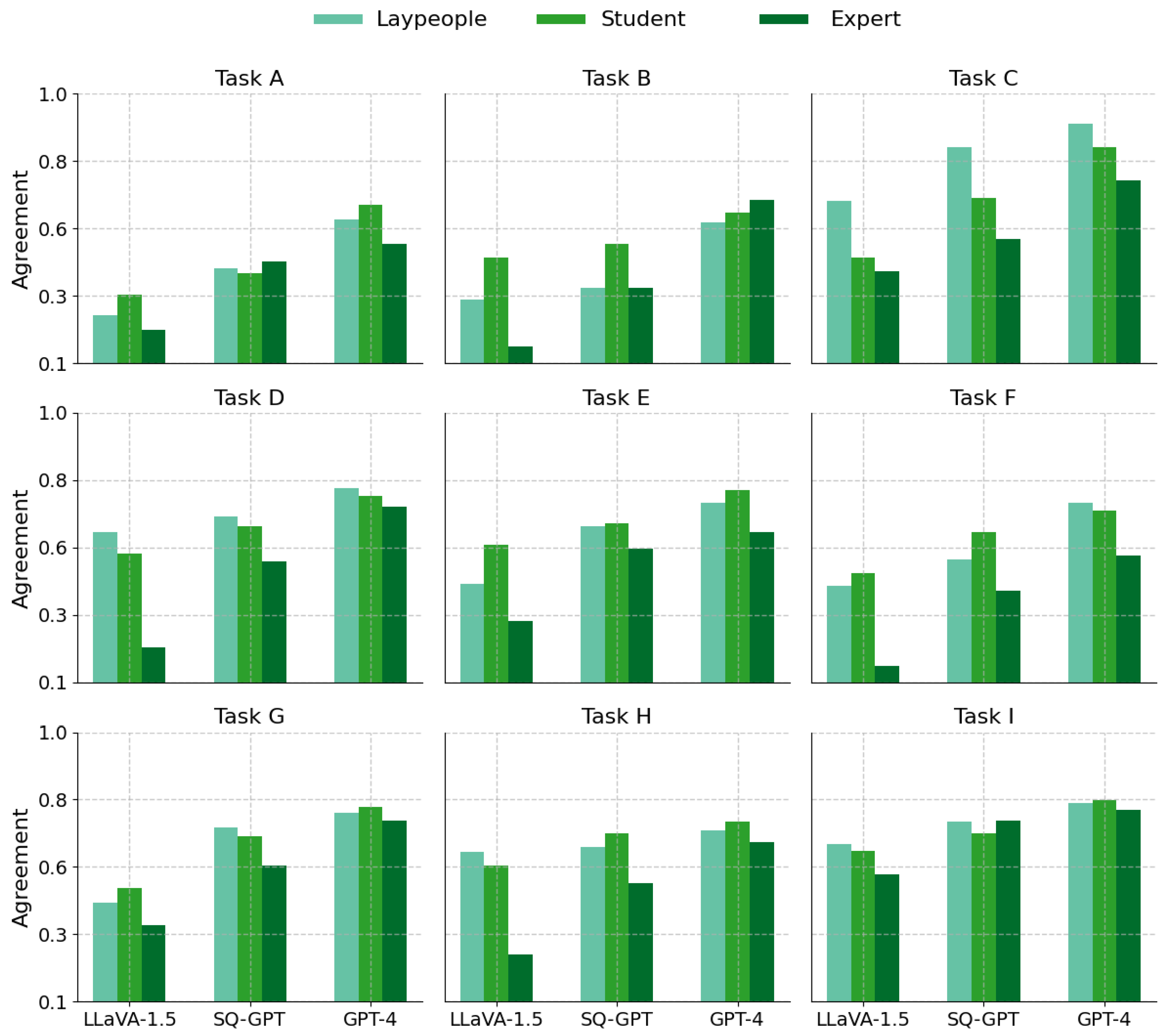

4.2. Answer Quality Analysis

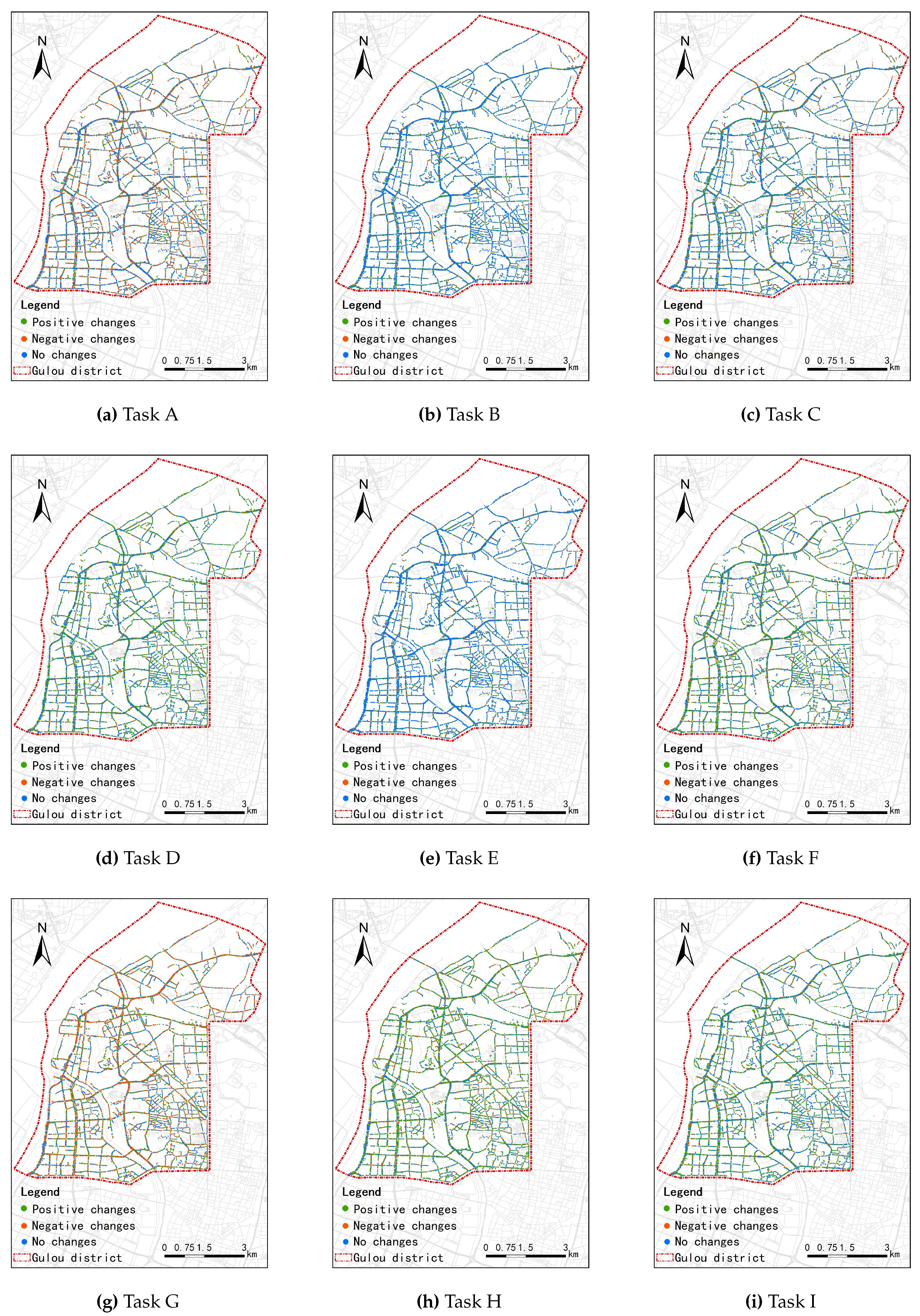

4.3. Visualization of VQoSS Estimation

5. Discussion

6. Conclusion

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Visual Input Example, Question for "Facade Color" | |
|---|---|
| Question | The first four images are street view images taken from four different directions of the street. The last four images are taken from the same location and the same four directions but at a different period. Analyze the facade color changes of different periods and finally you must give me a choice from: A: Positive, B: Negative, C: No Changes. Make your statement concise. |
| Answer | Based on the provided images, we can observe the following changes in the building facades’ color over the two different time periods. Initial Period (First Four Images): The building facades are predominantly neutral-toned with a mix of whites, beiges, and light gray hues. The overall color palette is muted and uniform, with little variation in color saturation or brightness. The buildings have a clean and modern appearance, with the color of the construction materials clearly visible. Later Period (Last Four Images): The facades exhibit a similar color scheme with no significant changes in the hues. However, there may be slight variations in brightness due to differences in the time of day or weather conditions when the photos were taken. There is no evidence of major renovation or repainting that has altered the color palette of the buildings significantly. Considering these observations, the most accurate response would be: C: No Changes. |

| Visual Input Comparison Examples, Question for "Lane Refinement" | |
|---|---|
| Question | {D} + "Describe changes in road refinement between two periods." + {C} |
| Answer GPT-4 | After reviewing the images from two different periods, it is noticeable that there has been some wear in the road markings and a general decline in lane visibility in the later images. The roads show signs of heavier use, and the increased clutter along the curbside could suggest changes in traffic or parking patterns that may not be as orderly as before. These factors contribute to a slight negative impact on lane refinement, indicating a decrease in the clarity and maintenance of traffic lanes over time. Hence, the assessment concludes with a negative change in lane refinement. Therefore, my feeling towards these changes would be option B: Negative. |
| Answer SQ-GPT | It’s evident that lane refinement has seen a decline over time. Initially, clear lane markings in the first period have become less discernible in the second, likely as a result of wear and inadequate maintenance. This degradation in lane visibility can have serious implications, potentially compromising lane divisions’ clarity and introducing confusion among drivers. Despite a consistent presence of traffic signs, the overall impression hints at a deterioration in the road’s organization. Taking these factors into account, it becomes increasingly apparent that the changes in lane refinement lean towards a negative impact, aligning with Option B: Negative. |
| Answer LLaVA-1.5 | The changes in lane refinement between the two periods are evident in the increased number of cars on the street, which suggests that the area is more congested. I feel that the changes in lane refinement are negative, as the increased number of cars on the street indicates a higher level of traffic congestion. This can lead to longer travel times, increased air pollution, and a higher risk of accidents. The increased traffic can also be a sign of urbanization and economic growth, which is a positive aspect of the city’s development. However, the negative impacts on the environment and the quality of life for the residents must be considered. The final option is B. |

| Visual Input Comparison Examples, Question for "Greenery Improvement" | |
|---|---|
| Question | {D} + "Describe changes in greenery improvement between two periods." + {C} |
| Answer GPT-4 | Upon examining the eight street view images you provided, they depict the same location from four cardinal directions, captured at two different time periods. The comparison shows visible differences in the greenery between the two periods. Initially, the area has smaller trees and less dense foliage. In the later images, the trees appear more mature and fuller, indicating growth and increased leaf density, which contributes to a lusher streetscape. Additionally, the vegetation in the median strips and along the sidewalks seems to be better maintained, suggesting an enhancement in the overall greenery management. This qualitative assessment of the vegetative growth and maintenance suggests a positive change in the greenery of the area. Therefore, based on the visual evidence from the images provided, the choice would be: A: Positive. |
| Answer SQ-GPT | From the images you’ve provided, it’s evident that there have been changes in the greenery between the two periods. In the earlier images, the trees appear less mature and the foliage is sparser, while in the latter images, the trees show more growth, with denser canopies, indicating positive development in the greenery. Additionally, the bushes and the underplanting in the median strips and along the sidewalks seem to be better maintained and more abundant in the later images. This suggests a concerted effort to enhance the urban green spaces. These improvements contribute to a healthier, more aesthetically pleasing environment and potentially better air quality for the area. Thus, it is a positive change (Option A). |
| Answer LLaVA-1.5 | Upon analyzing the images from the two different periods, the following observations can be made: The tree canopies seem consistent in size and density, indicating that the trees are mature and have been maintained. There is no significant removal or reduction of trees or plants. The color and vigor of the greenery appear similar across both periods, suggesting good health. No major landscaping additions or enhancements are visible that would suggest an intentional effort to increase green spaces. Considering these points, it can be concluded that there have been no significant changes in the greenery between the two periods depicted in the images. The urban greenery maintenance appears to be stable. Therefore, the assessment is: C: No Changes. |
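
The `{D}` + task + `{C}` question pattern used in the comparison tables can be sketched in Python as follows. This is a minimal illustration only: the wording assigned to `DESCRIPTION` and `CHOICES` is borrowed from the facade color question and is an assumption about what `{D}` and `{C}` expand to, and `build_prompt` and `extract_choice` are hypothetical helper names, not part of the authors' pipeline.

```python
import re
from typing import Optional

# Assumed expansion of {D}: wording taken from the facade color question.
DESCRIPTION = (
    "The first four images are street view images taken from four different "
    "directions of the street. The last four images are taken from the same "
    "location and the same four directions but at a different period."
)

# Assumed expansion of {C}: wording adapted from the facade color question.
CHOICES = (
    "Finally you must give me a choice from: A: Positive, B: Negative, "
    "C: No Changes. Make your statement concise."
)

LABELS = {"A": "Positive", "B": "Negative", "C": "No Changes"}


def build_prompt(task: str) -> str:
    """Concatenate {D} + task + {C} into a single question string."""
    return f"{DESCRIPTION} {task} {CHOICES}"


def extract_choice(answer: str) -> Optional[str]:
    """Return the last stand-alone A/B/C letter that is directly followed
    by ':', '.' or ')', or None if the answer contains no such letter."""
    matches = re.findall(r"\b([ABC])(?=[:.)])", answer)
    return matches[-1] if matches else None


if __name__ == "__main__":
    prompt = build_prompt(
        "Describe changes in greenery improvement between two periods."
    )
    print(prompt)
    choice = extract_choice("Thus, it is a positive change (Option A).")
    print(choice, LABELS.get(choice))
```

Taking the last match rather than the first is a design choice for this sketch: in the example answers the verdict is stated at the end, after the free-text reasoning.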

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).