Submitted: 17 November 2023

Posted: 20 November 2023


Abstract

Keywords:

1. Introduction

1.1. Model-based Methods

1.2. Data-driven Methods

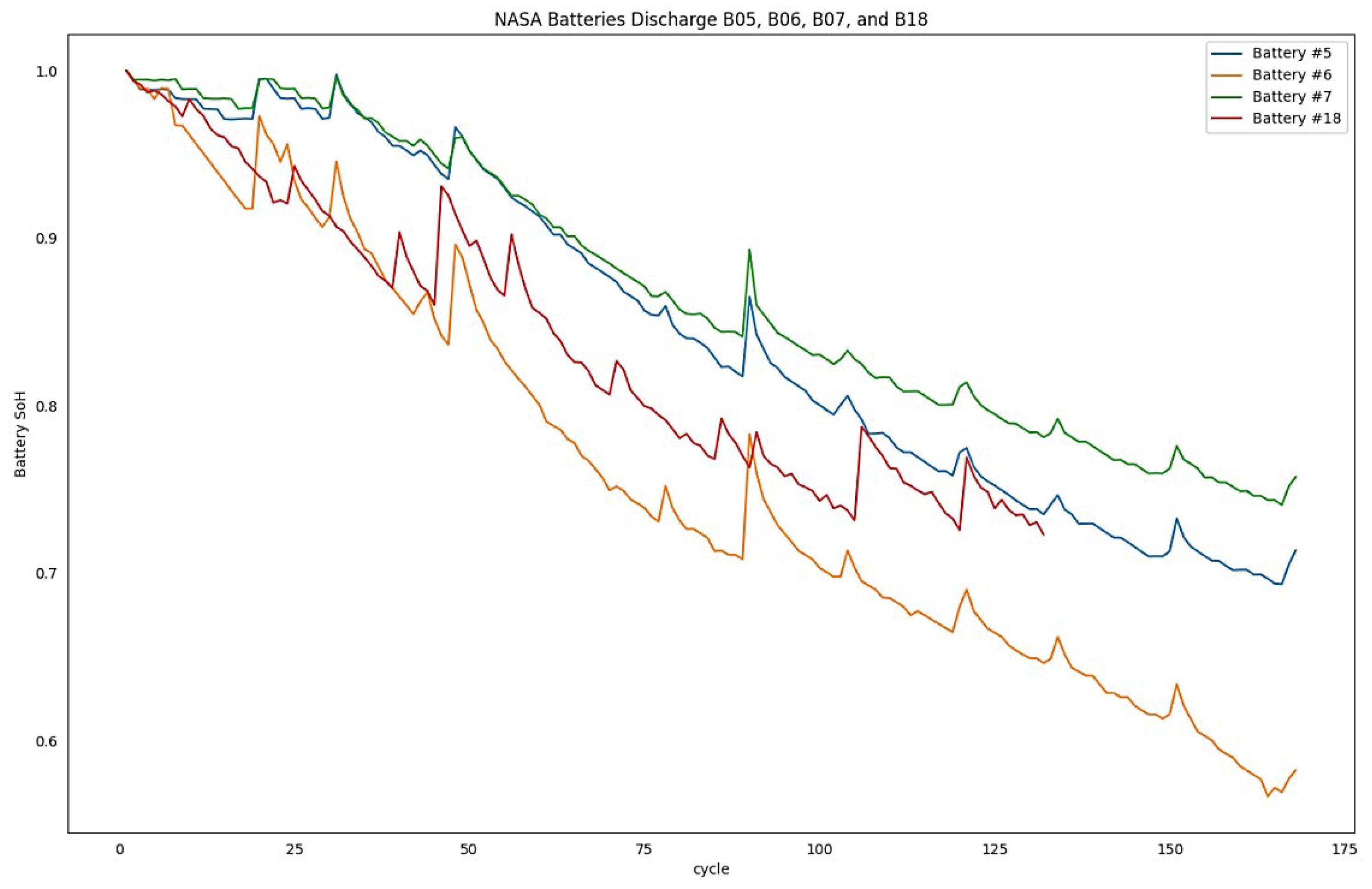

2. Dataset Description

Data Preprocessing

3. Background and Preliminaries

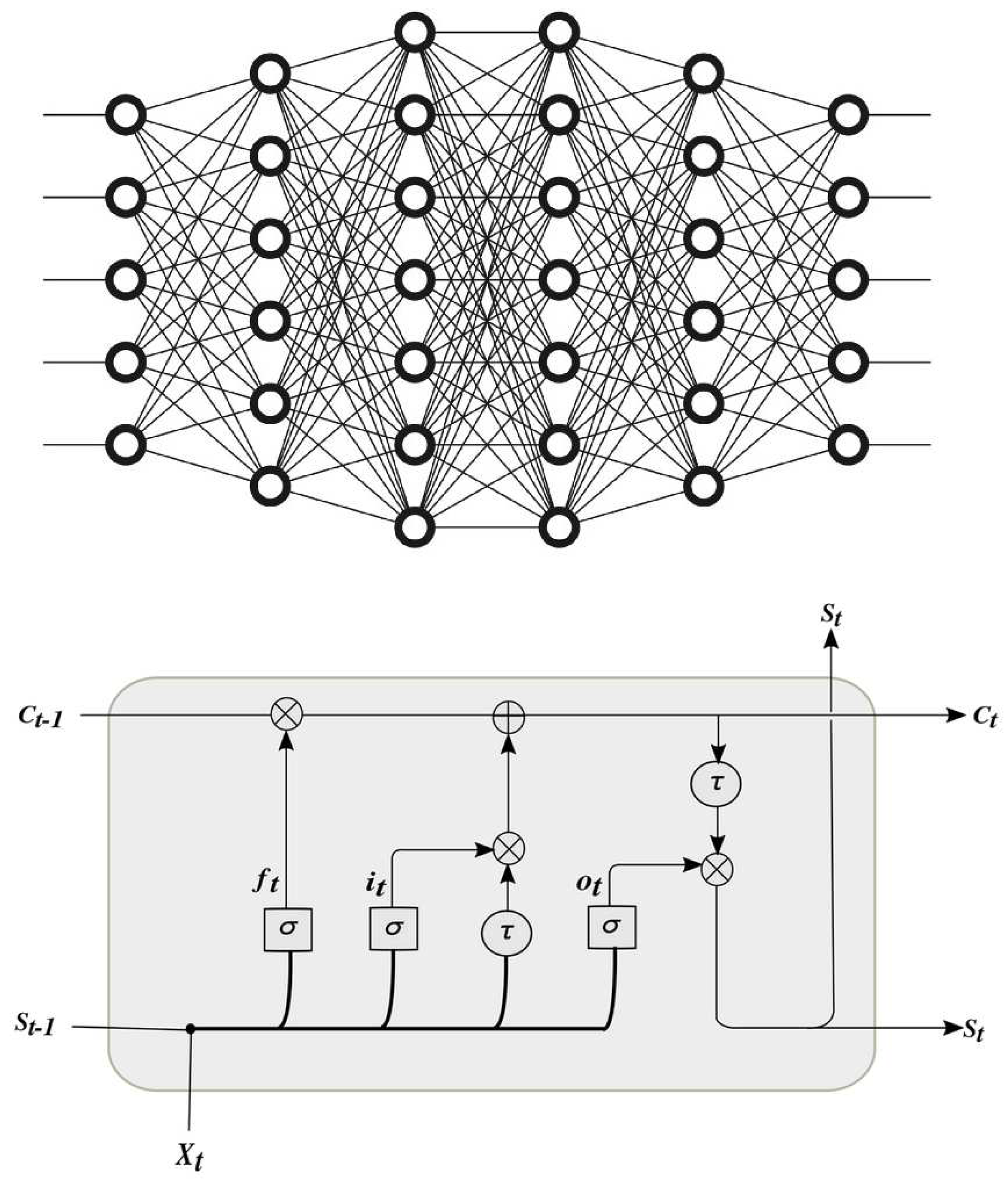

3.1. Feedforward Deep Neural Network

3.2. Long Short-Term Memory (LSTM) Deep Neural Network

3.3. Quantization

3.4. Post-Training Quantization

3.5. Quantization-Aware Training

3.6. Pruning

3.7. Quantization Theories

4. Related Works on Quantization in DLNN

5. Proposed Methodology

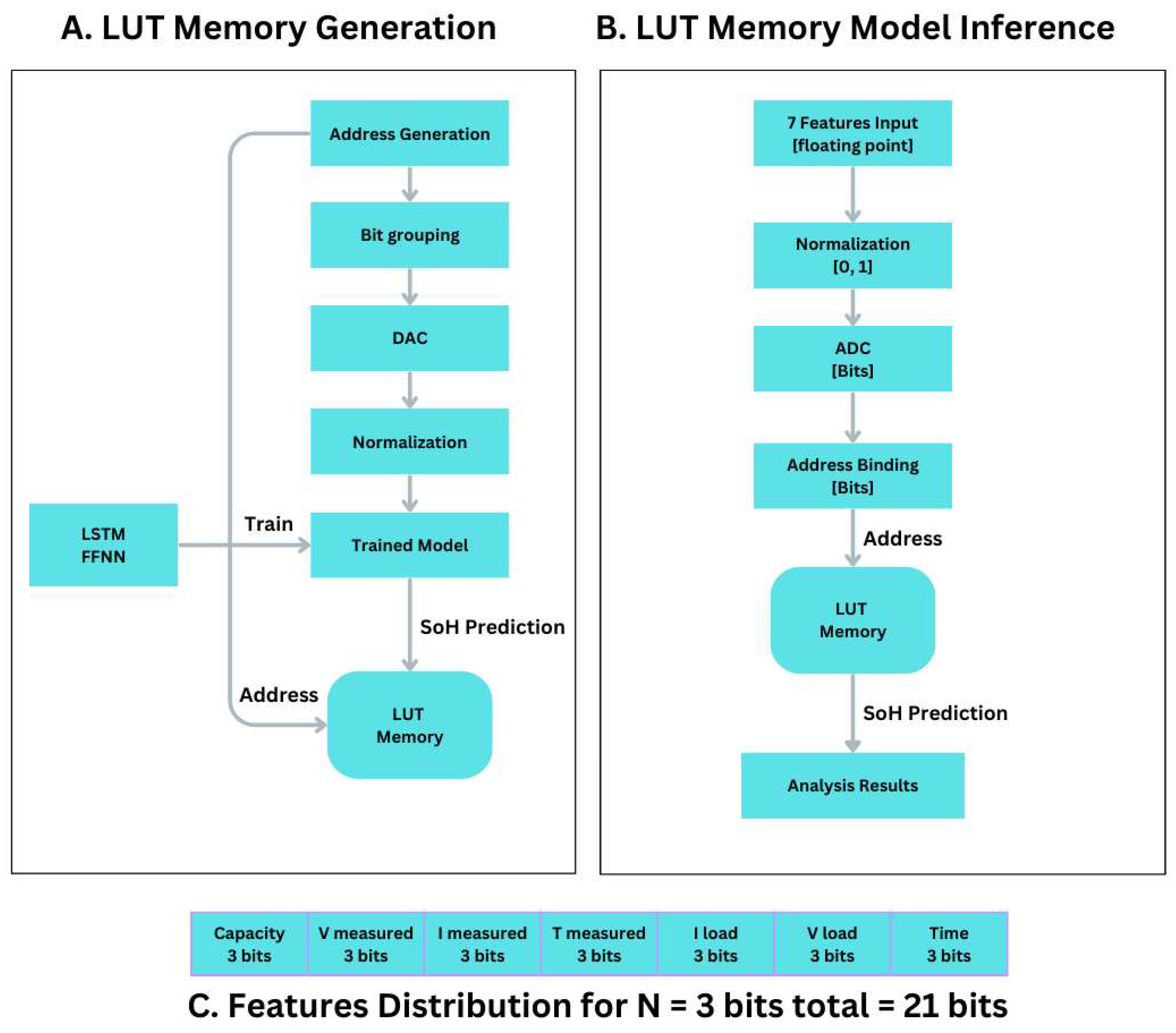

Creating the LUT-Memory

- The address space runs from 0 to $2^{7n}-1$, where $n$ is the number of bits assigned to each of the seven features, so the address is $7n$ bits wide. The tested resolutions are 2, 3, 4, 5, 6, 7, and 8 bits per feature. Table 3 shows the details.

- The address bits are then split into seven groups, one per feature, each $n$ bits wide, which gives a binary code for each feature (see Figure 3).

- Each feature code is normalized as $c_i / 2^n$, where $c_i$ is the integer value of the bits of feature $i$ and $n$ is the number of bits selected for the feature.

- The seven normalized feature values are presented to the trained deep neural network.

- The value inferred by the model is stored in the LUT memory at the current address.

- The next address is then selected and the whole procedure is repeated from the first step until every address has been filled.
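The LUT-filling steps above can be sketched in plain Python as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the model is any callable that maps seven normalized inputs to one output (the `toy_model` used here is a hypothetical stand-in for the trained FFNN or LSTM), and feature 0 is assumed to occupy the most significant $n$ bits of the address.

```python
from typing import Callable, List, Sequence

NUM_FEATURES = 7  # seven input features, as stated in the text


def split_address(address: int, n: int, num_features: int = NUM_FEATURES) -> List[int]:
    """Split a (num_features * n)-bit address into per-feature n-bit codes.

    Assumption: feature 0 occupies the most significant n bits.
    """
    mask = (1 << n) - 1
    return [(address >> ((num_features - 1 - i) * n)) & mask for i in range(num_features)]


def build_lut(model: Callable[[Sequence[float]], float], n: int,
              num_features: int = NUM_FEATURES) -> List[float]:
    """Run one inference per address and store each result in a flat LUT."""
    size = 1 << (num_features * n)  # addresses 0 .. 2**(7n) - 1
    lut = [0.0] * size
    for address in range(size):
        codes = split_address(address, n, num_features)
        features = [c / (1 << n) for c in codes]  # normalize each code as c / 2**n
        lut[address] = model(features)  # store the model output at this address
    return lut


# Hypothetical stand-in for the trained network: mean of the normalized inputs.
def toy_model(features: Sequence[float]) -> float:
    return sum(features) / len(features)


lut = build_lut(toy_model, n=2)
print(len(lut))  # 16384, i.e. 2**14 entries
```

With a real network one would batch the feature vectors rather than call the model once per address. Even so, the loop grows as $2^{7n}$: at $n = 5$ it already covers $2^{35}$ addresses, which is the 32G entry count listed for 5 bits.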

- The process starts from the seven feature values (capacity, ambient temperature, date-time, measured voltage, measured current, measured temperature, load voltage, and load current).

- Each feature value is normalized to the range [0, 1].

- Each normalized value is then quantized to 2, 3, 4, 5, 6, 7, or 8 bits, depending on the chosen configuration.

- Quantization produces a binary code for each feature.

- All feature codes are concatenated into a single address, as shown in Figure 3.
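The encoding steps above (normalize, quantize, concatenate) can be sketched as below. Three choices here are assumptions rather than details given in the text: min-max normalization with per-feature bounds, a quantizer of the form $\lfloor x \cdot 2^n \rfloor$ clamped to $[0, 2^n-1]$, and the first feature being placed in the most significant bits. The function names are hypothetical.

```python
from typing import Sequence


def normalize(value: float, lo: float, hi: float) -> float:
    """Min-max normalization to [0, 1] (assumed normalization scheme)."""
    return (value - lo) / (hi - lo)


def quantize(x: float, n: int) -> int:
    """Map a value in [0, 1] to an n-bit code: floor(x * 2**n), clamped to [0, 2**n - 1]."""
    levels = 1 << n
    return min(max(int(x * levels), 0), levels - 1)


def encode_address(raw: Sequence[float], mins: Sequence[float],
                   maxs: Sequence[float], n: int) -> int:
    """Concatenate the n-bit code of every feature into one LUT address.

    Assumption: the first feature ends up in the most significant bits.
    """
    address = 0
    for value, lo, hi in zip(raw, mins, maxs):
        code = quantize(normalize(value, lo, hi), n)
        address = (address << n) | code  # shift earlier codes left, append this one
    return address


mins = [0.0] * 7
maxs = [1.0] * 7
print(encode_address([1.0, 0, 0, 0, 0, 0, 0.5], mins, maxs, 2))  # 12290 = (3 << 12) | 2
```

The clamp makes a value equal to the maximum (normalized 1.0), or one slightly outside the training range, map to the top or bottom code instead of overflowing into a neighbouring feature's bits.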

6. Performance Evaluation and Metrics

6.1. Performance Evaluation Indicators

6.2. Models Training

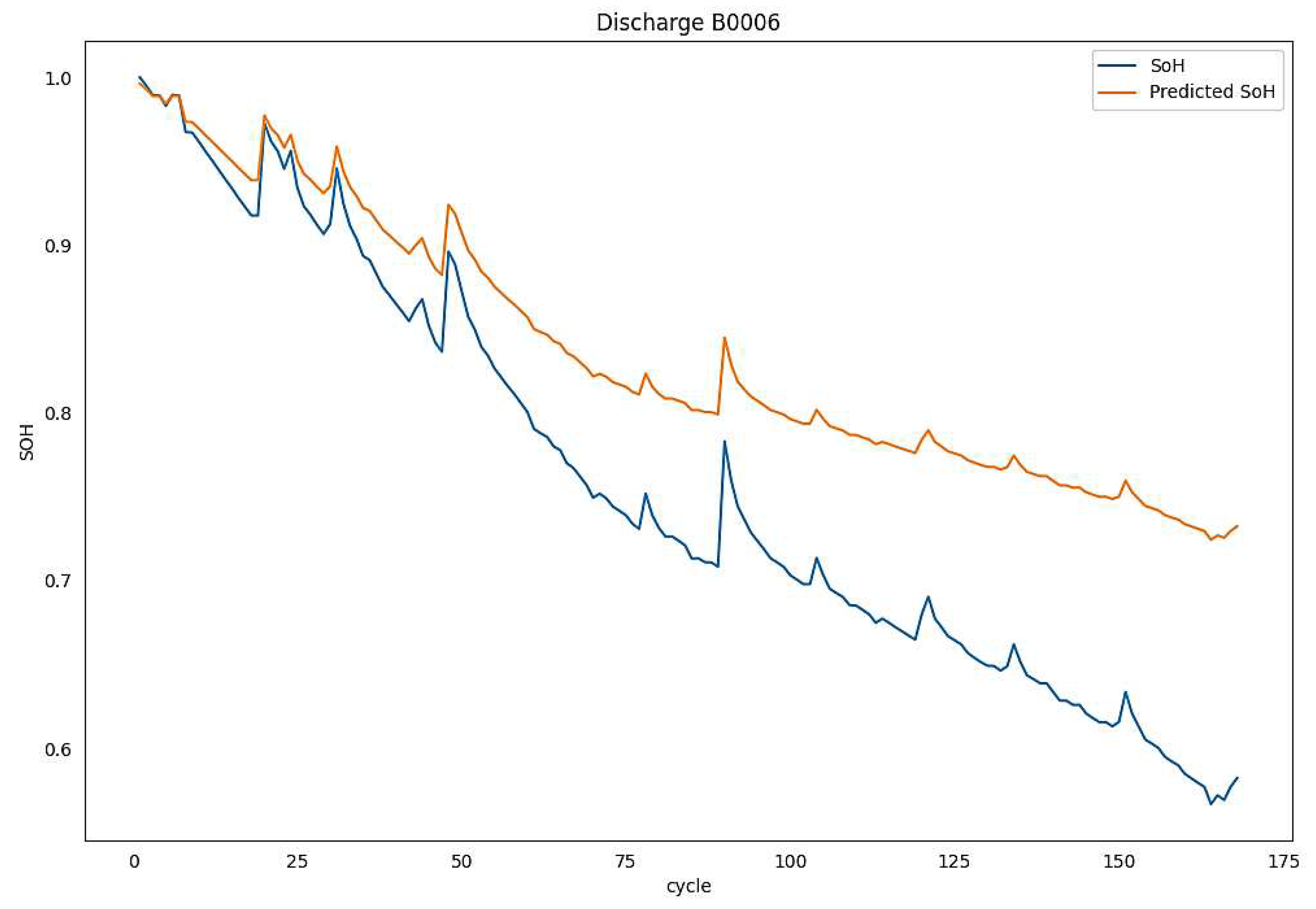

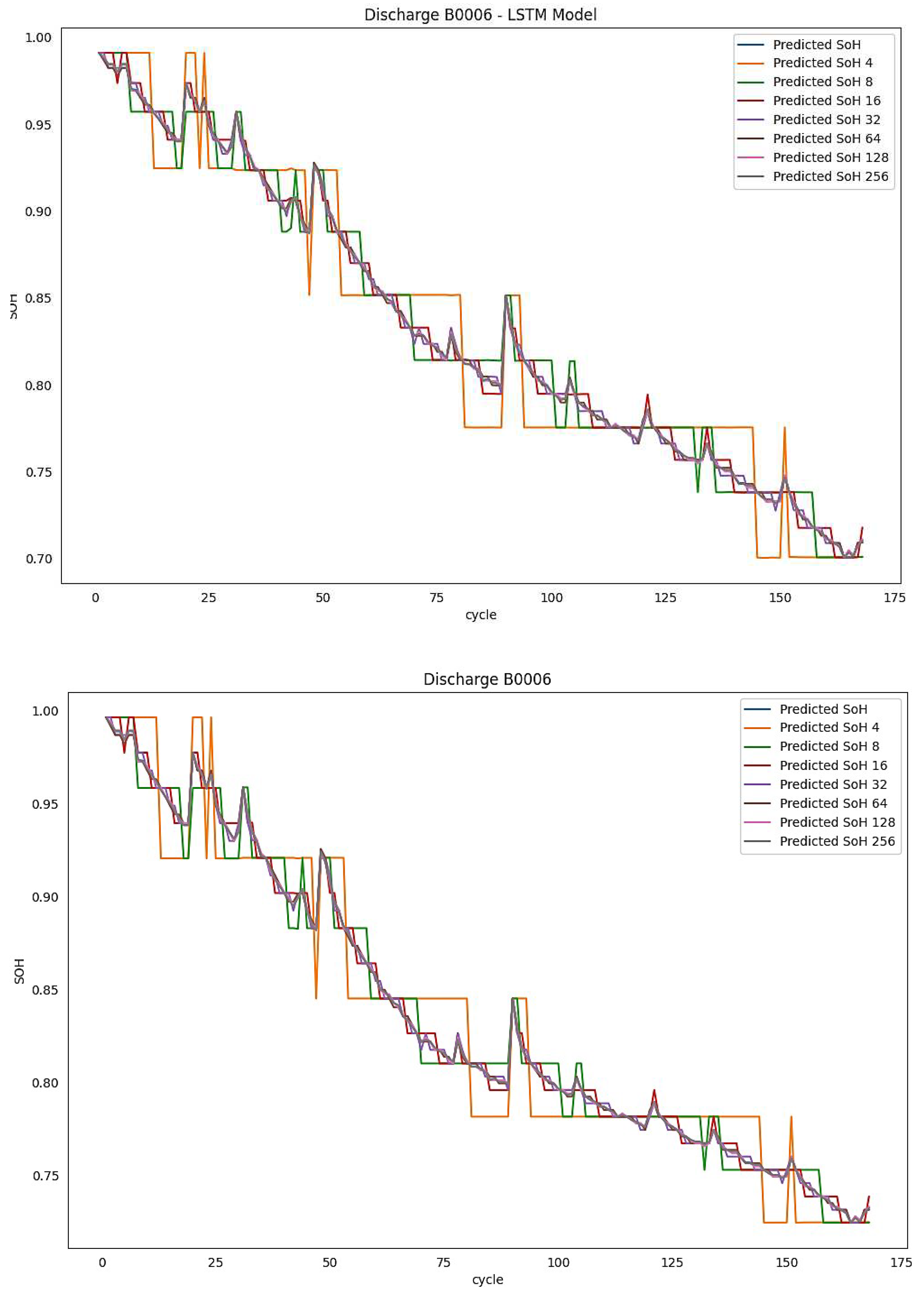

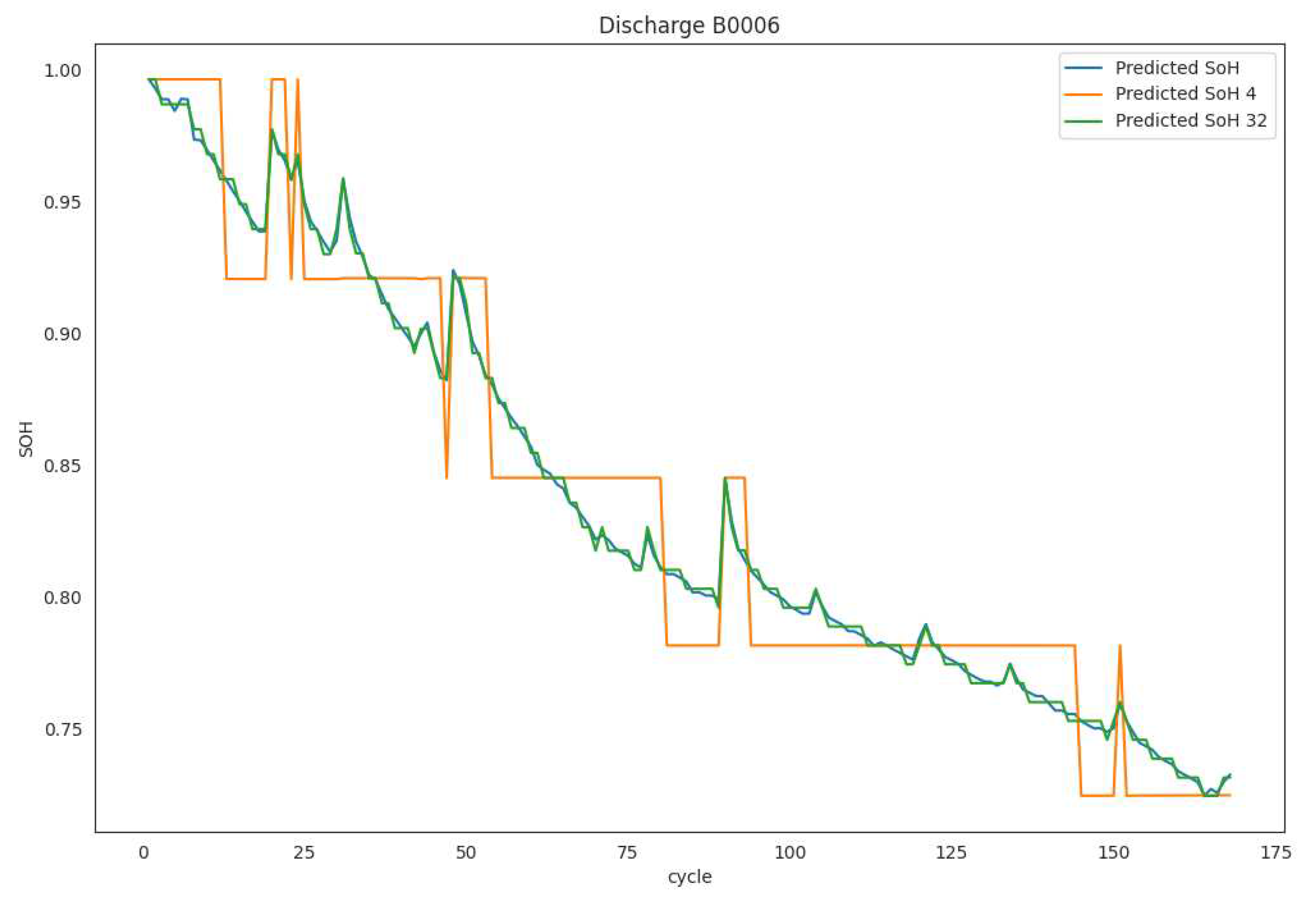

6.3. Experimental Evaluation & Results

7. Discussion

8. Summary


| | Capacity | Vm | Im | Tm | ILoad | VLoad | Time (s) |
|---|---|---|---|---|---|---|---|
| Min | 1.28745 | 2.44567 | -2.02909 | 23.2148 | -1.9984 | 0.0 | 0 |
| Max | 1.85648 | 4.22293 | 0.00749 | 41.4502 | 1.9984 | 4.238 | 3690234 |

Model 1: FFNN

| Layer | Output Shape | Parameters |
|---|---|---|
| Dense | (N, 8) | |
| Dense | (N, 8) | |
| Dense | (N, 8) | |
| Dense | (N, 8) | |
| Dense | (N, 1) | |
| Total | | 217 |

Model 2: LSTM

| Layer | Output Shape | Parameters |
|---|---|---|
| LSTM 1 | (N, 7, 200) | |
| Dropout 1 | (N, 7, 200) | |
| LSTM 2 | (N, 7, 200) | |
| Dropout 2 | (N, 7, 200) | |
| LSTM 3 | (N, 7, 200) | |
| Dropout 3 | (N, 7, 200) | |
| LSTM 4 | (N, 200) | |
| Dropout 4 | (N, 200) | |
| Dense | (N, 1) | |
| Total | | 1.124 M |

| Bits per Feature | Quantization Levels | Address Bits | SQNR (dB) | Memory Size |
|---|---|---|---|---|
| 2 | 4 | 14 | 12.04 | 16K |
| 3 | 8 | 21 | 18.06 | 2M |
| 4 | 16 | 28 | 24.08 | 256M |
| 5 | 32 | 35 | 30.10 | 32G |
| 6 | 64 | 42 | 36.12 | 4T |
| 7 | 128 | 49 | 42.14 | ---- |
| 8 | 256 | 56 | 48.16 | ---- |
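Every column of the bits-per-feature table above follows from $n$ alone: $2^n$ levels, $7n$ address bits, an SQNR of $6.02n$ dB (the usual rounding of $20\log_{10} 2^n$), and $2^{7n}$ memory entries expressed with binary prefixes. The short sketch below, using hypothetical helper names, reproduces those columns; for 7 and 8 bits, which the table leaves as "----", the same formula gives 512T and 64P entries.

```python
def lut_parameters(n: int, num_features: int = 7):
    """Derive one row of the bits-per-feature table for n bits per feature."""
    levels = 2 ** n                  # quantization levels per feature
    address_bits = num_features * n  # width of the concatenated address
    sqnr_db = round(6.02 * n, 2)     # 6.02 dB per bit, the usual approximation of 20*log10(2**n)
    entries = 2 ** address_bits      # one LUT word per address
    return levels, address_bits, sqnr_db, entries


def binary_size(count: int) -> str:
    """Format a power-of-two count with binary prefixes (K = 2**10, M = 2**20, ...)."""
    for unit in ("", "K", "M", "G", "T", "P", "E"):
        if count < 1024:
            return f"{count}{unit}"
        count //= 1024
    return f"{count}Z"


for n in range(2, 9):
    levels, bits, sqnr, entries = lut_parameters(n)
    print(n, levels, bits, f"{sqnr:.2f}", binary_size(entries))
```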

| Model | Batch Size | Epochs | Time (s) | Loss |
|---|---|---|---|---|
| FFNN | 25 | 50 | 200 | 0.0243 |
| LSTM | 25 | 50 | 7453 | 3.1478E-05 |

| Battery | Model | RMSE | MAE | MAPE |
|---|---|---|---|---|
| B0006 | FFNN | 0.080010 | 0.068220 | 0.100970 |
| | LSTM | 0.076270 | 0.067620 | 0.098770 |
| B0007 | FFNN | 0.019510 | 0.018019 | 0.021460 |
| | LSTM | 0.029282 | 0.024710 | 0.030434 |
| B0018 | FFNN | 0.015680 | 0.013610 | 0.016890 |
| | LSTM | 0.018021 | 0.016371 | 0.020547 |
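The RMSE, MAE, and MAPE columns correspond to the standard error metrics. The plain-Python sketch below states those definitions on hypothetical sample values; it is not the authors' evaluation code. Since this table does not state whether MAPE is a fraction or a percentage, the function returns a fraction (multiply by 100 for percent) and assumes no true value is zero.

```python
import math
from typing import Sequence


def rmse(y: Sequence[float], y_hat: Sequence[float]) -> float:
    """Root mean squared error."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(y, y_hat)) / len(y))


def mae(y: Sequence[float], y_hat: Sequence[float]) -> float:
    """Mean absolute error."""
    return sum(abs(a - b) for a, b in zip(y, y_hat)) / len(y)


def mape(y: Sequence[float], y_hat: Sequence[float]) -> float:
    """Mean absolute percentage error as a fraction (assumes no zero true values)."""
    return sum(abs((a - b) / a) for a, b in zip(y, y_hat)) / len(y)


# Hypothetical true and predicted capacities.
y = [1.0, 2.0, 4.0]
y_hat = [1.1, 1.8, 4.0]
print(rmse(y, y_hat), mae(y, y_hat), mape(y, y_hat))
```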

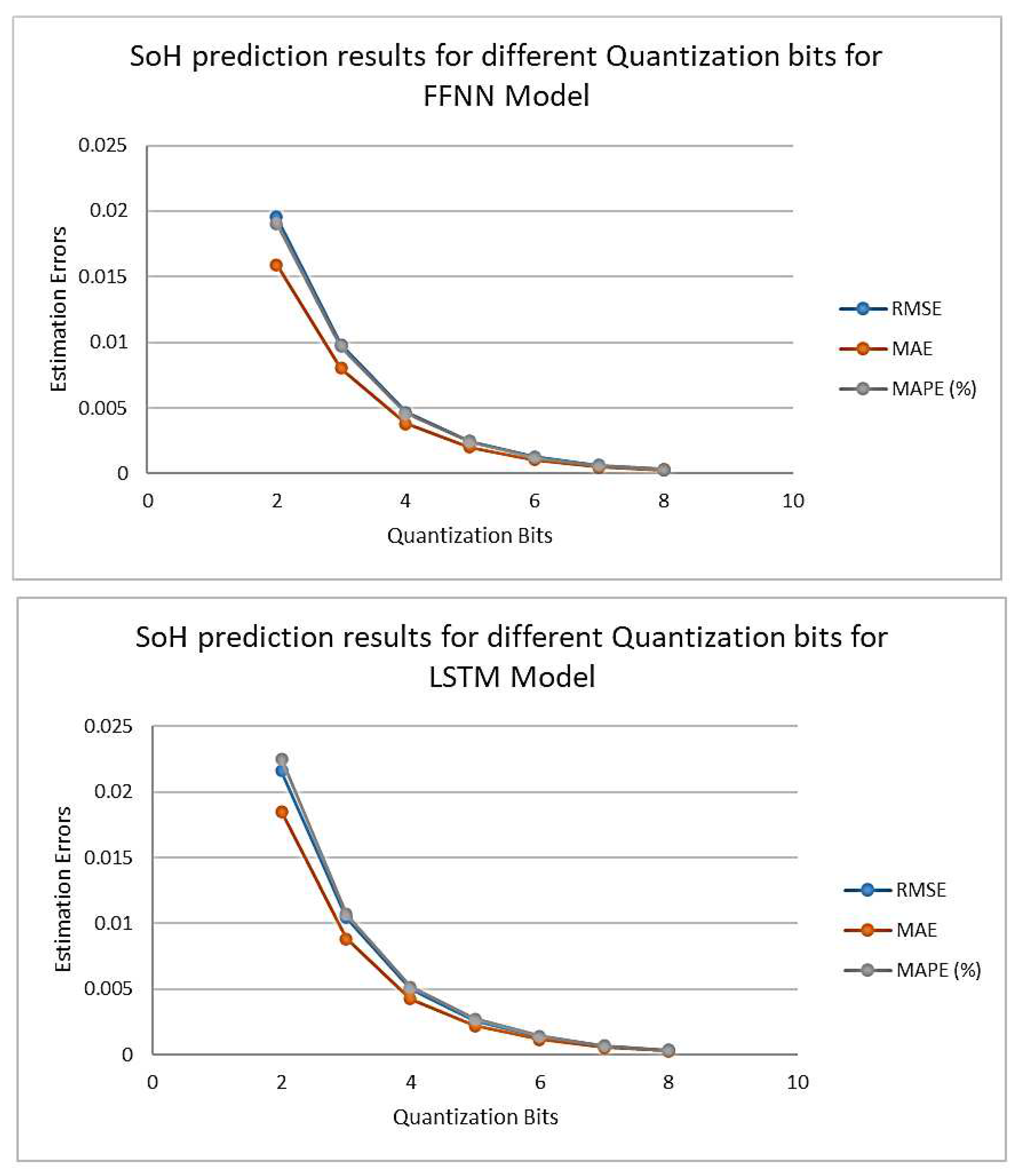

| Battery | Model | Quantization Bits | RMSE | MAE | MAPE (%) |
|---|---|---|---|---|---|
| B0006 | FFNN | 2 | 0.0195370 | 0.0159236 | 0.0190499 |
| | | 3 | 0.0098006 | 0.0080317 | 0.0096645 |
| | | 4 | 0.0046815 | 0.0037988 | 0.0045664 |
| | | 5 | 0.0024301 | 0.0020093 | 0.0024294 |
| | | 6 | 0.0012535 | 0.0010379 | 0.0012461 |
| | | 7 | 0.0006150 | 0.0005068 | 0.0006144 |
| | | 8 | 0.0003125 | 0.0002565 | 0.0003088 |
| | LSTM | 2 | 0.0216045 | 0.0185078 | 0.0225291 |
| | | 3 | 0.0104658 | 0.0088477 | 0.0107360 |
| | | 4 | 0.0050010 | 0.0042487 | 0.0051737 |
| | | 5 | 0.0025885 | 0.0022293 | 0.0027206 |
| | | 6 | 0.0013394 | 0.0011620 | 0.0014114 |
| | | 7 | 0.0006609 | 0.0005692 | 0.0006974 |
| | | 8 | 0.0003309 | 0.0002835 | 0.0003446 |
| B0007 | FFNN | 2 | 0.0187614 | 0.0162685 | 0.0191451 |
| | | 3 | 0.0101181 | 0.0088282 | 0.0103004 |
| | | 4 | 0.0050026 | 0.0043651 | 0.0051114 |
| | | 5 | 0.0024498 | 0.0021127 | 0.0024730 |
| | | 6 | 0.0012030 | 0.0010481 | 0.0012269 |
| | | 7 | 0.0006394 | 0.0005566 | 0.0006533 |
| | | 8 | 0.0003060 | 0.0002578 | 0.0003013 |
| | LSTM | 2 | 0.0209633 | 0.0181984 | 0.0219105 |
| | | 3 | 0.0113147 | 0.0099692 | 0.0119157 |
| | | 4 | 0.0056382 | 0.0049296 | 0.0059140 |
| | | 5 | 0.0027386 | 0.0023843 | 0.0028542 |
| | | 6 | 0.0013495 | 0.0011826 | 0.0014153 |
| | | 7 | 0.0007212 | 0.0006320 | 0.0007581 |
| | | 8 | 0.0003432 | 0.0002912 | 0.0003475 |
| B0018 | FFNN | 2 | 0.0205289 | 0.0159912 | 0.0189426 |
| | | 3 | 0.0096451 | 0.0077552 | 0.0092191 |
| | | 4 | 0.0050730 | 0.0040254 | 0.0047780 |
| | | 5 | 0.0022966 | 0.0017585 | 0.0020886 |
| | | 6 | 0.0011492 | 0.0008754 | 0.0010336 |
| | | 7 | 0.0006432 | 0.0005005 | 0.0005950 |
| | | 8 | 0.0002954 | 0.0002268 | 0.0002719 |
| | LSTM | 2 | 0.0218554 | 0.0189299 | 0.0233109 |
| | | 3 | 0.0109069 | 0.0094792 | 0.0116619 |
| | | 4 | 0.0057440 | 0.0049472 | 0.0060704 |
| | | 5 | 0.0026591 | 0.0022228 | 0.0027317 |
| | | 6 | 0.0013255 | 0.0011411 | 0.0014012 |
| | | 7 | 0.0007168 | 0.0006208 | 0.0007612 |
| | | 8 | 0.0003431 | 0.0002941 | 0.0003649 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).