1. Short-term memory capacity can be estimated from literary texts

The aim of this paper is to propose that the short-term memory (STM), which refers to the ability to remember a small number of items for a short period of time, is likely made up of two consecutive (in series) and uncorrelated processing units with similar capacities. The clues for conjecturing this model emerge from studying many novels of the Italian and English literatures. Although the model is simple, because it considers only the surface structure of texts, it seems to describe mathematically the input-output characteristics of a complex and largely unknown mental process.

To model a two-unit STM processing, we further develop our previous studies based on a parameter called the “word interval”, indicated by $I_P$, given by the number of words between any two contiguous interpunctions [1–8]. The term “interval” arises by noting that $I_P$ does measure an “interval”, expressed in words, which can be transformed into time through a reading speed [9], as shown in [1].
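As an illustration of the definition above, the sketch below counts the words between contiguous interpunctions and converts a word interval into seconds. It is a minimal sketch under stated assumptions: the set of interpunctions, the whitespace tokenization, the function names and the 240 words-per-minute reading speed are hypothetical choices made here, not the procedure or values used in [1] or [9].

```python
import re

# Assumption: these marks are treated as interpunctions; the paper's exact
# tokenization rules are not reproduced here.
INTERPUNCTIONS = r"[,;:.?!]"


def word_intervals(text: str) -> list[int]:
    """Return the word intervals I_P: words between contiguous interpunctions."""
    chunks = re.split(INTERPUNCTIONS, text)
    # Count whitespace-separated words per chunk and drop empty chunks
    # (for example, the empty string after a final full stop).
    counts = [len(chunk.split()) for chunk in chunks]
    return [c for c in counts if c > 0]


def interval_to_seconds(i_p: float, words_per_minute: float) -> float:
    """Convert a word interval into time, given a (hypothetical) reading speed."""
    return i_p * 60.0 / words_per_minute


sentence = "When we start reading, the mind predicts; only later, it understands."
print(word_intervals(sentence))       # [4, 3, 2, 2]
print(interval_to_seconds(7, 240))    # 1.75
```

With a hypothetical reading speed of 240 words per minute, an interval of 7 words corresponds to 1.75 s; a different reading speed simply rescales the result.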

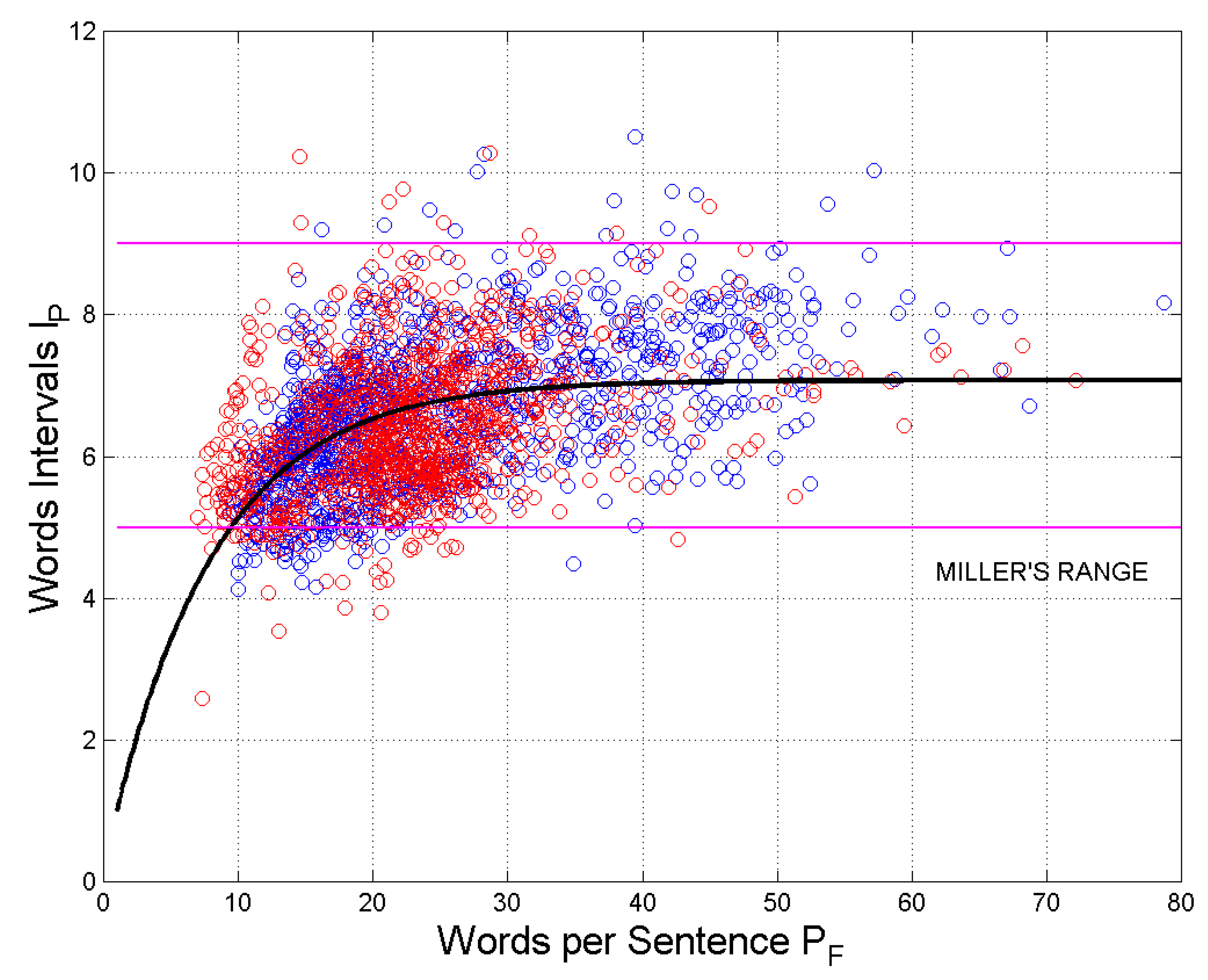

The parameter $I_P$ varies in the same range as the STM capacity, given by Miller’s $7 \pm 2$ law [10], a range that includes 95% of cases. As discussed in [1], the two ranges are deeply related because interpunctions organize small portions of more complex arguments (which make up a sentence) into short chunks of text, which represent the natural STM input (see [11–31], a sample of the many papers that have appeared in the literature, and also the discussion in Ref. [1]). It is interesting to recall that $I_P$, drawn against the number of words per sentence, $P_F$, approaches a horizontal asymptote as $P_F$ increases [1–3]. The writer, therefore, maybe unconsciously, introduces interpunctions as sentences get longer, because he/she also acts as a reader, thereby limiting $I_P$ approximately to Miller’s range.
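A simple way to check how often word intervals fall inside Miller’s range is to count the values between 5 and 9 inclusive, i.e. $7 \pm 2$. The sketch below does this; the function name and the list of mean values are hypothetical and are not data from the Italian or English corpora.

```python
def fraction_in_miller_range(intervals: list[float],
                             low: float = 5.0, high: float = 9.0) -> float:
    """Fraction of word intervals inside Miller's 7 ± 2 range (bounds inclusive)."""
    if not intervals:
        raise ValueError("need at least one word interval")
    inside = sum(1 for i_p in intervals if low <= i_p <= high)
    return inside / len(intervals)


# Hypothetical per-text mean word intervals, for illustration only.
means = [5.8, 6.4, 7.1, 8.9, 9.6]
print(fraction_in_miller_range(means))  # 0.8
```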

The presence of interpunctions in a sentence and its length in words are, very likely, the tangible consequence of two consecutive processing units necessary to deliver the meaning of the sentence, the first of which we have already studied with regard to $I_P$ and the linguistic I-channel [1–8].

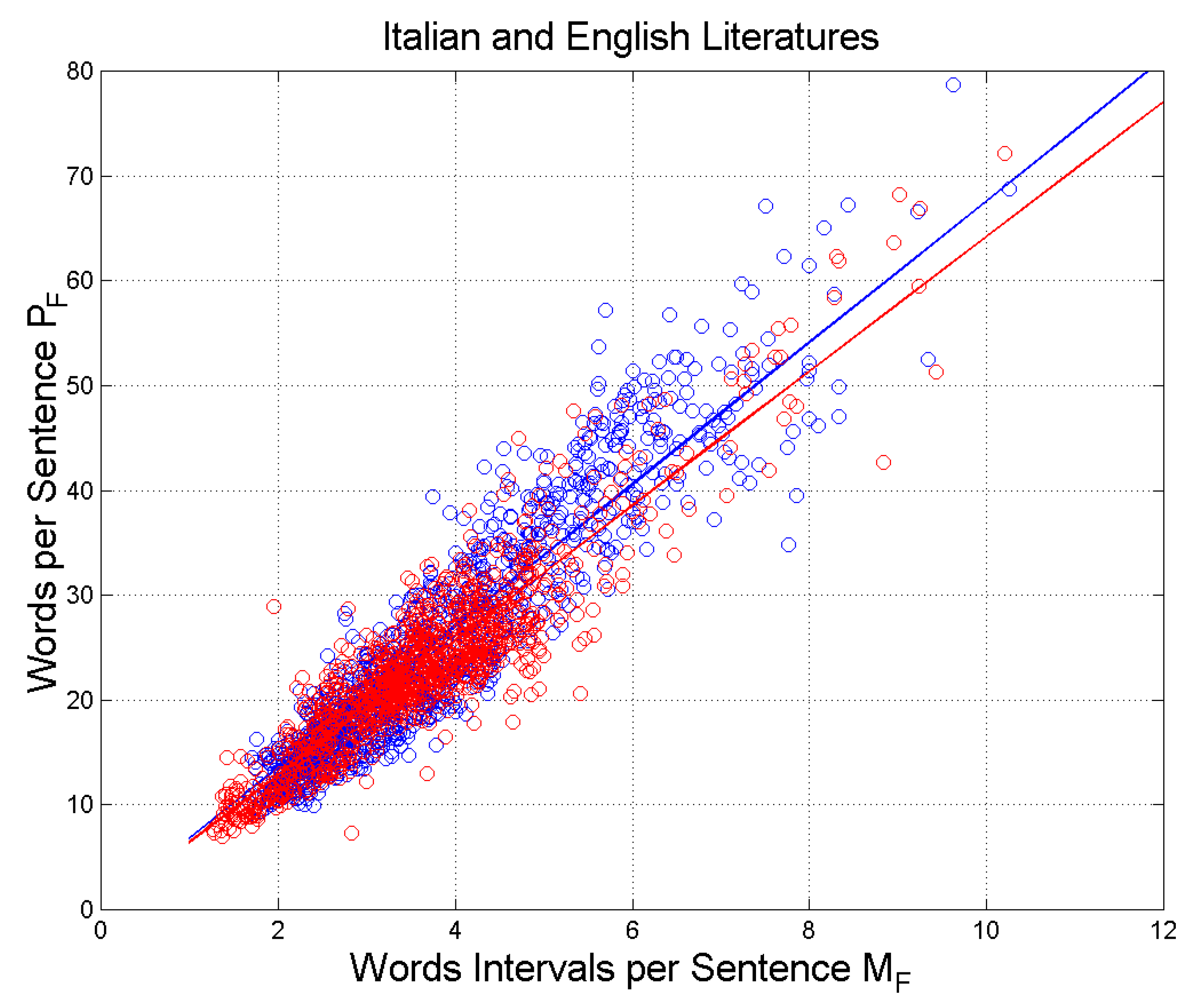

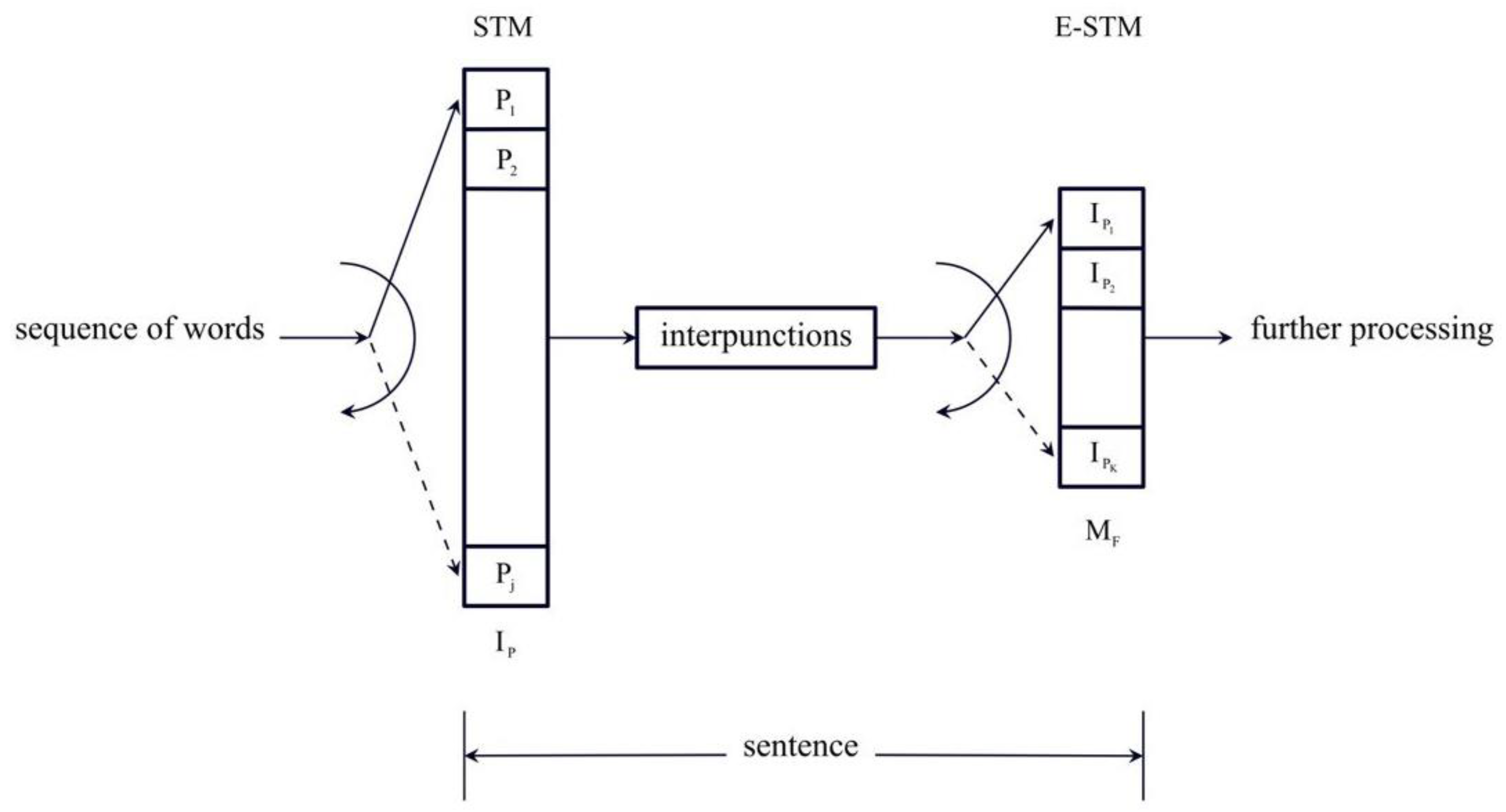

A two-unit STM processing can be justified, at least empirically, by how the human mind is thought to memorize “chunks” of information in the STM. When we start reading a sentence, the mind tries to predict its full meaning from what it has already read, and only when an in-sentence interpunction (i.e., comma, colon, semicolon) is found can it partially understand the text, whose full meaning is finally revealed when a final interpunction (question mark, exclamation mark, full stop) is found. This first processing is therefore revealed by $I_P$; the second processing is revealed by $P_F$ and by the number of word intervals $I_P$ contained in the sentence, the latter indicated by $M_F$ [1–8].
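The two processing stages described above can be mirrored by a two-level segmentation: sentences are closed by final interpunctions, and word intervals within each sentence by in-sentence interpunctions. The sketch below returns, for each sentence, $P_F$, its list of $I_P$ values and $M_F$. It is a minimal sketch under stated assumptions: the classification of interpunctions, the whitespace tokenization and the names `sentence_stats` and `SentenceStats` are hypothetical, and abbreviations or decimal points are not handled.

```python
import re
from dataclasses import dataclass

# Assumption: final interpunctions close a sentence, in-sentence ones close
# a word interval.
FINAL = r"[.?!]"
IN_SENTENCE = r"[,;:]"


@dataclass
class SentenceStats:
    p_f: int              # words per sentence
    intervals: list[int]  # word intervals I_P inside the sentence
    m_f: int              # number of word intervals in the sentence


def sentence_stats(text: str) -> list[SentenceStats]:
    stats = []
    for sentence in re.split(FINAL, text):
        # First unit: word intervals delimited by in-sentence interpunctions.
        intervals = [len(chunk.split()) for chunk in re.split(IN_SENTENCE, sentence)]
        intervals = [n for n in intervals if n > 0]
        if not intervals:
            continue  # skip the empty fragment after the last final interpunction
        # Second unit: the whole sentence, made of M_F word intervals.
        stats.append(SentenceStats(p_f=sum(intervals), intervals=intervals,
                                   m_f=len(intervals)))
    return stats


text = "When we start reading, the mind predicts. Then, at the full stop, it understands!"
for s in sentence_stats(text):
    print(s.p_f, s.intervals, s.m_f)
# 7 [4, 3] 2
# 7 [1, 4, 2] 3
```

Both example sentences have the same length, $P_F = 7$, but they differ in how many word intervals the second stage must hold ($M_F = 2$ versus $M_F = 3$).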

The longer and more twisted a sentence is, the longer the ideas remain deferred until the mind can establish its meaning from all its words, with the result that the text is less readable. Readability can be measured by the universal readability index, which includes the two-unit STM processing [6].

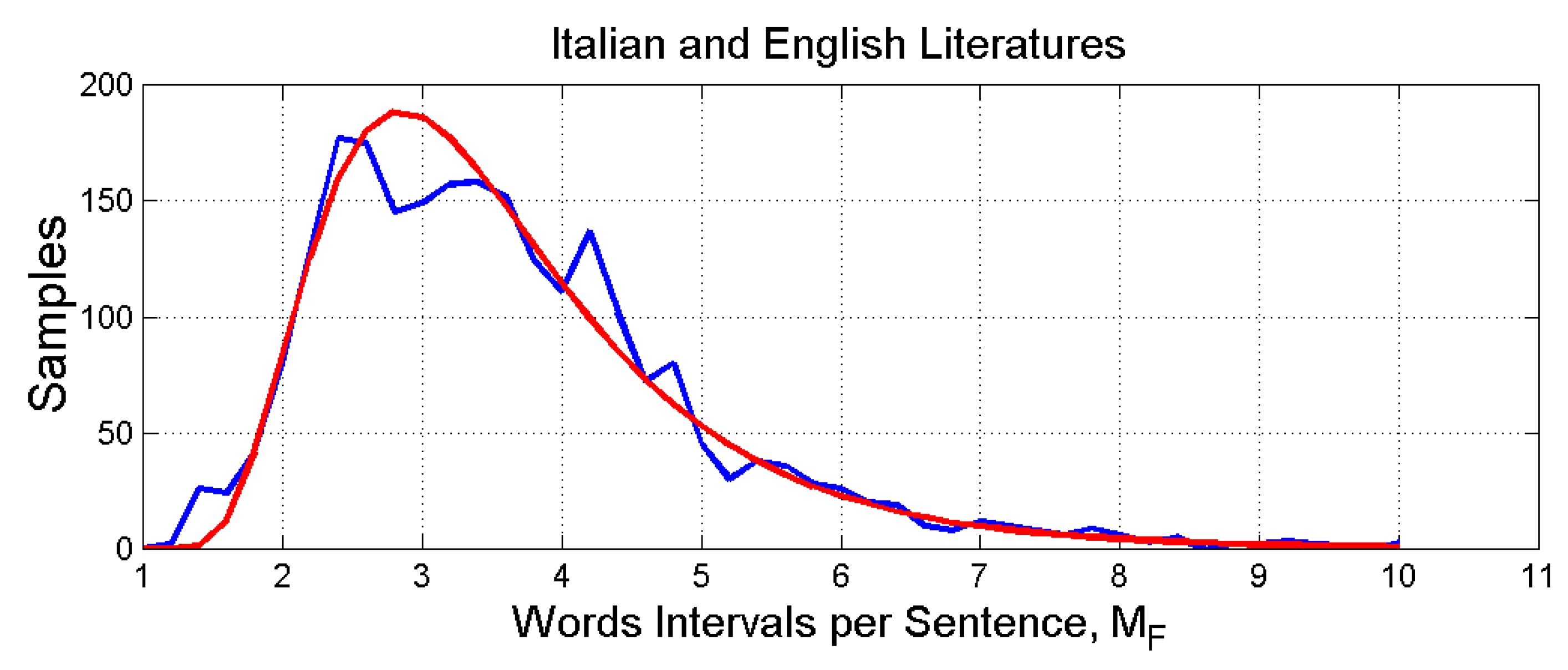

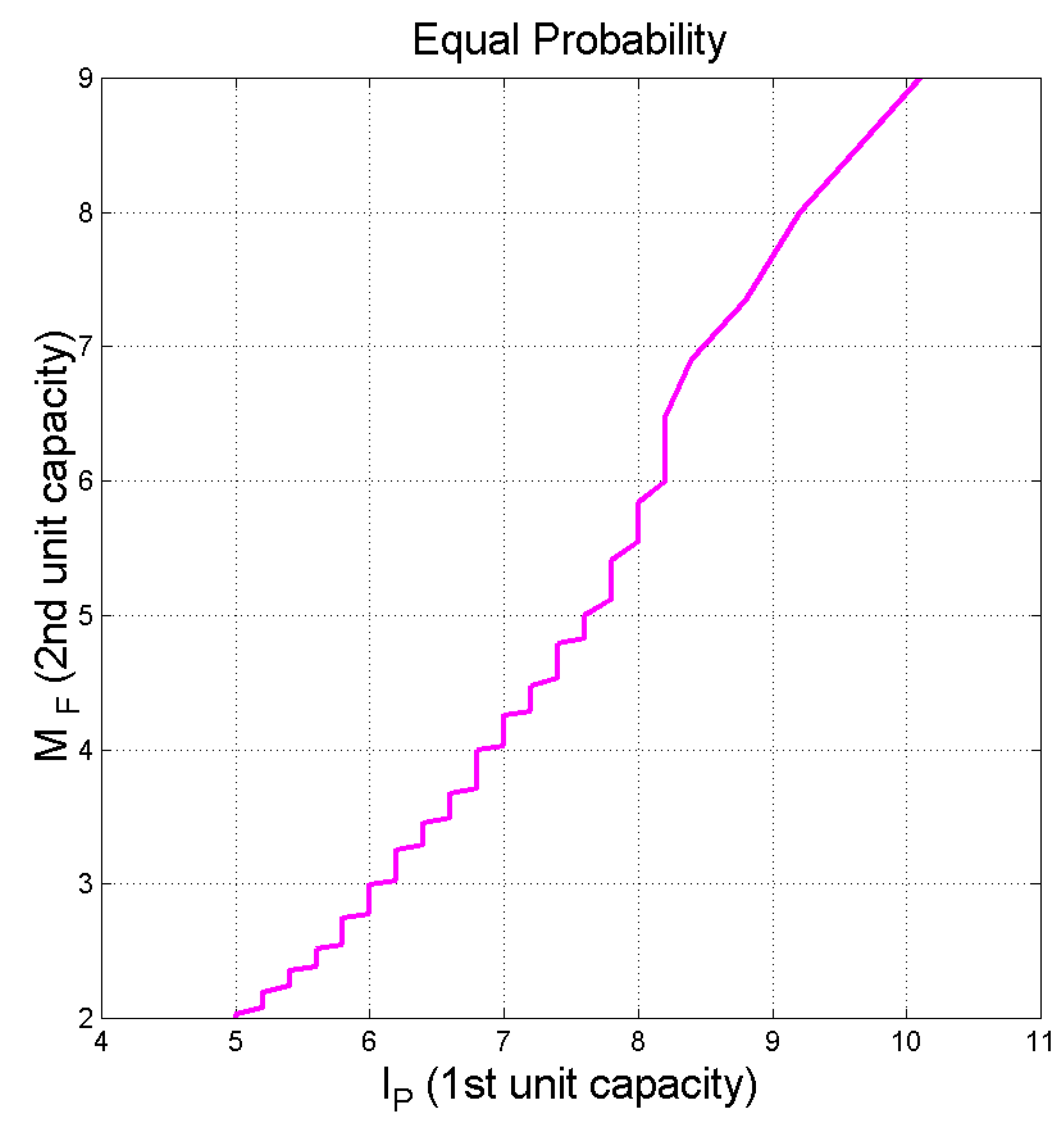

In summary, in the present paper we conjecture that, in reading a full sentence, humans engage a second STM capacity, quantitatively measured by $M_F$, which works in series with the first STM, quantitatively measured by $I_P$. We refer to the second STM capacity as the “extended” STM (E-STM) capacity. The modeling of the STM capacity with $I_P$ had never been considered in the literature [11–31] before our 2019 paper [1]. The number $M_F$ of word intervals $I_P$ contained in a sentence, studied previously in I-channels [4], is now associated with the E-STM.
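Because a sentence is a sequence of $M_F$ word intervals, the two units are linked by simple bookkeeping. As a sketch, assume that text-level means are computed as ratios of totals, with $W$ words, $I$ word intervals and $S$ sentences in a text (these three symbols are introduced here for illustration and are not taken from the paper):

```latex
\langle P_F \rangle = \frac{W}{S}, \qquad
\langle I_P \rangle = \frac{W}{I}, \qquad
\langle M_F \rangle = \frac{I}{S}
\quad\Longrightarrow\quad
\langle P_F \rangle = \frac{W}{I}\cdot\frac{I}{S} = \langle I_P \rangle \, \langle M_F \rangle
```

For a single sentence the same relation is exact: its length in words equals $M_F$ times the mean word interval within it. If means are instead averaged sentence by sentence, the product relation need not hold exactly.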

The E-STM should not be confused with the intermediate memory [32,33], let alone the long-term memory. It should also be clear that the E-STM is not modelled by studying neuronal activity, but by counting words and interpunctions, whose effects hundreds of writers, both modern and classic, and millions of people have experienced through reading.

The stochastic variables $P_F$, $I_P$, $M_F$, and the number of characters per word, $C_P$, are loosely termed deep-language variables in this paper, following our general statistical theory on alphabetical languages and their linguistic channels, developed in a series of papers [1–8]. These parameters refer, of course, to the “surface” structure of texts, not to the “deep” structure mentioned in cognitive theory.
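The four deep-language variables can be estimated for a whole text from a handful of counts. The sketch below computes the means of $P_F$, $I_P$, $M_F$ and $C_P$ as ratios of totals. It is a minimal sketch under stated assumptions, not the exact counting rules of [1–8]: words are whitespace-separated tokens with interpunctions removed, every word interval is assumed to end with an interpunction, any other characters (apostrophes, dashes) are counted as letters, and the function name `deep_language_means` is hypothetical.

```python
import re

FINAL = r"[.?!]"               # assumption: marks that close a sentence
INTERPUNCTIONS = r"[,;:.?!]"   # assumption: marks that close a word interval


def deep_language_means(text: str) -> dict[str, float]:
    """Text-level means of P_F, I_P, M_F and C_P, computed as ratios of totals."""
    words = re.sub(INTERPUNCTIONS, " ", text).split()
    n_words = len(words)
    n_chars = sum(len(w) for w in words)
    n_intervals = sum(1 for chunk in re.split(INTERPUNCTIONS, text) if chunk.split())
    n_sentences = sum(1 for s in re.split(FINAL, text) if s.split())
    if n_sentences == 0 or n_intervals == 0:
        raise ValueError("text must contain at least one word")
    return {
        "P_F": n_words / n_sentences,        # words per sentence
        "I_P": n_words / n_intervals,        # words per word interval
        "M_F": n_intervals / n_sentences,    # word intervals per sentence
        "C_P": n_chars / n_words,            # characters per word
    }


text = "When we start reading, the mind predicts. Then, at the full stop, it understands!"
means = deep_language_means(text)
print(means["P_F"], means["M_F"], means["C_P"])  # 7.0 2.5 4.5
```

In this toy text there are 14 words, 5 word intervals and 2 sentences, so $I_P = 2.8$ and, within floating-point rounding, $P_F = I_P \times M_F$.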

These variables allow us to perform “experiments” with ancient or modern readers by studying the literary works they read. These “experiments” have revealed unexpected similarities and dependences between texts, because the deep-language variables may not be consciously controlled by writers. Moreover, the linear linguistic channels present in texts can further assess, by a sort of “fine tuning”, how mathematically similar two texts are.

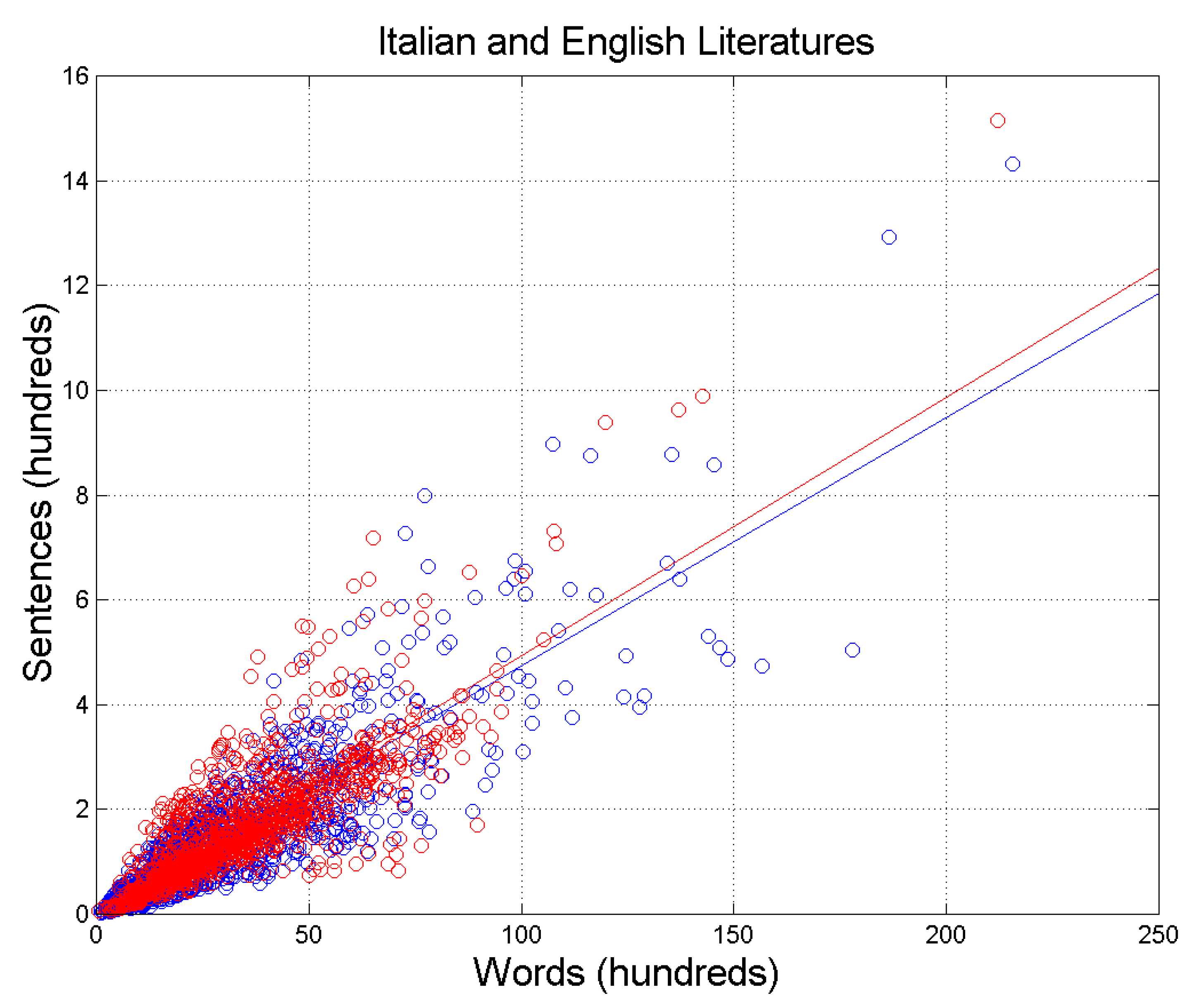

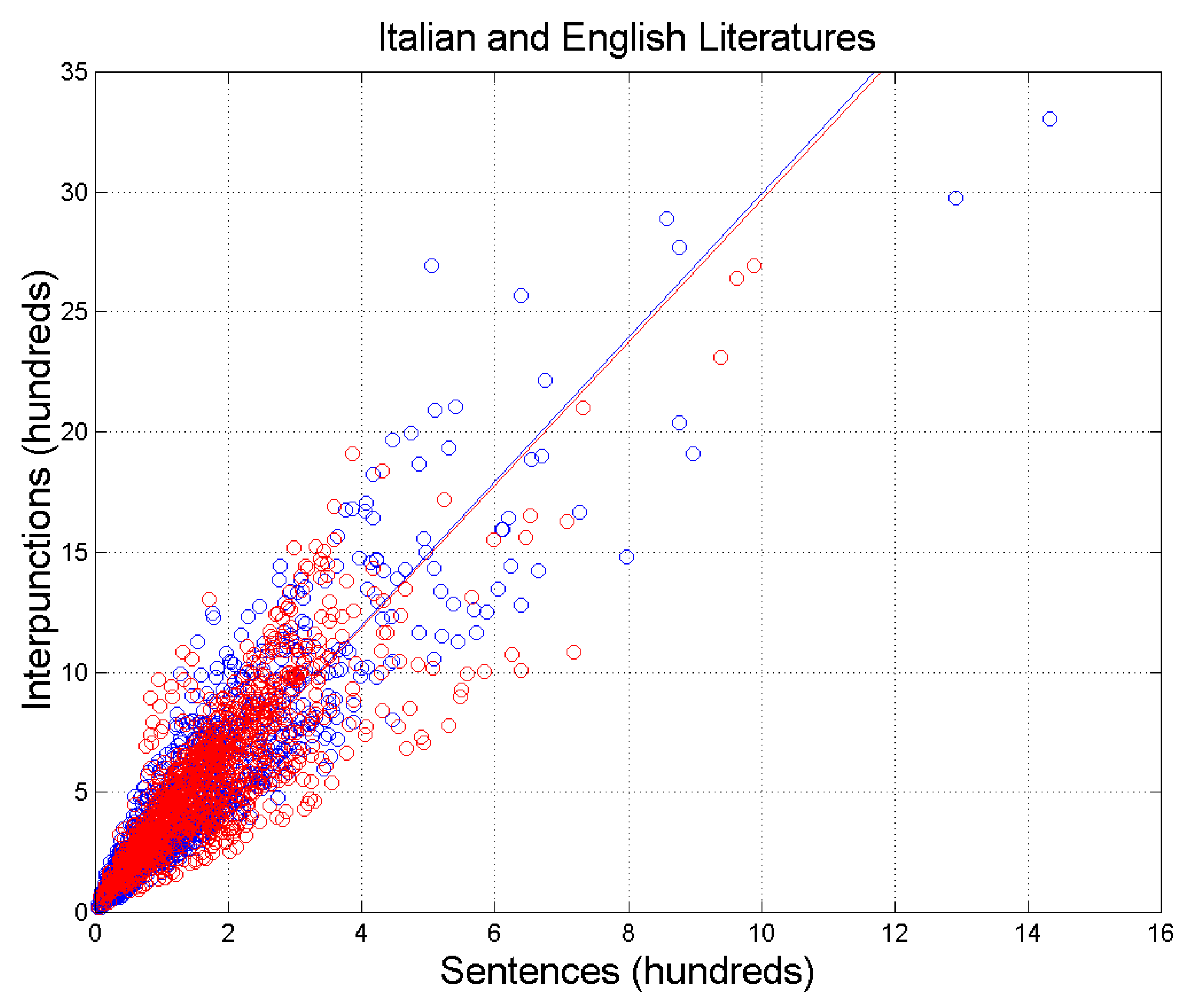

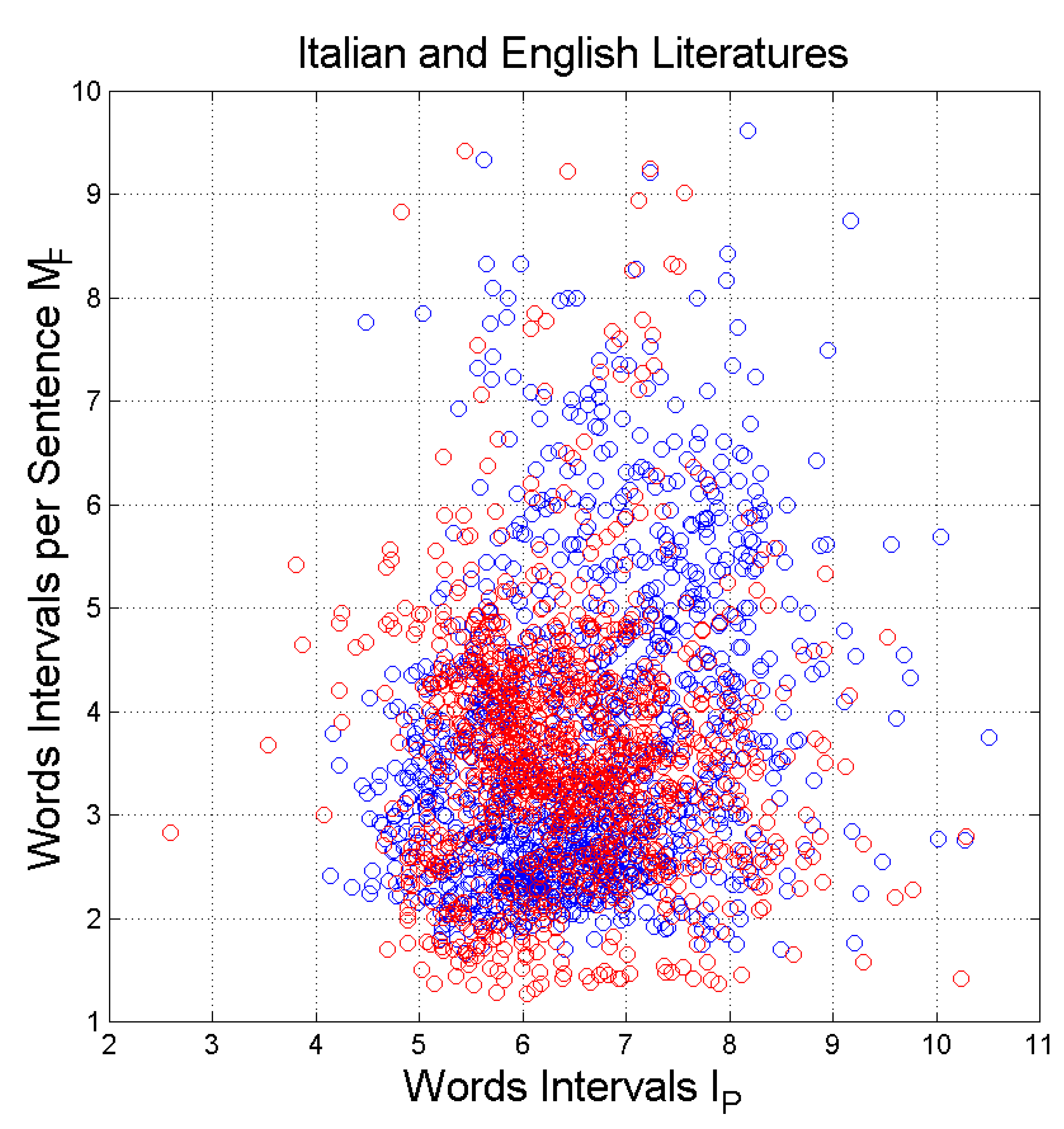

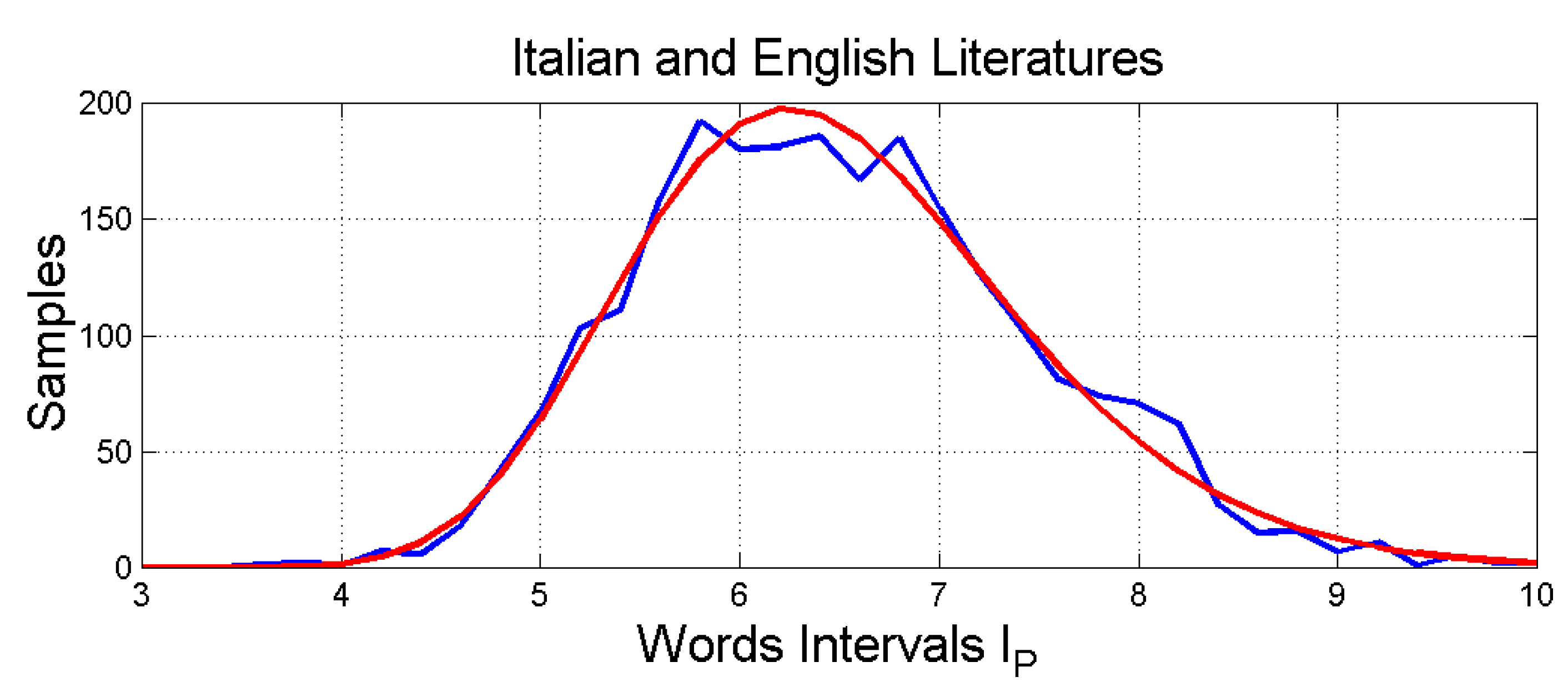

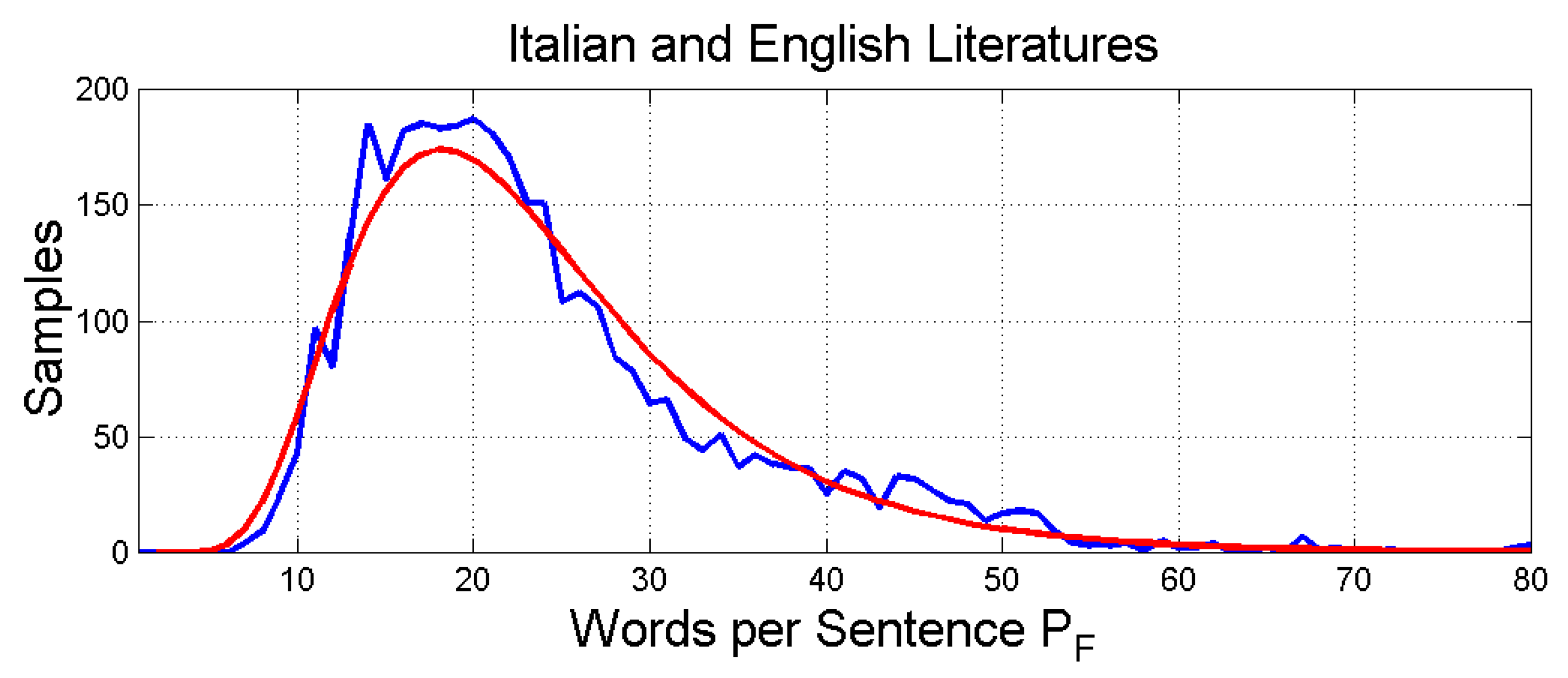

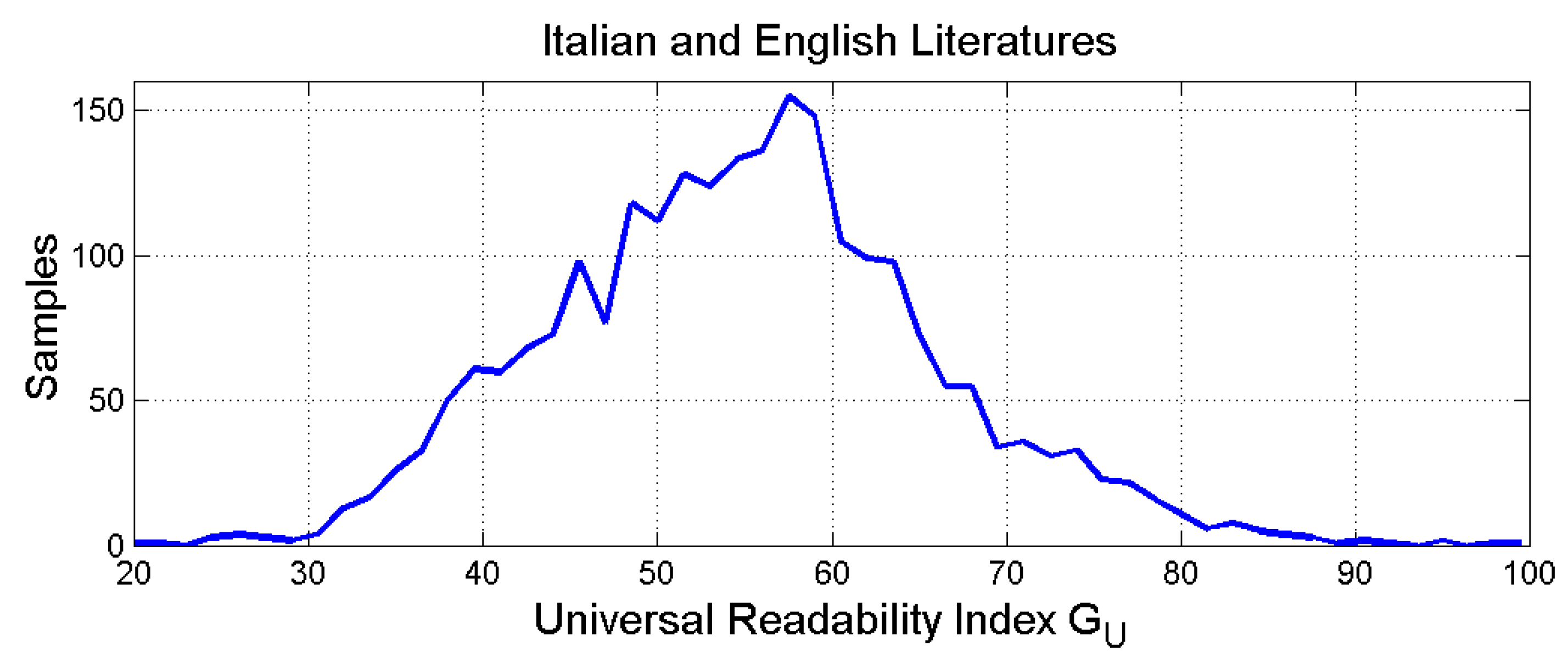

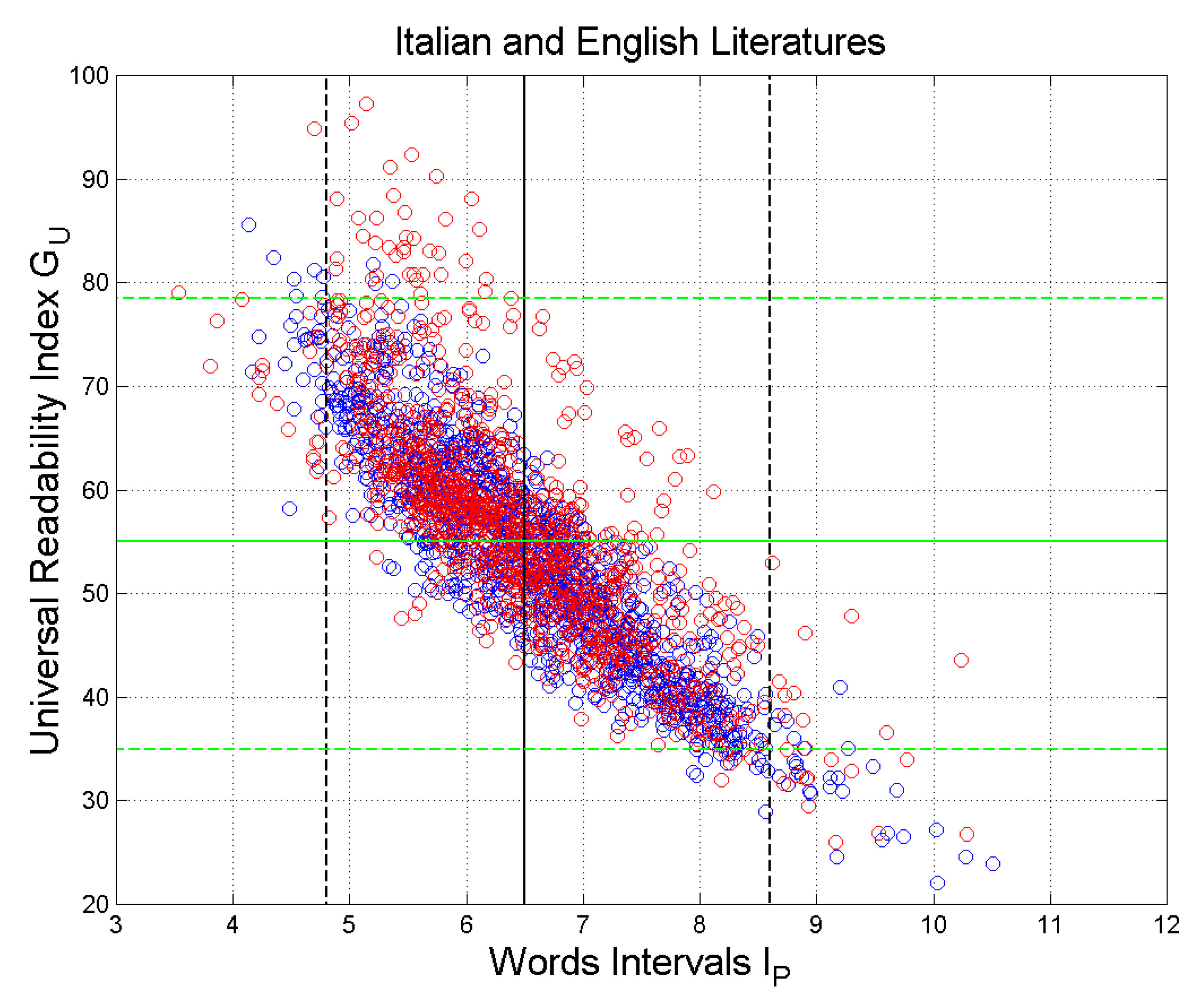

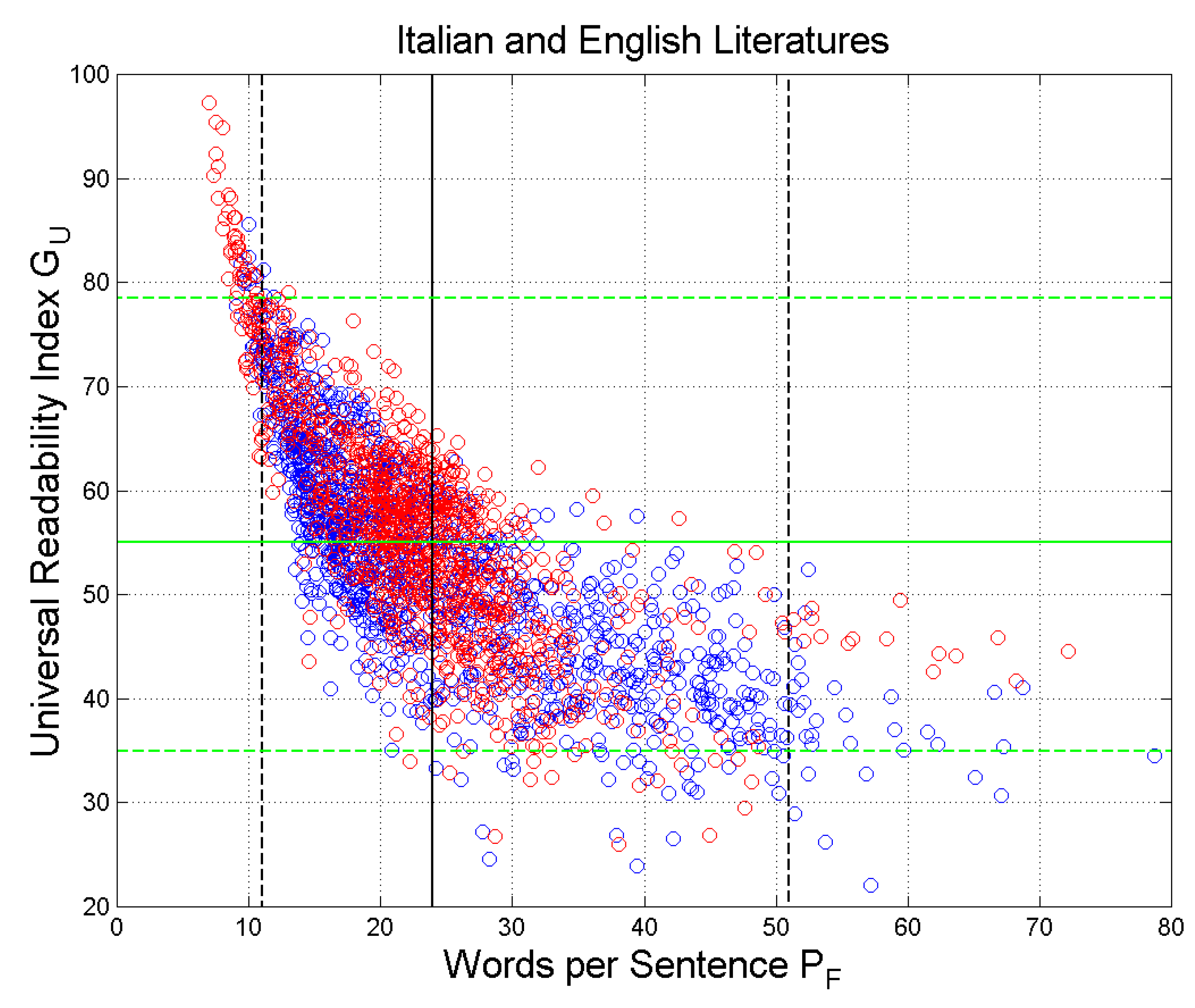

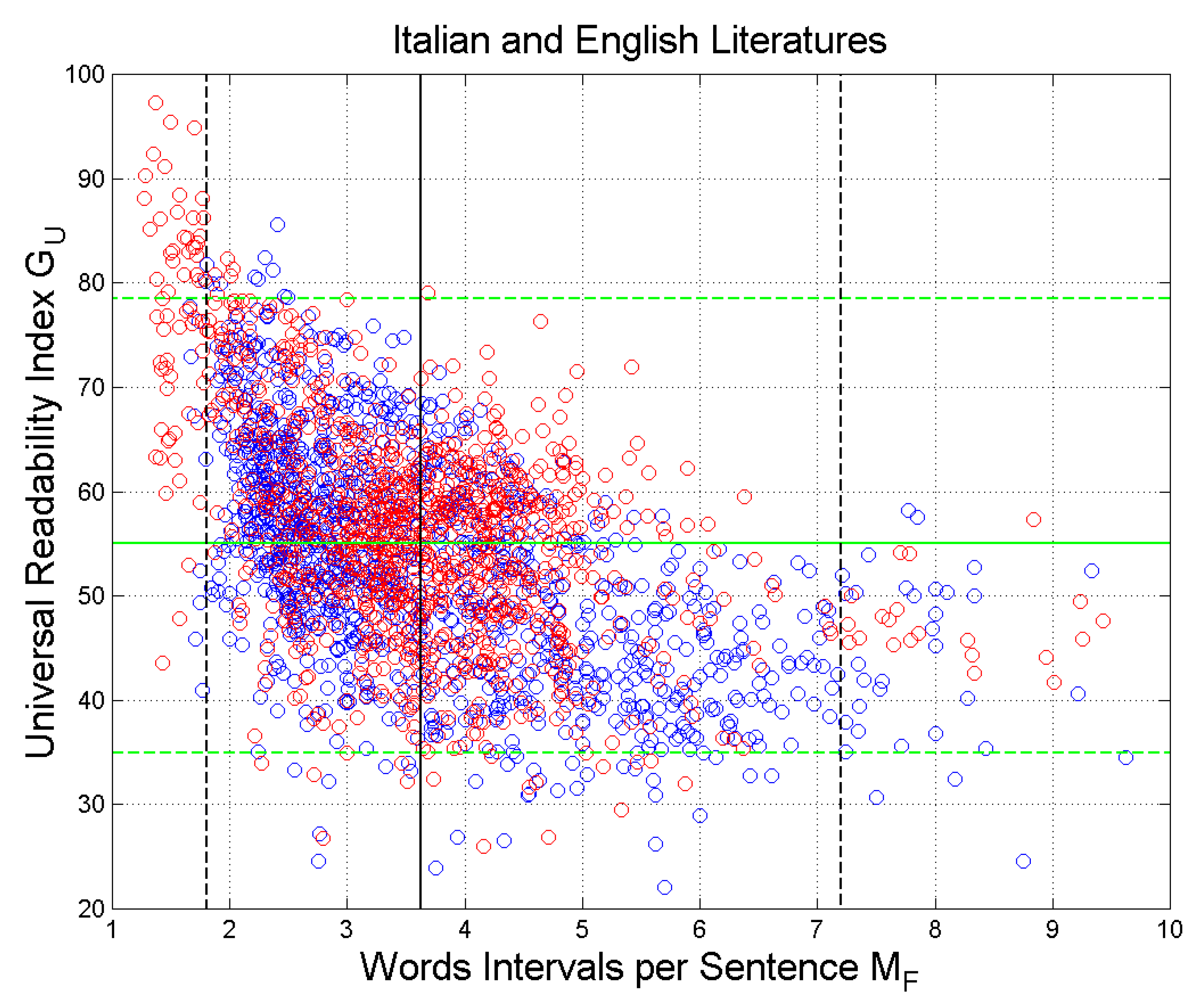

In the present paper, we base our study on a large database of texts (novels) belonging to the Italian Literature, spanning seven centuries [1], and to the English Literature, spanning four centuries [5]. In References [1,5], the reader can find the list of novels considered in the present paper with their full statistics on the linguistic variables recalled above.

We will show in the following sections that the two literary corpora can be merged to study the surface structure of texts; therefore, they form a reliable data set from which the size of the two STM capacities can be conjectured.

After this introduction,

Section 2 recalls the deep-language parameters and shows some interesting relationships between them, applied to the Italian and English Literatures;

Section 3 recalls the nature of linguistic communication channels present in texts;

Section 4 shows relationships with a universal readability index;

Section 5 models the two STM processing units in series and

Section 6 concludes and proposes future work.