Submitted: 06 October 2023

Posted: 11 October 2023


Abstract

Keywords:

Introduction

Speech-2-Text Models

| Company Name | API Name | Release Year | Pricing | Supported Languages | Accuracy/WER |
|---|---|---|---|---|---|
| Assembly AI | AssemblyAI S2T | 2020 | Paid | 9 | 16.8% (WER) |
| AWS | AWS Transcribe | 2017 | Paid | 75 | 18.42% (WER) |
| Wit.ai | Wit Speech | 2015 | Paid | 132 | N/A |
| Microsoft | Microsoft Azure Speech | 2018 | Paid | 147 | 9.2% (WER) |
| Vosk | VoskAPI | 2020 | Free | 20 | 63.4% (accuracy) |
| OpenAI | Whisper | 2022 | Free | 99 | 11.4% / 4.2% (best model, English) |
| IBM | IBM Watson S2T | 2017 | Paid | 13 | 11.3%-36.4% |
| Google | Google S2T | 2017 | Paid | 119 | 16% (WER) |

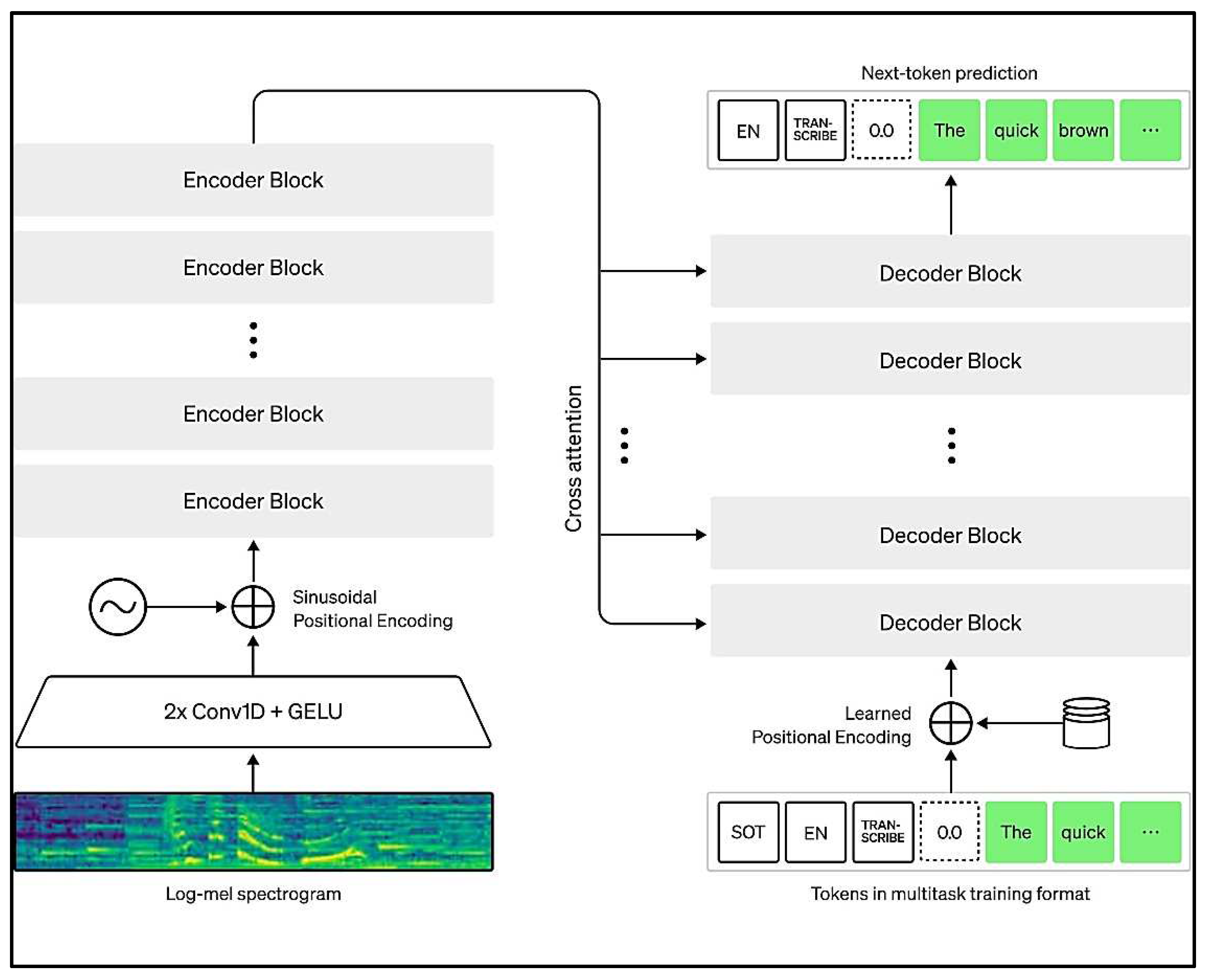

OpenAI Whisper

| Size | Parameters | English-only model | Multilingual model | Required VRAM | Relative speed |
|---|---|---|---|---|---|
| tiny | 39 M | tiny.en | tiny | ~1 GB | ~32x |
| base | 74 M | base.en | base | ~1 GB | ~16x |
| small | 244 M | small.en | small | ~2 GB | ~6x |
| medium | 769 M | medium.en | medium | ~5 GB | ~2x |
| large | 1550 M | N/A | large | ~10 GB | 1x |

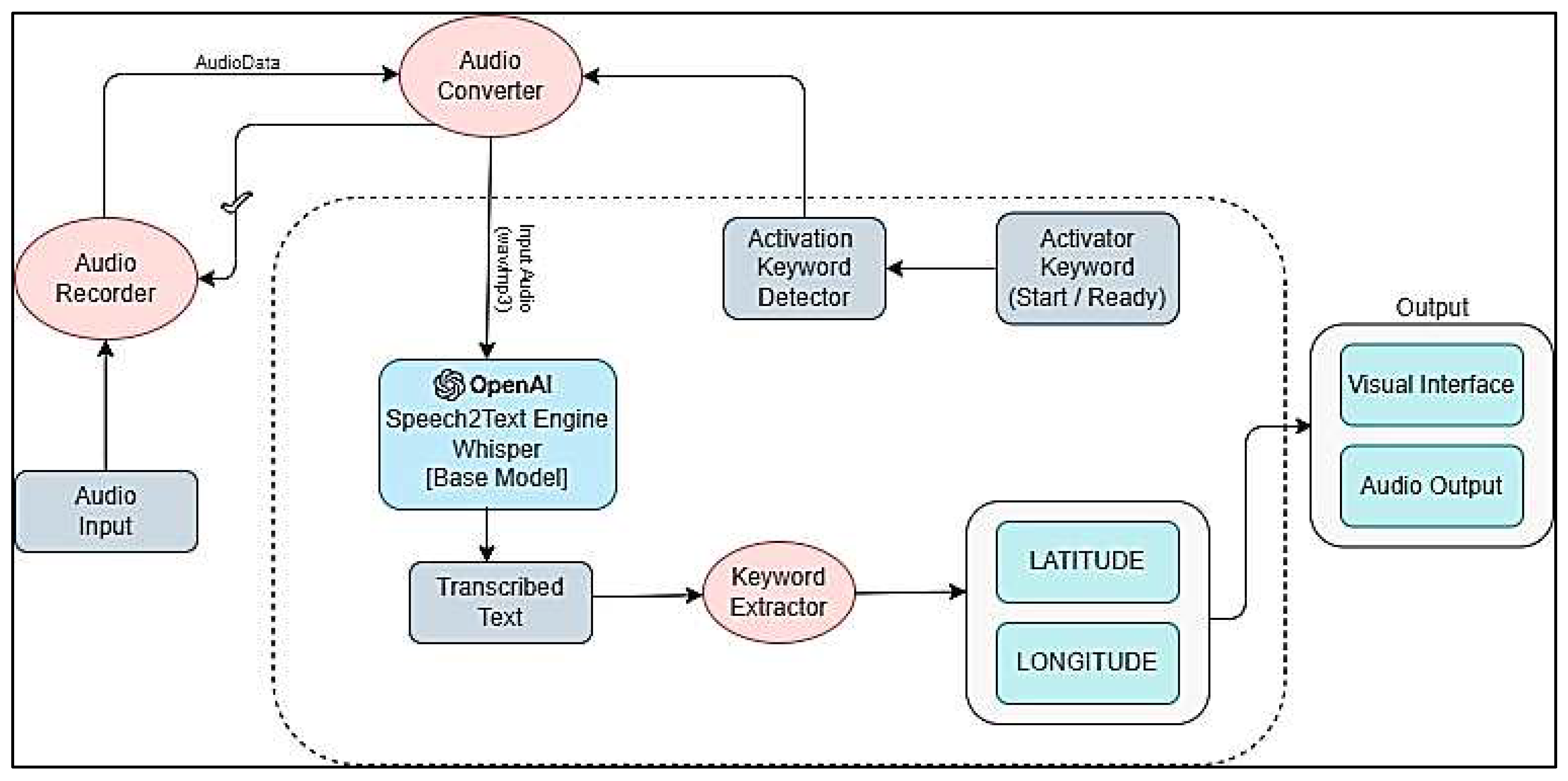

System Overview

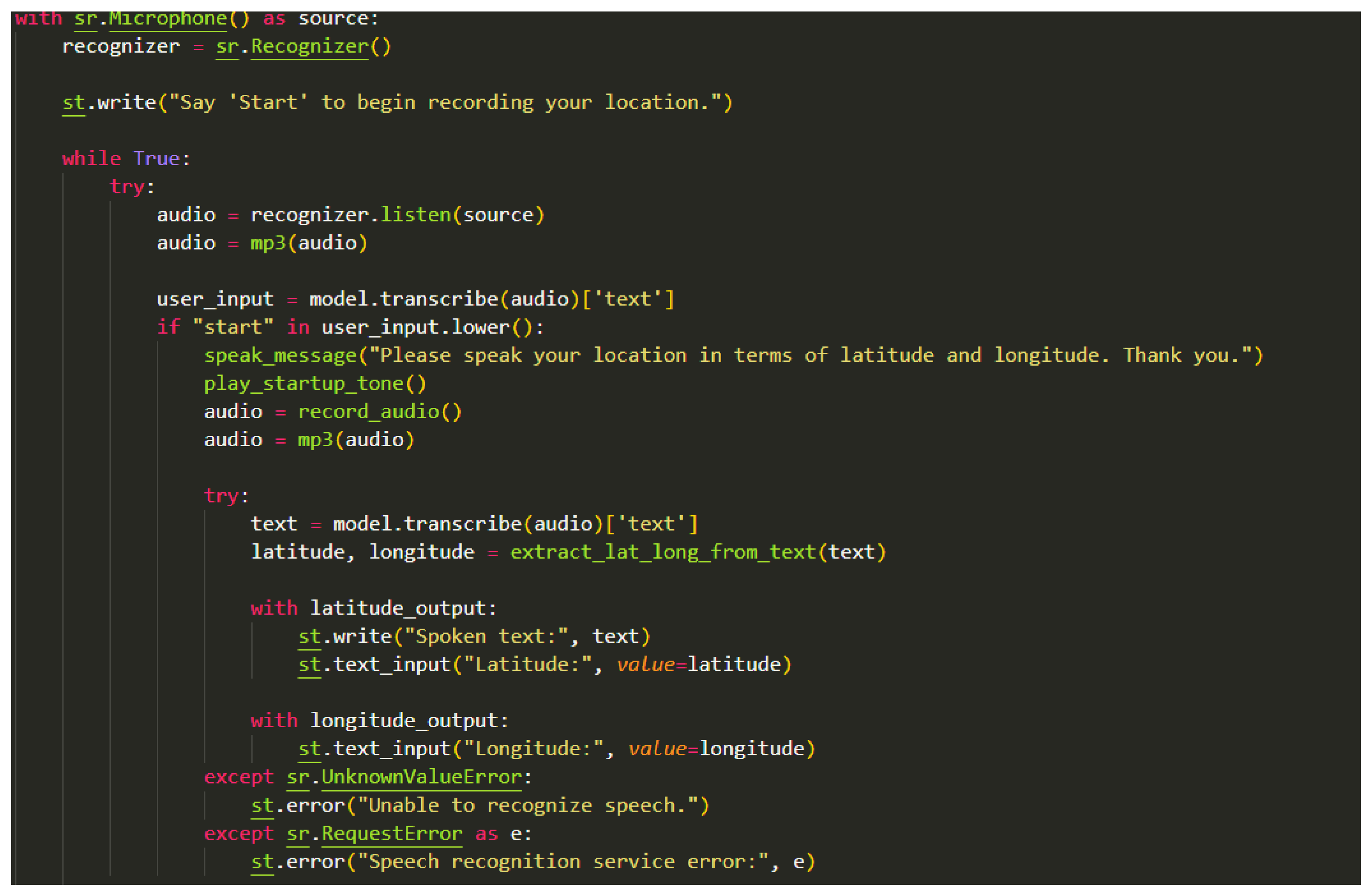

Implementation

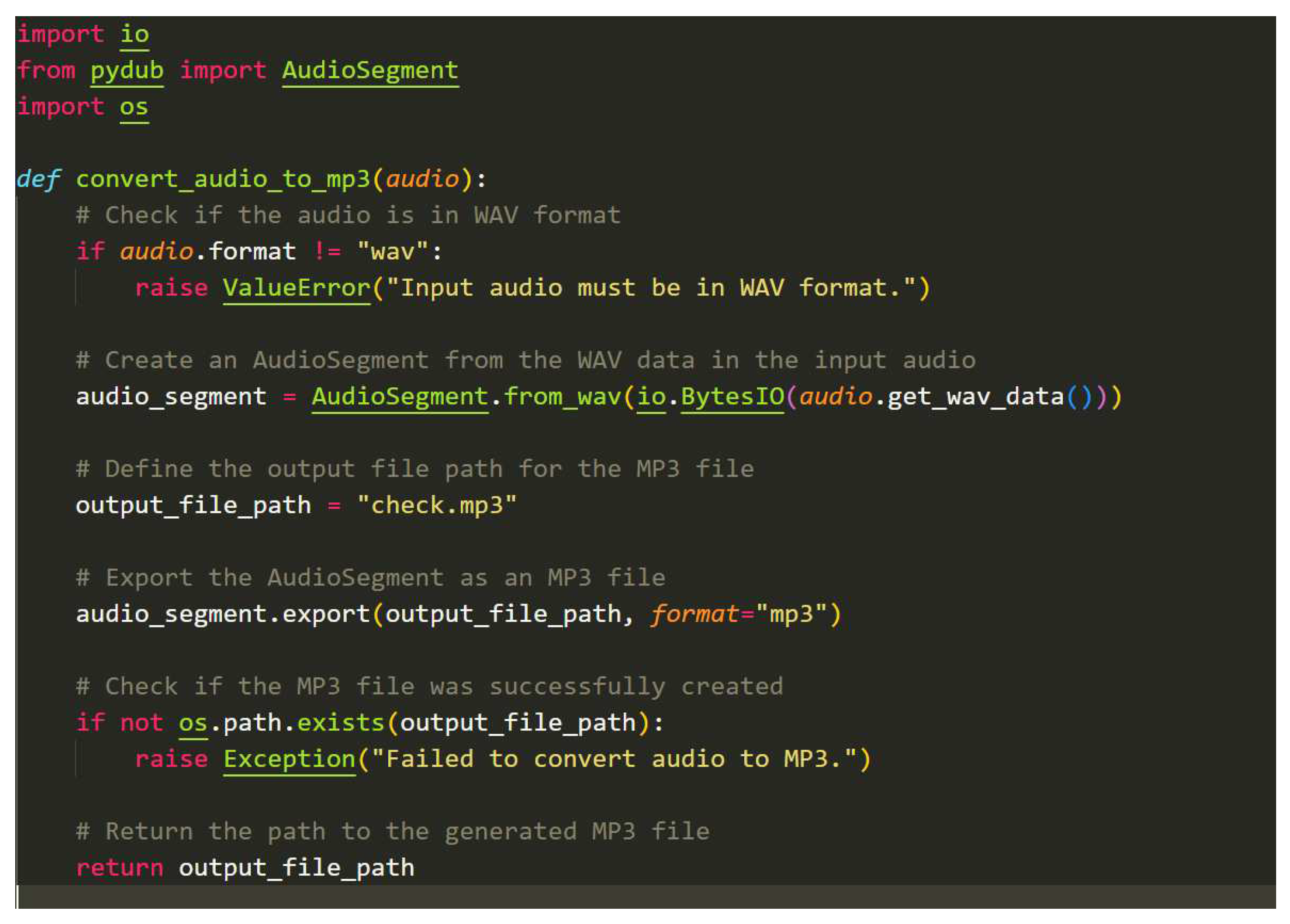

Sound Conversion Algorithm:

- Read the incoming audio with the pydub library's `AudioSegment.from_wav()` function. The raw bytes of the input object are wrapped in `io.BytesIO()` so that they can be read like a file, and the call returns an `audio_segment` object holding the WAV data.

- Call the `audio_segment` object's `export()` method with the `format` argument set to "mp3", which stores the audio as an MP3 file.

- Update the `audio` variable so that it holds the location of the newly created MP3 file ("check.mp3").

- Return the updated `audio` variable. Its value is the path to the converted MP3 file, through which later steps can access the audio.
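
The steps above can be sketched roughly as follows. This is a minimal illustration, not the authors' code: the function names `convert_to_mp3` and `make_silent_wav_bytes` and the `out_path` parameter are hypothetical, and the silent test clip merely stands in for real recorded input. The sketch assumes pydub is installed and that an MP3-capable ffmpeg is available on the system, which pydub needs for the export step.

```python
import io
import wave


def make_silent_wav_bytes(seconds=0.1, rate=8000):
    """Build a short silent mono 16-bit WAV in memory (stand-in for real input)."""
    buffer = io.BytesIO()
    with wave.open(buffer, "wb") as wav_file:
        wav_file.setnchannels(1)      # mono
        wav_file.setsampwidth(2)      # 16-bit samples
        wav_file.setframerate(rate)
        wav_file.writeframes(b"\x00\x00" * int(seconds * rate))
    return buffer.getvalue()


def convert_to_mp3(wav_bytes, out_path="check.mp3"):
    """Convert in-memory WAV bytes to an MP3 file and return the file's path."""
    # Third-party import kept local so this module still loads without pydub.
    from pydub import AudioSegment

    # Wrap the raw bytes in a file-like object and parse them as WAV.
    audio_segment = AudioSegment.from_wav(io.BytesIO(wav_bytes))
    # Write the audio out as MP3 (pydub delegates the encoding to ffmpeg).
    audio_segment.export(out_path, format="mp3")
    return out_path
```

With pydub and ffmpeg present, `audio = convert_to_mp3(make_silent_wav_bytes())` leaves `audio` holding the path "check.mp3", mirroring the last two steps of the list.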

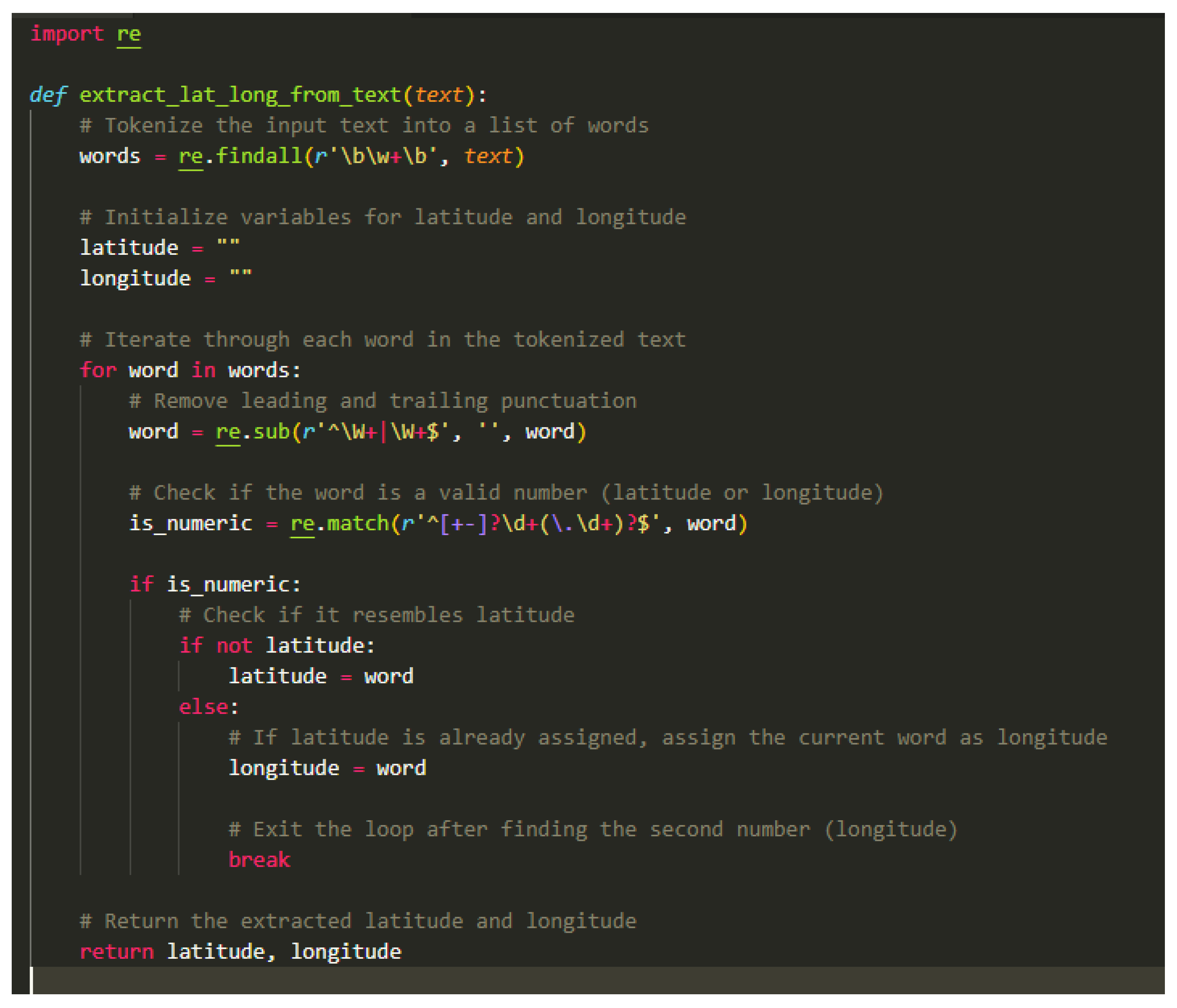

Location Extraction Algorithm:

| ALGORITHM: `extract_lat_long_from_text` functioning | |
|---|---|
| 1. | Start the function `extract_lat_long_from_text(text)` with `text` as input. |
| 2. | Split the input text into individual words using the `split()` function, and store the result in the `words` list. |
| 3. | Initialize an empty string variable `latitude` to store the latitude value and another empty string variable `longitude` to store the longitude value. |
| 4. | For each word in the `words` list, do the following: |
| 5. | Within the if block, check if the `latitude` variable is empty: |
| 6. | Exit the loop using the `break` statement after assigning the longitude value. |
| 7. | The function ends, having captured the latitude and longitude values from the input text. |
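
A hedged sketch of how these steps might look in Python is given below. The table does not state the condition that guards the if block, so this sketch assumes a word counts as a coordinate when it is a plain signed decimal number. The `_DECIMAL` pattern, the stripping of trailing punctuation, and the returned tuple are likewise assumptions made for illustration.

```python
import re

# Assumption: a coordinate is a plain signed decimal such as "12.9716" or "-77.5".
_DECIMAL = re.compile(r"[+-]?\d+(\.\d+)?")


def extract_lat_long_from_text(text):
    """Return the first two decimal numbers in text as (latitude, longitude) strings."""
    words = text.split()
    latitude = ""
    longitude = ""
    for word in words:
        candidate = word.rstrip(".,;")  # drop trailing punctuation from speech text
        if _DECIMAL.fullmatch(candidate):
            if latitude == "":
                # The first number found is taken as the latitude.
                latitude = candidate
            else:
                # The second number is the longitude; nothing more is needed.
                longitude = candidate
                break
    return latitude, longitude
```

For example, `extract_lat_long_from_text("I am at 12.9716 and 77.5946 near the park")` yields the pair `("12.9716", "77.5946")`; if the text contains no numbers, both strings stay empty.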

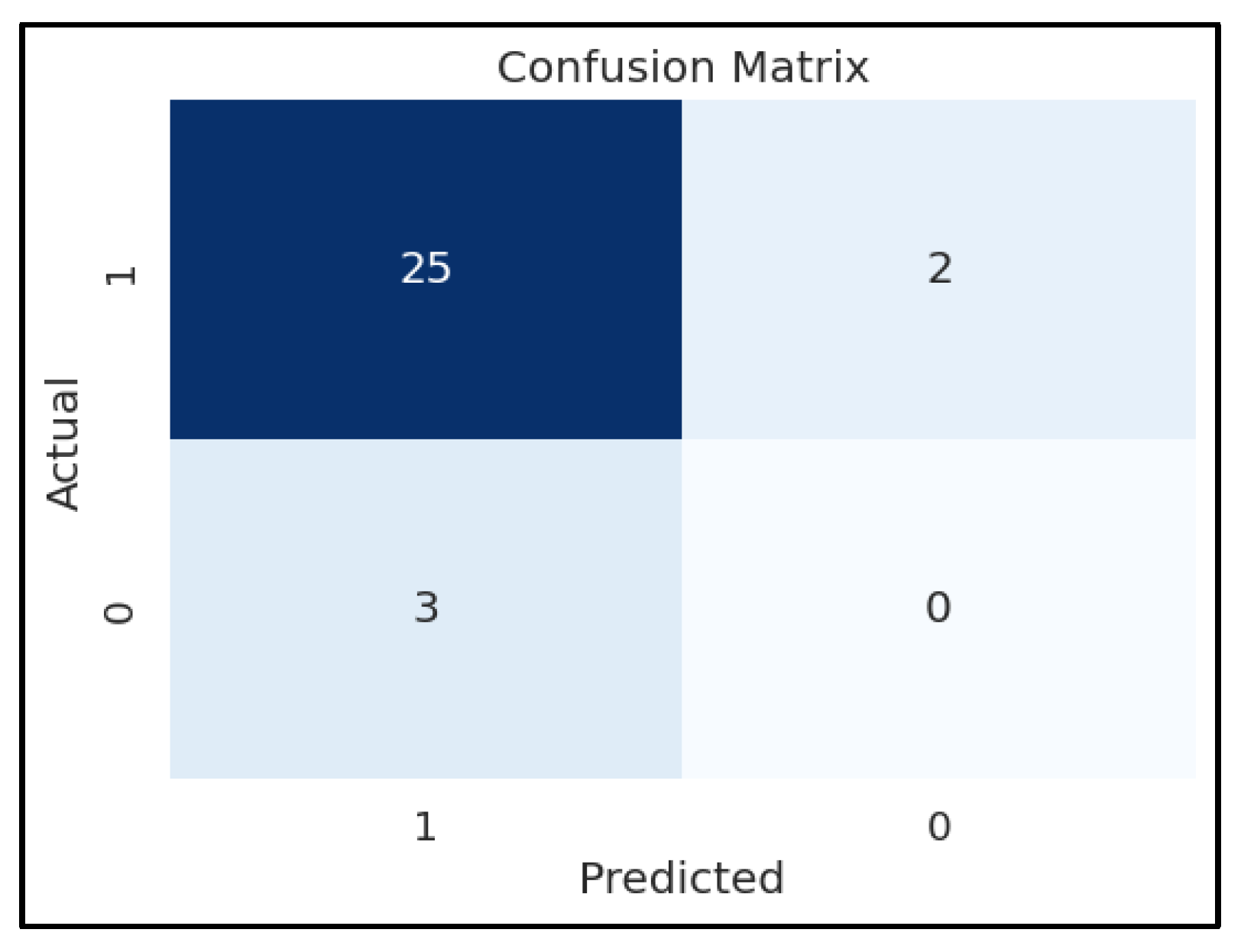

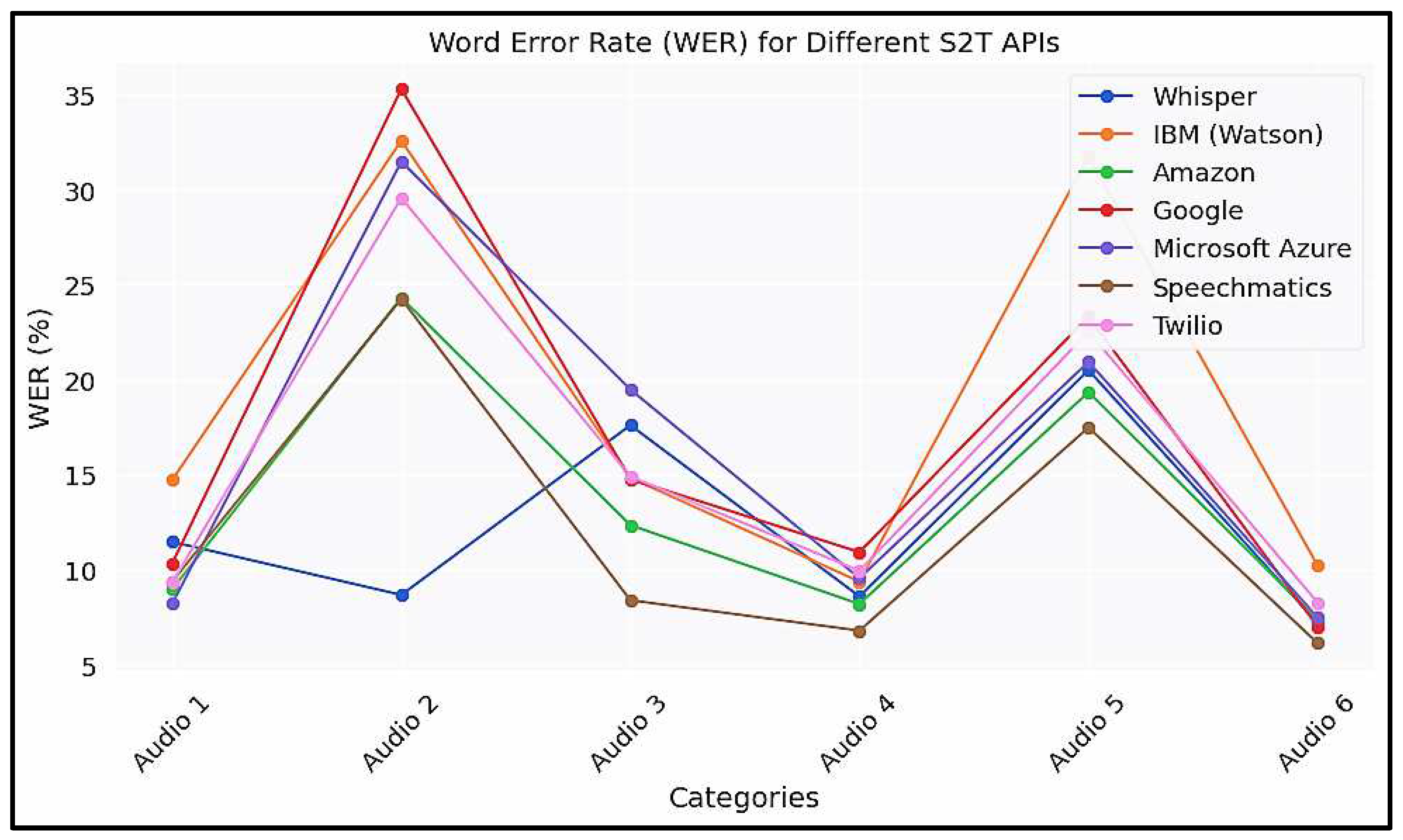

Accuracy

Algorithm for Word Error Rate (WER)

- S is the number of substitutions,

- D is the number of deletions,

- I is the number of insertions, and

- N is the number of words in the reference.
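
With these symbols, WER takes its conventional form, shown here together with a small worked example. The counts in the example are illustrative only and are not taken from the paper's data.

$$
\mathrm{WER} = \frac{S + D + I}{N}
$$

For a six-word reference ($N = 6$) whose transcript has one substituted word and one missing word ($S = 1$, $D = 1$, $I = 0$):

$$
\mathrm{WER} = \frac{1 + 1 + 0}{6} \approx 0.333 = 33.3\%
$$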

| Algorithm 1: Calculation of WER with Levenshtein distance function | |
|---|---|
| 1 | function WER(Reference r, Hypothesis h) |
| 2 | int D[\|r\| + 1][\|h\| + 1] // Initialization |
| 3 | for (i = 0; i <= \|r\|; i++) do |
| 4 | for (j = 0; j <= \|h\|; j++) do |
| 5 | if i == 0 then |
| 6 | D[0][j] ← j |
| 7 | else if j == 0 then |
| 8 | D[i][0] ← i |
| 9 | end if |
| 10 | end for |
| 11 | end for |
| 12 | for (i = 1; i <= \|r\|; i++) do // Calculation part |
| 13 | for (j = 1; j <= \|h\|; j++) do |
| 14 | if r[i - 1] == h[j - 1] then |
| 15 | D[i][j] ← D[i - 1][j - 1] |
| 16 | else |
| 17 | sub ← D[i - 1][j - 1] + 1 |
| 18 | ins ← D[i][j - 1] + 1 |
| 19 | del ← D[i - 1][j] + 1 |
| 20 | D[i][j] ← min(sub, ins, del) |
| 21 | end if |
| 22 | end for |
| 23 | end for |
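
Algorithm 1 translates almost line for line into Python, as in the sketch below. Two details are assumptions rather than part of the listing: the inputs are split into words on whitespace, and the final edit distance `D[|r|][|h|]` is divided by the reference length to give the rate, since the listing ends before any return step. The function name `wer` is chosen here for illustration.

```python
def wer(reference, hypothesis):
    """Word error rate of hypothesis against reference via Levenshtein distance."""
    r = reference.split()
    h = hypothesis.split()
    if not r:
        raise ValueError("reference must contain at least one word")

    # Initialization: d[i][0] = i and d[0][j] = j (cost of pure deletions/insertions).
    d = [[0] * (len(h) + 1) for _ in range(len(r) + 1)]
    for i in range(len(r) + 1):
        d[i][0] = i
    for j in range(len(h) + 1):
        d[0][j] = j

    # Calculation part: fill the table row by row.
    for i in range(1, len(r) + 1):
        for j in range(1, len(h) + 1):
            if r[i - 1] == h[j - 1]:
                d[i][j] = d[i - 1][j - 1]        # words match, no extra cost
            else:
                sub = d[i - 1][j - 1] + 1        # substitution
                ins = d[i][j - 1] + 1            # insertion
                dele = d[i - 1][j] + 1           # deletion
                d[i][j] = min(sub, ins, dele)

    # Assumed final step: total edits divided by the number of reference words.
    return d[len(r)][len(h)] / len(r)
```

Calling `wer("the cat sat on the mat", "the cat sit on mat")` counts one substitution and one deletion against six reference words, giving 2/6. Note that the result can exceed 1 when the hypothesis contains many insertions.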

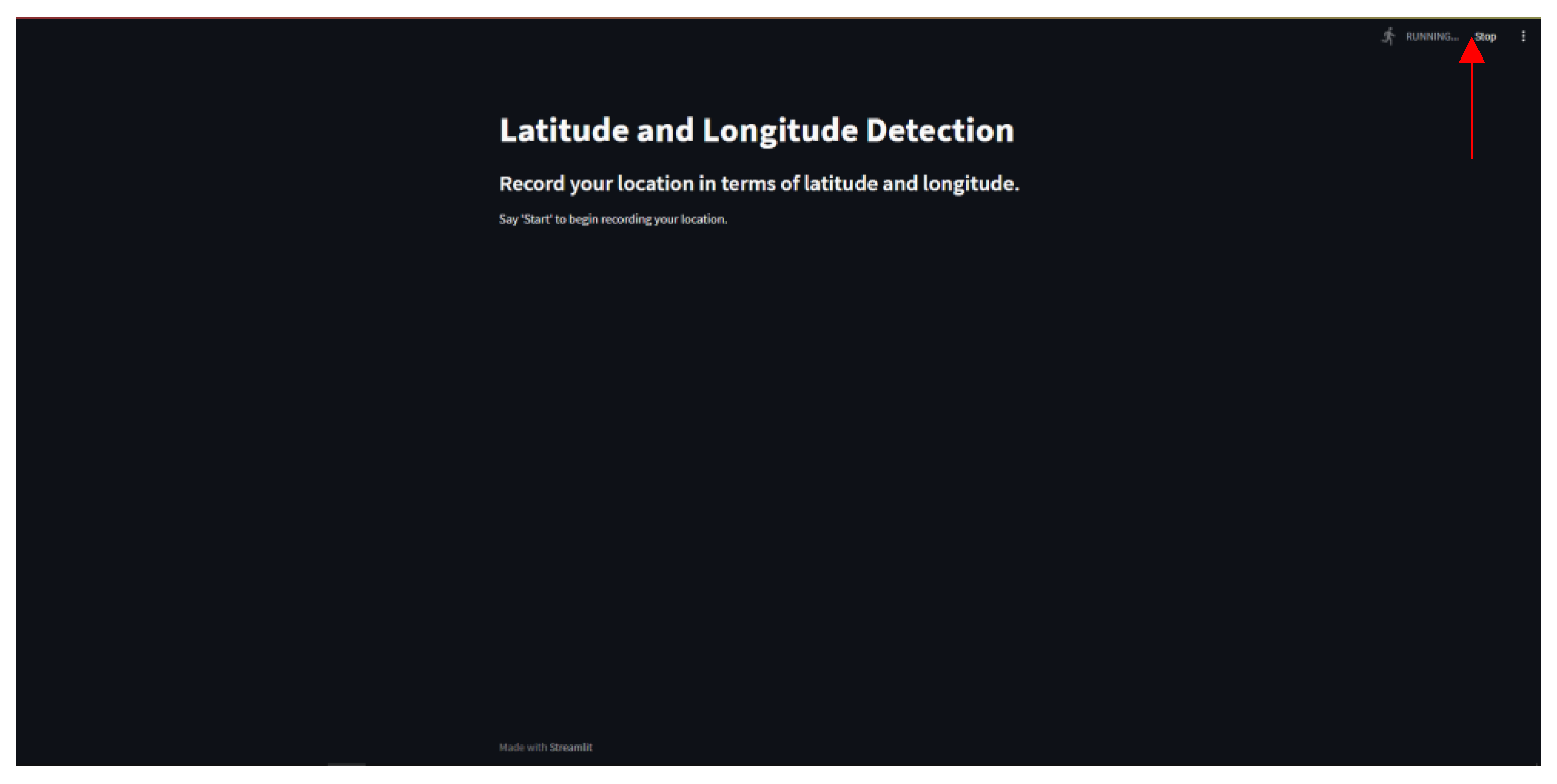

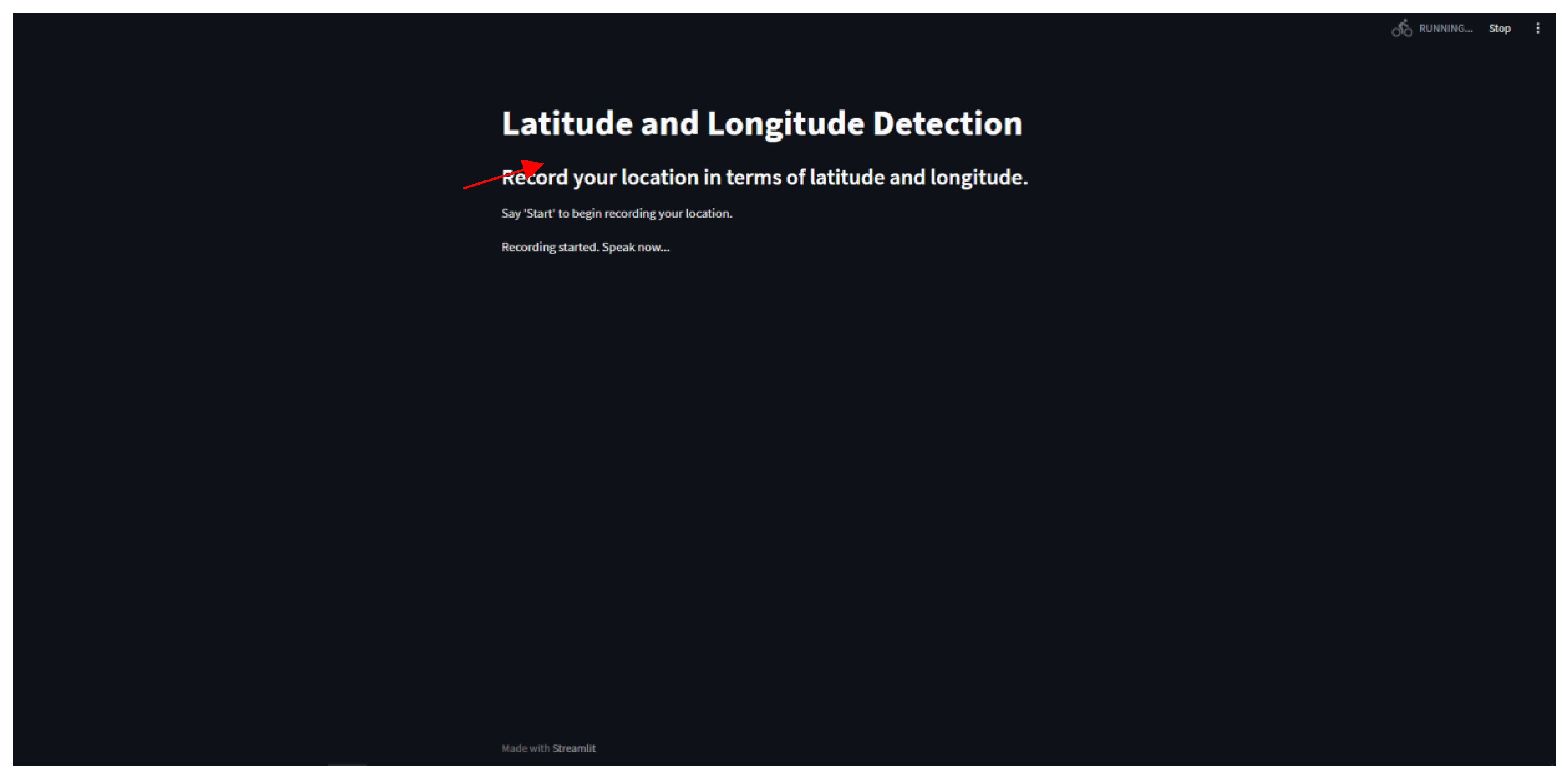

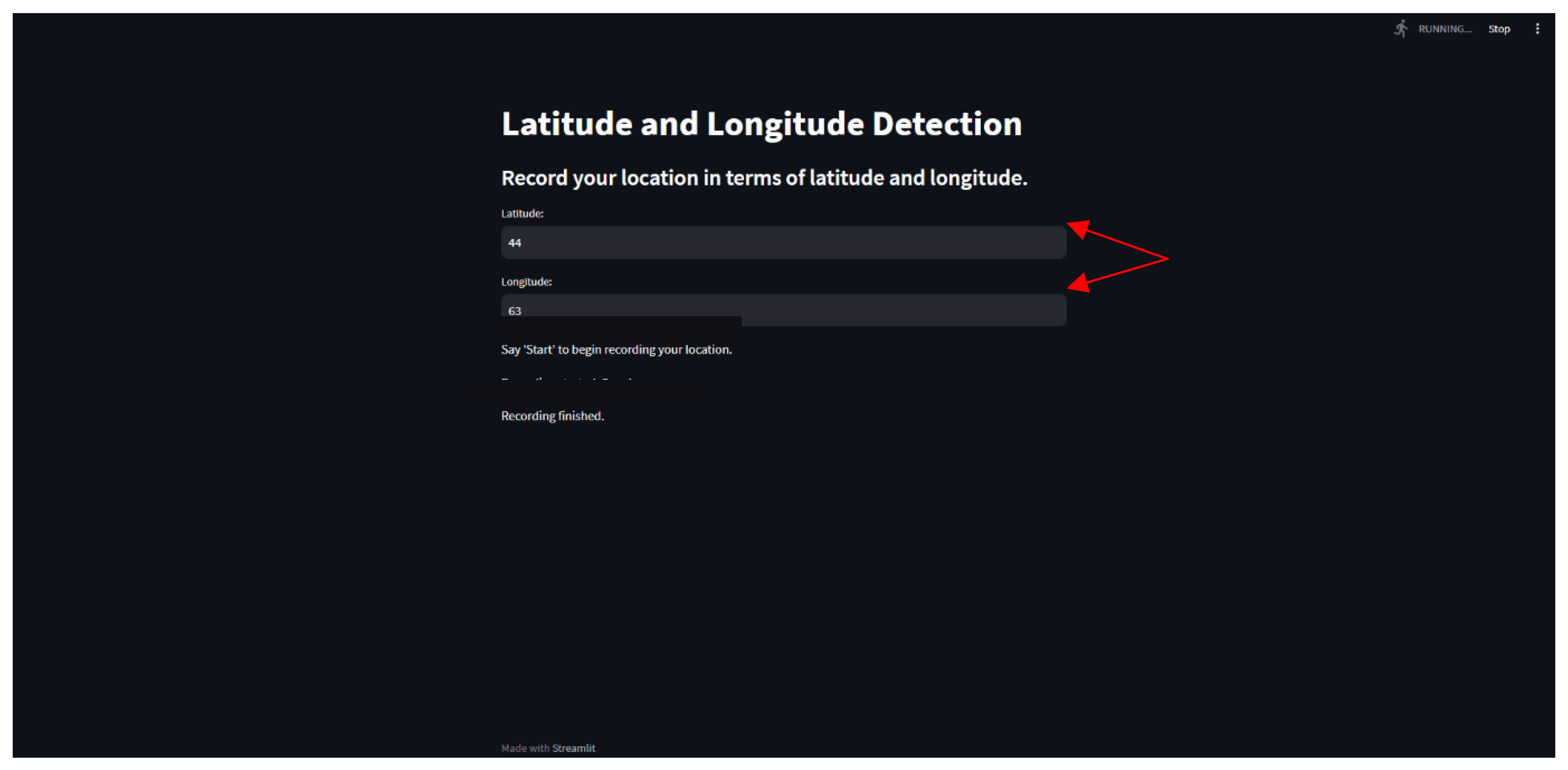

Interface Illustration

Conclusion

Key Code Snippets

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).