Submitted:

06 September 2023

Posted:

07 September 2023


Abstract

Keywords:

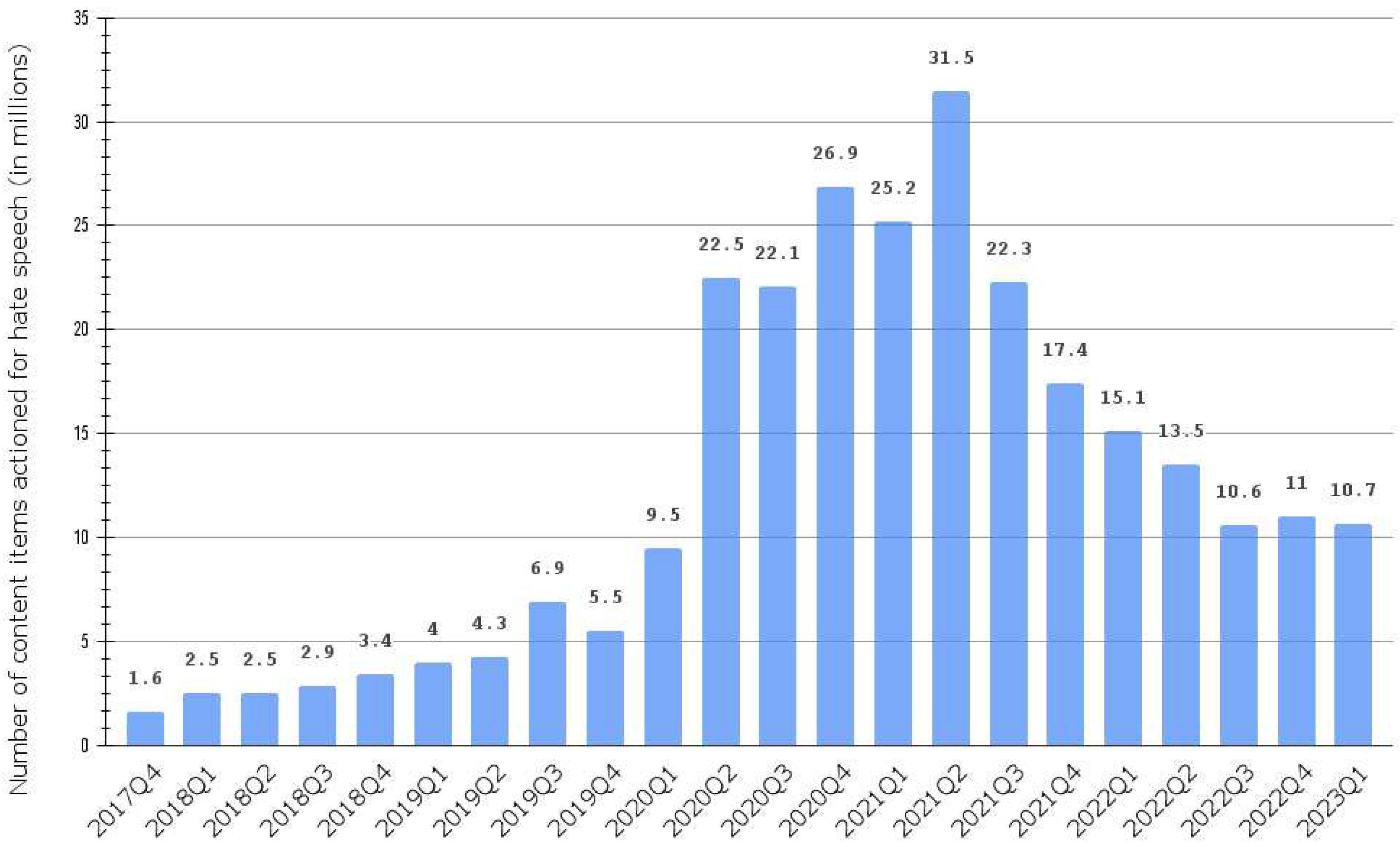

0. Introduction

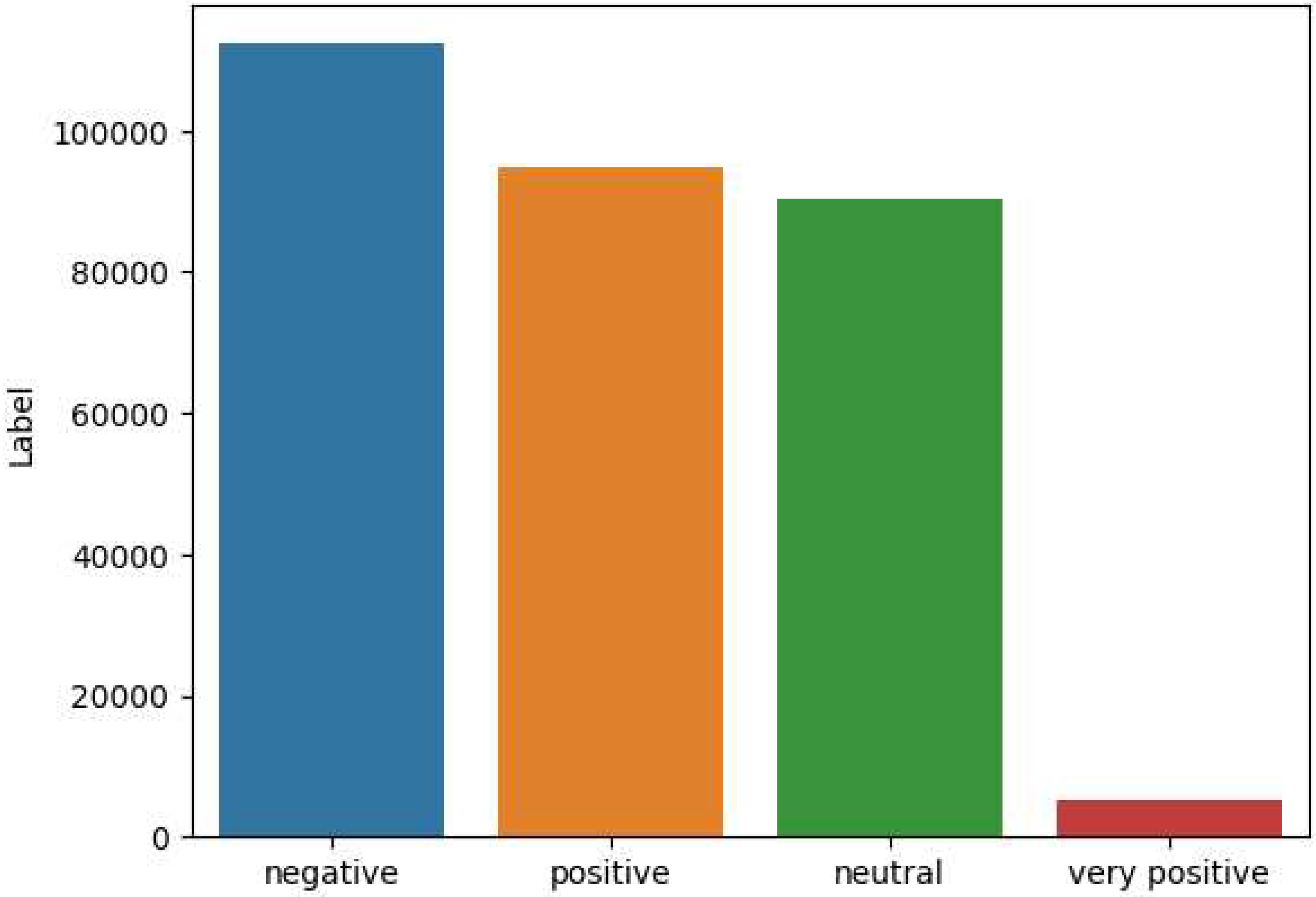

- Constructing a public Arabic-Jordanian dataset of 403,688 annotated tweets, labeled according to the presence of hate speech as very positive, positive, neutral, or negative.

- Comparing the performance of machine learning models for hate speech detection in Arabic Jordanian dialect tweets.

1. Literature Review

1.1. Hate Speech and Related Concepts

1.2. Arabic Hate Speech corpora and detection Systems

| Ref# | Dialect | Source | Dataset Size | Labeling Process | Classes | Best Classifier | Results |
|---|---|---|---|---|---|---|---|
| [5], 2020 | Mixed | Facebook, Twitter, Instagram, YouTube | 20,000 | Manual | Hate, Not hate | RNN | Acc: 98.7%, F1-score: 98.7%, Recall: 98.7%, Precision: 98.7% |
| [42], 2020 | Saudi | | 9,316 | Manual | Hateful, abusive, or normal | CNN | Acc: 83%, F1-score: 79%, Recall: 78%, Precision: 81%, AUC: 79% |
| [41], 2021 (AraCOVID19-MFH) | Mixed | | 10,828 | Manual | Yes, No, Cannot decide | arabert Cov19 | F1-score: 98.58% |
| [43], 2021 (ArHS) | Levantine | | 9,833 | Manual | Hate or Normal; Hate, Abusive or Normal | Binary class: CNN, Ternary class: BiLSTM-CNN and CNN, Multi-class: CNN-LSTM and BiLSTM-CNN | F1-score: 81%, F1-score: 74%, F1-score: 56% |
| [34], 2020 | Mixed | | 3,696 | | Hate, Neutral, or normal | LSTM + CNN, with word embedding Aravec (N-grams and skip grams) | F1-score: 71.68% |
| [44], 2022 (arHateDataset) | Mixed | Various | 4,203 | | Racism, against religion, gender inequality, violence, offense, bullying, normal positive and normal negative | RNN architectures: DRNN-1: binary classification, DRNN-2: multi-labelled classification | Validation accuracy: 83.22%, 90.30% |
| [35], 2023 | Mixed | Twitter (public datasets) | 34,107 | Unifying annotation in datasets | Hate, no hate | AraBERT | Accuracy: 93% |
| [38], 2021 | Standard and Gulf | | Training: 9,345, Unlabelled: 5M, Testing: 4,002 | Semi-supervised learning | Clean or offensive | CNN + Skip gram | F1-score: 88.59%, Recall: 89.60%, Precision: 87.69% |
| [32], 2020 | Mixed | | 3,232 | Manual | Hate or Not hate | CNN-FastText | Acc: 71%, F1-score: 52%, Recall: 69%, Precision: 42% |
| [36], 2020 | Saudi | | 9,316 | | Hate or Not hate | CNN | Acc: 83%, F1-score: 79%, Recall: 78%, Precision: 81% |
| [39], 2022 | Tunisian | | 10,000 | Manual | Hateful or Normal | AraBERT | F1-score: 99% |
| [30], 2020 | Gulf | | 5,361 | Manual | 2-classes: Clean or Offensive/Hate, 3-classes: Clean, Offensive or Hate, 6-classes: Clean, Offensive, Religious Hate, Gender Hate, Nationality Hate or Ethnicity Hate | CNN + mBERT | 2-classes: 87.03%, 3-classes: 78.99%, 6-classes: 75.51% |
| [40], 2022 | Mixed | | 3,000 | Manual | Extremist or Non-extremist | SVM | Acc: 92%, F1-score: 92%, Recall: 95%, Precision: 89% |

1.3. Hate Speech Datasets for other Languages

| Ref# | Language | Source | Dataset Size | Labeling Process | Classes | Best Classifier | Results |
|---|---|---|---|---|---|---|---|
| [8], 2023 | Turkish | | 5,000 | Manual | Cyberbullying or Non-Cyberbullying (balanced) | BERT | F1-score: 92.8% |
| [33], 2021 | Bengali | YouTube and Facebook comments | 30,000 | Manual | Hate or Not hate | SVM | Acc: 87.5% |
| [46], 2022 | Turkish | | Istanbul Convention Dataset (1,033), Refugee Dataset (1,278) | Manual | 5-classes: No Hate speech, Insult, Exclusion, Wishing Harm or Threatening Harm | BERTurk | F1-score: Istanbul Convention Dataset (71.52%), Refugee Dataset (72.34%) |
| [37], 2022 | Kurdish | Facebook comments | 6,882 | Manual | Hate or Not hate | SVM | F1-score: 68.7% |
| [47], 2022 (ETHOS) | English | YouTube and Reddit comments | Binary (998), Multi-label (433) | Auto and Manual | 8-classes: violence, directed vs generalized, gender, race, national origin, disability, sexual orientation, or religion | Binary: DistilBERT, Multi-label: NNBR (BiLSTM + Attention Layer + FF layer) | F1-score: Binary: 79.92%, Multi-label: 75.05% |

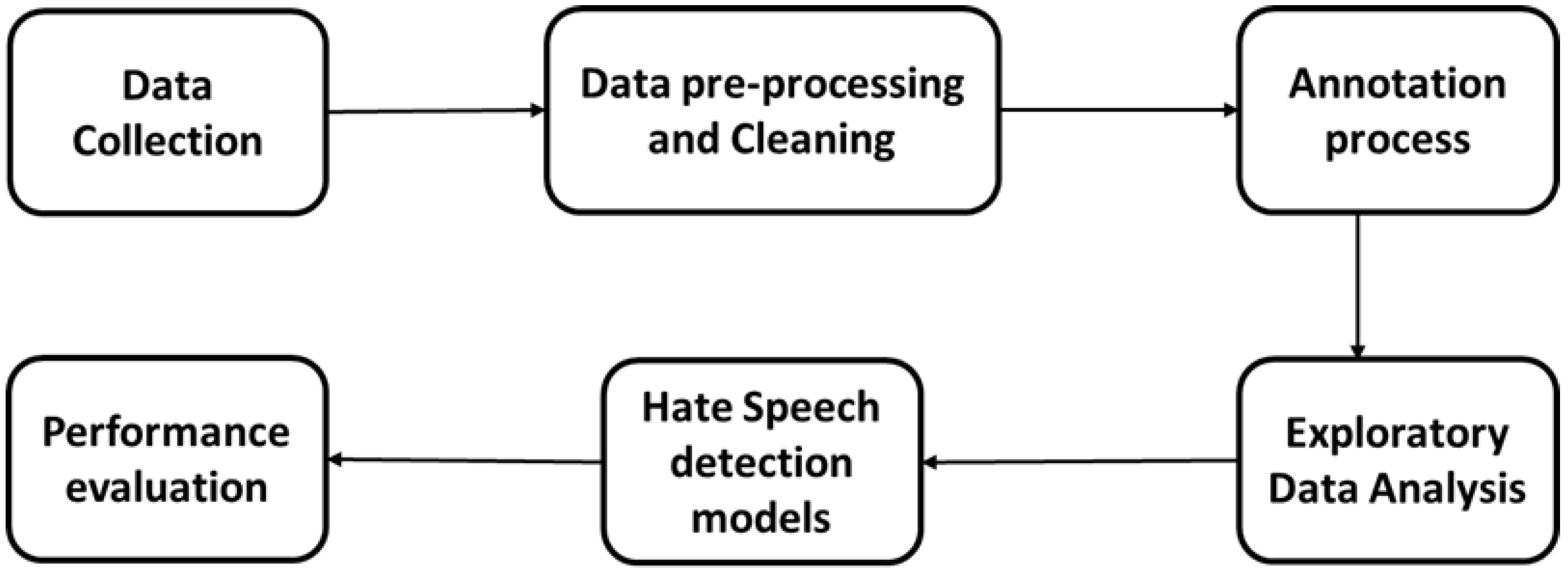

2. Methodology

2.1. Data Collection

- Language Filter: The search parameters were further refined by specifying the Arabic language, ensuring that only tweets written in Arabic were retrieved.

- Location Filter: After scraping a random sample of tweets, it was found that most tweets lacked the location field, which is supposed to be populated from users’ profiles. To overcome this issue, Twitter’s advanced search techniques were used to apply location-based filters. The searches focused on Jordan’s main cities and regions, covering the 12 governorates of Jordan and comprising 20 cities and regions, as listed in Table 3.

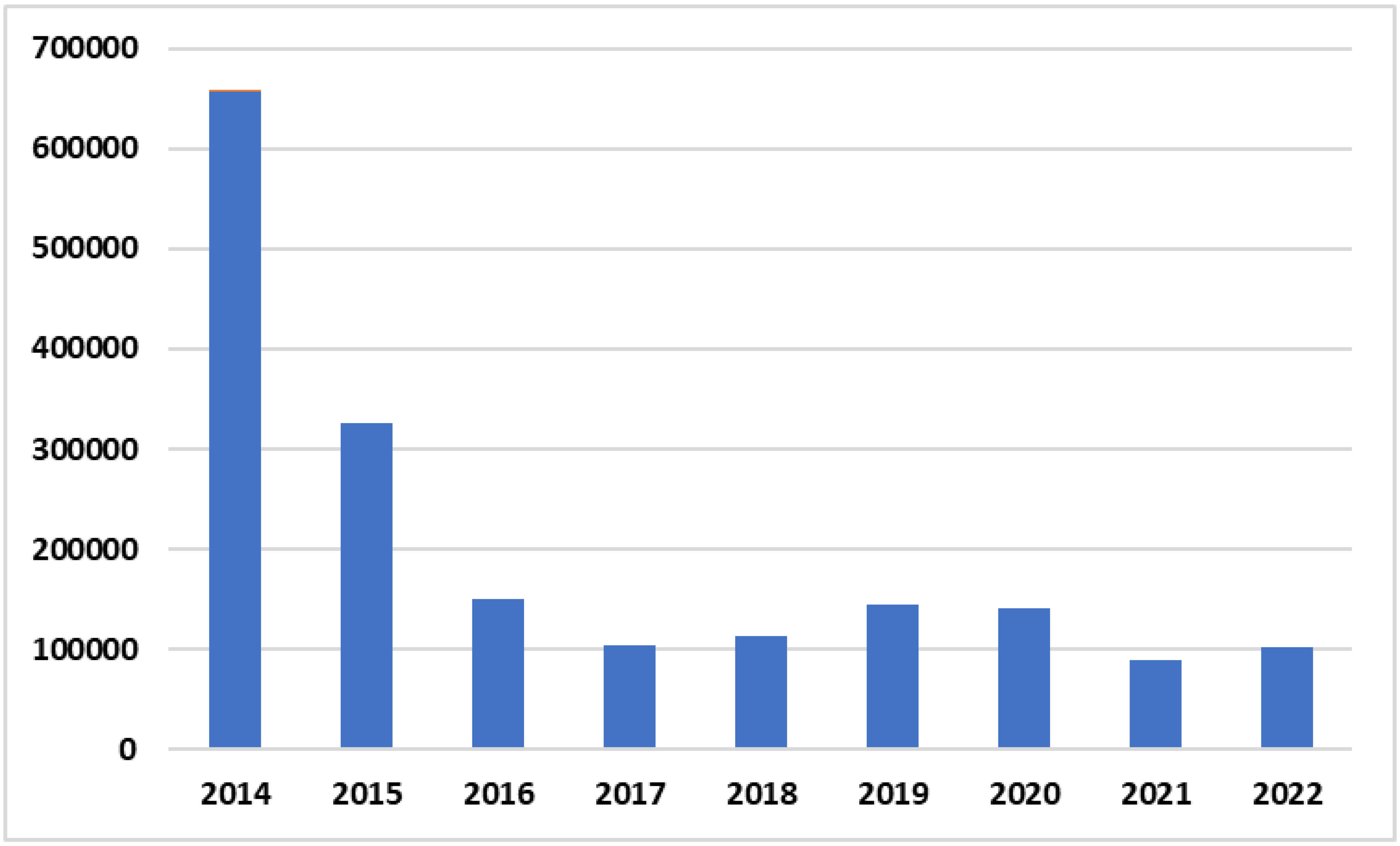

- Systematic temporal approach: Data collection covered the period from the beginning of 2014 to the end of 2022. A monthly segmentation strategy was adopted: tweets for each year were extracted separately, month by month. This approach kept the scraping process stable and allowed a large number of tweets, spread over a long period, to be accumulated systematically. The monthly and yearly groups were then combined into one dataset. The initial dataset contained 2,034,005 tweets in the Jordanian Arabic dialect.

| | | | | |
|---|---|---|---|---|
| Abu Alanda | Ajloun | Al Karak | Al Mafraq | Al Salt |
| Amman | Aqaba | Baqaa | Irbid | Jarash |
| Karak | Maan | Madaba | Mafraq | Mutah |
| Ramtha | Salt | Tafilah | Zarqa | Marka |
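The combination of language, location, and monthly filters described above can be sketched as a small query generator. This is an illustration only: the authors' actual scraping tool and query strings are not given in the text. The operator names (`near:`, `lang:`, `since:`, `until:`) are assumed from Twitter's publicly documented advanced search syntax, and `LOCATIONS` holds only a few of the place names from the list above.

```python
from datetime import date

# Illustrative subset of the place names listed in the location table.
LOCATIONS = ["Amman", "Irbid", "Zarqa", "Aqaba"]


def month_windows(first_year, last_year):
    """Yield (start, end) dates for every calendar month; end is exclusive."""
    for year in range(first_year, last_year + 1):
        for month in range(1, 13):
            start = date(year, month, 1)
            # The window ends on the first day of the following month.
            end = date(year + 1, 1, 1) if month == 12 else date(year, month + 1, 1)
            yield start, end


def build_queries(locations, first_year=2014, last_year=2022):
    """Build one search string per (month, location) pair.

    The operator syntax is an assumption, not the authors' documented query.
    """
    queries = []
    for start, end in month_windows(first_year, last_year):
        for place in locations:
            queries.append(
                f'near:"{place}" lang:ar '
                f"since:{start.isoformat()} until:{end.isoformat()}"
            )
    return queries


if __name__ == "__main__":
    queries = build_queries(LOCATIONS)
    print(len(queries), "queries; first:", queries[0])
```

Running each monthly window as a separate job keeps individual result sets small, which matches the stated goal of keeping the scraping process stable before the monthly groups are merged.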

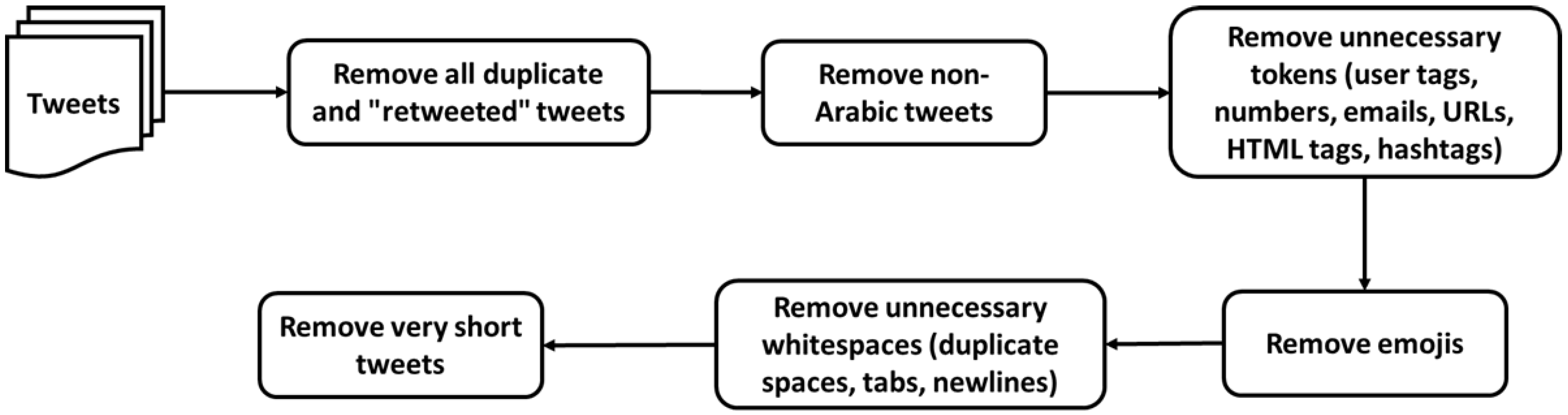

2.2. Data pre-processing and Cleaning

2.3. Data Annotation

2.3.1. Annotation process stages

- Stage one - Lexicon-based annotation

| | | | | | |
|---|---|---|---|---|---|
| اباد | طرد | نجس | جاهل | ابليس | حوثي |
| بقر | كلب | حرق | دواعش | حريم | خنزير |

- Stage two - Manual annotation stage

-

Task one - Annotation GuidelinesTo enhance the reliability of annotations, a comprehensive annotation guideline was established. This guideline outlined specific criteria and linguistic indicators for each hate speech class, guiding annotators toward consistent and accurate labeling decisions.

-

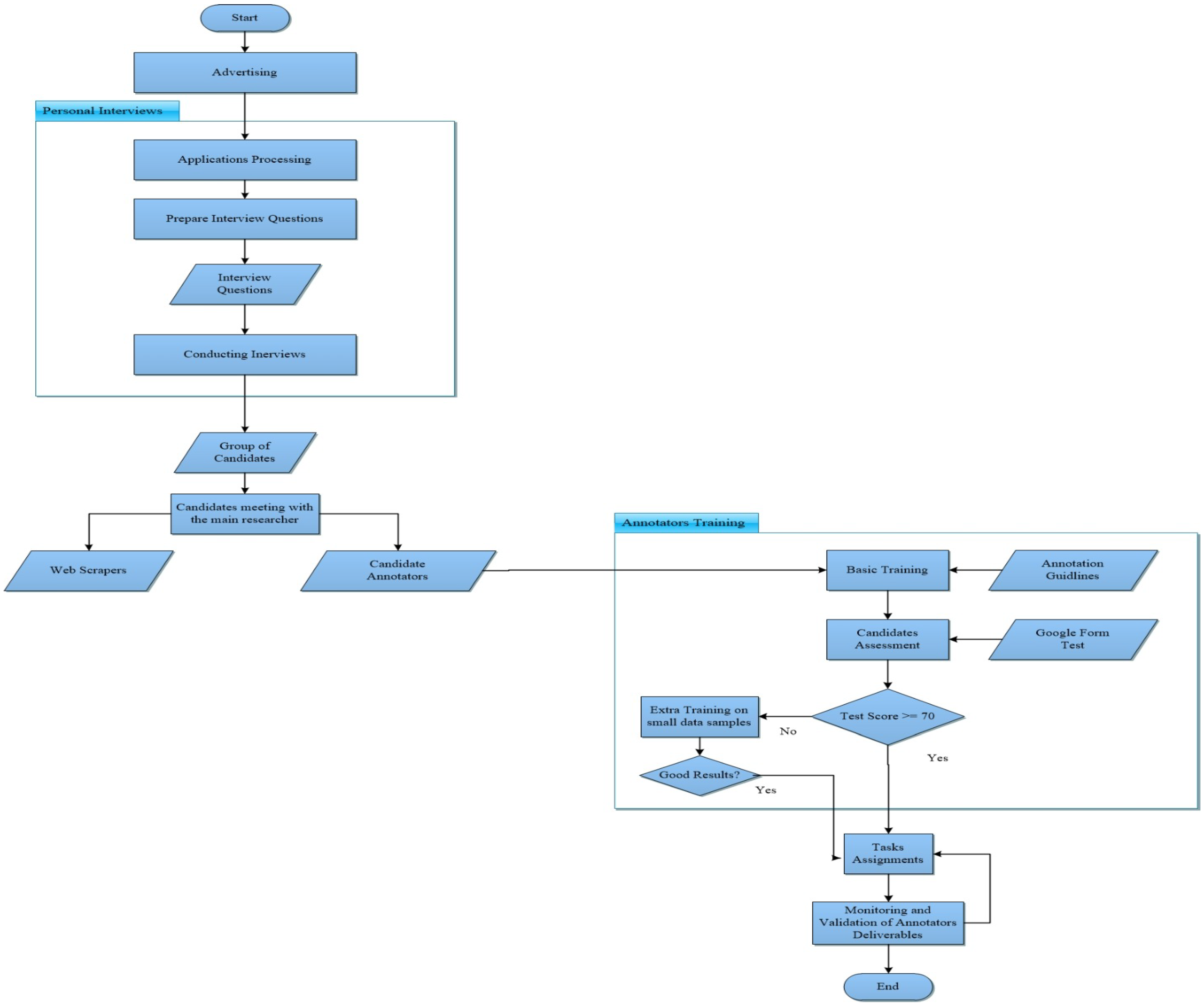

Task two - Hiring annotators teamThe sub-dataset was manually annotated for hate speech by a team of annotators. Figure 4, illustrates the steps conducted to perform this process.Table 5. Tweets Samples.

Label Meaning Tweet Tweet Meaning Label Tweets that have a clear indicator that the opinion is positive يا نساء العالم كل التحية والاحترام بهذا اليوم ولاكن نكن كل الاحترام والتقدير إلى تاج نساء العالم نساء العربي This tweet contains respect and appreciation for women all over the world and Jordanian women in specific negative Tweets that are not offensive or hateful. بضل الواحد يخطط شهر انه كيف بده يدرس المادة This tweet is written by a student talking about his study plans neutral Tweets that are offensive but do not contain hateful content. اطلع يا كلب يا ابن الكلب يا حاميه يا قتله هاي This tweet contains bad words (swearing) positive Tweets that contain hateful content directed at a specific group of people. غريم الاردنيين الداعشي الكلب أبو بلال التونسي This tweet contains bad words (swearing) that target specific names for known terrorists, and violent words such as murder very positive Figure 4. Annotation Team Selection. The process started with an advertisement that has been published on LinkedIn. The purpose was to find qualified personnel, mainly students who could participate in the scraping and annotation part of the project. Figure 5 displays a screenshot of this advertisement.Figure 5. Announcement for building Annotators team.

The process started with an advertisement that has been published on LinkedIn. The purpose was to find qualified personnel, mainly students who could participate in the scraping and annotation part of the project. Figure 5 displays a screenshot of this advertisement.Figure 5. Announcement for building Annotators team. The filled applications have been reviewed and thirty applicants have been interviewed. Twenty of them were selected after passing these interviews. A meeting has been conducted by the main researcher with the interviewed annotators, to clarify the project requirements and the expectations from their side. Part of the candidates have been directed to work on social media scraping, while the others took a quick training to understand the annotation task required. Before starting the annotation task, the candidates took a test that assessed their understanding of the annotation guidelines presented to them during the training and the annotation process. A link to the test is provided in [55]. Candidates who passed the test, with a score of 70) have proceeded with the annotation process. As a start, to validate the annotation guidelines, the annotators, who were native Jordanian Arabic speakers, participated in the following phases:

The filled applications have been reviewed and thirty applicants have been interviewed. Twenty of them were selected after passing these interviews. A meeting has been conducted by the main researcher with the interviewed annotators, to clarify the project requirements and the expectations from their side. Part of the candidates have been directed to work on social media scraping, while the others took a quick training to understand the annotation task required. Before starting the annotation task, the candidates took a test that assessed their understanding of the annotation guidelines presented to them during the training and the annotation process. A link to the test is provided in [55]. Candidates who passed the test, with a score of 70) have proceeded with the annotation process. As a start, to validate the annotation guidelines, the annotators, who were native Jordanian Arabic speakers, participated in the following phases:- The annotators were given a training set of 100 tweets annotated by a human expert.

- The annotators then independently applied the guidelines to another test set of 100 tweets.

- The annotators’ annotations were compared with the annotations of the experts. Differences were addressed through discussion. The guidelines have also been modified as necessary.

In addition to the above, the annotators were monitored closely during the annotation process to ensure the quality of the sub-dataset. The inter-annotator agreement is computed to confirm the quality.
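As an illustration of how such an agreement check could be computed, the sketch below implements Cohen's kappa for two annotators and maps the value to the agreement bands of Landis and Koch. The text above does not state which agreement statistic was used, so the choice of Cohen's kappa is an assumption, and the label sequences in the example are invented for demonstration.

```python
from collections import Counter


def cohen_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labeled the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both annotators must label the same non-empty item set")
    n = len(labels_a)
    # Observed agreement: share of items given the same label by both.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Expected chance agreement from each annotator's label frequencies.
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used a single identical label throughout
    return (observed - expected) / (1.0 - expected)


def landis_koch_band(kappa):
    """Verbal interpretation of kappa following Landis and Koch (1977)."""
    if kappa < 0:
        return "poor"
    for upper, name in ((0.20, "slight"), (0.40, "fair"),
                        (0.60, "moderate"), (0.80, "substantial")):
        if kappa <= upper:
            return name
    return "almost perfect"


if __name__ == "__main__":
    # Invented example labels using the four classes of this dataset.
    annotator = ["negative", "neutral", "positive", "very positive", "neutral", "negative"]
    expert = ["negative", "neutral", "positive", "positive", "neutral", "neutral"]
    kappa = cohen_kappa(annotator, expert)
    print(f"kappa = {kappa:.3f} ({landis_koch_band(kappa)})")
```

The same function could be applied to the validation phase, comparing each annotator's labels on the 100-tweet test set against the expert's labels. With more than two annotators per tweet, a multi-rater statistic such as Fleiss' kappa would be the usual alternative.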

2.3.2. Inter-annotator agreement

2.4. Exploratory Data Analysis

2.5. Feature Engineering and Model Construction

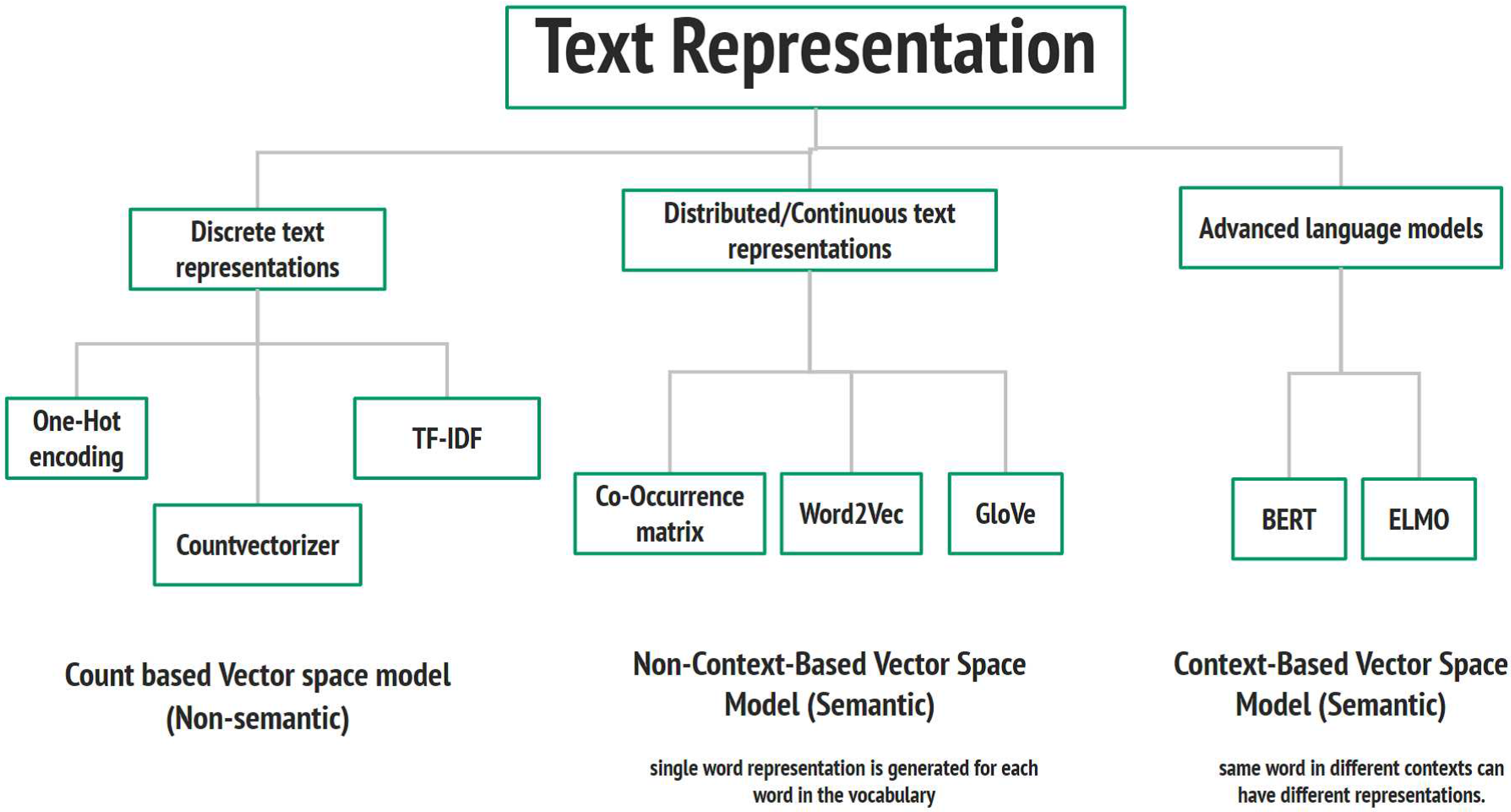

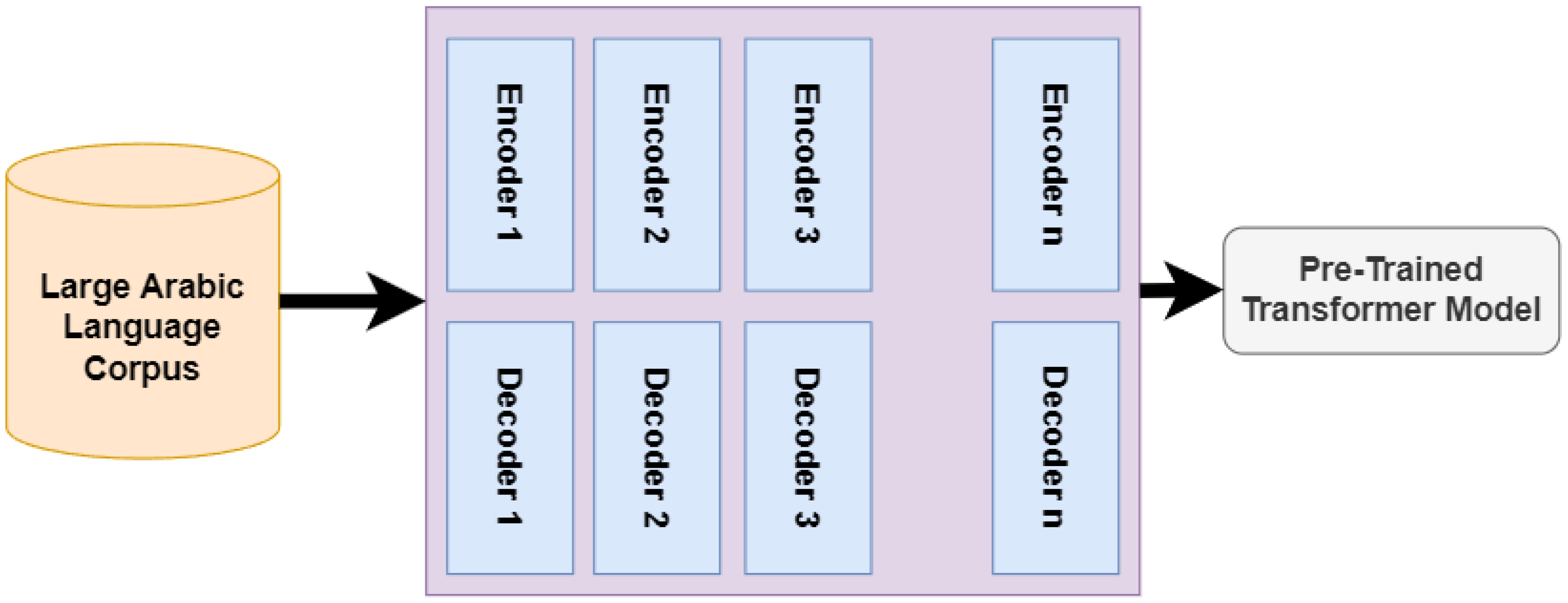

2.5.1. Text Representation

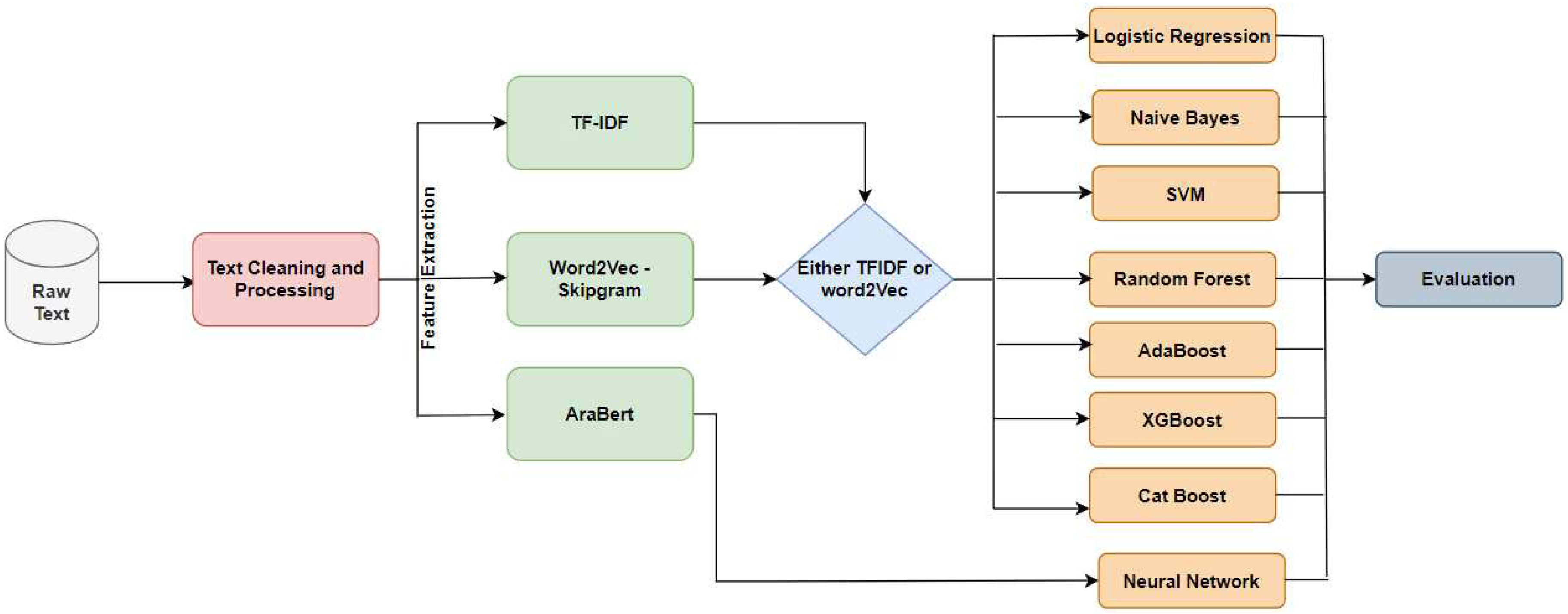

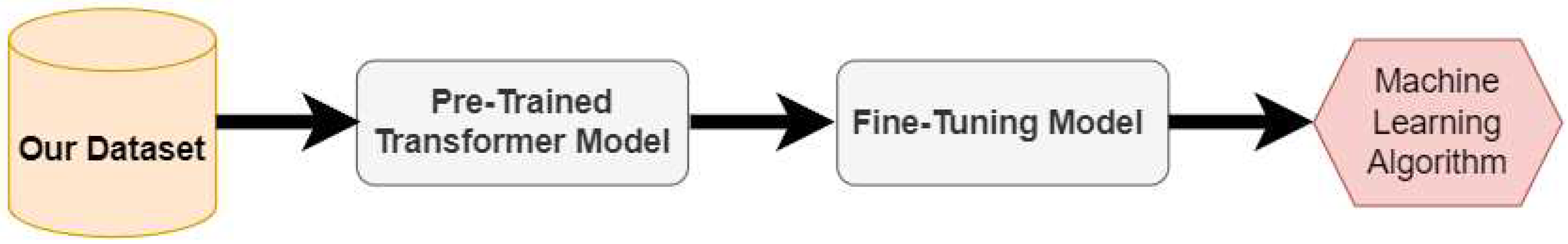

2.5.2. Research Methodology

2.5.3. Evaluation Measures

3. Experiments and Results

3.1. Experimental setup and Hyperparameter tuning

| Model | Recall (W2V) | Recall (TF-IDF) | Precision (W2V) | Precision (TF-IDF) | F1 (W2V) | F1 (TF-IDF) |
|---|---|---|---|---|---|---|
| LR | 0.60 | 0.64 | 0.55 | 0.45 | 0.57 | 0.53 |
| NB | 0.39 | 0.30 | 0.58 | 0.53 | 0.47 | 0.38 |
| RF | 0.59 | 0.53 | 0.56 | 0.50 | 0.57 | 0.51 |
| SVM | 0.61 | 0.65 | 0.54 | 0.45 | 0.57 | 0.53 |
| AdaBoost | 0.56 | 0.59 | 0.54 | 0.46 | 0.55 | 0.52 |
| XGBoost | 0.58 | 0.54 | 0.57 | 0.50 | 0.58 | 0.52 |
| CatBoost | 0.59 | 0.55 | 0.57 | 0.51 | 0.58 | 0.53 |
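The F1 values in the table above can be sanity-checked against the recall and precision columns, since F1 is the harmonic mean of the two: F1 = 2PR / (P + R). The sketch below recomputes F1 for the W2V columns, reading each row as recall (W2V), recall (TF-IDF), precision (W2V), precision (TF-IDF), F1 (W2V), F1 (TF-IDF). The tolerance of 0.01 is an assumption that allows for the two-decimal rounding of the published values.

```python
def f1_score(recall, precision):
    """Harmonic mean of precision and recall; 0.0 when both are zero."""
    if recall + precision == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# (recall, precision, reported F1) copied from the W2V columns of the table.
REPORTED_W2V = {
    "LR": (0.60, 0.55, 0.57),
    "NB": (0.39, 0.58, 0.47),
    "RF": (0.59, 0.56, 0.57),
    "SVM": (0.61, 0.54, 0.57),
    "AdaBoost": (0.56, 0.54, 0.55),
    "XGBoost": (0.58, 0.57, 0.58),
    "CatBoost": (0.59, 0.57, 0.58),
}


def inconsistent_rows(rows, tolerance=0.01):
    """Return models whose reported F1 differs from the recomputed value."""
    return [
        name
        for name, (recall, precision, reported) in rows.items()
        if abs(f1_score(recall, precision) - reported) > tolerance
    ]


if __name__ == "__main__":
    for name, (recall, precision, reported) in REPORTED_W2V.items():
        print(f"{name}: recomputed {f1_score(recall, precision):.3f}, reported {reported:.2f}")
    print("rows outside tolerance:", inconsistent_rows(REPORTED_W2V))
```

This identity holds exactly only for a single class. For scores averaged over several classes, the averaged F1 need not equal the harmonic mean of the averaged precision and recall, so the check applies to per-class tables only.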

3.2. Results

| Model | Recall (W2V) | Recall (TF-IDF) | Precision (W2V) | Precision (TF-IDF) | F1 (W2V) | F1 (TF-IDF) |
|---|---|---|---|---|---|---|
| LR | 0.35 | 0.21 | 0.42 | 0.37 | 0.38 | 0.27 |
| NB | 0.47 | 0.27 | 0.37 | 0.36 | 0.41 | 0.30 |
| RF | 0.39 | 0.36 | 0.42 | 0.37 | 0.40 | 0.36 |
| SVM | 0.34 | 0.21 | 0.43 | 0.37 | 0.38 | 0.26 |
| AdaBoost | 0.31 | 0.23 | 0.40 | 0.35 | 0.35 | 0.28 |
| XGBoost | 0.38 | 0.34 | 0.43 | 0.37 | 0.40 | 0.35 |
| CatBoost | 0.38 | 0.34 | 0.44 | 0.38 | 0.41 | 0.36 |

| Model | Recall (W2V) | Recall (TF-IDF) | Precision (W2V) | Precision (TF-IDF) | F1 (W2V) | F1 (TF-IDF) |
|---|---|---|---|---|---|---|
| LR | 0.53 | 0.37 | 0.47 | 0.39 | 0.50 | 0.38 |
| NB | 0.47 | 0.27 | 0.42 | 0.36 | 0.45 | 0.30 |
| RF | 0.52 | 0.36 | 0.48 | 0.37 | 0.50 | 0.36 |
| SVM | 0.52 | 0.36 | 0.48 | 0.38 | 0.50 | 0.37 |
| AdaBoost | 0.52 | 0.41 | 0.44 | 0.38 | 0.48 | 0.40 |
| XGBoost | 0.56 | 0.43 | 0.49 | 0.41 | 0.52 | 0.42 |
| CatBoost | 0.57 | 0.44 | 0.49 | 0.42 | 0.53 | 0.43 |

| Model | Recall (W2V) | Recall (TF-IDF) | Precision (W2V) | Precision (TF-IDF) | F1 (W2V) | F1 (TF-IDF) |
|---|---|---|---|---|---|---|
| LR | 0.00 | 0.00 | 0.00 | 0.00 | 0.00 | 0.00 |
| NB | 0.08 | 0.01 | 0.07 | 0.02 | 0.07 | 0.01 |
| RF | 0.02 | 0.04 | 0.37 | 0.34 | 0.04 | 0.07 |
| SVM | 0.00 | 0.00 | 0.26 | 0.00 | 0.01 | 0.00 |
| AdaBoost | 0.00 | 0.00 | 0.06 | 0.00 | 0.00 | 0.00 |
| XGBoost | 0.03 | 0.02 | 0.44 | 0.52 | 0.06 | 0.04 |
| CatBoost | 0.03 | 0.01 | 0.44 | 0.65 | 0.06 | 0.01 |

| Model | Recall (W2V) | Recall (TF-IDF) | Precision (W2V) | Precision (TF-IDF) | F1 (W2V) | F1 (TF-IDF) | Accuracy (W2V) | Accuracy (TF-IDF) | AUC (W2V) | AUC (TF-IDF) |
|---|---|---|---|---|---|---|---|---|---|---|
| LR | 0.49 | 0.42 | 0.48 | 0.40 | 0.48 | 0.39 | 0.49 | 0.42 | 0.48 | 0.50 |
| NB | 0.43 | 0.38 | 0.46 | 0.41 | 0.44 | 0.37 | 0.43 | 0.38 | 0.48 | 0.47 |
| RF | 0.50 | 0.43 | 0.49 | 0.43 | 0.49 | 0.43 | 0.50 | 0.43 | 0.49 | 0.49 |
| SVM | 0.49 | 0.41 | 0.48 | 0.39 | 0.48 | 0.41 | 0.49 | 0.41 | 0.49 | 0.50 |
| AdaBoost | 0.47 | 0.40 | 0.46 | 0.41 | 0.46 | 0.40 | 0.47 | 0.41 | 0.41 | 0.46 |
| XGBoost | 0.50 | 0.43 | 0.50 | 0.44 | 0.50 | 0.43 | 0.50 | 0.44 | 0.48 | 0.50 |
| CatBoost | 0.51 | 0.44 | 0.51 | 0.44 | 0.50 | 0.44 | 0.51 | 0.44 | 0.47 | 0.50 |

| Metric | Recall | Precision | F1 | Accuracy | AUC |
|---|---|---|---|---|---|
| Value | 0.61 | 0.68 | 0.63 | 0.62 | 0.68 |

4. Conclusion and Future Work

Funding

Data Availability Statement

Conflicts of Interest

References

1. Kapoor, K.K.; Tamilmani, K.; Rana, N.P.; Patil, P.; Dwivedi, Y.K.; Nerur, S. Advances in social media research: Past, present and future. Information Systems Frontiers 2018, 20, 531–558.

2. Ngai, E.W.; Tao, S.S.; Moon, K.K. Social media research: Theories, constructs, and conceptual frameworks. International Journal of Information Management 2015, 35, 33–44.

3. Yalçınkaya, O.D. Instances of Hate Discourse in Turkish and English. Turkish Studies-Language & Literature 2022, 17.

4. Community Standards Enforcement Report. https://transparency.fb.com/reports/community-standards-enforcement/hate-speech/facebook/. Accessed: 2023-09-05.

5. Omar, A.; Mahmoud, T.M.; Abd-El-Hafeez, T. Comparative performance of machine learning and deep learning algorithms for Arabic hate speech detection in osns. In Proceedings of the International Conference on Artificial Intelligence and Computer Vision (AICV2020). Springer, 2020, pp. 247–257.

6. Fortuna, P.; Soler, J.; Wanner, L. Toxic, hateful, offensive or abusive? what are we really classifying? an empirical analysis of hate speech datasets. In Proceedings of the 12th language resources and evaluation conference, 2020, pp. 6786–6794.

7. Gilani, S.R.S.; Cavico, F.J.; Mujtaba, B.G. Harassment at the workplace: A practical review of the laws in the United Kingdom and the United States of America. Public Organization Review 2014, 14, 1–18.

8. Coban, O.; Ozel, S.A.; Inan, A. Detection and cross-domain evaluation of cyberbullying in Facebook activity contents for Turkish. ACM Transactions on Asian and Low-Resource Language Information Processing 2023, 22, 1–32.

9. Husain, F. Arabic offensive language detection using machine learning and ensemble machine learning approaches. arXiv preprint arXiv:2005.08946 2020.

10. Nguyen, T. Merging public health and automated approaches to address online hate speech. AI and Ethics 2023, pp. 1–10.

11. Chakraborty, T.; Masud, S. Nipping in the bud: detection, diffusion and mitigation of hate speech on social media. ACM SIGWEB Newsletter 2022, 2022, 1–9.

12. Zsila, Á.; Reyes, M.E.S. Pros & cons: impacts of social media on mental health. BMC psychology 2023, 11, 201.

13. Siddiqui, S.; Singh, T.; et al. Social media its impact with positive and negative aspects. International journal of computer applications technology and research 2016, 5, 71–75.

14. Akram, W.; Kumar, R. A study on positive and negative effects of social media on society. International journal of computer sciences and engineering 2017, 5, 351–354.

15. Sobaih, A.E.E.; Moustafa, M.A.; Ghandforoush, P.; Khan, M. To use or not to use? Social media in higher education in developing countries. Computers in Human Behavior 2016, 58, 296–305.

16. Ansari, J.A.N.; Khan, N.A. Exploring the role of social media in collaborative learning the new domain of learning. Smart Learning Environments 2020, 7, 1–16.

17. Alghizzawi, M.; Habes, M.; Salloum, S.A.; Ghani, M.; Mhamdi, C.; Shaalan, K. The effect of social media usage on students’ e-learning acceptance in higher education: A case study from the United Arab Emirates. Int. J. Inf. Technol. Lang. Stud 2019, 3, 13–26.

18. Jahan, M.S.; Oussalah, M. A systematic review of Hate Speech automatic detection using Natural Language Processing. Neurocomputing 2023, p. 126232.

19. Husain, F.; Uzuner, O. A survey of offensive language detection for the Arabic language. ACM Transactions on Asian and Low-Resource Language Information Processing (TALLIP) 2021, 20, 1–44.

20. Schmidt, A.; Wiegand, M. A survey on hate speech detection using natural language processing. In Proceedings of the fifth international workshop on natural language processing for social media, 2017, pp. 1–10.

21. Al-Hassan, A.; Al-Dossari, H. Detection of hate speech in social networks: a survey on multilingual corpus. Computer Science & Information Technology (CS & IT) 2019, 9, 83.

22. Albadi, N.; Kurdi, M.; Mishra, S. Are they Our Brothers? Analysis and Detection of Religious Hate Speech in the Arabic Twittersphere. In Proceedings of the 2018 IEEE/ACM International Conference on Advances in Social Networks Analysis and Mining (ASONAM). IEEE, 2018, pp. 69–76.

23. Yi, P.; Zubiaga, A. Session-based cyberbullying detection in social media: A survey. Online Social Networks and Media 2023, 36, 100250.

24. Aldjanabi, W.; Dahou, A.; Al-qaness, M.A.; Elaziz, M.A.; Helmi, A.M.; Damaševičius, R. Arabic offensive and hate speech detection using a cross-corpora multi-task learning model. In Proceedings of the Informatics. MDPI, 2021, Vol. 8, p. 69.

25. Mozafari, M.; Farahbakhsh, R.; Crespi, N. A BERT-based transfer learning approach for hate speech detection in online social media. In Proceedings of the Complex Networks and Their Applications VIII: Volume 1 Proceedings of the Eighth International Conference on Complex Networks and Their Applications COMPLEX NETWORKS 2019 8. Springer, 2020, pp. 928–940.

26. Awal, M.R.; Cao, R.; Lee, R.K.W.; Mitrović, S. Angrybert: Joint learning target and emotion for hate speech detection. In Proceedings of the Pacific-Asia conference on knowledge discovery and data mining. Springer, 2021, pp. 701–713.

27. Haddad, B.; Orabe, Z.; Al-Abood, A.; Ghneim, N. Arabic offensive language detection with attention-based deep neural networks. In Proceedings of the 4th workshop on open-source Arabic corpora and processing tools, with a shared task on offensive language detection, 2020, pp. 76–81.

28. Abuzayed, A.; Elsayed, T. Quick and simple approach for detecting hate speech in Arabic tweets. In Proceedings of the 4th workshop on open-source Arabic Corpora and processing tools, with a shared task on offensive language detection, 2020, pp. 109–114.

29. Hassan, S.; Mubarak, H.; Abdelali, A.; Darwish, K. Asad: Arabic social media analytics and understanding. In Proceedings of the 16th Conference of the European Chapter of the Association for Computational Linguistics: System Demonstrations, 2021, pp. 113–11.

30. Alsafari, S.; Sadaoui, S.; Mouhoub, M. Hate and offensive speech detection on Arabic social media. Online Social Networks and Media 2020, 19, 100096.

31. Alsafari, S.; Sadaoui, S.; Mouhoub, M. Deep learning ensembles for hate speech detection. In Proceedings of the 2020 IEEE 32nd International Conference on Tools with Artificial Intelligence (ICTAI). IEEE, 2020, pp. 526–531.

32. Aref, A.; Al Mahmoud, R.H.; Taha, K.; Al-Sharif, M.; et al. Hate speech detection of Arabic shorttext. In Proceedings of the CS IT Conf. Proc, 2020, Vol. 10, pp. 81–94.

33. Romim, N.; Ahmed, M.; Talukder, H.; Saiful Islam, M. Hate speech detection in the bengali language: A dataset and its baseline evaluation. In Proceedings of International Joint Conference on Advances in Computational Intelligence: IJCACI 2020. Springer, 2021, pp. 457–468.

34. Faris, H.; Aljarah, I.; Habib, M.; Castillo, P.A. Hate Speech Detection using Word Embedding and Deep Learning in the Arabic Language Context. In Proceedings of the ICPRAM, 2020, pp. 453–460.

35. Khezzar, R.; Moursi, A.; Al Aghbari, Z. arHateDetector: detection of hate speech from standard and dialectal Arabic Tweets. Discover Internet of Things 2023, 3, 1.

36. Alshaalan, R.; Al-Khalifa, H. Hate speech detection in saudi twittersphere: A deep learning approach. In Proceedings of the fifth Arabic natural language processing workshop, 2020, pp. 12–23.

37. Saeed, A.M.; Ismael, A.N.; Rasul, D.L.; Majeed, R.S.; Rashid, T.A. Hate speech detection in social media for the Kurdish language. In Proceedings of the International Conference on Innovations in Computing Research. Springer, 2022, pp. 253–260.

38. Alsafari, S.; Sadaoui, S. Semi-supervised self-learning for arabic hate speech detection. In Proceedings of the 2021 IEEE International Conference on Systems, Man, and Cybernetics (SMC). IEEE, 2021, pp. 863–868.

39. Salomon, P.O.; Kechaou, Z.; Wali, A. Arabic hate speech detection system based on AraBERT. In Proceedings of the 2022 IEEE 21st International Conference on Cognitive Informatics & Cognitive Computing (ICCI* CC). IEEE, 2022, pp. 208–213.

40. Mursi, K.T.; Alahmadi, M.D.; Alsubaei, F.S.; Alghamdi, A.S. Detecting islamic radicalism arabic tweets using natural language processing. IEEE Access 2022, 10, 72526–72534.

41. Ameur, M.S.H.; Aliane, H. AraCOVID19-MFH: Arabic COVID-19 multi-label fake news & hate speech detection dataset. Procedia Computer Science 2021, 189, 232–241.

42. Alshalan, R.; Al-Khalifa, H. A deep learning approach for automatic hate speech detection in the saudi twittersphere. Applied Sciences 2020, 10, 8614.

43. Duwairi, R.; Hayajneh, A.; Quwaider, M. A deep learning framework for automatic detection of hate speech embedded in Arabic tweets. Arabian Journal for Science and Engineering 2021, 46, 4001–4014.

44. Anezi, F.Y.A. Arabic hate speech detection using deep recurrent neural networks. Applied Sciences 2022, 12, 6010.

45. Ahmed, I.; Abbas, M.; Hatem, R.; Ihab, A.; Fahkr, M.W. Fine-tuning Arabic Pre-Trained Transformer Models for Egyptian-Arabic Dialect Offensive Language and Hate Speech Detection and Classification. In Proceedings of the 2022 20th International Conference on Language Engineering (ESOLEC). IEEE, 2022, Vol. 20, pp. 170–174.

46. Beyhan, F.; Çarık, B.; Arın, İ.; Terzioğlu, A.; Yanikoglu, B.; Yeniterzi, R. A Turkish hate speech dataset and detection system. In Proceedings of the Thirteenth Language Resources and Evaluation Conference, 2022, pp. 4177–4185.

47. Mollas, I.; Chrysopoulou, Z.; Karlos, S.; Tsoumakas, G. ETHOS: a multi-label hate speech detection dataset. Complex & Intelligent Systems 2022, 8, 4663–4678.

48. Althobaiti, M.J. Bert-based approach to arabic hate speech and offensive language detection in twitter: Exploiting emojis and sentiment analysis. International Journal of Advanced Computer Science and Applications 2022, 13.

49. Alkomah, F.; Ma, X. A literature review of textual hate speech detection methods and datasets. Information 2022, 13, 273.

50. Barbosa, L.; Feng, J. Robust sentiment detection on twitter from biased and noisy data. In Proceedings of the Coling 2010: Posters, 2010, pp. 36–44.

51. Alayba, A.M.; Palade, V.; England, M.; Iqbal, R. Arabic language sentiment analysis on health services. In Proceedings of the 2017 1st international workshop on arabic script analysis and recognition (asar). IEEE, 2017, pp. 114–118.

52. Refaee, E.; Rieser, V. An arabic twitter corpus for subjectivity and sentiment analysis. In Proceedings of the LREC, 2014, pp. 2268–2273.

53. Al-Twairesh, N. Sentiment analysis of Twitter: a study on the Saudi community. PhD thesis, King Saud University Riyadh, Saudi Arabia, 2016.

54. Mubarak, H.; Darwish, K.; Magdy, W. Abusive language detection on Arabic social media. In Proceedings of the first workshop on abusive language online, 2017, pp. 52–56.

55. Annotation Exam: https://forms.gle/9e56L2j8vH9mNSiV9.

56. Landis, J.R.; Koch, G.G. The measurement of observer agreement for categorical data. Biometrics 1977, pp. 159–174.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).