Submitted: 07 November 2023

Posted: 08 November 2023


Abstract

Keywords:

1. Introduction

- i) Providing a comprehensive definition of binary classification machine learning techniques tailored for imbalanced data.

- ii) Conducting an extensive review of diverse sampling techniques designed to address imbalanced data.

- iii) Offering a detailed account of the training and validation procedures within imbalanced domains.

- iv) Explaining the key evaluation metrics that are well-suited for imbalanced data scenarios.

- v) Employing various machine learning models and conducting a thorough assessment, comparing their performance using commonly employed metrics across five distinct phases: after applying feature selection, after applying SMOTE, after applying SMOTE with Tomek Links, after applying SMOTE with ENN, and after applying Optuna hyperparameter tuning.

2. Classification Machine Learning Techniques

2.1. Artificial Neural Network

2.2. Support Vector Machine

2.3. Decision Tree

2.4. Logistic Regression

2.5. Ensemble Learning

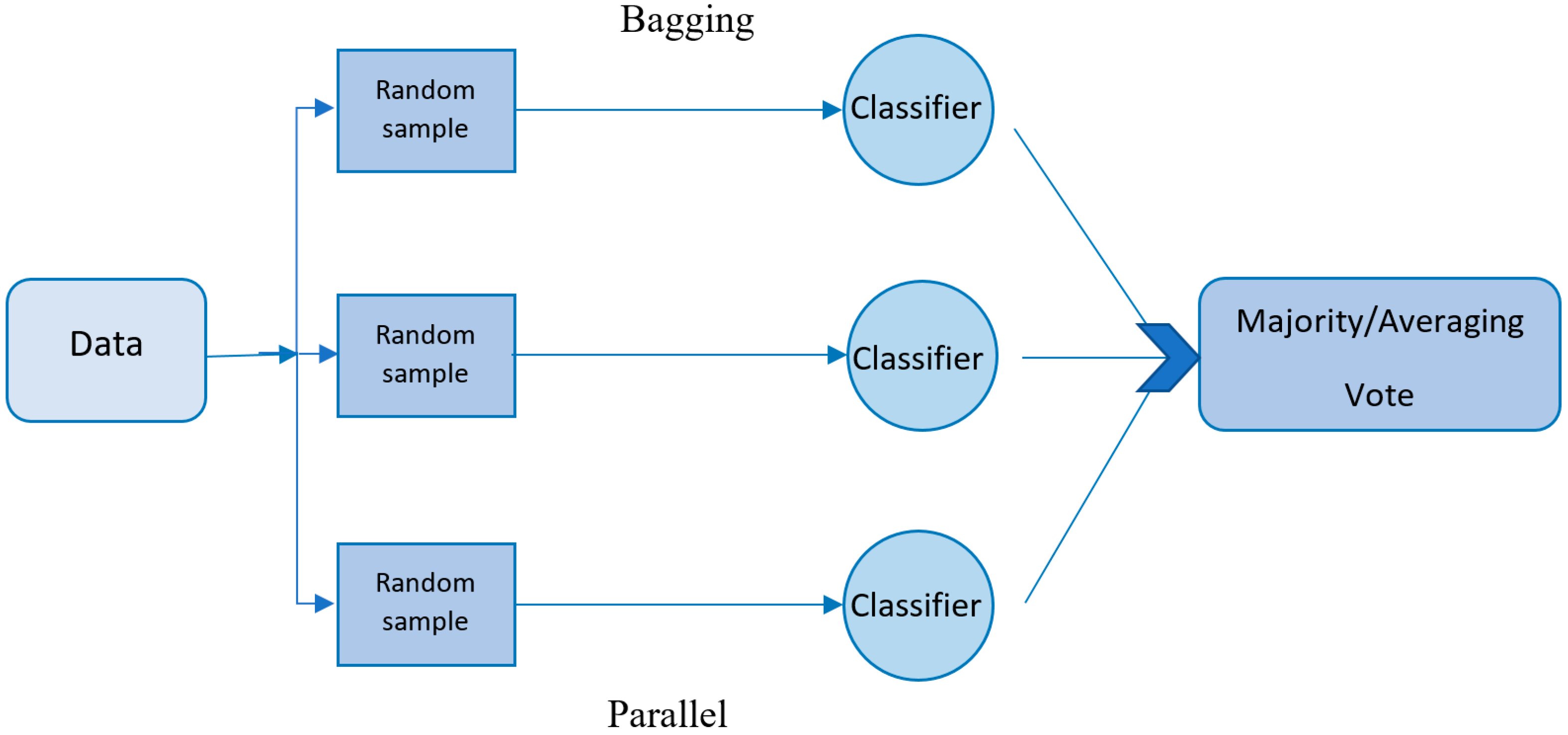

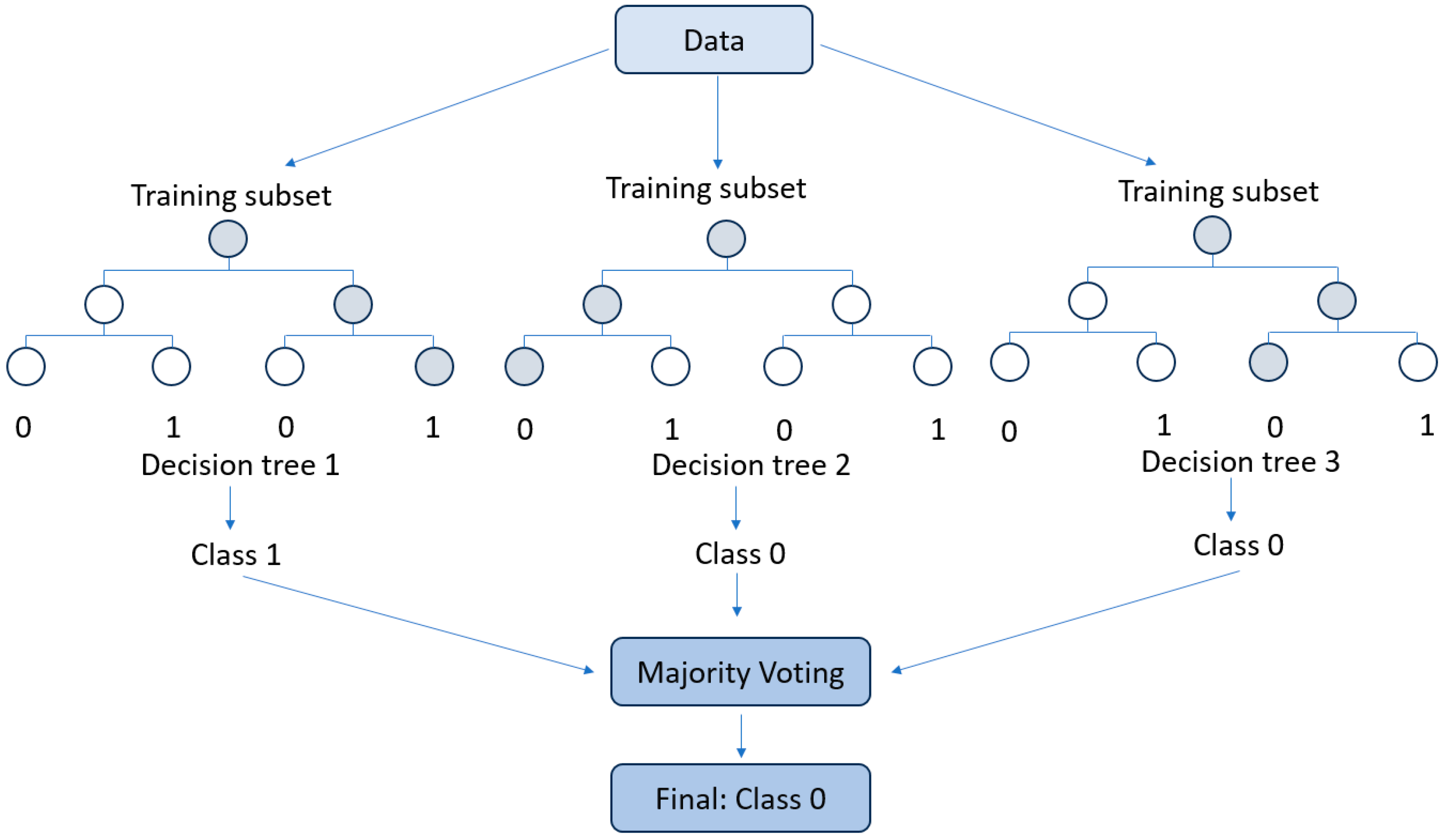

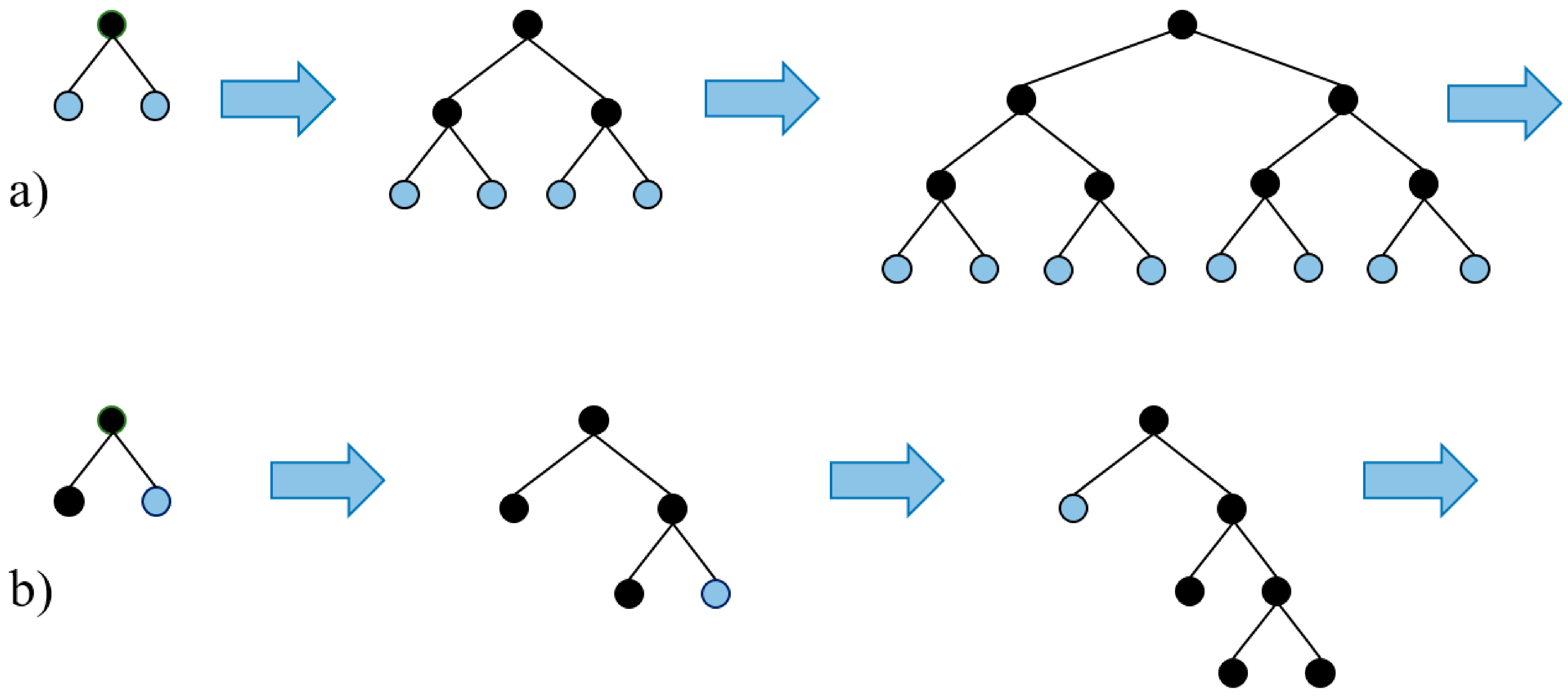

2.5.1. Bagging

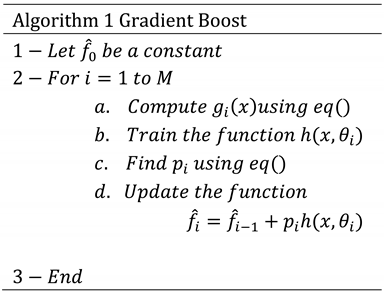

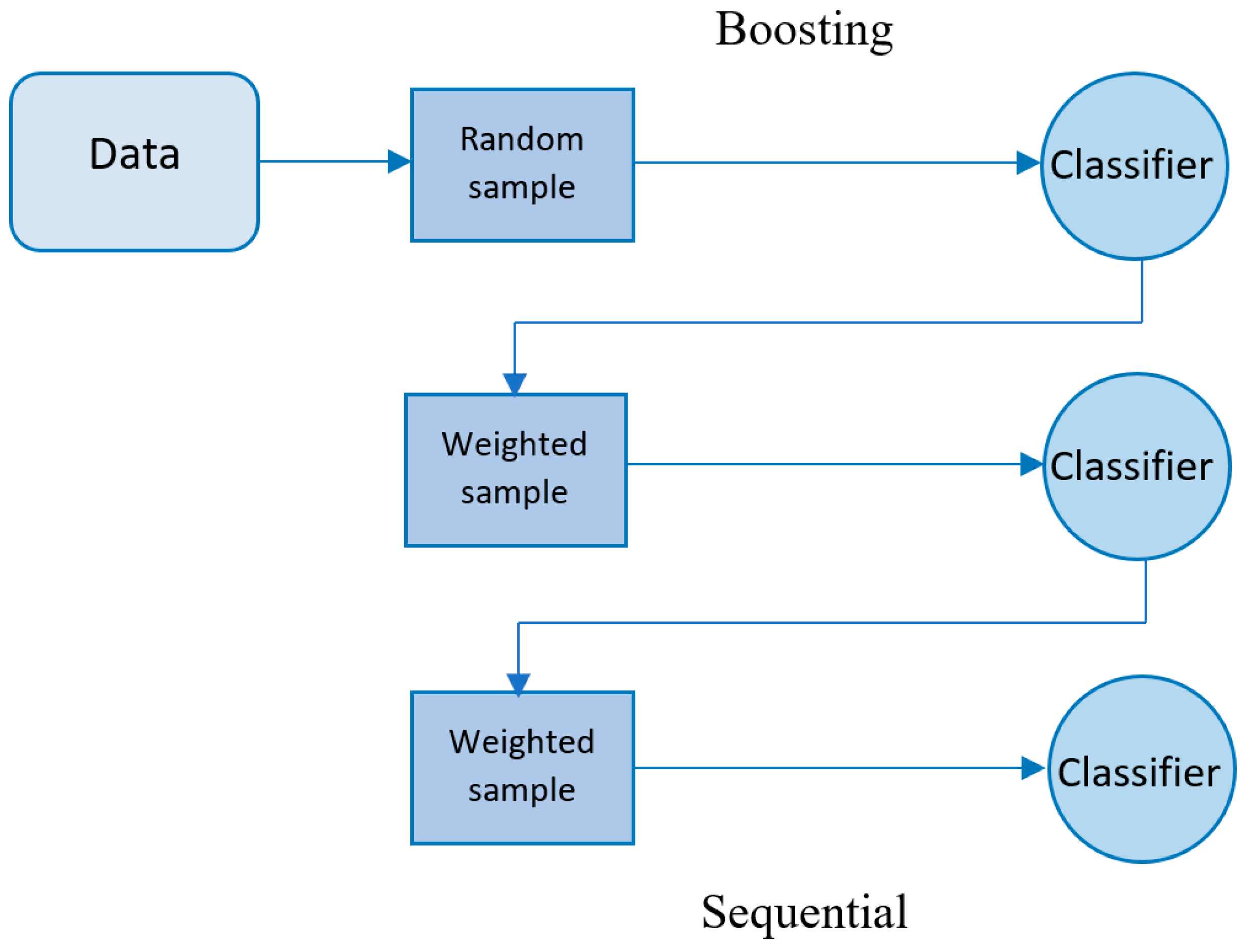

2.5.2. Boosting

- To randomly divide the records into subsets,

- To convert the labels to integer numbers,

- To transform the categorical features to numerical features, as follows:
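As an illustration only, the three steps above can be sketched under the assumption that they describe an ordered target statistic of the kind used by CatBoost, where a category is replaced by `(count_in_class + prior) / (total_count + 1)` computed from records seen earlier in a random ordering. The function name, the `prior` value, and the use of a single random permutation in place of random subsets are assumptions of this sketch, not details taken from the text.

```python
import random


def ordered_target_encode(categories, labels, prior=0.5, seed=0):
    """Encode one categorical column with an ordered target statistic.

    Only records that come earlier in a random ordering contribute to a
    record's encoding, so a record never sees its own label.
    """
    # Step 1 (simplified): a random ordering stands in for random subsets.
    order = list(range(len(categories)))
    random.Random(seed).shuffle(order)

    # Step 2: convert the labels to integers (binary case: 0 and 1).
    label_ids = {lab: i for i, lab in enumerate(sorted(set(labels)))}
    y = [label_ids[lab] for lab in labels]

    # Step 3: transform each category into a number.
    count_in_class = {}  # per category: earlier records with label 1
    total_count = {}     # per category: earlier records seen so far
    encoded = [0.0] * len(categories)
    for idx in order:
        cat = categories[idx]
        pos = count_in_class.get(cat, 0)
        tot = total_count.get(cat, 0)
        encoded[idx] = (pos + prior) / (tot + 1)
        count_in_class[cat] = pos + y[idx]
        total_count[cat] = tot + 1
    return encoded


# Hypothetical toy column: two customers on plan "a", one on plan "b".
enc = ordered_target_encode(["a", "a", "b"], ["yes", "no", "yes"])
```

The first record seen for any category receives the prior itself, which is why rare categories do not produce extreme encodings.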

3. Handling Imbalanced Data

3.1. The Challenge of Imbalanced Data

3.2. Sampling Techniques

3.2.1. Synthetic Minority Over-Sampling Technique (SMOTE)

3.2.2. Tomek Links

3.2.3. Edited Nearest Neighbors (ENN)

3.3. Combined Data Sampling Techniques

4. Training and Validation Process

5. Evaluation Metrics

5.1. Threshold Metrics

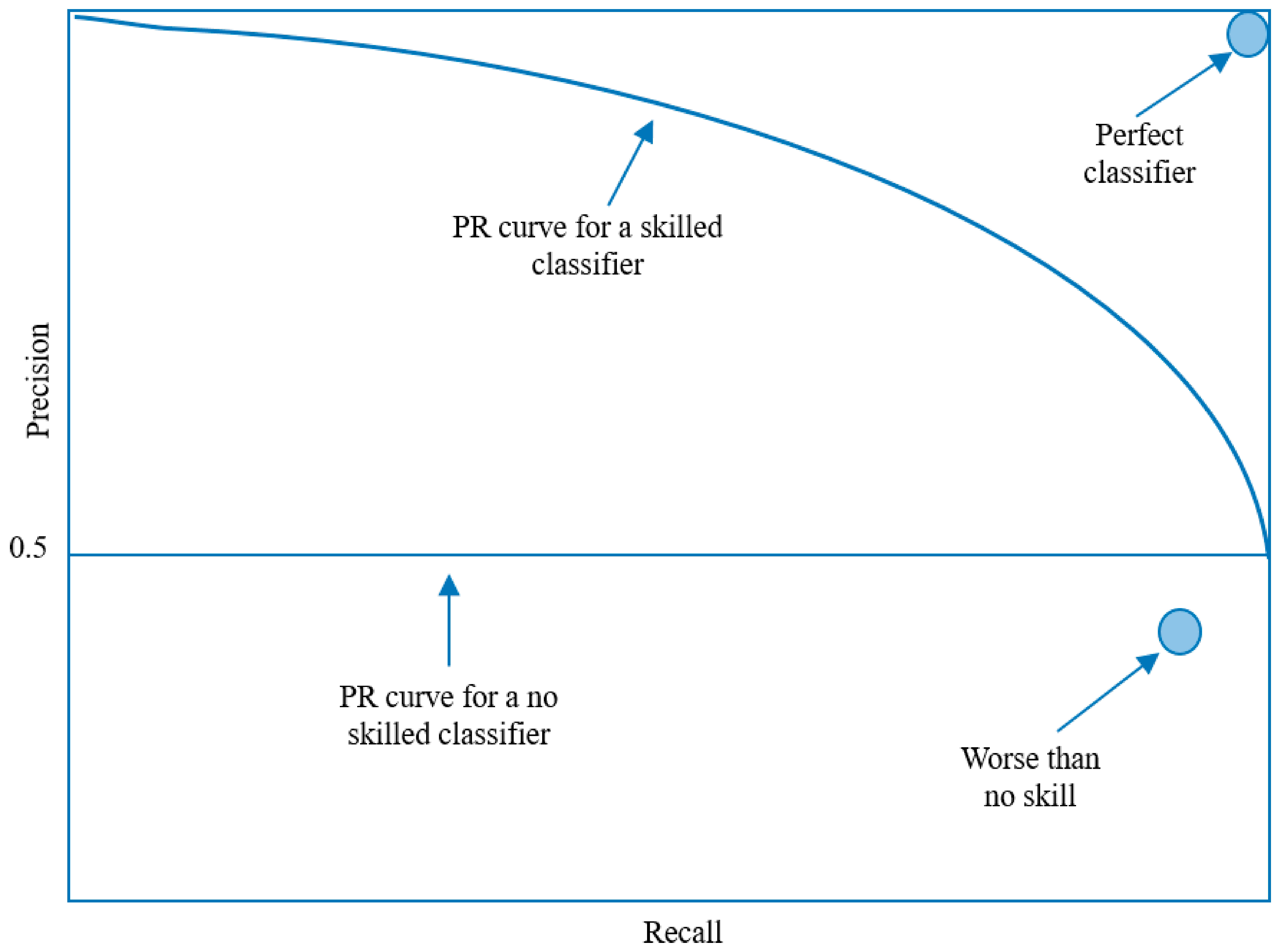

5.2. Ranking Metrics

- ❖ F1-Score: Given the imbalance in our dataset, the F1-score is particularly useful as it does not inflate the performance of the model due to the high number of true negatives, which is a common issue with accuracy in such datasets.

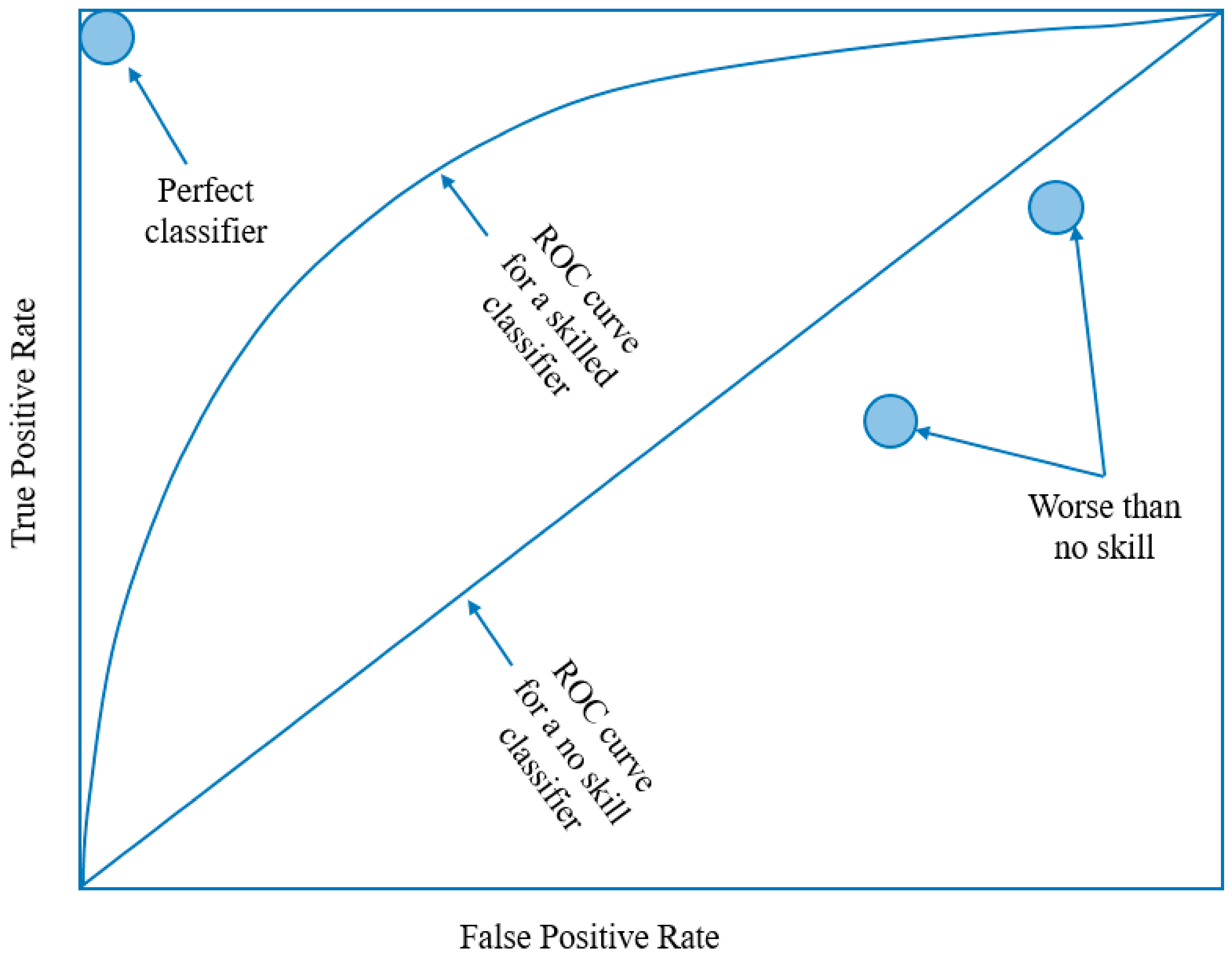

- ❖ ROC AUC: Unlike standard accuracy, ROC AUC reflects how well the model ranks minority-class instances above majority-class ones, so the accurate prediction of minority class instances is central to its calculation. It is less sensitive to class imbalance than accuracy and provides insight into the model's ability to distinguish between classes, making it a robust measure for comparing the performance of different models.
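To make the point about true negatives concrete, the following minimal sketch computes precision, recall, F1-score, and accuracy from the four confusion-matrix counts. The counts are hypothetical and chosen only to mimic an imbalanced churn dataset; they are not results from this study.

```python
def threshold_metrics(tp, fn, fp, tn):
    """Return precision, recall, F1 and accuracy from confusion-matrix counts."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall:
        f1 = 2 * precision * recall / (precision + recall)
    else:
        f1 = 0.0
    accuracy = (tp + tn) / (tp + fn + fp + tn)
    return {"precision": precision, "recall": recall,
            "f1": f1, "accuracy": accuracy}


# Hypothetical counts: 100 churners among 1000 customers.
m = threshold_metrics(tp=40, fn=60, fp=20, tn=880)
print(m)
```

With these counts the accuracy is 0.92 while the F1-score is only 0.50, because the 880 true negatives enter the accuracy but not the F1-score.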

5.3. ROC AUC benchmark

6. Simulation

6.1. Simulation Setup

6.2. Simulation Results

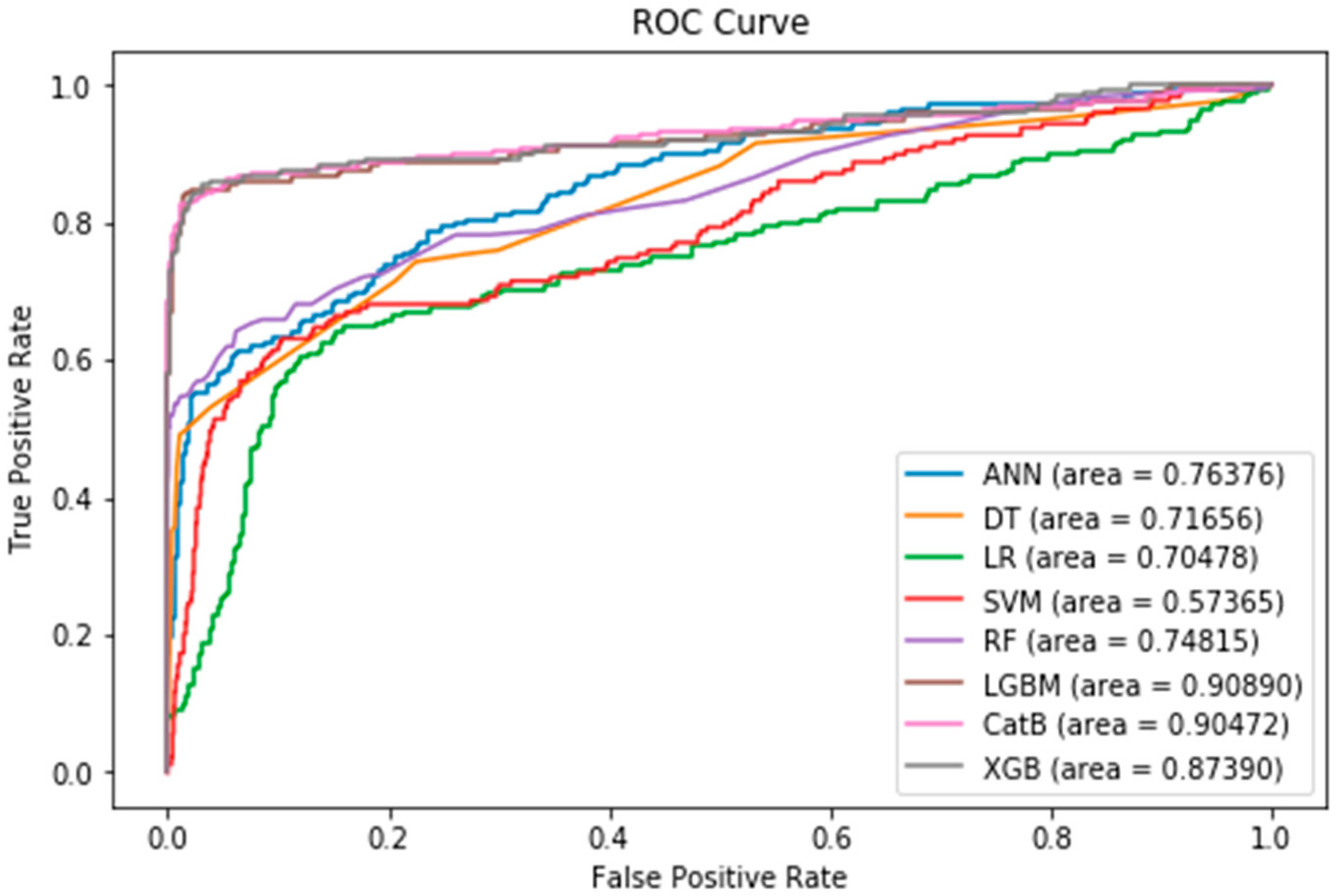

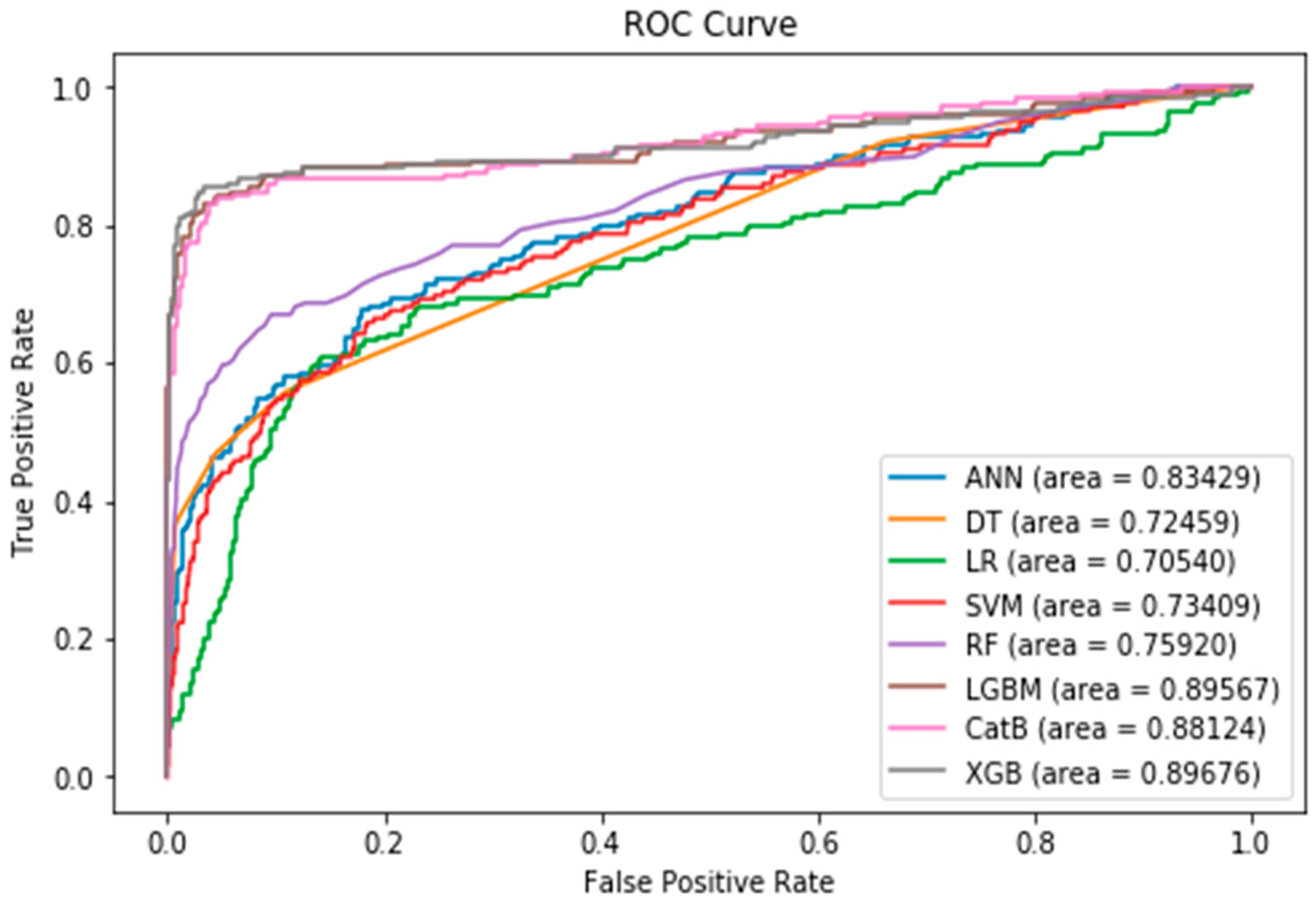

6.2.1. After Pre-processing and Feature Selection

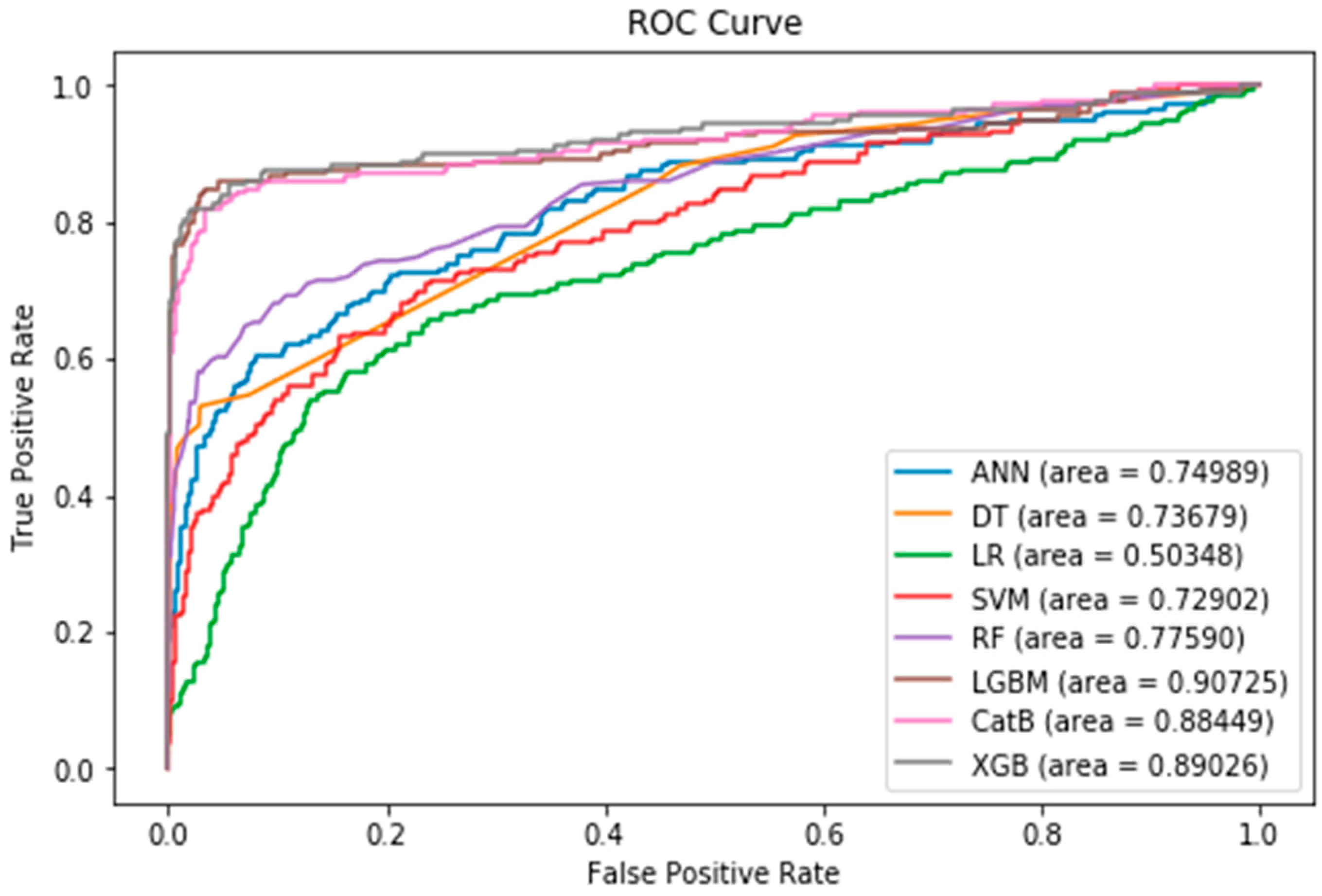

6.2.2. Applying SMOTE

6.2.3. Applying SMOTE with Tomek Links

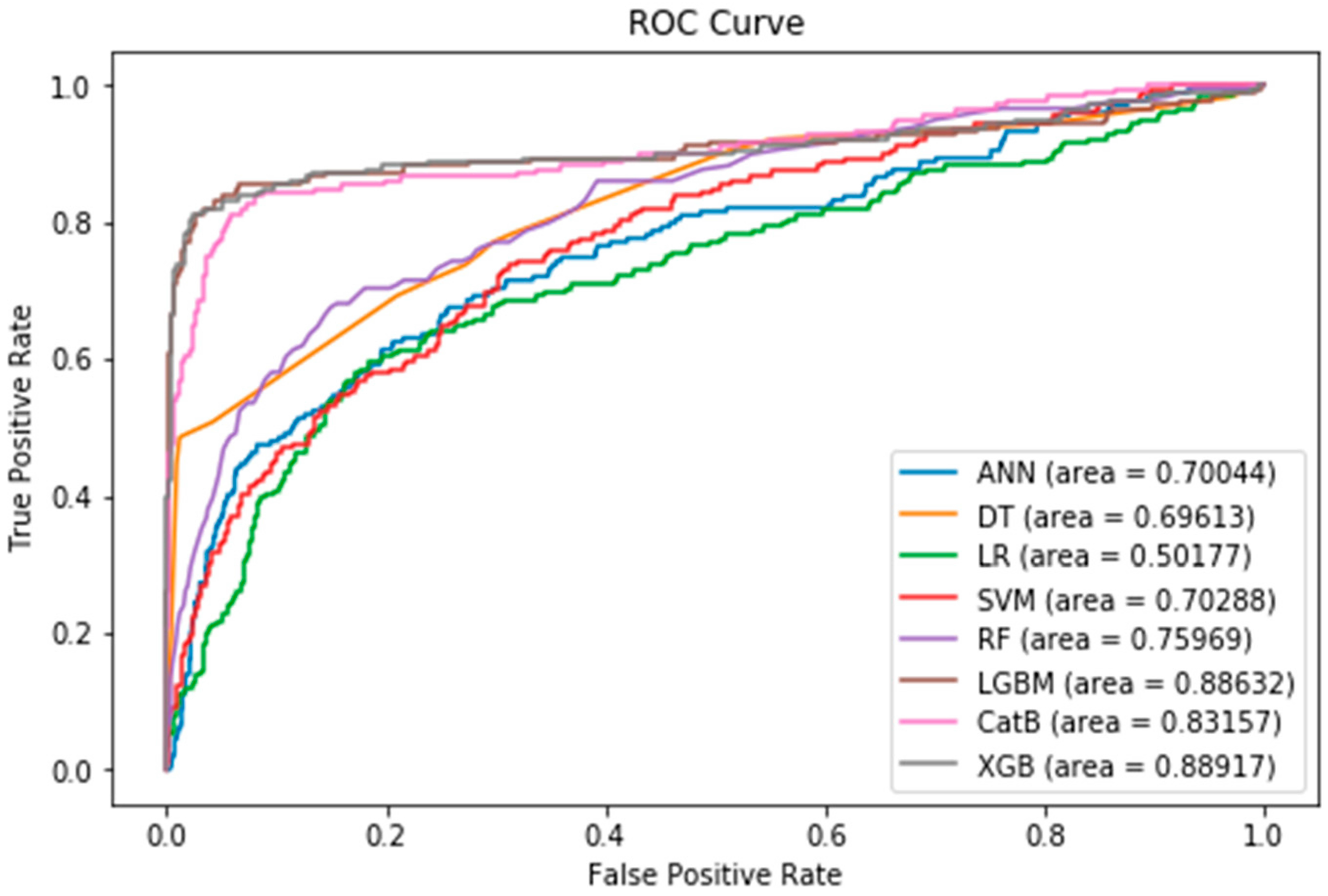

6.2.4. Applying SMOTE with ENN

6.2.5. The Impact of Sampling Techniques

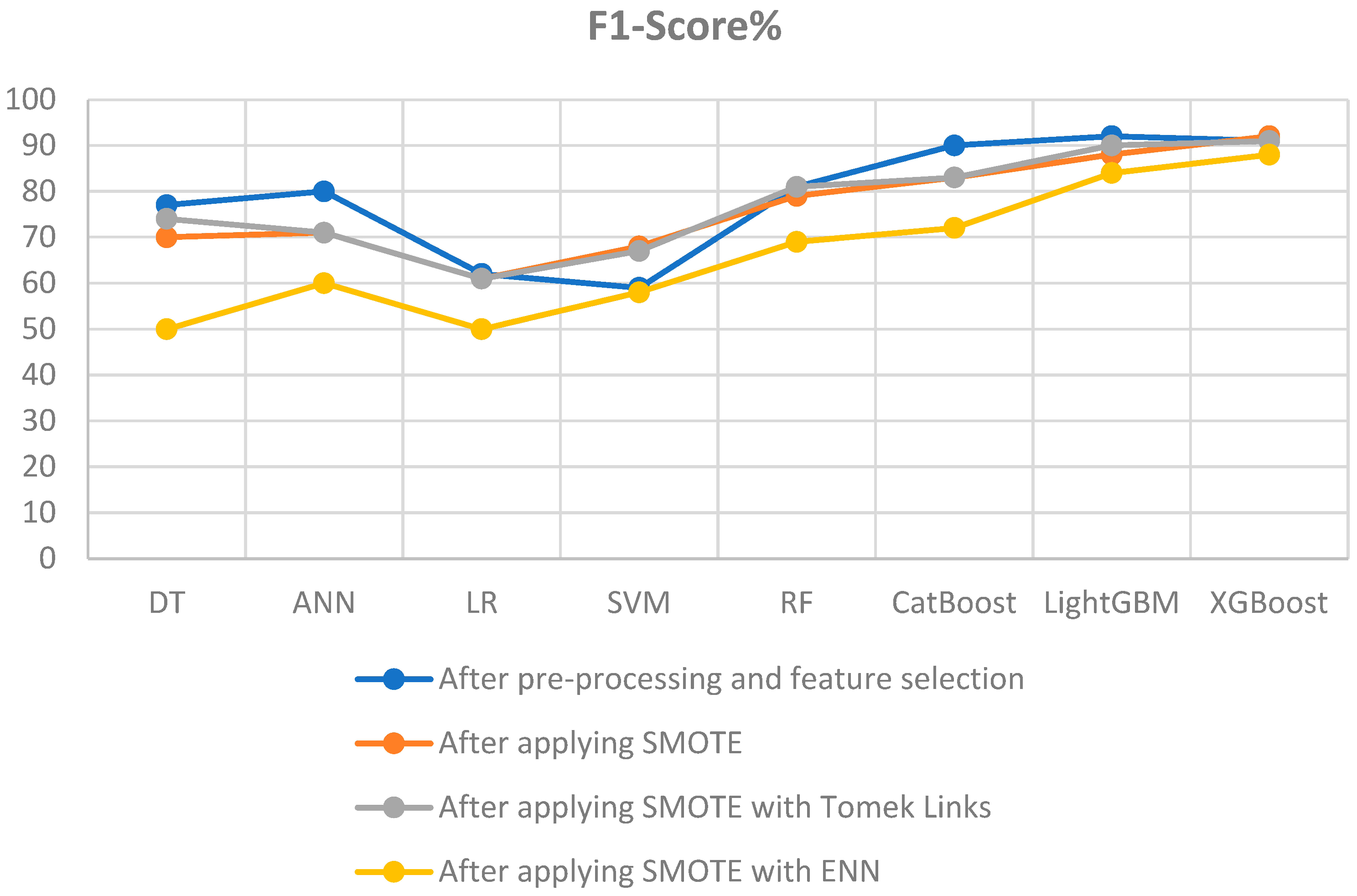

6.2.5.1. F1-Score:

Impact of SMOTE Sampling Technique:

- Most models saw a decrease in F1-Score after applying SMOTE compared to the pre-processing and feature selection stage (initial state).

- CatBoost and LightGBM experienced a reduction in F1-Score, whereas XGBoost improved slightly.

- Support Vector Machine (SVM) showed an improved F1-Score.

Impact of SMOTE with Tomek Links Sampling Technique:

- SMOTE with Tomek Links further improved F1-Scores for several models compared to SMOTE alone.

- Support Vector Machine (SVM) improved relative to the initial state.

- CatBoost experienced a reduction in F1-Score compared to the pre-processing and feature selection stage (initial state).

- LightGBM showed a slight reduction in F1-Score of 2 percentage points relative to the initial state.

- XGBoost remained consistent with an F1-Score of 91%.

Impact of SMOTE with ENN Sampling Technique:

- SMOTE with ENN had varied impacts on F1-Scores across models.

- Some models, such as Decision Tree (DT), Logistic Regression (LR), and CatBoost, experienced significant drops in F1-Score compared to the pre-processing and feature selection stage (initial state).

- LightGBM maintained a relatively high F1-Score of 84%.

- XGBoost remained strong with an F1-Score of 88% despite the decline.

- SMOTE with ENN may not consistently enhance performance and should be chosen carefully based on the specific model and dataset characteristics.
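For readers unfamiliar with the oversampling step discussed above, the following is a minimal sketch of the interpolation idea behind SMOTE: a synthetic minority sample is placed at a random position on the segment between a minority point and one of its k nearest minority neighbours. The function name and toy data are hypothetical, and this is not the implementation used in the experiments.

```python
import math
import random


def smote_sketch(minority, n_new, k=5, seed=0):
    """Create n_new synthetic points by interpolating between minority neighbours."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(minority)
        # k nearest minority neighbours of the base point (excluding itself).
        neighbours = sorted(
            (p for p in minority if p is not base),
            key=lambda p: math.dist(base, p),
        )[:k]
        neighbour = rng.choice(neighbours)
        gap = rng.random()  # position along the segment, in [0, 1)
        synthetic.append(
            tuple(b + gap * (n - b) for b, n in zip(base, neighbour))
        )
    return synthetic


# Hypothetical minority class with three 2-D points.
minority = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]
new_points = smote_sketch(minority, n_new=10, k=2)
```

Because every synthetic point lies between two existing minority points, SMOTE fills in the minority region rather than duplicating records, which may also explain why it can blur the class boundary for some models.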

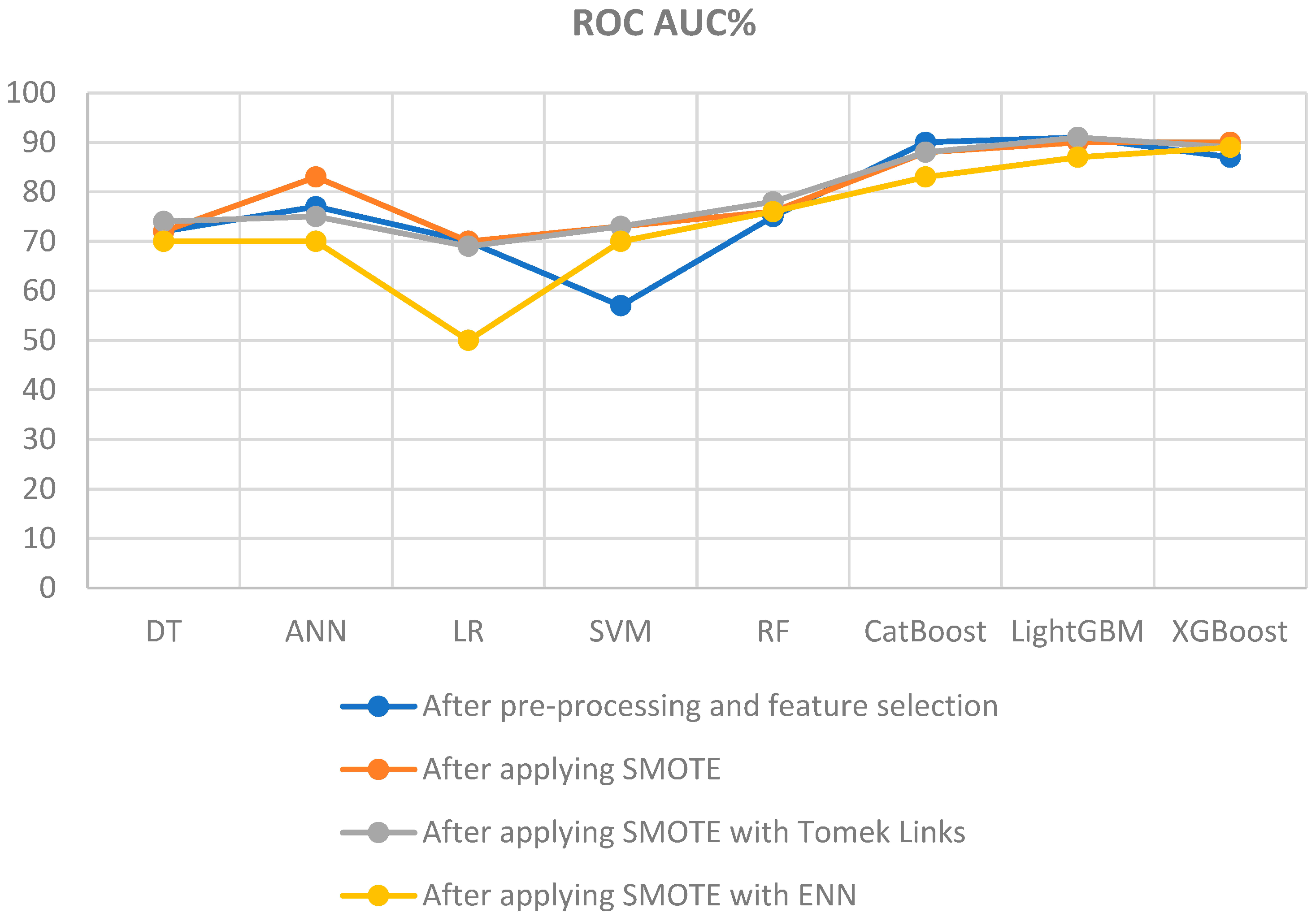

6.2.5.2. ROC AUC:

Impact of SMOTE Sampling Technique:

- After applying SMOTE, ROC AUC improved noticeably for some models.

- ANN, SVM, RF, and XGBoost showed ROC AUC improvements, whereas CatBoost and LightGBM showed a slight reduction compared to the pre-processing and feature selection stage (initial state).

- ANN and SVM saw substantial improvements, with ROC AUC scores reaching 83% and 73%, respectively.

Impact of SMOTE with Tomek Links Sampling Technique:

- SMOTE combined with Tomek Links maintained or improved ROC AUC for most models.

- DT, SVM, and RF showed improved ROC AUC.

- LightGBM and CatBoost maintained high ROC AUC scores of 91% and 88%, respectively.

- This technique's combination of class balancing (SMOTE) and removal of borderline instances (Tomek Links) continues to prove effective.

Impact of SMOTE with ENN Sampling Technique:

- SMOTE with ENN produced mixed results for ROC AUC.

- Some models, such as RF, XGBoost, and SVM, showed improvements in ROC AUC, while others experienced drops.

- Logistic Regression (LR) showed a significant reduction in ROC AUC.

- LightGBM maintained a respectable ROC AUC of 87%.

- Researchers should exercise caution when applying SMOTE with ENN, as its impact varies across models.
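The two cleaning steps that follow SMOTE in the combined techniques can be sketched as below. A Tomek link is a pair of mutual nearest neighbours with different labels, and ENN keeps a sample only when its label agrees with the majority of its k nearest neighbours. The helper names and the one-dimensional toy data are hypothetical; the imbalanced-learn library provides `SMOTETomek` and `SMOTEENN` classes for practical use, although this copy of the text does not state which implementation was used.

```python
import math
from collections import Counter


def nearest(points, i, k=1):
    """Indices of the k nearest neighbours of points[i], excluding i itself."""
    others = [j for j in range(len(points)) if j != i]
    return sorted(others, key=lambda j: math.dist(points[i], points[j]))[:k]


def tomek_links(points, labels):
    """Pairs (i, j), i < j, of mutual nearest neighbours with different labels."""
    links = []
    for i in range(len(points)):
        j = nearest(points, i)[0]
        if i < j and labels[i] != labels[j] and nearest(points, j)[0] == i:
            links.append((i, j))
    return links


def enn_keep(points, labels, k=3):
    """Indices kept by ENN: label matches the majority of the k neighbours."""
    keep = []
    for i in range(len(points)):
        votes = Counter(labels[j] for j in nearest(points, i, k))
        if votes.most_common(1)[0][0] == labels[i]:
            keep.append(i)
    return keep


# Hypothetical 1-D data: label 1 is the minority (churner) class.
points = [(0.0,), (0.1,), (1.0,), (1.05,), (5.0,)]
labels = [0, 0, 0, 1, 1]
links = tomek_links(points, labels)
kept = enn_keep(points, labels, k=3)
```

On this toy data ENN discards both minority points, whereas the Tomek-link step flags only the single borderline pair. This illustrates why ENN is the more aggressive cleaner and may remove informative minority samples.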

6.2.5.3. Sampling Techniques vs Boosting Techniques:

- Iterative Nature: Boosting methods iteratively train a sequence of weak models, typically decision trees. Each subsequent model focuses on the errors made by the previous ones. Boosting is adaptive in the sense that it can adjust to the errors and potentially correct them in subsequent iterations.

- Adaptive Nature: While oversampling introduces more instances of the minority class, boosting models, given their adaptive nature, can sometimes already compensate for the imbalance to some degree. As a result, oversampling might not always result in significant performance improvements.

- Weighted Loss Function: Many boosting algorithms, like XGBoost, offer a weighted loss function where instances from different classes can be assigned different weights. This built-in mechanism can help in addressing class imbalance, reducing the need for external sampling methods.
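The weighted-loss idea in the last point can be illustrated with a small sketch of a class-weighted log loss. The function and the toy predictions are hypothetical; in XGBoost the corresponding control is the `scale_pos_weight` parameter, which is commonly set to the ratio of negative to positive instances.

```python
import math


def weighted_log_loss(y_true, p_pred, pos_weight=1.0):
    """Binary log loss where positive (minority) instances carry pos_weight."""
    eps = 1e-12
    total, weight_sum = 0.0, 0.0
    for y, p in zip(y_true, p_pred):
        p = min(max(p, eps), 1 - eps)  # avoid log(0)
        w = pos_weight if y == 1 else 1.0
        total += -w * (y * math.log(p) + (1 - y) * math.log(1 - p))
        weight_sum += w
    return total / weight_sum


# Hypothetical data: 1 churner among 10 customers, and a model that
# assigns every customer a churn probability of 0.1.
y = [1, 0, 0, 0, 0, 0, 0, 0, 0, 0]
p = [0.1] * 10
ratio = y.count(0) / y.count(1)  # negative-to-positive ratio
plain = weighted_log_loss(y, p)
weighted = weighted_log_loss(y, p, pos_weight=ratio)
print(ratio, plain, weighted)
```

The weighted loss is markedly higher than the unweighted one for the same predictions, so a booster minimizing it is pushed to correct the missed churner without any resampling of the data.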

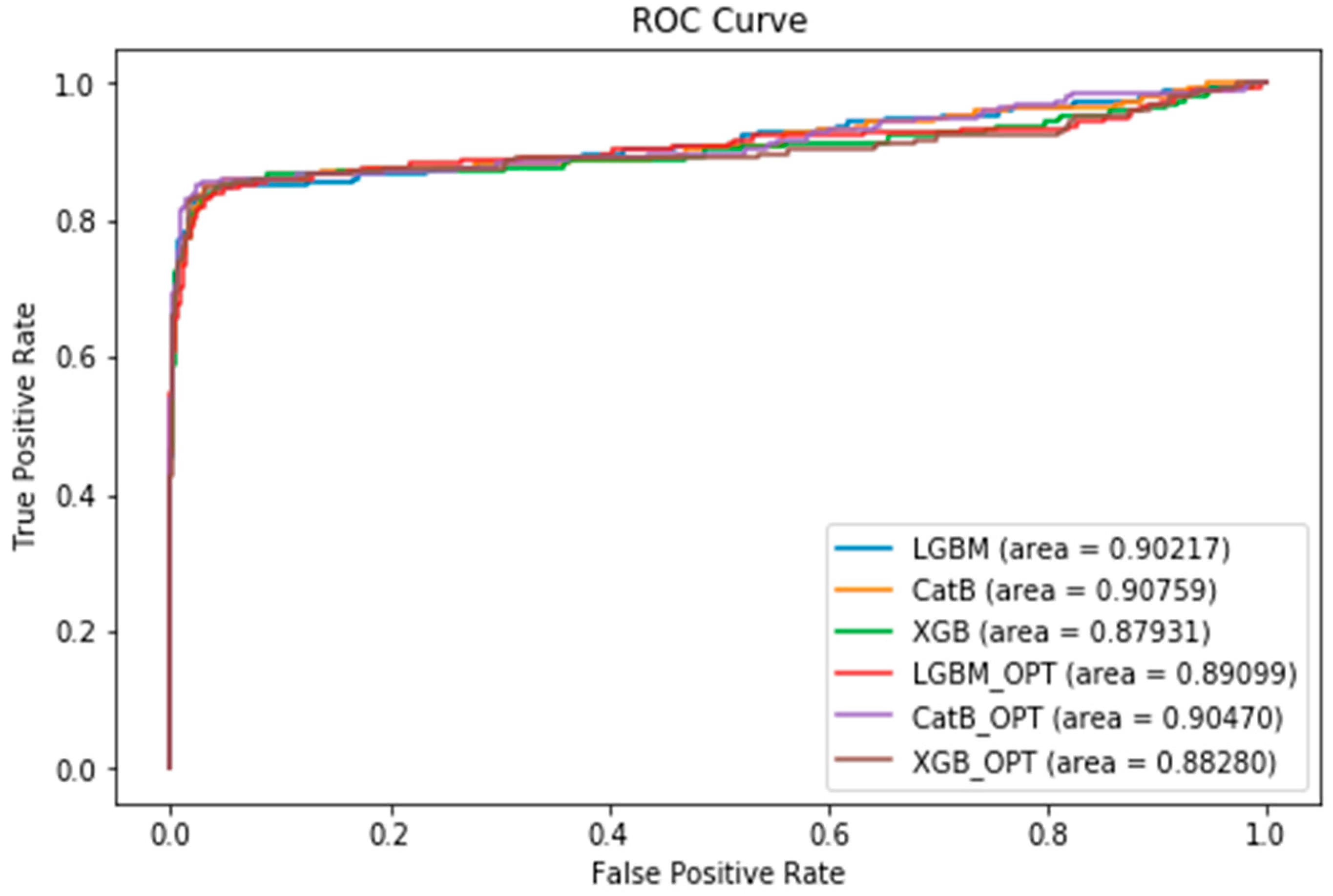

6.2.6. Applying Optuna hyperparameter optimizer

7. Conclusion

- ❖ Impact of SMOTE: After applying SMOTE, both LightGBM and XGBoost achieved ROC AUC scores of 90%, and XGBoost outperformed the other methods with an F1-Score of 92%. SMOTE balanced the class distribution, which improved recall and ROC AUC for several models, notably SVM and XGBoost.

- ❖ SMOTE with Tomek Links: After applying SMOTE with Tomek Links, LightGBM achieved the highest ROC AUC (91%) and XGBoost the highest F1-Score (91%). Compared to SMOTE alone, LightGBM gained 2 percentage points in F1-Score and 1 percentage point in ROC AUC, whereas XGBoost lost 1 percentage point in both F1-Score and ROC AUC.

- ❖ SMOTE with ENN: After applying SMOTE with ENN, XGBoost surpassed the other machine learning techniques, achieving an F1-Score of 88% and an ROC AUC of 89%. Compared to SMOTE alone, however, XGBoost lost 4 percentage points in F1-Score and 1 percentage point in ROC AUC.

- ❖ Impact of Optuna Hyperparameter Tuning: After applying Optuna hyperparameter tuning, CatBoost outperformed XGBoost and LightGBM, achieving an F1-Score of 93% and an ROC AUC of 91%. The F1-Score gains observed for CatBoost and XGBoost after tuning are likely attributable to improved hyperparameter configurations: Optuna fitted these settings to the specific dataset, which plausibly reduced overfitting and improved the models' capacity to generalize to new data. LightGBM did not benefit in the same way, which underlines how strongly hyperparameters influence the performance of these algorithms on a given dataset.

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| | | Predicted class | |
|---|---|---|---|
| | | Churners | Non-churners |
| Actual class | Churners | TP | FN |
| | Non-churners | FP | TN |

| ROC AUC range | Interpretation |
|---|---|
| ROC AUC < 50% | Something is wrong * |
| 50% <= ROC AUC < 60% | Similar to flipping a coin |
| 60% <= ROC AUC < 70% | Weak prediction |
| 70% <= ROC AUC < 80% | Good prediction |
| 80% <= ROC AUC < 90% | Very good prediction |
| ROC AUC >= 90% | Excellent prediction |
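As a minimal sketch of how the benchmark above might be applied, the code below computes ROC AUC as the fraction of (churner, non-churner) pairs in which the churner receives the higher score, with ties counting one half, and then maps the result to the benchmark bands. The function names and the toy scores are hypothetical.

```python
def roc_auc(y_true, scores):
    """ROC AUC as the probability that a positive outranks a negative."""
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def benchmark_label(auc_percent):
    """Map a ROC AUC value in percent to the benchmark bands."""
    if auc_percent < 50:
        return "Something is wrong"
    if auc_percent < 60:
        return "Similar to flipping a coin"
    if auc_percent < 70:
        return "Weak prediction"
    if auc_percent < 80:
        return "Good prediction"
    if auc_percent < 90:
        return "Very good prediction"
    return "Excellent prediction"


# Hypothetical scores for two non-churners (0) and two churners (1).
auc = roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8])
print(auc, benchmark_label(100 * auc))
```

Here three of the four churner/non-churner pairs are ranked correctly, giving a ROC AUC of 0.75, which falls in the "Good prediction" band.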

| Variable name | Type |
|---|---|
| state (the US state of customers) | string |
| account_length (number of active months) | numerical |
| area_code (area code of customers) | string |
| international_plan (whether customers have international plans) | yes/no |
| voice_mail_plan (whether customers have voice mail plans) | yes/no |
| number_vmail_messages (number of voice-mail messages) | numerical |
| total_day_minutes (total minutes of day calls) | numerical |
| total_day_calls (total number of day calls) | numerical |
| total_day_charge (total charge of day calls) | numerical |
| total_eve_minutes (total minutes of evening calls) | numerical |
| total_eve_calls (total number of evening calls) | numerical |
| total_eve_charge (total charge of evening calls) | numerical |
| total_night_minutes (total minutes of night calls) | numerical |
| total_night_calls (total number of night calls) | numerical |
| total_night_charge (total charge of night calls) | numerical |
| total_intl_minutes (total minutes of international calls) | numerical |
| total_intl_calls (total number of international calls) | numerical |
| total_intl_charge (total charge of international calls) | numerical |
| number_customer_service_calls (number of calls to customer service) | numerical |
| churn (customer churn – the target variable) | yes/no |

| Models | Precision% | Recall% | F1-Score% | ROC AUC% |
|---|---|---|---|---|
| DT | 91 | 72 | 77 | 72 |
| ANN | 85 | 76 | 80 | 77 |
| LR | 61 | 70 | 62 | 70 |
| SVM | 81 | 57 | 59 | 57 |
| RF | 96 | 75 | 81 | 75 |
| CatBoost | 90 | 90 | 90 | 90 |
| LightGBM | 94 | 91 | 92* | 91* |
| XGBoost | 96 | 87 | 91 | 87 |

| Models | Precision% | Recall% | F1-Score% | ROC AUC% |
|---|---|---|---|---|
| DT | 69 | 72 | 70 | 72 |
| ANN | 70 | 73 | 71 | 83 |
| LR | 61 | 71 | 61 | 70 |
| SVM | 65 | 73 | 68 | 73 |
| RF | 83 | 76 | 79 | 76 |
| CatBoost | 79 | 88 | 83 | 88 |
| LightGBM | 87 | 90 | 88 | 90* |
| XGBoost | 95 | 90 | 92* | 90* |

| Models | Precision% | Recall% | F1-Score% | ROC AUC% |
|---|---|---|---|---|
| DT | 74 | 74 | 74 | 74 |
| ANN | 69 | 75 | 71 | 75 |
| LR | 61 | 70 | 61 | 69 |
| SVM | 65 | 73 | 67 | 73 |
| RF | 85 | 78 | 81 | 78 |
| CatBoost | 80 | 88 | 83 | 88 |
| LightGBM | 89 | 91 | 90 | 91* |
| XGBoost | 94 | 89 | 91* | 89 |

| Models | Precision% | Recall% | F1-Score% | ROC AUC% |
|---|---|---|---|---|
| DT | 60 | 70 | 50 | 70 |
| ANN | 61 | 70 | 60 | 70 |
| LR | 52 | 50 | 50 | 50 |
| SVM | 60 | 70 | 58 | 70 |
| RF | 67 | 76 | 69 | 76 |
| CatBoost | 70 | 83 | 72 | 83 |
| LightGBM | 80 | 89 | 84 | 87 |
| XGBoost | 88 | 89 | 88* | 89* |

| | DT | ANN | LR | SVM | RF | CatBoost | LightGBM | XGBoost |
|---|---|---|---|---|---|---|---|---|
| Initial | 77* | 80* | 62* | 59 | 81* | 90* | 92* | 91 |
| SMOTE | 70 | 71 | 61 | 68* | 79 | 83 | 88 | 92* |
| SMOTE-TOMEK | 74 | 71 | 61 | 67 | 81* | 83 | 90 | 91 |
| SMOTE-ENN | 50 | 60 | 50 | 58 | 69 | 72 | 84 | 88 |

| | DT | ANN | LR | SVM | RF | CatBoost | LightGBM | XGBoost |
|---|---|---|---|---|---|---|---|---|
| Initial | 72 | 77 | 70* | 57 | 75 | 90* | 91* | 87 |
| SMOTE | 72 | 83* | 70* | 73* | 76 | 88 | 90 | 90* |
| SMOTE-TOMEK | 74* | 75 | 69 | 73* | 78* | 88 | 91* | 89 |
| SMOTE-ENN | 70 | 70 | 50 | 70 | 76 | 83 | 87 | 89 |

| Models | Precision% | Recall% | F1-Score% | ROC AUC% |
|---|---|---|---|---|
| CatBoost | 89 | 91 | 90 | 91* |
| CatBoost-Optuna | 95 | 91 | 93* | 91* |
| LightGBM | 92 | 90 | 91 | 90 |
| LightGBM-Optuna | 93 | 89 | 90 | 89 |
| XGBoost | 93 | 88 | 90 | 88 |
| XGBoost-Optuna | 94 | 88 | 91 | 88 |

| Parameter | Description | Value |
|---|---|---|
| XGBoost Tuning Parameters | | |
| verbosity | Verbosity of printing messages | 0 |
| objective | Objective function | binary:logistic |
| tree_method | Tree construction method | exact |
| booster | Type of booster | dart |
| lambda | L2 regularization weight | 0.010281489790562261 |
| alpha | L1 regularization weight | 0.0008440304772889829 |
| subsample | Sampling ratio for training data | 0.8298281841818362 |
| colsample_bytree | Column sampling ratio for each tree | 0.9985902928710126 |
| max_depth | Maximum depth of the tree | 7 |
| min_child_weight | Minimum child weight | 2 |
| eta | Learning rate | 0.12406825365082062 |
| gamma | Minimum loss reduction required to make a further partition on a leaf node of the tree | 0.0004490383815764321 |
| grow_policy | Controls how new nodes are added to the tree | depthwise |
| LightGBM Tuning Parameters | | |
| objective | Objective function | binary |
| metric | Metric for binary classification | binary_logloss |
| verbosity | Verbosity of printing messages | -1 |
| boosting_type | Type of booster | dart |
| num_leaves | Maximum number of leaves in one tree | 1169 |
| max_depth | Maximum depth of the tree | 10 |
| lambda_l1 | L1 regularization weight | 2.689492421801289e-07 |
| lambda_l2 | L2 regularization weight | 7.2387875465462e-08 |
| feature_fraction | LightGBM will randomly select part of features on each iteration | 0.870805980078817 |
| bagging_fraction | LightGBM will randomly select part of data without resampling | 0.6280893693081118 |
| bagging_freq | Frequency for bagging | 7 |
| min_child_samples | Minimum number of data in one leaf | 8 |
| CatBoost Tuning Parameters | | |
| Objective | Objective function | Logloss |
| colsample_bylevel | Subsampling rate per level for each tree | 0.07760972009427407 |
| depth | Depth of the tree | 12 |
| boosting_type | Type of booster | Ordered |
| bootstrap_type | Sampling method for bagging | Bayesian |
| bagging_temperature | Controls the similarity of samples in each bag | 0.0 |
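As a sketch of how the tuned values might be carried into code, the XGBoost rows of the table can be written as a plain parameter dictionary. The keys follow the parameter names listed in the table; the `fractions_out_of_range` helper is a hypothetical sanity check added for illustration, and how the dictionary is passed to a training call is left open because this copy of the text does not specify it.

```python
# Tuned XGBoost parameters, copied from the table above.
xgb_params = {
    "verbosity": 0,
    "objective": "binary:logistic",
    "tree_method": "exact",
    "booster": "dart",
    "lambda": 0.010281489790562261,
    "alpha": 0.0008440304772889829,
    "subsample": 0.8298281841818362,
    "colsample_bytree": 0.9985902928710126,
    "max_depth": 7,
    "min_child_weight": 2,
    "eta": 0.12406825365082062,
    "gamma": 0.0004490383815764321,
    "grow_policy": "depthwise",
}


def fractions_out_of_range(params, keys):
    """Return the keys whose value falls outside the interval (0, 1]."""
    return [k for k in keys if not 0 < params[k] <= 1]


# Hypothetical check: sampling ratios must be valid fractions.
bad = fractions_out_of_range(xgb_params, ["subsample", "colsample_bytree"])
print(bad)
```

Keeping the tuned values in one dictionary makes it straightforward to compare them against the untuned configuration or to log them alongside the reported metrics.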

| Metric/Method | DT | ANN | LR | SVM | RF | CatBoost | LightGBM | XGBoost |
|---|---|---|---|---|---|---|---|---|
| F1-Score | Initial = 77% | Initial = 80% | Initial = 62% | SMOTE = 68% | Initial and SMOTE-TOMEK = 81% | Initial = 90% | Initial = 92% | SMOTE = 92% |
| ROC AUC | SMOTE-TOMEK = 74% | SMOTE = 83% | Initial and SMOTE = 70% | SMOTE and SMOTE-TOMEK = 73% | SMOTE-TOMEK = 78% | Initial = 90% | Initial and SMOTE-TOMEK = 91% | SMOTE = 90% |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).